Abstract

Due to recent technological developments, vignette studies that have traditionally been conducted in text or video formats can now be conducted in immersive formats using virtual reality. But are such virtual reality video vignettes superior to traditional vignettes? To address this question, we examine participants’ experiences within a fictitious organization by comparing their responses to a relevant and particularly sensitive organizational phenomenon presented through written text, a video recording, or a virtual reality experience. The results indicate that participants prefer more immersive methods and that these methods increase their attention to critical study details. Moreover, the more immersive formats augment the effect sizes of several measured employee reactions, particularly those with high emotional content, suggesting that virtual reality technology offers a promising avenue for developing ecologically valid vignette studies to measure employee affect. To facilitate and expedite the use of virtual reality video vignettes in organizational research, we provide organizational scholars with a step-by-step instructional guide to developing immersive vignette studies.

Experimental research in the organizational sciences (e.g., organizational behavior, industrial psychology, and management) often presents participants with written vignettes describing people, environments, and events in detail (Aguinis & Bradley, 2014; Atzmüller & Steiner, 2010; Auspurg & Hinz, 2015; Hughes & Huby, 2002). Vignette studies have provided scholars with a valuable tool for establishing causal relationships (Antonakis et al., 2010), because they allow organizational phenomena to be studied while mitigating the influence of contextual confounds (Whiting et al., 2012). Given that causality is a cornerstone of science, the popularity of vignettes has grown steadily since their introduction to organizational research in the 1990s (Aguinis & Bradley, 2014; Pierce & Aguinis, 1997). However, vignettes have not escaped criticism: researchers have raised concerns that the methodology lacks realism and is thus not ecologically valid. To overcome this limitation, several methodologists have argued that videos are a more externally valid format for presenting vignettes, one believed to improve the accuracy of research findings (Atzmüller & Steiner, 2010; Finch, 1987; Loman & Larkin, 1976).

Recently, researchers have proposed going beyond video and using virtual reality (VR) techniques to study employees in immersive environments (Aguinis & Bradley, 2014; Alcañiz et al., 2018; Hubbard & Aguinis, 2023; Jolink & Niesten, 2021; Niebuhr & Tegtmeier, 2019). Compared with watching a desktop video or reading a written scenario, fully immersing participants in a virtual environment is theorized to elicit more realistic reactions, potentially leading to study conclusions that represent reality more accurately (Adão et al., 2018; Slater, 2009; Taylor, 2006). However, little scholarship has contrasted virtual environments with other approaches (Hoyt & Blascovich, 2003). It therefore remains unclear to what extent study participants can immerse themselves in fictional organizational scenarios and settings and, more importantly, whether immersive methodology fundamentally enhances the quality of data. In this article, we aim to empirically compare and evaluate the effectiveness of three study methods: text-based vignettes, video vignettes, and virtual reality video vignettes (VRVVs). We do so by contrasting employees’ experiences with and reactions to a particularly sensitive scenario in a hypothetical organizational setting.

We present a comprehensive evaluation of the validity of different experimental vignette methodologies (hereafter referred to as ‘vignettes’; Aguinis & Bradley, 2014) in the organizational sciences. First, we illustrate the current shortcomings of vignette research and discuss how participant immersion influences the measurement of employee affect, behavior, and cognitions. Second, we demonstrate that the more immersive the vignette format, the greater participants’ engagement in the scenario, resulting in more attention to study manipulations and an enhanced user experience. Third, we offer a nuanced conclusion on when VRVVs are worth the effort compared with traditional vignettes, with evidence that more immersive vignette formats can yield larger effect sizes, albeit primarily for variables that would be unethical, unrealistic, unsafe, too sensitive, or otherwise difficult to study in the field. Finally, we provide recommendations for organizational scholars, weighing the advantages and disadvantages of VR research against the demands of a given research project, and make concrete suggestions regarding the tools, time, and budget required to develop VRVVs.

Conceptual Background

Organizational researchers have used text-based vignettes for many decades (Pierce & Aguinis, 1997) and video vignettes as well, though less frequently (Adão et al., 2018; Hughes & Huby, 2002). VRVVs, in contrast, remain understudied as a research method. To capture precisely what a VRVV entails, we combine the definitions of a vignette (Atzmüller & Steiner, 2010) and of VR (Nilsson et al., 2016) to describe a VRVV as an immersive virtual scenario depicting people, objects, environments, and/or events that users observe through a head-mounted display and that researchers systematically manipulate on a predetermined combination of characteristics. Importantly, VR simulations fall into two subsets, delineated by users’ degrees of freedom. In simulations with three degrees of freedom, users can look around but cannot interact with or move within the virtual environment. Conversely, simulations with six degrees of freedom let users synchronize their real-world movements with those of their virtual counterparts, fostering greater interactivity (Hubbard & Villano, 2023). Because our objective is to compare vignette methodologies, which are inherently static and involve no interaction (Atzmüller & Steiner, 2010), we deliberately position VRVVs as VR simulations with three degrees of freedom. To understand why VRVVs, through heightened participant immersion, may be a useful tool to measure employee affect (Parsons, 2015), behaviors (Innocenti, 2017), and cognitions (Peeters, 2019), we first discuss the shortcomings of traditional vignette studies.

Traditional Vignette Studies

Historically, organizational and managerial research has predominantly consisted of cross-sectional observational studies (Aguinis & Edwards, 2014), meaning that most studies found evidence of covariation among many variables yet failed to establish causality between them (Antonakis et al., 2010; Hsu et al., 2017; Spector, 1981). With the acceptance of vignettes as a viable research methodology (for an extensive review, see Aguinis & Bradley, 2014), researchers can, in addition to addressing causality concerns, collect data from employees on topics that cannot readily be studied through field research because they are unethical (e.g., stealing ideas from co-workers), unrealistic (e.g., working with robots on the moon), unsafe (e.g., workplace hazards), sensitive (e.g., conflicts with co-workers), or simply difficult to study in the real world (e.g., time-constrained events; De Boeck et al., 2018; Garcia-Retamero & López-Zafra, 2006; Hubbard & Aguinis, 2023).

Most importantly, vignettes allow researchers to manipulate organizational context factors within a single study. The alternative, multilevel field studies, requires surveying hundreds of employees in dozens of real-life organizations that systematically vary on several contextual variables, which makes it extraordinarily difficult to establish causality (Antonakis et al., 2010; Eckardt et al., 2021). Researchers have thus faced a dilemma: choosing between vignettes, which offer high internal validity, and nonexperimental designs, which maximize external validity (Aguinis & Bradley, 2014). Given the complexity, time commitment, and added cost of experimental research, it is unsurprising that most researchers have opted for more time-efficient projects with nonexperimental designs.

Types of Vignette Studies

Vignettes can be presented in various formats, such as text, images, videos, and other digital media (Hughes & Huby, 2002). One major concern is the degree to which participants engage with the vignette and the extent to which they can immerse themselves in the described scenario. Specifically, the general notion is that the number of senses individuals activate during an experiment is closely related to how much attention they pay to the experiment rather than to the environment around them (Hudson et al., 2019; Hughes & Huby, 2002; Slater, 2009, 2018). As early as 1997, Pierce and Aguinis advocated the use of VR technology in organizational research to physically place participants within a situation, activating multiple senses, rather than presenting it to them on a piece of paper. Although it took a decade or two for VR to become accessible to nontechnically oriented researchers (VR is now user friendly, and basic VR equipment can be purchased and set up without specialized expertise), it is now commonplace in other disciplines (Rizzo et al., 2023) and considered practically feasible for management scholars (Hubbard & Aguinis, 2023).

Vignette studies typically describe a detailed scenario in which participants are asked to immerse themselves (e.g., Raaijmakers et al., 2015). Realism is key, as participants are often asked to respond as if they themselves were in the situation, or had experienced the events, described in the vignette (Finch, 1987). The crux of the problem is that researchers assume participants’ reading comprehension, imagination, and (if applicable) ability to take the perspective of the individual in the vignette are adequate for them to respond reliably to the depicted scenario. However, individual differences in these abilities will inevitably create systematic differences in how participants experience and respond to the vignette. We will argue that immersing participants in a scenario using VR technology mitigates this drawback.

Participant Immersion in Virtual Reality Video Vignettes

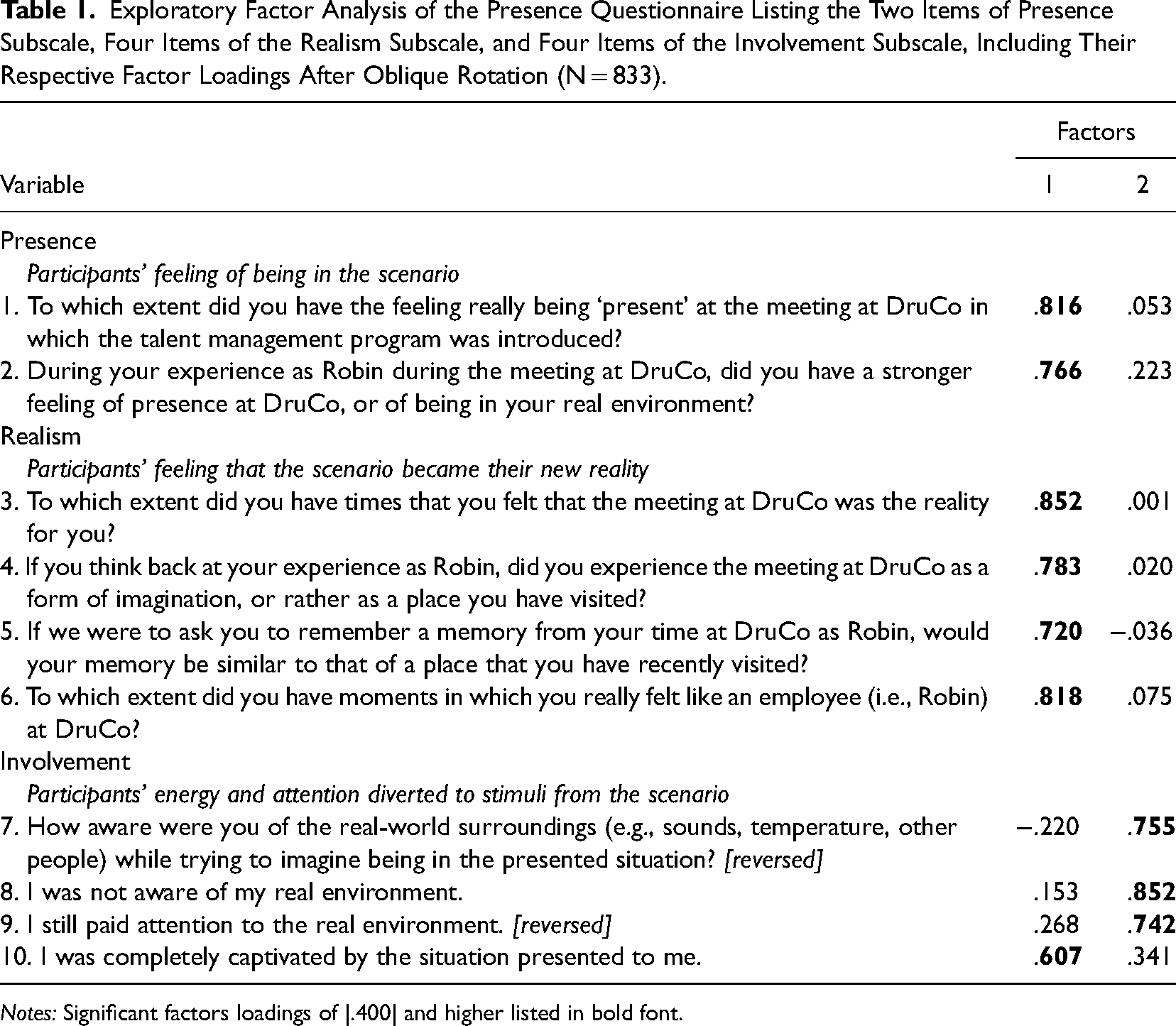

The most prominent mechanism explaining why individuals feel immersed in VR environments is the psychological experience of being “present” in a virtual environment. A fully immersive experience is characterized by overriding one's senses with stimuli from a virtual environment (Slater, 2018), such that the user is unaware of being present in their physical location (Slater & Usoh, 1993), is completely involved in the virtual world (Schubert et al., 2001), and perceives the virtual world as their new reality (Witmer & Singer, 1994). We therefore conceptualize participants’ immersion in vignette studies using three factors: presence, involvement, and realism (see Table 1). While some researchers have considered presence, realism, or involvement (also referred to as engagement) synonymous with immersion (Nilsson et al., 2016), other experts argue that VR studies can involve participants while lacking presence (Slater, 1999). Similarly, virtual worlds that are unjustifiably unrealistic (e.g., where gravity is inverted) may elicit involvement but inhibit participants’ sense of realism, whereas technical malfunctions with the VR equipment can prevent an involving experience by reminding users that the virtual environment is merely a simulated illusion of reality (Slater, 2018; Witmer & Singer, 1994).

Exploratory Factor Analysis of the Presence Questionnaire Listing the Two Items of the Presence Subscale, Four Items of the Realism Subscale, and Four Items of the Involvement Subscale, Including Their Respective Factor Loadings After Oblique Rotation (N = 833).

Notes: Significant factor loadings of |.400| and higher are listed in bold font.

Participant immersion can best be understood through media richness theory, which holds that the richness of the medium of presentation (i.e., the quantity of explicit and implicit information it conveys in the same time span) determines how participants feel, think, and act (Daft & Lengel, 1986). Specifically, media formats are considered richer when they can communicate more cues to observers (e.g., facial expressions, body language, and tone of voice). Accordingly, a face-to-face meeting is considered the richest medium and an email the least rich (Ishii et al., 2019). Given that individuals use information from their environment to make sense of the situation they find themselves in (Weick, 1995), limited information in a vignette may inhibit participants’ ability to develop an appropriate response. We therefore argue that VRVVs ought to be the most immersive medium available to researchers, because richer media provide participants with more information from which to construe their affective, behavioral, and cognitive responses, and because they offer more stimuli to replace stimuli from reality (Witmer & Singer, 1994).

The exploration of VR through the lens of media richness theory remains limited. To date, only one VRVV study has empirically demonstrated that participants perceive tourism content in VR as more useful and enjoyable than the same content in traditional media (Lee, 2022). Text-based vignettes can evoke a sense of immersion, much as perspective-taking thought experiments prompt participants to close their eyes and imagine being in someone else's shoes (Sorensen, 1998). However, achieving a comparable effect is challenging unless the senses that chiefly constitute reality, predominantly sight and sound, are entirely replaced by stimuli within the virtual environment (Hudson et al., 2019). Consequently, VRVVs can be regarded as more true-to-life than traditional media formats (Slater, 2018).

Ecological Validity of Research Findings Using Virtual Reality Video Vignettes

It is assumed that research outcomes become more ecologically valid as the mode of presentation becomes more immersive (Aguinis & Bradley, 2014), in line with media richness theory (Daft & Lengel, 1986). Concretely, we propose that research outcomes will be more generalizable to the real world through three distinct processes: (1) heightened participant immersion, (2) greater participant attention, and (3) enhanced participant reactions to study manipulations. We explore each of these points in depth below.

Enhancing Participant Immersion Through Virtual Reality

We argue that study outcomes will be enhanced in several ways when participants are fully immersed in a VRVV. First, by simulating a realistic virtual environment, researchers can create scenarios that are more representative of real-world situations, or that depict believable hypothetical situations grounded in a world participants can comprehend. Such scenarios allow participants to respond to stimuli in a way that more closely resembles how they would behave if they were really there (Slater, 2018), or how they would behave from the perspective of an alternate role (e.g., a subordinate taking on the role of a leader in a VR simulation; Alcañiz et al., 2018). Taylor (2006) likewise suggested that increasing the similarity between experimental and natural settings is essential to enhance the observed employee outcomes. This is partly because greater immersion enables more accurate measurement of actual behavioral intentions (Innocenti, 2017), capturing what participants will do in the moment (VRVVs may even be programmed so that participants must make time-pressured decisions on the spot) rather than what they would do if the scenario described in a text-based vignette were their reality. In addition, the vignette “noise” that researchers insert into immersive vignettes (e.g., virtual co-workers chatting inaudibly at the water cooler, or the label on a coffee mug on the desk) creates more lifelike scenarios that foster engagement (Huang et al., 2021; Schubert et al., 2001) and heighten ecological validity (Adão et al., 2018; Finch, 1987; Slater, 2009), without compromising the study's internal validity (Pierce & Aguinis, 1997).

Second, VR technology can give researchers greater control over experimental conditions, including the vignette “noise” mentioned above, preventing potential confounds that typically hinder text-based vignettes (Parsons, 2015). Prior research on media richness theory and sensemaking suggests that, when exposed to media-poor formats such as text-based vignettes, participants may form a mental image that deviates notably from that of other participants or from the image the researcher intended to convey (Ishii et al., 2019; Weick, 1995). Participants may also unintentionally fill in gaps based on their own preconceptions, such as their relationship with fictional co-workers or the organizational climate, if these are not explicitly described in the text (Loman & Larkin, 1976). For instance, communication research documents that the lack of context in media-poor formats (e.g., written communications) often leaves much to individual interpretation (Sproull & Kiesler, 1986) and that written communication is less effective than richer media (Daft & Lengel, 1984). These interpretations can be seen as a form of confirmation bias, in which participants interpret or supplement the presented information to fit their assumptions about the contextual environment, thereby confounding the experiment and undermining the study's ecological validity (Whiting et al., 2012). While researchers can limit this issue by writing elaborate scenarios, VRVVs standardize the experience further by providing a consistent and rich virtual environment, which leaves less to participants’ imagination and limits the impact of individual differences and personal interpretations. Moreover, VR gives researchers full control over the flow of events and allows them to eliminate extraneous variables that may negatively affect the results (Martingano & Persky, 2021). We thus expect VRVVs to increase participant immersion in vignette studies relative to text-based and video vignettes.

Enhancing Participant Attention Through Virtual Reality

The emergence of VR technology has introduced a novel approach to studying well-established organizational phenomena and employee reactions, and VRVVs can also portray scenarios that organizational scholars have not been able to study before. Yet the potential of VR goes further, as VR technology provides researchers with another way to control how participants experience experimental studies (Peeters, 2019). By simulating sensory experiences that engage participants’ visual, auditory, and in some cases tactile senses, VRVVs can fully involve participants and focus their attention on pivotal pieces of information (Hudson et al., 2019; Slater, 2018). Concretely, richer, more immersive vignette formats allow researchers to manipulate the attentional demands of the vignette, steering participants’ focus through additional sensory cues (e.g., flashing lights or directional speech). Text-based vignettes, by contrast, can rely only on typographic cues such as bold, underlined, enlarged, or colored text, which may be less effective in directing attention. As Hughes and Huby (2002) describe, descriptions of people and their actions in text-based vignettes are retained and remembered less easily than the same behavior observed through richer media, such as in person or on video. Moreover, VR technology has been shown to help users recall important information presented to them (Suh & Lee, 2005).

The events and behaviors portrayed in vignettes need to be actively understood by participants for them to respond to the experimental manipulations accordingly, which researchers typically verify through attention and manipulation checks (Abbey & Meloy, 2017). Not only can these checks reveal problems with the study's validity only after the main experiment has been conducted (e.g., a manipulated construct that participants misinterpreted; Whiting et al., 2012), but failed checks also frequently force researchers to discard data or conduct another costly experiment. It is thus crucial that researchers hold participants’ attention during a study, to avoid the costly process of rectifying data loss or design errors revealed by manipulation and/or attention checks (Hsu et al., 2017).

VRVVs offer a distinct advantage over video and text-based vignettes in terms of attentional control, as the virtual environment can be more easily isolated from external distractions (Hudson et al., 2019). Previous studies have demonstrated that participants in VR simulations pay more attention to important details in the virtual environment, such as road signs, than participants viewing videos or simulations on a monitor (Li et al., 2020). That said, researchers will need to be creative to ensure that participants understand what is essential to remember during a VRVV study and what constitutes mere “noise” intended to set the scene and immerse the participant in the virtual environment (Huang et al., 2021). Unlike in text-based vignettes, where underlining a passage can draw attention to it, highlighting an important section of a VRVV through increased volume or duration may appear unnatural and detract from its significance. Rather, Lyons et al. (2010) found that multiple sensory stimuli help focus attention on the virtual environment, supporting the notion that immersion through participant involvement is key to a successful VRVV study (Schubert et al., 2001). In sum, we expect participants to be more attentive to study manipulations in a VRVV than in text-based or video vignettes.

Enhancing Effect Sizes Through Virtual Reality

Overall, the use of VRVVs in organizational research presents a promising avenue for studying employee affect, behavior, and cognitions, with the potential for more vignette studies that are high in both ecological and internal validity and that thus allow for a greater understanding of complex real-world situations. Through VR technology, vignette scenarios come to life and enable participants to feel that their actions have real consequences in the world they are currently part of, that is, the virtual world that has become their new reality (Witmer & Singer, 1994). Furthermore, participant immersion may strengthen the impact of manipulations (Adão et al., 2018; Pan & Slater, 2011), increasing the effect sizes of research outcomes. Daft and Lengel (1986), for instance, argue that greater media richness predicts employee performance because employees have more information at their disposal from which to construe their responses.

It is unrealistic to expect that VRVVs are a panacea for every research question best addressed with a vignette study, as VR is not always evidently superior to traditional tools (Sanchez et al., 2022). It is therefore necessary to identify the specific scenarios and research questions in which VR technology can provide an advantage over traditional vignette formats. Through careful consideration and exploration of empirical data, we aim to identify the types of manipulations and employee reactions for which VRVV outcomes are similar to those of traditional vignette formats, and those for which VRVVs provide a more comprehensive understanding and help researchers find support for their hypotheses. By doing so, we can develop a research agenda, and identify future streams of research, that leverage the strengths of VR technology while acknowledging its limitations, ultimately advancing organizational and managerial research. Based on prior research on media richness theory and participant immersion, we generally expect greater effect sizes for study manipulations in VRVVs than in text-based and video vignettes. However, the magnitude of this difference will depend on how much participants need additional information, such as facial expressions and tone of voice, to make sense of the fictional scenario presented in the vignette (Ishii et al., 2019; Weick, 1995), and on the degree to which participants feel immersed (Adão et al., 2018; Huang et al., 2021; Slater, 2009).
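Comparing effect sizes across formats presupposes a common effect-size metric, which this section does not name. As a minimal sketch, assuming Cohen's d with a pooled standard deviation for a two-group contrast of the same manipulation (for example, benefiting versus not benefiting from the practice), the comparison could be computed per vignette format as below. The helper `effect_sizes_by_format` and the toy scores are hypothetical illustrations, not the authors' analysis or study data.

```python
import math
from statistics import fmean, variance


def cohens_d(treatment, control):
    """Cohen's d for two independent groups, using the pooled standard deviation."""
    n1, n2 = len(treatment), len(control)
    # statistics.variance is the sample variance (n - 1 denominator)
    pooled_var = ((n1 - 1) * variance(treatment) + (n2 - 1) * variance(control)) / (n1 + n2 - 2)
    return (fmean(treatment) - fmean(control)) / math.sqrt(pooled_var)


def effect_sizes_by_format(data):
    """Hypothetical helper: data maps format -> {"treatment": [...], "control": [...]}."""
    return {fmt: cohens_d(g["treatment"], g["control"]) for fmt, g in data.items()}


# Toy scores on a 1-7 scale (illustrative only, not study data)
toy = {
    "text": {"treatment": [4, 5, 6], "control": [4, 4, 5]},
    "vrvv": {"treatment": [5, 6, 7], "control": [3, 4, 5]},
}
print(effect_sizes_by_format(toy))
```

Under these toy numbers the same manipulation yields a larger d in the VRVV group, which is the pattern the expectation above describes; with real data, confidence intervals around each d would be needed before drawing any comparison.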

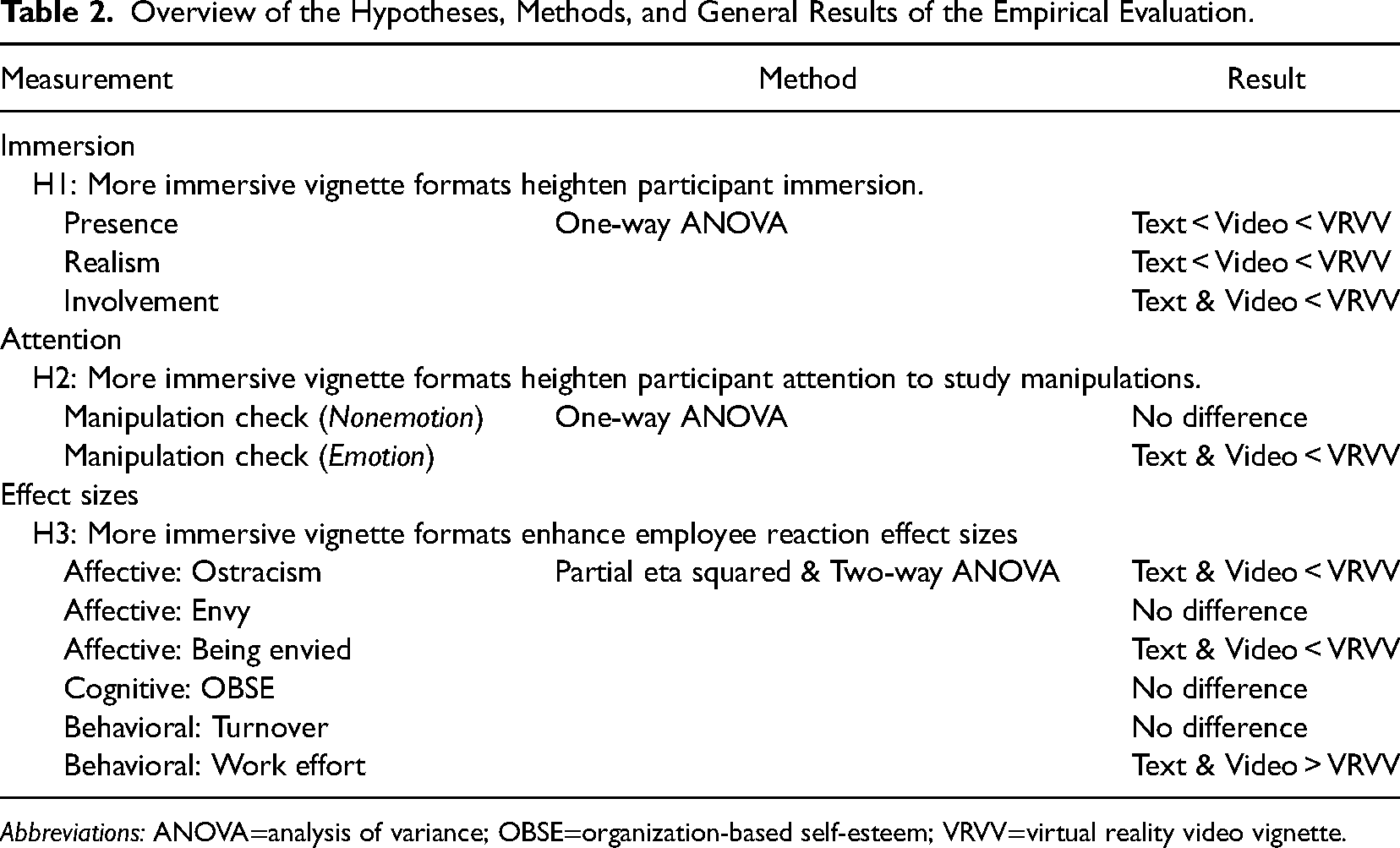

In the next section, using three datasets detailing participants’ experience with the vignette and their responses to the manipulated factors, we provide an illustrative evaluation of three vignette formats: text-based vignettes, video vignettes, and VRVVs. We provide evidence relating participants’ immersion in a vignette to their attention to manipulations and to the effect sizes of those manipulations. To nuance the benefits provided by VRVVs, we identify the boundary conditions under which participant immersion significantly enhances the data collected. Finally, we use the three datasets and the empirical evaluation to develop concrete suggestions for organizational scholars wishing to conduct their own VRVV study. Table 2 summarizes the hypotheses, methods, and results of our empirical example.

Overview of the Hypotheses, Methods, and General Results of the Empirical Evaluation.

Abbreviations: ANOVA=analysis of variance; OBSE=organization-based self-esteem; VRVV=virtual reality video vignette.

Empirical Illustration

To evaluate and compare the effectiveness of text-based vignettes, video vignettes, and VRVVs, we draw on three separate datasets. All three datasets were collected using the exact same fictitious scenario, study manipulations, and measurement scales. The first dataset (N = 413) was collected using text-based vignettes, the second (N = 306) using video vignettes, and the third (N = 114) using VRVVs. Because the specific variables and topic of study are less relevant for our purposes here, we do not interpret and discuss all direct and indirect effects. Rather, we focus on comparing outcomes across the three vignette formats to assess participants’ experience of each format and the quality of the data collected through each method.
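Table 2 lists analysis of variance (ANOVA) among the methods used to compare the formats. As a minimal sketch, assuming a one-way between-subjects ANOVA with vignette format as the single factor (for instance, on an immersion subscale score), the F statistic and eta-squared could be computed with the standard library alone. The function name and toy scores are hypothetical; in practice a statistics package would also report the p-value.

```python
from statistics import fmean


def one_way_anova(groups):
    """Return (F, df_between, df_within, eta_squared) for a one-way between-subjects ANOVA.

    groups: dict mapping a group label (e.g., vignette format) to a list of scores.
    """
    all_scores = [x for scores in groups.values() for x in scores]
    grand_mean = fmean(all_scores)
    k, n = len(groups), len(all_scores)
    # Between-group sum of squares: group size times squared deviation of group mean from grand mean
    ss_between = sum(len(s) * (fmean(s) - grand_mean) ** 2 for s in groups.values())
    # Within-group sum of squares: squared deviations of each score from its own group mean
    ss_within = sum(sum((x - fmean(s)) ** 2 for x in s) for s in groups.values())
    df_between, df_within = k - 1, n - k
    f_stat = (ss_between / df_between) / (ss_within / df_within)
    eta_squared = ss_between / (ss_between + ss_within)
    return f_stat, df_between, df_within, eta_squared


# Toy immersion scores on a 1-7 scale (illustrative only, not study data)
toy = {"text": [3, 4, 3, 4], "video": [4, 5, 4, 5], "vrvv": [5, 6, 5, 6]}
print(one_way_anova(toy))
```

Eta-squared is reported here because it expresses the share of variance attributable to format, which maps onto the question of how much the presentation medium matters; an omnibus F alone would not say which formats differ, so pairwise follow-up tests would still be needed.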

Empirical Context

The vignettes represented a topical and sensitive organizational phenomenon: workforce differentiation, the practice of distinguishing between employees based on their performance and potential, which leads to different career opportunities and differences in both symbolic and tangible rewards (Gallardo-Gallardo & Thunnissen, 2016). This HR practice is considered critical to organizational success and is very prevalent in the field (Church & Rotolo, 2013), making it likely that most study participants could relate to the setting and immerse themselves in the presented scenarios, a key requirement for conducting vignette studies (Auspurg & Hinz, 2015). Additionally, this practice is associated with negative employee reactions such as envy and intergroup conflict (De Boeck et al., 2018), making it an ideal context to compare both sensitive variables and more practical outcomes, such as work effort, across vignette formats. Moreover, workforce differentiation practices typically have a significant impact on employee affect, behaviors, and cognitions (Gallardo-Gallardo & Thunnissen, 2016), so even subtle differences between vignette formats should be detectable.

Procedure and Participants

Our sample consists of employees who participated in one of several studies examining the impact of various designs and implementations of workforce differentiation practices, presented through text, video, or VR. All participants were instructed to step into the shoes of Robin (a common gender-neutral name) and imagine working at a fictional organization (see Supplemental Figures S1 and S2 for the full vignette description). In all vignettes, participants were told that the organization would be introducing a workforce differentiation practice to its employees. Participants were then exposed to one of six vignette conditions. First, the participant (i.e., Robin) was informed whether or not they benefited from the workforce differentiation practice. Next, Robin's co-workers responded in an emotionally positive (e.g., with enthusiasm) or negative (e.g., with hostility) manner, based on the emotions listed in the PANAS (Positive and Negative Affect Schedule; Watson et al., 1988); in the control condition, co-workers responded in a neutral fashion. After reading (Dataset 1), watching (Dataset 2), or virtually experiencing (Dataset 3) the scenario, participants completed a questionnaire assessing their affective, behavioral, and cognitive responses (i.e., envy, organization-based self-esteem, anticipated ostracism, turnover intentions, and work effort), as well as their experience with and appraisal of the vignette format.
Participants learned of the exact same events unfolding within the fictional organization across the three vignette formats. The primary difference was that the video vignettes and VRVVs offered participants more visual, study-irrelevant information (e.g., the color of the drapes, co-workers’ hairstyles) that was not included in the text-based vignettes, whereas other study-irrelevant information about the fictional organization (e.g., its name, size, and brief history) was presented to all participants. We engage with this topic in detail in the discussion and list potential benefits and pitfalls of vignette noise.
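The six vignette conditions described above are consistent with crossing two factors: whether Robin benefits from the practice (yes or no) and how co-workers react (positive, negative, or neutral control), giving 2 × 3 = 6 cells. The text does not describe how participants were allocated to conditions, so the block randomization below is an assumption, and the condition labels are hypothetical. It is a minimal sketch of one way to keep cell sizes balanced within a dataset.

```python
import itertools
import random

# Hypothetical labels for the two manipulated factors
BENEFIT = ["benefits", "does_not_benefit"]
COWORKER_REACTION = ["positive", "negative", "neutral"]  # "neutral" is the control reaction

# Full crossing of the two factors: 2 x 3 = 6 vignette conditions
CONDITIONS = list(itertools.product(BENEFIT, COWORKER_REACTION))


def block_randomize(participant_ids, seed=None):
    """Assign participants to conditions in shuffled blocks of six,
    so that condition counts never differ by more than one."""
    rng = random.Random(seed)
    assignment = {}
    block = []
    for pid in participant_ids:
        if not block:  # start a new shuffled block once the previous one is used up
            block = CONDITIONS[:]
            rng.shuffle(block)
        assignment[pid] = block.pop()
    return assignment


print(len(CONDITIONS))
print(block_randomize(["p01", "p02", "p03"], seed=42))
```

Blocking trades a small amount of predictability for balanced cells, which matters when, as with the VRVV dataset, the total sample is comparatively small.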

A total of 833 full- or part-time employees working in Belgium participated in one of the three studies at random. Participants in Dataset 1 (N = 413) were on average 37.1 years old, and 57.1% were female. Dataset 2 participants (N = 306) were on average 38.2 years old, and 56.2% were female. Finally, Dataset 3 participants (N = 114) were on average 35.4 years old, and 50.0% were female. Across all datasets, participants had an average of 14.8 years of work experience, 87.6% held a higher education degree (i.e., bachelor's or higher), and the largest groups of participants worked in HR (11.9%) and healthcare (9.2%). Only three participants were excluded from the sample and analyses, owing to malfunctioning VR equipment. Participants who failed one or more manipulation checks were retained in the sample, because these checks were used to assess how much attention participants paid to various elements of the vignettes.

Study Equipment and Materials

Development of the VRVV and Video Vignettes

All scenes for the vignettes of Datasets 2 and 3 were recorded using a 360° camera (Garmin VIRB®360), which captured video and sound at a 360° angle around the camera. Participants could therefore move their head to look freely around the room while wearing the VR headset (Dataset 3), or use their mouse or phone to do so (Dataset 2). For the recording, a boardroom at a local university campus was rented; its large circular meeting table made it particularly well suited to capturing the entire experience from a single camera angle. Name tags, office accessories, and slide presentations were added to the surroundings to enhance realism. The actors in the video vignettes were university staff with formal acting experience who played the roles of HR staff or of Robin's co-workers. They were instructed to act out specific emotions in response to the workforce differentiation practice (e.g., envy, resentment, admiration, and hope) using both verbal and facial expressions (the entire script can be found in Supplemental Figure S1). Finally, the name tag “Robin” was clearly displayed in front of the camera, which represented the participant's first-person perspective (see Figure 1). The camera's tripod was draped with a formal shirt to create a lifelike “body” for the participant while in VR (see Figure 2).

Still from one of the experimental 360°-video vignettes (seen from the participant's—i.e., Robin's—point of view).

The 360° camera (Garmin VIRB®360) was mounted on a tripod and draped with a shirt to enhance realism for the viewer in first-person perspective.

Development of the Text-Based Vignettes

Using the script of the video vignettes as the basis, and ensuring that exactly the same explicit information was communicated to participants, text-based vignettes were developed that presented the same fictional organization and its history, including the same manipulated conditions (the entire vignette can be found in Supplemental Figure S2). All emotional expressions of co-workers were also conveyed to participants in text, using exactly the same terminology as in the other vignettes.

Data Collection

All participants completed the questionnaires through Qualtrics. The text and video-based vignettes were also made available on Qualtrics, with the video embedded through YouTube. For the VRVV, participants came to a set location where the researchers could present the vignettes using VR headsets (i.e., Oculus Quest 2). Participants also put on noise-cancelling headphones to limit audible distractions from their real environment.

Measures

Immersion

Following the recommendations of Whelan (2008), participants’ level of immersion was gauged using an adapted version of the Presence Questionnaire version 3 (PQ). The subscales of presence (Witmer & Singer, 1994), realism (Slater & Usoh, 1993), and involvement (Schubert et al., 2001) were used because they could be administered in all three studies (cf. agency, which would only function in an interactive environment). Participants responded to all items on a seven-point Likert scale ranging from 1 (strongly disagree/not immersed) to 7 (strongly agree/fully immersed). For the full list of items and their factor loadings, see Table 1.

User Experience

Participants also had the opportunity to report several methodological appraisals of, and experiences with, the vignettes, depending on the vignette format, such as the quality of video and audio, the number of times they were distracted during the vignette, their preferred vignette format, and whether they believed the 360° feature enriched the experience. Participants could also provide qualitative feedback on the research method used. These results are reported in the Supplemental material.

Manipulation and Attention Checks

Manipulation checks were included in the postvignette questionnaire to ascertain whether participants correctly understood if they benefited from the workforce differentiation practice introduced by the HR staff at the fictional organization, and whether they correctly identified the emotions expressed by their co-workers. Moreover, while attention checks typically serve to weed out inattentive participants (Abbey & Meloy, 2017), we also wanted to find out how much attention participants paid to vignette noise. We therefore asked participants to indicate how many years ago the fictional organization was established, as well as the name of the HR director.

Participants’ Affective, Behavioral, and Cognitive Reactions

Participants’ affective reactions of feeling envious and feeling envied were measured using the scales developed by Vecchio (2005), and anticipated ostracism was measured using the scale from Ferris et al. (2008). The cognitive reaction of organization-based self-esteem (OBSE) was measured using the scale from Pierce et al. (1989). Among the behavioral reactions, turnover intentions were measured using the scales from Kopelman et al. (1992) and Hom et al. (1984), and work effort was measured using the scale from Kacmar et al. (2007). Items were minimally adjusted to fit an experimental vignette study (e.g., “I feel like I am taken seriously at work” was changed to “At DruCo (the fictional organization), I would feel like I am taken seriously at work”). All questionnaire items except those for envy were rated on a seven-point Likert scale ranging from −3 (much less than before) to +3 (much more than before), a typical adaptation used to assess the change in employee reactions after the implementation of a new HR practice (Auspurg & Hinz, 2015; Heggestad et al., 2019). Envy was rated on a five-point scale ranging from 1 (very unlikely) to 5 (very likely). All items and their factor loadings can be found in Supplemental Table S1.

Results

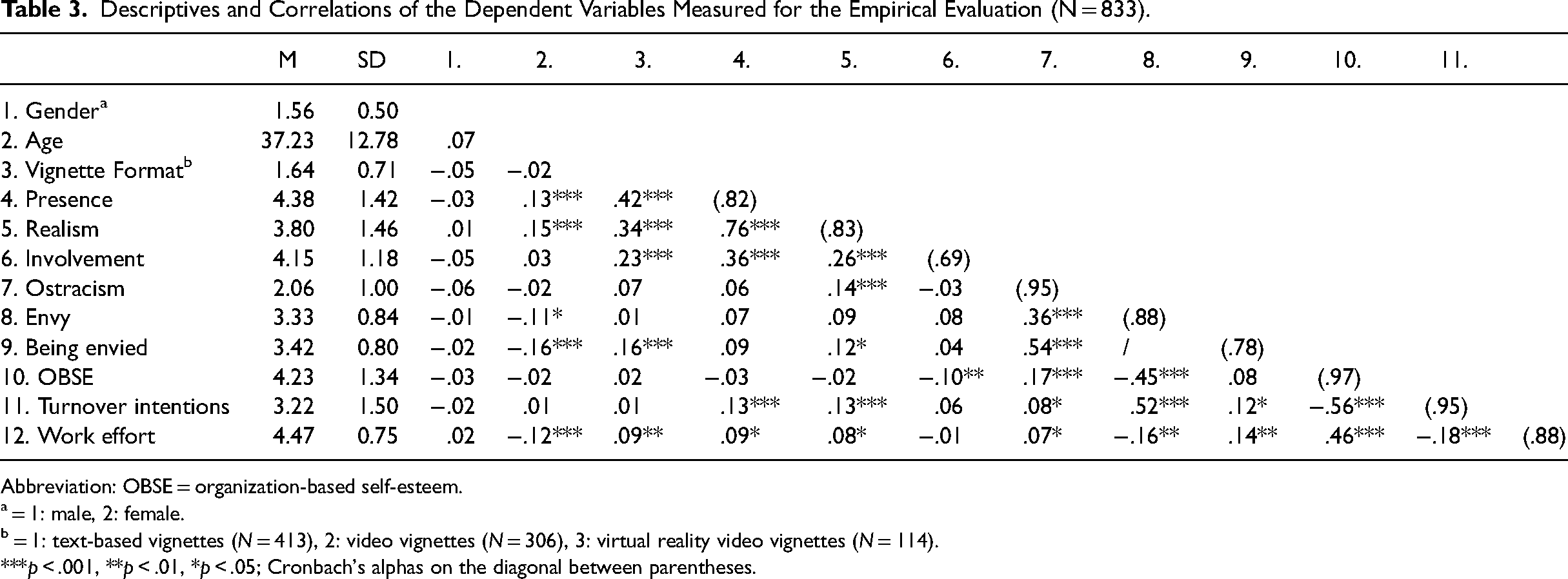

The means and correlations of the measured variables are summarized in Table 3.

Descriptives and Correlations of the Dependent Variables Measured for the Empirical Evaluation (N = 833).

Abbreviation: OBSE = organization-based self-esteem.

Gender: 1 = male, 2 = female.

Vignette format: 1 = text-based vignettes (N = 413), 2 = video vignettes (N = 306), 3 = virtual reality video vignettes (N = 114).

***p < .001, **p < .01, *p < .05; Cronbach's alphas on the diagonal between parentheses.
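Table 3 reports Cronbach's alphas on the diagonal. As a reminder of how these reliability coefficients are obtained, the following is a minimal Python sketch of the standard formula, α = k/(k − 1) · (1 − Σσ²ᵢ / σ²ₜ), where k is the number of items, σ²ᵢ the variance of item i, and σ²ₜ the variance of the summed scale score. It assumes complete item-level data with one row per respondent; the function name and toy data are hypothetical and do not reproduce the authors' analysis.

```python
from statistics import variance


def cronbach_alpha(responses):
    """Cronbach's alpha for a scale (hypothetical helper).

    responses: one list of k item scores per respondent (complete data assumed).
    Uses sample variances; the ratio is unchanged with population variances
    as long as they are used consistently.
    """
    k = len(responses[0])
    if k < 2:
        raise ValueError("alpha requires at least two items")
    # Variance of each item across respondents
    item_vars = [variance([row[i] for row in responses]) for i in range(k)]
    # Variance of the summed scale score across respondents
    total_var = variance([sum(row) for row in responses])
    return (k / (k - 1)) * (1 - sum(item_vars) / total_var)


# Hypothetical toy data: three respondents answering a two-item scale
print(round(cronbach_alpha([[1, 2], [2, 1], [3, 3]]), 4))  # 0.6667
```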

Participant Immersion

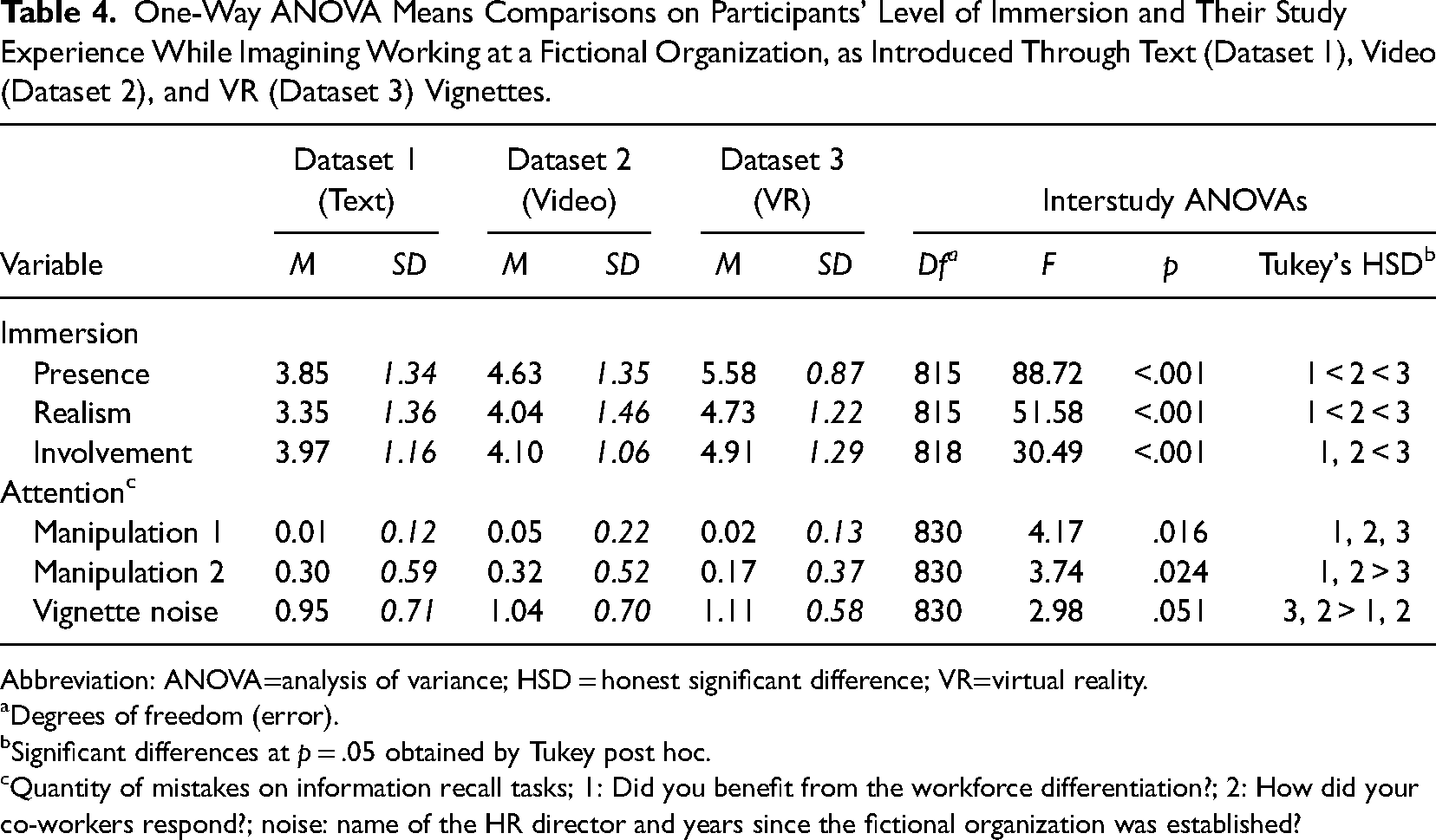

Supporting our first hypothesis, our results show that more immersive formats lead to higher levels of immersion among participants. Concretely, participants experienced significantly more presence (F(3, 815) = 88.72, p < .001) and realism (F(3, 815) = 51.58, p < .001) the more immersive the vignette format. Participants did not, however, feel significantly more involved when watching video vignettes than when reading text-based vignettes, whereas VRVVs did offer the most involving experience (F(3, 818) = 30.49, p < .001). The latter is not surprising, considering that VR headsets block people's perceptions of their real surroundings, essentially forcing them to shift their entire consciousness to the VRVV (Sanchez-Vives & Slater, 2005). The means found for the video vignettes and VRVVs are similar to those of other studies measuring participants’ immersion in the same vignette format (e.g., Gold & Windscheid, 2020; Hoffman, 2021; Ventura et al., 2021); we are not aware of any studies that have measured participants’ immersion in a text-based vignette study.

Participant Attention

The results show that, overall, participants across vignette formats made a significantly different number of mistakes on the manipulation checks (F(2, 830) = 4.22, p = .015), supporting our second hypothesis. Participants experiencing a VRVV made the fewest mistakes (M = 0.18, SD = 0.39; out of a maximum of three mistakes), and participants reading a text-based vignette (M = 0.32, SD = 0.62) or watching a video vignette (M = 0.37, SD = 0.62) made the most (see Table 4). Looking at the individual manipulations, we found that participants were equally (in)accurate in indicating whether they themselves benefited from the workforce differentiation practice, regardless of the vignette format (F(2, 830) = 1.52, p = .220). However, we did find surprising differences between the manipulated emotions and the control condition. Specifically, positive and negative emotions (e.g., admiration and anger) were recalled much better when they were presented through more immersive vignette formats (F(2, 830) = 9.50, p < .001), whereas the neutral condition was recalled correctly more often the less immersive the vignette format. Participants who watched a video vignette or VRVV attributed (any) emotion to the neutral reaction more frequently than those who read a text-based vignette (β = .46, p = .043): whereas 112 participants (79%) in Dataset 1 correctly recalled the neutral condition, only 81 participants (68%) across Datasets 2 and 3 did so. Finally, unrelated to the study manipulations, participants showed a marginally significant tendency to recall less vignette noise (i.e., information used to simulate a fictional organization) the more immersive the vignette (F(2, 830) = 2.98, p = .051).
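To make the structure of these format comparisons concrete, the following is a minimal sketch of a one-way between-subjects ANOVA in plain Python. It returns the F statistic, its degrees of freedom, and η². The helper name and toy scores are hypothetical, and p-values are deliberately omitted because the standard library has no F distribution.

```python
from statistics import fmean


def one_way_anova(*groups):
    """One-way between-subjects ANOVA (hypothetical helper).

    Returns (F, df_between, df_within, eta_squared); no p-value.
    """
    scores = [x for g in groups for x in g]
    grand_mean = fmean(scores)
    group_means = [fmean(g) for g in groups]
    # Between-groups SS: group size times squared deviation of group mean from grand mean
    ss_between = sum(len(g) * (m - grand_mean) ** 2 for g, m in zip(groups, group_means))
    # Within-groups SS: squared deviations of scores from their own group mean
    ss_within = sum((x - m) ** 2 for g, m in zip(groups, group_means) for x in g)
    df_between = len(groups) - 1
    df_within = len(scores) - len(groups)
    f_stat = (ss_between / df_between) / (ss_within / df_within)
    eta_squared = ss_between / (ss_between + ss_within)
    return f_stat, df_between, df_within, eta_squared


# Hypothetical scores for three vignette formats (not the study's data)
text, video, vr = [1, 2, 3], [4, 5, 6], [7, 8, 9]
print(one_way_anova(text, video, vr))  # (27.0, 2, 6, 0.9)
```

With three vignette formats and N = 833, this yields df_between = 3 − 1 = 2 and df_within = 833 − 3 = 830, matching the F(2, 830) tests reported above. For p-values and the Tukey HSD post hoc comparisons in Table 4, a statistics package (e.g., `scipy.stats.f_oneway` and statsmodels' `pairwise_tukeyhsd`) would be used instead.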

One-Way ANOVA Means Comparisons on Participants’ Level of Immersion and Their Study Experience While Imagining Working at a Fictional Organization, as Introduced Through Text (Dataset 1), Video (Dataset 2), and VR (Dataset 3) Vignettes.

Abbreviations: ANOVA = analysis of variance; HSD = honestly significant difference; VR = virtual reality.

Degrees of freedom (error).

Significant differences at p < .05, obtained by Tukey HSD post hoc tests.

Number of mistakes on information recall tasks; 1: Did you benefit from the workforce differentiation?; 2: How did your co-workers respond?; noise: name of the HR director and years since the fictional organization was established.

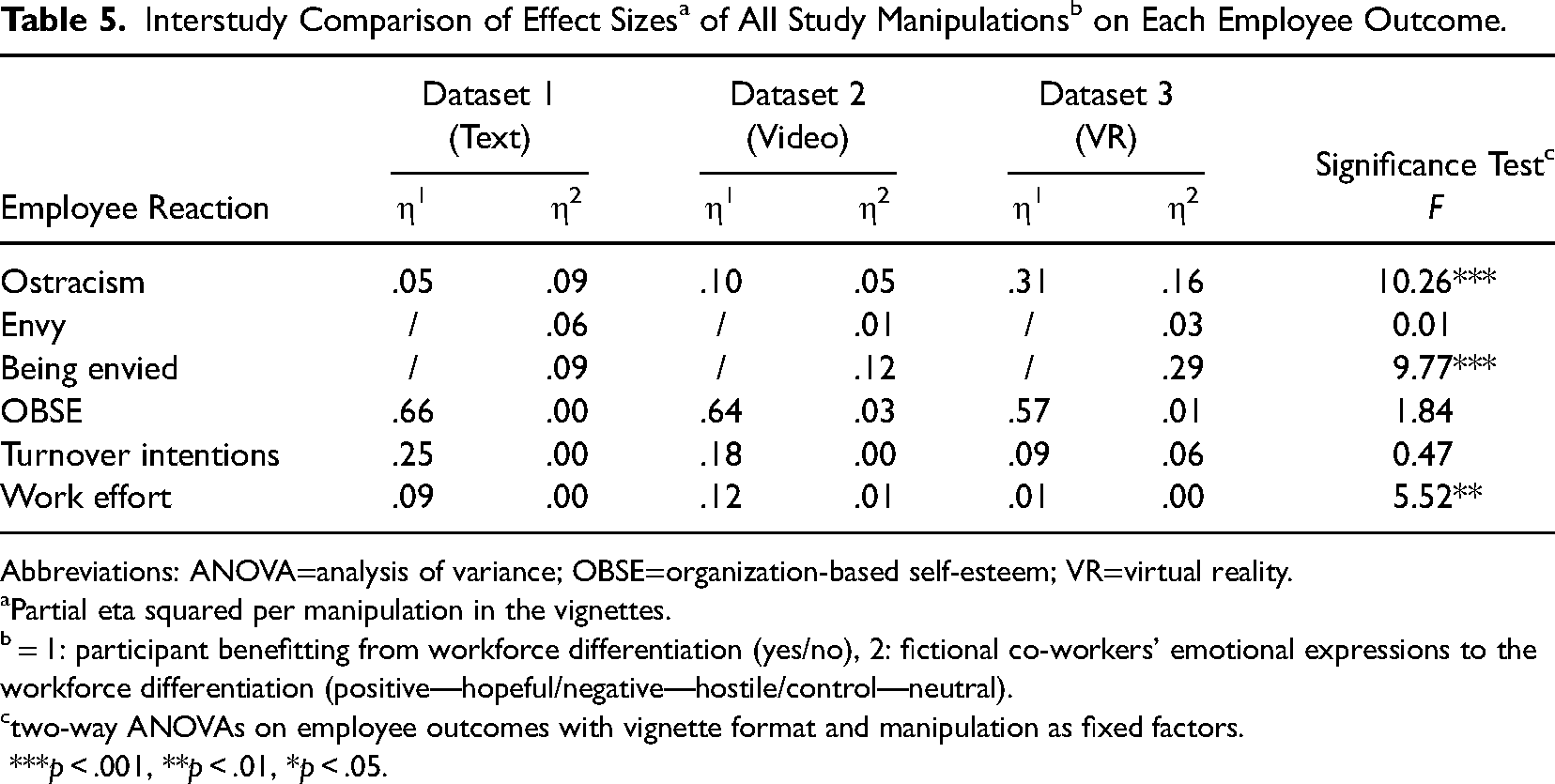

Participant Affective, Behavioral, and Cognitive Reactions

Comparing, across vignette formats, the effect sizes of the study manipulations (i.e., whether the participant benefits from the workforce differentiation practice, and co-workers’ emotional expressions) on employee reactions (i.e., envy, being envied, anticipated ostracism, organization-based self-esteem, turnover intentions, and work effort) yielded several significant differences (see Table 5), partially supporting our third hypothesis. Affective outcomes in particular were influenced by the vignette format, with effect sizes for ostracism (F(2, 811) = 10.26, p < .001) more than three times greater for VRVVs (η2 = .31) than for text-based (η2 = .05) and video vignettes (η2 = .10). Similarly, the emotional expressions of co-workers had a much more profound impact on participants’ feeling of being envied (F(2, 404) = 9.77, p < .001) in the VRVV (η2 = .29) than in the text-based (η2 = .09) and video vignettes (η2 = .12). No measurable difference was found for cognitive and behavioral employee reactions, with the exception of work effort, on which the manipulations had less impact the more immersive the vignette format (F(2, 821) = 5.52, p = .004). The data thus show a trend toward larger effect sizes with more immersive vignette formats, particularly for manipulations and employee reactions that rely on emotion. Furthermore, although several study manipulations did not have a significantly more pronounced impact in more immersive formats, certain employee outcomes were experienced more vividly by participants, which we link to their experience of immersion in the robustness analyses below.
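The effect sizes in Table 5 are partial eta squared values, that is, the share of variance attributable to an effect after excluding variance explained by the other factors in the model: η²p = SS_effect / (SS_effect + SS_error). The sketch below shows this definition and the equivalent form that recovers η²p from a reported F test, η²p = F·df_effect / (F·df_effect + df_error). The function names and the sums of squares in the example are hypothetical.

```python
def partial_eta_squared(ss_effect, ss_error):
    """Partial eta squared from sums of squares: SS_effect / (SS_effect + SS_error)."""
    return ss_effect / (ss_effect + ss_error)


def partial_eta_squared_from_f(f_stat, df_effect, df_error):
    """Equivalent form recovered from a reported F test: F*df1 / (F*df1 + df2)."""
    return (f_stat * df_effect) / (f_stat * df_effect + df_error)


# Hypothetical ANOVA table entries: SS_effect = 20 (df = 2), SS_error = 80 (df = 40)
f_stat = (20 / 2) / (80 / 40)  # MS_effect / MS_error = 5.0
print(partial_eta_squared(20, 80))                # 0.2
print(partial_eta_squared_from_f(f_stat, 2, 40))  # 0.2
```

Both forms agree because F = (SS_effect/df_effect) / (SS_error/df_error). Applied to the ostracism values above, the VRVV effect size is .31 / .10 = 3.1 times that of video vignettes and .31 / .05 = 6.2 times that of text-based vignettes.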

Interstudy Comparison of Effect Sizes of All Study Manipulations on Each Employee Outcome.

Abbreviations: ANOVA=analysis of variance; OBSE=organization-based self-esteem; VR=virtual reality.

Partial eta squared per manipulation in the vignettes.

Manipulations: 1 = participant benefiting from workforce differentiation (yes/no); 2 = fictional co-workers’ emotional expressions in response to the workforce differentiation (positive: hopeful; negative: hostile; control: neutral).

Two-way ANOVAs on employee outcomes with vignette format and manipulation as fixed factors.

***p < .001, **p < .01, *p < .05.

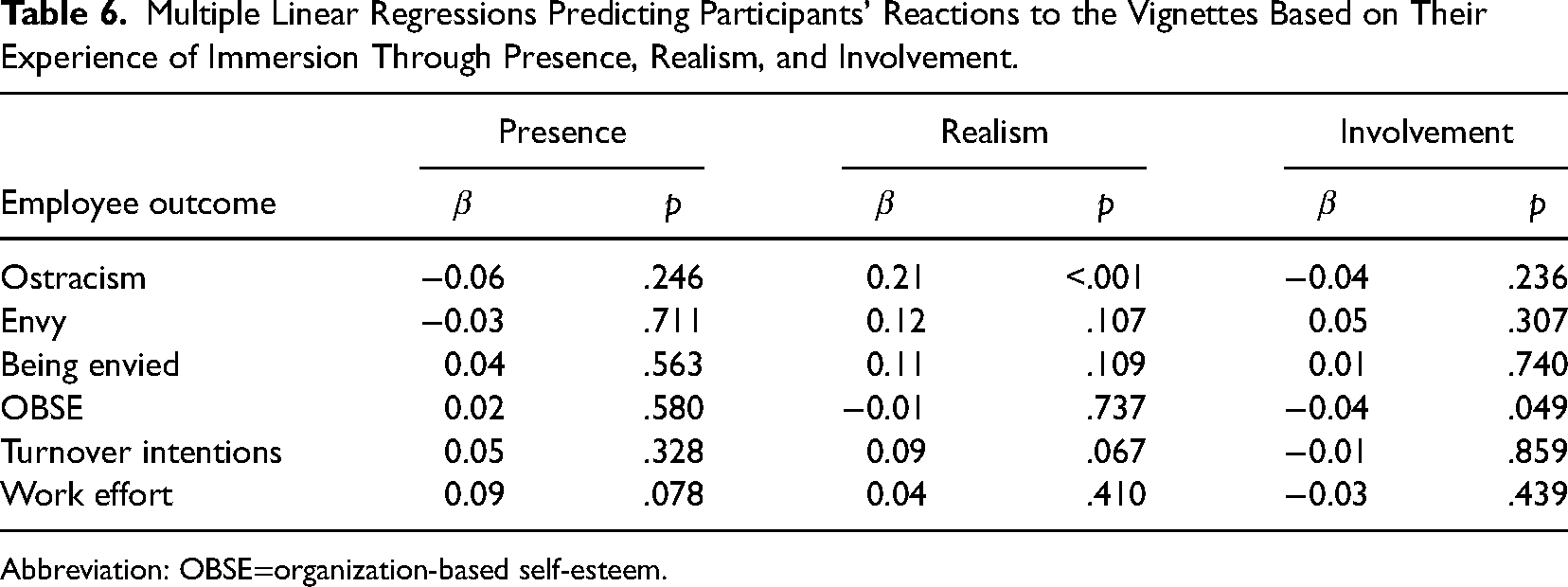

Robustness Analyses

Role of Immersion

It has been argued that higher levels of immersion are one of the reasons why participants devote more attention to important information (Hughes & Huby, 2002). A mediation analysis (PROCESS macro, Model 4; Hayes, 2017) showed that participant involvement was negatively related to manipulation check mistakes (β = −0.04, standard error [SE] = 0.02, p = .040). Examining the specific vignette formats, we found that involvement mediated the effect of vignette format on mistakes for VRVVs only (indirect effect = −0.04, boot SE = 0.02, boot 95% confidence interval [CI] = [−0.07, −0.01]) and not for video vignettes (indirect effect = −0.01, boot SE = 0.00, boot 95% CI = [−0.01, 0.00]). Neither presence (β = −0.01, SE = 0.02, p = .572) nor realism (β = 0.00, SE = 0.02, p = .845) was related to the number of manipulation check mistakes. This underscores the importance of ensuring that participants are wholly absorbed by the presented vignette, such that their real environment does not act as a source of distraction, to avoid having to exclude many participants from a study sample for failing one or more manipulation checks.
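The logic of a simple mediation with a percentile bootstrap confidence interval can be sketched in a few lines. The example below is a minimal stdlib illustration, not the authors' PROCESS analysis: it assumes a single binary predictor X (hypothetically coding VRVV = 1 and text = 0), a continuous mediator M (involvement), and an outcome Y (mistakes), all estimated by OLS. The study's multicategorical format variable and any covariates are not reproduced, and all function names are hypothetical.

```python
import random
from statistics import fmean


def _cross(u, v):
    """Centered sum of cross-products: sum((u - mean(u)) * (v - mean(v)))."""
    mu, mv = fmean(u), fmean(v)
    return sum((a - mu) * (b - mv) for a, b in zip(u, v))


def indirect_effect(x, m, y):
    """a*b indirect effect for the simple mediation model X -> M -> Y (OLS)."""
    sxx, smm, sxm = _cross(x, x), _cross(m, m), _cross(x, m)
    sxy, smy = _cross(x, y), _cross(m, y)
    a = sxm / sxx  # a path: slope of M regressed on X
    # b path: coefficient of M in the regression of Y on X and M
    b = (sxx * smy - sxm * sxy) / (sxx * smm - sxm ** 2)
    return a * b


def bootstrap_indirect(x, m, y, n_boot=5000, level=0.95, seed=1):
    """Point estimate and percentile bootstrap CI for the indirect effect."""
    rng = random.Random(seed)
    n = len(x)
    draws = []
    for _ in range(n_boot):
        idx = [rng.randrange(n) for _ in range(n)]  # resample cases with replacement
        try:
            draws.append(indirect_effect([x[i] for i in idx],
                                         [m[i] for i in idx],
                                         [y[i] for i in idx]))
        except ZeroDivisionError:
            continue  # skip degenerate resamples (e.g., X constant)
    draws.sort()
    lo = draws[round((1 - level) / 2 * (len(draws) - 1))]
    hi = draws[round((1 + level) / 2 * (len(draws) - 1))]
    return indirect_effect(x, m, y), (lo, hi)


# Tiny hypothetical dataset: y = 3*m + 2*x exactly, and m rises by 1 when x = 1
print(indirect_effect([0, 0, 1, 1], [0, 1, 1, 2], [0, 3, 5, 8]))  # 3.0
```

An indirect effect is conventionally deemed significant when its bootstrap CI excludes zero, as with the VRVV interval of [−0.07, −0.01] above, whereas the video interval of [−0.01, 0.00] includes zero.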

The data show that some employee reactions may also be influenced by participants’ experience of immersion (Table 6). Again, affective and cognitive reactions in particular are more likely to be influenced by participant immersion. Participants who feel that the vignette has become their new reality, as is the case with more immersive vignette formats (Table 4), tend to anticipate more ostracism from co-workers within the fictional organization (β = 0.21, p < .001). Participants who feel more involved with the scenario presented to them tend to report greater reductions in their organization-based self-esteem (β = −0.04, p = .049).
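The regressions in Table 6 predict each reaction from the three immersion facets simultaneously. As a minimal sketch of what such a model estimates, the following solves the ordinary least squares normal equations in plain Python for an intercept and one coefficient per predictor. The function name and data are hypothetical, the solver is only adequate for a handful of predictors, and standardized coefficients would require z-scoring all variables before fitting.

```python
def ols(rows, y):
    """OLS coefficients [intercept, b1, ..., bp] via the normal equations (hypothetical helper).

    rows: one list of p predictor values per participant (e.g., presence,
    realism, involvement). Solved by Gauss-Jordan elimination with partial pivoting.
    """
    X = [[1.0] + list(r) for r in rows]  # prepend intercept column
    p = len(X[0])
    # Augmented matrix [X'X | X'y]
    aug = [[sum(r[i] * r[j] for r in X) for j in range(p)]
           + [sum(r[i] * t for r, t in zip(X, y))] for i in range(p)]
    for col in range(p):
        # Partial pivoting: bring the largest remaining entry in this column to the diagonal
        pivot = max(range(col, p), key=lambda k: abs(aug[k][col]))
        if abs(aug[pivot][col]) < 1e-12:
            raise ValueError("predictors are perfectly collinear")
        aug[col], aug[pivot] = aug[pivot], aug[col]
        scale = aug[col][col]
        aug[col] = [v / scale for v in aug[col]]
        # Eliminate this column from every other row
        for k in range(p):
            if k != col:
                factor = aug[k][col]
                aug[k] = [v - factor * c for v, c in zip(aug[k], aug[col])]
    return [row[p] for row in aug]


# Hypothetical scores generated from y = 1 + 2*presence - 1*realism + 0.5*involvement
rows = [[1, 2, 3], [2, 1, 0], [3, 5, 1], [4, 2, 2], [5, 3, 4], [0, 1, 1]]
y = [1 + 2 * a - b + 0.5 * c for a, b, c in rows]
print([round(v, 6) for v in ols(rows, y)])  # [1.0, 2.0, -1.0, 0.5]
```

Because each coefficient is estimated while holding the other immersion facets constant, a facet such as involvement can predict OBSE even when presence and realism do not. In practice, a statistics package (e.g., statsmodels' `OLS`) would also supply the standard errors and p-values reported in Table 6.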

Multiple Linear Regressions Predicting Participants’ Reactions to the Vignettes Based on Their Experience of Immersion Through Presence, Realism, and Involvement.

Abbreviation: OBSE=organization-based self-esteem.

Discussion

There is a notable lack of published VRVV studies in the organizational and managerial literature despite numerous calls and recommendations for VR research in the past few years across various research streams such as leadership (Alcañiz et al., 2018), entrepreneurship (Hsu et al., 2017), business sustainability (Jolink & Niesten, 2021), and human resources (Schmid Mast et al., 2018), to name just a few. The demand for VR research comes from an increasing need to study hypothetical situations (e.g., futuristic work settings; Yam et al., 2021), leverage unique VR features (e.g., being in the body of a co-worker of a different ethnicity; Cebolla et al., 2019), and to manipulate true-to-life scenarios with high ecological validity in a setting with high experimental control (Aguinis & Bradley, 2014; Alcañiz et al., 2018). The question remains, however, whether VRVVs are superior to traditional vignettes.

In this article, we thus set out to evaluate and compare the effectiveness of different vignette formats (text-based, video, and virtual reality video vignettes) in simulating a fictitious organization for employees to imagine working in. Most importantly, the results support the claim that more immersive vignette formats can yield research findings with greater ecological validity, as participants exhibited higher levels of immersion (i.e., presence, realism, and involvement) in VRVVs than in text-based vignettes. As explained earlier, increasing participants' immersion is also expected to have several other benefits. Most notably, participants immersed in a vignette are more involved with the fictional scenario (Huang et al., 2021), causing them to remember and recall important information better (Hughes & Huby, 2002) and to react more strongly, and more naturally, to the fictional situation (Taylor, 2006). This is indeed what we generally found in our results, albeit not for all study variables. In particular, vignette manipulations that rely on emotion, as well as affective employee reactions (such as ostracism, which is mostly detected through facial expressions; Spoor & Williams, 2007), appear to yield stronger and more valid results when studied using VRVVs. In addition, manipulated study variables concerning employee emotion were better recalled in VRVVs than in other vignette formats. For more general organizational research endeavors, such as those related to turnover, scholars need not be discouraged from using text-based vignettes.

We therefore conclude that the majority of organizational vignette studies, which have predominantly relied on text-based vignettes (Aguinis & Bradley, 2014; Pierce & Aguinis, 1997), are not presently at risk of being invalidated by the rise in popularity of VR technology and immersive vignette research. Accordingly, while VR is a popular method for replicating high-impact psychological studies (e.g., Milgram's obedience experiment; Neyret et al., 2020), we do not believe the same is necessary for traditional text-based vignette studies. Moreover, because vignette research relies on statistical differences between manipulated factors and/or between their respective factor levels, with the goal of confirming or rejecting hypotheses through regressions or mean comparisons, VRVVs would typically have minimal impact on whether hypotheses are accepted or rejected, given the lack of differential effect sizes between vignette formats for behavioral and cognitive employee reactions. Nevertheless, affective employee reactions are noticeably stronger after a VRVV. The difference between affective and other outcomes may lie in the additional information that richer media inevitably communicate (e.g., an unfriendly tone of voice, distraught faces), which participants both perceive and feel in VRVVs and which further strengthens their affective reactions (Pan & Slater, 2011; Rawski et al., 2022). The additional information that VRVVs and videos convey to participants may also work against the researcher. A surprising outcome was that neutral co-worker expressions were more often recalled incorrectly after a VRVV than after a text-based vignette, whereas positive and negative expressions were more often recalled correctly. This finding can be explained using media richness theory (Daft & Lengel, 1986) and the ambiguity that arises from the additional information, and subsequent vignette noise, conveyed to participants.

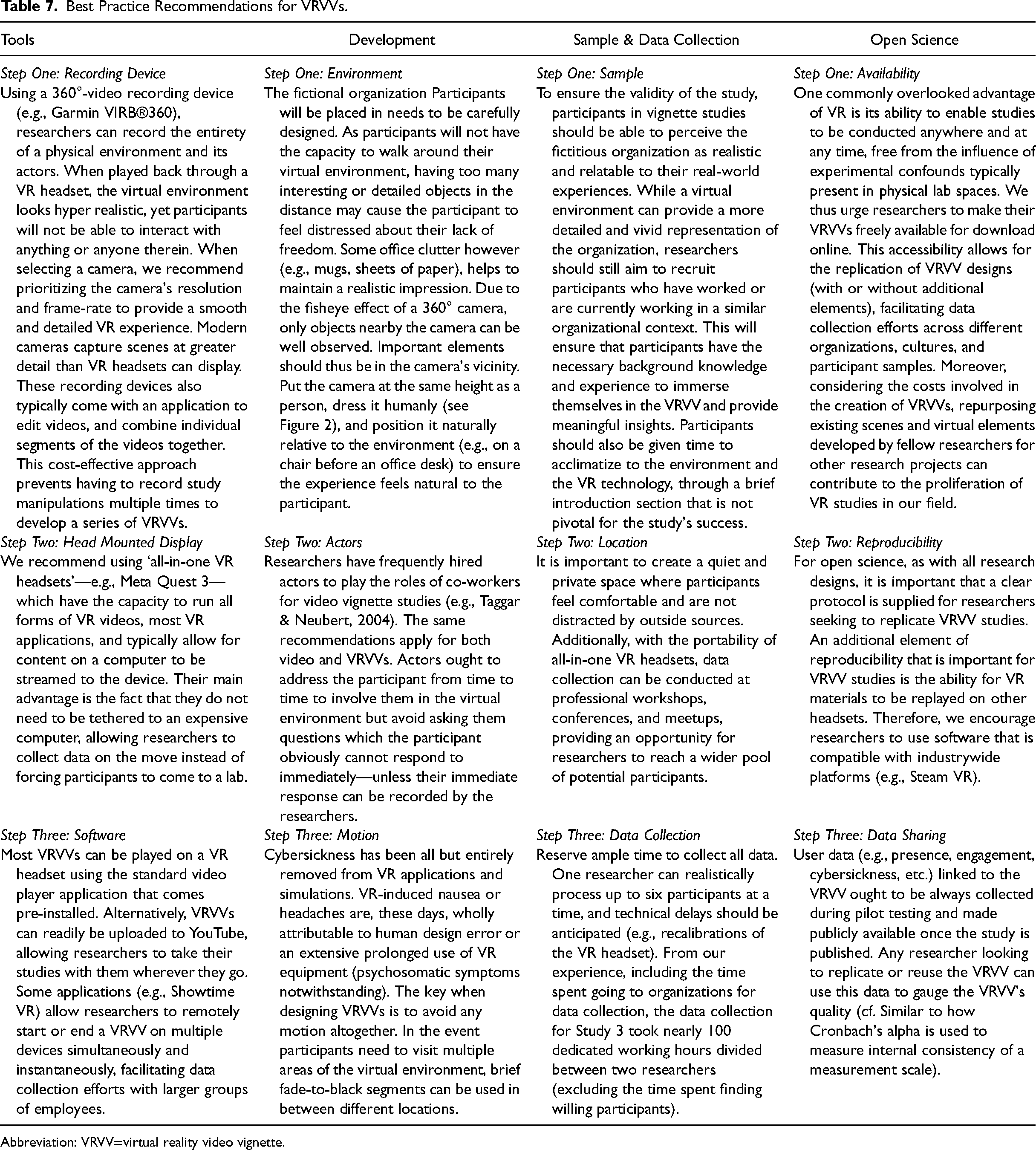

Practical Guidelines for Virtual Reality Video Vignettes

We recommend that researchers conduct an immersive vignette study when they want to (1) explore hypothetical situations that are difficult or unethical to simulate in real-life settings, (2) study an organizational phenomenon that is sensitive in nature and may elicit socially desirable responses in the field, (3) manipulate and/or measure emotionally laden employee reactions, or (4) replicate traditional vignette studies to enhance their ecological validity. To be clear, unless researchers are seriously constrained by time and funding, there is no compelling reason not to choose a VRVV over a text-based or video vignette. In Table 7, we provide information on all aspects of a VRVV research project, such as the equipment used, vignette development, sample, and data collection process. We recommend considering these in addition to the recommendations for general vignette studies from Aguinis and Bradley (2014).

Best Practice Recommendations for VRVVs.

Abbreviation: VRVV=virtual reality video vignette.

Data Collection

Data collection for vignette studies typically occurs through survey platforms such as Qualtrics, with surveys sent directly to participants, irrespective of whether they were recruited through convenience sampling, panels, or other methods. Researchers using VRVVs still depend on platforms like Qualtrics for postexperiment measurements, because the limited interactivity of a 360° video, which offers only three degrees of freedom, complicates the measurement of live behaviors in VR (with the exception of live eye-tracking data; Meißner & Oll, 2019). Distributing the VRVVs to participants, however, poses a unique challenge. As detailed in Table 7, VRVVs can either be uploaded online (e.g., YouTube) or stored locally. In both scenarios, researchers can collect data on campus in their VR laboratory or visit organizations with their VR equipment, which essentially renders most VR labs highly mobile. If the VRVV is stored online, panelists who own a VR headset can be instructed to put on their headset, access a designated website, and stream the VRVV (Hubbard & Villano, 2023). At present, however, panelists with VR headsets are disproportionately young and male (Kelly et al., 2021).

Costs

A study using VRVVs incurs the same costs as any other study (e.g., participant reimbursement), but imposes additional financial burdens for its development and execution. Depending on the desired quality of the VR experience, we distinguish two approaches. The cost-effective option involves acquiring a consumer-ready 360° camera, sourcing actors from university networks, and using smartphone-enabled headsets. At most universities, these resources are freely available (e.g., we compensated colleagues with acting experience with food and drinks, and rented the 360° camera from the media department). To conduct the VRVV, researchers may purchase a VR headset or borrow one from other researchers. The headset is the only incremental cost of a VRVV over a video vignette. Text-based vignettes are the most budget-friendly option, given that they only require an active subscription to a survey platform such as Qualtrics. Conversely, a higher-quality but pricier approach involves upgrading to a professional-grade camera, hiring paid professional actors, and opting for an all-in-one headset such as the Meta Quest 3. Despite the potential for improved quality, this approach is likely to cost at least ten times as much as the cost-effective approach detailed above, under current cost structures. Additionally, whereas the time required to develop a text-based vignette is negligible, video vignettes and VRVVs may require a full day of filming on top of writing scripts, arranging filming equipment, decorating environments, and finding actors. VRVVs also require considerable time for data collection, given that participants must go through the VRVV one by one, whereas video and text-based vignettes can be completed by participants on their own computers. Researchers should weigh the trade-offs between costs (financial and temporal) and quality based on their budget constraints and study requirements.

Safety

Ensure participant safety in VRVVs by prioritizing the prevention of cybersickness, a risk commonly associated with older VR experiences (Caserman et al., 2021). This discomfort arises from a sensory disconnect between VR input and participants’ internal sense of reality, resulting in nausea, headaches, eye strain, and general discomfort. As a best practice, inform participants that they can remove the headset at any sign of discomfort. To mitigate cybersickness in VRVVs, avoid recording motion in the video, opt for high-quality hardware, and limit VRVV duration to under 20 min, as suggested by Caserman et al. (2021). Moreover, participants should be explicitly instructed to remain seated during the VRVV. Active monitoring during the VR simulation, and pilot testing with a cybersickness scale (e.g., CSQ-VR; Kourtesis et al., 2023), further help ensure participant safety.

Ethical Considerations

The use of VRVVs also raises important ethical considerations. While VR by its nature does not expose participants to physical peril, it introduces an elevated risk of psychological harm, particularly compared to other vignette formats (Rawski et al., 2022). The heightened immersion provided by VR, while advantageous for experimental richness, also amplifies the potential for adverse psychological experiences, as illustrated by participants experiencing physiological distress when delivering electric shocks to virtual avatars (Neyret et al., 2020). Consequently, researchers and ethical review boards must exercise diligence in preparing participants for the VRVV and in mitigating potential psychological risks. A key strategy to address this ethical concern is to communicate transparently to participants that they may exit the VR experience at any point by removing the headset, and that doing so will not result in any negative consequences. By giving participants control over their virtual experience, VR also offers ethical benefits: researchers can immerse individuals in challenging scenarios, such as ethical dilemmas (King et al., 2013), while allowing participants to observe these scenarios on their own terms. This approach proves especially valuable in VRVVs, where exposure to uncomfortable environments may be necessary for the study's objectives.

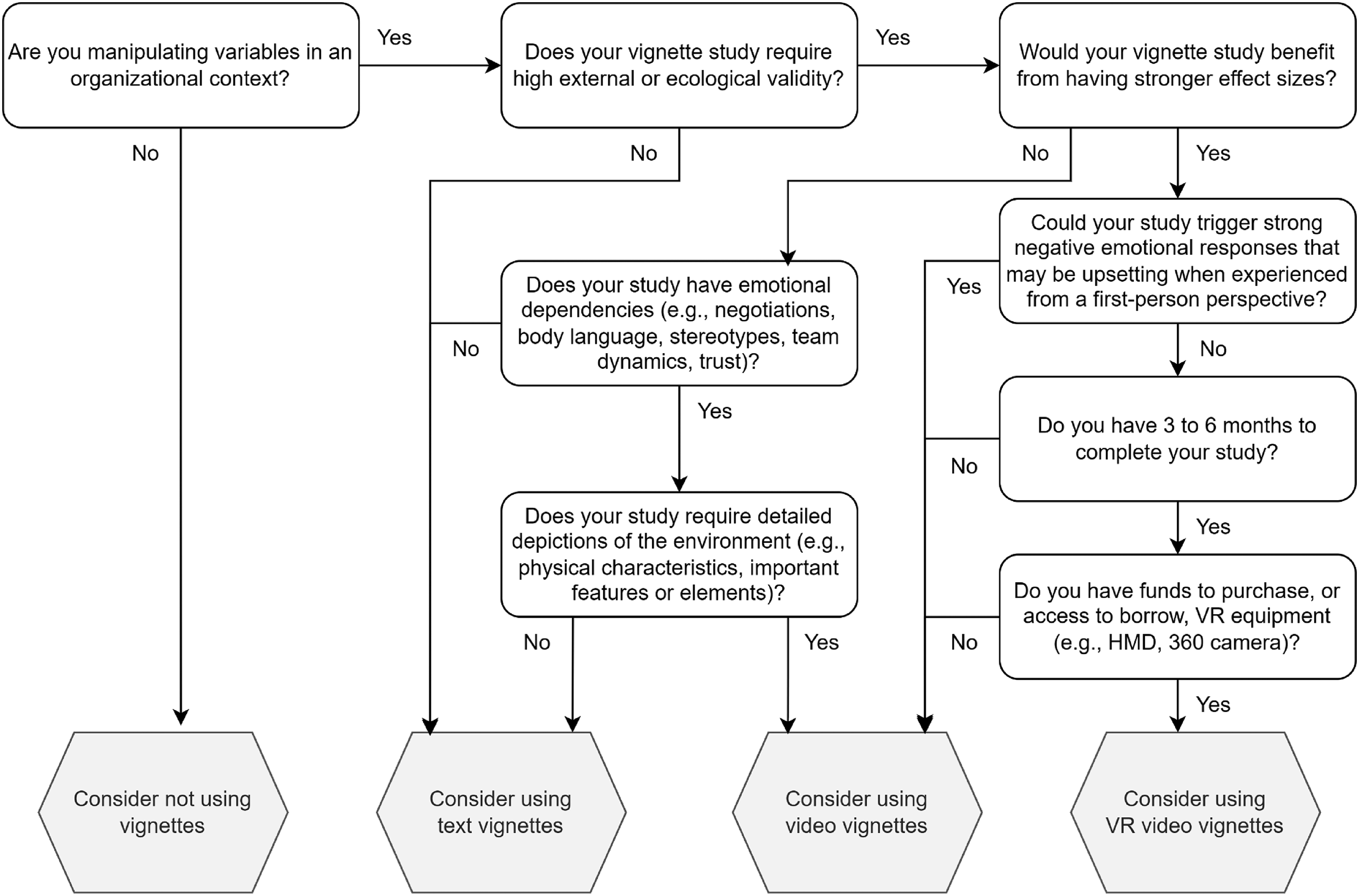

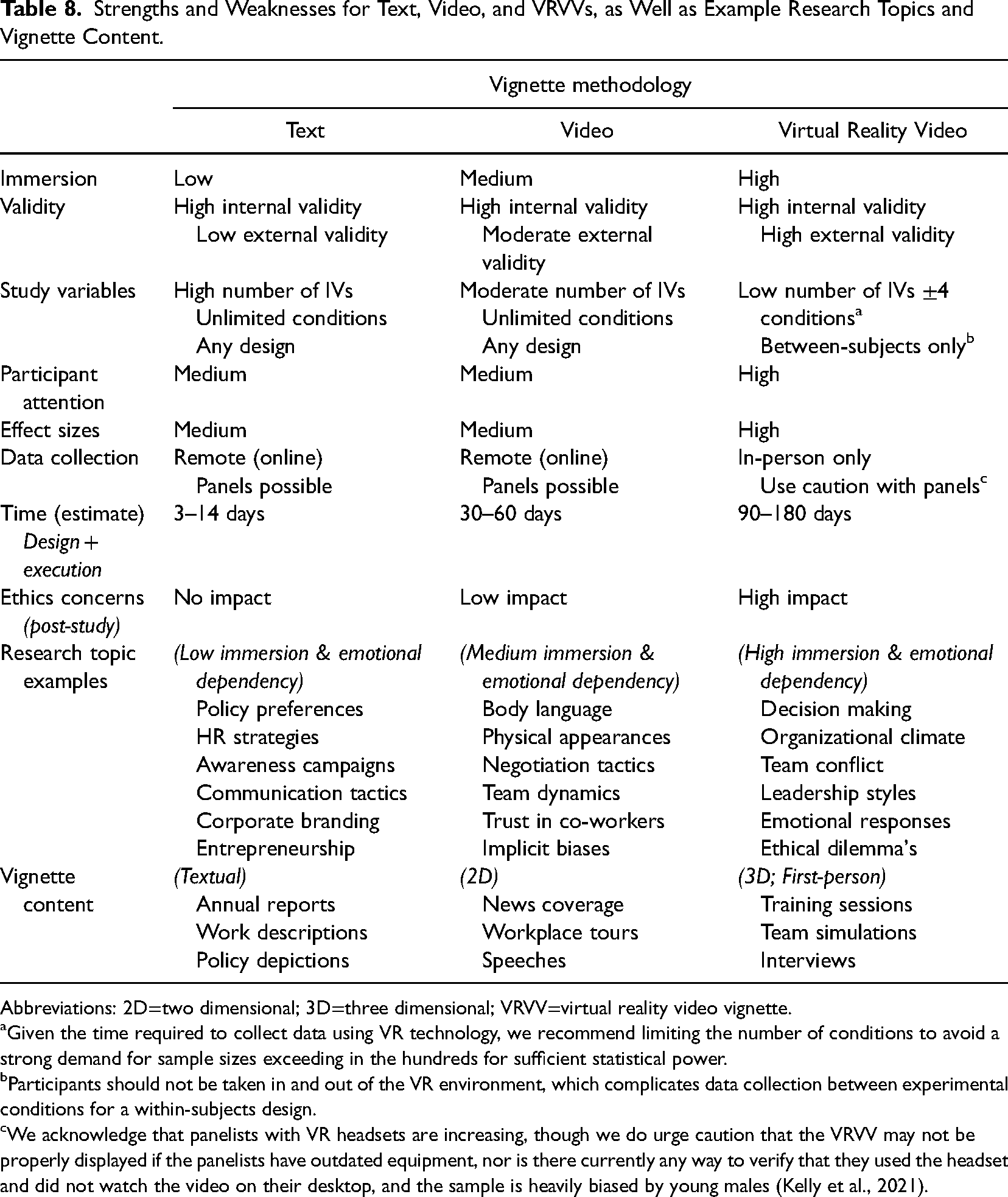

We integrate the above factors into a comprehensive overview of the strengths and weaknesses of each vignette methodology (see Table 8), as well as a decision tree to help prospective researchers choose the most appropriate vignette methodology (see Figure 3). Notably, we emphasize the additional costs and logistical considerations associated with developing and conducting VRVVs, compared to video and text-based vignettes, and include example research questions that can be tackled with each type of vignette. Our overall recommendation is for researchers to employ a combination of vignette types to test theorized models, leveraging text-based vignettes to test numerous variables and factor levels while incorporating VRVVs to enhance the study's external validity and nuance its findings.

Decision tree to guide researchers in choosing an appropriate vignette methodology. These serve as general recommendations and should not be considered binding.

Strengths and Weaknesses for Text, Video, and VRVVs, as Well as Example Research Topics and Vignette Content.

Abbreviations: 2D=two dimensional; 3D=three dimensional; VRVV=virtual reality video vignette.

Given the time required to collect data using VR technology, we recommend limiting the number of conditions to avoid needing sample sizes in the hundreds to achieve sufficient statistical power.

Because participants should not be repeatedly taken in and out of the VR environment, switching between experimental conditions in a within-subjects design is difficult.

We acknowledge that the number of panelists with VR headsets is increasing, but we urge caution: the VRVV may not display properly if panelists have outdated equipment, there is currently no way to verify that they used the headset rather than watching the video on their desktop, and the sample is heavily skewed toward young males (Kelly et al., 2021).
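To illustrate why each added condition weighs heavily on VR data collection, the following sketch uses the common normal approximation for the per-group sample size of a two-sided, two-group comparison, n = 2((z₁₋α/₂ + z_power)/d)², and multiplies it by the number of conditions. It assumes, purely for illustration, that every condition needs this per-group n; the effect size d = 0.5 and the helper names are hypothetical. The 2 × 3 design used here (benefit yes/no × neutral/positive/negative co-worker emotions) has six conditions. Formal planning should rely on dedicated power-analysis tools and the actual design.

```python
from math import ceil
from statistics import NormalDist


def n_per_group(d, alpha=0.05, power=0.80):
    """Approximate participants per group for a two-sided, two-group comparison.

    Normal approximation: n = 2 * ((z_{1-alpha/2} + z_{power}) / d) ** 2,
    where d is the standardized mean difference (Cohen's d).
    """
    z = NormalDist()  # standard normal distribution
    z_alpha = z.inv_cdf(1 - alpha / 2)
    z_power = z.inv_cdf(power)
    return ceil(2 * ((z_alpha + z_power) / d) ** 2)


def total_n(d, n_conditions, **kwargs):
    """Total sample if every condition needs the per-group n above (simplifying assumption)."""
    return n_per_group(d, **kwargs) * n_conditions


print(n_per_group(0.5))  # 63
print(total_n(0.5, 6))   # 378
```

Under these assumptions, a six-condition design already requires several hundred participants, each of whom must complete the VRVV individually.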

Vignette Noise: The Benefits and Pitfalls

We found a marginally significant trend for participants to make more mistakes recalling study-irrelevant information (i.e., the name of the HR director and the years since the fictional organization was established) the more immersive the vignette format. In a manner of speaking, this is another selling point for VRVVs, as this information serves no specific purpose besides setting a realistic scene for the participant (Aguinis & Bradley, 2014; Auspurg & Hinz, 2015). While steps can be taken to enhance the relative importance of manipulations over vignette noise in text-based vignettes (for instance, by repeating manipulations in bold and underlined font), participants themselves decide what they deem important. In more immersive vignette formats, attention can be directed to specific aspects of the vignette through audio and visual cues that are impossible to miss when fully immersed. Moreover, some modern VR headsets offer eye tracking, allowing researchers to document and verify that participants have adequately observed all the study-relevant information in the vignette (Meißner & Oll, 2019).

However, as illustrated earlier, our results also showed that participants made more mistakes when vignette noise made manipulations ambiguous. Concretely, unambiguous emotional expressions, such as admiration and anger, are evident to observers, whereas an ambiguous emotional expression, such as neutrality, may hide other underlying emotions such as regret (Lee et al., 2008). Across vignette formats, the primary difference lies in learning about co-workers' emotional state through text (e.g., “your co-workers feel upset and angry about the situation”) as opposed to being present at the location and hearing, seeing, and “feeling” the emotions of co-workers (Gold & Windscheid, 2020; Pierce & Aguinis, 1997; Ventura et al., 2021). As a form of emotional contagion, which tends to rely much more heavily on body language than on words (Barsade, 2002), the VRVV had a greater impact than a video or text-based vignette on the unambiguous emotions participants recollected (Hulse et al., 2007). For the neutral condition, however, the actors playing co-workers were instructed not to say anything or make any facial expressions (cf. the text-based vignette only stated that co-worker expressions were “neutral”). These neutral expressions and faces may then have confounded the experiment, as they were frequently interpreted as a negative emotion (i.e., if you are not visibly happy about an event, then something must be wrong; Lee et al., 2008). Richer media therefore inevitably communicate implicit information (e.g., co-workers’ facial expressions), which may inadvertently cause study manipulations to be misinterpreted more frequently when they are open to diverse interpretations (Huang et al., 2021; Whiting et al., 2012). Thus, when designing a vignette study, researchers should be mindful that immersive vignette formats may unintentionally amplify the ambiguity of presented information because of the additional noise introduced in a VRVV.

Vignette noise, which includes all the study-irrelevant information presented in a vignette, is nevertheless still a necessity. While conjoint analyses may use vignettes consisting of a sentence or two (necessary when participants need to read a few dozen different vignettes in one sitting; Jasso & Rossi, 1977), paper people studies rely on describing a situation that is realistic and believable (Auspurg & Hinz, 2015), which inherently requires rich descriptions of the situational context (Wason et al., 2002). Although immersive vignette formats thereby add more noise, they also enrich other elements of the vignette that may be too concise and impoverished for participants to envision when presented through text only. To illustrate, a participant can hardly be expected to make an accurate assessment of their desire to work with an imagined person based on simple textual cues (e.g., “she is friendly, cooperative, and is loved by her co-workers at the local animal shelter”) without experiencing this person's personality and behavior “in the flesh.” That said, the additional noise generated by more immersive vignettes may confound the study if participants are biased toward a fictional person based on their name, hair color, or uncanny resemblance to their ex-lover. It is thus important that organizational scholars meticulously design their vignette experiments to balance these features, and take note of the potential benefits and pitfalls that each vignette format adds to their study design.

Limitations and Future Directions

A limitation to consider is that vignette studies, no matter how immersive, can never be objectively compared to field experiments. The primary purpose of vignettes is to create hypothetical scenarios that could occur in real life (or may believably occur in the future; Yam et al., 2021), but that ethical and/or legislative rules (e.g., Neyret et al., 2020), data-access issues (e.g., De Boeck et al., 2018; Garcia-Retamero & López-Zafra, 2006), or feasibility concerns (Cebolla et al., 2019) prevent researchers from studying in a real organizational setting. Consequently, vignette studies do not assess behavioral outcomes, but rather behavioral intentions, cognitions, and/or feelings related to the scenario presented in the vignette (Atzmüller & Steiner, 2010). We can therefore never be entirely certain that the outcome of any vignette study accurately reflects employee behavior within organizations. Instead, we rely on the underlying psychological mechanisms (i.e., presence; Slater, 2018), the enhanced richness (Daft & Lengel, 1986), and the greater realism (Finch, 1987) that VRVVs provide to elicit more ecologically valid responses.

Furthermore, we empirically evaluated employees’ experiences of and reactions to a sensitive and emotionally laden organizational phenomenon. While the advantages of using more immersive vignettes are clear in this context, they may be less noticeable in more “mundane” work settings, where vignette formats may differ less in their ability to induce an intense emotional response. As more organizational scholars develop new studies, or replicate traditional vignette studies, using VR technology, we will gain a better understanding of the benefits and limitations of immersive vignette studies in different organizational contexts. This will help us apply these methods to a wider range of organizational phenomena and identify situations where they are most useful in enhancing our understanding of employee reactions. Similarly, VR research may be the better approach when researchers endeavor to explore the gravity of employee perceptions and/or experiences of an organizational phenomenon at a fictional organization, rather than to test assumptions and/or theory by measuring the relationships between independent and dependent variables. Therefore, based on the work of Chatman and Flynn (2005), VRVVs and video vignettes may be particularly well suited to exploring emerging organizational phenomena, such as the future of work (Yam et al., 2021), that can reliably be presented in a realistic manner to employees using vignettes.

Despite the advantages that VRVVs and video vignettes offer for examining emerging organizational phenomena, these vignettes may be limited by additional confounding variables. Although we recommend that researchers strive for consistency across study manipulations (e.g., the environment, dialogue spoken by actors, camera and object placement), human elements such as occasional slips of the tongue or slight variations in pitch and intonation may inadvertently affect participants’ vignette experiences. Thorough pilot testing with unbiased respondents would enable an accurate assessment of whether the manipulations and overall study ambiance align with the researchers’ intentions. While text-based vignettes afford more control over the consistency of vignette perceptions, computer-generated VR simulations, which hold the virtual environment and the avatars’ animations, voices, and appearances constant, could serve as a viable alternative.

Interactive Virtual Reality

We assessed the effectiveness of VRVVs but did not examine VR simulations employing six degrees of freedom. In these simulations, users can navigate the virtual environment and interact with computer-generated objects and avatars (Hubbard & Villano, 2023). Theoretically, such VR simulations offer a heightened sense of immersion by introducing user agency, the feeling that one controls a virtual body in virtual space that mimics one’s own real movements, which is another important facet of user immersion (Kilteni et al., 2012). The added degrees of freedom contribute to a stronger sense of being an integral, living part of the virtual world, distinguishing the experience from a video in which one assumes the role of an observer. However, the computer-generated graphics that most interactive VR simulations require may diminish users’ sense of realism, consequently reducing participant immersion and affective responses (Newman et al., 2022). Particularly with avatars displaying certain emotions, the uncanny valley effect, a discomfort when trying to distinguish between reality and artificiality, becomes more pronounced (Tinwell et al., 2011), which may confound research outcomes tied to manipulated emotions. Moreover, computer-generated VR simulations demand substantially more resources than VRVVs, including high-grade VR headsets, gaming computers with powerful graphics processing units (GPUs), and potentially tens of thousands of dollars to develop customized research scenarios in the likely event that the required assets are not commercially available.

Despite the inherent technical constraints of computer-generated VR simulations, we strongly advocate for empirical studies that systematically measure the impact of participants’ degrees of freedom on their immersion within simulated organizations. This fell outside the scope of the current investigation because it requires a dedicated research design in which participants navigate the simulated organization themselves, a feature that vignettes cannot provide. Varying the degrees of freedom in computer-generated VR simulations would not only enable a comprehensive exploration of the outcomes associated with the interactive aspects of VR, but also create opportunities to incorporate other technologies, such as artificial intelligence, to enhance user immersion. Notably, Leavitt et al. (2021) propose leveraging electronic confederates (e.g., virtual co-workers) that dynamically respond to participants’ inputs, fostering heightened immersion through agency and active involvement (Lyons et al., 2010). Additionally, exploring olfactory stimulation and haptic feedback, which have proven difficult to implement in most VR simulations thus far, as well as incorporating facial recognition in multiplayer settings with several participants or research confederates (Hubbard & Villano, 2023), could further enhance user immersion and pave the way for future research. As VR technology develops and our understanding of human functioning in simulated organizations deepens, VR simulations with six degrees of freedom may become more commonplace in organizational research, alongside VRVVs.

Conclusion

While VRVVs enable researchers to causally study organizational phenomena that would otherwise be impossible to study through field experiments and longitudinal surveys, they do not entirely supersede text-based and video vignettes. Instead, VRVVs primarily benefit researchers studying employee emotions and affect. While this caveat should not discourage organizational scholars from developing and conducting their own VRVV studies on employee behavior and cognition, we urge researchers to carefully consider whether the benefits VRVVs provide are pivotal to the success and validity of their research findings, and whether these benefits outweigh the resources necessary to realize a VRVV research project from start to finish. We recommend that researchers consider the guidelines illustrated here (see Table 7) and allocate sufficient time to develop videos or computer-generated imagery for a VRVV, as well as the time needed to collect data individually and in person from employees, although panelists with a VR headset may become more commonplace in the near future. To conclude, VRVVs are a powerful tool for organizational scholars seeking to study realistic workplace scenarios, and VR research methodologies should be used in lieu of traditional research methodologies when time and resources permit.

Supplemental Material

sj-docx-1-orm-10.1177_10944281241246770 - Supplemental material for Simulating Virtual Organizations for Research: A Comparative Empirical Evaluation of Text-Based, Video, and Virtual Reality Video Vignettes

Supplemental material, sj-docx-1-orm-10.1177_10944281241246770 for Simulating Virtual Organizations for Research: A Comparative Empirical Evaluation of Text-Based, Video, and Virtual Reality Video Vignettes by Anand P. A. van Zelderen, Theodore C. Masters-Waage, Nicky Dries, Jochen I. Menges and Diana R. Sanchez in Organizational Research Methods

Footnotes

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was funded by an aspirant fellowship grant (1113919N) and a project grant (G074418N) from the FWO–Research Foundation Flanders and by an Internal Funds C1 grant from KU Leuven (C14/17/014), and was generously supported by the Digitalization Initiative of the Zurich Higher Education Institutions and Swiss universities.

Supplemental Material

Supplemental material for this article is available online.

Author Biographies

References
