Abstract

Qualitative meta-studies (QMS) have emerged as a promising methodology for synthesizing qualitative research within organization and management studies. However, despite considerable progress, increasingly fragmented applications of QMS impede the advancement of the methodology. To address this issue, we review and analyze the expanding body of QMS in organization and management studies. We propose a framework that encompasses the core decisions and methodological choices in the formal QMS protocol as well as the reflective—yet often implicit—meta-practices essential for deriving meaningful results from QMS. Based on our analysis, we develop two guidelines to help researchers reflectively align formal methodological choices with the intended purpose of the QMS, which can be either confirmatory or exploratory.

Introduction

In the last decade, scholars have begun to devote attention to qualitative meta-studies (QMS) as a novel and fruitful methodology to generate new insights in organization and management research (Combs et al., 2019; Graebner, 2021; Hoon, 2013; Kunisch et al., 2018; Rousseau et al., 2008). A QMS systematically synthesizes primary qualitative accounts with the aim of refining, extending, and building new theories while preserving much of the primary accounts’ rich insights. QMS has become an influential methodological approach, especially in fields such as nursing and healthcare (e.g., Campbell et al., 2011; Finfgeld-Connett, 2018; Sandelowski & Barroso, 2007), psychotherapy (e.g., Timulak, 2009, 2014), psychology (e.g., Levitt et al., 2018), anthropology (e.g., Noblit, 2018; Noblit & Hare, 1988), and sociology (e.g., Pawson, 2002; Pawson et al., 2004; Zhao, 1991).

In organization and management studies, QMS is still in its infancy. Recently, there has been a significant rise in QMS-related publications, which have introduced various protocols and standards for synthesizing qualitative data (e.g., Berente et al., 2019, 2022; Habersang et al., 2019; Omanović & Langley, 2023; Sarkar & Mateus, 2022). Given the increasing use of QMS in our field, it is critical to understand how it is practiced and how it can be improved. Several authors have developed their own protocols and standards (e.g., Hoon, 2013; Point et al., 2017; Rauch et al., 2014), while others have adopted and refined protocols from other disciplines (e.g., Beigi et al., 2018; Berente et al., 2019; Feuls et al., 2021; Omanović & Langley, 2023). Most of these protocols share similarities regarding what steps should be performed, that is, the search, selection, analysis, and synthesis of primary qualitative accounts (e.g., Hoon, 2013; Noblit & Hare, 1988; Rauch et al., 2014; Sandelowski & Barroso, 2007; Timulak, 2009), but significantly differ in how these steps are performed. Yet, beyond such cross-protocol differences, within-protocol differences are also becoming more prevalent. This is evident as authors interpret the same protocol differently, leading to diverse and occasionally conflicting methodological practices in searching, selecting, analyzing, and synthesizing primary accounts. Hence, the practices associated with conducting QMS have become increasingly fragmented, as authors not only rely on different protocols but also interpret the same protocols differently.

The proliferation of such fragmented and sometimes conflicting practices poses significant challenges in establishing a novel methodology like QMS. When fragmentation encourages authors to mix and match methodological practices without engaging in more profound reflection, the QMS may lead to inadequate results. This not only jeopardizes the credibility of the research findings but also undermines the legitimacy of the methodology. Therefore, it is essential that authors coherently align their methodological choices with the purpose of their study (Pratt et al., 2022). This alignment is not only crucial for enhancing the trustworthiness and rigor of the QMS but also for ensuring meaningful theoretical outcomes (Harley & Cornelissen, 2022). To address the implications of increasing fragmentation in QMS, we suggest that the time is ripe to consolidate diverse protocols and methodological practices-in-use to develop guidelines on how researchers can coherently apply QMS.

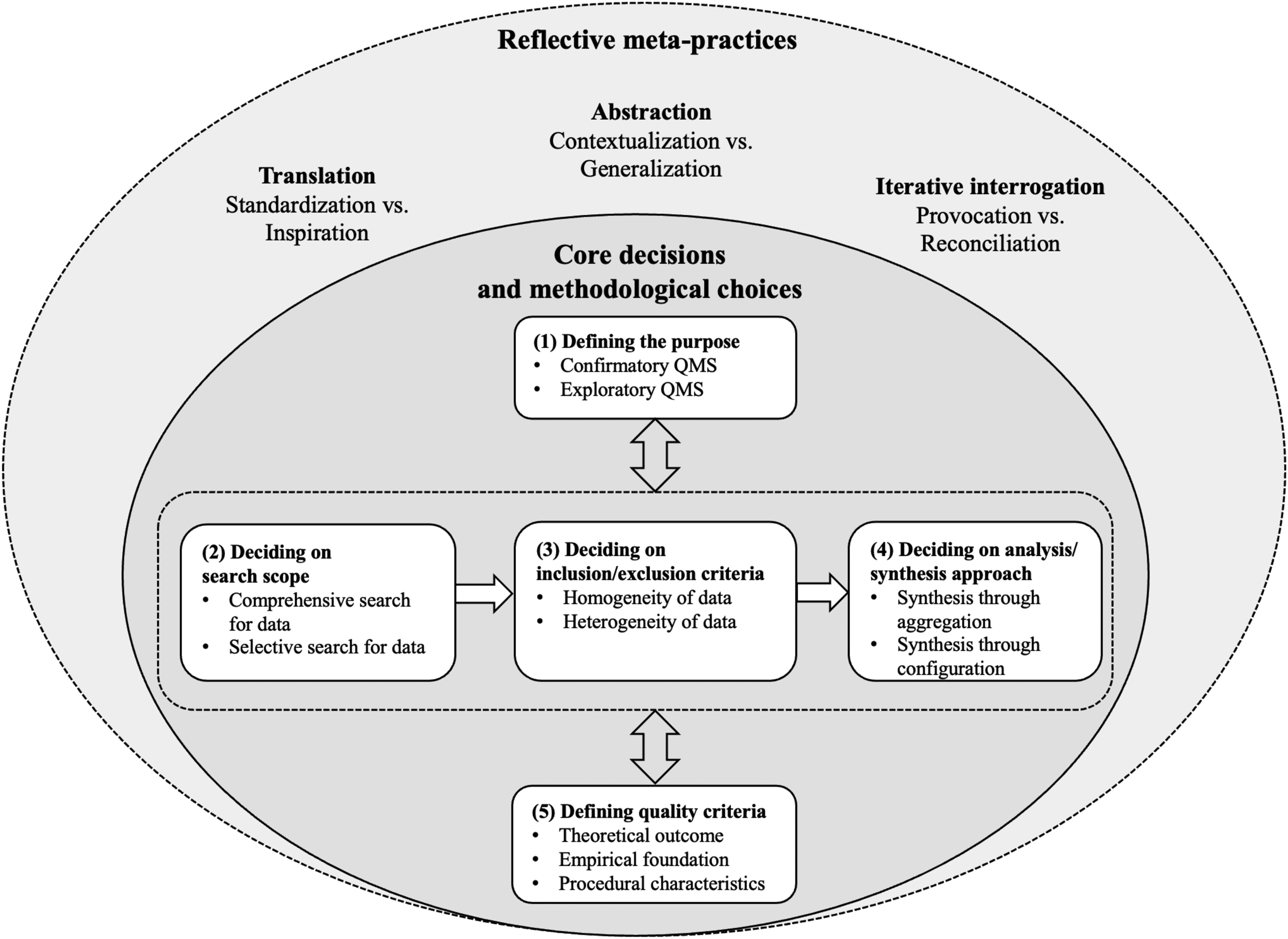

We conducted a comprehensive analysis of published QMS in organization and management studies. Based on our analysis of the practices-in-use, we develop a framework encompassing the core decisions and methodological choices in QMS. Our framework adopts a realist perspective, consistent with the majority of published QMS. It posits the existence of a mind-independent reality while acknowledging that this reality is open to different interpretations and that researchers aim to construct accurate and truthful accounts of it (Bunge, 1996; Reihlen et al., 2022; Rescher, 2023). A key insight from our framework is that QMS can pursue different purposes. The purpose varies based on whether researchers merely summarize existing research (descriptive QMS), test and refine established theory (confirmatory QMS), or build new theory (exploratory QMS). Our analysis also points to two important shortcomings in the current application of QMS. First, we identify a lack of systematic recommendations for aligning different methodological choices with the intended purpose of the QMS. As a result, it is unclear how the purpose of the QMS influences the appropriateness of specific methodological choices in the formal protocol. Second, current QMS practices, often characterized by a linear, step-by-step adherence to established protocols, overlook the necessity for reflective work in the research process. To address these shortcomings, we seek to advance the practice of doing QMS in two ways. First, we introduce three reflective meta-practices that are vital for conducting any QMS, namely (1) translation, (2) abstraction, and (3) iterative interrogation. Second, we formulate two guidelines to assist researchers in reflectively aligning methodological choices with the intended purpose of the QMS.

Qualitative Meta-Studies—Current State and Core Challenges

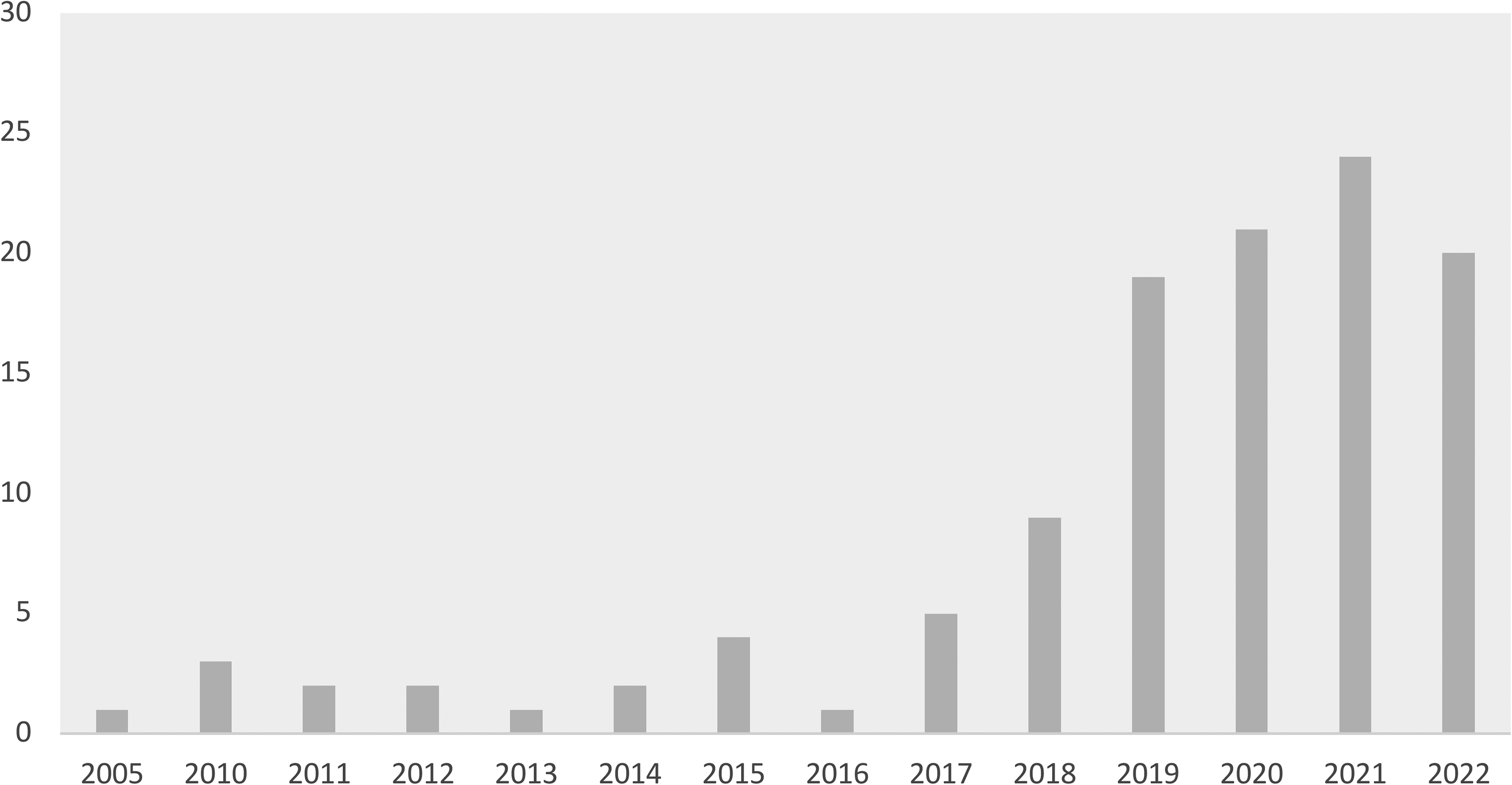

QMS is a methodology to systematically synthesize rich, contextualized qualitative accounts, such as qualitative case studies, to develop a more substantive understanding than that resulting from individual accounts (Paterson et al., 2001; Sandelowski & Barroso, 2007). Synthesis, in general, is defined as the “activity […] where some set of parts is combined or integrated into a whole” (Strike & Posner, 1983, p. 346). QMS brings together the findings on a specific topic with the goal of creating a conceptual output greater than the sum of its parts. In contrast to methods that predominantly use quantitative techniques to integrate qualitative data, such as QCA (Furnari et al., 2021; Greckhamer et al., 2018) or case survey methods (Larsson, 1993), QMS primarily relies on a nonmathematical, interpretive process carried out by researchers. In organization and management studies, authors have referred to QMS as “qualitative meta-analysis” (e.g., Berente et al., 2019; Habersang et al., 2019; Küberling-Jost, 2021), “qualitative meta-synthesis” (e.g., Hoon, 2013; Omanović & Langley, 2023; Sarkar & Mateus, 2022), or just “meta-synthesis” (e.g., Beigi et al., 2018). Over the past few years, interest in QMS has increased steadily, especially after Hoon's seminal methodological contribution in 2013. Figure 1 shows the rising body of research that empirically applies or discusses QMS in organization and management studies.

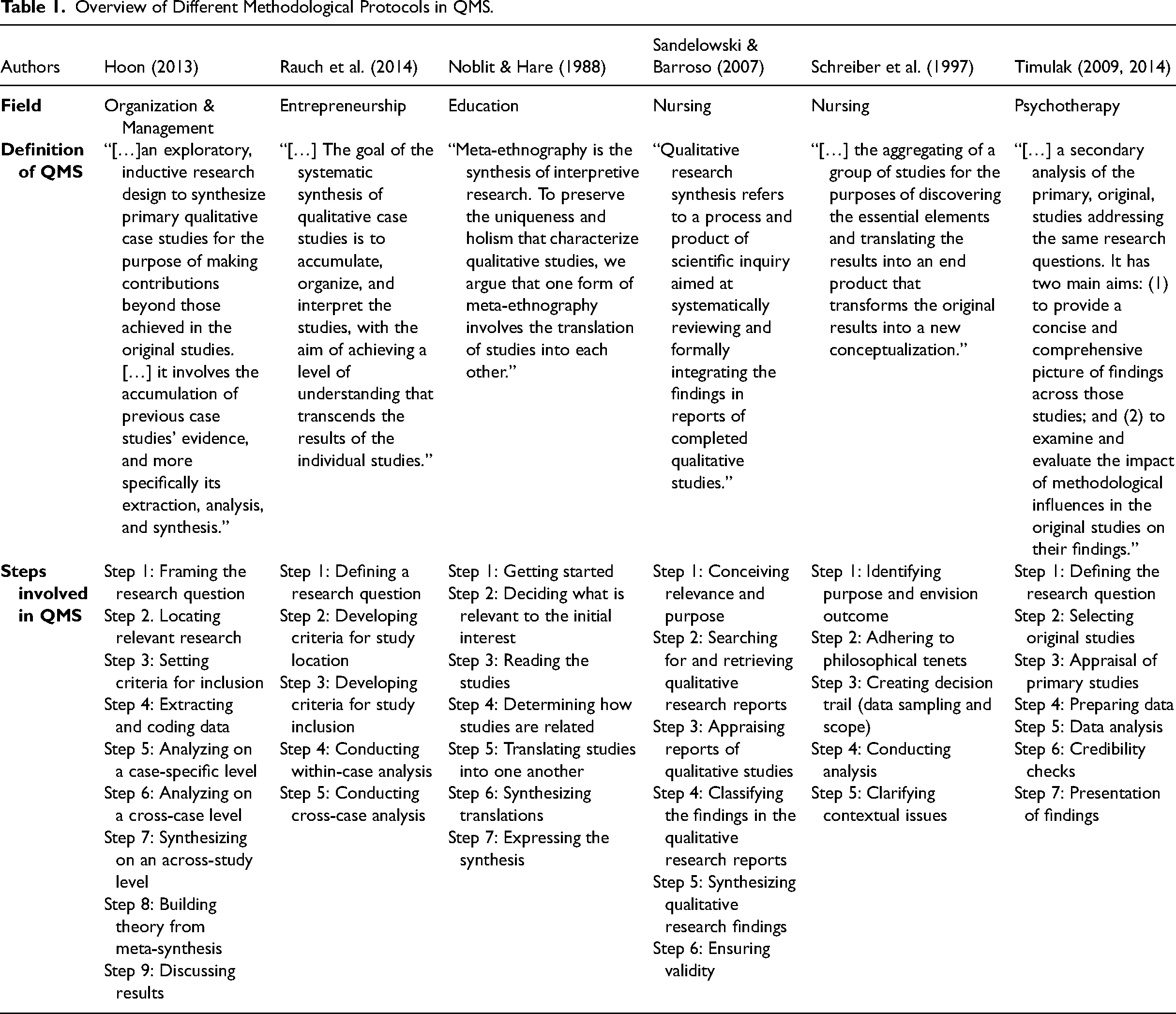

Figure 1. Increasing number of published QMS according to Web of Science*.

However, QMS is still an emerging methodology in the field. As with most emerging methodologies, establishing coherent guidelines and standards takes time (Bluhm et al., 2011; Gephart, 2004). Yet, two significant challenges have arisen with the increasing use of QMS. First, different authors have independently developed distinct protocols, resulting in an increasing fragmentation of approaches in our field (e.g., Hoon, 2013; Rauch et al., 2014; Rousseau et al., 2008). For example, Hoon advocates an interpretive logic of synthesizing qualitative studies. Synthesis refers to the “accumulation of primary evidence with the purpose to generate interpretive explanation” (Hoon, 2013, p. 526). In contrast, Rauch et al. (2014) draw on an aggregative logic. This logic is rooted in an evidence-based approach guided by the purpose of building predictions and generalizing “evidence that was initially contextualized” (Rauch et al., 2014, p. 341). Despite these differences, both protocols are frequently cited together in QMS (e.g., Habersang et al., 2019; Küberling-Jost, 2021; Sarkar & Mateus, 2022), leaving the impression that both protocols follow a similar purpose and synthesis logic. Adding to the confusion, other QMS in organization and management studies draw on entirely different QMS protocols (e.g., Beigi et al., 2018; Berente et al., 2019, 2022; Feuls et al., 2021; Omanović & Langley, 2023). For example, Berente et al. (2019) and Omanović and Langley (2023) follow Noblit and Hare's (1988) protocol from the field of education. Beigi et al. (2018) follow Sandelowski and Barroso's (2007) ideas established in the field of nursing, and Feuls et al. (2021) draw on Timulak's (2009) protocol established in psychotherapy research. Compared to the protocols established by Hoon (2013) and Rauch et al. (2014), these protocols not only contain different steps but also different ideas about how these steps should be performed. Table 1 illustrates different QMS protocols used in organization and management studies.

Table 1. Overview of Different Methodological Protocols in QMS.

Another challenge in developing the methodology is the underlying assumption in the protocols that the research process should unfold linearly and that showing this linearity is considered useful for illustrating procedural rigor. Researchers ideally rely on predefined search, selection, and analysis criteria to enhance the validity and reliability of their QMS (Hoon, 2013; Rauch et al., 2014). Following a protocol can indeed be useful as it provides “a valuable template for how to cope with the requirements evolving from the analysis and synthesis of existing evidence” (Hoon, 2013, p. 528). Hence, templates help structure the research process (Cloutier & Ravasi, 2021) and provide legitimacy in the publishing process (Köhler et al., 2022). However, recent work also points to the difficulties of a rigid template application (Corley et al., 2021; Köhler et al., 2022; Mees-Buss et al., 2022; Pratt et al., 2022). Unreflective template use can limit critical reflection and creativity, which are crucial for navigating specific challenges in the research project (Pratt et al., 2022). In healthcare, for example, there is a growing trend of QMS employing protocols mechanistically, producing syntheses “that lose the vitality, viscerality, and vicariism of the human experiences represented in the original studies” (Sandelowski et al., 1997, p. 366). When researchers overly emphasize procedural rigor, they risk missing the actual goal of QMS, namely enhancing our theoretical understanding, rather than producing “findings comprised of thematic similarities” (Thorne, 2017, p. 1). Hence, prioritizing procedural rigor over tailoring protocols to specific research needs may lead to an “explosion of technical reports” (Thorne, 2017, p. 4) that erodes the reflective analysis and synthesis of primary qualitative accounts.

Taking these insights together, we argue that the development of QMS in organization and management studies is currently at a crossroads. On the one hand, we see an increasing fragmentation of how researchers conduct their QMS. This results in the existence of competing standards and a multitude of, at times, conflicting methodological practices-in-use. On the other hand, many QMS are characterized by a strong emphasis on procedural rigor, which often undermines critical thinking and creativity in the research process. We propose that understanding QMS not merely as a linear execution of a protocol but as an iterative process where researchers thoughtfully adjust the protocol to suit their study's goal is crucial for unleashing the method's full potential. In the next step, we analyze a body of published QMS to compile a detailed overview of methodological practices-in-use and their translation into different methodological choices. Following this analysis, we formulate two guidelines demonstrating how researchers can implement diverse methodological choices reflectively.

Method—Analysis of Published QMS

We conducted a search of all published QMS in the Web of Science (WoS) database until August 2023 to examine the practices-in-use. We used the search terms “qualitative meta-study” OR “qualitative meta-analysis” OR “qualitative meta-synthesis” OR “meta-synthesis” OR “metasynthesis”. We did not set a specific time frame. This initial search resulted in 3,387 articles. We further refined our search using the WoS categories “business” and “management” to ground our analysis in the broader management and business discipline. This resulted in 123 articles published between 2005 and August 2023. We then read each QMS and excluded false positive results (n = 51), such as (1) articles using a mixed-method approach that includes QMS as one of several methods, (2) systematic literature reviews that use the term QMS, (3) conceptual and methodological articles discussing but not applying QMS, (4) articles using other methods, such as quantitative meta-analysis or interviews, and (5) QMS published in other disciplines, such as healthcare, nursing, tourism, or sport (management) journals. This step yielded a set of 72 articles. We then excluded articles published in nonranked outlets or articles published in journals ranked lower than two according to the Academic Journal Guide 2021 (n = 40) to ensure a minimum of methodological standards. This resulted in a set of 32 articles. After this step, we manually undertook a forward and backward search from each article to find additional QMS that our initial search query did not identify. We identified three additional relevant articles. Finally, we read each of the 35 articles in depth to verify that they provide sufficient methodological detail to understand how authors performed their QMS. Based on this reading, we excluded a further nine articles due to a lack of information on how authors conducted their QMS, that is, insufficient description of data collection and/or data analysis (n = 8) or because the QMS did not contain a method section at all (n = 1). The Supplemental online material Appendix I offers a detailed list of all articles included in our search and their inclusion and exclusion based on the abovementioned criteria. Overall, our sample includes 26 published QMS.
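The screening steps described above can be retraced as simple arithmetic. The following Python sketch is only an illustrative consistency check of the counts reported in the text; it starts from the 123 articles that remained after the WoS category refinement, and the step labels and the helper name `run_funnel` are hypothetical shorthand rather than part of any published protocol.

```python
# Illustrative consistency check of the reported screening counts.
# Step labels and the helper name are hypothetical shorthand.

def run_funnel(start, steps):
    """Apply signed adjustments in order and return the running totals."""
    totals = []
    current = start
    for label, delta in steps:
        current += delta
        totals.append((label, current))
    return totals

steps = [
    ("exclude false positives", -51),
    ("exclude nonranked or low-ranked outlets", -40),
    ("add forward/backward search hits", +3),
    ("exclude insufficient method detail", -(8 + 1)),
]

totals = run_funnel(123, steps)
for label, remaining in totals:
    print(f"{label}: {remaining}")

final_sample = totals[-1][1]
```

Under these assumptions, the running totals reproduce the intermediate sets of 72, 32, and 35 articles and the final sample of 26 QMS.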

Next, we analyzed the selected articles individually and then on a comparative level. Our data analysis aimed to code the practices researchers reported in their QMS and find patterns across QMS and protocols. We started our coding with the articles published first in our dataset: the articles by Hoon (2013) and Rauch et al. (2014). Since both articles offer comprehensive QMS protocols, the coding provided detailed insights into the methodological nature of the QMS, the basic steps in a QMS, and the rationale for making specific decisions within each step. The articles served as valuable sources for developing a preliminary coding scheme on the core decisions involved in QMS. This coding scheme was continuously refined and extended as our data analysis proceeded. We retained the sequential analysis based on publication year to see if an article drew on one of the seminal publications by Hoon (2013) and Rauch et al. (2014) or if the authors used a different protocol from another field. Indeed, more than half of the studies in our sample (17 out of 26) primarily drew on the protocols developed by Hoon (2013) and/or Rauch et al. (2014). The remaining articles primarily referred to protocols from education (Noblit & Hare, 1988) and healthcare and nursing (Sandelowski & Barroso, 2007; Schreiber et al., 1997; Timulak, 2009). We read and compared all protocols to understand the purpose, the philosophical underpinnings, the core logic of the synthesis, and the main steps and options suggested.

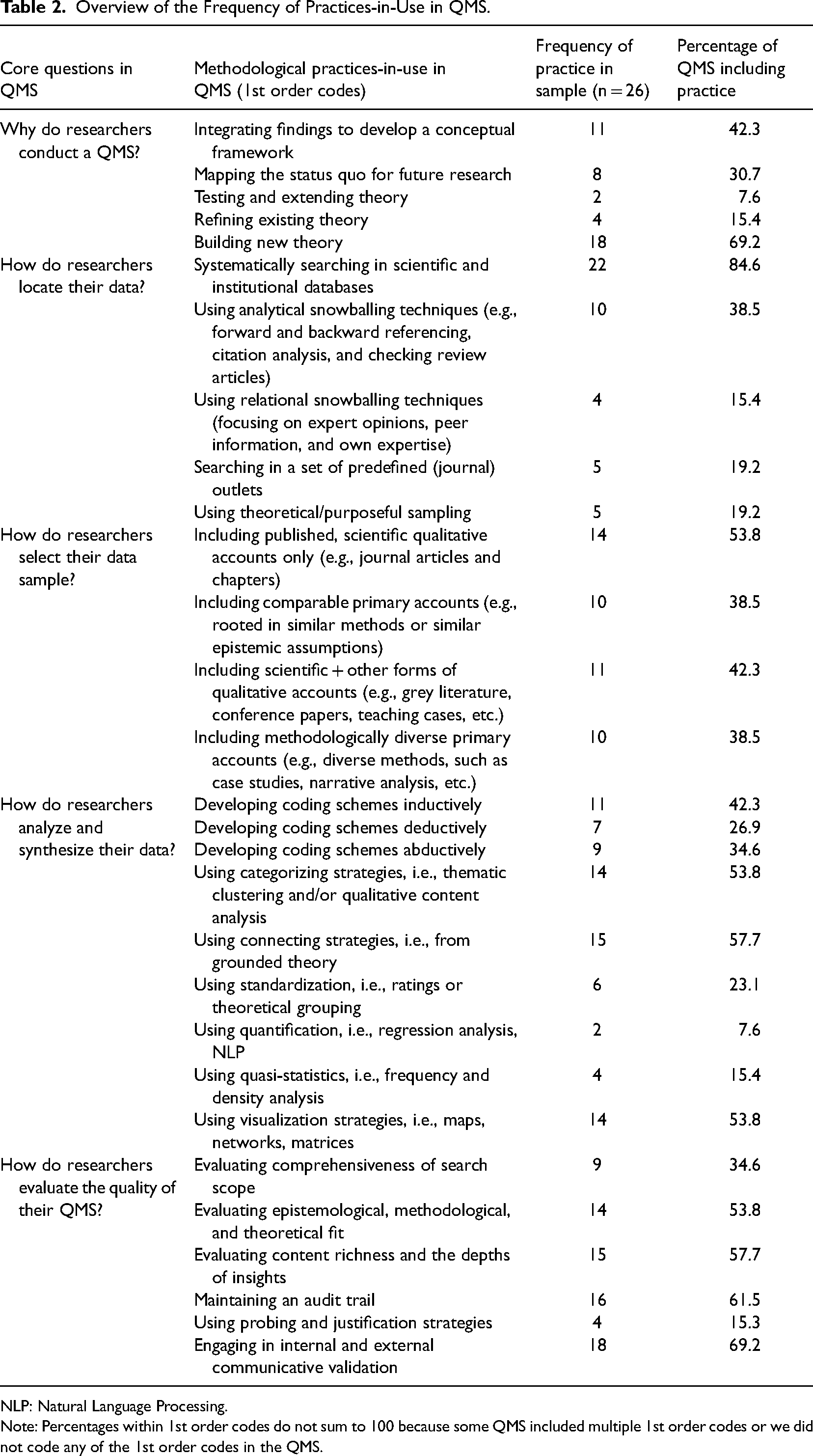

We then focused our analysis on the main steps and methodological practices researchers reported in their QMS. Our coding revealed several divergent methodological practices related to (1) why researchers conduct their QMS, (2) how researchers locate their data, (3) how researchers select their data, (4) how researchers analyze and synthesize their data, and (5) how researchers evaluate the quality of their QMS. Table 2 provides an overview of the frequency of the different practices we coded across our sample. Our results indicate that authors used various—even contradictory—practices. For example, some authors only included scientific peer-reviewed studies, while others used diverse and non-peer-reviewed qualitative accounts such as book chapters, conference papers, or teaching cases. We systematically compared these practices in a comparative analysis to identify similarities and differences within and across protocols. In this step, we not only identified differences in how researchers conducted their QMS across research protocols but also among those who claimed to have used the same protocol (see Supplemental online material Appendix II). We concluded that referencing a specific protocol did not result in applying similar practices in QMS.

Table 2. Overview of the Frequency of Practices-in-Use in QMS.

NLP: Natural Language Processing.

Note: Percentages within 1st order codes do not sum to 100 because some QMS were assigned multiple 1st order codes and others were assigned none.

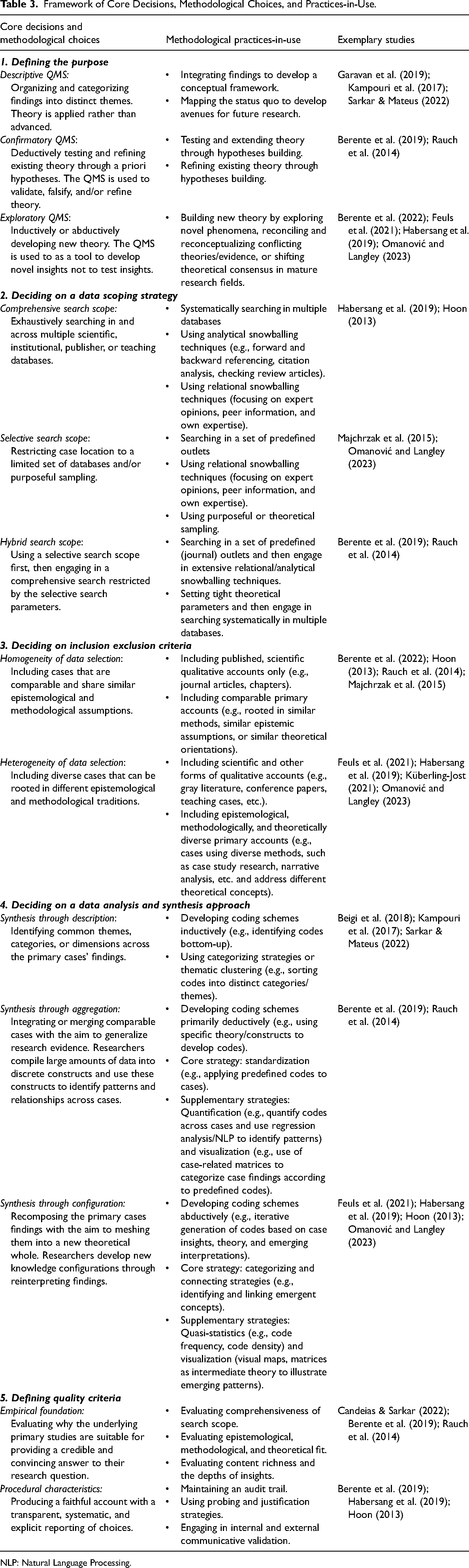

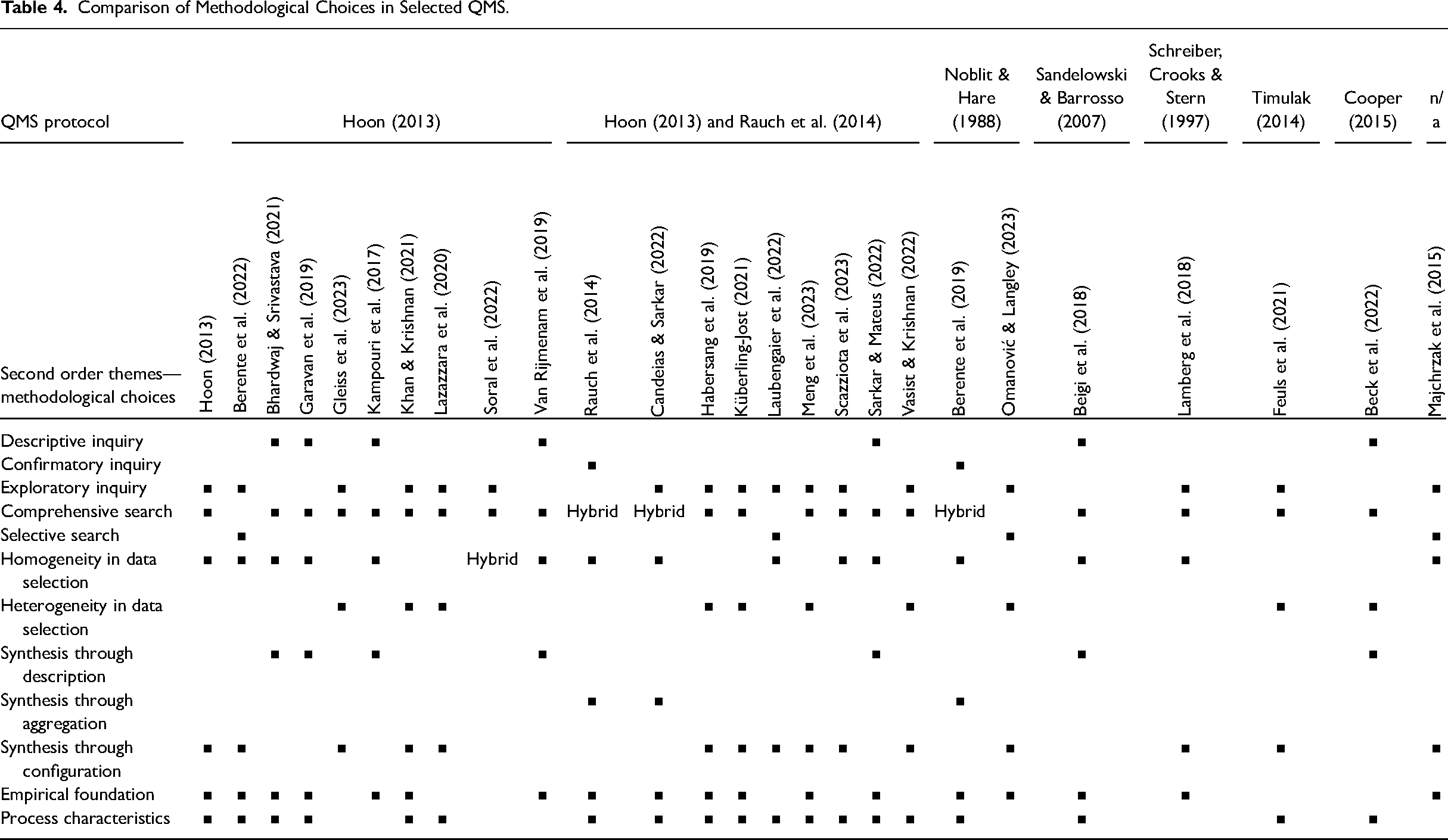

Next, we compared and clustered practices into distinct methodological choices. Methodological choices represent clusters of similar practices-in-use. In our analysis, these choices represent distinct options in the meta-analytical process. For example, a “comprehensive search for data” and a “selective search for data” represent two opposite methodological choices when deciding on the data search strategy. Similarly, “homogeneity in data selection” and “heterogeneity in data selection” are distinct methodological choices when setting inclusion and exclusion criteria. Overall, we identified 12 different methodological choices related to five core decisions in QMS: (1) defining the purpose, (2) deciding on a data search strategy, (3) deciding on inclusion/exclusion criteria, (4) deciding on an analysis and synthesis approach, and (5) defining quality criteria. Table 3 provides a framework that summarizes the core decisions and methodological choices based on the practices-in-use identified in our sample. Table 4 illustrates our interpretation of researchers’ methodological choices in their QMS. Additionally, our Supplemental online material Appendix III provides a detailed list of illustrative quotes for each practice-in-use from our sample. In the following, we first present the descriptive findings of our analysis, focusing on the core decisions and methodological choices based on the practices-in-use. Afterwards, we move beyond the formal QMS protocol, formulating our prescriptive insights into the informal dimensions of QMS. We integrate both sets of findings into two guidelines for reflectively conducting QMS.
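The structure of the framework can be sketched as a simple mapping from core decisions to methodological choices. The Python snippet below is a hypothetical encoding, not a reproduction of Table 3: it assumes that the 12 choices are the ones named in the headings of the descriptive findings, and it treats hybrid approaches as combinations of choices rather than as choices of their own.

```python
# Hypothetical encoding of the five core decisions and their choices,
# using the labels from the subsection headings (an assumption; Table 3
# itself is not reproduced here).
FRAMEWORK = {
    "purpose": ["descriptive", "confirmatory", "exploratory"],
    "data search strategy": ["comprehensive", "selective"],
    "inclusion/exclusion criteria": ["homogeneity", "heterogeneity"],
    "analysis and synthesis approach": [
        "description",
        "aggregation",
        "configuration",
    ],
    "quality criteria": ["empirical foundation", "procedural characteristics"],
}

# Count decisions and choices to compare against the totals stated in the text.
n_decisions = len(FRAMEWORK)
n_choices = sum(len(choices) for choices in FRAMEWORK.values())
print(n_decisions, n_choices)
```

Read this way, the five decisions carry three, two, two, three, and two choices, which matches the stated total of 12.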

Table 3. Framework of Core Decisions, Methodological Choices, and Practices-in-Use.

NLP: Natural Language Processing.

Table 4. Comparison of Methodological Choices in Selected QMS.

Descriptive Findings: Core Decisions and Methodological Choices in QMS

Defining the Purpose: Descriptive vs. Confirmatory vs. Exploratory QMS

The first major difference in conducting QMS is the purpose researchers pursue in their QMS. We identified three different purposes and, thus, types of QMS in our analysis: descriptive (n = 7), confirmatory (n = 2), and exploratory (n = 17) QMS (see Table 4). QMS following a descriptive purpose organize and categorize previous findings (e.g., Garavan et al., 2019) into “key themes that are likely to set the stage for future work” (Kampouri et al., 2017, p. 367). The aim is to map the status quo by illustrating “theoretical approaches, key findings and concepts” and to consolidate existing findings into a comprehensive framework that “contributes towards the consolidation of the […] literature” (Sarkar & Mateus, 2022, p. 1). Authors descriptively report evidence on a concept or phenomenon rather than seeking to advance theory in their field of research (see also Colquitt & Zapata-Phelan, 2007). Yet, we contend that descriptive QMS often fail to harness the potential of QMS fully. First, the outcomes, that is, conceptual frameworks and mappings of a field, are similar to those of systematic literature reviews. Because QMS are based on the synthesis of qualitative accounts only, descriptive QMS may offer an even less comprehensive picture than systematic literature reviews, which capitalize on the entire (conceptual as well as qualitative and quantitative) body of research in a field (Rousseau et al., 2008). Second, a description should be an intermediate step of any QMS, but not the result. The strength of QMS is that researchers re-analyze the rich, contextualized insights of primary accounts and lift those insights to a new theoretical level (Hoon, 2013). We therefore argue that two other types of QMS, namely confirmatory and exploratory QMS, offer greater potential in our research field.

Confirmatory and exploratory QMS specifically focus on theory advancement. QMS following a confirmatory purpose seek to test and refine existing theory. Researchers introduce new distinctions, boundary conditions, or situational constraints that increase the predictive power of a theory (Rauch et al., 2014). A decisive feature of confirmatory QMS is that researchers deductively develop a priori hypotheses (e.g., Rauch et al., 2014) or conjectures (e.g., Berente et al., 2019) to test and/or refine the relationship between variables or constructs of interest. Authors hypothesize possible relationships based on prior theory and then use the QMS to validate, falsify, and/or refine these relationships. In contrast, authors following an exploratory purpose aim to build a new theory. Researchers use the QMS to inductively or abductively investigate novel relationships and concepts that contribute to new theory development (e.g., Feuls et al., 2021; Habersang et al., 2019; Küberling-Jost, 2021). New theory development is driven by a broader interest in a topic or research field, in which researchers study novel or underexplored phenomena (e.g., Omanović & Langley, 2023), reconcile and integrate conflicting theories (e.g., Habersang et al., 2019), or shift theoretical consensus in mature research fields (e.g., Hoon, 2013).

Deciding on the Data Search Strategy: Comprehensive vs. Selective Search Scope

A second difference lies between researchers who engage in a comprehensive search strategy (n = 19), a selective search strategy (n = 4), and a hybrid approach (n = 3) (see Table 4). Authors who draw on a comprehensive search strategy identify qualitative accounts across various electronic databases using a broad range of keywords. These authors use various scientific databases to identify published and peer-reviewed qualitative research (e.g., Beigi et al., 2018; Habersang et al., 2019; Hoon, 2013; Omanović & Langley, 2023) and rely on Google Scholar to locate book chapters (e.g., Garavan et al., 2019), conference proceedings (e.g., Bhardwaj & Srivastava, 2021), or dissertations and working papers (e.g., Feuls et al., 2021). Similarly, they use publisher databases (e.g., Garavan et al., 2019; Kampouri et al., 2017), case databases (e.g., Rauch et al., 2014), or teaching case databases (e.g., Habersang et al., 2019; Omanović & Langley, 2023) to complement their search strategy for cases. A comprehensive search is often supplemented by extensive snowballing techniques, such as reference chasing (backward search) and citation screening (forward search) (e.g., Habersang et al., 2019) or scanning review articles (e.g., Hoon, 2013). In contrast, authors favoring a selective search strategy restrict their case location to a limited set of data sources and/or purposeful sampling. Those authors focus their search on publications in predefined journals (e.g., Majchrzak et al., 2015) or rely on purposeful sampling procedures (e.g., Omanović & Langley, 2023). In our sample, some authors also use a hybrid approach. These authors engage in a selective search first (e.g., searching in specific predefined journals) and then broaden their search through extensive snowballing procedures (e.g., Berente et al., 2019, 2022).

Deciding on Inclusion/Exclusion Criteria: Homogeneity vs. Heterogeneity in Data Selection

A third difference arises from deciding what kind of data researchers include or exclude in their QMS sample. Some authors in our sample advocate for homogeneity and comparability of studies (n = 15), while others favor heterogeneity and diversity in primary studies (n = 10) or a hybrid approach (n = 1) (see Table 4). This divide becomes evident on two dimensions: (1) the degree to which researchers include scientific versus other types of primary qualitative accounts and (2) the degree to which researchers include epistemologically and methodologically diverse versus comparable primary accounts. The first dimension relates to how “scientific” the underlying primary accounts should be to qualify for inclusion. Multiple authors argue for including scientific accounts only, that is, including only published and peer-reviewed articles (e.g., Berente et al., 2019, 2022; Kampouri et al., 2017; Sarkar & Mateus, 2022). However, others have been less strict and included scientific but non-peer-reviewed cases published as conference proceedings, working papers, or book chapters in their QMS (e.g., Bhardwaj & Srivastava, 2021; Feuls et al., 2021; Hoon, 2013). Others even included teaching cases (e.g., Habersang et al., 2019; Omanović & Langley, 2023), newspaper cases (e.g., Gleiss et al., 2023), or cases published by established institutions, such as the ILO or World Bank (e.g., Rauch et al., 2014).

The second dimension relates to how comparable the primary accounts should be in their epistemological, methodological, and theoretical approach. Primary qualitative accounts can be based on different methodologies, that is, case studies, grounded theory, ethnography, phenomenology, discourse analysis, etc. In addition, teaching or institutional case studies often do not express any methodology or entail only abridged data collection and method sections. Many authors argue in favor of epistemological, methodological, and/or theoretical comparability between the primary accounts (e.g., Beigi et al., 2018; Berente et al., 2019; Hoon, 2013; Rauch et al., 2014). For example, cases based on methodological principles advocated by Yin (2013) or Eisenhardt (1989) share similar assumptions about the nature of reality and standards for collecting and analyzing data. In contrast, other authors include diverse primary accounts and argue that synthesizing different types of qualitative accounts helps to triangulate findings (Rauch et al., 2014), develop a more comprehensive understanding of the phenomenon under study (Habersang et al., 2019; Küberling-Jost, 2021), or stimulate reflexivity because “different qualitative traditions and data sources can enrich the pool of possible insights” (Omanović & Langley, 2023, p. 5).

Deciding on the Data Analysis and Synthesis Approach: Description vs. Aggregation vs. Configuration

A fourth difference in conducting QMS relates to researchers’ analytical strategies to synthesize the cases. In our sample, we distinguish between synthesis through description (n = 7), synthesis through aggregation (n = 3), and/or synthesis through configuration (n = 16) (see Table 4). Authors who use synthesis through description identify common themes, categories, or dimensions across the primary studies’ findings. Authors mainly use an “inductive and open coding strategy […]” which involves “breaking down, examining, comparing, conceptualizing, and categorizing data” (Sarkar & Mateus, 2022, p. 4 citing Strauss & Corbin, 1998). As the primary analytical strategy, authors use categorizing strategies from grounded theory (Strauss & Corbin, 1998) to identify common themes (Kampouri et al., 2017) and to cluster similarities and differences across studies (Sarkar & Mateus, 2022). Authors using synthesis through description stick close to the primary cases’ empirical findings and focus on mapping findings through thematic clustering.

In contrast, authors who use synthesis through aggregation merge cases with the aim of homogenizing and generalizing research evidence (Rauch et al., 2014). The aggregation metaphor captures the idea that “knowledge progresses steadily upward, as one brick of knowledge is placed upon another […] building higher and higher on tested foundations” (Pratt et al., 2020, p. 4). In their analysis, authors draw on standardization as the primary analytical strategy to compile large amounts of qualitative data into discrete constructs to identify patterns and relationships across cases (Rauch et al., 2014). Authors develop unified “coding rules and codes, which are often developed in a deductive way and rely on existing theories” (Rauch et al., 2014, p. 341). These deductively developed coding schemes help authors operationalize each case's findings into standardized constructs to compare the findings from one case to another (Berente et al., 2019). In the synthesis, authors use additional comparative strategies, such as quantification and visualization. In quantification, authors perform additional correlation analyses to examine the constructs’ relationships (Rauch et al., 2014). Authors who draw on visualization, in contrast, use graphical representations “to examine the relationships grounded in different contexts” (Candeias & Sarkar, 2022, p. 8) or case-related matrices to contrast findings and identify patterns (Berente et al., 2019). Authors who wish to reduce the complexity inherent in qualitative data use the synthesis through aggregation approach by compiling rich insights into standardized constructs.

Authors drawing on a synthesis through configuration approach mesh rather than merge the primary cases’ findings into a new theoretical whole. The configuration metaphor captures the idea of a “mosaic, big picture, more-than-the-sum-of-parts, and novel-whole view of the way knowledge advances” (Sandelowski et al., 2012, p. 10). A decisive feature of synthesis through configuration is that authors follow an abductive, iterative process. They are “taking stock of existing theories […] as potential lenses for interpreting data; performing subsequent abstraction from the case study raw data by coding, categorizing, and linking categories to emerging themes; and reflecting them with existing theories” (Habersang et al., 2019, p. 7). The analysis and synthesis cannot be separated, as they are inherently intertwined within an iterative process in which researchers become increasingly informed by the case data, existing literature, and emerging interpretations. Authors following this type of synthesis draw on diverse analytical strategies, such as categorizing and connecting, as well as visualization and quasi-statistics. Categorizing and connecting strategies borrowed from grounded theory assist authors in identifying themes and concepts across cases and connecting those into analytical narratives (e.g., Omanović & Langley, 2023), typologies (e.g., Berente et al., 2022), temporal sequences (e.g., Habersang et al., 2019; Küberling-Jost, 2021), or causal relationships (e.g., Hoon, 2013). Visualization strategies, for example, drawing causal maps (e.g., Hoon, 2013) or matrices (e.g., Feuls et al., 2021), support authors’ sensemaking when developing links between patterns across cases. Quasi-statistics such as frequency and density analysis (e.g., Habersang et al., 2019) aid authors in validating their findings. Authors using synthesis through configuration tend to abstract from the primary cases’ empirical findings as they aim to compose the findings into a new theoretical whole (Omanović & Langley, 2023).
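As a rough illustration of the quasi-statistics mentioned above, the following sketch counts how often each theme was coded and in what share of cases it appears. The theme labels and case identifiers are hypothetical. The source does not define "density," so the share-of-cases measure is used here only as an assumed stand-in and not as the procedure of Habersang et al. (2019).

```python
from collections import Counter

# Hypothetical theme codes assigned to each primary case during analysis.
CASE_THEMES = {
    "case_1": ["theme_a", "theme_b", "theme_a"],
    "case_2": ["theme_b"],
    "case_3": ["theme_a", "theme_c"],
    "case_4": ["theme_c", "theme_b"],
}


def theme_frequency(case_themes):
    """Count every coded occurrence of each theme across all cases."""
    return Counter(
        theme for themes in case_themes.values() for theme in themes
    )


def theme_case_share(case_themes):
    """Share of cases in which each theme appears at least once."""
    n_cases = len(case_themes)
    cases_with_theme = Counter(
        theme for themes in case_themes.values() for theme in set(themes)
    )
    return {theme: count / n_cases for theme, count in cases_with_theme.items()}
```

A theme that is coded often but only in one case would show a high frequency and a low case share, which may prompt researchers to check whether a pattern really holds across cases.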

Defining Quality Criteria: Empirical Foundation vs. Procedural Characteristics

A key distinction in QMS involves how authors demonstrate the quality of their research. Authors do this either by discussing the search and selection of their empirical foundation (n = 18) and/or by discussing the procedural characteristics of the data analysis and synthesis (n = 19) (see Table 4). Authors discussing the empirical foundation justify why the underlying primary studies are suitable for providing a credible and convincing answer to their research question. Typically, authors evaluate the comprehensiveness of the search scope as a proxy for quality (Habersang et al., 2019; Rauch et al., 2014), the degree of epistemological, methodological, and theoretical fit among the primary accounts (Berente et al., 2019; Majchrzak et al., 2015; Rauch et al., 2014), and/or the content richness and depth of insight of the underlying cases (Feuls et al., 2021; Habersang et al., 2019).

Authors emphasizing procedural characteristics justify how they conducted their QMS by producing a faithful account with a “transparent, systematic, and explicit reporting of […] choices” (Hoon, 2013, p. 543). Authors maintain an audit trail by documenting the procedural and interpretive moves made during the study to enhance the transparency and comprehensibility of the QMS (e.g., Hoon, 2013; Rauch et al., 2014). They also use probing and justification strategies, that is, triangulation processes (e.g., Habersang et al., 2019), checking the meaning of outliers (e.g., Rauch et al., 2014), or searching for contrary evidence (e.g., Berente et al., 2019). Finally, authors also engage in internal and external communicative validation through intersubjective comprehensibility checks among co-authors (e.g., Habersang et al., 2019) or by involving experts from the field, such as other researchers or practitioners (e.g., Berente et al., 2019; Rauch et al., 2014). While Table 4 suggests that most authors are indeed concerned with the empirical and procedural quality of their QMS, on closer inspection, most authors address only some aspects of quality, which leads to inconsistent reporting of quality criteria across studies (see Supplemental online material Appendix II).

Summary of Findings and Shortcomings in Existing QMS

Our empirical analysis of published QMS provides two important insights. Firstly, we present a comprehensive overview of the practices-in-use, the methodological choices, and the core decisions in QMS. Our analysis empirically shows that researchers employ different, sometimes conflicting, methodological practices and choices, even though their QMS is based on the same protocol. Secondly, our analysis highlights that QMS can be categorized into different purposes: descriptive, confirmatory, and exploratory. The purpose determines the type of inquiry researchers derive from their QMS. Categorizing QMS based on the purpose offers a significant yet overlooked distinction, which may help explain the researchers’ contradictory methodological practices and choices. However, these insights also highlight two important shortcomings in the current application of QMS. Firstly, there is a lack of systematic recommendations that effectively align different methodological choices with the intended purpose of the QMS. Despite researchers providing descriptions of the methodological choices employed in the formal protocol, it is unclear how the purpose of the study impacts these choices. As a result, we lack an understanding of how the purpose of the QMS influences the appropriateness of specific methodological choices in the formal protocol. Secondly, there is a notable scarcity of information regarding researchers’ reflective practices when making methodological choices. Existing QMS are convincing at following a formal step-by-step protocol but do not discuss the reflective practices that occur outside this formal protocol.

We therefore aim to advance QMS in two ways. Firstly, we enrich existing protocols by delving into the reflective and often informal aspects of conducting QMS. More specifically, we introduce three reflective meta-practices: (1) translation, (2) abstraction, and (3) iterative interrogation. We understand reflective meta-practices as the deliberate and critical actions researchers undertake when conducting QMS. These practices are not strictly governed by formal rules. Instead, they are shaped by the researchers’ specific preferences and the QMS's purpose. By explicitly highlighting reflective meta-practices, we demonstrate how researchers reflectively weigh diverse and sometimes conflicting methodological choices within the “formal” QMS protocol. Secondly, we show how researchers can employ these reflective meta-practices in their QMS. We develop two guidelines that align methodological choices and reflective meta-practices in confirmatory and exploratory QMS. Figure 2 summarizes our general framework for conducting QMS.

Figure 2. Framework for conducting QMS.

Prescriptive Findings: Advancing QMS in Organization and Management Research

Introducing Reflective Meta-Practices in QMS

Standardization involves harmonizing the case sample and unifying parts of the analysis and synthesis. It reduces complexity by applying templates to increase the comparability of cases. For example, researchers can use standardized deductive codes or comparative tables to detect and develop homologies between the cases. Inspiration, in contrast, signifies the artful and imaginative interpretation of the original cases throughout the research process. It involves the application of creative techniques such as “disciplined imagination” (Weick, 1989) and “conceptual leaping” (Klag & Langley, 2013) to transform and reinterpret the cases in the light of the other cases. While standardization aids researchers in ensuring greater reliability and reproducibility, inspiration aims at cultivating a creative and discretionary research approach.

Contextualization conserves the meaning and contexts of the underlying cases by providing sufficient information about the setting of the original cases. This way, researchers preserve as much meaning from the cases as possible. For example, researchers can use power quotes to infuse the QMS with contextual evidence from the cases (e.g., Feuls et al., 2021) or develop comprehensive summary tables to provide an overview of the specific sociohistorical context of each case (e.g., Omanović & Langley, 2023). Generalization, in contrast, involves theorizing about higher-order categories and their interrelations, including contextual conditions. Researchers abstract from each case's idiographic setting of time, place, and people by developing higher-level concepts that can be found across cases (Glaser, 2002). By moving between contextualization and generalization, researchers can, for example, develop a theory's boundary conditions or situational constraints. Generalization aids researchers in developing meaningful concepts and categories that hold across cases, while contextualization ensures that researchers include sufficient details on the specifics of each case in the QMS to avoid inaccurate conclusions from ignoring important contextual variations.

Provocation involves deliberately engaging in repeated cycles of critical examination and exploration. It is necessary, especially when researchers revise previous search, selection, and analysis decisions in light of new insights from contradictory case findings, different theoretical perspectives, and/or divergent interpretations. Provocation prolongs the research process as it stimulates critical thinking, encourages the exploration of alternative explanations, and disrupts entrenched thought patterns among team members. Reconciliation, in contrast, is the process of resolving discrepancies that arise from provocation. Reconciliation is vital for ensuring the continual progression of the research endeavor and ultimately concluding it by closing iterative cycles between different steps in the QMS. Through reconciliation, researchers form a coherent rhetoric about the research journey and the outcomes of the QMS. Hence, reconciliation ensures that the research process moves toward consolidation, while provocation ensures an ongoing critical engagement.

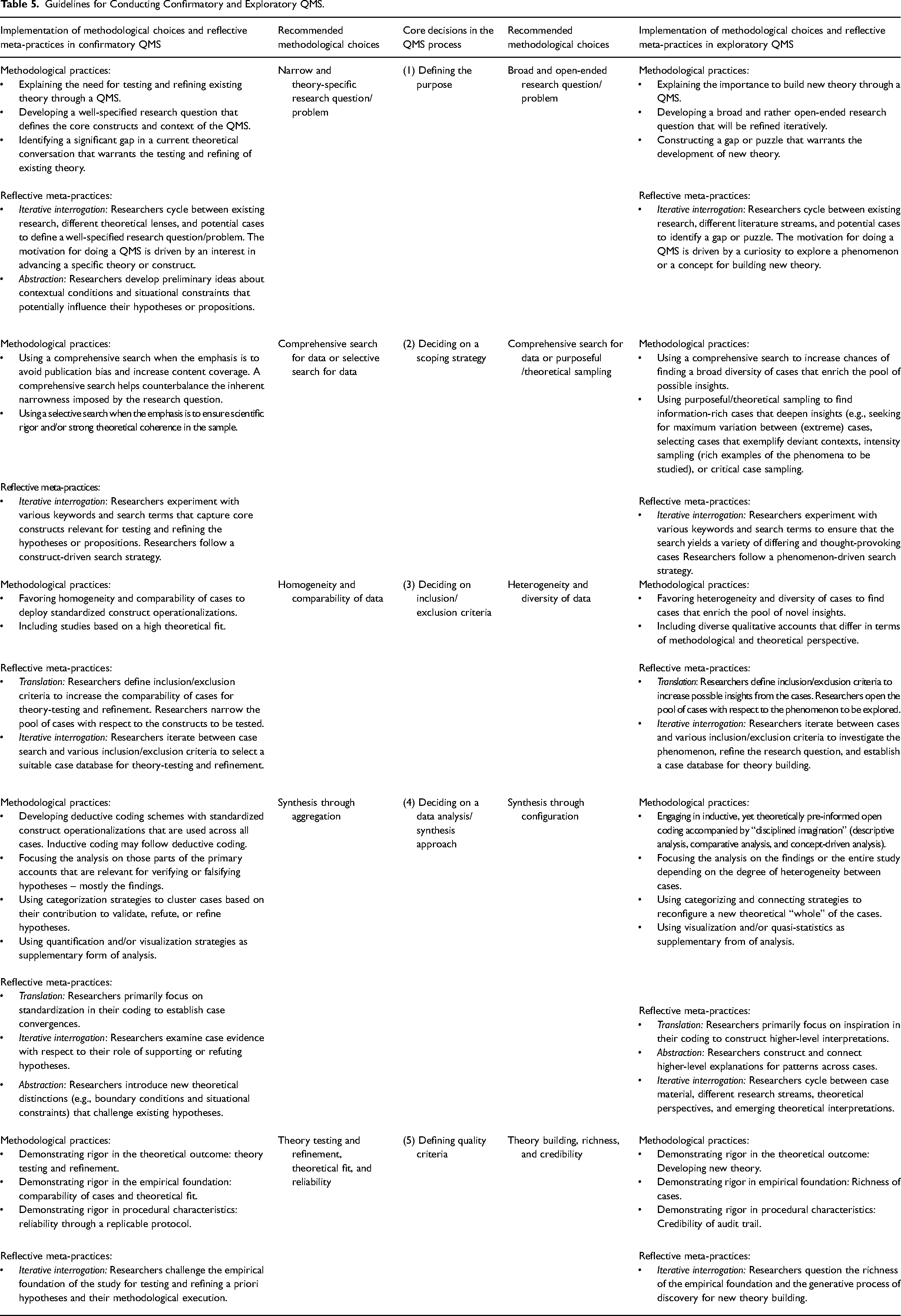

We propose to employ all three reflective meta-practices—translation, abstraction, and iterative interrogation—when conducting QMS. In the subsequent section, we demonstrate how researchers can incorporate these reflective meta-practices into their methodological choices for both confirmatory and exploratory QMS (see Table 5).

Table 5. Guidelines for Conducting Confirmatory and Exploratory QMS.

Guidelines for Reflectively Conducting Confirmatory QMS

However, excessive reliance on identifying theoretical gaps in the literature can inadvertently create blind spots, naturalizing one specific perspective while overlooking others. We recommend that researchers actively search for potential cases early in the research process to challenge their tentative hypotheses. The core idea is to identify constructs that might be relevant for further refining the hypotheses (abstraction). Researchers use the case material as a supplementary source to generate ideas about distinctions and boundary conditions affecting their hypotheses (contextualization). At the same time, the iterative refinement should lead to parsimonious and testable hypotheses (generalization). For example, Berente et al. (2019) distinguish two core situational constraints: the (in-)congruence of institutional logics underlying a user's practice with that of the enterprise system logic and “whether strong or weak pressure accompanies the system implementation” (Berente et al., 2019, pp. 877–878). Contextual factors can lay the foundation for establishing links between the central concepts in the hypotheses, ultimately forming a theoretical framework (Berente et al., 2019) or relationship (Rauch et al., 2014).

In searching for cases, researchers rarely come up with the right set of keywords instantly. They typically undergo several rounds of experimentation, trying different keywords across various databases (iterative interrogation). Following a construct-driven search strategy while simultaneously challenging existing definitions of the constructs to be tested is most useful (provocation). In doing so, researchers adjust their keywords in a trial-and-error process during the search. Although existing QMS do not discuss iterations in the search for cases, researchers may go back and forth between keyword refinement, their research question, and the core constructs to be tested. This reflective practice is crucial during the case search to ensure that researchers locate an adequate number of cases for moving their work beyond mere theory testing and toward theory refinement. We recommend that researchers transparently document this search and eventually select a set of keywords that generates a comprehensive case database covering a wide array of content (e.g., by relying on scientific and institutional databases) while being sensitive not to artificially inflate the database and risk diluting the thematic focus of the QMS (e.g., by using keywords related to construct definitions used in the hypotheses) (reconciliation). Every case identified during the search must be read and pre-analyzed as part of the case selection. Hence, through thoughtful keyword selection and multiple search iterations, researchers can skillfully navigate the quantity and variety of cases retrieved in the search.
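One way to document such search iterations transparently is a simple log that records each keyword set, the reason for trying it, and the cases it retrieved. The sketch below is only an illustration under strong assumptions. A small in-memory dictionary of abstracts stands in for real scientific and institutional databases, and the class name `SearchLog`, the case identifiers, and the abstracts are all hypothetical.

```python
from dataclasses import dataclass, field

# Hypothetical mini-corpus standing in for database search results.
CORPUS = {
    "c1": "A case study of newcomer socialization in a hospital",
    "c2": "Onboarding practices of migrant workers in logistics",
    "c3": "Ethnography of organizational entry among engineers",
    "c4": "Survey of pay satisfaction in retail",
}


@dataclass
class SearchLog:
    """Audit trail of keyword iterations during the case search."""

    iterations: list = field(default_factory=list)

    def run(self, corpus, keywords, rationale):
        """Match keywords against abstracts and record the iteration."""
        hits = sorted(
            case_id
            for case_id, abstract in corpus.items()
            if any(kw.lower() in abstract.lower() for kw in keywords)
        )
        self.iterations.append(
            {"keywords": list(keywords), "rationale": rationale, "hits": hits}
        )
        return hits

    def new_hits(self):
        """Cases found in the latest iteration but in no earlier one."""
        if not self.iterations:
            return []
        earlier = {h for it in self.iterations[:-1] for h in it["hits"]}
        return [h for h in self.iterations[-1]["hits"] if h not in earlier]
```

Running a narrow query first and a broadened one afterwards, then inspecting `new_hits()`, shows what each refinement adds. The recorded rationales can later be reported as part of the audit trail.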

Beyond selecting cases for theoretical fit, researchers need to iteratively refine their inclusion and exclusion criteria and their search for cases to select those cases that are best suited for testing and refining their hypotheses (iterative interrogation). One way is to experiment with different inclusion and exclusion criteria, such as setting parameters for inclusion and exclusion very tightly or broadly, and then observe how the composition of the case database changes (provocation). Depending on the chosen criteria, databases can contain fewer or more cases, stimulating researchers to revise their initial search terms (going back to step 2). For instance, when conducting a selective search, researchers may discover that applying strict inclusion and exclusion criteria results in a very limited case database. They can then reevaluate their inclusion and exclusion criteria and define less restrictive criteria to encompass a broader range of cases. Alternatively, they can begin a new search employing a comprehensive search approach, leading to a more extensive initial case database, and then experiment with different inclusion and exclusion criteria to generate a sufficiently large case base. After several rounds of iteration, it is vital to settle on a set of inclusion and exclusion criteria that ensure an “adequate” number of comparable cases (reconciliation). Without prescribing a specific number of cases, we recommend that researchers balance theory-driven diversity with an ongoing process of deliberately examining sources of (dis)confirmation, primarily through techniques like data juxtaposition and constant comparison (Pauwels & Matthyssens, 2004).
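The experiment with tight versus broad criteria can be sketched as follows. The example assumes that every retrieved case has been pre-analyzed and described by a few attributes. The attributes, thresholds, and cases are hypothetical, chosen only to show how the composition of the case database shifts with the criteria.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Case:
    case_id: str
    source_type: str         # e.g., "journal", "book_chapter", "teaching_case"
    word_count: int          # rough proxy for richness of the case account
    reports_construct: bool  # does the case address the construct under test?


@dataclass(frozen=True)
class Criteria:
    allowed_sources: frozenset
    min_words: int
    require_construct: bool


def select_cases(cases, criteria):
    """Return the ids of all cases that pass the inclusion criteria."""
    return [
        c.case_id
        for c in cases
        if c.source_type in criteria.allowed_sources
        and c.word_count >= criteria.min_words
        and (c.reports_construct or not criteria.require_construct)
    ]


def compare_criteria(cases, criteria_sets):
    """Apply several named criteria sets and collect the resulting databases."""
    return {name: select_cases(cases, crit) for name, crit in criteria_sets.items()}


# Hypothetical retrieved cases and two contrasting criteria sets.
CASES = [
    Case("c1", "journal", 9000, True),
    Case("c2", "journal", 5000, True),
    Case("c3", "book_chapter", 12000, True),
    Case("c4", "teaching_case", 4000, False),
    Case("c5", "journal", 10000, False),
    Case("c6", "book_chapter", 2000, True),
]
CRITERIA_SETS = {
    "strict": Criteria(frozenset({"journal"}), 8000, True),
    "broad": Criteria(
        frozenset({"journal", "book_chapter", "teaching_case"}), 3000, False
    ),
}
```

Comparing the lists returned by `compare_criteria(CASES, CRITERIA_SETS)` makes visible which cases enter or leave the database under each setting. Researchers can then judge whether the stricter set still leaves an adequate number of comparable cases.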

During the synthesis, cycling between analysis and theory refinement is key (iterative interrogation). Different case interpretations among team members offer the greatest potential for engaging in theory refinement (provocation). Diverse interpretations are important because they challenge initial understandings and assumptions, leading to deeper insights and more nuanced theories by forcing researchers to consider alternative viewpoints and explanations. However, the extent to which these different interpretations contribute to theory refinement depends significantly on the researchers’ communicative validation and “negotiated agreement” (Berente et al., 2019, p. 884). By sharing and discussing their coding and research protocols, researchers can resolve differences in interpretations and settle whether hypotheses are validated or refuted or should be refined further (reconciliation). This step is crucial because it ensures that the refinements made to the theory are not just the product of individual biases or perspectives but are collectively agreed upon and validated by a broader group of knowledgeable individuals. If researchers cannot reconcile their interpretations, they may engage in another translation round. By revisiting the cases, the nuances and complexities of each case may become more apparent, creating more substantial interpretations and contributions to theory refinement.

In the second step, we suggest that researchers systematically aggregate the cases by identifying the core constructs in each case (abstraction). The core task is to evaluate how each case supports or refutes the hypotheses. Leveraging the contextual richness provided in each case, researchers identify significant contextual factors that account for the support or refutation of hypotheses (contextualization). For instance, researchers can create case-ordered matrices (e.g., Berente et al., 2019) or descriptive case tables (see Appendix in Rauch et al., 2014) to present the specific context of each case and its implications for the tested hypotheses. While contextualization makes the specifics of each case visible, researchers must also abstract these specifics into broader boundary conditions or situational constraints related to their hypotheses (generalization). For instance, Berente et al. (2019) highlight that it was only by examining the case-ordered matrices through their chosen theoretical lens that they were able to identify “unexpected changes in responses over time, which were evident in several cases” (Berente et al., 2019, p. 884). This insight served as the primary basis for the theoretical contribution of the study.
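A case-ordered matrix of this kind can be sketched in a few lines. The example below assumes that each case has been evaluated as supporting or refuting each hypothesis and tagged with one contextual factor. The cases, the factor values ("regulated", "unregulated"), and the hypothesis labels are hypothetical and do not reproduce the matrices of Berente et al. (2019).

```python
# Hypothetical per-case evaluations of two hypotheses.
EVALUATIONS = [
    {"case": "case_1", "context": "regulated", "H1": "support", "H2": "refute"},
    {"case": "case_2", "context": "unregulated", "H1": "refute", "H2": "support"},
    {"case": "case_3", "context": "regulated", "H1": "support", "H2": "support"},
    {"case": "case_4", "context": "unregulated", "H1": "refute", "H2": "refute"},
]


def case_ordered_matrix(evaluations, order_by, hypotheses):
    """Rows are cases, sorted by a contextual factor; columns are hypotheses."""
    rows = sorted(evaluations, key=lambda row: (row[order_by], row["case"]))
    return [
        [row["case"], row[order_by]] + [row[h] for h in hypotheses]
        for row in rows
    ]


def support_by_context(evaluations, context_key, hypothesis):
    """Tally (supporting cases, total cases) for each context value."""
    tally = {}
    for row in evaluations:
        supported, total = tally.get(row[context_key], (0, 0))
        tally[row[context_key]] = (
            supported + int(row[hypothesis] == "support"),
            total + 1,
        )
    return tally
```

Ordering the rows by context keeps the specifics of each case visible (contextualization). A tally such as `support_by_context(EVALUATIONS, "context", "H1")` hints at where support clusters and may suggest a candidate boundary condition (generalization), which still has to be argued theoretically.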

Guidelines for Reflectively Conducting Exploratory QMS

In contrast, a purposeful sampling strategy aims to search for a narrower selection of particularly information-rich cases. Researchers using this strategy seek to identify cases that help deepen insights on a specific topic. Different techniques may be used, such as extreme sampling (selecting cases that exemplify deviant contexts), intensity sampling (rich examples of the phenomena to be studied), or critical case sampling (searching cases representing phenomena critical for advancing theory) (see Suri, 2011). Omanović and Langley (2023), for instance, highlight the importance of different contexts for understanding the organizational socialization practices of migrants. The authors chose a theoretical sampling strategy that accounts for “[…] different situations where contexts, ideas, interests and activities interact […] to capture a variety of contingencies” (Omanović & Langley, 2023, p. 5). We suggest that a maximum variation strategy can supplement this strategy. Cases from different data sources illuminate different aspects of a phenomenon (e.g., a descriptive book chapter focuses on different aspects than a theory-driven case publication). In that case, maximum variation sampling can be utilized to construct a holistic understanding of the phenomenon and uncover many different facets of the same phenomenon.

We suggest that researchers work with various keywords in their search for cases. This process can be a journey of creative discovery. As researchers uncover diverse cases, they can explore new lines of inquiry and delve into fresh insights (iterative interrogation). Such an iterative search is a crucial step in further refining the research question, and theoretical sampling is a useful strategy to identify additional cases that contribute to emerging theory. Experimenting with different keywords based on different theoretical perspectives ensures that the search yields a diverse set of cases (provocation). Therefore, a cyclic refinement of the keywords, and consequently of the research question, effectively narrows the scope of the QMS to a manageable number of cases. Ideally, researchers select a set of keywords that yields a case database with sufficient case diversity for new theory building, but not so many cases that researchers can no longer engage in a reflective selection and subsequent deep analysis of cases (reconciliation).

In the case selection, researchers create convergences and homologies among cases not originally intended to be synthesized (translation). This is accomplished by choosing cases with certain structural and/or thematic similarities (standardization). Researchers focus on structural similarities by focusing on cases with similar richness and narrative style. For example, they include only those cases that provide vivid and lengthy quotes (Berente et al., 2022; Omanović & Langley, 2023) or comprehensive, multilevel case narratives (Habersang et al., 2019). Thematic similarities, in contrast, relate to the comparability of findings regarding specific case aspects, that is, geographical and/or social settings (e.g., Omanović & Langley, 2023), industries (e.g., Feuls et al., 2021), or temporal aspects (e.g., Küberling-Jost, 2021). However, researchers should also break free from these standardization efforts and include extreme or unique cases that broaden the phenomenological domain (inspiration). For example, Omanović and Langley (2023) included both scientific case studies and rich descriptive empirical descriptions from teaching cases. Others included cases that draw on a variety of methodologies (e.g., interview-based case studies and ethnographies), as well as novel and distinct forms of secondary qualitative data (e.g., online threads and social media posts) that yield new insights into a phenomenon (Vasist & Krishnan, 2022).

The more diversity researchers accept in their case selection, the more they must abstract from the findings and develop higher-order interpretations (Paterson et al., 2001). Each case is not interpreted as a stand-alone entity but rather in the light of the other cases, leading to interpretations that can be far removed from the findings of the original cases. For example, some cases may seek to paint a comprehensive picture of a phenomenon (i.e., case study research), while others provide a zoomed-in perspective to illuminate certain aspects (i.e., in phenomenological studies or discourse studies). However, both types of cases can reveal different facets of the same phenomenon and thus help to extend, explain, and modify the insights gained from other cases. Considering this, researchers alternate between the identified cases from the search and various inclusion and exclusion criteria (iterative interrogation). While previous QMS primarily view inclusion and exclusion criteria as tools to weed out low-quality cases or those with content misfit (e.g., Hoon, 2013), we propose that researchers engage deeply with the case material at this stage. It is crucial to select structurally and thematically similar cases that allow for meaningful comparisons while also ensuring enough diversity to uncover the frictions necessary for originality in theory building (provocation). The iterative process of moving back and forth between case search, selection, and different theoretical perspectives allows researchers to continually refine the research question and theoretical framing, ultimately establishing a case database suitable for generating novel insights (reconciliation).

In contrast, researchers should also strive to transcend these standardized insights, imbuing them with new, higher-order meaning through concept-driven analysis (inspiration). Drawing on conceptual devices, such as dialectics/tensions (e.g., Omanović & Langley, 2023), logics (e.g., Berente et al., 2022), archetypes (e.g., Habersang et al., 2019; Küberling-Jost, 2021), and/or rhetorical devices, such as metaphors (e.g., Feuls et al., 2021) or dualities (e.g., Habersang et al., 2019), researchers can stimulate creativity. For example, Feuls et al. (2021) used metaphors to describe different creative leadership styles in haute cuisine. These metaphors are “prototypical characters […] that emerged from the practices described by the chefs themselves (primary data) or the authors of the analyzed texts (interpretations of primary data)” (Feuls et al., 2021, p. 787).

While engaging in translation efforts, researchers connect higher-order meanings, weaving them into meaningful patterns across multiple cases (abstraction). Such connecting strategies help to identify “key relationships that tie the data together into a narrative or sequence and, information that is not germane to these relationships” (Maxwell & Miller, 2008, p. 467). It is crucial that researchers offer sufficient information on the context of the original cases, diving into their sociohistorical and organizational backgrounds, and making the case materials and original interpretations understandable in the synthesis (contextualization). For example, Omanović and Langley (2023) provide an extensive descriptive overview of each case's contextual conditions. At the same time, reinterpreting each case in light of its specific context is essential for crafting more abstract conceptual models, meta-vignettes, or (temporal) narratives (generalization). Some authors develop a typology (e.g., Feuls et al., 2021; Meng et al., 2023; Soral et al., 2022), others provide an analytical narrative (e.g., Berente et al., 2022; Omanović & Langley, 2023), a causal model (e.g., Hoon, 2013), or a process model (e.g., Küberling-Jost, 2021), or combine multiple theorizing styles (e.g., Habersang et al., 2019). This diversity emphasizes the flexibility that exploratory QMS provides in theoretical output.

Synthesis through configuration requires researchers to iteratively compare case insights with existing theory (iterative interrogation). On the one hand, researchers should question their emerging interpretations from the case analysis and examine various ways the cases are interconnected, as this can also influence the chosen theory (provocation). For example, after coding their cases, Omanović and Langley (2023) discovered contradictions in the cases that shifted the QMS's theoretical focus toward power relations in migrants’ organizational socialization practices. Researchers should be prepared for provocation to potentially lead to the disruption of initial theoretical frameworks as new connections between cases become visible in the analysis and therefore require an adaptation of the theoretical framework. On the other hand, researchers should engage in regular feedback meetings to harmonize different perspectives and interpretations among team members (reconciliation). For example, Habersang et al. (2019) used quasi-statistics as a supplementary analytical strategy to validate concepts and patterns across cases. Developing detailed protocols is crucial for maintaining coherence and consistency in the research process and defining when and why the analysis and synthesis are considered complete.

Conclusion

We have argued that the methodological development of QMS in organization and management studies is currently at a crossroads caused by an increasing fragmentation of methodological practices and a firm adherence to procedural rigor that often comes at the expense of creativity and flexibility in the research process. To advance the development of QMS, we provide a comprehensive overview of practices-in-use, methodological choices, and core decisions in QMS. We also delve into the necessary reflective meta-practices for conducting QMS. Based on our empirical analysis, we offer two reflective guidelines for how researchers can conduct confirmatory and exploratory QMS. While the majority of published QMS adhere to an exploratory logic, confirmatory QMS have so far seen only limited use. From our viewpoint, both types of QMS offer valuable advantages depending on the researchers’ purpose.

Opportunities also exist to develop the methodology further. First, while most QMS, including our guidelines, are based on a (critical/scientific) realist perspective, questions remain about how QMS application might vary with interpretivist and post-structuralist approaches (e.g., Blagoev & Costas, 2022). While the field of healthcare has already developed some ideas for synthesizing qualitative research from an interpretivist perspective (e.g., Paterson et al., 2001), the field of organization and management studies could benefit from an increasing plurality in empirical examples grounded in interpretive and critical paradigms (Habersang & Reihlen, 2018; Point et al., 2017). Second, as natural language processing (NLP) continues to advance in organization and management studies (Hannigan et al., 2018), QMS are ripe for experimentation with new technologies for research analysis. While some researchers have already ventured into this territory (e.g., Van Rijmenam et al., 2019), we believe substantial potential exists to enhance the process of searching for and analyzing cases by integrating new technology (e.g., Pandey & Pandey, 2019; Tonidandel et al., 2018), provided that it is used responsibly (Gatrell et al., 2024). Finally, QMS can play a role in democratizing academic practices. Since not all researchers may have the resources for extensive field studies, QMS offers a pathway to conduct empirical research using readily accessible archival data. Furthermore, many valuable qualitative single case studies remain underrepresented in public discourse, partly due to biases against small sample sizes. The synthesis of qualitative research can help shine a spotlight on the significant findings of qualitative research, making them more visible to the broader public and informing managers and policymakers.

Supplemental Material

Supplemental material, sj-pdf-1-orm-10.1177_10944281241240180 for Advancing Qualitative Meta-Studies (QMS): Current Practices and Reflective Guidelines for Synthesizing Qualitative Research by Stefanie Habersang and Markus Reihlen in Organizational Research Methods

Footnotes

Acknowledgements

We are sincerely grateful to Tine Köhler and three anonymous reviewers, who made a significant contribution to the development of this manuscript. We also thank Matthias Wenzel, Dennis Schoeneborn, Thomas Farchi and the Leuphana LOST group for their invaluable feedback on this manuscript throughout the various stages. Finally, we are also grateful to the AOM Research Methods Division for awarding an earlier version of this manuscript with the “RMD Best Student Conference Paper Award 2018.”

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Supplemental Material

Supplemental material for this article is available online.
