Abstract

Management and applied psychology scholars are confronted with a crisis undermining trust in their findings. One solution to this crisis is the publication format Registered Reports (RRs). Here, authors submit the frontend of their paper for peer review before data collection. While this format can help increase the trustworthiness of research, authors’ usage of RRs, although emerging, has been scarce and scattered. Common beliefs regarding the (dis)advantages of RRs and a lack of best practices may limit the broad implementation of this approach. To address these issues, we utilized a systematic review process to identify 50 RRs in management and applied psychology and surveyed authors with (N = 86) and without (N = 161) experience in publishing RRs as well as reviewers/editors who have handled RRs (N = 59). On this basis, we (a) scrutinize prevalent beliefs surrounding the RR format in the fields of management and applied psychology and (b) derive hands-on best practices. In sum, we provide a fact check and guidelines for authors interested in writing RRs, which can also be used by reviewers to evaluate such submissions.

The relevance and impact of scientific findings crucially depend on their credibility (Fiske & Dupree, 2014). In light of a larger replication crisis in the social sciences (Shrout & Rodgers, 2018), however, the fields of management and applied psychology have come under scrutiny, as ample evidence indicates that the trustworthiness of many published results is dubious at best (Bergh et al., 2017; O’Boyle et al., 2017). A key reason for this crisis is the widespread usage of questionable research practices where scholars exercise flexibility in cleaning or analyzing their data to purposefully produce statistically significant results (Banks et al., 2016; Kepes et al., 2022). The ubiquity of such questionable research practices and most journals’ reluctance to publish null results have led to a prevalence of published findings that nearly unanimously (seem to) support hypotheses (Banks et al., 2015; Harrison et al., 2017). Against this backdrop, scholars (Aguinis et al., 2020; O’Boyle et al., 2017) as well as governmental and nongovernmental bodies (DORA, 2012; PRME, 2008) have repeatedly called for measures intended to make research in the fields of management and applied psychology more transparent, replicable, and trustworthy.

While there are several potential solutions to these problems, the submission format of Registered Reports (RRs) represents a particularly promising approach. Two key features define RRs: First, scholars submit only the frontends of their manuscripts (i.e., the so-called stage-1 submission, which contains the theory, hypotheses, methods, and planned analyses) and undergo peer review before collecting data or observing results. Second, if scholars revise their manuscripts successfully and pass the initial peer review stage, they may receive in-principle acceptance (IPA). IPA entails that a manuscript will be published irrespective of the final results of the associated study, provided the author(s) faithfully adhere to the accepted protocol (Hardwicke & Ioannidis, 2018; Hummer et al., 2017). Initial evidence indicates that RRs are of higher quality than conventionally submitted manuscripts and contain more rigorous methods and analyses (Soderberg et al., 2021). Several studies suggest that RRs reduce questionable research practices, as hypotheses in RRs are substantially less likely to find statistical support than those in conventionally submitted manuscripts (e.g., Scheel et al., 2021; Toth et al., 2021). Against the backdrop of this empirical evidence, scholars in management and applied psychology put great confidence in this submission format's potential to provide more transparent tests of scientific claims (Aguinis et al., 2020; Byington & Felps, 2017). That said, RRs cannot fully prevent deliberate unethical author behaviors or scientific fraud. For example, as in conventional manuscript submissions, unscrupulous scholars could still fabricate or falsify their data during an RR process to produce results they feel are more interesting and more likely to advance their careers (e.g., significant or counterintuitive results).
Yet, RRs change scientists’ incentive structures from producing specific findings (e.g., significant results) to more theoretically and methodologically rigorous research (Chambers et al., 2014). Thus, scholars have called for a broader implementation of this submission format and claimed that RRs can even serve as “a vaccine against research bias” (Chambers, 2018, p. 1; Grand et al., 2018).

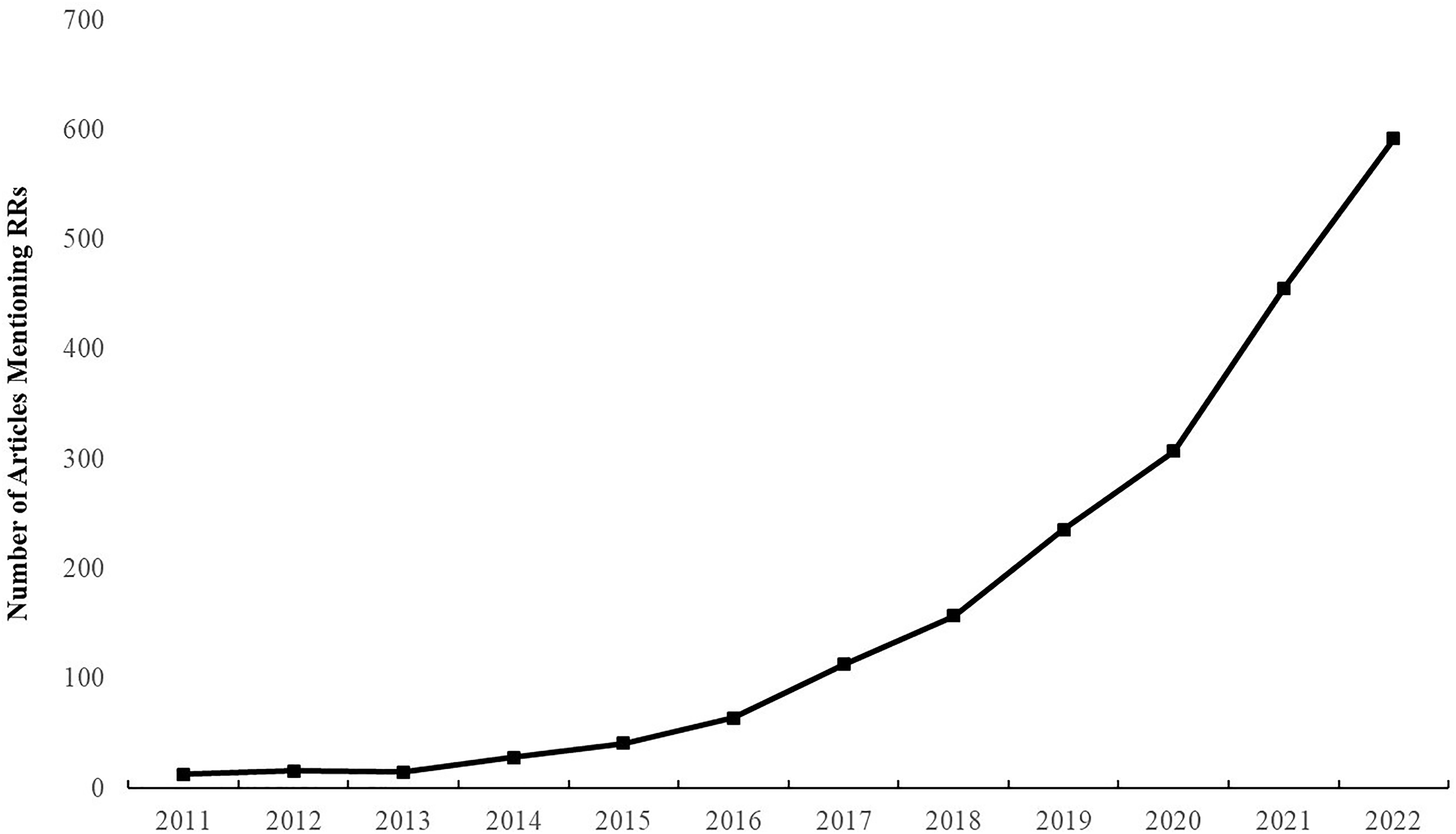

Given the promising nature of RRs (Timming et al., 2021), it is unsurprising that more than 300 journals in various scientific fields have already implemented this submission approach (Chambers & Tzavella, 2022; see www.cos.io/initiatives/registered-reports, for an overview). Journals and scholars in management and applied psychology have also shown initial interest in this publication format. A Google Scholar search using the terms “registered reports” and “management” indicates a vast increase in the number of articles referring to this format over the past years (see Figure 1). However, compared to other fields, the usage of RRs in management and applied psychology is picking up speed only slowly. In fact, uncertainty regarding submission opportunities and relevant processes may explain why some management and applied psychology scholars have opted to publish their RRs in broader scientific flagship journals (e.g., Nature Human Behavior, Scientific Reports; He & Côté, 2019; Whillans & West, 2022).

Figure 1. Number of articles in Google Scholar mentioning the term “Registered Reports” along with “Management.”

For this format to become a viable alternative to existing publishing routes, the confusion as to whether and how RRs can be successfully published needs to be addressed. Scholars are often unaware of how developing an RR manuscript differs from the process of writing conventional (i.e., full manuscript) submissions, which leads to ill-developed RR proposals (Timming et al., 2021; Van’t Veer & Giner-Sorolla, 2016). In addition, prevalent (potentially unwarranted) beliefs restrict the usage of this publication format among management and applied psychology scholars. For example, researchers have voiced concerns that submitting RRs may be detrimental to their careers, given that this format is perceived as riskier, and both editors and scholars fear that the publication of an RR would result in fewer citations than would be the case for a conventionally submitted manuscript (Chambers & Tzavella, 2022; Weinhardt et al., 2019). Such common beliefs can hamper the widespread adoption of RRs in management and applied psychology. To summarize, a lack of knowledge constitutes a critical hurdle for scholars to regularly and successfully utilize this publication format. Moreover, common (mis)beliefs may keep reviewers from adequately assessing RR submissions. Thus, the time has come to clarify prevailing (mis)beliefs concerning RRs and identify hands-on best practices for this submission format. The present paper aims to address these challenges head-on to (1) help authors in management and applied psychology better leverage the full potential of RRs and (2) provide a checklist that can help review teams better assess the quality of an RR submission.

This review is structured in three parts. First, we identify accepted RRs in management and applied psychology and the authors associated with these papers. This overview is intended to provide go-to-resources that authors and review teams can use as concrete inspiration for writing and assessing RR submissions, respectively. This is particularly relevant because management and applied psychology research involves different conventions and challenges than other fields (e.g., collecting data in organizations, using archival data; cf. Moore et al., 2022). Second, we extract and scrutinize 11 common (mis)beliefs surrounding RRs via surveys of (1) experienced RR authors, (2) the general management/applied psychology scholarly audience, and (3) RR-experienced reviewers and editors. Finally, we outline potential best practices for writing high-quality RRs. We assess the relevance of these potential best practices based on the survey responses of RR-experienced authors, the general management and applied psychology audience, and reviewers/editors with experience handling RRs.

With this review, we aim to make several contributions toward increasing the quantity and quality of RRs in management and applied psychology. By extension, we hope to contribute to improved trustworthiness of findings in these fields in general. To begin with, we provide the first comprehensive review of RRs in management and applied psychology. By doing so, we move beyond existing work focusing on pre-registrations (e.g., Logg & Dorison, 2021; Toth et al., 2021) or discussions of RRs in adjacent disciplines (Kiyonaga & Scimeca, 2019; Manago, 2023) and showcase the state of this new submission format in management and applied psychology. The RR format has started to gain traction in management and applied psychology (Carsten et al., 2023), and calls for a wider implementation of this submission format have become louder recently (Aguinis et al., 2020; Banks, 2023; Torka et al., 2023). Together, this points to a need to assess the status quo of RRs in these fields, which can then inspire future RR submissions. Indeed, scholars are increasingly expected to utilize open science practices, in general, and RRs, in particular, for example when applying for jobs, adhering to promotion criteria, or trying to meet the requirements of funding agencies (Banks et al., 2019). The present overview can be of help in that regard by illustrating the extent of RR usage in management and applied psychology, including trends and shortcomings, and by demonstrating the variety of research topics, methodologies, and contexts in which RRs are conducted.

Second, we offer a quantitative assessment of a large number of both common beliefs about RRs and potential best practices for writing and evaluating such submissions. Initial discussions of (mis)beliefs surrounding this format have focused on isolated tests of single beliefs (e.g., whether RRs contain less novel findings; Soderberg et al., 2021) and happened mostly in neighboring fields. Similarly, authors have suggested some initial best practices for writing or reviewing RRs but these constitute narrative pieces in fields outside of management and applied psychology (Arpinon & Espinosa, 2023; Chambers & Tzavella, 2022). In contrast to that, the present review presents evidence-based assessments of a large number of common (mis)beliefs and field-specific best practices. By openly discussing common (mis)beliefs about RRs, we hope to inform authors and reviewers about barriers as well as potentially unwarranted worries when utilizing or evaluating this novel submission format. On a more practical note, by outlining potential best practices, we offer concrete advice for authors interested in writing high-quality RRs that will have a high likelihood of getting published. Additionally, this best practice list can also help reviewers by offering them a readily accessible tool with which to better evaluate RR submissions by comparing them against a “gold standard” when writing their manuscript review.

Purpose and Relevance of Registered Reports

Before outlining the submission process and relevance of RRs, it is critical to differentiate RRs from other open science approaches that this format is commonly confused with. First, RRs differ from pre-registration, a detailed time-stamped plan of study hypotheses, methodology, and analyses without peer review (Logg & Dorison, 2021). While pre-registrations may be included in an RR, the latter is different as authors go through a peer review process before collecting the data (which is not required for pre-registered studies). Thus, RR authors receive guidance from the review team on how to improve, for example, their proposed sample (size), data collection, or measures. Second, RRs differ from (but can overlap with) replication studies (i.e., re-examining an existing result or study using a similar or identical methodological approach; Köhler & Cortina, 2021). RRs might aim to (directly) replicate a seminal finding (e.g., Hopp et al., 2023) but more often test new hypotheses or phenomena (e.g., Everett et al., 2021). Moreover, most replication studies in management and applied psychology are not RRs (Köhler & Cortina, 2021).

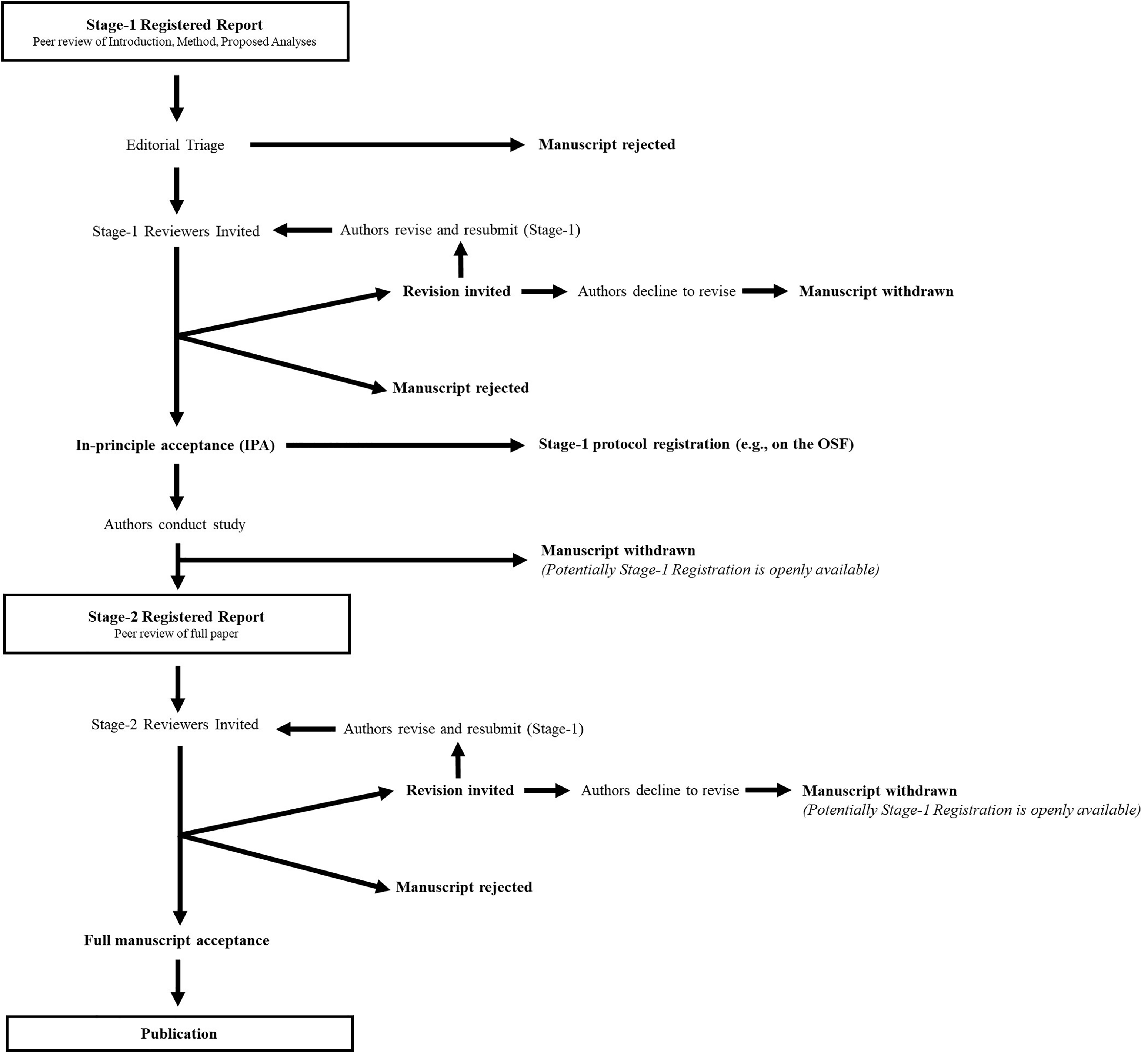

For RRs, in particular, the review process is similar to that for conventional manuscript submissions (i.e., anonymous peer review and potentially several rounds of revision) with one important difference: Scholars submit only the frontends of their papers, including the introduction, theory and hypotheses development, and planned methods and analyses sections, to journals before collecting data. 1 If a paper is sent to peer review (as opposed to being desk rejected), the review team may either reject the paper or invite the authors to revise and resubmit their manuscript. The decision to accept the (stage-1) manuscript is based on its theoretical and methodological rigor (Grand et al., 2018). Should the authors successfully revise their manuscript, they may then receive IPA, which guarantees acceptance regardless of the nature of the results. If IPA is granted, the authors are invited to collect and analyze their data following the agreed-upon protocol. After writing the results and the discussion sections, the authors can re-submit their papers. The manuscript will then once again be scrutinized by the review team, but only concerning (a) whether the authors followed the agreed-upon protocol and (b) the quality of the discussion. If the authors followed the protocol and resolved the remaining comments, the manuscript will be fully accepted and subsequently published (see Figure 2 for the RR submission and review process).

Figure 2. Flow chart of the Registered Report submission and review process. Note. This figure was adapted from the Center for Open Science.

RRs hold great promise to increase the trustworthiness of published research. This is because, in this approach, scholars have no incentive to purposefully produce statistically significant findings or to change design or analytic features post hoc given that their studies will be published regardless of the (non-)support for their hypotheses (Nosek & Lakens, 2014). As such, RRs address the key issues that negatively affect most social and behavioral science disciplines, namely the usage of questionable research practices, in particular p-hacking and HARKing (Scheel et al., 2021), as well as publication bias (O’Boyle et al., 2014). Although these attractive features of RRs have led to increased use of this format across diverse scientific fields (e.g., medicine; Chambers & Tzavella, 2022), authors in the disciplines of management and applied psychology have not yet widely adopted this publication format. While many journals in these fields have introduced the RR format over recent years, the present review indicates that the overall number of RRs published in management and applied psychology journals is still low. In fact, when asked about the status quo concerning RRs in their respective journals, several editors replied that they had not received any or only very few (i.e., fewer than five) RR submissions in total. For example, the editor of a management journal that has been inviting RRs for several years replied, “Unfortunately, despite our continuous efforts, we have not received any registered reports yet … I really do not know what else we can do in order to attract registered reports.” In another example, a special issue inviting RRs was withdrawn due to a lack of submissions. In summary, it seems that concerning the use of RRs in management and applied psychology, “this unique approach to epistemology and method of publishing are foreign to many of us” (Timming et al., 2021, p. 596). 
In line with the general observation that “the fields of organizational behavior and management have been latecomers to the open science revolution” (Moore et al., 2022, p. 1), it can be concluded that RRs are still viewed with suspicion.

Several beliefs (e.g., RR submissions are excessively risky or not suitable for field research and/or early-career researchers [ECRs]) could explain why scholars are still reluctant to adopt this submission format. Furthermore, scholars may lack specific best practices and guidelines on how to approach the RR submission process in management and applied psychology. For example, given that field research in cooperation with companies is common in these fields, interested authors may struggle with navigating the tensions between reviewer requests, the journal timeline before and after the IPA, and company preferences and expectations. Specifically, companies often have additional requirements (e.g., approval through the workers’ council) that could impact the research design or timeline of the data collection. As such, those considerations may already need to be incorporated when writing up the initial (and revised) RR submission. The present manuscript aims to provide guidance in this regard by systematically reviewing accepted RRs in management and applied psychology, scrutinizing existing (mis)beliefs about RRs, and identifying best practices for authors.

Methodological Approach of the Present Review

In the following, we first outline how we identified accepted RRs in the fields of management and applied psychology to offer a comprehensive overview and go-to-resources for authors and review teams. Thereafter, we present the results of surveys with (1) RR-experienced authors in management and applied psychology, (2) the general management and applied psychology audience, and (3) reviewers and editors with experience in handling RRs that collectively aim to assess ongoing (mis)beliefs regarding RRs and potential best practices.

Accepted Registered Reports

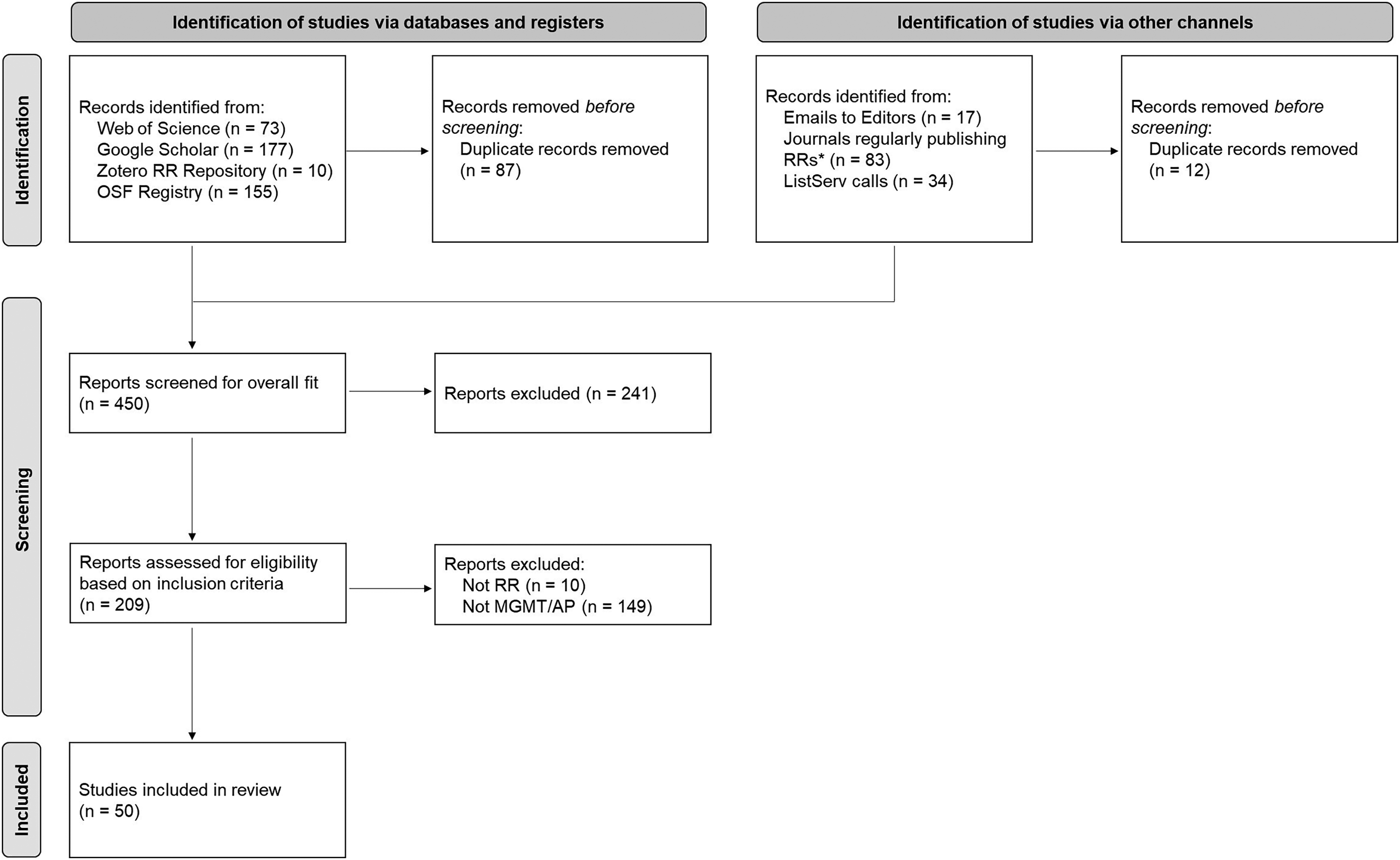

To identify accepted RRs in the fields of management and applied psychology, we followed a multistage process in line with the Preferred Reporting Items for Systematic Reviews and Meta-Analyses protocol (PRISMA; Moher et al., 2009; see Figure 3 for an overview).

Figure 3. Process overview of the literature search according to the PRISMA system. Note. AP = Applied Psychology; MGMT = Management; RR = Registered Report. *The journals that regularly publish RRs in a related field are Nature Human Behavior, Comprehensive Results in Social Psychology, and Psychological Science.

Search Strategy

We started by searching the Center for Open Science's list of journals offering RRs to identify relevant outlets in the fields of management and applied psychology (https://www.cos.io/initiatives/registered-reports). Thereafter, we searched Google Scholar for articles mentioning management and applied psychology journals that invite RRs (e.g., editorials describing the introduction of this format in a particular journal often cite other journals as examples; see Timming et al., 2021). We screened this list and the respective articles and, after discussion among the author team, identified 23 outlets that potentially fall into the fields of management and applied psychology. To confirm whether these journals are indeed associated with these fields, we consulted Web of Science's journal classification to ensure that these outlets were classified as either “Business, Management, and Accounting” or “Applied Psychology” (see Aguinis et al., 2023, for a similar procedure). One outlet (Business & Information Systems Engineering) was dropped because it did not belong to either of these categories.

We then screened the websites and contacted the editor(s)-in-chief of the remaining 22 journals to (a) confirm that they had indeed invited RR submissions and (b) identify all RRs accepted by or published in each journal. The editors of four journals indicated that they have never offered RRs or that they invite only Hybrid RRs. 2 We excluded these four journals from our review given our focus on “classic” RR submissions, where the main dataset for a proposed study is collected after IPA. Because the review process for Hybrid RRs is fundamentally different from that for classic RRs (for example, reviewers cannot suggest any changes to data collection procedures in a Hybrid RR), we decided to focus on outlets that offer classic RRs. The remaining journals’ editors confirmed that their respective journals offer the RR format. Overall, we identified 18 journals in management and applied psychology that invite RR submissions. 3 We note that (a) several editors explained that they do not keep track of which papers were submitted as RRs or that papers submitted as RRs are oftentimes not “branded” as such, (b) scholars might conduct RRs that fall within the fields of management and applied psychology but are published in broader scientific outlets or neighboring disciplines, and (c) although RRs were published in journals classified as management or applied psychology, they may still examine a topic not central to these fields’ main research areas. Hence, we followed several steps to identify additional manuscripts matching the scope of the present review and to make sure that the ones we had already identified were indeed focusing on key management and applied psychology topics.

First, from the Center for Open Science's list of outlets inviting RRs, we identified three outlets (Nature Human Behavior, Psychological Science, and Comprehensive Results in Social Psychology) that regularly publish RRs that fall within the fields of management and applied psychology (see, e.g., Beylat et al., 2021; Nichols et al., stage-1 accepted). We identified all RRs published in these three outlets and saved these manuscripts for later coding. Second, we searched two scientific databases for RRs using predefined search criteria and keywords. Specifically, for Web of Science, we searched for articles containing the term “registered report*” in the title or abstract and limited the search to the research areas of (a) business and economics and (b) psychology. We then further searched these findings for articles containing the terms “manage*,” “organization*,” “work*,” “business*,” “industr*,” “job*,” “economic*,” and “applied psycholog*.” We screened the remaining articles to retain empirical papers and excluded all theory papers, reviews, editorials, or commentaries. For Google Scholar, we combined the search term “registered report” with “management” or “organizational behavior.” We screened the remaining papers to identify papers that were empirical and could be considered to broadly fall into either the management or applied psychology fields. All remaining manuscripts were retained for further rating. Third, we screened two online repositories of RRs (the Zotero collection of RRs [https://bit.ly/3wBYgUB] and the Open Science Framework registry of IPA RR protocols [https://bit.ly/491ZnxI]). We used the keywords noted above to identify relevant RRs and saved them for later rating. Finally, we posted requests for accepted or published RRs on the listservs of Academy of Management division groups, as well as in Open Science and Psychological Research Methods discussion groups on Google Groups and Facebook, respectively.
In addition, we posted calls on listservs or Twitter accounts, including those of the Society for Personality and Social Psychology, the Society for Improvement of Psychological Science, the Society for Judgment and Decision Making, the European Association for Decision Making, and the German Psychological Society. We saved all papers we received through these calls for later ratings. In total, we identified 209 manuscripts up until spring 2022 that could potentially be included in the present review.
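
The keyword screening step described above can be illustrated with a short sketch. It is not the procedure the databases themselves run (Web of Science handles truncation natively); it only mimics the logic on titles or abstracts that have already been retrieved. The function names and the choice to treat `*` as "any word ending" are assumptions made for illustration.

```python
import re

# Wildcard terms quoted in the text; "*" is read here as "any word ending".
TERMS = ["manage*", "organization*", "work*", "business*",
         "industr*", "job*", "economic*", "applied psycholog*"]


def term_to_regex(term: str) -> str:
    """Turn a truncation term such as 'manage*' into a word-anchored regex."""
    stem = re.escape(term.rstrip("*"))
    suffix = r"\w*" if term.endswith("*") else ""
    return r"\b" + stem + suffix + r"\b"


# One case-insensitive pattern that matches any of the field terms.
PATTERN = re.compile("|".join(term_to_regex(t) for t in TERMS), re.IGNORECASE)


def matches_scope(text: str) -> bool:
    """Return True if a title or abstract contains at least one field term."""
    return PATTERN.search(text) is not None
```

Because each term is anchored at a word boundary, "workplace" matches “work*” while "network" does not, which mirrors how right-hand truncation usually behaves in database searches. A match only flags a record for the manual screening the authors describe; it does not replace it.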

Inclusion Criteria

We included both stage-1 accepted and fully accepted RRs in the fields of management and applied psychology. We made this decision because stage-1 (or in-principle) accepted manuscripts have already received a “stamp of approval from editors and reviewers” (Aguinis et al., 2023, p. 3) that allows for publication (assuming that the authors execute the agreed-upon proposal). As such, authors who have received stage-1 acceptance have successfully navigated the RR review process, which entails that scholars interested in pursuing RRs could learn from these manuscripts and their authors. While, in theory, such a manuscript could be rejected or withdrawn (e.g., should the author team not collect the data as per the accepted RR proposal), preliminary evidence suggests that such cases are extremely rare (Chambers & Tzavella, 2022).

We decided to include each of the identified stage-1 or fully accepted RRs based on whether they fulfilled two criteria: (1) a manuscript had to be a “classic” RR (as opposed to, e.g., a Hybrid RR or a manuscript that had been erroneously marked as an RR) and (2) it had to fall into the management and/or applied psychology fields. To ensure that the first criterion was fulfilled, we asked all authors (when inviting them to participate in the experienced author survey described later) to verify that the paper we identified was indeed an RR. In the case of 10 papers, authors informed us that their respective papers were not classic RRs but had simply been pre-registered (with data having been collected before peer review), were Hybrid RRs, or had been erroneously marked as RRs by the publishing journals. We excluded these papers from further consideration. To verify the second criterion, we relied on the following procedure: Two management and organizational behavior graduate students were trained to provide ratings for all identified RRs. The raters were intensively trained on how to decide whether a study fell into these disciplines. In particular, we asked raters to carefully read and discuss the journal scope statements of a prototypical management outlet (Journal of Management) and an applied psychology outlet (Journal of Applied Psychology). In addition, the raters read the division statements of the Organizational Behavior and Human Resources divisions of the Academy of Management (as these represent many management and applied psychology scholars). As part of their training, the raters provided independent judgments for 20 RRs; these ratings were subsequently discussed with the author team to establish a common approach to decision making within the abovementioned scope. Thereafter, both raters independently coded each RR as include (1) or exclude (0). The raters agreed in 179 out of 199 cases (90%).
In cases where they disagreed, a third trained rater (also a doctoral student in organizational behavior who had received similar training) made a final decision as to whether the remaining RRs (20) should be included (which was the case for 2 out of 20 [10%]).
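
The reported counts can be checked with a few lines of arithmetic. The sketch below uses only figures stated in the text; the assumption (not stated explicitly in the source) is that the 199 rated manuscripts are the 209 identified manuscripts minus the 10 that authors flagged as not being classic RRs.

```python
# Counts reported in the text.
identified = 209        # manuscripts found by the search
not_classic_rr = 10     # excluded after author feedback

# Assumption: the rated pool is what remains after that exclusion.
rated = identified - not_classic_rr      # 199 manuscripts coded by both raters
agreements = 179                         # cases in which both raters agreed

disagreements = rated - agreements       # 20 cases passed to the third rater
percent_agreement = agreements / rated   # raw (not chance-corrected) agreement

print(f"rated={rated}, disagreements={disagreements}, "
      f"agreement={percent_agreement:.1%}")
# prints: rated=199, disagreements=20, agreement=89.9%
```

Raw percent agreement does not correct for chance. A chance-corrected index such as Cohen's kappa would additionally require the full 2 × 2 table of include/exclude decisions, which is not reported in this section.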

After applying inclusion criteria, the final number of RRs included in the present review was 50. Table S1 in the Online Supplement displays the number of (stage-1) accepted RRs included in this review per journal. These RRs addressed a variety of management and applied psychology topics, including leadership (36%), diversity and cross-cultural differences (24%), personnel selection (12%), and work stress and wellbeing (10%). The earliest publication date for an RR included in this review was 2016, while 5 RRs had IPA status (in summer 2023) and thus had not yet been (fully) published. All RRs included in this review are listed in the References section.

Sample 1 (Authors With RR Experience)

The purpose of Sample 1 was to gather the perceptions of the respondent group best placed to evaluate common (mis)beliefs and potential best practices based on their own experience, namely authors of RR papers. Thus, we contacted the authors of the 50 identified RRs plus the authors of four RRs that were accepted or published in management and applied psychology outlets (as classified by WoS) but not included in the overall review. 4 We chose this population as the most fitting given that these authors have actual experience in (successfully) writing, submitting, and revising RR manuscripts in these fields. We pre-registered the survey and our assumptions (described below) on the Open Science Framework (https://bit.ly/3POpy3l). The survey, data, syntax, and output files, as well as a document describing any changes to the pre-registered structure, are openly available (https://osf.io/wseha).

Sample and Procedures

Overall, we invited 215 authors of the RRs to participate in this study. 5 We extracted the email addresses of these authors from the published manuscripts and university or personal websites. We then sent the authors individualized emails with a request to participate in a brief survey on Registered Reports. Participants first had to provide informed consent and verify that they had (co-)authored an (in-principle or fully) accepted RR (and were asked to contact us if they had not). They then indicated when they had started conducting their first RR and how many of their RRs had been accepted. Thereafter, participants evaluated the (mis)beliefs and best practices described below and indicated how likely they would be to utilize RRs in the future. We additionally invited these authors to provide open comments. Finally, participants provided demographic information, and we thanked and debriefed them.

A total of 86 authors with RR experience participated in the survey, which represents a response rate of 40%. We note that these numbers are comparable to recent investigations surveying authors with experience in publishing RRs (DeHaven et al., 2019; Toth et al., 2021). Robustness analyses (i.e., chi-square tests) revealed no significant differences between respondents and nonrespondents of the experienced author survey in terms of gender, career stage, or departmental affiliation (all p > .05; see Online Supplement). These scholars were, on average, 38.9 years old (SD = 9.2) and mostly male (64.2%). About half of them worked in psychology departments (50.6%), followed by business schools (42.0%). The largest group were full professors (32.1%), followed by untenured post-docs (17.3%), PhD students/candidates, and untenured assistant professors (both 13.6%). On average, these authors had worked on 1.6 accepted RRs (SD = 1.5) and submitted 1.8 RRs (SD = 1.5).
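
The response rate and the kind of nonresponse check mentioned above can be sketched as follows. The response-rate figures come from the text. The chi-square helper is a generic textbook Pearson test for a 2 × 2 table without continuity correction, not the authors' analysis script, and the gender split used in the example is hypothetical: the actual counts are in the Online Supplement and are not reproduced here.

```python
import math

invited, responded = 215, 86           # figures reported in the text
response_rate = responded / invited    # 0.40


def chi_square_2x2(a: int, b: int, c: int, d: int) -> tuple[float, float]:
    """Pearson chi-square (no continuity correction) for the table
    [[a, b], [c, d]]; returns (statistic, p-value) with 1 degree of freedom."""
    n = a + b + c + d
    row1, row2 = a + b, c + d
    col1, col2 = a + c, b + d
    stat = n * (a * d - b * c) ** 2 / (row1 * row2 * col1 * col2)
    # For 1 df, the chi-square survival function equals erfc(sqrt(x / 2)).
    p_value = math.erfc(math.sqrt(stat / 2))
    return stat, p_value


# HYPOTHETICAL split, for illustration only (NOT the study's data):
# rows = respondents (86) vs. nonrespondents (215 - 86 = 129),
# columns = male vs. not male.
stat, p = chi_square_2x2(55, 31, 80, 49)
print(f"response rate = {response_rate:.0%}, chi2 = {stat:.2f}, p = {p:.3f}")
```

A p-value above .05 in such a test would be read, as in the text, as no detectable difference between respondents and nonrespondents on that characteristic. Variables with more than two categories (e.g., career stage) need the general r × c version of the test.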

Samples 2a (Management and Applied Psychology Audience) and 2b (Reviewers/Editors)

Although the respondents in Sample 1 all had first-hand experience in successfully writing RRs, they may be positively biased. This is because they had already invested effort in submitting a manuscript through this format and had a (potentially single) positive experience in this regard; as such, they may not have engaged in counterfactual thinking (i.e., imagining how they would respond if the RR experience had turned out differently or been unsuccessful; see McDermott, 2023) when expressing their opinions about RRs. Our goal with Samples 2a and 2b was to address these shortcomings. We, therefore, contacted management and applied psychology scholars who knew what an RR is but had no (successful) RR experience themselves (Sample 2a) as well as reviewers/editors with experience in handling RRs in the review process (Sample 2b). We pre-registered our assumptions for these samples (https://osf.io/mf4bd) and we provide study materials, data, syntax, and output files (https://osf.io/wseha).

Sample and Procedures

It was our goal to reach as many management and applied psychology scholars as well as RR-experienced reviewers and editors as possible to get generalizable feedback. For both samples, we asked for participation via the listservs described previously and by reaching out to scholars in our network who would fit the criteria for either of the samples. For Sample 2b, in addition, we reached out to all editors of journals that accepted or published at least one of the RRs published in management and applied psychology. We asked them to participate in the survey themselves and either to name the action and/or associate editors of RRs accepted or published in the journal or to forward the survey link to them. Procedures were nearly identical to the ones in the experienced author sample. Exceptions include that participants for Sample 2a had to indicate whether they had previously successfully submitted an RR (in which case the survey was terminated) and/or whether they had reviewed or edited an RR (in which case they were redirected to the survey for Sample 2b). For Sample 2b, we similarly asked whether they had reviewed and/or edited an RR before, and we terminated the survey if they answered no to both questions.

For Sample 2a, a total of 161 management and applied psychology scholars with no (successful) experiences with RRs participated in the study (mean age = 36.4 years, 50.3% male). They mostly worked in business schools (64.9%) and PhD students constituted the largest group of participants (28.6%). For Sample 2b, 59 scholars with experience in reviewing and/or editing RRs participated (mean age = 43.6 years, 62% male). On average, those who edited RRs did so for 3.6 RRs (SD = 3.4) and RR-experienced reviewers had reviewed 2.6 RRs (SD = 2.7). They had 6.6 years of overall editorial experience and 14.9 years of reviewer experience. They mostly worked in psychology departments (52%) and business schools (33%) and as full professors (40.7%).

In all three samples, responses for ratings to the (mis)beliefs and best practices were forced to avoid missing data. In cases where participants responded to some questions but then terminated the survey, we kept the remaining responses to increase statistical power and retain relevant information. Deleting participants who only partially filled in the survey did not affect any significance tests or conclusions described below. In addition, we followed best practices to avoid insufficient effort responding by combining open-ended with forced-choice responses, as well as integrating statements of different valence and direction, among others (Zickar & Keith, 2023). Post hoc checks (e.g., long strings of identical responses, response times, and evaluations of open-ended responses) and robustness analyses revealed that removing participants who potentially demonstrated insufficient effort did not affect the direction or statistical significance of the findings. Finally, excluding participants indicating that their (primary) field of study was outside of management or applied psychology did not affect any of the results and significance levels reported below. All robustness analyses are available in the Online Supplement (Tables S5–S8).

Common Beliefs About Registered Reports

To derive the most relevant (mis)beliefs about RRs, we compiled a list of common beliefs from editorials, commentaries, and theory pieces mentioning the RR format in general (e.g., Hardwicke & Ioannidis, 2018; Mellor, 2017) or introducing this format to a particular management or applied psychology journal (e.g., Antonakis, 2017; Timming et al., 2021). Thereafter, we discussed overlaps and identified the most commonly mentioned (mis)beliefs concerning the potential downsides of RRs. This resulted in 10 (mis)beliefs, to which we added an eleventh one, namely that working on RRs is less enjoyable as compared with conventional manuscript submissions. We added this (mis)belief because this concern has recently been noted in a top-tier applied psychology journal (Collins et al., 2021) and prompted a heated debate (e.g., Times Higher Education, 2021). While we do not claim that this list of concerns is exhaustive, we aimed to produce a list that represents the most frequent, relevant, and impactful (mis)beliefs in management and applied psychology without any unnecessary repetition.

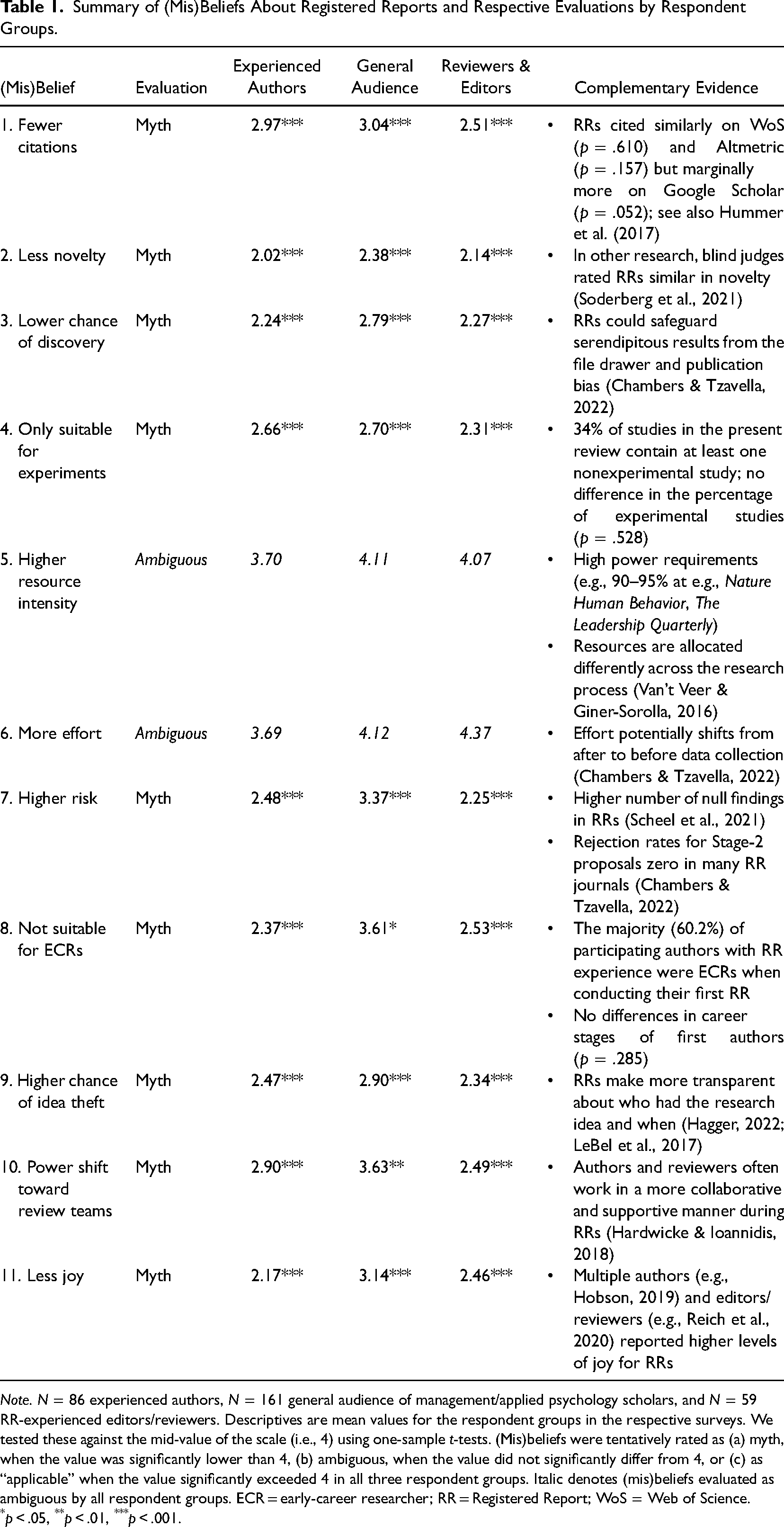

In the following, we discuss each (mis)belief and present the respondent groups’ evaluation thereof. We tested the mean ratings for each (mis)belief with one-sample t-tests against the mid-scale value ([4] on a scale that ranged from strongly disagree [1] to strongly agree [7]). We classified (mis)beliefs preliminarily as “myths” when all three respondent groups significantly disagreed with them (i.e., statistically lower than the mid-point of our scale). If participants’ assessment of a (mis)belief did not differ from this value, we denoted that (mis)belief as currently “ambiguous.” If they agreed with a (mis)belief (i.e., statistically higher than the mid-point of our scale), we marked it as tentatively “applicable.” Where possible and meaningful, we added archival data that compares RRs with conventionally submitted manuscripts to better inform the evaluation of a (mis)belief. We note that the small number of accepted RRs in management and applied psychology (and, thus, the number of authors and editors/reviewers with RR experiences) limits the generalizability of both the subjective assessments and the additional archival data. Furthermore, it is important to note that to make causal claims about the differences between RRs and conventionally submitted manuscripts, one would need to conduct randomized controlled trials. We echo Nosek et al. (2020) that such an experimental approach would only be feasible in cooperation with a journal that assigns authors to submit papers via the RR or conventional route. When interpreting the subjective evaluations from the three respondent groups and the archival data we collected, these results should not be taken as definitive, universal truths. Rather, our results constitute a preliminary assessment of the status quo of RRs as perceived by relevant stakeholders and evaluated through initial data. Table 1 provides an overview of (mis)beliefs and participants’ evaluations of these.
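The classification rule described above can be sketched in code. This is a minimal illustration and not the syntax used in the study: the names `GroupResult`, `t_and_d`, `direction`, and `classify`, the .05 threshold, and the "mixed" label for cases in which the respondent groups do not agree are all assumptions made for this example. The sketch takes each group's mean rating and the two-sided p-value from its one-sample t-test against the scale midpoint, and it also shows how the t statistic and Cohen's d follow from raw ratings.

```python
from dataclasses import dataclass
from math import sqrt
from statistics import mean, stdev

MIDPOINT = 4.0  # mid-scale value of the 1-7 agreement scale
ALPHA = 0.05    # assumed significance threshold (hypothetical default)


@dataclass
class GroupResult:
    mean: float     # mean rating in one respondent group
    p_value: float  # two-sided p from a one-sample t-test against MIDPOINT


def t_and_d(ratings, midpoint=MIDPOINT):
    """Return (t statistic, Cohen's d) for a one-sample test against midpoint."""
    sd = stdev(ratings)                  # sample standard deviation (n - 1)
    d = (mean(ratings) - midpoint) / sd  # signed standardized mean difference
    return d * sqrt(len(ratings)), d     # t = d * sqrt(n)


def direction(result, alpha=ALPHA, midpoint=MIDPOINT):
    """Label a single group's evaluation of a (mis)belief."""
    if result.p_value >= alpha:
        return "ambiguous"
    return "myth" if result.mean < midpoint else "applicable"


def classify(results):
    """Return the shared label, or 'mixed' (an assumed fallback) otherwise."""
    labels = {direction(r) for r in results}
    return labels.pop() if len(labels) == 1 else "mixed"
```

Under these assumptions, three groups with means below 4 and p < .05 are labeled a myth, whereas groups whose p-values all exceed the threshold are labeled ambiguous. Note that d is signed in this sketch (negative for disagreement), whereas effect sizes in the text are reported as magnitudes.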

Table 1. Summary of (Mis)Beliefs About Registered Reports and Respective Evaluations by Respondent Groups.

Note. N = 86 experienced authors, N = 161 general audience of management/applied psychology scholars, and N = 59 RR-experienced editors/reviewers. Descriptives are mean values for the respondent groups in the respective surveys. We tested these against the mid-value of the scale (i.e., 4) using one-sample t-tests. (Mis)beliefs were tentatively rated as (a) myth, when the value was significantly lower than 4, (b) ambiguous, when the value did not significantly differ from 4, or (c) as “applicable” when the value significantly exceeded 4 in all three respondent groups. Italic denotes (mis)beliefs evaluated as ambiguous by all respondent groups. ECR = early-career researcher; RR = Registered Report; WoS = Web of Science.

*p < .05, **p < .01, ***p < .001.

Although citation numbers and impact factors are far from ideal measures of academic performance and quality (Simons, 2008), they are still among the most commonly used evaluation criteria (Nkomo, 2009). In this regard, authors have repeatedly voiced concerns that publishing RRs may prove detrimental to their careers because such manuscripts might receive fewer citations when compared with conventionally submitted manuscripts (Roloff & Zyphur, 2019; Weinhardt et al., 2019). The main reason behind this concern is that RRs combat publication bias by transparently reporting null results (Scheel et al., 2021). Given the thirst for statistical significance in management and applied psychology (Lewin et al., 2016), scholars may therefore fear that RRs may receive less attention from other scholars (Grote & Cortina, 2018).

Neither experienced RR authors nor the general management and applied psychology audience nor editors and reviewers with experience handling RR manuscripts shared this concern, Ms = 2.51 to 3.04, all p < .001, Cohen's d = 0.57 to 1.04. To obtain additional data on such fears, we followed the guidance of a pre-print by Hummer et al. (2017) and investigated this concern empirically through a naturalistic comparative approach. In particular, we examined differences in citation counts (Web of Science and Google Scholar) and Altmetric scores between the RRs in this review and conventionally submitted comparison papers. With available citations/scores for 18 RRs and 52 comparison papers, results suggest that RRs do not differ in terms of citations on Web of Science (Md = 0.00, z = −0.51, p = .610) or scores on Altmetric (Md = 0.00, z = 1.42, p = .157) but received marginally more citations on Google Scholar (Md = 0.40, z = 1.94, p = .052). These results mirror similar findings in other fields (Hummer et al., 2017). Overall, these evaluations from different respondent groups and additional analyses of citation counts suggest that concerns about fewer citations may be unwarranted.

RRs Include Ideas That Are Less Novel: Myth

Although there has been heated debate as to the extent to which scientific output should be evaluated based on novelty (Cornelissen & Durand, 2012), academics and media outlets favor novel research over more well-known phenomena (Antonakis, 2017). As such, worries that RRs contain fewer novel ideas or reduce the novelty of scientific output in general (DeHaven et al., 2019; Higgs & Gelman, 2021) may limit scholars’ willingness to utilize this submission format.

However, all respondent groups strongly disagreed with this statement, Ms = 2.02 to 2.38, all p < .001, d = 1.05 to 1.72. A study by Soderberg et al. (2021) provides additional evidence for this evaluation from outside of the fields of management and applied psychology. They asked researchers to rate RRs and non-RRs on a wide range of criteria, including novelty (with the scholars being blind to which articles belonged to which category). They found that there was no difference in terms of novelty between RRs and non-RRs, with RRs being rated slightly (but not significantly) higher in novelty. Taken together, these initial results and the different respondent groups’ assessments suggest that worries regarding RRs studying less novel topics or presenting less novel findings may be discarded as unfounded myths.

RRs Reduce the Chance of Discovery (e.g., of Interesting/Not Anticipated Results): Myth

Across sciences, there are many prominent examples of serendipitous or post hoc insights that have greatly benefited the academy and society at large (Roberts, 1989). Some scholars have voiced concerns that approaching research primarily from a registered, confirmatory perspective may bind scientists to mechanistic approaches that prevent additional discoveries beyond a priori hypothesis testing (Baumeister, 2016; Cropley, 2018). Thus, management and applied psychology scholars may fear “losing out” on intriguing findings that they did not think of beforehand, which may, in turn, reduce their motivation to conduct RRs (Collins et al., 2021).

None of the respondent groups seemed to share this worry, Ms = 2.24 to 2.79, all p < .001, d = 0.67 to 1.16. In this regard, Soderberg et al. (2021) also showed that RRs are rated as containing more (important) discoveries than conventionally submitted manuscripts. Importantly, the goal of RRs is not to impede serendipity; rather, this format aims to make transparent which findings are based on a priori assumptions and which on post hoc exploration (Higgs & Gelman, 2021). In fact, given that serendipity is crucial for scientific progress in management and applied psychology (Moore et al., 2022), RRs can play an active role in encouraging such findings. While scholars may fear that specific findings are not sufficiently interesting or are excessively critical of prevalent paradigms to warrant publication, the IPA inherent in the RR process can safeguard serendipitous findings from publication bias and the file drawer (Chambers & Tzavella, 2022).

RRs Are Only Suitable for Experimental Studies: Myth

The fields of management and applied psychology have a larger variety of methodological approaches and rely on experiments to a lesser extent when compared with other disciplines (e.g., social psychology). Scholars who are interested in using, for example, survey designs, archival data, or a qualitative approach may therefore discard the format of RRs altogether should they believe that this submission format only fits experiments. Such worries have been reported by several management (see Timming et al., 2021) and psychology scholars (Reich, 2021).

RR-experienced authors, the general management and applied psychology audience, and RR-experienced reviewers and editors disagreed with this statement, Ms = 2.31 to 2.70, all p < .001, d = 0.81 to 1.20. Additional data similarly support this assessment. First, a third of RRs in the present review used nonexperimental formats (34%), including (field) surveys (18%) and archival data (12%). Second, we again utilized modified z-scores (Hummer et al., 2017) to further examine potential differences in the type of studies conducted (experimental vs. not) across RRs and comparable conventionally submitted manuscripts. These analyses revealed no differences in terms of the percentage of experimental studies included in a paper (0 to 100%; Md = 0.00, z = 0.63, p = .528). Finally, the present review revealed that scholars may rely on the RR format even in the absence of formal and/or confirmatory hypotheses. In particular, Fasey et al. (2021) relied on a mixed-methods convergent design (Turner et al., 2017) that utilized both qualitative and quantitative data to specify and examine organizational resilience. While there is certainly room for more nonexperimental RRs in the future, our review indicates that the claim that RRs are only suited for experimental approaches is rather a myth.

RRs Require More Resources (e.g., Time and Money): Ambiguous

Temporal and monetary resources are scarce among academics (Certo et al., 2010). As such, worries that larger resource investments will be required for RRs when compared with conventional submissions may play a role in why authors are hesitant to approach projects through this format (Carsten et al., in press). Allen and Mehler (2019, p. 5) noted, for example, that some power requirements (e.g., min. 95% at Nature Human Behaviour) may “run up against feasibility constraints.” Similarly, scholars voiced concerns that for RRs, “the time cost inherent in these practices is often conceptualized as an inconvenience” (Tiokhin et al., 2021, p. 862; see also Reich, 2021). While the abovementioned articles also state that these demands have positive consequences for the scientific community, these additional requirements may limit many scholars’ willingness or ability to conduct RRs.

The three scholarly respondent groups had heterogeneous views concerning this worry, such that there was neither strong agreement nor disagreement with the belief that RRs require more resources, Ms = 3.70 to 4.11, p = .09 to .78, d = 0.04 to 0.19. Indeed, authors may have to invest significantly more effort in planning data collection and analyses beforehand and subsequently spend less time and effort after collecting the data or during the review process (Chambers & Tzavella, 2022; Van’t Veer & Giner-Sorolla, 2016). The heterogeneous views regarding resources required for RRs were also mirrored in the experienced authors’ qualitative responses. For example, one author stated that “the downside is that completing a Registered Report style manuscript has taken me significantly longer than my other kinds of research” and a participant from the reviewer/editor sample noted, “There's a huge time cost to registered reports … It needs to be factored into projects.” Other scholars suggested, however, that, when conducting an RR, the investments are simply allocated differently as compared to a conventionally submitted manuscript (i.e., higher time investment before rather than after the data collection). Concerning monetary resources, RRs are often higher powered (Soderberg et al., 2021) and thus require larger (and more expensive) samples. In summary, it seems that as of now, this concern may apply to some extent—showcasing the need for management and applied psychology to consider potentially higher resource investments (at least in some cases).
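The link between statistical power and sample size noted above can be illustrated with a short calculation. The following is a rough sketch and not an analysis from this review: it assumes a two-sided comparison of two independent group means, uses the textbook normal approximation n ≈ 2(z₁₋α/₂ + z_power)² / d² per group, and plugs in a hypothetical effect size of d = 0.5. The function name `n_per_group` is likewise an assumption for this example; dedicated power software will give slightly larger, exact values.

```python
from math import ceil
from statistics import NormalDist


def n_per_group(effect_size_d, power, alpha=0.05):
    """Approximate n per group for a two-sided, two-group mean comparison.

    Uses the normal approximation, which slightly understates the
    sample size required by an exact t-based calculation.
    """
    z = NormalDist().inv_cdf          # standard normal quantile function
    z_alpha = z(1 - alpha / 2)        # critical value for the two-sided test
    z_power = z(power)                # quantile matching the target power
    return ceil(2 * (z_alpha + z_power) ** 2 / effect_size_d ** 2)


# Compare power levels for an assumed (hypothetical) effect of d = 0.5.
for power in (0.70, 0.80, 0.95):
    print(f"power = {power:.2f}: about {n_per_group(0.5, power)} per group")
```

Under these assumptions, moving from 80% to 95% power raises the required sample per group by roughly two thirds, which illustrates why stricter power requirements can translate into noticeably higher data collection costs.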

RRs Require More Effort: Ambiguous

While the previous belief focused on the higher resource intensity of RRs in terms of time and money, there are also worries about RRs requiring more intensive and demanding work when compared with conventional manuscripts. Weinhardt et al. (2019, p. 383) have stated, for example, that “[i]t is an open question whether adopting Registered Reports results in more of an effort for the author” (see also McAbee et al., 2018). The need to invest more energy and intensive work in an RR when compared with the already intensive conventional submission process may discourage researchers from conducting RRs.

All of the groups of participants voiced ambiguous opinions as to whether RRs require more effort, such that no group significantly agreed or disagreed with the statement, Ms = 3.69 to 4.37, p = .09 to .39, d = 0.07 to 0.21. Some open comments by experienced authors illustrate these divergent views. For example, concerning the process of conducting an RR, one author mentioned that “[i]t was a lot of work, more than any other project I have undertaken.” By contrast, other scholars noted that RRs do not require more effort per se but that the effort involved (as compared with conventional submissions) is higher before submitting a paper for review but lower after data collection. This view was mirrored by several experienced authors in our survey, with one, for example, stating that “[t]he starkest experience was the shift in workload from post-data to pre-data collection. Overall, I think the paper needed the same time until publication.” In summary, there are divergent opinions as to whether RRs require more effort or not, and we encourage future research to examine which boundary conditions (e.g., outlet, methodological approach, and research topic) affect (differences in) the amount of effort that authors need to invest (Nosek et al., 2020).

RRs Are Riskier (e.g., Because Reviewers Might Reject the Stage-2 Proposal After All): Myth

For most scholars, securing a “safe” publication on their CVs is crucial for academic success, including getting a job and tenure. One fear associated with the RR process is that a review team may reject a manuscript if the associated results are not statistically significant or sufficiently “novel.” For example, mirroring similar worries across fields (Chambers et al., 2014; Chambers & Tzavella, 2022), a participant with no RR experience noted that “Part of the dilemma with registered reports is that if they would be rejected, it is difficult to place elsewhere.”

RR-experienced authors, the broad management and applied psychology audience, and reviewers/editors with RR experiences all disagreed with this statement, Ms = 2.25 to 3.37, all p < .001, d = 0.37 to 1.15. In fact, published RRs include a substantially higher number of null results than conventional manuscripts (Scheel et al., 2021). Supporting the view that null findings are unlikely to lead to rejection at stage-2, several journal editors in other fields indicated that their rejection rate after IPA is currently zero (Chambers & Tzavella, 2022). Or in the words of an editor of a management journal who wrote to us during this data collection, RRs “are the fail-safe way to get an acceptance.” We note, however, that even in the publication process of RRs, reviewers may still be narrowly focused on results having to be statistically significant or novel (Smaldino & McElreath, 2016). For example, one RR author explained that during an RR project (which was beyond the focus of the present review), the stage-2 paper was rejected (due to deviations from the stage-1 protocol), and other authors recalled debates with review teams after obtaining null results. Particularly given potentially high costs in terms of resources and effort associated with RRs, such stage-2 rejections may constitute severe and costly setbacks for scholars. Although such cases are extremely rare, it is relevant to educate scholars (e.g., on how to conscientiously adhere to their stage-1 protocols), and editors and reviewers (e.g., what constitutes a deviation from a stage-1 protocol), to limit the number of these instances to valid rejections (i.e., actual, severe violations of stage-1 protocols).

RRs Are Less Suited for Early-Career Researchers: Myth

A prominent worry in academia is that RRs are not suited for ECRs (Allen & Mehler, 2019). The reasons for that are mentioned in the previous beliefs (i.e., RRs lead to fewer citations, require excessive resources, and may limit the possibility of top publications).

However, all respondent groups disagreed with this worry, Ms = 2.37 to 3.61, p < .001 to p = .011, d = 0.20 to 1.22. Additional empirical evidence also suggests that RRs may constitute a valuable submission format for ECRs. First, the majority of the authors of RRs in our sample worked on their first RRs while they were ECRs (60.2%; namely 28.4% PhD student/candidate, 15.9% untenured assistant professor, 15.9% untenured post doc). Second, when comparing RRs and comparable conventionally submitted manuscripts in terms of first authors’ career stages, we found no differences across submission formats (Md = 0.00, z = −1.07, p = .285). Journal editors in other fields even reported that RRs were more likely to be led by ECRs when compared with conventional submissions (Chambers & Tzavella, 2022). It should be noted that some participants mentioned that RRs “should be used in moderation for Ph.D. students because it takes so much longer to finish a RR.” Nonetheless, it is worth emphasizing the critical benefits of conducting an RR, “especially as an early-career researcher” (Hobson, 2019, p. 1010). An RR may help an ECR to secure an early publication that they can include on their CV and minimize the risk of investing resources in a project that may be discarded due to nonsignificant findings (Allen & Mehler, 2019; Henderson & Chambers, 2022). Thus, while ECRs should consider the costs of RRs, our review suggests that RRs constitute a potentially more beneficial approach for them compared with conventional submissions.

Researchers’ Ideas Are More Likely to Get Stolen Through an RR Format: Myth

The fear of having their research stolen (e.g., by reviewers) may prevent researchers from conducting RRs; this anxiety is commonly noted by scholars in management and applied psychology (see Banks et al., 2019; Toth et al., 2021). Although preliminary evidence shows that this scenario is unlikely (LeBel et al., 2017), in theory, one could imagine that reviewers with more resources could steal a research idea while the original authors are undergoing the RR process.

However, all of the respondent groups strongly disagreed with this worry, Ms = 2.34 to 2.90, all p < .001, d = 0.69 to 1.10. The first reason is that only the review team is likely to view the stage-1 proposal (in most cases, authors can opt to not make the stage-1 proposal public until the final publication). If an unscrupulous reviewer or editor were to publish a similar or identical study, the other members of the review team might easily become aware of this fact (Van’t Veer & Giner-Sorolla, 2016). Second, IPA guarantees publication irrespective of novelty. Thus, even in the unlikely event of a research idea or project being stolen, this would have no bearing on the publication of a paper that has received IPA. As such, the logic inherent in the RR process makes scooping less likely. Moreover, at the latest after final publication, there will be a public and time-stamped record of who originally submitted a given research idea and when, making it unappealing for anyone to attempt to steal registered research (Hagger, 2022; LeBel et al., 2017).

RRs Give Review Teams too Much Power to Decide What Research is Undertaken: Myth

In most instances, authors are excited about their own initial research ideas. One inherent worry regarding RR submission processes is therefore that a review team might substantially change the research question in a study such that it suits an idea, paradigm, or method they—but potentially not the authors—find interesting or suitable (see Chambers & Tzavella, 2022). These concerns were also voiced by some of the scholars in this survey, with one editor/reviewer, for example, stating that “Some (reviewers) can be more lenient with the planned study and others dictatorial. It's probably beneficial to provide guidance on how much reviewers should seek to influence the planned study.”

Overall, however, RR authors, the general management and applied psychology audience, as well as reviewers and editors with experience handling RRs rated this worry as a myth, Ms = 2.49 to 3.63, p < .001 to p = .002, d = 0.24 to 1.11. In fact, multiple scholars noted in their open comments that they found the communication with the review team more supportive and collaborative when compared with that in a conventional submission process. The reason for this is that instead of identifying failures and drawbacks of a study, which authors are often incapable of addressing at the point in time when they receive such feedback, reviewers may actually strengthen the research design through their expertise (Hardwicke & Ioannidis, 2018; Van’t Veer & Giner-Sorolla, 2016). While this suggests that worries about giving the review team excessive power may be unwarranted, we concur with the comment from an author with RR experience in the survey that “you have to be careful to find a balance between ‘your plan’ and the potential plans of reviewers. It is important to stay true to your research and taking the advice of the reviewers” (emphasis added).

Less Joy: Myth

A final worry that has sparked discussion in the academy recently is that even should RRs have many positive aspects, they could have the drawback that they are simply less enjoyable than conventional submissions (Collins et al., 2021). The reason for this concern is that scholars may become excessively preoccupied with a mechanistic and potentially anxiety-inducing process of (dis)confirming hypotheses—thereby potentially eliminating the enjoyable exploration or discovery aspect of the research process (Weinhardt et al., 2019). While this concern may seem less consequential than others, many academics chose this profession due to their intrinsic motivation for this work. Accordingly, switching to a format that is potentially less enjoyable may jeopardize their work engagement and satisfaction.

All respondent groups, however, disagreed with this presumption, Ms = 2.17 to 3.14, all p < .001, d = 0.54 to 1.41. Several narrative comments reflect these positive experiences. For example, one author who had experiences with RRs wrote, “I found the processes overall more enjoyable (as compared to normal manuscripts).” This perspective is in line with more general reports that RRs “brought the joy back into data collection” (Hobson, 2019, p. 1010). Reich et al. (2020, p. 1) even noted that editors and authors “were enthusiastic” following their experiences with the format, comparing the move from a conventional to an RR process to “upgrading from a typewriter to a computer.” While it is likely unavoidable that authors will also have negative RR experiences (especially as this format becomes more established), our results suggest that there is no real reason to assume that RRs are, in general, less enjoyable than conventional review processes.

Summary of (Mis)Beliefs

Many prevalent beliefs (e.g., fewer citations, less suitable for many research questions, and less intriguing results) seem to limit the widespread usage of RRs in the fields of management and applied psychology. Importantly, however, authors with experience in conducting research through the RR format, the general management and applied psychology audience, and reviewers and editors with experience handling RR manuscripts all suggest that most of these concerns, at least at this stage, may be unwarranted myths. Thus, our survey suggests that RRs offer many positive consequences not only for the academy but also for individual scholars. Potential exceptions include the resources and effort that scholars may have to invest in conducting RRs. Specifically, the responses provided by the respondent groups in our sample, as well as additional insights, suggest that RRs may at times require more resources (e.g., larger samples and longer review processes) and greater workloads. To put matters into perspective, however, recent work suggests that even should such larger investments be necessary in some cases, the potential benefits and the opportunity to produce more high-quality, trustworthy research will likely outweigh these costs in most instances (LeBel et al., 2017; Tiokhin et al., 2021).

Registered Reports: Recommendations and Best Practices

Having scrutinized prevalent (mis)beliefs concerning RRs, we next aimed to develop hands-on, practicable guidelines that authors can readily utilize when approaching an RR project in management and applied psychology. To establish these recommendations, we applied a multistep procedure similar to that outlined previously for the (mis)beliefs. First, we searched for articles mentioning RRs in management and applied psychology using the same databases and keywords described previously. We relied on editorials of journals introducing this format, as well as theoretical, commentary or narrative discussions of RRs and potential guidelines both within (e.g., Brinkerink, 2023; Gattiker et al., 2021; Grand et al., 2018) and outside these fields (e.g., Chambers & Tzavella, 2022; Kiyonaga & Scimeca, 2019; Van’t Veer & Giner-Sorolla, 2016). We then extracted best practices relevant to the fields of management and applied psychology. Finally, we screened the RRs included in this review for laudable approaches and examples. Importantly, we want to point interested readers to existing individual journal guidelines regarding RRs (e.g., The Leadership Quarterly, Journal of Occupational and Organizational Psychology; see also Table S3 for links to RR guidelines across management and applied psychology journals). These journal guidelines can provide specific details and recommendations (e.g., whether statistical power should be min. 70% or 95%) that interested authors should consider. Yet, we note that these guidelines focus on declarative knowledge such that they outline what an RR is and what should be included in a submission (e.g., power analysis, using future tense in the methods section, etc.). By contrast, the best practices outlined below focus on procedural knowledge, or in other words, they provide an evidence-based guidebook on how authors should approach an RR submission in management and applied psychology in particular. 
Nonetheless, we encourage authors to reach out to editors or authors who have dealt with RRs at the journal to which they aim to submit their paper in order to get highly contextualized recommendations that may not be reflected in the recommendations below.

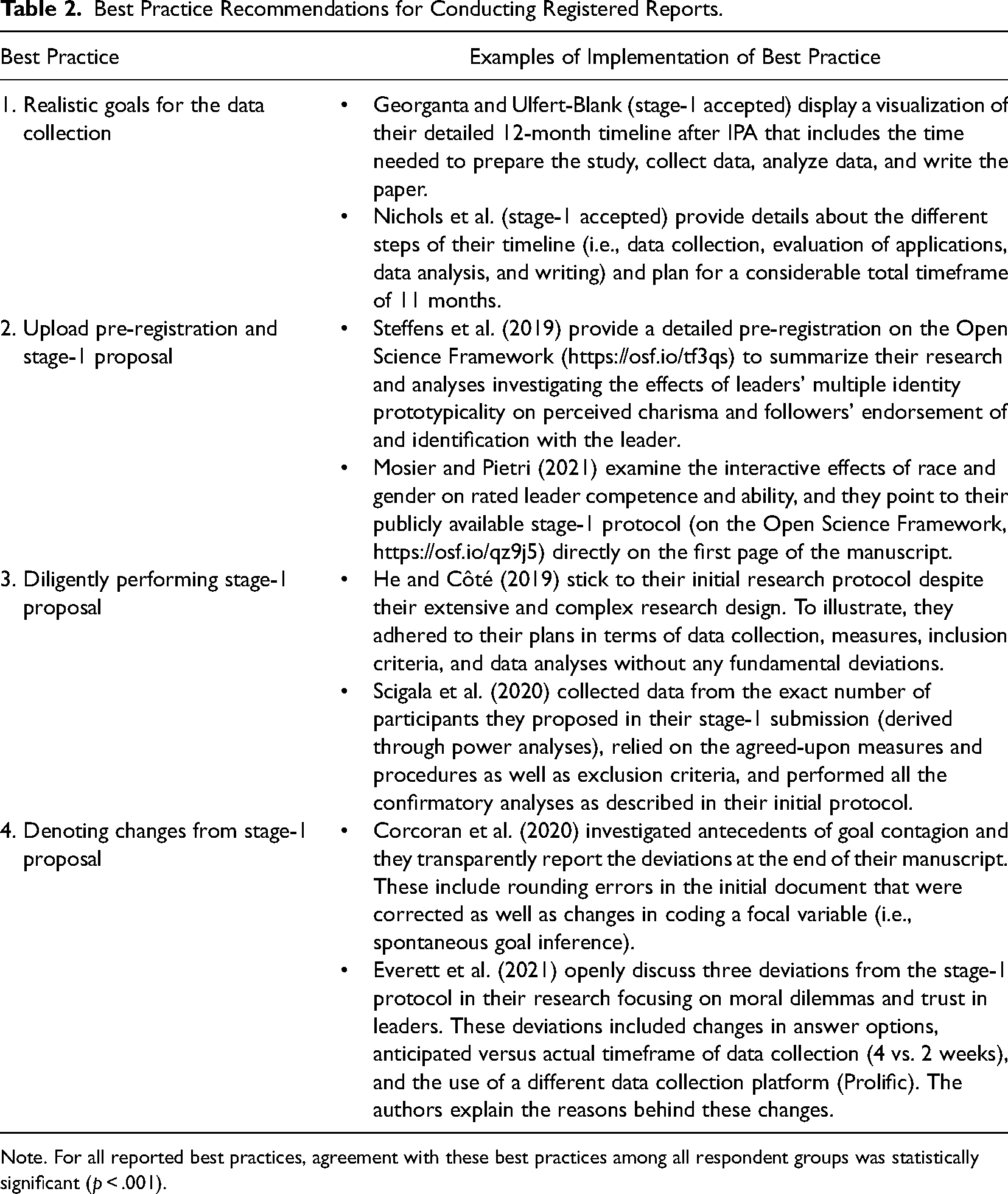

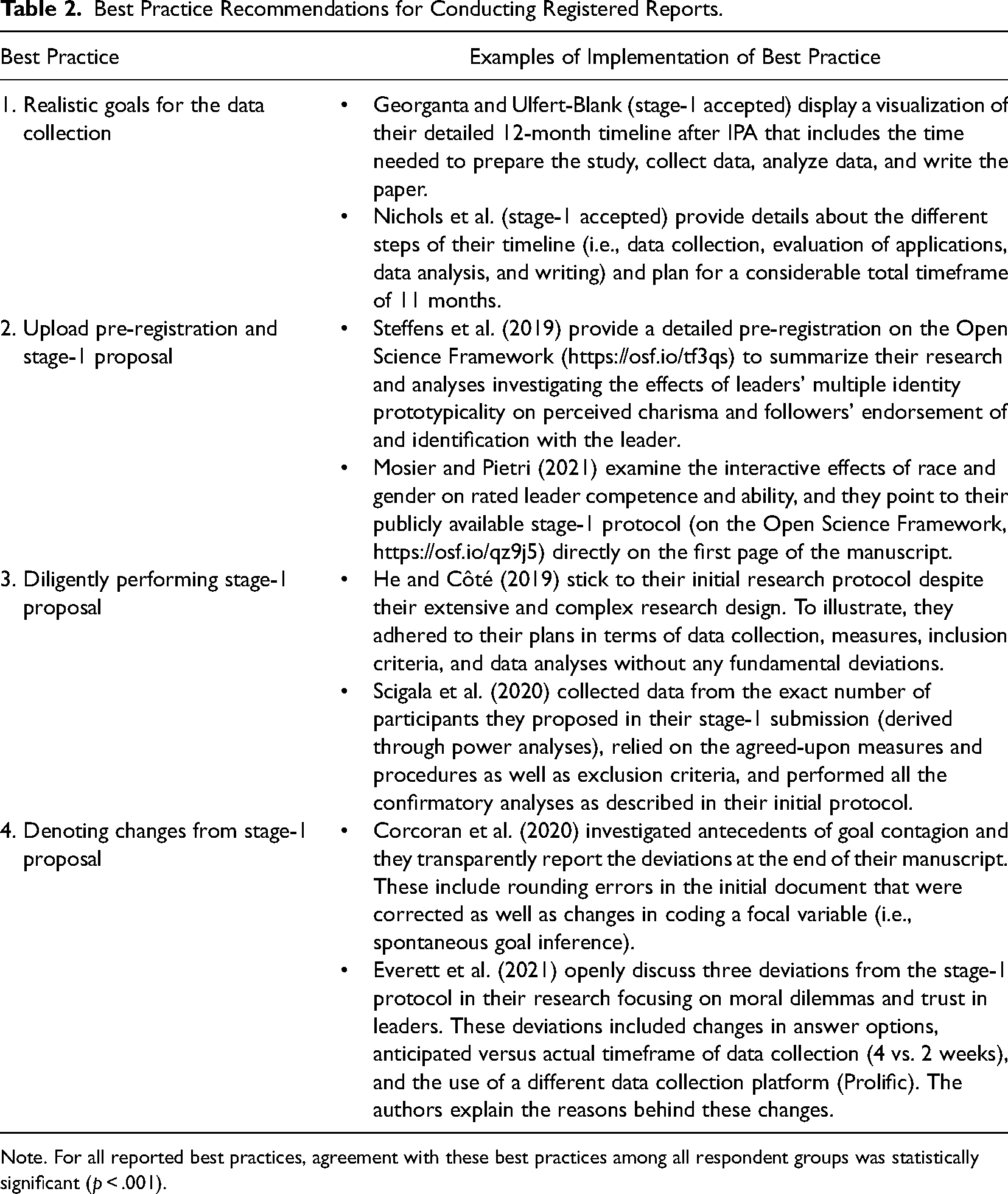

Through the above three-step process, we identified 12 potential best practices. We then let Samples 1, 2a, and 2b evaluate whether each guideline constitutes a best practice on a scale from strongly disagree (1) to strongly agree (7). We included a best practice if all respondent groups significantly agreed with a guideline being a best practice (i.e., significantly higher than 4). The participants overall aligned in their recommendations, such that they uniformly and strongly agreed that 11 recommendations constitute best practices (all p < .001, average d = 1.85 to 2.58). Of note, many of these recommendations are helpful for different types of research (i.e., also non-RR submissions). To focus on those guidelines that are most different from conventionally submitted manuscripts, we highlight and discuss below in detail four (out of these 11) best practices and provide examples in accepted RR manuscripts. While some of these four best practices can be useful as guidelines for conventionally submitted manuscripts, we deemed that these are the ones most relevant for authors new to the RR format. Table 2 provides an overview of these four best practices and examples of publications that showcase these practices. In addition, Table S4 in the Online Supplement displays all 11 best practices including positive examples from accepted RRs in management and applied psychology.

Best Practice Recommendations for Conducting Registered Reports.

Note. For all reported best practices, agreement with these best practices among all respondent groups was statistically significant (p < .001).

Because data for RRs are not collected before manuscript submission, the scope of the data collection process presents both opportunities and challenges. Given these potential opportunities, authors and review teams should carefully deliberate on the best possible approach to examining the phenomenon in question. With this in mind, one could consider employing highly sophisticated research designs, including those with enormous sample sizes, multiple waves of data measurement, and/or a variety of different experimental intervention groups. While doing so, however, authors as well as review teams should consider which methodological approaches are feasible in light of resource constraints (Chambers & Tzavella, 2022). The feasibility of sample size, timeline, and methods is a common evaluation criterion for RRs in many journals, and conscientiously assessing these aspects a priori can help to prevent later deviations from the stage-1 protocol. We recommend that, while attempting to conduct the best possible test of a research question, one should rely on the simplest, most resource-efficient approach to do so (Kiyonaga & Scimeca, 2019). Scholars and review teams should also agree on a contingency plan for addressing unforeseen obstacles and difficulties during data collection and analysis, and they should build buffer time into their schedule. In some instances, authors may reach out to editors to discuss potential constraints in timing or resources to avoid pitfalls after stage-1 acceptance (Henderson & Chambers, 2022).

In terms of examples among existing RRs, Georganta and Ulfert-Blank (stage-1 accepted) provide a visualization of a detailed 12-month timeline after IPA that includes the time needed to prepare the suggested study, collect and analyze data, and write the paper. Nichols et al. (stage-1 accepted) also outline the different steps of their timeline (including data collection, coding of data/materials, data analysis, and writing) and plan for an extensive total timeframe of 11 months.

Upload Pre-Registration and Stage-1 Proposal

Once a stage-1 protocol has been approved, authors should upload a pre-registration as well as their stage-1 proposal to an online repository. As mentioned previously, a pre-registration includes a detailed, time-stamped plan outlining the hypotheses, methodology, and analyses (commonly uploaded to www.osf.io or www.aspredicted.org; see Logg & Dorison, 2021 and Toth et al., 2021, for a detailed discussion of the pre-registration process). The purpose of the pre-registration is to concisely and, after acceptance, publicly present the main hypotheses and planned approaches of the agreed-upon stage-1 protocol to other researchers. We (and the majority of journals offering RRs) additionally recommend uploading the agreed-upon stage-1 protocol to an online repository (such as the Open Science Framework). The upload of the finalized stage-1 protocol can be used as a reference point to compare the final stage-2 protocol to the propositions made at stage-1 and check whether authors deviated from the agreed-upon protocol. Furthermore, other scholars can treat openly available stage-1 protocols as best practices on how to prepare an RR submission and further build on these. Notably, if they wish, authors and/or journals can choose to “freeze” the upload of a stage-1 protocol with a temporary embargo until final stage-2 acceptance.

Concerning existing examples, Mosier and Pietri (2021) investigate the effects of race and gender on rated leader competence and leadership ability; their publicly available stage-1 protocol (on the Open Science Framework; https://osf.io/qz9j5) is directly visible on the first page of the manuscript. In another example, Steffens et al. (2019) uploaded a detailed pre-registration to the Open Science Framework (https://osf.io/tf3qs) to summarize their research question and proposed analyses examining the effects of leaders’ multiple identity prototypicality on perceived charisma and followers’ endorsement of and identification with the leader.

Diligently Performing the Stage-1 Proposal

Diligent execution of the stage-1 protocol is the most relevant criterion for acceptance of the full manuscript at stage-2 (Chambers & Tzavella, 2022). We therefore recommend that authors make conscientious adherence to their initial plans a priority and that review teams carefully check whether the stage-1 proposal was executed as proposed. This includes gathering data from exactly the number of participants/observations initially stated, using the proposed methods (e.g., measures), relying on the agreed-upon exclusion criteria, and performing the exact analyses as originally stated (ideally utilizing the statistical code prepared during the stage-1 review; Gattiker et al., 2021). In the methods section, such adherence would ideally mean that authors, in most instances, only need to fill in some values (e.g., sample demographics) and change the wording from future to past tense. Similarly, authors should build on and consult their stage-1 protocol while writing up every step of the results section (data analyses, exact wording and testing of hypotheses, confirmatory vs. exploratory analyses, etc.).

Scigala et al. (2020) demonstrate exemplary conscientiousness in executing their stage-1 protocol. In particular, they sampled data from the exact number of participants they proposed in their stage-1 submission (derived through power analyses), used the measures and procedures as well as exclusion criteria agreed upon, and they performed all the confirmatory analyses as described in their initial protocol. Similarly, despite their extensive and complex research design, He and Côté (2019) stuck to their initial research protocol, for example, in terms of data collection, measures, inclusion criteria, and analyses without any fundamental deviations.

Denoting Changes From Stage-1 Proposal

Although adhering to the stage-1 protocol is crucial for successful manuscript acceptance at stage-2 (Kiyonaga & Scimeca, 2019), deviations from the stage-1 submission are both prevalent and allowed (Toth et al., 2021; Van’t Veer & Giner-Sorolla, 2016). Two points are worth considering, however, when deviating from the agreed-upon protocol, namely the magnitude of the deviation and transparency. First, should authors find themselves having to substantially deviate from the stage-1 protocol, it is recommended that they immediately consult the editor of the journal to which they are submitting before proceeding further (Chambers & Tzavella, 2022; The Leadership Quarterly, 2022). Such deviations may include changes in the initially suggested sample size, in exclusion criteria (e.g., due to unforeseen technical error), in data collection mode (e.g., online instead of in-person), or in focal variables (e.g., the use of a different measure or operationalization; Toth et al., 2021). While some authors might feel anxious about reaching out to editors regarding these deviations, we note that journals often specifically encourage this practice in case of deviations from the agreed-upon protocol (e.g., Nature Human Behaviour; The Leadership Quarterly). Second, authors need to be transparent about any (even minor) changes from the stage-1 protocol to prevent rejection at stage-2 (Gattiker et al., 2021; Nosek & Lakens, 2014). We recommend a statement (or an overview table/checklist), placed, for example, at the end of the methods section, that clearly outlines whether any deviations occurred and, if so, in which cases. In such a statement, the authors should explain why and how exactly the procedures or analyses deviated from those outlined in the stage-1 protocol.
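
A deviation overview of this kind can be kept in a simple structured form throughout data collection. The following Python sketch shows one possible way to do so; the field names, the function, and the example entry are hypothetical and do not reflect any journal's required template.

```python
from dataclasses import dataclass


@dataclass
class Deviation:
    """One documented departure from the stage-1 protocol (hypothetical fields)."""
    aspect: str             # part of the protocol affected, e.g., "sample size"
    planned: str            # what the stage-1 protocol specified
    actual: str             # what was actually done
    reason: str             # why the change was necessary
    editor_consulted: bool  # whether the editor was contacted about the change


def deviation_statement(deviations):
    """Build a plain-text statement listing all deviations, or stating that none occurred."""
    if not deviations:
        return "No deviations from the stage-1 protocol occurred."
    lines = [f"{len(deviations)} deviation(s) from the stage-1 protocol occurred:"]
    for number, dev in enumerate(deviations, start=1):
        consulted = "yes" if dev.editor_consulted else "no"
        lines.append(
            f"{number}. {dev.aspect}: planned '{dev.planned}', actual '{dev.actual}'. "
            f"Reason: {dev.reason}. Editor consulted: {consulted}."
        )
    return "\n".join(lines)


# Hypothetical example entry, for illustration only
log = [
    Deviation(
        aspect="data collection mode",
        planned="in-person",
        actual="online",
        reason="laboratory access was unavailable",
        editor_consulted=True,
    )
]
print(deviation_statement(log))
```

Recording each entry at the moment a change is made, rather than reconstructing it at stage-2, makes it easier to report even minor deviations completely.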

In terms of best practices, several RRs thoroughly disclose changes from the agreed-upon stage-1 protocol. For example, Everett et al. (2021) openly discuss three deviations in their research on moral dilemmas and trust in leaders. These deviations included changes in the answer options of a comprehension question (because the authors noted, in a soft launch, that the initial wording was misleading), the time anticipated versus needed for data collection (four instead of two weeks), and the use of a different data collection platform than initially outlined (Prolific instead of Lucid). The authors highlight these deviations clearly, provide reasons for them, and mention that they had discussed them with their editor. Similarly, Corcoran et al. (2020), who investigated antecedents of goal contagion, transparently reported their deviations at the end of the manuscript. These deviations included rounding errors in the initial document that were corrected as well as changes in the coding of a focal variable (i.e., spontaneous goal inference).

Discussion

The present review of existing RRs, together with feedback from authors with RR experience, the general management and applied psychology audience, and reviewers and editors with experience in handling RRs, suggests that most concerns surrounding RRs may be unwarranted myths. Through our research, we extend existing work that has highlighted the advantages of study pre-registration more broadly (Logg & Dorison, 2021; Toth et al., 2021). Furthermore, we move beyond research focusing on single facets of RRs (Hummer et al., 2017; Soderberg et al., 2021) and papers that have described the potential (dis)advantages of RRs across disciplines in a rather narrative fashion (Chambers & Tzavella, 2022; Grand et al., 2018). The present work, by contrast, provides an evidence-based overview of what RRs in the fields of management and applied psychology (do not) bring with them at this moment in time. Moreover, we review accepted RRs and extract and empirically test best practices for writing such successful RRs. Thus, we provide a hands-on, step-by-step guide for successfully navigating RRs in management and applied psychology that extends existing broader and rather narrative guidelines (e.g., Henderson & Chambers, 2022; Kiyonaga & Scimeca, 2019).

While we acknowledge that we could not test all potential (mis)beliefs, our review shows that many (mis)beliefs may constitute myths, pointing toward the benefits of RRs. At the same time, we note that both the subjective assessments of the respondent groups and the (limited) archival data comparing RRs and conventionally submitted manuscripts constitute a preliminary status quo. As RRs become more numerous in the fields of management and applied psychology, more data will be available. We encourage future research to continue evaluating RRs on different criteria once this submission format has become more established. We also want to stress that this submission format is not a panacea for reproducibility or for restoring trustworthiness in findings. We, therefore, next critically reflect on the limitations of RRs and offer an outlook on the future of RRs in management and applied psychology.

Limitations of Registered Reports

First, preliminary evidence indicates that RRs do not contribute to making controversial findings more believable to the public. Rather, a study by Costa et al. (2022) found that the public perceives RRs as similarly (non)credible as conventionally submitted manuscripts. Second, some scholars have worried that RRs could lead to a conservative shift such that review teams might prohibit authors from collecting data to test a controversial research topic, paradigm, or hypothesis (Kupferschmidt, 2018). While review teams likely have similar power in the conventional submission process, it seems possible that, if all journals allowed publication only via the RR route, gatekeepers could have the power to limit the variety of research questions for which data are collected (Bian et al., 2020). Third, scholars have noted that authors or reviewers may have insufficient resources to ensure that study procedures and data collection are executed as proposed in stage-1 protocols (Claesen et al., 2021; Hardwicke & Ioannidis, 2018). Ultimately, this could lead to an accumulation of research that has a stamp of reliability but does not deliver more trustworthy results. Finally, it is clear that RRs cannot prevent deliberate scientific fraud. In theory, authors could cheat the RR format by already having their data collected (a [mis]belief surrounding RRs we have not evaluated in this review). Yet, as discussed by Chambers and colleagues (e.g., Chambers et al., 2014; Chambers & Tzavella, 2022), this normally constitutes a “myth” because reviewers and editors often request changes in the methods and data collection suggested in the initially submitted proposal, thus making already collected data basically useless. Nonetheless, even though RRs reduce the incentive to produce significant findings, fraudulent researchers may still feel that faking their data to obtain statistically significant, counterintuitive, or otherwise more “interesting” findings will help advance their scientific careers, just as they could in conventional manuscript submissions. To conclude, the RR format is not a silver bullet capable of addressing all problems that may arise in management and applied psychology science; it offers incentives to not engage in questionable research practices but cannot fully stop scholars who approach science with a questionable mindset.

The Future of Registered Reports