Abstract

Artificial intelligence (AI) technologies have fundamentally transformed numerical-based high-performance computing (HPC) applications with data-driven approaches and endeavored to address existing challenges, e.g., high computational intensity, in various scientific domains. In this study, we explore the scenarios of coupling HPC and AI (HPC-AI) in the context of emerging scientific applications, presenting a novel methodology that incorporates three coupling patterns: surrogate, directive, and coordinate. Each pattern exemplifies a distinct coupling strategy, an AI-driven prerequisite, and typical HPC-AI ensembles. Through case studies in materials science, we demonstrate the application and effectiveness of these patterns. The study highlights technical challenges, performance improvements, and implementation details, providing insight into promising perspectives of HPC-AI coupling. The proposed coupling patterns are applicable not only to materials science but also to other scientific domains, offering valuable guidance for future HPC-AI ensembles in scientific discovery.

Keywords

Introduction

High-performance scientific and engineering computing has become an indispensable pillar that underpins scientific discovery, technology innovation, and large-scale engineering undertakings, positioning it as a critical element of national strategic capabilities Asch et al. (2018). Against this backdrop, recent successes of artificial intelligence (AI) in computer vision Liu et al. (2024) and natural language processing Achiam et al. (2023) have catalyzed a growing convergence of AI methodologies with advanced computational frameworks. The trend of convergence has begun to reshape scientific and engineering computing paradigms: AI-driven approaches are increasingly deployed to accelerate large-scale simulations, optimize complex scientific workflows, and uncover intricate patterns within high-dimensional datasets Jumper et al. (2021); Abramson et al. (2024). Taken together, these developments signal a powerful trend towards the integration of AI with high-performance computing (HPC) systems, fostering a new era in which data-intensive, intelligent computational strategies drive both transformative scientific insights and the accelerated design of innovative engineering solutions.

As multidisciplinary scientific and engineering problems grow in complexity and scale, in some cases reaching extreme conditions, the technical challenges they present are escalating Dongarra and Keyes (2024). Conventional HPC strategies, while effective in traditional simulation paradigms, increasingly confront formidable hurdles, including the soaring computational cost of high-fidelity numerical methods at finer spatiotemporal resolution and the low efficiency of scaling simulations on next-generation supercomputing architectures Wang et al. (2024). AI approaches, the alternative, have demonstrated remarkable efficiency and flexibility but remain constrained by limited training data availability, insufficient generalization capability, and challenges related to accuracy and interpretability Yang et al. (2024).

Bridging the two methodologies by combining the predictive rigor of HPC-based physics-driven simulations with adaptive, data-intensive AI models offers a promising pathway towards a new computational research paradigm. By holistically combining HPC’s robust numerical foundations with AI’s heuristic efficiency, researchers can overcome the respective shortcomings of each approach. A sophisticated HPC-AI methodology is therefore poised to become essential in tackling complex and pressing scientific and engineering challenges. Such a methodology aims to facilitate scalable, high-dimensional simulations, accelerate the solution of intricate physical models, and eventually build novel systems for more efficient scientific discovery.

In this study, we investigate the coupling of HPC and AI to address scientific discovery and engineering problems, proposing a methodology that encompasses three distinct coupling patterns: surrogate, directive, and coordinate.

The main contributions of this work are as follows:

1. To achieve more efficient and effective integration of HPC and AI, we propose a novel methodology comprising three distinct coupling patterns: surrogate, directive, and coordinate.
2. We develop and enhance HPC-AI ensemble applications by exemplifying each interaction pattern in comprehensive case studies, highlighting implementation strategies and addressing the technical challenges (e.g., limitations in accuracy and scalability).
3. We demonstrate significant performance improvements in materials science applications, providing insights into the potential for future HPC-AI ensembles and offering applicable guidance for conducting HPC-AI driven research across various scientific domains.

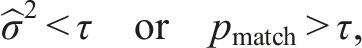

HPC-AI coupling pattern

In this section, we establish a fundamental methodology that characterizes the variety of HPC-AI coupling patterns.

Figure 1. The three patterns of HPC-AI coupling. (1) Surrogate pattern: AI models replace part or all of a simulation. (2) Directive pattern: AI provides real-time guidance to HPC simulations, optimizing parameters or providing intermediate feedback. (3) Coordinate pattern: HPC and AI operate interactively, exchanging data and feedback to solve complex problems in tandem.

Surrogate pattern

In the surrogate pattern, AI models are trained on existing simulation data to replace compute-intensive components of the simulation. By learning an approximate mapping from input parameters to target output, AI-driven models serve as efficient alternatives to complex physics-based simulations used in HPC, significantly reducing computational time.

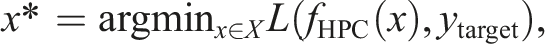

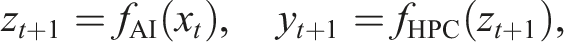

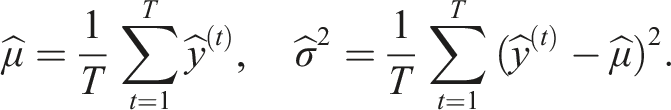

• Let x ∈ X represent the input parameters of the simulation, and y ∈ Y denote the target outputs obtained from the HPC simulation.
• fHPC: X → Y is the high-fidelity function employed in HPC simulations, typically computationally expensive.
• fAI: X → Y is a data-driven surrogate model trained to approximate fHPC by minimizing the discrepancy between their outputs.

The objective is to find fAI that best approximates fHPC by minimizing a suitable discrepancy metric over the input distribution p(x). For example, using the L2 norm, the optimization can be formulated as:
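One form of this objective that is consistent with the definitions above is sketched here. The exact notation is an assumption: n denotes the number of training pairs, and y_i = fHPC(x_i) are the simulation outputs used as labels.

```latex
f_{\mathrm{AI}}^{*}
  = \arg\min_{f_{\mathrm{AI}}} \;
    \mathbb{E}_{x \sim p(x)}
    \left[ \left\| f_{\mathrm{HPC}}(x) - f_{\mathrm{AI}}(x) \right\|_2^2 \right]
  \approx \arg\min_{f_{\mathrm{AI}}} \;
    \frac{1}{n} \sum_{i=1}^{n} \left\| y_i - f_{\mathrm{AI}}(x_i) \right\|_2^2
```

The expectation on the left is the population objective over p(x). The sum on the right is its empirical estimate over the training set, which is what a surrogate is fitted against in practice.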

Other norms or physics-informed loss functions can also be adopted depending on the nature of the simulation task.

The surrogate AI model fAI operates as a black-box function whose accuracy inherently depends on the quality and diversity of the training dataset {x i , y i }. It provides a computationally efficient approximation of fHPC, facilitating rapid predictions without the need for extensive computational resources.
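The surrogate idea can be illustrated with a deliberately small example. The sketch below is not the model used in this work: `f_hpc` is a hypothetical stand-in for an expensive simulation (a numerically integrated function), and the surrogate is a cubic polynomial fitted by ordinary least squares, which minimizes the empirical L2 discrepancy on sampled pairs {x i , y i }. All names and the choice of p(x) as a uniform distribution are assumptions made for illustration.

```python
import math
import random

def f_hpc(x, steps=2000):
    """Hypothetical 'expensive' simulation: midpoint-rule integral of exp(-x t^2) on [0, 1]."""
    h = 1.0 / steps
    return h * sum(math.exp(-x * ((k + 0.5) * h) ** 2) for k in range(steps))

def features(x, degree=3):
    """Polynomial features 1, x, ..., x^degree."""
    return [x ** p for p in range(degree + 1)]

def solve(a, b):
    """Solve the linear system a w = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    w = [0.0] * n
    for r in reversed(range(n)):
        w[r] = (m[r][n] - sum(m[r][c] * w[c] for c in range(r + 1, n))) / m[r][r]
    return w

def fit_surrogate(xs, ys, degree=3):
    """Least-squares fit via the normal equations: minimizes sum_i (f_AI(x_i) - y_i)^2."""
    phi = [features(x, degree) for x in xs]
    n = degree + 1
    gram = [[sum(p[i] * p[j] for p in phi) for j in range(n)] for i in range(n)]
    rhs = [sum(p[i] * y for p, y in zip(phi, ys)) for i in range(n)]
    w = solve(gram, rhs)
    return lambda x: sum(wi * fi for wi, fi in zip(w, features(x, degree)))

random.seed(0)
# Training set sampled from an assumed input distribution p(x) = U(0, 2).
x_train = [random.uniform(0.0, 2.0) for _ in range(100)]
y_train = [f_hpc(x) for x in x_train]
f_ai = fit_surrogate(x_train, y_train)

# Held-out estimate of the mean squared discrepancy between f_HPC and f_AI.
x_test = [random.uniform(0.0, 2.0) for _ in range(200)]
mse = sum((f_hpc(x) - f_ai(x)) ** 2 for x in x_test) / len(x_test)
print(f"held-out mean squared discrepancy: {mse:.2e}")
```

Once fitted, `f_ai` costs a handful of multiplications per query, whereas `f_hpc` runs a 2000-step loop. The surrogate is only trustworthy inside the sampled range of x, which mirrors the dependence on training-data coverage noted above.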

Directive pattern

In the directive pattern, the AI model works in conjunction with HPC by handling, configuring, and optimizing the simulation tasks. It adjusts parameters or provides corrections based on intermediate simulation results. This pattern is particularly useful in high-dimensional parameter optimization problems, where AI can significantly reduce the search space and enhance computational efficiency.

• Let X denote the input parameter space of the HPC simulation, and fHPC: X → Y be the objective function of the HPC simulation.
• AI acts as an optimizer fAI, dynamically adjusting the input x to find the optimal input x*.
• The optimization objective is defined as:

The role of AI is to provide real-time guidance through a feedback mechanism, helping HPC simulations achieve better procedures and higher precision. By dynamically adjusting simulation parameters, AI enhances compute efficiency and accelerates convergence towards optimal solutions.
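The feedback loop between an AI optimizer and an HPC objective can be sketched as follows. This is an illustration only: a simple cross-entropy-style search stands in for the AI optimizer, `f_hpc` is a hypothetical toy objective, and minimization is assumed as the optimization convention. None of the names or settings come from the method described in this work.

```python
import random
import statistics

def f_hpc(x):
    """Hypothetical expensive objective; its minimum is at x = (1.5, -0.5)."""
    return (x[0] - 1.5) ** 2 + (x[1] + 0.5) ** 2

def directive_search(f, dim=2, rounds=30, batch=20, elite=5, seed=0):
    """Alternate between proposing inputs (AI role) and evaluating them (HPC role)."""
    rng = random.Random(seed)
    mean = [0.0] * dim
    std = [2.0] * dim
    best_x, best_y = None, float("inf")
    for _ in range(rounds):
        # AI step: propose a batch of inputs from the current search distribution.
        candidates = [[rng.gauss(m, s) for m, s in zip(mean, std)] for _ in range(batch)]
        # HPC step: evaluate every proposal with the expensive objective.
        scored = sorted((f(x), x) for x in candidates)
        if scored[0][0] < best_y:
            best_y, best_x = scored[0]
        # Feedback: refit the search distribution to the best-performing inputs.
        elites = [x for _, x in scored[:elite]]
        mean = [statistics.fmean(col) for col in zip(*elites)]
        std = [max(statistics.pstdev(col), 1e-6) for col in zip(*elites)]
    return best_x, best_y

best_x, best_y = directive_search(f_hpc)
print(f"best input: {best_x}, objective: {best_y:.3e}")
```

Each round spends the expensive evaluations only on inputs drawn from a distribution that has already been narrowed by earlier results, which is the sense in which the optimizer reduces the explored search space.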

Coordinate pattern

The coordinate pattern represents a multi-role coupling approach, where HPC and AI collaborate and incorporate third-party intelligent roles, such as pre-trained large language models (LLMs) and agents. Unlike the directive pattern, the AI module provides real-time insights to the HPC system, and the HPC system, in turn, supplies feedback to the AI models. In addition, the third-party roles can interact with the AI module to provide external feedback. This multi-directional interaction allows for iterative refinement of AI-guided simulation inputs and HPC results through feedback exchange, leading to improved overall accuracy and performance. While the current framework does not explicitly involve continuous retraining of the AI model (as in active learning), it enables dynamic adaptation of simulation parameters based on real-time insights.

• Let fAI: X → Z and fHPC: Z → Y be the objective functions of the AI and HPC systems, respectively, where Z represents the intermediate feedback information space.

The interaction between AI and HPC occurs over multiple iterative steps, with continuous feedback exchange refining and optimizing the problem-solving process. This collaborative approach leverages the strengths of both AI and HPC, leading to improved compute efficiency and solution quality.

HPC-AI pattern implementation

In this section, we provide a concrete demonstration of the ensemble method of AI and numerical simulations within the domain of materials science, illustrating how these combined methodologies can drive scientific advances and engineering innovation. Specifically, we will detail the motivations that led to the formulation of these three coupling patterns and outline how each one can leverage AI’s pattern-recognition capabilities alongside the computational rigor of high-performance simulation tools. We will examine their core algorithmic components, data-flow protocols, and computational workflows, illustrating how subtle variations in the design strategy can lead to distinct advantages, including enhanced computational efficiency, improved AI model accuracy, better uncertainty quantification, or accelerated optimization of material properties.

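To this end, we design three patterns as follows:

• Surrogate pattern for density functional theory calculation.
• Directive pattern for materials structure space search.
• Coordinate pattern for LLM-based material designing.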

These patterns illustrate the complementary roles of the HPC and AI, providing a comprehensive method for accelerating modeling in materials science.

Density functional theory calculation surrogate pattern

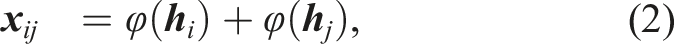

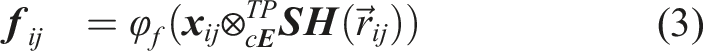

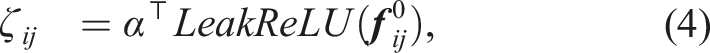

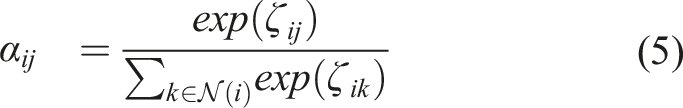

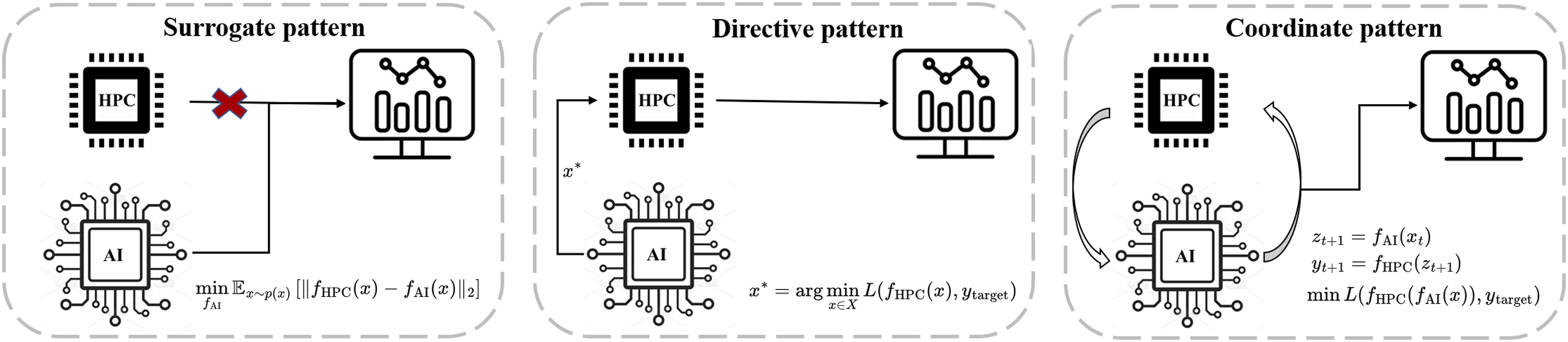

Density functional theory (DFT) is a quantum mechanical method widely used in materials science to calculate electronic structure and predict material properties from first principles Geerlings et al. (2003). It provides a balance between accuracy and computational feasibility, making it a cornerstone in the simulation of solid-state systems. However, DFT remains computationally expensive, especially for large or complex systems, limiting its scalability in high-throughput applications. To overcome this limitation, AI models have been increasingly used to rapidly approximate DFT-level results through data-driven learning, enabling accelerated access to material property predictions Huang et al. (2023). In this context, DFT computations are often employed as the ground truth to train surrogate models that mimic their output.

As depicted in Figure 2(a), DFT calculations typically involve solving the Schrödinger equation using iterative or approximate methods to derive key material characteristics, such as total energy and electronic band gaps. The computational complexity of these methods is influenced by various factors, including the size of the atomic system under consideration, the choice of functional, and the level of convergence accuracy required. Consequently, DFT simulations are often categorized as computationally intensive tasks. In contrast, AI approaches can directly infer material properties from the given structural inputs, effectively establishing a direct “structure-property” relationship without necessitating explicit solutions to the Schrödinger equation. By circumventing this iterative quantum-mechanical procedure, AI-driven models can substantially enhance computational efficiency and offer a promising alternative for rapid materials screening and discovery.

Figure 2. (a) Schematic diagram of Crysformer replacing DFT for material property prediction. (b) Methodology for 3D graph embedding method. (c) Equivariant graph attention layer.

To estimate the properties of candidate crystal structures, we adopt a graph-based neural network model named Crysformer. This model aims to learn a mapping from atomic configuration to material properties by representing each crystal as a graph and applying geometric deep learning techniques. This prediction serves as a surrogate for DFT calculations during the screening process, significantly accelerating the evaluation of large numbers of generated structures.

Figure 2(b) and (c) provide a detailed illustration of the operational workflow in Crysformer for processing material structures. In this approach, the three-dimensional atomic coordinates of a given material are represented as vectorized input features, which are then fed into a neural network. During the training phase, the model parameters are iteratively refined to closely approximate the target material properties. Upon completion of training, Crysformer can directly infer these properties from structural data alone, eliminating the need for computationally demanding intermediate calculations. We provide the details of Crysformer as follows.

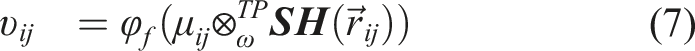

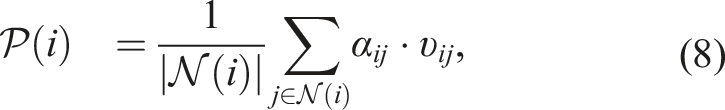

In the given expression,

Given 1.

Here, α is a trainable vector with the same dimensionality as 2.

We utilize the equivariant gate activation, as outlined in Weiler et al. (2018), to modulate the output features in a symmetry-preserving manner. This mechanism allows scalar and tensorial features to interact while maintaining SE(3) equivariance. Subsequently, a method similar to that described in equation (3) is employed to compute the message υ_ij passed from node j to node i, integrating atomic features and geometric relationships through gated non-linear transformations.

In the final step, α_ij and υ_ij are converted into scalars via multiplication. A mean aggregation is then performed across all nodes to predict the property value.

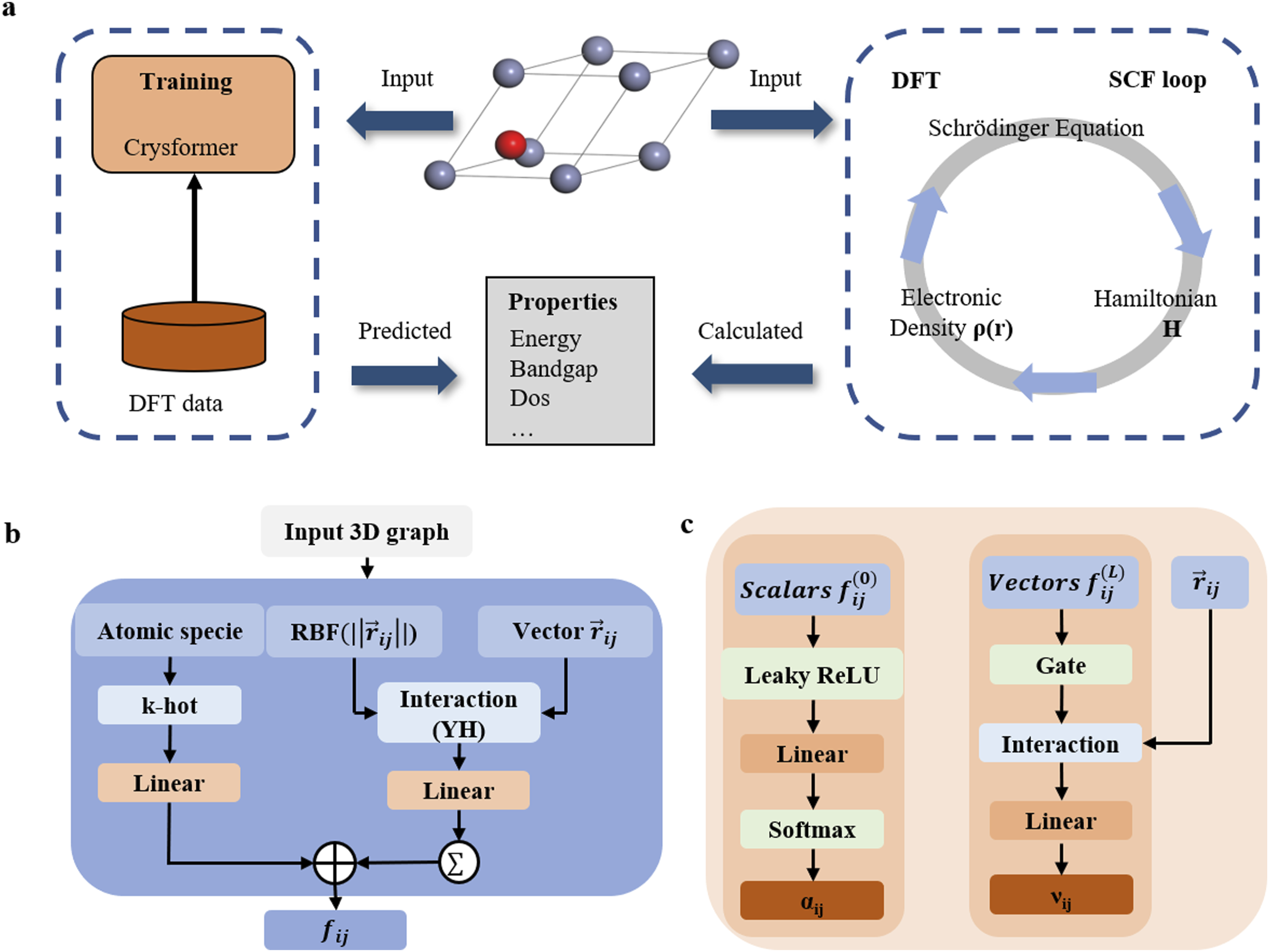

Directive pattern for materials structure space search

In materials discovery, a central task is the search for stable and synthesizable crystal structures across vast chemical and structural spaces. This problem, often referred to as crystal structure prediction (CSP), is challenging due to the combinatorial explosion of possible atomic arrangements, compositions, and symmetries Woodley and Catlow (2008). Traditional search algorithms typically rely on heuristic strategies and stochastic sampling, which require extensive DFT evaluations to identify energetically favorable structures. As shown in Figure 3, exploring the compound space in materials design represents a significant challenge, especially in the discovery of novel materials. Traditional approaches, such as genetic algorithms Oganov and Glass (2006); Glass et al. (2006), particle swarm optimization Call et al. (2007), or random search Pickard and Needs (2011), are computationally expensive, thereby limiting the depth and breadth of the exploration. We designed a diffusion generative model that characterizes known structures as latent variables, from which element types, crystal cell structures, and atomic coordinates are sampled to generate new structures. These newly generated structures are then input into DFT calculation software for structural optimization and subsequent property calculations.

Figure 3. (a) A projected 3D material structure search space. (b) Schematic of the crystal diffusion model. (c) Schematic of crystal ab initio generation. (d) DFT calculation workflow of the generated material structure.

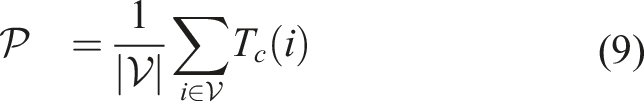

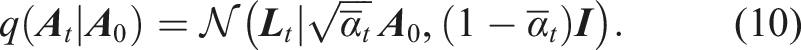

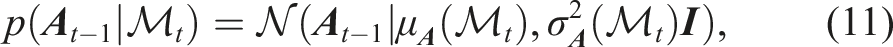

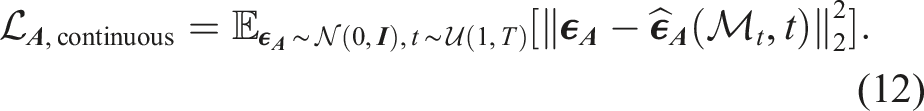

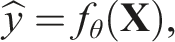

EquiCSP, introduced in our previous work (Lin et al., 2024), is a diffusion-based framework specifically designed to learn stable structure distributions for the CSP task. This method leverages a periodic E(3) equivariant model, enabling the joint optimization of the lattice matrix

The composition

Here, the variance is modulated by β_t ∈ (0, 1), with

The combined training objective for the joint diffusion model, encompassing

For the CSP task, we set λ

Initially, we faced the challenge of obtaining a sufficient number of effective components 1. 2.

Pre-training

We pre-train the model on approximately 1.14 million non-redundant 3D crystal structures obtained from existing databases, including the Materials Project, OQMD, Matgen, and ICSD.

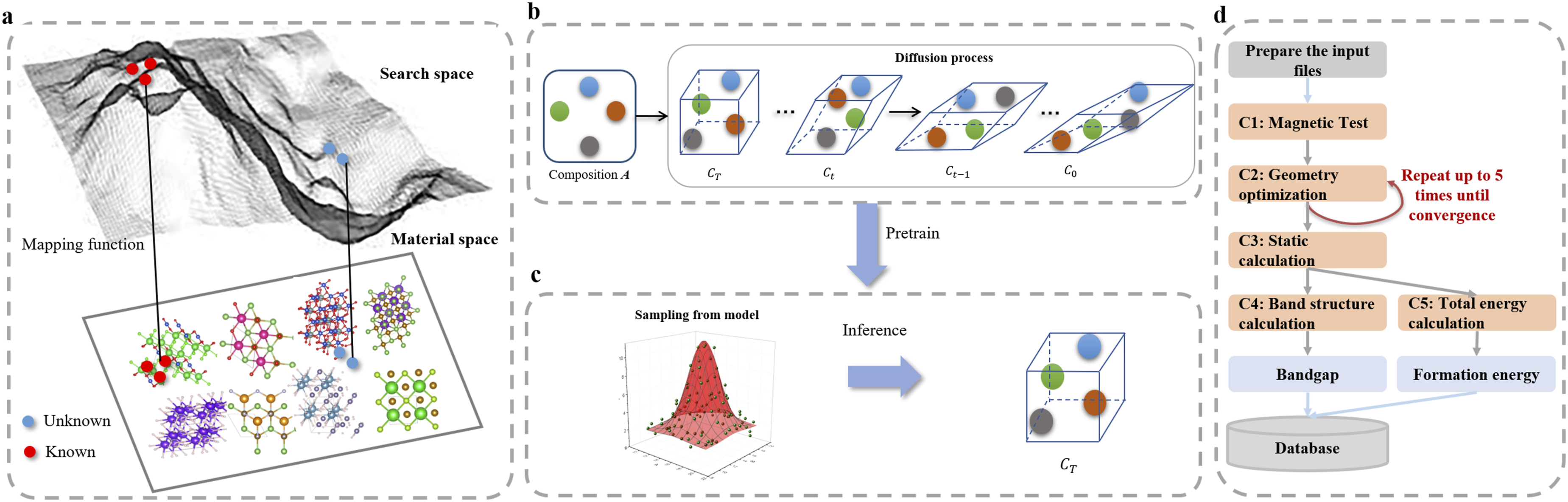

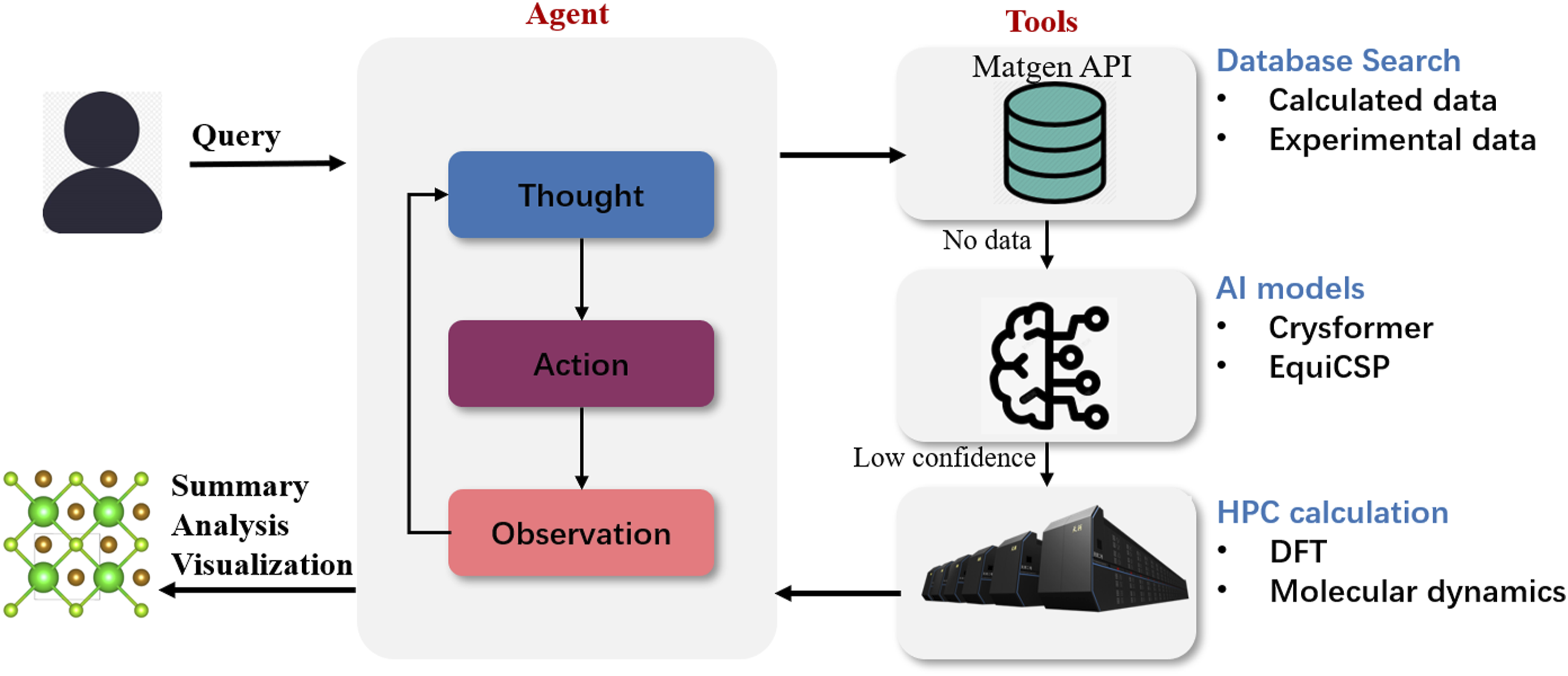

Coordinate pattern for LLM-based material designing

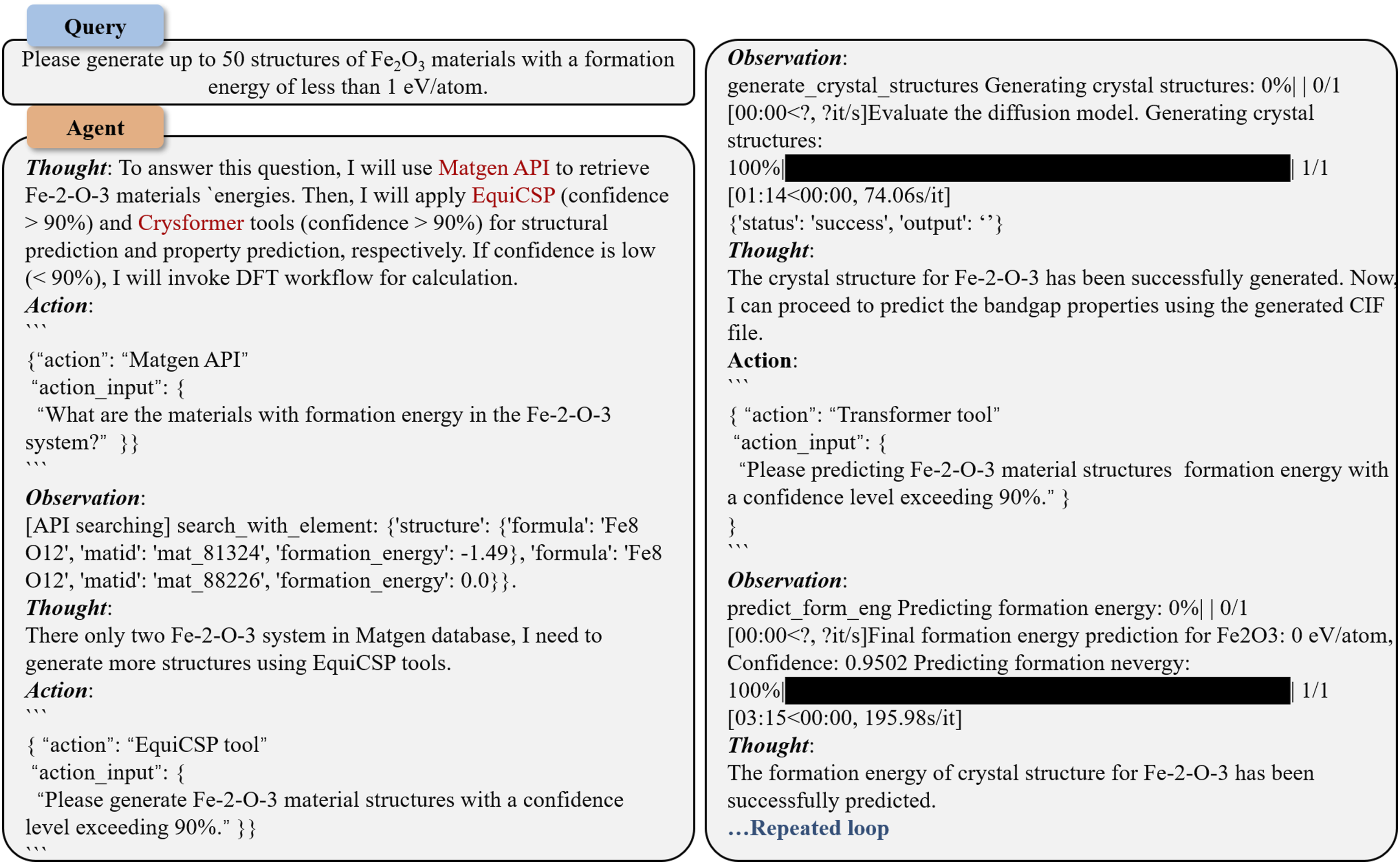

In the context of HPC-AI collaborative scheduling, we propose a streamlined workflow for materials design, leveraging the complementary strengths of AI predictions and DFT computations. For material properties of interest, the process begins with AI-based predictions, which are rapid but may lack precision. To address this, we incorporate a confidence evaluation mechanism where the AI-generated results are assessed for reliability. If the confidence is regarded as insufficient, a more accurate yet time-intensive DFT calculation is triggered.

As shown in Figure 4, this workflow is implemented through an intelligent agent that dynamically coordinates between AI and DFT, optimizing the computational resources of HPC systems. Over time, the DFT results obtained from low-confidence predictions are buffered, and once a threshold (e.g., in sample size or time interval) is reached, the AI model undergoes periodic fine-tuning using these high-fidelity data. This asynchronous refinement allows the model to gradually improve its predictive performance without interrupting the ongoing inference process. This iterative refinement establishes a positive feedback loop, improving AI reliability while reducing dependency on exhaustive DFT calculations. Such a synergistic approach ensures efficient utilization of HPC resources, accelerates the material discovery process, and continuously refines the AI’s capability to predict material properties with greater precision, paving the way for scalable and intelligent materials design frameworks.

Figure 4. Hierarchical ReAct agent planning in the coordinate pattern. Deployment via a standardized LangChain interface with support for hierarchical tool invocation, including a material data repository, DFT calculation workflows, and pre-trained AI models.
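The confidence-gated workflow with buffered fine-tuning can be sketched in a few lines. Everything here is an assumption made for illustration: `predict`, `run_dft`, and `fine_tune` are hypothetical callables, the trigger is a simple sample-count threshold, and fine-tuning is called synchronously, whereas the workflow described above refines the model asynchronously.

```python
from dataclasses import dataclass, field

@dataclass
class FineTuneBuffer:
    """Holds DFT results for low-confidence cases until enough have accumulated."""
    threshold: int
    samples: list = field(default_factory=list)

    def add(self, sample):
        """Store one sample; return the full batch and reset once the threshold is reached."""
        self.samples.append(sample)
        if len(self.samples) < self.threshold:
            return None
        batch, self.samples = self.samples, []
        return batch

def screen(structures, predict, run_dft, fine_tune, min_confidence=0.9, threshold=3):
    """Use the AI prediction when confident; otherwise run DFT and buffer its result."""
    buffer = FineTuneBuffer(threshold)
    results = {}
    for s in structures:
        value, confidence = predict(s)
        source = "ai"
        if confidence < min_confidence:
            value, source = run_dft(s), "dft"
            batch = buffer.add((s, value))
            if batch is not None:
                fine_tune(batch)  # hypothetical hook; asynchronous in a real deployment
        results[s] = (value, source)
    return results

# Toy stand-ins: even-numbered structures get a low confidence score.
fine_tune_calls = []
results = screen(
    structures=range(10),
    predict=lambda s: (0.1 * s, 0.95 if s % 2 else 0.5),
    run_dft=lambda s: 0.1 * s + 0.01,
    fine_tune=fine_tune_calls.append,
)
n_dft = sum(1 for _, source in results.values() if source == "dft")
print(f"DFT runs: {n_dft}, fine-tuning batches: {len(fine_tune_calls)}")
```

With these toy inputs, five of the ten structures fall back to DFT, and the first three of those form one fine-tuning batch. The remaining two stay in the buffer until the threshold is reached again.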

In this pattern, we integrate a decision-making agent that interacts with both a pre-computed DFT database and AI-based predictive models to provide material property predictions and generate novel structures if necessary. The procedure is as follows: 1.

If a matching structure and its computed properties are found, the agent immediately returns the known properties. 2. 3.

A small variance 4.

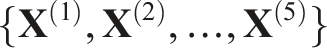

A binary classifier (the MD) is trained to predict the probability pmatch that a newly generated structure is a “match”: 5.

Conversely, if the confidence is low, the agent initiates direct DFT calculations:

By integrating the agent with both uncertainty-quantified prediction and generation models, we achieve a robust and adaptive system that dynamically chooses between leveraging existing databases, employing AI model outputs, or resorting to computationally intensive DFT calculations based on estimated confidence.
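The agent's three-way choice between database lookup, AI prediction, and DFT can be summarized as a small routing function. This is a sketch under stated assumptions, not the agent implementation: the callables, the placeholder structure names, and the numeric values are hypothetical, structure generation is omitted, and caching new DFT results back into the database is an added convenience.

```python
def answer_query(structure, database, predict, run_dft, min_confidence=0.9):
    """Return (value, source), trying the cheapest reliable source first."""
    # 1. A matching structure with computed properties: return the stored value.
    if structure in database:
        return database[structure], "database"
    # 2. Otherwise ask the AI model for a prediction and a confidence score.
    value, confidence = predict(structure)
    if confidence >= min_confidence:
        return value, "ai"
    # 3. Low confidence: fall back to a direct DFT calculation.
    value = run_dft(structure)
    database[structure] = value  # assumption: cache the result for later queries
    return value, "dft"

# Toy stand-ins with placeholder names and values.
database = {"A": 0.10}
predict = lambda s: (0.20, 0.95) if s == "B" else (0.30, 0.40)
run_dft = lambda s: 0.35

answers = {s: answer_query(s, database, predict, run_dft) for s in ("A", "B", "C")}
repeat = answer_query("C", database, predict, run_dft)
print(answers, repeat)
```

Ordering the checks from cheapest to most expensive means a DFT run is paid for only when neither the database nor a sufficiently confident prediction can answer the query.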

Experiments and results

In this section, we conduct experiments on the three designed HPC-AI coupling patterns. All experiments are performed on high-end HPC resources. AI model training and inference are carried out on NVIDIA A800 GPUs. For DFT calculations, we use VASP version 5.4.4 Wang and Pickett (1983); Chan and Ceder (2010), running on Intel(R) Xeon(R) Platinum 8358P processors.

Surrogate pattern for DFT calculation

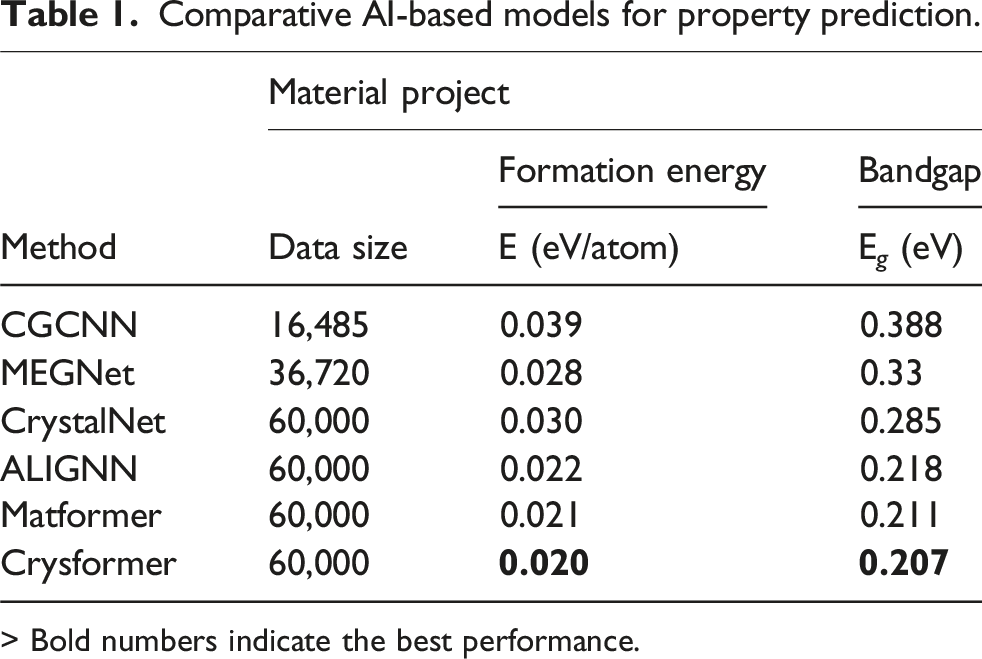

Comparative AI-based models for property prediction.

> Bold numbers indicate the best performance.

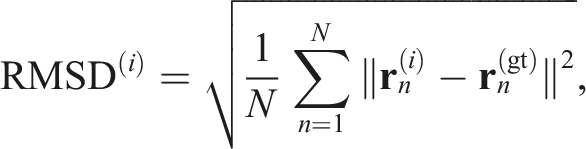

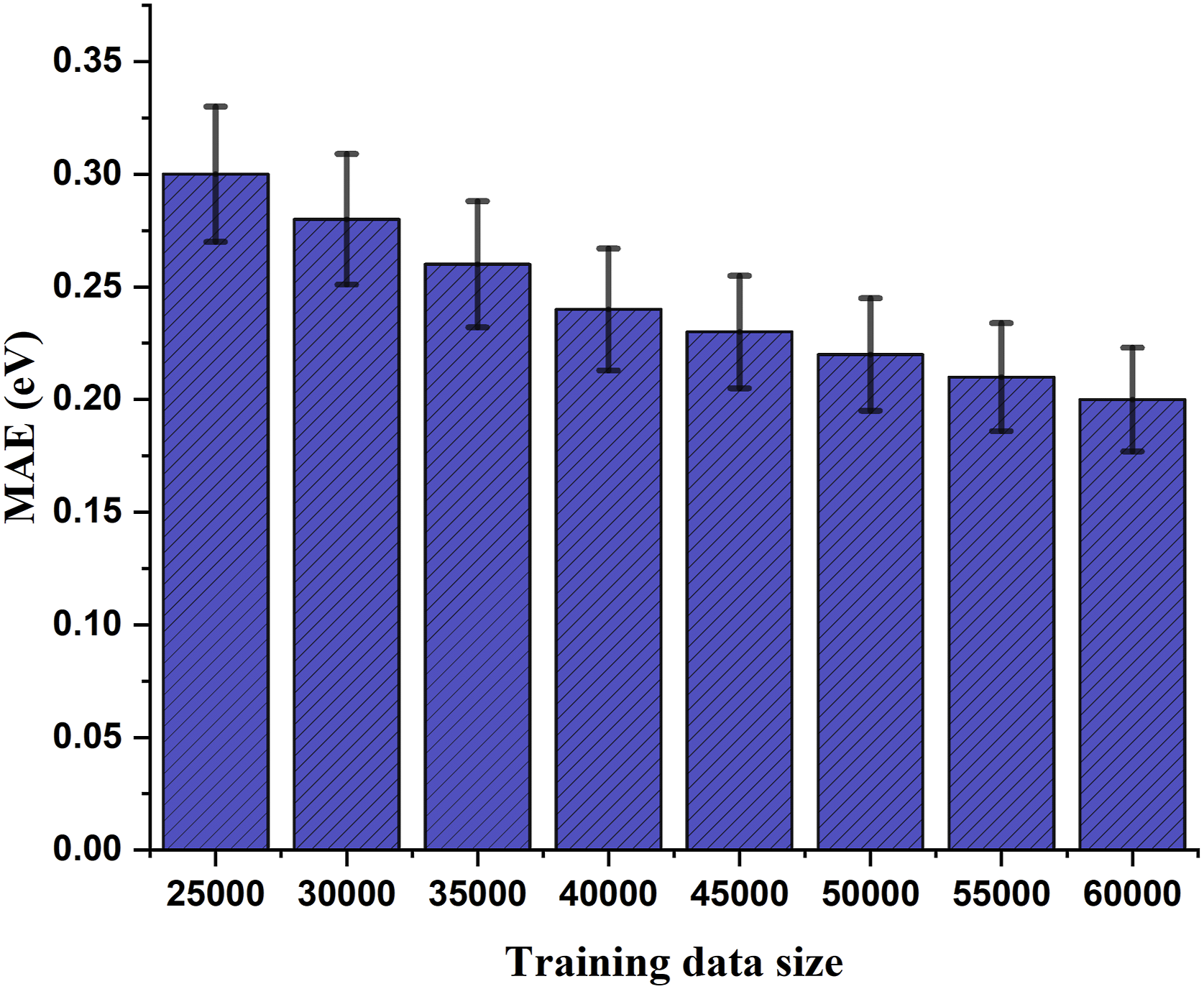

We further evaluate the impact of data size on the performance of the Crysformer model, as illustrated in Figure 5. The MAE decreases from 0.31 eV to 0.207 eV as the training sample size increases from 25,000 to 60,000. Training sizes were selected at intervals of 5,000 to capture the overall trend while maintaining computational feasibility. This demonstrates that data-driven AI models can significantly benefit from the construction of larger data repositories.

Figure 5. Impact of dataset size on MAE performance for bandgap property prediction.

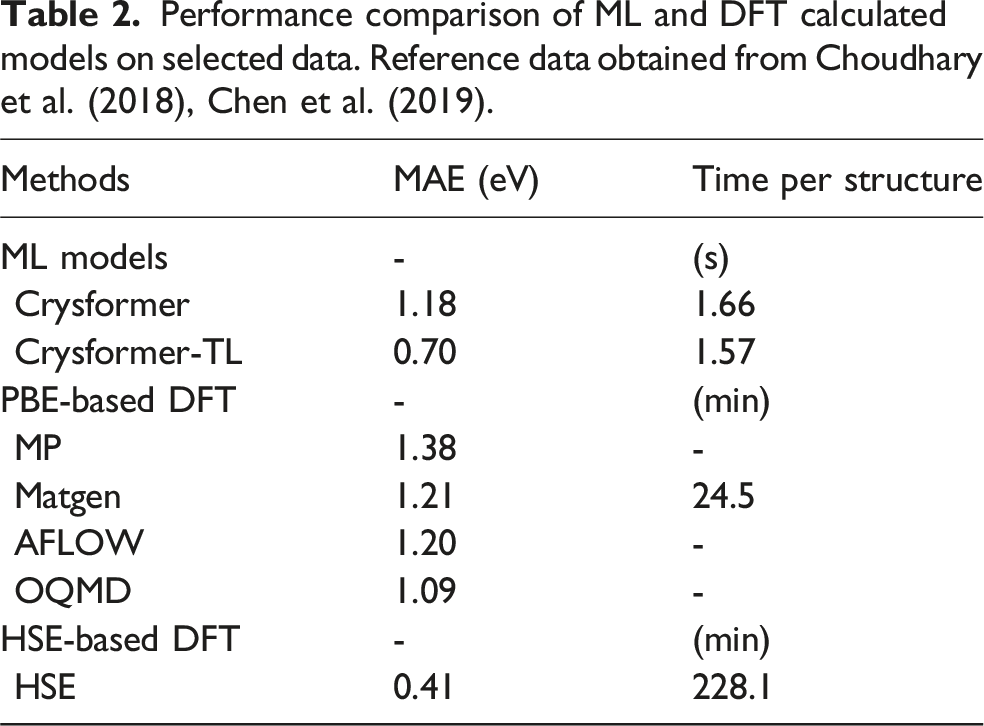

Performance comparison of ML and DFT calculated models on selected data. Reference data obtained from Choudhary et al. (2018), Chen et al. (2019).

Directive pattern for materials structure space search

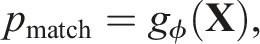

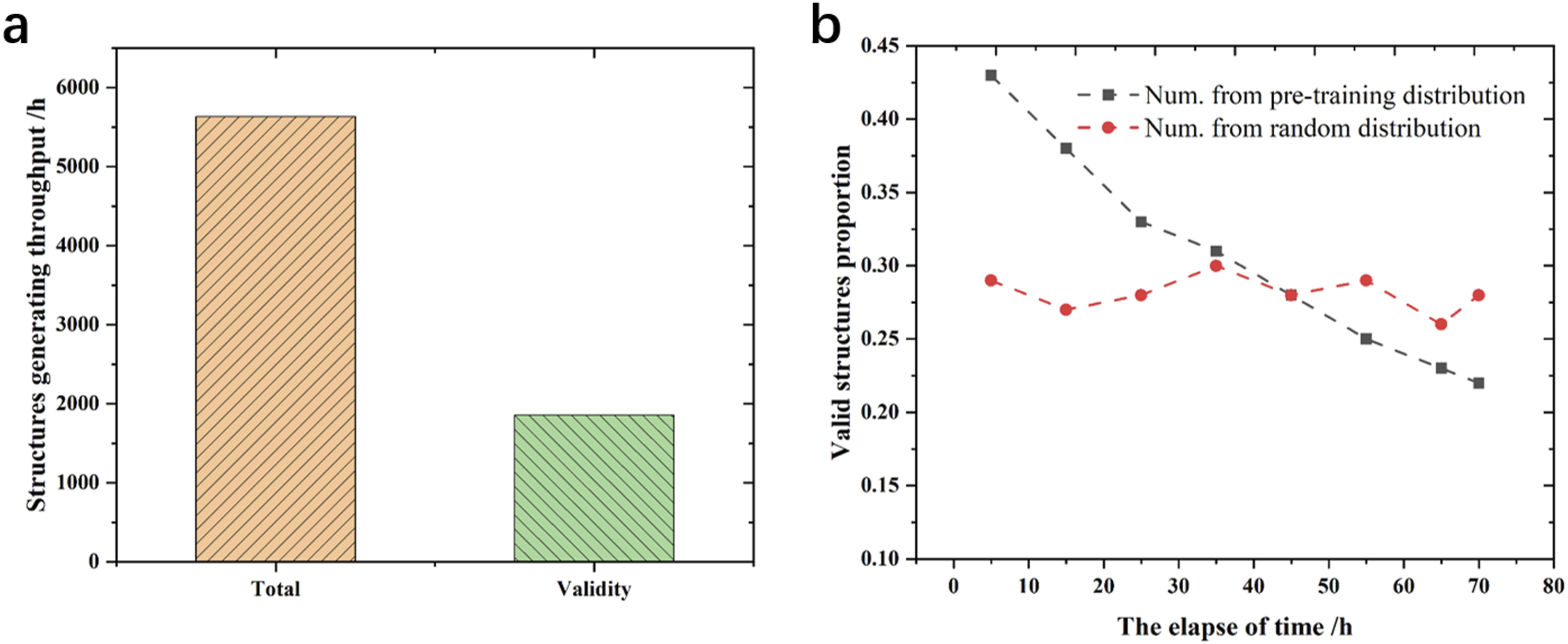

As illustrated in Figure 6(a), we utilize EquiCSP to perform de novo sampling for large-scale structure generation. This approach enables the generation of approximately 5663 structures per hour, among which around 1859 are preliminarily validated on an hourly basis. For structure generation, a preliminary validity assessment is conducted using the following methods to ensure the quality of the sampled structures.

Figure 6. Experimental results. (a) Throughput of structure generation using EquiCSP. (b) Evolution of the proportion of valid structures over time.

Furthermore, we evaluated two sampling strategies for p(N): one based on the pre-trained data distribution and the other using purely random sampling. As shown in Figure 6(b), the pre-trained distribution initially generates a high proportion of valid structures (43%), benefiting from the learned structural priors of known stable materials. However, this advantage diminishes over time, dropping to 22%, as the sampling space becomes saturated and redundant structures are filtered out. In contrast, the random sampling strategy maintains a relatively stable valid generation rate of around 28%, due to its broader exploration of the compositional and structural space, despite the lower initial success rate. These results suggest that a hybrid strategy, which starts with pre-trained distribution sampling to efficiently generate high-quality structures and then switches to random sampling to enhance diversity, offers a more effective approach for valid structure discovery.
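A hybrid sampler of this kind could look like the sketch below. It rests on assumptions that the text does not state: N is taken to be the number of atoms per cell, the prior is given as a hypothetical table of counts, and the switch to random sampling happens after a fixed number of draws rather than by monitoring the valid-structure rate.

```python
import random

def hybrid_atom_count_sampler(prior_counts, n_max, switch_after, seed=0):
    """Yield N from an empirical prior first, then uniformly from 1..n_max."""
    rng = random.Random(seed)
    values = list(prior_counts)
    weights = [prior_counts[v] for v in values]
    drawn = 0
    while True:
        if drawn < switch_after:
            # Phase 1: follow the distribution observed in the pre-training data.
            yield rng.choices(values, weights=weights, k=1)[0]
        else:
            # Phase 2: broaden exploration with uniform random sampling.
            yield rng.randint(1, n_max)
        drawn += 1

# Hypothetical prior: how often each atom count appears in the training set.
sampler = hybrid_atom_count_sampler({2: 5, 4: 3, 8: 1}, n_max=20, switch_after=50)
draws = [next(sampler) for _ in range(200)]
print(draws[:5], draws[50:55])
```

A fixed switch point is the simplest choice. An adaptive variant could instead switch once the measured proportion of valid structures from the prior drops below the rate observed for random sampling.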

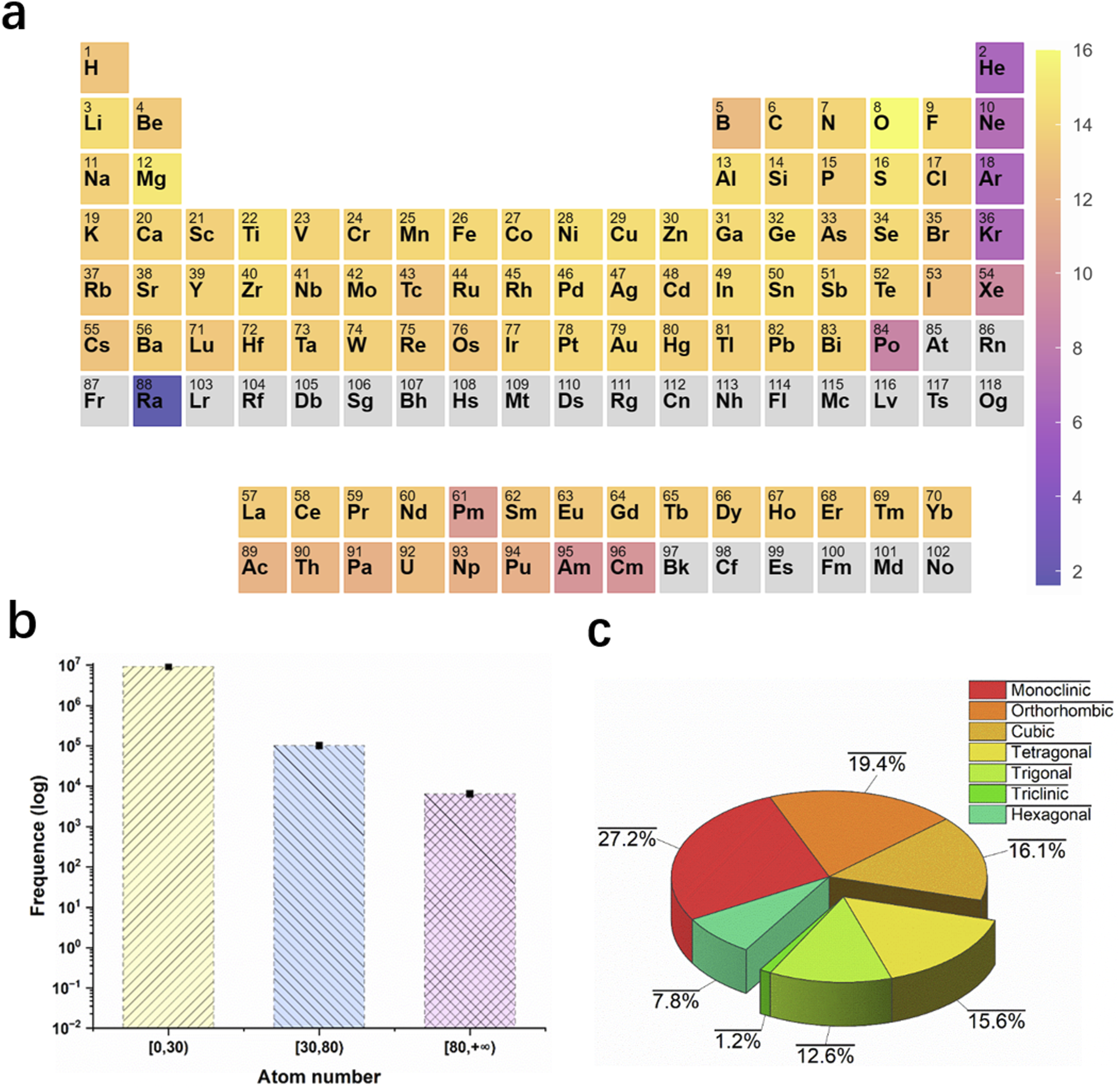

We conducted a statistical analysis of approximately 10 million potentially valid material structures generated by the model. As shown in Figure 7(a), the chemical element distribution of these structures spans nearly the entire periodic table, encompassing 82 different element types. Most structures have atomic counts below 30, although a significant number of structures contain more than 80 atoms (Figure 7(b)). Furthermore, the crystal systems of the generated material structures include all seven categories. Among these, the monoclinic system accounts for the largest proportion at 27.2%, while the triclinic system represents the smallest fraction at 1.2% (Figure 7(c)).

Figure 7. Statistics of material structures generated by generative models. (a) Frequency distribution of chemical species. (b) Distribution of atom counts in primary unit cells. (c) Classification distribution across crystal systems.

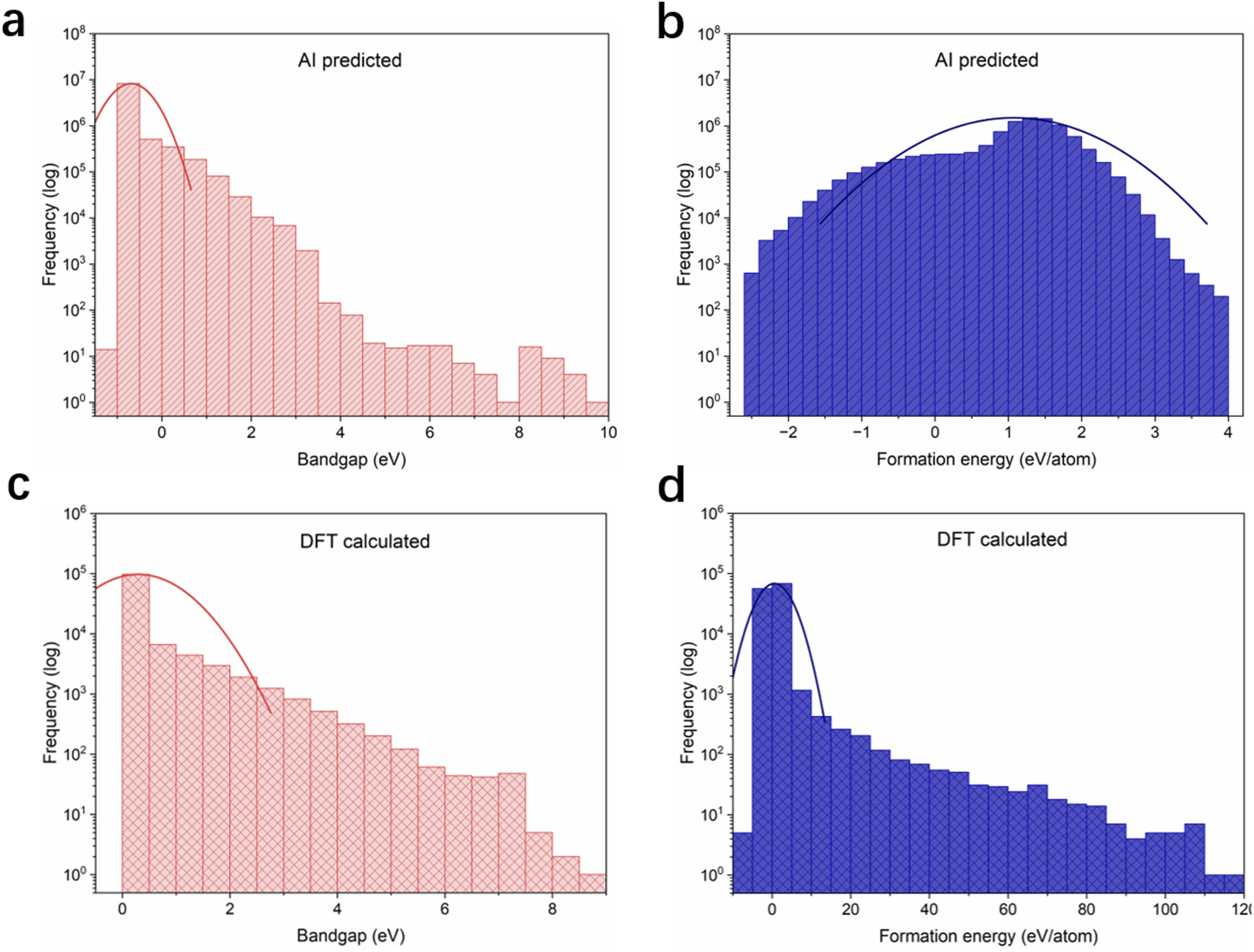

Figure 8 presents the frequency distributions of the predicted and DFT-calculated values for bandgap and formation energy. Figure 8(a) and (c) compare the bandgap distributions, where the AI-predicted values exhibit a narrower range, with a peak around 0-2 eV and a rapid decline for higher values. In contrast, the DFT-calculated distribution demonstrates a smoother decay and broader coverage, particularly for bandgap values exceeding 6 eV. Figure 8(b) and (d) analyze formation energy distributions, showing that AI predictions are concentrated between −2 and 4 eV/atom, with a symmetric peak near 1 eV/atom. The DFT results, however, display a significantly wider range, capturing negative values and extending up to 12 eV/atom. These differences highlight the trade-off between the efficiency and generalization of AI models and the comprehensive nature of DFT calculations, emphasizing the necessity of validating AI predictions against high-fidelity DFT data to ensure robustness, particularly for rare or extreme material properties.

Figure 8. Frequency distributions of predicted (a) bandgap and (b) formation energy values, and of DFT-calculated (c) bandgap and (d) formation energy values.

Coordinate pattern for LLM-based material designing

In this experiment, we integrate the previously developed Crysformer model with DFT calculations on generated material structures. Given the vast compositional space of material structures, we focus on the structures of potentially stable materials. Specifically, Crysformer is used to predict formation energies and provide confidence scores. For material structures with low confidence scores, DFT calculations are performed to ensure accurate evaluations.

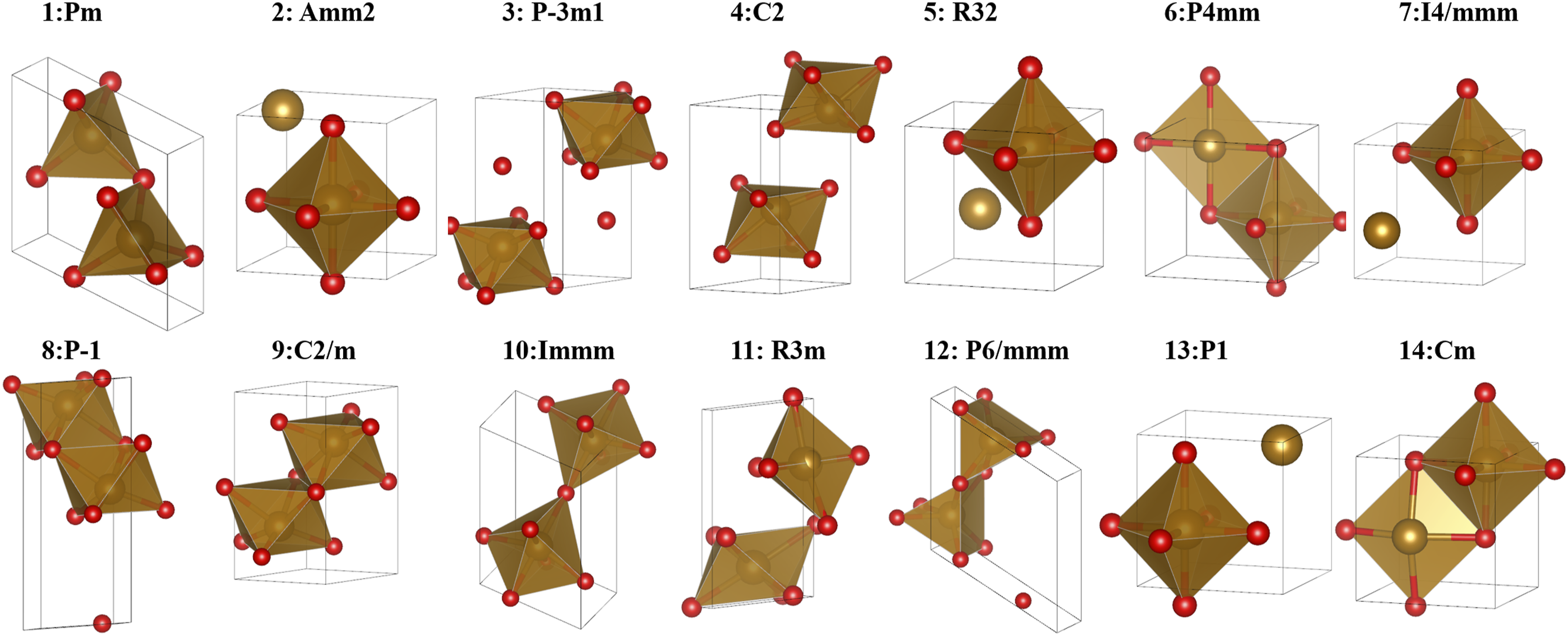

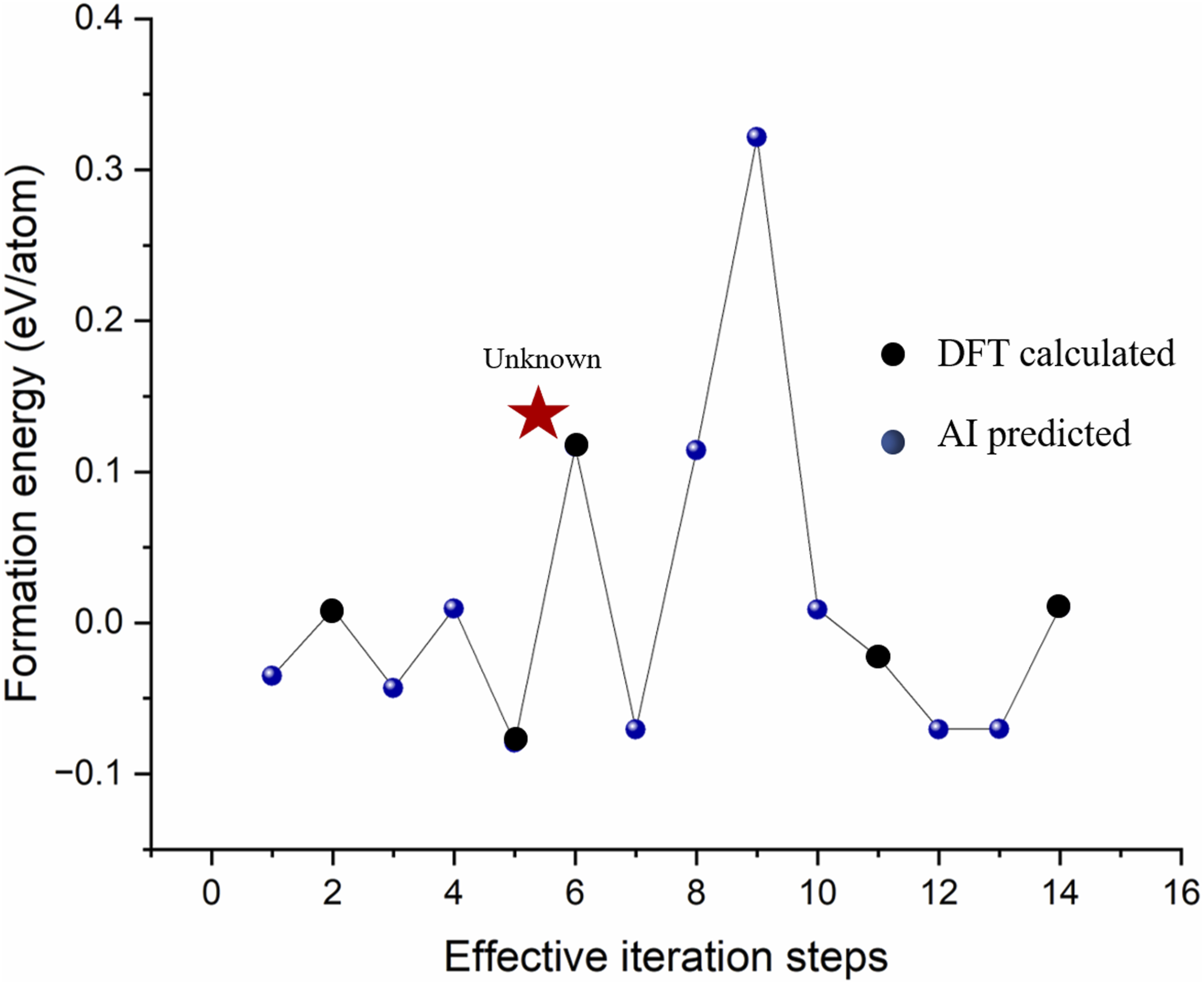

As illustrated in Figure 9, we employ an LLM-based agent for materials design, addressing the specific query: “Please generate up to 50 structures of Fe2O3 materials with a formation energy of less than 1 eV/atom.” In response, the agent initially retrieves existing structures from the database, identified as mat_81324 and mat_88226. To further explore novel Fe2O3 structures, the agent utilizes the EquiCSP tool for structure generation and predicts the formation energies of the generated structures. Considering that potential novel structures may not be included in the training dataset of Crysformer, we assess the model’s confidence to decide its applicability. If the model confidence falls below 90%, numerical simulations are performed using DFT software. The 90% confidence threshold was chosen based on model calibration and supported by reported DFT formation energy MAEs of 0.081–0.136 eV/atom Xie and Grossman (2018). We conservatively adopt 0.12 eV/atom as the acceptable upper bound for prediction error, and 90% confidence corresponds to the region where model predictions typically fall within this range. This iterative process is repeated 50 times. Subsequently, duplicate structures and those with a space group of 1 are filtered out, yielding 14 unique structures with formation energies below 1 eV/atom. Figure 10 presents the detailed 3D structures of the 14 designed materials along with their corresponding space group information. Figure 11 presents the formation energies obtained during the iterative process, combining HPC and AI computations. In these 50 iterations, the AI model was applied nine times to predict formation energies. When the model exhibited low confidence, five DFT calculations were performed to ensure reliability. This HPC-AI coupling approach effectively circumvented nine additional time-consuming DFT computations, thereby enhancing the overall efficiency of the materials design workflow.

Figure 9. Multi-workflow retrieval-augmented generation for materials informatics.

Figure 10. Structural design for Fe2O3: AI-generated material structures optimized via DFT, resulting in 14 valid structures and their corresponding space groups after multiple iterations.

Figure 11. Iterating 50 steps to discover potential novel structures. Failed and duplicate material structures are omitted here.

Discussion

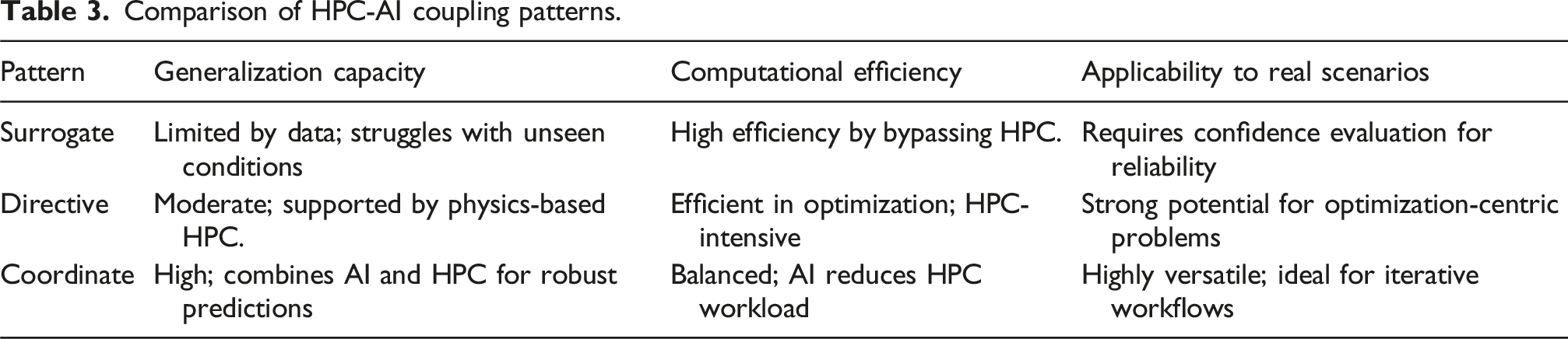

Comparison of HPC-AI coupling patterns.

Generalization capacity

The surrogate pattern leverages data-driven AI models to approximate HPC computations. Its generalization capacity is limited by the scope and quality of the training dataset. Models trained on insufficiently diverse or under-representative data may struggle to accurately predict results for novel or complex scientific problems. This limitation highlights the inherent trade-off between computational simplicity and predictive robustness.

While the directive pattern also depends on AI models, its reliance on physics-based HPC computations provides a safeguard against AI generalization limitations. The combination of data-driven insights with physical principles enhances reliability but does not completely overcome the AI model’s difficulty in extrapolating beyond the training data.

The coordinate pattern addresses the generalization problem by integrating AI predictions with high-confidence HPC validation. The iterative feedback between AI and HPC reduces the risk of overfitting or extrapolation errors, thus enhancing the generalization capacity compared to standalone AI or surrogate approaches.

Computational efficiency

The surrogate pattern excels in computational efficiency by completely replacing resource-intensive HPC calculations with AI predictions. This efficiency enables rapid exploration of large parameter spaces or complex scenarios that would otherwise be computationally prohibitive. However, the reliance on a pre-trained model means the efficiency is front-loaded and may degrade in scenarios requiring frequent retraining for new conditions.

The directive pattern achieves a balance between efficiency and accuracy by using AI to streamline the parameter optimization phase. Rather than explicitly shrinking the parameter space, AI-guided optimization focuses computational effort on regions more likely to yield optimal or valid outcomes, effectively narrowing the explored search space. For instance, in our structure generation task, the learned generative model prioritizes high-quality candidates by leveraging prior distributions, thereby reducing the number of expensive but unproductive evaluations. However, the final stages of HPC calculations, such as solving large-scale differential equations or conducting DFT computations, remain resource-intensive, making this pattern less efficient than surrogate approaches.

The coordinate pattern is cooperative in nature, combining AI’s computational speed with HPC’s precision. By offloading simpler calculations to AI and reserving HPC for critical validation steps, the overall computational load is reduced. This selective allocation of resources results in a significant efficiency gain compared to the directive pattern, while retaining the accuracy benefits of HPC.

Usability to real scientific scenarios

The usability of the surrogate pattern in real scientific problems is constrained by the confidence and reliability of its predictions. In domains where uncertainty quantification is critical, such as materials discovery or drug design, the surrogate pattern must incorporate mechanisms to evaluate and report prediction confidence. Without such mechanisms, its deployment in high-stakes scenarios remains limited.

The directive pattern offers strong applicability in scientific contexts requiring iterative optimization, such as the search for optimal material properties or parameter tuning in large-scale simulations. The combination of AI-guided exploration and HPC’s rigorous validation makes this pattern particularly suitable for problems demanding both exploration and precision.

The coordinate pattern is the most versatile for real scientific applications. By integrating AI and HPC in a collaborative workflow, it ensures both speed and accuracy. For instance, in materials science, AI predictions can guide initial exploration, while HPC methods like DFT validate and refine results. Moreover, the validated outputs can serve as enhanced training data for AI, creating a feedback loop that continuously improves both efficiency and scientific insight.

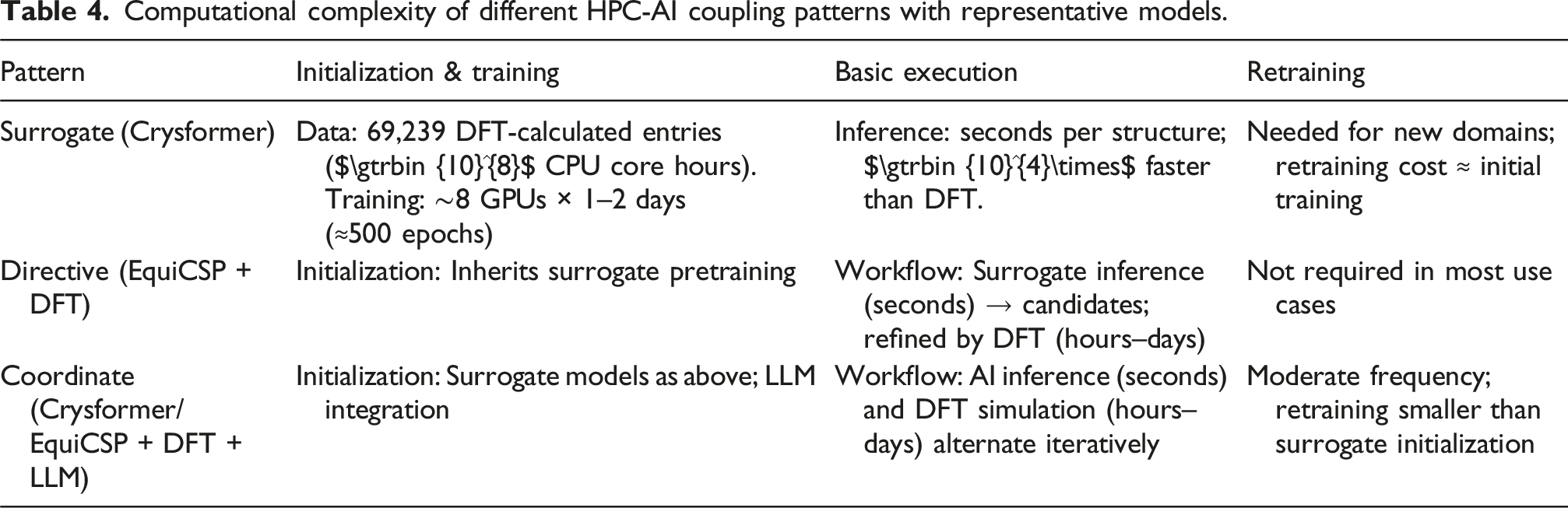

Computational complexity of coupling patterns

To better clarify the computational characteristics of different HPC-AI coupling strategies, we decomposed the total workflow into three stages: (i) initialization and training, (ii) basic execution of HPC-AI workflows, and (iii) retraining. Representative models from this work were selected to illustrate these patterns, including Crysformer as a surrogate model, “EquiCSP + DFT” as a directive pattern, and “Crysformer/EquiCSP + DFT + LLM” as a coordinate pattern.

Computational complexity of different HPC-AI coupling patterns with representative models.
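The three-stage decomposition can be written as a simple additive cost model. The sketch below is a minimal illustration under stated assumptions: the `StageCosts` fields, the additive form, and the break-even formula are our own simplification, and the numbers in the usage example are arbitrary placeholders rather than measured values.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class StageCosts:
    """Costs of one coupling pattern in arbitrary units (hypothetical)."""
    train: float     # (i) initialization and training, paid once
    per_task: float  # (ii) one execution of the HPC-AI workflow
    retrain: float   # (iii) one retraining round


def total_cost(costs: StageCosts, n_tasks: int, n_retrain: int = 0) -> float:
    """Total cost of running n_tasks workflows with n_retrain retraining rounds."""
    return costs.train + n_tasks * costs.per_task + n_retrain * costs.retrain


def break_even_tasks(costs: StageCosts, hpc_per_task: float) -> float:
    """Number of tasks after which the initial training cost is amortized
    relative to a pure-HPC workflow. Retraining is ignored here."""
    saving = hpc_per_task - costs.per_task
    if saving <= 0:
        return float("inf")  # the coupled workflow never pays off
    return costs.train / saving


if __name__ == "__main__":
    placeholder = StageCosts(train=100.0, per_task=1.0, retrain=10.0)
    print(total_cost(placeholder, n_tasks=50, n_retrain=2))
    print(break_even_tasks(placeholder, hpc_per_task=5.0))
```

Under this model, frequent retraining shifts the break-even point to larger task counts, which is consistent with the caveat on the surrogate pattern noted earlier.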

Related work

Surrogate pattern

In the domain of materials science, surrogate AI models have emerged as powerful tools for accelerating the prediction of electronic structures, properties, and optimized molecular configurations. Unlike traditional HPC-based simulations that rely on first-principles methods such as DFT or on large-scale molecular dynamics simulations, the surrogate pattern leverages data-driven approaches to approximate the underlying physics at significantly reduced computational cost. For instance, electronic structure prediction models such as ChargeE3Net Koker et al. (2024) and Hamiltonian estimation frameworks like DeepH Li et al. (2022) have been developed to predict electronic charge distributions and Hamiltonians, respectively, from representative training data without the need to solve the full set of quantum mechanical equations at runtime. Additionally, property prediction models including CrystalNet Chen et al. (2022), ALIGNN Choudhary and DeCost (2021), and Matformer Yan et al. (2022) have shown remarkable success in estimating material properties (e.g., formation energies, band gaps) based on crystal graphs or chemical compositions. Molecular structure optimization models, exemplified by DPA2 Zhang et al. (2023), directly suggest low-energy configurations of molecules or crystals, circumventing exhaustive searches.

A common characteristic of these surrogate AI models is that they are trained on either experimentally measured data or on computational datasets previously generated by DFT and other HPC-based simulations. While this approach substantially reduces computational overhead and allows for rapid inference, the generalization capability of surrogate AI models is often limited by the scope and quality of the training data. Consequently, their applicability to materials previously unseen or outside the distribution of the training set can be compromised. Recent research efforts focus on improving AI model robustness through uncertainty quantification, domain adaptation, and physics-informed neural networks, ensuring that these surrogates remain reliable tools for materials discovery and design.

Directive pattern

The directive pattern integrates AI models as guides within HPC workflows, leveraging data-driven insights to enhance the efficiency and accuracy of physically rigorous simulations. In materials science, one prominent example is DeepMD Jia et al. (2020), which employs neural networks to learn atomic force fields from DFT reference calculations. By accurately capturing interatomic potentials, DeepMD can direct classical molecular dynamics simulations towards physically meaningful trajectories with fewer computations. This approach improves the search and exploration of stable crystal structures, reaction pathways, or phase diagrams by mitigating the inefficiencies of randomly sampling vast configuration spaces.

Similar methods, which we collectively denote as “AI-augmented HPC frameworks”, have explored a range of strategies to guide simulations, such as employing Bayesian optimization to target regions of chemical space with high potential for desired properties Jablonka et al. (2021). Although a directive AI model still depends on HPC for final validation and refinement, the interplay between AI-guided exploration and physics-based solvers results in more focused and informative searches. This synergy helps to reduce the cost of large-scale computations while maintaining scientific rigor, ultimately accelerating the materials design cycle.
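The division of labor in the directive pattern can be sketched as a rank-and-shortlist screen: an inexpensive AI score orders the candidates, and only a fixed budget of the most promising ones is passed to the expensive solver. This is a deliberately simple stand-in and not Bayesian optimization. The functions `cheap_score` and `expensive_energy`, the candidate grid, and the budget are hypothetical.

```python
from typing import Callable, List, Sequence, Tuple


def directed_search(
    candidates: Sequence[float],
    ai_score: Callable[[float], float],
    hpc_evaluate: Callable[[float], float],
    budget: int,
) -> Tuple[float, float]:
    """Evaluate only the `budget` best-ranked candidates with the HPC solver.

    Lower scores and lower energies are better. Returns (energy, candidate).
    """
    shortlist = sorted(candidates, key=ai_score)[:budget]
    evaluated = [(hpc_evaluate(c), c) for c in shortlist]
    return min(evaluated, key=lambda pair: pair[0])


hpc_calls: List[float] = []  # records which candidates reached the solver


def expensive_energy(x: float) -> float:
    """Hypothetical stand-in for a costly calculation such as DFT."""
    hpc_calls.append(x)
    return (x - 1.2) ** 2


def cheap_score(x: float) -> float:
    """Hypothetical AI guide: an imperfect approximation of the true minimum."""
    return abs(x - 1.0)


grid = [i * 0.5 for i in range(-10, 11)]  # 21 candidates in [-5, 5]

if __name__ == "__main__":
    energy, best = directed_search(grid, cheap_score, expensive_energy, budget=3)
    print(f"best candidate {best} with energy {energy:.3f}")
    print(f"solver calls: {len(hpc_calls)} of {len(grid)} candidates")
```

The guide does not need to be exact. It only needs to rank well enough that the true optimum falls inside the shortlist, which is why final validation remains with the HPC solver.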

Coordinate pattern

The coordinate pattern represents a cooperative paradigm in which HPC and AI models iteratively inform and improve one another. This pattern is often realized through reinforcement learning, active learning, or agent-based methods that dynamically update both AI predictive models and simulation parameters as new information is obtained. For example, frameworks such as LLaMP Chiang et al. (2024), ChatMOF Kang and Kim (2024), and ChemCrow M Bran et al. (2024) (an AI-driven pipeline for materials exploration) employ an agent-based approach. Outcomes validated by HPC computations can, in turn, serve as new training data that enhance the AI models’ accuracy and generalization.

In practice, this coordinated approach creates a closed-loop system in which HPC computations and AI predictions form a feedback cycle. AI quickly proposes candidate materials or configurations, HPC tests these candidates at high fidelity, and the results are fed back into the AI models. Over successive iterations, this coordination not only improves the quality of predictions but also reduces the computational load compared to purely brute-force HPC simulations. Such reciprocal refinement is particularly promising for discovering novel materials with tailored properties, enabling more efficient and directed searches of the vast chemical and configurational spaces inherent in materials science.
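The propose, validate, and feed-back cycle described above can be sketched as a small loop. In this minimal illustration, the "AI model" is a nearest-neighbor lookup over previously validated points, and the proposal rule is purely greedy. Both are assumptions made for brevity, as are the names `closed_loop` and `reference_energy`. A practical system would use a learned model and would balance exploration against exploitation.

```python
from typing import Callable, Dict, Sequence, Tuple


def nearest_neighbor_predict(data: Dict[int, float], x: int) -> float:
    """Toy AI model: predict the value of the closest validated point."""
    nearest = min(data, key=lambda known: abs(known - x))
    return data[nearest]


def closed_loop(
    pool: Sequence[int],
    hpc_evaluate: Callable[[int], float],
    seed: Sequence[int],
    rounds: int,
) -> Tuple[int, Dict[int, float]]:
    """Iterate: AI proposes a candidate, HPC validates it, the result is fed back."""
    data = {x: hpc_evaluate(x) for x in seed}  # initial training data
    for _ in range(rounds):
        unlabeled = [x for x in pool if x not in data]
        if not unlabeled:
            break
        # AI step: propose the candidate with the lowest predicted value.
        proposal = min(unlabeled, key=lambda x: nearest_neighbor_predict(data, x))
        # HPC step: validate at high fidelity and add to the training data.
        data[proposal] = hpc_evaluate(proposal)
    best = min(data, key=data.get)
    return best, data


def reference_energy(x: int) -> float:
    """Hypothetical stand-in for a high-fidelity HPC calculation."""
    return float((x - 7) ** 2)


if __name__ == "__main__":
    best, data = closed_loop(range(11), reference_energy, seed=[0, 10], rounds=4)
    print(f"best candidate: {best}, HPC evaluations: {len(data)} of 11")
```

Each validated point immediately changes the next proposal, which is the feedback that distinguishes this pattern from a one-shot screen.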

Conclusion

In this work, we have investigated the coupling of HPC and AI within the context of materials science, proposing a coupling methodology that encompasses three distinct patterns: surrogate, directive, and coordinate. The surrogate pattern leverages data-driven AI models trained on experimental or DFT-computed data to bypass expensive HPC calculations, thereby accelerating material property predictions and structural optimizations. The directive pattern guides HPC workflows through AI-driven force field fitting or targeted search, balancing the accuracy of physics-based simulations with the efficiency afforded by machine learning. Finally, the coordinate pattern integrates HPC, AI, and third-party intelligent roles in a dynamic, closed-loop feedback system, leveraging reinforcement learning, active learning, or agent-based methods to iteratively refine both AI predictive models and simulation parameters.

Our exploration highlights significant performance gains and methodological advancements. These include fast inference and broad usability in surrogate AI models, targeted parameter exploration and improved resource utilization in the directive pattern, and enhanced robustness and adaptability in the coordinate pattern. By presenting concrete implementations, ranging from materials property predictors such as Crysformer to integration frameworks such as EquiCSP and the LLM-based agent, we offer insights into successful HPC-AI coupling.

These interaction patterns, though exemplified in the materials science domain, possess the flexibility to be extended to other scientific fields. Their underlying principles, combining computational rigor with intelligent guidance, can serve as a blueprint for similar HPC-AI collaborations aimed at unraveling complex, high-dimensional problem spaces. As the roles of AI in HPC continue to evolve, the approaches outlined here provide valuable strategies for accelerating discovery, enhancing simulation fidelity, and ultimately expanding the horizons of scientific inquiry.

Footnotes

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was supported by the Guangdong Provincial Key Area R&D Program (Grant No. 2024B0101040005).

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Notes

Author biographies

Yutong Lu (Member, IEEE) received the MSc and PhD degrees in computer science from the National University of Defense Technology (NUDT), Changsha, China. She is currently a professor with the School of Computer Science and Engineering, Sun Yat-sen University, Guangzhou, China. She is also the director of the National Supercomputer Center in Guangzhou. Her research interests include parallel system management, high-speed communication, distributed file systems, and advanced programming environments with MPI.

Dan Huang received the BS degree from Jilin University, Changchun, the MS degree from Southeast University, Nanjing, and the PhD degree in computer engineering from the University of Central Florida, Orlando, in 2018. He is currently an associate professor in the School of Computer Science and Engineering, Sun Yat-sen University, Guangzhou. His research interests include parallel and distributed systems and high-performance AI systems.

Pin Chen received the PhD degree in computer science from the School of Computer Science and Engineering, Sun Yat-sen University, Guangzhou, China. He is currently an Associate Research Fellow at the National Supercomputer Center in Guangzhou. His research interests include scientific numerical simulations, AI model development, and domain-specific platform development.