Abstract

The field of High-Performance Computing (HPC) is defined by providing computing devices with highest performance for a variety of demanding scientific users. The tight co-design relationship between HPC providers and users propels the field forward, paired with technological improvements, achieving continuously higher performance and resource utilization. A key device for system architects, architecture researchers, and scientific users are benchmarks, allowing for well-defined assessment of hardware, software, and algorithms. Many benchmarks exist in the community, from individual niche benchmarks testing specific features, to large-scale benchmark suites for whole procurements. We survey the available HPC benchmarks, summarizing them in table form with key details and concise categorization, also through an interactive website. For categorization, we present a benchmark taxonomy for well-defined characterization of benchmarks.

1. Introduction

High-Performance Computing (HPC) is, by definition, aiming for excellent performance of the executed workloads. State-of-the-art hardware is deployed for scientists, engineers, and other researchers with ever-increasing performance capabilities. At the same time, these users solve computational challenges of ever-increasing sophistication, driving the demand for HPC systems.

While some expert users might understand the performance characteristics and hardware-software interplay of their applications, that is not generally the case for most users, system administration, HPC engineers, and support staff. Hence, dedicated applications with well-defined workloads are utilized to assess the performance of systems. These benchmarks have a long tradition in the HPC field, with the High-Performance LINPACK (HPL, Dongarra (1992)), used to rank supercomputers in the Top500 1 , arguably the most prominent. But many benchmarks exist, focusing on diverse aspects of hardware and software of HPC installations: Individual niche benchmarks test dedicated hardware characteristics, other more integrated programs test interplay of different components, and some benchmarks entirely focus on software components. The choice is plentiful, but keeping track is hard.

1.1. Profile of benchmarks

For good reason, benchmarks are one of the key elements in the HPC researcher/engineer toolbox. Their benefit has many layers:

1.1.1. Clarity

Through the well-defined setup, workloads, and execution instruction, benchmarks allow objective, repeatable, and transparent performance measurements; the key is a clear – and ideally simple – metric.

1.1.2. Comparability

Analysis of various hardware installations with identical or similar benchmark execution makes the installations comparable and assessable by means of the benchmark’s metric.

1.1.3. Durability

Through well-designed benchmarks, an accessible and continuous assessment of systems is enabled, allowing for tracking historical data and understanding technological improvements and trends.

1.1.4. Advancement

Modeling the complex interplay of hardware and software, benchmarks enable focused hardware research and application development towards ecosystem advancement; especially in relation to the theoretical capabilities of hardware (peak performance). A benchmark results database can create a competitive drive for system improvement.

1.1.5. Decisiveness

Ultimately, analyzing robust benchmarks across different hardware installations over time allows informed, objective decisions about system investment. Benchmarks are key in modern system procurements, where execution performance counts more than the hardware peak performance.

1.1.6. Validation

Well-defined benchmarks allow tracking resource utilization and performance regressions on in-production systems; for new systems, well-known benchmarks can be used to validate the system for production; they can also be used for stress-testing systems and support reliability of the system.

The benefit of individual benchmarks is amplified when they are collected into benchmark suites. Various workloads are combined to conduct holistic system characterization, usually with normalized, comparable metrics.

Benchmarks not only have value for HPC researchers and engineers, but also for HPC users. By having a well-defined version of their HPC application, users may track performance regressions in their program and understand performance limiters. By supplying benchmarks to the HPC community, users have a direct impact on system design and procurement decisions. In turn, seeing benchmark results for HPC systems, users have comparable baselines and can gain confidence in the capabilities of the system.

Of course, benchmarks have many limitations. Comparability crossing hardware generations and vendors is a hard task, as highly-optimized HPC applications optimize for specific hardware, which is a priori not directly transferable. A certain benchmark metric may be valuable, but might not convey general information about a system – especially synthetic benchmarks tend to measure specific aspects of a system, which have limited real-world applicability for more involved applications. Finally, creating a robust, repeatable, portable, versatile, stable, and clear benchmark is a very involved task, which requires significant effort.

1.2. Contribution

Because of their importance, the HPC field created a vast set of different benchmarks over the years. They range from simple synthetic benchmarks, over mini-apps of scientific applications, to full-blown large-scale applications with possibly large input files. Some benchmarks are collected into benchmark suites, typically created for system procurements, to replicate a desired measurement and workload mix.

Some benchmarks are well-known in the field – like HPL or STREAM (McCalpin, 1995) – while others are only known to a small subset. The benchmarks/suites may be published only on websites accompanying a procurement, or are hosted in a GitHub repository attached to a journal publication; finding them can be challenging.

To improve their visibility, findability, and, ultimately, benefit for the field, we contribute in this work a

1.3. Structure

The rest of the paper is structured as follows. In section 2 we concisely assess the state-of-the-field and discuss related work. In section 3, we present the Benchmark Taxonomy. Finally, in section 4 we present the benchmarks in table form. Some observations and evaluations are discussed in section 5. In section 6, we conclude our paper.

2. Related work

Efforts to publicly post performance-focused benchmark results relating to deployed HPC systems range from the long-running Top500 and aligned Green500 3 and IO500 4 , to sub-discipline benchmarking such as Machine Learning Commons (MLCommons, Mattson et al., 2020; ML Commons, 2023) and HPL-MxP 5 . Notable is also https://OpenBenchmarking.org, which openly collects results from the Phoronix Test Suite 6 and schema-compliant further benchmarks; the focus is on end-user devices.

There are numerous efforts to make benchmarking easier, by encoding build/run/evaluation rules for entire suites of benchmarks; examples include Pavilion (LANL Pre-Team, 2019), Reframe (Karakasis et al., 2020), JUBE (Breuer et al., 2022), Ramble (Jacobsen and Bird, 2023), and Benchpark (Pearce et al., 2023). While they showcase somewhat different approaches, the proliferation of such efforts underscores the vital importance – and difficulty – of benchmarking.

There have of course been attempts at surveying the HPC benchmarks – meta-benchmarking, in a sense. Here we list just a few of the recent ones. A survey of convergence of big data, HPC, and ML systems has been done by Ihde et al. (2022) and cites 25 benchmarks/suites (our paper covers the HPC-specific ones from this list). In similar spirit list Thiyagalingam et al. (2022) a number of scientific ML benchmarks and present the SciMLBench framework. Some works argue that although we already have too many benchmarks in the ML space (Zhang et al., 2019) (citing 54 ML benchmarking papers), we still need more - while needing convergence. This underscores the need for the community awareness of existing benchmarking work, better benchmark characterization, and, hopefully, collaboration to both improve the quality of benchmarks, and understand their applicability.

3. Taxonomy

Benchmarks come in different flavors with different execution profiles, focus points, dependencies, and other properties. While comparing well-known benchmarks colloquially is easy, a thorough, structured comparison is more involved – especially for more niche and specialized benchmarks.

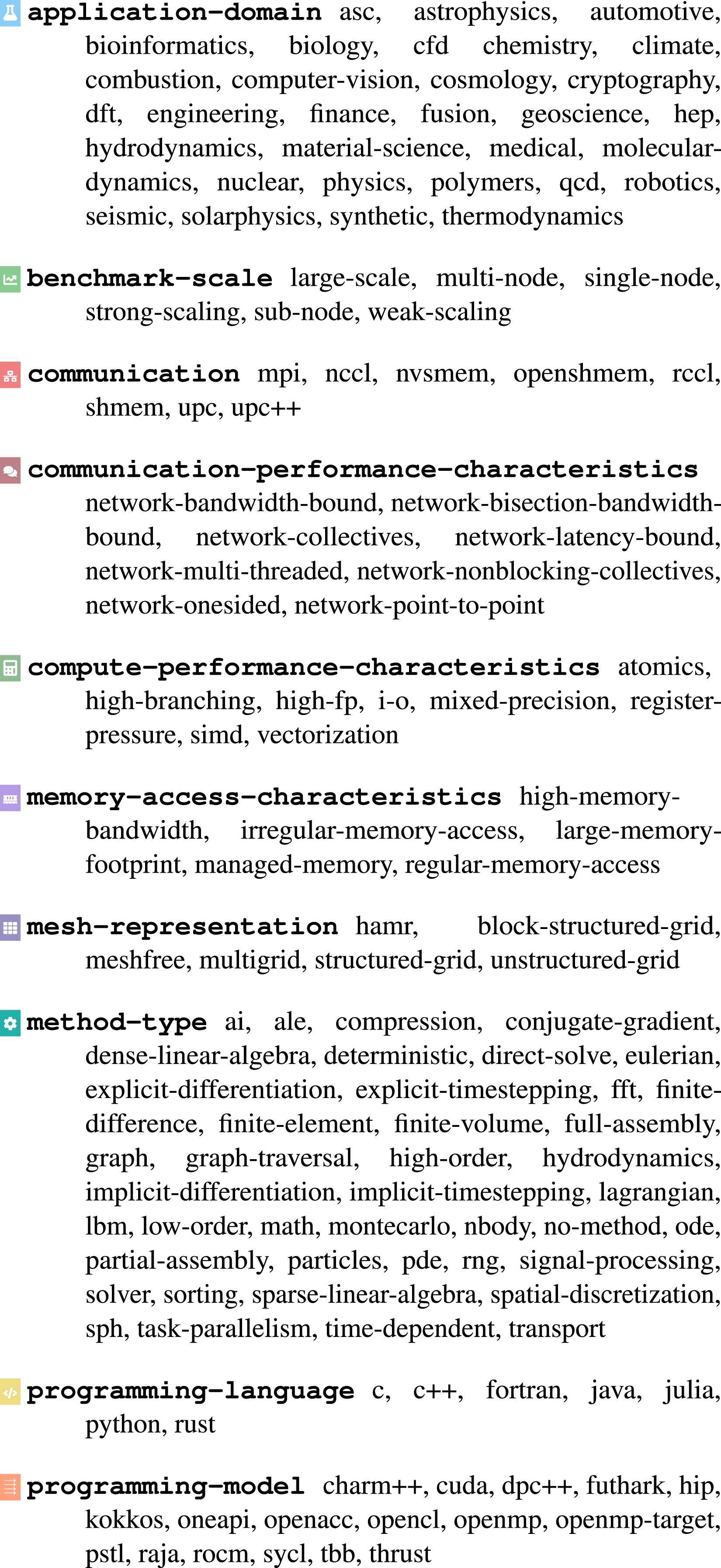

In our survey, we identify the different flavors and develop a systematic approach for characterization of benchmarks, considering a variety of different aspects of the individual benchmarks. This

Categories range from the application domain, where a benchmark originates from (like astrophysics), over the method employed for execution (like FFT), and the employed programming language (like Fortran), to detailed aspects like memory access characteristics (like regular access).

The categories are presented in Figure 1 with all currently collected entries (normalized to lower-case). The list of possible entries is likely not complete for every possible workload; it rather represents the result of our collection, augmented with other obvious entries. Taxonomy overview with top-level categories (printed in bold with added symbol) and each multiple entries.

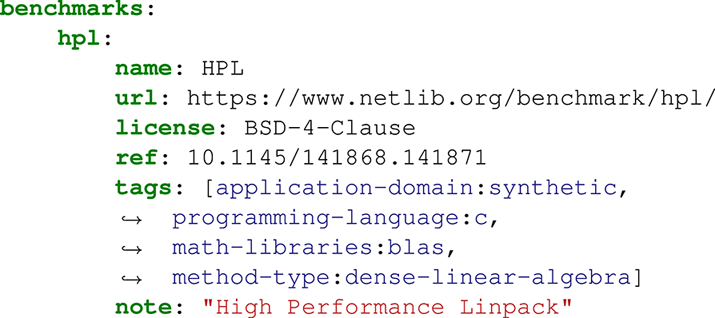

To allow for extension of the taxonomy and support future work building up on it, the raw data is available in concise YAML form on GitHub. The schema employed uses the categories for keys, and individual entries available as a list for the values; for example

Each category has an associated symbol and color, which is used in tag form within the full version of the table (Table A1) and in the interactive online version. An example tag for a benchmark utilizing MPI communication is  mpi. In the machine-readable raw form within the benchmark list, the category-entry-combination is key-value-combined with a colon:

mpi. In the machine-readable raw form within the benchmark list, the category-entry-combination is key-value-combined with a colon:  cuda).

cuda).

4. Benchmark survey

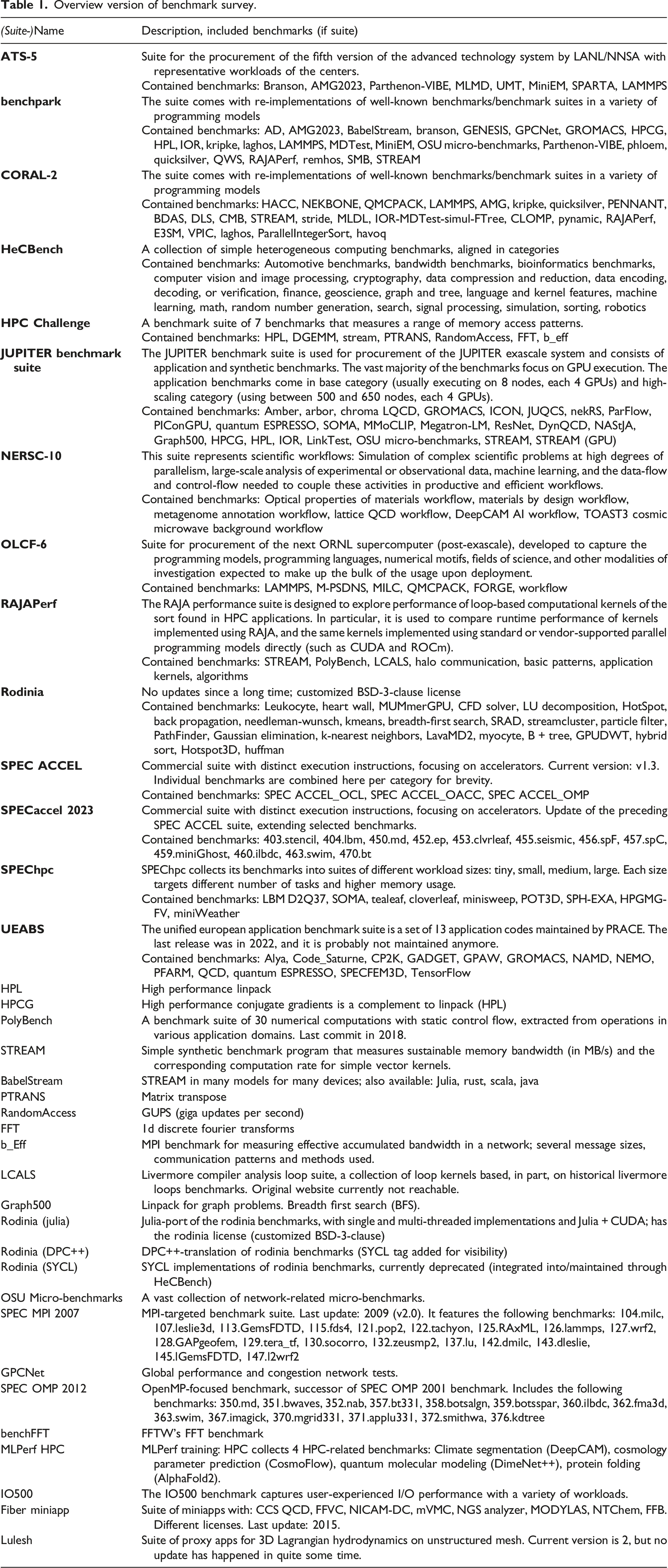

Overview version of benchmark survey.

The shortened overview in Table 1 first lists suites of benchmarks with commentary about the suite and contained benchmarks, and then free-standing benchmarks with respective notes. The complete table Table A1 and the online version contain more details. Each table entry consists of the most relevant information for a benchmark/suite:

4.1. Name

The self-given name of each benchmark/suite is used for identification; when ambivalent, the more commonly used term was taken

4.2. Taxonomy

Tags Building up on the Benchmark Taxonomy of section 3, each entry is characterized by a set of taxonomy tags. For suites, tags common to (nearly) all contained benchmarks are promoted to top-level tags of the suite itself and are not separately displayed for each individual benchmark.

4.3. License

As the scope of applicability of a benchmark is closely related to its license, an effort is made to collect the information here. For brevity, each license is shown in symbol form using the following keys:  No license;

No license;  Free (no (clear) license specified, but freely available);

Free (no (clear) license specified, but freely available);  Proprietary/Custom;

Proprietary/Custom;  MIT;

MIT;  /

/ /

/ BSD-2/3/4-Clause;

BSD-2/3/4-Clause;  /

/ GPL-2.0/3.0;

GPL-2.0/3.0;  LGPL-2.1 (logo abbreviates 2.1 to 2);

LGPL-2.1 (logo abbreviates 2.1 to 2);  Apache-2.0;

Apache-2.0;  MPL-2.0;

MPL-2.0;  CC-BY-4.0.

CC-BY-4.0.

4.4. Reference

For easy access, a URL for each benchmark is provided in  link form.

link form.

4.5. Notes

In the notes, comments and details about the benchmarks/suites are added, as well as names of benchmarks of suites-within-suites (HeCBench, SPEC ACCEL) and older suites (SPEC MPI, SPEC OMP). If available, a scientific publication is added as reference at the end of the notes. But only a minority of benchmarks expose readily available publications.

The completeness of this metadata is highly dependent on the offered information at the source of the data; i.e. only if clear description is provided, it could be added as well-formed metadata. Especially, the taxonomy tags referring to workload profiles are highly reliant on the available data. In addition, the entries are augmented with the authors’ knowledge of the workload, building up on their experiences in the field. The authors gladly welcome contributions by the community on GitHub to further extend this list.

The collected data is available in machine-readable, raw YAML form on the accompanying GitHub repository (Herten et al., 2025). This allows for easy extension; and also for future developments building up on the data. The YAML schema uses the following keys for each entry:

5. Evaluation of survey

5.1. Overview and highlights

In the complete survey, 13 benchmark suites were recorded. They can be roughly sorted into three groups. References are omitted here, as they can be neatly found in Table A1 and online.

5.1.1. System procurements

These suites were created for large system procurements and used for evaluation of large installations. They are arguable the most thoroughly documented benchmarks surveyed, as they document the needs and requirements of whole user communities to system integrators. Nearly all benchmarks in this list are GPU-accelerated and combine application benchmarks (or mini-apps thereof) with synthetic benchmarks.

OLCF-6 is the suite for the next-generation supercomputer to be hosted at Oak Ridge National Lab, the RFP closed in autumn 2024. ATS-5 is the suite for the next supercomputer at Los Alamos National Lab, due to be installed in 2026/2027. NERSC-10 is the benchmark suite for the successor of the Perlmutter system at NERSC, to be deployed in 2026. The JUPITER Benchmark Suite Herten et al. (2024) was used for the procurement of JUPITER, currently being built at Jülich Supercomputing Center. The CORAL-2 benchmark suite was used for the acquisitions of Frontier and El Capitan, hosted at Oak Ridge National Lab and Lawrence Livermore National Lab, respectively; the systems are already operational.

5.1.2. Research and community collections

Grown out of endeavors of research institutes and partly supported by the community, a number of benchmark suites has emerged which focus on certain niches. For example RAJAPerf Pearce et al. (2025), which started as a suite to verify and showcase the RAJA programming model Beckingsale et al. (2019a), but now incorporates other parallel programming models in comparative fashion; the focus is on typical parallel patterns and simple algorithms. RAJAPerf includes other suites, for example LCALS and Polybench. HeCBench is similar and includes a vast amount of simple computational benchmarks in a variety of different GPU programming models. In the table, we only list the categories, as the suite contains more than 400 individual programs. Another well-known collection of benchmarks for heterogeneous machines is the Rodinia benchmark suite. It is frequently used in the community for many performance investigations. The future of Rodinia is unsure, as the benchmark currently appears unmaintained. The case is similar for UEABS (Unified European Applications Benchmark Suite) by the European PRACE project. The suite captures many well-known applications with detailed execution instructions and input data, but appears not maintained anymore. The HPC Challenge benchmark suite combines many synthetic benchmarks, and also shares results on their website; but the project seems currently abandoned.

5.1.3. (Semi-)commercial offerings

The Standard Performance Evaluation Corporation, SPEC, provides different benchmark suites targeted for a variety of use-cases. Relevant for HPC are SPEChpc, offering a number of benchmarks in different workload sizes, SPEC ACCEL, last updated 2019, and focusing on different GPU benchmarks (with OpenCL, OpenMP, and OpenACC), and SPECaccel 2023, updating the previous suite and using OpenMP- and OpenACC-accelerated applications for benchmarking. Further benchmarks relevant to HPC exist. The benchmark setups are closed source and can be commercially acquired.

The choice for individual benchmarks is plentiful – be it freestanding or integrated into a suite. Famous and well-used benchmarks are HPL, which is particular compute-intensive and used for the Top500 ranking, HPCG, a conjugate gradient benchmark with data access patterns seen in applications, STREAM, which measures memory bandwidth with simple data movement routines, BabelStream, a STREAM implementation for a variety of GPUs, the Ohio State University Micro-Benchmarks, which test communication libraries (for example MPI), MLPerf, one of the few AI/ML benchmark in the suite, or IOR, a tool to determine I/O performance also used for the IO500.

Well-curated benchmarks are scarce, and the silent sunsetting of established suites is a loss for the field. It appears challenging to keep pace with the fast-moving update cycle in HPC, resulting in some benchmarks discontinued to various degrees. The authors hope that with this work, not only an overview can be gotten, but also awareness raised for the trove of choice currently existing.

5.2. Statistical evaluation

Albeit identification of characterizing aspects was at times challenging, many taxonomy tags could be attached to the benchmarks. The approach of using a YAML scheme for the taxonomy and the survey allows for some first, imperfect 8 evaluation of the state-of-the-practice. For the following numbers, taxonomy tags common to benchmarks in a suite have been, temporarily, added again to the benchmarks themselves. Categories can, of course, be present multiple times for individual benchmarks, for example if a benchmark is available in both OpenMP and CUDA.

Over 400 times a  programming model was identified, with OpenMP being the most prominent parallelization choice (158 entries), followed by CUDA (94 times); OpenMP Target (48), OpenACC (43), and HIP (31) follow. Not claiming perfect representativeness, the results still appear to reflect the current trends in the field: CPU-focused benchmarks almost exclusively utilize OpenMP for CPU-parallelization and GPU benchmarks mostly use CUDA for GPU-parallelization. A

programming model was identified, with OpenMP being the most prominent parallelization choice (158 entries), followed by CUDA (94 times); OpenMP Target (48), OpenACC (43), and HIP (31) follow. Not claiming perfect representativeness, the results still appear to reflect the current trends in the field: CPU-focused benchmarks almost exclusively utilize OpenMP for CPU-parallelization and GPU benchmarks mostly use CUDA for GPU-parallelization. A  programming languages could be identified 222 times, with C and C++ equally the most prominent ones (80 entries each). Fortran has 50 entries, Python only 10. Despite Fortran’s importance in HPC, the vast majority of benchmarks use C/C++. Surprising is the little count of Python benchmarks, being the driving force in many sub-domains of HPC. The most used

programming languages could be identified 222 times, with C and C++ equally the most prominent ones (80 entries each). Fortran has 50 entries, Python only 10. Despite Fortran’s importance in HPC, the vast majority of benchmarks use C/C++. Surprising is the little count of Python benchmarks, being the driving force in many sub-domains of HPC. The most used  benchmark scale is single-node with 88 entries; multi-node follows with 52 entries. A focus on intra-node tests can be seen, removing network effects and related implementation/evaluation complications.

benchmark scale is single-node with 88 entries; multi-node follows with 52 entries. A focus on intra-node tests can be seen, removing network effects and related implementation/evaluation complications.  Application information is available 122 times, with synthetic being the front-runner (18), physics (17), molecular dynamics (MD, 17), and computational fluid dynamics (CFD) and climate following (both 12). A slight focus appears to be on synthetic benchmarks, determining intricate and specific hardware features inspired by actual application workloads. Physics, MD, CFD, climate research are traditional HPC use-cases, which are expectedly represented in the survey. 92 benchmarks were identified to use MPI for

Application information is available 122 times, with synthetic being the front-runner (18), physics (17), molecular dynamics (MD, 17), and computational fluid dynamics (CFD) and climate following (both 12). A slight focus appears to be on synthetic benchmarks, determining intricate and specific hardware features inspired by actual application workloads. Physics, MD, CFD, climate research are traditional HPC use-cases, which are expectedly represented in the survey. 92 benchmarks were identified to use MPI for  communication, with much distance to NCCL (5). MPI is the de-facto standard for communication in HPC, which can clearly be seen.

communication, with much distance to NCCL (5). MPI is the de-facto standard for communication in HPC, which can clearly be seen.

The taxonomy categories referring to more involved benchmark details are harder to identify and require thorough description or deeper knowledge of the benchmarks. Characteristics of  memory access could be identified 48 times, of

memory access could be identified 48 times, of  communication performance 40 times, and of

communication performance 40 times, and of  compute performance 28 times.

compute performance 28 times.

6. Conclusions

Benchmarks are essential tools in the HPC field, enabling well-defined and comparable assessments of HPC systems. They characterize hardware features and software capabilities in an objective manner, support the advancement of the field, and are key for investment decisions.

In the presented work, we collect available HPC benchmarks and benchmark suites and survey them in a concise and comparable manner. An overview with strongly reduced level of detail is available in Table 1. The much longer full survey, including characterization tags, is available as supplemental material in Table A1 and online (interactive). 13 benchmark suites and over 180 benchmarks could be collected with detailed meta-data, like associated license, references, notes, and characterization. For the latter, we create a Benchmark Taxonomy (Figure 1) to describe different aspects of the individual benchmarks and suites and enable easy comparison. The raw data of the survey and the taxonomy are available for further extension and collaboration as open source software on GitHub, feeding directly into the interactive website.

The benchmark suites are either created for large system procurement (like Frontier, El Capitan, or JUPITER), or come from parts of the community (like RAJAPerf). Without claiming representativeness, we attempt a first evaluation of the collected taxonomy tags and find, for example, OpenMP appears to be by far the most-used programming model for parallelization on the CPU. On the GPU, CUDA is the main model.

In the future, we expect to further extend the Benchmark Taxonomy, adding, for example, further application domains, methods, or libraries; we also consider extending it by status-related information to cover functionality and topicality of benchmarks, since some defunct benchmarks were collected. Although being thorough in our review of available benchmarks, we are certain that some benchmarks escaped our attention. We expect to extend the benchmark survey in the future to benchmarks not yet included in the current data; in hopes of help by the community online.

Supplemental Material

Supplemental Material - An HPC benchmark survey and taxonomy for characterization

Supplemental Material for An HPC benchmark survey and taxonomy for characterization by Andreas Herten, Olga Pearce, Filipe S. M. Guimarães in The International Journal of High Performance Computing Applications.

Footnotes

Acknowledgements

The authors would like to thank Jens Domke for his thoughts relating to significant benchmarks for this work.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was performed under the auspices of the U.S. Department of Energy by Lawrence Livermore National Laboratory under Contract DE-AC52-07NA27344 and was supported by the LLNL-LDRD Program under Project No. 24-SI-005 (LLNL-JRNL-2001672).

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Supplemental Material

Supplemental material for this article is available online.

Notes

Author biographies

References

Supplementary Material

Please find the following supplemental material available below.

For Open Access articles published under a Creative Commons License, all supplemental material carries the same license as the article it is associated with.

For non-Open Access articles published, all supplemental material carries a non-exclusive license, and permission requests for re-use of supplemental material or any part of supplemental material shall be sent directly to the copyright owner as specified in the copyright notice associated with the article.