Abstract

The last lecture course that Nobel Prize winner Richard Feynman gave to students at Caltech was not on physics but on computer science. The course in 1983 was an overview of standard and not-so-standard topics in computer science, all given in Feynman’s inimitable style. This talk will begin by giving an overview of Feynman’s long-time interest in computing - starting with his time at Los Alamos during the war through to his suggestion of simulating physics on a quantum computer at a ‘Physics of Computation’ Conference at MIT in 1981 and his acting as a consultant for the parallel computer company, Thinking Machines. The talk will conclude with a summary of Feynman’s long-term interest in the physical limits to computation and the exemplary way he could communicate complex ideas to all types of audience.

Keywords

1. Introduction

The citation for the 1965 Nobel Prize in Physics that was awarded jointly to Sin-Itiro Tomonaga, Julian Schwinger and Richard P. Feynman reads: ‘for their fundamental work in quantum electrodynamics, with deep-ploughing consequences for the physics of elementary particles’. Nowadays, calculations using ‘Feynman diagrams’ are ubiquitous in many areas of theoretical physics. In addition to his pioneering and unconventional approach to making sense of relativistic quantum field theory, Feynman is perhaps better known to a much larger world-wide community of physicists through his three ‘red books’ – the famous ‘Feynman Lectures on Physics’. However, for the general public Feynman is probably best known for three other activities. The first are the wonderful TV interviews captured in recordings made on his annual visits to Ripponden, his wife’s home-town in West Yorkshire in the north of England. These include Yorkshire Television’s ‘Take the World from Another Point of View’ [Richard Feynman - The World from another point of view (youtube.com)] in 1973 and the later documentaries made for the BBC by Christopher Sykes such as ‘The Pleasure of Finding Things Out’ [Horizon - 1981-1982: The Pleasure of Finding Things Out - BBC iPlayer]. Feynman really became known to a much broader audience through the publication of many of his anecdotes put together by Ralph Leighton in the book ‘Surely You’re Joking, Mr Feynman’ published in 1985 [SYJMF]. This includes stories from his work on the Manhattan project during the war in the essay ‘Los Alamos from Below’ up to his serious and thought-provoking Caltech commencement address on ‘Cargo Cult Science’ in 1974. However, the most impactful way that Feynman became known to journalists and the wider world was due to his membership of the Presidential Commission tasked with the official inquiry into the reasons for the 1986 Challenger space shuttle disaster. 
It was Feynman’s live TV demonstration of the effect of cold on the critical rubber O-rings that electrified the journalists reporting on the Commission’s hearings. In the end Feynman only agreed to have his name on the official report if he could publish his own detailed analysis of the risks leading up to the disastrous launch of the Challenger shuttle. His report was titled ‘Personal Observations on the Reliability of the Shuttle’ and ended with the memorable sentence [WDYC]: For a successful technology, reality must take precedence over public relations, for Nature cannot be fooled.

The day after the report of the Presidential Commission was released - with Feynman’s personal report as an appendix - he met his friend and colleague Carver Mead at the annual Caltech Commencement ceremony. Mead says that Feynman was gleeful about the headline he had seen in the papers that morning [FAC]. He was delighted because the headline was not ‘Caltech Professor Issues Report’ nor ‘Commission Member Issues Report’ but ‘FEYNMAN ISSUES REPORT’.

The next section is about Feynman’s development of pipeline parallel computing at Los Alamos before the arrival of digital computers. His continued interest in computation is the subject of the following section on ‘Feynman diagrams’. In the words of co-Nobel Prize winner Julian Schwinger, Feynman brought ‘computation to the masses’ with his intuitive method of making calculations in the relativistic quantum field theory of Quantum Electrodynamics or QED. The remaining sections detail Feynman’s long-held interest in the physics of computation and how a lecture he gave at MIT in 1981 has led directly to the current enthusiasm for building quantum computers. Feynman also had an early interest in using artificial neural networks for what he called ‘advanced applications’ in the ‘Feynman Lectures on Computation’ [FLOC]. These would now be called ‘applications of Artificial Intelligence’. The paper concludes with some remarks on Feynman’s ‘Lectures on Computation’ and his elementary ‘File Clerk’ model of a computer [FLOC].

2. Computing at Los Alamos: parallel computing without computers

The feasibility of an atom bomb had been shown in 1940 by Otto Frisch and Rudolf Peierls, two Jewish German refugee physicists from Hitler’s Germany, then working at Birmingham University in England (Hey, 2024). Otto Frisch had just been working with his aunt, the nuclear physicist Lise Meitner in Sweden trying to explain some puzzling results obtained by Meitner’s previous colleague, Otto Hahn, before she had to leave Germany. Frisch coined the word ‘fission’ to describe the process by which a uranium nucleus could be split apart under bombardment by a neutron and release significant amounts of energy. For his part, Peierls had worked in Germany with Werner Heisenberg, one of the founders of quantum mechanics and generally regarded as one of the most able theoretical physicists in the world. Peierls and Frisch then had a genuine fear that Heisenberg could now be leading a team of German physicists to build a nuclear explosive device. Fortunately, just before the war, Bohr and Wheeler had written a paper showing that the common 238 isotope of uranium was not suitable for building a nuclear bomb. However, one day in February or March 1940, Frisch asked Peierls the question: Suppose someone gave you a quantity of pure 235 isotope of uranium - what would happen?

Since Peierls had developed a formula to calculate critical masses for fissionable elements they put in plausible numbers for the U235 isotope. They were both amazed at how small a mass would be needed to generate a chain reaction to cause an explosion: We estimated the critical size to be about a pound, whereas speculations concerned with natural uranium had tended to come out with tons.

Although their number for the critical size turned out to be a little on the low side because their guess about the fission cross section of U235 was not quite right, they were correct in that only pounds and not tons of the 235 isotope were needed. Peierls then made a rough estimate ‘on the back-of-the proverbial envelope’ that showed a pound or so of the U235 isotope would release the equivalent of several thousand tons of ordinary explosive. Separation of tons of U235 was clearly not a practical proposition but constructing an isotope separation plant to produce just a few pounds was definitely feasible. As Frisch and Peierls said: Even if this plant costs as much as a battleship, it would be worth having.
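The ‘back-of-the-envelope’ estimate can be reproduced with a few lines of arithmetic. The sketch below is not Peierls’s own calculation: it assumes roughly 200 MeV released per fission and, unrealistically, that every nucleus in the sample fissions. Both figures, and all constants in the code, are assumptions introduced here for illustration.

```python
# Back-of-envelope check of the energy stored in one pound of U235.
# All constants are illustrative assumptions, not values from the memorandum.
AVOGADRO = 6.02214076e23          # nuclei per mole
MOLAR_MASS_U235_G = 235.0         # grams per mole (approximate)
ENERGY_PER_FISSION_MEV = 200.0    # assumed typical energy release per fission
JOULES_PER_MEV = 1.602176634e-13
JOULES_PER_TON_TNT = 4.184e9      # conventional definition of a ton of TNT
GRAMS_PER_POUND = 453.59237


def tnt_equivalent_tons(mass_grams, fraction_fissioned=1.0):
    """Return the TNT equivalent (in tons) of fissioning part of a U235 sample."""
    nuclei = mass_grams / MOLAR_MASS_U235_G * AVOGADRO
    energy_joules = (nuclei * fraction_fissioned
                     * ENERGY_PER_FISSION_MEV * JOULES_PER_MEV)
    return energy_joules / JOULES_PER_TON_TNT


one_pound_yield = tnt_equivalent_tons(GRAMS_PER_POUND)
print(f"Upper bound for one pound of U235: {one_pound_yield:.0f} tons of TNT")
```

Under these assumptions the upper bound comes out at several thousand tons of TNT, consistent with the order of magnitude quoted above. Passing a smaller `fraction_fissioned` shows how the estimate scales if only part of the material reacts.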

Because of the need for absolute secrecy, Peierls had to type a report on their findings himself and this became known as the ‘Frisch-Peierls Memorandum’. Their report ended with a bleak warning about the danger of a possible German bomb: Since the separation of the necessary amount of uranium is, in the most favourable circumstances, a matter of several months, it would obviously be too late to start production when such a bomb is known to be in the hands of Germany, and the matter seems, therefore, very urgent.

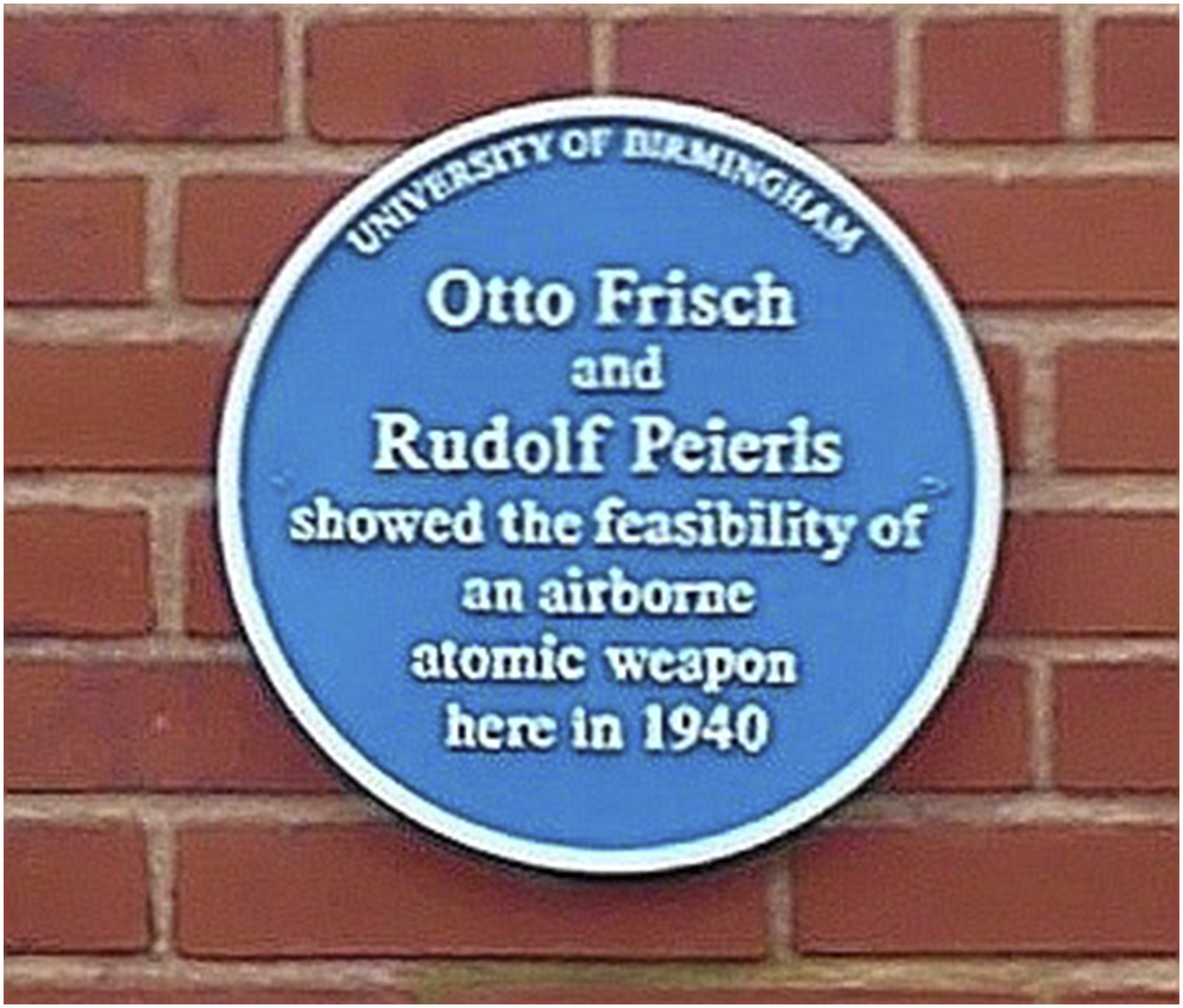

They passed their report to Mark Oliphant, their professor at Birmingham, who promised to get it to the relevant decision makers in the Government. After several bureaucratic obstacles – such as initially banning Peierls and Frisch from being able to read their own report - the UK Government set up the secret ‘Tube Alloys’ project to build an atomic bomb. Peierls was delegated to work on the problem of isotope separation and in 1941 he recruited another very able German refugee physicist, Klaus Fuchs, to work with him. However, Fuchs had left Hitler’s Germany not because he was a Jew but because he had been a card-carrying communist and this would later have grave consequences for the secrecy of the project (Figure 1).

Figure 1. Blue plaque to physicists Frisch and Peierls on the wall of the Poynting Physics Building, University of Birmingham (PicturePrince CC-BY-SA image).

In spite of the famous ‘Einstein letter’ to President Roosevelt in 1939, there had been little progress in setting up a serious project in the US to actually build an atom bomb. Oliphant had sent the findings of the UK Government’s research into the possibility of building an atom bomb to the US Uranium Committee in March 1941. Because of the extreme urgency to prevent Hitler’s Germany acquiring such a weapon before the Allies, Oliphant flew to see the US Uranium Committee in person to find out why the UK reports had apparently been ignored. He was dismayed to find out that the head of the Uranium Committee had not even shown the UK findings to other members of the Committee. Oliphant then went to see his friend Ernest Lawrence in Berkeley to explain the urgency of setting up a serious project in the USA. This resulted in General Groves being appointed to lead what became known as the Manhattan project with the clear goal of developing an atomic bomb before Germany.

General Groves chose Robert Oppenheimer, from the University of California, Berkeley, to be the technical leader of the project. Oppenheimer then picked a remote plateau called Los Alamos near Santa Fe in New Mexico as the site for the main laboratory. This soon became home for many of the most famous scientists, not only from the US and the UK but also for refugees from occupied Europe. Oppenheimer chose another German refugee, Hans Bethe, to be the director of the Theoretical Division, and this turned out to be very fortunate for Feynman. The Laboratory was still just starting up and as Feynman later said [QMK]: Most of the big shots were out of town for one reason or the other, getting their furniture transferred or something. Except for Hans Bethe. It seems that when he was working on an idea he always liked to discuss it with someone. He couldn’t find anybody around, so he came down to my office … and started to explain what he was thinking. When it comes to physics I forget exactly who I’m talking to, so I was saying, ‘No, no! That’s crazy!’ and so on. Whenever I objected, I was always wrong, but nevertheless that’s what he wanted.

Bethe was wise enough to recognize Feynman’s unique talents and he made him the youngest group leader in the Division with four people reporting to him. One of the most important things that Feynman had learnt from Bethe was the necessity to connect theoretical calculations with experimental results [QMK]: Bethe had a characteristic which I learned, which is to calculate numbers. If you have a problem, the real test of everything … you’ve got to get the numbers out; if you don’t get down to earth with it, it really isn’t much. So his perpetual attitude is to use the theory. To see how it really works is to really use it.

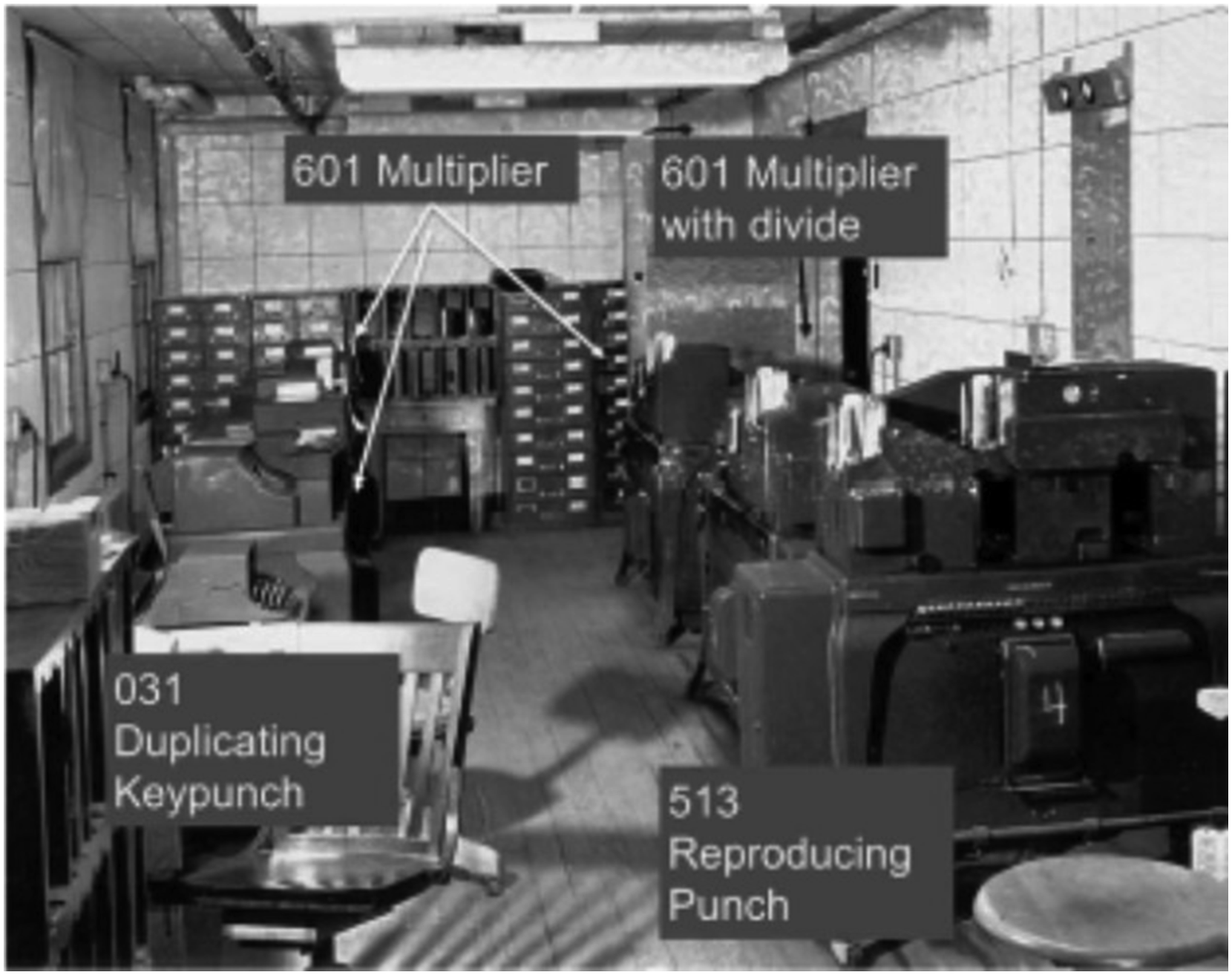

During the final phases of designing and building the plutonium implosion bomb, Feynman was put in charge of the key calculations of the energy release of the different designs. The computing group had just been tasked with doing the calculations needed for the final design of the plutonium implosion bomb. One of the previous leaders of the computing group, Stanley Frankel, had realized that the hydrodynamic equations for the implosion problem required more calculating power than was achievable with their Marchant mechanical calculating machines. He made some research visits to other computing centers and thought that the necessary calculations could be done using the more modern electromechanical machines produced by IBM. These were the IBM Punch-Card Accounting Machines or PCAMs. This was some years before IBM made fully digital computers – the PCAM machines were just adding and multiplying machines plus collators and sorters (see Figure 2). IBM later produced a modified PCAM for Los Alamos that was also capable of implementing division operations.

Figure 2. Part of the IBM PCAM installation at Los Alamos during the Manhattan Project after November 1944. Right side, front to back: type-513 reproducer punch, type-601 multiplier with divide unit, type-601 multiplier without divide unit. Left side, front to back: type-031 duplicating keypunch, type-601 multiplier, type-601 multiplier. Image courtesy Los Alamos National Laboratory.

Frankel and colleagues Eldred Nelson and Naomi Livesey had broken down the required calculation into a sequence of different numerical steps – multiply this, then do this, then subtract that and so on. Now instead of just having one person doing all steps of the calculation, different people could be allocated to different machines to do specific parts of the total calculation. When a person in the calculating team received a card they did the required operation, updated the card and then passed it along to the person in charge of the next operation.

When the IBM machines finally arrived at Los Alamos, they were only partly assembled and without any IBM engineers to put them together. Because of the urgency of the situation Frankel and Feynman decided to assemble the machines themselves, despite being warned about the likelihood of their breaking something. Since the location of the Los Alamos site was classified, IBM were not able to send a maintenance man with the machines to assemble them. However, IBM’s best PCAM maintenance man had been drafted and assigned to Los Alamos by the Army. As a result, he was able to arrive at Los Alamos only a few days after the delivery of the PCAM machines and was able to complete the final tuning (Archer, 2021).

At this point, as Feynman describes it: Mr Frankel, who started this program, began to suffer from the computer disease that anybody who works with computers now knows about. It’s a very serious disease and it interferes completely with work. The trouble with computers is you play with them. They are so wonderful.

Because the computing group were not delivering results fast enough, Feynman was asked to take over leadership of the IBM PCAM group. Feynman identified that the real problem was that although members of the group had been selected from high schools across the country as students with high engineering ability, they had been told nothing about what they were doing and why it was important to the project. Because of Feynman’s intervention, Oppenheimer talked to security and got permission for Feynman to give them a lecture about the importance of the calculations they were doing. In the 9 months prior to Feynman taking charge, the group had only managed to complete three design calculations. After his lecture the result was a complete transformation. The team began to invent ways of doing the calculations better and even worked at night. As a result, instead of taking 9 months to do three problems, they did nine problems in 3 months. The team achieved this nearly 10-fold speed-up through parallel computing. Using pipelining, they were able to have two or three problem sets of cards moving round the machines at the same time. However, this performance improvement led to another problem. A short time before the test explosion was going to take place at the Trinity site near Alamogordo, the team was asked to produce results about how much energy would be released from the specific design that would be used. Since they had done nine problems in 3 months, the management assumed that the team could produce the required results in about 3 weeks. Although Feynman had to leave the team to go to see his wife Arlene who was very seriously ill at a sanatorium in Albuquerque, his highly motivated team succeeded in solving the more difficult problem of parallelizing just the one calculation.
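The benefit of keeping several card decks in circulation can be illustrated with a toy simulation. This is only a sketch of the pipelining idea, not a reconstruction of the Los Alamos procedure: the number of machines (`stages`), the number of passes each deck makes round them (`passes`), and the one-tick-per-operation timing are all hypothetical.

```python
def run_time(num_problems, stages, passes, in_flight):
    """Count time ticks needed to finish all problems on a ring of machines.

    Each problem is a deck of cards that must visit machine 0, 1, ...,
    stages-1 in order, `passes` times round. A machine handles one deck
    per tick, and at most `in_flight` decks circulate at once.
    """
    steps_needed = stages * passes
    progress = [0] * num_problems          # operations completed per deck
    ticks = 0
    while any(done < steps_needed for done in progress):
        busy = set()                       # machines already used this tick
        unfinished = [i for i, done in enumerate(progress)
                      if done < steps_needed]
        for i in unfinished[:in_flight]:
            machine = progress[i] % stages
            if machine not in busy:        # otherwise the deck waits a tick
                busy.add(machine)
                progress[i] += 1
        ticks += 1
    return ticks


serial_ticks = run_time(num_problems=9, stages=4, passes=5, in_flight=1)
pipelined_ticks = run_time(num_problems=9, stages=4, passes=5, in_flight=3)
print(serial_ticks, pipelined_ticks, serial_ticks / pipelined_ticks)
```

In this model, three decks in flight give close to a three-fold gain in throughput, so pipelining alone would account for only part of the improvement described above. It also shows why a single urgent problem is harder: with one deck there is nothing to overlap unless the calculation itself is split up.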

Bethe later described Feynman’s contribution in the following terms [QMK]: Feynman could do anything, anything at all. At one time, the most important group in our division was concerned with calculating machines … The two men I had put in charge of these computers just played with them, and they never gave us the answers we wanted … I asked Feynman to take over. As soon as he got in there, we got answers every week – lots of them, and very accurate. He always knew what was needed, and he always knew what had to be done to get it … (I should mention that the computer arrived in boxes – about ten boxes for each. Feynman and one of the former group leaders put the machines together … Later we got some professionals from IBM who said, ‘This has never been done before. I have never seen laymen put together one of these machines, and it’s perfect!’)

3. Feynman diagrams

In the late 1920s, Heisenberg, Fermi and Dirac were among the leaders of the theoretical physics community who tried to create a consistent relativistic quantum theory of the interactions of electrons and photons. It was Dirac who first coined the name Quantum Electrodynamics (QED) for the proposed combination of Maxwell’s theory of electromagnetism with his relativistic ‘Dirac equation’ for the electron. Unlike the Schrödinger equation which describes non-relativistic quantum mechanics, the Dirac equation for the relativistic electrons allows both positive and negative energy solutions. By postulating that the normal ‘vacuum’ state has all these negative energy states occupied, Dirac was able to interpret a ‘hole’ in this Dirac negative energy ‘sea’ of electrons as a particle of the same mass but positive charge since, relative to the filled vacuum state, a hole in the sea lacks both negative energy and negative charge. In this way, an excitation of a negative energy electron to a positive energy electron state can be seen as equivalent to the creation of an electron-positron pair. By this curious chain of reasoning, Dirac predicted the existence of the positron as the antiparticle of an electron. In 1932, four years after Dirac had written down his equation – or as Feynman said, ‘… guessing an equation’ - the positron was observed in cosmic ray experiments by Caltech physicist Carl Anderson. Although the notion of Dirac's negative energy sea was the way antimatter was predicted, it was a very awkward and asymmetrical way of looking at antiparticles.

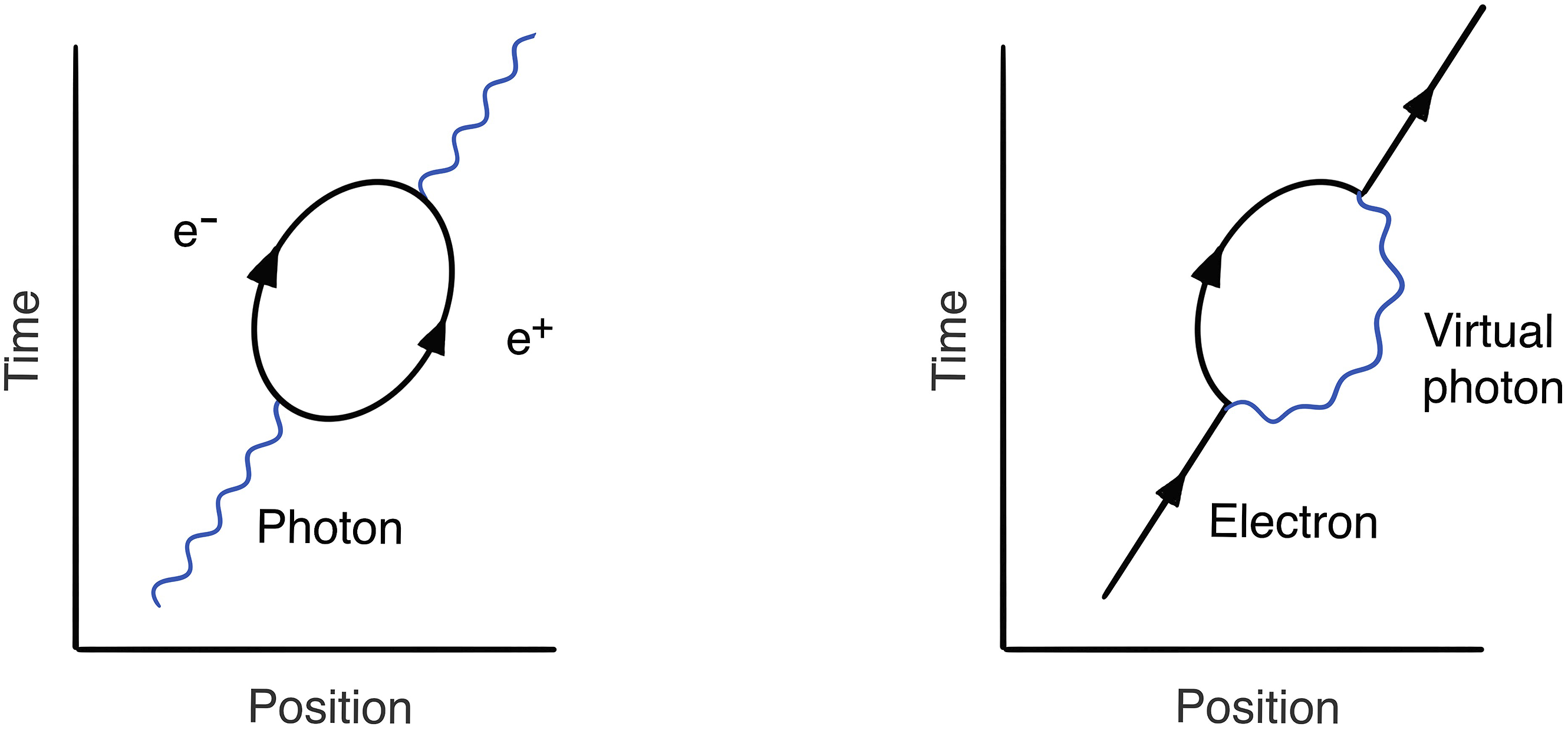

The new feature of relativistic quantum mechanics − illustrated by the pair creation process above − is the possibility of transforming energy into matter. Although classically we can never change the total amount of energy without violating the conservation of energy, in quantum mechanics there is an energy–time version of Heisenberg’s famous Uncertainty Principle. The uncertainties in measurements of energy (ΔE) and time (Δt) are related to Planck’s constant ‘h’, which characterizes the extreme smallness of all quantum effects, by the formula (ΔE) (Δt) ≈ h. In the context of QED, this means that we can borrow an energy ΔE and this loan will be undetected so long as we put it back within a time Δt ≈ h/ΔE. With the possibility of particle creation in relativistic quantum mechanics, this means that a particle need not always remain a single particle. Enough energy could be borrowed to create another particle or pair of particles for a very short time. For example, a photon may borrow enough energy to turn into a virtual electron–positron pair or an electron borrow enough energy to emit a virtual photon (see Figure 3).

Figure 3. Feynman diagrams for two virtual processes.
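To get a feel for the time scale involved, the relation Δt ≈ h/ΔE can be evaluated for a virtual electron–positron pair. The sketch below assumes the borrowed energy is just the rest energy of the pair, 2mₑc², and uses standard values of the constants; none of these numbers appear in the text, and the result is an order-of-magnitude estimate only.

```python
# Order-of-magnitude lifetime of a virtual electron-positron pair,
# using the relation (Delta E)(Delta t) ~ h quoted in the text.
PLANCK_H = 6.62607015e-34             # Planck's constant in J s
ELECTRON_REST_ENERGY_MEV = 0.51099895  # m_e c^2 in MeV (assumed input)
JOULES_PER_MEV = 1.602176634e-13


def loan_time_seconds(borrowed_energy_joules):
    """Longest time an energy 'loan' can go undetected: h / Delta E."""
    return PLANCK_H / borrowed_energy_joules


# Minimum energy needed to create the pair: two electron rest energies.
pair_energy_joules = 2 * ELECTRON_REST_ENERGY_MEV * JOULES_PER_MEV
pair_lifetime = loan_time_seconds(pair_energy_joules)
print(f"Virtual pair lifetime ~ {pair_lifetime:.1e} s")
```

The estimate comes out at a few times 10⁻²¹ seconds, which is why such virtual processes are never observed directly.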

Such transient processes are called ‘virtual processes’ and the particles created on the borrowed energy are known as ‘virtual particles’. Therefore, unlike the original non-relativistic quantum mechanics of Schrödinger and Heisenberg, the number of quantum particles in a relativistic theory can change. Dirac’s invocation of a filled negative energy sea of electrons was just a trick that enabled him to continue to use a single particle wave equation in a regime where a genuinely many-particle theory is required. The ‘Dirac sea’, with its apparently infinite negative charge and mass, disappears from a proper many-body formulation of quantum theory that allows particle creation and annihilation processes right from the outset. Instead of single particle wave mechanics, this approach is called ‘quantum field theory’.

As Feynman’s mentor at Los Alamos, Hans Bethe, later said [NOG]: Quantum Electrodynamics had been in the forefront of theoretical physics since about 1929 – seventeen years or so – before Feynman got to it. … The trouble was that this quantum electrodynamics worked very well if you calculated everything only in first order of approximation. If you tried to do it more accurately, it gave the result: infinity. Which was obviously wrong, because neither the mass of the electron, nor its interaction with radiation is infinite. So, a method had to be found to get rid of the infinities. … So it was an extremely difficult problem on which some of the best people worked, and none of them could do it.

This was the very gloomy situation after the war. Isidor Rabi, the famous experimental physicist, was moved to complain to a colleague about the inability of theorists to make sense of QED by saying ‘The last 18 years have been the most sterile of the century’. All this changed in April 1947 when Willis Lamb and his student Robert Retherford, both from Rabi’s group at Columbia University, made the most accurate measurements of the fine structure of the spectral lines of hydrogen. Their results conclusively showed that there was a tiny but significant disagreement with the predictions of Dirac’s theory. There was now a clear challenge to theorists to compute this ‘Lamb shift’, a small but finite correction to the first approximation QED predictions. One immediate result was that the US National Academy of Sciences organized a conference in June 1947 on the ‘Foundations of Quantum Theory’ on Shelter Island off Long Island, New York. Many of the great physicists from the Manhattan project were invited, including Oppenheimer, Bethe and Feynman as well as Rabi and Lamb. One of the most significant talks for Feynman was by the Dutch physicist Hendrik Kramers on the classical problem of the infinite self-energy of the electron. By starting from calculations involving the ‘bare mass’ of the electron and defining the actual mass of the electron as the sum of this bare mass plus the infinite electromagnetic contribution, Kramers showed that it was possible to recast the theory in a form in which only the actual mass, as defined above, appeared and no other divergent quantities. This was the germ of the idea of ‘renormalization’ which turned out to be the key to obtaining finite corrections to QED calculations. Feynman later said that this was ‘the most important conference he had ever attended’.

In the last session of the conference Feynman gave a talk about his ‘space-time’ approach to quantum mechanics which had been the subject of his thesis at Princeton. While Feynman was in high school, his physics teacher, Mr Bader, saw that Feynman was looking bored and decided to tell him something that would keep his interest. This was the Principle of Least Action, a reformulation of Newton’s laws of motion by the mathematician and astronomer, Joseph-Louis Lagrange. We all have an idea of the trajectory a tennis ball will follow when thrown between two friends. However, let’s ignore our intuition and consider all the possible crazy trajectories that a tennis ball could follow. If you calculate the value of the kinetic energy minus the potential energy for all points on each possible path and then sum up these values over the entire path, then the specific path predicted by Newton’s laws is the one that minimizes this quantity. This is the Principle of Least Action and the combination of kinetic energy minus the potential energy is known as the Lagrangian. Feynman was fascinated by such Least Action principles all his life.
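The tennis-ball statement can be checked numerically. The sketch below considers a ball thrown straight up that returns to the hand after a fixed flight time, and compares the action of the Newtonian path with that of ‘crazy’ paths obtained by adding a sine-shaped wobble with the same end points. The mass, flight time, step count and the form of the wobble are all assumptions chosen for illustration.

```python
import math

G = 9.81            # gravitational acceleration in m/s^2
MASS = 0.058        # kg, assumed mass of a tennis ball
FLIGHT_TIME = 1.0   # s, assumed time between release and catch
STEPS = 1000        # number of time slices used for the sum


def height(t, wobble):
    """Height at time t: Newtonian parabola plus a wobble that vanishes at both ends."""
    classical = 0.5 * G * t * (FLIGHT_TIME - t)
    return classical + wobble * math.sin(math.pi * t / FLIGHT_TIME)


def action(wobble):
    """Sum (kinetic - potential) * dt along the path, slice by slice."""
    dt = FLIGHT_TIME / STEPS
    total = 0.0
    for i in range(STEPS):
        y0 = height(i * dt, wobble)
        y1 = height((i + 1) * dt, wobble)
        velocity = (y1 - y0) / dt
        kinetic = 0.5 * MASS * velocity ** 2
        potential = MASS * G * 0.5 * (y0 + y1)   # evaluated at the slice midpoint
        total += (kinetic - potential) * dt
    return total


for wobble in (-0.5, -0.1, 0.0, 0.1, 0.5):
    print(f"wobble = {wobble:+.1f} m  ->  action = {action(wobble):.5f} J s")
```

In this example the action is smallest for zero wobble, that is, for the path Newton’s laws predict, and it grows as the path is distorted in either direction.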

At Princeton before the war, Feynman discovered that Dirac had written a paper that showed how you could reformulate non-relativistic quantum mechanics in terms of a ‘Least Action’ principle using the quantum version of the Lagrangian. The traditional approach starts with the Schrödinger equation and uses the quantum version of the Hamiltonian, the kinetic energy plus the potential energy, to predict the time evolution of the wavefunction. In Feynman’s Least Action formulation of quantum mechanics there are quantum probability amplitudes for all possible paths from a given starting point in space-time, to a given space-time end-point. Feynman was able to show that for short travel times, his Least Action Lagrangian approach gave the same time evolution of the wave function as the Schrödinger equation.

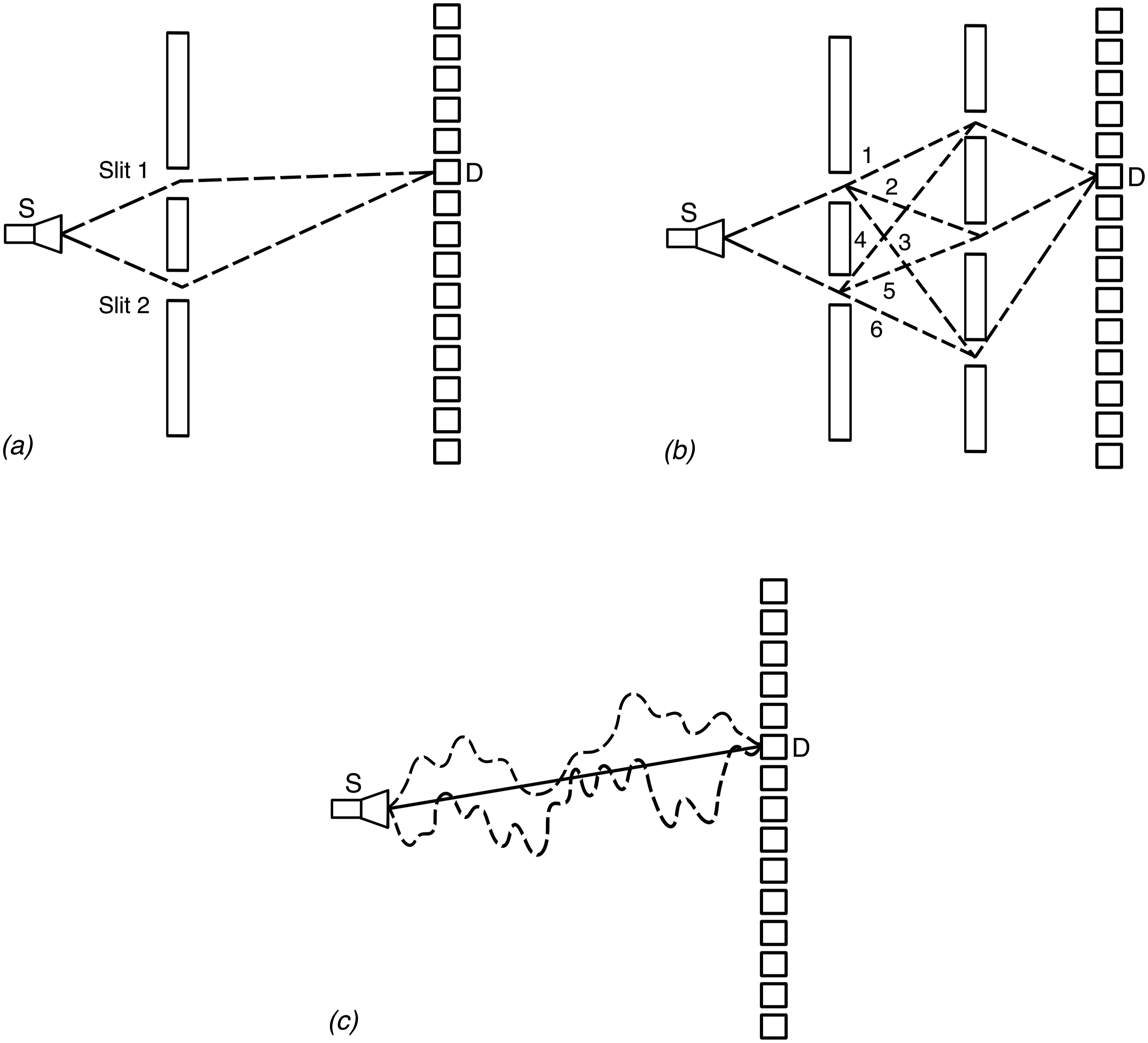

The idea of Feynman’s ‘sum-over-paths’ approach can be motivated by generalizing the description of the double-slit experiment in quantum mechanics (see Figure 4).

Figure 4. The quantum amplitude may be obtained by adding the amplitudes for all possible paths between source S and detector D. (a) The original double-slit experiment with two possible paths for the electron. (b) With two screens between source and detector and a total of five slits there are now six possible paths. (c) Adding more screens and then cutting more and more slits leads to the situation where there are no screens at all! The quantum amplitude for an electron to travel from S to D may therefore be regarded as a sum over all possible paths. Two of the infinite number of possible quantum paths are shown as dotted lines in this figure. The path taken by a classical particle is shown as a solid line.
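The first step of this argument, adding one amplitude per path through a single screen, can be sketched in a few lines. The model below is a deliberate simplification and not Feynman’s full path integral: each path is two straight segments, every path contributes an amplitude of unit size, and its phase is taken to be 2π times the path length divided by an assumed wavelength. The geometry and all numerical values are hypothetical.

```python
import cmath
import math


def detection_probability(detector_y, slit_ys, wavelength,
                          source_x=-1.0, screen_x=0.0, detector_x=1.0):
    """Relative probability at the detector from summing one amplitude per slit.

    The source sits at (source_x, 0); each path runs straight from the source
    to a slit at (screen_x, slit_y) and then straight to the detector.
    """
    amplitude = 0j
    for slit_y in slit_ys:
        length = (math.hypot(screen_x - source_x, slit_y)
                  + math.hypot(detector_x - screen_x, detector_y - slit_y))
        amplitude += cmath.exp(2j * math.pi * length / wavelength)
    return abs(amplitude) ** 2


two_slits = [0.05, -0.05]
for y in (0.0, 0.02, 0.04, 0.06, 0.08, 0.10):
    p = detection_probability(y, two_slits, wavelength=0.01)
    print(f"detector at y = {y:.2f}: relative probability {p:.3f}")
```

With one slit the relative probability is the same everywhere, while with two slits it oscillates between 0 and 4 as the detector moves, which is the interference pattern. Extending `slit_ys` to more slits, and then adding further screens, is the route towards the sum over all paths described in the caption.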

Feynman submitted his thesis on ‘The Principle of Least Action in Quantum Mechanics’ in 1942 and was awarded his PhD just before he joined the Manhattan project. At Cornell after the war, Feynman was able to re-work his thesis for publication under the title ‘Space-Time Approach to Non-Relativistic Quantum Mechanics’ in which he first describes the quantum amplitude as a sum over all possible paths. The challenge for Feynman was now how to extend this ‘path integral’ approach from non-relativistic quantum mechanics to the relativistic domain.

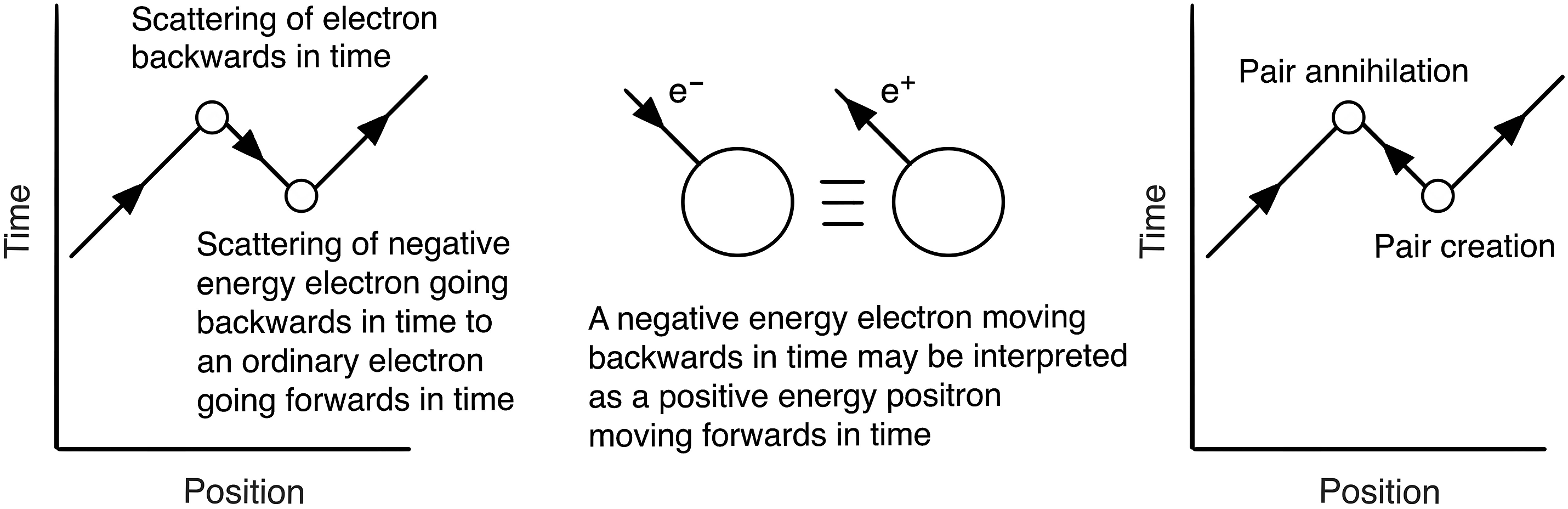

In trying to incorporate paths for Dirac’s relativistic electrons into his sum over paths approach to quantum theory, Feynman remembered an idea that his PhD supervisor, John Wheeler, had about interpreting electrons traveling backwards in time as positrons. Instead of treating electrons and holes separately in QED calculations, Feynman realized he was able to get the same results by including only electron paths in his space-time picture provided paths with electrons going backwards in time were included (see Figure 5). Feynman realized that diagrams with ‘backwards in time’ electron paths could be understood as the physical process of pair creation followed by pair annihilation. Negative energy electrons travelling backwards in time are equivalent to positive energy positrons travelling forwards in time.

In the introduction to his first paper on his path integral methods called ‘The Theory of Positrons’ published in 1949, Feynman used a war-time bombardier analogy to explain virtual electron-positron pair creation and annihilation in terms of electron paths going both forwards and backwards in time: It is as though a bombardier flying low over a road suddenly sees three roads and it is only when two of them come together and disappear again that he realizes that he has simply passed over a long switchback in a single road.

The successful Shelter Island Conference was followed about 9 months later by a similar conference held in the Pocono mountains in Pennsylvania in March 1948. The audience was a similar group of scientists from the Manhattan project but this time also included some eminent overseas physicists. Since both Schwinger and Feynman had independently developed their fully relativistic - but very different - calculational approaches to QED, one day had been set aside for them both to talk at the meeting. Feynman later recalled the combative atmosphere of the meeting [DFST]: Each of us [Schwinger and I] had worked out quantum electrodynamics and we were going to describe it to the tigers. He described his in the morning, first, and he gave one of these lectures which are intimidating. They are so perfect that you don’t want to ask any questions because it might interrupt the train. But the people in the audience like Bohr, and Dirac, Teller and so forth, were not to be intimidated, so after a bit there were some questions. A slight disorganization, a mumbling, confusion.

Feynman’s presentation at Pocono was on an ‘Alternative Formulation of Quantum Electrodynamics’ and followed Schwinger’s which had taken most of the day. His talk was not a success - he had questions from Dirac about the unitarity of his approach, from Teller about the Pauli Exclusion principle for intermediate states, and from Niels Bohr about his use of particle trajectories. Feynman’s space-time formalism was too unfamiliar to the audience and he later lamented that ‘My machines came from too far away.’

Feynman left the meeting determined to write up his methods for publication, which he did in two landmark papers in 1949. However, before he had published either of these papers a young English mathematician, Freeman Dyson, had arrived at Cornell in the fall of 1947 as a new PhD student in Bethe’s group. By spring of 1948 Dyson was trying to understand Feynman’s approach to performing QED calculations. This was helped by a now legendary journey that Dyson made with Feynman over the summer of 1948. Dyson had decided to go to Ann Arbor in Michigan for a summer symposium at which Schwinger was going to lecture on his most recent work. Since Feynman was driving to Albuquerque in New Mexico to resolve a romantic entanglement, he offered Dyson a ride. During their journey Dyson understood Feynman’s diagrammatic approach to calculations in QED in detail. After spending 6 weeks at the Ann Arbor symposium, Dyson also felt he now really understood Schwinger’s much more elaborate version of doing QED calculations. However, it was only when he was on a Greyhound bus traveling back to Cornell that Dyson realized how the Feynman and Schwinger approaches fitted together. His paper on ‘The Radiation Theories of Tomonaga, Schwinger, and Feynman’ proving the equivalence of the three apparently different theories of QED was published early in 1949, some months before Feynman’s two papers explaining his approach had appeared in print.

At the suggestion of Bethe, Dyson was spending his second year in Princeton, at the Institute for Advanced Study with Oppenheimer. He had given Oppenheimer a copy of his paper showing the equivalence of the three approaches to QED but was dismayed to find that Oppenheimer remained convinced that ‘Feynman’s ideas were worthless’. Fortunately for Dyson, Bethe visited the Institute in November and persuaded Oppenheimer to arrange for Dyson to present his results in three special seminars. This time Oppenheimer listened carefully and did not interrupt him. After his final seminar Dyson found a note in his mailbox saying ‘Nolo Contendere. R.O.’ [NOG]. Dyson was instrumental in showing the physics community that using Feynman diagrams was much easier than using Schwinger’s approach. He illustrated the difference between the two methods by describing an early calculation he did when he was at Cornell [QMK]: ‘The calculation I did for Hans Bethe using the orthodox theory took me several months of work and several hundred sheets of paper. Dick Feynman could get the same answer, calculating on a blackboard, in half an hour.’

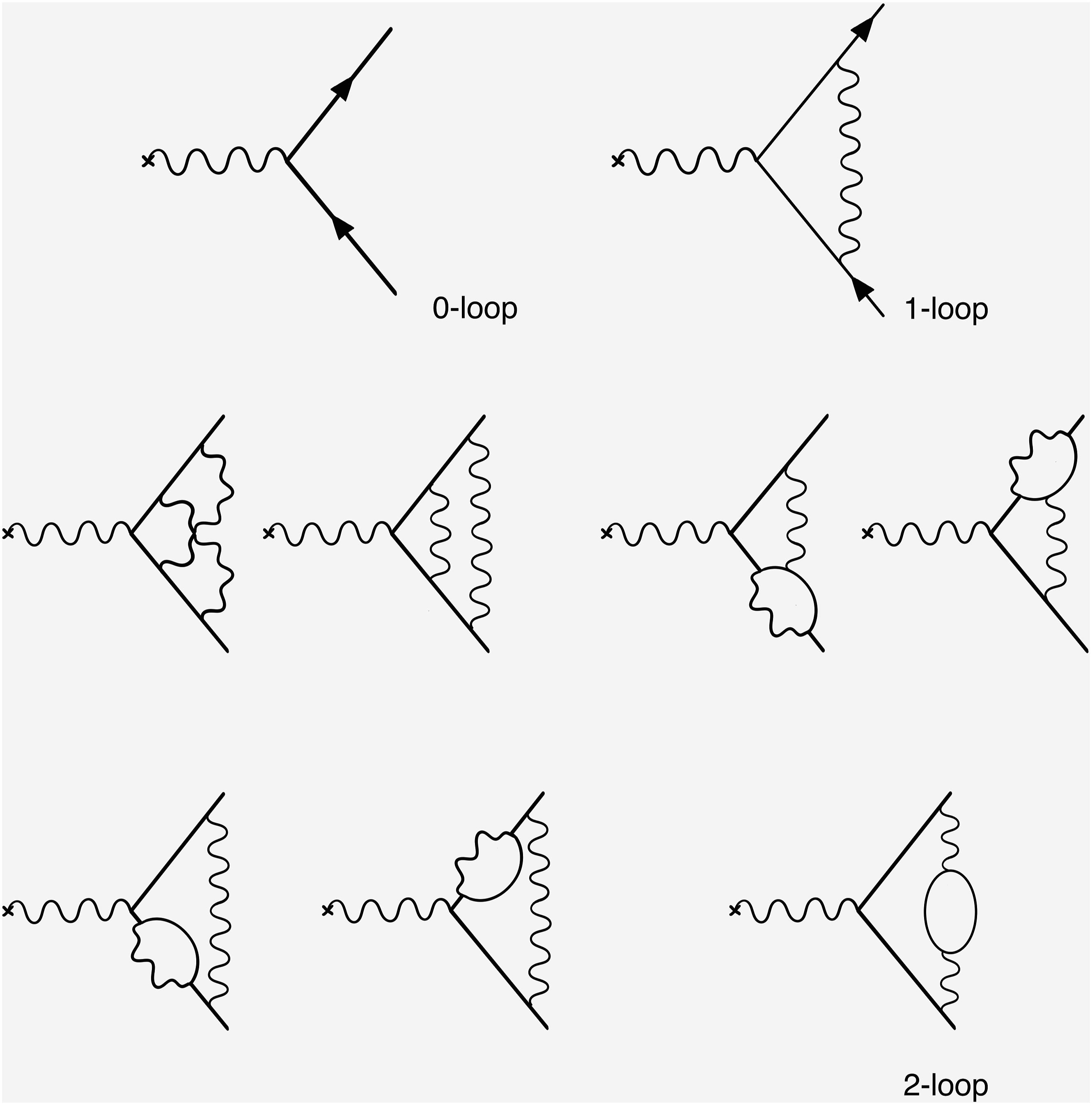

One of the most accurate tests of QED predictions is the calculation of the so-called anomalous magnetic moment of the electron, aₑ. Figure 6 shows the ‘one loop’ and ‘two loop’ diagrams for this calculation. While the Dirac value for aₑ is zero, the present results of the theoretical QED calculations and experimental measurements agree to 9 significant figures: aₑ = 115965218 × 10⁻¹¹. As is self-evident, the agreement between theory and experiment is truly extraordinary!

Figure 6. One loop and two loop Feynman diagrams needed to calculate the magnetic moment of the electron.

This is how Feynman gave the physics community a practical toolkit for computations in quantum field theory. His long-term mentor Hans Bethe said [NOG]: ‘Feynman’s great secret in solving the problem of quantum electrodynamics was that he developed this way to do it graphically, rather than by writing down formulae. As you know, this led to the famous Feynman diagrams which everybody is using now for any kind of calculation in field theory. The great power of Feynman’s diagrams is that they combine many steps of the older calculations in one. In the time before Feynman, we would do it all long-hand on paper, in algebra, and we would have to consider electrons and positrons separately. This was a very lengthy affair. Feynman was able to combine this, so that only one diagram needed to be calculated.’

4. Quantum computers

Feynman had a long-standing interest in the limitations in physics due to size, beginning with his famous 1959 lecture ‘There’s Plenty of Room at the Bottom’, subtitled ‘an invitation to enter a new field of physics’ [FAC]. In this astonishing lecture, given as an after-dinner talk at a meeting of the American Physical Society in Pasadena, Feynman talked about ‘the problem of manipulating and controlling things on a small scale’ by which he meant the ‘staggeringly small world that is below’. He went on to speculate that: ‘… in the year 2000, when they look back at this age, they will wonder why it was not until the year 1960 that anybody began seriously to move in this direction.’

In his talk, Feynman offered two prizes of $1,000 – one ‘to the first guy who makes an operating electric motor …[which] is only 1/64 inch cube’ and a second ‘to the first guy who can take the information on the page of a book and put it on an area 1/25,000 smaller in linear scale in such a manner that it can be read by an electron microscope’. He paid out on both these challenges – the first less than a year later to Bill McLellan, a Caltech alumnus, for an electric motor which met Feynman’s specifications. This was a disappointment to Feynman since the tiny motor required no new technical advances and he later lamented that he had not made the challenge small enough. In an updated version of his talk (Feynman, 1993) that he gave in 1983 at the Jet Propulsion Laboratory near Caltech, he predicted that ‘with today’s technology we can easily … construct motors a fortieth of that size in each dimension, making them 64,000 times smaller than … McLellan’s motor’. However, it was not until 26 years after his challenge on tiny writing that Feynman had to pay out on the second prize, this time to Tom Newman, a Stanford graduate student. The scale of Feynman’s challenge was equivalent to writing all twenty-four volumes of the Encyclopaedia Britannica on the head of a pin. Newman had calculated that each individual letter would be only about fifty atoms wide and, when his PhD adviser was away from Stanford at a meeting, he was able to use electron-beam lithography to write out the famous first page of ‘A Tale of Two Cities’ by Charles Dickens at the required smallness.
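The scaling figures quoted in this paragraph follow from simple arithmetic, which can be checked with a short Python sketch. The variable names are illustrative only and do not come from Feynman’s talk.

```python
# Shrinking a motor by a factor of 40 in each of its three dimensions
# reduces its volume by 40 cubed.
linear_motor_factor = 40
volume_factor = linear_motor_factor ** 3
print(volume_factor)  # 64000

# Reducing a page by a factor of 25,000 in linear scale reduces its
# area by the square of that factor.
linear_page_factor = 25_000
area_factor = linear_page_factor ** 2
print(area_factor)  # 625000000
```

The first result reproduces the ‘64,000 times smaller’ in Feynman’s 1983 remark. The second shows that the writing challenge amounts to a reduction in area of several hundred million.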

Feynman’s paper is often credited with starting the field of nanotechnology but in fact references to this paper are a rather recent phenomenon. For the first twenty-five to thirty years after his talk there were very few references to Feynman’s paper. As Baumberg and Baumberg (2024) put it: ‘… around 1990 people finally started to take note of it. And why did they take note? They started to be able to work on this nano scale. So it was not until the technology caught up that these visions could have any real impact and they did not spur the way to get to the technology - it worked the other way around.’

Feynman first met the two MIT computer science professors Marvin Minsky and Ed Fredkin in Pasadena in 1962 [FAC]. After a trip up to Mount Wilson in the nearby San Gabriel mountains on their rented Honda trail motorcycles, they were passing through Pasadena when Fredkin suggested that they go and visit Linus Pauling whom he knew from his time at Caltech. Pauling was not at home so they decided to call Feynman, whom they knew of but had never met. Feynman was at home and agreed to talk with them about computers and small machines. The three of them discussed many things until the early hours of the morning including the problem of whether a computer could perform algebraic manipulations. Fredkin credits the origin of MIT’s MACSYMA algebraic computing project to this discussion in Pasadena. Fredkin later visited Caltech as a Fairchild scholar in 1974 and agreed a deal with Feynman that Fredkin would teach Feynman computer science and Feynman would teach Fredkin quantum mechanics. Fredkin believes he got the better of the deal: ‘It was very hard to teach Feynman something because he didn’t want to let anyone teach him anything. What Feynman always wanted was to be told a few hints as to what the problem was and then to figure it out for himself. When you tried to save him time by just telling him what he needed to know, he got angry because you would be depriving him of the satisfaction of discovering it for himself.’

Besides learning quantum mechanics, Fredkin set himself one other assignment during this year: to understand the problem of reversible computation. Feynman and Fredkin had a year of what Feynman called ‘wonderful, intense, and interminable arguments.’ During his time at Caltech, Fredkin invented ‘Conservative Logic’ – a model of computation that explicitly incorporates some fundamental principles of physics such as reversibility and energy conservation (Fredkin and Toffoli, 1982). He also invented a ‘controlled exchange’ logic gate, now called the Fredkin Gate, and the idea of a billiard ball computer. The Fredkin gate is a reversible logic gate that is universal and can be used to implement any logical operation. Similar reversible gates are important in the quest to build a quantum computer, as was later suggested by Feynman.
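The properties claimed for the Fredkin gate can be illustrated with a minimal classical sketch in Python. The function names (`fredkin`, `and_gate`, `not_gate`) and the choice of which output line to read are assumptions made for this illustration, not notation from Fredkin and Toffoli’s paper.

```python
from itertools import product

def fredkin(c, a, b):
    """Controlled exchange: if the control bit c is 1, swap a and b."""
    return (c, b, a) if c == 1 else (c, a, b)

def and_gate(x, y):
    # With the third input held at 0, the third output is x AND y.
    _, _, out = fredkin(x, y, 0)
    return out

def not_gate(x):
    # With inputs (x, 0, 1), the third output is NOT x.
    _, _, out = fredkin(x, 0, 1)
    return out

for bits in product((0, 1), repeat=3):
    result = fredkin(*bits)
    # Reversible: applying the gate twice restores the input.
    assert fredkin(*result) == bits
    # Conservative: the number of 1s is unchanged.
    assert sum(result) == sum(bits)
```

Because AND and NOT together suffice to build any Boolean function, fixing some inputs to constants in this way shows how a single reversible gate can implement any logical operation, at the price of carrying along extra output bits.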

Feynman was persuaded to attend a 1981 conference at MIT on the ‘Physics of Computation’ organized by Ed Fredkin, Rolf Landauer and Tom Toffoli. In his talk ‘Simulating Physics with Computers’, Feynman raised the possibility of building a new type of computer made up of intrinsically quantum mechanical elements [FAC]. His motivation was that a large quantum system with N particles cannot be simulated on a normal computer with the number of computational elements proportional to N because the quantum wave function has too many variables. He therefore suggested a new type of computer – a ‘quantum computer’ – that could be used to simulate large quantum systems. Remarkably, Feynman also stated in his talk that such a computer was ‘not a Turing machine, but a machine of a different kind’. He ended his talk with a call to arms: ‘Nature isn’t classical, dammit, and if you want to make a simulation of Nature, you’d better make it quantum mechanical.’
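The scaling argument can be made concrete with a rough count. The sketch below assumes N two-state particles (spin-1/2, say), so that a general wave function needs 2^N complex amplitudes, and assumes 16 bytes of storage per amplitude. Both assumptions, and the function names, are illustrative and are not figures taken from Feynman’s talk.

```python
# Assumption: each complex amplitude is stored as two 64-bit floats.
BYTES_PER_AMPLITUDE = 16

def num_amplitudes(n_particles: int) -> int:
    """Number of complex amplitudes for n two-state particles."""
    return 2 ** n_particles

def memory_bytes(n_particles: int) -> int:
    """Classical memory needed to store the full wave function."""
    return num_amplitudes(n_particles) * BYTES_PER_AMPLITUDE

for n in (10, 30, 50):
    print(n, num_amplitudes(n), memory_bytes(n))
```

Each additional particle doubles the storage required, so the classical resources grow exponentially in N rather than in proportion to N.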

Rolf Landauer, from IBM Research, and one of the organizers of the MIT conference, said that (Landauer, 1998): ‘Feynman’s mere participation, together with his willingness to accept an occasional lecture invitation in this area, have helped to emphasize that this is an interesting subject.’

As Landauer says, Feynman’s participation in the conference gave great impetus to the ideas about reversible computing and the possibility of building a computer from quantum components. However, Feynman was not very scrupulous in reading and citing other relevant work. For example, in his MIT talk, Feynman gives a clear discussion of the well-known Bell Inequalities as an example of a property of a quantum system that cannot be simulated on a classical computer. However, Feynman’s paper has no references, and John Bell is never mentioned! Similarly, Paul Benioff, an engineer from Argonne National Laboratory, who was also attending the conference, and had been thinking (and writing) about reversible computing and the possibility of quantum computation, also does not get a mention. It is notable that in his second paper on quantum computers, described below, there is the footnote: ‘I would like to thank T.Toffoli for his help with the references.’
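To illustrate what a Bell inequality asserts, here is a minimal Python sketch of the widely used CHSH form. This is not necessarily the formulation Feynman used in his talk. The measurement angles and function names are assumptions chosen for illustration, and the quantum correlation −cos(θa − θb) is the standard textbook result for a spin singlet.

```python
import math
from itertools import product

def chsh_classical_max():
    """Largest |S| over all deterministic local assignments of +/-1 outcomes."""
    best = 0
    for a0, a1, b0, b1 in product((-1, 1), repeat=4):
        s = a0 * b0 + a0 * b1 + a1 * b0 - a1 * b1
        best = max(best, abs(s))
    return best

def singlet_correlation(theta_a, theta_b):
    """Quantum prediction for spin measurements on a singlet pair."""
    return -math.cos(theta_a - theta_b)

def chsh_quantum():
    """|S| for one standard choice of measurement angles (an assumption)."""
    a0, a1 = 0.0, math.pi / 2
    b0, b1 = math.pi / 4, -math.pi / 4
    e = singlet_correlation
    return abs(e(a0, b0) + e(a0, b1) + e(a1, b0) - e(a1, b1))

print(chsh_classical_max())  # 2
print(chsh_quantum())        # approximately 2.828, i.e. 2 * sqrt(2)
```

No assignment of pre-determined local outcomes exceeds 2, whereas the quantum prediction reaches 2√2. This gap is the sense in which such correlations cannot be reproduced by a local classical simulation.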

At a meeting in Anaheim, California in June of 1984, Feynman gave a second talk on ‘Quantum Mechanical Computers’ [FLOC] which he introduced by explicitly referring to the work of Charles Bennett of IBM Research on reversible classical computing (Bennett, 1979): ‘This work is a part of an effort to analyze the physical limitations of computers due to the laws of physics. For example, Bennett has made a careful study of the free energy dissipation that must accompany computation. He found it to be virtually zero. He suggested to me the question of the limitations due to quantum mechanics and the uncertainty principle. I have found that, aside from the obvious limitation to size if the working parts are to be made of atoms, there is no fundamental limit from these sources either.’

In his paper, Feynman explicitly considers how to build a quantum mechanical computer by imitating the conventional sequential architecture of a normal digital computer. His quantum computer used reversible quantum gates and a Hamiltonian to describe a system of interacting parts. His paper concludes with the statement: ‘It seems that the laws of physics present no barrier to reducing the size of computers until bits are the size of atoms, and quantum behavior holds dominant sway.’

John Hopfield, a fellow professor at Caltech, who had many conversations with Feynman about both neural networks and quantum computing, said [FAC]: ‘While he [Feynman] is often given credit for helping originate ideas of quantum computation, my recollections of the many conversations with him on the subject contain no notion of his that quantum computers could in some sense of N scaling be better than classical computing machines. He only emphasized that the physical scale and speed of computers were not limited by the classical world, since conceptually they could be built of reversible components at the atomic level. The insight that quantum computers were really different came only later, and to others, not to Feynman.’

It was David Deutsch who, in 1985, first suggested that quantum computers might have an advantage over classical computers at solving problems that had nothing to do with quantum physics (Deutsch, 1985). His suggestion was spectacularly vindicated nine years later, in 1994, by Peter Shor’s remarkable quantum algorithm for factorizing large numbers (Shor, 1999).

5. Feynman and computation

The official record at Caltech lists Feynman joining Caltech colleagues John Hopfield and Carver Mead in 1981 to give an interdisciplinary course with the title ‘The Physics of Computation’. The course was given for 2 years but the three of them never actually managed to give the course together: one year Feynman was ill with cancer, and the second year Mead was on leave. John Hopfield remembers the first year of the course as a disaster, with him and Mead wandering ‘over an immense continent of intellectual terrain without a map’. Mead is more charitable in his reminiscences but both agreed that the course left many students mystified.

In the second year, the lectures were given by Feynman and Hopfield with guest lectures from experts such as Marvin Minsky, John Cocke and Charles Bennett [FAC]: ‘The format consisted of two lectures a week. The first of these lectures was usually from an outside lecturer. Marvin Minsky led off. The second lecture in a week was a critique by Feynman of the first lecture. He would summarize the important points from the first lecture with his own organization, integrating the lecture into the rest of science and computer science in Feynman's inimitable fashion. Occasionally, this would become a lecture on what the speaker should have said if he had really understood the essence of his subject. And of course, the students loved it.’

On quantum computing, Hopfield remembers: ‘Feynman himself did the lecture on quantum computation. Charles Bennett had already prepared the way by talking about reversible computation, and Norman Margolus on the billiard ball computer, so Feynman developed quantum computation in ways which made its connection to classical reversible computers obvious. His lecture was chiefly developing the idea that an entirely reversible quantum system, without damping processes, could perform universal computation.’

A handout from the course of 1982/3 reveals the flavor of the course: a basic primer on computation, computability and information theory followed by a section entitled ‘Limits on computation arising in the physical world and “fundamental” limits on computation’. However, after this second year, the three of them concluded that there was enough material for three courses and that each would go his own way.

When Feynman’s son Carl went to MIT, his father introduced him to his friend Marvin Minsky [NOG]. Danny Hillis was living in Minsky’s basement at the time and trying to turn his thesis ideas about a massively parallel computer into a commercial reality. When Hillis mentioned he was going out to Los Angeles, Carl suggested that he go and meet his father. Hillis was very surprised to find that Feynman himself had driven out to the airport to pick him up. Feynman wanted to know about ‘this crazy project his son was involved with’ and so they began talking about the basic ideas of the computer. Over lunch, one day in the spring of 1983, Hillis told Feynman he was founding a company to actually build his machine [FAC]. After saying that this was ‘positively the dopiest idea I ever heard’, Feynman agreed to work as a consultant for the new company. As Hillis recounts, when he later told Feynman the name of the company, ‘Thinking Machines Corporation’, Feynman was delighted: ‘That’s good. Now I don’t have to explain to people that I work with a bunch of loonies. I can just tell them the name of the company.’ Feynman arrived in Boston the day after the company was officially incorporated in May 1983 and introduced himself to Danny and the group of ‘not-quite-graduated’ MIT students with the words: ‘Richard Feynman reporting for duty. OK, boss, what’s my assignment?’ After a hurried private discussion along the lines of ‘I don’t know, you hired him …’, the group sent him out to buy some office supplies. While Feynman was out of the building, they decided to give him the problem of analyzing the router for the machine that allows the 64,000 processors to communicate. Using an unconventional method of analysis based on differential equations, Feynman came up with a more efficient design than that of the engineers who had used conventional discrete methods in their analysis. Hillis describes how engineering constraints on chip size forced them to set aside their initial distrust of Feynman’s solution and use it in anger. During his time with Thinking Machines, Feynman also developed interesting implementations of Hopfield’s associative memory neural network and of Quantum Chromodynamics (QCD). Feynman worked at Thinking Machines on and off for the next five years.

In the fall of 1983, Feynman first gave a course on computing by himself, listed in the Caltech record as being called ‘Potentialities and Limitations of Computing Machines’. Gerry Sussman was on leave from MIT that year where he had been supervising Feynman’s son Carl as a student in Computer Science. Over lunch at the Athenaeum, the Faculty Club at Caltech, Sussman agreed a characteristic deal with Feynman – Sussman would help Feynman develop his new lecture course in return for Feynman having lunch with him after the lectures. Sussman says ‘that was one of the best deals I ever made in my life’ [FAC]. In the years 1984/85 and 1985/86, the lectures were taped and it was from these tapes and Feynman’s notebooks that I was able to reconstruct the ‘Feynman Lectures on Computation’ [FLOC]. Like the earlier course with Hopfield and Mead, there were several guest lecturers giving one or more lectures on topics ranging from the programming language ‘Scheme’ to physics applications on the ‘Cosmic Cube’. Besides giving the ‘Feynman treatment’ to subjects such as computability, Turing machines (or as Feynman says, ‘Mr Turing’s machines’), Shannon’s theorem and information theory, Feynman also discusses reversible computation, thermodynamics, quantum computation and semiconductor circuit technology. Such a wide-ranging discussion of the fundamental basis of computers is a unique and ‘sideways’, Feynman-type view of the whole of computing.

In addition to all the above activity, Feynman also found time to lead a workshop on ‘Idiosyncratic Thinking’ in 1984 and 1985 at the Esalen retreat center on the Big Sur coast in California. As well as talking about ‘Quantum Mechanics and Reality’, he gave a lecture on ‘Tiny Machines’ – essentially an updated version of his ‘Plenty of Room at the Bottom’ paper – and another on ‘Computers from the Inside Out’. The audience at these workshops was not Feynman’s typical academic audience, and he drew on his well-known ability to connect with such an eclectic group. In his lecture on computers, for example, Feynman uses the analogy of a computer as ‘a high-class, super-speed, nice, streamlined Filing Clerk’ [NOG]: ‘I would like to say some words about what the computers can do and can’t do, and you’ll see they can do only what you would expect to be able to do with a filing system, and a reasonable File Clerk. What we need to do, of course, is to convert what we want the Clerk to do into an absolutely definite procedure, where each step is precisely defined in a stupid way, but exactly. If you can do that, then you can get the system to work.’

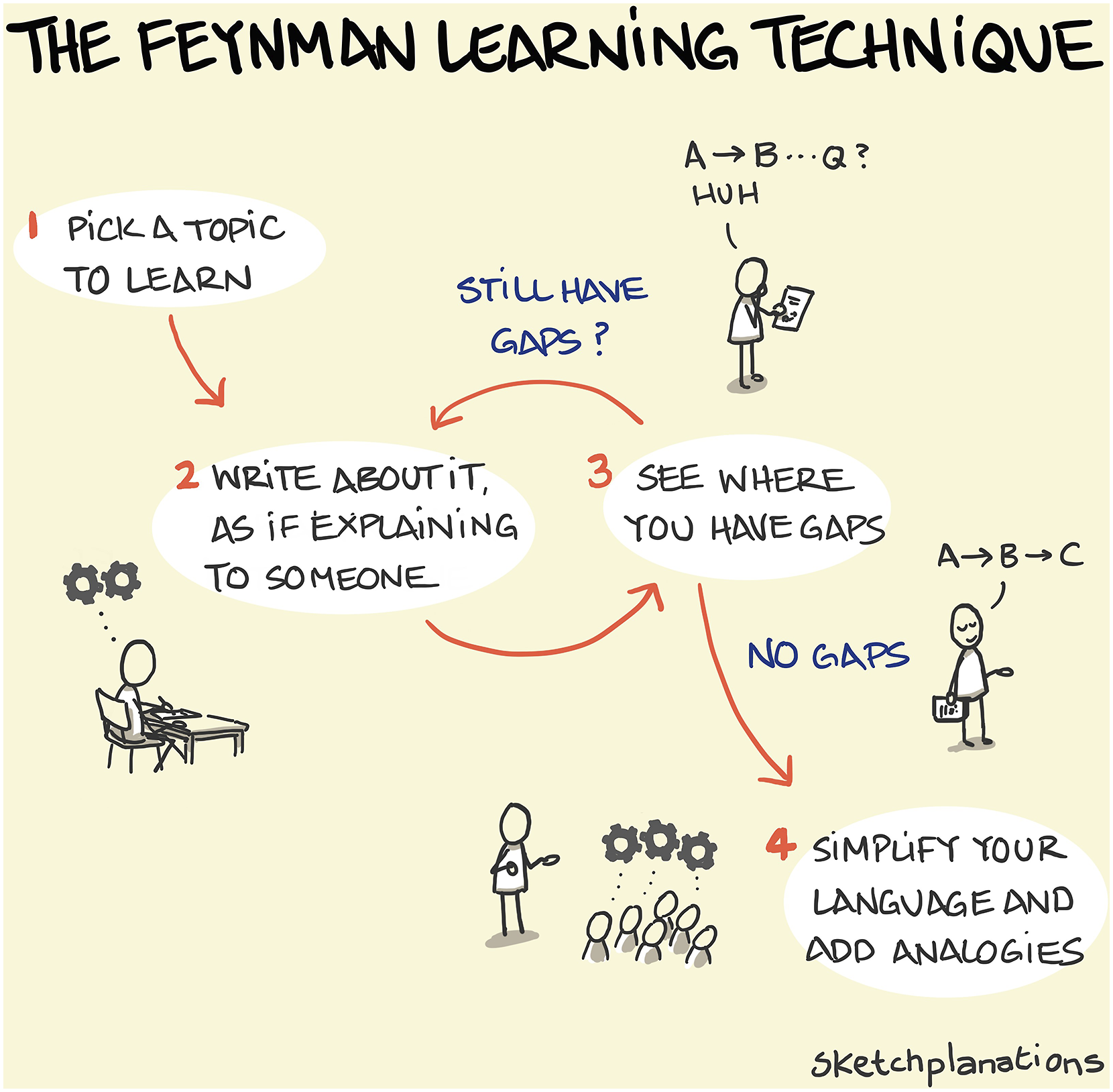

Figure 7 shows a cartoon drawing illustrating Feynman’s learning and teaching technique.

Figure 7. A Sketchplanations drawing illustrating Feynman’s method of learning and teaching [URL: The Feynman Learning Technique - Sketchplanations].

As a final comment on Feynman’s long-term interest in computation, John Hopfield summarized this in terms of three fundamental questions [FAC]: ‘There were three basic aspects of computers and computation which intrigued Feynman, and made the subject sufficiently interesting that he would spend time teaching it. First, what limits did the laws of physics place on computation? Second, why was it that when we wanted to do a hard problem a little better, it always seemed to need an exponentially greater amount of computing resources? Third, how did the human mind work, and could it somehow get around the second problem?’

6. Sources

The major sources of information and quotations can all be found in the following books and articles:

[SYJMF]: ‘Surely You’re Joking, Mr Feynman: Adventures of a Curious Character’, as told by Ralph Leighton, W.W. Norton & Company, 1985.

[WDYC]: ‘What Do You Care What Other People Think?: Further Adventures of a Curious Character’, as told by Ralph Leighton, W.W. Norton & Company, 1988.

[NOG]: ‘No Ordinary Genius: The Illustrated Richard Feynman’, edited by Christopher Sykes, W.W. Norton & Company, USA, 1994.

[FAC]: ‘Feynman and Computation: Exploring the Limits of Computers’, edited by Anthony J.G. Hey, Perseus Books Publishing, 1999.

[FLOC]: ‘Feynman Lectures on Computation’, edited by Tony Hey, Anniversary Edition, CRC Press, 2023.

[QMK]: ‘Quantum Man’, Lawrence M. Krauss, W.W. Norton & Company, USA, 2011.

[DFST]: ‘QED and the Men who made it: Dyson, Feynman, Schwinger, and Tomonaga’, Silvan S. Schweber, Princeton University Press, 1994.

[SPRF]: ‘Selected Papers of Richard Feynman with Commentary’, edited by Laurie M. Brown, World Scientific Publishing, Singapore, 2000.

[RFC]: ‘Richard Feynman and Computation’, Tony Hey, in Contemporary Physics, volume 40, p257, 1999.

[QCI]: ‘Quantum Computing: an Introduction’, Tony Hey, Computing and Control Engineering Journal, June 1999.

Footnotes

Authors’ Note

Talk at the CCDSC 2024 workshop, near Lyon, France, September 2024.

Acknowledgements

Thanks are due to Jack Dongarra and Bernard Tourancheau for the opportunity to participate in the 2024 CCDSC workshop near Lyon, France. Thanks also to Jonathan Hey for the figure drawings and permission to use his Sketchplanations picture of Feynman’s learning technique. I also thank Jessie Hey, Nancy Hey, Jonathan Hoare, and Christopher Hey for critically reading the manuscript and making suggestions for improvements. I am also grateful to one of the referees for suggestions on how to improve this paper.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

Author biography

Tony Hey began his career as a theoretical physicist with a doctorate in particle physics from the University of Oxford. After a career in particle physics that included research positions at Caltech, MIT and CERN, and a professorship at the University of Southampton, he became interested in computing and computer science. His parallel computing research group in the Electronics and Computer Science Department at Southampton were one of the pioneers of distributed memory message-passing computers. His group also produced the first distributed memory