Abstract

High-performance computing systems are more and more often based on accelerators. Computing applications targeting those systems often follow a host-driven approach, in which hosts offload almost all compute-intensive sections of the code onto accelerators; this approach only marginally exploits the computational resources available on the host CPUs, limiting overall performances. The obvious step forward is to run compute-intensive kernels in a concurrent and balanced way on both hosts and accelerators. In this paper, we consider exactly this problem for a class of applications based on lattice Boltzmann methods, widely used in computational fluid dynamics. Our goal is to develop just one program, portable and able to run efficiently on several different combinations of hosts and accelerators. To reach this goal, we define common data layouts enabling the code to exploit the different parallel and vector options of the various accelerators efficiently, and matching the possibly different requirements of the compute-bound and memory-bound kernels of the application. We also define models and metrics that predict the best partitioning of workloads among host and accelerator, and the optimally achievable overall performance level. We test the performance of our codes and their scaling properties using, as testbeds, HPC clusters incorporating different accelerators: Intel Xeon Phi many-core processors, NVIDIA GPUs, and AMD GPUs.

Keywords

1 Background and related works

The architecture of high-performance computing (HPC) systems is increasingly based on accelerators; typical HPC systems today—including several leading entries in the TOP500 list (TOP500, 2016)—are large clusters of processing nodes interconnected by a fast low-latency network, each node containing standard processors coupled to one or more accelerator units.

Accelerators promise higher computing performance and better energy efficiency, improving on traditional processors by up to one order of magnitude; in fact, they (i) allow massive parallel processing by a combination of a large number of processing cores and vector units, (ii) allow for massive multi-threading, in order to hide memory access latencies, and (iii) trade a streamlined control structure (e.g. executing instructions in-order only) for additional data-path processing for a given device or energy budget.

Typical accelerated applications usually follow a host-driven approach, in which the host processor offloads (almost) all compute-intensive sections of the code onto accelerators; the host itself typically only orchestrates global program flow or processes sequential segments of the application; this approach wastes a non-negligible amount of the available computational resources, reducing overall performance.

The obvious step forward is that even compute-intensive application kernels should be executed in a balanced way on both hosts and accelerators. This improvement has been hampered so far by several non-trivial obstacles, especially because CPUs and accelerators often present different architectures, so efficient accelerated codes may involve different data structures and operation schedules. Moreover, the lack of well-established performance-portable programming heterogeneous frameworks has, so far, required the use of specific programming languages (or at least proprietary variants of standard languages) for each different accelerator, harming portability, maintainability, and possibly even correctness of the application.

Improvements on this latter aspect come with the recent evolution of

In spite of these weaknesses, and in the hope that a converging trend is in progress, one would like to (i) understand how difficult it is to design one common code using common domain data structures running concurrently on hosts and accelerators, which is portable and also performance-portable across traditional processors and different accelerators, and (ii) quantify the performance gains made possible by concurrent execution on host and accelerator.

To explore this problem, one has to understand the impact on performance that different data layouts and execution schedules have for different accelerator architectures. One can then define a common data layout and write a common code for a given application with optimal (or close to optimal) performance on several combinations of host processors and accelerators. Assuming that one can identify a common data layout giving good performance on several combinations of host processors and accelerators, then one has to find efficient partitioning criteria to split the execution of the code among hosts and processors.

In this paper, we tackle exactly these issues for a class of applications based on lattice Boltzmann (LB) methods, which are widely used in computational fluid dynamics. This class of applications offers a large amount of easily identified available parallelism, making LB an ideal target for accelerator-based HPC systems. We consider alternate data layouts, processing schedules, and optimal ways to compute concurrently on host and accelerator. We quantify the impact on performance, and use these findings to develop production-grade massively parallel codes. We run benchmarks and test our codes on HPC systems whose nodes have dual Intel Xeon processors and a variety of different accelerators, namely the NVIDIA K80 (NVIDIA, 2015) and AMD Hawaii GPUs (AMD, 2016), and the Intel Xeon Phi (Chrysos, 2012).

Over the years, LB codes have been written and optimized for large clusters of commodity CPUs (Pohl et al., 2004) and for application-specific machines (Belletti et al., 2009; Biferale et al., 2010; Pivanti et al., 2014). More recent work has focused on exploiting the parallelism of powerful traditional many-core processors (Mantovani et al., 2013) and of power-efficient accelerators. such as GP-GPU (Bailey et al., 2009; Biferale et al., 2013; Calore et al., 2016b) and Xeon Phi processors (Crimi et al., 2013), and even FPGAs (Sano et al., 2007).

Recent analyses of optimal data layouts for LB have been made (Wittmann et al., 2011; Shet et al., 2013a,b). However, Wittmann et al. (2011) focuses only on the

This paper is structured as follows: Section 2 introduces the main hardware features of CPUs and GPUs that we have taken into account in this paper and Section 3 gives an overview of LB methods. Section 4 discusses design and implementation options for data layouts suitable for LB codes and measures the impact of different choices in terms of performances, while Section 5 describes the implementation of codes that we have developed concurrently running on both host and accelerator. Section 6 describes two important optimization steps for our heterogeneous code and defines a performance model, while Section 7 analyzes our performance results on two different clusters, one with K80 GPUs and one with Xeon Phi accelerators. Finally, Section 8 summarizes our main results and highlights our conclusions.

2 Architectures of HPC systems

Heterogeneous systems have recently enjoyed increasing popularity in the HPC landscape. These systems combine, within a single processing node, commodity multi-core architectures with off-chip accelerators, GPUs, Xeon Phi many-core units, and (sometimes) FPGAs. This architectural choice comes from an attempt to boost overall performance by adding an additional processor (the accelerator) that exploits massively parallel data paths to increase performance (and energy efficiency) at the cost of reduced programming flexibility. In this section, we briefly review the architectures of the state-of-the-art accelerators that we have considered—GPUs and Xeon Phi many-core processors—focusing on the impact that their diverging architectures have on the possibility of developing a common HPC code able to run efficiently on both of them.

GPUs are multi-core processors with a large number of processing units, all executing in parallel. The NVIDIA K80 (NVIDIA, 2015) is a dual GK210 GPU, each containing 13 processing units, called streaming multiprocessors (SMX). Each processing unit has 192 compute units, called CUDA-cores, concurrently executing groups of 32 operations in a SIMT (single instruction, multiple thread) fashion; much like traditional SIMD processors, cores within a group execute the same instruction at the same time but are allowed to take different branches (at a performance penalty). The AMD FirePro W9100 (AMD, 2016) is conceptually similar to the K80 NVIDIA GPU; it has 44 processing units, each with 64 compute units (stream processors).

The clock of an NVIDIA K80 has a frequency of 573 MHz, which can be boosted up to 875 MHz. The aggregate peak performance is then 5.6 TFlops in single precision and 1.87 TFlops in double precision (only one-third of the SMXs work concurrently when performing double-precision operations). Working at 930 MHz, the processing units of the AMD FirePro W9100 deliver up to 5.2 TFlops in single precision and 2.6 TFlops in double precision.

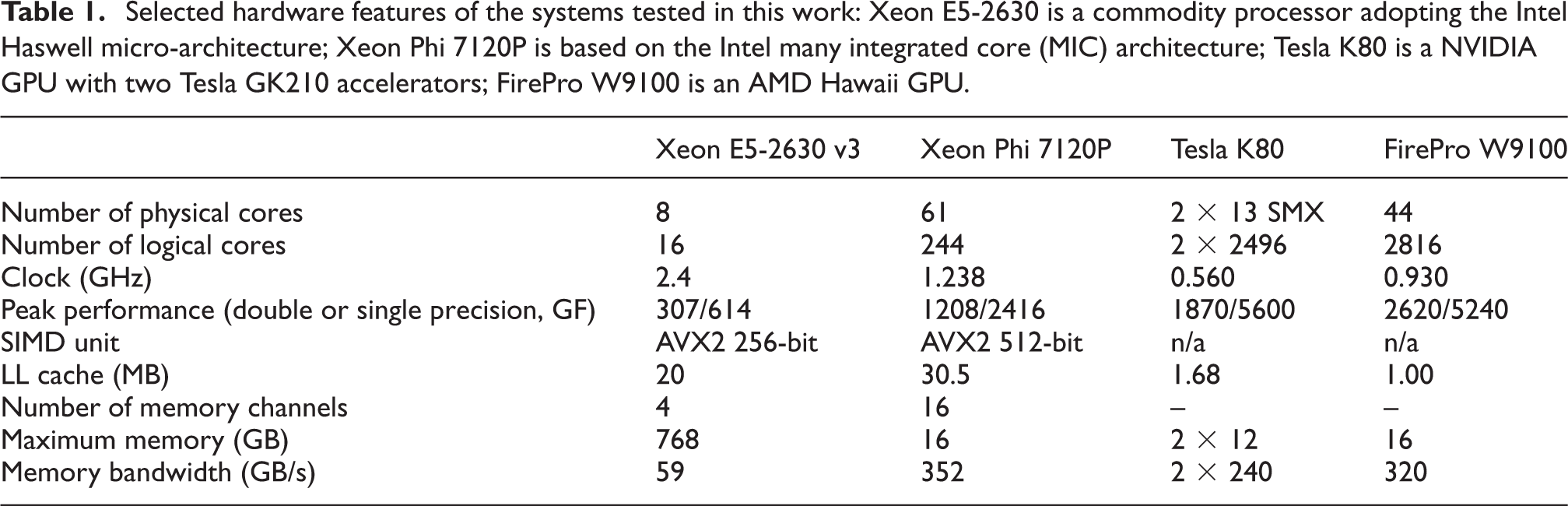

In general, GPUs sustain their huge potential performance thanks to large memory bandwidth—to avoid starving the processors—and massive multi-threading—to hide memory access latency. Consequently, register files are huge in GPUs, as they have to store the states of many different threads, while data caches are less important. For example (see also Table 1), a K80 GPU has a combined peak memory bandwidth of 480 GB/s, while each SMX has a register file of 512 KB and just 128 KB L1 cache/shared memory; SMX units share a 1536 KB L2 cache. Similarly, the AMD W9100 has a peak memory bandwidth of 320 GB/s and its last-level cache is just 1 MB.

Selected hardware features of the systems tested in this work: Xeon E5-2630 is a commodity processor adopting the Intel Haswell micro-architecture; Xeon Phi 7120P is based on the Intel many integrated core (MIC) architecture; Tesla K80 is a NVIDIA GPU with two Tesla GK210 accelerators; FirePro W9100 is an AMD Hawaii GPU.

The architecture of Xeon Phi (Chrysos, 2012) processors—the other class of accelerators that we consider—builds on a very large number of more traditional x86 cores, each optimized for streaming parallel processing, with a streamlined and less versatile control part and enhanced vector processing facilities. For instance, the currently available version of this processor family—the Knights Corner (KNC)—integrates up to 61 CPU cores, each supporting the execution of four threads, for an aggregate peak performance of 1 TFlops in double precision, with a clock running at 1.2 GHz. Each core has a 32 KB L1-cache and a 512 KB L2-cache. The L2-caches, private at the core level, are interconnected through a bi-directional ring and data are kept coherent and indirectly accessible by all cores. The ring also connects to a GDDR5 memory bank of 16 GB, with a peak bandwidth of 352 GB/s. Each core has a vector processing unit (VPU), executing SIMD operations on vectors of 512 bits.

Present-generation GPUs and Xeon Phi processors are connected to their host through a PCI-express interface, allowing data exchange between the two processors. Typically, 16 PCI-express lanes are used, with an aggregate bandwidth of 8 GB/s, much smaller than the typical memory bandwidth of these processors, so processor-accelerator data exchanges may easily become serious performance bottlenecks.

For both processor classes, in this work we consider a host-driven heterogeneous programming model, with applications executing on both the host and the accelerator. (The Xeon Phi also supports “native mode,” thus acting as an independent node capable of running applications independently. This approach will be enhanced in the next-generation Xeon Phi system, the Knights Landing, which will also be available as a stand-alone processor. We do not consider this mode of operation in this paper.) So far, different programming languages have been available for each specific accelerator; indeed, NVIDIA GPUs have a proprietary programming language, and AMD GPUs are supported by the OpenCL programming environment. On the contrary, the Xeon Phi uses the same programming environment as its Xeon multi-core counterpart, so one can develop codes following a directive-based approach (e.g. OpenMP), slightly reducing the effort of application migration. Conversely, many studies have shown that extracting a large fraction of the performance capabilities of the KNC, the first-generation Xeon Phi co-processors, still requires significant effort and fine restructuring of the code (Crimi et al., 2013; Gabbana et al., 2013; Liu et al., 2013).

Following recent improvements in directive-based programming environments, this work aims to explore ways of writing common codes that (i) have optimization features that can be exploited by traditional CPUs and both accelerator architectures, and (ii) are written using a common directive-based (e.g. OpenMP, OpenACC) programming environment.

3 Lattice Boltzmann methods

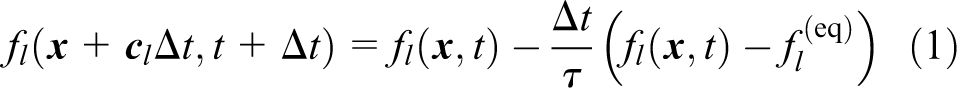

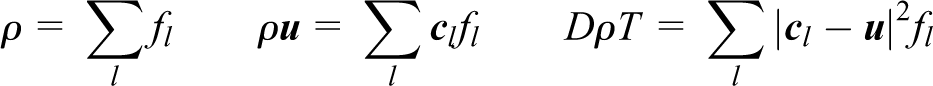

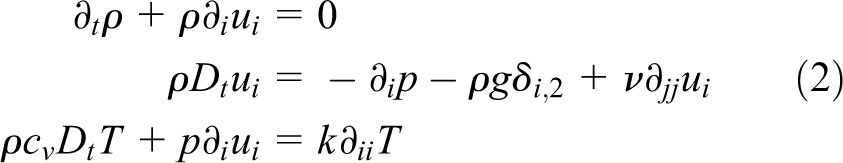

In this section, we sketchily introduce the computational method that we adopt, based on an advanced LB scheme. Lattice Boltzmann methods (see Succi (2001) for an introduction) are discrete in position and momentum spaces; they are based on the synthetic dynamics of

Over the years, several LB models have been developed, describing flows in two or three dimensions, and using sets of populations of different sizes (a model in

The macroscopic physics variables, density ρ, velocity

the equilibrium distributions (

In our case, we study a two-dimensional system (

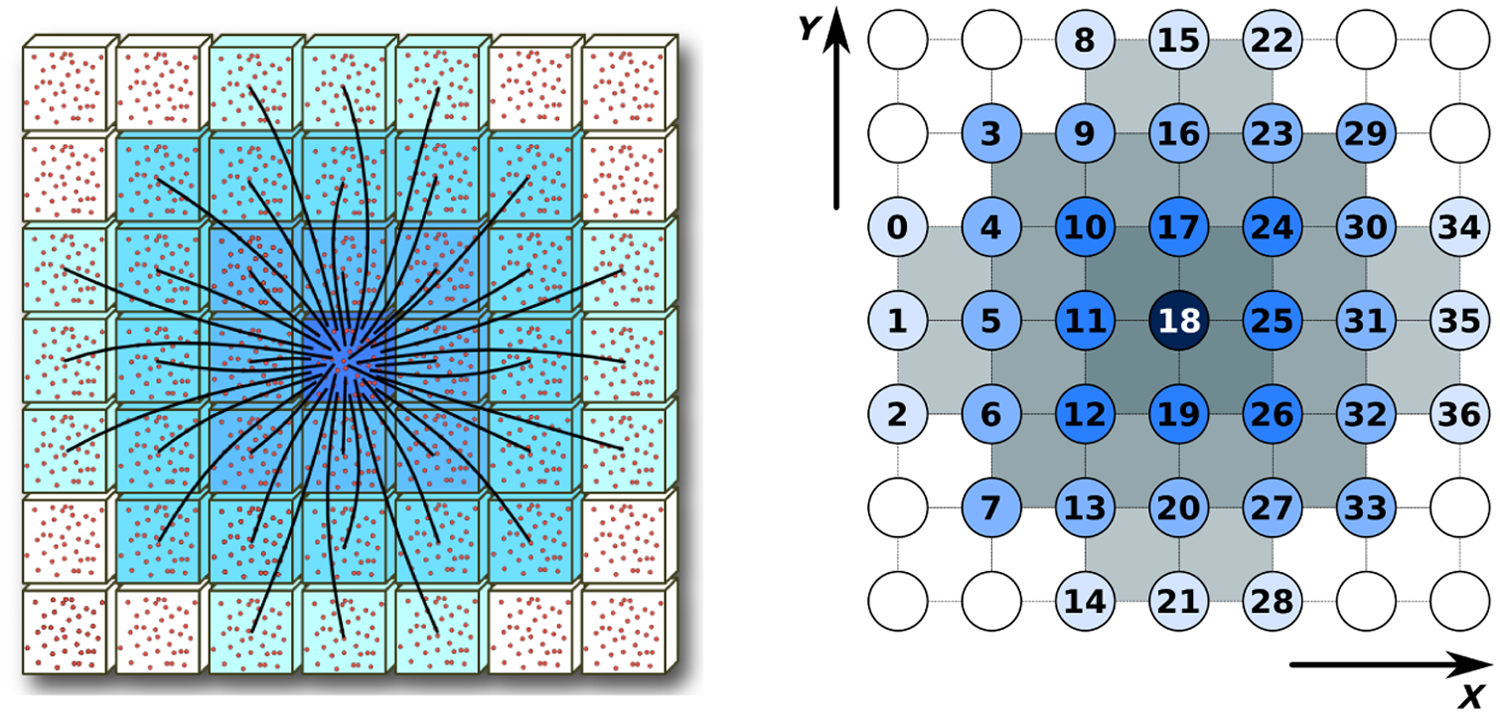

Left: Velocity vectors for the lattice Boltzmann populations of the D2Q37 model. Right: Populations are identified by an arbitrary label, associated with the lattice hop that they perform in the

An LB code starts with an initial assignment of the populations, corresponding to a given initial condition at

From the computational point of view, the LB approach offers a huge degree of easily identified available parallelism. Defining

one easily identifies the overall structure of the computation that evolves the system by one time step Δ

As already remarked, our D2Q37 model correctly and consistently describes the thermohydrodynamic equations of motion and the equation of state of a perfect gas; the price to pay is that, from a computational point of view, its implementation is more complex than for simpler LB models. This translates to demanding requirements for memory bandwidth and floating point throughput. Indeed, step 1 implies accessing 37 neighbor cells to gather all populations, while step 2 implies ≈ 7000 double-precision floating point operations per lattice point, some of which can be optimized away, e.g. by the compiler.

4 Data layout optimization for lattice Boltzmann kernels

Our goal is to design a performance-portable code capable of running efficiently on recent Intel multi-core CPUs as well as on Xeon Phi and GPU accelerators.

Our intended common application is written in plain

As remarked in Section 1, data layout has a critical role in extracting performance from accelerators.

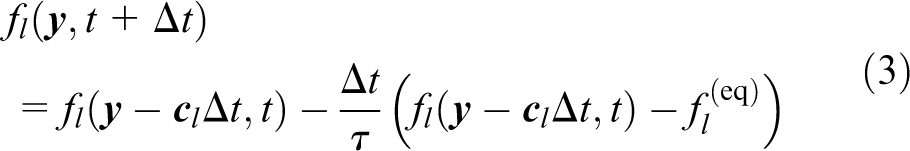

Data layouts for LB methods, as for many other stencil applications, have been traditionally based either on

Top and Middle:

In our implementation, we have arbitrarily chosen to store the lattice in column-major order (

4.1 Data layout optimization for propagate

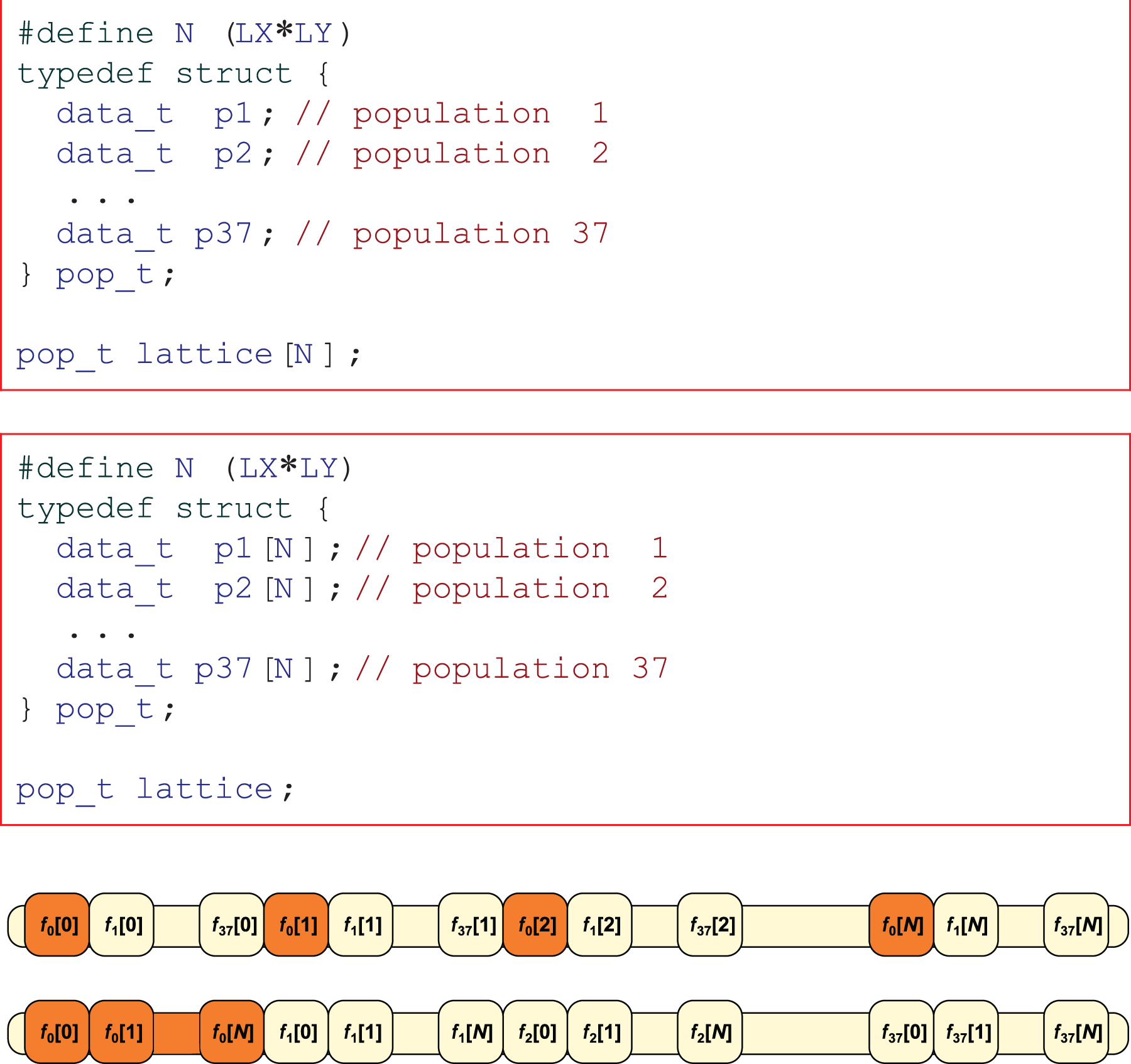

In our LB code, the

This kernel can be implemented using either a

The

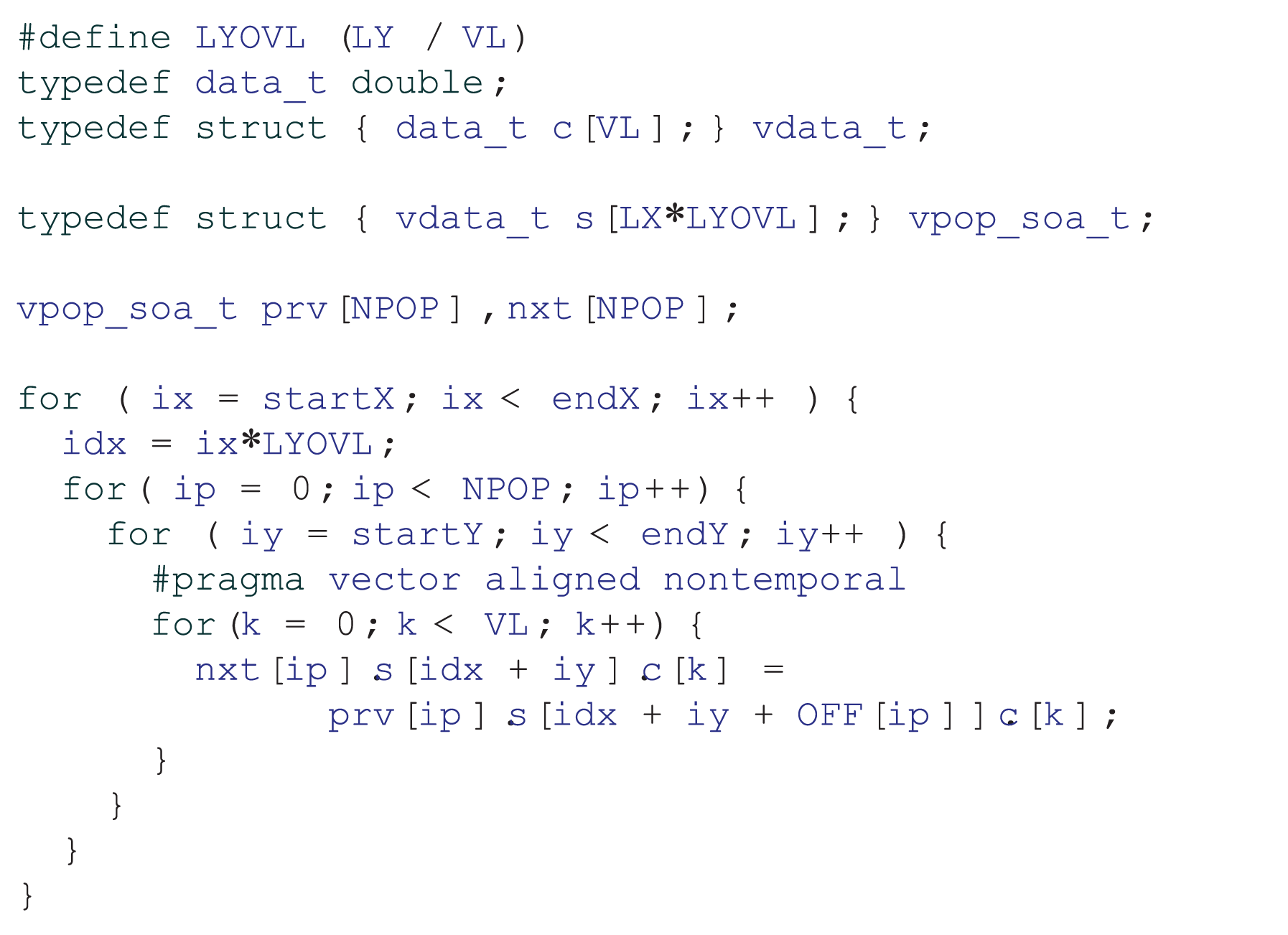

Codes of the

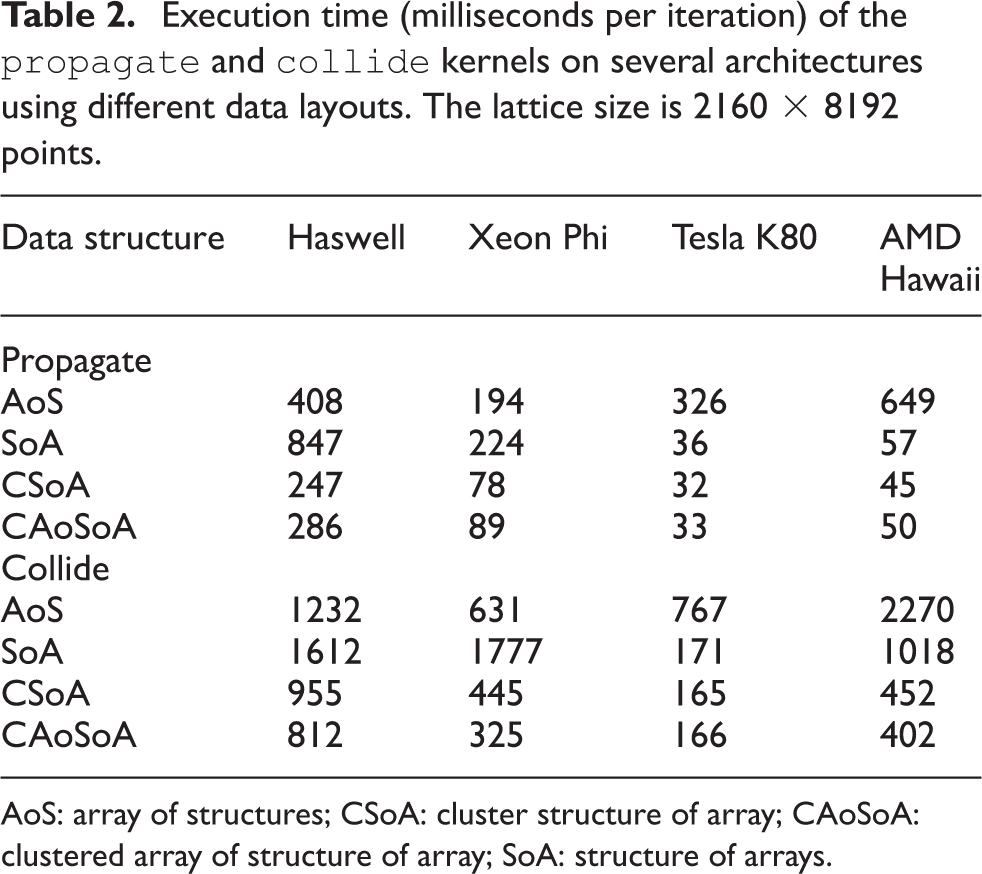

The first two rows of Table 2 compare the performance obtained with the two layouts on all the processors that we consider. As expected, the SoA layout is much more efficient on GPUs, but this is not true for both Intel processors; inspection of the assembly codes and compiler logs shows that read operations are not vectorized on Intel processors, owing to

Execution time (milliseconds per iteration) of the

AoS: array of structures; CSoA: cluster structure of array; CAoSoA: clustered array of structure of array; SoA: structure of arrays.

The reason why compilers fail to vectorize the code is that load addresses are computed as the sum of the destination-site address—which is memory-aligned—and an offset, so they point to the neighbor sites from which populations are read. This does not guarantee that the resulting address is properly aligned to the vector size, i.e., 32 bytes for CPUs and 64 bytes for the Xeon Phi. Store addresses, however, are always aligned if the lattice base address is properly aligned and

A simple modification to the data layout solves this problem. Starting from the lattice stored in the SoA scheme, we cluster

Source code of the

Table 2 (see the first three rows of the

4.2 Data layout optimization for collide

The

Following results obtained in Section 4.1, we consider first the CSoA data layout, which—as seen before—gives very good performance results with the

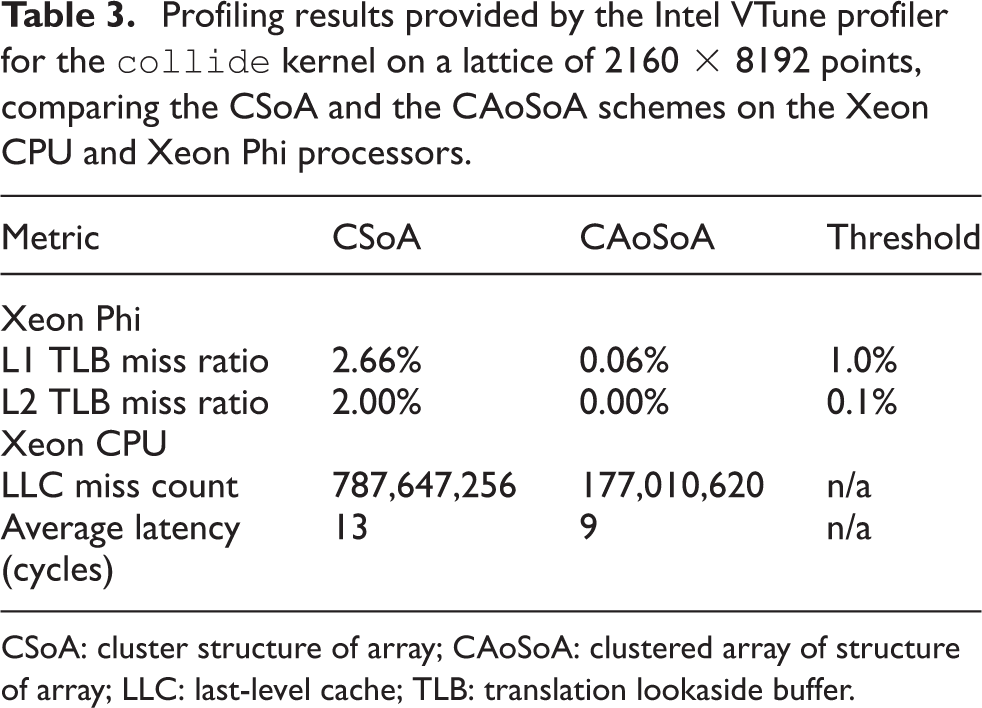

Profiling results provided by the Intel VTune profiler for the

CSoA: cluster structure of array; CAoSoA: clustered array of structure of array; LLC: last-level cache; TLB: translation lookaside buffer.

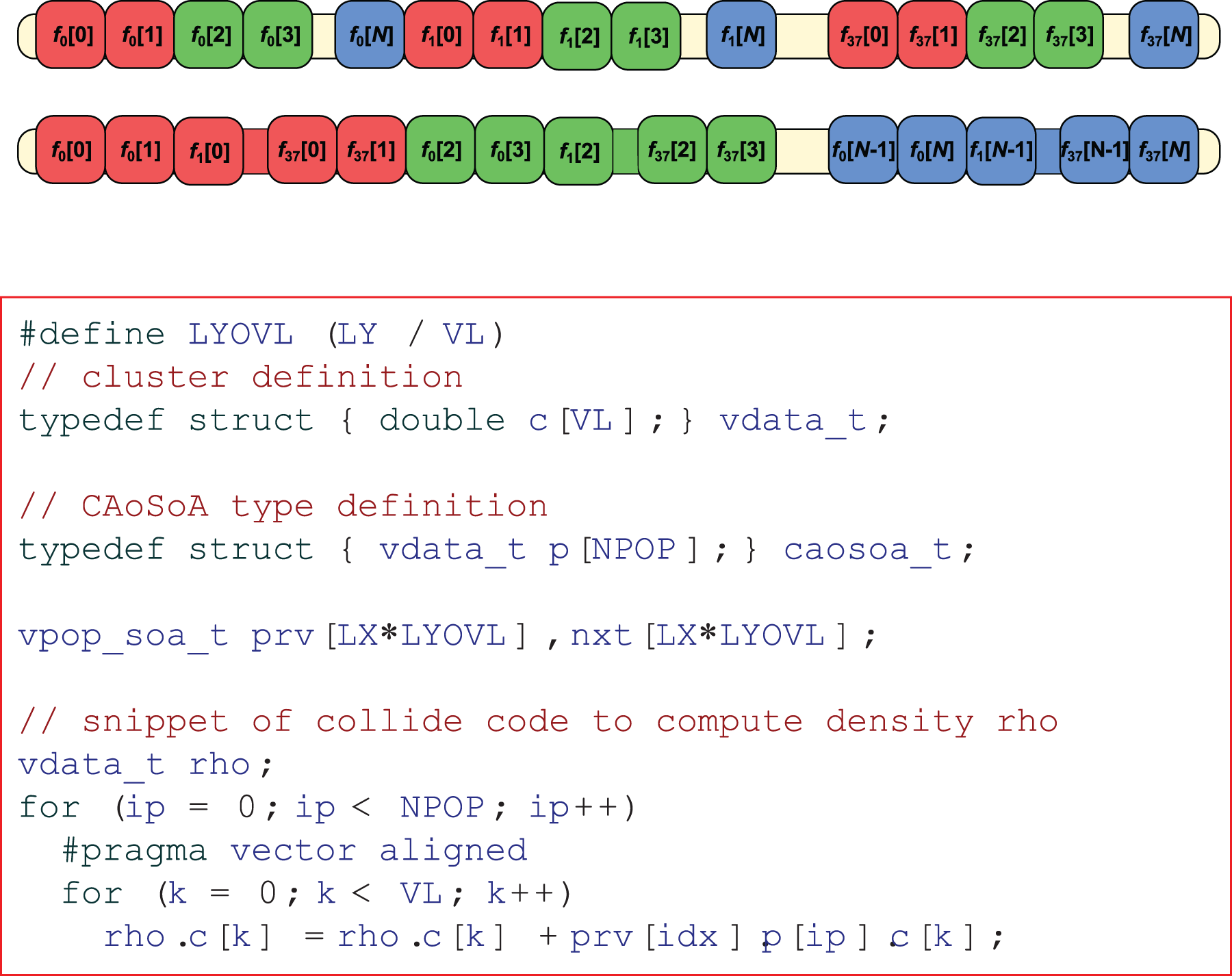

To overcome these problems, we further modify the CSoA scheme, and define a new data layout, which takes into account the locality requirements of both

Top: data arrangement for the CAoSoA layout; for illustration purposes, we take VL = 2. Bottom: sample code for

Table 3 shows the impact of the CAoSoA data layout on memory misses: on Xeon Phi, the TLB misses have been reduced well below the threshold values, and on Xeon CPU have been reduced by a factor of 4.5 with respect to the CSoA scheme. Table 2 shows the execution time of the

The overall performance gain for the

5 Heterogeneous implementation

In this section, we describe the implementation of our code designed to involve and exploit compute capabilities of both host and accelerators. We only consider the CAoSoA layout, as it grants the best overall performances on all the processors and accelerators that we have studied.

Our implementation uses MPI libraries and each MPI process manages one accelerator. The MPI process runs on the host CPU; part of the lattice domain is processed on the host itself, and part is offloaded and processed by the accelerator. Using one MPI process per accelerator makes it easy to extend the implementation to a cluster of accelerators installed on either the same or different hosts.

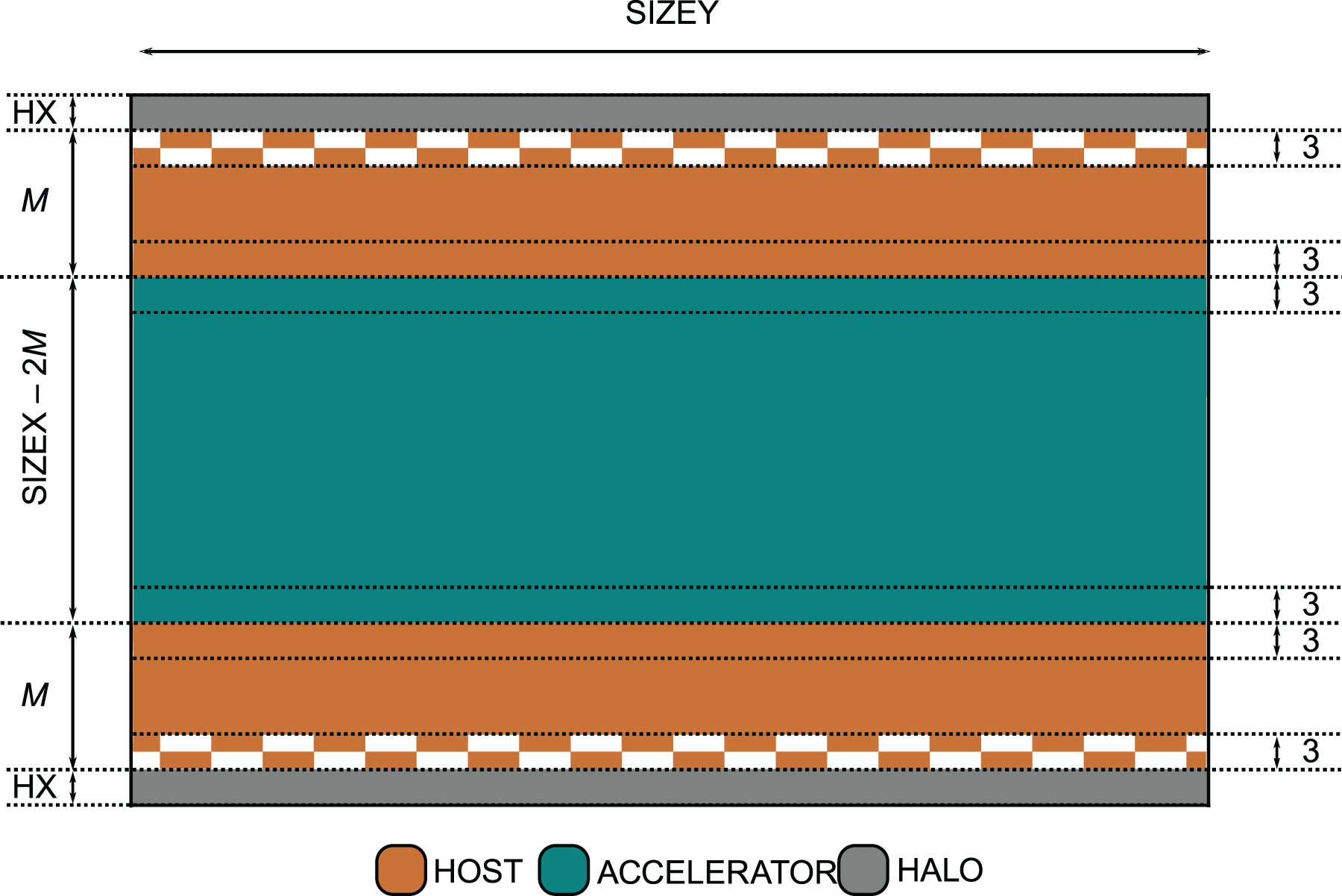

A lattice of size

Logic partitioning of the lattice domain among host and accelerator. The central (dark-green) region is allocated on the accelerator, side (orange and gray) regions on the host. Checkerboard textures flag lattice regions involved in MPI communications with neighbor nodes.

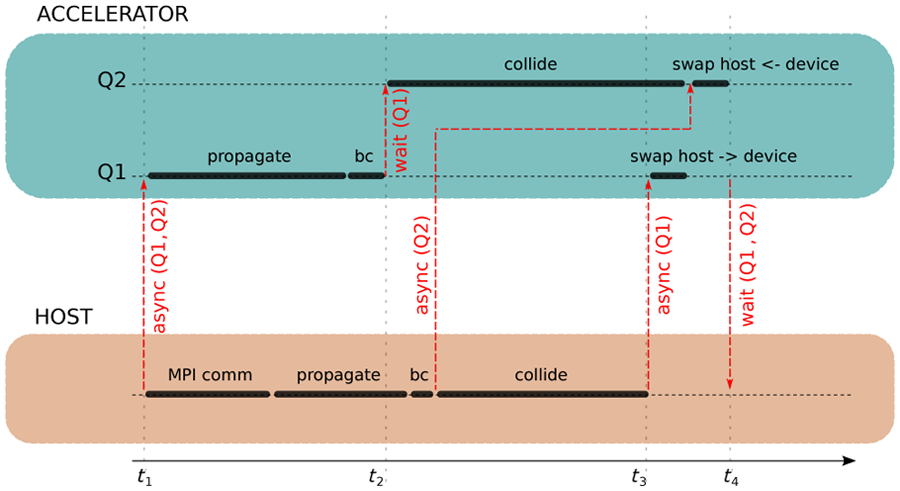

Each MPI process performs a loop over time steps, and at each iteration it launches, in sequence, the

Control flow executed by each MPI process. The schedule executed on the accelerator is on the upper band, while that executed by the host is on the lower band. Execution on the accelerator runs on two concurrent queues. Synchronization points are marked with red lines.

The code for KNC is implemented using the offload features available in the Intel compiler and runtime framework. In this case, we have a unique code and the compiler produces the executable codes for both Xeon Phi and CPU. For GPUs, the situation is somewhat different. In fact, we have to use two different compilers, one for GPUs and one for CPUs. We have written the code for GPUs, both NVIDIA and AMD, using

6 Parameter optimization

In this section, we describe two important optimization steps for our heterogeneous code. The first is about the optimal partitioning of the computation between host and accelerator, and the second is about the optimal cluster size for the CAoSoA data layout.

6.1 Workload partitioning

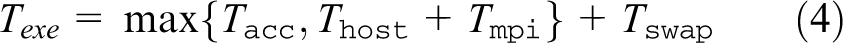

Hosts and accelerators have different peak (and sustained) performance, so careful workload balancing between the two concurrent processors is necessary. We model the execution time

where

Our code has an initial auto-tuning phase, in which it runs a set of mini-benchmarks to estimate approximate values of

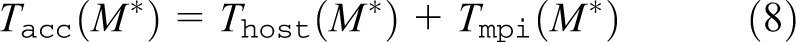

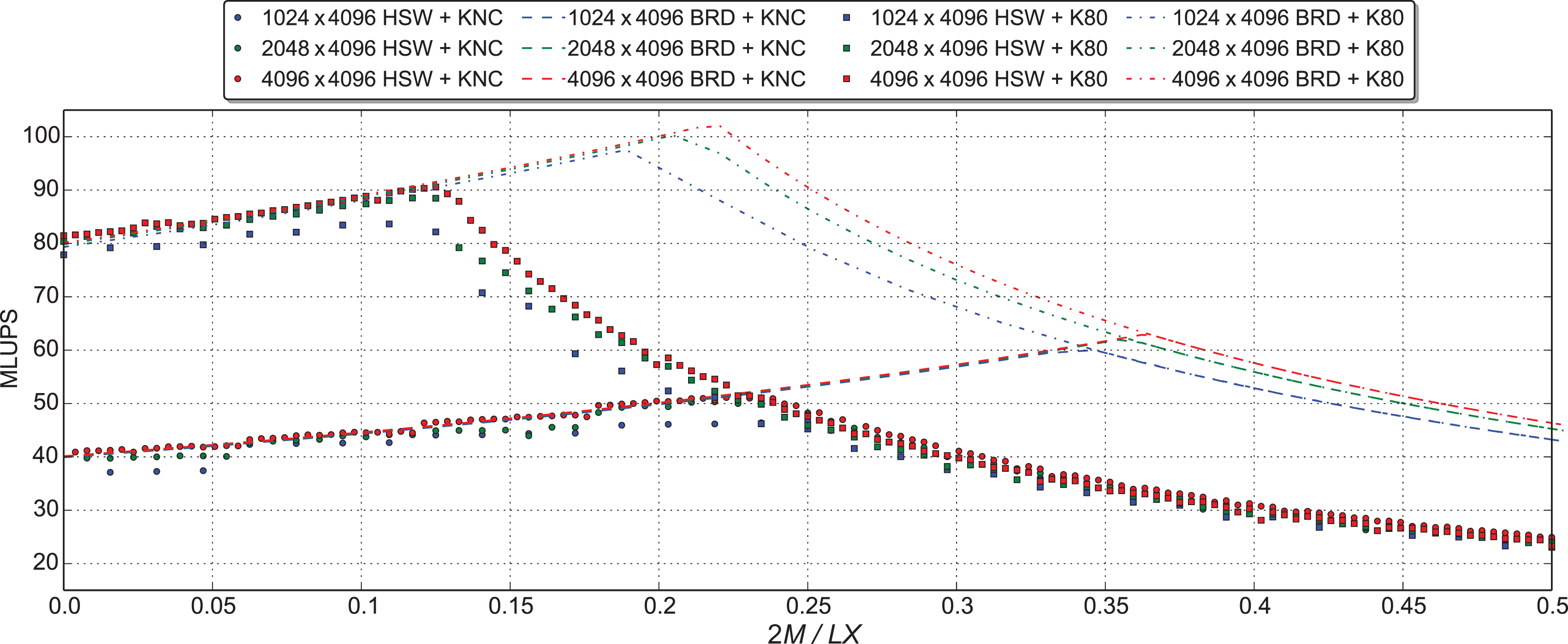

Figure 8 shows the performance of our code for three different lattice sizes as a function of 2

Performance of the heterogeneous code (measured in MLUPS (million updates per second)) for all three platforms, as a function of the fraction of lattice sites (2

6.2 Fine-tuning of data layout cluster size

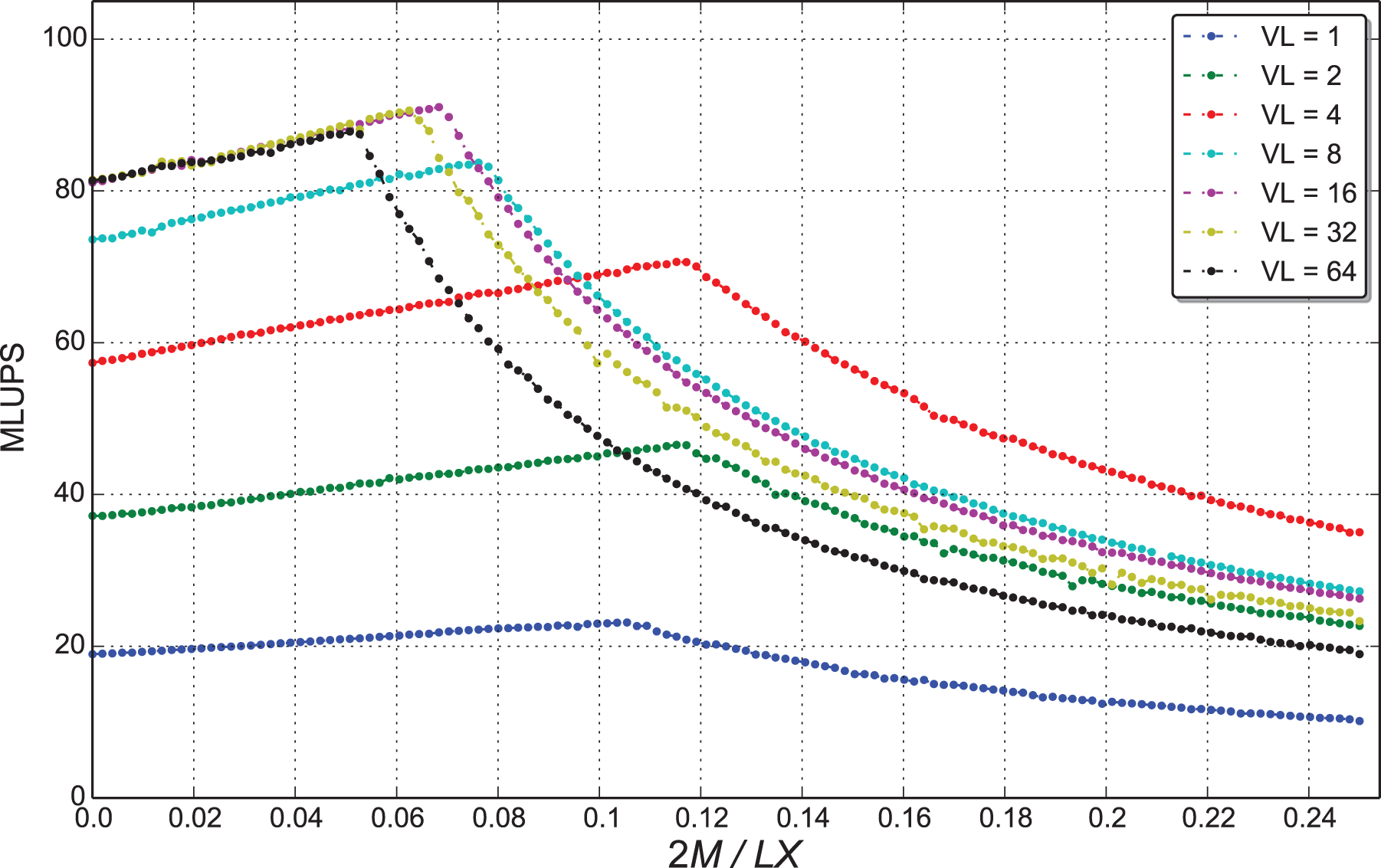

An important parameter of the CAoSoA layout is the cluster size

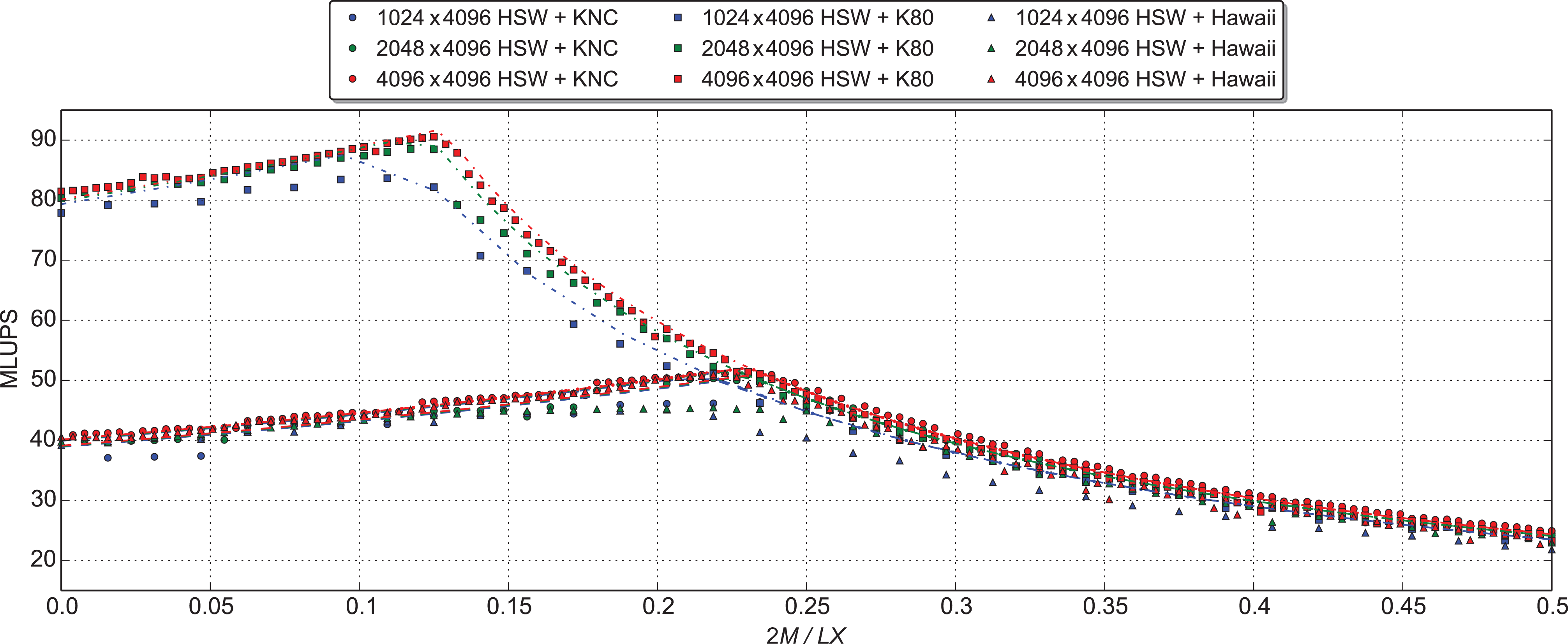

Figure 9 shows the impact on performances (measured again in MLUPS) of our code running on a node with K80 GPUs and using different choices for

Performance (measured in MLUPS (million updates per second)) for different values of the

Impact on performance of different cluster sizes (

6.3 Performance prediction on new hardware

A further interesting result of the model developed in Section 6.1 is that we can use it to predict to which extent the performance of our codes is affected if either the host CPU or the accelerator is replaced by a different processor; in particular, one may ask what happens if announced but not yet available processors or accelerators are adopted. One such exercise replaces the host processor that we have used for our previous tests with the new Intel multi-core Xeon E5-2697v4, based on the latest

Performance predictions (dashed lines) of our model for a would-be system using as host the recently released Broadwell (BRD) CPU compared with measured data on a Haswell (HSW) CPU (dots). Measurements refer to three different lattice sizes.

7 Scalability performances

In this section, we analyze scalability performances of our codes running on the

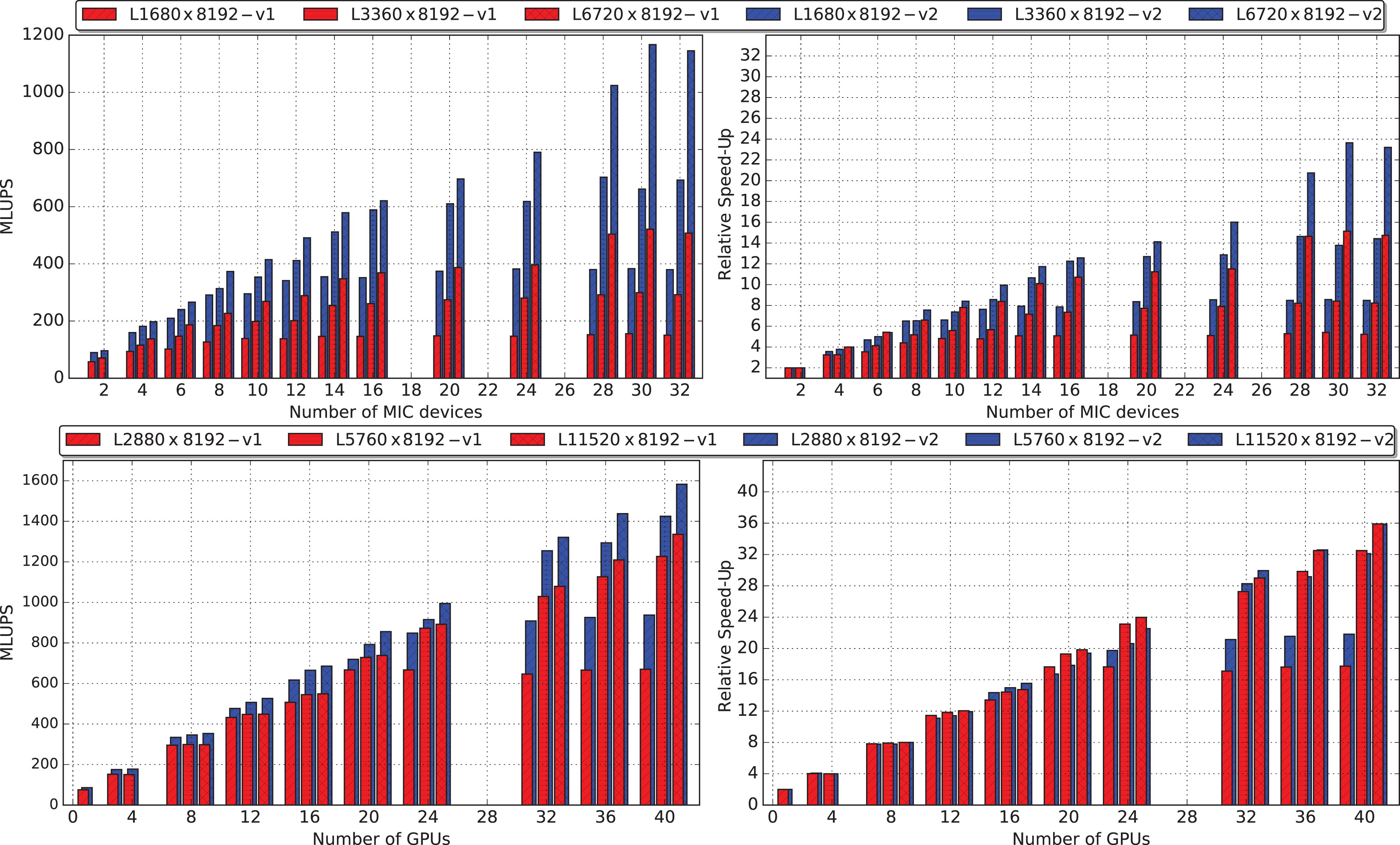

In Figure 12 (top left), we show the performance of our code running on larger and larger KNC partitions of the

Multi-node scalability results measured on the KNC (top) and K80 (bottom) partitions of the Galileo cluster. We compare performances—MLUPS and relative speed-up—on three different lattice sizes of the heterogeneous code described in this paper (v2) with an earlier version (v1), with all critical computational kernels running on accelerators only.

In Figure 12 (bottom left), we show the performance of our code running on the K80 partition. In this case, owing to the larger difference in performance between the host CPU and the accelerator, we have a different behavior. The newer version (

8 Analysis of results and conclusions

In this section, we analyze our results and outline our conclusions.

The first important contribution of this work highlights the critical role played by data layouts in the development of a common LB code that runs concurrently on CPUs and accelerators and is also

This paper introduces two slightly more complex data layouts, the CSoA and the CAoSoA, to exploit vectorization on both classes of architecture while still guaranteeing efficient data memory accesses and vector processing for both critical kernels. These data structures differ from those used in Shet et al. (2013a) and Valero-Lara et al. (2015) in the way that populations are packed into SIMD vectors; this allows operations involved in the

The CSoA layout improves performance on Intel architectures by factors of 2 over the AoS for

It can be interesting to compare our results with those obtained by Shet et al. (2013a), who have also analyzed the relative advantages of several data layouts. This comparison is necessarily partial, since Shet et al. (i) use three-dimensional lattices and a different LB scheme (D3Q27); (ii) run on earlier processor architectures (Intel E5-2670, using the SandyBridge micro-architecture); and (iii) do not use accelerators, so they map the whole lattice on the CPU. In spite of these differences, the comparison can still be meaningful for the

The definition of the appropriate layouts has allowed us to code our LB application in a common program that executes concurrently on host CPUs and all kinds of accelerators, obviously improving the portability and long-term support of this code.

Another important contribution of this paper is the development of an analytic model (see Section 6.1) that is able to predict the optimal partitioning of the workload among host CPU and accelerators; using this model, we can automatically tune this parameter for best performance on running systems, or predict performances for not yet available hardware configurations (see Section 6.3).

The final result of this contribution is that single node performances can be improved by ≈10–20% with respect to earlier implementations that simply wasted the computational power offered by the host CPU. Our implementation also significantly improves the (hard)-scaling behavior on relatively large clusters (see Figure 12). This follows from the fact that node-to-node communications in our code do not imply host-accelerator transfers. This improvement is very large on KNC-accelerated clusters, while on GPUs, owing to the larger performance unbalance between host and accelerator, the observed improvement is smaller.

In conclusion, an important result of this work is that it is possible to design and implement directive-based codes that are performance-portable across different present—and hopefully future—architectures. This requires an appropriate choice of the data layout, which is able to meet conflicting requirements of different parts of the code and to match hardware features of different architectures. Defining these data layouts is today in the hands of programmers, and still out of the scope of currently available and stable compilers commonly used in the HPC context. For this reason, on a longer time horizon, we look forward to further progress in compilers allowing data definitions that abstract from the actual in-memory implementation, and of tools able to make appropriate allocation choices for specific target architectures.

Another related open question, that we leave for further investigation, is whether our proposed hybrid approach has a positive impact on energy-related aspects (e.g. reducing the value of

Footnotes

Acknowledgement

We thank CINECA (Bologna, Italy) for access to the Galileo computing cluster.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was conducted within the framework of the COKA, COSA and Suma projects of INFN. AG was supported by the European Union’s Horizon 2020 research and innovation program under the Marie Sklodowska-Curie grant agreement (no 642069).