Abstract

A complete understanding of decision-making in military domains requires gathering insights from several fields of study. To make the task tractable, here we consider a specific example: short-term tactical decisions made under uncertainty by the military at sea. Through this lens, we sketch out relevant literature from three psychological tasks, each underpinned by decision-making processes: categorisation, communication and choice. From the literature, we note two general cognitive tendencies that emerge across all three stages: the effect of cognitive load and individual differences. Drawing on these tendencies, we recommend strategies, tools and future research that could improve performance in military domains and, by extension, in other high-stakes contexts. In so doing, we show the extent to which domain-general properties of higher-order cognition are sufficient to explain behaviour in domain-specific contexts.

To comprehensively understand the psychological mechanisms underpinning our behaviour in high-stakes, applied contexts (e.g. medical, legal, military or financial decision-making), we must integrate insights from many disparate fields. In this review, we demonstrate this by exploring psychological fields (e.g. judgement and decision-making, category learning, communication) and factors (e.g. cognitive load, individual differences) relevant to understanding the process of decision-making (i.e. choosing between different options) in military contexts. We are particularly interested in military tasks because the domain relies on multiple actors at different levels of a hierarchy, and because the stakes are high, effective coordination of information across those actors is critical (Goodwin et al., 2018). Our general approach is to draw domain-general insights from psychology, explore how they apply to a specific domain, and then generalise back to consider the extent to which these cognitive processes are found in other high-stakes, dynamic, real-world contexts.

The review is organised as follows. We begin by outlining a particular scenario in maritime military decision-making. We then divide the scenario into three distinct tasks and consider the relevant psychological literature for each in turn. We end by identifying the key psychological factors that are important to consider across all three stages and discussing potential areas where improvements are possible.

The Scenario

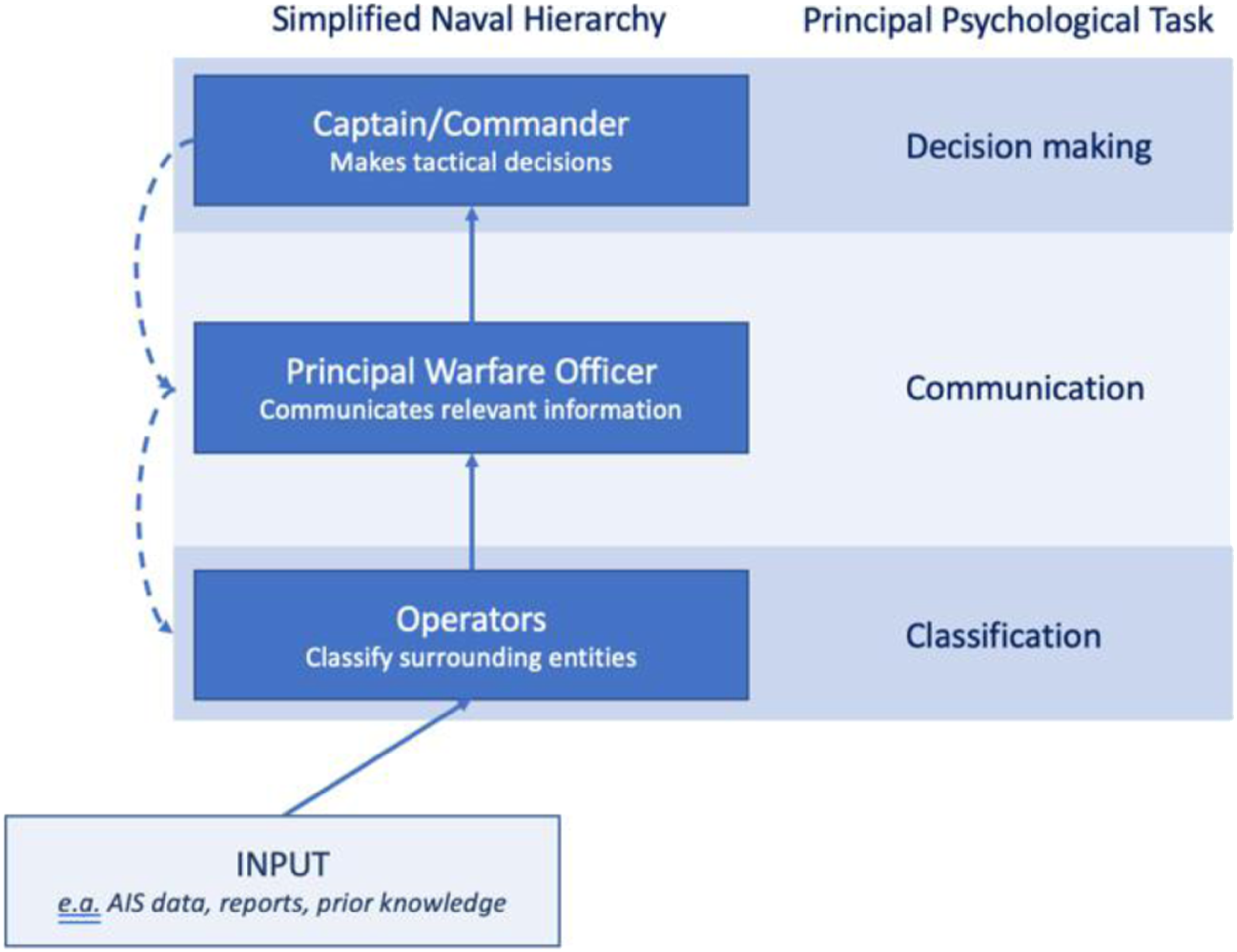

So, what do we mean by ‘maritime military decision-making’? By definition, maritime decision-making encompasses all the decisions that might be made at sea, from whether to enter potentially hostile waters, to whether to stock up the ship’s freezer at the next port. In this review, we focus on one specific, narrowly defined, scenario – from which we subsequently draw broad parallels to other areas of high stakes decision-making. Specifically, we consider the key tactical decision of whether, where and how a ship engages a nearby enemy vessel (Holmes, 2001). For a simplified visualisation of the scenario, see Figure 1. Simplified Naval structure shown alongside the principal psychological process shown at that point. Solid arrows represent the main direction of information flow as considered in the current article. Inclusion of the dashed arrows acknowledges that, in reality, there will be bidirectional communications.

A widely accepted prerequisite for making tactical decisions is Maritime Domain Awareness (MDA): an understanding of how the ship relates to its surroundings (Carvalho et al., 2011; Hammond, 2006). One aspect of this requires commanders (or other decision makers) to be aware of the physical locations of surrounding craft, as well as other relevant information such as their type (e.g. whether each is an aircraft, submarine or ship) and especially their status (i.e. whether they are friendly or hostile). Thus, the first task in our scenario is to determine the status of surrounding entities: ships, aeroplanes and submarines. Entity status is typically estimated by integrating position data with information from the cooperative Automatic Identification System (AIS; Hammond, 2006). AIS is a signal emitted by all vessels over a certain tonnage; it includes information such as the vessel’s identity number, navigation status (at anchor, underway using engines, etc.), speed, direction, and details about its course (destination, estimated arrival time, etc.).

On a Royal Navy vessel, junior operators situated in the operations room use available positional and AIS data to partition the surrounding entities into four categories: ‘unknown’, ‘neutral’, ‘entity of concern’ or ‘entity of allies’ (‘NATO Joint Military Symbology’, 2017). Unknown is the default category. New vessels that enter the monitored area are automatically assigned Unknown, until an operator decides otherwise. Entities might also be assigned to Unknown if the information available does not exceed the threshold for one of the other categories. Neutral craft are those which will not cause harm but are also not able to help if needed (e.g. a passenger airline). Entities of concern are those likely to be Hostile and, finally, entities of allies are those which are Friendly and could possibly provide or require assistance.
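The role of the default category can be made concrete with a small sketch. Here, status assignment is treated as a thresholded choice: a contact keeps the default ‘unknown’ label unless the support for one of the other three categories reaches a threshold. The support scores, the 0.7 threshold and the function name `assign_status` are hypothetical illustrations, not part of any Royal Navy or NATO procedure.

```python
from typing import Mapping

# Labels mirroring the four categories described in the text.
CATEGORIES = ("unknown", "neutral", "entity of concern", "entity of allies")


def assign_status(evidence: Mapping[str, float], threshold: float = 0.7) -> str:
    """Return the best-supported non-default category, or 'unknown'.

    `evidence` maps category labels to a hypothetical degree of support in
    [0, 1]; the default threshold is an illustrative assumption.
    """
    candidates = {label: score for label, score in evidence.items()
                  if label in CATEGORIES and label != "unknown"}
    if not candidates:
        return "unknown"  # new contacts start in the default category
    best_label = max(candidates, key=candidates.get)
    # Stay in the default category unless the support clears the threshold.
    return best_label if candidates[best_label] >= threshold else "unknown"


print(assign_status({}))                                          # unknown
print(assign_status({"neutral": 0.9, "entity of concern": 0.1}))  # neutral
print(assign_status({"neutral": 0.5, "entity of concern": 0.4}))  # unknown
```

Raising the threshold keeps more contacts in ‘unknown’; lowering it commits to a label on weaker evidence.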

Once an entity’s status has been assigned by operators in the operations room, this information is passed up the chain of command: first to the Principal Warfare Officer, who integrates the information from multiple operators, and then on to those making tactical decisions. Tactical decisions involve evaluating which of a set of possible actions is the optimal one to take, given information about the classifications and positions of surrounding entities (Cummings et al., 2010; Kobus et al., 2001; Matthews et al., 2009). Options are evaluated against the overarching goals of the mission, the proximity and inferred intentions of the surrounding entities, and other considerations such as costs and available equipment. Further, short- and long-term goals may change over time, as may the surrounding situation. Thus, the military planner must evaluate and prioritise between conflicting goals, whilst maintaining an up-to-date awareness and understanding of the situation.

The ‘simple’ scenario which is the focus of the present review can thus be decomposed into three stages: classification of entities’ statuses, communicating that information up the hierarchy and finally, choosing an appropriate action. Each of these stages has parallels in areas of psychological research. In the following, we examine each of these tasks, and their corresponding literatures, in turn.

Classification of Entities

The classification of entities, as described in the previous section, closely mirrors work from the categorisation (or ‘category learning’) literature. In a typical experiment in this field, naïve participants sort stimuli into categories: on each trial, participants are shown a stimulus, asked to assign it to a category, and then given corrective feedback (Kurtz, 2015). Generally, categorisation studies aim to understand how participants learn, understand and generalise the underlying category structure(s): the (usually) experimenter-defined mapping of stimuli to category labels. The category structure is usually chosen to explore the underlying psychological mechanisms of categorisation (Ashby & Valentin, 2018; Kurtz, 2015). Thus, these experiments examine how participants’ performance is affected by varying the category structure factorially with other features of the task. For instance, experimenters may add a concurrent task, or increase time pressure by giving participants only a limited time to respond. These manipulations affect the ‘cognitive load’ of the task, that is, how many cognitive resources are needed to complete the task effectively.

How are Classification Decisions Made?

In our maritime scenario, operators estimate entity status from diverse sources of information, principally AIS and position data. This data can be supplemented by information requested from others and by knowledge of the combat theatre. Reframing this as a categorisation problem, operators infer category membership by combining many stimulus dimensions. These stimulus dimensions could be continuous, such as bearing; ordinal, such as overall AIS transmission quality; or discrete, such as destination. This is not only difficult but also highly consequential as some of the objects of interest may be fast moving and pose a serious threat (Finger & Bisantz, 2002; Liebhaber & Feher, 2002; Riveiro et al., 2018). In the following, we will explore the possible mechanisms by which operators may make these categorisation judgements.

The dominant explanation, seen in many different theoretical accounts of categorisation (Pothos & Wills, 2011), is that operators compare information from every stimulus dimension to representations of every category (Nosofsky, 1986; Wills et al., 2020). The entity would then be assigned to the category to which it is most similar, where ‘most similar’ is typically determined geometrically, by minimising the psychological distance between the stimulus and the representation of the category (e.g. Nosofsky, 1986).
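A minimal sketch of this similarity-based account, loosely following the general form of exemplar models such as Nosofsky’s (1986) generalised context model: distance is an attention-weighted city-block distance across every dimension, similarity decays exponentially with distance, and the summed similarities to each category’s stored exemplars are normalised into choice probabilities. The two stimulus dimensions, the exemplar values, the attention weights and the sensitivity `c` are assumptions made for illustration; fitted models estimate such parameters from data and often include response biases.

```python
import math
from typing import Dict, List, Sequence

Stimulus = Sequence[float]


def similarity(x: Stimulus, y: Stimulus, weights: Sequence[float], c: float) -> float:
    # Attention-weighted city-block distance, turned into similarity by exponential decay.
    distance = sum(w * abs(a - b) for w, a, b in zip(weights, x, y))
    return math.exp(-c * distance)


def category_probabilities(stimulus: Stimulus,
                           exemplars: Dict[str, List[Stimulus]],
                           weights: Sequence[float],
                           c: float = 2.0) -> Dict[str, float]:
    # Sum similarity to every stored exemplar of each category, then normalise
    # (a Luce-style choice rule with no response biases).
    summed = {label: sum(similarity(stimulus, e, weights, c) for e in members)
              for label, members in exemplars.items()}
    total = sum(summed.values())
    return {label: s / total for label, s in summed.items()}


# Hypothetical stored contacts on two normalised dimensions
# (e.g. speed and closing rate); the values are invented for illustration.
exemplars = {
    "hostile": [(0.9, 0.8), (0.8, 0.9)],
    "friendly": [(0.1, 0.2), (0.2, 0.1)],
}
probs = category_probabilities((0.85, 0.7), exemplars, weights=(0.5, 0.5))
print(max(probs, key=probs.get))  # hostile
```

Every dimension of every stored exemplar enters the computation, which is why this account can look demanding when many dimensions and exemplars are involved.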

To the unfamiliar, this account suggests that categorisation might be a long, tedious and cognitively demanding process: not ideal in a high-stakes scenario when an enemy fighter jet may be flying towards you at supersonic speed. However, seminal categorisation research found that increasing cognitive load (by increasing time pressure or adding a second task) led participants to use more attributes when categorising (e.g. Kemler Nelson, 1984; Smith & Kemler Nelson, 1984; Ward, 1983). From these results, researchers inferred that people represent stimuli holistically, as an undifferentiated ‘mass’. On this view, comparing multiple attributes would be less cognitively demanding than differentiating a single attribute from that representation.

Another theoretical approach argues that whether people use holistic representations of entities depends on the underlying category structure. The COVIS (COmpetition between Verbal and Implicit Systems) model of category learning proposes a dual-system mechanism (Ashby et al., 1998; Edmunds & Wills, 2016). This theory argues that simple category structures are learnt using rules based on a subset of the available information. Structures that are difficult to verbalise are learnt through an implicit, overall similarity approach, where stimuli are represented holistically. Much evidence has been argued to support this perspective (for reviews see Ashby & Maddox, 2004, 2011; Ashby & Valentin, 2017; Smith & Church, 2018). Given that the operator’s job of classifying an entity as hostile would be hard to verbalise, this approach supports the seminal research in recommending (somewhat paradoxically) that the way to optimise classification in a military setting would be to increase cognitive load.

In contrast, Wills et al. (2015) reported two critical findings that conflict with these earlier theoretical claims. Firstly, participants used a wider range of strategies than previously supposed (many of them resulting in reduced accuracy). Secondly, participants were actually more likely to use fewer dimensions under greater cognitive load.

In a similar vein, there is no evidence that participants use different types of stimulus representation depending on the category structure (for partial reviews see Newell et al., 2011; Wills et al., 2019). Participants report using rule-based strategies in both simple and complex categorisation tasks (Edmunds et al., 2015, 2016, 2019, 2020). For instance they may categorise using a single attribute or perhaps generate more complex rules (such as conjunctions or other combinations of single dimension rules). Thus, recent evidence suggests that participants are most likely to use a subset of the available information using a rule-like strategy, even though this type of strategy often results in reduced accuracy.
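A rule-based strategy, by contrast, consults only a subset of the stimulus dimensions. The two hypothetical rules below illustrate a single-attribute rule and a conjunction of two single-dimension rules. The attribute names (`speed_knots`, `closing_rate`, `ais_quality`) and cut-offs are invented for illustration; they are not cues that operators are known to use. Both rules ignore `ais_quality` entirely, which is what ‘using a subset of the available information’ amounts to in practice.

```python
from typing import Dict

Stimulus = Dict[str, float]  # hypothetical attribute name -> value


def single_dimension_rule(s: Stimulus) -> str:
    # Uses one attribute only: fast contacts are treated as of concern.
    return "concern" if s["speed_knots"] > 30 else "neutral"


def conjunctive_rule(s: Stimulus) -> str:
    # Combines two single-dimension rules with AND: fast AND closing.
    fast = s["speed_knots"] > 30
    closing = s["closing_rate"] > 0
    return "concern" if fast and closing else "neutral"


contact = {"speed_knots": 35.0, "closing_rate": -2.0, "ais_quality": 0.9}
print(single_dimension_rule(contact))  # concern
print(conjunctive_rule(contact))       # neutral
```

The two rules disagree on the same contact, showing how the choice of rule, rather than the data, can determine the classification.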

In sum, the categorisation literature suggests that participants use all the available dimensions to categorise only when they have enough time or resources. The rest of the time they use a ‘satisficing’ heuristic: they use less of the available information than would be optimal (Simon, 1947). This finding is mirrored in the defence literature. For instance, Liebhaber et al. (2000) found that the number of potential cues available to inform the classification of an entity as a threat was independent of the actual threat rating given to it. That is, military planners appear to classify entities based on a small subset of attributes rather than integrating all the information. Further, which attributes are used depends on the planner’s prior knowledge of threat profiles that correspond to the data available to them. Altogether, this work suggests that minimising cognitive load may be critical in improving the performance of operators trying to estimate the status of surrounding entities.

On What Information are Classifications Made?

The evidence in the previous section suggests that, in both military and laboratory settings, it is unlikely that all the information available at any one time will be used to make a classification. In a defence context, failing to use all the available information could result in serious errors. For instance, operators appear to be susceptible to focussing on information that confirms their initial hypotheses about the threat profile of particular entities (Matthews et al., 2009). Narrowing attention to data consistent with prior hypotheses comes at the expense of attending to evidence that contradicts and undermines the hypotheses the planner(s) are working with. Potentially relevant information could thus be neglected, with significant negative consequences.

However, it is also possible that satisficing in this context is an adaptive response to the considerable time pressure. After all, sorting and identifying the most valuable information is key in military decision-making (Mishra et al., 2015). If operators respond using a subset of the available information, but their classifications are almost as accurate as those based on all the information and are made faster, then this strategy is more efficient overall. In the next section, we explore whether people choose which subset of attributes to use judiciously or at random.

Do People Classify by Picking the Most Useful Dimensions, or Choosing at Random?

For both participants in psychology experiments and operators on Royal Navy warships, the best approach would be to focus on the information that is most predictive of the outcome(s). For simple categorisation tasks, there is plenty of evidence that participants can identify the most predictive attribute in a unidimensional categorisation task (e.g. Ashby & Valentin, 2017; Shepard et al., 1961; Nosofsky et al., 1994; Wills et al., 2015), and can do so quickly (e.g. Nosofsky et al., 1994; Shepard et al., 1961).

Yet, in the field, operators may have to classify far more complex stimuli under considerable cognitive load. Laboratory studies suggest that when there are many additional, non-predictive attributes (Vong et al., 2019), or when a cognitive load is added (Wills et al., 2015), participants are less likely to find the optimum simple rule and instead rely on less predictive attributes, resulting in poorer accuracy.
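One way to make ‘most predictive attribute’ precise, assuming labelled examples and single-threshold rules, is to score each dimension by the best accuracy that any cut-off on that dimension alone can achieve, and then keep the winner. This is an illustrative analysis, not a model of how participants or operators actually search; the six labelled stimuli below are invented.

```python
from typing import Sequence, Tuple


def best_threshold_accuracy(values: Sequence[float], labels: Sequence[int]) -> float:
    """Best accuracy of the rule 'label = 1 if value > t' (or its reverse) on one dimension."""
    n = len(values)
    best = 0.0
    # Candidate cut-offs: every observed value, plus one below the minimum.
    for t in sorted(set(values)) + [min(values) - 1.0]:
        correct = sum((v > t) == bool(y) for v, y in zip(values, labels))
        best = max(best, correct / n, (n - correct) / n)  # also allow the reversed rule
    return best


def most_predictive_dimension(stimuli: Sequence[Sequence[float]],
                              labels: Sequence[int]) -> Tuple[int, float]:
    n_dims = len(stimuli[0])
    scores = [best_threshold_accuracy([s[d] for s in stimuli], labels)
              for d in range(n_dims)]
    best_dim = max(range(n_dims), key=lambda d: scores[d])
    return best_dim, scores[best_dim]


# Invented data: dimension 0 separates the categories perfectly, dimension 1 only partly.
stimuli = [(0.1, 0.9), (0.2, 0.1), (0.3, 0.8), (0.7, 0.2), (0.8, 0.7), (0.9, 0.3)]
labels = [0, 0, 0, 1, 1, 1]
print(most_predictive_dimension(stimuli, labels))  # (0, 1.0)
```

Adding more non-predictive dimensions to `stimuli` leaves the answer unchanged here, but it increases the number of candidates a human learner would have to consider, which is the situation the studies above describe.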

Two other features of our maritime military scenario are that the information that can be used to make classifications changes over time, and there is uncertainty in the data received by the operator (Irandoust et al., 2010; McCloskey, 1996; Potter et al., 2012). Unfortunately, very few categorisation experiments have examined scenarios where the category rule is maintained (for instance Category A represents small stimuli), but the distribution of stimuli changes over time (for instance at the beginning of the experiment, Category A stimuli are about 1 cm, by the end they are around 0.1 cm). Rather, studies have investigated the category structure changing over time (for instance at the beginning of the experiment the participant needed to focus on size, later they needed to focus on orientation). Some have looked at analogical transfer where participants are first trained on one category structure and then in a second phase, they must transfer this knowledge to another set of stimuli, outside of the range of the training stimuli (Casale et al., 2012; Edmunds et al., 2020; Soto & Ashby, 2019; Zakrzewski et al., 2018). Helie et al. (2015) looked at categorisation performance after the category structure was changed (see also Cantwell et al., 2015). Navarro et al. (2013) examined whether people could learn a unidimensional ‘height’ category structure where the category boundary slowly changed over time. Generally, these studies show that people are poor at these tasks: participants are slow to adapt (Navarro et al., 2013), do not take advantage of previously acquired knowledge (Edmunds et al., 2020), or may even fail to notice the change (Cantwell et al., 2015; Helie et al., 2015).
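The difference between a changing category structure and a changing stimulus distribution can be illustrated with a toy version of the size example above: the rule ‘Category A is the smaller kind’ stays fixed, but all stimuli shrink tenfold over the session. In this hypothetical sketch, a learner that keeps the cut-off learned at the start of training fails once the stimuli drift, whereas one that tracks each category’s running mean from corrective feedback keeps up. Neither learner is proposed as a model of human performance; the sizes, the alternating trial order and the learning rate `alpha` are illustrative assumptions.

```python
def run_session(n_trials: int = 100, adaptive: bool = False, alpha: float = 0.5) -> float:
    """Proportion correct for a fixed-cut-off or a mean-tracking learner."""
    means = {"A": 1.0, "B": 3.0}   # category means at the start of the session
    fixed_cutoff = 2.0             # midpoint learned from the early stimuli
    correct = 0
    for t in range(n_trials):
        scale = 1.0 - 0.9 * t / (n_trials - 1)          # stimuli shrink from 1.0 to 0.1
        label = "A" if t % 2 == 0 else "B"              # alternate A and B trials
        size = scale * (1.0 if label == "A" else 3.0)   # rule unchanged: A is smaller
        cutoff = (means["A"] + means["B"]) / 2 if adaptive else fixed_cutoff
        response = "A" if size < cutoff else "B"
        correct += response == label
        # Corrective feedback: the true category's running mean moves toward the stimulus.
        means[label] += alpha * (size - means[label])
    return correct / n_trials


print(run_session(adaptive=False))
print(run_session(adaptive=True))
```

The fixed learner keeps classifying every Category A stimulus correctly but starts calling Category B stimuli ‘A’ as soon as they fall below the old cut-off, so its errors are one-sided rather than random.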

Similarly, uncertainty is rarely explored in the categorisation literature, but the limited available evidence suggests that people find it difficult to incorporate it effectively. For instance people make slower and less accurate responses when unsure about category membership (Grinband et al., 2006). The predictiveness and uncertainty associated with stimulus features in category learning studies are also closely linked with reward. Typically, the studies follow the stimulus-response-feedback protocol (Kurtz, 2015) where reward is often contingent on the proportion of correct responses. Predictive dimensions are rewarded more often, whereas uncertain dimensions are likely to be rewarded less (or perhaps more erratically). This area is particularly relevant given the highly consequential nature of military decision-making.

Given the high stakes in maritime military contexts, the incentives that may influence classification concern preventing or minimising significant losses. In the lab, the only ethical way to approximate this is to raise the stakes by offering a greater reward for better responding. Fortunately for the maritime military context (and for the ability to generalise results from the lab to this context), it appears that reward has a negligible effect on the quality of categorisation. Schlegelmilch and von Helversen (2020) examined categorisation of three-dimensional stimuli, where one stimulus in each category earned a larger reward for a correct answer than the other stimuli. Generally, they found that adding a high reward reduced performance on the stimuli with lower rewards. However, they also found that the addition of a higher reward had no effect on participants’ self-reported attention to each stimulus dimension. This contrasts with other similar paradigms where the value of the outcomes modulates attention: predictors of high-value rewards receive especially high levels of attention (Le Pelley et al., 2016). This evidence suggests that categorisation may be only slightly affected by reward magnitude. Thus, the extreme potential consequences of categorisation in our scenario may not affect performance. It remains an open question whether the pressure of anticipating a positive reward and the pressure of avoiding a negative consequence can be equated in a categorisation task.

Categorisation: Recommendations, Limitations and Future Work

Is the categorisation literature useful in providing insights to help field operators improve classification of entity status? Generally, the findings reviewed contribute some useful insights. The laboratory evidence suggests that operators are unlikely to use all available data in their categorisation decisions (e.g. Edmunds et al., 2020; Wills et al., 2015). Rather, they are likely to focus on a subset of the available information, probably that which they found worked on previous occasions (Liebhaber et al., 2000; Matthews et al., 2009). By being aware of these heuristics, we can design displays and formulate training to help operators make better classification decisions. For instance, if we know which types of evidence operators are focussing on, we can predict the types of errors they will make: that is, which types of entities will be misclassified given the internal representation of the category structure they have constructed. Thus, we can go some way towards alleviating errors that might arise from suboptimal, but efficient, strategies. Also, the laboratory evidence on changes in category structure over time suggests that operators might fail to notice changes and thus may be overconfident in their judgements. However, this is an extremely tentative conclusion that needs to be investigated in future research.

Transferring insights from the general categorisation literature to maritime operators requires an assumption that the laboratory experiments reported in the literature generalise to the real-world maritime situation. There are, however, key differences between these situations. Generally, laboratory studies of categorisation are substantially more restricted, because they must allow researchers to isolate experimental effects from participants’ prior knowledge. In contrast, stimuli outside the lab are far more complex, with many more dimensions. Further, the dimensions of ‘real-life’ stimuli are likely to be correlated (for instance, as size goes up, so does weight), whereas most experimenter-constructed categories deliberately avoid interactions between dimensions (Rehder & Murphy, 2003).

There are other differences between a typical category learning study (Kurtz, 2015) and ‘real-world’ classification. Operators’ estimation of a craft’s status may be influenced by the status of other surrounding craft and other features of the environment that are subject to change. Additionally, the dimensions operators are using to classify entities may be unreliable and subject to changing levels of uncertainty. Finally, the data from the AIS signal must be carefully scrutinised for errors or logical inconsistencies and the reason for mismatches ascertained (Riveiro et al., 2018).

Therefore, conducting category learning studies with more complex stimuli and category structures is extremely important to check that the results generalise to military (and other high stakes) contexts. Moreover, devising tasks that incorporate dynamic uncertainty can provide useful insights into the ways in which people detect (or not) discrepancies in changing information, and the reliability of the classifications resulting from it.

Communication

In our scenario, once the incoming data has been classified, it must be communicated to those who use it to make tactical decisions. Maritime military decision-making is distributed across individuals and teams (Song & Kleinman, 1994; see Figure 1): information must be passed from operators near the bottom of the hierarchy to commanders near the top. An added difficulty is that this information may still carry some uncertainty. For instance, operators may be only 80% sure that a craft is friendly, or there may be some uncertainty surrounding the craft’s position or direction. Failing to acknowledge and appropriately incorporate uncertainty can lead decision makers to misjudge which options are least likely to result in negative outcomes (Kahneman, 2011; Pizer, 1999; Sunstein, 2002, 2003). It is therefore important for commanders to consider any uncertainty associated with the information they use to make tactical decisions. In other words, successful maritime military decision-making should incorporate uncertainty explicitly and clearly. The question is, how is uncertainty best communicated?
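A small worked example shows why collapsing an 80% judgement into a categorical label can mislead. Suppose, purely hypothetically, that two actions are available and that the cost of each depends on whether the craft is actually friendly or hostile; the action names and cost figures below are invented for illustration. Treating the craft as certainly friendly favours one action, whereas weighting each outcome by its probability favours the other.

```python
from typing import Dict

# Hypothetical costs of each action under each true state of the craft.
COSTS: Dict[str, Dict[str, float]] = {
    "monitor":   {"friendly": 0.0,  "hostile": 100.0},
    "intercept": {"friendly": 10.0, "hostile": 20.0},
}


def expected_cost(action: str, p_hostile: float) -> float:
    # Weight each outcome's cost by its probability.
    c = COSTS[action]
    return (1 - p_hostile) * c["friendly"] + p_hostile * c["hostile"]


def best_action(p_hostile: float) -> str:
    # Choose the action with the lowest expected cost.
    return min(COSTS, key=lambda a: expected_cost(a, p_hostile))


print(best_action(0.0))  # monitor   (craft treated as certainly friendly)
print(best_action(0.2))  # intercept (80% sure it is friendly)
```

The 20% residual chance of hostility is enough to reverse the choice here, which is only visible if the uncertainty travels up the hierarchy with the classification.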

What are the Best Ways of Representing and Communicating Uncertainty?

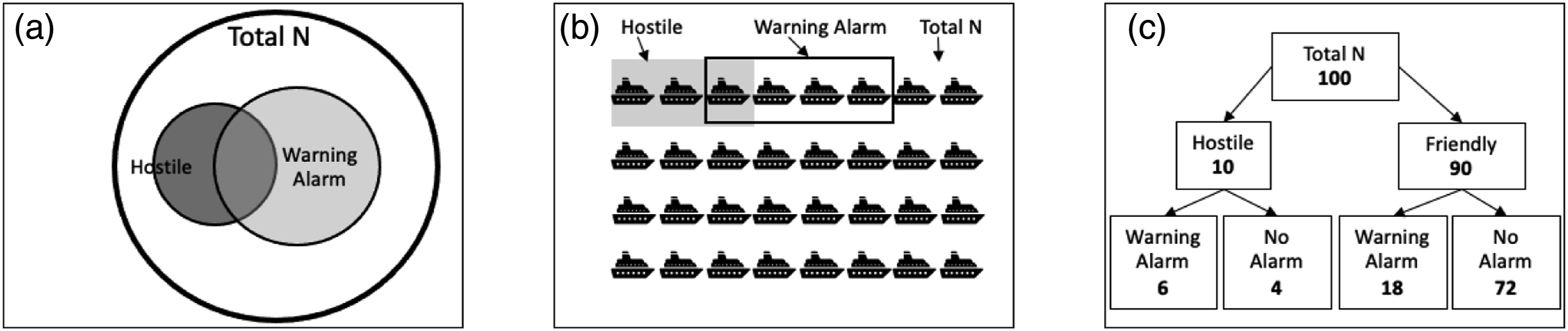

The literature suggests three approaches to communicating uncertainty: verbal probability expressions (VPEs), numerical uncertainty statements, and visualisations. VPEs add a verbal qualifier to a factual statement to indicate uncertainty (Budescu & Wallsten, 1995). Numerical uncertainty statements go a step further and quantify the uncertainty (Joslyn et al., 2011). The simplest numerical statements simply add an explicit, numerical estimate of the likelihood of something, whereas more complex numerical statements might add range information around a numerical estimate. Finally, uncertainty visualisations represent uncertainty visually using symbols or graphics rather than words or numbers (McDowell & Jacobs, 2017). Common visualisations of uncertainty include Euler diagrams, frequency grids, glyphs (discrete objects), hybrids of Euler circles and glyphs, and tree diagrams (see Figure 2 for examples). Examples of uncertainty communications showing the probability of potentially overlapping states in a military context. Here, the probability that a vessel is hostile (as opposed to Friendly), and the presence/absence of an automatic (but not perfect) warning alarm shown as (a) Euler diagram, (b) frequency grid and (c) tree diagram.
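The frequency grid and tree diagram in Figure 2 both encode the same underlying computation. As a worked sketch with invented numbers (assumed for illustration, not the values shown in the figure), suppose 10% of contacts are hostile, the alarm sounds for 90% of hostile contacts and for 20% of friendly ones. Counting cases in a notional population of 100 contacts, as a frequency grid does, gives the probability that a contact is hostile given that the alarm has sounded.

```python
from fractions import Fraction
from typing import Dict, Tuple


def alarm_tree(base_rate: Fraction, hit_rate: Fraction, false_alarm_rate: Fraction,
               population: int = 100) -> Dict[Tuple[str, str], Fraction]:
    """Expected counts at each leaf of a tree diagram over `population` contacts."""
    hostile = base_rate * population
    friendly = population - hostile
    return {
        ("hostile", "alarm"): hostile * hit_rate,
        ("hostile", "no alarm"): hostile * (1 - hit_rate),
        ("friendly", "alarm"): friendly * false_alarm_rate,        # false alarms
        ("friendly", "no alarm"): friendly * (1 - false_alarm_rate),
    }


counts = alarm_tree(Fraction(1, 10), Fraction(9, 10), Fraction(2, 10))
alarm_total = counts[("hostile", "alarm")] + counts[("friendly", "alarm")]
p_hostile_given_alarm = counts[("hostile", "alarm")] / alarm_total
print(counts[("hostile", "alarm")], counts[("friendly", "alarm")])  # 9 18
print(p_hostile_given_alarm)                                        # 1/3
```

With these assumed rates, only one alarm in three comes from a hostile contact, despite the alarm detecting 90% of hostile contacts; frequency formats make the 9-versus-18 comparison directly visible.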

These approaches all have their strengths and weaknesses. For instance, VPEs are simple and flexible but also prone to misinterpretation (e.g. Budescu & Wallsten, 1995; Dhami & Mandel, 2020; Theil, 2002). Adding numbers tends to increase precision of understanding but may rely on the communicators being proficient in mathematical reasoning (Dieckmann et al., 2012). Visualisations can help, and are often spontaneously generated by both civilians and military personnel (King, 2006; B. Tversky et al., 2011; Zahner & Corter, 2010), but the precise format matters: some visualisations have been found to aid reasoning, whilst others do not (Zahner & Corter, 2010). The general principle that appears to underlie these disparate results is that optimal decision-making is supported by formats that make uncertainty information easiest to use. Because ‘easiest’ is highly subjective, the optimum communication format depends heavily on the attributes of the context, task and communicators. The remainder of this section focuses on visualisation, which is a common mode of representing uncertainty in military contexts (Chung & Wark, 2016).

Effectiveness of Visually Communicating Uncertainty

The task in the military scenario we have described relies on operators communicating information to commanders, so that the latter remain apprised of the spatio-temporal context on which tactical decisions are based (Carvalho et al., 2011; Hammond, 2006; John et al., 2000). Practically, commanders need to quickly understand what an entity is (status), where it is (position) and where it is going (direction), as well as any uncertainty associated with these dimensions. The research below indicates that the uncertainty communicated by operators needs to be represented in a manner congruent with the dimension it is associated with.

Firstly, consider the uncertainty associated with entity status. This is an inherent property of the entity, and thus, the literature suggests that its uncertainty is best represented intrinsically, where features of the extant display are manipulated, such as changing the colour or shape (Kinkeldey et al., 2014, 2017). In contrast, extrinsic representations of uncertainty add new items to a data display. Finger and Bisantz (2002) found that participants were more efficient when only using icons that were degraded to a greater or lesser degree (representing uncertainty) rather than using them alongside numerical probability estimates, an additional extrinsic element. Kolbeinsson et al. (2015) and Bisantz et al. (2005) also found superior performance with representations that used intrinsic representations of uncertainty for status information.

Secondly, consider the assessment of the entity’s position. Andre and Cutler (1998) showed that spatial uncertainty was communicated most reliably when it was represented spatially (i.e. matching the task at hand). In one of their tasks, participants navigated a spacecraft to a goal, whilst avoiding a meteor. The position of the meteor was noisy, and the authors explored representing this uncertainty in various formats. They found a spatial region of uncertainty around the target (an additional ring) was preferable to other representations (colour or text).
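A spatial region of uncertainty such as the ring described above needs a radius. One common choice, assuming the position error is a circular (isotropic) bivariate normal with standard deviation σ on each axis, is the radius that contains a chosen proportion p of the probability mass. Because the radial error then follows a Rayleigh distribution, P(R ≤ r) = 1 − exp(−r²/2σ²), which gives r = σ·√(−2 ln(1 − p)). We do not know how the rings in the cited studies were sized; this is one illustrative option, and the σ of 100 m is an invented value.

```python
import math


def uncertainty_ring_radius(sigma: float, coverage: float = 0.95) -> float:
    """Radius of the circle containing `coverage` of an isotropic 2-D normal error.

    Assumes equal, independent normal errors on both axes with standard deviation `sigma`.
    """
    if sigma < 0 or not 0 < coverage < 1:
        raise ValueError("sigma must be non-negative and coverage in (0, 1)")
    # Invert the Rayleigh CDF: P(R <= r) = 1 - exp(-r**2 / (2 * sigma**2)).
    return sigma * math.sqrt(-2.0 * math.log(1.0 - coverage))


# Hypothetical contact with 100 m position error on each axis.
print(round(uncertainty_ring_radius(100.0, 0.95), 1))  # 244.8
```

Note that a ring drawn at one σ would contain only about 39% of the probability mass in two dimensions, so the coverage level matters for how the ring is read.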

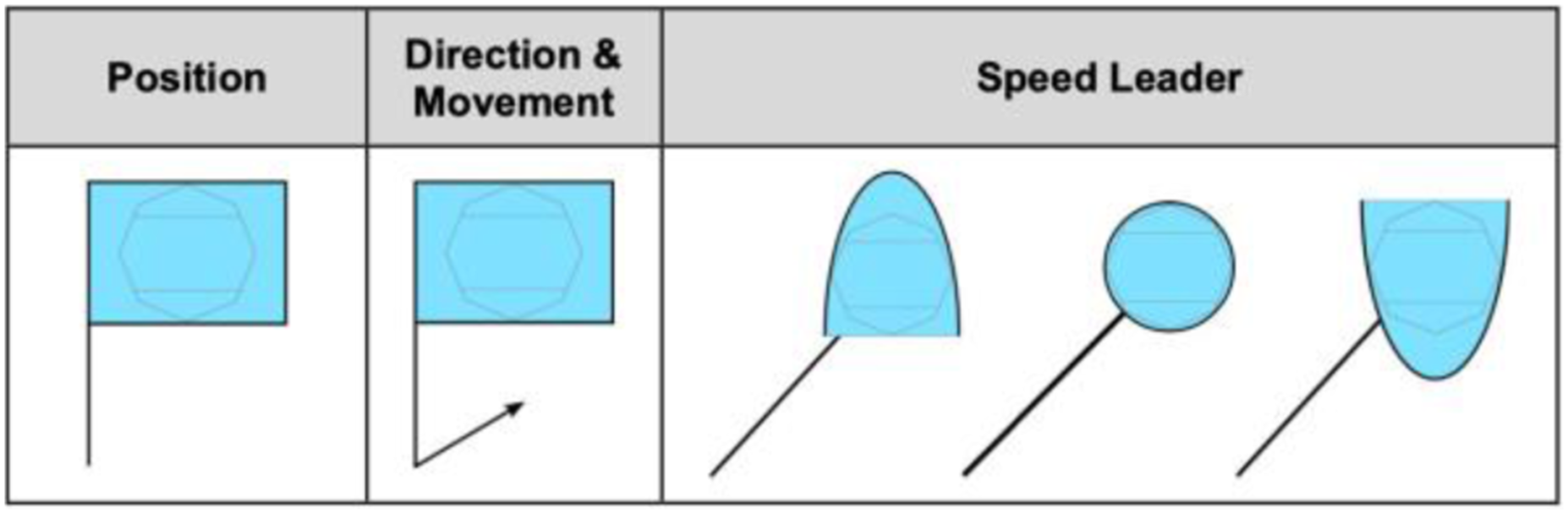

Thirdly, for the assessment of the entity’s trajectory, again visualisations that matched the task enhanced communication of the uncertainty associated with the judgement. Andre and Cutler (1998) asked participants to control a gun turret and shoot down hostile, whilst avoiding friendly, entities. Here, uncertainty was associated with the direction of the craft. Participants who saw visual representations of this uncertainty were not as negatively affected when the uncertainty level was high compared to a version where the uncertainty was displayed numerically. Along the same lines, John et al. (2000) found that directional information was superior to text information when trying to predict where a unit would be in the future.

Thus, this literature suggests that optimal uncertainty communication in this context might be achieved by combining these representations of position, direction, and status. Indeed, this is frequently done in military contexts (see Figure 3 ‘NATO Joint Military Symbology’, 2017). Example of a symbol that incorporates position, speed and direction in a single icon. Taken from ‘NATO Joint Military Symbology’ (2017). The direction of the arrow represents direction, and the length represents speed.
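The speed-and-direction arrow in a symbol like the one in Figure 3 amounts to a simple vector calculation. The sketch assumes a heading in degrees measured clockwise from north (the usual nautical convention) and a plotting frame with x increasing to the east and y to the north; many screen coordinate systems put y downwards, which would flip the sign of the y term. The `pixels_per_knot` scale factor is a hypothetical display parameter, not a value from the NATO standard.

```python
import math
from typing import Tuple


def arrow_tip(x: float, y: float, heading_deg: float, speed_knots: float,
              pixels_per_knot: float = 2.0) -> Tuple[float, float]:
    """End point of a direction arrow drawn from (x, y), with length proportional to speed.

    Heading is clockwise from north; x increases to the east and y to the north.
    """
    length = speed_knots * pixels_per_knot
    theta = math.radians(heading_deg)
    # North-referenced heading: east component uses sin, north component uses cos.
    return (x + length * math.sin(theta), y + length * math.cos(theta))


tip = arrow_tip(0.0, 0.0, 90.0, 20.0)     # due east at 20 knots
print(tuple(round(v, 6) for v in tip))    # (40.0, 0.0)
```

Encoding speed as arrow length lets a single glyph carry position, direction and speed; directional uncertainty could, in principle, be shown by widening the arrow into a wedge, though the studies above do not specify that design.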

Cognitive Abilities and Prior Experience

Visualisation formats vary in how much they tax cognitive abilities (Anderson et al., 2011). Bisantz et al. (2011) used a missile defence game where participants had to select and destroy missiles heading towards a city whilst not harming birds or planes. They found that adding numerical uncertainty information to the visualisations did not improve performance. Finger and Bisantz (2002) found similar results in a task where participants had to classify moving entities as either friendly or hostile (see also Bisantz et al., 2005). Others have found that including numbers can reduce the speed (Andre & Cutler, 1998; Finger & Bisantz, 2002; Hope & Hunter, 2007) and accuracy (Andre & Cutler, 1998) of decision-making with uncertainty visualisations (for a review see Kinkeldey et al., 2017).

Some of the practical benefits of visualisations can be directly tied to the fact that they reduce cognitive load. Hegarty and Steinhoff (1997) found that participants with lower visual working memory capacity were helped more by diagrams when solving a mechanical reasoning problem than those with higher capacity. This suggests that diagrams might act as an external or distributed memory. Additionally, visualisations, unlike verbal auditory communications, allow for asynchronous communication. As mentioned above, maritime military decision makers are often not in the same place as those gathering and classifying the information needed for a decision. Thus, to obtain the required information, decision makers monitor both an auditory communication channel and visual displays. Performing a visual task can result in inattentional deafness, where people fail to hear auditory stimuli because of high visual perceptual load (Molloy et al., 2015). Decision makers may therefore miss key pieces of information from one or other of these sources, with potentially serious consequences. However, visualisations are easier to understand and change more slowly than auditory messages, so decision makers can go back and double-check information they might have missed.

Thus, this literature suggests that by trying to include all the available uncertainty information simultaneously, we might increase the cognitive difficulty of the task beyond the point where communicating uncertainty is useful. However, people may be able to compensate for this limitation by using the representation as an external memory store. Indeed, this might explain why the benefits of a particular communication format are moderated by prior experience. Kirschenbaum et al. (2014) studied a submarine task in which participants were asked to manoeuvre a submarine to torpedo another craft. Again, participants performed better when positional uncertainty was represented spatially by a ring, rather than numerically in an associated table. However, in this experiment the benefit was limited to novices. This contrasts with earlier evidence that experienced naval commanders also performed better when visual uncertainty information was included (Kirschenbaum & Arruda, 1994). One explanation for this apparent discrepancy is that the failure of spatial visualisations to improve experts' performance in Kirschenbaum et al. (2014) may be due to a ceiling effect: the experts found the task easy, even with sub-optimal display formats.

Interpretation Errors and Biases

Although the evidence consistently favours visualisations as the medium for communication in our scenario, the specific format of those visualisations still needs to be carefully considered. This is because no single pictorial representation of uncertainty has been shown in the literature to have consistently marked facilitative effects on decision-making performance (Binder et al., 2015; Böcherer-Linder & Eichler, 2017; Micallef et al., 2012; Spiegelhalter et al., 2011). For instance, icons (or glyphs) have been shown to be generally effective in some studies (Zikmund-Fisher et al., 2014) but not others (Sirota et al., 2014). Nor is there clear evidence that Euler diagrams reliably produce superior decision-making (Micallef et al., 2012; Sirota et al., 2015; Sloman et al., 2003). Similarly, roulette wheels show only marginal improvements in some studies (Brase, 2014; Starns et al., 2019), whereas others show significantly superior performance compared to tree diagrams and text-only representations (Yamagishi, 2003). Still others have shown that tree diagrams are highly effective compared to other visual aids and text-only information (Binder et al., 2015; Friederichs et al., 2014). This underlines the need to consider the requirements of the specific context when designing an appropriate visualisation.

The existence of cognitive biases is an important issue to consider when selecting a visualisation format. Here we consider three biases. Deterministic construal error occurs when someone misinterprets an uncertainty communication as a deterministic one (for alternatives see Gigerenzer et al., 2005; S. Joslyn & Savelli, 2010; Juanchich & Sirota, 2016; Morss et al., 2008, 2010; A. H. Murphy et al., 1980; Sink, 1995). The closely related containment error occurs when people perceive uncertain, fuzzy boundaries as representing fixed, deterministic points (Brown, 2004; Lundström et al., 2007; MacEachren et al., 2005; Ruginski et al., 2016). Finally, anchoring might guide the qualitative nature of such a construal (e.g. Broad et al., 2007; Oppenheimer et al., 2008; Tversky & Kahneman, 1973; Turner & Schley, 2016). Thus, the most effective visualisations will be those that minimise the likelihood of these errors.

Correcting biases. Identifying such biases can aid the design of visualisations so that users are guided towards correct inferences and away from incorrect ones (see also Card et al., 1999; Padilla, 2018). Some ways of doing this have begun to be explored. The basic approach is to use features of attentional processes to design visualisations for which questions about the underlying data can be answered more easily, more accurately or faster (Jänicke & Chen, 2010). In other words, the visual representation should be designed to match the demands of the task (Vessey, 1991; 1994; 2006; Vessey & Galletta, 1991).

Human visual attention can be broadly divided into two processes: one bottom-up and stimulus-driven, the other top-down and goal-driven (Corbetta & Shulman, 2002; Petersen & Posner, 2012). Bottom-up attention is driven by external stimuli (Padilla, 2018) and is typically characterised as automatic, unconscious and physiologically based (Connor et al., 2004). Visualisations can leverage bottom-up attention by manipulating the salience of visualisation components (Padilla, 2018). Visual salience is the degree to which an item stands out from those nearby (Jänicke & Chen, 2010). Such items might ‘pop out’ because of differences in colour, shape, orientation, size or movement (Fabrikant et al., 2010; Haroz & Whitney, 2012).

However, bottom-up attention is often modulated by top-down processes. Top-down attention involves deliberately searching for features in relation to a goal and is thought to be slow, conscious and effortful (Connor et al., 2004). Haider and Frensch (1999) found that task-redundant information tends to be ignored at the perceptual rather than the conceptual level. Visualisations that do not comply with existing schemas are generally less effective (Padilla, 2018), whereas those that respect commonly inferred meanings are more effective (Norman, 1988; B. Tversky et al., 2011). For example, when designing a coloured ‘danger’ scale in the UK, it would be misleading to provide a key in which green represented a dangerous location and red the safest location, since this runs contrary to people’s common expectations (Wogalter et al., 2002). However, the opposite would be true in China, where red is more likely to have positive connotations (e.g. He, 2009; Yu, 2014).

The interaction of top-down and bottom-up processes can also improve how people interpret and use uncertainty information. Bisantz et al. (2009) tested the degree to which people agreed (with no instruction) on the mapping of different levels of saturation, brightness and transparency to uncertainty. They found good agreement between individuals that greater brightness, saturation and opaqueness (i.e. more ‘intense’ colouring) was typically associated with more certain information. However, this result was found to be driven predominantly by the degree of contrast between the target object and the background, and to be qualified by the requirements of the task. Objects with greater contrast against the background were perceived as more relevant to the task at hand. That is, if the task was to identify uncertain data points, greater contrast (and therefore visual salience) was seen as representing greater uncertainty. This highlights the importance of considering the key requirements of the decision-maker who will be using the visualisation (Doyle et al., 2019; Griethe, 2006; Hegarty et al., 2016; Kinkeldey et al., 2014; 2017; Loucks, 2003; Pang et al., 1997), so that the most task-relevant information can be highlighted within an uncertainty visualisation.
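To make this task-dependence concrete, the sketch below shows one way a display could map an uncertainty value onto opacity. It is a hypothetical illustration, not code from Bisantz et al. (2009): the function name `uncertainty_to_alpha`, the [0, 1] uncertainty scale and the 0.2 to 1.0 opacity range are all assumptions. By default it follows the convention reported above (more intense means more certain) and inverts the mapping when the task is to find uncertain items, so that whatever the task makes relevant is also the most salient.

```python
def uncertainty_to_alpha(uncertainty: float, task_targets_uncertain: bool = False,
                         min_alpha: float = 0.2, max_alpha: float = 1.0) -> float:
    """Map an uncertainty score in [0, 1] to a display opacity (hypothetical helper).

    Default: certain items are drawn more opaque (more 'intense').
    If the task is to find uncertain items, the mapping is inverted so that
    the items the user is looking for are the most salient.
    """
    if not 0.0 <= uncertainty <= 1.0:
        raise ValueError("uncertainty must lie in [0, 1]")
    certainty = 1.0 - uncertainty
    # Salience tracks whatever the current task makes relevant.
    relevance = uncertainty if task_targets_uncertain else certainty
    return min_alpha + (max_alpha - min_alpha) * relevance


# A well-identified contact (0.1) versus a poorly identified one (0.9), under each task.
for u in (0.1, 0.9):
    print(u, uncertainty_to_alpha(u), uncertainty_to_alpha(u, task_targets_uncertain=True))
```

Exposing the mapping as a single task-dependent switch, rather than fixing it globally, reflects the finding that the same visual channel can be read differently depending on what the decision-maker is trying to do.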

Communication: Recommendations, Limitations and Future Work

Is the communication literature useful in providing insights to help recommend how to represent uncertainty to military personnel? There are important insights from the vast literature on communication of uncertainty that can be applied to the military domain. The literature on effective communication suggests that the optimum format is one that best matches the information to be communicated. Thus, visualisations are likely to be superior for communicating the status, position and direction of surrounding entities to decision makers. In this maritime military context, visualisations are less likely to be misunderstood and additionally allow for asynchronous communication, thereby reducing the cognitive load associated with understanding incoming information.

Do laboratory experiments examining communication of uncertainty generalise to military contexts? The literature allows us to infer some general principles to be considered when designing displays for use in military contexts. However, the literature also suggests that it is very hard to predict exactly which factors are most important in a particular scenario involving a variety of personnel with different backgrounds. So, selecting the precise format these visualisations should take requires some finesse and further experimental study. Interpretation of visualisations can still be subject to cognitive biases, whether general pitfalls or those arising from the particular abilities or backgrounds of the individuals involved. One approach to minimising misunderstanding is to take advantage of attentional processes. Visualisation designs need to ensure that both top-down and bottom-up attentional processes support optimal performance in tasks. This means that the information that is conceptually most important to the task should be the most salient (Vessey, 1991; 1994; 2006; Vessey & Galletta, 1991). This principle has been shown to be effective by Hegarty et al. (2010): when trying to predict the weather, participants were better able to use the task-relevant pressure information on a map when that information, rather than the task-irrelevant temperature information, was highlighted with colour. When these attentional processes are aligned, people do not have to work to inhibit irrelevant information.

Making Decisions

So far in our scenario, the operators have determined the statuses of surrounding entities and communicated this information, along with the associated uncertainty, to a key decision-maker (e.g. the Captain or their delegated Commander). In the final stage, the decision-maker must now decide what action to take (Szeligowski, 2018). They might decide to change course, engage an enemy craft, communicate with other vessels or do nothing (Lipshitz & Strauss, 1997). In the following, we review the literature relevant to making these types of dynamic decisions.

Dynamic Decision-Making

This scenario has much in common with tasks in the so-called dynamic decision-making literature (for reviews see Holt & Osman, 2017; Osman, 2010, 2011). Studies in this area investigate how decision makers cope with an environment in which events and their probabilities change over time. Dynamic decision-making is also iterative: it requires sequential decisions, where each decision generates an outcome that requires a further decision to be made (making the decisions interdependent over time). The outcomes of the decisions change over time, their effects are cumulative, and the changes experienced can result directly from the decisions made as well as independently of them (i.e. from endogenous properties of the system; Brehmer, 1992; Dörner & Schaub, 1994; Holt & Osman, 2017; Osman, 2010). Several features of the task therefore have implications for the effectiveness of the decisions made: whether the changes experienced are stable or unstable (Osman, 2011), whether the outcomes of decisions are experienced in real time or only periodically (frequent vs. intermittent outcome feedback; Osman et al., 2017), whether the goals of the decision process are highly specific or general (Osman, 2008a), and whether the outcomes of the decisions also include additional information (augmented positive vs. negative feedback, financial costs and benefits; Osman, 2012b).
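The defining features listed above (sequential, interdependent decisions whose effects accumulate, in a system that also changes on its own) can be illustrated with a toy simulation. The sketch below is hypothetical: the single input and output, the linear update rule, the helper names and the parameter values (`gain`, `drift`, `noise_sd`) are assumptions chosen for clarity, not the design of any study cited here.

```python
import random
from typing import Callable, List, Tuple


def run_dynamic_task(policy: Callable[[float], float], n_trials: int,
                     gain: float = 2.0, drift: float = 0.5,
                     noise_sd: float = 1.0, seed: int = 0) -> List[Tuple[float, float]]:
    """Simulate a toy one-input, one-output dynamic system (hypothetical parameters).

    On each trial the policy sees the current outcome and returns a decision.
    The new outcome keeps the previous one (effects are cumulative), shifts by
    gain * decision (the decision's effect) and also changes by itself through a
    constant drift plus Gaussian noise (endogenous change).
    Returns a list of (decision, outcome) pairs, one per trial.
    """
    rng = random.Random(seed)
    outcome = 0.0
    history: List[Tuple[float, float]] = []
    for _ in range(n_trials):
        decision = policy(outcome)  # later decisions depend on earlier outcomes
        outcome = outcome + gain * decision + drift + rng.gauss(0.0, noise_sd)
        history.append((decision, outcome))
    return history


def steer_to(goal: float, gain: float = 2.0, drift: float = 0.5) -> Callable[[float], float]:
    """Hypothetical goal-directed policy that (unrealistically) knows gain and drift."""
    return lambda y: (goal - y - drift) / gain


def do_nothing(y: float) -> float:
    """Baseline policy: never intervene."""
    return 0.0


if __name__ == "__main__":
    for name, policy in (("steer", steer_to(10.0)), ("do nothing", do_nothing)):
        outcomes = [round(o, 2) for _, o in run_dynamic_task(policy, 5)]
        print(name, outcomes)
```

With `noise_sd=0.0`, the do-nothing policy still sees the outcome climb by 0.5 per trial, illustrating change that is independent of the decisions made, while the steering policy holds the outcome at the goal only because it is handed the true parameters. With noise switched on, each decision has to respond to the state left behind by earlier decisions and by chance.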

Here, we discuss the literature on three key factors that affect decision-making in dynamic contexts. First, we examine how the goals of the task influence decision-making. Second, we consider the uncertainty inherent in the task. Finally, we discuss the role of feedback in dynamic tasks.

Goals

Research in both dynamic and military decision-making has shown that goals can have a substantial impact on the way people make decisions. Generally, studies have shown that in highly uncertain dynamic contexts, specifying a precise, static goal, such as controlling an outcome to precise criteria (for instance, aiming for a chemical level of 5), leads to good decision-making performance in achieving that goal. However, when the decision-maker is required to adapt their decision-making to new goals in the same context, their performance suffers. In contrast, when the goals are broader to start with, the decision-maker is better able to sample relevant information from the decision context and to learn plans of action for a variety of goals they might face in the future (Burns & Vollmeyer, 2002; Locke & Latham, 2006; Osman, 2008a; 2008b; 2008c; 2012a; Vollmeyer et al., 1996). Moreover, when the costs of failing to reach the goal (e.g. financial penalties) are high, decisions can become highly erroneous over time, regardless of whether the dynamic changes to the outcome are stable or unstable. This is because the decision-maker becomes overly concerned with minimising negative consequences, which leads to sub-optimal sampling of information and poorer decision-making over time (Kerstholt, 1996; Kerstholt & Raaijmakers, 1997).

These findings are echoed in the military literature. Indeed, goals can substantially influence situational awareness (van Westrenen & Praetorius, 2014). The military distinguishes between strategic, tactical and control decisions, each of which focuses on different goals. Strategic decisions concern selecting the means to achieve a goal (e.g. ordering tugs), tactical decisions aim to deploy those means to achieve affordances (e.g. positioning the tugs or overtaking), and control decisions concern selecting the means to achieve a desired state (e.g. realising a speed and direction). Thus, the information a decision-maker needs to be aware of depends critically on the type of decision they are making.

However, unlike the scenarios studied in existing psychological research, military scenarios often involve many competing goals at different levels. For instance, the commander has typically been briefed on the overarching goals of the mission as well as on more specific goals for which their vessel is responsible. In addition, they are responsible for the smooth running of the ship and for other considerations such as limiting running costs. Thus, the psychological literature still has some way to go before it can fully explain how competing goals with varying stakes attached may affect decision-making in such a complex environment.

Uncertainty

Making good decisions in our dynamic decision-making scenario also requires dealing appropriately with uncertainty. Riveiro et al. (2014) examined dynamic decision-making in an air defence task. In this task, participants had to protect a radar station whilst monitoring a map of the region to detect possible aerial threats. In practice, the decision makers had to identify and prioritise targets of interest to be communicated to a higher authority, which would determine the appropriate countermeasures. Uncertainty information helped participants make a final judgement more swiftly, although it had no effect on decision accuracy. In other words, in this task, uncertainty information appeared to make participants more confident in their decisions.

However, in other military tasks, uncertainty appears to interact with expertise in unpredictable ways. Kobus et al. (2001) examined experts and non-experts developing a battle plan in a dynamic tactical scenario. They found that the level of uncertainty interacted with experience in predicting the time taken to gain situational awareness and to execute a plan. In both the high and low uncertainty conditions, experts took more time than non-experts to gain situational awareness. However, in the high uncertainty condition, experts were then much faster to execute their plan, whereas the level of experience made no difference when uncertainty was minimal. John et al. (2000) also found that increasing uncertainty influenced tactical decisions. However, in contrast to Kobus et al. (2001), they found this only for less experienced Marines in Combat Operation Centres: the less experienced officers tended to adopt a ‘wait and see’ approach, showing greater caution.

Part of the reason for these disparate results may lie in how uncertainty is communicated to (and thus understood by) participants. These studies examine uncertainty using different tasks and thus present the information in differing ways. This matters because many studies have found that the more salient the uncertainties around data are, the lower the confidence in decisions and the greater the likelihood of more conservative decisions (Finger & Bisantz, 2002; Riveiro et al., 2014; Svenson et al., 2010). Therefore, the differences between these studies could be driven by an interaction between the salience of information and expertise. Experts are, after all, more experienced with the real task; however, they may have no particular experience with the way an abstract research task presents information to them.

Dynamic tasks can also change their contingencies over time, and there is preliminary evidence that participants are sensitive to this. When fluctuations in the outcome are moderately stable, decision makers tend to make small, conservative changes to the system (Osman & Speekenbrink, 2011). In contrast, when experiencing large, noisy fluctuations in the outcome over time, decision makers often make multiple, dramatic changes simultaneously. It is important to note that, in these experiments, decision makers learn to make more effective decisions over time through extensive repeated exposure to the task environment.

In sum, the effect of uncertainty on performance varies with the attributes of the participants and the method by which uncertainty is communicated. These factors need to be explored in much greater depth before we can determine how best to facilitate optimum performance.

Feedback

Optimal decision-making requires decision makers to understand which options lead to which outcomes with what probabilities, and to select the option that brings them closest to their goal. Receiving feedback is an important part of learning these relationships. Several studies have found that outcome feedback (i.e. simply finding out the actual effect on the outcome of a decision taken) leads to better performance than either positive or negative feedback (i.e. value judgements attached to the outcomes; Osman, 2012a; Osman et al., 2017). Positive or negative feedback has similar effects to making decision makers aware of the high stakes attached to their decisions: they become more focused on achieving positive feedback or avoiding negative feedback than on identifying which information is relevant to achieving a desirable outcome over time. This aligns with a substantial body of literature on the role of feedback in many other decision-making contexts (for a review, see Kluger & DeNisi, 1996). This work suggests that, unless the decision-making task is simple, feedback beyond outcome feedback will at best add little benefit to decision-making performance and at worst impair it.

The probabilistic nature of dynamic decision-making tasks adds a further hurdle to interpreting feedback. Feedback on a single trial is not particularly diagnostic because of the large role chance may play in achieving an outcome. Rather, what matters most is the success of decisions over time. One way of adapting feedback to prevent overweighting of single-trial outcomes is to give feedback intermittently, that is, to provide feedback (as well as reward information, financial benefits and costs, or social rewards and costs) periodically at set intervals, rather than every time a decision is made. Unexpectedly, however, direct comparisons of intermittent versus consistent feedback show that overall dynamic decision-making performance suffered under intermittent outcome feedback (Osman et al., 2017). This is consistent with other work showing that intermittent feedback is less effective, even with non-probabilistic outcomes (Le Pelley et al., 2019; Smith et al., 2014).
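The difference between consistent and intermittent outcome feedback is, at its simplest, a difference in presentation schedule. The hypothetical helper below makes that explicit: with `every=1` the participant sees each trial's outcome, and with `every=k` they see nothing on most trials and the mean outcome of the last k trials at the end of each block. Summarising a block by its mean is an assumption for illustration; Osman et al. (2017) may have summarised outcomes differently, and the sketch says nothing about how people learn from either schedule.

```python
from statistics import mean
from typing import List, Optional


def feedback_schedule(outcomes: List[float], every: int = 1) -> List[Optional[float]]:
    """Return what a participant would be shown after each trial (hypothetical helper).

    every=1: outcome feedback after every trial (the trial's own outcome).
    every=k>1: intermittent feedback, i.e. nothing (None) on most trials and the
    mean outcome of the preceding k trials at the end of each block of k.
    Trials in an incomplete final block receive no feedback.
    """
    if every < 1:
        raise ValueError("every must be at least 1")
    shown: List[Optional[float]] = []
    for i in range(1, len(outcomes) + 1):
        if i % every == 0:
            shown.append(mean(outcomes[i - every:i]))
        else:
            shown.append(None)
    return shown


if __name__ == "__main__":
    outcomes = [4.0, -2.0, 6.0, 0.0, 3.0]  # noisy single-trial outcomes (made up)
    print(feedback_schedule(outcomes, every=1))
    print(feedback_schedule(outcomes, every=2))
```

Averaging over a block smooths out chance variation, which is the rationale for intermittent feedback; the cost, visible in the output, is that most decisions are followed by no information at all.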

Decision-Making: Recommendations, Limitations and Future Work

Are laboratory studies on dynamic decision-making useful in providing insights to help recommend what strategies to adopt in military contexts? Work examining decision-making in dynamic contexts reveals that, whilst people can learn to manage uncertainty and to determine plans of action that require regular interaction with a constantly changing environment, several factors contribute to less effective decision-making. Directing decision makers to focus on achieving highly specific outcomes, especially in a decision context that is unfamiliar to them, can overly constrain what they learn and lead to less adaptive decision-making in the long run. Feedback has a significant role to play in guiding the way decision makers act in dynamic environments, because these environments often present a high level of uncertainty. Signals about the efficacy of decisions therefore do provide useful guidance on how to proceed, but they can also narrow the focus of attention onto specific features of the decision problem at the expense of other useful information.

Do laboratory experiments examining dynamic decision-making generalise to military contexts? Although the experimental research above suggests that participants can be quite successful at dynamic decision-making tasks, there is one key (and likely obvious) difference between the experimental and military contexts that needs to be addressed: the dynamic decision-making scenarios are far simpler than the decision environments seen in military examples. For instance, in Osman et al. (2017) participants manipulated the proportions of three inputs to control a single output. In contrast, military decision-making is only becoming more complex as the sophistication, complexity, volume and quality of the information on which decisions are based increase (Mishra et al., 2015; Riveiro et al., 2014; Szeligowski, 2018; van Westrenen & Praetorius, 2014). This difference in complexity has implications for interpreting the results. For example, within a simplified laboratory set-up it is reasonably simple to determine whether a particular decision was optimal. Outside the lab, it is much more difficult.

One approach to judging whether a decision was optimal is to look at the strategy that people use to reach it. Military decision makers are trained to weigh the pros and cons of each possible option and to select the alternative that leads to the best outcome (Kobus et al., 2001; Lipshitz & Strauss, 1997). However, the evidence suggests that military decisions in the field are often not optimal in this sense (Kobus et al., 2001; Riveiro et al., 2014). In a qualitative study exploring military decision-making, Kaempf et al. (1993) reported that 95% of operators used sub-optimal strategies based on matching the situation to past experiences. However, it is difficult to tell whether this is an appropriate way of dealing with uncertainty. After all, decision makers are limited: by gaps in knowledge, by computational capacity (e.g. Frühling, 2014; van Westrenen & Praetorius, 2014; Yang et al., 2009), and by motivational factors, such as time pressure (e.g. Frühling, 2014) and competing goals (Hammond, 2006; T. Murphy, 2010; van Westrenen & Praetorius, 2014). Further, given the dynamic nature of the environment, a sub-optimal strategy or heuristic that provides a quick answer may be better than carefully weighing the evidence, since the scenario may change in the meantime. This is especially so because decisions in dynamic scenarios are interdependent: if the ship goes down because you failed to take prompt action, there will be no opportunity to fix the mistake.

One possible way for future research to judge the efficacy of decisions, and thereby improve training and understanding, is to use causal models. We know from the empirical literature that causal representations are essential in developing plans of action in learning, decision-making and reasoning (Bramley et al., 2017; Meder et al., 2014; Sloman & Lagnado, 2015). By mathematically modelling the causal relations of a problem, we can better determine the optimal solution. This may be especially important given the host of biases and heuristics found even when decision-making is studied in static environments (Kahneman, 2011). In addition, fleshing out causal models has been shown to reduce bias (Krynski & Tenenbaum, 2007).
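As a minimal illustration of what mathematically modelling the causal relations of a problem could involve, the sketch below uses a two-node causal model in which a contact's hidden status (hostile or not) causes an observable sensor cue. Bayes' rule gives the probability that the contact is hostile given the cue, and the action with the highest expected utility is then selected. All function names, probabilities and utilities are hypothetical placeholders rather than values from the literature, and real military problems would involve far larger models.

```python
from typing import Dict


def posterior_hostile(prior: float, p_cue_if_hostile: float,
                      p_cue_if_friendly: float, cue_observed: bool) -> float:
    """P(hostile | cue) for the causal model Hostile -> Cue, via Bayes' rule."""
    if cue_observed:
        like_hostile, like_friendly = p_cue_if_hostile, p_cue_if_friendly
    else:
        like_hostile, like_friendly = 1 - p_cue_if_hostile, 1 - p_cue_if_friendly
    joint_hostile = prior * like_hostile
    joint_friendly = (1 - prior) * like_friendly
    return joint_hostile / (joint_hostile + joint_friendly)


def best_action(p_hostile: float, utilities: Dict[str, Dict[bool, float]]) -> str:
    """Return the action with the highest expected utility.

    utilities[action][True] is the payoff if the contact is hostile,
    utilities[action][False] the payoff if it is not.
    """
    def expected_utility(action: str) -> float:
        u = utilities[action]
        return p_hostile * u[True] + (1 - p_hostile) * u[False]
    return max(utilities, key=expected_utility)


# Hypothetical payoffs: engaging a friendly contact is very costly.
UTILITIES = {
    "engage": {True: 10.0, False: -50.0},
    "monitor": {True: -20.0, False: 0.0},
}

if __name__ == "__main__":
    p = posterior_hostile(prior=0.1, p_cue_if_hostile=0.8,
                          p_cue_if_friendly=0.2, cue_observed=True)
    print(round(p, 3), best_action(p, UTILITIES))
```

With these made-up numbers, a single positive cue raises the probability of hostility from 0.1 to about 0.31, which is not enough to make engaging the better option; making the causal structure and payoffs explicit in this way is what allows a given decision to be compared against an optimum.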

We could also use causal models as a decision-support tool. Much recent work in military decision-making discusses decision-support tools. However, much of this work focuses on uncertainty visualisation, that is, on presenting uncertainty information so that it can be used in decision-making (e.g. Bisantz et al., 1999), rather than on tools that help people make the decisions themselves. One approach to improving decision makers’ understanding of the underlying causal structure of the system is to use visualisations to represent that system. Some researchers have begun to explore whether diagrams that represent the available options and their associated probabilities improve decision-making (e.g. Bae et al., 2019; Hänninen et al., 2014; Laskey et al., 2011; Pilato et al., 2012; Riveiro et al., 2014; Snidaro et al., 2015; Svenson et al., 2010; Zhang et al., 2008). Generally, these representations of the available options help decision makers improve their decision-making. However, like the visualisations discussed above, they can still be misinterpreted. Without training, decision-makers are liable to impose their own understanding of the decision problem and re-interpret the diagram to support their own preconceptions.

General Discussion

In this review, we aimed to explore in depth the psychological processes involved in a complex decision-making task. Specifically, we focused on a simplified military scenario: how information is gathered and used in short-term tactical decisions (Figure 1). To make the task tractable, we first divided this overarching scenario into three parts (classification, communication and choice) and then examined in detail the relevant literature at each stage. As in many other decision-making tasks, the complexity of the psychological processes involved is demonstrated by the wide range of literature reviewed, from basic perceptual processes to the high-level influences of goal format. However, two core psychological factors repeatedly emerged as key determinants of performance: cognitive load and individual differences.

Across the three stages, we consistently found that task performance was related to participants’ ability to deal effectively with cognitive load. For instance, in the communication literature, the most successful uncertainty communications are those that minimise the cognitive effort required for understanding (e.g. Andre & Cutler, 1998; Finger & Bisantz, 2002; Kirschenbaum et al., 2014). Participants also tend to ignore uncertainty information. Deterministic construal errors (Gigerenzer et al., 2005), containment errors (MacEachren et al., 2005) and anchoring (Broad et al., 2007) are all examples of biases where participants mistakenly treat uncertain information as certain and thereby reduce the amount of information they must consider. Further, adding more information does not necessarily improve understanding or performance (e.g. Andre & Cutler, 1998; Bisantz et al., 2011; Finger & Bisantz, 2002; Hope & Hunter, 2007; S. L. Joslyn & Grounds, 2015).

In categorisation and decision-making tasks, the role of cognitive load emerges as a tendency to satisfice: to use less of the available information than would be optimal (Simon, 1947). For instance, in categorisation the evidence suggests participants are most likely to use simple rules based on a subset of the available information (e.g. Edmunds et al., 2015, 2018, 2019; Rehder & Hoffman, 2005a; Wills et al., 2015, 2020). Similarly, in dynamic decision-making, participants systematically vary one variable at a time to work out the underlying causal structure of the task (e.g. Osman & Speekenbrink, 2011; Osman et al., 2017). Therefore, at all stages of the scenario, people are likely to try to minimise the amount of information they use at any one time, at least while learning what is relevant in the task at hand.

There is nothing inherently wrong with this approach. After all, expertise is often associated with subjectively rating the cognitive load of a task as lower. However, by considering this tendency carefully, we can leverage it to improve performance in military contexts, both by using it to promote desirable behaviours and by devising strategies to attenuate undesirable ones. Some ways of doing this have begun to be explored. For instance, in the communication literature, some researchers have used features of attentional processes to design visualisations for which questions about the underlying data can be answered more easily, more accurately or faster (Jänicke & Chen, 2010), that is, visual representations designed to match the demands of the task (Vessey, 1991; 1994; 2006; Vessey & Galletta, 1991). This suggests that similar approaches may be fruitful in categorisation and decision-making. For instance, one could include explicit instructions to attend to a specific, randomly selected attribute every so often to prevent participants from focusing on a small subset of the available information.

These results also suggest that cognitive load is likely to be a key factor in other complex decision-making tasks. The literature reviewed here suggests that one approach to improving performance is to exploit every small opportunity to reduce load. Such an approach would be beneficial in any high-stakes, high-load decision-making context. For instance, the cumulative benefit of numerous small changes that reduce load (e.g. changes to visual displays and alert sounds) has been demonstrated in medical decision-making (Phansalkar et al., 2010). Further, the literature reviewed here suggests that it is often difficult to know a priori which method of communication is most intuitive for a given task. Thus, running adequately powered user studies (Bartlett et al., under review) that compare communication formats in scenarios as representative as possible is likely to improve final outcomes. For instance, military exercises could be used as an opportunity to test different ways of representing information. There is a similar move in medical fields, where virtual reality training is becoming more common and more realistic (Ruthenbeck & Reynolds, 2015).

Another tendency that emerged from the literature is that task performance can vary greatly between individuals. Although overall people tend to minimise the amount of information needed to complete a task, they vary in which information they select (e.g. Edmunds et al., 2015; Haider & Frensch, 1996). Similarly, the success of particular uncertainty communication formats often depends on attributes of the recipient, such as their background (e.g. Brun & Teigen, 1988; Doupnik & Richter, 2003; Harris et al., 2013), expertise (e.g. Kirschenbaum & Arruda, 1994; Kirschenbaum et al., 2014; Willems et al., 2020), expectations (e.g. Norman, 1988; Padilla, 2018; B. Tversky et al., 2011; Wogalter et al., 2002) and cognitive skills (e.g. Hegarty & Steinhoff, 1997). Thus, successful maritime military decision-making requires, to some extent, adapting to the individual differences of the personnel involved.

How, then, can we overcome the difficulties raised by individual differences? Perhaps the most obvious answer is training. Training is a core part of military organisations and, for the most part, military training procedures are extremely effective. However, when it comes to decision-making, there still appears to be a discrepancy between the strategies that military personnel are trained to use (Kobus et al., 2001; Lipshitz & Strauss, 1997) and those they actually use (Kaempf et al., 1993). Personnel are trained to determine the optimum option (Kobus et al., 2001; Lipshitz & Strauss, 1997). By weighing the pros and cons of each option, personnel are likely to gain a deeper understanding of the situation and thereby improve their decision-making (Osman & Speekenbrink, 2012). However, this strategy does not reflect how people actually make decisions: personnel very rarely have the time or resources to consider every possible action. Instead, the evidence suggests that they focus on key information and on similarity to past events, and choose the option that seems best.

To some extent, the mismatch between the optimal strategy taught in training and the ad hoc strategies generated in the moment may be reduced through many rounds of training exercises. Through repetition, the imperfect strategy that personnel actually use (matching to past experience) will hopefully come to produce the optimum solution. However, this approach does not account for the fact that individuals may interpret the same training opportunities differently and thus inadvertently learn different representations of the task. This suggests that more individualised training routines may help ensure that military personnel are all on the same page. Indeed, work on developing more individualised training has begun in the decision-making literature. For instance, Parpart et al. (2015) developed active learning algorithms that could better identify participants' strategies while they completed the task. Future work could use these algorithms to guide participants towards the optimum solution.

As with cognitive load, this suggests that individual differences need to be carefully considered in other complex decision-making tasks. The literature suggests that the assumption that everyone uses the same approach (even broadly) in a psychological task should not be taken for granted; it needs to be demonstrated with appropriate evidence.

Other Factors

This review focused on the flow of information from the bottom of the hierarchy to the top, and implicitly treated the commander's decision as the end of the scenario. However, military hierarchies are not one-way systems; information also travels from the top to the bottom, usually via orders (Szeligowski, 2018). Thus, the scenario ends not with the decision but with the command. In other words, a commander's success depends not only on making the optimum choice but also on implementing it effectively. This highlights a final key factor influencing behaviour in this scenario, which we have not discussed in the current work: interpersonal dynamics. This literature is too broad to explore here, but it is a critically important avenue for future work. After all, a good tactical decision that cannot be implemented because the crew has mutinied is, in the end, no better than a very poor decision.

Conclusion

To conclude, maritime military decision-making is a highly complex task. However, by drawing together diverse research from three fields (categorisation, communication of uncertainty and dynamic decision-making), we have gained a much greater understanding of the general tendencies that affect performance at each stage. Across all three tasks, the evidence shows that people generally try to minimise cognitive load by simplifying the complex environment around them, but that the way they do this varies between individuals. Having identified these two features of complex decision-making, future work can exploit them to improve performance in maritime military decision-making as well as in other high-stakes scenarios.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by a grant from the Defence Science & Technology Laboratory, UK Government, entitled "Investigating ways of improving communication of uncertainty to enhance maritime domain awareness".