Abstract

This review highlights noteworthy literature published in 2025 pertinent to anesthesiologists and critical care physicians caring for patients undergoing abdominal organ transplantation. We feature 19 studies from 5334 peer-reviewed publications on kidney transplantation, four studies from 2231 publications on pancreas transplantation, and four studies from 2162 publications on intestinal transplantation. The liver transplantation section includes a special focus on 28 studies from 4925 clinical trials published in 2025. Notable research addressed machine perfusion in kidney and liver transplantation, donor and recipient pain management, and the impact of recipient sarcopenia on liver transplantation outcomes.

Keywords

Introduction

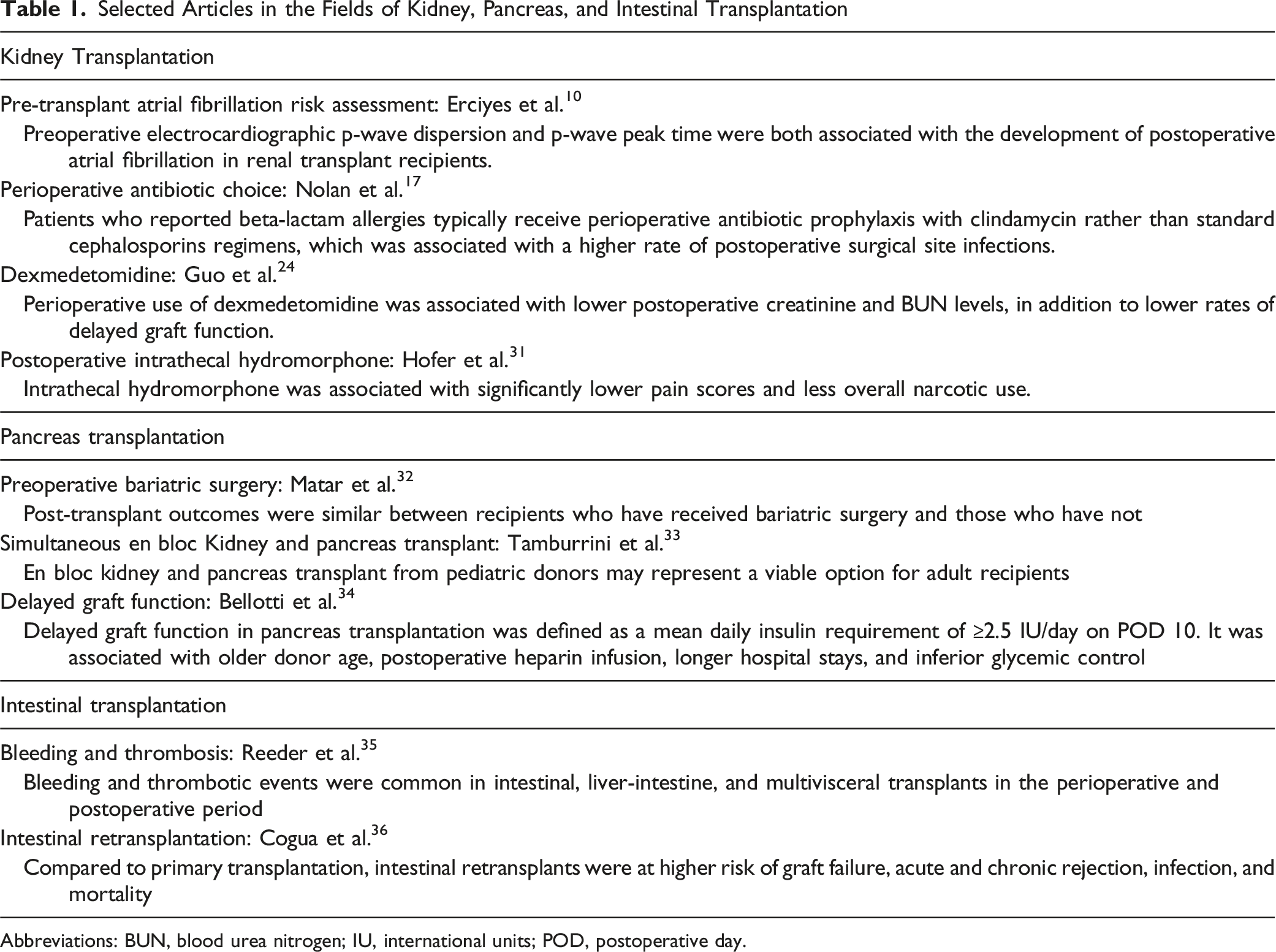

Selected Articles in the Fields of Kidney, Pancreas, and Intestinal Transplantation

Abbreviations: BUN, blood urea nitrogen; IU, international units; POD, postoperative day.

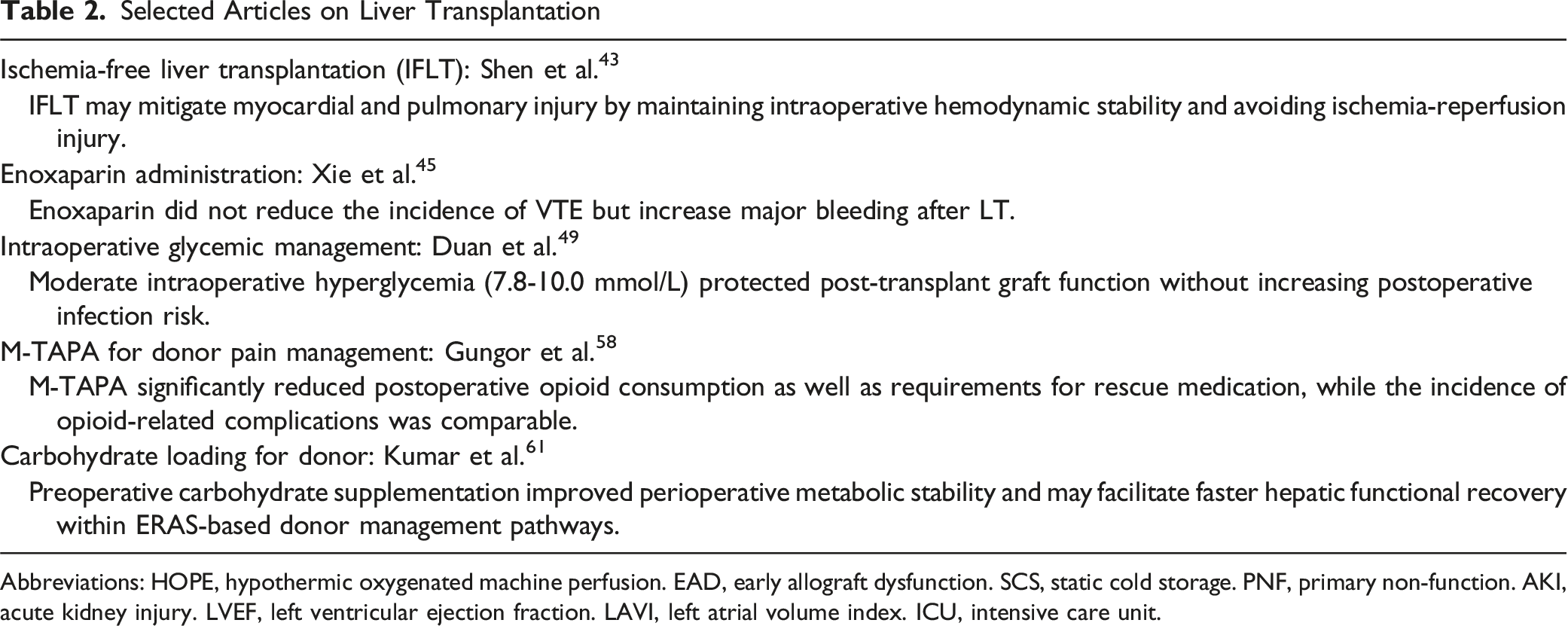

Selected Articles on Liver Transplantation

Abbreviations: HOPE, hypothermic oxygenated machine perfusion; EAD, early allograft dysfunction; SCS, static cold storage; PNF, primary non-function; AKI, acute kidney injury; LVEF, left ventricular ejection fraction; LAVI, left atrial volume index; ICU, intensive care unit.

Kidney Transplantation

OPTN/SRTR 2023 Annual Data Report: Kidney

In calendar year 2023, a record 28 142 kidney transplants were performed in the United States. This growth was driven primarily by continued increases in deceased donor kidney transplants (DDKT), 1 with 21 303 DDKT procedures performed in 2023. A total of 46 661 adults were added to the kidney transplant waitlist in 2023, continuing the strong recovery from the nadir during the COVID-19 pandemic, and 11.4% of waitlisted patients had waited at least 5 years. Notably, the proportion of recovered deceased donor kidneys that were not transplanted reached a high of 27.7%, up from 26.6% in 2022. Nonuse rates were even higher for biopsied kidneys (41.4%), kidneys from donors aged 65 years or older (72.2%), and kidneys with a kidney donor profile index of 85% or higher (72.5%). Disparities in access to living donation, discussed in previous iterations of this report, persist, particularly in non-white and publicly insured populations. 5 Reassuringly, the rate of delayed graft function (DGF) appears to have plateaued at 26.1% after a decade of increases. 1

Preoperative Considerations

Machine Perfusion

Kidney machine perfusion has previously been associated with reduced rates of DGF. Cojuc-Konigsberg et al 6 conducted a retrospective analysis of the United Network for Organ Sharing (UNOS) database of adult DDKT recipients from 2010 to 2019 to determine whether this reduction in DGF translates into improved long-term graft survival. Only recipients of paired kidneys from the same donor were included, in which one kidney underwent machine perfusion while the contralateral kidney was preserved with conventional static cold storage (SCS). In total, 2355 kidney pairs were analyzed, with a median follow-up of 5.8 years. The study confirmed that machine perfusion was associated with lower rates of DGF (odds ratio [OR] 0.41; 95% confidence interval [CI] 0.34-0.51). Machine-perfused kidneys also had a lower risk of long-term graft failure (hazard ratio [HR] 0.86; 95% CI 0.75-0.98). In a mediation analysis, 76.8% of the risk-adjusted association between machine perfusion and long-term graft failure was mediated by DGF.
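The proportion mediated can be illustrated with one common formulation, the difference method on the log-hazard scale. The source does not specify which mediation estimator Cojuc-Konigsberg et al used, so the expression below is a general definition under that assumption rather than their exact method. Here, beta_total is the log HR for machine perfusion without adjustment for DGF and beta_direct is the log HR after additionally adjusting for DGF.

```latex
\mathrm{PM} = \frac{\beta_{\text{total}} - \beta_{\text{direct}}}{\beta_{\text{total}}},
\qquad \beta = \ln(\mathrm{HR})
```

Under this definition, a proportion mediated of 76.8% means that roughly three quarters of the risk-adjusted log-hazard association between machine perfusion and graft failure is attributed to the pathway through DGF, with the remaining quarter representing a direct association.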

Given the increasingly apparent utility of machine perfusion, its role in extended criteria donor kidneys has also been examined. Dajti et al 7 conducted a randomized controlled trial of 109 recipients of extended criteria donor kidneys transplanted between 2019 and 2022. Kidneys were randomized to hypothermic oxygenated perfusion (HOPE; n = 54) or SCS (n = 55) before implantation, with DGF as the primary endpoint. DGF developed in 31 (57%) HOPE kidneys and 37 (67%) SCS kidneys (P = .3). The duration of DGF and rates of acute rejection also did not differ between groups. However, in a subgroup analysis of kidneys from older (age >60 years) extended criteria donors, HOPE was associated with a reduced rate of DGF (OR 0.32; 95% CI 0.12-0.87; P = .026). Larger studies are needed to further elucidate the role of HOPE in extended criteria donor kidneys.

Donor Ischemic Time

With rising national and global demand for kidney transplantation, the use of kidneys donated after circulatory death (DCD) remains essential to address the ongoing organ shortage. However, the discard rate of DCD kidneys remains significantly higher than that of kidneys procured after brain death (DBD), primarily because of concerns about donor warm ischemia time (DWIT). Chumdermpadetsuk et al 8 conducted a retrospective analysis of the Standard Transplant Analysis and Research (STAR) data set to assess the impact of DWIT on death-censored graft failure, using risk-adjusted analyses stratified by Kidney Donor Risk Index (KDRI). In total, 28 032 DCD kidney recipients were included. When stratified by KDRI quartile (<0.78; 0.78-0.94; 0.95-1.14; >1.14), a DWIT of 26 minutes was associated with progressively higher risk of death-censored graft failure relative to KDRI <0.78: HR 1.32 (95% CI 1.14-1.52) for KDRI 0.78-0.94, HR 1.81 (95% CI 1.58-2.08) for KDRI 0.95-1.14, and HR 2.60 (95% CI 2.26-2.98) for KDRI >1.14. Prolonging DWIT beyond 90 minutes had minimal impact for recipients of low-risk grafts (KDRI ≤0.94). For grafts with KDRI 0.95-1.14 (n = 7046), the HR for death-censored graft failure was 1.82 (95% CI 1.57-2.11) at a DWIT of 30 minutes, and the effect leveled off with longer DWIT. In contrast, for grafts in the highest risk quartile with a DWIT of 90 minutes, the HR rose to 3.67 (95% CI 2.47-5.44). This study demonstrates the resilience of lower-KDRI kidneys to longer DWIT, which may ultimately increase acceptance of such organs for transplantation.
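The quartile stratification used by Chumdermpadetsuk et al can be expressed as a simple lookup. The minimal Python sketch below assigns a KDRI value to one of the four reported quartiles and returns the death-censored graft failure HR the authors reported at a DWIT of 26 minutes. The function and constant names are hypothetical, and placing values that fall between the rounded cut points 0.94 and 0.95 (for example, 0.945) in the second quartile is an assumption, since the source reports only two-decimal boundaries.

```python
# Hypothetical helper illustrating the KDRI quartiles reported by
# Chumdermpadetsuk et al: <0.78, 0.78-0.94, 0.95-1.14, >1.14.
# Assumption: values between 0.94 and 0.95 (e.g., 0.945), which the rounded
# cut points leave unassigned, are placed in the second quartile.

QUARTILE_LABELS = ("<0.78", "0.78-0.94", "0.95-1.14", ">1.14")

# Reported death-censored graft failure HRs at a DWIT of 26 minutes,
# relative to the lowest quartile (the reference, HR 1.00).
REPORTED_HR_AT_26_MIN = (1.00, 1.32, 1.81, 2.60)


def kdri_quartile(kdri: float) -> int:
    """Return the 0-based KDRI quartile index (0 = lowest risk)."""
    if kdri < 0.78:
        return 0
    if kdri < 0.95:
        return 1
    if kdri <= 1.14:
        return 2
    return 3


def reported_hr_at_26_min(kdri: float) -> float:
    """Look up the reported HR for the quartile containing this KDRI."""
    return REPORTED_HR_AT_26_MIN[kdri_quartile(kdri)]


if __name__ == "__main__":
    for value in (0.70, 0.90, 1.10, 1.40):
        q = kdri_quartile(value)
        print(f"KDRI {value:.2f}: quartile {QUARTILE_LABELS[q]}, "
              f"reported HR {reported_hr_at_26_min(value):.2f}")
```

The lookup only restates the published point estimates; it does not model the interaction between KDRI and longer DWIT described above.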

Atrial Fibrillation

Atrial fibrillation is a relatively common complication after major surgery and is associated with increased in-hospital mortality, longer length of stay, and greater risk of stroke and myocardial infarction. 9 Appropriate preoperative risk stratification in the transplant population is therefore imperative. Erciyes et al 10 retrospectively examined whether two preoperative electrocardiographic (ECG) measures were associated with postoperative atrial fibrillation in kidney transplant recipients: P-wave dispersion (PWD), the difference between the longest and shortest P-wave duration across all leads, and P-wave peak time (PWPT), the time from P-wave onset to its peak. A total of 166 renal transplant recipients were included; recipients with a history of atrial fibrillation or moderate-to-severe valvular disease were excluded. Two independent physicians reviewed each patient's preoperative ECG, calculating PWD and measuring PWPT in leads II and V1 (PWPT-II and PWPT-V1, respectively). Postoperative atrial fibrillation developed in 39 (23.5%) recipients. Penalized regression analysis identified hypertension (OR 1.366; 95% CI 0.464-4.017; P = .047), coronary artery disease (OR 2.018; 95% CI 0.438-9.293; P = .034), and PWD (OR 1.133; 95% CI 1.087-1.180; P < .001) as the most relevant independent predictors of postoperative atrial fibrillation. Despite the study's retrospective design and relatively small sample size, these findings suggest a potential novel approach to preoperative risk stratification in renal transplant recipients and to identifying high-risk patients who may benefit from postoperative atrial fibrillation prophylaxis.
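Both ECG measures are simple differences of time points. The Python sketch below computes PWD across whichever leads were measured and PWPT within a single lead. The function names and the example durations are hypothetical values chosen for illustration, not study data; in Erciyes et al, the underlying measurements were made by two independent physicians.

```python
from typing import Mapping


def p_wave_dispersion(durations_ms: Mapping[str, float]) -> float:
    """PWD: longest minus shortest P-wave duration across the measured leads."""
    if len(durations_ms) < 2:
        raise ValueError("PWD needs P-wave durations from at least two leads")
    values = list(durations_ms.values())
    return max(values) - min(values)


def p_wave_peak_time(onset_ms: float, peak_ms: float) -> float:
    """PWPT: time from P-wave onset to P-wave peak within a single lead."""
    if peak_ms < onset_ms:
        raise ValueError("P-wave peak cannot precede its onset")
    return peak_ms - onset_ms


# Hypothetical P-wave durations (ms) for one ECG; not data from the study.
example_durations = {"I": 98.0, "II": 110.0, "III": 92.0,
                     "aVF": 101.0, "V1": 104.0, "V6": 96.0}

pwd = p_wave_dispersion(example_durations)  # longest (II) minus shortest (III)
pwpt_ii = p_wave_peak_time(onset_ms=0.0, peak_ms=56.0)
print(f"PWD = {pwd:.0f} ms, PWPT-II = {pwpt_ii:.0f} ms")
```

Because PWD depends on the extremes across leads, a single mismeasured lead can shift it substantially, which is one reason duplicate independent reads are useful.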

Intraoperative Management

Anesthetic Technique in Donors

Kidney donation is a well-established risk factor for the future development of end-stage renal disease, despite strict donor selection criteria. 11 Every effort should therefore be made to avoid additional kidney injury in these donors. Propofol may attenuate ischemia-reperfusion injury (IRI) through its antioxidant properties, and sevoflurane has demonstrated anti-inflammatory properties that may protect against acute kidney injury. However, the two anesthetic techniques had not previously been compared directly in kidney donors. In a randomized controlled trial (RCT) of 70 kidney donors, Cai et al 12 compared propofol-based and sevoflurane-based anesthesia with respect to markers of renal injury. These included kidney injury molecule (KIM)-1, interleukin (IL)-18, and tissue inhibitor of metalloproteinase (TIMP)-2, in addition to more general measures of kidney function such as glomerular filtration rate (GFR), urine output, and cystatin C. Measurements were obtained on postoperative days (POD) 1, 2, and 6. Baseline characteristics were comparable between groups. KIM-1, TIMP-2, and IL-18 all increased postoperatively, while GFR and urine output both decreased. However, none of the measured parameters differed between the two groups, despite the higher cumulative phenylephrine doses required to maintain blood pressure in the sevoflurane group (750 mcg vs 80 mcg, P < .001). Further studies comparing propofol with inhalational anesthetics in kidney transplant recipients are needed to assess their impact on graft function and survival, and the VAPOR-2 trial may address this question. 13

Fluid Choice

The optimal intraoperative intravenous fluid for kidney transplant recipients has been debated and studied for decades, and practices vary across institutions and internationally. In a meta-analysis, Chang et al 14 analyzed trials comparing normal saline (NS) with Plasma-Lyte (PL), 5-20% albumin, 5% hypertonic saline, lactated Ringer's (LR), and sodium bicarbonate solutions. Sixteen trials published from 2003 to 2023 were included, with a total cohort of 2034 patients; the primary outcome was the incidence of DGF. The included RCTs compared PL with NS in six studies, NS with LR in six studies, NS with NS plus albumin in two studies, and NS with other crystalloid fluids (e.g., NS plus sodium bicarbonate or hypertonic saline) in four studies. In pooled results, PL was associated with a significantly lower incidence of DGF than NS (relative risk [RR] 0.82; 95% CI 0.69-0.96; P < .05). Hypertonic saline and LR had rates of DGF similar to those with NS. Notably, compared with NS, hypertonic saline produced the largest reduction in POD 1 serum creatinine (mean difference [MD] −2.13; 95% CI −2.63 to −1.63; P < .05); no other fluid reached statistical significance for this outcome. These findings reaffirm that PL is superior to NS with respect to DGF incidence. Although hypertonic saline showed promising results for creatinine reduction compared with NS, additional studies comparing it with PL and examining its impact on DGF are needed.

Goal-Directed Fluid Management

Ensuring adequate tissue perfusion is widely recognized as a critical role of the anesthesiologist in the perioperative setting. Traditional parameters used to judge fluid status and perfusion, such as blood pressure and central venous pressure (CVP), have ultimately proved unreliable, and goal-directed fluid therapy (GDT) techniques have emerged across a variety of surgical specialties. In kidney transplant recipients, ensuring optimal organ perfusion despite large volume shifts in a high-risk patient is even more critical and has previously been shown to reduce rates of perioperative edema and respiratory complications. 15 Cristiana et al 16 recently published a multicenter, single-blind RCT comparing conventional fluid management with GDT guided by non-invasive pulse pressure contour analysis using the ClearSight™ system (Edwards Lifesciences, Irvine, California, USA). Outcomes of interest included hospital and intensive care unit (ICU) length of stay (LOS) and rates of DGF. Over 32 months, 181 kidney transplant patients were enrolled. The control group received fluid management guided by conventional CVP and invasive blood pressure monitoring, while the experimental group received ClearSight™ monitoring with targets of cardiac index (CI) ≥2.5 L/min/m2 and stroke volume variation (SVV) <10%; additional crystalloid or an inotrope/vasopressor was administered based on these parameters. Recipient and donor characteristics did not differ significantly between groups. Crystalloid administration rates were ultimately similar (6.65 mL/kg/hr in the GDT group vs 6.41 mL/kg/hr in the control group, P = .869), and hospital and ICU LOS did not differ between groups. DGF occurred in 50.5% of the GDT group vs 55.6% of the conventional therapy group, a difference that was not statistically significant (P = .599).
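The two ClearSight™-derived targets can be sketched as a simple decision rule. The Python example below is hypothetical: the source states only that crystalloid or an inotrope/vasopressor was given based on CI and SVV, so the branch order (address SVV ≥10% with fluid first, then low CI with an inotrope/vasopressor) is an assumption, and the SVV formula shown is one common definition rather than necessarily the monitor's own computation. It illustrates GDT-style logic and is not the trial protocol.

```python
from statistics import mean
from typing import Sequence

CI_TARGET = 2.5    # L/min/m^2, target reported for the GDT arm
SVV_TARGET = 10.0  # percent, target reported for the GDT arm


def stroke_volume_variation(stroke_volumes_ml: Sequence[float]) -> float:
    """SVV (%) = (SVmax - SVmin) / SVmean * 100 over one observation window."""
    sv_mean = mean(stroke_volumes_ml)
    return (max(stroke_volumes_ml) - min(stroke_volumes_ml)) / sv_mean * 100.0


def gdt_action(cardiac_index: float, svv_percent: float) -> str:
    """Hypothetical branch order: treat presumed fluid responsiveness first."""
    if svv_percent >= SVV_TARGET:
        return "crystalloid bolus"      # SVV above target: assumed preload responsive
    if cardiac_index < CI_TARGET:
        return "inotrope/vasopressor"   # CI below target although SVV is at target
    return "no change"                  # both targets met


# Hypothetical beat-to-beat stroke volumes (mL) over one respiratory window.
svv = stroke_volume_variation([70, 64, 61, 67, 72])
print(f"SVV = {svv:.1f}% -> {gdt_action(cardiac_index=2.8, svv_percent=svv)}")
```

Separating the SVV calculation from the decision function keeps the assumed thresholds in one place, so a different protocol's targets or branch order could be substituted without touching the measurement code.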

Antibiotic Choice

Beta-lactam allergies, whether true allergies or reported side effects, are common among surgical patients and may influence the choice of perioperative antibiotic prophylaxis. Data on whether non-standard surgical site prophylaxis affects outcomes in kidney transplant recipients have been limited. Nolan et al 17 conducted a single-center retrospective review of 1523 adult kidney transplant recipients transplanted between 2019 and 2024, of whom 257 (16.9%) reported a beta-lactam allergy. Per hospital protocol, standard surgical site prophylaxis for kidney transplantation is 24 hours of cefazolin in non-allergic patients or 24 hours of clindamycin in patients reporting beta-lactam allergies. The authors analyzed the incidence of surgical site infections (SSI) and Clostridioides difficile infections. C. difficile infection rates did not differ between cohorts. However, 15.9% of patients receiving clindamycin developed SSIs, compared with only 2.99% of those receiving cefazolin (P = .006). Given the high-risk nature of kidney transplantation and the requisite immunosuppression, reported beta-lactam allergic reactions should be clarified with the patient, because depending on the reaction type, cefazolin may still be the appropriate choice given its low cross-reactivity. 18

Sugammadex

Despite the widespread use of sugammadex over the last decade, its pharmacokinetic and safety profiles in kidney transplant recipients remain a matter of debate. Tang et al 19 conducted a prospective comparative study of sugammadex pharmacokinetics in 17 kidney transplant recipients and 17 healthy patients (American Society of Anesthesiologists Physical Status I or II without renal disease) undergoing non-transplant surgery. In all patients, neuromuscular blockade was achieved with an initial 0.6 mg/kg bolus of rocuronium and maintained with further 0.15 mg/kg boluses upon reappearance of a second twitch on quantitative monitoring. At the end of surgery, 2 mg/kg sugammadex was administered following reappearance of a second twitch. After reversal, the time to a train-of-four ratio (TOFR) >0.9 was measured, along with pharmacokinetic parameters including mean clearance, maximum plasma concentration (Cmax), and time to maximum concentration (Tmax). For safety, TOFR was monitored for at least 30 minutes after reversal to detect any recurrence of TOFR <0.9 after initial complete reversal, and patients were observed for clinical signs of respiratory difficulty. Geometric mean recovery times did not differ between cohorts (2.04 min vs 1.99 min, P = .866). Sugammadex clearance was significantly reduced in the kidney transplant group (15.2 mL/min vs 82.5 mL/min, P < .001), resulting in a significantly prolonged half-life (t1/2) (10.0 h vs 2.0 h, P < .001). Cmax and Tmax did not differ between groups. Bradycardia, a documented adverse effect of sugammadex, occurred in one patient in each cohort without adverse clinical sequelae. No recurrence of neuromuscular blockade (TOFR <0.9) was observed in either cohort.
Although sugammadex clearance was slower in the kidney transplant population, it was safe and effective for the reversal of rocuronium in this study.
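The link between the reported clearance and half-life can be checked with the standard first-order elimination relationship. Assuming, as a simplification the source does not state, a one-compartment model and a similar volume of distribution V_d in both cohorts, the ratio of half-lives in the kidney transplant (KT) and control groups should approximate the inverse ratio of their clearances:

```latex
t_{1/2} = \frac{\ln 2 \, V_d}{CL}
\quad\Longrightarrow\quad
\frac{t_{1/2}^{\mathrm{KT}}}{t_{1/2}^{\mathrm{control}}}
  \approx \frac{CL^{\mathrm{control}}}{CL^{\mathrm{KT}}}
  = \frac{82.5\ \mathrm{mL/min}}{15.2\ \mathrm{mL/min}} \approx 5.4
```

The observed half-life ratio (10.0 h / 2.0 h = 5.0) is close to this estimate, consistent with reduced clearance accounting for most of the prolongation; the residual difference could reflect differences in V_d or in the pharmacokinetic model actually used.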

Desmopressin

Desmopressin, a synthetic analog of vasopressin, is commonly used to prevent uremic bleeding, a frequent concern in patients undergoing renal transplantation. However, desmopressin can complicate the assessment of graft function because it can cause oliguria that is not due to an impairment of GFR. Mondal et al 20 conducted a retrospective single-center cohort study at a major tertiary care center evaluating the effect of desmopressin on urine output in the immediate postoperative period. The study included 938 recipients of deceased donor kidney transplants. Desmopressin was administered to 359 (38%) patients at a dose of 0.3 mcg/kg of actual body weight at the surgeon's discretion when uremic coagulopathy was suspected; the remaining 579 patients received no desmopressin. Perioperative urine output, the primary outcome, was significantly lower in the desmopressin cohort than in the control group (2.5 mL/kg vs 3.1 mL/kg, P = .04). To account for potentially longer operative times due to surgical complexity, urine output was also assessed at 12 and 24 hours postoperatively and was similarly reduced in the desmopressin group (12 hours: 17.3 mL/kg vs 20.4 mL/kg; 24 hours: 27.3 mL/kg vs 34.0 mL/kg; both P < .05). One potential confounder was the greater estimated blood loss and packed red blood cell administration in the desmopressin group compared with controls (0.60 vs 0.30 units, P = .001), likely reflecting preferential use of desmopressin in patients at higher bleeding risk. DGF incidence was similar between cohorts (34% vs 37%, P = .09). Thus, desmopressin may reduce urine output in newly transplanted renal grafts, but this study suggests it does not affect the incidence of DGF.

Aspirin

Aspirin use is widespread, particularly among patients at risk for coronary artery disease or with prior coronary stents, a population that overlaps substantially with patients with end-stage renal disease. Many transplant recipients therefore present to the operating theater with recent daily aspirin use. Prior data on aspirin use and perioperative bleeding in kidney transplant recipients are limited and largely inconclusive. Friedersdorff et al 21 retrospectively analyzed 157 kidney transplant recipients, of whom 59 (38%) were taking aspirin on the day of transplant and 98 (62%), serving as controls, were not. The aspirin cohort was older and, predictably, had a greater burden of cardiovascular disease. The groups did not differ in perioperative hemoglobin decrease (0.93 vs 1.06 g/dL, P = .31), need for intraoperative transfusion (0% vs 1%, P = .44), postoperative transfusion (22% vs 13.3%, P = .15), or postoperative hematomas (28.8% vs 26.5%, P = .75). In this cohort, daily aspirin therapy was not associated with clinically significant bleeding after kidney transplantation.

Dexmedetomidine

Dexmedetomidine, a highly selective alpha-2 adrenergic agonist, has anti-inflammatory properties through modulation of pro-inflammatory cytokine release. 22 Furthermore, dexmedetomidine may have reno-protective effects during ischemia-reperfusion. 23 Guo et al 24 conducted a meta-analysis of the current evidence for dexmedetomidine in renal transplant recipients. They evaluated eleven studies (n = 1417 kidney recipients) examining the effects of dexmedetomidine on serum creatinine, blood urea nitrogen (BUN), urine output, and DGF. Dosing protocols typically included a loading dose of 0.5-1.0 mcg/kg followed by a maintenance infusion of 0.2-0.7 mcg/kg/hr, initiated at anesthesia induction and continued for various durations, ranging from 30 minutes after reperfusion to two hours after surgery. Dexmedetomidine was associated with lower creatinine (pooled standardized mean difference [SMD] −0.75; 95% CI −1.18 to −0.32) and BUN levels (SMD −0.87; 95% CI −1.30 to −0.44) and higher urine output (SMD 0.98; 95% CI 0.23-1.74), all suggesting improved graft function. Dexmedetomidine also significantly reduced the incidence of DGF (pooled OR 0.71; 95% CI 0.52-0.97). These results are promising; however, the pooled studies were quite heterogeneous, and safety endpoints such as rates of hypotension and bradycardia were not studied or documented. 25

Remimazolam

Remimazolam is a novel ultrashort-acting benzodiazepine associated with hemodynamic stability and organ-independent metabolism, potentially making it a useful anesthetic for patients with end-stage organ failure. However, because it is new, clinical experience and data remain limited. Wu et al 26 conducted a single-center randomized controlled trial in 86 living donor kidney transplant recipients comparing the perioperative efficacy and post-transplant renal outcomes of remimazolam with those of propofol. In the remimazolam arm (n = 43), anesthesia was induced with a remimazolam bolus (0.2 mg/kg) and maintained with an infusion of 1 mg/kg/hr. In the propofol-based control arm (n = 43), induction was achieved with a 1.5 mg/kg bolus of propofol and maintenance with an infusion of 4-10 mg/kg/hr. Both arms received remifentanil infusions, and anesthetic doses were titrated to bispectral index values. Compared with the propofol group, the remimazolam group had a longer time to loss of consciousness (83.21 ± 22.54 seconds vs 75.17 ± 12.17 seconds; 95% CI 0.234-15.840; P = .044), less hypotension during induction (16.3% vs 32.6%, P < .05), and a longer post-anesthesia care unit stay (61.23 ± 21.55 min vs 52.05 ± 13.99 min; 95% CI 1.375-16.997; P = .021). Time from infusion discontinuation to extubation did not differ between groups (1292 seconds vs 1269 seconds, P > .05), although this result was confounded by the administration of flumazenil to 23.2% of remimazolam patients. Postoperative creatinine, BUN, GFR, and other markers of renal function did not differ between the two arms.
Overall, remimazolam may become a popular anesthetic choice for transplant recipients given its metabolism by nonspecific tissue esterases and potentially less hypotension at induction, though these benefits will need to be weighed against potentially longer induction and recovery times and higher cost.

Postoperative Pain Management

Regional Anesthesia

Several studies this year focused on regional anesthetic techniques and optimal pain control regimens in renal transplant recipients. An et al 27 conducted a single-center RCT of 92 patients comparing postoperative pain scores at rest and on ambulation among patients who received anterior quadratus lumborum blocks (QLB), erector spinae plane blocks (ESP), or no regional anesthetic. Over the first 12 hours after surgery, moderate-to-severe pain occurred in 41.9% of the QLB group, 83.9% of the ESP group, and 96.7% of the no-block group (P < .001).

In a propensity score-matched retrospective analysis of 524 living donor kidney transplant recipients, Chae et al 28 compared post-transplant analgesia in patients receiving postoperative transversus abdominis plane (TAP) blocks vs local wound infiltration. Both cohorts received approximately 20 mL of 0.375% ropivacaine. Pain scores were nearly identical between groups in the immediate postoperative period. However, at 1 and 8 hours, patients receiving TAP blocks reported significantly lower visual analog scale pain scores (1 hour: 3.5 ± 1.1 vs 4.7 ± 1.4, P < .001; 8 hours: 3.0 ± 0.9 vs 3.8 ± 1.5, P < .001) and required significantly lower mean rescue fentanyl doses on POD 1 (67.7 ± 30.6 mcg vs 119.1 ± 71.8 mcg, P < .001). No complications related to either technique were reported.

Similarly, Viderman et al 29 conducted a meta-analysis comparing TAP blocks with IV-only analgesia in both kidney transplant recipients and donors, focusing on postoperative pain scores and total opioid consumption. Across 11 RCTs in these populations, TAP blocks were associated with a statistically significant mean decrease in cumulative 24-hour morphine-equivalent requirements of 14.13 mg (95% CI −23.64 to −4.63). Patients receiving TAP blocks also went significantly longer before requiring additional opioid analgesia in the immediate postoperative period (MD 5.92 hours; 95% CI 3.63-8.22; P < .00001). Mean pain scores were lower by 0.65 points in the TAP block groups (95% CI −0.88 to −0.42, P < .0001), although this difference may not be clinically relevant. When stratified by donor or recipient surgery, pain intensity scores at 24 hours were significantly lower in donors receiving TAP blocks (MD −0.70; 95% CI −1.16 to −0.24; P = .003) but not in recipients (MD −0.59; 95% CI −1.23 to 0.05; P = .07). Overall, the studies were heterogeneous and assessed many different analgesic outcomes. Additional studies are needed to evaluate whether TAP blocks reduce 24-hour pain scores and opioid requirements in kidney transplant recipients.

Neuraxial Analgesia

In a single-center RCT, Ojha et al 30 compared pain scores and opioid consumption in renal transplant recipients receiving a TAP block with a catheter for continuous 0.25% ropivacaine infusion vs epidural analgesia with a similar continuous infusion of 0.25% ropivacaine. Over the first 24 hours after transplantation, total pain and patient satisfaction scores were similar between groups, and total fentanyl consumption did not differ meaningfully. While the non-inferiority of TAP catheters is notable, the local anesthetic infusion rate was set between 4 and 10 mL/hr based on patient characteristics, and patient-controlled analgesia was available only as IV fentanyl. This strategy may not reflect the analgesic regimens of other institutions, which may offer patient-controlled local anesthetic boluses through the epidural or TAP catheter.

Hofer et al 31 performed a retrospective single-center cohort study examining the analgesic impact of intrathecal hydromorphone in kidney transplant recipients over a 5-year period, from 2017 to 2022. Patients in both cohorts received IV opioids intraoperatively and a unilateral TAP block with combined bupivacaine or liposomal bupivacaine. A total of 1012 kidney recipients were included. At 72 hours, patients receiving intrathecal hydromorphone had significantly lower cumulative postoperative opioid requirements in milligram morphine equivalents (MME) (30 mg [interquartile range 0-68] vs 64 mg [interquartile range 22-120]), with a propensity score-weighted ratio of total MME of 0.34 (95% CI 0.26-0.43, P < .001) for the hydromorphone group. Intrathecal hydromorphone was also associated with lower maximum pain scores at both 24 hours (OR 0.28; 95% CI 0.21-0.37; P < .01) and 72 hours (OR 0.41; 95% CI 0.31-0.54; P < .01) compared with controls. However, the intrathecal hydromorphone cohort had higher rates of postoperative nausea and vomiting (OR 2.16; 95% CI 1.63-2.86; P < .001). These findings indicate that intrathecal hydromorphone may be a useful analgesic option for patients undergoing kidney transplantation, although the associated increase in postoperative nausea and vomiting should be considered.

Pancreas Transplantation

OPTN/SRTR 2023 Annual Data Report: Pancreas

The total number of pancreas transplants in the United States remained relatively stable, with 915 transplants in 2023 compared with 918 in 2022. 2 Although transplant volume stayed largely unchanged, more patients were added to the waiting list: 1876 in 2023 vs 1736 in 2022. Most of this increase came from simultaneous pancreas-kidney (SPK) and pancreas after kidney (PAK) candidates. The number of PAK transplants continued to decline, reaching a decade low of 36 in 2023. The proportion of recipients with type 2 diabetes (25.4%) was comparable to the proportion of waitlisted candidates with type 2 diabetes (25.2%), both increases from 2022, when 22.5% of recipients and 23.4% of waitlisted candidates had type 2 diabetes. This aligns with the trend toward waitlisted patients who are older, obese, and have type 2 diabetes. Although the number of transplants remained nearly the same, the number of donors decreased in 2023 compared with 2022, while the discard rate also declined. Outcomes of SPK, pancreas transplant alone (PTA), and PAK transplants remained largely stable from 2020 to 2022, with one-year survival rates of 90.8%, 87.5%, and 84.4%, respectively.

Preoperative Bariatric Surgery

In a single-center retrospective case-control study spanning 1998 to 2024, Matar et al examined perioperative complications and long-term outcomes in patients who underwent bariatric surgery before pancreas transplantation. 32 Among 1542 transplants, 17 patients had a history of bariatric surgery (11 Roux-en-Y gastric bypass, 5 sleeve gastrectomy, and 1 vertical banded gastroplasty) and underwent SPK, PTA, or PAK transplantation. Compared with patients without prior bariatric surgery, those with prior bariatric surgery had similar rates of graft thrombosis (5.9% vs 3.9%, P = .76) and reversible acute rejection episodes (29.4% vs 29.4%, P > .99). Length of stay (P = .22), 30-day readmission (P = .24), 1-year readmission (P = .70), and median death-censored graft and patient survival did not differ significantly. At four years, patient and graft survival were both 100%. These findings suggest that pretransplant bariatric surgery may not be associated with increased perioperative complications, which may give candidates who would otherwise not be listed because of elevated body mass index (BMI) the opportunity for transplantation.

Graft Outcomes

Simultaneous En Bloc Kidney and Pancreas Transplant

As the waitlist continues to grow, so does the search for ways to expand the donor pool. Tamburrini et al reported their center's experience with en bloc SPK transplants from pediatric donors into eight adult recipients between 1997 and 2018. 33 Mean donor age was 5.0 ± 1.7 years and mean donor weight was 19.8 ± 4.8 kg; mean recipient age was 46.6 ± 12.8 years and mean recipient BMI was 25.2 ± 3.8 kg/m². All recipients tolerated the surgery well, with immediate pancreatic graft function and insulin independence. Renal grafts also functioned, with only one patient requiring renal replacement therapy, which was no longer needed by discharge. Mean recipient hemoglobin A1c decreased from 7.2 ± 1.1% before transplant to 5.5 ± 0.5% one month after transplant and was 5.7 ± 0.4% at five years. Mean recipient creatinine decreased from 7.4 ± 2.5 mg/dL before transplant to 1.1 ± 0.3 mg/dL at one month and 1.0 ± 0.3 mg/dL at five years. The study suggests that small pediatric donors may be viable options for adult SPK, although center-specific patient populations and protocols, such as whether SPK recipients are managed in the intensive care unit postoperatively, may influence these outcomes.

Delayed Graft Function

Bellotti et al 34 performed a single-center retrospective cohort study of 151 consecutive pancreas transplants to define pancreatic DGF, establish its incidence and risk factors, and evaluate its influence on short- and long-term outcomes. Using a series of receiver operating characteristic curve analyses of cumulative and daily mean insulin requirements over the first 14 days, assessed for their accuracy in predicting 5-year death-censored pancreas graft survival, the authors defined DGF as an insulin requirement of ≥2.5 IU/day on POD 10. Nine transplants with early graft failure were excluded; of the remaining 142, 36.6% developed DGF. Independent risk factors for DGF were older donor age (OR 1.05; 95% CI 1.01-1.09; P = .010) and continuous postoperative heparin infusion (OR 2.52; 95% CI 1.12-5.64; P = .025). Patients with DGF had longer lengths of stay (23 vs 20 days, P = .049) and poorer glucose control on POD 10 (146.0 vs 126.4 mg/dL, P < .001). However, DGF was not significantly associated with patient or graft survival at 5 years. Pancreatic DGF may therefore be a clinically relevant marker of graft vulnerability; further investigation is warranted to determine whether it is associated with longer-term graft or patient outcomes and whether it is amenable to targeted interventions.
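Operationally, the DGF definition above is a single threshold applied to the POD 10 insulin requirement. As an illustrative sketch only (the function and variable names are hypothetical, not the authors' code), it can be encoded as follows; the final lines check that the reported 36.6% incidence corresponds to approximately 52 of the 142 analyzed grafts, a count back-calculated from the published percentage rather than reported directly.

```python
from typing import Mapping

# Hypothetical encoding of the pancreatic DGF cutoff described by Bellotti et al:
# DGF = insulin requirement >= 2.5 IU/day on postoperative day (POD) 10.
DGF_INSULIN_CUTOFF_IU_PER_DAY = 2.5
DGF_ASSESSMENT_POD = 10


def has_pancreatic_dgf(daily_insulin_iu: Mapping[int, float]) -> bool:
    """Return True if the POD 10 insulin requirement meets the DGF cutoff.

    daily_insulin_iu maps postoperative day -> total insulin given that day (IU).
    A missing POD 10 entry raises KeyError, because the rule cannot be applied.
    """
    return daily_insulin_iu[DGF_ASSESSMENT_POD] >= DGF_INSULIN_CUTOFF_IU_PER_DAY


# Approximate DGF case count implied by the reported incidence
# (36.6% of the grafts remaining after excluding 9 early graft failures).
analyzed = 151 - 9
implied_dgf_cases = round(0.366 * analyzed)
print(analyzed, implied_dgf_cases)
```

Keeping the cutoff and assessment day as named constants makes it easy to see that the definition depends on a single day's value, which is one reason a study-specific threshold like this may need external validation before wider use.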

Intestinal Transplantation

OPTN/SRTR 2023 Annual Data Report: Intestine

The number of intestinal transplants decreased substantially over the past two decades but has stabilized over the past five years. 3 In 2023, 95 intestinal transplants were performed in the United States, up from 82 in 2022; 62 were performed in adults and 33 in pediatric patients. These included 16 adult and 18 pediatric combined liver-intestine transplants (LIT). Of the 99 intestine grafts recovered in 2023, almost 96% were transplanted. Outcomes have remained largely unchanged: graft failure rates for intestine-alone transplants at 1, 5, and 10 years were 14.6%, 55.3%, and 70.7%, respectively, and those for combined LIT were 47.4%, 58.6%, and 65.6%, respectively.

Bleeding and Thrombosis

In a retrospective single-center study, Reeder et al examined the perioperative risk of bleeding and thrombosis in 145 intestine-alone, LIT, and multivisceral transplants (MVTs) performed in 138 adult recipients from 2007 to 2023. 35 Intraoperatively and on POD 1, 95% of transplants required blood products, with MVTs requiring the most (median 21 units of packed red blood cells [pRBCs], interquartile range 0-38 units) and intestine-alone transplants the least (median 5 units of pRBCs, interquartile range 2-8 units). Thrombotic events were less common during this early period, with six events. From POD 2 to 92, bleeding and thrombosis were both common: 38.0% (95% CI 30.0-46.0%) of patients experienced major bleeding and 26.1% (95% CI 19.1-33.5%) developed thrombosis. Bleeding events were primarily gastrointestinal, surgical site, or intra-abdominal, with MVTs having the highest bleeding rate (44%) and intestine-alone transplants the lowest (25%). These findings underscore the high hemostatic risk in this population and the need for multicenter research to optimize management strategies.

Intestinal Retransplant

In a retrospective cohort analysis of the United Network for Organ Sharing (UNOS) database, Cogua et al compared outcomes of intestinal retransplantation with those of primary intestinal transplantation from 2010 to 2024. 36 Of 741 patients, 60 were retransplant recipients. Compared with primary transplant recipients, retransplant recipients had higher rates of graft failure (46.7% vs 21.0%, P < .001), acute rejection (18.3% vs 8.7%, P = .014), chronic rejection (23.3% vs 11.2%, P = .006), and infection (5.0% vs 1.2%, P = .019). Given the small number of retransplants performed, further research is needed to identify modifiable risk factors for poor outcomes after intestinal retransplantation.

Liver Transplantation

OPTN/SRTR 2023 Annual Data Report: Liver

In 2023, a total of 10 659 liver transplantations (LTs) were performed in the United States, representing a new record high. Of these, 10 125 (95.0%) were adults and 534 (5.0%) were pediatric recipients. 4 This growth was driven largely by increased utilization of donation after circulatory death (DCD) grafts and older donors, supported by the expanding adoption of machine perfusion technologies, which enhance organ viability and mitigate ischemic injury. The overall non-use rate of recovered liver grafts declined slightly to 9.7%, while 16.7% of adult recipients received DCD grafts, up from 11.3% in 2022. Living donor liver transplantation also increased, accounting for 5.7% of adult and 14.6% of pediatric liver transplants.

A major policy milestone occurred in July 2023 with the nationwide implementation of Model for End-Stage Liver Disease (MELD) 3.0, replacing MELD-Na. MELD 3.0 incorporates female sex and serum albumin and updates the coefficients of other variables, with the goal of reducing sex-based disparities in liver allocation. Following its adoption, the gap in deceased donor liver transplant rates between women and men narrowed (91.4 vs 96.9 transplants per 100 patient-years). However, women continued to experience higher pretransplant mortality (13.9 vs 12.2 deaths per 100 patient-years).

The liver transplant waiting list continued to decline. During 2023, 24 492 adult candidates were listed and 14 747 were removed: 64.5% for deceased donor LT, 3.9% for living donor LT, 6.4% due to death, 6.6% for being too sick to transplant, and 7.5% due to clinical improvement. Waiting times shortened substantially, with 63.8% of recipients transplanted within 90 days of listing. Alcohol-associated liver disease remained the leading indication (41.1%), followed by metabolic dysfunction-associated steatohepatitis (20.3%) and hepatocellular carcinoma (HCC) (10.4%). Despite increasing disease severity at listing, the pretransplant mortality rate remained stable at 12.9 deaths per 100 patient-years, continuing a downward trend observed since 2014.

Use of DCD grafts expanded markedly, comprising 20.1% of all deceased donor livers recovered, compared with 14.1% in 2022 and 6.3% in 2013. Although DCD grafts remained more likely to be unused than donation after brain death (DBD) livers (22.8% vs 6.5%), non-use rates declined for both groups, reflecting increasing confidence in normothermic and hypothermic machine perfusion strategies.

In adults, 804 simultaneous liver–kidney (SLK) transplants were performed, representing 7.9% of all LTs, down from 9.6% in 2017 following implementation of standardized SLK medical eligibility criteria. This decline likely reflects more judicious candidate selection and the impact of the kidney “safety net” policy, which prioritizes kidney transplantation for liver recipients with persistent renal failure after isolated LT. Collectively, these policies have improved kidney allograft utilization and system-wide efficiency.

Post-transplant outcomes continued to improve. Among deceased donor LTs, graft failure occurred in 7.9% at 1 year and 20.7% at 5 years, while patient mortality was 6.5% at 1 year and 19.0% at 5 years—both substantially improved compared with a decade ago. Although recipients of DCD grafts continued to have slightly lower five-year graft survival (75.6%) compared with DBD recipients (79.2%), this gap continues to narrow, likely reflecting broader use of machine perfusion to reduce ischemia-reperfusion injury.

In summary, 2023 marked a pivotal year for LT in the United States. The implementation of MELD 3.0 began addressing long-standing sex-based disparities in access, while rapid adoption of machine perfusion technologies enabled record transplant activity and expanded safe utilization of DCD grafts, key advances poised to shape future allocation strategies and outcomes.

Machine Perfusion

Machine perfusion has gained increasing attention as a strategy to optimize extended criteria donor (ECD) and DCD liver grafts while mitigating ischemia-reperfusion injury (IRI). Several RCTs and systematic reviews published in 2025 have further clarified the relative roles, benefits, and limitations of distinct perfusion modalities.

Hypothermic Oxygenated Perfusion (HOPE)

HOPE has emerged as the most clinically validated preservation strategy in LT, with robust evidence supporting not only short-term graft protection but also durable clinical benefits and cost-effectiveness, particularly in DCD grafts. 37,38 Long-term follow-up and an economic evaluation of a multicenter randomized trial have shown that dual-HOPE provides sustained reductions in biliary complications, and in acute rejection among recipients with immune-mediated liver disease, while lowering first-year costs. 37,38

Van Rijn and colleagues conducted the dual-HOPE-DCD Trial, 37 a multicenter randomized controlled study evaluating long-term outcomes of dual-HOPE in DCD LT. In this trial, 156 DCD LT recipients across six European centers were randomized to dual-HOPE or static cold storage (SCS). The primary endpoint was the incidence of non-anastomotic biliary strictures (NAS) at five years; secondary endpoints included acute cellular rejection (ACR), graft survival, and patient survival. At five years, symptomatic NAS occurred in 14% of recipients in the dual-HOPE group compared with 26% in the SCS group (HR 0.47; 95% CI 0.23-0.99; P = .048). Among recipients with immune-mediated liver disease, no ACR occurred in the dual-HOPE group, compared with 32% in the SCS group (P = .036). Five-year graft survival (82% with dual-HOPE vs 79% with SCS; P = .806) and patient survival (63% vs 70%; P = .254) did not differ significantly between groups. These findings indicate that dual-HOPE confers durable biliary protection, with possible immunomodulatory benefits, without compromising long-term graft or patient survival.

The same group subsequently reported an economic evaluation comparing dual-HOPE with SCS using data from the dual-HOPE-DCD Trial. 38 A total of 119 recipients (60 dual-HOPE, 59 SCS) were included, and costs related to surgery, intensive care, hospitalization, readmissions, and outpatient care within the first post-transplant year were analyzed. Three cost scenarios were modeled: (1) perfusion device and disposables only; (2) perfusion device and disposables plus personnel costs; and (3) perfusion device, disposables, personnel costs, and a dedicated perfusion facility. Mean total medical costs were €126,221 (approximately $139,000) per patient in the SCS group vs €110,794 (approximately $122,000) in the dual-HOPE group, a 12.2% reduction. Cost savings were most pronounced in intensive care (−28.4%) and nonsurgical interventions (−24.3%), reflecting fewer complications and shorter lengths of stay. Dual-HOPE was cost-effective at as few as one procedure per year in scenario 1, or after 25-30 procedures when personnel and facility costs were included. These findings support routine integration of dual-HOPE into DCD LT programs from both clinical and economic perspectives.
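The 12.2% figure can be reproduced from the reported mean per-patient costs (in euros, first post-transplant year); this worked arithmetic is only a check on the summary above, not an additional result:

$$
\text{relative cost reduction} = \frac{126{,}221 - 110{,}794}{126{,}221} = \frac{15{,}427}{126{,}221} \approx 0.122 \;\; (12.2\%)
$$

In absolute terms, this corresponds to a mean saving of roughly €15,400 per patient.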

Systematic Reviews Comparing Machine Perfusion and SCS

Several systematic reviews published in 2025 compared hypothermic, normothermic, and regional perfusion strategies with SCS, providing a comprehensive evaluation of machine perfusion across donor subtypes. Sanha et al performed a systematic review and meta-analysis of HOPE in ECD grafts from donation after brain death donors (DBD-ECD) and in DCD grafts, including 12 studies comprising 1833 recipients, of whom 29% received HOPE and 71% underwent SCS. 39 Compared with SCS, HOPE significantly reduced early allograft dysfunction (EAD) (RR 0.59; 95% CI 0.46-0.76; P < .001), 1-year graft failure (RR 0.56; 95% CI 0.33-0.94; P = .02), retransplantation (RR 0.30; 95% CI 0.12-0.71; P = .007), NAS (RR 0.46; 95% CI 0.27-0.78; P = .004), and Clavien–Dindo grade ≥3 complications (RR 0.64; 95% CI 0.49-0.84; P = .001). In subgroup analyses of DBD-ECD grafts, HOPE was associated with reduced EAD (RR 0.59; 95% CI 0.43-0.82; P = .002) and shorter hospital stay (mean difference [MD] −3.92 days; 95% CI −7.64 to −0.19; P = .04). In DCD grafts, HOPE reduced EAD (RR 0.59; 95% CI 0.40-0.89; P = .01), reduced 1-year graft failure (RR 0.38; 95% CI 0.20-0.73; P = .004), and lowered NAS incidence (RR 0.35; 95% CI 0.18-0.69; P = .002).

Jaber et al conducted a systematic review and network meta-analysis of RCTs comparing HOPE/dual-HOPE, normothermic machine perfusion (NMP), and NMP–ischemia-free LT (NMP-IFLT) with SCS. 40 Twelve RCTs involving 1628 recipients (801 machine perfusion, 827 SCS) were included. Compared with SCS, HOPE/dual-HOPE significantly reduced EAD (RR 0.53; 95% CI 0.37-0.74; P < .001), graft loss (RR 0.38; 95% CI 0.16-0.90; P = .03), and biliary complications (RR 0.52; 95% CI 0.43-0.75; P < .001), and improved 1-year graft survival (RR 1.07; 95% CI 1.01-1.14; P = .02). In contrast, NMP and NMP-IFLT significantly reduced post-reperfusion syndrome (NMP: RR 0.49; 95% CI 0.24-0.96; P = .04; NMP-IFLT: RR 0.15; 95% CI 0.04-0.57; P = .005) but did not significantly affect graft survival, biliary outcomes, ICU or hospital stay, or patient survival.

Viana et al performed a systematic review and meta-analysis comparing NMP with SCS that included 1295 recipients (592 NMP, 703 SCS). 41 No significant differences were observed in 1-year graft survival, patient survival, primary non-function, or serious adverse events. However, in RCT-only analyses, NMP significantly reduced non-anastomotic biliary strictures (RR 0.39; 95% CI 0.17-0.91; P = .03), post-reperfusion syndrome (RR 0.39; 95% CI 0.27-0.56; P < .001), and EAD (RR 0.57; 95% CI 0.36-0.91; P = .02), while increasing organ utilization (RR 1.10; 95% CI 1.02-1.18; P = .01) without prolonging ICU or hospital stay.

Comparative Network Meta-Analysis of Machine Perfusion Strategies

Patrono et al performed a comprehensive systematic review and meta-analysis comparing HOPE, NMP, and normothermic regional perfusion (NRP), with ischemic cholangiopathy and graft survival as primary outcomes. 42 HOPE was associated with a significant reduction in ischemic cholangiopathy (RR 0.50; 95% CI 0.31-0.79; P = .003) and improved graft survival (RR 1.08; 95% CI 1.05-1.11; P < .001), with consistent effects in both DBD and DCD grafts. NMP did not significantly affect ischemic cholangiopathy or graft survival overall, although selected studies suggested potential benefits in higher-risk grafts. NRP, evaluated in retrospective cohort data, was associated with marked reductions in ischemic cholangiopathy (RR 0.10; 95% CI 0.05-0.21; P < .001) and superior graft survival (RR 1.11; 95% CI 1.05-1.17; P < .001) compared with super-rapid recovery in controlled DCD donors.

Ischemia-free Liver Transplantation (IFLT)

Ischemia-free LT (IFLT), in which the donor liver is procured, preserved, and implanted without interruption of its blood supply, is designed to avoid IRI and thereby reduce IRI-related complications. 43 Shen et al conducted a post hoc analysis of the IFLT-DBD RCT to evaluate the impact of IFLT on myocardial injury after noncardiac surgery (MINS) and postoperative pulmonary complications (PPCs). 43 A total of 65 patients were included: 32 underwent IFLT and 33 received conventional LT (CLT). Compared with CLT, IFLT was associated with greater intraoperative hemodynamic stability, characterized by significantly lower vasopressor requirements, smoother changes in pulmonary arterial pressure, and better oxygenation after reperfusion. Although the incidence of MINS did not differ significantly between groups (28.1% vs 45.5%, P = .15), peak troponin T levels were significantly lower in the IFLT group (0.056 ± 0.007 vs 0.088 ± 0.016 ng/mL, P = .04). IFLT was also associated with a lower incidence of PPCs (37.5% vs 66.7%, P = .02) and a shorter duration of mechanical ventilation (median 12.5 vs 18 hours, P < .001). Multivariable logistic regression identified IFLT as an independent protective factor against PPCs (OR 0.30; 95% CI 0.11-0.88; P = .03). The authors concluded that IFLT mitigates myocardial and pulmonary injury by preserving intraoperative hemodynamic stability and eliminating IRI, thereby improving perioperative cardiopulmonary outcomes compared with CLT.
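As a rough illustration of the PPC result, the event counts can be back-calculated from the reported percentages, assuming 12 of 32 IFLT recipients and 22 of 33 CLT recipients (which reproduce 37.5% and 66.7%). The unadjusted odds ratio below is a crude check under that assumption, not the authors' multivariable estimate, although it is of the same magnitude:

$$
\mathrm{OR}_{\text{crude}} = \frac{12/(32-12)}{22/(33-22)} = \frac{12/20}{22/11} = \frac{0.60}{2.00} = 0.30
$$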

In summary, across machine perfusion modalities, contemporary evidence reinforces the pivotal role of advanced graft preservation strategies in modern LT. HOPE, supported by multiple RCTs, has emerged as the most evidence-based approach, demonstrating durable graft protection and cost-effectiveness, particularly in DCD grafts. NMP and NRP further contribute by enabling graft viability assessment, increasing organ utilization, and reducing ischemic cholangiopathy. IFLT represents a next-generation and fully ischemia-free strategy that may redefine perioperative organ protection. Collectively, these advances reflect a paradigm shift toward physiological, mechanism-driven, and increasingly personalized graft preservation in LT.

Coagulation and Transfusion

Coagulation management during LT remains a delicate balance between preventing hemorrhage and minimizing thrombotic complications. Recent RCTs and evidence from meta-analyses 44-47 have refined current practice by clarifying the role of antifibrinolytic therapy, transfusion thresholds, and thromboprophylaxis, with increasing emphasis on individualized, physiology-guided strategies.

Nascimento et al conducted a randomized, double-blind trial evaluating prophylactic antifibrinolysis with epsilon-aminocaproic acid (EACA) during LT. 44 Fifty adult patients were randomized to receive either EACA (20 mg/kg/h from skin incision to the end of surgery; n = 24) or saline placebo (n = 26), with coagulation monitored using rotational thromboelastometry (ROTEM) at predefined intraoperative time points. Compared with placebo, EACA effectively suppressed intraoperative fibrinolysis, particularly during the anhepatic phase (P < .001). However, EACA did not significantly reduce transfusion requirements, including pRBCs (2.42 vs 1.12 units, P = .573), fresh frozen plasma (FFP) (1.79 vs 0.654 units, P = .728), cryoprecipitate (3.87 vs 0.961 units, P = .601), or platelet concentrate (3.25 vs 0.692 units, P = .100). Postoperative outcomes were similarly unaffected. Importantly, no increase in thrombotic or ischemic complications was observed, and early mortality was comparable between groups (three-month mortality: 12.5% in the EACA group vs 23.1% in controls, P = .467). The authors concluded that EACA safely attenuates fibrinolysis during LT, although this hemostatic effect does not necessarily translate into reduced transfusion requirements, supporting its selective use in high-risk patients within a viscoelastic-guided transfusion framework.

Beyond antifibrinolytic therapy, attention has also turned to thromboprophylaxis after LT, where balancing bleeding and thrombotic risks remains equally challenging. Xie et al conducted a multicenter RCT to evaluate the efficacy and safety of postoperative low-molecular-weight heparin (enoxaparin) for thromboprophylaxis after LT. 45 A total of 462 recipients were randomized to receive either enoxaparin (40 mg subcutaneously once daily for 14 days) or placebo. The primary outcome was the incidence of venous thromboembolism, with secondary outcomes including major bleeding, graft loss, and mortality within 30 days post-transplant. Enoxaparin did not significantly reduce the incidence of venous thromboembolism compared with placebo (17.3% vs 21.2%; RR 0.82; 95% CI 0.56-1.19; P = .29). In contrast, major bleeding, defined according to the International Society on Thrombosis and Haemostasis criteria as bleeding resulting in a hemoglobin decrease of ≥20 g/L or requiring transfusion of at least 2 units of whole blood or pRBCs within 24 hours, occurred more frequently in the enoxaparin group (35.5% vs 25.5%; RR 1.39; 95% CI 1.05-1.84; P = .02). Subgroup analysis suggested a potential benefit among recipients with HCC, in whom enoxaparin reduced thrombotic events without increasing bleeding risk (HR 0.44; 95% CI 0.23-0.86; P = .02). The authors concluded that routine enoxaparin prophylaxis does not confer a net clinical benefit after LT, as any modest reduction in thrombotic risk is offset by an increased incidence of bleeding, underscoring the need for individualized thromboprophylaxis guided by patient-specific risk factors rather than universal pharmacologic intervention.
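The trade-off in this trial is easier to see on an absolute scale. The Python sketch below recomputes crude relative risks, risk differences, and the corresponding number needed to treat or harm from the event proportions quoted above; the `Comparison` class is a hypothetical helper, and these unadjusted figures only approximate the trial's own analysis.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Comparison:
    """Crude two-arm comparison from event proportions (hypothetical helper)."""
    outcome: str
    risk_treated: float  # event proportion with enoxaparin
    risk_control: float  # event proportion with placebo

    def relative_risk(self) -> float:
        return self.risk_treated / self.risk_control

    def risk_difference(self) -> float:
        # Positive = more events with treatment (harm); negative = fewer (benefit).
        return self.risk_treated - self.risk_control

    def number_needed(self) -> float:
        # 1 / |risk difference|: NNT if beneficial, NNH if harmful.
        return 1.0 / abs(self.risk_difference())


# Proportions as reported for enoxaparin vs placebo (unadjusted).
vte = Comparison("venous thromboembolism", 0.173, 0.212)
bleeding = Comparison("major bleeding", 0.355, 0.255)

for c in (vte, bleeding):
    print(f"{c.outcome}: RR {c.relative_risk():.2f}, "
          f"risk difference {c.risk_difference():+.3f}, "
          f"number needed {c.number_needed():.0f}")
```

With these inputs the crude RRs come out at about 0.82 and 1.39, in line with the reported values. On an absolute scale, this corresponds to roughly one additional major bleed per 10 recipients given enoxaparin, against roughly one venous thromboembolism avoided per 26 recipients, a reduction that was not statistically significant.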

Beyond individual trials, recent systematic reviews have synthesized emerging evidence on coagulation management strategies during LT. Among these, the most comprehensive analyses have focused on prothrombin complex concentrate (PCC) and viscoelastic testing (VET)–guided transfusion algorithms, reflecting a paradigm shift from empirical component replacement toward individualized, factor-based therapy. In this context, Kojundzic et al conducted a systematic review and meta-analysis evaluating the efficacy and safety of PCC in adult LT. 46 Across eight retrospective studies, PCC administration was typically guided by VET-based algorithms or used as rescue therapy in patients with severe coagulopathy and high MELD scores. Compared with conventional plasma-based management, PCC use was associated with significantly reduced exposure to pRBCs (OR 0.53; 95% CI 0.32-0.86; I² = 0%) and FFP (OR 0.35; 95% CI 0.13-0.92; I² = 50%), without an associated increase in thromboembolic events or mortality. However, study quality was limited by substantial indication bias and residual confounding, as PCC was typically administered to sicker patients (e.g., higher MELD scores and more severe coagulopathy), frequently as rescue therapy, with heterogeneous, non-standardized treatment protocols across studies. The authors concluded that, when judiciously dosed and guided by VET parameters, PCC represents a safe and effective alternative to FFP for correcting intraoperative coagulopathy, while emphasizing the need for high-quality prospective studies to confirm these findings.

Building on this focus on algorithm-based hemostatic management, de Oliveira Júnior et al conducted a broader systematic review examining the impact of VET-guided transfusion algorithms on transfusion practices and clinical outcomes in LT. 47 Seventeen studies were included (15 observational studies and two RCTs), encompassing 1670 patients. Algorithm-guided VET use was associated with a significant reduction in pRBC transfusion compared with non-algorithmic approaches (standardized mean difference [SMD] −0.44; 95% CI −0.62 to −0.25; P < .01). Differences in FFP transfusion (SMD −0.29; 95% CI −0.71 to 0.14; P = .07) and platelet transfusion (SMD −0.28; 95% CI −0.78 to 0.22; P = .27) were not statistically significant. Notably, fibrinogen utilization was higher in VET-guided protocols (SMD 0.51; 95% CI 0.37 to 0.65; P < .01), likely reflecting earlier and more targeted correction of coagulation deficits identified through real-time testing. No significant differences were observed in operative duration (SMD −0.07; 95% CI −0.38 to 0.24; P = .26), intensive care unit length of stay (SMD −0.17; 95% CI −0.36 to 0.02; P = .08), or mortality (OR 0.89; 95% CI 0.64-1.23; P = .48). Overall, the quality of evidence was graded as low to moderate due to heterogeneity in study design and algorithm structure. This review reinforces that while VET offers a rational, individualized framework for transfusion management, its clinical impact depends on standardized, validated algorithms to ensure consistent implementation and meaningful outcome improvement in LT.

Metabolic and Physiologic Optimization During LT

Optimizing intraoperative metabolic and physiological targets is critical for graft function and perioperative outcomes in LT. Recent RCTs have evaluated strategies aimed at improving physiological stability during surgery, including fluid composition and glucose control, providing new insights into metabolic and hemodynamic optimization.

In an open-label, single-center RCT, Yanase et al compared a bicarbonate-buffered crystalloid with Plasma-Lyte in 52 adult patients undergoing LT to assess acid–base stability during reperfusion. 48 The authors hypothesized that a bicarbonate-buffered solution would be non-inferior to Plasma-Lyte in preventing metabolic acidosis, as measured by standard base excess (SBE) 5 minutes after donor liver reperfusion. Median SBE at this time point was −4.86 mEq/L in the bicarbonate group and −4.75 mEq/L in the Plasma-Lyte group, with an estimated median difference of −0.04 mEq/L (95% CI −1.99 to 1.90). Because the lower bound of this interval lay above the prespecified non-inferiority margin of −2.5 mEq/L, non-inferiority was established (one-sided P = .02). No significant differences were observed between groups in intraoperative or early postoperative pH (P = .86), bicarbonate concentration (P = .29), lactate levels (P = .86), or strong ion difference (P = .29). Rates of postoperative complications, acute kidney injury (AKI), and 30-day mortality were also comparable. The authors concluded that bicarbonate-buffered crystalloid was non-inferior to Plasma-Lyte for maintaining acid–base homeostasis following reperfusion and appeared equally safe for intraoperative volume replacement in LT.
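The non-inferiority conclusion rests on comparing the confidence interval with the margin, not on the point estimate alone. A minimal Python sketch of that decision rule follows, assuming (consistent with the reported medians) that the difference is expressed as bicarbonate minus Plasma-Lyte, so that more negative values mean more acidosis with the bicarbonate solution; the function name is hypothetical.

```python
def is_non_inferior(ci_lower: float, margin: float) -> bool:
    """Return True if the CI lower bound lies above the non-inferiority margin.

    Assumes higher values favor the new treatment and that margin is the
    largest acceptable loss, expressed as a negative number.
    """
    return ci_lower > margin


# Reported SBE difference (assumed bicarbonate minus Plasma-Lyte), in mEq/L.
estimate, ci_low, ci_high = -0.04, -1.99, 1.90
margin = -2.5  # prespecified non-inferiority margin, mEq/L

print(is_non_inferior(ci_low, margin))
```

Here the lower bound of −1.99 mEq/L sits above −2.5 mEq/L, so the rule returns True; had the lower bound crossed the margin, the trial would have been inconclusive for non-inferiority even with the same point estimate.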

Duan et al conducted a single-center RCT to determine the optimal intraoperative glycemic target in LT recipients, focusing on early graft function and glycemic variability. 49 A total of 182 adult recipients were randomized to either less intensive glucose management (LIGM; 7.8-10.0 mmol/L) or more intensive glucose management (MIGM; 4.5-6.7 mmol/L) during the pre-anhepatic and anhepatic phases, with both groups managed within a conventional range of 4.1-10.0 mmol/L during the neohepatic and postoperative phases. The incidence of EAD was significantly lower in the LIGM group compared with the MIGM group (10.1% vs 31.2%; RR 0.32; 95% CI 0.11-0.56; P < .001). Recipients in the LIGM group also demonstrated lower peak aspartate aminotransferase levels (456.2 U/L vs 601.4 U/L, P = .04), lower alanine aminotransferase levels (437.7 U/L vs 604.1 U/L, P = .001), shorter hospital length of stay (13 days vs 17 days, P = .001), and higher 1-year graft survival (92.1% vs 80.9%, P = .03). Rates of postoperative infection (16.9% vs 14.6%, P = .68), ACR (1.1% vs 0%, P = .31), and primary non-function within ten days (2.3% vs 8.6%, P = .06) did not differ significantly between groups. The authors concluded that maintaining moderate control of intraoperative glycemia during the pre-anhepatic and anhepatic phases reduces glycemic variability and protects graft function without increasing postoperative infection risk, potentially by attenuating oxidative stress and inflammatory responses associated with IRI. Collectively, these findings suggest that avoiding excessively strict intraoperative glucose control may mitigate IRI and promote early graft recovery following LT.
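For readers accustomed to mg/dL, the glycemic bands in this trial can be converted using the molar mass of glucose (about 180.16 g/mol, so 1 mmol/L ≈ 18.016 mg/dL). A short Python sketch, with band labels chosen here for illustration:

```python
# Convert the glycemic target bands used by Duan et al from mmol/L to mg/dL.
# 1 mmol/L glucose ~= 18.016 mg/dL (molar mass of glucose ~180.16 g/mol).
MG_DL_PER_MMOL_L = 18.016

# Band labels are illustrative; limits are the reported targets in mmol/L.
TARGET_BANDS_MMOL_L = {
    "LIGM (pre-anhepatic/anhepatic)": (7.8, 10.0),
    "MIGM (pre-anhepatic/anhepatic)": (4.5, 6.7),
    "Both groups (neohepatic/postoperative)": (4.1, 10.0),
}


def mmol_to_mg_dl(value_mmol_l: float) -> float:
    """Convert a blood glucose concentration from mmol/L to mg/dL."""
    return value_mmol_l * MG_DL_PER_MMOL_L


for label, (low, high) in TARGET_BANDS_MMOL_L.items():
    print(f"{label}: {mmol_to_mg_dl(low):.0f}-{mmol_to_mg_dl(high):.0f} mg/dL")
```

This gives approximately 141-180 mg/dL for LIGM, 81-121 mg/dL for MIGM, and 74-180 mg/dL for the shared neohepatic and postoperative range.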

Infectious and Renal Complications

Postoperative infectious and renal complications remain major determinants of morbidity and mortality following LT. Recent studies have provided new evidence on risk factors for surgical site infection (SSI) and on strategies for early detection of AKI, underscoring the importance of timely recognition and targeted prevention.

Jin et al conducted a systematic review and meta-analysis to identify risk factors for SSI after LT. 50 Across 18 studies involving more than 9500 recipients, the pooled incidence of SSI was 21%, confirming SSI as one of the most frequent postoperative complications. Significant risk factors included Roux-en-Y biliary reconstruction (OR 2.61; 95% CI 1.95-3.26; I² = 43.4%), graft-to-recipient weight ratio less than 1% (OR 2.19; 95% CI 1.54-2.84; I² = 0%), preoperative hemodialysis (OR 2.99; 95% CI 2.21-3.76; I² = 77.7%), biliary complications (OR 8.26; 95% CI 2.47-14.06; I² = 99.7%), retransplantation (OR 3.76; 95% CI 2.02-5.50; I² = 96.4%), and prior surgical history (OR 3.42; 95% CI 1.06-5.77; I² = 98.6%). Several of these pooled estimates showed substantial interstudy heterogeneity, and definitions of prior surgical history were variable and incompletely reported across studies, warranting cautious interpretation despite the consistent direction of effect. The authors emphasized that technical complexity, biliary pathology, and preexisting renal dysfunction are key contributors to postoperative SSI, highlighting the need for individualized infection prevention strategies in high-risk LT recipients.

Yan et al performed a systematic review and meta-analysis evaluating neutrophil gelatinase-associated lipocalin (NGAL) as an early biomarker for AKI in the perioperative period of LT. 51 Sixteen case-control studies encompassing 1271 recipients were included. Both preoperative and postoperative NGAL levels were significantly higher in patients who developed AKI compared with those who did not (preoperative NGAL: SMD 0.53; 95% CI 0.15-0.91; P < .001; postoperative NGAL: SMD 0.63; 95% CI 0.24-1.03; P < .001). Subgroup analyses demonstrated that postoperative NGAL elevation was most pronounced in plasma (SMD 1.29; 95% CI 0.21-2.38; P = .01) and urine (SMD 0.88; 95% CI 0.18-1.59; P = .04), whereas serum NGAL did not significantly differ between AKI and non-AKI patients (SMD 0.01; 95% CI −0.28 to 0.31; P = .15). Geographic subgroup analyses indicated that European and Asian cohorts exhibited the clearest discrimination. Although heterogeneity across studies was substantial, sensitivity analyses confirmed the robustness of the findings. The authors concluded that NGAL, particularly when measured in plasma or urine, may facilitate early detection of AKI after LT, potentially enabling earlier renal-protective interventions, while noting the need for further studies to define diagnostic thresholds and underlying mechanisms.

Body Composition Impairment

Body composition impairment, encompassing both reduced skeletal muscle mass (sarcopenia) and diminished muscle quality due to fat infiltration (myosteatosis), has emerged as a key determinant of perioperative and long-term outcomes following LT. Recent studies and meta-analyses published in 2025 have further clarified the prognostic significance of these morphologic alterations, demonstrating strong and independent associations with post-transplant morbidity, mortality, and graft-related complications. These findings underscore the importance of systematic body composition assessment as an integral component of risk stratification and perioperative optimization in LT candidates.

Sarcopenia

Akabane et al conducted a comprehensive meta-analysis evaluating the prognostic impact of sarcopenia in LT recipients. 52 Eighteen cohort studies comprising 6297 LT patients were included, with an overall sarcopenia prevalence of 27%. A higher pooled prevalence of sarcopenia was observed among female recipients and patients with Child–Pugh class C cirrhosis. Across studies, sarcopenia was consistently associated with increased post-transplant mortality, with a pooled adjusted HR of 1.55 (95% CI 1.28-1.89) and very low heterogeneity (I² = 3%). This association remained stable across multiple subgroup analyses, including geographic region, diagnostic definitions, donor type, and study quality. Sensitivity analysis excluding cohorts with a high proportion of HCC patients yielded similar results (HR 1.63; 95% CI 1.13-2.35). Survival analyses further demonstrated significantly lower 1-, 3-, and 5-year survival rates among sarcopenic recipients compared with non-sarcopenic patients (1-year: 89.0% vs 91.0%, P = .03; 3-year: 80.3% vs 88.2%, P = .04; 5-year: 72.6% vs 80.7%, P = .03). The authors emphasized that despite variability in diagnostic criteria, sarcopenia consistently predicted worse post-LT outcomes, underscoring the importance of routine assessment during LT candidate evaluation. Importantly, they highlighted that sarcopenia should not serve as an exclusion criterion but rather be considered a modifiable risk factor amenable to targeted preoperative interventions such as nutritional optimization and structured physical rehabilitation.
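To show how a pooled HR and the I² heterogeneity statistic are typically derived, the following is a minimal Python sketch of fixed-effect inverse-variance pooling on the log-HR scale. It makes three assumptions. First, each study's standard error is back-calculated from its reported 95% CI. Second, the three input studies are hypothetical and not drawn from Akabane et al. Third, the authors' actual model (for example, a random-effects model) may differ.

```python
import math


def log_se_from_ci(lower: float, upper: float) -> float:
    """Back-calculate the standard error of log(HR) from a 95% CI."""
    return (math.log(upper) - math.log(lower)) / (2 * 1.96)


def pool_fixed_effect(studies):
    """Inverse-variance fixed-effect pooling of hazard ratios.

    studies: list of (hr, ci_lower, ci_upper) tuples.
    Returns (pooled_hr, ci_lower, ci_upper, i_squared_percent).
    """
    log_hrs = [math.log(hr) for hr, _, _ in studies]
    weights = [1 / log_se_from_ci(lo, hi) ** 2 for _, lo, hi in studies]
    total_w = sum(weights)
    pooled_log = sum(w * y for w, y in zip(weights, log_hrs)) / total_w
    pooled_se = math.sqrt(1 / total_w)
    # Cochran's Q and I^2 quantify heterogeneity beyond chance
    q = sum(w * (y - pooled_log) ** 2 for w, y in zip(weights, log_hrs))
    df = len(studies) - 1
    i2 = max(0.0, (q - df) / q) * 100 if q > 0 else 0.0
    return (math.exp(pooled_log),
            math.exp(pooled_log - 1.96 * pooled_se),
            math.exp(pooled_log + 1.96 * pooled_se),
            i2)


# Hypothetical study-level adjusted HRs with 95% CIs
hypothetical = [(1.5, 1.1, 2.0), (1.7, 1.2, 2.4), (1.4, 0.9, 2.2)]
hr, lo, hi, i2 = pool_fixed_effect(hypothetical)
print(f"Pooled HR {hr:.2f} (95% CI {lo:.2f}-{hi:.2f}); I² = {i2:.0f}%")
```

An I² near 0%, as reported by Akabane et al, indicates that the between-study spread in effect estimates is no larger than expected from sampling error alone.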

Consistent findings were reported in other systematic reviews. Fernandes et al conducted a systematic review focusing specifically on opportunistic computed tomography–based diagnosis of sarcopenia using the psoas muscle index (PMI). 53 Their meta-analysis of 382 patients demonstrated that PMI-defined sarcopenia was associated with approximately fourfold higher odds of post-transplant mortality (OR 4.1; 95% CI 2.42-7.07; I² = 0%), highlighting the prognostic value of standardized CT-derived muscle metrics. By emphasizing PMI assessment at the third lumbar vertebral level, this study underscored the importance of methodological consistency in sarcopenia evaluation.
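PMI is commonly calculated as the combined cross-sectional area of the left and right psoas muscles on a single axial CT slice at the third lumbar vertebra (L3), divided by height squared, giving units of cm²/m². The following is a minimal Python sketch of that calculation. Sarcopenia thresholds vary by study and sex, so the cutoff is a required, caller-supplied parameter; the value in the usage line is a hypothetical placeholder, not a threshold reported by Fernandes et al.

```python
def psoas_muscle_index(left_psoas_cm2: float, right_psoas_cm2: float,
                       height_m: float) -> float:
    """PMI (cm²/m²) = total psoas cross-sectional area at L3 / height²."""
    if height_m <= 0:
        raise ValueError("height must be positive")
    return (left_psoas_cm2 + right_psoas_cm2) / height_m ** 2


def is_sarcopenic(pmi: float, cutoff: float) -> bool:
    """Flag PMI below a study- and sex-specific cutoff (no universal value assumed)."""
    return pmi < cutoff


# Hypothetical measurements and a hypothetical placeholder cutoff
pmi = psoas_muscle_index(left_psoas_cm2=8.0, right_psoas_cm2=8.2, height_m=1.80)
print(f"PMI {pmi:.2f} cm²/m²; sarcopenic: {is_sarcopenic(pmi, cutoff=5.5)}")
```

Because the cutoff is supplied externally, the same function can apply whichever sex-specific threshold a given institution or study has validated.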

In addition, Markakis et al performed a broader systematic review and meta-analysis including 30 studies and 5875 patients, incorporating multiple diagnostic approaches such as skeletal muscle index, psoas muscle area, and PMI. 54 Pre-transplant sarcopenia was associated with significantly increased mortality (RR 1.84; 95% CI 1.41-2.39; I² = 67.3%) and a higher risk of postoperative infections (RR 1.35; 95% CI 1.13-1.62; I² = 0%). Sarcopenia was also linked to increased surgical complications (RR 1.54; 95% CI 1.26-1.90), greater fresh frozen plasma (FFP) transfusion requirements (SMD 0.20; 95% CI 0.04-0.37), and prolonged ICU stay (SMD 0.41; 95% CI 0.17-0.66; I² = 86.1%). By focusing exclusively on patients with cirrhosis undergoing LT, this analysis reinforces the robust and clinically meaningful impact of sarcopenia across multiple post-transplant outcomes.

Myosteatosis

In addition to sarcopenia, myosteatosis—defined as pathological fat infiltration into skeletal muscle—has emerged as another clinically relevant dimension of body composition impairment in LT candidates. Whereas sarcopenia reflects quantitative loss of muscle mass, myosteatosis represents qualitative deterioration of muscle tissue. These conditions frequently coexist, compounding frailty and increasing postoperative vulnerability. Two recent systematic reviews demonstrated the prognostic importance of myosteatosis in LT.

Huang et al conducted a comprehensive meta-analysis including 28 studies and 7068 patients, demonstrating that pre-transplant myosteatosis was a strong independent predictor of mortality (HR 1.69; 95% CI 1.51-1.89; I² = 0%), irrespective of whether myosteatosis was analyzed as a categorical or continuous variable. 55 Myosteatosis was also associated with prolonged ICU stay (MD 7.9 days; 95% CI 2.41-13.30), longer overall hospital stay (MD 2.0 days; 95% CI 0.56-3.53), and a higher incidence of postoperative complications (OR 2.35; 95% CI 1.93-2.87). Notably, effect sizes for myosteatosis were comparable to or greater than those reported for sarcopenia, underscoring its substantial clinical impact.

Similarly, Yang et al performed a separate systematic review including 13 studies and 3351 patients, confirming that myosteatosis was associated with significantly reduced survival at 1, 3, and 5 years following LT (1 year: OR 0.38; 95% CI 0.30-0.47; 3 years: OR 0.43; 95% CI 0.35-0.52; 5 years: OR 0.45; 95% CI 0.34-0.59; all P < .01). 56 In addition, myosteatosis was associated with higher rates of EAD (OR 2.20; 95% CI 1.57-3.07; P < .01) and with prolonged ICU stay (SMD 0.41; 95% CI 0.12-0.70; P < .01) and hospital stay (SMD 0.68; 95% CI 0.50-0.86; P < .01).

Collectively, these studies demonstrate that qualitative muscle deterioration, reflected by intramuscular fat accumulation, provides prognostic information beyond muscle mass alone and should be incorporated as a key component of pre-transplant risk stratification in LT candidates.

Donor Management

Ensuring donor safety remains the foremost priority in living donor liver transplantation (LDLT), in which healthy individuals undergo major hepatectomy solely for the benefit of another. Optimizing perioperative management—including analgesic strategies, metabolic conditioning, and interventions that support hepatic regeneration—is therefore essential to minimizing donor morbidity and accelerating recovery. Multiple RCTs published in 2025 focused on two key dimensions of donor care: postoperative pain control through regional anesthesia techniques and perioperative interventions designed to enhance liver regenerative capacity following hepatectomy. Collectively, these studies provide important insights into improving donor comfort, reducing physiological stress, and promoting rapid restoration of liver function after donation. This section reviews emerging evidence from both domains, highlighting strategies that may further refine contemporary donor management protocols in LDLT.

Donor Pain Management

Ultrasound-guided fascial plane blocks have assumed an increasingly important role in donor pain management, aiming to minimize systemic opioid exposure and associated adverse effects. In this context, Sahin et al conducted a prospective RCT comparing two upper abdominal wall blocks, the external oblique intercostal (EOI) block and the subcostal transversus abdominis plane (TAP) block, in living liver donors undergoing open right hepatectomy. 57 The EOI block, a relatively novel technique, involves deposition of local anesthetic between the external oblique and intercostal muscles to target the lateral cutaneous branches of the thoracoabdominal nerves, thereby providing somatic analgesia to the upper lateral abdominal wall. In this study, 65 donors were randomized to receive either bilateral EOI blocks or bilateral subcostal TAP blocks at the conclusion of surgery, with both techniques using a total of 40 mL of 0.25% bupivacaine. The primary endpoint, cumulative morphine consumption within the first 24 postoperative hours, was similar between groups (EOI vs TAP: 23.5 mg vs 26 mg, P = .08). Pain scores at rest and with movement did not differ significantly throughout the first postoperative day. Secondary outcomes were also comparable, including the requirement for rescue analgesia (P = .29), incidence of postoperative nausea and vomiting (P = .34), and duration of post-anesthesia care unit stay (P = .68). Importantly, overall opioid requirements were low in both groups, and no block-related adverse events were reported. Collectively, these findings suggest that the EOI block provides analgesic efficacy comparable to the well-established subcostal TAP block in living liver donors, while potentially offering practical advantages related to its superficial sonoanatomy and relative ease of performance.

A second RCT conducted by Gungor et al evaluated another ultrasound-guided fascial plane technique, the modified thoracoabdominal nerves block through a perichondrial approach (M-TAPA), for postoperative analgesia in living liver donors. 58 M-TAPA is a relatively novel technique that targets both the anterior and lateral branches of the thoracoabdominal nerves (T6–T12) by depositing local anesthetic beneath the perichondrium of the costal cartilage. This anatomical approach provides broader dermatomal coverage than conventional TAP blocks and is particularly well suited for upper abdominal incisions such as those used in right hepatectomy. In this single-blind RCT, 50 donors undergoing open right hepatectomy were assigned to receive either bilateral M-TAPA in addition to standard multimodal analgesia or standard analgesic care alone without any regional blocks. The primary endpoint, cumulative fentanyl consumption during the first 48 postoperative hours, was significantly lower in the M-TAPA group (median 60 µg vs 120 µg, P = .002), accompanied by a reduced requirement for rescue meperidine (median 0 mg vs 30 mg, P = .048). Both static and dynamic pain scores were consistently lower at all assessed postoperative time points in the M-TAPA group, indicating sustained and clinically meaningful analgesic efficacy throughout the early recovery period. Although a trend toward reduced postoperative nausea was observed in the M-TAPA group, the incidence of other opioid-related adverse effects did not differ between groups, and no block-related complications were reported. Overall, these findings suggest that M-TAPA is a safe and effective component of multimodal analgesia for living donor open hepatectomy, with the potential to substantially reduce perioperative opioid requirements while providing broad and reliable abdominal wall analgesia.

Liver Regeneration After Hepatectomy in Donors

Early restoration of remnant liver volume is essential to ensure donor safety following hepatectomy, and several RCTs published in 2025 have evaluated whether anesthetic or pharmacologic interventions can enhance hepatic regenerative capacity in living liver donors. Because volatile anesthetics undergo hepatic biotransformation and may generate hepatotoxic metabolites, Abhinaya et al hypothesized that isoflurane could impair early liver regeneration compared with propofol. 59 They conducted a single-center RCT comparing isoflurane-based anesthesia with propofol in 60 living donors undergoing hepatectomy. Liver regeneration was assessed using computed tomography volumetry on POD 7 and POD 14, alongside serial measurements of regeneration-related biomarkers and standard liver function tests. Regenerated liver volume did not differ significantly between groups at POD 7 (MD −1.35 cm³; 95% CI −3.92 to 1.22; P = .67) or POD 14 (MD −2.50 cm³; 95% CI −10.72 to 5.73; P = .55), indicating comparable volumetric recovery. However, donors receiving isoflurane demonstrated higher bile acid concentrations on POD 4 (P = .048) and elevated plasma stromal cell–derived factor-1 levels on POD 7 (P = .042). In addition, modest differences in conventional liver function markers were observed in the isoflurane group, including a higher international normalized ratio immediately after surgery (MD −0.11; 95% CI −0.19 to −0.03; P = .006) and lower serum albumin levels on POD 7 (MD 0.29 g/dL; 95% CI 0.02 to 0.56; P = .04). Importantly, these biochemical differences did not translate into clinically meaningful impairment of liver regeneration or donor recovery. The authors concluded that both isoflurane and propofol appear safe for anesthesia in living donor hepatectomy, although propofol may be associated with a slightly more favorable postoperative biochemical profile.

Building on prior findings related to perioperative donor physiology, Yalin et al conducted an RCT to evaluate whether a subcostal TAP block with bupivacaine modulates the inflammatory response in living liver donors undergoing right hepatectomy. 60 Given evidence suggesting potential anti-inflammatory properties of local anesthetics, the authors hypothesized that regional anesthesia might attenuate postoperative cytokine release related to surgical stress, including interleukin (IL)-1, IL-6, and tumor necrosis factor–alpha (TNF-α). In this trial involving 72 donors, bilateral subcostal TAP block did not significantly reduce perioperative plasma concentrations of IL-1, IL-6, or TNF-α compared with general anesthesia alone. Interestingly, plasma bupivacaine concentrations were positively correlated with IL-1 and IL-6 levels at multiple postoperative time points, although the clinical significance of this association remains uncertain. Overall, the findings indicate that subcostal TAP block does not meaningfully alter systemic inflammatory responses in healthy living donors, supporting the view that the primary benefit of regional anesthesia in LDLT is analgesic rather than immunomodulatory.

Kumar et al conducted a single-center, open-label RCT to evaluate whether preoperative oral carbohydrate loading improves metabolic responses and enhances liver recovery in living donors undergoing hepatectomy. 61 Seventy donors were randomized to receive either oral carbohydrate loading with standardized maltodextrin drinks on the night before surgery and two hours preoperatively, or conventional overnight fasting. Carbohydrate loading markedly attenuated perioperative insulin resistance, reducing the Homeostatic Model Assessment of Insulin Resistance (HOMA-IR) by approximately 50% on POD 1 and POD 2 (POD 1: 3.49 vs 6.98; P = .003; POD 2: 2.34 vs 4.88; P = .001). Blood glucose concentrations were also lower in the carbohydrate group on POD 0, 1, and 2 (POD 0: 136.7 vs 175.5 mg/dL; P = .001; POD 1: 94.9 vs 149.1 mg/dL; P = .001; POD 2: 103.6 vs 154.6 mg/dL; P < .001). Although inflammatory markers and overall postoperative morbidity did not differ between groups, donors receiving carbohydrate loading experienced significantly less postoperative nausea (68.6% vs 91.4%; P = .01) and vomiting (23% vs 51.4%; P = .01) and tolerated a soft diet earlier (2.57 vs 3.23 days; P = .002). Notably, functional recovery of the remnant liver was accelerated in the carbohydrate group, as reflected by earlier normalization of serum bilirubin levels (4.31 vs 6.37 days; P = .005). The authors concluded that preoperative carbohydrate supplementation is safe, improves perioperative metabolic stability, and may facilitate faster hepatic functional recovery, supporting its integration into enhanced recovery after surgery (ERAS)-based donor management pathways.
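The Homeostatic Model Assessment of Insulin Resistance (HOMA-IR) is a dimensionless index derived from paired glucose and insulin values. The standard formula is glucose (mg/dL) × insulin (µU/mL) / 405, which is equivalent to glucose (mmol/L) × insulin / 22.5. The following is a minimal Python sketch of that formula and of the relative reduction between groups. The glucose and insulin inputs in the first example are hypothetical. The group HOMA-IR values are those reported by Kumar et al, and the sketch does not reflect how the trial timed or sampled its measurements.

```python
def homa_ir(glucose_mg_dl: float, insulin_uU_ml: float) -> float:
    """HOMA-IR (dimensionless) = glucose [mg/dL] x insulin [µU/mL] / 405."""
    return glucose_mg_dl * insulin_uU_ml / 405.0


def relative_reduction(treated: float, control: float) -> float:
    """Fractional reduction of the treated value relative to the control value."""
    return (control - treated) / control


# Hypothetical paired glucose and insulin values, not taken from the trial
print(f"HOMA-IR: {homa_ir(100.0, 10.0):.2f}")

# Group HOMA-IR values reported by Kumar et al (carbohydrate vs fasting)
for pod, carb, fast in [(1, 3.49, 6.98), (2, 2.34, 4.88)]:
    print(f"POD {pod}: {relative_reduction(carb, fast):.0%} lower with carbohydrate loading")
```

Applied to the reported group values, the relative reduction is about 50% on POD 1 and about 52% on POD 2.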

Preoperative Body Mass Index and Post-LT Outcomes