Abstract

Academic Abstract

A core part of human intelligence is the ability to work flexibly with others to achieve goals. The incorporation of artificial agents into human spaces is making increasing demands on artificial intelligence (AI) to demonstrate and facilitate this ability. However, this kind of flexibility is not well understood because existing approaches to intelligence typically construe intelligence either as an individual-difference trait or as a property of groups. We argue that by focusing on either individual or collective intelligence without considering their dynamic interaction, existing conceptualizations of intelligence limit the potential of people and AI systems. To address this impasse, we propose a new kind of intelligence—socially minded intelligence—that can be applied to both individuals and collectives. We outline how socially minded intelligence might be measured and cultivated within people, how it might be modeled in AI agents, and how it might be applied to other intelligent systems.

Public Abstract

In psychology, “intelligence” is generally understood to be something that either individuals or groups have. However, the extent to which people can make each other more intelligent by working collectively—and the extent to which groups are smarter for having individuals who can think for themselves—is underexplored. Artificial intelligence (AI) research has a similar problem, meaning that artificial agents lack the ability to engage in this kind of intelligence, both with each other and with people. To address this gap in the literature, we outline a new kind of intelligence for psychology and AI—socially minded intelligence—which can be applied to individuals, groups, and artificial agents. We discuss how socially minded intelligence might be measured, improved, modeled in AI agents, and applied to other intelligent systems such as teams consisting of people and AI agents.

Introduction

Imagine the following scenario: You are stranded with a small group of strangers on a deserted island after your intercontinental flight was forced to make an emergency crash landing. Furthermore, imagine that your primary goal—what you want more than anything else—is to get home alive. How might each of the following features of this scenario help you achieve this goal? (A) You have a high intelligence quotient (IQ); (B) you and the other survivors of the crash immediately form a cohesive, yet intellectually diverse group; or (C) you and the other survivors are able to act as individuals, subgroups, or as a single unit depending on the demands of the situation.

Individual perspectives on intelligence would say that Feature A (IQ) will be important, but that Features B and C are irrelevant to understanding your intelligence because they are contingent on other people rather than being individual traits or capabilities. Collective perspectives on intelligence would say that Feature B (group cohesion combined with cognitive diversity) is important for the group’s ability to achieve its goals—but these may not overlap with your goals as an individual. In the present article, we focus on Feature C, which relies on an individual capability (being able to flexibly act as an individual or a group member), but also on other individuals and the social context. Regarding your personal goal of getting home alive, Feature C allows you either to work with the group if they share your goal, or to split off on your own if the group decides they want to stay on the island forever. Moreover, Feature C also contributes something unique beyond Feature B to the group’s collective intelligence, as it allows the group to do such things as split into different foraging and exploration subgroups, or for subgroups to challenge the group’s consensus in decision-making. In short, Feature C—the ability of agents to switch between acting as individuals or as group members depending on the demands of the situation—is a basis for both you and your group to have socially minded intelligence.

We propose that socially minded intelligence is a key feature of intelligence more broadly (i.e., both for individuals and collectives), but that this concept has been neglected in social science and artificial intelligence (AI) research because researchers have tended to focus either on individual intelligence or on collective intelligence without considering their dynamic interaction. In the present article, we address this lacuna by first identifying an appropriate definition of intelligence that can encompass both individual and collective intelligence in different kinds of agents. We then review literature on the intelligence of humans (individuals and groups) and artificial systems (single- and multi-agent) before outlining and defining the interactionist concept of socially minded intelligence and deriving relevant metrics. We conclude by exploring applications of this concept.

Positionality Statement

The authors of this article are all White and hold academic positions at a university in a wealthy, White-majority country (Australia). Their social environment has shaped both their views on intelligence as a concept and the way in which their own “intelligence” has been understood and enabled. Regarding the first point, the first two authors take a social identity approach to psychology (Tajfel & Turner, 1979; Turner et al., 1987), which emphasizes the importance of considering relations between groups and group members in understanding psychological phenomena. This metatheoretical approach was developed explicitly to challenge more individualistic conceptualizations of psychology (see Reicher et al., 2012). Moreover, the third author has worked extensively on topics where the importance of social contexts for individuals is highly relevant, such as social robotics, natural language, and human-centered computing. Accordingly, the specific research backgrounds of the authors have informed their conceptualization of intelligence as a social process in the present article.

Furthermore, throughout their lives, the authors have benefitted from working in a world in which their intelligence has been judged in relation to norms, standards, and metrics that reflect the norms and values of White, wealthy Westerners—particularly as these are framed by a cultural conceptualization of intelligence that sees this as an individual trait existing independently of the social environment. The perceived value of this kind of intelligence within the cultural context they live in has provided the authors with a range of benefits, including career opportunities and a legitimated voice on issues akin to those discussed in the present article. Beyond these privileges, and in line with the broader definition of intelligence used in this article, their intelligence in practice has been amplified by formal and informal assistance from other people, including senior collaborators and students. Their ability to achieve their goals has been facilitated further by groups, organizations, and institutions—lab groups, universities, and funding agencies. These social resources have amplified their intelligence in practice (i.e., goal achievement) beyond their individual abilities. The intention of this article is not just to acknowledge that existing intelligence metrics are often biased (see Gould, 1981; Richardson, 2002; Sternberg & Grigorenko, 2004) but also to capture how intelligence is enabled by the efforts of other people and groups and to make this social component of intelligence visible and distinct.

Defining Intelligence

There are many ways to define intelligence, with this concept having been applied to a diverse range of systems (Sternberg, 2020b). In the present article, we seek to understand the intelligence not only of humans but also of AI systems: “intelligent machines, especially intelligent computer programs” (McCarthy, 2004, p. 2). We focus on both human and artificial intelligence for several reasons. First, these kinds of intelligence are increasingly integrated through the practice of embedding AI in tools and systems (Afroogh et al., 2024; Gillespie et al., 2023), the growing implementation of AI agents within human environments (Casheekar et al., 2024; Gao et al., 2024), and the development of human–AI teaming (O’Neill et al., 2022; Schmutz et al., 2024). Second, there is a deep two-way relationship between psychology and AI, with a long history of the former shaping the latter (Barto et al., 1981; Hebb, 1949; Hinton & Salakhutdinov, 2006; Krizhevsky et al., 2012; Laird, 2012; Legg & Hutter, 2007; McCulloch & Pitts, 1943; Oettinger, 1952; Turing, 1948, 1950; Widrow & Hoff, 1960) and vice versa (Anderson, 1990; Casey & Moran, 1989; Goel & Davies, 2020; Neisser, 1967; Newell & Simon, 1972; Solso, 1988). Third, although these fields have different aims—with psychology generally seeking to explain and facilitate existing intelligence and AI often focusing on creating new kinds of intelligence—both share the same broad conceptualization of intelligence as something that either individuals or groups have independently. Accordingly, both fields share the same blind spot (focusing either on individual or collective intelligence without considering their dynamic interaction)—and therefore the same opportunity that might be addressed simultaneously through conceptual reframing.

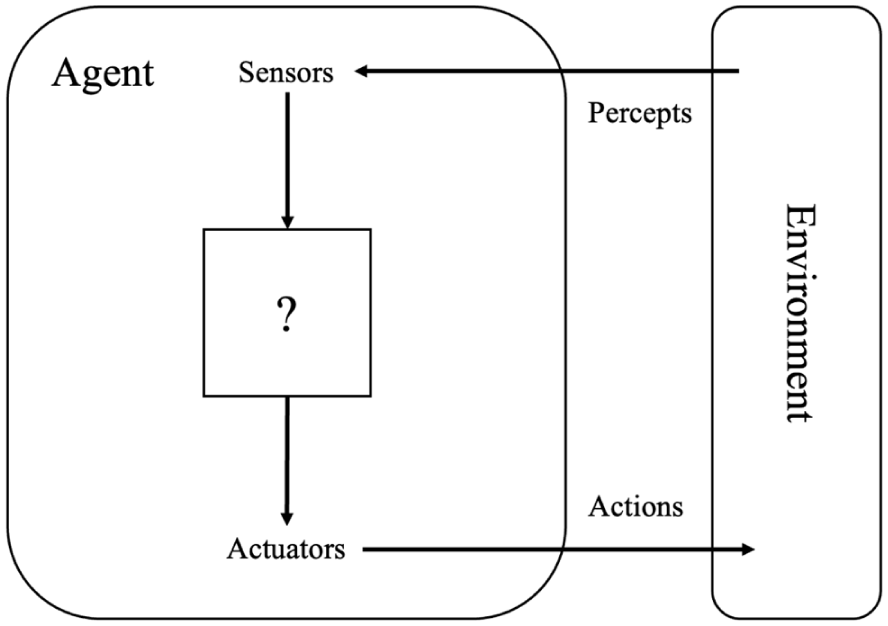

Agent-Based Intelligence

With this scope in mind, we take a perspective on intelligence that is agent-based—where an agent is “anything that can be viewed as perceiving its environment through sensors and acting upon that environment through actuators” (Russell & Norvig, 2021, p. 51). Agent-based approaches have been used successfully to create both single-agent (Singh et al., 2022) and multi-agent (Gronauer & Diepold, 2022) artificial systems. They have also been applied to understand the behavior of both individual people (Scalco et al., 2019) and human groups (Goldstone & Janssen, 2005). Agent-based intelligence is defined by Legg and Hutter (2007, p. 402) as “an agent’s ability to achieve goals in a wide range of environments.” This definition of intelligence is intended to represent “the essence of intelligence in its most general form” (Legg & Hutter, 2007, p. 402) and can therefore be used to understand the intelligence of both people and AI systems. Furthermore, we define “environment,” specifically performance or task environment, as the problem(s) faced by an agent in achieving its goals (following Russell & Norvig, 2021, p. 40).

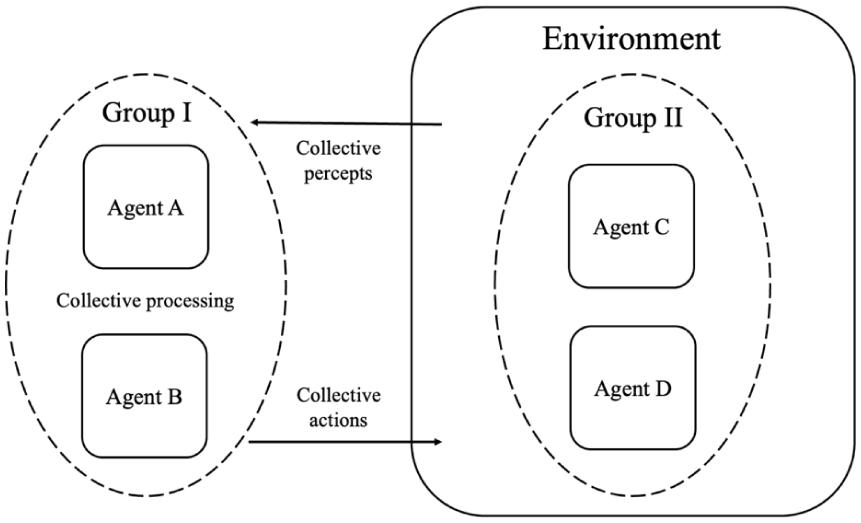

In the present article, we base our definition of intelligence on that of Legg and Hutter but adjust the framing of this in two key ways. First, we broaden the term “agent” to include multi-agent systems (MAS), not just individual agents. Specifically, we propose that because MAS are capable of having higher-level goals (Braubach et al., 2005; Dorri et al., 2018) and have the potential to act in a coordinated manner in their environment (Cao et al., 2013), Legg and Hutter’s definition can be applied to a group of agents to the extent that they (a) have shared higher-level goals and (b) act in their environment as a collective. This conceptualization of groups as agents is similar to that proposed by a number of other researchers (e.g., Bratman, 2013; Malone, 2018).

Second, for our present purpose, we assume that relevant measures of the intelligence of agents and MAS will capture their performance in relation to specific goals (whether these are desired states of the world, or more open-ended drives to maximize or minimize a particular state). In other words, we exclude the process of choosing high-level goals. However, we include the selection of new subgoals as a valid way of achieving existing higher-level goals, so long as the latter still exist to measure the current state of goal attainment. This restriction somewhat limits our definition of intelligence as it applies to humans, as goal setting and goal adaptation are considered to be part of human intelligence (Sternberg, 2020a). We make this simplification in order to be able to apply a similar standard to measuring the intelligence of both humans and agent-based AI systems (which have specified high-level goals in the form of desired states, utility functions, or performance standards; Russell & Norvig, 2021). Indeed, without this exclusion of choosing high-level goals from intelligence, no existing AI system would be considered intelligent (Fjelland, 2020; Goertzel, 2014). An implication of this approach is that the measurement of intelligence requires us to specify the goals that it corresponds to (Malone, 2018).

Taking all the above considerations into account, for the purposes of the present article, our working definition of intelligence extends that of Legg and Hutter (2007) to suggest that intelligence is the ability of an agent or MAS to achieve specific goals in a wide range of environments. Following this definition, a person or group is considered intelligent to the extent that they can achieve specific goals across different environments. This conceptualization aligns with the way that intelligence is understood across the various literatures we are discussing. For example, commonly used individual-difference measures such as IQ scores are intended to predict goal attainment in a variety of domains (Kim, 2008; Kriegbaum et al., 2018; Strenze, 2007). Similarly, collective intelligence metrics are used to predict group goal attainment across different kinds of tasks (Riedl et al., 2021). Regarding AI, an artificial agent or MAS can be said to be intelligent to the extent that it can achieve specified goals (e.g., with reference to performance benchmarks) in a wide variety of environments. Accordingly, our chosen definition can be used to discuss intelligence in all of the different kinds of systems in which we are interested.
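The working definition above can be sketched in code. The following is a minimal illustration only, not a metric proposed in this article: the `Agent` and `Environment` types, the toy task names, and the equal weighting of environments are all assumptions made for the example. An agent (or a MAS treated as a single agent) is scored by its mean goal attainment across a set of environments.

```python
from statistics import mean
from typing import Callable, Mapping, Sequence

# Hypothetical representation: an agent (or a MAS treated as one agent) is a
# table of task -> goal attainment in [0, 1]; an environment scores an agent.
Agent = Mapping[str, float]
Environment = Callable[[Agent], float]


def make_environment(task: str) -> Environment:
    """Toy environment whose goal attainment is read off the agent's table."""
    return lambda agent: agent.get(task, 0.0)


def intelligence_score(agent: Agent, environments: Sequence[Environment]) -> float:
    """Unweighted mean goal attainment across environments (an assumption)."""
    if not environments:
        raise ValueError("at least one environment is required")
    return mean(env(agent) for env in environments)


# Made-up agents for illustration only.
environments = [make_environment(t) for t in ("foraging", "navigation", "signaling")]
specialist = {"foraging": 1.0}  # attains its goal in one environment only
generalist = {"foraging": 0.6, "navigation": 0.6, "signaling": 0.6}

print(intelligence_score(specialist, environments))
print(intelligence_score(generalist, environments))
```

Under these assumptions the generalist scores higher than the specialist, because the score rewards goal attainment across the range of environments rather than peak performance in any one of them.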

Existing Approaches to Human and Artificial Intelligence

Approaches to Understanding Human Intelligence

Researchers in psychology and AI have tended to focus either on individual or collective intelligence, but not on the interaction between these levels of analysis. For example, regarding psychology, there are many theories that conceptualize intelligence in terms of differences between individuals. Some of these theories, such as the Cattell-Horn-Carroll theory (CHC; McGrew, 2009), focus on a single factor of intelligence (“g” in the CHC theory) within a hierarchy of lower-level factors. Other theories focus on specific kinds of intelligence, such as practical intelligence (Sternberg, 2000), emotional intelligence (Salovey & Mayer, 1990), social intelligence (Kihlstrom & Cantor, 2020), or cultural intelligence (Earley & Ang, 2003); or on combinations of intelligences (Gardner, 2011). A common feature of these approaches is that they treat intelligence as an individual-difference trait or capability—that is, as something that an individual person “has an amount of” and that remains fairly stable across contexts. This perspective is epitomized by IQ tests, which ostensibly identify an individual’s level of g, primarily for the purposes of comparing individuals to each other (Byington & Felps, 2010; Richardson, 2002).

In contrast to these individualistic accounts of intelligence, other researchers have focused on collective intelligence. This is generally conceptualized as a group-level variable and has been defined as “groups of individuals acting collectively in ways that seem intelligent” (Malone & Bernstein, 2015, p. 3). For example, Woolley and colleagues (2010) have identified a general factor called “c,” which is analogous to g in the CHC theory of individual human intelligence. Several variables that impact on collective intelligence have been identified, including the abilities of group members (Adair et al., 2013; Woolley et al., 2010), the specific configuration of group members’ traits (Aggarwal & Woolley, 2013; Williams Woolley et al., 2007), collective attention (Aggarwal & Woolley, 2013; Woolley, 2009a, 2009b), group structure (Girotra et al., 2010; Larson, 2009; Malone, 2018; Momennejad, 2021), and intragroup processes (Faraj & Xiao, 2006; Malone et al., 2010; Okhuysen & Bechky, 2009). However, while collective intelligence research points to ways in which a group might be more or less intelligent, it does not specify how acting collectively impacts on the intelligence of group members themselves (in terms of their own individual goals). At the same time, as things stand, it is not clear how the group-level factors that contribute to collective intelligence, such as group structures and intragroup processes, are mediated through individual-level psychological variables such as trust (Costa et al., 2001; De Jong et al., 2016) and social identity (Ellemers et al., 2004; Haslam, 2004b).

Limitations of Existing Approaches to Human Intelligence

Limitations of Individualistic Approaches

Many high-level constructs within human intelligence research (such as g and c), as well as more specific factors (such as processing speed and emotional intelligence), predict variance in performance outcomes (Luo et al., 2006; O’Boyle et al., 2011; Schmidt & Hunter, 2004; Woolley et al., 2010). For example, c was found by Woolley and colleagues (2010) to account for 43% of the variance in performance on a variety of group tasks. Similarly, basic cognitive processes such as processing speed have been found to be positively correlated with scholastic performance (Luo et al., 2006). However, regardless of the construct or chosen outcome, there is substantial variance in performance left unexplained by existing conceptualizations of human intelligence (Fox & Spector, 2000; Luo et al., 2003; Woolley et al., 2010). We propose that additional variance might be accounted for by the ability of a person to solve problems by thinking and acting together with other people. Specifically, we suggest that being able to work with others (and having others to work with) will predict variance in a person’s intelligence beyond that explained by their individual-difference traits and capabilities. Moreover, we propose that the ability of people to work with others in a flexible, context-sensitive way will predict variance in collective intelligence when accounting for existing variables that predict this outcome.
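One conventional way to express a claim about predicting variance "beyond" existing variables is incremental variance explained (the change in R² when a predictor is added to a baseline model). The sketch below is illustrative only and is not an analysis reported in this article: the function names are hypothetical, the numbers are made up, and the two-predictor R² is computed from pairwise Pearson correlations using the standard closed-form identity.

```python
from math import sqrt
from typing import Sequence


def pearson(x: Sequence[float], y: Sequence[float]) -> float:
    """Pearson correlation between two equal-length samples."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / sqrt(sxx * syy)


def r_squared_two_predictors(y, x1, x2) -> float:
    """R^2 of y regressed on x1 and x2, from pairwise correlations."""
    r1, r2, r12 = pearson(y, x1), pearson(y, x2), pearson(x1, x2)
    return (r1**2 + r2**2 - 2 * r1 * r2 * r12) / (1 - r12**2)


def incremental_r_squared(y, baseline, added) -> float:
    """Variance in y explained by `added` beyond `baseline` alone."""
    return r_squared_two_predictors(y, baseline, added) - pearson(y, baseline) ** 2


# Made-up numbers for illustration; not data from any study.
individual_ability = [1, 2, 3, 4, 5, 6]
working_with_others = [2, 1, 4, 3, 6, 5]
goal_attainment = [3, 4, 7, 6, 11, 12]

delta = incremental_r_squared(goal_attainment, individual_ability, working_with_others)
print(f"Incremental R^2: {delta:.3f}")
```

A positive increment in a real data set would be consistent with the proposal above, although establishing it would require appropriate measures, samples, and significance tests that this sketch does not address.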

Existing individual tests of human intelligence inherently reflect the influence of the social environment (Sternberg & Grigorenko, 2004). These tests measure intelligence within existing human social structures, such as organizations, schools, and cultures. However, they either ignore the input of these social structures (as in IQ tests; Richardson, 2002), or use them to fine-tune the testing context (as in the case of successful intelligence as conceptualized by Sternberg, 1999; or in social intelligence as conceptualized by Kihlstrom and Cantor, 2020). In either case, these tests fail to account for the contribution of the social environment itself to a person’s intelligence. For example, regarding the first approach, asking two people from different cultures or socio-demographic backgrounds to complete an IQ test and then comparing their scores fails to account for the variance in their performance that arises from the fact that IQ tests have been designed to predict outcomes within a specific (i.e., Western, white collar) social context (Gould, 1981; Richardson, 2002), and hence the test is likely to undervalue the intelligence of people from other backgrounds (Greenfield, 1997). Practical intelligence methods address this problem by testing more context-specific outcomes (e.g., assessing domain-relevant tacit knowledge; Sternberg, 2020a). However, if a person tends to solve problems with others (regardless of their socio-cultural background), requiring them to perform an intelligence test without those others will also undervalue their intelligence—defined as their ability to achieve their goals in a wide range of environments. In contrast, another person who tends to solve problems by themselves may have their intelligence overestimated since the range of environments evaluated does not include those in which working with others would be advantageous. We propose that the social context should be accounted for in theories and tests of intelligence, and indeed that its influence should be measured as part of intelligence itself.

Limitations of Approaches to Collective Intelligence

The current lack of integration between individual and collective human intelligence limits our understanding of the intelligence of groups and group members in two key ways.

The first is that current conceptualizations of collective human intelligence cannot be used to understand how intelligent a given person is in the practical contexts of everyday life. In particular, while collective intelligence research has identified features of individual group members that can help a group achieve its goals (e.g., task-relevant abilities; Malone & Woolley, 2020), this perspective provides no insight into how working with a group might improve the intelligence of a group member in terms of their own individual goals. For example, factors that contribute to collective intelligence may not predict whether a researcher’s personal goal to write highly cited first-author papers will be better achieved by working with one lab group versus another—as this depends on more than just how effective those lab groups are as collectives. In particular, if one lab group is cohesive but distributes authorship evenly among group members, while another is fragmented but allows the researcher to claim first-authorship for more papers, then the researcher may be better able to achieve their individual goal in the “less effective” group. Similarly, an elite sportsperson playing a team sport may be better able to achieve their personal goal of being acknowledged as the best player in the world on a worse team where they are able to shine individually, rather than on a better team where they are required to play a reduced role. In other words, we agree with Malone and Woolley’s (2020, pp. 793–794) claim that “studying collective intelligence provides a link between cognitive psychology and high-level social, organizational, and economic processes” but observe that this link has tended to be one-way in intelligence research—that is, it focuses on the ways in which individual cognitive psychology impacts on collective processes. The inverse link from social processes to individual psychology is neither imagined nor explored in intelligence research—although it has been explored in other domains (e.g., group-based trust, Cruwys et al., 2021; the impacts of social rituals on cognition, emotion, and behavior, Hobson et al., 2018; social norm change as a function of group communication about prototypes, Hogg & Reid, 2006).

The second problem that arises from focusing on the group level of analysis concerns the lack of clarity around how intragroup dynamics shape collective intelligence (Janssens et al., 2022). In particular, while independent yet integrated decision-making has been highlighted as important for collective intelligence outcomes (Caruso & Williams, 2008; Surowiecki, 2004; Woolley, 2009b), and different kinds of intragroup decision-making structures have been identified (Malone, 2018), the dynamics of these processes in terms of changes over time have not been clearly articulated. For example, the intermediate level of analysis between individuals and groups consists of subgroups that can (and often will) have their own subgoals that may contribute to or hamper the achievement of the overall goals of the group (Cronin et al., 2011). Accordingly, the extent to which group members see themselves and act as a subgroup rather than group members in different contexts needs to be analyzed to determine the ability of the group to function as a whole (Bezrukova et al., 2009; Cooper et al., 2014; Dovidio et al., 2009). In some contexts, group members may benefit the group best by acting more as differentiated individuals rather than as undifferentiated group members (Adelman & Dasgupta, 2019; Packer, 2008). For example, an individual group member going against the group’s consensus (when this consensus is incorrect) may help to avoid problems such as groupthink; and having group members who retain some degree of individuality may allow them to contribute differing viewpoints, potentially increasing the group’s intelligence (Nemeth et al., 2001). Finally, because different decision-making structures are optimal in different circumstances (Malone, 2018), being able to change between these structures may help groups be more intelligent (Lei et al., 2016; Siegel Christian et al., 2014). Existing accounts of collective intelligence do not have an effective way of conceptualizing and predicting these kinds of dynamics.

Approaches to Artificial Intelligence

While there are many subfields and approaches within the field of AI, we propose that there is, broadly speaking, a gap between research into single- and multi-agent AI that mirrors the gap between individual and collective intelligence research in psychology. For example, many tests of AI system intelligence focus on performance against individual human benchmarks (Bringsjord & Schimanski, 2003; Mahoney, 1999). These approaches are examples of “psychological AI,” which focuses on designing systems that think like (individual) humans (Goel & Davies, 2020). More broadly, though, even researchers in AI who do not measure system performance by comparison to individual humans have generally conceptualized the intelligence of artificial systems as an individual-difference variable—that is, as something a given system has an amount of by itself, which is relatively stable and can be compared against other systems across contexts (e.g., Chauhan et al., 2024; Desnoyer & Wettergreen, 2010; Hernández-Orallo, 2017). Moreover, many AI systems have been inspired by ideas from individualistic paradigms within psychology such as associative learning and cognitive psychology (Anderson, 1990; Goel & Davies, 2020; Hinton & Salakhutdinov, 2006; Laird, 2012; R. S. Sutton & Barto, 1981), propagating an implicitly individual model of human intelligence in AI. Accordingly, there is a perspective within AI that implies that the best way to improve a system’s intelligence is to change its internal processes or components in some way (e.g., by improving processing speed, cognitive architecture, neural network size and depth, reinforcement learning, algorithm optimization, and training datasets; Bostrom, 2014; Cong & Zhou, 2023; Russell & Norvig, 2021). In other words, this perspective implies that the way to make AI systems more intelligent (as with people) is to make them smarter as individuals.

A system of cooperating artificial agents (a MAS) is comparable to a human group composed of cooperating group members (Chmait et al., 2016), and the ability of a MAS to achieve its goals can be thought of as its collective intelligence. One approach to designing MAS, inspired by game theory, is to coordinate agents by utilizing utility calculations. For example, this can involve aligning agents’ individual utility calculations to achieve equilibria (Stankovic et al., 2012; Tang & Yi, 2023), optimizing group-level utility (Caillou et al., 2010; Cui et al., 2012; Gronauer & Diepold, 2022; Rădulescu et al., 2019), or combining different types of equilibria-seeking behaviors (Semsar-Kazerooni & Khorasani, 2009; Verbeeck et al., 2002).

Another approach to designing MAS, “swarm AI,” is to construct systems that take inspiration from animals and other non-human biological systems (particularly ants and bees; Chakraborty & Kar, 2017) to produce emergent collective behavior (Askay et al., 2019; Bonabeau et al., 2020; Gray et al., 2018; Innocente & Grasso, 2019; Meng et al., 2016; Neshat et al., 2014). Game-theoretic and swarm approaches can be combined in a single MAS (Hahn et al., 2020). Agents in MAS are generally designed as components, with the aim of making the overall system more intelligent (Russell & Norvig, 2021). This aim is reflected in the assessment of collective artificial intelligence, which tends to focus on the goals of the system as a whole (e.g., Sami, 2020; Sathiyaraj & Bharathi, 2020). Accordingly, MAS agents are often designed to be less individually intelligent than they would be if they were independent agents. As Altshuler (2023, p. 4) puts it, “while designing intelligent swarm systems we must assume (and often even aspire for) having . . . available individual agents that are myopic, mute, senile and rather stupid.” Indeed, the kind of individualized thinking that typifies much human intelligence in groups is seen by some MAS researchers as a threat to coordination (L. Rosenberg & Willcox, 2020). In contrast to the uniform, simple agents in the majority of approaches to MAS intelligence, some studies have looked to investigate individuality, subgroups, and intragroup dynamics within collective systems (Aydin, 2012; Dorri et al., 2018; List et al., 2008). However, such approaches have focused primarily on the impact of these dynamics on collective (not individual) outcomes.

In summary, AI researchers have generally built and tested AI systems either as individual agents or as MAS. In the following section, we discuss some of the limitations of this exclusive focus on either the individual or the collective level of analysis.

Limitations of Existing Approaches to AI

Limitations of Individualistic Agents

Just as the individualistic approach to intelligence poses problems for psychology, it also causes issues for AI. For example, one of the most apparent differences between biological and artificial intelligence is that the former tends to be general and the latter tends to be narrow (Neftci & Averbeck, 2019). This means that while AI systems are often very powerful, they are at the same time fragile and rely on humans to construct and maintain their performance environment, in ways that limit their intelligence in the sense that we have defined it in this article (Brachman & Levesque, 2022). This is most apparent in contexts of use in which AI systems act with a high degree of autonomy in unconstrained settings involving interactions between agents (e.g., self-driving cars and other social robots). Such contexts can present a potentially infinite number of novel challenges outside the scope of any finite set of rules, where a human is not available to simplify the environment.

Progress in such fields, and toward solving the broader problem of “common sense” in AI, has been slow compared with other areas where humans use narrow AI systems as tools (Brachman & Levesque, 2022; Shanahan et al., 2020; Zhu et al., 2020). An individualistic approach to improving general AI (e.g., Bostrom, 2014; Sandberg & Bostrom, 2008; Yampolskiy, 2012, p. 99) assumes that the way to increase the intelligence of an agent is to improve something about that agent itself (e.g., its architecture, model size, reward function, data set). This approach is epitomized in the design of artificial agents, in that other agents are typically considered as part of the problem to solve, rather than a way to solve the problem—unless the agent is explicitly designed to be part of a MAS (e.g., Gronauer & Diepold, 2022). In contrast, most humans have the capacity to form part of a multi-agent team when this is appropriate or required to solve a given problem, and indeed this allows them to do so across varied environments (Malone, 2018; E. A. Smith, 2010; Von Hippel, 2018). In other words, a human who can work with others around them does not necessarily need to change anything about themselves to be better able to achieve their goals. More generally, many other naturally occurring intelligent systems, including animals, bacteria, and even cancers, rely on higher-level cooperation to increase the ability of agents to reach their goals (Capp et al., 2023; Cheney, 2011; Lee et al., 2022; Sachs et al., 2004; Whiten et al., 2021). Along these lines, we propose that individual artificial agents might perform better on benchmarks of goal attainment across different environments if they were able to work with other agents to achieve their own goals when appropriate and/or necessary.

Limitations of Multi-Agent Systems

Existing conceptualizations of intelligence also limit the potential of MAS in two important ways. The first results from the fact that treating collective intelligence as a coordination problem is computationally demanding (Russell & Norvig, 2021). In particular, approaches that aim to coordinate the “selfish” motivations of individual agents by establishing equilibria become intractable as the number of agents expands (Han & Hu, 2020; Papadimitriou, 2015; Wooldridge, 2009). This scalability problem also affects multi-agent reinforcement learning (Gronauer & Diepold, 2022; Mguni et al., 2018). In contrast, human cooperation can scale to hundreds, thousands, or millions of agents (Apicella & Silk, 2019). The second limitation is that, as with models of collective human intelligence, MAS designs tend to be static rather than dynamic, in the sense that the relationships between agents and the groups they belong to do not change in response to circumstances. For example, MAS typically have fixed organizational structures, such as hierarchical (“agents have tree-like relations”) or holonic (“agents are organized in multiple groups which are known as holons based on particular features, e.g., heterogeneity”), each of which is better suited to particular kinds of tasks (Dorri et al., 2018, p. 28,585). By comparison, human cooperation is fluid and context-sensitive (Rand et al., 2011). This means that while a group may have a particular structure at a given time, this is subject to change depending on the context and the task at hand (Hagemann et al., 2012; Stachowski et al., 2009). These two features of human cooperation, scalability and flexibility, help people to solve problems in changing environments in ways that artificial MAS currently cannot.
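One simple way to see why equilibrium-based coordination scales poorly is to count the joint action profiles that such an approach may, in the worst case, need to consider. The sketch below is only an illustration under stated assumptions: every agent is assumed to choose from the same number of discrete actions, and the function name `joint_action_profiles` is hypothetical. It does not reproduce the specific complexity results in the works cited above.

```python
from itertools import product


def joint_action_profiles(n_agents: int, n_actions: int) -> int:
    """Count pure joint action profiles when each of n_agents
    independently picks one of n_actions (illustrative assumption)."""
    return n_actions ** n_agents


# Enumerate a tiny case explicitly to confirm the closed form.
tiny_case = list(product(range(3), repeat=4))
assert len(tiny_case) == joint_action_profiles(4, 3)

# The count grows exponentially with the number of agents.
for n in (2, 5, 10, 20):
    print(f"{n} agents, 3 actions each: {joint_action_profiles(n, 3)} profiles")
```

With three actions per agent, four agents already produce 81 joint profiles, and each additional agent multiplies the count by three.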

In sum, failing to account for the dynamic interaction between individuals and groups leads to both overestimation and underestimation of the intelligence of people and human groups. The lack of such abilities in artificial systems also reduces the design space and hence the potential intelligence of artificial agents and MAS. These, we suggest, are problems that a model of socially minded intelligence can help us address and resolve.

Socially Minded Intelligence

Thus far, our review of the literature on intelligence in individuals and groups (human and artificial) has revealed factors that contribute to individual intelligence (i.e., the ability of individuals to achieve goals) and collective intelligence (the ability of collectives to achieve goals) but it has also identified limitations that result from failing to account for dynamic interactions between these levels of analysis. In this section, we propose a new kind of intelligence to capture these interactions. We call this socially minded intelligence. This kind of intelligence only exists in contexts containing more than one agent.

For a target agent (individual or group) we define socially minded intelligence as the extent to which the agent (for an individual) or component agents (for a group) can flexibly perceive, think, and act alone or collectively towards the goals of the target agent. In contexts with more than one agent, individual agents with socially minded abilities have the potential (but not the requirement) to form an intersubjective “social mind” with others (Turner & Oakes, 1997). Such a social mind is a dynamic assembly of individual agents, each with their own individual goals, who in the present moment define themselves, and act, as group members and work toward shared goals. It is important to note that being able to perceive, think, and act with others requires others to be present—which means that other agents are inherently part of a target agent’s socially minded intelligence.

At the individual level, agents that can flexibly participate in a social mind with others have a theoretically increased potential to achieve their goals across different environments relative to agents that can only operate either as individuals or group members. We make this argument on the basis that a socially minded agent can achieve its goals in environments where this kind of flexibility is required, whereas an agent lacking this intelligence cannot. This, therefore, increases the number of potential problems that can be addressed. For example, a person caught in a violent political protest with the goal of survival who is able to join either the protestors or the authorities (or act as an individual) has more potential avenues for achieving their goal than a person who can only see themselves as a protestor, or only as an individual. Similarly, at the collective level, groups made up of such agents have an increased potential to achieve their goals relative to groups made up of agents that work only as individuals or only as group members. For example, the protestors in the same hypothetical situation have an increased number of future states in which they can achieve their collective goal of social change if they can temporarily act as individuals rather than always having to act as protestors. We argue that a similarly expanded set of goal-achievement states exists for socially minded artificial agents in environments that require joint action for success and that are populated by other agents that can be flexibly friendly, hostile, or neutral.

Indeed, there is evidence from the psychological literature to suggest that socially minded intelligence may have benefits in a range of domains required for intelligence (Malone, 2018), including learning, remembering, sensing, creating possibilities, and deciding on action. For example, individuals can learn more effectively with the help of others (Bentley, 2019; Vygotsky, 1978); other people can act as aids to memory (Lanzi et al., 2017; Momennejad, 2021; J. Sutton et al., 2010); creativity is unlocked in particular social contexts (S. A. Haslam et al., 2013); and decision-making can be improved through interaction with others (Gigerenzer, 2020; Mercier & Sperber, 2018). Collective intelligence might also be improved through the ability of group members to act flexibly as individuals or in subgroups. For example, having group members who can operate with some degree of independence can improve the ability of a group to generate ideas (Bissola & Imperatori, 2011; M. Rosenberg et al., 2022) and to decide on an effective course of action (Juni & Eckstein, 2015; Kameda et al., 2022; Surowiecki, 2004). Moreover, pro-social dissent within groups (whether by individuals or subgroups) can improve collective intelligence by challenging and changing harmful social norms (Packer, 2008) and improving creativity (De Dreu & West, 2001).

In light of the potential for collectives to contribute to individual intelligence and individuals to contribute to collective intelligence, we propose that a new concept of socially minded intelligence might explain unique variance in the performance of individuals and groups. In this sense, we conceptualize socially minded intelligence as a phenomenon that contributes to, rather than constitutes, individual and collective intelligence. In other words, we assume that socially minded individuals and groups have a degree of intelligence (a capacity to achieve goals across different environments) that is independent of socially minded intelligence, and that socially minded intelligence therefore impacts on, rather than constitutes, this capacity. This contribution may be positive (e.g., when an individual forms a social mind with others that moves them toward their individual goal) or negative (e.g., when group members act as two subgroups with goals opposed to those of the group as a whole).

Socially Minded Intelligence for Individual Agents

Given our definition of intelligence as the ability to achieve goals in different environments, socially minded intelligence is formulated differently when viewed from an individual versus a collective perspective, since the goals of these entities may differ. The socially minded intelligence of an individual agent is defined in relation to the individual’s goals as follows:

An agent’s individual socially minded intelligence is the extent to which it can flexibly perceive, think, and act with other agents towards its own individual goals.

An agent with a high level of socially minded intelligence must (a) be able to flexibly perceive, think, and act with other agents using its own social abilities; (b) have other agents in its social environment to perceive, think, and act with; and (c) be able to act toward its own individual goals together with those other agents. In contrast, an agent that lacks socially minded intelligence might (a) be unable to flexibly perceive, think, and act with other agents due to a lack of social capability; (b) not have other agents in its social environment to perceive, think, and act with; or (c) be unable to act toward its own individual goals with those other agents. In other words, the socially minded intelligence of a given agent is a function of the agent itself, other agents, their relationship, and the alignment between agents’ individual and shared goals.

Socially Minded Intelligence for Collectives

We define a group as a social self-category that is superordinate to the individual (following Turner et al., 1987; Wiles et al., 2022). Further, “social self-category” is defined as a social category that is subjectively self-relevant to at least one agent (through explicit self-representation or encoded implicitly); and “superordinate to the individual” means higher-order (i.e., potentially applying to at least two agents). Examples of such groups might be a work team composed of individual team members, a nation made up of citizens, or an emergent “minimal” group based on a single shared characteristic such as shared fate or interests. A group may look like a single individual from the “outside,” but that individual may be subjectively pursuing the goals of a group even above their own individual goals. For example, despite its official disbandment in 1945, the Imperial Japanese Army continued to exist in the mind of Hiroo Onoda, a Japanese soldier who did not surrender after World War II and continued to fight until 1974, including several years completely alone. The superordinate group in this case was the Imperial Japanese Army, of which he was the only member. Nevertheless, this group shaped his behavior such that he attacked others on the basis of being enemy combatants (opponents of this group). This can be contrasted with his subsequent behavior as an individual, and as a representative of a different group (e.g., Japan as a nation in peacetime). Moreover, based on our definition of “group,” individual agents do not need to interact to constitute a group. They do need to share an identity or goals (to the extent that these constitute a superordinate social category), but they do not need to know reflexively that they share these. For example, people can see themselves as fans of a sporting team (a superordinate group) without necessarily interacting or being aware of other fans.

The collective socially minded intelligence of a group is defined in relation to the group’s goals as follows:

A group’s collective socially minded intelligence is the extent to which individual agents in the group can flexibly perceive, think, and act as group members, subgroup members, or individuals towards the group’s superordinate goals.

Accordingly, a group with a high degree of collective socially minded intelligence must be composed of agents that (a) can perceive, think, and act as individuals or group members; (b) can change between these states dynamically; and (c) in doing so can act toward the group or system’s superordinate goals. A group that lacks socially minded intelligence might be composed of agents that (a) can perceive, think, and act either as individuals or group members, but not both; (b) can perceive, think, and act either as individuals or group members but are not able to move between these states in response to the situation; or (c) in flexibly acting as individuals or group members fail to act toward the system’s overall goals or act in ways that work against these goals. Accordingly, the socially minded intelligence of a group is determined by the perceptual, cognitive, emotional, and behavioral flexibility of component agents; and the extent to which this flexibility leads to actions that align the agents with the goals of the group.

There is a fundamental asymmetry to our conceptualizations of individual and collective socially minded intelligence. Specifically, we treat both individual and collective socially minded intelligence as operating through the internal states of individual agents. This is because while an individual agent can be intelligent without a collective, a group cannot be intelligent (in the sense of achieving its goals) without its members. Indeed, a group is only an agent at all to the extent that it has members who can perceive, think, and act together. Moreover, the potential for a group to be an agent in this sense only exists when its members perceive it as a group, which is again mediated through individual states. Accordingly, we refer to the internal states of individual agents, rather than an internal state of the group itself, when discussing collective socially minded intelligence. In other words, we treat collective socially minded intelligence as an emergent property of group members and their relation to the group.

Stable, Variable, and Transferable Components of Socially Minded Intelligence

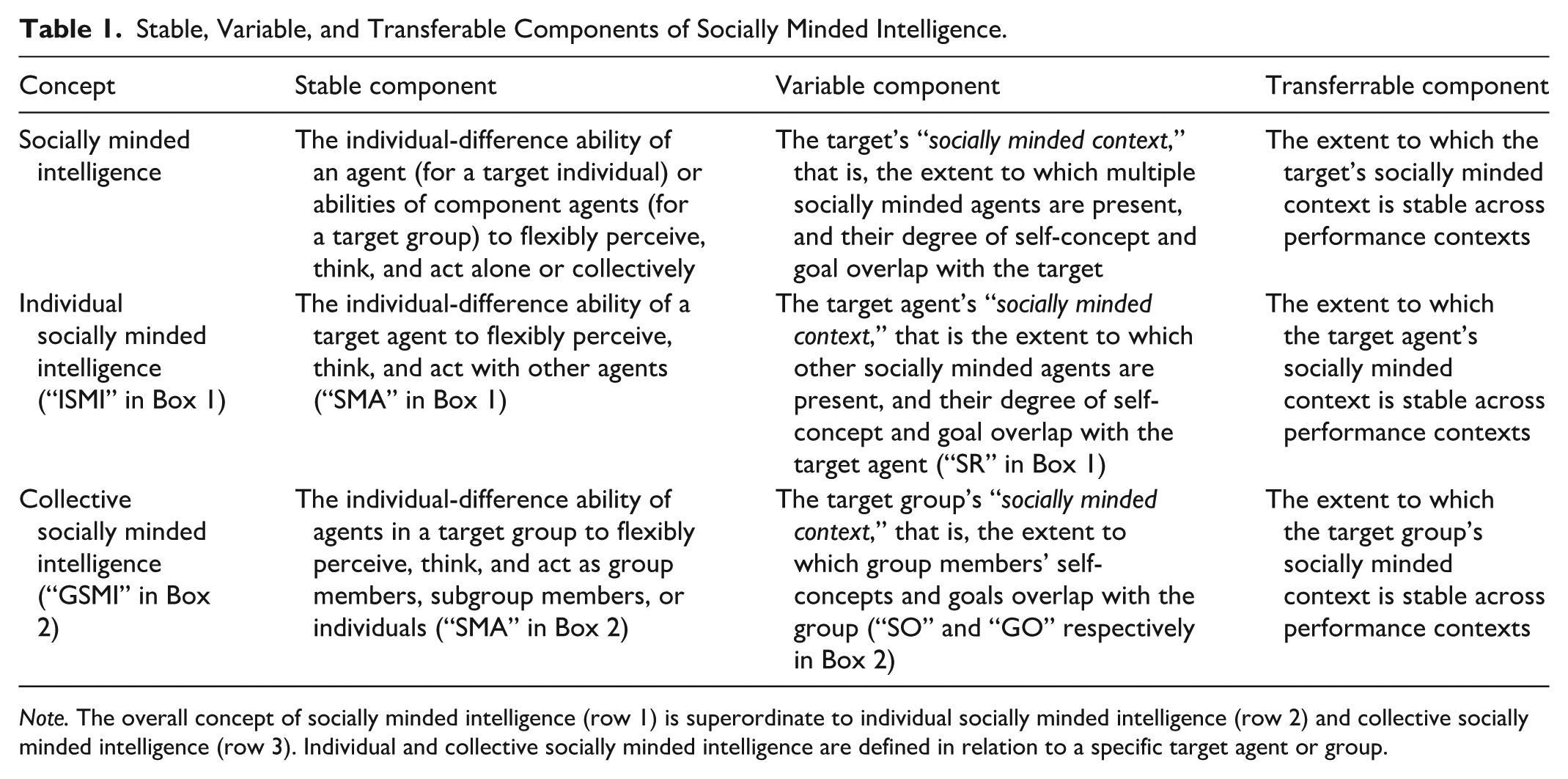

Inherent in the definitions of socially minded intelligence outlined above are two key elements: a relatively stable component (i.e., agents’ capacity to be socially minded that comes from their own individual-difference abilities), and a variable component (i.e., the extent to which other socially minded agents are present, and their self-concept and goal overlap with the target). This variable component can also be called the target agent’s “socially minded context,” and it reflects the extent to which other agents have shifted from being part of the problem to be solved (i.e., part of the performance environment) to being part of problem-solving (i.e., part of the target agent’s ability to achieve its goals). These elements combine in a given context to produce socially minded intelligence, following the dynamic person × situation conceptualization of interactionist social psychology (e.g., Turner et al., 1987). Moreover, there is the potential for a third element that is important for intelligence as we have defined it in this article: a transferable component. This component occurs when a socially minded context remains stable across performance environments, such as when a cohesive human group stays together to solve problems in changing situations. In other words, socially minded intelligence always contains a relatively stable component and a situationally determined component, with the latter potentially being transferable. These components are outlined in reference to the definitions of socially minded intelligence in Table 1.

Table 1. Stable, Variable, and Transferable Components of Socially Minded Intelligence.

Note. The overall concept of socially minded intelligence (row 1) is superordinate to individual socially minded intelligence (row 2) and collective socially minded intelligence (row 3). Individual and collective socially minded intelligence are defined in relation to a specific target agent or group.

The stable component of socially minded intelligence is represented by the individual-difference ability to be socially minded—to be able to flexibly perceive, think, and act with others—present in the target agent (for individual socially minded intelligence) or in the individual agents who make up a group (for collective socially minded intelligence). As an individual-difference ability, this component exists even when only a single socially minded agent is present. However, in such a situation it is not predicted to impact on that agent’s intelligence because it has nothing to interact with. Moreover, even when other socially minded agents are present, it is not the case that this ability will necessarily combine with the social context to increase a person or group’s intelligence, as other socially minded agents may have goals that contradict the target’s, leading to an overall negative effect on their intelligence. Accordingly, this stable component does not represent socially minded intelligence per se. Rather, its impact on intelligence in a given context depends on the variable component, and therefore, it is not attempting to explain the same variance in intelligence as other conceptually stable intelligence constructs such as g and c. Indeed, an individual who always perceives, thinks, and acts with others is not necessarily more intelligent as a result, and may, in some circumstances, be less intelligent. In this sense, their intelligence is more dependent on others—such that we propose that more socially minded agents have a “higher ceiling and lower floor” for their intelligence compared with less socially minded agents.
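The interaction described above can be made concrete with a toy calculation. The following is a minimal sketch under strong simplifying assumptions, not a model proposed in this article: the multiplicative form, the 0 to 1 ability scale, the -1 to +1 goal-alignment scale, and the names `OtherAgent` and `socially_minded_contribution` are all hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass
class OtherAgent:
    ability: float         # hypothetical: socially minded ability, 0 to 1
    goal_alignment: float  # hypothetical: -1 (opposed) to +1 (shared goals)


def socially_minded_contribution(target_ability: float,
                                 others: list[OtherAgent]) -> float:
    """Toy person x situation interaction: the stable component
    (target_ability) multiplies the variable component (context)."""
    context = sum(o.ability * o.goal_alignment for o in others)
    return target_ability * context


allies = [OtherAgent(0.8, 1.0), OtherAgent(0.5, 0.5)]
opponents = [OtherAgent(0.8, -1.0)]

for ability in (0.2, 0.9):
    print(
        f"ability={ability}:",
        "alone", socially_minded_contribution(ability, []),
        "| allies", socially_minded_contribution(ability, allies),
        "| opponents", socially_minded_contribution(ability, opponents),
    )
```

In this sketch a lone agent receives no contribution regardless of its ability, and the more socially minded agent gains more among allies and loses more among opponents, which mirrors the "higher ceiling and lower floor" described in the text.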

Because socially minded intelligence contains a variable component that interacts with the stable component to impact on intelligence, it might be argued that such a construct is too contextual to have predictive value across performance environments. Accordingly, it is important to restate that a socially minded context can be transferable to the extent that it does not change with the performance environment. And, to the extent that it is transferable, the variable component is predicted to impact systematically on the target agent’s intelligence across performance environments, thereby explaining unique variance in the target agent’s ability to solve problems. However, the socially minded context does not have to be stable. Indeed, it is precisely because socially minded context can be transferable—but need not necessarily be—that socially minded intelligence is theoretically context-sensitive in a way that other intelligences are not. In other words, a transferable socially minded context is like a tool that can be picked up and used to solve different problems by socially minded agents, but can potentially be abandoned if it is useless or counterproductive. We explore how some individuals might be better or worse at selectively utilizing this tool (which is different from their ability to use it at all) in the section “Factors Impacting on Socially Minded Intelligence.”

Examples of Socially Minded Intelligence

So far, we have defined socially minded intelligence in abstract terms so that it can be applied to people, AI systems, individuals, and groups. To make the concept more concrete and distinct from other intelligence constructs, we can consider hypothetical examples of what socially minded intelligence might look like. For example, an elite sporting or military team may have a great deal of collective intelligence in their normal sphere of operation—and indeed team members may also have high levels of individual intelligence. However, such a team may not have much individual or collective socially minded intelligence if group members are “fused” (Swann et al., 2009) such that they permanently see themselves in terms of this collective identity (as this would reduce the self-definitional flexibility of group members). Accordingly, such a group may be high performing in its regular performance environment, but both individuals and the group itself may be susceptible to environmental change that requires individual action or action in terms of a different collective identity. For example, members of such a group and the group itself would be predicted to struggle in peacetime, or during ceasefires (for military units); or in the offseason, in other levels of competition (e.g., domestic vs. international), and in retirement (for athletes). If the stable component of socially minded intelligence were greater for such a group, this would predict that the group would find such transitions easier. For example, an elite military team that has high socially minded intelligence would be predicted to be able to disband more effectively during ceasefires or peacetime because members can act as individuals or in terms of other social identities as needed. Moreover, such a group would also be able to psychologically reform as a group quickly if hostilities resume.

On the other hand, a group might have a great deal of socially minded intelligence without a high level of other individual and collective factors that predict intelligence. For example, a group of people with average IQ scores and without a formal structure or task-relevant abilities may nevertheless be able to adapt to a changing environment (for their individual and collective benefit) so long as they have a high level of socially minded intelligence. Here, the extent to which the variable component of socially minded intelligence is both positive and transferable will determine whether an otherwise unremarkable group can flourish in changing environments. Needless to say, a group with low individual, collective, and socially minded intelligence is not predicted to flourish in any circumstance.

The Dynamic Interactionist Metatheory of Socially Minded Intelligence

Socially minded intelligence has a dynamic interactionist metatheory (Turner & Oakes, 1986, 1997), which considers that understanding people and groups requires considering how “the mind (e.g., thoughts, emotions, memory, perception, imagination) and mental functioning are qualitatively transformed . . . through social interaction and shared activities” (Reynolds et al., 2010, p. 466). This is contrasted against an individualistic or reductionist metatheory, “where explanations of such collective phenomena are reduced to asocial, non-contextualised, abstract causes (e.g., temperament, mood, biology, individual differences, limitations and biases of cognition).” The dynamic interactionist metatheory, as represented historically by the social psychology of Sherif (1936), Lewin (1951), Asch (1952), and researchers in the social identity tradition (Tajfel, 1979; Turner & Oakes, 1997), builds on principles of Gestalt cognitive psychology and applies these ideas to cognition about people (Turner et al., 1987). Specifically, this kind of interactionism explains psychological phenomena as resulting from a dynamic interaction between a person and their situation, where neither the person nor their situation is fully independent and can be understood in isolation (Reynolds et al., 2010). Accordingly, socially minded intelligence can be considered dynamically interactionist in that it refers to the part of a person or group’s intelligence that is formed in a given situation, depending on the interaction between qualities of individuals (the stable component) and the dynamic relations between individuals and groups (the variable/transferable component). This interaction constitutes socially minded intelligence, not the components by themselves.

There are several other psychological concepts, traditionally understood using an individualistic metatheory, that can similarly be reconceptualized using a dynamic interactionist metatheory. For example, the “standard theory” of power (Fiske & Berdahl, 2007; Keltner et al., 2003; Raven, 1993) is that it is the capacity to influence other people based on control over outcomes. However, it has been argued (Simon & Oakes, 2006; Turner, 1991, 2005) that this kind of power is better described as “power over,” with another kind of power (“power through,” or “social power”) following not from potential coercion but from the recruitment of human agency via shared identity. A similar analysis has been applied to leadership, reconceptualizing this not as “a solo process but one that is grounded in relationships and connections between leaders and those they influence (i.e., followers)” (S. A. Haslam et al., 2024, p. 3). This social identity approach to leadership (S. A. Haslam et al., 2020) argues that leadership can be understood not only in terms of individual qualities of leaders or their formal position within hierarchies but also in terms of the dynamic interaction between leaders and followers. This is not to say that an identity leader has no agency in their ability to lead, but rather that this ability is contingent on their social context. Similarly, although socially minded intelligence is contingent on the social context, individuals and groups may seek to maintain or increase their socially minded intelligence and transfer this across performance environments. Other psychological phenomena that have been reconceptualized using an interactionist metatheory in a similar way include personality (Reynolds et al., 2010; Turner & Onorato, 1999) and creativity (S. A. Haslam et al., 2013). In considering how intelligence can be determined by the dynamic interaction between individuals and groups, the concept of socially minded intelligence follows this tradition.

Socially Minded Intelligence in People

In this section, we discuss how the concept of socially minded intelligence might be conceptualized, measured, and improved for people and the groups they belong to.

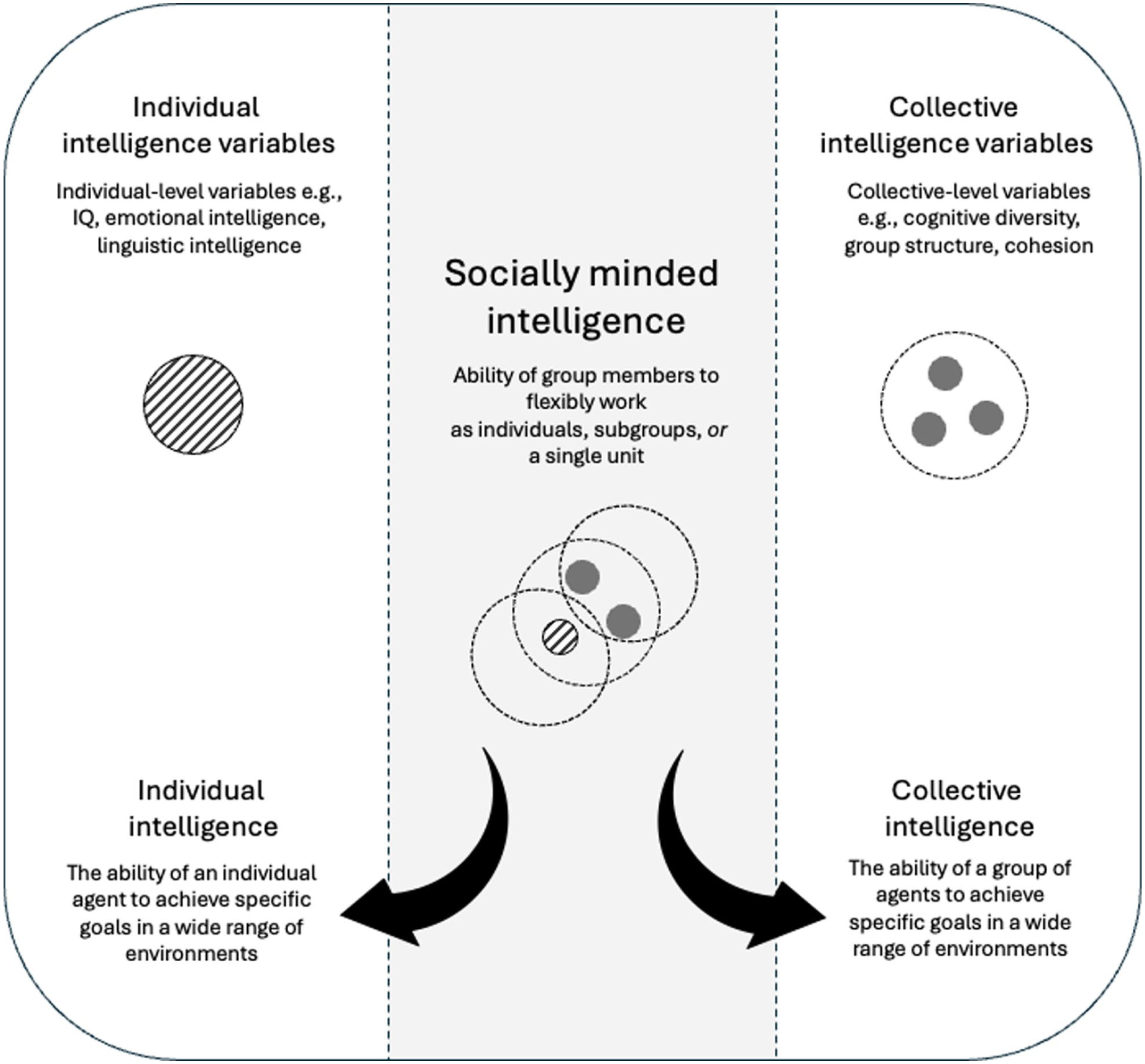

The Relationship Between Individual, Collective, and Socially Minded Intelligence

As previously stated, socially minded intelligence is considered to contribute to, rather than constitute, the intelligence of individuals and collectives. In other words, socially minded intelligence is not individual or collective intelligence per se, but rather an interactive process that contributes to both of these as outcomes. In this sense, socially minded intelligence is similar to individual and collective intelligences (see Figure 1), whether these are unitary concepts such as g or c or more specific intelligences such as linguistic intelligence or logical-mathematical intelligence. The primary difference between these existing intelligences and socially minded intelligence is that the former are generally conceptualized as either individual- or collective-level variables, whereas socially minded intelligence is conceptualized as an interaction between individual and collective-level variables. Indeed, it is because it accounts for the dynamic interaction between individuals and groups that we propose that socially minded intelligence contributes unique variance to individual and collective intelligence beyond that explained by existing intelligences.

Figure 1. Individual, collective, and socially minded intelligence.

Differences from Individual Intelligences

Socially minded intelligence is distinct from the other kinds of individual-level intelligences that we reviewed earlier in this article in that it is not a relatively stable individual-difference variable, but rather a function of individual-difference and contextual inputs. It shares similarities with social intelligence (Conzelmann et al., 2013; Kihlstrom & Cantor, 2020) to the extent that this ability allows an agent to connect with others, but social intelligence by itself is missing the variable/transferable component of socially minded intelligence that allows the latter to vary contextually. Accordingly, a person could have a great deal of social intelligence but not have any socially minded intelligence because they lack others to perceive, think, and act with—or because acting with those others goes against their personal goals. Another similar individual-difference intelligence is Sternberg’s (2003, p. 141) concept of successful intelligence—“the ability to achieve success in life in terms of one’s personal standards, within one’s sociocultural context.” Socially minded intelligence differs from this in conceptualizing the social (although not cultural) context as contributing to intelligence through its dynamic interaction with the individual. Ultimately, because socially minded intelligence is partially contextually determined, it does not provide the same information as existing individual intelligences, such as a person’s ability to achieve goals without working with others. In this sense, it is unfair to use a person’s socially minded ability as an indication of their purely individual ability. Indeed, it is not intended to explain the part of a person’s intelligence that comes from their abilities in isolation. Rather, it is intended to represent a person’s ability to be intelligent toward their own goals with other people in situ.

More broadly, considering how socially minded intelligence might contribute to a person’s individual intelligence enables a reevaluation of the tendency of people to conform or agree with others (e.g., Cialdini & Goldstein, 2004; Hoffman et al., 2001; Wood, 2000). For example, people tend to trust ingroup members more than outgroup members, even when this trust is based on arbitrary groups and even when it can lead to risk-taking behavior (Cruwys et al., 2021; Voci, 2006). In experiments designed to isolate cognition from the influence of the social environment, such tendencies appear to reduce individual intelligence (Clegg et al., 2017; N. Rhodes & Wood, 1992; Zuckerman et al., 2013). However, variables such as ingroup trust, cohesion, and the ability of group members to be influenced by leaders are vital for group dynamics, not least because they enable people to achieve goals collectively (Ellemers et al., 2004; Van Vugt & Hart, 2004; Wilmot & Ones, 2022) and are thus an inherent component of their socially minded intelligence. Accordingly, from a socially minded intelligence perspective, the individualized, static experimental context of psychology studies and intelligence tests is likely to overestimate the extent to which people who follow others are “biased,” rather than optimized for their social world. This prediction is consistent with research showing that heuristics (cognitive shortcuts) that lead to errors in experimental contexts can lead to successful performance in ecologically valid contexts (Gigerenzer, 2020; Oakes et al., 1994). Just as human rationality is less effective when deployed by individuals without the input of other people (Mercier & Sperber, 2018), so human intelligence more broadly appears impoverished when it is removed from its social context.

Differences from Collective Intelligences

Socially minded intelligence also differs from existing factors that contribute to collective intelligence because it is not (a) a fixed quality of group members or a configuration of traits (Adair et al., 2013; Williams Woolley et al., 2007; Woolley et al., 2010); (b) a fixed quality of the group itself (Girotra et al., 2010; Larson, 2009; Malone, 2018; Momennejad, 2021); or (c) a quality of group dynamics explained at the group level (e.g., Faraj & Xiao, 2006; Malone et al., 2010; Okhuysen & Bechky, 2009). Rather, it connects individual qualities (specifically, the ability to be socially minded) with group-level factors through consideration of the psychological relations between group members and the group in a given context. This means that it can explain how intragroup processes such as leadership and competition interact with the psychology of group members to shape collective intelligence dynamically. For example, a socially minded intelligence analysis predicts that a group where group members have a high (vs. low) average level of socially minded ability has the potential for more trust, cohesion, and coordination, but also the potential for less, depending on the salience of individual or subgroup identities and the degree to which their goals overlap with those of the group. In other words, there is no such thing as an inherently intelligent group in a socially minded sense; this depends on the dynamic interaction between individual group members and their socially minded context.

Moreover, socially minded intelligence further differs from other collective intelligence factors in that it can also be used to explain how collectives impact on the intelligence of individuals. For example, an individual group member’s potential to achieve their goals in different environments is predicted to be maximized in a context where they can form a social mind intersubjectively with others—if this social mind is directed toward their own individual goals. In other words, socially minded intelligence links individual psychology to group factors in a contextual, bidirectional way that other collective intelligence factors do not.

Other Differences

Both individual and collective socially minded intelligence also differ conceptually from the motivation or drive to work collectively. In particular, individuals may have a greater or lesser level of socially minded intelligence with the same level of motivation to work collectively, depending on the alignment of their goals with the goals of other group members. Similarly, a group in which individuals are highly motivated to work collectively may not have a great deal of socially minded intelligence if group members have low socially minded abilities.

Furthermore, because socially minded intelligence requires consideration of the target’s goals from the target’s perspective, it cannot be understood as a straightforward extension of the concepts of either individual or collective intelligence. For example, individual socially minded intelligence might potentially be conceptualized as simply expanding individual intelligence to account for the contributions of others. However, the individual socially minded intelligence of group members cannot be used to calculate the socially minded intelligence of the group itself, because the former does not consider the group’s goals in its calculation. Similarly, a group’s socially minded intelligence might be considered an expansion of the concept of collective intelligence in the sense that it includes the aligned contributions of individual group members. However, collective socially minded intelligence does not tell us how much socially minded intelligence a given group member has. Indeed, a group of strongly psychologically invested group members might hypothetically achieve its own goals at the expense of its group members’ individual goal achievement. This further justifies why we have defined individual and collective socially minded intelligence separately.

Measuring Socially Minded Intelligence

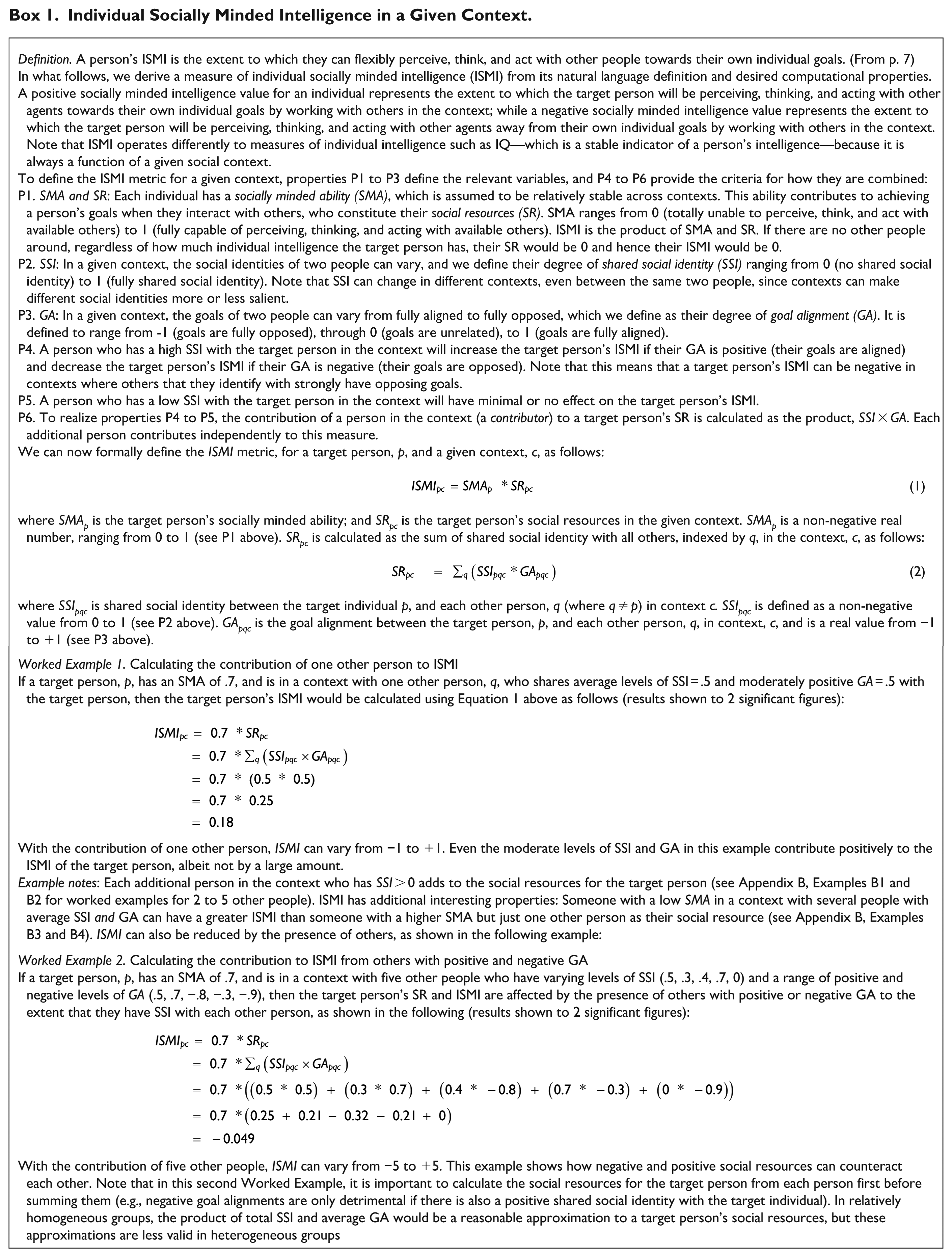

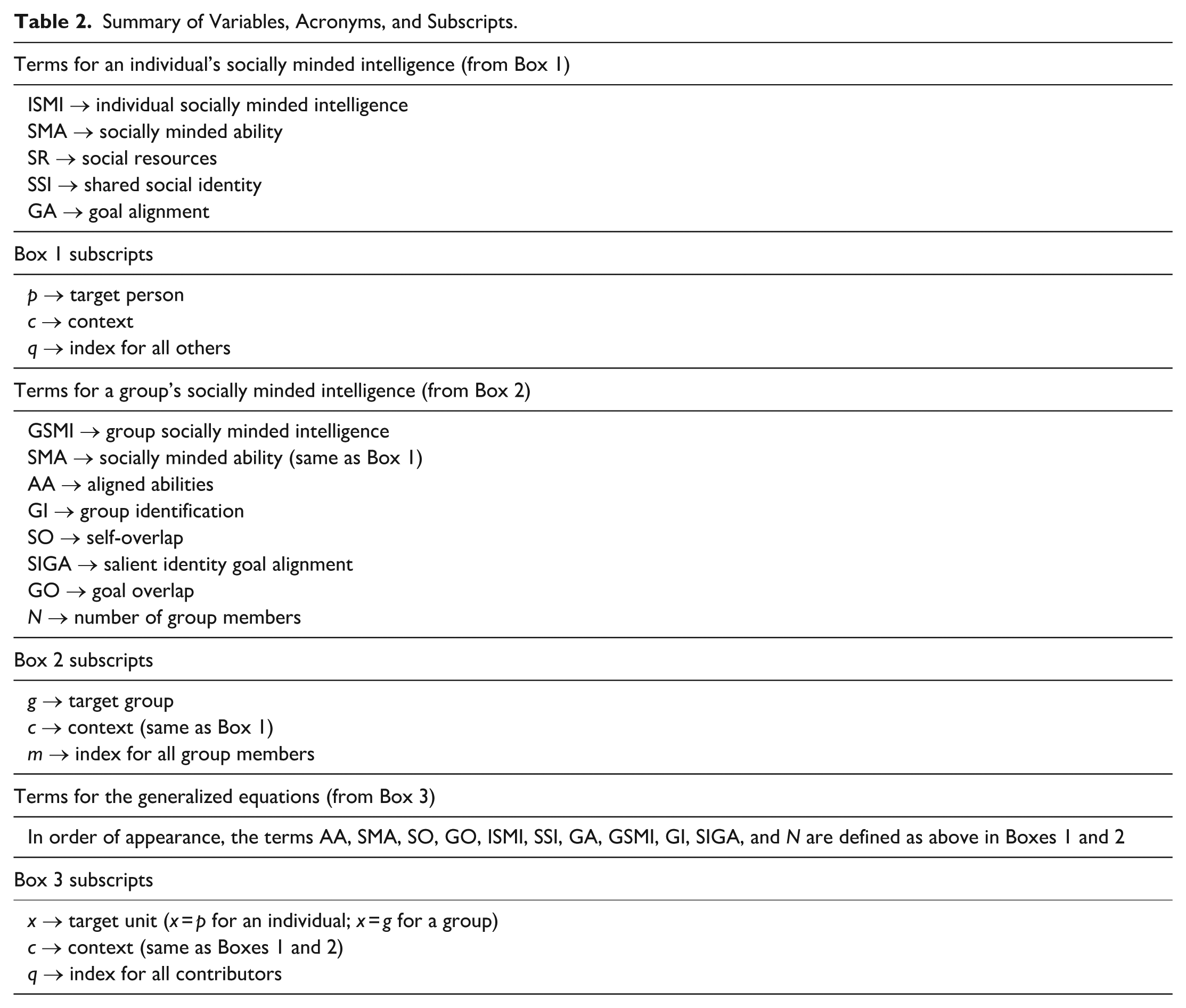

Measuring a person’s socially minded intelligence requires new methods that capture not only their abilities as an individual, but also their social environment. As a starting point, a target person’s ability to perceive, think, and act with others (when such others are available) can be operationalized using measures of their ability to flexibly construe the self in individual or collective terms. This is because seeing the self in collective terms allows individuals to integrate information from others into their perception of the world (Bentley et al., 2017; Coppin et al., 2016; Cunningham & Van Bavel, 2009; S. A. Haslam et al., 1996; Shankar et al., 2013; Van Bavel & Cunningham, 2012; Xiao & Van Bavel, 2012), to selectively incorporate others’ inputs into their own decision-making (Cohen, 2003; Esposo et al., 2013; Fielding et al., 2020; Graupensperger et al., 2021; Turner, 1991; Van Knippenberg et al., 1994; Voci, 2006), and to work with others toward shared goals (Ellemers et al., 2004; S. A. Haslam et al., 2000; S. A. Haslam & Reicher, 2012; Van Knippenberg, 2000; Van Knippenberg & Ellemers, 2003). Critically, in order to have socially minded intelligence, collective self-construal must be flexible, so that individuals are not “stuck” at a particular level of self-construal. One such construct that represents this flexibility is self-categorization ability (Skorich & Haslam, 2022). This and other similar abilities could be combined to form a composite measure of socially minded ability, SMA, representing the target person’s individual-difference traits and skills that are important for socially minded intelligence. SMA is the quantitative operationalization of the stable component of socially minded intelligence.

The social environment of a target person provides two key variables that measure the extent to which other people will contribute toward the target’s socially minded intelligence. The first of these reflects the extent to which each person present shares a sense of social identity with the target person (shared social identity, SSI; e.g., Khan et al., 2015). Because people are more trusting of (Cruwys et al., 2021; Voci, 2006), have better communication with (Greenaway et al., 2015, 2016), and are more well-intentioned toward those with whom they share a sense of social identity (Dovidio et al., 1997; Levine et al., 2005; Tajfel & Turner, 1979), shared social identity can be seen as representing the extent to which a person will perceive, think, and act with available others. The second relevant variable provided by the social environment is the extent to which the target person’s personal goals align with the goals of those they might potentially work with in the current context. One way to quantify this variable is to measure the goal alignment, GA, between the target person and each other person in the context.

The social resources, SR, of the target person combine their shared social identity and goal alignment with each other person in the current context. This term represents how much the potential social power (Simon & Oakes, 2006) represented by shared social identity is enabled by goal alignment. SR is the quantitative operationalization of the target person’s “socially minded context,” that is, the variable (and potentially transferable) component that contributes to their socially minded intelligence in a given situation. A person’s socially minded intelligence (the extent to which they can perceive, think, and act with other agents toward their own individual goals) is therefore a function of their individual-difference socially minded ability and their social resources, which in turn combine shared social identity and goal alignment in that context. This individual socially minded intelligence (ISMI) is the quantitative operationalization of the interaction between the stable component of socially minded intelligence (i.e., SMA) and the variable/transferable component (i.e., SR) in a given situation. Box 1 provides an example of how this measure might be calculated more specifically (see Appendix A for a list of relevant terms).
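To make the structure of this measure concrete, the following is a minimal sketch in Python. It is not the calculation given in Box 1. It assumes, purely for illustration, that SR is the sum over other people of SSI multiplied by GA, and that ISMI is the product of SMA and SR. The class name `OtherAgent`, the function names, and the value ranges are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class OtherAgent:
    """One other person in the target's current context (hypothetical structure)."""
    ssi: float  # shared social identity with the target, assumed scaled to [0, 1]
    ga: float   # goal alignment with the target, assumed scaled to [-1, 1]


def social_resources(others):
    """SR under the assumed form: each person's SSI weighted by their GA, summed."""
    return sum(o.ssi * o.ga for o in others)


def individual_smi(sma, others):
    """ISMI under the assumed form: the stable component (SMA) times the variable one (SR)."""
    return sma * social_resources(others)


# Two other people: one aligned with the target's goals, one opposed to them.
others = [OtherAgent(ssi=0.8, ga=0.5), OtherAgent(ssi=0.4, ga=-0.5)]
sr = social_resources(others)
ismi = individual_smi(0.5, others)
print(f"SR = {sr:.2f}, ISMI = {ismi:.2f}")
```

Under these assumptions, shared identity with someone whose goals oppose the target's subtracts from SR, which matches the idea that goal alignment determines whether the social power represented by shared identity works for or against the target.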

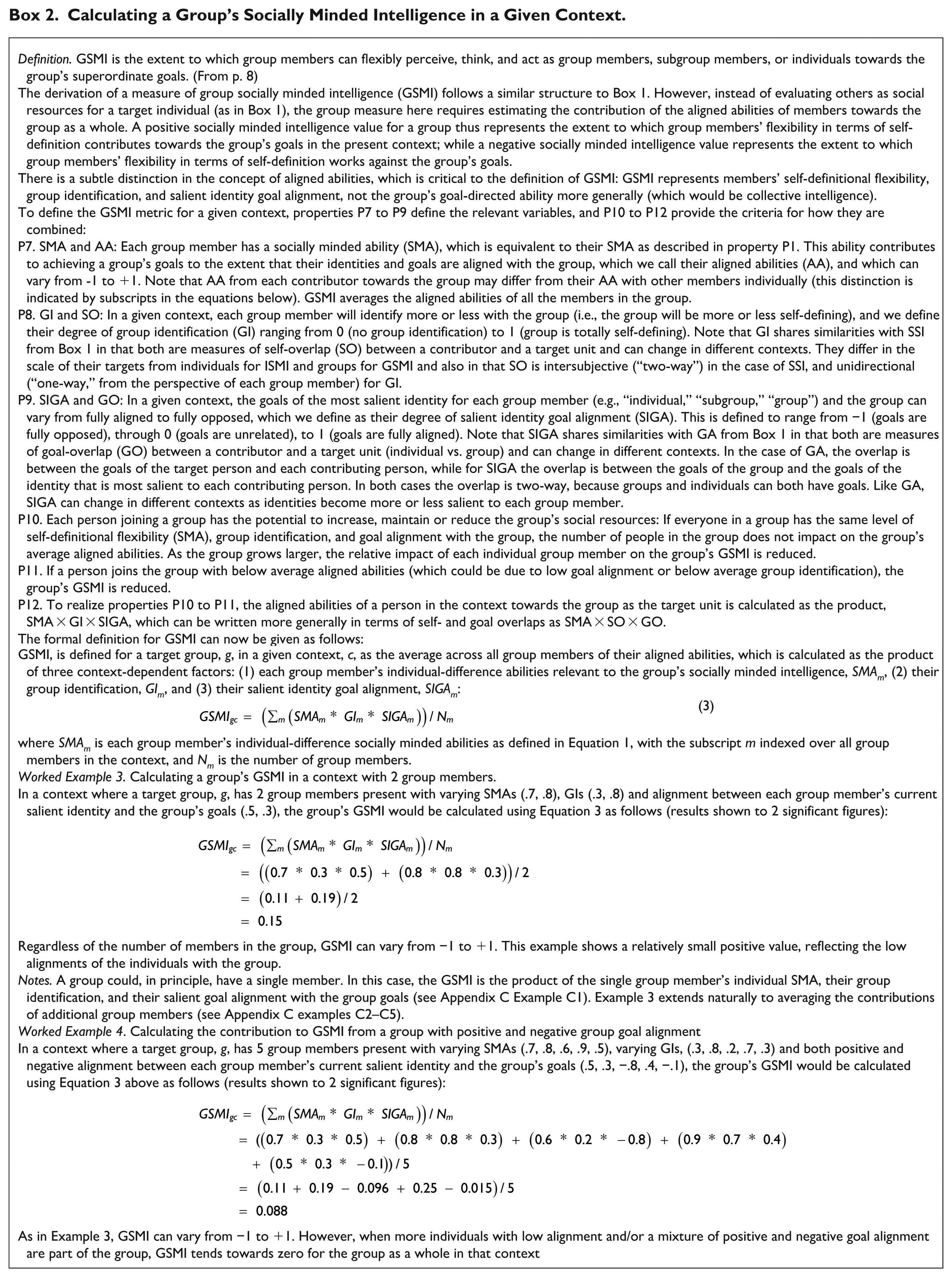

For groups, it is possible to calculate a similar measure of socially minded intelligence (the extent to which each person in the group can flexibly perceive, think, and act as a group member, subgroup member, or individual, and act accordingly toward the system’s superordinate goals). This requires measuring (a) the individual-difference abilities of group members that are relevant to socially minded intelligence; (b) the degree to which the group is self-defining for group members (i.e., “group identification”; Postmes et al., 2013); and (c) the extent to which the goals of the identity that is currently most salient to group members (e.g., individual, subgroup, superordinate group; Forehand et al., 2002) align with the group’s goals. In other words, a group’s socially minded intelligence (GSMI) is a function of group members’ individual-difference socially minded ability, SMA; their group identification, GI; and their salient identity goal alignment, SIGA. As with ISMI, GSMI is the quantitative operationalization of the interaction between the stable (SMA) and variable/transferable components (GI and SIGA) of socially minded intelligence in a particular situation. In this case, however, the target for which socially minded intelligence is calculated is a group rather than an individual agent. Box 2 provides an example of how GSMI might be calculated more specifically in a given context.
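The group-level measure can be sketched in the same spirit. This is not the calculation given in Box 2. It assumes, for illustration only, that each member contributes the product of their SMA, GI, and SIGA, and that GSMI is the sum of those contributions. The class name `Member`, the function name, and the value ranges are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Member:
    """One group member (hypothetical structure)."""
    sma: float   # socially minded ability, assumed scaled to [0, 1]
    gi: float    # group identification, assumed scaled to [0, 1]
    siga: float  # salient identity goal alignment with the group's goals, assumed in [-1, 1]


def group_smi(members):
    """GSMI under the assumed form: sum of SMA * GI * SIGA over all members."""
    return sum(m.sma * m.gi * m.siga for m in members)


# One member whose salient identity serves the group's goals, and one
# strongly identified member whose salient identity works against them.
team = [
    Member(sma=1.0, gi=0.5, siga=1.0),
    Member(sma=0.5, gi=1.0, siga=-0.5),
]
gsmi = group_smi(team)
print(f"GSMI = {gsmi:.2f}")
```

In this sketch the target is the group, so alignment is always judged against the group's goals rather than against any one member's goals.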

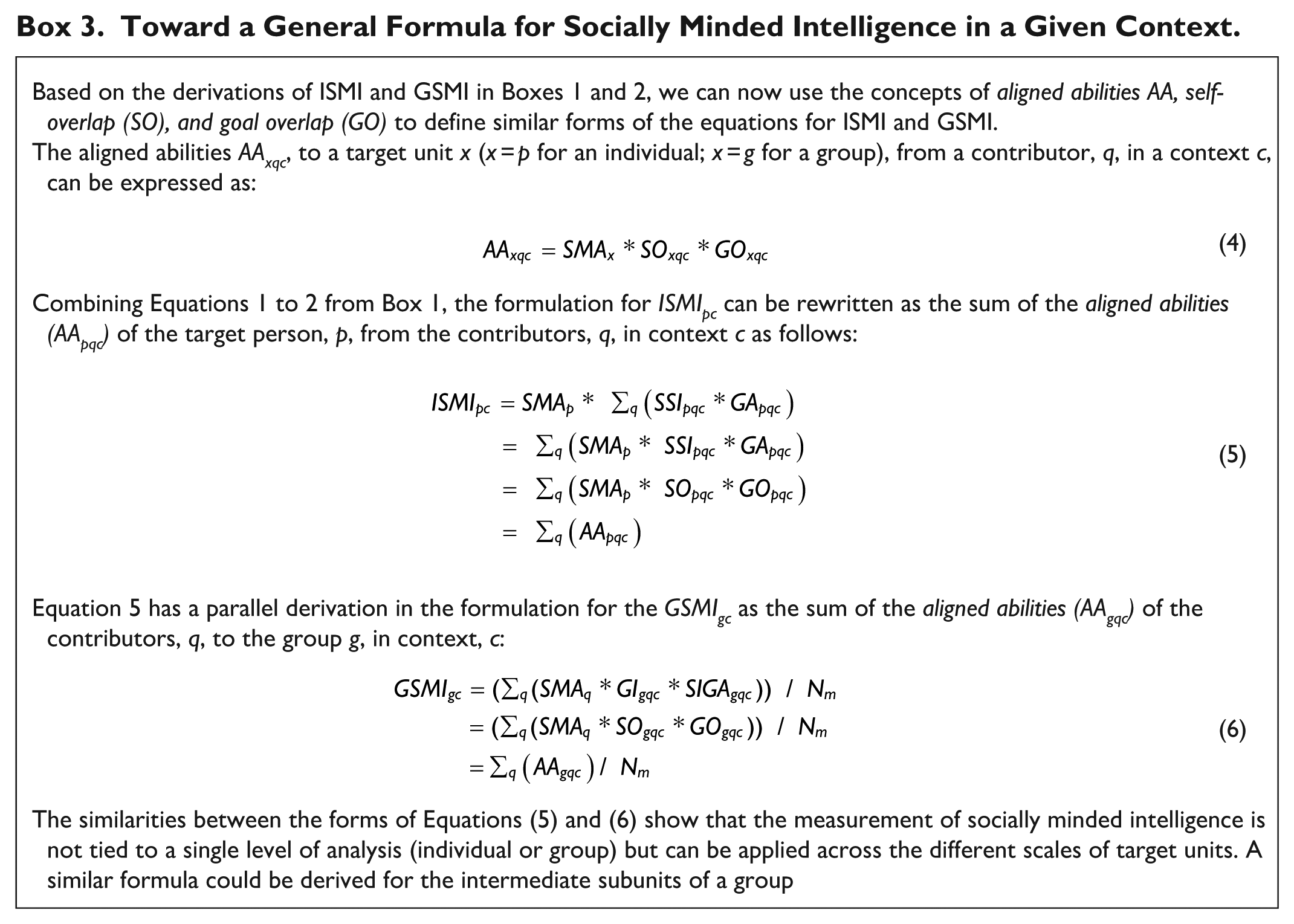

The definitions for ISMI and GSMI derived in Boxes 1 and 2 show a deep mathematical similarity between their formulations. The general concepts of aligned abilities, self-overlap, and goal overlap may also be useful for defining other ways to use the concepts of ISMI and GSMI (see Box 3).

Box 1. Individual Socially Minded Intelligence in a Given Context.

Box 2. Calculating a Group’s Socially Minded Intelligence in a Given Context.

Box 3. Toward a General Formula for Socially Minded Intelligence in a Given Context.

The equations in Boxes 1 to 3 require that socially minded ability, self-overlap (group identification and shared social identity), and goal overlap are quantified in some way. While measures for some of these constructs, such as group identification (Leach et al., 2008; Postmes et al., 2013) and shared social identity (Khan et al., 2015), have been developed, others, such as socially minded ability (which we have argued may be roughly represented by self-categorization ability; Skorich & Haslam, 2022) and the identification of goals and their alignment (Seijts & Latham, 2000; Y. Zhang & Chiu, 2012), require further conceptualization and development.

Factors Impacting on Socially Minded Intelligence

We have previously discussed how socially minded intelligence differs from individual and collective intelligence, broadly speaking, in that it impacts on both of these as outcomes. Moreover, because socially minded intelligence is interactionist (i.e., it is constituted by the dynamic interaction between individuals and social contexts), it is also likely that individual and collective intelligence factors will have an impact on it in turn. We refer here to factors beyond those in the definitions outlined above (and their corresponding measurement equations), drawing primarily from research in psychology given that socially minded artificial agents do not yet exist. For each factor, we outline how these impacts might operate through the terms in the equations for calculating socially minded intelligence (see Boxes 1–3 for explanations of these terms).

Personality Traits

One set of individual factors that may impact on socially minded intelligence consists of personality traits that systematically predispose someone toward working with others. Examples include agreeableness (“individual differences in the motivation to maintain positive relations with others”; Graziano & Tobin, 2017, p. 105) and extraversion (“individual differences in the tendencies to experience and exhibit positive affect, assertive behavior, decisive thinking, and desires for social attention”; Wilt & Revelle, 2017, p. 57). These kinds of “pro-social” factors can be considered as impacting on an individual’s socially minded intelligence by systematically increasing self-overlap across situations, leading to a “higher ceiling but lower floor” for their intelligence (higher and lower possible values of individual socially minded intelligence depending on goal overlap). While we do not predict that this will necessarily lead to higher individual socially minded intelligence, an inclination to work as a group member rather than an individual may predict higher individual socially minded intelligence on average, given the cooperative nature of much of human behavior (Boyd & Richerson, 2009). Indeed, both agreeableness (Wilmot & Ones, 2022) and extraversion (Wilmot et al., 2019) have been associated with positive outcomes for individuals in a range of domains.
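The “higher ceiling but lower floor” idea can be illustrated with a small numerical sketch. It assumes a hypothetical multiplicative form in which the contribution of one partner to ISMI is SMA multiplied by self-overlap and by goal overlap, with goal overlap ranging from -1 to 1. The function name `ismi_range` and all values are illustrative assumptions rather than the equations in the boxes.

```python
def ismi_range(sma, self_overlap, ga_min=-1.0, ga_max=1.0):
    """Return (floor, ceiling) of ISMI for one partner as goal overlap varies.

    Assumes ISMI = SMA * self-overlap * goal overlap (a hypothetical form).
    """
    values = [sma * self_overlap * ga for ga in (ga_min, ga_max)]
    return min(values), max(values)


# A person with low dispositional self-overlap versus a more "pro-social" person.
low_floor, low_ceiling = ismi_range(sma=1.0, self_overlap=0.25)
high_floor, high_ceiling = ismi_range(sma=1.0, self_overlap=0.75)

print(f"low self-overlap:  floor={low_floor:.2f}, ceiling={low_ceiling:.2f}")
print(f"high self-overlap: floor={high_floor:.2f}, ceiling={high_ceiling:.2f}")
```

Under these assumptions, raising self-overlap widens the range of possible outcomes in both directions, so whether the trait helps on average depends on how often goal overlap is positive in the person's environment.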

Individual-Difference Intelligences

Social (Conzelmann et al., 2013; Kihlstrom & Cantor, 2020) and emotional (Kotsou et al., 2019; O’Boyle et al., 2011; Salovey & Mayer, 1990) intelligence may also shape socially minded intelligence to the extent that these improve an individual’s ability to know when being socially minded will increase their own individual intelligence. This may be good for individuals but bad for collectives because of the interdependence of these levels of socially minded intelligence as alluded to in Box 3. Specifically, an individual group member who optimizes their own self-overlap based on their level of goal overlap with others (i.e., higher shared social identity when goal alignment is high, lower shared social identity when goal alignment is low, no shared social identity when goal alignment is negative) will maximize their possible individual socially minded intelligence, but at the same time negatively impact on the group’s socially minded intelligence when the group’s goals differ from the individual’s (i.e., when there is higher group identification when salient identity goal alignment is high, and lower group identification when salient identity goal alignment is low). In other words, making self-overlap contingent on goal overlap gives more power to individuals and less to groups—which may ultimately undermine not only collective intelligence, but also individual intelligence (if other agents stop being socially minded with the “selfish” individual as a result). A promising individual-difference ability for resolving this tension is wisdom, which has been described as the ability to resolve conflicts “among different intrapersonal, interpersonal, and extrapersonal (i.e., group-centric) interests in people’s lives” (Grossman, 2017, p. 234). Wisdom may enable individuals to flexibly use socially minded intelligence in a way that accounts for both individual and collective responsibilities.
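The tension described above can be sketched numerically. The example assumes a hypothetical multiplicative contribution (SMA multiplied by self-overlap and by goal overlap) for both the individual and the group measure, and a hypothetical contingency rule in which self-overlap tracks goal overlap and drops to zero when goal overlap is negative. Neither the rule nor the function names come from the boxes.

```python
def contingent_overlap(goal_overlap):
    """Hypothetical rule: self-overlap tracks goal overlap, and is zero when it is negative."""
    return max(0.0, goal_overlap)


def contribution(sma, self_overlap, goal_overlap):
    """Assumed multiplicative contribution of one relationship to ISMI or GSMI."""
    return sma * self_overlap * goal_overlap


sma = 1.0

# Individual view: a partner whose goals conflict with the target's (GA = -0.5).
fixed_individual = contribution(sma, 0.8, -0.5)
contingent_individual = contribution(sma, contingent_overlap(-0.5), -0.5)

# Group view: a member only weakly aligned with the group's goals (SIGA = 0.25).
fixed_group = contribution(sma, 0.8, 0.25)
contingent_group = contribution(sma, contingent_overlap(0.25), 0.25)

print("individual:", fixed_individual, "->", contingent_individual)
print("group:     ", fixed_group, "->", contingent_group)
```

In this sketch the contingent rule protects the individual from a negative contribution, but the same rule shrinks what a weakly aligned member contributes to the group, which is one way to picture how power shifts from groups to individuals.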

Group Dynamics