Abstract

This meta-analysis examines the effectiveness of a group of peer-mediated instructional approaches (i.e., cooperative learning, collaborative learning, and peer tutoring) at improving literacy outcomes for English language learners (ELLs). Main effects analyses of a sample of 28 experimental and quasi-experimental studies reveal that peer mediation is more effective for ELLs than individualized or teacher-centered comparison conditions (g = .486, SE = .121, p < .001). A number of potential moderators were examined, and two study quality variables proved significant. Grade level was also a significant moderator, with middle school students showing much smaller gains than elementary or high school students. Finally, descriptive analysis of moderators provides tentative evidence that ELLs showed greater gains on word-level outcomes than on text-level outcomes, and that interventions in which peer mediation was one of several tightly woven components were twice as effective as interventions using peer mediation alone.

Introduction

English language learners (ELLs) now comprise approximately 20% of the total U.S. school population (National Center for Education Statistics [NCES], 2011), and because they are the fastest-growing group of students in U.S. schools (Francis, Rivera, Lesaux, Kieffer, & Rivera, 2008; McKeon, 2005), this proportion is likely to increase. Moreover, these culturally and linguistically diverse students are dispersing into regions of the country that have historically enrolled few ELLs (Capps et al., 2005). Already, 60% of all teachers have at least one ELL in their classroom (American Federation of Teachers, 2006).

However, most teachers have little preparation to provide the specialized instruction these students require (Ballantyne, Sanderman, & Levy, 2008; Harper & de Jong, 2009; Menken & Antunez, 2001). For example, few teacher-preparation programs require mainstream teachers to enroll in courses specifically addressing the needs of ELLs, and those that do usually require only one course. Despite the large number of ELLs in U.S. schools, practicing teachers receive almost no professional development aimed at helping them work with ELLs. Consequently, most teachers report feeling unprepared to teach ELLs (Ballantyne et al., 2008).

The rapid growth and dispersion of the ELL population, combined with the general lack of teacher preparation for working with these students, may contribute to ELLs' poor performance on national and state exams. Although the performance gap between ELLs and their English-proficient peers on the Math portion of the National Assessment of Educational Progress (NAEP) narrowed over the previous 10 years, the gap on the Reading portion remained unchanged during that period (Wilde, 2010).

Previous syntheses of research on effective literacy instruction for ELLs (e.g., August & Shanahan, 2006) have concluded that too little experimental research exists to support strong claims about the effectiveness of many specific instructional practices. The authors of the report of the National Literacy Panel on Language-Minority Children and Youth concluded that, in general, the characteristics of effective ELL instruction “overlap with those of effective instruction for nonlanguage-minority students” and “these factors need to either be bundled and tested experimentally as an intervention package or examined as separate components to determine whether they actually lead to improved student performance” (p. 520).

The study reported here addresses the need for evidence to guide efforts to enhance literacy among a growing ELL population. Specifically, this article presents the results of a meta-analysis investigating the overall effectiveness of peer-mediated learning, an approach that has been widely investigated as a means of improving literacy outcomes for ELLs. This synthesis responds directly to the National Literacy Panel’s recommendation to test the effectiveness of particular instructional approaches by examining the evidence available from experimental and quasi-experimental studies. The meta-analysis addresses two research questions. First, main effects analyses provide a weighted estimate of the mean effect across studies to address the question, “Does peer-mediated learning improve literacy outcomes for English language learners?” Second, moderator analyses explore the extent to which measured variables explain heterogeneity of effects, addressing the question, “Under what circumstances is peer-mediated learning effective for ELLs?”

Description of Peer-Mediated Learning

Peer-mediated learning refers broadly to an instructional approach that emphasizes student–student interaction, and it is intended to provide an alternative to teacher-centered or individualistic approaches to learning. In practice, peer-mediated learning includes a variety of approaches, each with distinct supporting literatures. The meta-analysis reported here synthesizes three variations of peer-mediated learning: cooperative, collaborative, and peer tutoring, a distinction used in previous syntheses (e.g., Cohen, 1994; Hertz-Lazorowitz, Kirkus, & Miller, 1992). Nonetheless, there are numerous precedents for treating these theoretically and practically different approaches as similar, though not synonymous, terms (Cohen, 1994; Johnson, Johnson, & Stanne, 2000; Slavin, 1996; Swain, Brooks, & Tocalli-Beller, 2002). 1

Cooperative Learning

Cooperative learning represents what Slavin (1996) calls “one of the greatest success stories in the history of educational research” (p. 43), and he claims that hundreds of control group evaluations have been conducted since the 1970s, with the most common outcome being an increase in academic achievement. Johnson et al. (2000) conducted a widely cited meta-analysis of the effects of cooperative learning on various measures of academic achievement, and the authors note that “cooperative learning is a generic term referring to numerous methods for organizing and conducting classroom learning” (p. 3).

A defining characteristic of cooperative learning is the degree of structure in tasks and students’ roles (Oxford, 1997; Slavin, 1996). In this article, degree of structure is the criterion that distinguishes cooperative approaches from the collaborative approaches described in the next section. In general, cooperative methods emphasize carefully structured groups, and students typically have well-defined roles. For example, in a jigsaw activity (Aronson & Patnoe, 2011), each student in a small group is responsible for mastering one aspect of a topic and for reporting to the group as the designated expert on that aspect. For the group to demonstrate mastery of the material, each member must adequately learn and then convey one aspect of the overall topic.

Collaborative Learning

Some reviews treat cooperative and collaborative methods as essentially identical (e.g., Cohen, 1994). However, the present meta-analysis follows those researchers who see the two approaches as similar but distinct methods for engendering active, student-centered learning (e.g., Hertz-Lazorowitz et al., 1992; Mathews, Cooper, Davidson, & Hawkes, 1995; Oxford, 1997). Essentially, collaborative learning represents a less structured set of approaches to small-group learning, whereas cooperative methods tend to emphasize highly structured student roles and maintain more traditional, teacher-centered distributions of power. In collaborative methods, completing a complex task tends to be the central objective, and students are often left to their own devices to divide the labor, develop relations of power and authority, and navigate task demands.

Peer Tutoring

Peer-tutoring approaches also vary widely (see Goodlad, 1998, for a more detailed discussion), though in general they use older or more academically capable peers to provide one-to-one instruction for struggling learners. Although peer tutoring can occur within grade levels, it is frequently used across grade levels, with older students tutoring younger students. By relying on well-defined roles and structured relationships of power, peer-tutoring approaches share many elements with the more structured cooperative learning approaches. Of course, as with cooperative and collaborative approaches, peer-tutoring methods emphasize peer-to-peer interaction and seek to foster active, rich discussion among all participants.

Theoretical Rationale for Peer-Mediated Literacy Learning

Teacher-dominated instruction is the norm in most classrooms, and ELLs typically struggle in these monologic classrooms (Gutierrez et al., 1995). Even in programs specifically designed for language learners, ELLs are rarely afforded opportunities for active participation. For example, a nationally representative, longitudinal study of three widely used models of ELL instruction in the United States (i.e., Structured-English Immersion, Early-Exit Transitional Bilingual, and Late-Exit Transitional Bilingual) found that in all three models, teachers dominated classroom discourse and students were rarely given opportunities for active learning. In more than half of observed instances, students provided no verbal response at all (Ramirez et al., 1991).

Peer-mediated learning stands in contrast to the teacher-centered instruction ELLs typically encounter in classrooms. It is based on theories of learning that inform student-centered, dialogic instruction: namely, Vygotskian sociocultural theories of language development and psycholinguistic research inspired by Long’s Interaction hypothesis (Long, 1996).

Sociocultural Theories of Language Development

The work of Vygotsky, and of sociocultural researchers who have built on his ideas, provides the primary theoretical rationale for using peer-mediated approaches to improve literacy outcomes for ELLs (Donato, 1994; Lantolf & Thorne, 2006; Lee & Smagorinsky, 2000; Vygotsky, 1978). Vygotsky claims that thought is fundamentally social, and he argues that every aspect of cognitive development appears first in social interaction. This intrinsically social view of cognition shapes his account of learning and teaching and gives rise to one of the best-known but most widely misunderstood Vygotskian constructs: the zone of proximal development (ZPD). In its original sense, the ZPD refers primarily to child development mediated by an adult or more proficient other; it is the difference between what a child can accomplish unassisted and what the child can accomplish with assistance from a more proficient other. From a Vygotskian perspective, unassisted performance reveals what has already been mastered in past development, and assisted performance indicates what is in the process of being mastered in current development.

The assistance a more proficient other offers a learner is typically called scaffolding, a term popularized by Wood, Bruner, and Ross (1976). Initial articulations of scaffolding focused on the ways adults or experts supported children through utterances, gestures, and facial expressions. More recently, second language (L2) scholars have argued that peers can also provide scaffolding (Donato, 1994; Lantolf, 2000; Swain & Lapkin, 1995): working together in collaborative groups, students can scaffold one another to co-construct a performance that exceeds what any of them would have produced individually. By suggesting that the social processes inherent in spoken interaction during collaborative activity might improve individual performance, Vygotskian constructs such as mediation and scaffolding provide the basic theoretical framework for the term peer-mediated learning used in this study.

Psycholinguistics and the Interaction Hypothesis

Work conducted in the field of second language acquisition (SLA) represents another theoretical rationale for the use of peer-mediated methods with language learners. Drawing on the work of Long (1981, 1996; see also Gass & Mackey, 2006; Pica, 1994) and his Interaction hypothesis, a line of psycholinguistic research suggests that language learning is especially effective during interaction. These researchers claim that interactionally modified language input coupled with purposeful language output improves language learning outcomes in ways that extensive language input alone cannot. Moreover, these researchers argue that interaction focuses learners’ attention on language and increases motivation for language learning. This contrasts with a view of SLA that suggests that the process is largely subconscious and driven almost entirely by the amount and availability of comprehensible input and that emphasizes reading and listening comprehension during instruction (e.g., Krashen & Terrell, 1983).

Taken together, these two theoretical perspectives suggest that students engaged in interaction can scaffold each other toward better language and literacy performance than typical, teacher-dominated or individualistic approaches allow. In particular, second language learners struggling to master the academic demands of schooling in the United States at the same time that they are learning the language may especially benefit from instruction that targets oral language and literacy development simultaneously.

Empirical Rationale for Peer-Mediated Learning

This study addresses two primary research questions. The first, a main effects question, asks whether peer-mediated learning is effective at improving literacy outcomes for English learners; the second, a moderator question, asks which variables explain variability in effects. Both questions address issues raised by previous syntheses.

Does Peer-Mediated Learning Improve Literacy Outcomes for ELLs?

Previous syntheses of qualitative, descriptive research (Genesee, Lindholm-Leary, Saunders, & Christian, 2005; Gersten & Baker, 2000) and of quantitative, experimental research (August & Shanahan, 2007; Cheung & Slavin, 2005) on promising instructional practices for ELLs suggested that cooperative and collaborative learning were important components of classroom instruction that improves language, literacy, and academic learning for culturally and linguistically diverse students. Nonetheless, no previous meta-analysis has examined the effectiveness of peer-mediated learning at improving literacy outcomes for ELLs, and the National Literacy Panel’s search yielded too few high-quality experimental studies even to compute a mean effect size.

Previous meta-analyses of peer-mediated methods report consistently positive effects relative to individualistic and teacher-driven approaches (Johnson et al., 2000; Johnson, Maruyama, Johnson, & Nelson, 1981; Rohrbeck, Fantuzzo, Ginsberg-Block, & Miller, 2003; Roseth, Johnson, & Johnson, 2008). However, these meta-analyses do not focus specifically on ELLs or on literacy outcomes; instead, they provide empirical evidence of the effectiveness of peer-mediated approaches with language-majority students.

Related meta-analytical research also suggests that peer-mediated learning might be effective at promoting both spoken and written language outcomes for second language learners (Keck, Iberri-Shea, Tracy-Ventura, & Wa-Mbaleka, 2006; Mackey & Goo, 2007). These two meta-analyses synthesized SLA studies of interaction, and both reported positive effect sizes when interaction was compared with more traditional language pedagogy (approximately 0.46 SD and 0.61 SD, respectively 2). However, these studies examined the verbal interactions of students and did not specifically examine the instructional approaches used.

Thus, previous syntheses suggest that peer mediation is generally more effective than teacher-centered or individualistic instruction, that interaction and negotiation of meaning improve oral and written language outcomes for second language learners, and that effective classroom instruction for ELLs typically includes a peer-mediated component. Nonetheless, none of these syntheses directly examines whether peer-mediated learning improves literacy outcomes for ELLs. The present meta-analysis was conducted to address this more focused question.

Under What Circumstances Does Peer-Mediated Learning Improve Literacy Learning for ELLs?

Methodological, instructional, and learner variables potentially influence the effectiveness of any intervention designed to improve learning, and previous syntheses reported findings that provided the rationale for specific moderator analyses reported in this meta-analysis.

Several meta-analyses compared the effectiveness of different types of peer-mediated learning, but results were inconsistent across syntheses (Johnson et al., 2000; Johnson et al., 1981; Roseth et al., 2008). Thus, it is unclear whether cooperative learning, collaborative learning, or peer tutoring is likely to be most effective. Degree of structure is a key distinction among these approaches, and it largely defines the difference between studies coded as cooperative and studies coded as collaborative in this meta-analysis. Some previous studies reported that larger academic gains were associated with more structured approaches (Fantuzzo, Riggio, Connelly, & Dimeff, 1989; Johnson et al., 2000; Johnson et al., 1981; Slavin, 1996), whereas others found that less structured, more conceptually driven, and more flexible methods were more effective (Cohen, 1994; Johnson et al., 2000; Rohrbeck et al., 2003). Similarly, some previous syntheses questioned whether peer-mediated learning was effective on its own or only as one component of a complex intervention (August & Shanahan, 2007; Cheung & Slavin, 2005; Johnson et al., 2000; Johnson et al., 1981).

Second language research typically distinguishes between second language (SL) settings (i.e., learning a language spoken in the local community) and foreign language (FL) settings (i.e., learning a language not spoken in the local community) because access to and exposure to the target language differ substantially across these settings (e.g., Lightbown, 2000; Turnbull & Arnett, 2002). Mackey and Goo (2007) found no difference in the effectiveness of interaction between SL and FL settings. Importantly, the National Literacy Panel included only studies of English learners conducted in the United States (i.e., SL settings); thus, the Panel purposefully left the question of language setting unanswered.

Several syntheses analyzed whether the type of measure used moderated effectiveness. Previous syntheses of literacy outcomes examined whether word-level outcomes differ from text-level outcomes. The National Literacy Panel found that word-level outcomes were associated with larger effects than text-level outcomes (August & Shanahan, 2007), but Keck et al. (2006) reported that lexical outcomes (d = .90) were essentially of the same magnitude as grammatical outcomes (d = .94). Keck and colleagues reported that effect sizes associated with standardized, school-based, and researcher-created assessments did not differ significantly. This finding is surprising given prior research that found researcher-created assessments generally produced larger effect sizes than standardized measures (e.g., Bloom, Hill, Black, & Lipsey, 2008; Slavin & Madden, 2011).

Finally, a number of other variables examined in previous syntheses were coded as potential moderators in this meta-analysis, including study design and quality, whether peer mediation was delivered alone or as one component of a more complex intervention package, teacher experience, age of students, and ethnicity of students. 3

This review of the empirical literature highlights the importance of both main effects and moderator analyses. No extant synthesis directly examines the effectiveness of peer-mediated learning at improving literacy learning for language learners, despite a clear call from the National Literacy Panel for experimental or quasi-experimental evidence of the effectiveness of particular instructional approaches; consequently, the first research question addressed by this meta-analysis asks whether or not peer-mediated learning is effective at improving literacy outcomes for ELLs. These main effects analyses provide a weighted mean effect size estimate of the average difference between peer-mediated learning and teacher-centered or individualistic learning across studies. Second, the inconclusive evidence in previous studies about the role that methodological, instructional, and learner variables play in the effectiveness of peer-mediated learning for ELLs suggests that moderator analyses are critical in determining where, how, and with whom peer-mediated learning is most likely to be effective. Consequently, the second research question addressed by this meta-analysis examines the extent to which variations in these variables (e.g., type of peer-mediated learning, English as a second language [ESL] or English as a foreign language [EFL] setting, word- or text-level outcome) across studies moderate the magnitude of the reported effect sizes.

Method

Criteria for Inclusion and Exclusion of Studies

A number of researchers have argued that not enough experimental evaluations of intervention effectiveness exist in the ELL literature (e.g., August & Shanahan, 2006; Slavin & Cheung, 2005). Therefore, this meta-analysis includes both experimental and quasi-experimental studies, and post hoc analyses provide empirical estimates of differences in effect sizes associated with study design and quality.

Types of studies

Experimental and quasi-experimental studies were included in the review. Studies that used non-random assignment must have included pre-test data or statistically controlled for pre-test differences (e.g., with ANCOVA). Studies that tested more than one treatment against a control group were included as long as one treatment could readily be identified as the focal treatment. Studies without a control group were excluded. In particular, single-group designs were excluded because single-group effect sizes are not directly comparable with, and cannot be synthesized alongside, effect sizes from two-group designs.

Both published and unpublished studies were included to tap the elusive “gray literature” (Cooper, Hedges, & Valentine, 2009; Lipsey & Wilson, 2001). Published manuscripts included peer-reviewed articles in journals and books, and unpublished manuscripts included dissertations and technical reports. Technical reports are often rigorous and provide methodological detail not presented in the abbreviated format favored by journals; dissertations also contain more detail than a typical journal article, but their quality varies widely. Neither technical reports nor dissertations, however, undergo peer review. Consequently, post hoc analyses explored differences in effect sizes between published and unpublished studies.

For practical purposes, studies must have been published in English, though the research could have been conducted in any country with participants of any nationality. In addition, the target language must have been English, to facilitate direct comparisons with ELLs in U.S. schools; however, participants could represent any language background, and instruction could have occurred in any language.

Types of participants and interventions

Studies must have tested the effects of peer-mediated learning involving students between the ages of 3 and 18, again to facilitate comparisons with U.S. students in K-12 educational settings. In studies of peer tutoring, for example, both the students whose outcomes were measured and the students who acted as tutors must have been within this age range, to preserve the focus on peer interaction. Participants must also have included students identified as ELLs (though methods of identification and definitions of ELLs vary across states and districts in the United States), and results must have been reported either exclusively for ELLs or disaggregated for ELLs.

Interventions could include a range of instructional activities, but peer–peer interaction must have been a focal aspect of the intervention. Furthermore, comparison groups must not have received instruction in which peer-mediated learning was widely used. Studies that provided only a cooperative intervention were coded separately from those that involved more complex interventions in which peer-mediated methods were just one component (e.g., Success for All/Éxito para Todos). Studies in which peer–peer interaction could not be identified as a focal feature of the intervention were excluded, as were studies in which comparison groups used extensive peer assistance. When published descriptions were insufficient to determine a study’s eligibility, study authors were contacted; if sufficient information was still unavailable, the study was excluded.

Types of outcomes and instruments

Diverse instruments were used to assess effectiveness, including norm-referenced tests, researcher and teacher-created measures, and psychological and sociological instruments. These characteristics were coded to enable both inferential moderator and descriptive analyses.

Search Strategy for Identifying Relevant Studies

Multiple databases were searched using consistent combinations of keywords, though specific formats varied according to the conventions of each database (e.g., AND was used between terms for the PsycINFO search). Several databases were combined into simultaneous searches. For instance, the ProQuest search included the following individually selected databases: Dissertation Abstracts International, Ethnic News Watch, and several subsets of the Research Library collection (core, education, humanities, international, multicultural, psychology, and social sciences). Similarly, the PsycINFO search included the following manually selected databases: ERIC, IBSS, CSA Linguistics, Language, and Behavior, PsycARTICLES, PsycINFO, and Sociological Abstracts. Finally, additional potentially relevant studies were identified by searching the bibliographies of previous syntheses and of studies already identified.

All studies were identified through the following process: titles and abstracts were first skimmed to identify potentially relevant studies; if a study appeared to be a possible candidate, the full study was retrieved to the extent possible. If the study was not immediately available, Interlibrary Loan requests and librarian searches were pursued. If this did not succeed, attempts were made to contact the author of the study. Studies not retrieved at that point were deemed unavailable.

Studies were excluded at this point if closer examination revealed that they violated inclusion criteria or if an effect size could not be extracted from the information provided. As noted previously, attempts were made to retrieve necessary information from the authors, though in many cases data were no longer available or the authors could not be reached. To facilitate future syntheses that might use different inclusion criteria, these “near miss” studies are included in the References, but no further analyses were conducted with these studies.

The researcher served as the primary coder and coded all studies. Reliability of the inclusion and exclusion decisions, as well as of the coding of key substantive and methodological variables, was assessed by comparing the primary coding with that of two independent coders, both doctoral students with relevant experimental and statistical training. After discussing the inclusion and exclusion criteria and completing multiple rounds of practice with examples, these coders made inclusion/exclusion decisions for a subsample of 30 abstracts.

Description of Methods Used in Primary Studies

Previous syntheses suggested that high-quality experimental studies of effective literacy instruction for ELLs are scarce. Consequently, it seemed appropriate to cast a wide net, a long-standing approach in social science meta-analysis (e.g., Smith, Glass, & Miller, 1980). As a result, many small-sample studies using quasi-experimental designs, with and without cluster randomization, were included, and few large-sample studies with rigorous randomization were found. Furthermore, the broad conceptualization of peer-mediated learning resulted in a variety of interventions and approaches to data collection. Because the quality of included studies could influence the final synthesis, an analysis was conducted to determine the extent to which study quality was related to reported effects. Studies were coded to reflect whether they used randomization and the level at which randomization occurred. Similarly, studies were coded to reflect the degree to which the original researchers assessed baseline equivalence between the treatment and control groups. For the moderator analysis, study quality was rated on a three-level scale: (a) high-quality studies assessed pre-test equivalence and used a covariate to control for pre-test differences, (b) medium-quality studies either assessed pre-test equivalence or used a covariate to control for pre-test differences, and (c) low-quality studies did neither.
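The three-level quality rule can be expressed as a simple decision function. The sketch below is illustrative only; the enum and function names are hypothetical and are not taken from the study's coding manual.

```python
from enum import Enum


class Quality(Enum):
    HIGH = "high"
    MEDIUM = "medium"
    LOW = "low"


def code_quality(assessed_pretest_equivalence: bool, used_pretest_covariate: bool) -> Quality:
    """Apply the three-level study-quality rule (hypothetical helper)."""
    if assessed_pretest_equivalence and used_pretest_covariate:
        return Quality.HIGH      # did both
    if assessed_pretest_equivalence or used_pretest_covariate:
        return Quality.MEDIUM    # did exactly one of the two
    return Quality.LOW           # did neither
```

For example, a study that reported a pre-test equivalence check but analyzed unadjusted post-test scores would be coded with `code_quality(True, False)` and receive `Quality.MEDIUM`.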

In several studies, pre-test data were available, but the original researchers did not use pre-test data in their post-test data analyses. That is, pre-test differences were left unadjusted in final analyses. In these situations, post hoc adjustments were made to control for pre-test differences. Specifically, pre-test means were subtracted from post-test means for both the treatment and the control groups, and these differences were used as the mean gain scores from which effect sizes were computed.
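The following is a minimal sketch of the gain-score adjustment. It assumes that the difference in mean gains is standardized by the pooled post-test standard deviation and then corrected for small-sample bias to yield Hedges' g. The text does not state which standard deviation served as the denominator, so that choice is an assumption, and the function name is hypothetical.

```python
import math


def gain_score_g(pre_t, post_t, pre_c, post_c, sd_t, sd_c, n_t, n_c):
    """Hedges' g for the difference in mean gains (post - pre) between groups.

    Assumption: the gain difference is standardized by the pooled post-test SD.
    Returns (g, variance of g).
    """
    gain_diff = (post_t - pre_t) - (post_c - pre_c)
    sd_pooled = math.sqrt(((n_t - 1) * sd_t ** 2 + (n_c - 1) * sd_c ** 2) / (n_t + n_c - 2))
    d = gain_diff / sd_pooled
    j = 1 - 3 / (4 * (n_t + n_c) - 9)          # small-sample correction factor
    var_d = (n_t + n_c) / (n_t * n_c) + d ** 2 / (2 * (n_t + n_c))
    return j * d, j ** 2 * var_d
```

With treatment means of 50 and 60, comparison means of 50 and 55, post-test SDs of 10, and 20 students per group, the gain difference is 5 points (d = 0.5), and the correction shrinks it slightly to g of about 0.49.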

Analyses Used in This Meta-Analysis

Main effects analyses involved computing a standardized mean difference effect size, which expresses the difference between treatment and comparison groups in standard deviation units; study-level effect sizes were then combined into an inverse-variance weighted mean (Cooper et al., 2009; Lipsey & Wilson, 2001). All effect sizes were calculated as Hedges’ g, which corrects the standardized mean difference for small-sample bias. Random-effects models were assumed, primarily because the assumptions of a fixed-effect model were untenable (e.g., the assumption that a single true effect size underlies the observed sample and all possible populations from which it could conceptually have been drawn). Heterogeneity was assessed using the Q statistic, which tests whether effect sizes vary more than would be expected from sampling error alone, and I², which indicates the percentage of total variation across studies that is due to heterogeneity rather than chance (Higgins, Thompson, Deeks, & Altman, 2003). Finally, participants in educational research are often assigned in nested groups (e.g., intact classrooms), and the estimates provided by standard analyses are unadjusted for these cluster effects. Although effect size estimates are usually not greatly distorted by clustering, the standard errors on which the inverse-variance weights are computed are often substantially underestimated (Hedges, 2007). Standard errors were adjusted using McHugh calculations (McHugh & Lipsey, 2007).
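The sketch below illustrates random-effects pooling with the DerSimonian-Laird estimator of between-study variance (τ²), together with the Q and I² statistics described above. The analyses reported here were run in dedicated software, whose estimator may differ. The cluster adjustment shown uses the common design-effect approximation 1 + (m - 1) × ICC, where m is the average cluster size. This is a swapped-in illustration, not the McHugh and Lipsey (2007) procedure. All names are hypothetical.

```python
import math
from dataclasses import dataclass


@dataclass
class PooledResult:
    mean: float   # random-effects weighted mean effect size
    se: float     # standard error of the weighted mean
    q: float      # Cochran's Q
    df: int       # degrees of freedom for Q (k - 1)
    i2: float     # I^2 as a percentage
    tau2: float   # DerSimonian-Laird between-study variance


def design_effect_variance(var, avg_cluster_size, icc):
    """Inflate a sampling variance by the design effect 1 + (m - 1) * ICC
    (an approximation, not the McHugh & Lipsey procedure)."""
    return var * (1.0 + (avg_cluster_size - 1.0) * icc)


def random_effects(effects, variances):
    """Pool effect sizes under a random-effects model (DerSimonian-Laird)."""
    k = len(effects)
    if k < 2 or len(variances) != k:
        raise ValueError("need at least two effect sizes with matching variances")
    w = [1.0 / v for v in variances]                  # fixed-effect weights
    sw = sum(w)
    fixed_mean = sum(wi * yi for wi, yi in zip(w, effects)) / sw
    q = sum(wi * (yi - fixed_mean) ** 2 for wi, yi in zip(w, effects))
    df = k - 1
    c = sw - sum(wi ** 2 for wi in w) / sw
    tau2 = max(0.0, (q - df) / c)                     # truncated at zero
    i2 = 100.0 * max(0.0, (q - df) / q) if q > 0 else 0.0
    w_re = [1.0 / (v + tau2) for v in variances]      # random-effects weights
    sw_re = sum(w_re)
    mean = sum(wi * yi for wi, yi in zip(w_re, effects)) / sw_re
    return PooledResult(mean, math.sqrt(1.0 / sw_re), q, df, i2, tau2)
```

Applied to two hypothetical studies with g = 0.0 and g = 1.0, each with variance 0.1, the sketch yields Q = 5 on 1 df, τ² = 0.4, and I² = 80%. Adding τ² to each study's variance widens the standard error of the pooled mean, which is the practical consequence of choosing a random-effects model.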

Empirical examination of publication bias involved three distinct analyses. First, a simple comparison of mean effect sizes for published and unpublished studies provided a preliminary estimate of the magnitude of any difference. Second, visual inspection of a funnel plot with imputed missing studies indicated whether small studies reporting null or negative results were likely to be underrepresented in the literature. Third, Egger’s regression test assessed whether smaller studies were associated with larger effects. None of these tests can directly confirm the presence or absence of publication bias; rather, taken together, they indicate the likelihood of publication bias in the observed results (Cooper et al., 2009; Lipsey & Wilson, 2001).
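Egger's test regresses each study's standardized effect (g divided by its standard error) on its precision (the reciprocal of its standard error). An intercept that departs from zero indicates that less precise studies report systematically different effects. The minimal sketch below uses ordinary least squares from the Python standard library. It returns the intercept's t statistic rather than a p-value, which would be obtained from a t distribution with k - 2 degrees of freedom. The function name is hypothetical.

```python
import math


def egger_test(effects, ses):
    """Egger's regression test for funnel-plot asymmetry.

    Regresses the standard normal deviate (g / SE) on precision (1 / SE)
    by ordinary least squares and returns the intercept, slope, the
    intercept's standard error, its t statistic, and df = k - 2.
    """
    k = len(effects)
    if k < 3 or len(ses) != k:
        raise ValueError("need at least three studies with matching SEs")
    y = [g / s for g, s in zip(effects, ses)]   # standard normal deviates
    x = [1.0 / s for s in ses]                  # precision
    mx, my = sum(x) / k, sum(y) / k
    sxx = sum((xi - mx) ** 2 for xi in x)
    if sxx == 0:
        raise ValueError("standard errors must not all be equal")
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = my - slope * mx
    df = k - 2
    rss = sum((yi - intercept - slope * xi) ** 2 for xi, yi in zip(x, y))
    se_int = math.sqrt(rss / df * (1.0 / k + mx ** 2 / sxx))
    t = intercept / se_int if se_int > 0 else float("nan")
    return {"intercept": intercept, "slope": slope, "se": se_int, "t": t, "df": df}
```

When every study reports the same effect, the intercept is zero. When the least precise studies report the largest effects (e.g., g = SE + 0.3 in hypothetical data), the intercept moves away from zero, which is the pattern the test is designed to flag.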

Moderating variables are those that may affect overall effect size estimates through covariation with the independent variables of interest. A number of study, treatment, and participant variables were analyzed as moderators in Comprehensive Meta-Analysis (CMA) and as correlates in SPSS. A separate analysis was conducted for each variable, and the results are presented separately for each moderator of interest. The sample of included studies was too small to support meta-regression with enough statistical power to avoid capitalizing on chance. Thus, independent moderator analyses were conducted, and simple bivariate correlations were computed to examine empirically the possibility of confounding variables. Finally, because the relatively small sample of studies means that small effects may have gone undetected for lack of power, descriptive analyses of differences in subgroup means were also included to identify patterns of potential interest for future study.
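The moderator analyses described above compare subgroup means using a Q-between statistic. The following is a minimal sketch that assumes a mixed-effects approach in which each subgroup is pooled with its own DerSimonian-Laird τ² (CMA offers this and other options; the text does not say which was used). Q-between is the weighted sum of squared deviations of the subgroup means from their combined mean, referred to a chi-square distribution with (number of groups − 1) degrees of freedom. The function names are hypothetical.

```python
import math
from collections import defaultdict


def dl_pool(effects, variances):
    """DerSimonian-Laird random-effects mean and its standard error."""
    w = [1 / v for v in variances]
    sw = sum(w)
    fe = sum(wi * y for wi, y in zip(w, effects)) / sw
    q = sum(wi * (y - fe) ** 2 for wi, y in zip(w, effects))
    c = sw - sum(wi**2 for wi in w) / sw
    tau2 = max(0.0, (q - (len(effects) - 1)) / c) if c > 0 else 0.0
    w_star = [1 / (v + tau2) for v in variances]
    mean = sum(wi * y for wi, y in zip(w_star, effects)) / sum(w_star)
    return mean, math.sqrt(1 / sum(w_star))


def q_between(effects, variances, groups):
    """Mixed-effects subgroup test: returns subgroup summaries, Q-between, df."""
    by_group = defaultdict(lambda: ([], []))
    for y, v, g in zip(effects, variances, groups):
        by_group[g][0].append(y)
        by_group[g][1].append(v)
    # Pool each subgroup separately (separate tau^2 per subgroup)
    summaries = {g: dl_pool(ys, vs) for g, (ys, vs) in by_group.items()}
    weights = {g: 1 / se**2 for g, (_, se) in summaries.items()}
    grand = sum(weights[g] * m for g, (m, _) in summaries.items()) / sum(weights.values())
    # Weighted squared deviations of subgroup means from the combined mean
    qb = sum(weights[g] * (m - grand) ** 2 for g, (m, _) in summaries.items())
    return summaries, qb, len(summaries) - 1
```

With SciPy available, the p value for Q-between would be `scipy.stats.chi2.sf(qb, df)`.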

Coding Reliability

Coding reliability was assessed through inter-rater reliability. Following the inclusion/exclusion reliability assessment, the researcher met with the additional coders to discuss the coding manual and practice applying it to three examples. Following this initial training, the coders coded five studies independently. The researcher then met again with the coders to discuss the initial coding and to practice together on two additional examples. Following the second training session, the two additional coders each independently coded 10 more studies. Thus, each coder independently coded 15 studies, and a total subsample of 25 studies was included in the assessment of reliability. The studies were drawn evenly from published and unpublished studies. Cohen’s kappa was calculated for categorical variables, and Pearson’s r was calculated for continuous variables. Inter-rater reliability for categorical variables varied considerably: mean Cohen’s kappa was .787, with a range of .318 to 1.0. For continuous variables, mean agreement was .927, with a range of .85 to 1.0. Problematic variables were discussed and revised, and ultimately all differences were resolved by consensus.
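Cohen’s kappa corrects the observed proportion of agreement for the agreement expected by chance from each rater’s marginal category frequencies. The sketch below (function name hypothetical) computes it for two raters’ codes on the same set of studies; for the continuous variables, Pearson’s r is available in Python 3.10+ as `statistics.correlation`.

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa for two raters' categorical codes on the same items."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("need two equal-length, non-empty code lists")
    n = len(rater_a)
    # Observed proportion of items coded identically
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    counts_a, counts_b = Counter(rater_a), Counter(rater_b)
    # Chance agreement: sum over categories of the product of marginal proportions
    expected = sum(counts_a[c] * counts_b[c] for c in counts_a) / n**2
    if expected == 1:
        return 1.0  # both raters used one identical category; kappa is undefined, report 1
    return (observed - expected) / (1 - expected)
```

For example, `cohens_kappa(["x", "x", "y", "y"], ["x", "y", "y", "y"])` returns 0.5: raw agreement is .75, but half of that level of agreement would be expected by chance.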

Results

Included Sample

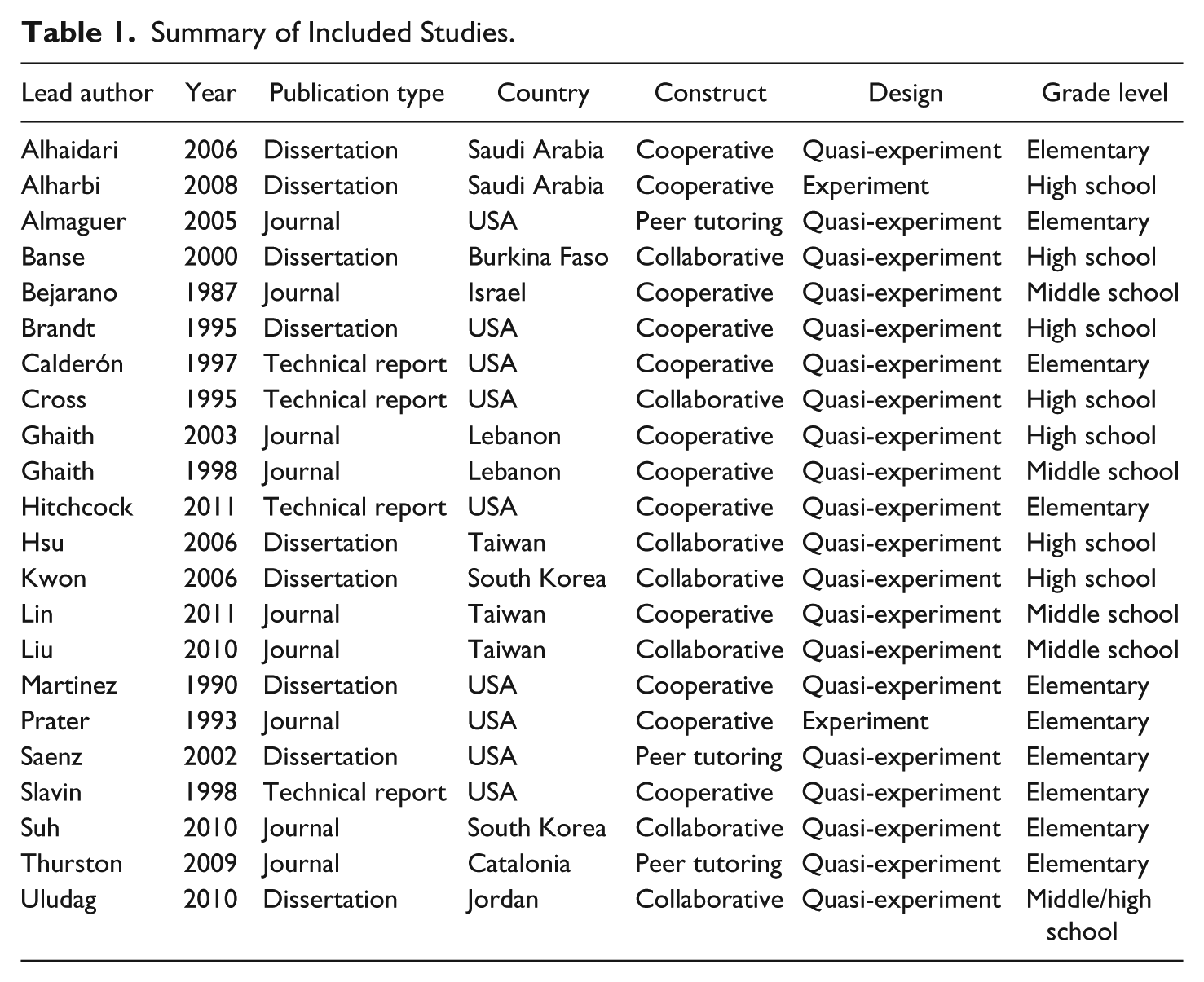

The included sample consisted of 28 independent samples that tested the effectiveness of peer-mediated learning at improving reading and writing outcomes. Some study reports provided data for more than one independent sample, so the 22 included reports yielded 28 independent samples. Table 1 provides a brief summary of the included studies.

Table 1. Summary of Included Studies.

As indicated in Table 1, more than half of the studies were published since 2000, indicating that research on peer-mediated learning remains quite active. Only 9 of the included reports were published in peer-reviewed journals; the other 13 were dissertations or technical reports. This suggests that the search method tapped the unpublished literature deeply enough to support an analysis of publication bias. Likewise, 9 studies were conducted in the United States and the other 13 in other countries. Only 2 studies conducted in the United States were published in peer-reviewed journals, supporting the claims of previous syntheses that found few published studies (e.g., August & Shanahan; Genesee et al., 2006). Notably, only one study used a true experimental design, so the included sample lacked sufficient variability in research design to support a moderator analysis of this variable.

Main Effects Analysis

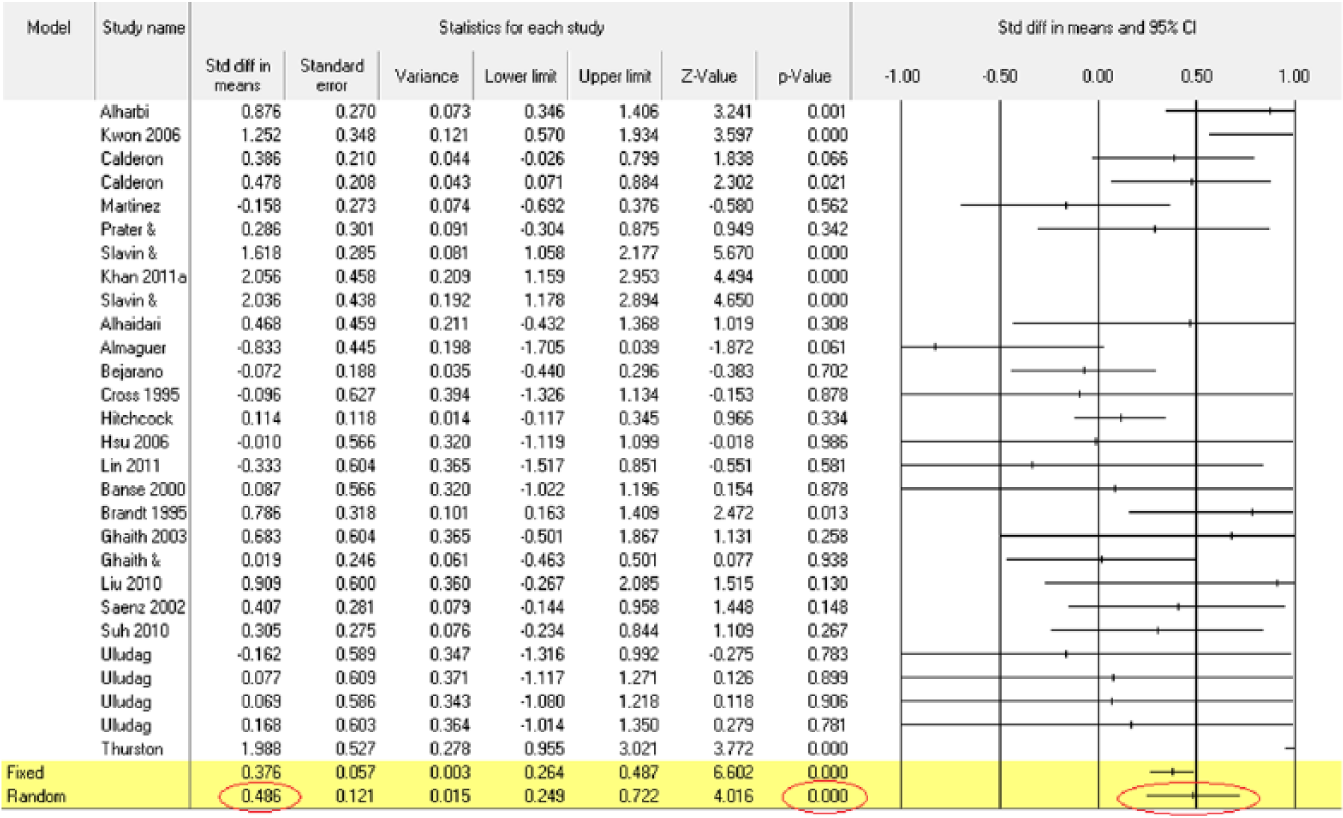

A random effects model of the uncorrected, un-Winsorized data generated a mean effect size estimate of .551 (SE = .111, p < .001) for the 28 literacy outcomes. After adjustments for outliers, pretest differences, and cluster randomization, the mean effect size estimate decreased and its standard error increased slightly (.486, SE = .121, p < .001), suggesting that outliers and cluster randomization exerted a measurable effect on the original estimate. The adjusted distribution of literacy outcomes is illustrated by the forest plot in Figure 1.

Figure 1. Forest plot of literacy outcomes.
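The adjustments for outliers and cluster randomization can be illustrated with a short sketch. Two assumptions are labeled here because the text does not specify them: outliers are Winsorized at a hypothetical cutoff of two standard deviations from the mean effect size, and the clustering correction uses the common design-effect approximation, inflating each variance by 1 + (m − 1)ρ, where m is the average cluster size and ρ is the intraclass correlation. The McHugh and Lipsey (2007) adjustment used here, and the more exact formulas in Hedges (2007), may differ from this rough approximation. Function names are hypothetical.

```python
import statistics


def winsorize(effects, z_cut=2.0):
    """Pull effect sizes beyond mean +/- z_cut * SD back to that boundary.

    The 2-SD default is an illustrative assumption, not the paper's rule.
    """
    m = statistics.mean(effects)
    sd = statistics.stdev(effects)
    lo, hi = m - z_cut * sd, m + z_cut * sd
    return [min(max(y, lo), hi) for y in effects]


def cluster_adjusted_variance(var_g, avg_cluster_size, icc):
    """Inflate an effect size variance by the design effect 1 + (m - 1) * ICC."""
    design_effect = 1 + (avg_cluster_size - 1) * icc
    return var_g * design_effect
```

For instance, with classrooms of 21 students and an intraclass correlation of .10, the design effect is 3, so a variance of .04 becomes .12 and the standard error grows by a factor of about 1.7. This is why ignoring clustering can make inverse-variance weights badly wrong even when the effect size itself is barely affected.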

Publication Bias

The mean effect size for published studies (.442, SE = .240) was only slightly smaller than that for unpublished studies (.524, SE = .142); this difference of −.082 provides a crude estimate of the upper bound of potential publication bias.
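The crude comparison above is simple arithmetic. As a rough illustrative check that is not part of the reported analysis, the sketch below also expresses the difference relative to its standard error, assuming that the published and unpublished subgroup estimates are independent, so that z = (M₁ − M₂)/√(SE₁² + SE₂²). The function name is hypothetical.

```python
import math


def subgroup_difference(mean_a, se_a, mean_b, se_b):
    """Difference between two independent subgroup means, its SE, and z."""
    diff = mean_a - mean_b
    se_diff = math.sqrt(se_a**2 + se_b**2)
    return diff, se_diff, diff / se_diff


# Published vs. unpublished subgroup estimates reported above
diff, se_diff, z = subgroup_difference(0.442, 0.24, 0.524, 0.142)
print(f"difference = {diff:.3f}, SE = {se_diff:.3f}, z = {z:.2f}")
```

Under that independence assumption, the −.082 difference corresponds to z ≈ −0.29, well within sampling error.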

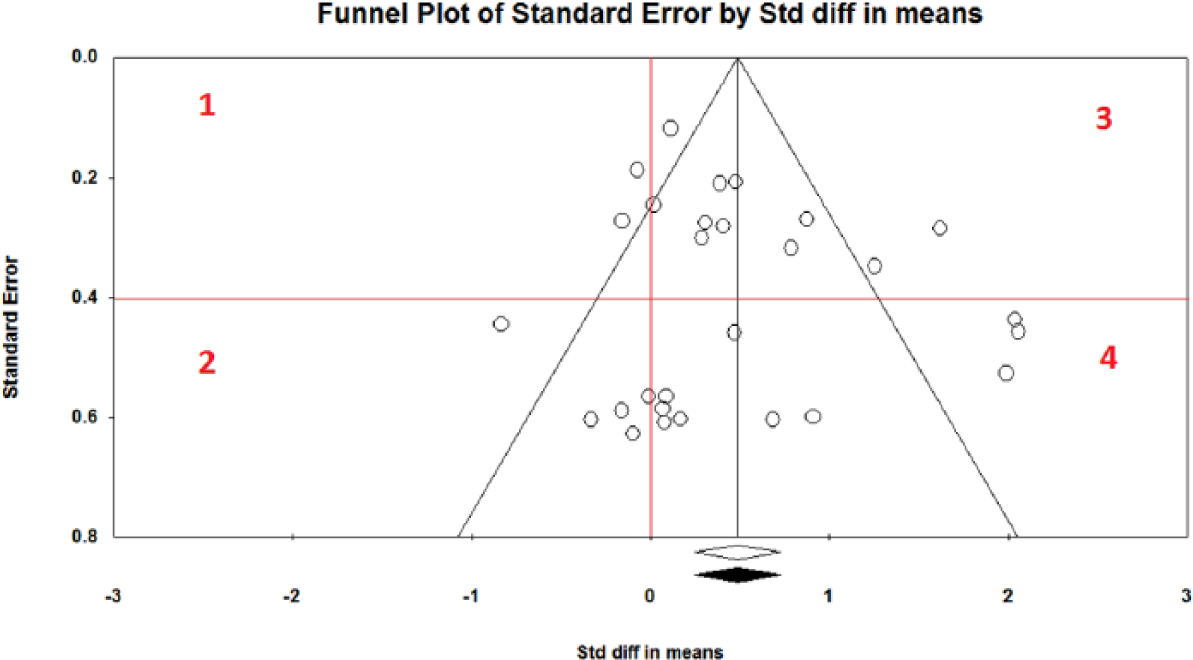

Plotting effect sizes against their standard errors in a funnel plot is one meta-analytically appropriate way to examine a distribution visually for publication bias. The standard error serves as a proxy for sample size, and because small samples are much more likely to lack the statistical power needed to attain statistical significance, publication bias should be most visible among the small-sample studies (Lipsey & Wilson, 2001). If there is no such bias, small studies with negative and null results (i.e., Quadrant 2 in Figure 2) should be about as frequent as small studies with positive results. The funnel plot in Figure 2 applies the “trim and fill” technique (Lipsey & Wilson, 2001), in which black circles mark studies imputed to make the distribution symmetric. In this case, no studies were imputed, which provides no evidence of publication bias. Similarly, the black diamond, which marks the mean adjusted for imputed studies, shows that the estimated mean did not change at all.

Figure 2. Funnel plot with missing studies imputed.
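The trim-and-fill step can be sketched as follows. This is a simplified illustration of Duval and Tweedie’s L0 estimator. It assumes fixed-effect (inverse-variance) centering and that any suppressed studies lie on the left side of the funnel. CMA’s implementation may differ in details such as the weighting model used for trimming and the handling of tied ranks. Function names are hypothetical.

```python
def _fixed_mean(ys, vs):
    """Inverse-variance (fixed-effect) weighted mean."""
    w = [1 / v for v in vs]
    return sum(wi * y for wi, y in zip(w, ys)) / sum(w)


def trim_and_fill_l0(effects, variances, max_iter=50):
    """Estimate the number of missing left-side studies (L0 estimator).

    Returns k0 (estimated missing studies), the trimmed center, and the
    imputed (effect, variance) pairs that mirror the k0 largest effects.
    """
    n = len(effects)
    pairs = sorted(zip(effects, variances))
    ys = [y for y, _ in pairs]
    vs = [v for _, v in pairs]
    k0 = 0
    for _ in range(max_iter):
        # Trim the k0 largest effects and re-estimate the center
        center = _fixed_mean(ys[: n - k0], vs[: n - k0])
        dev = [y - center for y in ys]
        # Rank |deviation| from 1 to n (ties broken by position, a simplification)
        ranks = [0] * n
        by_abs = sorted(range(n), key=lambda i: abs(dev[i]))
        for rank, i in enumerate(by_abs, start=1):
            ranks[i] = rank
        # Wilcoxon-type statistic: sum of ranks of positive deviations
        t_n = sum(r for r, d in zip(ranks, dev) if d > 0)
        l0 = (4 * t_n - n * (n + 1)) / (2 * n - 1)
        new_k0 = min(max(0, round(l0)), n - 1)
        if new_k0 == k0:
            break
        k0 = new_k0
    center = _fixed_mean(ys[: n - k0], vs[: n - k0])
    # Fill: mirror the trimmed studies around the trimmed center
    filled = [(2 * center - y, v) for y, v in zip(ys[n - k0 :], vs[n - k0 :])]
    return k0, center, filled
```

For a symmetric set of effects, such as `trim_and_fill_l0([-1.0, 0.0, 1.0], [1.0, 1.0, 1.0])`, the estimator returns k0 = 0 and imputes nothing, which is the situation shown in Figure 2.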

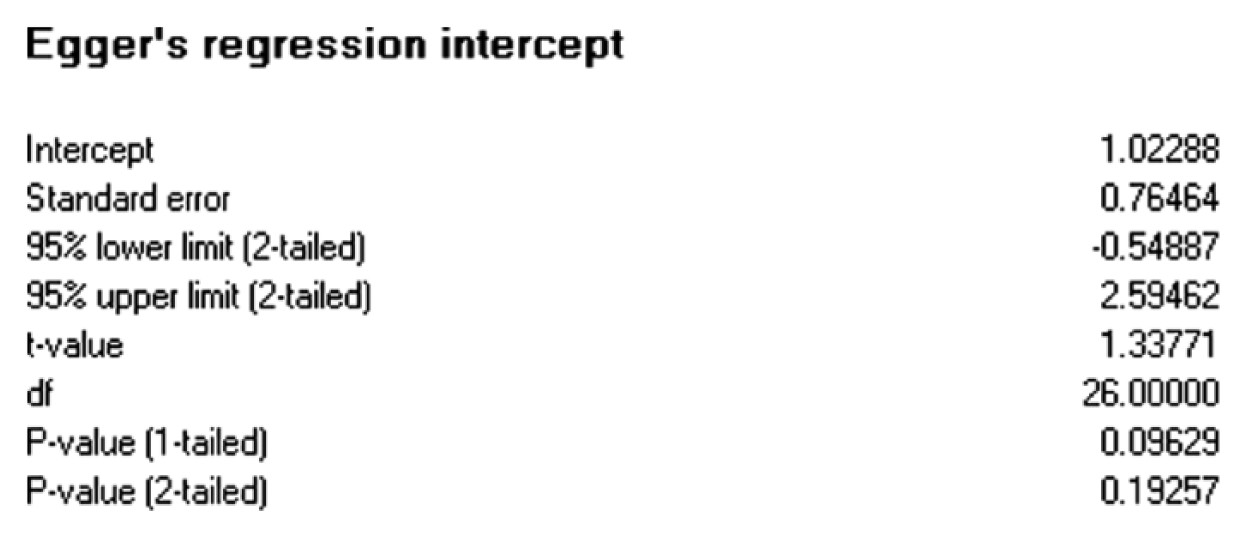

Egger’s regression intercept offers a computational alternative to visual inspection of the distribution (Sterne & Egger, 2005). The test regresses each study’s standardized effect (effect size [ES]/standard error [SE]) on its precision (1/SE); in the absence of small-study effects, the intercept should be close to zero. Because publication bias is generally assumed to be positive (i.e., in the direction of statistically significant positive effects), the one-tailed test of whether the intercept is greater than zero is typically reported at α = .05. This test is more sensitive than the two-tailed test and is therefore the more cautious basis for concluding that bias is absent. The Egger’s regression test presented in Figure 3 provided additional evidence that publication bias is improbable in the literacy outcome distribution: the intercept was not significantly greater than zero in either the one-tailed test (intercept = 1.02, t = 1.338, p = .096) or the two-tailed test (p = .193).

Figure 3. Egger’s regression estimates.
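Egger’s test is an ordinary least-squares regression, so it can be sketched in a few lines. The function below (name hypothetical) regresses ES/SE on 1/SE and returns the intercept, its standard error, the t statistic, and the degrees of freedom (k − 2). The p value is not computed here because the Python standard library has no t distribution; with SciPy installed, the one-tailed p value would be `scipy.stats.t.sf(t, df)`.

```python
import math


def eggers_test(effects, std_errors):
    """Egger's regression: regress ES/SE on 1/SE and test the intercept."""
    n = len(effects)
    if n < 3:
        raise ValueError("need at least three studies")
    z = [y / s for y, s in zip(effects, std_errors)]  # standardized effects
    p = [1 / s for s in std_errors]  # precision
    p_bar, z_bar = sum(p) / n, sum(z) / n
    sxx = sum((x - p_bar) ** 2 for x in p)
    sxy = sum((x - p_bar) * (y - z_bar) for x, y in zip(p, z))
    slope = sxy / sxx
    intercept = z_bar - slope * p_bar
    # Residual variance with n - 2 degrees of freedom
    resid = [y - intercept - slope * x for x, y in zip(p, z)]
    s2 = sum(r**2 for r in resid) / (n - 2)
    se_intercept = math.sqrt(s2 * (1 / n + p_bar**2 / sxx))
    return intercept, se_intercept, intercept / se_intercept, n - 2
```

A positive intercept means that the least precise studies report systematically larger standardized effects than the regression line through the more precise studies would predict. This is the pattern that publication bias would be expected to produce.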

In conclusion, these analyses provided no evidence that publication bias was likely for this distribution of studies. In addition, several studies in the sample had null or negative effect size estimates, so it seems unlikely that the literature search failed to uncover studies that, for one reason or another, were never published. Overall, the difference in means between published and unpublished studies, the funnel plot, and Egger’s regression estimates all suggested that publication bias was unlikely to be a factor in interpreting the data from the present meta-analysis.

Moderator Analysis

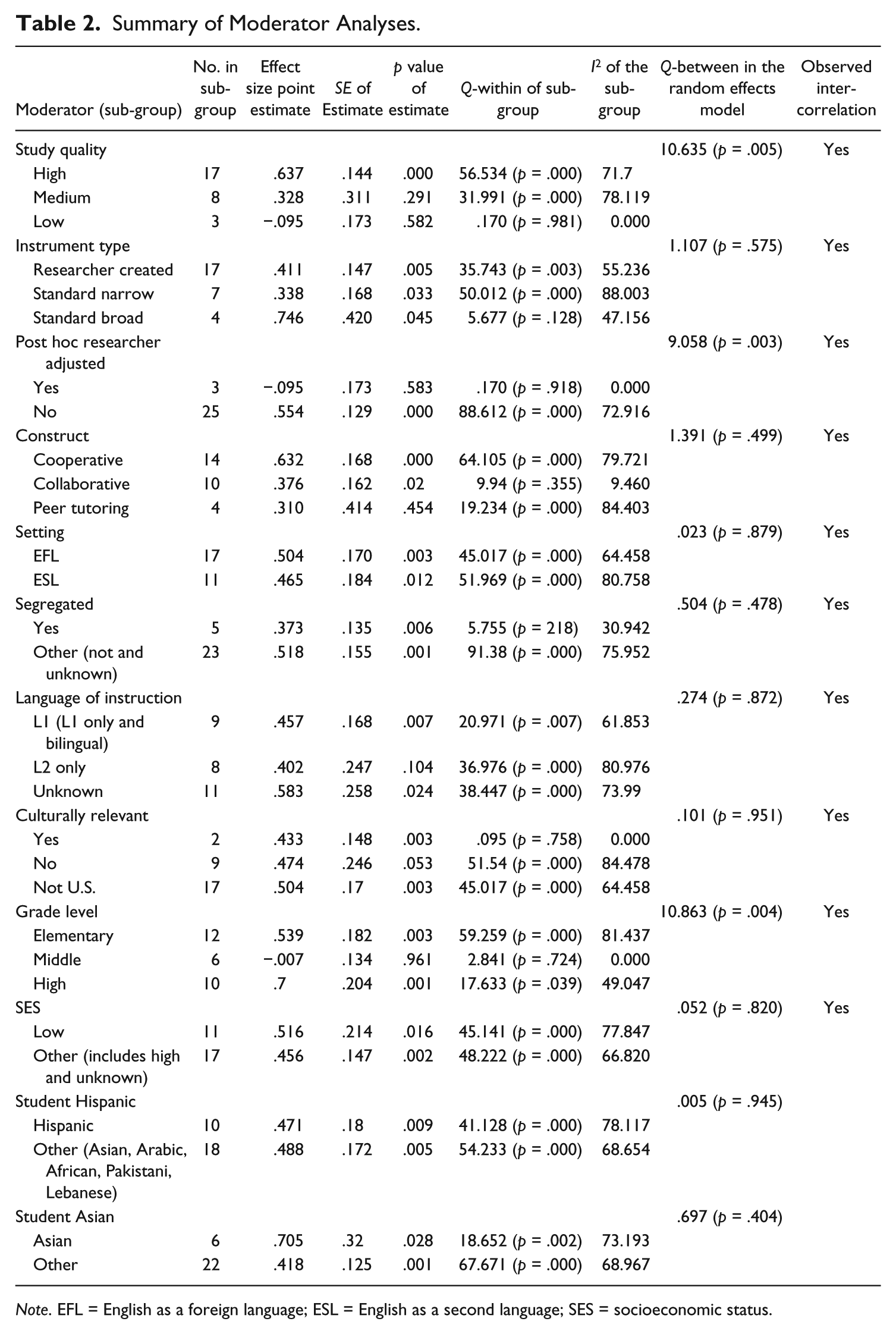

The distribution of literacy effect sizes was heterogeneous, as indicated by the Q statistic (Q = 97.135, p < .001) and I² (72.204%); consequently, post hoc examination of moderator variables was conducted to explain some of this variability in effect sizes. Nonetheless, the analysis of moderators was primarily motivated by a priori questions of interest, and the findings remain qualified by two considerations: small differences may have been difficult to detect with a sample of this size, and confounding and lurking variables may have tempered any observed differences between subgroups. Table 2 summarizes the results for measured variables reported in the 28 included studies; the last column indicates significant bivariate correlations (analyzed as chi-square statistics) with other measured variables.

Table 2. Summary of Moderator Analyses.

Note. EFL = English as a foreign language; ESL = English as a second language; SES = socioeconomic status.
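Because I² is defined from Q and its degrees of freedom (k − 1), the reported heterogeneity statistics can be checked against each other: I² = (Q − df)/Q × 100 (Higgins et al., 2003). The short sketch below (function name hypothetical) reproduces the reported I² from Q = 97.135 and the 28 included effect sizes (df = 27).

```python
def i_squared(q, k):
    """I^2 in percent: share of variability in effect sizes beyond sampling error."""
    df = k - 1
    if q <= 0:
        return 0.0
    return max(0.0, (q - df) / q) * 100


print(round(i_squared(97.135, 28), 3))  # 72.204
```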

As indicated in the Q-between column, few variables were statistically significant moderators of literacy outcomes. Three moderators were significant at α = .05: study quality, post hoc researcher adjustment, and grade level. Literacy outcomes that researchers adjusted post hoc yielded much smaller effect sizes on average (g = −.095) than unadjusted outcomes (g = .554), with the direction of the effect actually reversing to favor the comparison groups. In addition, the magnitude of the mean effect size increased with study quality, a somewhat counterintuitive finding. One might expect high-quality designs to mitigate the influence of bias and chance, resulting in lower effects on average; however, this result is consistent with other meta-analyses of peer-mediated instruction reporting that low-quality studies tended to yield lower effect sizes (e.g., Keck et al., 2006). Finally, grade level proved to be a statistically significant moderator, mostly because middle school students showed much smaller gains (g = −.007) than high school students (g = .7) or elementary students (g = .539).

Descriptive analysis revealed interesting patterns among some of the nonsignificant moderators. These variables did not achieve statistical significance, either for lack of adequate power or because no moderating effect truly exists, but the patterns suggest that future research might fruitfully examine the extent to which they influence the effectiveness of peer-mediated learning at improving literacy outcomes for ELLs. For example, standardized broadband measures produced larger gains (.746, p = .045) than either researcher-created instruments (.411, p = .005) or narrow-band standardized tests (.338, p = .033). This finding contrasts with other research that has typically found that broadband standardized instruments yield the smallest effect sizes (e.g., Lipsey et al., 2012). Moreover, word-level outcomes (.702, p = .052) were roughly 1.5 times the magnitude of text-level outcomes (.473, p = .004), echoing the finding of the National Literacy Panel (August & Shanahan, 2007) that text-level outcomes tended to be smaller than word-level outcomes. Complex interventions in which peer-mediated learning was one of many tightly interwoven components (.633, p = .001) reported outcomes about 1.6 times the magnitude of those from purely peer-mediated interventions (.385, p = .012), lending some support to claims that peer-mediated instruction works better when coupled with direct instruction (e.g., Cheung & Slavin, 2005; Genesee et al., 2005).

Finally, setting was not a significant moderator, and the point estimates for ESL (.465, p = .012) and EFL (.504, p = .003) settings were quite similar.

Discussion

The first question addressed in this study was whether peer-mediated learning promotes literacy outcomes for ELLs. Main effects analyses indicate that, on average, peer-mediated learning approaches improved literacy outcomes by nearly half a standard deviation (.486, SE = .121, p < .001) compared with teacher-centered or individualistic instruction. Moreover, this estimate was adjusted for outliers and cluster randomization, and the publication bias analyses found no evidence of bias, suggesting that the estimated effect is robust to these potential sources of bias.

To provide a sense of practical significance, one can imagine the impact an effect size of this magnitude would have on the performance gap between ELLs and non-ELLs on the fourth-grade NAEP Reading exam in 2008 (Lipsey et al., 2012). Non-ELLs had a mean score of 223 and ELLs had a mean score of 193 (Wilde, 2010), a difference of 30 points. The standard deviation for all students on the 2008 NAEP reading exam was 34 points (Digest of Education Statistics, 2011); thus, the performance gap expressed as an effect size is approximately .882 SDs. The mean effect size for peer-mediated learning of .486 SDs is more than half the magnitude of the achievement gap. This is not meant to suggest that using peer-mediated learning more consistently in the instruction of ELLs will cut the achievement gap in half, as a number of factors contribute to this performance disparity, including the availability of appropriate program models for ELLs, validity concerns associated with using norm-referenced and standardized assessments with ELLs, and broad sociocultural influences that shape ELLs’ identities and opportunities to learn. Rather, this comparison is intended to provide an intuitively interpretable estimate of the practical significance of an effect size of this magnitude.
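The arithmetic behind this comparison is short enough to show directly. The sketch uses only the figures reported above (means of 223 and 193, an SD of 34, and g = .486). It also converts g back into NAEP scale points, an illustrative step that the text does not take.

```python
# NAEP grade 4 reading figures as cited in the text
non_ell_mean, ell_mean, sd_all = 223, 193, 34
peer_mediated_g = 0.486

gap_points = non_ell_mean - ell_mean          # 30 scale points
gap_es = gap_points / sd_all                  # gap in SD units, about 0.882
share_of_gap = peer_mediated_g / gap_es       # about 0.55 of the gap
points_equivalent = peer_mediated_g * sd_all  # about 16.5 scale points

print(f"gap = {gap_es:.3f} SD; g = {share_of_gap:.0%} of the gap (~{points_equivalent:.1f} points)")
```

Expressed in scale points, an effect of .486 SDs corresponds to roughly 16.5 of the 30-point gap, which is the same "more than half" comparison made in the text.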

The second research question asked which variables moderate the effectiveness of peer-mediated learning for ELLs, and moderator analyses revealed only three statistically significant moderators: study quality, post hoc researcher adjustments, and grade level. Two of these variables highlight the importance of carefully considering and measuring the quality of the research being synthesized. Uncontrolled pretest differences can exert noticeable effects on posttest scores, and other aspects of study quality also have a measurable impact on the results. This meta-analysis supports the findings of Keck et al. (2006), who reported that higher effect sizes were associated with higher quality studies. To clarify, the inclusion of dissertations and technical reports did not lower study quality: of the 17 studies coded as high quality, most were unpublished dissertations (n = 9) or technical reports (n = 4), whereas two of the three studies coded as low quality were published in peer-reviewed journals.

Grade level moderated the effectiveness of peer mediation largely because studies of middle school ELLs reported much lower mean effect sizes (g = .007) than studies of high school ELLs (g = .7) or elementary ELLs (g = .539). There are a number of possible explanations for this finding, including developmental differences that make socialization particularly difficult for middle school students. Middle school is a particularly difficult time for ELLs in general. ELL enrollment growth is considerably larger in middle schools than in elementary schools (Capps et al., 2005); heterogeneity among ELLs in socioeconomic status, years of U.S. residency, and native language and literacy proficiency is greater among middle school students than among younger students (Rubinstein-Ávilla, 2003); middle school students learning English confront more conceptually dense texts in subject areas (Cummins, 2007; ELL Working Group, 2009); and adolescent ELLs have fewer years than their elementary counterparts to master these additional academic and language demands of U.S. schools (Short & Fitzsimmons, 2007). Consequently, it is little surprise that ELLs drop out of school at higher rates than the general education population, beginning in middle school (Rubinstein-Ávilla, 2003). It is unclear whether the lower mean effect sizes for middle school ELLs are a cause or a result of these other issues, but the consequences are significant both for the learners and for the schools that struggle to “retain the growing number of ELLs who are enrolled and to ensure their literacy development and content-rich education” (Rubinstein-Ávilla, 2003, p. 123).

Descriptive analyses offer tentative information relevant to questions raised in the literature review. Standardized broadband measures yielded larger effect sizes than researcher-created measures. Although three of the four studies using standardized broadband measures were also coded as high quality, this finding seems unlikely to be closely associated with study quality: nine of the 17 studies coded as high quality used researcher-created measures, suggesting that high-quality studies were not more likely to select standardized measures. Word-level outcomes were larger on average than text-level outcomes, offering tentative support for the National Literacy Panel’s finding of consistently larger effects for word-level outcomes (August & Shanahan, 2006). This pattern suggests that, although peer-mediated learning appears to improve comprehension directly (.473, p = .004), it may exert an even larger indirect effect on reading comprehension by promoting the word-level outcomes that support long-term improvements in comprehension (.702, p = .052). It might also indicate that text-level outcomes are less sensitive to the short-term indicators of learning typically used in experimental studies. Future research should continue to explore the relationship between oral language and reading comprehension, and longitudinal research should examine the long-term effects of peer-mediated learning on word- and text-level outcomes to determine whether the pattern observed in this meta-analysis remains consistent over time. Complex interventions that included a peer-mediated learning component reported larger effects on average than interventions that used peer mediation alone, providing tentative support for claims that peer mediation is most effective when coupled with direct instruction (Cheung & Slavin, 2005).

Overall, this study provides an estimate of the effectiveness of peer-mediated learning at improving literacy outcomes for ELLs, but questions about important moderating variables remain largely unanswered due to limitations in sample size. Study-level variables like quality and type of measure used appear important, but the effects of many relevant teacher-level, student-level, and instructional variables remain to be clarified by future research.

Next Steps

The modest number of included studies limited the power of moderator analyses to detect potentially meaningful relationships among variables of interest. Future research should capitalize on the ongoing interest in peer-mediated learning for ELLs; a larger number of higher quality studies would support stronger conclusions about possible moderating variables (e.g., the finding here of no difference between EFL and ESL settings). This study might inform future research aimed not only at determining the effectiveness of approaches to ELL instruction but also at identifying the processes and pedagogical factors that make peer-mediated learning effective for ELLs. For instance, too few studies reported information about teachers (e.g., years of experience and certification to work with ELLs) to enable moderator analyses of teacher-level variables. This line of research will likely require researchers to examine academic and linguistic mechanisms, and both qualitative and quantitative designs will be needed to fully understand the nature and limitations of these mechanisms. In addition, variables not included in this meta-analysis (e.g., relationships of power and student motivation) might be examined in the future to understand their influence on the effectiveness of peer mediation for ELLs. Finally, digital and 21st-century literacies are increasingly essential for ELLs’ participation in society and the workforce, and future research should explore how peer-mediated learning facilitates ELL interaction during technologically mediated literacy practices.

This study addresses the long-standing call of the National Literacy Panel to provide empirical support for the effectiveness of particular instructional practices at improving literacy outcomes for ELLs, and the reported moderator analyses provide a useful starting point for more sophisticated analyses of the circumstances under which peer mediation is most effective with ELLs. The evidence documented in this study supports the rich qualitative evidence of previous syntheses that suggest that peer-mediated learning is an important component of quality classroom instruction for culturally and linguistically diverse students.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.