Abstract

This report presents the development of a methodological approach for providing empirical evidence that family literacy programs “work.” The assessment techniques were developed within the action research project Literacy for Life (LFL) that the authors designed and delivered for 12 months, working collaboratively with three different cohorts of immigrant and refugee families in western Canada. The goal was to develop valid and reliable measures and analyses for gauging the impact on literacy skill and knowledge of a particular version of a family literacy program that incorporated real-world literacy activities into instruction for low-English-literate adults and their prekindergarten children, ages 3 to 5. The authors offer this approach to assessment as a promising way to measure the impact of socially situated literacy activity that requires taking the social context of literacy activity into account. They offer this work not as the answer to the challenge of documenting the value of working with families and literacy, but as one way to think about focusing curriculum and assessment within programs that validate the real lives of the participants and build bridges between those lives and literacy work within family literacy programming.

Ever since Brian Street’s (1984) seminal book Literacy in Theory and Practice, researchers, theorists, and classroom teachers have struggled to apply to the classroom Street’s assertion that literacy is always socially situated. Although different attempts have been made to wed literacy instruction to socially situated literacy activity (see Lee, 1997; Morrell, 2008; Morrell & Duncan-Andrade, 2002), the most commonly implemented literacy curricula in schools continue to embody Street’s antithetical construct of literacy as “autonomous” skills, separable from contexts of use and measurable as independent end points. Within this observation, it is crucial to point out that this autonomous, context-free construct of literacy has gained sufficient political traction that research into the teaching of reading and writing is funded, and the results accepted, primarily by the degree to which the research relies on such end-point measures of literacy ability.

Thus, a catch-22 (Heller, 1999) situation has arisen for literacy researchers. For those who wish to bring socially situated literacy activity into the literacy classroom, believing (as we did) that it would have positive effects on literacy growth and development and wishing to test this hypothesis, the holders of political power decreed that such hypothesized positive effects must be shown with measures of literacy skill that are designed to be free of any social context aside from that of the test itself.

It was against this background of theory and practice that we engaged in an attempt to explore the effects of engaging students in real-life literacy activity within one of the “messiest” of research conditions—the family literacy program, with the many confounds of volunteer samples, irregular attendance because of real-life responsibilities, retention in programs over time, variability of home lives and activities, and so on. We sought to develop measures of literacy growth that could be related to the frequency of engagement in socially situated literacy activity in the classroom. This required that we find a way to quantify the degree to which the literacy activities that our students engaged with were, in fact, “socially situated” as defined by sociolinguists and other literacy theorists (e.g., Bakhtin, 1986; Street, 1984). Although our work on measurements was on a pilot basis because of small sample sizes, we believe it provides significant theoretical and future practical implications for both teachers and researchers.

The assessment techniques we present were developed within the context of the action research project we called the Literacy for Life (LFL) Intergenerational Literacy Program, which we designed and delivered for 12 months, working collaboratively with three different cohorts of immigrant and refugee families in western Canada. Our goal for this aspect of the project was to develop valid and reliable measures and analyses for gauging the impact, if any, on literacy skill and knowledge of offering a particular version of a family literacy program that incorporated real-world literacy activity into instruction for low-English-literate and non- or low-schooled adults and their prekindergarten children, ages 3 to 5. The results of the analysis of the LFL program have been presented elsewhere (Anderson, Purcell-Gates, Gagne, & Jang, 2009; Anderson, Purcell-Gates, Lenters, & McTavish, in press), and we describe the program only to the degree that it clarifies our development of the assessment procedures and highlights the significance of these developments.

Theoretical Framework and Relevant Research

In this article we report our efforts to develop valid and reliable measures that would allow us to test our hypothesis that having participants engage in socially situated, real-life literacy activity within the program would have a positive effect on their literacy growth. Our research took place within the theoretical frame of literacy as socially situated. This theory asserts that literacy is always situated within social and cultural contexts and within relationships of power and ideology (Barton & Hamilton, 1998; Street, 1984). Within this frame, there are multiple discursive literacy practices that can be inferred from texts and purposes for reading and writing those texts—and analysis of these practices must be shaped by and interpreted in terms of the sociocultural and sociolinguistic contexts within which they occur (Bakhtin, 1986; Barton & Hamilton, 1998; Street, 1984, 1995; Vygotsky, 1978). Socially situated literacy is literacy in use, and this lens, itself, is situated within a larger linguistic argument called “sociolinguistics” that arose in the 20th century in response to the dominant paradigm in the study of language known as structuralism (Culler, 1976).

Linguists such as Saussure studied language through a theoretical lens that intentionally focused on units of language outside of social contexts (e.g., “What makes this sentence a ‘good’ sentence?” “What are the syntactic deep structure rules of English? Spanish? German?”). Bakhtin (1986) and others argued, however, that language does not exist outside of social context. Rather, language becomes language only when it takes on a communicative role within human activity. The study of sociolinguistics has grown over the years. Within Bakhtin’s social perspective, from which we worked, the basic unit of analysis for linguists is not the clause/sentence but rather the utterance. Utterances can be small (e.g., conversational exchanges) or large (a novel). An utterance is language that is both spoken and heard (or read and written) by real people. This means that speakers always have a listener in mind when they formulate an utterance and that writers always have a reader in mind when they write and vice versa. Thus, language is essentially communicative and dialogic. Bakhtin elaborates on this by describing what he called the “heteroglossic” nature of language use, emphasizing the fact that speakers (writers) never formulate an utterance outside of a landscape of voices: past, present, and future conversations that serve as contexts for language use.

What Is “Real-Life Literacy Activity?”

Drawing on this theoretical frame, which views language as language in use, for purposes of our research we defined real-world literacy activity as the reading and writing by students in literacy classes of real-world texts for real-world purposes (e.g., reading menus to order food, writing letters to maintain friendships, writing checks to pay bills, reading novels to enjoy a good story).

Real-world texts

The reading and writing of real-world texts is an essential component of our conception of real-life literacy activity. Others have defined, and named, real-world texts in related but different ways. Some refer to them as everyday texts and equate them with informational text (Fountas & Pinnell, 2011) such as food labels and street signs. Purcell-Gates has referred to them as authentic texts and defined them as any type of text that people read or write, excepting those that are clearly written to teach people the skills to read and write (Purcell-Gates, Degener, Jacobson, & Soler, 2002; Purcell-Gates, Duke, & Martineau, 2007). We have named texts that are written to teach literacy skills “school-only texts.” For the family literacy study, we also used this definition and refer to socially situated literacy as real-life literacy activity. Our use of the terms and definitions of real-life literacy activity and school-only literacy activity is descriptive and not judgmental. We do not consider real-world literacy activity in the classroom as “better” than school-only literacy activity. In fact, we consider the use of school-only texts as important to learning to read and write. We do, though, consider school-only texts as qualitatively different in that the reading and writing of them is not communicative and dialogic in the same sense as real-life literacy activity.

We rely on Bakhtin for our sense of the dialogic nature of real-life texts, that is, personal letters are written by a real person to another real person who is known and interested in the content, or storybooks are written for real children to listen to or to read. It is also from Bakhtin and the later work of Halliday and Hasan (1985), in particular Hasan (1989), that we draw our definitions and names of text types, reflecting Bakhtin’s understanding that language in use is never completely free of structural constraints but is always expressed in the form of genres that are socially constructed to serve social purposes (Hasan, 1989; Purcell-Gates, Perry, & Briseño, 2011).

Real-life social purposes

Real-life literacy activity within instructional contexts is not defined solely as the reading and writing of real-life texts. Within our conception of language as communicative, we assert that real-life texts must be read and written in the instructional context for real-life purposes, that is, social purposes involving both a reader and a writer. Within process writing instruction, this is often exemplified by the need of writers to consider their audience(s). Within the LFL program we observed the following real-life purposes for reading and writing of

Adults

Reading the
Entering (writing)
Composing

Preschoolers

Composing (while the teacher writes)
Listening to the teacher read
Reading

Real-life social contexts

The key to distinguishing real-life literacy activity from school-only literacy activity, in our scheme, is the social contexts within which the reading and writing take place. Real-life social activity motivates the reading and writing of real-life texts for real-life purposes in the classroom. Without this real social activity, or context, the purposes for reading and writing are rendered school only. For example, reading ingredients on a yogurt container to see if animal hooves have been used is not real-life outside of a real-life social context such as one that involves religious practice that prohibits the consumption of animal hooves. It is this type of “situatedness” that renders literacy activity as “socially situated.” We owe this example to an incident that occurred during the second year of the LFL program. A young girl from a Muslim background shared with her father one night that her LFL class had eaten yogurt for a snack. He told her that some yogurts contain gelatin, which she was not allowed to eat because it is made with animal hooves and is forbidden by her religion. This young girl approached her LFL teacher the next day with this news, and the teacher created an authentic literacy event by reading and pointing to the list of ingredients to see if gelatin was included. This became a regular literacy event whenever yogurt was part of the snack provided.

Previous Research on Instructional Use of Real-Life Literacy

The research on measurement of socially situated literacy has evolved over time, and the work presented here builds on previous research. Turner (1995) conducted a study of first-grade children in six basal classrooms and six whole-language classrooms with the focus on the effects of instructional contexts on children’s motivation for literacy. Turner used a theoretical perspective in which authentic is defined as the reading or writing of real-life texts for real, and personally chosen, purposes. She observed in the classroom, and used in her analysis, five types of literacy tasks: (a) drill and practice tasks, (b) tasks where children could manipulate materials to get an outcome such as games, (c) partner reading, (d) writing on self-selected topics, and (e) trade book reading of self-selected books. She classified the literacy tasks as “open (child-specified processes/goals, higher order thinking required) or closed (other-designated processes/goals, recognition/memory skills required)” (p. 411). She found that the strongest predictors of motivation were open literacy tasks.

The LFL study centered specifically on preschool (as well as adult) literacy. Thus, Neuman and Roskos’s (1992) study of the results of placing real-life literacy objects (such as pencils, notepads, books, recipes, cookbooks, and so on) in children’s play areas in preschool settings was informative for our work. The researchers found significant changes in behavior for the children who had access to the literacy objects. The children in the intervention group displayed greater frequency, duration, and complexity of literacy play as well as greater complexity of literacy demonstrations during play. Studies such as these contributed evidence to the notion that involving children with real texts within life-like contexts is beneficial to literacy development.

Work on attempts to measure the degree of “real-lifeness” of literacy activities from a sociolinguistic theoretical lens began with the study by Purcell-Gates et al. (2002) that sought to measure correlations between the degree to which whole classes of adult literacy learners used real-life literacy materials and the change in frequency and type of their literacy practices. For that study, only real-life texts were focused on and rated. The ratings built on the degree to which the texts were read or written for real-life purposes (i.e., a newspaper to learn the day’s news of interest to the reader). It was during the measurement work done for that study that the role of real-life social contexts as motivators of what would be considered real-life purposes for reading and writing was clarified.

The measurement work from the adult literacy study was later incorporated into another large study that focused on issues of explicit teaching of written language features of science genres used in third-grade science instruction (Purcell-Gates et al., 2007). Because the primary investigators of that study, building on their own teaching experience, held real-life literacy activity within instruction as preferable to a strictly skills-based approach to teaching, they included real-life reading and writing in both the experimental and control groups, manipulating only the degree of explicit teaching of the language features of the genres used in the science instruction. However, they decided to code and account for degree of “real-lifeness” of the literacy activity in both experimental and control groups as well as for degree of explicit teaching of science genres. The researchers worked to develop a coding scheme that would reliably reflect the degree of real-life reading and writing in the classes. They pulled apart the text aspect of real-life literacy activity and the social purpose, maintaining the motivating social context of the literacy activity as an indicator of the real-life nature of the social purpose. For example, for the reading of procedures for constructing a simple machine to be coded as real-life, the readers must actually be in a real-world context of use for those procedures. Such a context was created by one teacher who arranged for a large file cabinet to be left in the middle of the room during the night and, when she tried and failed to locate a building maintenance person to remove it, she established through class discussion the need for a simple machine such as a pulley system to move the cabinet. This context led to the reading of information books and procedures for building such a device, which led to the students creating the means to successfully move the cabinet.

The LFL program incorporated an operational definition of real-life literacy activity that hewed closely to that which was used in the two studies described above. For the coding of literacy activity for the LFL classes, we made minor changes to the scale for degree of real-lifeness of texts and purposes for reading and writing them that reflected the following differences in contexts between the present study and the science genres study. The science genres study was situated in a more homogeneous and instructionally controlled setting: second- and third-grade students enrolled in public elementary schools. The LFL program served low-schooled immigrant adults and their preschool children who were attending voluntarily and who were fitting the program into very busy and sometimes chaotic lives. These differences led to different instructional foci, that is, basic second-language literacy instruction for adults and emergent second-language literacy instruction for preschoolers. Also, the teachers in the LFL program benefitted from what the researchers directing the science genres study had learned about coaching the teachers in the real-life literacy model. This resulted in slight modifications to the coding scheme that reflected greater teacher clarity in what was expected in the LFL program.

This article, which deals with measurement considerations for documenting the degree to which the literacy activity in the classrooms was socially situated, is the first in which we have focused on and presented the theoretical and measurement connection on which we have been working for quite some time (Purcell-Gates et al., 2002; Purcell-Gates et al., 2007; Purcell-Gates et al., 2011).

Research Focus

For the LFL action research study, we worked to establish ways to test the hypothesis that engaging students in real-life reading and writing would affect their English literacy abilities over and above what could be expected just by attending a family literacy program. We sought to construct ecologically valid indicators of the real-life literacy instruction that we were implementing with the students. This was an action research design to implement real-life literacy programs for families on a small-sample basis, with the long-term goal of “scaling up” if we could find promising indicators that this instructional model worked. Within this, we acknowledge the parametric constraints of establishing relationships between growth in literacy skill and degree of engagement in real-world literacy activity with a small volunteer sample. We worked within these constraints always from a pilot (or “let’s try this”) mentality.

Method

Within our theoretical frame, the design of measurement and evaluation techniques never occurs in a vacuum. To understand the measurement issues, one must understand the contexts within which they occur and for which the design work is done. Therefore, we first present essential elements of the LFL program and then move to the methodological presentation for the approach we took to rendering situated literacy activity available for the type of analysis that would appropriately test the hypothesis that engagement in real-life literacy activity is positively related to literacy growth for populations such as ours.

LFL Participants and Sites

The adult participants in this study were all volunteer students who wished to improve their abilities to read and write in English. Our original goal was to recruit adults with low literacy skills in their first language, and we did accomplish this goal to an extent. Of the adult or parent students, 49% (N = 17) were nonliterate or had low literacy skills in any language. Of these, 10 students had never attended school. The rest of the participants had been schooled in their countries of origin to varying degrees, with one student holding a university degree. All but two of the students were women. They all had young children between the ages of 3 and 5 enrolled in the program.

There were two sites for the program: one in a community center in an urban, low-SES area of Vancouver, British Columbia, in southwestern Canada, the other in the central city core of a city to the east of Vancouver. We refer to these as Site 1 and Site 2. Each site had one adult literacy instructor and one emergent literacy instructor. We limited the class size to 10 to 12 participants for each level at each site. The participants differed in many ways. At Site 1, all of the parents were of Chinese origin and had immigrated to Canada between 2 and 7 years before joining the program. None of the women spoke English fluently, and several did not speak English at all. Of the seven participants in Year 1, four had graduated from high school in China and one had not attended school at all. During Year 2, three of the students at Site 1 had high school diplomas, one had gone to seventh grade, and five had very limited English but we were never sure about their education levels. However, none of the women could read English-language texts such as recipes, novels, or newspapers. Some of their children began the program speaking some English, a few more could understand English but could not speak it, and three children could not speak or understand English. None of the children could read or write in Chinese or in English. Although all of the women participated in the adult portion of the program, it became apparent as we got to know them that their primary interests appeared to be in helping their children prepare for the Canadian English-speaking schools.

Site 2 presented a very different picture. It was located in a rapidly growing city east of Vancouver that was home to increasing numbers of primarily South Asian immigrants. Punjabi, Hindi, and Arabic were widely spoken in the community. Just prior to the start of the first year of the program, Canada began settling refugees from Africa in this area as well. In our search for sites the first year, we were drawn by school district leaders in this community to a small, storefront social service agency run by African immigrants for newly arrived African refugees. The majority of the women were from Sudan, with others coming from Somalia. The majority of them spoke little to no English. Most of them were unschooled, and none of them considered that they could read or write English. None of the children spoke English, nor could they read or write. Unlike the participants at Site 1, the women at Site 2, as became apparent as we worked with them, all wanted to focus primarily on their own literacy, although they were thankful that they had a teacher to take care of their children. Concluding at the end of the first year (4 months) that we needed more space, we moved to several portables connected to an elementary school for the second year of 8 months. The participants for Year 2 at Site 2 were all new, recruited through multicultural workers employed by the school district. The families were a mix of refugees and immigrants from Saudi Arabia, Ethiopia, Jordan, Afghanistan, and Syria.

As with many family literacy and adult education programs (Comings, Parrella, & Soricone, 1999), we saw a constant fluctuation of participants over the 12 months total of the program. To assess our goal of increasing the literacy levels of the participants, we needed pre- and posttest data. At the end of the program, we had these data for 10 adults and 14 children from Year 2 only, although we had pretest data or posttest data alone for several more. In addition, we decided at the end of Year 2 to give the nonliterate (at the beginning) students a more meaningful assessment, reflecting what they had actually been working on, and gave them the Test of Early Reading Ability–III, a test of emergent literacy knowledge. However, we had not done this at the beginning, so their growth is not reflected in the analysis.

The Program

The LFL program ran for a total of 12 months, with 3 additional months devoted to teacher development in ways to incorporate real-life, situated literacy activity into a family literacy (adult and early literacy) program. Two classes per week, 2 hours per class, were offered.

Each class began with the family-time-together component. Following this, the adults met with the adult literacy teacher and the children met separately with the emergent literacy teacher. The teachers in the program were also research assistants for the study and participated fully in weekly research meetings and in writing, circulating, and commenting on written notes for each class (see below).

Family time together instruction

During this part of the program, parents and children met together with both the adult literacy teacher and the emergent literacy teacher. The instructors at each site collaborated on planning for, and took turns leading, these sessions. The overall purpose of these sessions was to introduce the parents to ways that they could assist their young children at home with acquisition of early literacy abilities. The activities also reflected ways that the parents and children could work together, through games, on early literacy skills.

Adult literacy instruction

The adult literacy teachers focused on engaging their students in real-world literacy activity, as defined above, with the goal of increasing the English literacy abilities of the students using this model. Direct teaching of skills (school-only literacy activity) was interwoven with real-world reading and writing activities as deemed necessary for different students. The nonliterate/nonschooled students required much more direct skill teaching than the others, although they all needed direct teaching of English-specific skills such as vocabulary, spelling patterns, and English textual genre features (e.g., reading the Domino’s® flyer to find out how to call and order a pizza).

Each day began with the parents signing the sign-in sheet for the purpose of documenting attendance for the study—a real-world literacy activity. This activity served as the dominant real-world literacy activity for some time for the nonliterate students, who learned to hold a pencil and make spaces between words (first and last names) and learned the names of the letters in their names and how to write them. Skill lessons—school-only literacy activity—for these beginning readers and writers included practicing the alphabet and learning letter sounds, using alphabet letter cards and an electronic program device, practicing writing their names, and learning to write short sentences describing their families and their countries of origin (e.g., “My name is Hassan. I come from Yemen.”).

The first-language-literate students spent more time engaged in real-world English reading and writing. They read English-language newspapers to learn about the lead content of certain foods, they wrote greeting cards to each other and to children celebrating birthdays, they wrote recipes for ethnic holiday celebrations, they read directions for using the computer where they learned to Google for information, they wrote immigrant stories to submit to the local newspaper, which was soliciting such accounts, and so on. Direct skill teaching—school-only literacy activity—centered on spelling newly learned English words, word meanings, and English grammar needed for different types of written texts.

The teachers always solicited, or responded to, ideas for real-world reading and writing from the students. For example, one woman (a refugee from Sudan) expressed the need to learn how to read a receipt. She knew that receipts were important in Canada and that store workers would always request one if she needed to return a product. However, they were totally mysterious to her. Responding to this request, the teacher brought in several different receipts of her own (while students pulled their own from handbags) and conducted lessons on (a) the purpose(s) of receipts and (b) how they are structured (items listed, often in abbreviations, and their prices next to them in a separate column, totals and tax listed at bottom, and so on). English words were learned along with their spellings, and the students learned to make receipts functional in their lives.

Because we were working entirely with English language learners, the teachers always made oral conversation the base of the instruction. Students were never required to focus on print before developing ideas and expressing them orally in English.

Emergent literacy instruction

The central vision for the program for the young children was that of the high-literacy-use home within which young children learn many emergent literacy concepts—concepts that serve them well when they begin school and that are usually assumed and built on by kindergarten and first-grade teachers (Purcell-Gates, 1995, 1996; Purcell-Gates & Dahl, 1991; Snow, Burns, & Griffin, 1998; Taylor, 1982). Rather than building the emergent literacy instruction on the didactic teaching of early literacy skills such as phonemic awareness, letter name knowledge, and sounds, we chose to re-create the literacy activities through which young children appear to learn such concepts in high-literacy-use homes. Emergent literacy research has documented that these early literacy concepts are acquired as children participate in the lives of their families, observing and engaging in the literacy activities that mediate those lives. So, for example, children appear to learn the most basic of emergent literacy concepts—that print says something, that is, is semiotically linguistic, and is used by people who can read it, or write it, for their different life activities—by observing its use in their family activities. Signs with the word STOP cause the driver to stop the car; children can say “Happy Birthday” or “Merry Christmas” to their grandparents (or cousins, friends, or teachers) by making a greeting card and writing a message on it; parents prepare a meal by reading the directions and following them from a cookbook or recipe card; and so on. In the process of these meaningful literacy activities in their homes, young children, with support and direction from significant others in their lives, learn how words can be written and learn letter names and letter sounds, phonemic awareness, and many concepts of print such as which direction to read, the difference between letters and words, and that people read print and not pictures. If they are read to from children’s storybooks, they also learn the vocabulary, syntax, and decontextualized nature of written language (Purcell-Gates, 1988).

Building on this documented process of early literacy learning, the LFL emergent literacy instructors designed a program in which the young children engaged in typical early childhood activities such as painting, playing games, making art projects, and listening to stories. Where we differed from most preschool programs, however, was in inserting into these activities a metafocus on print and texts. This meant that for each activity the teacher would either introduce texts that would mediate the activities or create activities that would require the reading or writing of texts. All of this was done within the construct of real-world literacy activity, adopted for this program—real-life texts for real-life purposes situated within appropriate social contexts.

For example, the class at Site 1 was in dire need of play materials for the children. This led to the teacher developing the idea with the children of making their own play mat where they could stage different games and play. It contained pictures of houses, roads, and so on. The teacher led the children in inserting text such as “Start,” “Stop” (on a stop sign), the children’s names on individual houses that they each drew for themselves, store names on pictures of stores that the children drew, and so on.

Always, the teachers explicitly pointed to the print, read it, and explained its purpose (e.g., “This word says ‘start’ and it tells us where to begin with our pieces. S-T-A-R-T. ‘Start.’”). They always drew the children’s attention to print and texts, how they worked at the letter and word levels, and how they functioned in the life activities of people (Bennett-Armistead, Duke, & Moses, 2005). The teachers also pointed out print in the environment, during outside walks or at play as well as in the classrooms. The children took environmental walks, during which their teachers pointed out the ubiquitous signs, logos, notices, and other forms of print in the neighborhood. Menus for snack time were generated and written by the teachers to be used later by the children when they “ordered” what they wanted to eat. With teacher support, the children made birthday and get-well cards for family or other class members, complete with emergent writing. Part of the construct of real-world literacy is that writing always has a real audience who will read the writing for real purposes. Thus, these greeting cards were always delivered to the person who was actually celebrating a birthday or recuperating from an illness. Grocery lists for trips to the store for snack items or craft items were generated and written by the teachers who then used the lists to purchase materials, sometimes accompanied by the children. Complaint letters were generated and written (modeled) by the teachers and sent to site managers requesting more heat or less interruption. Children and teachers watched trucks pull up to the school and speculated together what might be in them, based on the writing on the sides. The children also heard a wide range of books representing different genres (e.g., information books, rhymes, alphabet books, stories) read to them by the teachers. As with their parents, the sign-in sheet always began the sessions, with the children “signing in” as best they could and with the teachers scaffolding each child in this activity according to their level of development. Such an intentionally focused early literacy program was designed to bring the young students up to the levels of English emergent literacy knowledge held by their soon-to-be school peers from high-English-literacy-use homes.

Data Collection

The following data sources were used for the development of the measures of growth: (a) detailed teacher notes for each session, written to document program implementation, and (b) pre to post assessments of literacy ability. This type of data is typically gathered in action research studies.

Class field notes

Information in the field notes included (a) the time each participant began and ended participation for each class period (the sign-in sheets were also used for this), (b) each activity individuals engaged in during the session, (c) texts used and purposes set up for reading and writing them for each activity, and (d) research comments, insights, and recommendations for future instruction. These notes were written and circulated via email to the entire team within 2 days of each class. One of the project directors would then comment on each report in track changes, answering questions from the teachers, making suggestions for the upcoming class, and emphasizing and clarifying when a particular activity met the criteria for “real life.” Often other teachers would also make comments. These reports with comments were then recycled to the team via email for further comments, if needed.

Weekly 2-hour research meetings were held during Year 1 and biweekly meetings were held during Year 2. At these meetings, teachers reported on the state of their respective classes and individual students and raised issues regarding implementation of the instructional model. The minutes for these meetings served to triangulate with the class field notes for the analysis.

Assessments

The pre to post assessment data were used to document the degree to which we met our pedagogical goals to increase the English literacy levels of the adults and the emergent English literacy knowledge of the children. We used the normal curve metrics as our comparison, or control, group (Grant & Leavenworth, 1988). We reasoned that individual student growth as the result of participation in the program would be reflected only with unexpected movement of scores on the normal curve, referenced for each assessment. This necessitated the use of norm-referenced assessments with equivalent forms for both the adults and the children. For the adults, we chose the Canadian Adult Achievement Test (CAAT), widely used across Canada for adult basic education students and with immigrants and refugees included in the norming sample. We used the literacy-related subtests: Vocabulary, Reading Comprehension, and Spelling.

We gave the young children the norm-referenced Test of Early Reading Ability–III (TERA-3) to assess their growth in emergent literacy knowledge; it is widely used by researchers of early literacy development. We administered one form in the fall and the alternate form in the spring. We did collect end-of-year data for the children for Year 1, and although these data could not be used for our growth analysis, they were helpful for understanding the results of the growth analysis for Site 1. The participants were basically the same at Site 1 for both years, so we administered the alternate form (to the one given at the end of Year 1) of the TERA-3 for these children at the beginning of Year 2. The TERA-3 has three subtests: (a) Alphabet, measuring children’s alphabet and letter-sound knowledge; (b) Conventions, measuring familiarity with conventions of print such as book orientation, print orientation, and directionality; and (c) Meaning, measuring children’s ability to comprehend written material (Reid, Hresko, & Hammill, 2001, p. 7).

For both adults and children, we administered the pretest assessments on their third visit to the program (for Year 2). This was to ensure reliability of outcomes by increasing their comfort level with the teachers and the types of activities they would experience. We also believed that, with their lack of experience with Canadian schools, they might react negatively to being assessed on their first day and would likely fail to return.

Analysis

Our goal with the analysis was to document literacy growth for both the adults and their young children. However, simply documenting growth, even unusual growth as compared to the normal curve, does not indicate effectiveness of specific components of a family literacy program. The more significant question for us was whether our “real-life” literacy instructional model could account for some of this unusual growth, if it occurred, in literacy skill. Again, we were very aware of the parametric constraints on our ability to answer such questions, given the small number of participants and lack of control variables. Nonetheless, we felt it was necessary to start the process of developing ecologically sensitive outcome measures for intergenerational literacy programs. We offer our work on this as one such beginning. The description that follows presents our approach to this measurement challenge and presents the emerging picture of the results of this approach with the LFL program and participants.

Fidelity to treatment

How “real world” were the literacy activities made available within the classes? We needed to know this before we could assess how the specific literacy activities offered the students were related to their growth. For this analysis, the class field notes, written by the respective teachers, were coded for the degree to which the teachers involved their students in real-world literacy activity. To do this, we pulled from the teacher notes each instance of literacy activity. This literacy activity unit of analysis was defined as any activity that includes reading, writing, listening to reading, and watching writing by the students. The units were bounded by a focus on one text type within any given activity. Each unit of literacy activity was coded for the degree to which it was considered real life. This was done by breaking down each literacy activity according to text and purpose.

We determined what type of text was involved in the literacy activity and whether it was a real-life text. Texts were coded according to their specific genre (Hasan, 1989), and a real-life text was defined as any text used by people outside of a learning-to-read-and-write purpose or context. Texts were coded 1 if they were not used in the real world but were used to learn the skills of reading and writing. Text genres that were judged real world were coded 2.

Once the text genre was determined for each literacy activity, the purpose of that activity was also considered. The purpose was conceptualized as the purpose served by reading, writing, listening to, or observing the associated text for each literacy activity. This, in turn, required an analysis of the social context within which the literacy event took place. The purpose was real life and coded 2 if the purpose for the literacy event was the same as the purpose for using the text in similar social contexts in the real world outside of a learning to read and write purpose/context. If the purpose of using the text for the activity was judged school only (see above), it was coded 1. The real-life rating for each literacy activity was calculated by averaging the real-life ratings of both the text and purpose. Therefore, each literacy activity could be scored as 1, 1.5, or 2; the higher the number, the more real life the activity.
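As an illustration only, the scoring rule just described can be sketched in Python. The function name, the constants, and the three sample activities are hypothetical and are not taken from the LFL coding manual; the sketch simply averages a text code and a purpose code, each 1 (school only) or 2 (real life).

```python
SCHOOL_ONLY = 1  # text or purpose exists only for learning to read and write
REAL_LIFE = 2    # text or purpose matches use in the world outside instruction


def real_life_rating(text_code: int, purpose_code: int) -> float:
    """Average the text code and the purpose code for one literacy activity."""
    for code in (text_code, purpose_code):
        if code not in (SCHOOL_ONLY, REAL_LIFE):
            raise ValueError(f"codes must be 1 or 2, got {code}")
    return (text_code + purpose_code) / 2


# Hypothetical activities: (description, text code, purpose code)
activities = [
    ("phonics worksheet completed for practice", SCHOOL_ONLY, SCHOOL_ONLY),
    ("snack menu copied as a handwriting drill", REAL_LIFE, SCHOOL_ONLY),
    ("birthday card delivered to a classmate", REAL_LIFE, REAL_LIFE),
]

ratings = {name: real_life_rating(text, purpose) for name, text, purpose in activities}
for name, rating in ratings.items():
    print(f"{rating:.1f}  {name}")
```

Under this sketch, an activity reaches the top rating of 2 only when both the text and the purpose are judged real life.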

Interrater reliability

Randomly selected samples of data (20%) from teacher notes were recoded by two independent raters, and interrater reliability was calculated for two separate coding steps: identification of the unit of analysis and the real-life nature of literacy activities. The percentage agreement between the raters’ identification of the units of analysis was high at 94%. Interrater agreement on the real-world levels of the texts was also high and statistically significant, Cohen’s kappa = .89 (N = 285), p < .001.
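For readers less familiar with these two reliability statistics, the following is a minimal sketch of how percentage agreement and Cohen's kappa are computed from two raters' codes. The ten pairs of codes are invented for illustration and are not the study data; the function names are likewise our own.

```python
from collections import Counter


def percent_agreement(rater_a, rater_b):
    """Proportion of units on which the two raters assigned the same code."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("both raters must code the same non-empty set of units")
    matches = sum(a == b for a, b in zip(rater_a, rater_b))
    return matches / len(rater_a)


def cohens_kappa(rater_a, rater_b):
    """Agreement corrected for chance: (p_o - p_e) / (1 - p_e)."""
    n = len(rater_a)
    p_observed = percent_agreement(rater_a, rater_b)
    counts_a = Counter(rater_a)
    counts_b = Counter(rater_b)
    # Chance agreement: product of the raters' marginal proportions per code.
    p_expected = sum(counts_a[c] * counts_b[c] for c in counts_a) / (n * n)
    if p_expected == 1:
        raise ValueError("kappa is undefined when chance agreement equals 1")
    return (p_observed - p_expected) / (1 - p_expected)


# Hypothetical text codes (1 = school only, 2 = real life) from two raters.
rater_a = [2, 2, 2, 1, 1, 2, 1, 2, 2, 1]
rater_b = [2, 2, 2, 1, 1, 2, 2, 2, 2, 1]

print(f"agreement = {percent_agreement(rater_a, rater_b):.2f}")
print(f"kappa     = {cohens_kappa(rater_a, rater_b):.2f}")
```

Kappa is lower than raw agreement in this example because some matches would be expected by chance alone when one code is much more frequent than the other.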

Coding manual

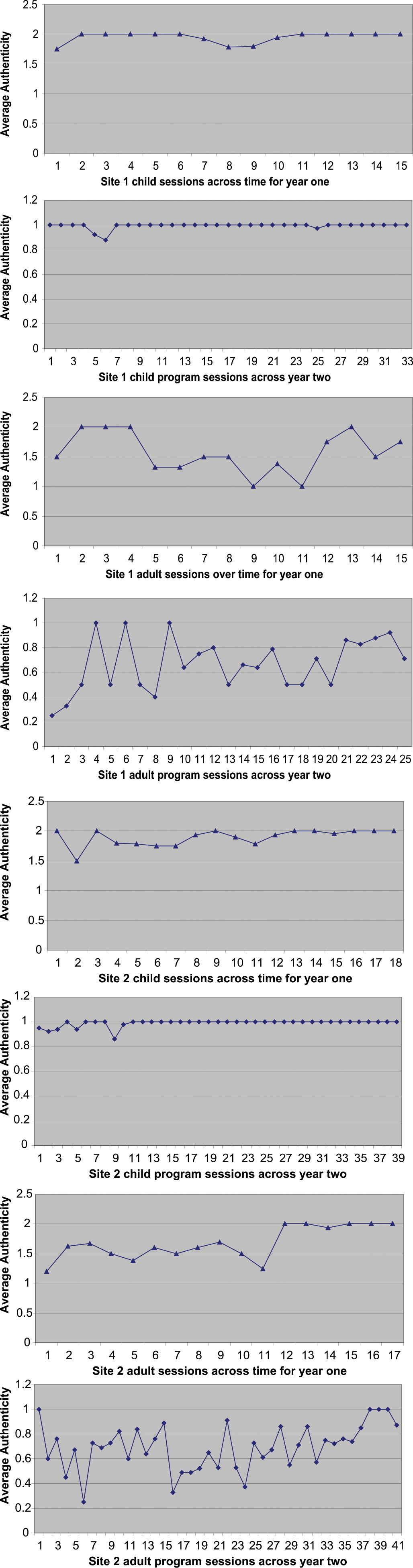

From this analysis, we created a coding manual for the rating of real-life literacy activity in classrooms (see the appendix). This is significant to the field in that it opens the door to the documentation of real-world literacy activity in classrooms with a high level of reliability. Using this coding manual, we scored all of the classroom field notes to ascertain the degree of real-world literacy in each class over the course of the study. All of the adult and early literacy programs maintained fairly high levels of real-life literacy activity. The results of this analysis can be seen in Figure 1. Note that the figures use the term authentic(ity) in place of real life, the latter reflecting the recent change in usage by the authors. For us, these two terms are interchangeable, given our specific definitions for authentic and real-life literacy activity.

Figure 1. Levels of authentic literacy activity offered for adults and children in the Literacy for Life Program for Year 1 and Year 2, by site

Degree to which each student participated in the treatment model

The fluctuation of attendance and the differing levels of schooling among our participants (common characteristics of family literacy programs) made estimating student experience with real-life literacy activity from class averages (as was done for the text study, described above) problematic. Thus, we sought to create a variable to capture each participant’s exposure to highly real-life literacy activity over the course of their participation in the program. We call this the exposure to real-life literacy activity variable. This variable was created by extracting all of the literacy activities that were available to the students in each session as described by the teachers in their daily program session notes and the class sign-in sheet with name and times in and out data. The overall real-life score for each literacy activity was determined as described earlier. Some activities were deemed highly real life and some partially real life, whereas other activities were deemed school only. To determine each participant’s exposure to real-life literacy activity, we counted how many highly real-life literacy activities each person was exposed to over the course of the program. We then counted how many highly real-life literacy activities were taught over the course of the program and that would have been available to each student had she or he attended every class or been present in a class when the activity was underway. To create a variable of exposure to real-life literacy activities, we divided the total number of real-life literacy activities that each person participated in over the entire program by the total number of available real-life literacy activities over the course of the program.
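The ratio described above can be sketched as follows. This is a simplified illustration under two assumptions that are ours rather than the study's: a participant who attended a session is treated as exposed to every activity in it (the actual analysis also used times in and out), and "highly real life" is taken to mean a rating of 2. The session data are invented.

```python
def exposure_to_real_life(sessions, attended, threshold=2.0):
    """Share of available highly real-life activities a participant experienced.

    sessions:  dict mapping session id -> list of activity ratings (1, 1.5, or 2)
    attended:  set of session ids the participant was present for
    threshold: minimum rating counted as highly real life (assumed to be 2)
    """
    available = sum(
        1 for ratings in sessions.values() for r in ratings if r >= threshold
    )
    if available == 0:
        raise ValueError("no highly real-life activities were offered")
    experienced = sum(
        1
        for session_id, ratings in sessions.items()
        if session_id in attended
        for r in ratings
        if r >= threshold
    )
    return experienced / available


# Hypothetical program of three sessions with rated activities.
sessions = {
    "s1": [2.0, 1.5, 2.0],
    "s2": [1.0, 2.0],
    "s3": [2.0, 2.0, 1.5],
}

# A participant who missed the second session.
exposure = exposure_to_real_life(sessions, attended={"s1", "s3"})
print(f"exposure = {exposure:.2f}")
```

Because the denominator is the same for every participant at a site, the variable ranges from 0 (no exposure) to 1 (present for every highly real-life activity offered).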

Literacy growth

We conducted the analysis for growth in English literacy abilities for the parents in the program with the nonparametric Wilcoxon signed rank test, using the pre- and postprogram assessment results on the CAAT for Year 2. Nonparametric analysis was required because of the small sample size and the nonnormal distribution of scores. Nonparametric methods were developed for use in cases where the researcher knows nothing about the parameters of the variable of interest in the population (hence the name nonparametric). They are perhaps more appropriately called parameter-free methods or distribution-free methods. Nonparametric methods are most appropriate when the sample sizes are small (< 100; StatSoft, n.d.).

The analysis for growth in early English literacy abilities for the children was similarly conducted with the Wilcoxon signed rank test, using the pre- and postprogram assessment results on the TERA-3 for Year 2. Again, a nonparametric test of significance was called for by the small sample size and the nonnormal distribution of scores. The nonparametric tests allowed us to test whether the parents’ and the children’s growth in literacy skill was greater than what would be expected according to the normal curve metric used by the assessments.
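To make the logic of the test concrete, here is a minimal pure-Python sketch of a one-sided Wilcoxon signed rank test with an exact p value for small samples. It is not the software used in the study, and the eight pairs of standard scores are invented. The sketch assumes that, because normed scores already adjust for expected development, the null hypothesis is a median pre-to-post change of zero.

```python
from itertools import product


def wilcoxon_signed_rank(pre, post, max_n=20):
    """Return (W+, W-, exact one-sided p) for the hypothesis that post > pre."""
    diffs = [b - a for a, b in zip(pre, post) if b != a]  # zero changes dropped
    n = len(diffs)
    if n == 0:
        raise ValueError("all differences are zero")
    if n > max_n:
        raise ValueError("exact enumeration is only practical for small samples")

    # Rank the absolute differences, giving tied values their average rank.
    order = sorted(range(n), key=lambda i: abs(diffs[i]))
    ranks = [0.0] * n
    i = 0
    while i < n:
        j = i
        while j + 1 < n and abs(diffs[order[j + 1]]) == abs(diffs[order[i]]):
            j += 1
        average_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = average_rank
        i = j + 1

    w_plus = sum(r for r, d in zip(ranks, diffs) if d > 0)
    w_minus = sum(r for r, d in zip(ranks, diffs) if d < 0)

    # Under the null hypothesis every sign pattern is equally likely, so the
    # p value is the share of patterns whose W+ is at least the observed W+.
    as_extreme = sum(
        1
        for signs in product((0, 1), repeat=n)
        if sum(r for r, s in zip(ranks, signs) if s) >= w_plus
    )
    return w_plus, w_minus, as_extreme / 2 ** n


# Hypothetical pre- and postprogram standard scores for eight participants.
pre = [85, 90, 78, 92, 88, 80, 95, 84]
post = [91, 93, 77, 99, 92, 85, 103, 86]

w_plus, w_minus, p_value = wilcoxon_signed_rank(pre, post)
print(f"W+ = {w_plus}, W- = {w_minus}, one-sided p = {p_value:.4f}")
```

The test uses only the signs and ranks of the changes, which is why it does not depend on the scores being normally distributed.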

Establishing impact of program on growth in English literacy ability

We then used the newly created exposure to real-life literacy variable (see above) to determine the relation between pre- and postprogram change scores for the child and adult assessment subtests (TERA-3 and CAAT, respectively) and their exposure to real-life literacy activity in the LFL adult and child programs. The change scores were calculated by subtracting the preprogram assessment from the postprogram assessment. Correlations were run for parents and children in the program for each subtest.
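The two steps in this paragraph, computing change scores and correlating them with exposure, can be sketched as below. The text does not state which correlation coefficient was used, so the Pearson coefficient here is an assumption made for illustration, and all values are invented.

```python
from math import sqrt


def change_scores(pre, post):
    """Postprogram score minus preprogram score for each participant."""
    return [b - a for a, b in zip(pre, post)]


def pearson_r(x, y):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(x)
    if n != len(y) or n < 2:
        raise ValueError("need two sequences of equal length with n >= 2")
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        raise ValueError("correlation is undefined when a variable is constant")
    return sxy / sqrt(sxx * syy)


# Hypothetical exposure values and subtest scores for four participants.
exposure = [0.2, 0.4, 0.6, 0.8]
pre = [90, 85, 100, 95]
post = [91, 88, 102, 99]

changes = change_scores(pre, post)
r = pearson_r(exposure, changes)
print(f"changes = {changes}, r = {r:.2f}")
```

With very few participants, even a large coefficient such as the one in this toy example would not reach statistical significance, which is the power problem the analysis faced.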

Results

Literacy Growth

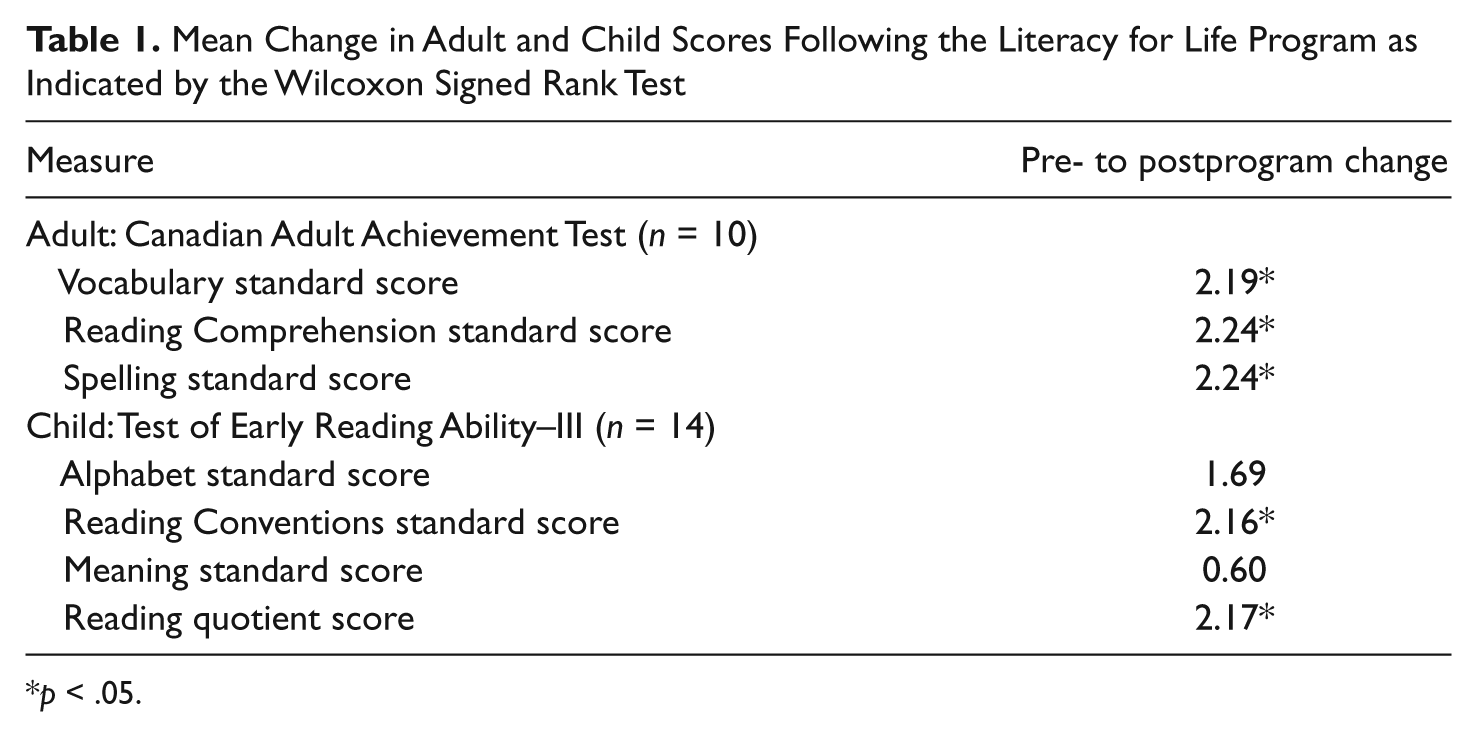

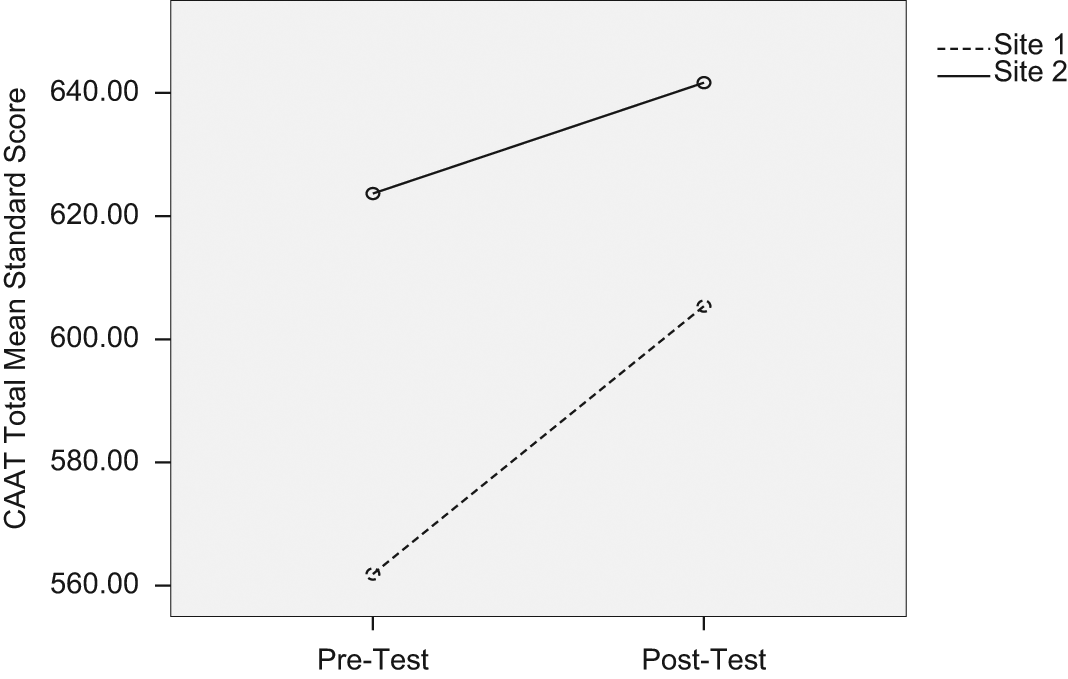

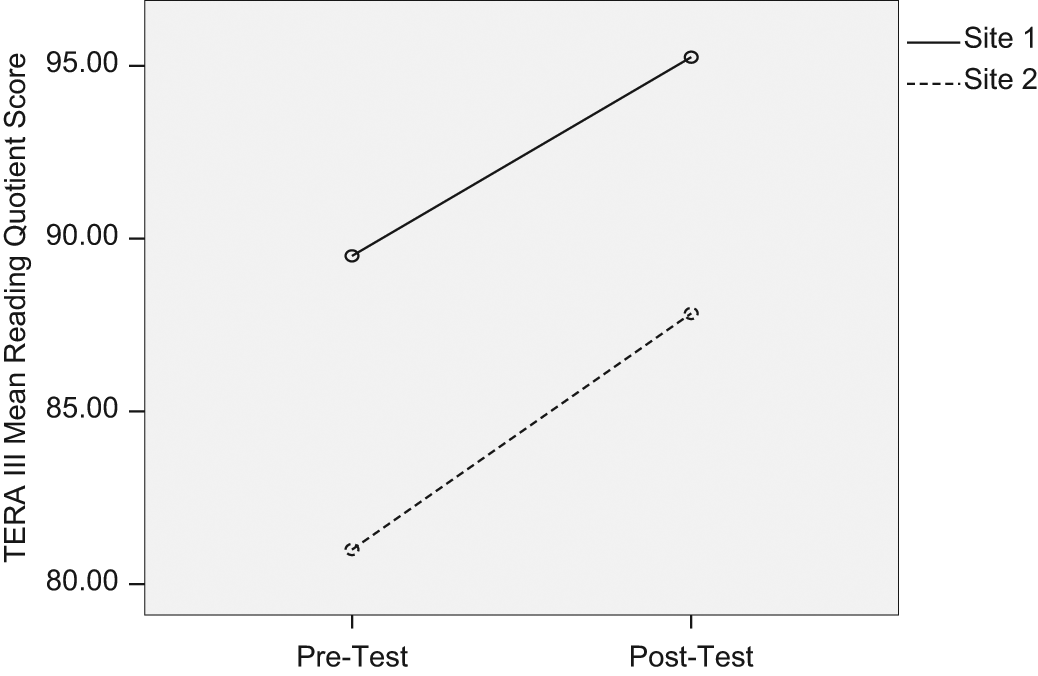

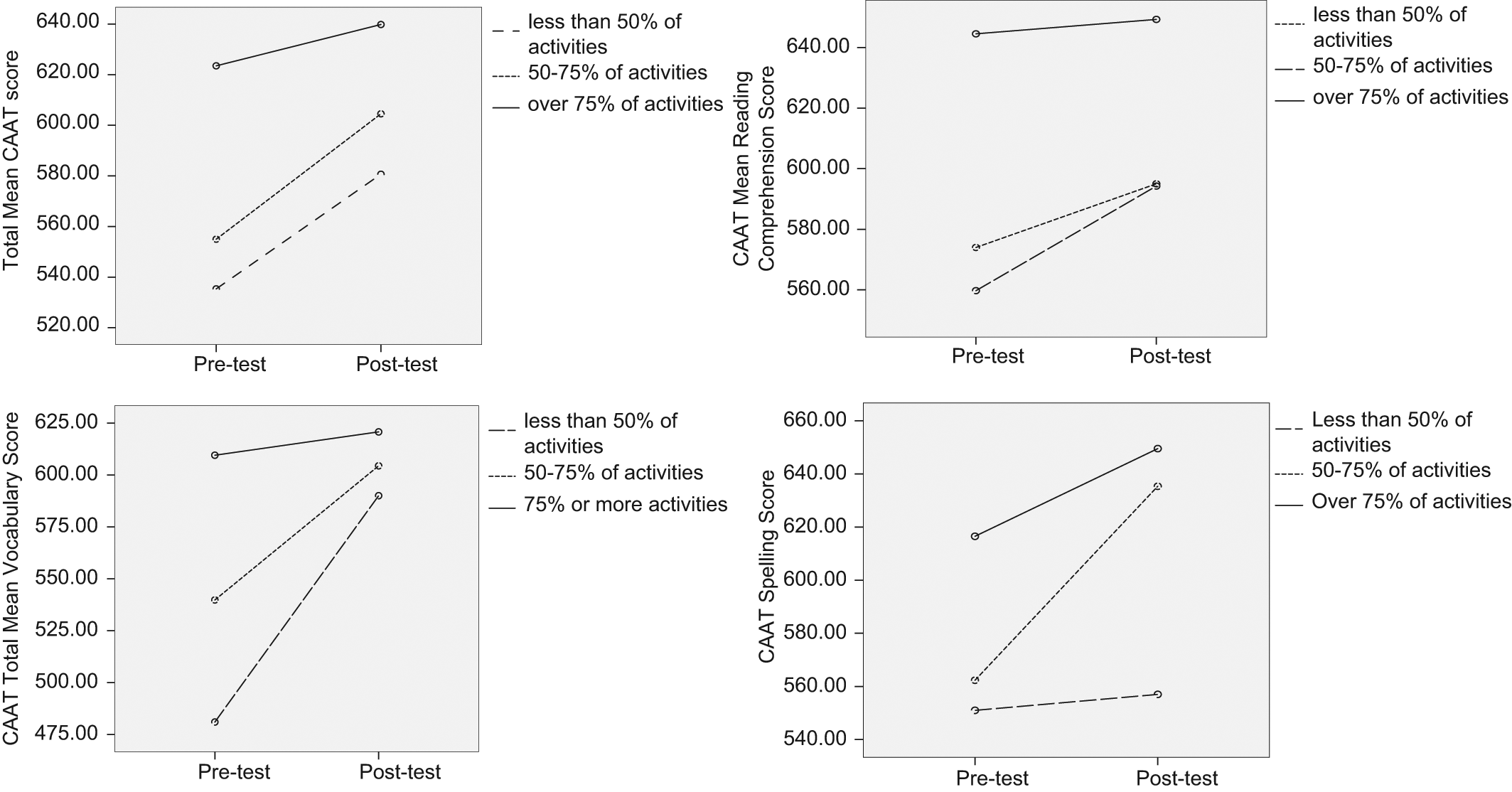

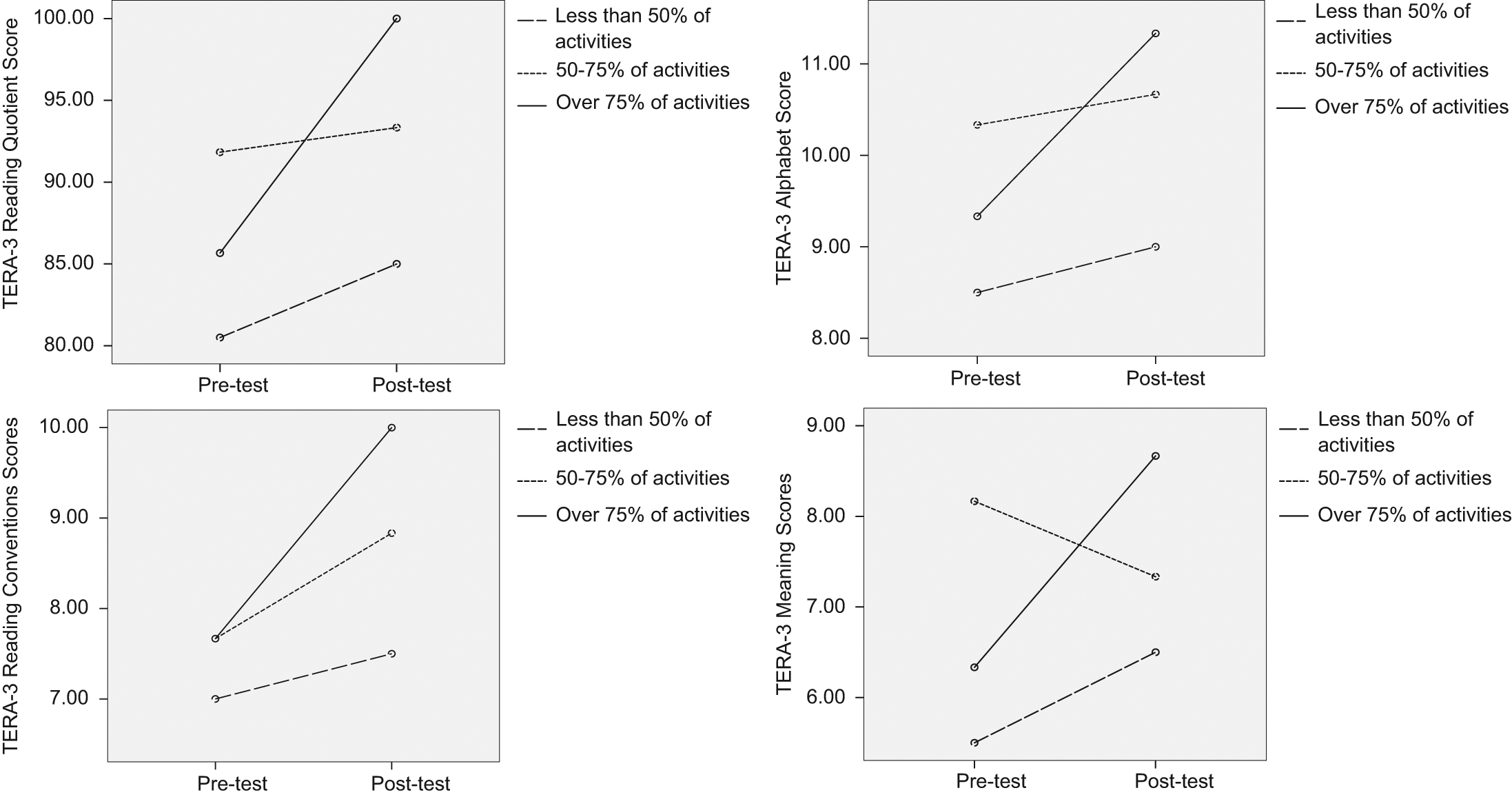

The results of the growth analysis are presented in Table 1 for the adults and children. The overall results for the adults and children are displayed in Figures 2 and 3. Both groups registered statistically significant growth in their English literacy and emergent literacy abilities as compared to expected growth, reflected in the normal distribution and related normed scores for each assessment.

Table 1. Mean Change in Adult and Child Scores Following the Literacy for Life Program as Indicated by the Wilcoxon Signed Rank Test

p < .05.

Figure 2. Change in Canadian Adult Achievement Test (CAAT) Total mean standard score from pretest to posttest

Figure 3. Change in Test of Early Reading Ability–III (TERA-3) mean reading quotient score from pretest to posttest

The graphs in Figures 2 and 3 reveal a clear and consistent difference in score levels between the two sites for the adults as well as the children. The results for the parents may reflect the difference in time lived in Canada between Site 1 and Site 2. They may also reflect the fact that many of the participants at Site 1 had also participated in the LFL program for 4 months during Year 1, whereas those adults at Site 2 had participated only for the 8 months of programming in Year 2, the year that the pre- and posttest assessments were given. We can only speculate without additional information. For the children, the lack of growth for the Site 1 children in alphabet knowledge (see Table 1) reflects a ceiling effect. Again, this may reflect the fact that most of the children in Site 1 had participated in the program the year before. The negative growth for the Site 2 children in the Meaning subtest of the TERA-3 may be accounted for by the fact that new children continually entered the program at this site, and by the end, when the postprogram assessments were done, there were a number of children who had entered after the start date and who had experienced difficulties with leaving their parents, thus limiting their time in the early literacy program (see Anderson et al., 2009, for the results of an analysis of the challenges we faced in implementing this particular program and instructional level).

Impact of Program on Growth

Correlations between the exposure to real-world literacy variable and the change scores of the children and their parents revealed no significant relationships. This was to be expected, given the small sample size and the accompanying lack of statistical power. There are no nonparametric tests of significance for correlations for samples this size. However, we looked for patterns that would suggest directions of relationships with the results and give us some direction in our continuing search for valid and reliable ways to document progress in programs such as this one.

To do this, we divided the participants into three groups: low, medium, and high levels of real-life literacy activity exposure. The mean changes for each group for pre- and postprogram scores for each subtest were plotted. These graphs were created for both adults and children. Figures 4 and 5 illustrate the results of this analysis for the adults and children, respectively.
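The grouping step can be sketched as follows. The cut points used here (75% or more for high, less than 50% for low) are taken from the description of the graphs in this section; treating everything in between as medium is our assumption, and the records are invented.

```python
from collections import defaultdict
from statistics import mean


def exposure_group(exposure):
    """Assign a participant to a low, medium, or high exposure group."""
    if exposure >= 0.75:
        return "high"
    if exposure >= 0.50:  # assumed band between the two reported cut points
        return "medium"
    return "low"


def mean_change_by_group(records):
    """records: iterable of (exposure, pre score, post score) per participant."""
    changes = defaultdict(list)
    for exposure, pre, post in records:
        changes[exposure_group(exposure)].append(post - pre)
    return {group: mean(values) for group, values in changes.items()}


# Hypothetical participants: (exposure, pretest score, posttest score)
records = [
    (0.30, 80, 79),
    (0.45, 82, 85),
    (0.60, 90, 94),
    (0.70, 88, 90),
    (0.80, 92, 100),
    (0.95, 95, 101),
]

group_means = mean_change_by_group(records)
for group in ("low", "medium", "high"):
    print(f"{group:<6} mean change = {group_means[group]}")
```

Plotting these group means for each subtest gives a descriptive picture of the direction of the relationship without relying on a significance test.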

Figure 4. Adult mean Canadian Adult Achievement Test (CAAT) score changes as a function of degree of exposure to authentic literacy instruction in Year 2, across both sites

Figure 5. Child Test of Early Reading Ability–III (TERA-3) mean score changes as a function of degree of exposure to highly authentic literacy instruction in Year 2, across both sites

The graphs reveal that the children who were exposed to 75% or more highly real-life literacy instruction while attending the program had, as a group, a more dramatic increase in TERA-3 scores from the pretest to the posttest. As can be seen in the graphs in Figure 5, this pattern held for each subtest on the TERA-3: Reading Conventions, Meaning, and Alphabet. Similarly, the graphs illustrate that the children who were exposed to lower amounts of real-life literacy instruction showed less improvement overall and in some cases a decline in TERA-3 scores over the length of the program. Interestingly, the graphs also show that the children in the program who were exposed to the lowest levels of real-world literacy instruction (less than 50%) scored consistently lower on all the TERA-3 subtests on both the pre- and posttests.

The graphs of adult CAAT scores (see the graphs in Figure 4) show a different story, one that reflects, perhaps, more complex elements of their experiences in the classes. The adults who were exposed to the highest levels of real-world literacy instruction showed the smallest change in pre- and posttest scores overall. As described above, this group of parents who were already literate in their first language and possessed greater control of English participated in more authentic literacy activity. Their pretest scores were higher, and although they improved, they did not improve consonant with the level of real-life literacy activity. Many more comparable students with more control variables are needed to explore this further.

Conclusions and Recommendations

The results are suggestive of promise for our approach taken toward measurement and analysis of situated literacy activity within family literacy programs. The promise lies in the fact that the results, and their clarification by the graphs, reflected what we as researchers and teachers felt was happening over the course of the program. We knew, as teachers know, that the adults and their children were gaining in literacy knowledge and skill. We knew that the growth looked different across the two sites. We strongly suspected that the engagement in real-life literacy activities gave a boost to this growth. However, as stated in the introduction, we knew that funders of family literacy programs would require a different type of proof of what was happening from what teachers felt was happening. Our sense that the quantitative results of our analytic plan felt familiar to us through our qualitative sense of the program was a reliability check on our measurement approach in our eyes.

As we stated above, we understood from the start that we were piloting ways to test the impact of situated literacy activity within family literacy programs. The LFL program was an action research program in which it is expected that researchers and participants will implement a plan for instruction and work together to refine it as they hold their goal in mind. Within this, we approached the measurement of progress toward that goal in the same way. The “let’s see if it works” approach was productive from our perspective.

The biggest challenge, and the focus of this article, lay in our wish to test the role of real-life literacy engagement on changes in literacy knowledge and skill. This amounted to negotiating the epistemological and paradigmatic borders of two very different views of literacy: (a) the view that sees literacy (and language) as always occurring within layers of sociocultural context and human activity that are mediated by oral and written texts and (b) the view that sees literacy as technological skill that is learned in decontextualized fashion, is transferrable across contexts, and can be tested as an end point and placed on scales of proficiency. Although in many ways we would agree with others (e.g., Street, 2001) that this cannot be done while maintaining epistemological validity and purity, we propose that in the process of attempting it, we have suggested ways to explore a third theoretical lens. This lens sees literacy skill and knowledge as developing within sociocultural contexts, including power relationships and historical processes (Purcell-Gates, Jacobson, & Degener, 2004). Within this lens, individuals’ skill development is facilitated by, and constrained by, the uses to which literacy is put—the texts that are read and written and the social activities within which reasons to read and write are motivated.

Exploring this lens further, we ask, “Can the skills and knowledge gained through engagement with socially situated literacy activity be measured quantitatively? Further, if we answer with a qualified ‘yes’ to this question, can we associate quantitatively measured growth with the degree of engagement with socially situated literacy?” Following is our thinking on this:

We can step away from issues of positivist or interpretive epistemologies and quantitative or qualitative paradigms to think about this from a “real-life,” pragmatic perspective (Dewey & Bentley, 1949). It does seem to hold that children who develop within social contexts that include many different instances of literacy use have an easier time learning to read and write and move more quickly into independence as readers and writers. One way to think about this is to consider children who grow up in cultures with few and restricted opportunities for reading and writing. If they enter schools that present them with unfamiliar literacy practices and literacy processes, they struggle to develop toward independence (Purcell-Gates, 1995; Snow et al., 1998). Likewise, learners who learn to read and write in school but fail to engage with literacy practice outside of school lessons do not develop as readers and writers to the same degree as learners who do engage (Purcell-Gates, 1996). It seems to take real-life literacy practice (i.e., socially situated literacy) to both prepare young children for learning to read and write in school and to help them move toward independence in literacy to the point where their literacy growth is self-propelled (e.g., Stanovich, 1986).

With regard to the reasonableness of measuring this development with assessments that depend on decontextualized, within-the-test items, we offer (and acknowledge) a two-layered response. First, if we are going to move from a powerless to a powerful position in curricular decisions for family literacy programs, early-childhood programs, and adult education programs, in the current political context, we must use such assessments to assert “what works.” However, we need to do this as ethically as possible. This leads us to the second layer of response. Although we may chafe over the failure of standardized, norm-referenced tests to account for different literacies or for the ways that social context and literacy abilities transact, they do seem to roughly account for development over time toward independence. Norm-referenced assessments serve primarily to differentiate among learners at supposedly the same age and education levels. Who are the best readers? Who are the least skilled? Who are in between? Teachers and others who give norm-referenced assessments usually will acknowledge that this rough sorting seems to hold true for the most part, that is, has validity.

The above points—that literacy skill develops within contexts of socially situated literacy practice and that measuring this skill with norm-referenced assessments has a rough validity as well as being required by those holding political power and program funding—are offered in support of the third lens that allows us to search for ways to measure socially situated literacy activity in the classroom. We believe we have begun to address these challenges with the work presented here. Building on the work of previous studies, we have developed ways to document and describe socially situated literacy activity in the family literacy classrooms and to use those descriptions (ratings) to develop an analytic prototype for showing the relationship of literacy growth to specific program elements—elements that because of their socially situated natures reflect more truly the complexities of the literacy lives of the participants.

Appendix

Acknowledgements

We would also like to acknowledge the assistance of Gen Creighton and Yuan Lai, research assistants, for their roles in teaching the adult classes and collecting data.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: The data used in this presentation came from a study funded by the following sources: The University of British Columbia Bookstore, the Canadian Council on Learning, and the Canada Research Chairs Program.

References
