Abstract

There is no doubt that cochlear implants have improved the spoken language abilities of children with hearing loss, but delays persist. Consequently, it is imperative that new treatment options be explored. This study evaluated one aspect of treatment that might be modified, that having to do with bilateral implants and bimodal stimulation. A total of 58 children with at least one implant were tested at 42 months of age on four language measures spanning a continuum from basic to generative in nature. When children were grouped by the kind of stimulation they had at 42 months (one implant, bilateral implants, or bimodal stimulation), no differences across groups were observed. This was true even when groups were constrained to only children who had at least 12 months to acclimatize to their stimulation configuration. However, when children were grouped according to whether or not they had spent any time with bimodal stimulation (either consistently since their first implant or as an interlude to receiving a second) advantages were found for children who had some bimodal experience, but those advantages were restricted to language abilities that are generative in nature. Thus, previously reported benefits of simultaneous bilateral implantation early in a child's life may not extend to generative language. In fact, children may benefit from a period of bimodal stimulation early in childhood because low-frequency speech signals provide prosody and serve as an aid in learning how to perceptually organize the signal that is received through a cochlear implant.

Not that long ago, a child born with hearing loss faced a lifetime of almost certain problems arising from deficits in spoken language abilities. Deficient language commonly leads to reading problems, limits academic performance, and ultimately curtails occupational and social opportunities. Toward the end of the 20th century, however, refinements in the design of the cochlear implant raised expectations that the futures of children with hearing loss would be brighter (Eisenberg & House, 1982). Numerous studies have demonstrated that children with severe-to-profound hearing loss understand and produce spoken language better when they have a cochlear implant, rather than hearing aids or tactile aids (e.g., Geers, 1997; Geers & Moog, 1991; Geers & Tobey, 1995; Svirsky, Robbins, Kirk, Pisoni, & Miyamoto, 2000). But as good as they are, cochlear implants have not completely eliminated the problems arising from childhood hearing loss. Gaps persist in the language capabilities of children with hearing loss compared to those of children with normal hearing (Geers, 2004; Nittrouer, 2009).

One treatment option that has been considered as a way of further closing the gap between children with normal hearing and those with hearing loss is binaural stimulation. This option is motivated both by the deleterious effects that unilateral hearing loss has on language development, and by the contributions that binaural hearing makes to our overall functioning in the real world. Children with untreated unilateral hearing loss experience deficits in language learning and in speech perception (e.g., Bess & Tharpe, 1984; Culbertson & Gilbert, 1986; Ruscetta, Arjmand, & Pratt, 2005; Welsh, Welsh, Rosen, & Dragonette, 2004), so there is no reason to expect that children with one implant would ever achieve comparable levels of language proficiency to those of children with normal hearing in both ears. We are effectively leaving these children with unilateral hearing loss.

Most studies examining potential benefits of bilateral cochlear implants, however, have not focused on language acquisition. Instead these studies have looked at the potential benefits of bilateral implantation on the kinds of auditory processing that impact how well we function in our everyday lives. This makes sense in light of the fact that binaural hearing provides obvious advantages to processes such as localizing the source of a sound in the environment and hearing a target signal in noisy acoustic landscapes (Boothroyd, 2006). Accordingly, clinical investigations have examined the effects of bilateral implants over one implant on just these sorts of processes. When speech stimuli are incorporated into experimental protocols, the task usually involves recognition in noise of consonants, single words, or sentences. The dependent measure is the difference in signal-to-noise ratio needed to recognize speech in the bilateral condition compared to the single-implant condition. Results of these studies generally show advantages in these particular auditory skills (localization and masking release) for two implants over one (Dunn, Tyler, Oakley, Gantz, & Noble, 2008; Kuhn-Inacker, Shehata-Dieler, Müller, & Helms, 2004; Litovsky et al., 2004; Peters, Litovsky, Parkinson, & Lake, 2007; Schleich, Nopp, & D'Haese, 2004; Senn, Kompis, Vischer, & Haeusler, 2005; Tyler et al., 2002). However, most studies have primarily included participants who were sequentially implanted, and a few authors have noted that factors such as age at time of first implant and amount of preimplant auditory stimulation in the ear with the second implant can influence the magnitude of the bilateral advantage (Galvin, Mok, & Dowell, 2007; Zeitler et al., 2008). In 2007, Murphy and O'Donoghue published a literature review on studies examining potential advantages of bilateral implants over a single implant. 
In all, 37 studies were included: 28 with adults only, 7 with children only, and 2 with adults and children. Eighteen of these were case studies with 5 or fewer patients, 14 included between 6 and 20 research participants, and only 5 had more than 20 participants. Accumulated results across studies supported the conclusion that bilateral implants provide advantages over a single implant when it comes to localizing sound and recognizing words or sentences in noise.

Although these measures tell us a great deal about binaural processing, they provide little information about how well children are acquiring their native language. Learning language involves more than just recognizing words, whether in noise or in quiet, and whether in isolation or in sentences. A child comes to the task of learning a first language with no expectations or knowledge about the syntax or grammar of that language. Children must discover how the language they are attempting to learn is structured, at all levels. For example, a child must determine, on her own, if word order is important in her native language, as it is in English, or if word order is permitted to vary freely. In languages that have few constraints on how words should be ordered, information about the relations among lexical items is conveyed by salient inflectional morphemes. Learning about these linguistic features generally happens within the first couple of years of life. The consequences of failing to develop a complete familiarity with the syntax and grammar of one's first language may only become apparent after a child has completed the early elementary grades. By then it is too late to repair weaknesses in the child's faulty language system.

By considering which signal properties children with normal hearing use to learn about structure in their native language we may find clues about how best to augment the signal provided through an implant. Developmental studies have shown that one of these acoustic properties is simply silence. Early on, children learn to parse the signal into phrases based on where pauses occur in the ongoing signal (Hirsh-Pasek et al., 1987). Another property used by children is prosody, which consists largely of the rise and fall in vocal fundamental frequency. For example, fundamental frequency decreases near the ends of phrases and sentences, and children use such cues to begin parsing sentences into their constituent parts. This process is commonly referred to as prosodic bootstrapping (Gleitman & Wanner, 1982; Jusczyk, 1997; Morgan & Demuth, 1996; Söderstrom, Seidl, Kemler Nelson, & Jusczyk, 2003).

It has also been suggested that children use regularities in the relatively slow modulations of formant frequencies to discover word units. That suggestion arises partly from a study of sine wave speech in which the normally rich speech spectrum was replaced by three sinusoids that traced changes in the first three formants (Nittrouer, Lowenstein, & Packer, 2009). The stimuli were also presented as vocoded noise bands. Results showed that children recognized sentences presented as sine wave replicas as well as adults did, but were much worse at recognizing vocoded sentences. Nittrouer et al. used their finding to support the claim that children depend on recurring stretches of spectral change to begin identifying individual lexical units in the ongoing speech stream. Once children can parse the signal into separate words they can begin to explore the acoustic details that support word-internal phonetic structure. Thus, there are two kinds of rather “global” spectral structure that may be harnessed in early language learning: prosody and voiced formants. Neither of these properties is well represented with current methods of signal processing for cochlear implants. On the other hand, both vocal fundamental frequency and at least the first formant are likely to be available through hearing aids to many listeners with hearing loss.

Compared with the number of studies investigating bilateral implants, relatively few have examined whether there are advantages to combining one cochlear implant with a hearing aid. On first consideration, that may be with good reason. Other than the arguments made above concerning language learning, there is little reason to expect a hearing aid to provide any benefit when combined with an implant. Most individuals with severe-to-profound hearing loss are unable to achieve the same amount of gain with hearing aids as they can with implants. They generally cannot recognize speech through a hearing aid alone, but can do so reasonably well with an implant (e.g., Wolfe et al., 2007). Therefore, it could logically be argued that adding a second device that works well on its own, as an implant does, should be the best treatment. Contradicting that argument, however, are studies comparing speech recognition with a single implant to bimodal stimulation (i.e., an implant on one ear with a hearing aid on the other). All have reported advantages for the bimodal condition (Ching, Incerti, & Hill, 2004; Ching, Incerti, Hill, & van Wanrooy, 2006; Ching, Psarros, Hill, Dillon, & Incerti, 2001; Holt, Kirk, Eisenberg, Martinez, & Campbell, 2005; James et al., 2006), although concerns have been raised about how the signals provided by the two devices are matched (Mok, Grayden, Dowell, & Lawrence, 2006; Vermeire, Anderson, Flynn, & Van de Heyning, 2008). Only one investigation has compared the listening abilities of children with bilateral implants to those of children using bimodal stimulation (Litovsky, Johnstone, & Godar, 2006). That study looked at masking release for speech stimuli and minimal audible angle (which is related to localization) for eleven children with each kind of stimulation, and reported small advantages for the listeners with bilateral implants over those with bimodal stimulation. 
Of course, it may very well be that the optimal choice of stimulation differs depending on whether the goal is to facilitate release from masking and localization or to support language acquisition.

Finally, one control that was missing in many of the studies cited above is the inclusion of listeners with normal hearing. It is not enough to determine if one group of listeners with hearing loss performs better than another group of listeners with hearing loss. Even if statistically significant, that difference may be of little practical value if both groups remain dramatically worse off than listeners with normal hearing. When outcomes for children with hearing loss are compared to performance of children with normal hearing on standardized measures, it is common to use published means from children with normal hearing as benchmarks of expected performance. A concern with that practice is that any group of deaf children participating in an experiment could differ from the children used for obtaining the published means in some significant way, such as in terms of socioeconomic status. In addition, assessment methods can vary across test administrators, even for tools presumed to be standardized. Therefore, measures collected from children in experimental studies could have been obtained with somewhat different methods than those used to get the published norms. In this study, the children with normal hearing forming the control group had similar socioeconomic status to the children with hearing loss who participated, and testing was conducted using exactly the same procedures for all children. For these reasons the children with normal hearing in this study provided particularly relevant benchmarks.

In summary, this study tested the hypothesis that a period of bimodal stimulation early in the lives of children with severe-to-profound hearing loss might facilitate the acquisition of generative language abilities. Accordingly, the performance of children with one implant, bilateral implants, and bimodal stimulation on four language measures was examined. These children were all part of a larger study being conducted with a national sample, including children with normal hearing (Nittrouer, 2009). The purpose of this report is to compare developmental outcomes for these three groups of children with hearing loss and to describe how they are faring relative to children with normal hearing.

Method

Participants

The participants whose data are reported here came from a larger study (Nittrouer, 2009), which tested children with and without hearing loss (HL) from across the United States on their birthdays and half-birthdays from 12 months of age to 48 months. Birthdates of participating children were restricted to a period between August 1, 2002 and June 30, 2004 in order to ensure that all children had the implants and speech processors that were available within a given timeframe. On the other hand, children from various regions across the country were included to eliminate the opportunity for idiosyncratic factors that may be related to specific intervention programs to influence outcomes.

All children in this study had normal prenatal histories, full-term gestations, and no complications at birth. No child had any major health condition other than hearing loss that could delay language, cognitive, or motor development. All children had parents with normal hearing who reported speaking only English to their children. There was close to an equal number of boys and girls in each group. These methodological controls helped eliminate concern that some unintended, confounding source of variance might explain the outcomes.

All children with HL were fit with hearing aids when their HL was first identified. Parents all reported that their children wore their hearing aids consistently, at least until they received a cochlear implant. At that time some children continued to use a hearing aid on the unimplanted ear, and some did not. All parents reported that children wore their prescribed prosthesis (or prostheses) during all waking hours, other than bath time or when they were swimming.

All children with HL were served by intervention programs that focused on the acquisition of spoken language, and the stated goal of all the parents of children with HL was to have their children learn spoken English well enough to be educated in a mainstream setting without the aid of sign language interpreters. Nonetheless, parents of 25% of these children, both with and without HL, used signs to support their spoken language input to their children. Parents who chose to supplement spoken language input with signs indicated that they did so because they believed it would facilitate their children's learning of spoken English. The use of signs was evenly spread across groups. Similarly, participation in auditory–verbal therapy was evenly spread across groups.

For this report we analyzed only data collected at 42 months of age (±1 month). Data are included only for children who received at least one cochlear implant before their 42-month test session. All children with HL had better-ear pure tone averages (BE-PTAs) for the frequencies 0.5, 1.0, and 2.0 kHz that were poorer than 70 dB HL. Audiometric information was collected from the children's audiologists. A threshold of 120 dB HL was used if there was no response at a given frequency. No child showed evidence of a progressive hearing loss. For the children whose hearing loss was not identified right at birth, there was no reason to suspect that it was anything other than congenital. For example, none of the children had an illness as an infant that might precipitate hearing loss. To participate, children with HL had to be receiving intervention services at least once per week before age 36 months, and then be enrolled in a preschool program for at least 16 hours per week after 36 months of age. The intervention programs providing services to these children had to be ones that focused exclusively on serving children with hearing loss, rather than on serving children with a variety of disabilities. All implants and hearing aids used by the children in this study were fit and/or mapped by the children's own clinicians, who were not affiliated with this study. Of the 58 children with HL for whom data are reported here, 34 had implants from Cochlear Corporation: 29 with Freedom devices and processors, 3 with Contour 24 devices and Freedom processors, and 2 with Contour 24 devices and Sprint processors. Another 21 children had Advanced Bionics implants: All had HiRes 90k devices, but 10 had Harmony, 4 had Auria, and 7 had PSP processors. Three children had MedEl Combi40+ devices with Tempo+ processors.
Children received their implants at surgical centers in or near one of these cities: Boston, New York, Atlanta, Pittsburgh, Jacksonville (FL), Columbus (OH), Cleveland, Cincinnati, Chicago, Minneapolis, Rochester (MN), St. Louis, Jackson (MS), Omaha, Oklahoma City, Dallas, San Antonio, Albuquerque, Phoenix, Seattle, and San Francisco. Of the 29 children who wore a hearing aid on the unimplanted ear for some period of time after receiving a first implant, 7 wore Widex aids, 2 wore Oticon aids, and the rest wore Phonak aids. Over the course of the study, no child received an updated speech processor. However, two children (one with one cochlear implant [CI] and one with bimodal CI + hearing aid [CI + HA]) had device failures, and had to be reimplanted. Both were implanted with the same device they had before the failure, and in both cases that happened to be Advanced Bionics HiRes 90k devices with Harmony processors.
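The inclusion criterion based on better-ear pure tone average can be illustrated with a short sketch. It averages thresholds at 0.5, 1.0, and 2.0 kHz and substitutes 120 dB HL where there was no response, as described above. The function names, the dictionary layout, and the audiogram values are hypothetical, and treating the ear with the lower average as the "better ear" is an assumption that the text does not spell out.

```python
from statistics import mean

NO_RESPONSE_DB_HL = 120          # substituted when there was no response (from the text)
PTA_FREQS_KHZ = (0.5, 1.0, 2.0)  # frequencies that enter the average (from the text)


def pure_tone_average(thresholds):
    """Average one ear's thresholds (dB HL) at 0.5, 1.0, and 2.0 kHz.

    `thresholds` maps frequency in kHz to a threshold in dB HL, or to None
    when there was no response at that frequency.
    """
    values = [
        NO_RESPONSE_DB_HL if thresholds[f] is None else thresholds[f]
        for f in PTA_FREQS_KHZ
    ]
    return mean(values)


def better_ear_pta(left, right):
    """Assumption: the better ear is the one with the lower average."""
    return min(pure_tone_average(left), pure_tone_average(right))


# Hypothetical audiogram, not data from the study.
left_ear = {0.5: 90, 1.0: 105, 2.0: None}   # no response at 2.0 kHz
right_ear = {0.5: 85, 1.0: 95, 2.0: 110}
be_pta = better_ear_pta(left_ear, right_ear)
meets_criterion = be_pta > 70  # "poorer than 70 dB HL" means a higher threshold
```

In this made-up case the left ear averages 105 dB HL after substitution and the right ear averages about 96.7 dB HL, so the right ear determines the BE-PTA and the child would meet the criterion.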

Although not included in statistical analyses, means and standard deviations (SDs) on dependent measures are provided for 53 children with normal hearing (NH). Participants in the NH group all passed hearing screenings at birth, and at 36 months of age passed audiological screenings of the frequencies from 500 to 4,000 Hz (at octave intervals) presented at 20 dB HL to each ear separately.

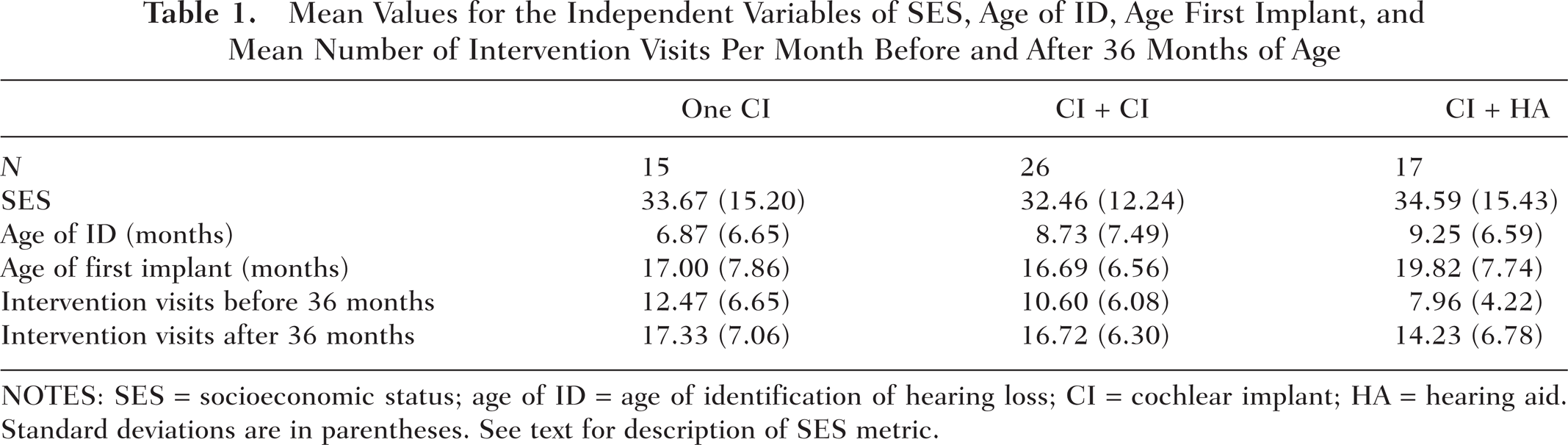

Table 1 shows means (and SDs) across the three groups of children with HL (those with one CI, bilateral CIs, or bimodal CI + HA) for each of the independent variables of interest: socioeconomic status (SES), age of identification of the hearing loss (age of ID), age at time of first implant (age first implant), and mean number of intervention visits. This last variable is listed separately for intervention visits before and after 36 months of age because children typically start attending preschool programs at 36 months of age. The values listed are the numbers of visits only, rather than the amount of time spent in intervention.

Table 1. Mean Values for the Independent Variables of SES, Age of ID, Age First Implant, and Mean Number of Intervention Visits Per Month Before and After 36 Months of Age

NOTES: SES = socioeconomic status; age of ID = age of identification of hearing loss; CI = cochlear implant; HA = hearing aid. Standard deviations are in parentheses. See text for description of SES metric.

SES was computed as it has been in previous studies (e.g., Nittrouer, 2009; Nittrouer & Burton, 2005), using two 8-point scales. One scale indexes the occupational status of the primary income earner, and the other scale indexes the highest educational level achieved. Scores obtained on those two scales are multiplied together to derive an overall SES metric between 1 and 64. In this study, mean SES was close to 30 for all groups, and this generally indicates a household in which the primary income earner has a college degree and a middle-management type of position. Mean SES for children with NH in this study was 34.8 (SD = 14.2), which is similar to that for the groups of children with HL. Analyses of variance (ANOVAs) failed to reveal statistically significant differences among groups of participants for any of the independent variables.
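As a minimal sketch of the SES metric, the two 8-point ratings can simply be multiplied. The function name and the example ratings are hypothetical, and the anchors of each scale are not given in this report.

```python
def ses_score(occupation, education):
    """Multiply two 8-point ratings to get an SES value between 1 and 64."""
    for name, rating in (("occupation", occupation), ("education", education)):
        if not 1 <= rating <= 8:
            raise ValueError(f"{name} rating must be between 1 and 8, got {rating}")
    return occupation * education


# Hypothetical ratings chosen only because their product is 30.
example = ses_score(occupation=5, education=6)  # 5 * 6 = 30
```

Because the metric is a product, it can take only values that factor into two integers from 1 to 8, so scores such as 11 or 13 cannot occur for an individual household.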

Of the 26 children with bilateral implants by 42 months of age, 7 were simultaneously, bilaterally implanted, and the mean age at which they received those implants was 21.0 months (SD = 9.9 months). The other 19 children were sequentially implanted, and the mean age at which they received their second implant was 32.3 months (SD = 6.9 months).

Table 2 shows audiological results for children in each group. The information on this table is that which was received from children's audiologists for the audiological testing done closest to our 42-month data collection. BE-PTAs were computed from unaided thresholds at 0.5, 1.0, and 2.0 kHz. Aided PTAs were computed from thresholds at these same frequencies, with prostheses on. Measures of speech awareness (speech awareness thresholds, or SATs) and speech recognition thresholds (SRTs) reported here were obtained from one ear, with prosthesis on. Speech measures for both ears functioning together were available for only four children in the bilateral group (CI + CI) and from nine children in the bimodal group (CI + HA). For the four children in the bilateral group, mean SAT was 15 dB HL and mean SRT was 30 dB HL. For the nine children in the bimodal group, mean SAT was 21 dB HL and mean SRT was 20 dB HL.

Table 2. Audiological Results for Children in Each Group

NOTES: CI = cochlear implant; HA = hearing aid; BE-PTA = better-ear pure tone average; SAT = speech awareness threshold; SRT = speech recognition threshold. The table presents means of BE-PTA; thresholds at 250 Hz in the unimplanted ear for children with one CI or CI + HA, the ear getting the second implant for children who received bilateral implants sequentially, or across both ears for children who received simultaneous bilateral implants; aided PTA; SATs for each ear separately; and SRTs for each ear separately. All PTAs are for the frequencies of 0.5, 1.0, and 2.0 kHz. All values are from most recent audiograms available at 42 months of age, except that BE-PTA and 250-Hz thresholds for implanted ears are from preimplant testing. All values are in dB HL. Standard deviations are in parentheses.

Because these children were implanted at various locations across the country, different instruments were used to evaluate word recognition, when it was evaluated. In fact, only 15 of these 58 children had word recognition scores reported in any of the audiological records collected over the course of this study: 5 children with just one CI, 6 children with bilateral implants, and 4 with bimodal CI + HA. Furthermore, when they were used, these materials were presented at different levels, and by different methods (live voice or recorded materials). These factors make it very difficult to compare results across children. Nonetheless, scores ranged from 42 to 96 for the children with one CI, from 50 to 100 for the children with bilateral CIs, and from 58 to 100 for the children with bimodal CI + HA.

Dependent Measures

Results of four language-related dependent measures are reported here. These four measures were selected from the broader set of dependent measures used in the larger study with several considerations in mind: (a) These measures are representative of overall language performance by these children. The same conclusions as those reached here would have been reached with any other subset of test measures. (b) We wished to examine language skills spanning a continuum from ones that may be viewed as basic in nature to ones that require greater sensitivity to the structure of one's native language. The language skills that (for the purposes of this study) were considered basic in nature were ones that speakers/listeners must have to function, but that do not require the level of language proficiency typically possessed by native speakers. These language skills are commonly assessed in clinical settings. We also wished to report on measures that reflect the acquisition of generative language, and those sorts of measures are usually beyond the realm of clinical assessments. (c) All measures reported here had linear developmental trajectories. That was not the case for all measures obtained in the larger study. For example, the number of times children imitated a parent was a measure that was sensitive to linguistic maturity, but the developmental course was curvilinear. Here, we selected only measures that increased as children got older.

Basic language measures. The first two measures described below were basic in nature:

A measure of how well children comprehend the language that they hear: For this measure, the Auditory Comprehension subscale of the Preschool Language Scales–4 (PLS-4; Zimmerman, Steiner, & Pond, 2002) was used. Test items in this task are designed to examine how well children understand specific communicative and linguistic elements, such as prepositions, word order, and inflectional morphemes. Responses in this task are generally elicited from the child by the experimenter. Raw scores of the numbers of items responded to correctly are reported.

A measure of expressive vocabulary: We used the Expressive One-Word Picture Vocabulary Test (EOWPVT; Brownell, 2000) for this purpose. Vocabulary measures are perhaps the most commonly used language measures in clinical assessment. They are easily obtained, and generally involve having parents complete questionnaires concerning the items in their children's lexicons. In contrast, the EOWPVT is an elicitation task. The experimenter shows the child pictures, and through nonverbal means attempts to get the child to provide lexical labels. Raw numbers of correctly labeled items are reported here.

Measures of generative language abilities. For the measures of generative language abilities, 20-minute videotaped language samples were obtained of each child interacting with a parent, usually the mother. Starting at the 5-minute mark, 50 consecutively occurring utterances that contained one or more real words were transcribed by two independent observers. Differences in transcriptions across the two individuals were resolved by joint viewing of the videotape after the independent transcriptions were completed, and discussing what was seen. These transcriptions were submitted to analysis by Systematic Analysis of Language Transcripts, Version 9 (SALT-9; Miller & Chapman, 2006). Here, we report just two of the measures obtained from those analyses:

Mean length of utterance (MLU): This is the mean number of morphemes per utterance, across the 50 utterances. It provides a measure of early syntactic ability. MLU is reported because it is an early indicator of how well children are learning to combine words.

Number of pronouns: This is the number of pronouns used in the 50-utterance sample. It provides a measure of early grammatical competency. To use a pronoun, the speaker must recognize the referent as a noun, and know its case and gender.
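The two generative measures can be sketched as follows, assuming each transcribed utterance has already been segmented into morphemes. This is an illustration only and does not reproduce the SALT analysis: the function names and the sample utterances are hypothetical, and the pronoun set is an illustrative subset rather than the inventory used in the study.

```python
# Illustrative subset of English pronouns (an assumption, not the study's inventory).
PRONOUNS = {"i", "me", "you", "he", "him", "she", "her", "it", "we", "us", "they", "them"}


def mean_length_of_utterance(utterances):
    """Mean number of morphemes per utterance."""
    if not utterances:
        raise ValueError("need at least one utterance")
    return sum(len(u) for u in utterances) / len(utterances)


def count_pronouns(utterances):
    """Total number of morpheme tokens that appear in PRONOUNS."""
    return sum(1 for u in utterances for m in u if m.lower() in PRONOUNS)


# Hypothetical three-utterance sample; the study used 50 utterances per child.
sample = [
    ["I", "want", "cookie", "-s"],  # 4 morphemes
    ["doggie", "run", "-ing"],      # 3 morphemes
    ["she", "go"],                  # 2 morphemes
]
mlu = mean_length_of_utterance(sample)  # (4 + 3 + 2) / 3
pronouns = count_pronouns(sample)       # counts "I" and "she"
```

Counting bound morphemes such as plural "-s" and progressive "-ing" separately is what makes MLU sensitive to early morphological growth as well as to the number of words combined.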

Procedures

Participants were recruited for this study through the distribution of brochures at schools, daycares, classes for teaching parents how to use signs with their infants, audiology centers, otolaryngology clinics, and early intervention programs. These brochures had postcards attached to them. If parents were interested in learning more about the study, they tore off the postcard and returned it to the central test site. A staff member from the central site contacted them, and provided further information. Thus, recruitment was largely handled centrally; audiology and intervention centers did not necessarily know if a family receiving services at their center was participating in this study, other than for the fact that we received reports from the children's audiologists.

The individuals who collected data at those test sites did so independently of their professional positions. No data were collected as part of a diagnostic or intervention protocol. All individuals involved in data collection attended two training sessions over the course of the study, and were required to demonstrate with a practice participant that they could collect data using standardized procedures before they collected data from actual test subjects.

The experimenters at the various test sites completed the auditory comprehension and EOWPVT tasks, videotaped the language sample, and mailed those materials back to the central site for scoring. All scoring at the central site was done by individuals who were blind with respect to the characteristics of the participants. Data were entered into and stored in a Microsoft Access database on a SQL server. SPSS was the primary tool used for the statistical analyses reported here.

Results

Correlations of Language Outcomes With Independent Variables

Before examining between-group differences on the dependent measures, we wanted to see how much variance in those four measures could be explained by the independent variables. To do that, Pearson product–moment correlation coefficients were computed across all 58 children with HL between each of five independent variables and each of the four dependent measures. The five independent variables used in these analyses were ones generally considered to affect language outcomes: SES, Age of ID, Age of First Implant, Intervention Visits per Month (before 36 months of age, in this case), and BE-PTA. None of these correlation coefficients was significant (i.e., p > .10), except for those related to age at the time of the first implant.

In this study, the age of the child at the time of the first implant was perfectly (inversely) correlated with the length of time that the child had an implant because all children were tested at the same age. Here, we actually report correlation coefficients between the duration of time since the first implant and scores on the dependent measures. These values are shown in Table 3. Three correlation coefficients were significant, but because no significant difference existed among participant groups in terms of when they received their first implant, this factor would not be expected to explain any significant group differences in dependent measures that might be found.
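The relation between the two ways of expressing implant timing can be checked with a small sketch. With testing fixed at 42 months, duration of implant use equals 42 minus age at first implant, so a Pearson coefficient computed with duration has the same magnitude and the opposite sign as one computed with age. The values below are hypothetical, and the sketch ignores the ±1 month testing window.

```python
from math import sqrt

TEST_AGE_MONTHS = 42  # nominal test age (from the text)


def pearson_r(x, y):
    """Pearson product-moment correlation coefficient for two equal-length lists."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy / sqrt(sxx * syy)


# Hypothetical ages at first implant (months) and raw scores, not study data.
age_at_implant = [12, 15, 18, 24, 30]
scores = [40, 38, 35, 30, 28]
duration = [TEST_AGE_MONTHS - age for age in age_at_implant]

r_age = pearson_r(age_at_implant, scores)
r_duration = pearson_r(duration, scores)               # equals -r_age
r_age_duration = pearson_r(age_at_implant, duration)   # equals -1
```

In this made-up sample, scores fall as age at implant rises, so the coefficient is negative for age and positive for duration.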

Table 3. Pearson Product-Moment Correlation Coefficients Between Each Dependent Measure and Duration of Time Spent With the First Cochlear Implant, and Associated p Values

NOTES: Aud. Comp. = raw scores on the Auditory Comprehension subscale of the Preschool Language Scales–4; EOWPVT = Expressive One-Word Picture Vocabulary Test; MLU = mean length of utterance; Pronouns = numbers of pronouns produced in a 50-utterance sample.

We also computed correlation coefficients between length of time with a second implant and each of the dependent measures for children who had bilateral implants at 42 months of age. None of these coefficients was statistically significant.
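As an illustration of the correlational analyses described above, the following minimal Python sketch computes a Pearson product–moment coefficient and shows why, when every child is tested at 42 months, correlating scores with duration of implant use is equivalent (apart from sign) to correlating them with age at first implant. The function names and all data values are hypothetical and are not taken from the study; the analyses reported here were done primarily with SPSS.

```python
import math


def pearson_r(x, y):
    """Pearson product-moment correlation for two equal-length sequences."""
    n = len(x)
    if n != len(y) or n < 2:
        raise ValueError("x and y must have the same length (at least 2)")
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    # Sum of cross-products and sums of squares about the means
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def t_from_r(r, n):
    """t statistic (df = n - 2) for testing r against zero; requires |r| < 1."""
    return r * math.sqrt((n - 2) / (1.0 - r * r))


TEST_AGE_MONTHS = 42  # all children were tested at 42 months (from the text)

# Hypothetical values for illustration only; NOT data from the study.
age_at_first_implant = [12, 14, 18, 20, 24, 30]  # months
mlu_scores = [3.1, 2.9, 2.6, 2.7, 2.0, 1.8]

# Duration of implant use is fully determined by age at implantation.
duration_with_implant = [TEST_AGE_MONTHS - a for a in age_at_first_implant]

r_age = pearson_r(age_at_first_implant, mlu_scores)
r_duration = pearson_r(duration_with_implant, mlu_scores)
r_age_duration = pearson_r(age_at_first_implant, duration_with_implant)

print(f"r(age, MLU)      = {r_age:.3f}")
print(f"r(duration, MLU) = {r_duration:.3f}")   # same size, opposite sign
print(f"r(age, duration) = {r_age_duration:.3f}")
print(f"t for r(duration, MLU), df = {len(mlu_scores) - 2}: "
      f"{t_from_r(r_duration, len(mlu_scores)):.3f}")
```

A p value for each coefficient would be obtained by referring the t statistic to a t distribution with n − 2 degrees of freedom, which statistical packages do automatically.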

Correlations Across Language Measures

Pearson product–moment correlation coefficients were computed between scores on each dependent language measure and scores on each of the other three measures for these 58 children with at least one implant. These coefficients were highest when the two basic assessment tools (auditory comprehension vs. EOWPVT) were correlated, r = .81, and when the two measures of generative language (MLU vs. pronouns) were correlated, r = .80. All correlations between an assessment tool and a measure of generative language were lower. Specifically, they ranged from .37 for EOWPVT versus pronouns to .65 for auditory comprehension versus MLU. Consequently, we can conclude that the two measures within each type of language ability (basic or generative) were well correlated with each other, but that measures of basic language abilities were not highly correlated with measures of generative language.

Main Effect of Signs

To examine potential effects of using signs on outcomes for these children at 42 months of age, t tests were performed on each of the four language measures, with children divided into groups depending on whether or not signs were used with them. None of these results was statistically significant (again, p > .10 for all).

Main Effects of Stimulation

For the analyses of these data, participants were grouped in several different ways, depending on the kind of stimulation they had and their history of change in stimulation.

Stimulation configuration at the time of testing. Table 4 shows mean scores for each group on the dependent measures, based on what stimulation configuration the child had at 42 months of age. Mean scores for children with NH are presented at the top of the table for comparison purposes only; these scores were not included in the statistical analyses. Table 5 shows the results of one-way ANOVAs performed on scores obtained from children with HL only, for each dependent measure separately. No statistically significant effects of stimulation were found for any of the dependent measures. Although statistics comparing outcomes for children with HL and those with NH were not done as part of this report, it is apparent from Table 4 that mean scores for all groups of children with HL were substantially poorer than those for children with NH.

Mean Scores (SDs) for Dependent Measures Grouped by Participants' Stimulation Configuration at 42 Months

NOTES: SD = standard deviation; Aud. Comp. = raw scores on the Auditory Comprehension subscale of the Preschool Language Scales–4; EOWPVT = Expressive One-Word Picture Vocabulary Test; MLU = mean length of utterance; Pronouns = numbers of pronouns produced in a 50-utterance sample; CI = cochlear implant; HA = hearing aid. Means (SDs) for children with normal hearing are given for comparison.

Results of ANOVAs Done on Each Dependent Measure for the Main Effect of Stimulation Configuration at 42 Months (Degrees of Freedom = 2, 55)

NOTES: ANOVA = analysis of variance; Aud. Comp. = raw scores on the Auditory Comprehension subscale of the Preschool Language Scales–4; EOWPVT = Expressive One-Word Picture Vocabulary Test; MLU = mean length of utterance; Pronouns = numbers of pronouns produced in a 50-utterance sample.
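For readers who wish to see how the one-way ANOVAs and their degrees of freedom fit together, the following minimal Python sketch computes an F ratio from between-group and within-group sums of squares. It is an illustration under stated assumptions, not the SPSS procedure actually used; the function names and the example scores are hypothetical. With three stimulation groups and 58 children, the degrees of freedom are (3 − 1, 58 − 3) = (2, 55), matching those reported for these analyses.

```python
def anova_df(n_groups, n_total):
    """Degrees of freedom (between, within) for a one-way ANOVA."""
    return n_groups - 1, n_total - n_groups


def one_way_anova(groups):
    """Return (F, df_between, df_within) for a list of groups of scores."""
    n_total = sum(len(g) for g in groups)
    if len(groups) < 2 or any(len(g) == 0 for g in groups):
        raise ValueError("need at least two non-empty groups")
    df_between, df_within = anova_df(len(groups), n_total)
    if df_within < 1:
        raise ValueError("need more observations than groups")

    grand_mean = sum(sum(g) for g in groups) / n_total
    group_means = [sum(g) / len(g) for g in groups]

    # Variability of group means around the grand mean, weighted by group size
    ss_between = sum(len(g) * (m - grand_mean) ** 2
                     for g, m in zip(groups, group_means))
    # Variability of individual scores around their own group mean
    ss_within = sum((x - m) ** 2
                    for g, m in zip(groups, group_means) for x in g)

    f_ratio = (ss_between / df_between) / (ss_within / df_within)
    return f_ratio, df_between, df_within


# Hypothetical scores for three groups; NOT data from the study.
one_ci = [1, 2, 3]
bilateral = [2, 3, 4]
bimodal = [3, 4, 5]

f_ratio, df_b, df_w = one_way_anova([one_ci, bilateral, bimodal])
print(f"F({df_b}, {df_w}) = {f_ratio:.2f}")

# Degrees of freedom for three groups and all 58 children with hearing loss
print(anova_df(3, 58))
```

The same relation can be read in reverse: a within-groups value of 34 for a three-group analysis implies 37 children, and a value of 23 implies 26 children.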

Mean BE-PTAs did not vary significantly among these groups, and BE-PTA was not found to account for a significant amount of variance on any dependent language measure. Nonetheless, we were concerned that there might be interactions between BE-PTA and the type of stimulation that clinicians and parents chose for individual children. Within any particular group, children with the best BE-PTAs might be expected to perform the best. Also, for children with the poorest BE-PTAs (120 dB HL), hearing aids might be expected to be of no value at all, so these children would be expected to perform better with bilateral implants. To examine those assumptions, individual scores for each of the four dependent measures were plotted as a function of BE-PTA and are shown in Figure 1. No pattern of interaction between stimulation configuration and BE-PTA is apparent. In fact, children with the best BE-PTAs did not perform particularly well compared to children with poorer BE-PTAs. At the poorest BE-PTA (120 dB HL), there is great overlap in scores among children with different stimulation configurations. Some children with the most profound hearing losses performed quite well with bimodal stimulation.

Scores on each of the four dependent language measures for all children with hearing loss, plotted according to what kind of stimulation they had at 42 months of age, as a function of BE-PTAs.

Children with 12 months of consistent stimulation. Children require an adjustment period with whatever kind of auditory stimulation they receive before they can obtain maximum benefits from that stimulation (e.g., Holt et al., 2005; Nicholas & Geers, 2006). Therefore, data were analyzed for only those children who had at least 12 months of experience with the stimulation configuration that they had at 42 months; that is, their stimulation had been consistent since 30 months of age or earlier. Means and SDs for these groups are shown in Table 6, with means from children with NH at the top. Comparing scores in this table with those in Table 4, we see that children who had bilateral implants for at least 12 months prior to testing did not perform any better than the larger group of children who had bilateral implants at 42 months. In fact, there appears to be a slight decrement in the numbers of pronouns produced by the children with bilateral implants for at least 12 months compared with the larger group of children who had bilateral implants at 42 months of age. Results of one-way ANOVAs performed on scores for children with consistent amplification are shown in Table 7 and reveal no significant differences across the three groups. Again, it is apparent that these children with HL performed substantially more poorly than children with NH on these measures of language acquisition.

Mean Scores (SDs) for Participants With Consistent Amplification Since 30 Months, Grouped by Participants' Stimulation Configuration

NOTES: SD = standard deviation; Aud. Comp. = raw scores on the Auditory Comprehension subscale of the Preschool Language Scales–4; EOWPVT = Expressive One-Word Picture Vocabulary Test; MLU = mean length of utterance; Pronouns = numbers of pronouns produced in a 50-utterance sample; CI = cochlear implant; HA = hearing aid. Means (SDs) for children with normal hearing are given for comparison.

Results of ANOVAs Done on Each Dependent Measure for the Main Effect of Consistent Stimulation Since 30 Months (Degrees of Freedom = 2, 34)

NOTES: ANOVA = analysis of variance; Aud. Comp. = raw scores on the Auditory Comprehension subscale of the Preschool Language Scales–4; EOWPVT = Expressive One-Word Picture Vocabulary Test; MLU = mean length of utterance; Pronouns = numbers of pronouns produced in a 50-utterance sample.

Children with bilateral implants, based on stimulation before second implant. Next, we wanted to see whether the language abilities of children with bilateral implants may have been influenced by what they were using prior to receiving the second implant. To do that, we went back to the original group of 26 children who had bilateral implants at 42 months of age and grouped them according to what they were using, in addition to one implant, before they received their second implant. Scores for those three groups of children are presented in Table 8. Here, we see that children who had experience with bimodal stimulation for some amount of time fared the best. The children who received bilateral implants simultaneously performed most poorly. Table 9 shows results of the ANOVAs done on scores for these groups and reveals that there were significant effects of group for the measures of MLU and Pronouns.

Mean Scores (SDs) for the Participants With Bilateral Implants at 42 Months, Grouped According to What They Had on the Contralateral Ear Before the Second Implant

NOTES: SD = standard deviation; Aud. Comp. = raw scores on the Auditory Comprehension subscale of the Preschool Language Scales–4; EOWPVT = Expressive One-Word Picture Vocabulary Test; MLU = mean length of utterance; Pronouns = numbers of pronouns produced in a 50-utterance sample; HA = hearing aid. Simultaneous refers to children who received both implants at the same time. Means (SDs) for children with normal hearing are given for comparison.

Results of ANOVAs Done on Each Dependent Measure for the Main Effect of What Was on the Contralateral Ear Before the Second Implant (Degrees of Freedom = 2, 23)

NOTES: ANOVA = analysis of variance; Aud. Comp. = raw scores on the Auditory Comprehension subscale of the Preschool Language Scales–4; EOWPVT = Expressive One-Word Picture Vocabulary Test; MLU = mean length of utterance; Pronouns = numbers of pronouns produced in a 50-utterance sample.

Children with some bimodal experience versus those with none at all. The finding that children with bilateral implants who had some experience with bimodal stimulation fared the best of all children with bilateral implants raised a question: Could the failure to find significant differences among the three main groups in the first two analyses have arisen because children who had bimodal stimulation prior to receiving a second implant raised mean scores for the larger bilateral group? In other words, is there an advantage to language learning accrued by spending even some time with bimodal stimulation? To answer this question, we divided children according to whether or not they had ever had bimodal stimulation. By coincidence, equal numbers of children fell into the categories of having had some experience and having had no experience with bimodal stimulation. Mean scores on the four dependent measures are shown in Table 10 and reveal that children who had bimodal stimulation at any point in their lives fared better than children who never had bimodal stimulation. Subsequent t tests performed on these scores revealed a significant bimodal advantage for MLU and Pronouns, the two measures that may be considered to assess generative language. These results are shown in Table 11.

Mean Scores (SDs) for Participants for Dependent Measures Grouped by Whether the Participant's Stimulation Configuration Was Ever Bimodal or Not

NOTES: SD = standard deviation; Aud. Comp. = raw scores on the Auditory Comprehension subscale of the Preschool Language Scales–4; EOWPVT = Expressive One-Word Picture Vocabulary Test; MLU = mean length of utterance; Pronouns = numbers of pronouns produced in a 50-utterance sample. Means (SDs) for children with normal hearing are given for comparison.

Results of t Tests Done on Each Dependent Measure for the Main Effect of Whether Participant's Stimulation Configuration Was Ever Bimodal or Not (Degrees of Freedom = 56)

NOTES: Aud. Comp. = raw scores on the Auditory Comprehension subscale of the Preschool Language Scales–4; EOWPVT = Expressive One-Word Picture Vocabulary Test; MLU = mean length of utterance; Pronouns = numbers of pronouns produced in a 50-utterance sample.
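The t tests summarized above compare two independent groups. The following minimal Python sketch shows one standard form of that comparison, the pooled-variance (Student's) t test, whose degrees of freedom are n1 + n2 − 2. That the pooled form was used is an assumption, although the reported 56 degrees of freedom are consistent with it for 58 children split into two equal groups. The function name and the example scores are hypothetical.

```python
import math


def pooled_t_test(sample_a, sample_b):
    """Return (t, df) for an independent-samples t test with pooled variance."""
    n_a, n_b = len(sample_a), len(sample_b)
    if n_a < 2 or n_b < 2:
        raise ValueError("each group needs at least two scores")
    mean_a = sum(sample_a) / n_a
    mean_b = sum(sample_b) / n_b
    # Sums of squared deviations within each group
    ss_a = sum((x - mean_a) ** 2 for x in sample_a)
    ss_b = sum((x - mean_b) ** 2 for x in sample_b)
    df = n_a + n_b - 2
    pooled_variance = (ss_a + ss_b) / df
    standard_error = math.sqrt(pooled_variance * (1.0 / n_a + 1.0 / n_b))
    return (mean_a - mean_b) / standard_error, df


# Hypothetical scores; NOT data from the study.
some_bimodal = [2, 4, 6]
no_bimodal = [1, 2, 3]
t_stat, df = pooled_t_test(some_bimodal, no_bimodal)
print(f"t({df}) = {t_stat:.3f}")

# With 58 children divided equally, each group has 29 children.
n_per_group = 58 // 2
df_reported = n_per_group + n_per_group - 2
print(df_reported)
```

A positive t here indicates a higher mean for the first group; the associated p value would come from a t distribution with the stated degrees of freedom.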

Because dividing children in this way produced two statistically significant results, two-way ANOVAs were performed on these measures using bimodal stimulation and sign input as factors. This was done to determine if experience with bimodal stimulation interacted with sign experience. The main effect of sign input was not significant for either measure (MLU or Pronouns), nor was the Bimodal Experience × Sign Input interaction.

Finally, we were reminded that the duration of time a child spent with a first implant was positively correlated with scores on our dependent language measures. That raised the question of whether the two groups differed in when children received their first implant, which might actually explain the differences on dependent measures between the groups. Mean age at which children received their first implant was 17.7 months (SD = 6.6) for children who had some bimodal experience and 17.7 months (SD = 8.0) for children who had no bimodal experience. There was no significant difference between these means, and so age at first implant could not account for the differences on language measures. Nonetheless, we computed correlation coefficients between the length of time spent with the first implant and scores on each dependent measure separately for the two groups. None of the correlation coefficients was significant for the group of children who had bimodal experience, but all were significant for the children who had no bimodal experience. Results of those analyses are shown in Table 12. Thus, how long a child had an implant did not affect language abilities if the child continued to wear a hearing aid on the unimplanted ear. However, length of time with an implant did influence language outcomes for those children who stopped using hearing aids when they received their first implants.

Pearson Product–Moment Correlation Coefficients Between Each Dependent Measure and the Duration of Time Spent With the First Implant, for Children With No Bimodal Experience, and Associated p Values

NOTES: Aud. Comp. = raw scores on the Auditory Comprehension subscale of the Preschool Language Scales–4; EOWPVT = Expressive One-Word Picture Vocabulary Test; MLU = mean length of utterance; Pronouns = numbers of pronouns produced in a 50-utterance sample.

Discussion

This study tested the hypothesis that bimodal experience early in the life of a deaf child could facilitate the acquisition of generative language. To that end, data from four language measures were examined for 42-month-old children who had at least one cochlear implant and compared based on their stimulation configuration: one implant, bilateral implants, or bimodal stimulation with an implant and a hearing aid. Before discussing how children in these groups performed relative to each other, it is interesting to compare their overall performance to that of children with NH. Table 10 shows that mean scores on all measures of language ability for children with HL, both those who had some experience with bimodal stimulation and those who did not, were one to two SDs below mean scores for children with NH. Thus, even these children whose hearing losses were identified early in life and who received what may be considered state-of-the-art treatments were lagging far behind children with NH. This finding emphasizes the need for further research to help identify effective treatment options for children with HL.

When outcomes were evaluated for children with HL sorted according to their stimulation configuration at 42 months of age (one CI, bilateral implants, bimodal stimulation), no significant differences were observed, even when length of time with their configuration was presumably long enough for benefits to have been realized. Generally speaking, experimental findings that simply fail to show significant effects are not newsworthy. In this instance, however, this lack of statistical significance has clinical implications. Bilateral implantation imposes much greater costs than unilateral implantation, financially as well as in terms of lost opportunities for future treatments. Consequently, the treatment should provide readily demonstrated benefits, and those benefits should be consistently observed across both children and skills examined. Although other studies have demonstrated benefits of bilateral implantation on sound localization and masking release (e.g., Litovsky et al., 2004; Tyler et al., 2002), no equivalent benefit was observed here for the acquisition of generative language.

Looking more broadly, it was found that children who had any amount of experience with bimodal stimulation had better generative language abilities than children with no bimodal experience at all. This finding may seem to contradict the expectation that individuals with the degree of hearing loss that these children had (all poorer than 70 dB BE-PTA) would not demonstrate good speech recognition with hearing aids alone (e.g., Wolfe et al., 2007). The suggestion being made is that the low-frequency signal these children heard through their hearing aids facilitated their acquisition of language, even if it was not sufficient on its own to allow them to recognize individual words or phonemes, as it likely was not in most cases.

Of course, there is no way of knowing exactly what acoustic properties these children with bimodal stimulation were recovering from the signals they were hearing, which might be considered a limitation of this report. However, it is difficult to say what measure could have been collected in order to identify the signal properties these children were getting through their prostheses. One clinical tool that is sometimes used to assess speech recognition is the percentage of single words correctly repeated. Arguably, it would have been useful to have this kind of measure for these children. But because clinical results were provided by children's audiologists and a measure of this sort was only occasionally obtained, this information was not available for most children. Beyond providing a general metric of recognition, however, it is not clear how such a measure might have helped to explain the group-related differences observed on MLU and Pronouns. Word-recognition scores are heavily dependent on the perception of consonant-related cues, and the early acquisition of syntactic structures is likely dependent on more global properties that span several segments, syllables, or even words.

On the other hand, we can make reasoned suggestions about the signal properties children with bimodal stimulation must have been recovering based on what we know about the acoustic properties that children with normal hearing use to bootstrap into syntactic competency. In all likelihood these children were able to get at least fundamental frequency from their hearing aids, which would allow them to use prosody to begin parsing the signal into linguistically meaningful units. Many of these children were also likely able to hear the first formant through their hearing aids, if not the second formant some of the time, as well. It has been suggested elsewhere that young children can recognize recurring patterns of change in these low-frequency formants, and use those patterns to begin chiseling individual lexical items from the ongoing speech signal (Nittrouer et al., 2009). Thus, these children had access to properties of the acoustic speech signal that typically developing children with normal hearing use during the earliest stages of language acquisition, and that likely helped them begin the language learning process.

The explanation above suggests that low-frequency hearing on its own provided benefits to these young children with HL, a suggestion that must be true to some extent because no significant correlations were found between the length of time that children with bimodal stimulation had one implant and language outcomes. This lack of a relation suggests that these children started the language learning process using whatever signal properties they were getting through their hearing aids, a suggestion that coincides with what we know about the roles of properties such as prosody and low-frequency formants in the earliest stages of language learning. At some point, however, it must be the case that the integrated electric–acoustic signal becomes critical to this learning. Other investigators studying combined electric–acoustic hearing in adults have argued that the benefits derived from combining the outputs of an implant and a hearing aid do not result from the linear addition of low- and high-frequency stimulation (Chang, Bai, & Zeng, 2006). Instead, these investigators suggest that the low-frequency signal from a hearing aid helps the listener segregate information-bearing high-frequency signal components into separate streams. Subsequently, the auditory object of interest (in this case, the linguistically relevant speech signal) comprising one stream can be recovered. We have no independent data to support the notion that the low-frequency signal these children heard through their hearing aids helped them recover the auditory object from the signal they received through their implant. All we do know is that the children with bimodal stimulation showed evidence of better language skills than the children who never had bimodal stimulation. Furthermore, we presume that some portion of that benefit must have been obtained from the combined signal because all children in this study used hearing aids prior to getting their first implants. This means that the differences observed in language abilities between children who had bimodal experience and those who did not must largely be attributed to the bimodal experience itself, rather than to having had access to whatever signal properties were available through their hearing aids before getting first implants.

It is important to the argument made here that significant correlation coefficients between length of time with a first implant and three of the dependent measures were obtained only for the children with no bimodal experience. Apparently, the language learning process continued uninterrupted for the children who retained a hearing aid on the unimplanted ear after receiving an implant. For the children who discontinued using hearing aids once they received implants, it appears that language learning began anew when they received that first implant and started hearing a different kind of signal altogether. This difference between the two groups suggests that it may be best to allow children to continue wearing a hearing aid after they receive a first implant.

In addition to providing information about what kind of stimulation might be best for children with HL, the results presented here also inform us about what kinds of dependent measures index language acquisition most keenly. In general, the measures of auditory comprehension and expressive vocabulary did not reveal differences among the groups of listeners, but the two measures of generative language did. This outcome makes sense because it is really the way that children are able to incorporate linguistic structure into their own productions that indicates how sensitive they are to that structure in their native language. A child might be able to understand others, the construct measured by the auditory comprehension task, without having keen sensitivity for linguistic structure. In fact, often just the context provided by the communication setting can aid performance on this task. Similarly, the size of a child's vocabulary tells us nothing about whether or not that child is able to string words together and inflect them properly. Teaching a child isolated vocabulary items is a fairly simple method of intervention, and so is commonly used. Helping children discover how to incorporate that vocabulary into syntactically correct sentences is more difficult, but much more important. So, although the process of obtaining and transcribing language samples is arduous, it appears to provide the most sensitive measures of language development.

In sum, the data reported here provided no support for the practice of bilaterally implanting children with hearing loss at very young ages. Other benefits might accrue from bilateral over unilateral implantation, such as better ability to localize sound, but that advantage apparently does not extend to language learning. Rather, some support, albeit less than conclusive in magnitude, was provided for the practice of giving children with HL a period of bimodal stimulation early in their lives.

Of course, many questions are left unanswered by this report. In particular, we do not know if all of these children with bimodal stimulation had the optimal match between implant and hearing aid, nor do we even know what constitutes the optimal match. Questions regarding how to map an implant and fit a hearing aid when they are to be used together must be examined. Another question that these data cannot answer is whether children with severe-to-profound hearing loss should continue with bimodal stimulation into childhood. The alternative would be to give them a period of time with bimodal stimulation as a step on the path to bilateral implants. We will only know the answer to that question after we follow the children tested here, and other children, for longer periods of time. Finally, choices regarding stimulation for children with HL are sure to be affected by emerging technologies, particularly those that will allow combined electric and acoustic stimulation to the same ear (e.g., Uchanski et al., 2009). In the future we may find ways to match our methods of auditory stimulation to children's hearing loss and learning needs more precisely, and make adjustments to those settings throughout childhood as hearing loss and needs change. The study reported here illustrates just one of the factors that would need to be taken into account in making such decisions: Low-frequency, dynamic spectral patterns in the acoustic speech signal may help facilitate children's discovery of linguistic structure in their native language.

Acknowledgments

The authors are grateful to Kimberly Knight for help searching the literature on bilateral and bimodal stimulation.