Abstract

The emergence of generative artificial intelligence (GenAI) has opened up new possibilities for qualitative research. However, in methodological development, GenAI is often treated as a passive tool rather than as an active actant. This article contributes to the emerging field of GenAI in qualitative research by introducing a structured actor-network theory-driven mapping-as-method protocol designed to systematically identify the sociotechnical entanglements of AI tools before their integration into research and to evaluate their role in the research afterwards. We argue that researchers need to engage critically with the contingencies, biases, and entanglements that define contemporary AI systems.

Introduction

“Tools exist only in relation to the interminglings they make possible or that make them possible,” wrote Deleuze and Guattari (1987/2013) in the 1980s (p. 105). This observation is strikingly relevant in relation to new artificial intelligence (AI) technologies and especially generative artificial intelligence (GenAI). Indeed, GenAI is not only a tool, but a broad and complex societally entangled apparatus, built on the interminglings of technologies and infrastructures, humans, economy, culture, politics, power, ideologies, natural resources, fuel, human labor, histories, classifications, and meaning-making (Crawford, 2021; Lindgren, 2024).

With its rapid development and promises of unprecedented efficiency, GenAI has created imaginaries of revolutionary progress in how we work, communicate, and create. Academia, among other sectors, has been quick to adopt GenAI to facilitate teaching, administration, and research (Burton et al., 2024; Perkins & Roe, 2024), including methodological possibilities for qualitative research, as the articles in this special issue demonstrate. What AI ends up being technologically, politically, practically, socially, and, in the context of our article, in qualitative research is not prescribed by nature but is the result of sociopolitical processes in which we participate as scholars (Lindgren, 2024).

We argue for critical reflection among scholars integrating GenAI into qualitative research designs, contending that there is a need for an approach to address the wider questions of AI’s power, politics, and technology (see also Roberts & Bassett, 2023). The legislative regulation of AI technologies is years away and risks being outpaced by the rapid development of technology. With or without such legislation, we argue that there is an urgent need for academic critical reflexivity on how GenAI is used and how it affects research. The black-boxed nature of AI tools makes it difficult, if not impossible, for a researcher to evaluate AI’s ideological underpinnings, the biases in data sets and classification, the conditions of laborers developing the systems, and the environmental footprint from the development and use of GenAI models. Since the meanings, knowledge, and prompts generated by GenAI models for qualitative research are never neutral, but “rooted in society’s existing power structures and stereotypizations” (Lindgren, 2024, p. 20), scholars need to carefully consider how they engage with these technologies, which are known to echo and sometimes strengthen harmful social dynamics.

This article contributes to ongoing discussions on GenAI in qualitative research by using actor-network theory (ANT) (Latour, 2007) to examine GenAI as an actant—a human or nonhuman entity that acts within a network—in knowledge production. We use the method development from the workshop

The article proceeds as follows: We start by introducing the futures workshop method. We then continue to the theoretical and methodological framework of ANT and how we apply it in this article. Our analysis is structured around three questions: What happens when GenAI mediates future-imagining exercises? What challenges arise when GenAI, as a complex and black-boxed actant, becomes part of research designs and methodologies? What is the researcher’s role and responsibility in relation to these two questions? The article ends with a discussion and conclusion on the proposed research framework and the implications of nominating AI as a research actant.

Imagining Alternative Futures With GenAI

The vignette of this article is an outcome of a research project, Imagining Sustainable Digital Futures (2022–2025), which was motivated by the growing need to develop methodological approaches to strengthen the capacity to imagine alternative futures. Grounded in the idea that imagination is central to disrupting the assumed inevitability of present societal conditions, the project aimed to respond to calls for fostering imaginative capacities and experiential ways of thinking about the future (Galafassi et al., 2018; Yusoff & Gabrys, 2011). This need is particularly pressing, given that special methods are needed to break away from the present (Ketonen-Oksi & Vigren, 2024; Markham, 2020).

Imagination was conceptualized as a collective skill that can be trained and should not be left to the few in power (Eskelinen et al., 2020; Galafassi et al., 2018; Salmenniemi et al., 2024). This highlights the importance of speculative imagination in combining ideas in unexpected and unconventional ways (Ketonen-Oksi & Vigren, 2024). In the project, alternative futures were imagined together with young people, based on the premise that their perceptions, hopes, and fears about the future matter because the future concerns them most directly. The aim was to create a space in which their voices could be heard, their imaginative capacities fostered, and perhaps seeds of hope planted. By displaying the images in a public exhibition, the project invited a wider audience to come and join a journey to the year 2050 and imagine what it would be like to live in the futures that the young people envisioned. The exhibition at Art House Turku, called

The workshop was inspired by the researchers’ curiosity about how GenAI could spark imagination and facilitate the narration of desirable futures (Girardin, 2015). It was developed through seven pilots conducted during the winter of 2023 to 2024, involving more than 80 participants. Building on these experiences, the main 6-hr workshop took place in May 2024, during which six young people aged 16 to 18 years participated in a series of exercises designed to help them reflect on their current feelings about the future and imagine a desirable future in 2050. The participants were recruited on a voluntary basis through advertisements in local schools and youth centers and on social media. They received no compensation for their involvement. Their motivations for participating included learning more about AI, exploring alternative futures, and contributing to an exhibition.

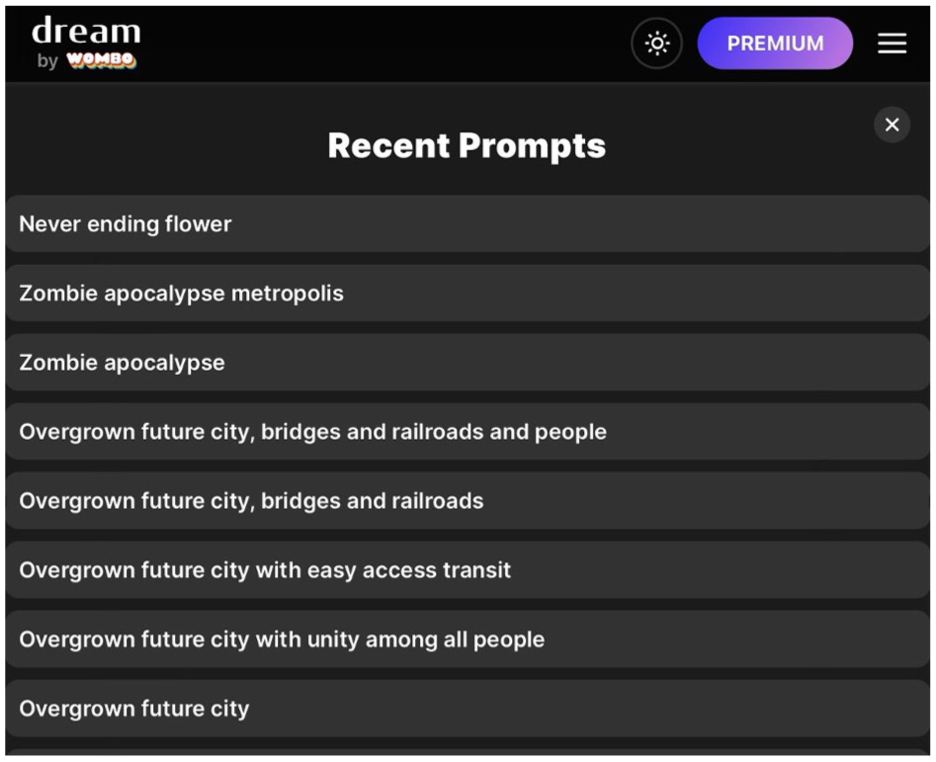

After warm-up activities, the participants wrote down their hopes and values by reflecting on the following questions: What matters to you? What should be different? What from 2024 would you want to preserve into 2050? For the image generation, we used the text-to-image AI app Wombo Dream, which was chosen for its ease of use and lack of major known controversies. The participants were guided to write a text prompt based on their reflections, and, if they wanted, they could use the app’s

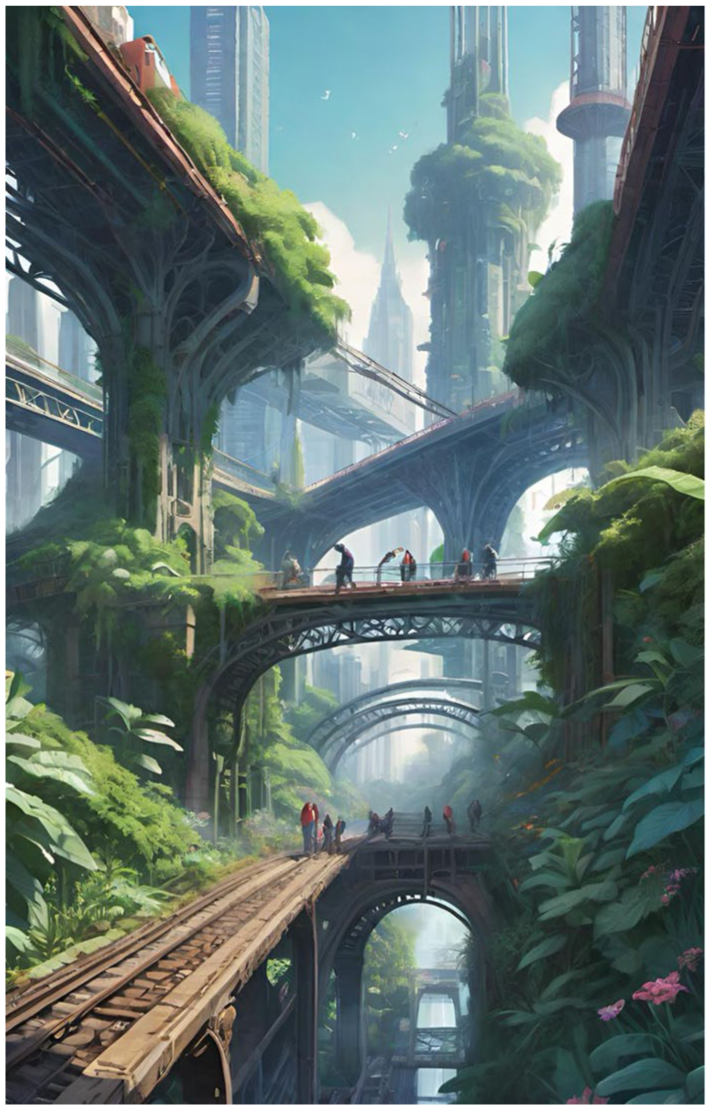

Figure 1 shows how the participant MOU envisioned the desirable future. In MOU’s own words, the image depicts a moment in which current society has been abandoned, nature has been allowed to take over, and people foster collective ways of living together. In the exhibition, the image was placed inside a black box and viewed through a small aperture. Figure 2 shows the prompt history of creating the image.

Figure 1. The Image Titled the Evergrowing Was Created by MOU Using the Text-to-Image AI App Wombo Dream.

Figure 2. Prompt History of MOU While Creating the Image the Evergrowing, Including Additional Prompts MOU Experimented With.

MOU wrote the following flash fiction story, translated from Finnish to English, to describe what living in the future, as depicted in the image, would feel like:

It’s 2050. The harms of past societies have been overcome, and nature has taken over. Overgrown structures are sustained by collaboration and hope. Cars, planes, and almost all other vehicles have been abandoned, except for a few working trains. People get around the city by walking, climbing, and cycling. Cities are only built upwards, not to take up more land. All residents live in communities and in peace alongside other communities. Everyone looks after one another and their common living spaces. Animals, plants, and people live in perfect harmony with each other, their peace undisturbed by anything or anyone.

We use this workshop as a vignette for examining the challenges and ethical concerns of integrating GenAI into qualitative research. Our methodological reflection does not focus on researcher–participant dynamics or the envisioned futures, both of which deserve separate, in-depth treatment. Instead, this article introduces a mapping-as-method protocol designed to foster critical engagement with research involving black-boxed AI applications. The next section describes the theoretical and methodological framework of ANT and how we apply it in the mapping.

GenAI as an Actant

One of the foundational principles of ANT is the idea of the distributed nature of agency, according to which social networks are composed not solely of human actors, as traditional sociological theories often suggest, but also of nonhuman actants (Latour, 2007). We consider ANT particularly fitting for a self-reflexive evaluation of the use of GenAI in qualitative research designs, as it moves beyond viewing these technologies as mere tools. It allows us to understand them as active actants that contribute to research outcomes through their algorithmic design, training data, classification principles, and biases, influencing the kind of data produced, the responses of human participants, and the overall findings (Desai et al., 2017; Latour, 1992, 2007; Li & Zhu, 2024; Mol, 2010). This follows the ANT idea that actants shape research processes and findings. By acknowledging the role of AI systems as nonhuman actants, we can steer clear of reductionist approaches that attribute agency and influence solely to human actors, and instead delve into the various forces that interact to produce social outcomes (Latour, 2007; Mol, 2010).

We treat GenAI as both an assemblage and an actant. Through punctualization, the complex network of heterogeneous elements (data, interfaces, compute, and labor) is treated as if it were a single, unified actor (Law, 1992); through depunctualization, we reopen the black box to reveal the network's constituent elements (training data, ownership, and environmental costs). This “zoom-in/zoom-out” toggle organizes our method (Latour, 2007): for example, Wombo Dream as used in the workshop versus Wombo Dream as an object of critique.

Throughout this article, we distinguish between AI as a broad techno-political assemblage and GenAI as specific applications that function as actants within this larger system. In ANT terms, actants never exist in isolation but are always constituted through their network relations (Callon, 1986). Thus, analyzing Wombo Dream as an actant necessarily involves tracing its connections to the broader AI assemblage. Its training data, computational infrastructure, and corporate ownership all form part of what makes it capable of action. While we primarily employ ANT as our analytical framework, we occasionally draw on assemblage thinking (DeLanda, 2016), as it has become integrated into contemporary ANT scholarship. In current ANT practice, assemblage often functions as shorthand for heterogeneous actor-networks, emphasizing their emergent and dynamic qualities. When we refer to the

Two ANT concepts are particularly relevant to our analysis. First,

Second,

The black box metaphor proves analytically relevant for two reasons. First, it connects ANT’s theoretical insights about network stabilization to the concrete challenges researchers face with AI tools. When Latour discusses black-boxing, he reveals how the successful elements of a system hide their construction, making them appear inevitable rather than constructed (Latour, 2009). With GenAI, this process is accelerated and multiplied: algorithmic black boxes within corporate black boxes within infrastructural black boxes.

Second, the metaphor illuminates the temporal dimension of our methodological challenge. The workshop’s future-imagining exercise served as our entry point; our primary focus is the present-tense struggle of researchers attempting to use black-boxed GenAI responsibly. The young participants’ future visions revealed how GenAI’s black-boxed biases shape imaginative possibilities. More importantly, however, this process exposed our own position as researchers unable to fully audit the tools we are embedding. This transforms the traditional ANT imperative to open black boxes: With GenAI, opening is not an achievable goal but an ongoing process that reveals the depth of entanglement between research methods and opaque technical systems.

Reimagining Methodological Frameworks: ANT and GenAI in Critical Research Design

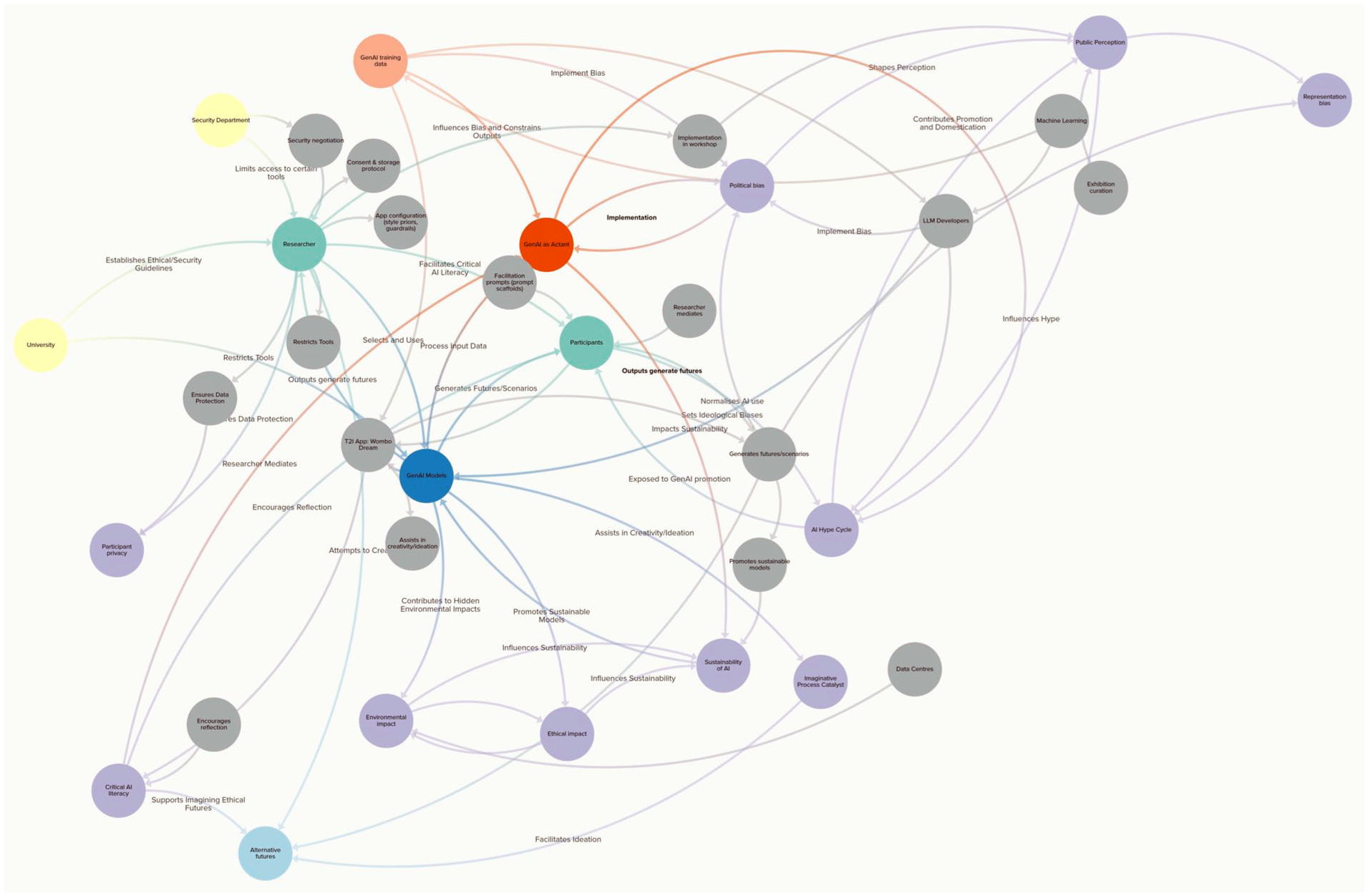

Methodologically, ANT structured our analysis in two key ways: network mapping and translation analysis. Using

The analysis is built on the three core principles of ANT. The first, symmetrical analysis, ensures that human participants and nonhuman elements are treated with equal attention. This principle guides us in examining the ways in which researchers, workshop participants, software interfaces, and GenAI systems all contribute to the outcomes. The second, network tracing, involves mapping the interactions between various actants, such as the GenAI models, participants, institutional structures, and technological systems. Finally, translation analysis focuses on how meanings and outputs are transformed as they move through this network of human and nonhuman actants. We acknowledge that the mapping is inherently limited, as there are an endless number of assemblages, “assemblages of assemblages” (DeLanda, 2016, p. 3). For the purposes of this article, we had to stop somewhere and keep the focus on what we consider most important in relation to the workshop method and the reflection on GenAI as an actant in qualitative research designs.

In addition, we understand that epistemic values reside not in individual actors but in networks themselves (Latour, 2007). Knowledge, therefore, emerges through what Latour calls circulating reference (Latour, 1999), chains of transformations in which each step translates rather than simply transmits information. However, in the context of our research, we acknowledge that GenAI also acts as a particularly powerful mediator (not merely an intermediary) that transforms participants’ textual prompts into visual outputs, actively shaping what futures become imaginable and expressible.

Our network mapping reveals why the black box metaphor remains analytically essential. Rather than seeking to fully “open” GenAI’s black box, which is an impossible task, we use the concept to trace how opacity circulates through research networks. Each node in our map represents a point where black-boxing occurs: Algorithmic decisions hidden from users, corporate strategies concealed behind public relations, and environmental costs obscured by geographical distance. The black box thus becomes not something to overcome but a condition to map, document, and work within.

Figure 3 shows the network mapping, capturing the complex interplay of human and nonhuman elements shaping the role of GenAI in research and society. Nodes are of three kinds: actors (human or nonhuman), practices (the stabilizing/performative work), and outcomes/values (futures endpoints). Connections are typed as governance/constraint, translation/material, or normative/ideational and are differentiated by direction and strength. Following ANT’s epistemological framework, we make the work explicit by representing

Figure 3. Typed Actor Network of the Images of the Future Workshop and the Infrastructure of GenAI.
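
For readers who wish to keep such a map in machine-readable form, the typing scheme described above (three kinds of nodes; three kinds of connections, each with a direction and a strength) could be recorded roughly as follows. This is only a minimal sketch under our own assumptions, not the procedure used to produce the figure: the class and function names, the 1-to-3 strength scale, and the example entries are hypothetical and are not taken from the map itself.

```python
from dataclasses import dataclass
from enum import Enum


class NodeKind(Enum):
    ACTOR = "actor"            # human or nonhuman
    PRACTICE = "practice"      # stabilizing/performative work
    OUTCOME = "outcome/value"  # futures endpoints


class TieKind(Enum):
    GOVERNANCE = "governance/constraint"
    TRANSLATION = "translation/material"
    NORMATIVE = "normative/ideational"


@dataclass(frozen=True)
class Node:
    name: str
    kind: NodeKind


@dataclass(frozen=True)
class Tie:
    source: str    # direction runs from source to target
    target: str
    kind: TieKind
    strength: int  # hypothetical ordinal scale: 1 (weak) to 3 (strong)


def ties_of_kind(ties, kind):
    """Return the ties of one type, strongest first."""
    return sorted((t for t in ties if t.kind is kind), key=lambda t: -t.strength)


# Illustrative entries only; they are NOT the nodes or ties of the published map.
nodes = [
    Node("participant", NodeKind.ACTOR),
    Node("Wombo Dream", NodeKind.ACTOR),
    Node("prompt writing", NodeKind.PRACTICE),
    Node("image of a desirable future", NodeKind.OUTCOME),
]
ties = [
    Tie("participant", "prompt writing", TieKind.TRANSLATION, 2),
    Tie("prompt writing", "Wombo Dream", TieKind.TRANSLATION, 3),
    Tie("Wombo Dream", "image of a desirable future", TieKind.NORMATIVE, 1),
]

translation_ties = ties_of_kind(ties, TieKind.TRANSLATION)
for t in translation_ties:
    print(f"{t.source} -> {t.target} [{t.kind.value}, strength {t.strength}]")
```

Recording the map as explicit lists of typed nodes and ties makes it possible to filter it by connection type, for instance to read only the translation ties when conducting the translation analysis, and to revise individual entries as the assemblage changes.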

Network Disruptions and Translations: A Vignette of GenAI as an Actant in Qualitative Method Development

GenAI as Actant Mediator in Future-Imagining Exercises

The node

In the playful exercise of creating images of a desirable future with GenAI, most participants felt it was easier to generate the image using the tool than to start with a blank sheet of paper. GenAI facilitated ideation but revealed biases when the participants’ imaginaries conflicted with Wombo Dream’s encoded assumptions. Prompts such as “sustainable community” repeatedly produced high-rise, technologically saturated landscapes: images of greened vertical urbanism that implicitly sidelined alternative, more communitarian imaginaries. Wombo Dream operated as an epistemic filter, encoding its own assumptions about what progress or sustainability should look like. These moments of friction illustrate ANT’s assertion that networks remain stable only through ongoing negotiations between actants (Goodwin & Kuehn, 2021). When participants resisted Wombo’s imposed framings, they were better able to articulate the future they wished to see. Thus, counter-arguing the AI-generated image helped foster imaginative capacities, as the participants were prompted to refine or correct the visual output to better reflect their desirable futures.

For example, Wombo Dream interpreted the coexistence of nature and urban infrastructure by placing greenery on the outskirts, whereas the participant imagined a city where plants grew in and between the buildings. At the same time, it can be asked what happens to creativity as a future-imagining capacity when GenAI becomes a co-creator (Edgell, 2024). The problematic dynamic became more intricate due to the difficulties that the participants encountered when attempting to tweak their prompts and experiment with the app’s different art styles. This experience also offers a valuable opportunity to foster critical AI literacy, as discussed later in this article.

AI systems embody biases through their training data and classification choices (Akter et al., 2021; Crawford, 2021). The mapping reveals how GenAI is deeply shaped by the biases embedded in its design, development, and deployment. From ideological and political leanings (

The embedded biases manifest in how GenAI models negotiate social, economic, and political imaginaries. This is particularly relevant in the context of speculative futures, in which AI-generated output, whether textual or visual, does not merely reflect an open field of possible scenarios but subtly frames certain futures as desirable while marginalizing others. Importantly, this bias is not intrinsic to the architecture of LLMs or image generators themselves. Instead, it emerges as an artifact of the training and reinforcement process, in which curatorial decisions made during data set selection and model alignment actively shape AI’s epistemic commitments (Rozado, 2024). This is of particular concern in the context of the futures workshop, as the images were displayed in a public exhibition where they may have been viewed as standalone pieces, without the critical framing provided in the exhibition description.

The Black Boxes of GenAI as a Techno-Political Assemblage

Analyzing GenAI as an actant requires acknowledging its embeddedness within the broader AI assemblage, which Crawford (2021) calls the extractive system of computation. The hidden politics, biases, and environmental costs we reference are network properties that become visible when we depunctualize the actant to reveal its constitutive relations.

The nodes

The university’s concern was focused solely on data security rather than the broader ethical questions or the opacity of the GenAI systems that limit meaningful researcher oversight. Auditing the systems relied on the researcher, who found it a nearly impossible task. The first major issue that remained a black box concerned the nodes

Second, the nodes

The Researcher as an Actor in the AI Assemblage

GenAI systems are products of the political and economic landscapes in which they are developed, simultaneously contributing to shaping these landscapes. Nodes such as

The main criteria for choosing the GenAI app for the workshop were usability, suitability for the exercise, data privacy, and ethical concerns implied by legal battles. The chosen app, Wombo Dream, developed by the Canadian start-up Wombo Studios Inc., reported 60 million downloads and 1.5 billion artworks created by February 2023 (PR Newswire, 2023). On its website (Wombo, n.d.a), Wombo lists its values as building “the happiest place on the Internet” and “powering next-generation media to make people laugh and smile” using the latest AI techniques. While announcing the success of a funding round (PR Newswire, 2024, para. 10), the company promises to “keep pushing the boundaries of what’s possible, what’s probable, and what’s quirky in the world of AI.”

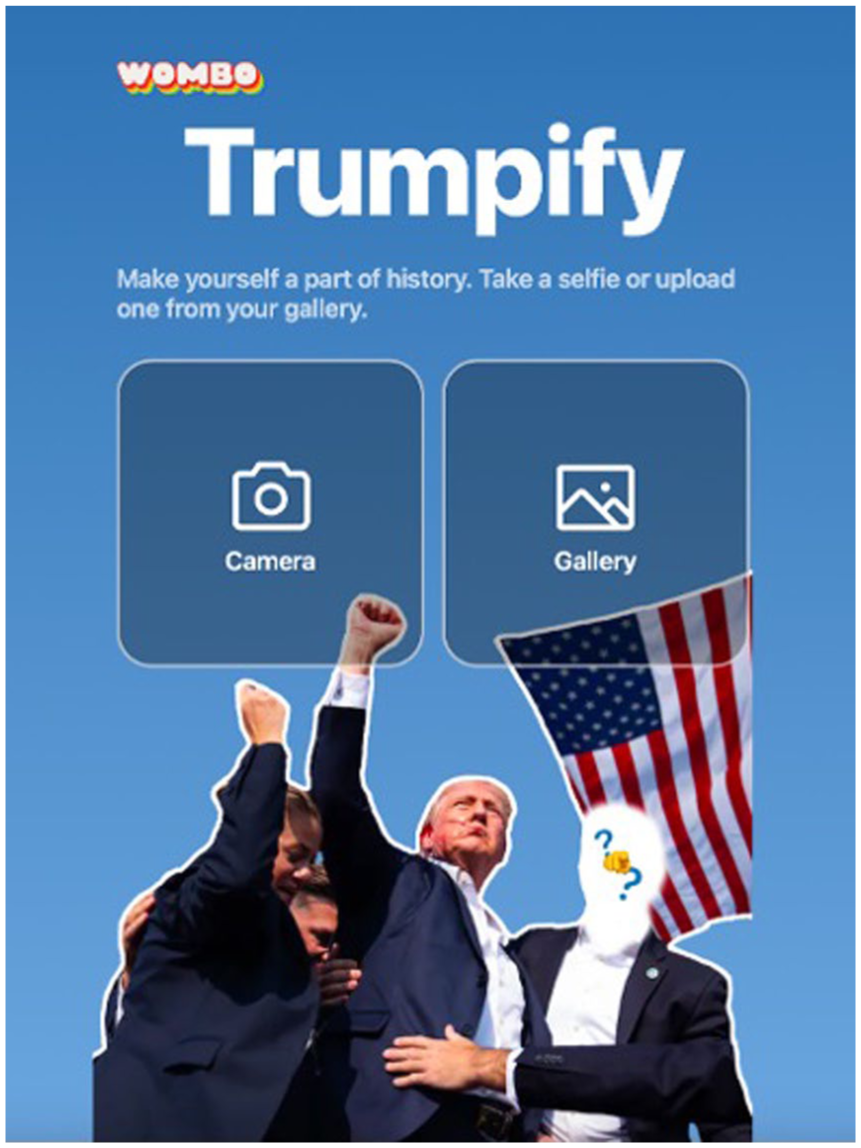

The company’s boundary-pushing style turned out to be an unpleasant surprise after the workshop. Subsequent controversies emerged around Wombo’s political content creation, particularly involving candidates in the 2024 U.S. presidential election, as well as alleged privacy violations. For example, the Trumpify photograph frame enabled users to place their face onto the body of a Trump bodyguard in a widely circulated image captured after the assassination attempt (Figure 4). In the text for the photograph frame, Wombo declared, “We don’t choose sides–we live for the meme.” Given the growing concerns about AI and deepfakes in political campaigns (Diakopoulos & Johnson, 2021), such content was far from apolitical and effectively made Wombo an active participant in politics. Furthermore, in July 2024, a class action lawsuit was filed, alleging that Wombo was violating an Illinois privacy law by capturing, storing, using, and sharing users’ biometric information without permission (McCrockey, 2024).

Figure 4. Screenshot of Wombo’s Trumpify Photograph Frame Published on Instagram on July 17, 2024, With the Caption “A Monumental Moment in American History.”

Thus, there is a need for the academic community to critically reflect on the narratives that attract us to introducing AI in our research. Concerns about the ethical challenges of AI being integrated into people’s everyday lives (Etzioni & Etzioni, 2017; Jobin et al., 2019) urge us to consider our responsibility in

Amid these concerns, our map highlights the importance of

Discussion

Our actor-network mapping demonstrates the need to attend to the deep material and human roots of AI systems, revealing the struggles researchers face in grasping this unfinished and constantly evolving assemblage. As a theoretical and methodological framework, ANT has enabled the positioning of GenAI as a collaborative actor and coproducer of knowledge in the research process and has provided a more reflexive approach to research methodologies (Popa et al., 2015). This is especially relevant in fields like AI and GenAI, in which the design and operation of systems subtly influence everything from research data to creative outputs and the coconstruction of meaning (Escobar, 2012). Once deployed, technologies begin to interact with human users and other technologies in ways that their designers may not have anticipated (Edgerton, 2007), further complicating the distinction between human intention and technological outcomes (Sayes, 2014). The mapping protocol is thus not only a general ontology of AI but also an auditable method for situating GenAI within methodological contexts and tracing how particular interactions reveal the infrastructure behind the GenAI integration in methodological innovation.

The concept of the

While ANT scholars may find it unsurprising that GenAI functions as an actant in knowledge production, our contribution lies in developing a systematic protocol for making this agency analytically tractable in the method development context. First, our network mapping methodology provides researchers with a predeployment auditing framework that reveals hidden entanglements before tool integration. Second, it demonstrates how opacity operates not only as a singular barrier but also as a recursive property circulating through institutional, corporate, and infrastructural layers. Third, it shows how translation analysis can expose epistemic commitments embedded in ostensibly neutral technical processes. Finally, it offers an adaptable template for situating GenAI within method assemblages across diverse qualitative research contexts.

Here, researchers play a critical role in ensuring that GenAI is used reflexively and with an awareness of its broader societal impact. While GenAI tools offer possibilities for research, they also introduce challenges related to ethics, transparency, and human oversight. As these tools continue to evolve, vigilance is needed to ensure that AI is used responsibly, safeguarding the integrity of academic work while leveraging the benefits of these technologies (Burton et al., 2024; Perkins & Roe, 2024). The

These concerns point toward a multidimensional understanding of researcher responsibility that emerges from our ANT-informed analysis. By

Rather than attempting to resolve the methodological challenges of working with black-boxed GenAI, we argue for an approach that actively works through them, treating GenAI’s networked complexities as a site of critical interrogation rather than as a technical problem to be solved. Recognizing GenAI as an actant in research means that its agency must be mapped, contested, and negotiated at every stage of the methodological process. This requires rethinking how research tools are selected and audited, as well as the responsibility and accountability behind these decisions. Our methodological choices reflect this accountability: Selecting a text-to-image tool foregrounded visual translation and aesthetic biases. At the same time, the workshop’s temporal constraints made prompt negotiation visible as a key site of human–AI friction, potentially obscuring dynamics that emerge over longer engagement. The exhibition framing positioned outputs as finished products, directing analytical attention toward aesthetic coherence rather than processual experimentation. These design choices enabled engagement with certain dimensions of black-boxing while bracketing others, exemplifying ANT’s insight that methods are performative practices that enact realities (Law & Singleton, 2013).

Finally, critical AI literacy should be integrated into research designs that involve GenAI applications, not only as an add-on but also as a necessary methodological intervention. This ensures that participants and researchers alike remain attuned to AI’s biases and epistemic constraints. Following Lindgren (2024), we advocate for critical AI research that questions necessity and envisions responsible technological futures. Ultimately, there is a need to reflect on the following questions: How could and should we as researchers resist the hegemonic narratives of the AI industry? How could our research interventions participate in the radical reimagining of AI’s technological development and role in society?

Conclusion

We documented struggles in responsibly integrating GenAI into qualitative research. These struggles reveal the recursive nature of AI’s black-boxing and the impossibility of full transparency. Through self-reflection and actor-network analysis of our method development, we demonstrate how the black box operates not as a singular barrier to understanding but as a recursive condition of contemporary AI systems. We argue that GenAI should not be treated merely as a shortcut to efficiency in research. Through an analysis using ANT as a framework, we demonstrate how GenAI is an actant that participates in knowledge production through algorithmic logics and embedded biases. The futures workshop, therefore, became a site for mapping how GenAI participates in knowledge production, foregrounding questions of agency, transparency, and the epistemic constraints these technologies impose. In this article, we propose that AI actor-network mapping provides a means for researchers to more fully account for the complexity of interactions in research environments. Our analysis offers a blueprint for researchers seeking to critically engage with the black box of AI systems integrated into their methodological toolkit. It demonstrates how network mapping and participatory methods can bridge theoretical critique with empirical enquiry, even when the opacity of the black box cannot be fully reduced.

We also underscore the necessity of ethical and methodological rigor in AI research, advocating for a holistic approach that critically audits GenAI tools. This includes not only their biases but also their environmental impact, governance implications, ideology and politics, and long-term epistemic consequences. This perspective encourages a reflexive approach to the use and analysis of technological systems, ensuring that their role is recognized as active and influential rather than passive, context-free, or neutral. The value of ANT in this context lies not in stabilizing networks but in making their fragilities, breakdowns, and potentials legible. This allows researchers to engage critically with the contingencies, biases, and entanglements that define contemporary AI systems and shape their integration into qualitative research.

Footnotes

Acknowledgements

The research experiment was first ideated with Katja Ollikainen. The further methodological development was done in collaboration with Elina Sutela and Taimi Mikkonen. The workshop was facilitated together with Taimi Mikkonen.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and publication of this article: This work was supported by the Research Council of Finland [grant number 367860].