Abstract

Harassment of scientists and science communicators is a growing concern, particularly on social media. While bystanders can help counter such harassment, few intervene. This research note presents the development, implementation, and evaluation of a social media intervention to foster bystander intervention, created through a transdisciplinary co-creation process with researchers, practitioners, influencers, and citizens. The resulting Instagram campaign featured (audio-)visual messages with scientific evidence, legal guidance, and example interventions. A comprehensive mixed-methods evaluation showed that the campaign reached many users, received positive feedback, and produced impactful, reusable materials. We conclude with insights for research–practice collaboration and for research on harassment in science communication.

Keywords

Introduction

Scientists and science communicators are increasingly affected by harassment (Global Witness, 2023; Nogrady, 2021), especially on social media, an increasingly important arena for science communication (Taddicken & Krämer, 2021). Such harassment harms targeted scientists’ mental health, productivity, and willingness to engage publicly (Global Witness, 2023; Nogrady, 2021; Seeger et al., 2024), erodes public trust in them (Egelhofer et al., 2024), and may create “an atmosphere where the relevance of listening to researchers and using their research evidence is watered down” (Väliverronen & Saikkonen, 2021b, p. 9).

Countering harassment appropriately, without restricting critical discourse, is therefore crucial. The public can play a central role. Given harassment’s high reach and visibility on social media, users frequently witness it as bystanders (LfM, 2023). When bystanders intervene prosocially, for example by reporting hateful comments or posting counterspeech, they foster a more deliberative discourse climate (Garland et al., 2022). However, most users do not intervene, which may normalize such behavior (LfM, 2023).

This underscores the need to encourage prosocial interventions against online harassment of scientists and science communicators. This research note reports the development, implementation, and evaluation of a social media intervention to raise problem awareness and intervention competence among online users. Responding to calls for stronger collaboration between science communication research, practice, and stakeholders (Fischer et al., 2024; Scheufele, 2022), our project adopted a transdisciplinary co-creation approach, involving scientists, science communicators, social media influencers, and citizens.

We first review evidence on harassment in science communication and on bystander intervention that informed the design of our intervention, then outline the transdisciplinary co-creation process and the development, implementation, and comprehensive evaluation of the social media campaign. We conclude by discussing implications for research–practice collaboration and for science communication research on harassment.

Online Harassment of Scientists and Science Communicators

Harassment of scientists and science communicators is not new but has intensified with the rise of social media (Väliverronen & Saikkonen, 2021b). Social media expands the reach of science communication: it makes science more accessible, enables participatory engagement (Shilubane et al., 2024; Taddicken & Krämer, 2021), and positively influences public perceptions of scientists (Zhang & Lu, 2025). However, it also makes it easier to identify and target scientists and communicators and amplifies attacks to large audiences (Celuch et al., 2022). Research documents a broad spectrum of harassment, ranging from personal insults and discrimination based on beliefs, identity, or preferences to threats of physical violence (Global Witness, 2023; Nogrady, 2021). It is essential, however, to distinguish harassment from legitimate counter-discourse. Not every critical statement is inherently hostile (Väliverronen & Saikkonen, 2021a); indeed, various aspects of the scientific system merit scrutiny (see, e.g., Nature Editorial, 2017), and such critical engagement should be protected as part of a healthy public discourse.

For those targeted, harassment can have serious consequences, including psychological effects such as stress, anxiety, and depression (Global Witness, 2023; Gosse et al., 2021). For some, these experiences reduce their willingness to participate in public discourse (Seeger et al., 2024). This “chilling effect” (Nogrady, 2021) may extend beyond those directly targeted: because many attacks occur publicly on social media, other scientists and communicators who witness them may self-censor for fear of becoming targets themselves (Seeger et al., 2024). Scientists and communicators from marginalized communities, who often face disproportionately high levels of harassment (Gosse et al., 2021; Väliverronen & Saikkonen, 2021b), are especially at risk of being silenced, which weakens diversity in science communication. Harassment can also hinder research productivity (Global Witness, 2023) and deter scientists from pursuing controversial topics, thereby threatening academic freedom (Väliverronen & Saikkonen, 2021a).

Given these individual and societal consequences, calls to address harassment of scientists and science communicators are growing (Stein & Appel, 2021). While institutionalized support at universities is still under development in many places (Seeger et al., 2024), external organizations such as Scicomm-Support 1 in Germany and SafeScience 2 in the Netherlands are emerging. However, less attention has been paid to the potential role of citizens, who frequently witness online harassment as bystanders, in countering these attacks.

Fostering Competencies to Promote Bystander Intervention

Bystanders can intervene against online harassment publicly and directly (e.g., counterspeech) or indirectly (e.g., reporting) (Obermaier et al., 2025). The Bystander Intervention Model (BIM; Latané & Darley, 1970) suggests bystanders intervene prosocially when they (a) notice a critical situation, (b) perceive it as a threat, (c) feel personally responsible to act, and (d) identify an effective response (e.g., without endangering themselves) (Leonhard et al., 2018; Obermaier et al., 2016). Enhancing these decision-making components through competency-building can foster prosocial intervention.

To define these competencies, we draw on critical digital media literacy, i.e., the ability to identify, evaluate, and create digital content (Schmitt et al., 2018). This framework outlines skills required to recognize, understand, and respond to online harassment: awareness, reflection, and empowerment. Awareness means understanding that harassment occurs online and recognizing it. Reflection involves critically evaluating digital content, like distinguishing harassment from legitimate criticism. Empowerment enables users to respond actively, feeling responsible for engaging in online discussions and taking a stand when necessary.

These dimensions underpin perceived critical digital media literacy, which correlates with bystander intervention (Obermaier et al., 2025). To intervene, bystanders must be aware that online harassment against scientists and science communicators occurs and understand its consequences. Reflection enables them to critically evaluate harassment and distinguish it from legitimate opinions. Empowerment requires bystanders to see themselves as responsible and capable of intervening effectively and appropriately, particularly for counterspeech, where users need confidence in contributing to online discussions while considering their own safety (Schmitt et al., 2018). Research suggests empowerment drives bystander intervention: empowered users are more likely to engage in counterspeech or support others’ counterspeech efforts (Naderer et al., 2023; Obermaier et al., 2025).

Designing a Social Media Intervention—a Transdisciplinary Co-Creation Process

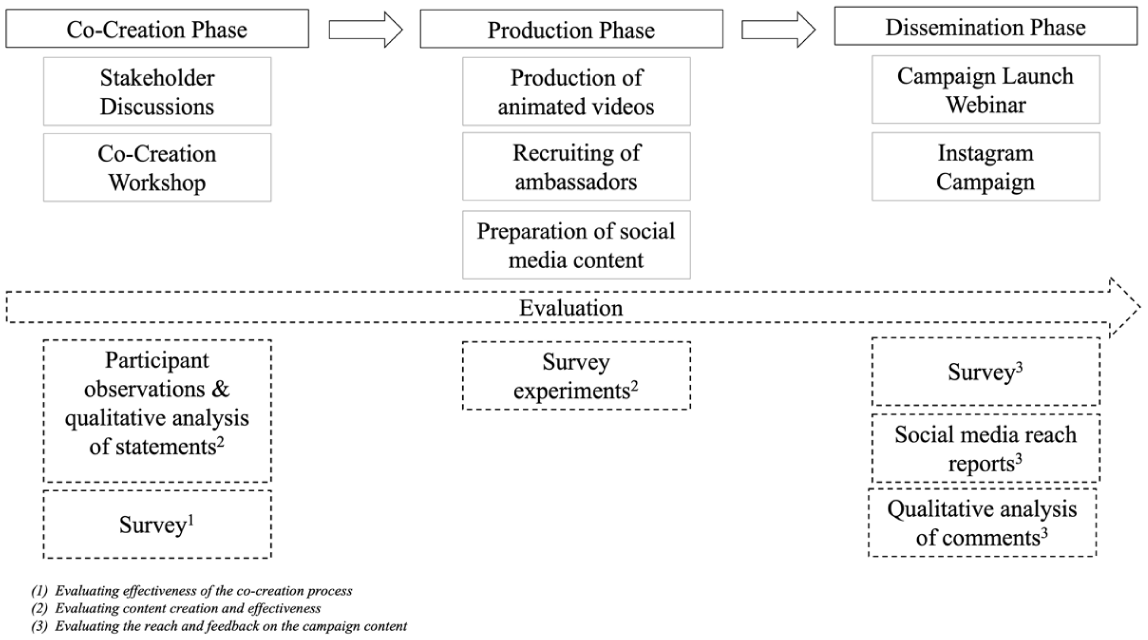

Citizens’ bystander intervention can mitigate harmful consequences of harassment targeting scientists and science communicators. Encouraging such intervention requires enhancing online users’ awareness, reflection, and empowerment. Yet, concrete initiatives fostering social media users’ intervention competencies have been lacking. We address this gap by developing, implementing, and evaluating an intervention for Instagram through a multiphase co-creation process involving co-creation, production, and dissemination phases (Figure 1).

Co-Creation Process Activities and Evaluation Methods.

We followed key recommendations for effective science communication. First, we adopted an evidence-based, theory-driven approach (Jensen & Gerber, 2020; Scheufele, 2022), with core communication goals focused on raising awareness, encouraging reflection, and promoting empowerment, as outlined above. Second, we embraced a transdisciplinary approach (Fischer et al., 2024; Scheufele, 2022) by involving diverse stakeholders. Our core team included science communication scholars and practitioners from Scicomm-Support, an institution supporting harassed scientists and science communicators. We also engaged influencers, that is, credible digital opinion leaders with high persuasive power, to optimize how the intervention was presented. In addition, we involved citizens, particularly social media users, as potential bystanders and our primary target audience.

Finally, following best practices in evaluating science communication (Volk, 2024; Volk & Schäfer, 2024), we took a holistic approach, assessing each project phase (co-creation, production, dissemination) with mixed methods (Figure 1). We aimed to (a) evaluate the effectiveness of the co-creation process, (b) ensure that the content had the desired effects, and (c) assess the campaign’s reach.

Our project took place in Germany, a key case given its long experience enforcing strict online harassment laws such as the Network Enforcement Act (NetzDG), which mandates the swift removal of illegal content and influenced the EU’s Digital Services Act. Yet “awful but lawful” harassment remains widespread, and reports of online harassment against scientists continue to rise (Blümel, 2024).

Co-Creation Phase

Procedure

The co-creation phase began with internal discussions to outline a preliminary strategy and define key communication goals, followed by consultations with leading experts in science communication research and practice, as well as with influencers, to present the plan and gather their feedback. Building on these steps, we conducted two core activities: a participant intervention and a co-creation workshop.

Participant intervention

We conducted a participant intervention at a public democracy festival, engaging citizens in discussions about online harassment of scientists and science communicators and possible bystander responses. Specifically, we held three sessions with approximately 10 participants each (n varied because participants could leave at any point). All sessions followed the same protocol: participants were informed that participation was voluntary and unpaid, that they could discontinue at any time, and that their responses would be documented. They were then asked to imagine scrolling through a social media feed and encountering harassing comments under a scientist’s post. After we presented examples of potential interventions, participants were invited to evaluate how well these approaches might foster awareness, reflection, and empowerment, and to suggest their own responses. Through participant observation, we documented participants’ statements and reactions, which informed the subsequent co-creation workshop.

Co-creation workshop

We held a 2-day co-creation workshop with 12 participants, representing the identified stakeholder groups: three scientists, three science communicators, three influencers, and three citizens. 3 The agenda included evidence-based inputs, group work, presentations, and discussions. To establish a shared knowledge base, we provided four short inputs on research on harassment of scientists and communicators, practical insights from the Scicomm-Support’s work with affected individuals, research on promoting bystander intervention, and lessons from prior harassment and hate speech campaigns. Participants then formed three mixed groups, each tasked with developing ideas for (a) raising awareness and reflection on harassment of scientists (Communication Goal 1); (b) empowering bystander intervention (Communication Goal 2); and (c) effective delivery of these interventions on social media (presentation modes). After two rounds of group work over two days, each group presented its results for plenary discussion and documentation.

In the final step, the project team reviewed the workshop outcomes, reached consensus on the most promising and feasible ideas, and developed the production strategy.

Results and Evaluation

In this phase, we used two evaluation methods. To evaluate the creation and effectiveness of content, we analyzed the statements and reactions documented during participant observation. Two authors conducted qualitative consensual coding: both independently coded all protocols and then met to agree on the final codes. Three themes emerged: (a) the importance of raising awareness about the issue and supporting science communicators facing harassment, (b) the challenge of distinguishing legitimate criticism from illegitimate harassment, and (c) the view that effective counterspeech should be brief and respectful and should avoid a condescending or overly pedagogical tone.

To evaluate the effectiveness of the co-creation process, we surveyed participants after the co-creation workshop about what they liked, disliked, suggested for improvement, and took away from the experience. 4 Survey results showed participants responded positively to the workshop’s structure, informative inputs, and especially its diverse composition of researchers, practitioners, influencers, and citizens. Suggested improvements included shortening group work in favor of more hands-on tasks. Key takeaways were learning new information and understanding other stakeholders’ perspectives.

Taken together, the activities in the co-creation phase yielded three conclusions for content production and its likely effectiveness. First, to raise awareness and encourage reflection (Communication Goal 1), it was deemed necessary to inform users about the prevalence and consequences of harassment against scientists and science communicators and to help them distinguish between harassment and criticism. Regarding the latter, participants emphasized both the difficulty of making this distinction and its crucial role in their decision to intervene. This distinction was particularly important because the intervention was not meant to curtail critical discourse. We also aimed to inform users about legal aspects and existing support structures. Second, to strengthen perceived empowerment (Communication Goal 2), it was considered essential to present easy, risk-free ways to intervene, as we were particularly concerned not to expose users to backlash. Our messages therefore focused on giving targets positive, direct feedback to help them feel less isolated and to foster benign discourse (e.g., liking a harassed scientist’s original post or writing a positive comment), or on getting involved indirectly (reporting harassment or sending targets private supportive messages). However, opinions were divided on whether vivid individual examples or general information would better achieve the communication goals of raising awareness, encouraging reflection, and promoting empowerment when presenting the consequences of harassment against scientists, the difference between harassment and criticism, and the beneficial effects of intervention. Third, the following presentation modes were identified as effective: using visual content and short videos (max. 60 s) and showcasing easily replicable behavior.
Specifically, to simplify bystander intervention, we agreed to provide shareable, copy-ready example sentences that communicate support for targeted individuals. We kept messages concise and avoided an overly pedagogical tone, as participants had emphasized during the participant intervention and the workshop. Furthermore, the influencers suggested letting affected scientists and science communicators themselves express appreciation for bystander intervention.

At the end of this phase, we returned to the experts from our initial consultations to gather feedback on the co-creation outcomes. These condensed results informed the production phase.

Production Phase

Procedure

The co-creation phase produced a plan for an Instagram campaign with (audio-)visual content hosted on a website, including (a) animated videos to raise awareness, encourage reflection, and promote low-risk bystander intervention; 5 (b) video messages from prominent science communicators expressing appreciation for bystander intervention; (c) summaries of scientific findings on harassment of scientists; (d) information on support structures; (e) legal guidance; and (f) ready-made examples of bystander responses.

For the animated videos (a), we conducted survey experiments to identify effective narratives (detailed below), then collaborated with a professional video agency through multiple feedback loops to refine content and format. For the appreciation videos featuring prominent science communicators (b), we recruited scientists and communicators through the project team’s network. We contacted 24 active public speakers who had previously experienced online harassment, inviting them to self-record brief reflections on those experiences and on how bystander interventions supported and motivated them to continue engaging in science communication. Five agreed to participate (four science communicators, one scientist). 6

We selected studies on the prevalence and consequences of harassment targeting scientists and science communicators (e.g., Global Witness, 2023; Nogrady, 2021) and adapted their key findings for Instagram posts (c). We contacted existing support structures for permission to advertise their services (d), consulted a lawyer on legal options for those affected and adapted this guidance for social media (e), and gathered best-practice counterspeech examples from the literature, public discourse, and the communicators we had contacted (f). In total, we produced 20 Instagram posts in both German and English to broaden accessibility.

Results and Evaluation

We conducted two online survey experiments to evaluate the animated videos, our core campaign component, assessing whether they increased individuals’ intervention intentions. We obtained IRB approval from the Department of Media and Communication at LMU Munich. Result tables, scales, and stimuli are provided in the Supplementary Material.

In Experiment 1, we tested four messages grounded in conclusions, drawn from the co-creation phase (i.e., participant intervention and workshop), about the practical implementation of our two communication goals and aligned with theoretical considerations and empirical evidence on promoting bystander intervention (Obermaier et al., 2025; Schmitt et al., 2018): (a) raising awareness of the harm of harassment against scientists, (b) encouraging reflection on differentiating criticism from harassment, and empowerment for (c) intervention in general and (d) low-risk intervention in particular, the latter having been emphasized in the workshop as essential to avoid exposing users to backlash. To address the debate about presentation modes raised in the workshop, we also examined whether these effects differed when messages were delivered as an example case (a chronological story about an individual) versus generic information (statistics) (Krämer & Peter, 2020), resulting in a 4 × 2 between-subjects design plus a control group. 7

Sampling

In early fall 2024, we recruited a quota-representative sample of German social media users aged 18 years and older (N = 713; 48% female, 0.3% nonbinary; mean age = 44 years, SD = 14.90; 55% with a university entrance qualification). An a priori power analysis indicated that this sample size suffices to detect effects as small as f = .15 (power = .80, α = .05).
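The power statements for both experiments can be made concrete with a small simulation. The sketch below is not the authors’ procedure (the text does not report the software or design assumptions behind their power analysis). It assumes a one-way between-subjects ANOVA across all cells (nine for Experiment 1: eight message versions plus the control; four for Experiment 2: three videos plus the control), near-equal cell sizes, normally distributed outcomes with equal variances, and a hypothetical, evenly spaced pattern of cell means. All function names are illustrative. Because Cohen’s f relates to η² via f = √(η² / (1 − η²)), the helper `eta_squared_to_f` also shows that f = .15 corresponds to η² ≈ .022.

```python
import math
import random
import statistics


def eta_squared_to_f(eta_sq):
    """Convert (partial) eta squared to Cohen's f: f = sqrt(eta^2 / (1 - eta^2))."""
    return math.sqrt(eta_sq / (1.0 - eta_sq))


def group_sizes(n_total, n_groups):
    """Split n_total participants into n_groups cells differing by at most one."""
    base, extra = divmod(n_total, n_groups)
    return [base + (1 if i < extra else 0) for i in range(n_groups)]


def group_means(n_groups, cohens_f):
    """Hypothetical (assumed) pattern of cell means: evenly spaced and rescaled
    so their population SD equals cohens_f, with the within-cell SD fixed at 1."""
    raw = [i - (n_groups - 1) / 2 for i in range(n_groups)]
    spread = statistics.pstdev(raw)
    return [cohens_f * r / spread for r in raw]


def f_statistic(groups):
    """One-way ANOVA F = MS_between / MS_within for a list of samples."""
    k = len(groups)
    n_total = sum(len(g) for g in groups)
    means = [math.fsum(g) / len(g) for g in groups]
    grand_mean = math.fsum(math.fsum(g) for g in groups) / n_total
    ss_between = math.fsum(len(g) * (m - grand_mean) ** 2 for g, m in zip(groups, means))
    ss_within = math.fsum((x - m) ** 2 for g, m in zip(groups, means) for x in g)
    return (ss_between / (k - 1)) / (ss_within / (n_total - k))


def simulate_f(rng, sizes, means):
    """Draw one simulated data set (normal errors, SD = 1; an assumption) and return F."""
    groups = [[rng.gauss(mu, 1.0) for _ in range(n)] for n, mu in zip(sizes, means)]
    return f_statistic(groups)


def simulated_anova_power(n_total, n_groups, cohens_f, alpha=0.05, n_sims=2000, seed=1):
    """Monte Carlo power of a one-way between-subjects ANOVA under the assumptions above."""
    rng = random.Random(seed)
    sizes = group_sizes(n_total, n_groups)
    # Empirical critical value: the (1 - alpha) quantile of F when H0 is true.
    null_f = sorted(simulate_f(rng, sizes, [0.0] * n_groups) for _ in range(n_sims))
    critical = null_f[min(n_sims - 1, int((1 - alpha) * n_sims))]
    # Power: share of data sets under the assumed effect whose F exceeds that value.
    alt_means = group_means(n_groups, cohens_f)
    hits = sum(simulate_f(rng, sizes, alt_means) > critical for _ in range(n_sims))
    return hits / n_sims


if __name__ == "__main__":
    # Experiment 1 treated as 9 cells (8 message versions + control), N = 713.
    print(round(simulated_anova_power(713, 9, 0.15, n_sims=1000), 2))
    # Experiment 2 treated as 4 cells (3 videos + control), N = 531.
    print(round(simulated_anova_power(531, 4, 0.15, n_sims=1000), 2))
    print(round(eta_squared_to_f(0.022), 3))
```

Under these assumptions, the estimate for N = 713 and f = .15 should come out close to, or slightly above, the .80 target, and rerunning with f = 0 should return roughly α = .05. A closed-form check would use the noncentral F distribution (as G*Power does); the simulation trades exactness for needing only the Python standard library, so estimates shift by a few percentage points across seeds.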

Stimuli

Participants viewed one of eight plain-text messages (Table S1) presented as social media posts; the control group saw no message. Messages (a) described harassment’s harmful consequences for scientists (awareness), (b) differentiated criticism from harassment (reflection), (c) explained that bystander inaction can signal approval (empowerment “reaction”), or (d) described the positive effects of low-risk intervention (empowerment “low-risk”). Each message had two versions: an evidence-based example case (featuring a fictitious scientist) or generic information from empirical studies (e.g., “half of scientists”).

Measures

Participants reported sociodemographics, political orientation on an 11-point left-to-right scale (M = 5.50, SD = 2.72), social media activity (1 = almost always passive, 5 = almost always active; M = 2.46, SD = 1.52), and whether they worked in a science-related profession (0%) 8 using standard measures. On 5-point scales (1 = do not agree at all, 5 = fully agree), we measured perceived message quality (11 items, α = .94, M = 3.42, SD = 0.84), message shareability (4 items, α = .90, M = 2.64, SD = 1.18), and reactance (4 items, α = .89, M = 2.19, SD = 1.00). We also measured the perceived threat to targets (4 items, α = .94, M = 4.19, SD = 0.89), perceived personal responsibility to intervene (3 items, α = .94, M = 2.57, SD = 1.23), and motivation to engage in counterspeech (2 items, α = .87, M = 2.53, SD = 1.31), to like targets’ posts (M = 2.51, SD = 1.48), and to report harassment (M = 3.00, SD = 1.47).
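Both Measures paragraphs summarize scale reliability with α (Cronbach’s alpha). As a reminder of what that coefficient captures, here is a minimal sketch of the standard formula, α = k/(k − 1) · (1 − Σ item variances / variance of sum scores). The one-list-per-item data layout and the function name are assumptions for illustration, and the toy numbers are not study data.

```python
import statistics


def cronbach_alpha(items):
    """Cronbach's alpha for one scale.

    Assumed layout for this sketch: items[j][i] is respondent i's score on item j.
    alpha = k / (k - 1) * (1 - sum of item variances / variance of sum scores)
    """
    k = len(items)
    if k < 2:
        raise ValueError("alpha needs at least two items")
    n = len(items[0])
    if any(len(col) != n for col in items):
        raise ValueError("every item needs a score from every respondent")
    # Sample variance of each item across respondents.
    item_variances = [statistics.variance(col) for col in items]
    # Each respondent's sum score across all items, and its sample variance.
    sum_scores = [sum(col[i] for col in items) for i in range(n)]
    total_variance = statistics.variance(sum_scores)
    return k / (k - 1) * (1 - sum(item_variances) / total_variance)


# Hypothetical two-item example with four respondents (not study data).
print(round(cronbach_alpha([[1, 2, 3, 4], [2, 1, 4, 3]]), 2))  # prints 0.75
```

Higher values mean that the items covary strongly relative to their individual variances; identical items give α = 1.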

Results and discussion

Participants rated all messages above the scale midpoint for quality, with no differences between messages, F(7, 618) = 0.88, p = .530, η² < 0.01. Message shareability scored slightly below the scale midpoint, again with no differences between versions, F(7, 618) = 1.01, p = .420, η² = 0.01. As for undesired effects (Volk & Schäfer, 2024), perceived reactance was below the scale midpoint and did not differ across messages, F(7, 618) = 1.66, p = .120, η² = 0.02. Compared with the control group, participants who read any awareness or reflection message perceived a higher threat of harassment against scientists, but the messages did not affect perceived personal responsibility or intentions to speak out or report. However, participants who read the generic empowerment message emphasizing low-risk actions were particularly willing to like targets’ posts (Table S2 [Duncan post hoc test (p < .05)], small effects ranging from η² = 0.02 to 0.03).

In sum, the results of Experiment 1 indicate that participants who saw any awareness or reflection message felt scientists faced more harassment, but only those who read the generic empowerment message were more willing to support targets through simple, low-risk actions like liking their posts.

All message versions were rated high in quality, moderate in shareability, and low in reactance, so none were ruled out for producing the animated videos. Given the awareness message’s impact on perceived threat, we produced a video explaining the harm caused by online harassment against scientists. A second video focuses on reflection, helping viewers distinguish between criticism and harassment. For empowerment content, we opted for a generic message emphasizing the value of any positive, low-risk response (combining both empowerment messages). Each of the three animated videos portrays a scientist, and the three scientists differ in gender, age, and origin to reflect the diversity of the scientific community. In the videos, the scientists share their research on social media, shown through their smartphones, while a narrator discusses the core themes of awareness, reflection, and empowerment, illustrated through these examples. 9

In Experiment 2, we tested how the animated videos (produced based on the results of Experiment 1) affected intentions to intervene against harassment of scientists, using a 3 × 1 between-subjects design plus a control group. 10

Sampling

In late fall 2024, we recruited a quota-based sample of German social media users aged 18 years and older (N = 531; 49% female, 0.4% nonbinary; mean age = 44 years, SD = 16.70; 60% with a university entrance qualification). An a priori power analysis indicated that this sample size suffices to detect effects as small as f = .15 (power = .80, α = .05).

Stimuli

The animated videos (as described above), presented as social media posts, (a) described harassment’s harm to scientists (awareness), (b) explained the difference between criticism and harassment (reflection), or (c) explained that bystander inaction can signal approval of harassment while emphasizing low-risk intervention’s positive effects (empowerment).

Measures

Participants reported sociodemographics, political orientation on an 11-point left-to-right scale (M = 5.26, SD = 2.69), social media activity (1 = almost always passive, 5 = almost always active; M = 2.11, SD = 1.37), and whether they worked in a science-related profession (0%). We measured perceived message quality (11 items, α = .94, M = 3.95, SD = 0.84), message shareability (4 items, α = .89, M = 2.97, SD = 1.18), and reactance (4 items, α = .91, M = 1.71, SD = 0.98), as well as perceived awareness and reflection (6 items, α = .85, M = 3.53, SD = 0.84) and perceived empowerment (5 items, α = .82, M = 3.11, SD = 0.97). 11 We recorded the perceived threat to targets (4 items, α = .90, M = 4.17, SD = 0.92), perceived personal responsibility to intervene (3 items, α = .94, M = 2.50, SD = 1.20), and motivation to engage in counterspeech (2 items, α = .85, M = 2.45, SD = 1.24), to like targets’ posts (M = 2.65, SD = 1.51), and to report harassment (M = 2.92, SD = 1.48).

Results and discussion

Perceived quality was above the scale midpoint and shareability was around it, with no differences between videos for either quality, F(2, 396) = 2.80, p = .062, η² = 0.01, or shareability, F(2, 396) = 0.49, p = .610, η² < 0.001. Perceived reactance was very low (below 2.00 on average) and did not differ between videos, F(2, 396) = 2.54, p = .080, η² = 0.01. The reflection video increased reported awareness and reflection relative to the awareness video; the empowerment video and the control condition did not. Empowerment was higher after watching the reflection or empowerment video than after watching the awareness video or in the control condition. While none of the videos affected the BIM components or reporting intentions, all three increased participants’ motivation to engage in counterspeech and to like posts from targeted scientists compared with the control (Table S3 [Duncan post hoc test, p < .05, or Games–Howell post hoc test], small effects ranging from η² = 0.01 to 0.03).

Overall, the findings of Experiment 2 suggest that the videos, especially those on reflection and empowerment, strengthened participants’ awareness, reflection, and sense of empowerment, and that all three videos increased motivation to engage in low-risk supportive actions such as counterspeech or liking posts from targeted scientists.

Dissemination Phase

Procedure

For the campaign, we created an Instagram account [@standup4science] and posted content from late November through December 2024. Dedicated project funding for advertising enabled the promotion of seven selected posts, enhancing the campaign’s visibility and reach. Ads targeted users aged 18+ years in German-speaking countries (Germany, Austria, Switzerland) and were further filtered by interests in science and science communication to reach audiences most likely to support harassed scientists and communicators.

To launch the campaign, we hosted a webinar in a local TV studio with a professional moderator. Two project team members—one researcher and one practitioner—discussed the campaign’s goals, development process, and content, and answered audience questions. The webinar, promoted to researchers, science communicators, journalists, and citizens, attracted 80 to 90 participants and was later broadcast on a regional TV channel.

Results and Evaluation

To evaluate campaign reach and feedback, we used a survey, dissemination data reports, and qualitative content analysis. For the webinar, we conducted an online survey and obtained approximate viewership data from the regional TV channel. For the social media campaign, we compiled reach metrics and qualitatively analyzed user comments.

After the webinar, we surveyed participants on what they liked, disliked, and learned. 12 Respondents (N = 13) appreciated the professional setting and informative content, noting the topic’s relevance and the opportunity to learn about support structures. The TV channel reported an average weekday audience of approximately 42,000 viewers.

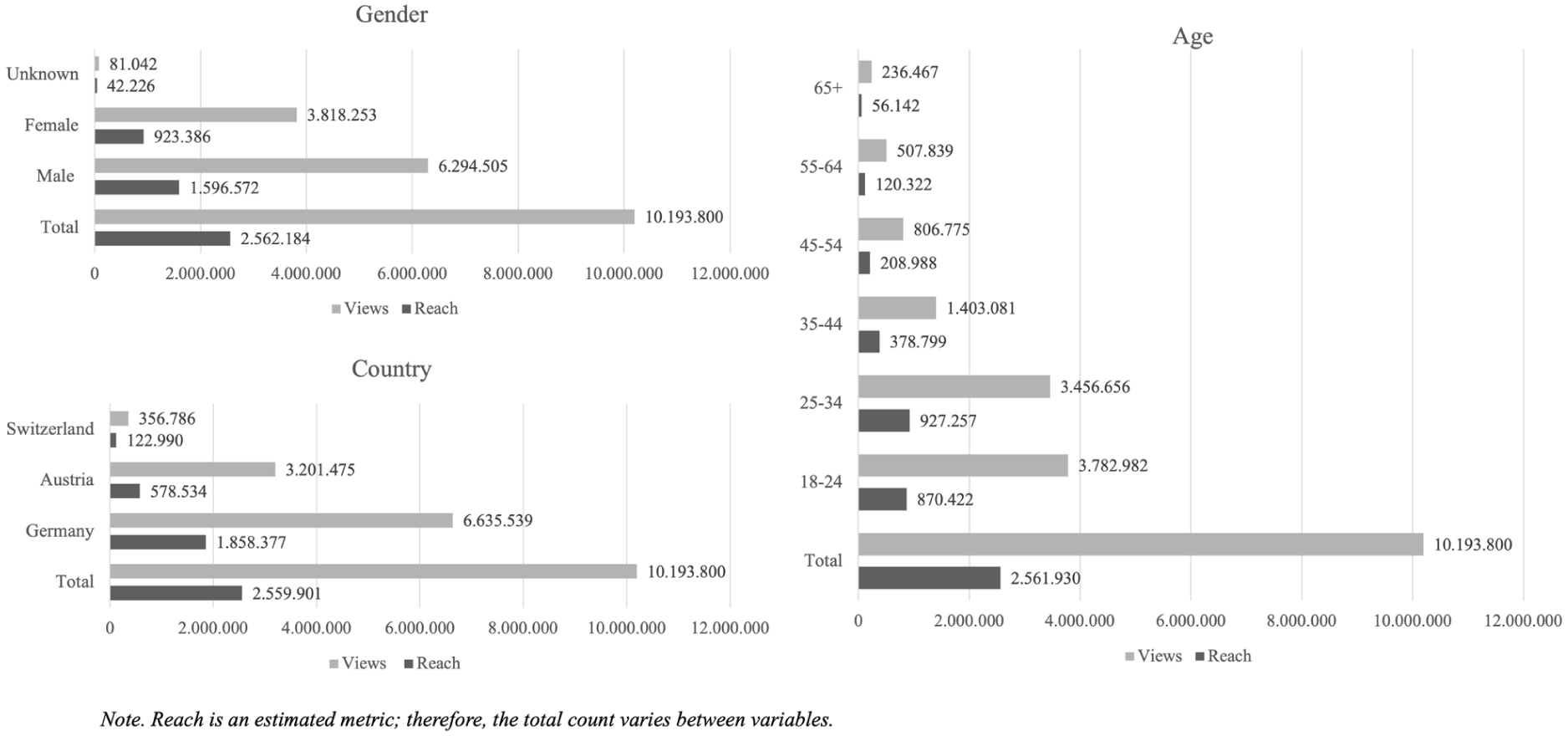

To evaluate the dissemination of the campaign itself, we report the number of views generated by the seven advertised postings and the number of individual Instagram profiles reached (i.e., reach). In total, the advertised content reached over 10 million views and about 2.5 million individual profiles (Figure 2).

Overview Reach and Views for Gender, Age, and Country.

To assess audience representativeness (Volk & Schäfer, 2024), we compared the gender and age distribution of the campaign audience with that of Instagram users in German-speaking countries overall. Our audience included slightly more male users and modestly overrepresented the youngest age group (Kaiser, 2025a, 2025b, 2025c).

The campaign posts generated few comments (28 comments across 20 posts). Two of the authors analyzed these qualitatively via consensual coding. Overall, the comments were predominantly positive, particularly those on the video messages by prominent science communicators. Four themes emerged: (a) affirmative feedback from potential bystanders (eight comments), mostly expressed through positive emojis (hearts, applause, smileys); (b) affirmative feedback from scientists and science communicators (12 comments), often expressing gratitude for raising awareness (e.g., “Thank you—support is so important!”); (c) neutral references to featured science communicators (three comments, e.g., “I haven’t seen him in a long time”); and (d) negative feedback with mild incivility (five comments), such as one accusing a featured communicator of political bias in uncivil terms and another questioning the trustworthiness of scientists in general.

Overall, the results demonstrate substantial campaign reach, particularly among younger audiences, and a largely positive tone in user interactions. Although comment activity was limited, the responses suggest that the campaign’s messages resonated with both bystanders and science communicators.

Conclusion

This research note reports an evidence-based, transdisciplinary co-creation process to design, implement, and evaluate a social media intervention concerning online harassment of scientists and science communicators. Below, we reflect on key lessons for research–practice collaboration and future directions in science communication research on harassment and discuss limitations.

Considering the research–practice collaboration, the transdisciplinary approach integrating scientific evidence and practitioner and stakeholder insights proved highly valuable. Evaluation showed all stakeholders viewed the approach as beneficial and valued engaging with others’ perspectives. As scholars, we observed how practitioners, influencers, and citizens enriched the final campaign beyond our initial vision, while practitioners benefited from the research evidence we provided.

Despite these benefits and frequent calls for transdisciplinary collaboration (Fischer et al., 2024; Scheufele, 2022), such collaboration demands substantial time that is not typically accounted for in researchers’ and practitioners’ job profiles, adding to their regular workloads. Future collaborations should consider involving social media professionals for content creation and campaign management to ease this burden. In addition, while collaborating with influencers proved valuable, financial constraints limited it. Future projects integrating influencers’ expertise should allocate dedicated funding for appropriate compensation.

Our project has several implications for research. First, for future research on combating online harassment, our experimental evaluation revealed that although the videos did not increase participants’ general sense of threat or responsibility to intervene (unsurprisingly, given that the videos were not linked to a specific incident), they were still effective in key ways. The videos increased viewers’ motivation to engage in low-risk supportive behaviors, such as liking posts from targeted scientists or contributing counterspeech, without provoking reactance among participants. These findings highlight the potential of video-based interventions to foster bystander awareness, reflection, and empowerment. They suggest that even without immediate context, such materials can encourage meaningful support for those targeted by online harassment. For research on bystander intervention, these findings underscore the importance of exploring the factors that encourage low-risk forms of intervention and of considering how anticipated sanctions (e.g., backlash from perpetrators) may influence individuals’ willingness to act. They also emphasize the crucial role of promoting critical digital media literacy related to online harassment, as such awareness and understanding can empower people to engage in prosocial intervention (Obermaier et al., 2025). Moreover, video formats appear especially promising for conveying complex issues, such as distinguishing between criticism and harassment, while still inspiring viewers to act. However, further research is needed to assess whether these effects persist over time, particularly when viewers encounter real instances of harassment, and to explore their impact across different cultural or national contexts.

Second, our findings underscore the critical role of audience perceptions of harassment against scientists and science communicators in shaping bystander intervention. In particular, they highlight the importance of distinguishing between legitimate criticism and illegitimate harassment. During the co-creation phase, participants discussed the difficulty of making this distinction, noting that negative feedback is not inherently inappropriate and can warrant engagement, whereas more severe or personal attacks are perceived as illegitimate. Findings from Experiment 2 further show that explicitly communicating this distinction was especially effective in increasing participants’ problem awareness and motivation to intervene. Together, these results suggest that many users struggle to differentiate legitimate science criticism from harassment—a gray area that offenders can strategically exploit because it lies outside clear legal boundaries. As audience evaluations appear to predict bystander intervention, future research should further examine how citizens perceive and navigate this boundary.

Third, the audience feedback indicated that scientists and science communicators in particular valued the campaign’s role in raising awareness of this issue, suggesting that it may have helped them feel less isolated in facing harassment. Future studies should examine whether increased awareness and perceived social support help mitigate some of the negative psychological effects of harassment, with particular attention to marginalized groups who are disproportionately affected (Gosse et al., 2021; Väliverronen & Saikkonen, 2021b). Fourth, the messages by prominent science communicators expressing gratitude for bystander interventions received particularly positive engagement. Because our experiments did not test the effects of these messages on bystander intervention, this is a fruitful avenue for future research.

Finally, for future research and science communication alike, this study underscores the importance of addressing all groups involved in online harassment—not just the victims and perpetrators, but also the bystanders, whose actions can help shape a more respectful and evidence-based discourse.

Turning to the limitations, we note the short duration of the campaign (20 posts over 2 weeks) and its restriction to Instagram. Future initiatives with greater resources could extend campaigns across multiple platforms. Although we used paid promotion to enhance visibility, competition for attention on social media limited overall reach. The campaign was conducted only in German-speaking countries; however, to promote inclusivity, we also created and shared English versions of all materials, which remain available for continued use.¹³ In addition, the response rate in our survey-based evaluation of the webinar was relatively low, a well-documented challenge in science communication evaluations (Volk & Schäfer, 2024). Finally, despite our comprehensive evaluation throughout all phases of the co-creation process, using mixed methods that included experimental testing of materials before production and during dissemination, we cannot yet assess the long-term societal impacts of the intervention (Volk, 2024).

Despite these limitations, our evaluation suggests that the intervention reached a substantial number of potential bystanders and had several beneficial outcomes. Notably, the co-created materials proved impactful and remain useful for future science communication efforts. Feedback from scientists further suggested that the campaign’s awareness-raising efforts were well received and may help mitigate some of the negative effects of harassment. We hope that these publicly available materials, together with the insights from our transdisciplinary co-creation process, will support researchers and practitioners in addressing the ongoing challenge of harassment in science communication.

Supplemental Material

Supplemental material, sj-docx-1-scx-10.1177_10755470261421146 for Prosocial Behavior in the Context of Online Harassment of Scientists and Science Communicators: Designing and Implementing a Social Media Intervention in a Transdisciplinary Co-Creation Process by Jana Laura Egelhofer, Bernhard Goodwin and Magdalena Obermaier in Science Communication

Footnotes

Acknowledgements

We would like to sincerely thank our collaborators at Scicomm-Support, especially Julia Wandt and Kristin Küter, for their valuable collaboration and commitment throughout this project.

Author Contributions

Conceptualization (J.L.E.), methodological implementation (M.O., J.L.E., B.G.), data analysis (M.O., J.L.E.), writing: original draft (J.L.E., M.O.), writing: review & editing (J.L.E., M.O., B.G.).

Ethical Considerations

The Institutional Review Board of the Department of Media and Communication at LMU Munich approved our online survey experiments (approval: 2024-20) on August 6, 2024.

Consent to Participate

All participants in the online survey experiments and evaluation surveys provided informed consent before beginning the survey and were clearly informed that their participation was voluntary, in accordance with GDPR guidelines. Those who took part in the online survey experiments also received a detailed debriefing at the end of the study.

Consent for Publication

Not applicable

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This project was supported by the German Federal Ministry of Education and Research.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data Availability Statement

The datasets generated and/or analyzed during the current study are available from the corresponding author on reasonable request.