Abstract

This research examines whether language styles in U.S. health news relate to scientificness. Study 1 uses human ratings to train machine learning models that classify sentences as scientific or nonscientific. A text mining approach predicts the proportion of scientific sentences, on the basis of language features. Two style categories emerge: one reflecting ideal professional standards of science journalism (hedging, neutrality, objectivity) and another linked to science complexity (low readability, complexity, formality). Study 2 then tests whether perceptions of these six features influence perceived scientificness and intended outcomes through message experiments.

In analyses of health news stories, researchers usually consider either the perceived characteristics of the messages or else what is being communicated. When they focus on perceived characteristics, the studies frequently address features like credibility (Vu & Chen, 2023), informativeness (Skovsgaard & Hopmann, 2020), or truthfulness (Ha et al., 2023), without undertaking similar considerations of scientificness, as another relevant characteristic of health news. In such a context, scientificness implies that the story features evidence and findings derived from scientific research (Chang, 2022). More formally, scientificness refers to “the extent to which the proposed information has been acquired by scientific methods and is, therefore, a product of the scientific community” (Bromme et al., 2015, p. 38). Even if scientific content might enhance perceptions of other message features (e.g., credibility), each construct captures a unique evaluative dimension, such that not all credible, informative, or truthful content is necessarily scientific. Therefore, separately exploring the scientificness that characterizes health news is critical.

If researchers instead focus on what is being communicated, a primary consideration is the content or information presented, such as which topics tend to be covered more (Manganello & Blake, 2010) or the frames used to present these topics (Dan & Raupp, 2018). Relatively less research deals with style, even though a text’s style (i.e., use of words) also determines what is being communicated and delivers important information (Pennebaker et al., 2003; Tausczik & Pennebaker, 2010). This imbalance is evident in the minimal research that deals with scientificness in health news too. For example, Chang (2022) explores the content features that people associate with scientificness in health news, without assessing the associated styles. In an attempt to address both these gaps, the current research explicitly focuses on language styles that might be associated with scientificness in health news.

Addressing these two gaps in health news research is especially relevant in current information environments, in which most consumers obtain science information from general news sources. Specifically, 71% of consumers express interest in science news, especially as it pertains to health-related topics (e.g., medicine, nutrition, and brain health; Funk et al., 2017). Yet since the onset of the COVID-19 pandemic, health information has become increasingly problematic. In chaotic information environments, people struggle to differentiate between science-based information and unreliable misinformation (Naeem & Bhatti, 2020). This study seeks to determine in particular if specific language features signal scientificness to readers and thus influence their evaluations of health news stories, beyond the influence of content features (Chang, 2022).

According to language expectancy theory (LET), language is a rule-governed system, through which communicators form normative expectations based on the context, so communicative effectiveness depends on their adherence to those expectations (Burgoon et al., 2002; Burgoon & Miller, 1985). Building on this framework, we propose that readers hold expectations about specific language features that signal scientificness in news, and texts exhibiting those features are more likely to be perceived as scientific. We assess two categories of style features that might evoke perceptions of scientificness in health news: expected hallmarks of science reporting (hedging, neutrality, and objectivity) and the nature of the topics or substance being covered (readability, cognitive complexity, and formality). With the recognition that different audiences are more or less sensitive to style variations (Thomm & Bromme, 2012), we leverage both objective and subjective assessments. The former involves method triangulation linked to computational linguistics (Study 1), and the latter uses message exposures (Study 2) to gauge readers’ perceptions. In Study 1, a survey prompted respondents to indicate what leads them to perceive a health news story as scientific—that is, which criteria they apply to make such judgments. Annotators, presented with these criteria, then rated sentences in health news stories as scientific or not. With these ratings, we train classification models, using supervised machine learning, to classify any particular sentence as scientific or not, as well as calculate the proportion of scientific sentences in each article. In turn, we detect language features and test which ones are most significantly associated with the proportion of scientific sentences in a news story. 
In Study 2, we exposed participants to various health news articles and measured their subjective perceptions of the language features as determinants of their perceptions of the scientificness of health news stories. In this case, our specific focus is on health news coverage of research that reports on behavior–health associations, such as scientific studies that link modifiable lifestyle behaviors to disease risks or prevention. We also test if perceived scientificness informs the perceived effectiveness of the story for delivering health messages and the likelihood that readers adopt the health-related behaviors advocated by the research being reported on in the news article. With these two complementary approaches, we can assess potential misalignments: Linguistic features designed to convey scientificness may neither be perceived as such by readers nor sufficiently motivate their behaviors. To test these predictions empirically, we analyze health news published by well-known outlets in the United States and survey U.S. respondents.

Characteristics and Styles of Scientificness

As Thomm and Bromme (2012) define it, scientific discourse relies on shared norms within an expert community. It exemplifies these norms by adhering to common structures, presenting research in an organized manner, and using language that aligns with the requirements of the scientific method, argumentation, and theory. By adhering to these established conventions, scientific work gains acceptance and fosters trust; it also can affirm an author’s affiliation with the scientific community.

Health news stories often convey scientificness. First, advances in health knowledge usually stem from scientific research, some of which affects people and society more broadly, so journalists seek to share such insights (Atkin et al., 2008). In the process, journalists cover scientific content and also may borrow a scientific style, even if unconsciously. Second, scientificness can strongly influence quality evaluations. Texts judged as more scientific tend to be seen as more credible (Thiebach et al., 2015; Thomm & Bromme, 2012). Journalists may consciously or inadvertently strive for credibility in their stories by employing a writing style associated with scientificness.

Style refers to how people use words (Pennebaker et al., 2003), and these usages provide information about their mental, social, and situational states, as well as their intentions. Because writing is a social and communicative interaction between writers and readers, writers frequently incorporate their perspectives into their discourses to convey their attitudes about the content to the intended audience (Hyland & Tse, 2004). If writers (e.g., journalists) intend to display scientificness in their writing, readers should be able to detect that intention from their language style. Therefore, the language styles featured in a health news story plausibly might prompt readers to regard it as scientific, if that is the writer’s intent. Thomm and Bromme (2012) explore whether texts that rely on the passive voice are associated with scientificness, although their study varies both the grammatical voice and content elements associated with science (e.g., references and methods), so it is difficult to separate out the unique impacts. Still, they note that, compared with their recognition of content elements, participants are less likely to pay attention to or identify styles (e.g., passive voice). Therefore, to investigate language styles associated with scientificness in health news accurately and comprehensively, we adopt a novel research approach in Study 1, relying on annotations, machine learning classification models, and automated text analysis. In Study 2, we use a more traditional message experiment to detect readers’ perceptions.

Theoretical Framework

As noted previously, LET describes language as a rule-governed system that informs people’s normative expectations across contexts (Burgoon et al., 2002; Burgoon & Miller, 1985). To align their language with such expectations, communicators conform to standards of appropriateness, such that those norms get reinforced and legitimized during communicative exchanges. Although rooted in interpersonal communication, LET also has broader relevance (Buller et al., 2000). By applying LET to health news, we seek to determine which language features people expect to find in health news that presents scientific information.

When messages deviate from socially accepted norms for appropriate communication, they likely fail to exert the intended influence. The LET notion that communicative effectiveness depends on adherence to normative expectations implies a pertinent prediction regarding scientific health communication: Messages that readers perceive as consistent with their expectations of scientific writing—because they use expected linguistic features—should be more effective for signaling scientificness. Testing this proposition represents our second main research objective.

Readers’ expectations and journalists’ styles in health reporting likely reflect various inputs, such as journalistic professionalism or the inherent nature of scientific topics. To address our first research objective, we examine two broad categories of language features that represent those influences, which we label genre-related and substance-related. A journalistic style generally prioritizes neutrality and objectivity (Thomas, 2021), and in addition, science-related journalism must reflect the tentativeness and caution surrounding scientific research, such that it calls for hedging, to ensure accurate representations of the scientific findings (Jensen, 2008; Jensen et al., 2011). Language used in scientific health news probably is expected to adhere to these principles, more so than nonscience reporting. Therefore, such genre-related features likely are salient. Substance-related features instead might derive from the inherent complexity of scientific topics, which can shape how they are conveyed, such as with low readability, cognitive complexity, and formality. Such content tends to be dense, abstract, and technical.

Genre-Related Language Features

Hedging

A common linguistic strategy in scholarly discourse, hedging conveys tentativeness and acknowledges other possibilities and limitations (Hyland, 1996a, 1996b). In academic writing, careful presentations of unproven propositions are vital, and hedging functions to express caution and precision. Textual hedges (e.g., “possible” and “might”) convey purposive vagueness and create a deliberate distance between the writer and the conveyed content. In contrast, with boosters (e.g., “clearly” and “obviously”), writers assert their propositions with confidence and commitment (Hu & Cao, 2011; Hyland, 1998). As prior research suggests (Hyland, 1996a, 1996b), when writing contains more hedging, people associate it with being scientific. Extending this logic to coverage in news reporting on science research—and its contested, evolving findings—journalists might use rhetorical cues to signal uncertainty (Peters & Dunwoody, 2016), including hedging as one such feature. Thus, we predict:

Hypothesis 1: Hedging is a positive predictor of the percentage of scientific sentences in a health news story.

Sentiment: Positive and Negative

Scientists avoid emotionally charged language to maintain perceived objectivity and adhere to general ideals for rational discourse (Kueffer & Larson, 2014). However, researchers competing for limited journal space might present their research in more value-expressive ways. A review of scientific PubMed abstracts (1974–2013) shows increasing uses of value-laden positive (e.g., “groundbreaking” and “promising”) and negative (e.g., “discouraging” and “unpromising”) words over time (Vinkers et al., 2015). A large-scale investigation of life science research also indicates a positivity bias; researchers use more positive than negative words (Wen & Lei, 2022). Despite this trend, to signal neutrality, authors might try to avoid emotional words (Pang & Lee, 2004). Then, regardless of its valence, sentiment could violate people’s expectations of neutrality in science. If the very presence of sentiment undermines perceived neutrality in scientific research, it likewise might do so in science journalism. Therefore, we assess its relation to the share of scientific sentences in health news to test whether,

Hypothesis 2: (a) Positive emotion and (b) negative emotion are negative predictors of the percentage of scientific sentences in a health news story.

Objectivity versus Subjectivity

In natural language, linguistic elements that convey opinions signal subjectivity (Wiebe et al., 2004), whereas objectivity is indicated by accuracy and language that upholds the notion of truth (Boudana, 2011). Accordingly, textual information can be classified broadly (Liu, 2010), as either facts, which are objective statements regarding entities, events, and their attributes (e.g., “The finding was published in the journal Science on Thursday”), or opinions, which consist of subjective expressions conveying individual evaluations, assessments, and conjectures about entities, events, and their attributes (e.g., “Many patients hope this new treatment option is a game changer for their chronic pain management”). Natural language researchers also have sought to detect subjectivity (Pang & Lee, 2004), including in media coverage (Junqué de Fortuny et al., 2012). For example, health news on Twitter tends to be seen as objective rather than subjective (Kolajo & Kolajo, 2018), probably because it often derives from scientific research (Atkin et al., 2008), and people expect objectivity in scientific writing. Therefore,

Hypothesis 3: Objectivity is a positive predictor of the percentage of scientific sentences in a health news story.

Substance-Related Language Features

Readability

Reflecting the “ease of understanding or comprehension due to the style of writing” (Klare, 1963, p. 15), the readability of most scientific texts (e.g., abstracts, introduction, and conclusions of journal articles) is low (Hartley et al., 2003; Hayes, 1992). Furthermore, the rapid progression of science has resulted in increased specialization, greater scientific rigor (Sawyer et al., 2008), and rapid cumulative growth of scientific knowledge (Plavén-Sigray et al., 2017). Such trends encourage more complex language in scientific writing. News stories covering health issues or knowledge advances report on complex topics, often by citing scientific articles characterized by abstract, technical language (Fang, 2005). Journalists may need to use similarly difficult or complicated language to be accurate. When Plavén-Sigray et al. (2017) analyzed 709,577 article abstracts, published between 1881 and 2015 in 123 highly cited journals in the general, biomedical, and life sciences domains, they identified a decline in the readability of scientific texts over time. They also proposed a reason for this decline: A rise in scientific jargon has led to increasingly specialized vocabularies, which make texts less accessible to general audiences. Accordingly, we predict a negative relationship between readability (i.e., opposite of density) and the percentage of scientific sentences. Education research has established different formulas to detect the readability of scientific texts or health news (Gazni, 2011; Plavén-Sigray et al., 2017). For this study, we apply the New Dale–Chall Readability Formula (Chall & Dale, 1995), for which higher scores indicate greater comprehension difficulty.

Cognitive Complexity

Cognitive complexity, or “the complexity of one’s thoughts” (Woodard et al., 2021, p. 95), can be revealed by the language styles that writers use (Pennebaker et al., 2003). It is determined by two dimensions: differentiation and integration. Differentiation reflects the width of perspectives and the extent to which a person maintains distinct conceptualizations of a specific subject; it is characterized by the use of exclusive words (e.g., “unless” and “rather”) and negations (e.g., “without” and “never”) (Pennebaker & King, 1999). Integration instead captures the depth of processing, according to hierarchical links established across differentiated dimensions (Woodard et al., 2021; Wyss et al., 2015); it involves the use of conjunctions (e.g., “because” and “whenever”) (Graesser et al., 2004). Researchers have applied automated text analyses to detect cognitive complexity in Bob Dylan’s lyrics (Czechowski et al., 2016), parliamentary and presidential debates (Wyss et al., 2015), interviews, press conferences (Slatcher et al., 2007), political blogs (Brundidge et al., 2014), and online news comments (Moore et al., 2021). Although people might regard texts with language styles associated with scientificness as more complicated than those without them (Thiebach et al., 2015), we know of no research that explores whether cognitive complexity embedded in word uses can predict the scientificness of a text or news story.

Formality Versus Contextuality

The meanings of formal texts usually do not vary across contexts, whereas informal texts require contextual information to support accurate interpretations (Heylighen, 1999). The context refers to “everything available for awareness which is not part of the expression itself, but which is necessary to correctly interpret the expression” (Heylighen & Dewaele, 2002, p. 297). Heylighen and Dewaele (2002) argue that contextuality is a crucial aspect of linguistic styles and define ranges from high or “contextual” to low or “formal” levels. In this domain, formality suggests that “a maximum of meaning is carried by the explicit, objective form of the expression” (Heylighen & Dewaele, 2002, p. 298). The formality of an expression implies the invariance of its meaning across situations (Heylighen, 1999), which should be an important characteristic of scientific writing. Investigating language corpora across different European cultures, Heylighen and Dewaele (2002) confirm that scientific writing demonstrates greater formality than conversations and language in novels or movies, for example. Accordingly, we hypothesize that formality functions as a significant predictor of scientificness in the texts and health news stories we analyze herein. Heylighen and Dewaele (2002) also establish a holistic metric of the extent of contextual dependence in a text excerpt, which we use to evaluate the degree of contextual nuance and formality in a linguistic sample and to test our hypothesis:

Hypothesis 6: Formality is a positive predictor of the percentage of scientific sentences in a health news story.

Health News Types: Research News, Research-Supported News, or General News

We have posited that journalists adopt a scientific style when covering health news stories, either because their story builds on a scientific report or because the style signals credibility. In the former case, news stories about scientific research always should feature a higher proportion of scientific sentences and relevant language features. But in the latter case, the proportion of scientific sentences should not vary across different types of stories. Therefore, we explore three types of health news stories: those building on a scientific study or journal article (research news), those mentioning scientific studies as supporting arguments (research-supported news), and others (general news). We seek answers to the following research questions (RQs):

Research Question 1: Do different types of news stories feature different percentages of scientific sentences?

Research Question 2: Are the language features that predict scientificness in different types of news stories consistent?

Study 1

News Website Samples (2019–2020)

We identified the top four news websites in the United States (Newman et al., 2020) that are not news aggregators and that feature health sections (CNN, The New York Times, Fox News, and The Washington Post) and then crawled the health news sections on their websites for 2019–2020. The final sample includes 10,540 news stories (see Supplemental Table A1).

Establishing What Scientificness Means to the Public

According to prior literature, a piece of information is scientific if it is evidence-based (Radford, 2008), describes cause-and-effect relationships among events (Mertala, 2019), and involves systematic methods (Gauchat, 2011). To confirm whether the general public associates these features with scientificness in health news, we conducted a pilot study (see online Appendix A). The results confirm this consistency, so we use these three criteria of scientificness in the annotation process.

Determining Scientific Sentences

Annotating Scientific Sentences as Input for Machine Learning

We selected a sample of articles for annotation (online Appendix B). To ensure that the news stories contained news from different outlets, we randomly selected 264 stories from four outlets, constituting 12,593 sentences. To group sentences into scientific and nonscientific categories, we crowdsourced the annotation with the help of 835 MTurk workers. Each news article was assigned to a minimum of three annotators, and each annotator labeled all the sentences within a given article. Thus, each sentence was annotated by at least three annotators; if more than half of them rated a sentence as (not) scientific, it was designated as such. Among the 12,593 sentences, 53.22% (N = 6,703) were categorized as scientific and 46.77% (N = 5,890) as nonscientific.
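
The majority rule described above can be illustrated with a minimal sketch. The sentence identifiers and votes are hypothetical, and returning `None` for a tie is an assumption made here for illustration, because the text does not say how ties among an even number of annotators were resolved.

```python
from collections import Counter


def majority_label(votes):
    """Return the label chosen by more than half of the annotators, else None."""
    label, count = Counter(votes).most_common(1)[0]
    return label if count > len(votes) / 2 else None


# Hypothetical votes; each sentence has at least three annotators.
annotations = {
    "s1": ["scientific", "scientific", "nonscientific"],
    "s2": ["nonscientific", "nonscientific", "nonscientific"],
    "s3": ["scientific", "nonscientific", "scientific", "nonscientific"],
}

# Tie handling (None) is an assumption, not part of the reported procedure.
labels = {sid: majority_label(v) for sid, v in annotations.items()}
```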

Training Classifiers With Machine Learning to Group Sentences

The 12,593 sentences in this news sample were split into training, validation, and testing sets, with a ratio of 60:20:20 (online Appendix C). For the machine learning effort, we employed the Bidirectional Encoder Representations from Transformers (BERT) model for news classification. The classification results are in Supplemental Table A2. After incorporating the additional features, the model’s accuracy improved to 92%, with F1-scores of .91 for nonscientific sentences and .93 for scientific sentences. This classifier then categorized 409,448 sentences in 10,540 articles from four media sources from 2019 to 2020. The results indicated that 52.65% (N = 215,569) of the sentences were scientific, and 47.35% (N = 193,879) were nonscientific.
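
As a rough illustration of the data handling around the classifier, the sketch below shows a 60:20:20 split and the per-article share of scientific sentences. It assumes a simple shuffled split with a fixed seed; the actual splitting procedure is documented in online Appendix C and may differ. The function names are hypothetical.

```python
import random


def split_60_20_20(items, seed=0):
    """Shuffle items and split them into training, validation, and test sets."""
    items = list(items)
    random.Random(seed).shuffle(items)  # seeded shuffle: an assumption for reproducibility
    n_train = int(0.6 * len(items))
    n_val = int(0.2 * len(items))
    return (
        items[:n_train],
        items[n_train:n_train + n_val],
        items[n_train + n_val:],
    )


def scientific_share(sentence_labels):
    """Percentage of sentences in one article labeled scientific (1) rather than not (0)."""
    if not sentence_labels:
        return 0.0
    return 100.0 * sum(sentence_labels) / len(sentence_labels)


# Sentence indices stand in for the 12,593 annotated sentences.
train, val, held_out = split_60_20_20(range(12593))
```

The article-level outcome variable in the later regression corresponds to `scientific_share` applied to the classifier's predictions for each story.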

Determining Story Type

Annotating Story Types as Inputs for Machine Learning

We recruited three coders to annotate the articles, using the procedure outlined by Krippendorff (2019) (online Appendix D). They identified each story as research news (reporting a particular study), research-supported news (reporting a health issue but citing research to support arguments), or general news (not mentioning any research). After establishing acceptable intercoder reliability (Krippendorff’s α from .70 to .79), they annotated randomly selected articles from the remaining sample until at least 100 articles from each news outlet had been grouped into each category, resulting in a total of 2,985 coded news articles.

Training Classifiers With Machine Learning to Group Stories Into Types

The annotated set of 2,985 news articles provided the foundation for the machine learning (online Appendix E). With the recognition that research news and research-supported news may share similar keywords, such that an accurate classification could be challenging, we divided the classification procedure into two steps. First, we trained a model to classify news into articles related to scientific research (research news and research-supported news) or else general news. Second, we trained a model to classify research-related articles into research news or research-supported news. The machine learning approach relied on the BERT model. The results in Supplemental Table A3 show that both the first- and second-step classifiers achieved accuracy and F1-scores exceeding 90%. When we applied the well-trained classifiers to all the news articles, the distribution of the three categories was as follows: research news (14.92%), research-supported news (14.92%), and general news (70.15%).
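
The two-step procedure amounts to a cascade of two binary classifiers. The sketch below shows only that control flow; the keyword functions are hypothetical stand-ins for the two trained BERT models and are not meant to approximate their behavior.

```python
def classify_story(text, is_research_related, is_research_news):
    """Step 1 separates research-related from general news; step 2 splits the former."""
    if not is_research_related(text):
        return "general news"
    if is_research_news(text):
        return "research news"
    return "research-supported news"


# Hypothetical keyword rules standing in for the trained step-1 and step-2 models.
def toy_step1(text):
    lowered = text.lower()
    return "study" in lowered or "research" in lowered


def toy_step2(text):
    return "new study" in text.lower()


story_type = classify_story(
    "A new study links red meat to colon cancer.", toy_step1, toy_step2
)
```

Splitting the task this way means the second model only ever sees research-related stories, which matches the stated motivation that the two research categories share similar keywords.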

Text Feature Detection

For this study, we applied different methods, depending on which was most appropriate for each detection goal.

LIWC

With the Linguistic Inquiry and Word Count (LIWC) dictionary (Pennebaker et al., 2015), we detected hedging, sentiment, cognitive complexity, and formality. Hedging scores indicate the percentage of tentative words, less the percentage of certainty words, so positive scores indicate greater tentativeness or hedging, whereas negative scores suggest certainty. Next, we detected positive and negative words from the corpus. The positive and negative sentiment scores represent the number of positive or negative words, respectively, relative to the total word counts. We adopted Czechowski et al.’s (2016) approach for aggregating words from the LIWC 2015 categories of differentiation, conjunction, causality, insight, and preposition to generate cognitive complexity scores. Finally, we relied on Heylighen and Dewaele’s (2002) formula to generate formality scores by aggregating words with a nondeictic nature, then subtracting words with a deictic nature (see Supplemental Table A4 for the formula).
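
A dictionary-based score of this kind reduces to counting category words relative to total words. The sketch below is illustrative only: the word lists are tiny hypothetical stand-ins (the LIWC 2015 dictionary is proprietary and far larger), and hedging is computed as tentative minus certainty percentages so that positive scores indicate more hedging.

```python
import re

# Toy lexicons for illustration; these are NOT the LIWC 2015 categories.
TENTATIVE = {"may", "might", "possibly", "perhaps", "suggest", "suggests"}
CERTAINTY = {"always", "never", "clearly", "definitely", "certainly"}
POSITIVE = {"promising", "good", "hope"}
NEGATIVE = {"discouraging", "bad", "risk"}


def pct(words, lexicon):
    """Percentage of words that belong to the given lexicon."""
    if not words:
        return 0.0
    return 100.0 * sum(w in lexicon for w in words) / len(words)


def style_scores(text):
    """Return hedging and sentiment percentages for one text."""
    words = re.findall(r"[a-z']+", text.lower())
    return {
        # Positive values mean more tentative than certainty words.
        "hedging": pct(words, TENTATIVE) - pct(words, CERTAINTY),
        "positive": pct(words, POSITIVE),
        "negative": pct(words, NEGATIVE),
    }


scores = style_scores("The drug might reduce risk and results are promising")
```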

Other Approaches

For style characteristics that LIWC cannot detect, we adopted alternative methods.

Objectivity versus subjectivity (supervised machine learning)

We relied on a classifier trained by Pang and Lee (2004) to detect subjective sentences in the sample. Objectivity scores represent the percentage of sentences that are not subjective, multiplied by 100 (see Supplemental Table A4).

Readability formula

We applied the New Dale–Chall Readability formula: score = .1579 × (difficult words/words × 100) + .0496 × (words/sentences). Difficult words are English words that do not appear in the easy word list provided by Chall and Dale (1995). Higher scores indicate lower readability.
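
The formula can be sketched in a few lines. The tokenization and sentence splitting below are simplifying assumptions, and the easy word list is a toy stand-in for the Chall and Dale (1995) list. The commonly cited version of the formula also adds a constant when difficult words exceed 5% of the text; that adjustment is omitted here because the text does not mention it.

```python
import re


def new_dale_chall(text, easy_words):
    """Raw score: .1579 * percent difficult words + .0496 * average sentence length."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    words = re.findall(r"[a-z']+", text.lower())
    if not words or not sentences:
        return 0.0
    difficult = [w for w in words if w not in easy_words]
    pct_difficult = 100.0 * len(difficult) / len(words)
    avg_sentence_length = len(words) / len(sentences)
    return 0.1579 * pct_difficult + 0.0496 * avg_sentence_length


# Toy easy-word list; the real list contains roughly 3,000 familiar words.
EASY = {"the", "dog", "ran", "to", "a", "park"}
score = new_dale_chall("The dog ran to a park. The physician ran.", EASY)
```

In this toy text, one of nine words ("physician") is difficult and the average sentence length is 4.5 words, so a text with more jargon or longer sentences would receive a higher score, that is, lower readability.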

Results

Hypothesis Tests

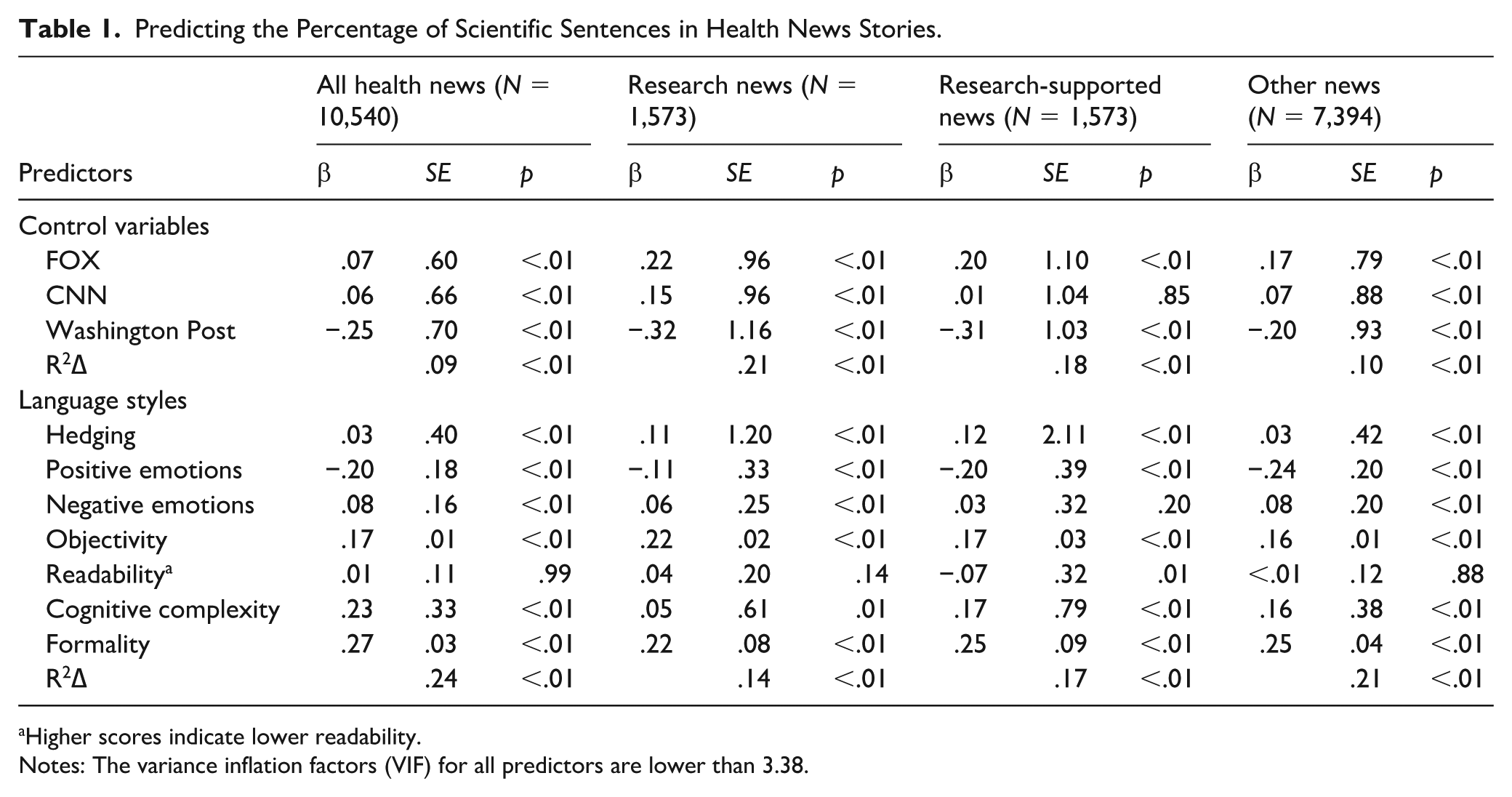

When we regressed the percentage of scientific sentences on the six predictors (mean-centered), controlling for media outlet variation, hedging (H1, β = .03, SE = .40, p < .01), objectivity (H3, β = .17, SE = .01, p < .01), cognitive complexity (H5, β = .23, SE = .33, p < .01), and formality (H6, β = .27, SE = .03, p < .01) were positive predictors of the percentage of scientific sentences, in support of H1, H3, H5, and H6. Positive emotion was a negative predictor (β = −.20, SE = .18, p < .01), in support of H2a (model 1, Table 1). However, inconsistent with H2b, negative emotion was a positive predictor (β = .08, SE = .16, p < .01), and readability was not a significant predictor.

Table 1. Predicting the Percentage of Scientific Sentences in Health News Stories.

Notes: Higher readability scores indicate lower readability. The variance inflation factors (VIF) for all predictors are lower than 3.38.

Research Questions

With RQ1, we consider whether percentages of scientific sentences vary across news types. According to an analysis of variance, the effect of news type on the percentage of scientific sentences in the stories was significant (Supplemental Table A5). The percentage of scientific sentences was higher in research news (75.15%) than in research-supported news (58.67%) and general news (53.13%). With regard to RQ2, we investigate if the findings regarding the predictive effects of language features on scientificness vary across news types. The findings remained similar except for negative emotion and readability in the research-supported news sample (Table 1).
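
For readers unfamiliar with the test behind RQ1, the sketch below computes a one-way analysis of variance F statistic from first principles. The group values are invented for illustration and are not the study's data; the reported test statistics are in Supplemental Table A5.

```python
from statistics import mean


def one_way_anova_f(groups):
    """F = (between-group mean square) / (within-group mean square)."""
    all_values = [x for g in groups for x in g]
    grand_mean = mean(all_values)
    k, n = len(groups), len(all_values)
    ss_between = sum(len(g) * (mean(g) - grand_mean) ** 2 for g in groups)
    ss_within = sum((x - mean(g)) ** 2 for g in groups for x in g)
    return (ss_between / (k - 1)) / (ss_within / (n - k))


# Hypothetical percentages of scientific sentences per story, by news type.
research = [70.0, 75.0, 80.0]
research_supported = [55.0, 60.0, 65.0]
general = [50.0, 55.0, 60.0]

f_stat = one_way_anova_f([research, research_supported, general])
```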

Discussion

Our analysis reveals, across all analyzed samples, that hedging, objectivity, cognitive complexity, and formality are positive predictors and positive emotion is a negative predictor of the percentage of scientific sentences. Contrary to our expectations, negative emotion emerged as a positive predictor, and readability did not significantly predict the percentage of scientific sentences. The patterns of results remain similar when we assess only research news stories.

Normative Expectations and Perceived Scientificness of Health News

Beyond the idea that language features objectively predict the scientificness of a health news story, it also is pertinent to consider how readers’ subjective perceptions of these language features inform their views. Drawing on LET, we propose that when health news messages align with idealized normative expectations of scientific writing, by featuring relevant linguistic features, they are more likely to be judged as scientific. In indirect support of this proposition, prior research shows that messages using expected language features, such as lexical and syntactic simplicity, tend to be more persuasive among audiences who expect information to be delivered concretely (Averbeck & Miller, 2014). Debates that use low-intensity language, which aligns with voters’ expectations of trusted politicians, also appear more effective (Clementson et al., 2023). Building on this reasoning, we hypothesize:

When a news story features high levels of scientific content (e.g., scientific procedures), it generates more supportive attitudes toward the featured health issues (Chang, 2022). We argue that when readers perceive a news story as having the three genre-related and three substance-related language features associated with scientificness, they also find the news article more effective in delivering health messages. Such perceived message effectiveness (PME) (Dillard et al., 2007), as is often explored in health communication research, offers an important evaluative indicator of health messages (Noar et al., 2020).

Finally, as empirical evidence has established, health studies frequently yield behavioral recommendations, especially if they link modifiable behaviors to pertinent risks or protection tactics (e.g., sedentary time elevates cardiovascular risk), and news coverage often reports on these links and recommendations (Chang, 2016). We, therefore, test whether, in news articles that refer to research-supported recommendations, greater PME predicts stronger behavioral change intentions. Prior research indicates that PME is positively associated with intentions to adopt advocated behaviors (Bigsby et al., 2013). With Study 2, we test its effect on behavioral intentions to adopt recommendations being reported on, in line with the following hypothesis:

Study 2

Recruitment Platforms, Participants, and Procedures

We recruited 362 U.S. MTurk participants who passed attention check questions and randomly assigned each to read a single news article, selected from a set of 16 English-language stories that pertained to either reducing sun exposure to prevent skin cancer or reducing red meat consumption to prevent colon cancer, each of which involved eight story variations (see online Appendix G). The story variations help reduce the possibility that participants’ responses might be triggered by idiosyncratic characteristics of a particular news story. After reading the assigned news story, participants rated how effectively it delivers the message; how scientific the message is; and their perceptions of the (a) hedging, (b) neutrality, (c) objectivity, (d) readability, (e) cognitive complexity, and (f) formality of the story.

Measures

All questions were rated on 5-point Likert-type scales. The means and correlations among the variables are in Supplemental Table A6. Participants first rated their behavioral intentions using Chang’s (2020) scale, with three items: “I probably will/am likely to/would like to adopt the behavior advocated in the news” (α = .96). Then, they rated PME (how effectively the news story delivers the message) using Dillard et al.’s (2007) scale, with five items: “The message delivered in the news story is logical/plausible/sound/believable/contains persuasive arguments” (α = .89). Next, they rated how scientific the news appears to them, using a single item (Brewer, 2013; Chang, 2022; Thiebach et al., 2015).
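The α values reported for the multi-item scales are conventionally computed as Cronbach's alpha. The article does not state which software produced them, so the following Python sketch is only an illustration of the standard formula; the function name `cronbach_alpha` and the demo responses are hypothetical and are not study data.

```python
import numpy as np

def cronbach_alpha(items) -> float:
    """Cronbach's alpha for an (n_respondents, k_items) response matrix."""
    items = np.asarray(items, dtype=float)
    k = items.shape[1]
    # Sample variance of each item, and of the summed scale score.
    item_vars = items.var(axis=0, ddof=1)
    total_var = items.sum(axis=1).var(ddof=1)
    return (k / (k - 1)) * (1.0 - item_vars.sum() / total_var)

# Made-up 5-point responses from five respondents to a three-item scale.
demo = np.array([
    [5, 4, 5],
    [3, 3, 4],
    [2, 2, 2],
    [4, 5, 4],
    [1, 2, 1],
])
alpha = cronbach_alpha(demo)
print(f"alpha = {alpha:.2f}")
```

Alpha approaches 1 when the items covary strongly relative to their individual variances, which is the pattern that values such as .96 and .89 suggest.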

Following these measures, they provided their perceptual ratings for both genre- and substance-related features. Perceived hedging used one item, “The news article is full of hedges (e.g., would, might, or probably) or words associated with uncertainty,” as did neutrality, “The overall tone of the news article is neutral,” whereas we measured objectivity with two items: “The journalist’s coverage of the health issue in this news story is objective/subjective [R]” (ρ = .43, p < .01). In addition, they rated the tone valence of the news article with two items, “The overall tone of the news story is positive/negative [R]” (ρ = .67, p < .01), which we averaged to gauge valence. Furthermore, participants rated readability. The readability formula from Study 1 was based on the number of difficult words, so we asked the Study 2 participants to rate the readability of the news story with two items that reflect this idea: “It is difficult to understand the news article” and “The words used in this news article are very difficult” (ρ = .74, p < .01). As in Study 1, higher scores indicated lower readability. They also rated the cognitive complexity of the article with two items, “It requires a lot of cognitive effort to understand the article,” and “I put a lot of cognitive effort into understanding the article” (ρ = .65, p < .01), and formality with one item: “The news article is written in a highly formal style.”
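For the two-item measures that include a reverse-scored item ([R]), a composite on a 5-point scale can be formed by flipping the reversed item and averaging the pair. The text states that the valence items were averaged; applying the same step to objectivity is an assumption here. The sketch below uses hypothetical names and made-up responses.

```python
import numpy as np

SCALE_MIN, SCALE_MAX = 1, 5  # 5-point Likert-type scale, as in Study 2

def reverse_score(x):
    """Flip a response so that 1 becomes 5, 2 becomes 4, and so on."""
    return (SCALE_MIN + SCALE_MAX) - np.asarray(x, dtype=float)

def two_item_composite(item, reversed_item):
    """Average a regular item with a reverse-scored item."""
    return (np.asarray(item, dtype=float) + reverse_score(reversed_item)) / 2.0

# Made-up responses from four participants (not study data).
objective = np.array([5, 4, 2, 3])    # "... is objective"
subjective = np.array([1, 3, 4, 3])   # "... is subjective" [R]
objectivity = two_item_composite(objective, subjective)
print(objectivity)
```

After reverse scoring, higher composite values consistently indicate more of the construct, so the two items can be correlated or averaged without sign confusion.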

Results

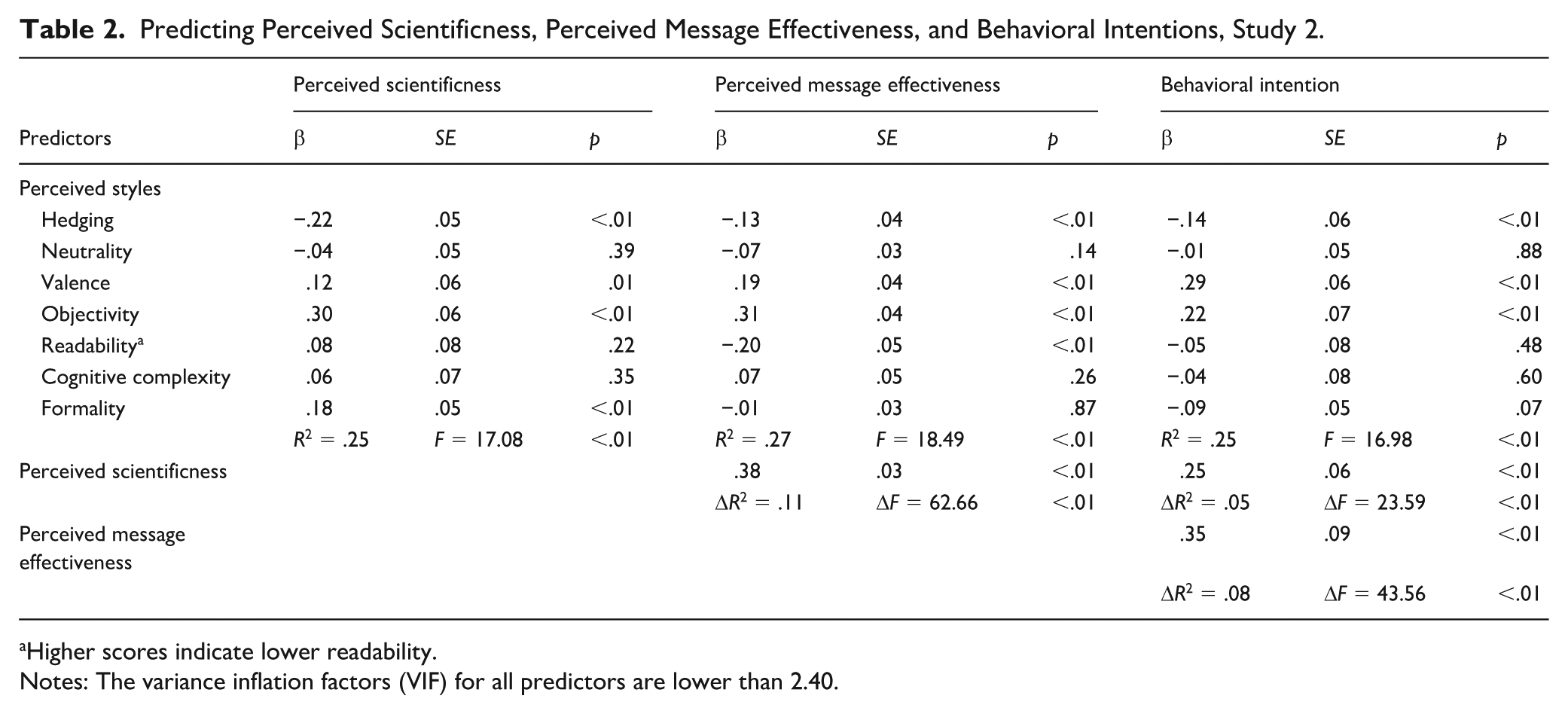

When we regressed perceived scientificness on the six characteristics (Table 2) (R² = .25), objectivity emerged as a positive predictor, as expected. However, hedging was a negative predictor and valence a positive predictor. Valence reflects the average of the positivity and reverse-scored negativity items, so we also analyzed these measures separately. As Supplemental Table A7 indicates, the positivity item significantly and positively predicted perceived scientificness, whereas the reverse-scored negativity item was not significant. Among the substance-related features, formality was a positive predictor. In contrast with our expectations, though, readability and cognitive complexity were not significant predictors. These findings thus offer support for only H7c and H7f.

Table 2. Predicting Perceived Scientificness, Perceived Message Effectiveness, and Behavioral Intentions, Study 2.

Notes: For readability, higher scores indicate lower readability. The variance inflation factors (VIF) for all predictors are lower than 2.40.

Using multiple regression models, we regressed perceived effectiveness on the six perceived styles (Step 1) and perceived scientificness (Step 2) (R² = .38). Perceived scientificness significantly predicted the PME of the news (Table 2), in support of H8. Then, regressing intention to adopt the behaviors on the six perceived styles (Step 1), perceived scientificness (Step 2), and PME (Step 3) (R² = .38) revealed that message effectiveness was a positive predictor of behavioral intentions (Table 2), in support of H9.
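The stepwise entry of predictors described here can be illustrated with a short hierarchical regression sketch. It uses ordinary least squares via NumPy on simulated data; the variable names, effect sizes, and random seed are assumptions chosen for illustration and do not reproduce the study's data or estimates.

```python
import numpy as np

def r_squared(X, y):
    """R^2 of an OLS regression of y on X, with an intercept added."""
    X1 = np.column_stack([np.ones(len(y)), X])
    beta, *_ = np.linalg.lstsq(X1, y, rcond=None)
    resid = y - X1 @ beta
    return 1.0 - (resid @ resid) / np.sum((y - y.mean()) ** 2)

# Simulated stand-ins for the Study 2 variables (hypothetical values).
rng = np.random.default_rng(42)
n = 362                                   # sample size reported in the text
styles = rng.normal(size=(n, 6))          # six perceived style ratings
scientificness = (0.4 * styles[:, 2] + 0.3 * styles[:, 5]
                  + rng.normal(size=n))
pme = 0.5 * scientificness + 0.2 * styles[:, 2] + rng.normal(size=n)

# Step 1: styles only. Step 2: styles plus perceived scientificness.
r2_step1 = r_squared(styles, pme)
r2_step2 = r_squared(np.column_stack([styles, scientificness]), pme)
delta_r2 = r2_step2 - r2_step1
print(f"Step 1 R2 = {r2_step1:.3f}, Step 2 R2 = {r2_step2:.3f}, "
      f"change = {delta_r2:.3f}")
```

The increase in R² from Step 1 to Step 2 indicates how much variance the added predictor explains beyond the style ratings, which is the logic behind entering perceived scientificness and then PME in later steps.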

Discussion

As expected, objectivity and formality were positively associated with perceived scientificness, but in contrast with our expectations, hedging was a negative predictor, and valence (but not neutrality) was a positive predictor. The significant effect of valence appears mainly attributable to the positive effect of the positivity measure. Readability, neutrality, and cognitive complexity did not contribute to assessments of scientificness. Notably, perceived scientificness was consistently associated with the perceived effectiveness of the story, which in turn encouraged stronger behavioral intentions.

General Discussion

Findings and Contributions

Prior research has focused on understanding people’s factual knowledge of science, their scientific literacy (for a review, see Laugksch, 2000), and their attitudes toward and perceptions of science (Wellcome Trust, 2021). Relatively less attention has been devoted to what leads people to perceive information, particularly health information, as “scientific.” In a notable exception, Chang (2022) provides preliminary insights into the content features in health news that contribute to perceptions of scientificness. To provide a fuller picture, we also address which language features are associated with such perceptions. This investigation is particularly timely, given the challenges facing science journalism today, such as the effects of the gig economy, competition from nonspecialist journalists, and the spread of scientific misinformation (Anderson & Dudo, 2023). These challenges contribute to growing concerns about the loss of public confidence and trust in science journalism. It is thus essential to identify which factors, related to both style and content, influence public perceptions of scientificness and enhance the effectiveness of scientific news.

By investigating the linguistic aspects of scientificness, our research confirms that language styles are associated with scientificness in health news stories, largely in line with our expectations. Without considering story types, among the genre-related styles studied herein, hedging and objectivity function as positive predictors and positive emotion as a negative predictor. Unexpectedly, negative emotion is a positive predictor, perhaps because health news often focuses on illnesses that require scientific advances to be overcome. The positive effects of negative words also might stem from the hybridity of scientific and popular journalistic styles, which evoke newsworthiness by employing negative words (Molek-Kozakowska, 2017). Among the substance-related styles, cognitive complexity and formality are positive predictors, whereas readability is not. As expected, research news contains a greater proportion of scientific sentences than research-supported or general news. For research news, the predictive effects of the language features mirror the patterns observed when we analyze all news types together.

Our findings indicate that the participants in Study 2, functioning as active decision-makers, respond positively to health news they perceive as scientific and express willingness to adopt the behaviors advocated by the research being reported in the news stories. By exploring the effects of language features on readers’ subjective perceptions, Study 2 further clarifies that perceived objectivity and formality are positively associated with perceived scientificness, echoing the Study 1 results. These language features encourage participants to regard a news story as more scientific, which increases the likelihood that they find it effective in delivering health messages and then adopt the behaviors referenced in the news.

Our multimethod design, perhaps unsurprisingly, also reveals some discrepant results across Study 1 (objective) and Study 2 (subjective). The computational linguistics approach in Study 1 uses method triangulation to identify language features; the classical message exposure paradigm in Study 2 captures readers’ subjective perceptions. By employing these different approaches, we can more accurately establish the relationships of language styles with perceived scientificness. Yet many consumers lack the ability to detect specific language features consciously, such that the Study 2 findings may indicate readers’ unconscious preferences. For example, readers might prefer to associate low hedging and high positivity with perceived scientificness. Such divergent findings underscore the value of multifaceted research approaches for unpacking how language styles shape readers’ perceptions. Ultimately, the goal is not merely to identify what constitutes objective scientificness but to understand which elements resonate with audiences.

Further Research Directions

Researchers can use similar approaches to explore whether language styles affect the degree to which a text signals other important characteristics in health communication, beyond scientificness, such as truthfulness, credibility, and informativeness. Our proposed approach might be applied to detect scientificness in other substantial text corpora too, such as social media posts about COVID-19. This study explores two common categories of language features that we expect to be associated with scientificness. Depending on the characteristics being researched, other language styles might be more relevant. For example, words associated with negation and discrepancy are negatively associated with credibility (Patro & Rathore, 2020).

In line with indications that research abstracts increasingly feature value-laden positive and negative words (Vinkers et al., 2015), we find a positive association between negative emotive language and perceived scientificness in Study 1. This reported association also might imply genre crossover effects, such that scientific reporting incorporates popular stylistic elements (Molek-Kozakowska, 2017). Extensions of the computational approach in Study 1 might identify linguistic features characteristic of popular journalism and then determine if those features increasingly appear in scientific reporting specifically or news reporting more broadly.

Another societally relevant goal is to compare language features in actual, science-based news with those in fake news. For example, fake news might employ fewer language features associated with scientificness, and if so, readers might be less susceptible to mistaking it for scientific information. However, if fake news uses language features that mimic those of science-based news, it would raise new concerns about the likelihood that readers perceive nonscientific information as scientific and thus suffer increased risk of becoming misinformed.

Further research might manipulate language styles to examine their effects on readers’ responses. For example, by crafting two news stories that cover the same issue content but vary in language styles (hedging, sentiment, objectivity, readability, cognitive complexity, formality), researchers could learn how those styles influence readers’ responses or the effects on perceived scientificness. The effects of language styles on readers’ other responses, such as perceived credibility and persuasiveness, also can be tested.

Formality emerged as a significant predictor of perceived scientificness in both Studies 1 and 2, consistent with our expectations. However, formality also tends to be more prevalent in low-context cultures like the United States, so a relevant extension for further research would be to test similar hypotheses in other cultural contexts. Culturally grounded expectations—shaped by individual, cognitive, cultural schemas (Boutyline & Soter, 2021)—influence interpretations of language features. Cross-cultural comparisons also might help determine whether the effects generalize across languages or are specific to certain linguistic and cultural environments.

Limitations

Due to a lack of established scales, Study 2 relies on single-item measures for some constructs. Such measures are appropriate for conceptually homogeneous constructs, but they also are susceptible to measurement error and may lack the reliability of multi-item scales (Diamantopoulos et al., 2012). The linguistic analysis for the four language features is based on LIWC, which offers good transparency and psychological insights according to predefined linguistic categories. For the subjectivity/objectivity classification, however, we used supervised machine learning, which arguably captures emotional nuance and context-sensitive usage more effectively (Bantum et al., 2017), such that applications of machine learning to all the linguistic features might extend our findings. Our proposed two-level typology distinguishes genre-related (e.g., objectivity and neutrality) from substance-related (e.g., formality and cognitive complexity) features, but some features (e.g., formality) could span both domains, and our framework might not capture such intersectionality fully. Moreover, our study focuses exclusively on English-language news outlets in the United States, so it remains unclear whether similar linguistic features signal scientificness in other cultural and linguistic contexts. Future research should broaden the scope to non–English-language settings to assess the universality or cultural specificity of these communicative markers. Finally, our analysis focuses exclusively on health news, so the generalizability of findings to other scientific domains remains an open question.

Conclusion

Our multimethod studies suggest that language styles shape perceptions of scientificness, which can motivate people to engage in beneficial, recommended behaviors. This research is especially relevant in modern-day settings, which feature misinformation, powerful challenges to science journalism, and diminished public trust. In such environments, identifying the factors that shape perceptions of scientificness and understanding their impacts represent critical goals.

Supplemental Material

Supplemental material, sj-docx-1-scx-10.1177_10755470251386241 for Exploring Language Styles Associated With Scientificness in Health News: A Dual Approach Using Text Mining and Reader Perception Studies by Chingching Chang, Ying-Ju Chiu, Yu-Ming Hsieh and Hao-Hsuan Wang in Science Communication

Footnotes

Acknowledgements

We are grateful to Jeff Chen for his assistance with data collection for Study 1.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This study was funded by the National Science and Technology Council, Taiwan (Grant No. 110-2511-H-001-001-MY3).

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.
