Abstract

Trust in science is polarized along political lines—but why? We show across a series of highly controlled studies (total N = 2,859) and a large-scale Twitter analysis (N = 3,977,868) that people across the political spectrum hold stereotypes about scientists’ political orientation (e.g., “scientists are liberal”) and that these stereotypes decisively affect the link between their own political orientation and their trust in scientists. Critically, this effect shaped participants’ perceptions of the value of science, protective behavior intentions during a pandemic, policy support, and information-seeking behavior. Therefore, these insights have important implications for effective science communication.

Trust in scientists and their findings is crucial for modern societies (Hendriks et al., 2016) and key to effective science communication that can tackle global challenges such as pandemics (Algan et al., 2021) and the climate crisis (Cologna & Siegrist, 2020). Even though scientists, in general, enjoy a relatively high level of trust (Hoogeveen et al., 2022; B. Kennedy et al., 2022), this positive view is not shared unanimously. In particular, there is manifold evidence that trust in scientists is a politically polarized issue: Conservatives trust science and scientists less than do liberals (Azevedo & Jost, 2021; Li & Qian, 2022). Conservatives also agree less with the scientific consensus on anthropogenic causes of climate change or human evolution, even if they are highly educated (Drummond & Fischhoff, 2017). Various explanations about the social-cognitive underpinnings of this political divide have been proposed. For example, some scholars have argued that polarized trust in science can be explained by differing cognitive sophistication or that conservatives might lack basic scientific or analytic reasoning skills (Pennycook et al., 2022). However, findings show that education might even amplify conservatives’ distrust in science, suggesting that better education cannot solve this issue (Drummond & Fischhoff, 2017). Others even proposed that conservatism is generally incompatible with trust in science as it may conflict with fundamental norms of science like universalism and communism (Lewandowsky & Oberauer, 2021). Instead of looking for fundamental differences between liberals and conservatives, we now provide a unifying stereotype-based explanation for both liberals’ and conservatives’ trust in science: We propose that trust in scientists is predicted by the perceived match between people’s stereotypes about scientists’ ideology and their own political orientation across the political spectrum. 
In other words, if most scientists are viewed as liberal, this ideological (dis)similarity might explain why conservatives distrust them while liberals trust them.

Understanding the roots of trust in scientists is crucial for building trust in science and reducing polarization, which could have various benefits on an individual and societal level. For example, people who trust scientists take climate change more seriously and are more willing to engage in climate-friendly behavior (Cologna & Siegrist, 2020; Rutjens et al., 2018). Trust in science can also lead to better medical decisions and help people reject ineffective alternative treatments (Soveri et al., 2021), and it can boost appropriate protective behaviors during a pandemic (Dohle et al., 2020; Sturgis et al., 2021). Across 12 countries (Algan et al., 2021), trust in scientists was the critical determinant of societies’ resilience in coping with the COVID-19 pandemic. These examples illustrate that people who trust scientists are, on average, better equipped for making decisions for their own and society’s benefit.

Given these various advantages of trust in scientists, it seems surprising that any specific political orientation (e.g., conservatism) would be linked to distrusting them. This political polarization is especially puzzling because scientists are often thought of as non-partisan experts, standing above the political divide (Flores et al., 2022; B. Kennedy & Funk, 2015). However, recent theorizing and research on stereotypes about groups suggest that this idealized view likely does not reflect how scientists are perceived in reality. Building on the assumptions from the Agency-Beliefs-Communion (ABC) model and the Stereotype Content Model on social group perception (Fiske et al., 2002; Koch et al., 2016; Koch, Imhoff, et al., 2020), we predict that people hold specific stereotypic beliefs about scientists’ political orientation and that these stereotypes are decisive for their trust in scientists. We define stereotypes as generalized beliefs based on someone’s social group membership (which does not necessarily imply inaccuracy; for a detailed overview of stereotype research, see Nelson, 2015).

These theoretical models posit that a social group’s perceived political orientation (i.e., conservative/liberal beliefs) is a central and spontaneously employed stereotype dimension of social group perception (Koch et al., 2016). Alongside other prominent stereotype dimensions such as a group’s warmth/communion and competence/agency (Fiske et al., 2002; Koch et al., 2016; Koch, Imhoff, et al., 2020), people have a certain view of where to place a specific group and its members on the conservative-liberal spectrum. In general, stereotypes are highly influential when people have little information about someone (Rubinstein et al., 2018), and the political dimension, in particular, is especially relevant when people do not have relation-oriented goals with them (Nicolas et al., 2021). This is likely the case with scientists (a group that many only know from the news) who engage in unilateral science communication. This suggests that people who are confronted with scientists in general or scientists from specific disciplines (e.g., sociologists) might hold a specific, stereotypic image of these scientists’ political orientation.

Recent work on stereotypes about groups suggests that people compare their own political orientation with the stereotypically perceived political orientation of that group and then, based on this comparison, decide how communal and trustworthy that group is (Koch, Dorrough, et al., 2020; Koch, Imhoff, et al., 2020): If they view the respective group as politically similar to themselves, they tend to trust and cooperate with that group, whereas dissimilarity is associated with distrust and reduced cooperation. Regarding trust in science specifically, recent research (Altenmüller et al., 2023) builds on similar stereotype-based reasoning (Beauchamp & Rios, 2020; Fiske & Dupree, 2014; Gligorić et al., 2022) and demonstrates that the more similar people feel toward scientists, the more they trust in scientists’ integrity and benevolence (i.e., morality-based trust) and scientists’ expertise (i.e., expertise-based trust). This is also in line with research on value similarity and trust in experts (Siegrist et al., 2000). Thus, the match of political stereotypes about scientists and people’s own political orientation might directly translate to their trust placed in scientists.

In sum, we propose a stereotype-based explanation for politically polarized trust in science. We expect that people hold spontaneous political stereotypes about scientists in general (e.g., “scientists are liberal”) and about specific scientific disciplines (e.g., “sociologists are liberal” and “economists are moderate”). We assume that the interaction between individuals’ own political orientation and these stereotypic perceptions of scientists’ political orientation may explain politically polarized trust in scientists. Consequently, we expect that conservative individuals trust stereotypically liberal scientists less than stereotypically conservative scientists. In contrast, liberal individuals should trust stereotypically liberal scientists more than stereotypically conservative scientists. To test this idea, we performed a series of highly controlled online studies (Studies 1–4) in Germany and the United States and a proof-of-concept study based on large-scale Twitter data (i.e., followers of political parties and scientists). We share all preregistrations, materials, code, and data on the OSF (https://osf.io/rvj4q). As Studies 3 and 5 relied on reanalyzing existing data, these studies were not preregistered. Regarding Study 3, we can only share data that is directly related to our reported analyses, but not the whole data set that an external collaborator collected. We cannot share data for Study 5, where sharing would violate the Twitter API terms of use. The Ethics Committee of the Faculty of Human Sciences, University of Cologne, approved this research project. All analyses were carried out in R (see OSF for used packages and versions), continuous predictors were mean-centered in regression analyses, and, in line with our preregistrations, we calculated one-sided p-values for directional hypotheses (as noted in the following analyses). 
Additional tables summarizing means, standard deviations, and correlations of key variables can be found in the Supplementary Information (Tables S1-S5).

Study 1

Methods

In our first study, we experimentally established the causality of the proposed effect. Participants were U.S.-based individuals recruited on Amazon MTurk (CloudResearch approved) in exchange for $0.50. We employed an experimental, between-subjects design and randomly assigned participants to one of two conditions (“liberal research institute”-condition, “conservative research institute”-condition). As preregistered, we used sequential testing as our sampling strategy (Lakens, 2014), which means increasing the sample size if interim analyses are non-significant while controlling the type I error rate by adjusting the α-level. At the first interim analysis, the predicted interactions were already significant (see below), even when using a corrected α of .0015. We thus terminated data collection after collecting 203 participants (slightly above the preregistered 200 participants due to limited control over the recruitment process on MTurk). As preregistered, to ensure data quality, we excluded 4 participants who failed a basic attention check (see below). The final sample consisted of 199 participants (36.68% female; age [mean ± SD] 38.29 ± 10.53 years) who, on average, reported being politically moderate-to-slightly-liberal (3.08 ± 1.82, on a scale from 1 = very liberal to 7 = very conservative).

Participants read a short description of scientists working at either a liberal or a conservative research institute (e.g., “[ . . . ] this research institute is often described as liberal [conservative]. Many of their research topics seem consistent with core topics of the Democratic [Republican] party [ . . . ]”), depending on their experimental condition. Then, participants completed the METI trust measure (Hendriks et al., 2015), consisting of 14 bipolar items measuring morality-based trust (e.g., dishonest—honest, α = .98) and expertise-based trust (e.g., incompetent—competent, α = .95) in the imagined scientists on a 7-point scale. Participants also completed a manipulation check (“These scientists are likely”: 1 = very liberal, 7 = very conservative). Further, participants indicated how similar they felt to these scientists (“In terms of my own political and ideological views, I feel similar to this group”: 1 = not at all, 7 = completely), how they perceived the value of science produced by these scientists (“Relative to other scientific institutes, how much do you think society will benefit from the results produced by these scientists?”: 1 = not at all, 7 = very much) and they completed an attention check (“If you read this, select ‘not at all’”). Finally, participants reported their age, gender, and own political orientation using two items (“What is your political orientation?”: 1 = very liberal, 7 = very conservative; “What is your political preference?”: 1 = “strongly prefer Democrats,” 7 = “strongly prefer Republicans”), which we averaged as they were highly correlated, r = .84, p < .001.

Results

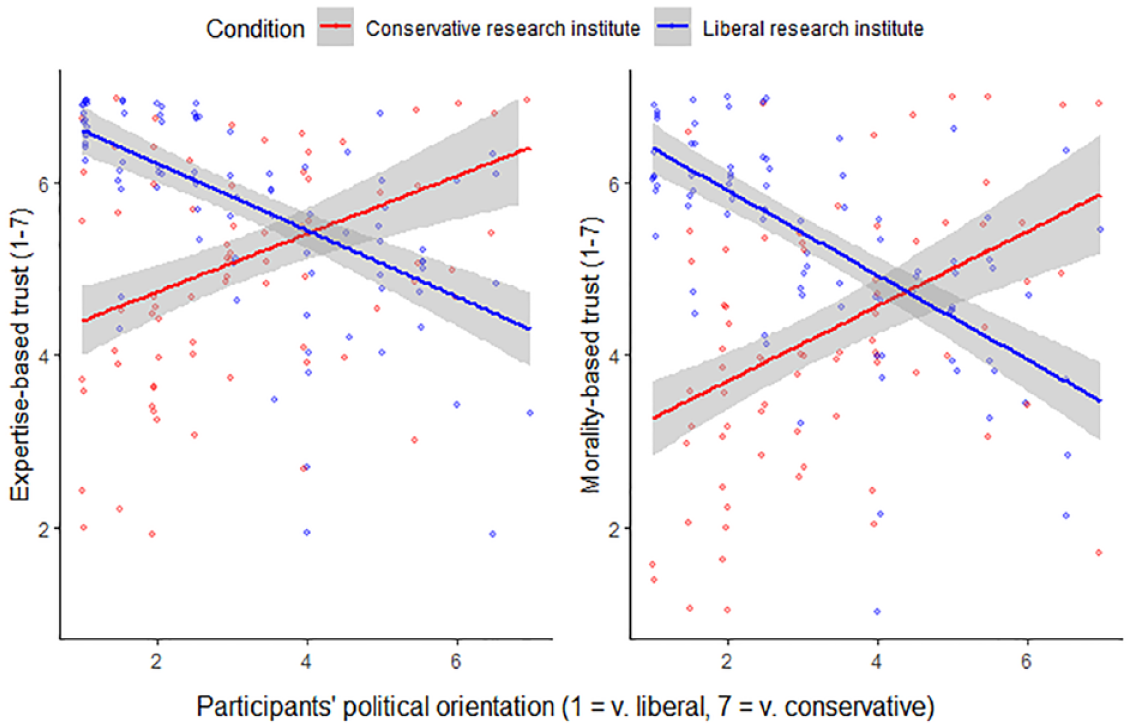

The manipulation check indicated a clear perceived difference, p < .001, d = 4.12, between the conservative (M = 6.32, SD = 0.91) and the liberal research institute (M = 1.84, SD = 1.23). The results provided experimental evidence that scientists’ perceived political orientation causally influences the link between participants’ own political orientation and their trust in these scientists, both for morality-based trust, B = −0.92, SE = 0.10, p < .001, ΔR² = .28, and expertise-based trust, B = −0.72, SE = 0.09, p < .001, ΔR² = .23. That means, for participants who imagined scientists working at a liberal research institute, conservatism was negatively associated with both types of trust. However, this pattern fully reversed for participants imagining scientists at a conservative research institute (Figure 1). We observed the same interaction when predicting participants’ indicated value of scientific output produced by these scientists, B = −1.09, SE = 0.11, p < .001, ΔR² = .30.

Figure 1. Expertise-Based and Morality-Based Trust as a Function of Condition (Conservative vs. Liberal Research Institute) and Participants’ Political Orientation in Study 1.

Importantly, as predicted, mediated moderation models suggested that the moderated relationship between political orientation and trust in scientists was mediated via perceived ideological similarity, both for expertise-based trust (index of mediated moderation = −0.35, 95% CI [−0.57; −0.16], Fig. S1a in the Supplementary Information) and for morality-based trust (index of mediated moderation = −0.68, 95% CI [−0.92; −0.46], Fig. S1b in the Supplementary Information).
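The interaction tests reported for Study 1 follow the general logic of a moderated regression with a mean-centered continuous predictor. The following is a minimal Python sketch of that logic, not the authors' R code (which is available on the OSF). All data are simulated, the coefficient values in `true_b` are made up, and the coding of `condition` (0 = liberal institute, 1 = conservative institute) is an assumption; the sign of the interaction coefficient depends on this coding.

```python
import random


def solve_linear(a, b):
    """Solve a @ x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for r in reversed(range(n)):
        tail = sum(m[r][c] * x[c] for c in range(r + 1, n))
        x[r] = (m[r][n] - tail) / m[r][r]
    return x


def ols(rows, y):
    """Ordinary least squares via the normal equations (X'X) b = X'y."""
    k = len(rows[0])
    xtx = [[sum(r[i] * r[j] for r in rows) for j in range(k)] for i in range(k)]
    xty = [sum(r[i] * yi for r, yi in zip(rows, y)) for i in range(k)]
    return solve_linear(xtx, xty)


random.seed(1)
n = 200

# Simulated (hypothetical) participants: conservatism on a 1-7 scale,
# condition coded 0 = liberal institute, 1 = conservative institute.
conservatism = [random.uniform(1, 7) for _ in range(n)]
condition = [i % 2 for i in range(n)]

# Mean-center the continuous predictor before forming the product term.
mean_c = sum(conservatism) / n
centered = [c - mean_c for c in conservatism]

# Made-up population coefficients: intercept, conservatism, condition, interaction.
true_b = [4.5, -0.4, 0.2, 0.9]
trust = [
    true_b[0] + true_b[1] * c + true_b[2] * g + true_b[3] * c * g
    + random.gauss(0, 0.5)
    for c, g in zip(centered, condition)
]

design = [[1.0, c, float(g), c * g] for c, g in zip(centered, condition)]
b0, b_cons, b_cond, b_inter = ols(design, trust)

# Simple slopes of trust on conservatism within each condition.
slope_liberal = b_cons
slope_conservative = b_cons + b_inter
print(f"interaction = {b_inter:.2f}")
print(f"slope (liberal institute) = {slope_liberal:.2f}")
print(f"slope (conservative institute) = {slope_conservative:.2f}")
```

In this simulated example, the slope is negative in the liberal-institute condition and positive in the conservative-institute condition, which is the qualitative pattern of a fully reversed association described above.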

Study 2

Methods

Study 2 investigated the proposed effect in the context of political stereotypes about 20 different scientific disciplines (e.g., sociologists, economists, and biologists). Participants were U.S.-based individuals recruited on Amazon MTurk (CloudResearch approved) in exchange for $0.75. Our sample size was not based on formal power analyses, which are not available for mixed models in commonly used software. Instead, we decided to recruit a large sample of n = 1,000. Eventually, 1,051 people took part in our study and 51 participants had to be excluded for failing the preregistered attention check (see below). The final sample thus consisted of 1,000 participants (48.15% female; age [mean ± SD] 41.17 ± 12.76 years) who, on average, reported being politically moderate (4.21 ± 2.86, on a scale from 0 = very liberal to 10 = very conservative).

Participants indicated their beliefs about political orientation (e.g., “What is the political orientation of [e.g., mathematicians]?”: 0 = very liberal, 10 = very conservative) and trustworthiness (e.g., “How trustworthy are [e.g., mathematicians]?”: 0 = very untrustworthy, 10 = very trustworthy) of scientists from 20 different scientific disciplines, presented in randomized order. To enable comparisons with previous stereotype research, participants also indicated each group’s agency (“How powerful are [e.g., mathematicians]”: 0 = very powerless, 10 = very powerful) and communion (“How warm are [e.g., mathematicians]”: 0 = very cold, 10 = very warm; see Gligorić et al., 2022, for a similar approach). This data is shared on the OSF for future research projects but not analyzed here, as it goes beyond the scope of this article. The 20 rated disciplines were selected to represent the disciplines of the U.S. National Academy of Sciences (NAS), with some necessary aggregations (e.g., scientists in “mathematics” and “applied mathematical sciences” were merged into “mathematicians,” as we assumed that laypeople’s stereotypes for these groups would not differ). To get an even broader picture of stereotypes about academic researchers working with scientific methods, we added some theoretically interesting disciplines from the humanities (e.g., theologians and art scholars) as well as climate scientists, a group with exceptionally high political relevance. Participants then indicated their own political beliefs (“What is your political orientation?”: 0 = very liberal, 10 = very conservative), their age and gender, and completed an attention check (“If you read this, please choose ‘4’”).

Results

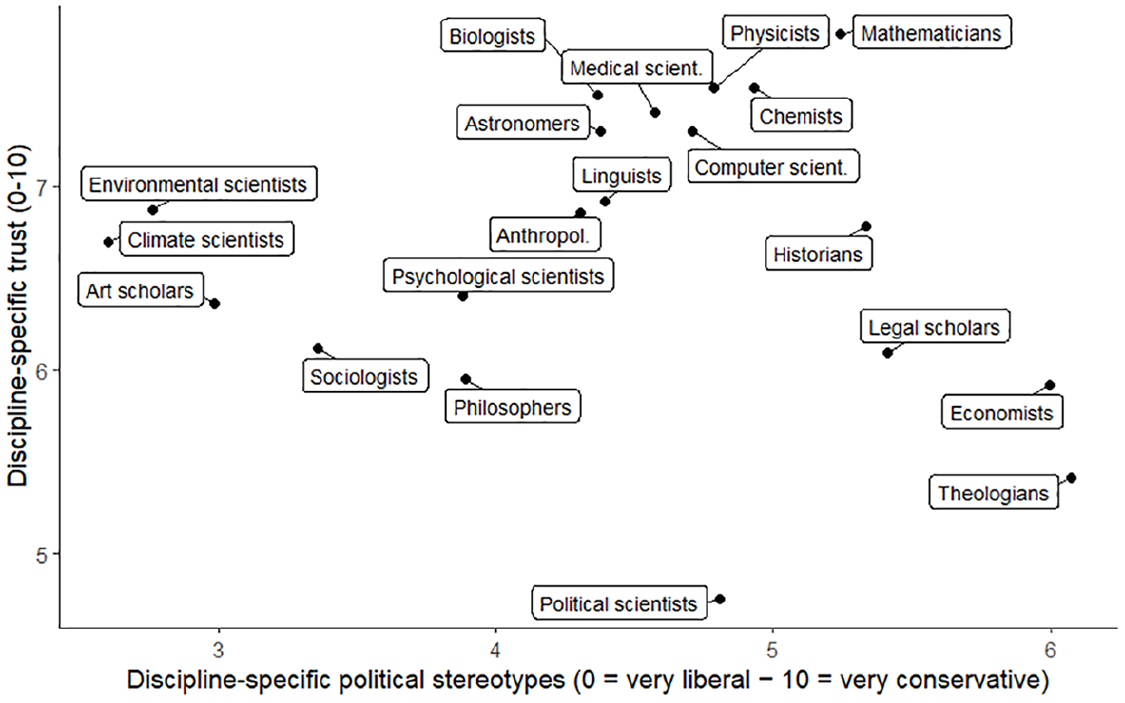

All disciplines were, on average, perceived to be either politically liberal or moderate, but none as clearly conservative (Figure 2). Across all disciplines, participants’ conservatism was negatively associated with trust in scientists (calculated by a mixed model with participants’ conservatism as fixed effect and discipline and rater as random intercepts), B = −0.14, SE = 0.02, p < .001, ΔR² = .03, similar to previous research. Crucially, in line with our prediction, we observed that this link was stronger when participants perceived scientists to be more liberal than when they perceived them to be more conservative (including participants’ stereotypic beliefs about scientists’ political orientation and their interaction with participants’ political orientation in the above mixed model as additional fixed effects), B = 0.07, SE < 0.01, p < .001, ΔR² = .05.

Figure 2. Discipline Mean-Ratings on the Two Dimensions of Disciplines’ Trustworthiness and Stereotypic Political Orientations in Study 2.
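The interaction model described for Study 2 can be written out as a linear mixed model with crossed random intercepts. The notation below is illustrative and not taken from the article: $C_i$ denotes the (mean-centered) conservatism of rater $i$, $S_{ij}$ denotes rater $i$'s stereotypic belief about the political orientation of discipline $j$, and the normality assumptions are the standard ones for such models rather than something the text states.

```latex
\begin{aligned}
\text{Trust}_{ij} &= \beta_0 + \beta_1 C_i + \beta_2 S_{ij}
                    + \beta_3 \,(C_i \times S_{ij}) + u_i + v_j + \varepsilon_{ij}, \\
u_i &\sim \mathcal{N}(0, \sigma_u^2), \qquad
v_j \sim \mathcal{N}(0, \sigma_v^2), \qquad
\varepsilon_{ij} \sim \mathcal{N}(0, \sigma^2).
\end{aligned}
```

Under this reading, $u_i$ is the rater intercept, $v_j$ is the discipline intercept, and $\beta_3$ corresponds to the reported interaction (B = 0.07). The slope of trust on conservatism at a given stereotype value is $\beta_1 + \beta_3 S_{ij}$, so a positive $\beta_3$ means the negative association between conservatism and trust weakens as scientists are perceived as more conservative.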

Speaking to the robustness and generalizability of this finding across disciplines, we observed this interaction effect in separate analyses for each of the 20 disciplines individually, all ps < .001 (Fig. S2 in the Supplementary Information). Further, this interaction remained significant even after including participants’ agency, their beliefs about scientists’ agency, and the interaction of these variables in the model and also when controlling for participants’ age and gender (both ps < .001, see OSF for details).

It should be noted that following previous literature (Koch, Imhoff, et al., 2020), we had preregistered using a slightly different mixed model where we planned to calculate a difference score between participants’ political orientation and their stereotypic perceptions of the group’s political orientation instead of an interaction. Even though this model was likewise significant and showed that calculated similarity predicted trust (p < .001), we do not report it here as it included an, in retrospect, less optimal test of the predicted interaction (see OSF for details).

Study 3

Methods

Study 3 replicated the observed interaction in a different country (Germany) and regarding a group of scientists studying a topic with high applied relevance: COVID-19. Participants were Germany-based individuals recruited via Prolific in exchange for £1.88. The data was collected as part of a larger study on “trust in scientists in light of COVID-19” by a collaborator who had included our measures in their study. This larger study included an intervention to change participants’ trust in scientists, and we thus analyzed only the data from the control group, where no (potentially biasing) intervention was administered. This group had a sample size of 325 (50.46% female; age [mean ± SD] 29.60 ± 10.00 years) and, on average, was politically liberal-leaning (3.55 ± 1.64, on a scale from 1 = left to 10 = right).

Participants first reported their trust in scientists in the context of the COVID-19 pandemic (e.g., virologists, but we did not specify any specific discipline). They completed five items (e.g., “I trust German scientists to do what is right during the COVID-19 pandemic”: 1 = disagree strongly, 7 = agree strongly; adapted from Dohle et al., 2020; Nisbet et al., 2015). Further, they reported their political stereotypes about these scientists (“What is the political orientation of German scientists during the COVID-19 pandemic?”: 1 = left, 10 = right), and how similar they felt to them (“In terms of my own political views and beliefs, I feel similar to scientists during the COVID-19 pandemic”: 1 = not at all similar, 10 = very similar). Moreover, participants reported their own political orientation (1 = left, 10 = right) and various other psychological and sociodemographic measures collected as part of the larger survey by our collaborator, which are not reported in this article. Finally, participants imagined a future pandemic similar to the COVID-19 pandemic and reported their intention to engage in four protective behaviors during this pandemic (i.e., wearing masks, social distancing, washing hands, and getting vaccinated; e.g., “If a new virus occurs in 10 years and a vaccine would be available, I would get vaccinated”: 1 = disagree strongly, 7 = agree strongly). We averaged these measures into one index of protective behavior intention (α = .75).

Results

The more conservative participants reported being, the more they trusted COVID-19 scientists when they (stereotypically) believed them to be rather conservative, and this association reversed when they (stereotypically) believed them to be rather liberal, B = 0.09, SE = 0.02, p < .001, ΔR² = .04. Importantly, we also observed the proposed interaction when predicting participants’ protective behavior intentions during a hypothetical future pandemic (e.g., mask-wearing and vaccination), suggesting relevant behavioral downstream consequences, B = 0.08, SE = 0.02, p < .001, ΔR² = .05. For participants who believed that scientists are liberal, conservatism negatively predicted trust and protective behavior intention, but this association reversed for participants who believed that scientists are conservative (Fig. S3 in the Supplementary Information).

In line with our explanation, this impact of political stereotypes about COVID-19 scientists on the relationship between participants’ political orientation and protective behavior intentions was serially mediated by similarity and trust (index of mediated moderation = 0.02, 95% CI [0.01; 0.04], Fig. S4 in the Supplementary Information).

Studies 4a and 4b

Methods

In Studies 4a and 4b, we investigated participants’ trust in scientists and its potential downstream consequences, namely policy support and information seeking, in the context of a stereotypically liberal (sociologists) compared to a stereotypically moderate discipline (economists). We chose these two disciplines based on our results from Study 2: While often concerned with somewhat similar questions grounded in the social sciences (e.g., both disciplines could plausibly have expertise on the same policies), sociologists are perceived as quite liberal, while economists are perceived as very moderate (maybe even slightly conservative-leaning).

Study 4a

Participants were U.S.-based individuals recruited on Amazon MTurk (CloudResearch approved) in exchange for $0.50. As in Study 1, we used sequential testing as our sampling strategy (Lakens, 2014). However, as our interim analysis was non-significant regarding the predicted effects on policy support (see below), we had to collect the maximum preregistered sample size, resulting in 1,040 participants, and rely on a corrected α of .0467. In line with our preregistration, we excluded 200 participants who failed a strict attention check (see below). The final sample consisted of 840 participants (48.45% female; age [mean ± SD] 41.17 ± 13.09 years) who, on average, reported being politically moderate-to-slightly-liberal (3.40 ± 1.79, on a scale from 1 = very liberal to 7 = very conservative).

Participants first indicated the trustworthiness of sociologists and economists (“How trustworthy are [e.g., sociologists]?”: 1 = very untrustworthy, 7 = very trustworthy) and how ideologically similar they felt to each group (“In terms of my own political and ideological views, I feel similar to these scientists”: 1 = not at all similar, 7 = very similar). In addition, we presented two policy proposals (implementing financial literacy courses and implementing a television license, see OSF for details), and we randomized whether either sociologists or economists allegedly recommended each policy. We then measured whether participants would support this policy (“How much do you support this policy?”: 1 = not at all, 7 = completely). Further, participants indicated how they would split a fictional $100 donation to support the two policies. As an attention check, participants had to correctly indicate which group of scientists recommended which policy (one question per policy). Finally, participants reported their age, gender, and their own political orientation using the same items as in Study 1.

Study 4b

Again, participants were U.S.-based individuals recruited on Amazon MTurk (CloudResearch approved) in exchange for $0.50. Whereas Study 4a relied on a within-subjects design, Study 4b employed a between-subjects design. We randomly assigned participants to one of three between-subjects conditions (“sociological research institute,” “economic research institute,” or “interdisciplinary research institute”). Note, however, that we treated the “interdisciplinary”-condition as fully exploratory, as highlighted in our preregistration, and, as the results regarding this condition were highly mixed and inconclusive, we do not further discuss this condition in this article (but all relevant data are shared on the OSF). Our sample size was not based on a formal power analysis, as we had no clear expectation for the possible effect size in a between-subjects design. Instead, we collected data from 762 participants (slightly above the preregistered 750 participants, i.e., 250 per condition). Note that, unlike in Study 4a, we did not use sequential testing as our sampling strategy and thus rely on an α of .05. We excluded 21 participants who failed an attention check (see below). The final sample (without the exploratory “interdisciplinary”-condition, n = 246) thus consisted of 495 participants (46.45% female; age [mean ± SD] 40.44 ± 13.22 years) who, on average, reported being politically moderate-to-slightly-liberal (3.40 ± 1.75, on a scale from 1 = very liberal to 7 = very conservative).

Participants imagined scientists working at either a sociological or an economic research institute (“[ . . . ] this institute is an institute for economic [sociological] research and that all researchers working at that institute are economists [sociologists] [ . . . ]”) or, exploratorily, imagined an interdisciplinary institute. Afterward, we measured participants’ trust in scientists working at the described research institute using the METI measure (see Study 1), with its two subdimensions expertise-based trust (Cronbach’s α = .94) and morality-based trust (Cronbach’s α = .95). Participants further indicated how much they would support a policy recommended by researchers from this research institute (“[ . . . ] This policy results from sociological [economic] research and theorizing, suggesting that this policy would have positive consequences for society”; “How much do you think would you support such a policy?”: 1 = not at all, 7 = completely) and how much they would be interested in seeking further information about their research (“How much would you be interested in learning about further findings and suggestions from these researchers?”: 1 = not at all, 7 = completely). In addition, we assessed perceived ideological similarity (“In terms of my own political and ideological views, I feel similar to these scientists”: 1 = not at all, 7 = completely). Participants also received an attention check question (“If you read this, select ‘not at all’”). Finally, participants reported their age, gender, and their own political orientation using the same items as in Study 1.

Results

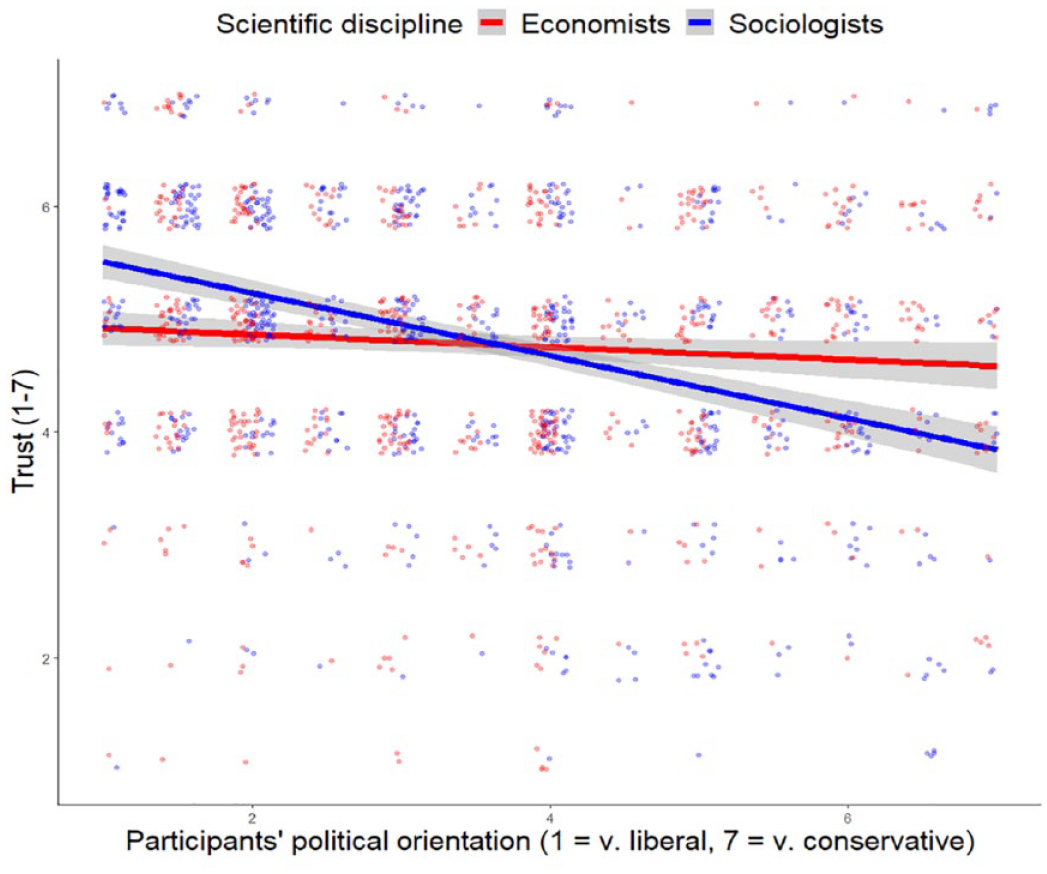

We find a significant interaction between participants’ political orientation and the evaluated discipline when predicting a general measure of trust in Study 4a (Figure 3), B = −0.22, SE = 0.03, p < .001, ΔR² = .02, and, in Study 4b, for predicting morality-based trust, B = −0.09, SE = 0.05, one-sided p = .038, ΔR² = .01, but not expertise-based trust, B = −0.05, SE = 0.05, one-sided p = .131, ΔR² < .01. Qualifying these interactions, conservative participants’ distrust in scientists (and its downstream consequences) was strongly present for a stereotypically liberal discipline (sociologists) but this effect was substantially reduced for a stereotypically moderate discipline (economists): In Study 4a, for sociologists, participants’ political orientation was negatively correlated with trust, r = −.35, p < .001, indicating that the more conservative participants were, the less they trusted sociologists. This effect was drastically reduced for economists, r = −.07, p = .033. In Study 4b, reflecting the proposed interaction, regarding sociologists, we observed strong conservative morality-based distrust, r = −.20, p = .001, which was reduced to non-significance regarding economists, r = −.04, p = .551.

Figure 3. Trust as a Function of Scientific Discipline and Participants’ Political Orientation, Study 4a.

In Study 4a, contrary to our predictions, we did not observe the expected interaction effect when predicting participants’ support for specific policies recommended by these scientists (e.g., liberals were not more likely than conservatives to support policies recommended by sociologists compared to policies recommended by economists), B = 0.04, SE = 0.09, one-sided p = .313, ΔR² < .01. The interaction pattern also did not emerge in secondary analyses regarding the split-donation task, B = 1.20, SE = 1.57, one-sided p = .223, ΔR² < .01. It seemed possible to us that pre-existing attitudes and knowledge about the presented policies may have overshadowed any effect of endorsement by specific scientists, which is why we used less specific policies in Study 4b.

In fact, in Study 4b, we detected the expected interaction pattern when predicting participants’ general policy support, B = −0.11, SE = 0.06, one-sided p = .030, ΔR² = .01, and intentions to seek further information from these scientists, B = −0.17, SE = 0.07, one-sided p = .005, ΔR² = .01. These discipline-dependent relationships of participants’ political orientation with their information-seeking intention as well as their policy support were, again, serially mediated via perceived ideological similarity and (morality-based) trust (for policy support: index of mediated moderation = −0.04, 95% CI [−0.07; −0.02]; for information seeking: index of mediated moderation = −0.04, 95% CI [−0.06; −0.01]; see Fig. S5 in the Supplementary Information).

Study 5

Methods

Study 5 added a real-world proof of concept that political polarization in trust in science is reduced for stereotypically moderate compared to stereotypically liberal scientists by looking at natural online behavior of all unique Twitter followers of the U.S.-American Democratic or Republican party. Using the Twitter API and the R package “rtweet” (Kearney, 2019), we obtained the user IDs (a unique number assigned to each Twitter user) of all followers of the Republican party (@GOP) and the Democratic party (@TheDemocrats). This resulted in a total of 5,174,381 followers, of which we excluded 1,196,513 Twitter users because they followed both the Democratic and the Republican parties, leaving their approximate political affiliation ambiguous. We thus focused on analyzing the following behavior of the remaining 3,977,868 followers (2,324,370 Republican-affiliated and 1,653,498 Democrat-affiliated). Note that this procedure, of course, only provides an approximation of users’ political orientation and does not necessarily reflect the overall distribution of political ideology of all Twitter users.
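The exclusion step described above amounts to simple set operations on follower IDs. The following Python sketch illustrates the idea with a handful of made-up user IDs (the real lists were retrieved in R via the Twitter API and cannot be shared); the variable names are hypothetical. It also checks that the reported counts are arithmetically consistent.

```python
# Hypothetical toy user IDs standing in for the real follower lists.
gop_followers = {101, 102, 103, 104, 105}
dem_followers = {104, 105, 106, 107}

# Users following both parties have an ambiguous affiliation and are dropped.
both = gop_followers & dem_followers
republican_affiliated = gop_followers - both
democrat_affiliated = dem_followers - both
analyzed = republican_affiliated | democrat_affiliated

print(sorted(both))      # IDs excluded as ambiguous
print(len(analyzed))     # number of users retained

# Consistency check on the counts reported in the text.
total_reported = 5_174_381
excluded_reported = 1_196_513
remaining = total_reported - excluded_reported
assert remaining == 3_977_868
assert 2_324_370 + 1_653_498 == remaining
```

The two groups are disjoint by construction, so each retained user contributes to exactly one party affiliation.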

We then explored whether the followers of the two parties differed in their likelihood of following sociologists and economists. Using the website followerwonk.com (followerwonk.com, n.d.), we obtained a list of the 50 most popular sociologists and economists on Twitter as indicated by their Twitter biography (i.e., people describing themselves as “sociologist” or “economist” in their Twitter biography). Research assistants (blind to our hypothesis) checked each of these profiles for suitability: As our focus here was on political stereotypes about U.S.-American sociologists and economists, we excluded profiles that were primarily affiliated with other disciplines or professions (e.g., a scientist who primarily worked as a bishop), that clearly indicated a political orientation or affiliation (e.g., a scientist who stated that they had worked for the Reagan White House), or that belonged to scientists who were not primarily U.S.-American according to their profile. Note, however, that for many accounts, we could not verify that they were active scientists. Of the remaining Twitter profiles, we selected the ten economists and the ten sociologists with the most followers (see Supplementary Information, Table S6). We then investigated whether each Republican and Democratic follower in our sample followed any of these popular sociologists or economists. For each user, we aggregated this information into our two dependent variables (follows sociologists: yes or no; follows economists: yes or no).

Results

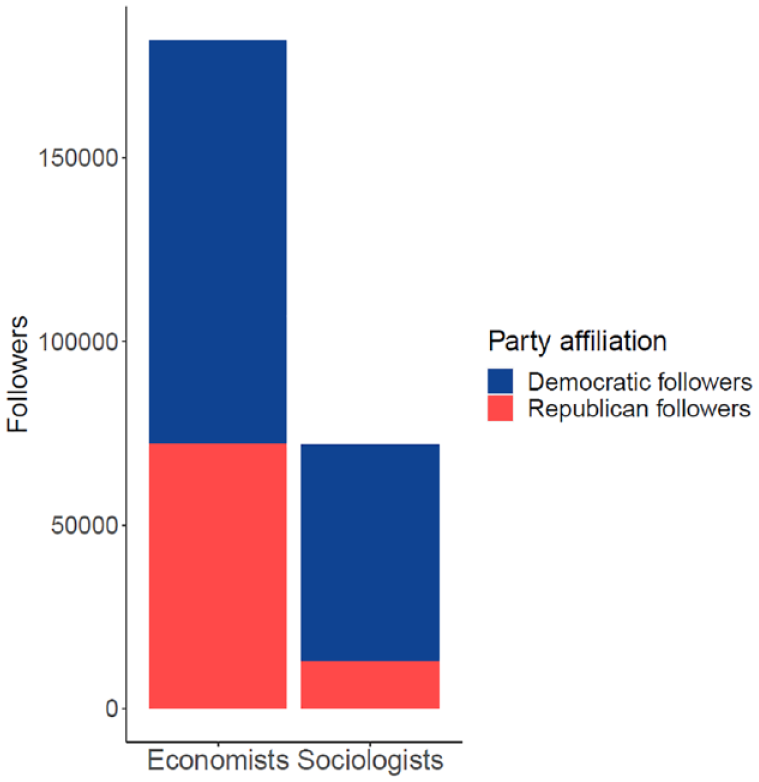

A logistic regression revealed that followers of the Republican Party were less likely to follow leading sociologists (i.e., a stereotypically liberal group) than followers of the Democratic Party, OR = 0.16, 95% CI [0.16; 0.17], p < .001 (Figure 4), reflecting highly polarized information-seeking preferences. In other words, Democrat-affiliated users had 6.25 times higher odds of following popular sociologists on Twitter than Republican-affiliated users. However, this asymmetry was drastically reduced for following economists (i.e., a stereotypically moderate group), OR = 0.48, 95% CI [0.48; 0.49], p < .001 (Figure 4): Although more Democrats than Republicans still followed popular economists on Twitter, Republicans' odds of doing so were now about half those of Democrats. This pattern resulted in a significant interaction of users’ political orientation (as approximated by their following of either the Democratic or Republican party on Twitter) and scientists’ discipline when predicting whether users followed the respective scientists, B = −1.07, SE = 0.01, p < .001.
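
The arithmetic linking the reported odds ratios can be checked with a short Python sketch. It assumes dummy-coded predictors, in which case the interaction coefficient of a logistic regression equals the difference between the two log odds ratios; the coding scheme actually used is not stated here, so this is an assumption. The function names are hypothetical.

```python
import math


def invert_odds_ratio(odds_ratio):
    """Express an odds ratio from the perspective of the other group."""
    return 1.0 / odds_ratio


def log_odds_ratio_difference(or_a, or_b):
    """Difference of two log odds ratios (interaction on the logit scale)."""
    return math.log(or_a) - math.log(or_b)


# Odds ratios as reported in the text (Republican vs. Democratic followers).
or_sociologists = 0.16
or_economists = 0.48

# Democrats' odds relative to Republicans': 1 / 0.16 = 6.25 and 1 / 0.48 = 2.08...
dem_vs_rep_sociologists = invert_odds_ratio(or_sociologists)
dem_vs_rep_economists = invert_odds_ratio(or_economists)

# Interaction implied by the rounded odds ratios: ln(0.16) - ln(0.48) = ln(1/3).
interaction_from_rounded_ors = log_odds_ratio_difference(or_sociologists, or_economists)
```

The rounded odds ratios imply an interaction of about −1.10, close to the reported B = −1.07. The small gap is compatible with the odds ratios having been rounded to two decimals (for instance, an unrounded value near 0.165 for sociologists would give roughly −1.07).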

Figure 4. The Total Number of Followers for 20 Popular Scientists (Economists and Sociologists) on Twitter as a Function of These Scientists’ Discipline and Their Followers’ Party-Affiliation on Twitter (Study 5).

General Discussion

Our studies highlight the importance of political stereotypes about scientists for explaining polarized trust in science. Across five studies, we found evidence that people hold stereotypes about scientists’ political orientation and that such stereotypes decisively influence the link between individuals’ own political orientation and their trust in scientists. Conservatives tend to distrust scientists when they believe that scientists are liberal, but this effect is substantially reduced or even reversed when scientists are perceived as more conservative. As expected, this effect was mediated by perceived ideological similarity, suggesting that the comparison of one’s own with a group’s perceived (i.e., stereotypic) political orientation is indeed a crucial cognitive process in forming trust judgments as theorized in the ABC model (Koch, Dorrough, et al., 2020; Koch, Imhoff, et al., 2020). Moreover, this pattern seems to be highly robust, as we observed it using samples from different countries (U.S.-Americans and Germans), different methodologies (experiments, surveys, and social media data), and across a variety of scientific disciplines (e.g., 20 disciplines in Study 2). Finally, this interaction pattern may have important downstream consequences: It was reflected in the perceived value of research findings (Study 1), protective behavior intentions during a pandemic (Study 3), information-seeking intentions (Study 4b), and actual information-seeking behavior (Study 5). In addition, there might be an effect on policy support; however, these results were more mixed: We did not observe an effect in Study 4a and, in Study 4b, the effect only showed in the (preregistered) one-sided test.

We demonstrate that one of the central drivers of trust in scientists is whether people perceive scientists as ideologically similar to themselves. Even if no information about scientists’ political orientation is explicitly given, people derive it from other cues such as scientists’ discipline. Interestingly, we observed that, on average, all investigated scientific disciplines were perceived as either politically liberal or moderate but none as clearly conservative (Figure 2). This pattern parsimoniously explains conservatives’ contemporary distrust in science (i.e., because, at the moment, most scientists are perceived as rather liberal) but highlights that this distrust is not set in stone. For example, in Study 1, scientists working at a conservative research institute received more trust among conservatives than among liberals. Moreover, survey data tracking trust in science over many decades (Gauchat, 2012) clearly show that political polarization in trust in science only emerged over time, from the 1990s onwards. These findings thus question perspectives suggesting that conservatism, in general, is incompatible with trust in scientists (Lewandowsky & Oberauer, 2021) or that conservatives’ distrust reflects a lack of cognitive skills (Pennycook et al., 2022). Note, however, that we also observed some evidence for ideological asymmetries in our data and thus cannot rule out additional explanatory contributions of these other perspectives on politically polarized trust in science. For example, for many disciplines, conservative distrust in liberal scientists was much more pronounced than liberal distrust in conservative scientists (e.g., for climate or environmental scientists); yet, there were also disciplines where this asymmetry flipped to a more pronounced liberal distrust in conservative scientists (e.g., theologians; Figure S2 in the Supplementary Information). Nevertheless, the clear cross-over interaction patterns suggest that trust in scientists critically depends on political stereotypes, for conservatives and liberals alike.

Implications

These findings have some clear implications for science communication in practice and for addressing conservatives’ contemporary distrust in science. First, an essential consequence of this stereotype explanation is that polarized trust in scientists should be strongly reduced for stereotypically neutral academic disciplines and scholars. Indeed, while sociologists (stereotypically liberal scientists) were met with strong conservative distrust and disinterest in their research (reflected in conservatives’ information-seeking behavior), we observed a clear reduction of that negative perception for economists (stereotypically moderate scientists). Thus, scientists who are perceived as politically moderate (e.g., by discipline or institutional affiliation) should be especially likely to reach both political camps as they enjoy a relatively high level of trust and interest across the political spectrum. For example, it is well known that stressing scientific or expert consensus on a certain topic increases public trust and support (Bartoš et al., 2022; Rode et al., 2022). Our findings now suggest that highlighting that this consensus also includes (stereotypically) conservative scientists could make it even more effective among conservatives. Note, however, that actively advocating for a specific policy might also signal a scientist’s own ideological beliefs (potentially overriding broader stereotypic perceptions based on, for example, their discipline). Whether and how scientists’ communication behavior interacts with stereotypic perceptions is an important question for future research.

Second, our results highlight the relevance of morality-related aspects (e.g., perceived bias) for polarized trust: The effects were stronger and more robust on morality-based trust than on expertise-based trust (Studies 1, 4a, and 4b). This hints at polarization of trust in science being particularly grounded in aspects of morality (E. H. Kennedy & Muzzerall, 2022; Rapp, 2016), that is, in perceptions of scientists’ integrity and benevolence rather than their competence. Thus, information about scientific control processes (e.g., peer review, preregistrations, open materials), which make it less likely for scientists to bias their results in a desired (e.g., liberal) direction, may increase trust (especially morality-based trust; Altenmüller et al., 2021; Hendriks et al., 2020; Rosman et al., 2022) among conservatives and thereby reduce political polarization (Van Bavel et al., 2020).

Third, it might be promising to directly reduce people’s reliance on stereotypes when judging scientists’ trustworthiness altogether by providing individuating information about the communicating scientist (Altenmüller et al., 2023; Rubinstein et al., 2018). These ideas should be further scrutinized in future research.

Limitations

Future research may also address some of the limitations of our work. First, while we tried to investigate a diverse range of scientific disciplines, it is certainly possible that we missed some groups that are theoretically or practically relevant to our research question. This might be especially problematic if conservatives and liberals systematically differ in which groups they see as relevant scientists and experts. In addition, we used sociologists and economists in Studies 4a, 4b, and 5. While there are many reasons to consider them suitable comparison groups (e.g., expertise on similar topics), perceptions of them likely differ in other characteristics besides political orientation. Further, their perceived ideological gap was not very large, which possibly contributed to some rather small effects in Studies 4a and 4b. Of note, while in line with our preregistrations, many of the observed effects in these two studies were only significant when tested one-sidedly. These problems associated with the comparison of sociologists and economists are also an important caveat regarding Study 5: Democrats and Republicans may simply differ in their interest in the topics typically associated with those two disciplines (e.g., social issues vs. financial issues), which is an alternative explanation for their following behavior on Twitter.

Second, our analyses were based on a bipolar concept of self-indicated political ideology (liberal vs. conservative, left vs. right) and U.S. participants. It would be interesting to see whether our results can be replicated with other conceptualizations of political ideology (Duckitt & Sibley, 2009; Feldman & Johnston, 2014) and in other political systems that are not dominated by only two parties. Importantly, at least in Germany (Study 3), where currently seven larger parties are represented in the federal parliament, we obtained very similar results.

Third, more generally, while it is reasonable to assume that the present psychological process of forming trust judgments via stereotype-based reasoning generalizes across samples, our participants cannot be considered representative of the general population. Moreover, data quality from sample providers has been repeatedly criticized (e.g., Chmielewski & Kucker, 2020). We thus implemented attention checks and used different sources (MTurk via CloudResearch, Prolific, Twitter) to ensure acceptable data quality. Given that we were mainly interested in experimental effects, one could even consider the use of such samples as a more conservative test of our assumptions (due to more noise in the data).

Finally, we focused on people’s generalized (i.e., stereotypic) beliefs about scientists, not considering the extent to which these are grounded in truth. Notably, a recent study indeed showed a growing liberal bias among scientists reflected in their political behavior (Kaurov et al., 2022). Thus, political stereotypes might represent (exaggerated) real differences (Jussim et al., 2015), and it might be interesting to investigate the role of stereotype-accuracy in the present context.

Conclusion

Overall, the present article provides evidence for a unifying stereotype-based explanation for conservatives’ and liberals’ trust in science. People across the political spectrum rely on their political stereotypes about scientists to inform their judgments, trusting scientists whom they perceive as ideologically similar to themselves. This explanation deepens our understanding of trust in scientists and highlights the value of applying stereotype models to trust in science and science communication more generally. Further, it has important implications for future research on the social-cognitive underpinnings of political polarization of trust in science. Perhaps most importantly, it yields practical insights into how to communicate science in a way that reduces political polarization and reaches individuals across the whole ideological spectrum.

Acknowledgements

We thank Joris Lammers, Mario Gollwitzer, and Mathias Twardawski for their helpful comments on the first draft of this manuscript. In addition, we thank Simone Dohle for her generous support with the data collection in Study 3.

Author Contributions

MSA, TW, and AS designed research (conceptualization and methodology); TW performed research; TW and MSA analyzed and visualized data; and MSA, AS, and TW wrote the paper. MSA and TW share the first-authorship.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was supported by a Junior Start-Up Grant awarded to the authors by the Center for Social and Economic Behavior (C-SEB), University of Cologne.
