Abstract

Considering growing deliberative turns within and beyond science communication coupled with calls for their systematic evaluations, this paper presents a legitimacy framework to analyze a recent consensus conference on the topic of genome editing. Drawing upon participant surveys (PSs) and interviews, it confirms difficulties of this deliberative method in achieving inclusive input from across society as well as conflicts between deliberative ideals, empirical communication practice, and ensuring impact on policy making. The case calls for more experimentation with hybrid online/offline approaches while staying aware of unequally distributed deliberation abilities and the need for unifying outputs.

Introduction

In response to a growing discourse around an “erosion of democracy” (European Commission, 2020), more and more organizations, including many science organizations, are taking a “deliberative turn” (Dryzek, 2000). For Dubravka Šuica (2020), Vice-President of the European Commission for Democracy and Demography, for example, deliberative democratic initiatives can help to “reinvigorate democracy,” overcome divisions, and build understanding and consensus. The Organisation for Economic Co-operation and Development (OECD, 2020) even speaks of a “deliberative wave” in Europe but also Australia, Canada, the United States, and other OECD and non-OECD countries.

While the variety of deliberative methods is vast, so-called consensus conferences have arguably “travelled” especially “well” (Einsiedel et al., 2001). Selecting a small set of citizens to deliberate on an issue over an extensive period of time, consensus conferences typically follow three main aims: adding different perspectives and clarifying (and ideally easing) controversies; producing more informed, confident, and cooperative citizens; enhancing the legitimacy of policy- or other decision-making processes (Blok, 2007; Caluwaerts & Reuchamps, 2015; Einsiedel et al., 2001; Goodin & Dryzek, 2006; Ureta, 2016).

A core dimension of the responsible research and innovation (RRI) framework, consensus conferences and other so-called “mini-publics” have been particularly prominent in the field of science and innovation, with many scholars demanding their expansion (Capurro et al., 2015; Goodin & Dryzek, 2006; Merkelsen, 2011; Owen et al., 2013; Pansera et al., 2020). In an article in the journal “Science,” for instance, Dryzek et al. (2020) request “global public deliberation to explore the science and its implications” (p. 1435) of new genome editing technologies. Renn (2021) and others (e.g., Webler & Tuler, 2021) call for the general integration of deliberative elements in the governance of technological and other, increasingly “systemic” risks.

Such developments go hand in hand with a move in science communication away from information-deficit models, where lack of uptake of scientific results or technological innovation is linked to insufficient public understanding and treated with top-down information, to more dialogue-oriented and participatory, including “deliberative” (Gastil, 2017), science communication (Davies, 2021; Merkelsen, 2011; Priest et al., 2018; Richter et al., 2022).

However, public deliberation in general and the use of consensus conferences in particular have also raised various lines of scholarly criticism. For example, we find long-standing discussions around the feasibility and desirability of consensus (Chambers, 2003; Dryzek, 2000), which have been re-emphasized with scholars describing an increasingly pluralistic and (epistemologically and ontologically) divided society (Irwin, 2017; Valkenburg, 2020). Additional questions about impact, inclusiveness, and representativeness have led to calls for more empirical investigations of the legitimacy effects as well as wider reflective learning concerning consensus conferences and public participation experiments in general (Chen, 2021; Delvenne & Macq, 2020; Dryzek, 2000; Jacobs & Kaufmann, 2021; Webler & Tuler, 2021). Looking back at “four decades of public participation in risk decision-making,” Webler and Tuler (2021) observe a need for more “systematic observations about performance” of public participation processes with “clarity about variables, measurable indicators, and important causal relations” (p. 512). Similar arguments can be found in recent reviews on (participatory) science communication, where Kappel and Holmen (2019) conclude that “the normative as well as the empirical framework for evaluating whether the employment of [. . .] special deliberative processes is indeed enhancing democratic legitimacy is yet to be developed fully” (p. 9).

The next sections will explain how this study responds to such conclusions. After reviewing the literature on deliberative participation with a focus on consensus conferences, Section 3 will explain how legitimacy and deliberation theory were used to systematically evaluate a recent consensus conference on the topic of genome editing initiated by the German Federal Institute for Risk Assessment (BfR). Drawing upon observations as well as surveys and semi-structured interviews with participating citizens, scientists, organizers, and invited decision-makers (DM), the findings section will show difficulties in ensuring inclusive input from across society. From a processual, or “throughput,” perspective, it identifies conflicts between the information requirements emerging from deliberative ideals and empirical information sourcing practice. In terms of output, the conference showed its greatest impact on the personal learning of participants and their immediate surroundings. Resonating with previous findings, ensuring an impact on wider policy making proved to be more challenging. The fifth section reflects on the legitimacy implications of these findings for consensus conferences and deliberative approaches to public participation as a whole before the conclusion summarizes the contributions of this paper on an empirical and methodological level.

Literature Review

First applied by the Danish Board of Technology in the mid-1980s, a consensus conference typically brings together around 15 citizens who meet for two preparatory weekends to learn about an issue and determine a list of questions for a subsequent public session. During that session, a panel of selected professional experts (PE) is asked to respond. Based on this exchange, the citizens then deliberate among themselves and compile a concluding report. In a final public session, the report is presented to policy-makers, media, and the wider public (Dryzek & Tucker, 2008; Einsiedel & Eastlick, 2000). During the process, participants are provided with background material and/or lectures to introduce them to the method and the subject of debate (Nielsen et al., 2007). Usually, a facilitator and/or moderator provides support to the citizens, most importantly in preparing for the public conference and writing the final report (Hudspith, 2001). An advisory committee oversees the process, including the choice of citizens, the information provided, the list of potential experts, and the scope of the debate (Dryzek & Tucker, 2008; Hudspith, 2001).

Other examples of deliberative mini-publics include citizen juries, planning cells, deliberative polling, or “21st Century Town Meetings.” Many of them involve larger numbers of participants either face-to-face or in hybrid online–offline formats to meet prominent demands for larger n results (Dryzek & Tucker, 2008; Worthington et al., 2012). There have also been attempts to carry out global deliberations, for example in the form of “world-wide views” on biodiversity or climate and energy (Rask & Worthington, 2012, 2015).

Deliberative mini-publics typically “have some claim” to representativeness, though rarely in the statistical or in the electoral sense but rather in the sense that “the range of social characteristics and points of view in society are substantially present” (Dryzek & Tucker, 2008, p. 864). To enable such claims, they usually select participants through random sampling. Due to the small number of participants compared to, for example, representative surveys, a combination with targeted selection is supposed to ensure that a range of social characteristics are represented (Dryzek & Tucker, 2008; Rountree et al., 2022). Sometimes, the initial sample entails self-selection elements for example by posting calls for participation in newspaper advertisements (Dryzek & Tucker, 2008).

Since their initiation in Denmark, consensus conferences have spread across the globe, especially in the late 1990s and early 2000s (Einsiedel et al., 2001; Ureta, 2016). This has led to positive but also a number of negative evaluations in the scientific literature, with criticism mainly revolving around issues of impact, inclusiveness, and representativeness.

Most prominently, consensus conferences and other mini-publics have been criticized for their limited impact on policy making (Delborne et al., 2013; Dryzek & Tucker, 2008; Guston, 1999; Ureta, 2016). As a result, we can find a “systemic turn” in deliberation research where scholars increasingly investigate how deliberative mini-publics should and can impact broader political systems (Rountree et al., 2022). Authors have proposed, for example, to tailor deliberation activities to windows of policy-making opportunity, in terms of topic (e.g., consideration of competing themes), timing (e.g., early stage engagement), and the involvement of DM (Burgess, 2014; Caluwaerts & Reuchamps, 2015; Delborne et al., 2013; Itten & Mouter, 2022; Kaplan et al., 2021; Rask & Worthington, 2012; Richter et al., 2022). Kaplan et al. (2021) found that the outputs from their deliberative method “proved most valuable for decision-making when the project had a direct connection to a policy decision and when there were strong ‘process champions’” (p. 9). This includes not only institutionalized DM but also advocacy and media organizations (Dean et al., 2022; Delborne et al., 2013; Worthington et al., 2012).

Rountree et al. (2022) warn though that such impact focus can create “a pragmatic orientation” (p. 2) where participants emphasize notions of efficiency, scope, and efficacy. Tensions with a deliberative orientation can emerge if it results in self-censoring or less creativity and restricts deliberative discussion (Rountree et al., 2022; Worthington et al., 2012). In fact, for political philosophers, such as Cristina Lafont (2015),

the more deliberation is insulated from pressures that are exogenous to the discourse at hand (sectarian interests, manipulation, coercion, etc.), the more likely it is that participants will be willing and able to follow the force of the better argument in order to reach a considered judgment. (p. 46)

Recent empirical findings by Mockler (2022) suggest that involving policy-making actors in the deliberation process can indeed lead to domination and challenges for equality and inclusiveness. As a deliberative method, consensus conferences favor a model of communication that adapts to rational reasoning (Abels, 2007). It assumes that “actors have learning capacities and, moreover, are willing to engage in learning processes” (Papadopoulos & Warin, 2007, p. 456). However, “not all actors have the capability—in the case of the weaker—or the willingness—in the case of the stronger—to conform to the discursive pattern of deliberative interaction” (Papadopoulos & Warin, 2007, p. 455). This can “lead to a de-facto exclusion of groups of participants who cannot fulfil such demanding criteria” (Abels, 2007, p. 106) or to the domination of the process by some individuals over others (Mockler, 2022). Especially when trying to build consensus, there is a risk of forcing dominant norms, logics, and epistemologies while silencing dissenting voices, typically from marginalized backgrounds (Dean et al., 2022; Valkenburg, 2020).

Some propose over-sampling from marginalized groups and the integration of processual support mechanisms as a solution to this problem (Dean et al., 2022; Worthington et al., 2012). Others point to inherent tensions with ideals of representativeness. As Lafont (2015) remarks, it is generally “questionable” that stratified random selection “always provide an accurate representation of the population, since the categories that are relevant for sampling purposes (e.g., gender, ethnicity, economic status, etc.) vary depending on the issue” (p. 49). Jacobs and Kaufmann (2021) propose self-selection as “an equally, if not more effective means to increase the legitimacy of public decision-making” (p. 105). Such an approach, however, can reinforce selection bias toward the wealthier and better educated (Dean et al., 2022; Fishkin, 2020; Fung, 2006) and fuel critiques of mini-publics as a new form of elitism (Lafont, 2015). Mini-publics’ inherent enlightenment arguably implies that participating citizens no longer reflect the opinions of the general public (Lafont, 2015; Merkelsen, 2011). For Merkelsen (2011), it also shows opposing models of dialogue where asymmetric elements of scientific authority, particularly in the form of the expert panel, meet deliberative attempts for symmetric and equal exchange that questions the authority of science. For others, deliberation methods simply “ignore the reality of the complex information environment from which deliberative participants draw” (Anderson et al., 2013, p. 955) including, for example, wider traditional and new social media coverage (Capurro et al., 2015). The following sections will explain how this study uses legitimacy and deliberation theory to shed more detailed light on these different lines of criticism.

Analytical Framework

Considering the deliberative goals of consensus conferences and arguments about their (potentially) legitimizing role for science communication and other processes (Caluwaerts & Reuchamps, 2015; Dryzek, 2000; Gastil, 2017; Kappel & Holmen, 2019), this study uses a legitimacy framework to meet calls for “systematic observations about performance” (Webler & Tuler, 2021, p. 512). It conceptualizes legitimacy as the foundation of (political) authority based on judgments of the conformance with recognized (democratic) norms or standards of behavior (Beetham, 1991; Risse, 2006). Following authors, such as Barker (1990) and Bernstein (2005), deliberation norms are seen as transcending prescriptive and empirical accounts of legitimacy. In other words, conformance with norms of deliberation is treated as the potential ground of both perceived and ideal type of legitimacy (Barker, 1990). Within the prescriptive political science literature, ideal type of legitimacy criteria are typically categorized into input and output and, more recently, throughput legitimacy (Risse, 2006; Schmidt, 2013). The following sub-sections will present these three dimensions in more detail, drawing from core theoretical literature as well as empirical operationalizations in the context of deliberation and wider public participation exercises.

Input Legitimacy

For Risse (2006), input legitimacy concerns the participatory quality of a process. It considers who has access and who can influence it (Abels, 2007; Caluwaerts & Reuchamps, 2015). As outlined above, many authors evaluate public participation against the criterion of representativeness, which can be understood as providing equal opportunities to access and clarity in the selection process (Abelson et al., 2003). Applying Rowe and Frewer’s (2000) framework to evaluate a consensus conference in Taiwan, Fan (2013) asks whether the conference reflects “the perspectives and particular characteristics of some groups, reducing the exclusion of diverse social perspectives” (p. 538). Following Dryzek (2000), ideally, “discursive democracy allows for dissent and for voices from the margins to be heard” (p. 168). “Candidates” for such efforts of inclusiveness include “ethnic and religious minorities, indigenous people, women, the old, gays and lesbians, youth, the unemployed, the underclass, recent immigrants, people on the receiving end of environmental risks, and future generations” (Dryzek, 2000, p. 86).

Throughput Legitimacy

In the words of Schmidt (2013), throughput legitimacy “covers what goes on in between the input and the output” (p. 14). While input legitimacy is a result of participation by the people, throughput legitimacy is about inclusive, open, accountable, and transparent consultation processes with the people (Schmidt, 2013).

Following Habermas’ theory of communicative action, process legitimacy in a deliberative sense requires that decisions rest on rational arguments where free and equal actors build understanding for each other and are willing to revise their preferences in light of new information and discussion (Bernstein, 2005; Chambers, 2003; Lafont, 2015). This implies that all participants in the process should have an equal chance of affecting the outcome by expressing their wishes, desires, and feelings, and introducing questions and counter arguments (Caluwaerts & Reuchamps, 2015; Dryzek, 2000; Fan, 2013; Lövbrand et al., 2011). However, rationality is also the ability to “sort good arguments from bad, not just to bring all reasons into play” (Dryzek, 2000, p. 174) to solve collective problems. In other words, deliberating subjects should transcend their personal preferences and points of view in favor of arguments that target the common good (Lövbrand et al., 2011).

Whether deliberation processes should aim for consensus is debated. The classical Habermasian model is consensus focused. However, more and more authors argue that arriving at noncontroversial definitions of the public good is hardly achievable or desirable in current pluralistic societies (Chambers, 2003; Dryzek, 2000). More recent literature suggests that deliberative exercises should aim at “disclosure,” making divergences explicit by articulating “what is at stake, for whom, and why, and what type of learnings emerge in and through participation” (Van Bouwel & van Oudheusden, 2017, p. 507). In practice, this may mean that the final report “indicate[s] a range of opinions or acknowledge a minority’s dissension” (Hudspith, 2001, p. 313). For Valkenburg (2020), “consensus and contestation will both have some legitimacy at specific points” (p. 343). Dryzek (2000) proposes “workable agreements in which participants agree on a course of action, but for different reasons” (p. 170).

To facilitate procedural legitimacy, organizers of deliberative processes are advised to implement a variety of measures. For example, they are supposed to address inequalities in the capacity to participate by making all information fully accessible, readable, and comprehensible (Abelson et al., 2003; Alcántara et al., 2014; Fan, 2013; Rowe & Frewer, 2005). Information should be “fairly balanced” (Blok, 2007) and “factual” (Alcántara et al., 2014). While external review is considered crucial to achieve these objectives, information provision is also supposed to be flexible, variable, and responsive. This is to enable participants to discuss and challenge all information presented and give them a sense of ownership (Abelson et al., 2003; Bernstein, 2005; Fan, 2013; Rowe & Frewer, 2005). Furthermore, organizers are supposed to provide incentives for actors to critically evaluate and reflect upon their own interests and preferences (Dryzek, 2000; Risse, 2006). Here, a key role emerges for moderators, who can lead toward a facilitating communication culture without dominating or manipulating the process (Alcántara et al., 2014; Caluwaerts & Reuchamps, 2015; Rowe & Frewer, 2005). This includes ample time for discussion and an emphasis on mutual respect and concern for others (Abelson et al., 2003). It also includes qualities on the side of the moderator and other organizers, such as trustworthiness, fairness, integrity, credibility, and impartiality (Schmidt & Wood, 2019).

The criterion of accountability is supposed to ensure that all actors involved in a process can be held to account for what they do based on internal and external transparency (Schmidt & Wood, 2019). Procedural information, reaching from the goals and political-administrative setting of a participation process, over participant selection, to decision-making and final results, can support such internal and external transparency. Internally, participants also need to understand their role and decision-making power (Alcántara et al., 2014).

Output Legitimacy

Output legitimacy can be understood as the effectiveness of a process for solution finding and people overall (Scharpf, 1970; Schmidt, 2013). This commonly implies, among others, that the participation process “should in some sense be cost-effective” (Rowe & Frewer, 2000, p. 17). For public participation scholars, it is also crucial that participants have the perception that the process has been “honestly conducted” with “serious intent to collect their views and to act on those views” (Rowe & Frewer, 2005, p. 262). This involves “maximizing the relevant information from the maximum number of all relevant sources and transferring it (with minimal information loss) to the other parties” (Rowe & Frewer, 2005, p. 263).

While there is debate among democracy theorists about the ideal output of deliberative mini-publics (Bächtiger & Goldberg, 2020; Fishkin, 2020; Habermas, 2020; Lafont, 2015), what some term a “realistic perspective” (Lafont, 2015) emphasizes that for deliberative processes not to be “empty,” they need to create “buy-in” from relevant actors with power resources (Bernstein, 2005, p. 165). Applying output criteria to consensus conferences, Guston (1999) distinguishes between direct impact on policy making (legislation, regulation, budget, etc.) and impact on general thinking within the policy-making realm by creating or changing knowledge and generating feedback on the topic among policy-makers, PE, but arguably also citizen participants (CP) and the wider public (see also Abelson et al., 2003; Caluwaerts & Reuchamps, 2015; Jacquet & van der Does, 2020). If one follows the normative goal of mainstreaming participation, creating experiential knowledge around participation to contribute to structural change is important too (Alcántara et al., 2014; Boulianne et al., 2020; Delborne et al., 2013; Jacquet & van der Does, 2020). This holds for the policy-makers and PE as well as the CP and the wider public (Alcántara et al., 2014; Fan, 2013).

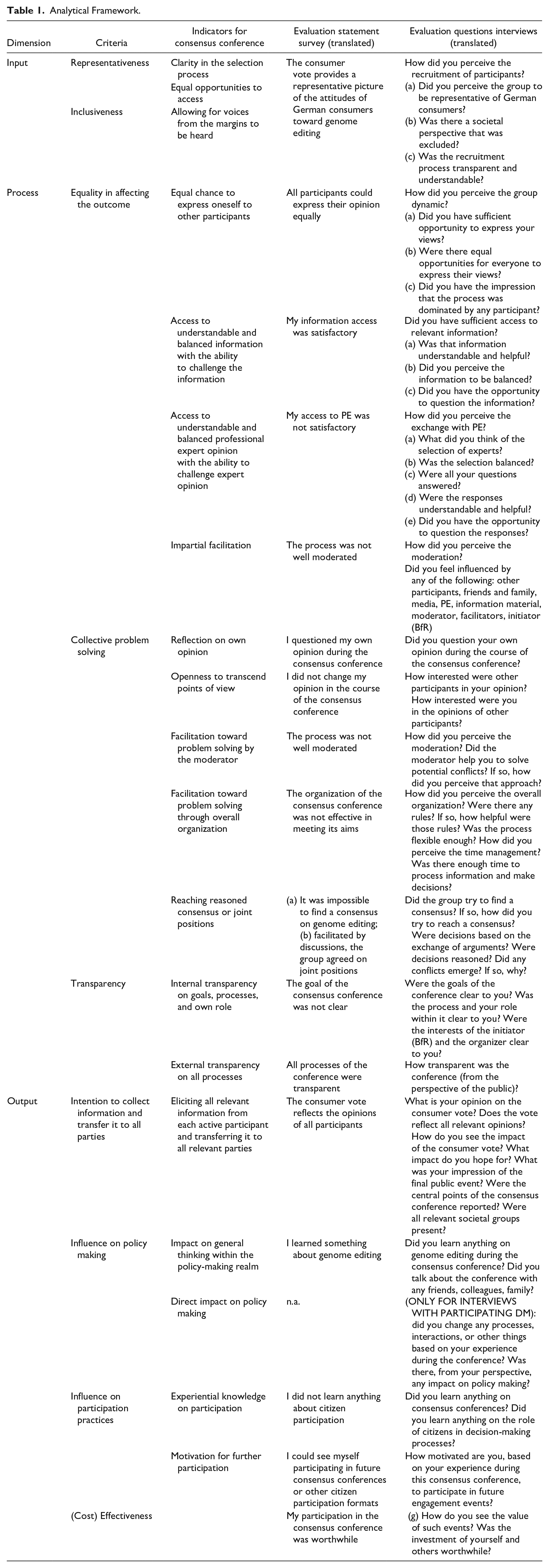

Table 1 provides a summary of the input, throughput, and output criteria just discussed. It also shows how the criteria were translated into survey statements and interview questions for the evaluation of the consensus conference studied in this paper. The next section will explain the study methods and empirical context in more detail.

Table 1. Analytical Framework.

Empirical Context and Research Method

While public participation has been on the rise in many scientific fields, it has become particularly prominent in the European food sector, especially when it comes to scientific food risk analysis (Chatzopoulou et al., 2020; Dendler & Böl, 2021; Smith et al., 2021; Webler & Tuler, 2021). In line with these trends, BfR, a regulatory scientific organization in the remit of the German Ministry for Food and Agriculture (Dendler & Böl, 2021), initiated in 2019 a “Consumer Conference on Genome Editing in the Field of Nutrition and Human Health.”

Closely aligned with the Danish consensus conference model, the event consisted of two preparation weekends during which the “participants got to know each other, received an introduction to the scientific, technical and social aspects of genome editing and developed the questions that they wanted to pose to experts” (BfR, 2019b). During the third weekend, a panel of PE selected by the citizens provided answers to the questions, based on which the citizens drafted a consumer vote. In a public event the following day, two citizen representatives presented the vote to a panel of five DM from the fields of politics, public administration, science, industry, and civil society. “To give participants an unbiased and impartial introduction to the topic” (BfR, 2019a), an external communication agency facilitated and moderated the whole conference. The information material provided to the participants was initially compiled by BfR staff, then weighed by the external communication agency, and reviewed by an external scientific advisory board. The advisory board also reviewed an initial list of PE from which participants could select for the professional expert panel. To recruit CP, advertisements were published online and on the radio, resulting in 147 registrations. After categorizing the applicants according to socio-demographic criteria (age, gender, profession, employment status), a total of 20 participants were randomly selected, drawing from each category. Citizens with a professional link to the topic were excluded. All participants received 500 Euro compensation (BfR, 2019a, 2019c).
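The selection logic described above (categorize applicants, then draw at random from each category until the panel is filled) can be illustrated with a minimal Python sketch. It is not the procedure actually used by the organizers: the applicant records, the two stratification fields (`age_group`, `gender`), the round-robin allocation across categories, and the function name `stratified_draw` are all assumptions made for illustration only.

```python
import random
from collections import defaultdict


def stratified_draw(applicants, stratum_of, total, seed=None):
    """Randomly pick `total` applicants, cycling through the strata
    so that every category is represented where possible."""
    rng = random.Random(seed)

    # Group applicants by their (hypothetical) socio-demographic category.
    strata = defaultdict(list)
    for applicant in applicants:
        strata[stratum_of(applicant)].append(applicant)

    # Shuffle within each stratum so that every draw is random.
    for members in strata.values():
        rng.shuffle(members)

    # Round-robin over the strata until the panel is full
    # or no applicants are left.
    selected = []
    while len(selected) < total and any(strata.values()):
        for label in sorted(strata):
            if strata[label] and len(selected) < total:
                selected.append(strata[label].pop())
    return selected


def stratum_of(applicant):
    # Assumed categories; the conference also used profession
    # and employment status.
    return (applicant["age_group"], applicant["gender"])


# Made-up pool of 147 registrations (illustrative data only).
applicants = [
    {
        "id": i,
        "age_group": ["18-34", "35-59", "60+"][i % 3],
        "gender": ["f", "m"][i % 2],
    }
    for i in range(147)
]

panel = stratified_draw(applicants, stratum_of, total=20, seed=1)
```

A round-robin allocation guarantees presence of each category rather than proportionality, which matches the non-statistical notion of representativeness discussed in the literature review; a proportional allocation would be an equally plausible reading of the description.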

As a BfR employee, the author acted as an observer throughout the conference without engaging in organizational matters. Furthermore, and following arguments about the relevance of participants’ perceptions for questions of democratic quality and legitimacy (Barker, 1990; Knobloch & Gastil, 2022), all CP received a questionnaire asking them to rate a number of evaluative statements on a 6-point Likert-type scale. Statements were phrased positively and negatively to reduce response bias. Next to the closed statements, three open questions enquired whether the conference met the participants’ expectations and what they liked and did not like about the conference. To evaluate details, 14 CP, five DM from the public panel, three participating PE, and five individuals who were involved in organizational matters (O) were interviewed after the event in a semi-structured approach. Interviews were conducted in German, lasted between 20 and 100 minutes, were recorded, and subsequently transcribed. The content of all interview transcripts, observational notes, and responses to the open questionnaire questions was analyzed with the software MAXQDA using the legitimacy criteria discussed above and summarized in Table 1 as a coding framework. Closed questionnaire responses were analyzed descriptively using the software SPSS. The following section will present the questionnaire results before discussing their legitimacy implications in the light of additional insights gained from the open questions, the interviews, and observations.
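The descriptive analysis of the closed questionnaire items amounts to counting valid responses and averaging them per item. The following Python sketch shows one way this could be done; it is not the SPSS procedure used in the study. The item labels and ratings are invented, and the optional reverse scoring of negatively phrased items is an assumption, since the study does not state whether such items were recoded.

```python
from statistics import mean

# 6-point Likert-type scale as described in the study.
SCALE_MIN, SCALE_MAX = 1, 6


def reverse_score(value):
    """Mirror a rating on the scale (1 <-> 6, 2 <-> 5, 3 <-> 4)."""
    return SCALE_MAX + SCALE_MIN - value


def summarize(responses, reverse_items=()):
    """Return {item: (N, M)} with N valid responses and mean M.

    `responses` maps an item label to a list of ratings;
    None marks a missing answer and is excluded from N and M.
    """
    summary = {}
    for item, ratings in responses.items():
        valid = [r for r in ratings if r is not None]
        if item in reverse_items:
            # Assumption: negatively phrased items are recoded.
            valid = [reverse_score(r) for r in valid]
        m = round(mean(valid), 2) if valid else None
        summary[item] = (len(valid), m)
    return summary


# Invented ratings for two hypothetical items.
responses = {
    "item_positive": [6, 5, None, 4],
    "item_negative": [1, 2, 2, None],
}

result = summarize(responses, reverse_items={"item_negative"})
```

Reporting N alongside M per item, as in this sketch, makes item-level non-response visible, which matters with a panel of around 20 participants.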

Results

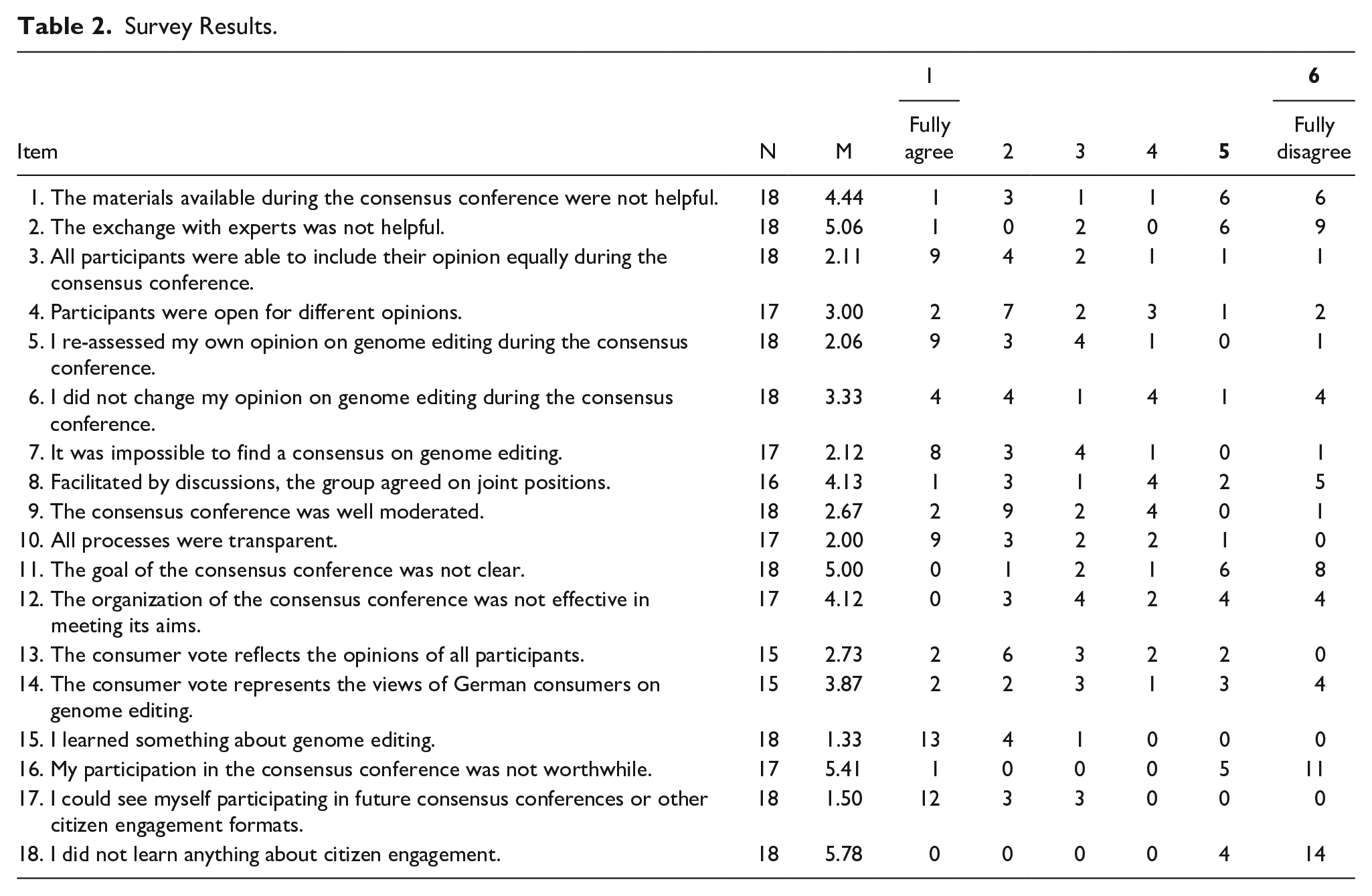

Table 2 presents the descriptive statistics of participants’ views expressed in the questionnaire. For each item, the number of respondents (N) and the mean (M) are reported.

Table 2. Survey Results.

Input Legitimacy

Following the literature reviewed above and summarized in Table 1, consensus conferences are prescribed to include a range of views, including those from the margins, through a clear and transparent selection process. According to PS question number 10 (Q.10) and interviews, participants largely perceived the selection as transparent and, according to most interviewees, understandable. Twelve out of 15 respondents fully or somewhat disagreed with the survey statement “the consumer vote represents the views of German consumers on genome editing” (PS, Q.14). This picture was supported during the interviews as many interviewees felt that “only those applied that are interested in the topic” (Interview [Int.] CP) representing “a sample of willing citizens” (Int.CP). Participants saw those excluded that do “little reading” (Int.CP), have “limited education levels” (Int.CP), or are less “eloquent” (Int.CP). A number of interviewees missed “participants with migration background” (Int.CP).

When thinking about potential ways to improve representativeness and inclusiveness, some interviewees questioned the advertisement focus:

if I walk around my proletarian neighbourhood and see people that do not necessarily have a high intellectual background, there are certainly some that are potentially moved by these questions. But they do not listen to Deutschlandfunk [national public radio broadcaster that was used to advertise the conference] or read the Tagesspiegel [national newspaper that was used to advertise the conference]. (Int.CP)

Others raised random sampling from public databases as a potential solution. An interviewee from the side of the organizers opposed such alternative recruitment paths though: “you need to have people that are willing to spend a weekend, in this case even three. You cannot do that with someone that does not have any interest in the topic” (Int.O).

Other CP raised how traveling for several days to a different location can create input barriers for groups like parents of young children. To address such issues, a couple of participants suggested combining face-to-face with online formats, a suggestion that has already been implemented in multiple places (e.g., Delborne et al., 2011; Kaplan et al., 2021) and will be further addressed in the discussion.

Throughput Legitimacy

When it comes to the process, the literature review emphasized the importance of rational arguments where participants are equally included in building mutual understanding and joint learning targeted at the common good. The responses to Question 3 of the PS suggest that the majority of CP felt that all participants were able to include their opinion equally during the consensus conference. A similar picture emerged during the interviews although many interviewees stated that a few individuals at least tried to dominate the process. The group, in the words of participants, “managed these dynamics well” (Int.CP), “sanctioning” (Int.CP) such attempts and keeping it in “limits” (Int.CP), for example by “giving those the opportunity to express themselves that are more quiet” (Int.CP). Observations supported this impression with the group actively opposing, for example, attempts by single participants to convince others of a pre-written votum, giving the word to less dominant participants, or making sure that all participants were present when important decisions were made. Voting was usually seen as a last resort with participants rather trying to engage in verbal exchange to find agreements. Observations and many interviewees pointed to a high level of respect with “constructive” (Int.CP) discussions on the topic.

I told everyone afterwards that this was one of my best experiences: to see that people that do not know each other can communicate very appreciating with each other and have the patience to let each other finish speaking and express very contrary opinions. (Int.CP)

While such descriptions come close to deliberation ideals, observations and some interviewees supported arguments about deliberation placing quite high demands on participants, which can lead to de-facto exclusion. For example, one interviewee explained: “I have seen a few that simply never raised their hands. I think the discussion was too much for them. [. . .] that is a bit sad then that such personalities are pushed to the side a bit” (Int.CP).

Concerning the ideal of building mutual understanding based on a reflection on one’s own opinion and openness to change it, both survey and interview results are mixed. Eleven citizens fully or somewhat agreed that participants were open to different opinions; six fully or somewhat disagreed with that statement (PS, Q.4). While 16 fully or somewhat agreed that they re-assessed their opinion during the process (PS, Q.5), nearly half of the citizens fully or somewhat agreed that they did not change it (PS, Q.6).

The majority agreed that it was not possible to find a consensus (PS, Q.7) or to use discussion to come to joint positions (PS, Q.8). The group, therefore, decided to report a mix of convergent and divergent opinions in their final votum. While many citizens did not perceive this lack of a full consensus as a problem but rather as a result of a pluralistic view on a complex topic, some interviewees showed signs of a pragmatic orientation (Rountree et al., 2022): “the approach to incorporate every opinion made things endless and watered down the votum” (Int.CP). Here, another observed and often-mentioned criticism comes into play: many citizens felt that there was “too little time between expert-hearing and then processing the new information and the [formation of the final votum] the following Sunday” (Int.CP). In particular, CP would have wished “to have experts during the first weekend already” (Int.CP) and have more time to “include new information [from expert hearing]” (Int.CP), perform “content discussion with the whole group” (Int.CP), “form an opinion” (Int.CP), and conduct “final editing” (Int.CP). As one participant stated: “finding consensus is super exhausting. We would probably need another weekend that all can convince each other” (Int.CP). For others, it was not so much a question of time but more the “completely opposing views” (Int.CP) that hindered consensus. A few interviewees pointed to challenges in making decisions on how widely and fundamentally the topic should be addressed:

if cattle is dehorned through genome editing, for example, that improves animal wellbeing. Then it was questioned [. . .] is it not the real problem that animals are kept too close [. . .] And then there was always this tendency to change the whole society and how the economy is at the moment. [. . .] So the tendency was not do we want to assess genome editing under the current conditions. Or do we want societal change? (Int.CP)

Here, the wide scope of the conference (considering impacts of genome editing on humans, animals, and plants) seemed to pose an extra challenge: what I did not like from the beginning and that went through to the end was the broadness, that [. . .] you take the method and say [. . .] we want food, that would be agriculture, animals. And then also human health, which is a completely different field. (Int.CP)

Some interviewees raised criticism concerning the facilitation and moderation. Several would have preferred more methodological guidance, especially during the discussions of the first weekends. At the same time, a few individuals complained about the moderator being too dominant or “judgemental” (Int.CP). Others voiced discomfort during the interviews and the observed event when the facilitators supported the group in the final transcription and editing of the votum. Here, the difficulty in providing facilitation without threatening perceptions of impartiality becomes explicit. From the beginning, the BfR, as the conference initiator, was confronted with questions about impartiality due to its formal relationship to the Federal Ministry. For example, one NGO stated publicly: after citizens have been against the use of gene technology in food [. . .] for decades, the ministry wants to create its own ‘citizen votum’ that [. . .] can be used as a legitimacy base for pro gene technology policy decisions. [. . .] BfR is subordinated to the agricultural ministry. The ministry clearly says that it does not want to regulate technologies such as crispr cas [. . .] as gene technology. (https://www.keine-gentechnik.de/nachricht/33756/, translated)

Some participants voiced similar concerns during the event and the interviews. One citizen interviewee, for example, explained: “in the beginning I thought, well, maybe this is a simulation of citizen participation [. . .] whether we will be used as alibi in the political debate” (Int.CP). Such allegations strengthened the focus of the initiators and organizers on impartiality. This filtered through to the moderation and facilitation but also to a multitude of transparency and external review processes. Many stated in the interviews and the survey (Q.10 and Q.11) that goals, roles, and processes were indeed transparent. According to the interviews, the majority of CP did not feel unduly influenced by the initiator, the organizing communication agency, or the moderator. In fact, the citizen quoted above continued the remark on initial reservations as follows: “I was positively surprised. [. . .] It was a fair process. We were provided with information material from critiques as well as supporters of this new technology” (Int.CP). This impression was supported in the survey and other interviews, as the information material and the exchange with professional experts were largely considered helpful (PS, Q.1 and Q.2) and, according to the interviews, balanced in their perspectives.

However, transparency also brought its challenges. For example, a number of citizens felt left alone in sourcing additional information and in finding ways to communicate with each other outside the organized events: “what I missed was an online exchange. The participants exchanged intensively but via email etc. I would have liked a work space, where you can store files etc.” (Int.CP). Requests for additional information were usually met with significant delays. From the initiator’s perspective, ad hoc requests for additional information collided with their perceived need for external review and transparency of all information provided.

Output Legitimacy

Summarizing the literature review, creating output legitimacy necessitates the creation of an inclusive and (cost) effective impact on policy making as well as wider learning on the topic and citizen participation in general. Concerning the inclusiveness of the output, 11 out of 15 citizens fully or somewhat agreed that the consumer vote reflected the opinions of all participants (PS, Q.14). During the interviews and the observed event, however, many CP voiced their dissatisfaction with the final votum. While for some the wide scope and portrayal of divergence in the final votum was already problematic, others felt that important aspects were still missing or presented wrongly: “there are things in there that were not said. And there are things not in there, that were explicitly demanded to be included” (Int.CP). As mentioned in Section 5.2, many would have wished for more time to discuss and edit the final votum: we practically did a collection of information. And then there was little time left. What should have happened is that we cluster again and discuss and think what stays. And that did not happen. I found it very helpful that it was transcribed by the organizers. [. . .] But because of it some had the feeling that there are things in there that, if we had further discussed them, would not be in there. (Int.CP)

One citizen participant wondered whether a different format could have helped.

a format that allows for different opinions and makes them transparent. [. . .] It could be fictional interviews or [. . .] a webchat. [. . .instead of. . .] a long linear text with the aspirations of a scientific publication so to say, where it is black on white exact. The result of this conference is not exact. (Int.CP)

While consensus conferences are generally considered very time consuming and expensive (Abels, 2007), criticism around cost-effectiveness occurred but was not prominent. Both in the survey (Q.16 and Q.17) and the interviews, nearly all participants concluded that it was a worthwhile endeavor and showed motivation to engage in future participation processes. All CP indicated that they learned something about genome editing (PS, Q.15) and about citizen participation in general (PS, Q.18). One citizen interviewee, for example, concluded: [it] gives an insight into difficult decision-making processes. And if you imagine sitting in politics and you have the same problem. Whom do you believe and which interests do you pursue? Then you think for yourself, well okay it really is complex. So in that sense, sounds a bit lofty, but it indeed was a bit of a democracy exercise. (Int.CP)

A number of interviewed DM, organizers, and PE came to similar conclusions, with one interviewee from the organizational team adding: “I saw, that, if you make an effort, you are surrounded by complaisant people. [. . .] The people were all [. . .] very engaged” (Int.O). Observations further supported an impression of a very motivated citizen group that not only participated in all formal events but also spent large amounts of their free time engaging with the process. According to the interviews, many also spoke about their experience with friends, family, and colleagues.

PE emphasized their individual learning too, especially from the direct exchange with citizens, an impression that was shared by citizens: “I especially liked that there was the possibility to speak directly with some [professional experts]. For that I would have liked more time” (Int.CP). One participant felt that the tight time schedule during the last weekend “resulted in every expert being able to only say one sentence more or less. And not being able to question whether he is right. This means I elevate the expert because they have interpretational authority” (Int.CP). One professional expert proposed to organize instead “small groups that interact with one, two or three from the citizen group for four hours” (Int.PE).

Looking at the open questions and the interviews, the biggest motivation for citizens to participate in the event was, next to personal interest in the topic and the participation experience, the opportunity to influence policy making. During interviews, DM reported individual learning but rarely that they shared this learning beyond their immediate circles or translated it directly into changes of (policy) agendas. For one interviewee, it is maybe too early to judge this. [. . .] Political conclusions for further handling of this technology will, realistically be made at the European level. [. . .] And for that there will be a discussion process. And in this discussion process the question how consumers see it will play a role. And therefore it is always good to be able to say: we spoke with consumers. We did this in a very ambitious way, not only through a telephone survey but in an elaborate discussion and the heterogeneity of the result shows that you cannot say clearly: the consumers don’t want this or they do not care at all. (Int.DM)

One interviewee pointed to limited media coverage: in my perception it was hardly covered by the press. That was also because on the same Monday [the day of the final event] there was an event where the press-center was filled to the last seat. [. . .] In other words, it is always a question of competing topics. Maybe one month later or earlier the event [. . .] would have been received very differently. (Int.DM)

Supporting the prominence of large-n demands, some interviewees saw the lack of statistical representativeness as a problem, demanding instead “bigger surveys where you reach more people. Where the questions transmit information and where the result can make clear in a much more valid way: where are information gaps, where are certain opinions, that run completely past reality” (Int.DM). As mentioned previously, for other interviewees the lack of consensus weakened the basis for a clear impact. One decision-maker concluded: “the votum is so wide that it includes aspects that are hardly compatible. And then everyone, who looks at the votum, can feel confirmed” (Int.DM). Another reflected: “actually one would need a step somehow afterwards, where you say: OK, what do we do with what they said? Maybe this is why it diverted into well-known camps in the end” (Int.DM).

The last quote refers to the panel discussion during the final event, where many felt that DM did not really address consumer concerns. One panelist critically reflected: “the discussion addressed the votum only very limitedly, to be honest. It was effectively a panel discussion that we could have had without the votum. Because it was about the different political and association positions on this” (Int.DM). To address this shortcoming, some interviewees suggested sharing the votum in advance and instructing panelists to comment more directly. Others suggested organizing more direct exchange with the citizens, for example by including citizens in the commenting panel or facilitating more informal exchange.

Discussion

The results both support and contradict previous research, illustrating the inherently conflicting nature of deliberation exercises. On the input side, they resonate with previous studies (Dean et al., 2022; Dryzek & Tucker, 2008; Guston, 1999; Rask & Worthington, 2012) that point to the difficulties in ensuring inclusive participation from across society. While Jacobs and Kaufmann (2021) call to improve legitimacy through more self-selection, the case provided further evidence that self-selection tends to attract better educated, interested, and motivated parts of society (Dean et al., 2022; Fung, 2006). The resulting “representative sample of willing citizens,” as one participant termed it, impressed with extraordinary motivation to engage in a deliberation process that met many throughput ideals. However, inherent challenges remained, for example regarding unequally distributed abilities to engage in rational discourse or finding consensus within increasingly contested contexts (Van Bouwel & van Oudheusden, 2017). While some authors urge organizers of deliberation events to remain open to all potential contexts (Richter et al., 2022; Rountree et al., 2022), a number of interviewees showed a “pragmatic orientation” (Rountree et al., 2022), arguing that the wide scope of the event coupled with a tight time schedule contributed to an output that they saw as too vague and diverse to have an impact on policy making. Instead, as in many previous studies, the main impact was perceived to be on individual learning around genome editing and citizen participation (Dryzek & Tucker, 2008; Guston, 1999; Rask & Worthington, 2012). To expand their learning, participants would have liked more direct exchange between citizens, PE, and DM. This resonates not only with previous demands for more informal and experimental exchange (Burgess, 2014; Dryzek, 2000; Guston, 1999; Roberts et al., 2020) but also with those authors who point to inherent conflicts between logics of science authority and deliberative questioning in consensus conferences (Merkelsen, 2011). Whether organizers should expand the time-frame to meet such demands is questionable. Recent research suggests that longer processes can “increase participants’ confidence in their issue-specific knowledge” (Knobloch & Gastil, 2022, p. 11). However, additional costs both for organizers and participants need to be considered. The study, therefore, supports Knobloch and Gastil’s (2022) call for more research to “explore exactly how much rigorous deliberation is required to produce desired outcomes” (p. 10).

Concerning proposals to connect more closely to windows of policy-making opportunity, the findings show a mixed picture. While the relevance of potentially competing topics at a particular point in time was supported and some interviewees demanded the closer engagement of DM, the existing connection with the ministry through the initiating organization (BfR) sparked internal and external criticism. Also considering the already apparent “pragmatic orientation” (Rountree et al., 2022) among participants, organizers of deliberation events seem to face a dilemma between ensuring output legitimacy and maintaining procedural openness, impartiality, and equality (Caluwaerts & Reuchamps, 2015; Guston, 1999; Nishizawa, 2005). While the adherence to deliberative ideals, such as the provision of externally reviewed information, may help to partially address this dilemma, it can be detrimental to the simultaneous need to facilitate increasingly dynamic information seeking processes. As previous authors concluded (Anderson et al., 2013; Capurro et al., 2015), deliberation ideals partly ignore how participants seek their information in practice.

To address this issue, Anderson et al. (2013) suggest that organizers “might facilitate a discussion at the outset of the forum about the use of the Internet for information [. . .] Consensus conference organizers could even structure information sharing opportunities online” (p. 967). New hybrid formats that combine face-to-face deliberation with online interaction may be able to address some of the other challenges identified, too. More precisely, they could help participants in their wider information sourcing, potentially also providing more opportunities to exchange arguments: among participating citizens to address time issues; between citizens, PE, and DM to intensify learning; or, possibly, with a wider (online) community to increase representativeness. If combined with novel advertisement and communication strategies, they may also help attract more diverse citizen groups to address issues of input inclusiveness.

In line with such calls, various hybrid formats have already emerged (Delborne et al., 2011; Itten & Mouter, 2022; Kaplan et al., 2021). Such developments face multiple procedural challenges, however. As Lafont (2015) summarizes, “We need not consult empirical research to see that even the most general necessary conditions for deliberation are best satisfied in small-scale face-to-face deliberation” (p. 46). In other words, another legitimacy dilemma emerges for deliberative science communication, this time not between output and throughput but between input and throughput legitimacy. Online participation adds several challenges to this dilemma, such as hurdles for facilitation practice and software capabilities or dealing with issues of digital incivility (Anderson et al., 2014; Delborne et al., 2011; Kaplan et al., 2021; Webler & Tuler, 2021). The latter can not only threaten processes of rational deliberation but also contribute to further polarization within already highly contested discourses. Against this backdrop, some formats of participatory science communication have explicitly moved away from the ideals of deliberative democracy. Resisting the tendency to further “close off” or “clean up” online spaces of science communication and engagement, they look instead at opportunities to “play in” and further “break [. . .] already broken spaces” (Mendel & Riesch, 2017, p. 681). For Ureta (2016), the future of democracy technologies “does not lie in running neat experiments in accordance with well-bounded scripts but must go in the direction of enacting multiple versions of experimental platforms, with all the mess and transformations that such a process entails” (p. 509).

To some extent, this is in line with deliberative theorists, who argue that inequality can be addressed by expanding the range of acceptable (from a deliberation perspective) modes of communication beyond rational argument (Dryzek, 2000). Based on a recent study of deliberation in Ghana, Chen (2021) argues that organizers should tailor their designs to their target group, especially when trying to include groups with lower educational backgrounds. This necessitates creativity and flexibility for new approaches and sufficient resources to facilitate both. Similar arguments can be found in the recent RRI literature (Pansera et al., 2020).

While such input and throughput experimentation certainly seems worthwhile, inclusiveness challenges are likely to remain (Itten & Mouter, 2022). This relates to inequalities in (technical) resource access but also, for example, in the capacity to tell one’s story (Dryzek, 2000) or inherent inequalities in the production of (dominant) knowledge (Valkenburg, 2020). Furthermore, such experimentation does not necessarily pose a solution to the previously discussed output challenges. Policy-makers in particular tend to prefer the presentation of clear results rather than messy plurality. As the case presented in this paper illustrates, limiting participation methods to the “disclosure” (Van Bouwel & van Oudheusden, 2017) of divergence may be detrimental to crucial impact. Against this backdrop, one should arguably not undervalue “the process of finding ways to make agreements” (Irwin, 2017, p. 13). Indeed, with an increasing erosion of democracy, “certain consensual ideals seem more important and powerful than ever” (Irwin, 2017, p. 14). When thinking about whether we should focus on what we share or what divides us, Irwin’s answer is that it can only be “both.” Identifying these output lines in a way that meets ideals of input and procedural legitimacy arguably emerges as one of the most difficult but also most urgent challenges for science communication and society as a whole.

Conclusion

In light of a (re)emerging deliberation trend within and beyond science communication coupled with calls for more systematic evaluations (Kappel & Holmen, 2019; Webler & Tuler, 2021), this paper used a legitimacy framework to evaluate a recent consensus conference on new genome editing technologies.

On an empirical level, the case supports previously described challenges of deliberative science communication in ensuring inclusive input from across society. From a processual, or “throughput” perspective, it identifies conflicts between the requirements emerging from deliberative ideals and empirical information sourcing practice as well as demands to develop convergent outputs to ensure policy impact.

On a methodological level, there has arguably been a shift in deliberation research away from exploring the internal dynamics of individual mini-publics toward ways to increase impact on the overall political system (Curato et al., 2020; Rountree et al., 2022). The legitimacy framework used in this study attempted to consider both perspectives. In doing so, it helps to shed light on inherent conflicts and to come to multifaceted conclusions. While using a legitimacy framework to evaluate public participation is not new, the study combined core legitimacy and deliberation theory with empirical insights gained from recent deliberation and wider participation exercises and, as called for (Kappel & Holmen, 2019; Webler & Tuler, 2021), a translation into empirically investigable indicators. It thereby assumed that norms of democratic deliberation are the ground of both perceived and ideal types of legitimacy (Barker, 1990). As such, the wider usefulness for the evaluation of other deliberation exercises, within and beyond the context of science communication, seems likely (for democratic societies), but should be investigated in further research.

Footnotes

Acknowledgements

The author takes full responsibility for the analysis presented but would like to thank everyone involved in this deliberation event, especially all interview and survey participants, as well as Jan-Nikolas Hargart for his valuable research assistance.

Declaration of Conflicting Interests

The author declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: Financial support for this research was granted by the German Federal Institute for Risk Assessment.