Abstract

Some people stick to beliefs that do not align with scientific consensus when faced with science communication that contradicts those misperceptions. Two preregistered experiments (total N = 1,256) investigated the causal role of motivated reasoning in the effectiveness of correcting misperceptions. In both experiments, accuracy-driven reasoning led to a larger corrective effect of a science communication message than reasoning driven by directional motivation. Individuals’ default reasoning made them just as receptive to the correction as accuracy-driven reasoning. This finding supports a more optimistic view of human receptivity to science communication than often found in the literature.

Some people hold beliefs that do not align with the scientific consensus. And some of them stick to these misperceptions, even when they are faced with evidence that directly contradicts their beliefs (e.g., Holman & Lay, 2019; Nyhan et al., 2013; Nyhan & Reifler, 2010). The current research investigated the causal role of motivation in holding on to misperceptions about scientific facts in the face of corrective science communication. To what extent is sticking to misperceptions driven by directional motivated reasoning? And can reasoning be influenced to increase the effectiveness of corrective information?

Vaccine and Food Safety

Misperceptions are factual beliefs that are false or contradict the best available evidence in the public domain (Flynn et al., 2017). They can be very harmful, for example, when they are related to health issues. Two health issues that suffer from misperceptions are vaccination and food safety. While vaccination programs are one of the most successful health interventions in the history of humankind, a notable proportion of the general public is skeptical of vaccines (Larson et al., 2016). This skepticism influences the decision to vaccinate (Joslyn & Sylvester, 2019), which can have very harmful consequences. For instance, preliminary data from 2019 indicate that measles cases have spread fast among clusters of unvaccinated people in countries with high overall vaccination coverage (World Health Organization, 2019). Furthermore, in the United States, vaccine refusal has been linked with outbreaks of vaccine-preventable diseases (Phadke et al., 2016). Trends such as these have led the World Health Organization to classify vaccine hesitancy as one of the most important threats to global health in 2019, together with threats such as climate change and HIV (World Health Organization, n.d.).

Focusing on the second issue, food safety, a primary example of harmful misperceptions can be found in beliefs about E numbers. E numbers are used in Europe to identify food additives and were introduced to reassure consumers that additives indicated with these numbers are safe to consume. Food additives indicated with E numbers can have important functions, such as preventing the growth of harmful bacteria that cause potentially fatal diseases like botulism (Food Standards Agency, n.d.). Paradoxically though, E numbers have evolved into a cause of worry among consumers over the safety of these additives (Haen, 2014; Saltmarsh, 2015). The misperception that these additives are unsafe can have serious negative consequences for food production, with food producers forced to look for costly replacements for safe and tested food additives. Moreover, consumers’ food choices likely suffer from avoiding food products that are actually safe to eat (Shim et al., 2011).

In the current research, we focused on correcting one misperception related to vaccination and one misperception related to food safety. By studying two different topics, we tested whether our findings generalize across diverse topics of misperceptions. In the first experiment, we provided information correcting the misperception that childhood vaccines can “overload” a child’s immune system. In the second experiment, we provided information correcting the misperception that food additives indicated with E numbers are unsafe to consume. Correcting such misperceptions is important because these beliefs inform opinions on important policy, such as policy about unvaccinated children going to day care, and behavioral intentions, such as the intention to avoid food products that are safe to consume. These beliefs may even be determinants of behavior (e.g., Joslyn & Sylvester, 2019). In addition, having an informed citizenry is a valid goal in itself.

Motivated Reasoning

Misperceptions can be difficult to correct because people are biased information processors. One important psychological source for bias is called directional motivated reasoning (Kunda, 1990). This means that one has a directional goal in reasoning, for instance, a preferred outcome. A directional goal can lead to biased reasoning in support of that goal. This type of reasoning explains the heartfelt supporter of the free market who believes that climate change is a hoax, because the preferred outcome of minimum climate change regulation biases reasoning toward believing climate change is not real. Similarly, directional motivated reasoning can explain the heavy smoker who rejects the evidence for a link between smoking and lung cancer, because one prefers to be able to smoke without having to worry about negative health effects. It can even explain paradoxical findings of corrective messages leading to “backfire” effects (Hart & Nisbet, 2012; Nyhan et al., 2013; Nyhan & Reifler, 2010; Zhou, 2016): Directional motivation can make one counterargue the corrective message, thereby strengthening the original misperception.

While numerous studies have focused on identifying sources of directional motivated reasoning, evidence for a causal role of directional motivated reasoning in holding on to misperceptions in the face of corrective information is to date limited. Previous research identified prior beliefs as a general source of directional motivated reasoning (e.g., Lord et al., 1979; Taber & Lodge, 2006), as well as more specific sources such as partisanship (Bolsen et al., 2014; Gaines et al., 2007; McCright & Dunlap, 2011) and related sources such as identity protection (Kahan et al., 2017; Lyons, 2018), conspiracy ideation and worldviews (Lewandowsky et al., 2013), and solution aversion (Campbell & Kay, 2014). Research on the causal effect of directional motivated reasoning in sticking to misperceptions, however, is difficult because one cannot easily manipulate an individual’s political ideology or worldview. To test a causal link between directional motivation and holding on to misperceptions, experiments where motivations are manipulated are needed to disentangle the effects of motivation from other processes (Leeper & Slothuus, 2014). This can be done, for instance, by seeking ways to experimentally induce directional motivated reasoning (Nyhan & Zeitzoff, 2018).

There is some experimental research on the causal effect of directional motivated reasoning on opinion formation (Bolsen & Druckman, 2015; Bolsen et al., 2014). This effect is demonstrated by comparing directional motivated reasoning to accuracy motivated reasoning. In contrast to directional motivated reasoning, an accuracy-motivated individual has the goal to come to what is believed to be the most accurate conclusion (Kunda, 1990). This experimental research has shown that inducing accuracy-driven reasoning has the potential to reduce the biasing effect of directional motivation on opinion formation (Druckman, 2012), for instance, regarding support for energy policy (Bolsen et al., 2014) and emergent energy technologies (Bolsen & Druckman, 2015). However, it is unclear whether these effects on opinion formation generalize to factual beliefs. Opinions are more subjective than factual beliefs, possibly making them more receptive to effects of motivated reasoning than, for instance, factual beliefs about the safety of vaccines. Nonetheless, these findings suggest that inducing accuracy motivation might be a suitable intervention to increase the effectiveness of corrective science communication in the face of misperceptions. Yet, there is a lack of studies on inducing accuracy motivation. Understanding when accuracy-driven reasoning overtakes directional reasoning is important in studying misperceptions (Druckman & McGrath, 2019; Flynn et al., 2017).

The current research aimed to fill these gaps. We studied the causal role of directional motivated reasoning in holding on to misperceptions in the face of corrective science communication and investigated whether inducing accuracy motivation can aid in correcting misperceptions. We explored, rather than hypothesized, potential effects of directional motivation on policy opinions and intentions, because results in the literature regarding these second-order outcomes are mixed (Flynn et al., 2017; Nyhan & Reifler, 2015). We conducted two preregistered experiments in which a science communication message was used to correct a misperception. In both experiments, the message itself was effective in reducing endorsement of the misperception. More importantly, the type of motivation with which the message was processed affected the strength of its corrective effect, with accuracy-driven reasoning leading to a larger correction than directional motivated reasoning. Our findings also partially demonstrated that the influence of motivation was not limited to the misperception itself but extended to the second-order outcomes of policy support and intentions.

Experiment 1: Vaccine Safety

In the first experiment, a vaccine-related misperception was corrected. By measuring endorsement of the misperception before (prior) and after (posterior) motivation was manipulated and corrective information was presented, we could expose all participants to the corrective message while investigating the influence of motivation. Based on previous research on motivated reasoning (Bolsen & Druckman, 2015; Bolsen et al., 2014), we believed that an accuracy-motivated individual would be more susceptible to corrective information than a directionally motivated individual. Therefore, we expected that inducing accuracy motivation would lead to lower posterior endorsement of the misperception than inducing directional motivation, while controlling for prior belief certainty.

Although accuracy motivation may amplify the corrective effect of a science communication message, the effect of motivation on a belief is subject to reality constraints (Kunda, 1990; Molden & Higgins, 2005). When one holds a belief about reality with high certainty, the degree to which motivation can affect the interpretation of new information regarding that belief is constrained. For instance, a person may prefer to be 20 cm taller than they actually are, but the certainty with which height can be determined should inhibit this effect of motivation. Therefore, we expected that when individuals were very certain of their prior belief the effect of motivation would be limited, while under uncertainty the effect of motivation should be larger. Specifically, we expected that motivation would interact with prior belief certainty, such that the more certain the prior belief was, the smaller the corrective effect of accuracy motivation on the posterior belief would be.

Method

Among others, the hypotheses, sampling procedure, main analyses, and exclusion criteria were preregistered on the Open Science Framework (OSF; https://bit.ly/2P2EGdP). Initially, successful completion of an instructional manipulation check (IMC; see Measures section) was included as one of the exclusion criteria. IMCs are used to detect whether participants read the instructions and can increase statistical power and reliability of the data (Oppenheimer et al., 2009). After collecting data from 38 participants, we decided that the IMC was too sensitive. Based on the IMC, we would have needed to exclude 31 participants (81.58%) who appeared to complete the experiment seriously, as indicated by completion times (M = 474.23 seconds), reading time of the corrective information (M = 83.88 seconds), and coherence of responses to open questions. We believe that the IMC’s oversensitivity may be due to its instructions being irrelevant to the question that was asked, not to a lack of effort on the part of the participants. Therefore, this exclusion criterion was dropped, and the preregistration was updated to reflect this change (https://bit.ly/2Ry9SU4). For ethical reasons (i.e., not wasting participants’ serious responses), we chose to keep the data collected before the update for exploratory analyses (total N = 404). The manipulation check and main analysis (reported below) included only those participants who participated in the experiment after the preregistration was updated (n = 372).

All data and the R script for the analysis can be found on the project page on the OSF (https://bit.ly/2Pv1mlY). Both Experiments 1 and 2 (see below) are part of a research project that was reviewed and approved by the Ethics Committee Social Science at Radboud University (ref. ECSW-2018-056).

Participants and Design

Participants were screened and recruited using the online crowdsourcing platform Prolific. Prolific has been demonstrated to yield high-quality data and more diverse participants than student samples or other major crowdsourcing platforms (Peer et al., 2017). We screened participants prior to the experiment on whether they endorsed the misperception. They were presented with a list of five statements, including the misperceptions from Experiment 1 and Experiment 2 (see below), and were asked to select all of the statements they believed to be true. Only participants who indicated that the statement “Giving young children multiple vaccinations overloads their immune system” was true were eligible for participation in the experiment.

Following a Bayesian sequential sampling procedure with optional stopping and maximum N (Schönbrodt et al., 2017), 542 participants (U.K. nationals) were recruited. Participants each received £0.85 for participating in the experiment. During the sequential sampling procedure, we checked the Bayes factor (BF) at predetermined intervals to evaluate the evidence in the data for or against the first hypothesis. The advantage of this procedure is that it allows for efficient data collection. No more or less data are collected than necessary for a specified level of certainty, since the BF indicates both evidence for an effect and evidence in favor of no effect. We planned to continue data collection until there was strong evidence (BF01 < 0.1 or BF01 > 10; Schönbrodt et al., 2017) for or against our first hypothesis. We started checking the BF for the hypothesized effect when 171 participants (enough to detect a medium effect size with 0.90 power and α = .05; Faul et al., 2009) had completed the experiment and continued to check it after every set of 50 new participants. If at any time the BF reached the required level of evidence for or against the hypothesis, data collection would be stopped. This was never the case, so data collection continued until the maximum N of 500 participants (excluding participants before the updated preregistration).
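The stopping rule described above can be sketched in a few lines. This is an illustration only, not the analysis script from the OSF page: the function names `checkpoints` and `stopping_decision` are hypothetical, the thresholds read the criterion as BF01 below 0.1 or above 10, and the final look at the maximum N is an assumption of the sketch.

```python
def checkpoints(first_check, step, max_n):
    """Sample sizes at which the Bayes factor is inspected."""
    sizes = list(range(first_check, max_n, step))
    sizes.append(max_n)  # assumption: one last look at the maximum N
    return sizes


def stopping_decision(bf01, n, max_n, lower=0.1, upper=10.0):
    """Decide what to do after one look at the data.

    bf01 is the Bayes factor for the null over the alternative;
    values below `lower` or above `upper` count as strong evidence.
    """
    if bf01 < lower or bf01 > upper:
        return "stop_evidence"
    if n >= max_n:
        return "stop_max_n"  # maximum N reached without strong evidence
    return "continue"


# Values from the text: first check at 171, then every 50, maximum N of 500.
looks = checkpoints(first_check=171, step=50, max_n=500)
print(looks)
# The BF value here is made up purely to show the call.
print(stopping_decision(bf01=0.45, n=221, max_n=500))
```

Because the BF never crossed either threshold in Experiment 1, a procedure of this kind would return "continue" at every intermediate look and end only at the maximum N.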

We collected data from a total of 542 participants. Three participants who completed the experiment too fast and one who did not participate through Prolific were excluded (not preregistered). This resulted in the planned 538 participants, of which 500 participated after the updated preregistration. At the start of the experiment, participants indicated their endorsement of the misperception. Even though we screened participants before the experiment on whether they held the misperception, only 407 (75.65%) indicated that they endorsed the misperception at the beginning of the experiment (score > 0 on the misperception measure; see Measures section). As we are interested in correcting misperceptions, only these participants were included in the analyses. Three participants who did not understand the misperception measure were identified by means of an open answer question in the experiment. Their response to the open answer question indicated a large correction in their endorsement of the misperception, but their response on the misperception measure conflicted with this. They were excluded post hoc. This resulted in 404 participants (269 female, 135 male, Mage = 38.95 years, SDage = 12.73) in the total data set, of which 372 participated after the updated preregistration.
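The participant flow reported above can be retraced with simple arithmetic. The sketch below uses only the counts given in the text; the variable names are illustrative.

```python
# Counts taken from the paragraph above (Experiment 1).
recruited = 542
too_fast = 3
not_via_prolific = 1
eligible = recruited - too_fast - not_via_prolific

# Participants scoring > 0 on the prior misperception measure.
endorsed_prior = 407
endorsement_rate = round(100 * endorsed_prior / eligible, 2)

# Participants excluded post hoc for misunderstanding the measure.
misunderstood_measure = 3
analysed = endorsed_prior - misunderstood_measure

print(eligible, endorsement_rate, analysed)
```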

The experiment consisted of a one-factor (motivation in reasoning; accuracy-driven reasoning vs. directional reasoning) between-subjects design. In one condition, accuracy motivation in reasoning was induced (n = 209), in the other condition, directional motivation in reasoning was induced (n = 195).

Materials and Procedure

The experiment was conducted using Qualtrics survey software. First, prior endorsement of the misperception was measured. Subsequently, participants were randomly assigned to one of two conditions. They were instructed to read a text either in a way that induces directional motivation or in a way that induces accuracy motivation, based on Bolsen et al. (2014). We piloted an earlier version of the manipulation in a preregistered experiment (https://bit.ly/2rwk2Ka). Based on the results of that pilot, we made some improvements to the manipulation. The instructions in the directional condition (85 words) included telling participants that we were interested in their judgment because they believed that vaccines can overload a child’s immune system and asking participants to be aware of this belief when reading the upcoming text. Furthermore, we asked them to apply their perspective, and to think of what would confirm their initial belief. The instructions in the accuracy condition (79 words) included telling participants we were interested in their judgment because we studied how people process information and come to conclusions and asking participants to be evenhanded. Furthermore, we asked them to apply various perspectives and to think of what would disprove their initial belief (for the full texts, see Appendix A). Participants were required to stay on the page with the instructions for at least 10 seconds. After 10 seconds, the button to continue to the next page appeared, which included the text “I will be aware of my belief and view the information from my perspective. I will try to think of what could confirm my initial belief” (directional condition) or “I will view the information in an evenhanded way and from various perspectives. I will try to think of what could disprove my initial belief” (accuracy condition).

Both groups were then presented with the science communication message (386 words), which contained information correcting the misperception. The information was based on information from Science magazine (Hickok, 2018), the National Health Service (NHS; 2016), the University of Oxford Vaccine Knowledge Project (n.d.), and the American Academy of Pediatrics (2008). The text explained that vaccines do not overload children’s immune system and that this knowledge is based on many scientific studies. One recent study was explained in more detail and an explanation was given of why vaccines do not overload the immune system. A graph was included in the text (see Appendix B for the full message).

After reading the corrective text, participants’ endorsement of the misperception was measured again (the posterior belief). We explained to participants that this second measure was not a test, but that we were interested in their belief. The remaining variables were measured, among which was an open answer question in which participants were asked to give a short justification for their answer on the measure of posterior endorsement of the misperception.

Measures

Endorsement of the misperception was measured twice, once at the beginning of the experiment and once after the motivation manipulation and corrective message. This not only increased power to detect an effect but also allowed us to investigate both the corrective effect of the message itself and the effect of motivation on receptivity to this message. Endorsement of the misperception was measured by asking participants to what extent they believed the following statement to be true: “Giving young children multiple vaccinations overloads their immune system.” Their response was measured on a visual analogue scale ranging from I am 100% certain this is false (−100) to I am 100% certain this is true (100) with I don’t know in the middle (0). Since we included only those participants who endorsed the misperception at the beginning of the experiment, the prior score on endorsement of the misperception is simply a score of how certain they were of their belief in the statement (i.e., prior belief certainty).

The manipulation check consisted of six statements. Half of these statements reflected the instructions from the directional motivation condition (e.g., “While reading the information, I tried to view the information from my perspective”), the other half were in line with the accuracy motivation instructions (e.g., “While reading the information, I tried to view the information from various perspectives”). Responses were measured on a 7-point scale ranging from strongly disagree to strongly agree. Average scores were calculated for following the directional and accuracy motivation instructions separately.
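As a minimal sketch of the scoring described above, the two subscale averages could be computed as follows. The item keys (`mc1` to `mc6`) and their assignment to the two subscales are hypothetical; the text states only that three statements reflected each set of instructions and that responses were given on a 7-point scale.

```python
from statistics import mean

# Hypothetical item keys: three statements per subscale.
DIRECTIONAL_ITEMS = ("mc1", "mc3", "mc5")
ACCURACY_ITEMS = ("mc2", "mc4", "mc6")


def manipulation_check_scores(responses):
    """Average the 7-point responses separately for each subscale."""
    for item, value in responses.items():
        if not 1 <= value <= 7:
            raise ValueError(f"{item} is outside the 7-point scale: {value}")
    return {
        "directional": mean(responses[i] for i in DIRECTIONAL_ITEMS),
        "accuracy": mean(responses[i] for i in ACCURACY_ITEMS),
    }


# Made-up responses from one participant, for illustration only.
example = {"mc1": 6, "mc2": 4, "mc3": 5, "mc4": 5, "mc5": 7, "mc6": 3}
scores = manipulation_check_scores(example)
print(scores)
```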

Several exploratory variables were measured at the end of the experiment. Perceived change in belief certainty was measured by asking participants if they became more or less certain of their initial belief, measured on a visual analogue scale ranging from I am much less certain now (−50) to I am much more certain now (50), with my certainty has not changed in the middle (0). Support for policy aimed at stimulating people to vaccinate their children and intention to vaccinate one’s children were measured with responses to single statements. Open-minded cognition (OMC) was measured using the OMC scale developed and validated by Price et al. (2015). Trust in the NHS, trust in scientists, perceived reliability of the information provided to the participant, perceived knowledge of vaccines, and importance of the topic were measured with single response items. All of these items were measured on 7-point scales. Additionally, an IMC (Oppenheimer et al., 2009) was included in a short text that introduced the demographic questions, to detect participants who were not following the instructions. The text instructed participants to ignore the first question, which was about political parties, and instead to mark the “Other” box and write “I read the instructions.” Religiosity and belief in complementary or alternative medicine were measured using a discrete (yes or no) response. Finally, age, gender, and education were asked. For the complete wording of all the questions, see the supplemental material. Not all variables were included in the analyses, because they were not of direct interest to the current research. Some background variables (e.g., trust) were measured because they could be relevant to compare the current research to existing research (e.g., Nyhan et al., 2014), others (e.g., OMC) were measured because they could provide useful insight for future research on correcting misperceptions. All data are available on the OSF (https://bit.ly/2Pv1mlY).

Data Analysis

One analysis was conducted to test both hypotheses simultaneously: an analysis of covariance (ANCOVA) with posterior belief as the dependent variable, motivation condition as the independent variable, and prior belief certainty as a continuous predictor, including the interaction term between prior belief certainty and motivation condition. The first hypothesis concerned the main effect of motivation on posterior endorsement of the misperception; the second concerned the interaction between motivation and prior belief certainty. In the confirmatory analyses, complementary BFs are reported.
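Written as a linear model, the analysis described above takes roughly the following form. This is a sketch under assumptions not stated in the text: motivation condition is coded as a single indicator variable, and the symbols are introduced here for illustration only.

```latex
\text{posterior}_i = \beta_0
  + \beta_1\,\text{condition}_i
  + \beta_2\,\text{prior}_i
  + \beta_3\,(\text{condition}_i \times \text{prior}_i)
  + \varepsilon_i
```

Under this reading, the first hypothesis concerns the condition term (β₁) and the second concerns the interaction term (β₃). How β₁ is interpreted depends on how condition is coded and whether prior belief certainty is centered, neither of which is specified here.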

Results

Manipulation Check

Two one-tailed, independent-samples t tests were conducted to test whether the motivation manipulation had the expected effect on participants’ motivation in reasoning about the corrective information. As expected, participants in the directional condition (M = 5.38, SD = 1.06) scored significantly higher on the questions measuring directional motivation than participants in the accuracy condition (M = 4.96, SD = 1.18), t(369.75) = 3.57, p < .001, d = 0.37, 95% confidence interval (CI) [0.16, 0.58]. Also as expected, participants in the accuracy condition (M = 5.61, SD = 1.02) scored significantly higher on the questions measuring accuracy motivation than participants in the directional condition (M = 5.04, SD = 1.22), t(348.47) = 4.86, p < .001, d = 0.51, 95% CI [0.30, 0.72].
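The fractional degrees of freedom reported above are consistent with Welch's correction for unequal variances, although the text does not name the variant used. The sketch below shows how a Welch t statistic, its degrees of freedom, and a pooled-SD Cohen's d can be computed from summary statistics. The per-condition sample sizes in the usage example are hypothetical, because they are not reported for the n = 372 subsample.

```python
import math


def welch_t(m1, sd1, n1, m2, sd2, n2):
    """Welch's t statistic and Welch-Satterthwaite degrees of freedom."""
    v1 = sd1 ** 2 / n1
    v2 = sd2 ** 2 / n2
    t = (m1 - m2) / math.sqrt(v1 + v2)
    df = (v1 + v2) ** 2 / (v1 ** 2 / (n1 - 1) + v2 ** 2 / (n2 - 1))
    return t, df


def cohens_d(m1, sd1, n1, m2, sd2, n2):
    """Cohen's d using the pooled standard deviation."""
    pooled_var = ((n1 - 1) * sd1 ** 2 + (n2 - 1) * sd2 ** 2) / (n1 + n2 - 2)
    return (m1 - m2) / math.sqrt(pooled_var)


# Means and SDs for the directional-motivation items are from the text;
# the group sizes (186 each) are an assumption made for illustration.
t, df = welch_t(5.38, 1.06, 186, 4.96, 1.18, 186)
d = cohens_d(5.38, 1.06, 186, 4.96, 1.18, 186)
print(round(t, 2), round(df, 2), round(d, 2))
```

Because the reported means and SDs are rounded and the group sizes are assumed, the output will only approximate the published statistics.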

Confirmatory Analyses

In support of the first hypothesis, the main effect of motivation condition on posterior endorsement of the misperception while controlling for prior belief certainty was significant, F(1, 368) = 4.01, p = .046,

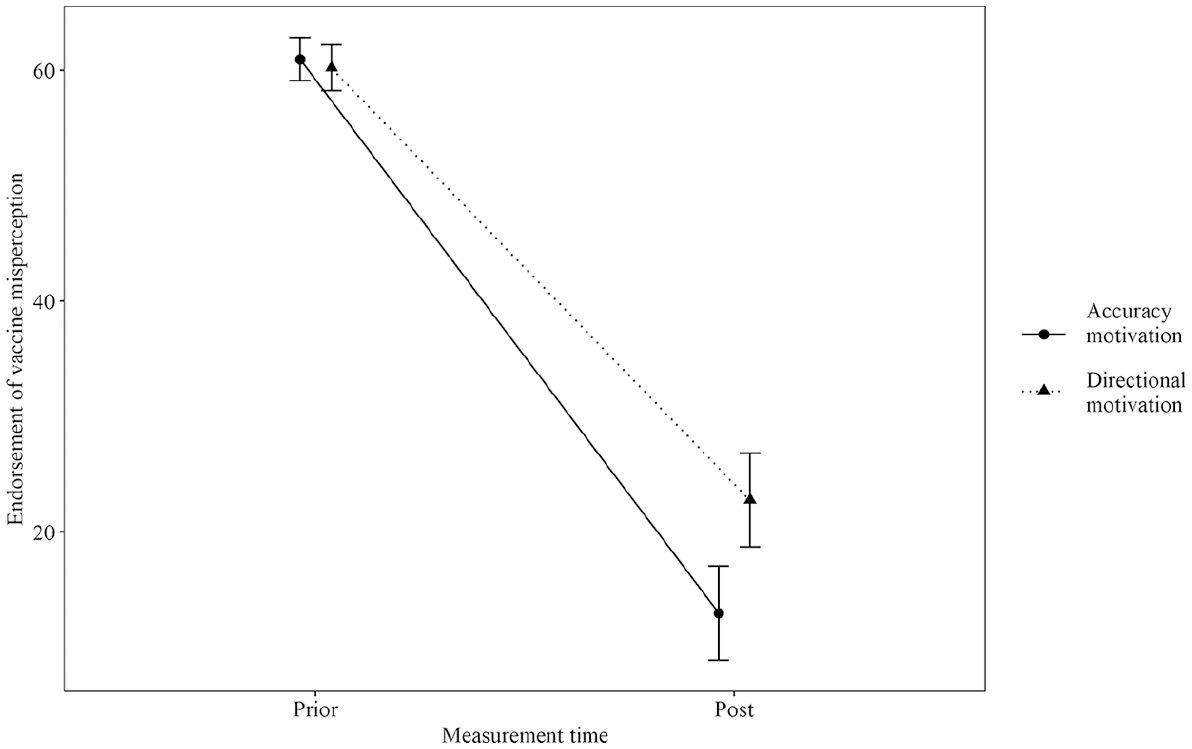

Prior and posterior endorsement of the vaccine misperception separated by motivation condition.

Analysis of the standardized residuals of the ANCOVA model indicated that four observations were classified as model outliers at > 3 SD. Further investigation showed that these four participants all changed their belief in the most extreme way possible; from 100 to −100. Although not preregistered, another ANCOVA was conducted to examine the effect of these four observations on the results. This second ANCOVA, excluding the four model outliers, also yielded a significant main effect of motivation condition on posterior endorsement of the misperception after controlling for prior belief certainty, F(1, 364) = 6.20, p = .013,

Exploratory Analyses

In addition to the hypotheses tests, exploratory analyses were conducted. In all these analyses, model outliers based on standardized residuals >3 SD were removed from the model. For the complete (preregistered) exploratory analyses, see the supplemental material.
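The outlier rule used in these analyses (standardized residuals beyond 3 SD) can be sketched as follows. This simplified version divides each residual by the sample standard deviation of all residuals; statistical packages often also adjust for leverage, so treat it as an approximation rather than the authors' exact computation. The function name `flag_outliers` and the data are hypothetical.

```python
from statistics import stdev


def flag_outliers(residuals, threshold=3.0):
    """Return indices of residuals more than `threshold` SDs from zero."""
    sd = stdev(residuals)
    return [i for i, r in enumerate(residuals) if abs(r / sd) > threshold]


# Made-up residuals: forty small values and two extreme ones.
residuals = [1.0, -1.0] * 20 + [30.0, -30.0]
outliers = flag_outliers(residuals)
print(outliers)
```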

The first of the exploratory analyses was similar to the main analysis, but included all participants, not just those who participated after the updated preregistration. The ANCOVA indicated that the main effect of motivation condition on posterior endorsement of the misperception found in the main analysis was again significant when all observations were included, F(1, 397) = 8.88, p = .003,

Second, we explored differences between the two conditions on policy support for vaccination and intention to vaccinate. Two ANCOVAs, with motivation condition as the independent variable, policy support or intention as the dependent variable, and controlling for prior belief certainty, yielded no significant effect of motivation condition (both ps > .09).

Discussion

Experiment 1 provided support for a causal effect of motivated reasoning on the effectiveness of correcting a vaccine-related misperception. In the face of a corrective science communication message, directional motivated reasoning made participants stick to a misperception more than accuracy motivated reasoning. Contrary to our expectations, the influence of motivation on the effectiveness of the correction was not moderated by certainty of the prior belief. Motivation seems to play a role in the effectiveness of a corrective message, regardless of how certain an individual was of a misperception. Exploratory analyses indicated that there was no effect of motivation on support for policy aimed at increasing vaccination or intention to vaccinate.

This experiment is the first to demonstrate a causal role of motivated reasoning in sticking to misperceptions in the face of corrective information. However, there are two limitations. First, the design of the experiment did not allow us to draw conclusions about the effectiveness of inducing accuracy motivation in correcting misperceptions because we could only compare the accuracy condition to the directional condition. It was unclear whether accuracy motivation would increase the effectiveness of the correction compared with a more natural (no motivational instructions) situation. Second, this experiment addressed one topic: vaccination. It was unclear whether the results would generalize to other topics. Both limitations were addressed in Experiment 2.

Experiment 2: Food Safety

In the second experiment, a food-related misperception was corrected and a control condition was added. First, as in Experiment 1, we expected that inducing accuracy motivation would lead to lower posterior endorsement of the misperception than inducing directional motivation, while controlling for prior belief certainty.

Second, research on motivated reasoning has shown that directional reasoning is likely to be the default reasoning style in information processing (Nyhan & Reifler, 2019). Therefore, we expected that inducing accuracy motivation would lead to lower posterior endorsement of the misperception than not inducing any motivation, while controlling for prior belief certainty.

The second hypothesis from Experiment 1, regarding the interaction between motivation and prior belief certainty, was dropped because of a lack of support for this hypothesis in Experiment 1.

Method

The setup was similar to that of Experiment 1, with the addition of a control condition in which we did not manipulate participants’ motivation in reasoning about the corrective message. Furthermore, the IMC was replaced by an instructed-response item (see Materials and Procedure section). Just as in Experiment 1, the experiment was preregistered on the OSF (https://bit.ly/2Ryop21), and all data and the R script for the analysis can be found on the project page on the OSF (https://bit.ly/2Pv1mlY).

Participants and Design

Again, participants were screened prior to participating in the experiment. We selected only those who indicated that the statement “Food additives indicated with an E number are unsafe to consume” was true. Following a Bayesian sequential sampling procedure with optional stopping and maximum N (Schönbrodt et al., 2017), 1,142 participants (U.K. nationals) were recruited. As in Experiment 1, they each received £0.85 for participating in the experiment. Again, we checked the BF at predetermined intervals during the sequential sampling procedure. We started checking the BF for the hypothesized effect when 50% of the maximum number of participants had completed the experiment and continued to check it after every set of 111 (10% of max sample) new participants. If the BFs for both the accuracy-directional contrast and the accuracy-default contrast indicated strong evidence (BF01 < 0.1 or BF01 > 10; Schönbrodt et al., 2017), data collection would be stopped. Since this was never the case, data collection continued until the maximum N of 1,114 participants, which yielded ~0.95 power to replicate the main effect of Experiment 1 (

We collected data from a total of 1,142 participants rather than the planned 1,114, because 21 failed an attention check, five did not meet the inclusion criterion related to nationality, and one completed the experiment too fast. In accordance with the preregistration, these participants were excluded from the analyses. Of the remaining 1,115 participants (one more than planned), 854 (76.59%) indicated that they endorsed the misperception at the beginning of the experiment. Finally, and just as in Experiment 1, two participants who did not understand the misperception measure were identified post hoc and removed from the data. This resulted in 852 participants (596 female, 253 male, 2 nonbinary, 1 unidentified, Mage = 35.27 years, SDage = 12.29) in the total data set.

The experiment consisted of a one-factor (motivation in reasoning) between-subjects design with three conditions. The directional motivation and accuracy motivation conditions (n = 285 and n = 277, respectively) were the same as in Experiment 1. We added a “default” motivation condition (n = 290), in which we did not manipulate participants’ motivation.

Materials and Procedure

The procedure was similar to that of Experiment 1. The instructions in the default condition (17 words) were to read the text like one normally would. In contrast to the other two conditions, no time restrictions were applied to reading the instructions and the next button did not include any text. The corrective science communication message (362 words) was based on information from the Food Standards Agency (n.d.) and an article published in Food Quality and Preference (Bearth et al., 2014). The text explained that food additives indicated with E numbers are safe to consume and that an E number actually means that the additive passed safety tests. It also gave a short background on food additives and described how an E number is assigned to an additive. An edited graphic about the development of E numbers from The Netherlands Nutrition Centre (n.d.) was included in the text (see Appendix B for the full message).

The IMC was replaced by an instructed-response item, which was placed in the OMC scale. Instructed-response items are useful to identify careless responding (Meade & Craig, 2012). The statement read “To demonstrate you are paying attention, please answer ‘2’.”

Measures

The measures were the same as in Experiment 1, but edited to reflect the topic at hand. We added a question measuring participants’ belief that what is natural is good, measured by agreement with the statement “In general, I consider what is natural to be good” on a 7-point scale from strongly disagree to strongly agree. In addition, we asked them to report their nationality, as a means of checking the screening criterion for nationality. For the complete wording of all the questions, see the supplemental material.

Data Analysis

Both hypotheses were tested by conducting an ANCOVA with posterior belief as the dependent variable, condition as the independent variable, and prior belief certainty as a continuous predictor. The first hypothesis, comparing accuracy motivation to directional motivation, was tested with the subsample of participants in the accuracy and directional conditions (n = 562). The second hypothesis, comparing accuracy motivation to the default motivation, was tested with the subsample of participants in the accuracy and default conditions (n = 567). Again, BFs are reported in the confirmatory analyses.
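As an illustration of the ANCOVA described above, the F test for a two-level condition factor with one covariate can be sketched as a comparison of two least-squares models. This is a minimal sketch on made-up toy data, not the authors' analysis script. The function names and the 0/1 coding of condition are assumptions, and no Bayes factor is computed.

```python
def solve(a, b):
    """Solve the linear system a x = b by Gauss-Jordan elimination."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(n):
            if r != col:
                factor = m[r][col] / m[col][col]
                m[r] = [x - factor * y for x, y in zip(m[r], m[col])]
    return [m[i][n] / m[i][i] for i in range(n)]


def rss(design, y):
    """Residual sum of squares of the OLS fit of y on the design matrix."""
    k = len(design[0])
    xtx = [[sum(r[i] * r[j] for r in design) for j in range(k)] for i in range(k)]
    xty = [sum(r[i] * yi for r, yi in zip(design, y)) for i in range(k)]
    beta = solve(xtx, xty)
    return sum((yi - sum(b * x for b, x in zip(beta, r))) ** 2
               for r, yi in zip(design, y))


def ancova_error_df(n, n_params=3):
    """Error df: n minus intercept, condition dummy, and covariate slope."""
    return n - n_params


def ancova_f(posterior, condition, certainty):
    """F test of condition (coded 0/1), adjusting for the covariate."""
    full = [[1.0, float(c), float(z)] for c, z in zip(condition, certainty)]
    reduced = [[1.0, float(z)] for z in certainty]
    rss_full, rss_reduced = rss(full, posterior), rss(reduced, posterior)
    df_error = ancova_error_df(len(posterior))
    f = (rss_reduced - rss_full) / (rss_full / df_error)
    return f, 1, df_error


# Toy data only (hypothetical values, not from the experiment).
condition = [0, 0, 0, 0, 1, 1, 1, 1]
certainty = [1, 2, 3, 4, 1, 2, 3, 4]
posterior = [10, 12, 13, 15, 4, 7, 8, 9]
print(ancova_f(posterior, condition, certainty))
```

The error degrees of freedom follow directly from the subsample size. With n = 562 and three estimated parameters, the test has 559 error degrees of freedom, consistent with the F(1, 559) reported for the accuracy-directional contrast.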

Results

Manipulation Check

Similar to Experiment 1, one-tailed, independent-samples t tests were conducted to test whether the motivation manipulation had the expected effect on participants’ motivation in reasoning about the corrective text. As expected, on the questions measuring accuracy motivation participants in the accuracy condition (M = 5.50, SD = 1.00) scored significantly higher than participants in the directional condition (M = 4.93, SD = 1.16), t(552.49) = 6.29, p < .001, d = 0.53, 95% CI [0.36, 0.70], and the default condition (M = 5.00, SD = 0.96), t(560.75) = 6.07, p < .001, d = 0.51, 95% CI [0.34, 0.68]. Also as expected, on the questions measuring directional motivation participants in the directional condition (M = 5.06, SD = 1.14) scored significantly higher than participants in the accuracy condition (M = 4.47, SD = 1.20), t(556.57) = 5.96, p < .001, d = 0.50, 95% CI [0.33, 0.67], and the default condition (M = 4.67, SD = 1.09), t(571.05) = 4.18, p < .001, d = 0.35, 95% CI [0.18, 0.51].
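The manipulation-check statistics can be approximately recomputed from the reported means, standard deviations, and condition sizes. The sketch below assumes a Welch (unequal-variance) t test, which is consistent with the fractional degrees of freedom reported, and a pooled-SD Cohen's d. Both choices and the function names are assumptions rather than the authors' documented procedure.

```python
from math import sqrt


def welch_from_summary(m1, sd1, n1, m2, sd2, n2):
    """Welch t statistic and Welch-Satterthwaite df from summary statistics."""
    v1, v2 = sd1 ** 2 / n1, sd2 ** 2 / n2
    t = (m1 - m2) / sqrt(v1 + v2)
    df = (v1 + v2) ** 2 / (v1 ** 2 / (n1 - 1) + v2 ** 2 / (n2 - 1))
    return t, df


def cohens_d(m1, sd1, n1, m2, sd2, n2):
    """Cohen's d using the pooled standard deviation (assumed variant)."""
    pooled = sqrt(((n1 - 1) * sd1 ** 2 + (n2 - 1) * sd2 ** 2) / (n1 + n2 - 2))
    return (m1 - m2) / pooled


# Accuracy motivation scores: accuracy condition (n = 277) versus
# directional condition (n = 285), using the rounded values from the text.
t, df = welch_from_summary(5.50, 1.00, 277, 4.93, 1.16, 285)
d = cohens_d(5.50, 1.00, 277, 4.93, 1.16, 285)
print(round(t, 2), round(df, 2), round(d, 2))
```

Because the inputs are rounded to two decimals, the recomputed values will differ slightly from the reported t(552.49) = 6.29 and d = 0.53. The sketch does not compute the one-tailed p value, which would require a t distribution function.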

Confirmatory Analyses

Replicating the results from Experiment 1 and supporting the first hypothesis, the main effect of motivation condition on posterior endorsement of the misperception, comparing the accuracy and directional conditions, was significant, F(1, 559) = 14.11, p < .001,

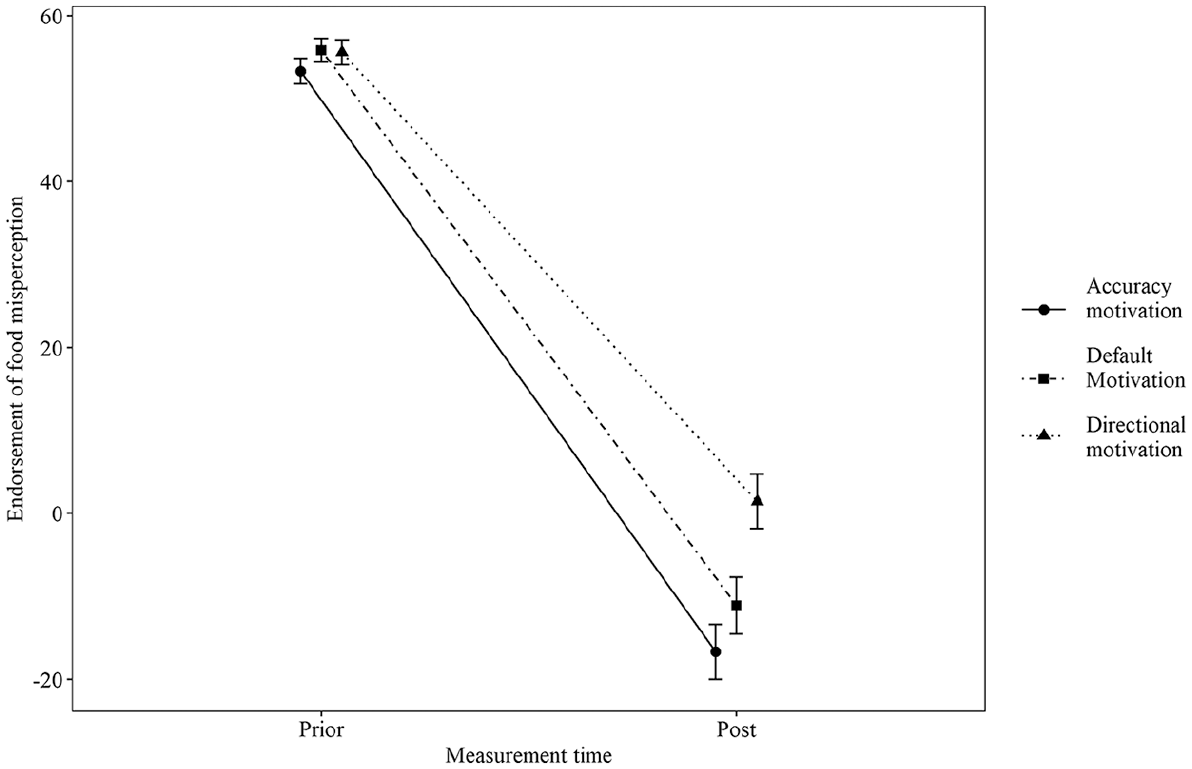

Prior and posterior endorsement of the food misperception separated by motivation condition.

Exploratory Analyses

Again, in all these analyses, model outliers based on standardized residuals > 3 SD were removed from the model. The complete (preregistered) exploratory analyses can be found in the supplemental material.
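The outlier rule mentioned above can be illustrated with a small sketch. It assumes that residuals are standardized by the residual standard error of the fitted model and that the cutoff applies to the absolute value. The source does not spell out either detail, and the function names are hypothetical.

```python
from math import sqrt


def standardized_residuals(residuals, n_params):
    """Divide each residual by the residual standard error, sqrt(RSS / (n - p))."""
    n = len(residuals)
    s = sqrt(sum(r * r for r in residuals) / (n - n_params))
    return [r / s for r in residuals]


def keep_mask(residuals, n_params, cutoff=3.0):
    """True for observations retained, False for model outliers beyond the cutoff."""
    return [abs(z) <= cutoff
            for z in standardized_residuals(residuals, n_params)]


# Toy residuals (hypothetical): twenty small values and one extreme value.
toy = [1.0, -1.0] * 10 + [50.0]
mask = keep_mask(toy, n_params=3)
print(sum(mask), "of", len(toy), "observations retained")
```

In practice the model would be refitted on the retained observations, which is why exploratory degrees of freedom can differ from those implied by the full subsample.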

First, also as per the preregistration, we explored the remaining contrast by comparing posterior endorsement of the misperception in the directional condition to the default condition (n = 575). An ANCOVA, similar to the main analysis, indicated that participants in the default condition (M = −11.12, SD = 58.11) displayed significantly lower posterior endorsement of the misperception than participants in the directional condition (M = 1.44, SD = 55.41), F(1, 572) = 8.15, p = .004,

Second, we explored differences between the accuracy and the directional conditions on (1) support for policy aimed at reducing the use of E numbers in food products and (2) intention to avoid the consumption of food products with E numbers in them. Both ANCOVAs, with condition as the independent variable, policy support or intention as the dependent variable, and controlling for prior belief certainty, yielded a significant effect of motivation condition. Regarding policy against E numbers, participants in the accuracy condition (M = 4.81, SD = 1.51) scored significantly lower than participants in the directional condition (M = 5.30, SD = 1.52), F(1, 559) = 13.60, p < .001,

Finally, we explored the hypothesized interaction effect between prior belief certainty and motivation condition from Experiment 1. We conducted the same ANCOVA as in Experiment 1 with only the accuracy and directional conditions. Again, the ANCOVA yielded a nonsignificant interaction effect (p = .430), meaning that the effect of motivation on posterior endorsement of the misperception was not moderated by prior belief certainty.

Discussion

Experiment 2 replicated and extended the findings from Experiment 1. Again, we found support for a causal role of motivated reasoning in the effectiveness of correcting a misperception, this time for a misperception related to food safety, thereby supporting the generalizability of these results. Contrary to our expectations, we did not find that inducing accuracy motivation strengthened the corrective effect of the science communication message compared to the default motivation. Instead, exploratory analyses indicated that the default motivation led to lower posterior endorsement of the misperception than directional motivation. In contrast to Experiment 1, the results indicated that the effect of motivation on posterior endorsement of the misperception was reflected in a difference in policy support and intention. Accuracy-motivated participants reported lower support for policy aimed at reducing the use of E numbers in food products and a lower intention to avoid the consumption of food products with E numbers in them than directionally motivated participants. This difference was (partly) explained by the change in endorsement of the misperception.

General Discussion

The current research investigated the role of motivated reasoning in the effectiveness of correcting misperceptions about scientific facts. We found evidence for a causal role of motivated reasoning in the effectiveness of corrections, such that individuals who were driven by accuracy motivation were more receptive to the corrective information than individuals driven by directional motivation. Contrary to what we expected, the effect of motivation was not moderated by prior belief certainty. Whether in great doubt or very certain, motivation in reasoning about corrective information seems to affect how amenable one is to this information. Also contrary to our expectations, we found that in the case of a food-related misperception, individuals’ default motivation made them just as receptive to the corrective information as accuracy motivation. Directional reasoning made individuals stick to the misperception more than accuracy-driven reasoning or default reasoning. Finally, our findings demonstrated that the influence of motivation was not limited to the misperception itself. Specifically, the difference in posterior endorsement of the misperception between accuracy-motivated and directionally motivated individuals was reflected in second-order outcomes: Support for policy aimed at reducing E numbers in food products and intention to consume fewer E numbers were lower among accuracy-motivated individuals than among directionally motivated individuals. This was not the case for Experiment 1, which might be explained by lower power as well as the fact that the average correction was larger in Experiment 2 than in Experiment 1.

These findings are cause for a more optimistic view of human receptivity to science communication than often found in the literature. Although we did find that directional reasoning might reduce the corrective effect of a science communication message, we found no evidence for the prevailing assumption in research on motivated reasoning that corrections often backfire (see Hart & Nisbet, 2012; Nyhan et al., 2013; Nyhan & Reifler, 2010; Zhou, 2016). There has been some debate about this backfire effect (Druckman & McGrath, 2019). In line with other research (Garrett et al., 2013; Guess & Coppock, 2018; Hill, 2017; van der Linden et al., 2018; Wood & Porter, 2019), we found no evidence of such a polarizing effect of our science communication message. To the contrary, our findings demonstrated that individuals are not automatically predisposed to defend their prior beliefs in the face of corrective information. Only when we induced a directional motivation did participants stick to the misperception more than participants would by default. Moreover, even individuals who were induced to have a directional motivation on average reduced their endorsement of the misperception, rather than increasing it.

How can these contrasting findings be explained? As suggested in the original work on motivated reasoning by Kunda (1990), the influence of directional reasoning is limited by people’s perceptions of reality and plausibility. Clear, one-sided information regarding factual beliefs is unlikely to lead to backfire effects (Flynn et al., 2017). In line with this, research demonstrates that biased updating requires, in addition to motivation, at least some level of ambiguity or balance in the information (Dixon & Clarke, 2013; Dixon et al., 2015; Sharot & Garrett, 2016) or should concern “softer” outcomes such as candidate favorability (Nyhan et al., 2019). Instead of backfiring, corrective information can be very effective if it “hits [people] between the eyes” (Kuklinski et al., 2000). In line with this idea, our message directly and very specifically contradicted the misperception. The current research demonstrated that a clear corrective message regarding a factual belief is likely to be effective in correcting misperceptions.

The current study provided the first step in investigating the causal role of motivated reasoning in sticking to misperceptions and in finding out whether this role is similar for different contentious issues (i.e., vaccine and food safety). There are, however, some limitations. First, because of demand characteristics in the motivation manipulation, part of the difference in posterior belief between the experimental conditions might be a result of response bias. Participants may have chosen to satisfy the researcher’s expectation, thereby biasing the main results of the experiments. As with most research relying on self-report measures, this cannot be ruled out completely (though see Mummolo & Peterson, 2019). However, we have reason to believe that demand characteristics do not explain the current findings. Participants were told, when their posterior endorsement of the misperception was measured, that we were interested in their judgment and that this was not a test. If anything, a participant motivated to satisfy the researcher should in this case answer as honestly as possible. Furthermore, Prolific is known as a platform that treats its participants fairly. Participants knew that they would be paid for participating in research, regardless of whether they satisfied the researchers. Finally, there was no interaction between participant and researcher in the experiments, which would further reduce demand characteristics.

Then, there are a number of smaller limitations. The first concerns the ecological validity of our motivation manipulation. We simply asked participants to process the information in such a way that it resembled either accuracy-motivated or directionally motivated reasoning. This is not a real-life situation. Future research could make the motivation manipulation more ecologically valid, for instance, by investigating an appeal to accuracy motivation as part of the corrective message or by investigating directional motivation primes in a more complex information environment. Second, in the design of the current experiments we assumed that participants would be exposed to corrective information. However, a part of motivated reasoning is motivated selection of information (Knobloch-Westerwick & Meng, 2009). In research that manipulated motivation in information selection, researchers found different preferences for information as a product of accuracy motivation and defense motivation (similar to directional motivation; Winter et al., 2016). Although the debate on selective exposure is far from settled (cf. Garrett, 2009), the first challenge is to get people to read corrective information. The current research provides information only on what happens when this exposure is achieved in the first place.

The current study suggests some directions for future research. First, regarding the development of an intervention fostering accuracy motivation, it would be valuable to know which part of the motivation manipulation led to a difference in the corrective effect of the message. Was it being evenhanded, considering the opposite view (also see Lord et al., 1984), looking for disconfirmation, or a combination of all of those things? Second, a test of the “between the eyes” effect of a correction would provide more insight into the conditions of belief polarization. Including both an ambiguous and a clear correction in a paradigm such as the one we used should be of great interest for the debate on backfire and boomerang effects. Finally, research on motivated reasoning could benefit from a measure of the type of motivation in reasoning. Currently, indicators of motivation such as political ideology and worldview are often used. These indirect measures are useful, but a direct measure of motivation should be more valuable. Ideally, this measure could also be used to investigate individuals’ default response to different types of information, potentially uncovering a motivated reasoning “trait.”

Misperceptions can cause serious problems. This research focused on vaccine and food safety, but the results are expected to also apply to other hotly debated topics, such as climate change, gun control, or genetic modification of food. If there is a motive to hold on to a misperception, it will play an important role in correcting that misperception. At the same time, this research supports an optimistic view of people’s receptivity to science communication. By default, we are open to new information and are likely to change our beliefs when the information leads us to do so.

Supplemental Material

Supplemental material, CorrectingMisperceptions_SupplementalMaterial_accepted for Correcting Misperceptions: The Causal Role of Motivation in Corrective Science Communication About Vaccine and Food Safety by Aart van Stekelenburg, Gabi Schaap, Harm Veling and Moniek Buijzen in Science Communication

Footnotes

Appendix A

Appendix B

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

Author Biographies

References
