Abstract

Concurrent operant assessments (COAs) offer a flexible model for evaluating instructional preferences for students with persistent, escape-motivated interfering behavior. In this article, we define the critical features of COAs and review what types of questions they can address for classroom educators. We then identify and describe a series of steps for planning and conducting COAs with supporting examples. Finally, we discuss how results can be used to inform instruction and intervention.

Every student is entitled to an engaging instructional environment that promotes their willing and active participation in learning activities. This applies to all students, including those who engage in behaviors that interfere with academic instruction. Such interfering behaviors can range from persistent off-task or disruptive behavior to higher-risk behaviors, such as property destruction, physical aggression, and elopement (i.e., leaving a designated area or classroom without permission). The function or purpose these behaviors serve varies by student and context. However, when interfering behaviors reliably occur during certain instructional activities, they are likely motivated, at least in part, by avoiding or escaping some aspect of instruction (Filter & Horner, 2009; Kern & Clarke, 2021).

Escape-motivated interfering behavior can set into motion cycles of negative reinforcement that affect the quantity and quality of teacher–student interactions (Gunter & Coutinho, 1997). Consider, for example, a student who swipes materials off their desk when presented a reading assignment. The teacher might respond by asking the student to pick up their materials, going to help a different student with the assignment, or even sending the student to the office. Any of these well-intentioned consequences result in the student delaying or avoiding the reading assignment. If the student’s interfering behavior is motivated by avoiding reading tasks, any of these consequences will only make the student more likely to engage in interfering behavior the next time they are presented with a similar task. Additionally, the teacher may begin withholding or diluting academic instruction to avoid triggering the student’s interfering behavior. Over time, this cycle can lead to fewer positive teacher–student interactions, fewer learning opportunities for the student, and a need for intervention (Gunter & Coutinho, 1997; Hirn & Scott, 2014).

Leveraging Student Choice

One way to promote engaging environments for all students—including those with interfering behavior—is to provide them opportunities to exercise agency in their own learning. When students can make choices about the aspects of their instructional environment, they are more willing to engage in learning activities (Gushanas & Smith, 2023; Kern & Clarke, 2021). The provision of instructional choice is one low-intensity antecedent strategy that has been shown to increase academic engagement and decrease disruptive behaviors for students with or at risk for a range of academic and behavioral challenges (Ennis et al., 2020; Royer et al., 2017).

For example, teachers might give students opportunities to choose the type of writing utensil they use for a written task, the order in which they complete a series of assigned tasks, the type of seat or seat location for a lesson, or what book to read during independent reading (Kern & Clarke, 2021; Lane et al., 2018). Although incorporating teacher-selected choice opportunities can be an effective preventive strategy for many students in the classroom, it may not be sufficient to meet the needs of students with persistent, escape-motivated interfering behaviors (e.g., Ennis et al., 2018; Lane et al., 2015). These students may benefit from individualized assessments to evaluate their unique preferences related to specific aspects of the instructional environment. Then, those preferences can be incorporated into the instructional routines in which these students struggle.

In this article, we present an overview of a choice-based assessment used to directly evaluate a student’s relative preferences among the aspects of their learning environment. This assessment, known as a concurrent operant assessment (COA), allows a systematic evaluation of instructional preferences for students with escape-motivated interfering behavior who need individualized intervention. Because these assessments are individualized, they require more effort than antecedent-based choice strategies, which typically involve presenting students with choices and asking them which one they prefer. Some students, however, may lack the expressive communication skills to verbally report their preferences. For these students, COAs can be used to directly assess preference among different activities, while also offering a context for practicing the skill of making choices (Deel et al., 2021). Additionally, regardless of a student’s communication skills, it may be difficult for them to report on certain preferences without directly experiencing each option. For example, when given a choice between “working a little bit to earn short breaks” and “working a lot to earn long breaks,” a student might report they prefer the option with the longer breaks. Yet after experiencing each contingency, they might find they actually prefer the option with shorter (and more frequent) breaks.

Use of COA has the potential to help educators and other school specialists design learning conditions that promote engagement for the students who struggle most during instruction. These assessments also lend agency to the student by offering alternative ways to express their wants, needs, and preferences without having to rely on interfering behavior. In the sections that follow, we provide an overview of COAs and their potential for applications by educators in schools. Then, we narrow in to identify what educators can learn from COAs and describe a step-by-step process for planning and implementing the assessment. Finally, we discuss how to connect results of a COA to instruction and intervention.

What Is a Concurrent Operant Assessment?

The term concurrent operants comes from the field of behavior analysis. It refers to a scenario in which two or more alternatives are simultaneously available (Catania, 2013). Thus, a COA is an assessment that involves a series of choice opportunities. Specifically, a student is presented with a series of choices among two or more activities or conditions that are simultaneously available. They indicate their choice by engaging in one of the activities, though they are free to shift to another designated activity (condition) at any time. An educator tracks how much time the student spends in each condition. The amount of time spent is used to determine relative preferences among those conditions. The condition in which the student spends more time is identified as more preferred relative to the other available options. The student’s preferences can then be incorporated into instruction and intervention.

Concurrent Operant Assessments in Research and Practice

Research on applications of COAs to inform intervention has come largely from the behavior analytic literature. Many studies have taken place in clinical settings with children and adolescents with developmental disabilities. However, COAs have also been evaluated in elementary school settings and included students with emotional and behavioral disorders, students with learning disabilities, and students without disabilities who struggle academically or behaviorally (e.g., Lannie & Martens, 2004; Lloyd et al., 2020; Martens et al., 2003; Quigley et al., 2013; Staubitz et al., 2022). In addition, although most studies evaluating COAs in school settings have included researchers as implementers, others (e.g., Lloyd et al., 2022) have meaningfully involved educators in both planning and implementation. With respect to application, COAs have been used to inform aspects of academic and behavioral interventions, such as task difficulty and reinforcer quality (Lloyd et al., 2020; Quigley et al., 2013). Additionally, COAs have been incorporated as a component of intervention to give students a safe way to escape instruction without relying on interfering behaviors (e.g., Staubitz et al., 2022).

To our knowledge, COAs are not commonly used by educators in schools. Results of one survey study, however, suggested school practitioners who support students with interfering behavior had favorable impressions of the acceptability, feasibility, and utility of these assessments (Lloyd et al., 2021). Moreover, several aspects of COA procedures make them appropriate—and even uniquely suited—for implementation by educators and school specialists who support students with persistent interfering behavior.

First and foremost, COAs do not require evoking any form of interfering behavior to interpret results. In fact, COA procedures make these behaviors unlikely to occur at all, as the student is free to choose which activity they experience throughout the assessment. Second, COAs create a context for new teacher–student interactions that are distinct from the negative interaction patterns that commonly happen around interfering behavior in the classroom (Lei et al., 2016). Not only do they require one-on-one attention without any attempts to trigger interfering behavior, but they involve a shift in roles. That is, within COA, the student exercises control over which conditions or activities they experience (within teacher-specified options), and the teacher honors those choices. Third, COAs are flexible and efficient. As discussed more in the following sections, they can be designed to inform preference related to various aspects of a student’s learning environment, and results can be incorporated quickly into instruction and intervention.

What Can Educators Learn From a Concurrent Operant Assessment?

So far, we have identified the COA as a tool educators and school specialists can use to learn about a student’s needs and preferences related to their instructional environments. Now we turn to identifying four primary aspects of a learning environment COAs can inform. These include instructional tasks, contextual features of instruction, play/leisure activities, and work-to-earn contingencies.

With respect to instructional tasks, COAs can be used to identify a hierarchy of preferences among two or more tasks that differ in subject area, skill domain, or level of difficulty. For example, when given the option of working on letter–sound identification or phonological awareness tasks, a student might reliably choose to work on letter–sound identification, indicating a preference for that task relative to phonological awareness tasks. Instructional tasks address the “what” of instruction, whereas contextual features focus on the “how” of instruction and refer to ways instruction can be delivered. Contextual features might include instructional format (e.g., independent work, one-on-one instruction, partner work), how often opportunities to respond are provided, or the level of task structure.

A COA can be designed to focus solely on contextual features (holding the instructional task constant) or might assess preference among different combinations of task and context. For example, when given the choice between working on letter–sound identification independently and phonological awareness with one-on-one help from an adult, the same student referenced above might choose the phonological awareness task. This outcome would suggest the student is willing to engage in less-preferred tasks when working in a one-on-one format. It might also point to task difficulty as a relevant distinction between more- and less-preferred tasks. Evaluating student preferences among both task types and contextual features can inform instructional conditions most likely to promote willing task engagement.

The other two aspects of the learning environment relate less to instruction and more to reinforcement—or how to arrange consequences for task engagement that will support continued engagement. One aspect of reinforcement focuses on identifying what types of play or leisure activities are most preferred by the student (i.e., reinforcer quality). This can include what materials are available (e.g., action figures, iPad), who the play partners are (e.g., teacher, peer, no partner), and even the style of interaction by play partners (e.g., watching and commenting on student play vs. engaging in parallel play). Thus, a COA can be designed to identify a hierarchy of preference among different play conditions, indicating which play condition is most likely to reinforce task engagement and completion.

The fourth aspect of the learning environment we highlight is work-to-earn contingencies. Aspects of work-to-earn contingencies include the amount of work required to access reinforcement, the amount of reinforcement provided for meeting the work requirement, or how long students have to wait before accessing reinforcement. For example, a COA might be designed to evaluate whether a student prefers to work for 3 min to earn a short (e.g., 1-min) break, or work for 10 min to earn a longer (e.g., 5-min) break.

Preferences for work-to-earn contingencies can also be evaluated in combination with any of the other three components of learning environments (i.e., instructional tasks, contextual features, play/leisure activities). For example, an educator might use a COA to evaluate whether a student willingly chooses to work independently on phonological awareness tasks when they complete five tasks to earn 2 min of free play versus working with a partner on letter–sound identification when they must complete 10 tasks to earn 2 min of free play. These types of COAs can help educators identify what contingencies to program and for which tasks, increasing the likelihood the student will stay motivated to engage with those tasks.

How Are Concurrent Operant Assessments Conducted?

In this section, we present a step-by-step description of procedures for planning and implementing COAs, which includes collecting data and interpreting results. We recommend educators partner with a school specialist (e.g., behavior analyst, counselor, instructional coach) or other classroom personnel (e.g., another general or special education teacher, paraeducator) when planning and conducting a COA. Incorporating multiple perspectives can facilitate assessment planning. Dividing responsibilities can make implementation more feasible.

Planning

The planning process involves five steps, beginning with identifying assessment goals and ending with gathering assessment materials.

Step 1: Identify assessment goals

When planning a COA, the first step is to identify one or more goals for the assessment. As discussed earlier, COAs are flexible and can be used to address a wide range of goals that are connected to instruction or intervention. For example, one goal might be to identify a simple hierarchy of preference among a set of instructional tasks or play/leisure activities. Such preference hierarchies could then be used to inform how to schedule or sequence instructional tasks to promote engagement or to identify which play activities to incorporate into a reward system (see Connecting Results to Instruction and Intervention).

Other goals might be tied to a specific instructional task that the student has a history of avoiding. For example, the goal might be to identify what contextual features of instruction or which work-to-earn contingencies promote their willing engagement with the non-preferred instructional task. Once you have established the goal(s) of the assessment, the following steps should be completed with these goals in mind.

Step 2: Identify one or more adults to conduct the assessment

At least one adult is needed to carry out the COA, but in many cases, having two adults is preferable. One adult plays a facilitator role. They introduce the choices at the start of each session, provide reminders to the student as needed, and collect data on student behavior (see Data Collection). The other adult, the implementer, is available to carry out any choice conditions that involve instruction, play, or other activities requiring adult interaction. Ideally, the implementer should be a teacher (or other school staff member) who typically interacts with the student during relevant instructional routines. Otherwise, preferences identified with a less familiar adult might not carry over to the usual classroom setting. The role of the facilitator is more flexible, and it might be played by a school specialist or another classroom educator. We recommend starting a COA with two adults. Once an educator gains familiarity with COA procedures, they are more likely to be able to fill both the facilitator and implementer roles, especially for COAs with few and simple choice conditions.

Step 3: Select the assessment location and set up choice stations

The most appropriate location for assessment depends in part on the type of COA conducted. Simple ones, such as those evaluating preference among three instructional tasks, could likely be completed in a student’s usual classroom setting. More complex variations (e.g., COAs designed to identify what contextual features and work-to-earn contingencies promote engagement with previously avoided tasks) or those involving activity choices that would distract other learners are best implemented in a separate setting in the school building. Such settings might include a general education classroom when peers are not present, a designated area of a self-contained classroom, a conference room, or even the school library or cafeteria when they are not otherwise in use.

Although there are variations on COA procedures (e.g., concurrent chains arrangement; Auten et al., 2024), we present a model in which each choice condition is assigned to a distinct physical location and the student indicates their choice by moving to the location in which the corresponding activity is present. Thus, the assessment setting should accommodate at least two distinct physical spaces, or choice stations, to which each set of conditions will be assigned. Stations might include different chairs at a table, separate desks, or areas of the room separated by furniture or painter’s tape. The types of preference you will be evaluating should inform the type of choice stations you select. For example, different chairs at one worktable might be a good fit for preferences among different contextual features of instruction, whereas using tape to demarcate different areas of a room would be appropriate for preferences among different play activities.

Technically, you only need two choice stations to evaluate preference. However, we recommend including a third “break” station that is always available, where the student can access a break on their own at any point during the assessment. This “break station” offers students a safe, reliable, and concrete way to communicate they are not willing to participate in either of the activities presented. Also, when a student chooses the break station over programmed activity choices, this information helps the educator know one or both activities should be adjusted to make them more preferred.

Finally, in addition to setting up choice stations, the facilitator should also identify a starting point, or specific location at which each session will begin. The starting point should be separate and relatively equidistant from each choice station to ensure the procedures do not bias the student toward choosing one station over another. For example, if you start a session when the student already happens to be at a certain choice station, and they stay there, it is unclear whether they actively chose that condition or simply did not respond to the facilitator’s prompt to make a choice. Example starting points include having the student stand behind the chairs that represent each choice station, on a marked area of the floor a few feet from the stations, or in the doorway of the classroom.

Step 4: Map out choice conditions and a sequence for presentation

Although some choice comparisons might be determined or adjusted during a COA based on student choice patterns, it is important to map out a plan for which general choice conditions will be presented, and in what sequence. The selection of choice conditions will depend on the primary goal of the COA. If the goal is to determine a hierarchy of preference among different instructional tasks, select a set of two to four instructional tasks (all of which the student has the basic skills to complete) and present two at a time as choice conditions.

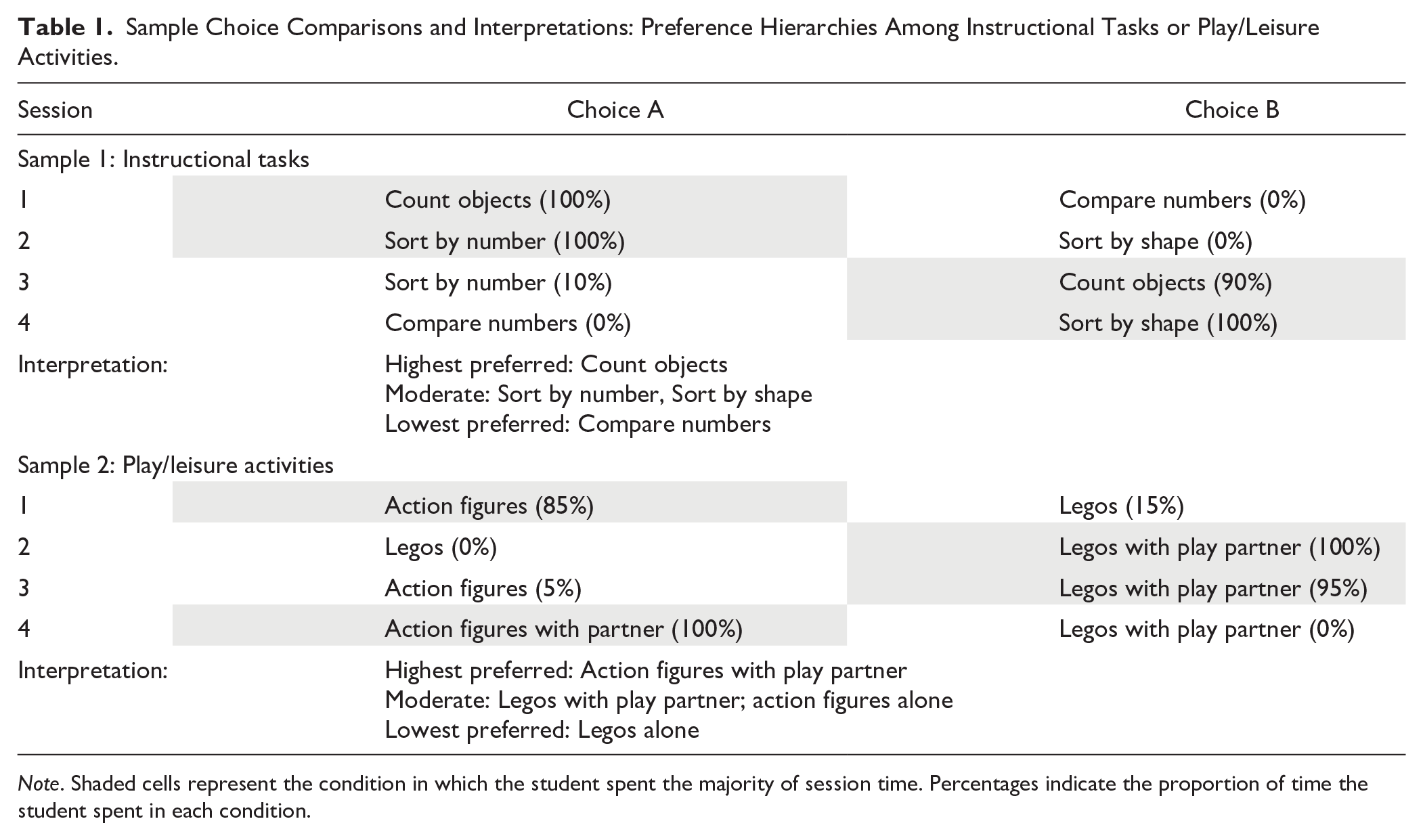

When assessing preference among a smaller set of choice conditions (e.g., three), you might present each possible pair of conditions (e.g., Choice A vs. Choice B; Choice A vs. Choice C; Choice B vs. Choice C). However, doing so with COAs involving a larger set of choice conditions would be time-intensive. Instead, you might continue to compare the most-preferred choice condition to each new choice condition you introduce. An example sequence of choice conditions for evaluating preference among instructional tasks is presented in Table 1 (see Sample 1). Similarly, if the goal is to determine a hierarchy of preference among different play/leisure activities, you would select a set of two to four play conditions and present them two at a time as choice conditions (see Table 1, Sample 2).
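The two sequencing strategies described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the function names, the task labels, and the `pick_preferred` callback (a stand-in for running one session and noting which condition the student preferred) are hypothetical and not part of any published COA protocol.

```python
from itertools import combinations


def all_pairs(conditions):
    """Return every possible two-condition comparison, in listed order."""
    return list(combinations(conditions, 2))


def run_favorite_sequence(conditions, pick_preferred):
    """Compare the running most-preferred condition against each new one.

    pick_preferred is a hypothetical callback standing in for one session;
    it receives two conditions and returns the one the student preferred.
    """
    favorite = conditions[0]
    sessions = []
    for new_condition in conditions[1:]:
        pair = (favorite, new_condition)
        sessions.append(pair)
        # The preferred condition carries forward to the next comparison.
        favorite = pick_preferred(*pair)
    return sessions, favorite


# Illustrative task labels only.
tasks = ["letter tracing", "word problems", "reading fluency", "spelling"]
print(len(all_pairs(tasks)))  # number of sessions if every pair is presented
```

With four conditions, presenting every pair requires six sessions, whereas carrying the most-preferred condition forward requires three. The trade-off is that the shorter sequence identifies a most-preferred condition but does not directly compare every pair.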

Table 1. Sample Choice Comparisons and Interpretations: Preference Hierarchies Among Instructional Tasks or Play/Leisure Activities.

Note. Shaded cells represent the condition in which the student spent the majority of session time. Percentages indicate the proportion of time the student spent in each condition.

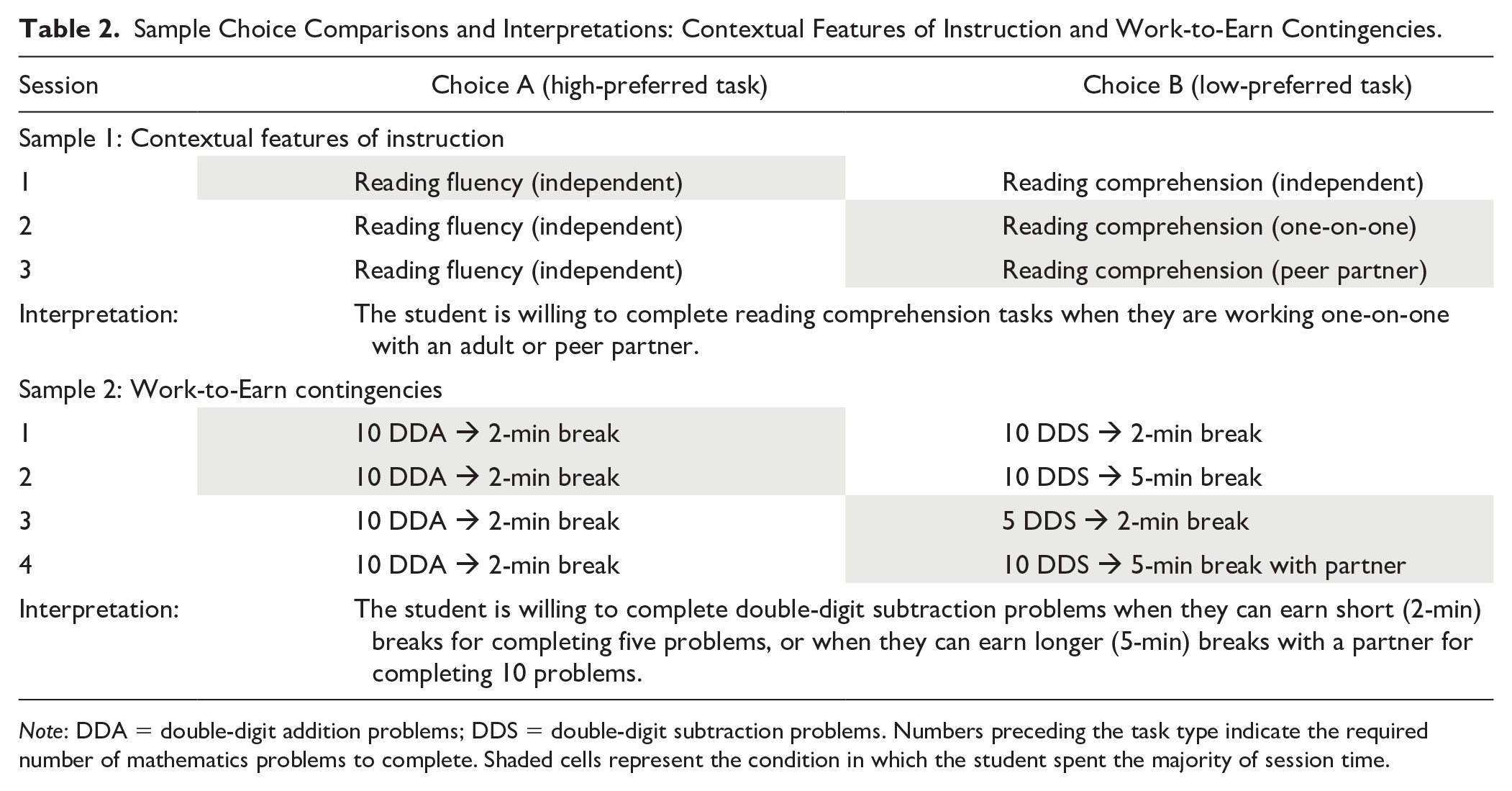

If you have already identified an instructional task the student commonly avoids, the goal might be to identify which contextual features or work-to-earn contingencies will promote engagement with the non-preferred task. In this case, you might include this task at one station and a more-preferred task at another station. Then, you would systematically add various contextual features or rewards to the less-preferred task condition to identify which are successful in shifting the student’s preference from the originally more-preferred to the less-preferred task. Example sequences of conditions for each of these COA types are provided in Table 2 (see Samples 1 and 2).

Table 2. Sample Choice Comparisons and Interpretations: Contextual Features of Instruction and Work-to-Earn Contingencies.

Note. DDA = double-digit addition problems; DDS = double-digit subtraction problems. Numbers preceding the task type indicate the required number of mathematics problems to complete. Shaded cells represent the condition in which the student spent the majority of session time.

As you plan the choice conditions, you should also decide how long each session (i.e., choice condition comparison) will last. We recommend choosing brief (e.g., 3- to 5-min) sessions for efficiency. However, certain conditions—especially those comparing different work-to-earn contingencies—may need to be extended to ensure the student has time to complete the work requirement and contact the programmed reinforcer.

Step 5: Gather materials

Aside from gathering relevant instructional and play materials needed to implement the selected choice conditions, additional materials may be needed to set up choice stations. These might include a work table, a specific number of desks and chairs, or brightly colored painter’s tape to mark clear boundaries between stations. With respect to data collection, a stopwatch (or device with a stopwatch application) and data collection sheets will be needed (see Data Collection).

Implementation

Before starting the COA, an adult should introduce the upcoming assessment to the student. For example, the COA facilitator might say: “Today we’re going to ask you to make some choices. First, I’ll tell you about each choice. Then, we’ll stand together in the doorway, and when I say ‘go,’ you can move to whichever station you want. You can also move to a different station whenever you want to. Are you ready to make some choices?”

The adult should also ask if the student has any questions, and then answer those questions.

If the student shows any resistance to engaging with the COA—either at the outset or by repeatedly choosing the break station despite modifications to programmed choice conditions—we recommend allowing the student to return to their regularly scheduled activity and trying again at another time. Forcing the student to engage in this assessment when they do not want to is not only inconsistent with the spirit of the COA (to promote student choice and autonomy) but is also unlikely to yield interpretable information about their preferences.

Before starting each session, the facilitator should arrange any necessary instructional or play materials at each choice station. For any conditions involving instruction or interaction with an educator, the educator should be seated or otherwise present at that choice station. Then, the facilitator introduces the available choices to the student. They might say, “At this desk, you can work on letter tracing on your own. At that desk, you can work on word problems with Ms. Brooks. Remember, you can move to a different desk whenever you want to. You can also go to the carpeted area if you need to take a break.”

How choices are introduced should depend on the student’s receptive communication skills. Some students will understand a spoken description of what will happen at each station, while others might need more explicit introductions to each condition (e.g., walking to each station and pointing to the tasks or materials available while describing what will happen there). Some students—including those with receptive language deficits—may benefit from briefly experiencing each condition to understand their options. For these students, the facilitator might guide them to each station and let them experience 30–60 s of each condition before asking them to make a choice. These extra steps might only be necessary at the beginning of the COA, or when new conditions they have not yet experienced are first introduced.

After describing the student’s choices, the facilitator guides the student to the starting point and prompts them to make a choice or to move to whichever station they would like. Once the student enters a station (which might be defined by both of their feet crossing the threshold of a taped-off area or sitting in a certain chair), the programmed activity should be implemented. For example, if the student enters a station with an iPad available, the facilitator or educator should make sure that the iPad remains available for as long as the student stays at that station. If the iPad is only available at that station, and the student attempts to bring it to a different station where only teacher attention is available, the facilitator should step in and remind the student that the iPad has to stay at that station. As another example, if the student enters a station that involves one-on-one instruction on reading fluency tasks, the educator should begin instruction as soon as the student enters the station (and stop instruction when the student leaves the station). At the end of each session, the facilitator clears or resets each station, guides the student back to the starting point, and presents the next set of choices.

Depending on the chosen session duration, the facilitator might provide a reminder halfway through the session (especially if the student has only been at one station) that the student can stay where they are or move to another station whenever they would like to. Such reminders can also be helpful if the student shows any signs of agitation or starts engaging in interfering behavior. For example, if a student is working on a writing task at one station and suddenly starts tearing the sheet of paper, a facilitator might say: “Remember, you can move to the other station whenever you want or you could go take a break.” If for any reason behavior begins to escalate, we recommend ending the session. Once the student is calm, the facilitator should check in with the student to see if they would like to return to regularly scheduled activities or continue with the choice assessment.

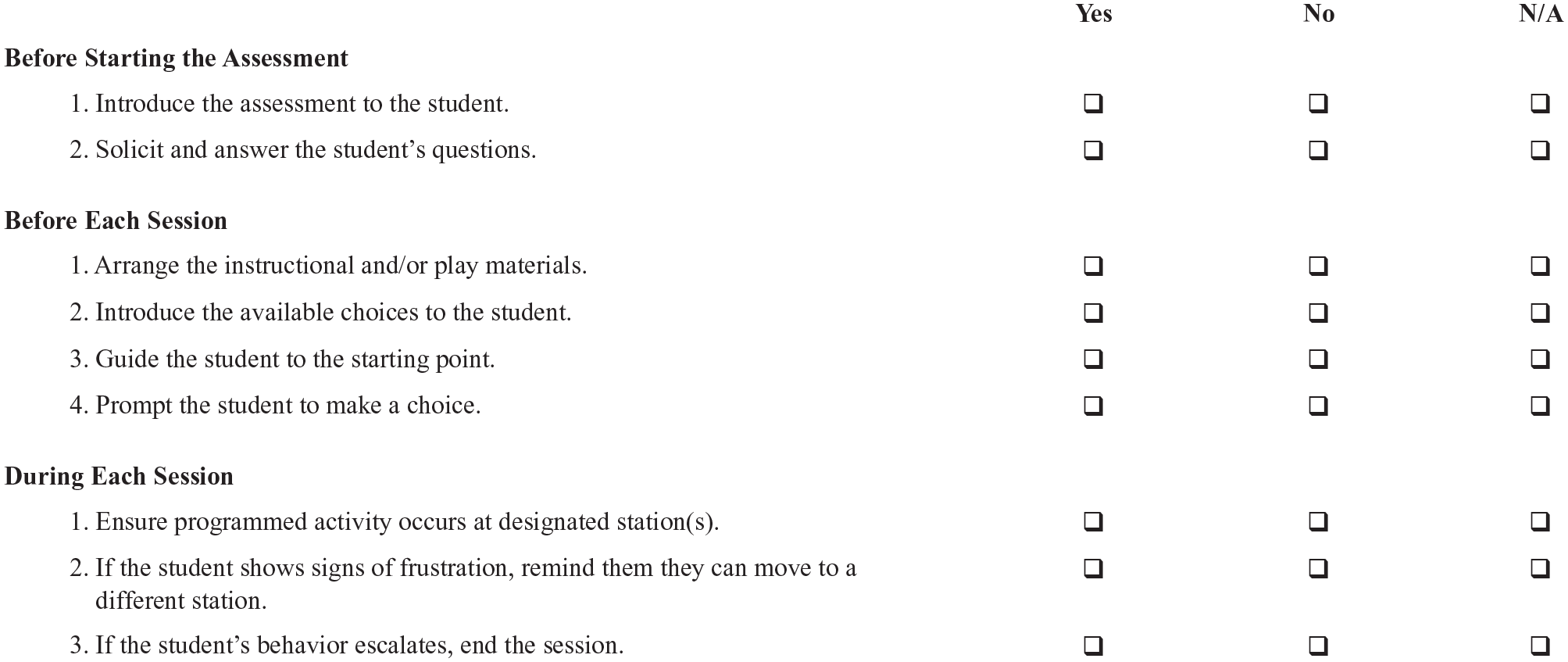

Simple implementation checklists can be used by educators and school specialists to support accurate implementation of assessment procedures (see Figure 1 for a sample checklist). Such tools might be especially useful the first few times educators implement COAs.

Figure 1. Sample Implementation Checklist: Concurrent Operant Assessment.

Data Collection

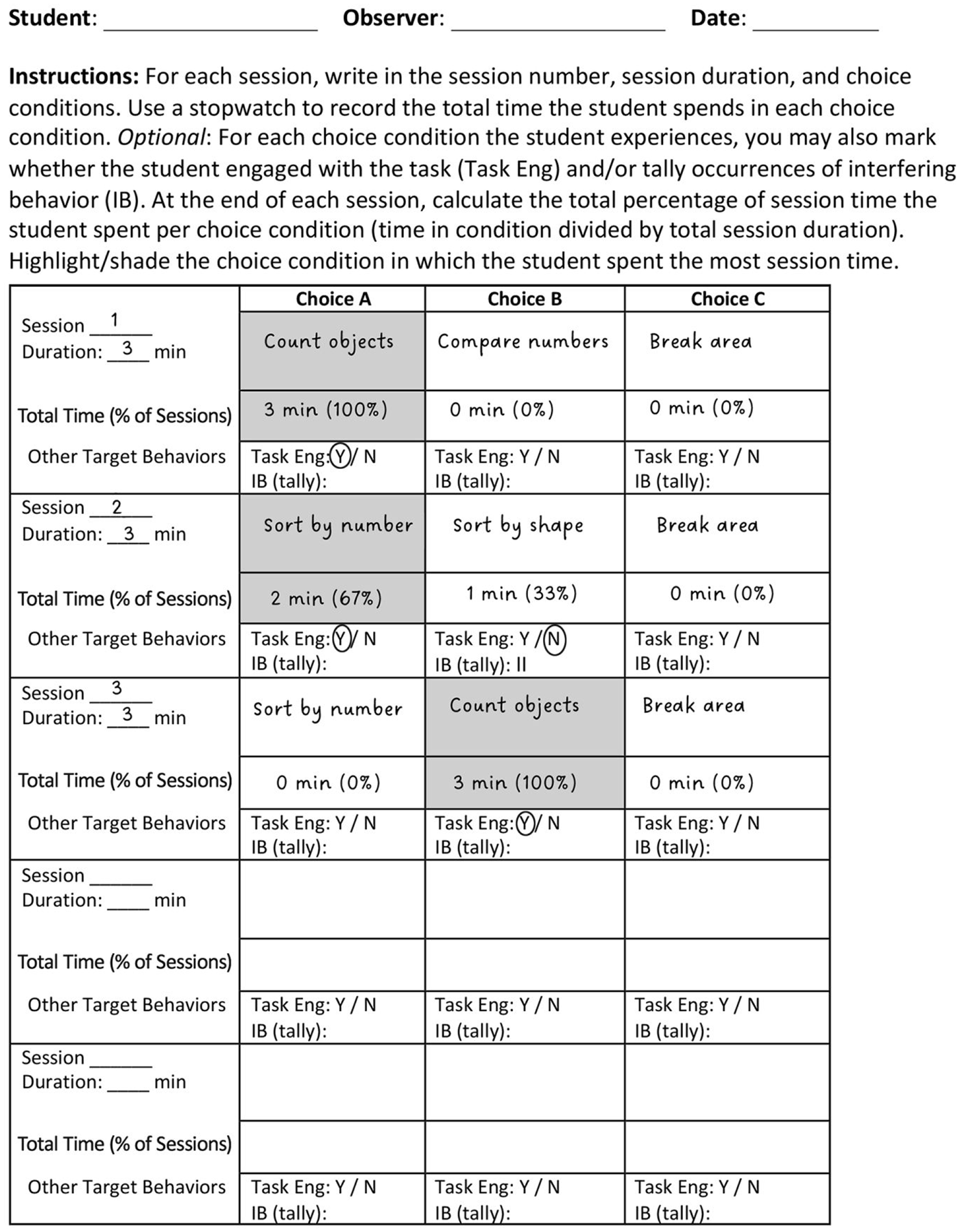

During each COA session, the facilitator collects data on student choice allocation, or how much time the student spends at each station. To do this, they simply need (a) clear definitions of the student’s physical presence at each choice station; (b) a stopwatch (or device with a stopwatch application); and (c) a data collection form. Defining what will “count” as entering or exiting each choice station facilitates both accurate data collection and implementation. For example, if stations are divided by lines of tape on the floor, clarifying that the student must have both feet inside the boundary will help the data collector know when to start the timer and help the implementer know when to initiate the chosen condition. After the student is prompted to “make a choice,” the data collector starts the stopwatch as soon as the student enters or accesses one of the stations. If the student moves to a different station, the data collector stops the clock, jots down the time, and restarts the timer for the new station. At the end of the session, the data collector sums the time spent in each choice condition, divides each total by the total session time, and multiplies by 100 to calculate the percentage of time spent in each condition (see Figure 2 for a sample data collection form).
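For educators who record station entries electronically, the percentage calculation can be sketched as follows. This is a minimal illustration, not a prescribed tool: the function name and the log format (a list of entry times in seconds paired with station names) are assumptions made for the example, as are the sample times.

```python
def percent_time_per_station(entries, session_seconds):
    """Convert a log of station entries into percentage of session time.

    entries: chronological list of (seconds_elapsed, station_name) pairs,
             one per station entry (a hypothetical log format).
    session_seconds: total session length in seconds.
    """
    totals = {}
    for i, (start, station) in enumerate(entries):
        # Time at a station runs until the next entry or the session's end.
        end = entries[i + 1][0] if i + 1 < len(entries) else session_seconds
        totals[station] = totals.get(station, 0) + (end - start)
    return {name: 100 * t / session_seconds for name, t in totals.items()}


# Illustrative 5-min session: Station A, then B at 2:00, back to A at 3:00.
log = [(0, "A"), (120, "B"), (180, "A")]
print(percent_time_per_station(log, 300))  # {'A': 80.0, 'B': 20.0}
```

In this example the student spent 240 of 300 seconds at Station A and 60 seconds at Station B. If the first entry occurs after the session clock starts, the percentages will sum to less than 100 because time before the first choice is not assigned to any station.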

Figure 2. Sample Data Collection Form.

Although time spent per station (choice allocation) is the only student behavior that must be tracked to interpret results, educators may choose to collect data on additional student behaviors (e.g., interfering behavior, task completion, item engagement) to provide context on what occurred during chosen conditions. For example, if a student spent relatively equal amounts of time between two play conditions yet engaged in interfering behavior in one of the conditions, educators might choose to incorporate the other play condition into enriched breaks throughout instruction. Similarly, if a student reliably chooses one instructional condition, yet does not consistently engage with the task, this might signal a need to adjust that condition or select another chosen condition with more reliable task engagement to incorporate in intervention. Collecting data on one or more additional behaviors could be as simple as marking whether the behavior occurred at any point during the session or marking tallies for each occurrence (see Figure 2).

Data Interpretation

The percentage of time spent in each choice condition is used to indicate the student’s relative preference for each condition. That is, if the student spends the majority (or all) of session time at one station, the condition assigned to that station is interpreted to be preferred relative to the other option. If the student switches back and forth between stations, resulting in relatively equal choice allocation, this pattern indicates either that the student has no clear preference between the two conditions or that they need more exposure to establish a preference.

To guide decision-making during the COA, educators can decide on a criterion percentage (e.g., 50%, 75%) they will use to determine preference. For example, you might set a preference criterion at 75%, such that if the student spends 75% or more of session time at one station, the condition at that station will be identified as more preferred. Sessions in which the student spends less than 75% of session time at each station might be repeated to see if a clearer preference emerges after more exposure. If it does not, then you might conclude there is no difference in preference for that choice pairing and move on to the next set of conditions. If a third break station is included, and the student chooses to take breaks over both choice conditions, this pattern suggests a preference for neither condition (and a need to adjust at least one of the conditions to make them more preferred).
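The decision rule above can be written out as a short Python sketch. It is illustrative only: the function name `interpret_session` and the returned wording are hypothetical, and the sketch assumes that "choosing breaks over both conditions" means the break station itself meets the criterion, which is one possible way to operationalize that pattern.

```python
def interpret_session(allocation, criterion=75.0, break_station=None):
    """Apply a preference criterion to one session's choice allocation.

    allocation: dict mapping station label to percentage of session time.
    criterion: minimum percentage needed to call a condition preferred.
    break_station: label of an optional third break station, if one is used.
    """
    # Assumption: a break station that meets the criterion signals that
    # neither choice condition is preferred.
    if break_station is not None and allocation.get(break_station, 0.0) >= criterion:
        return "neither condition preferred; consider adjusting a condition"

    # Otherwise, look for a choice station that meets the criterion.
    for station, percent in allocation.items():
        if station != break_station and percent >= criterion:
            return f"{station} preferred"

    return "no clear preference; consider repeating the session"


print(interpret_session({"Station A": 75.0, "Station B": 20.0}))
print(interpret_session({"Station A": 55.0, "Station B": 45.0}))
print(interpret_session(
    {"Station A": 10.0, "Station B": 5.0, "Break": 85.0},
    break_station="Break",
))
```

A criterion above 50% guarantees that at most one station can meet it in a given session. With a criterion of exactly 50%, an even split would satisfy the rule at both stations, so a tie-breaking decision would be needed.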

In addition to interpreting data from each COA session, educators also need to decide when to end the assessment. The end point will depend on the primary assessment goal. When the goal of the COA is to identify a preference hierarchy among a set of instructional tasks or play/leisure activities, the COA can end once you have presented each possible condition pairing or once you have conducted enough sessions to identify one or more tasks or activities that are more preferred than others. Alternatively, when the goal is to find contextual features or work-to-earn contingencies that will shift a student’s preference toward an initially avoided instructional task, the assessment can end once one or more conditions in which the student chooses to engage with that instructional task have been identified.

A final note on data interpretation relates to uncovering potential skill deficits. If the goal of the COA is to shift student responding to an initially less-preferred task and the student continues to choose alternatives to the task even after several adjustments to working conditions have been made, it may be the case that the student has an academic skill deficit that needs to be addressed before they are able to meaningfully engage with the activity. If skill deficits are suspected, these should be addressed prior to or in conjunction with adaptations to the learning environment.

Connecting Results to Instruction and Intervention

Ultimately, the purpose of conducting a COA is to identify potential changes to a student’s instructional environment that will promote their willing engagement in learning activities. In this section, we describe how results of different types of COAs can be incorporated into instruction and intervention.

Applications of Instructional Task Preference Hierarchies

As mentioned earlier, some COAs are designed to identify a hierarchy of preference among instructional tasks. In some cases, results of these COAs might inform further assessment. For example, identifying a task the student reliably avoids (i.e., chooses against) might be the first step in determining what changes to contextual features and reward contingencies are necessary to increase the likelihood of a preference shift toward the avoided task. However, simply knowing a student’s hierarchy of preference among instructional tasks can inform task-sequencing strategies that can support student initiation and completion of the less-preferred tasks. Applications of the Premack principle (Herrod et al., 2023; Premack, 1959) and high-probability instructional sequences (Common et al., 2019) are two example strategies that leverage differences in preference among activities to increase the probability a student will engage with a targeted task.

The Premack principle states that access to more-preferred activities can be used to reinforce engagement with less-preferred activities (Herrod et al., 2023; Premack, 1959). This principle is especially relevant for students who struggle to complete less-preferred tasks (Noell et al., 2003). The Premack principle can be applied by making access to a more-preferred activity contingent on completing a less-preferred activity. Consider an example in which a COA informed the following preference hierarchy: single-digit addition (most preferred), double-digit addition (moderately preferred), and single-digit subtraction (least preferred). To apply the Premack principle, you might set the schedule of task activities such that once the student completes single-digit subtraction facts, they can proceed to single-digit addition facts. In this way, access to the more-preferred activity is used to motivate completion of the less-preferred activity.

Instructional preference hierarchies can also be used to design high-probability instructional sequences. High-probability instructional sequences involve presenting multiple discrete task directives with a high probability of response prior to presenting a task directive with a low probability of response to “build momentum” for task engagement (Mace et al., 1988). High-probability instructional sequences have been shown to be particularly helpful for students who struggle to initiate less-preferred tasks (Lee et al., 2012; Wehby & Hollahan, 2000). Using the same example preference hierarchy described above, you might implement a high-probability instructional sequence by presenting a few single-digit addition facts (most preferred) before presenting a single-digit subtraction fact (least preferred) to increase the likelihood the student would initiate each single-digit subtraction fact. When leveraging instructional preference hierarchies to increase engagement with less-preferred tasks, it is important to remember that both approaches assume a performance deficit (“won’t do” problem) rather than a skill deficit (“can’t do” problem). That is, a student must have the basic skills to complete the less-preferred task for these strategies to be effective.
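To make the ordering of a high-probability instructional sequence concrete, the following Python sketch arranges practice items so that a set number of more-preferred items precedes each less-preferred item. The function name `build_high_p_sequence`, the default of three high-probability items per low-probability item, and the sample math facts are assumptions chosen for illustration; the appropriate number of preceding items should be decided for each student.

```python
def build_high_p_sequence(high_p_items, low_p_items, high_p_per_low=3):
    """Place several high-probability items before each low-probability item."""
    if high_p_per_low < 1:
        raise ValueError("high_p_per_low must be at least 1")
    if len(high_p_items) < high_p_per_low * len(low_p_items):
        raise ValueError("not enough high-probability items for this sequence")

    sequence = []
    remaining_high_p = iter(high_p_items)
    for low_p_item in low_p_items:
        # Present the high-probability items first to build momentum.
        for _ in range(high_p_per_low):
            sequence.append(("high-p", next(remaining_high_p)))
        # Then present the low-probability item.
        sequence.append(("low-p", low_p_item))
    return sequence


# Hypothetical items based on the example hierarchy above.
addition_facts = ["2 + 3", "4 + 1", "5 + 2", "3 + 3", "1 + 6", "2 + 2"]
subtraction_facts = ["7 - 4", "9 - 5"]

sequence = build_high_p_sequence(addition_facts, subtraction_facts)
for kind, item in sequence:
    print(kind, item)
```

Here each subtraction fact is presented only after three addition facts, mirroring the "few single-digit addition facts before a single-digit subtraction fact" arrangement described above.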

Applications of Play Preference Hierarchies

When a hierarchy of preference among play/leisure activities is identified via COA, results might be used to inform further assessment. One example might be to evaluate which play/leisure activities, when used as reinforcers, shift student preference toward an initially less-preferred task. However, results can also be incorporated immediately in various classroom reward systems. Hierarchies of preference among play/leisure activities can be used to identify which rewards to include within existing behavior reduction or skill acquisition strategies, and the conditions under which certain rewards can be earned. For example, you might reserve the most-preferred play condition as reinforcement for high-stakes instructional activities, such as participating in a skills inventory to inform student instructional groupings. Preference hierarchies can also be used to inform token economy systems, in which students earn tokens for demonstrating appropriate classroom behavior (e.g., academic engagement, work completion) and then exchange the tokens they earn for various rewards (Kazdin, 1977; e.g., Romani et al., 2017). Educators might assign different “prices” (i.e., number of tokens required to exchange for the reward) based on the student’s relative preference among those rewards. That is, more work completion would be required to earn the most-preferred play activity, whereas less work completion would be required to earn a less-preferred play activity.
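As one way to picture preference-based pricing in a token economy, the sketch below assigns higher token prices to more-preferred rewards. Everything in it is hypothetical: the function name `assign_token_prices`, the base price, the price step, and the ranked rewards are illustrative values, not recommendations from the studies cited above.

```python
def assign_token_prices(ranked_rewards, base_price=2, step=2):
    """Assign token prices so that more-preferred rewards cost more.

    ranked_rewards: rewards ordered from most to least preferred.
    base_price: tokens required for the least-preferred reward.
    step: additional tokens required for each step up the hierarchy.
    """
    if base_price < 1 or step < 1:
        raise ValueError("base_price and step must be at least 1")

    count = len(ranked_rewards)
    # The first (most-preferred) reward receives the highest price.
    return {reward: base_price + step * (count - 1 - index)
            for index, reward in enumerate(ranked_rewards)}


# Hypothetical hierarchy, most preferred first.
ranked = ["parallel play on tablet", "puzzle", "crayons and paper"]
prices = assign_token_prices(ranked)
for reward, price in prices.items():
    print(f"{reward}: {price} tokens")
```

With these example values, the most-preferred reward costs 6 tokens and the least-preferred costs 2, so more work completion is needed to earn the activity the student prefers most.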

Applications of Preference Among Contextual Features and Work-to-Earn Contingencies

COAs designed to evaluate which contextual features of instruction or work-to-earn contingencies will shift student preference toward an initially avoided instructional task are perhaps the easiest to translate into instruction and intervention. This is because the condition(s) in which the student willingly chooses to engage with the less-preferred task is the intervention (i.e., the modification to the instructional environment) that can be brought back to the classroom. Such conditions might be reserved for when the less-preferred task is assigned.

For example, if results of a COA suggest a student will complete double-digit subtraction problems when frequent teacher check-ins and a visual aid are present, then these instructional supports would be made available at times when the student is expected to complete double-digit subtraction problems in the classroom. As another example, results of a COA might show that a student who initially avoided reading comprehension tasks chooses to complete these tasks when she can earn short interactive play breaks (e.g., tic-tac-toe with a friend) for every four questions answered. In this case, the same schedule and quality of reinforcement would be programmed for times the student is expected to complete similar tasks in the classroom. Importantly, such COA-informed conditions can be considered a starting point for aligning the learning context with what the student needs to be successful. Once the student begins to engage more with the task and experiences success, you can gradually fade components of the intervention condition. For example, you might gradually decrease the frequency of teacher check-ins or systematically increase the number of comprehension questions required to earn breaks.

Incorporating Concurrent Operant Assessments During Instruction and Intervention

Beyond completing COAs to inform adjustments to a student’s learning environment that are aligned with their preferences, educators can also incorporate concurrent operant arrangements into instruction and intervention. For example, you might present two or more simultaneously available instructional stations and allow the student to choose the station at which they work. Or, you might present two or more simultaneously available play conditions the student can choose between once they have completed assigned tasks.

Another option for embedding concurrent operant arrangements during instruction is to offer the student an alternative context to instruction that they are free to access at any time (e.g., break space, peace corner, reading nook). Such arrangements can be especially useful for students who engage in severe and persistent interfering behavior, because they provide these students an appropriate way to escape instructional tasks without relying on such behaviors. Results of studies evaluating behavior interventions that include such arrangements have shown that students still choose to engage in instruction and intervention even when they have the option of going to a “break area” at any time (Staubitz et al., 2022).

Educators can also ensure play breaks that students earn by participating in and completing academic tasks are higher quality than breaks the student is free to access at any time (Peterson et al., 2009). For example, a break condition that is always available to a student might include escape from instruction and access to moderately preferred materials (e.g., puzzle, crayons, paper). In contrast, play breaks that are earned would include escape from instruction and access to more-preferred qualities of attention, materials, and activities (e.g., parallel play on iPad).

Conclusion

For students who engage in persistent interfering behaviors to escape or avoid instruction, COAs can offer educators and other school specialists a flexible assessment model for evaluating student preference. These individualized, choice-based assessments can help inform ways to adjust a student’s learning environment to better align with their needs and preferences. Results of COAs can inform hierarchies of preference among different instructional tasks or among various play/leisure activities. They can also point to which contextual features of instruction and systems of reinforcement will promote a student’s willing engagement with tasks they previously avoided. Conditions indicated as most preferred can then be incorporated into instruction and intervention to decrease interfering behavior and increase active engagement. Promoting such changes increases the likelihood that students experience success with those tasks, creating opportunities for the act of learning to become its own reward.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

Support for this work was provided by the National Center for Leadership in Intensive Intervention-2 (NCLII-2), a consortium funded by the Office of Special Education Programs #H325H190003.