Abstract

The current research aimed to provide initial psychometric validation of a new multifaceted mindfulness questionnaire (referred to as the State Four Facet Mindfulness Questionnaire, or the “state-4FMQ” for short) adapted from the commonly used Five Facet Mindfulness Questionnaire (referred to as the “trait-FFMQ”). The research was divided into two pre-registered studies. In both, undergraduates partook in a 20-minute mindfulness meditation (via audio recording), and then answered questions, including the state-4FMQ, pertaining to their experience during the meditation. In Study 2, participants additionally partook in a 20-minute control condition. The state-4FMQ was developed using exploratory factor analysis (EFA; Study 1) and confirmatory factor analysis (CFA; Study 2). In Study 2, a short-form of the state-4FMQ was established, and several additional forms of measurement validity were tested. EFA and CFA results supported a four-factor structure, which was identical to the trait-FFMQ with the exclusion of Nonreactivity. This newly created state-4FMQ, and its short-form, showed good internal consistency as well as convergent, predictive, and construct validity. In addition, it was found that some facets, more than others, predicted momentary well-being. The validity of the state-4FMQ shows that it can be used to measure multiple facets of state mindfulness across a variety of situations.


Introduction

Mindfulness originates in various ancient spiritual traditions and is most clearly articulated through Buddhist scholarship (Keng et al., 2011). Despite its extensive history, the systematic investigation of mindfulness in scientific contexts has only recently blossomed (see Van Dam et al., 2018). Much of this can be traced to the pioneering work of Jon Kabat-Zinn in the 1970s, who explored the use of mindfulness meditation in treating patients with chronic pain through an intervention known as Mindfulness-Based Stress Reduction (MBSR; Kabat-Zinn, 1982). Since then, empirical research has demonstrated the beneficial effects of mindfulness on well-being via three main domains (outlined in a literature review by Keng et al., 2011): First are “real-world” interventional studies, which demonstrate the benefits of mindfulness-based interventions (including, but not limited to, MBSR). These interventions typically consist of several weeks of a structured program, designed for clinical populations (see Creswell, 2017; Dawson et al., 2020; Dhillon et al., 2017; Goldberg et al., 2018, 2022; Goyal et al., 2014 for reviews). Second are lab-based studies, which demonstrate the benefits of procedures that employ short-term mindful inductions including, but not limited to, a single meditation (e.g., Erisman & Roemer, 2011; Feldman et al., 2010; Heppner & Shirk, 2018; Johnson et al., 2013; Mrazek et al., 2012; Thompson & Waltz, 2007; Zeidan et al., 2015). Third are correlational studies, which demonstrate associations between mindfulness measures (most of which use trait mindfulness) and other measures (e.g., Brown et al., 2007; Carpenter et al., 2019; Chu & Mak, 2020; Donald et al., 2019, 2020; Prieto-Fidalgo et al., 2021). Given the frequency with which research examines the advantages and associations of mindfulness, it is critical for the field to operationalize mindfulness with valid and reliable measurement scales.
While most of the groundwork has been done with regard to trait mindfulness, the goal of the current research is to develop a new state mindfulness measure, one that is both multi-dimensional and versatile.

Before arguing the need for a new state mindfulness measure, it is perhaps fruitful to begin by mentioning that early creations of mindfulness measures were trait-based, with the idea that mindfulness is a characteristic that can be developed through practice (noting that others have argued that mindfulness can be conceptualized as an inherent disposition or a skill (e.g., Burzler & Tran, 2022), a process (e.g., Erisman & Roemer, 2011), an action (e.g., Preissner et al., 2024), and an outcome (Medvedev et al., 2022), all of which have been argued to be distinct from trait mindfulness). How best to define and operationalize mindfulness proved to be challenging, however, due partially to the fact that it derives from various Buddhist texts and traditions that inconsistently, and often vaguely, describe mindfulness in disparate contexts (Gethin, 2011; Grossman, 2008, 2011; Grossman & Van Dam, 2011). For instance, Medvedev (2022) highlights seven different definitions of mindfulness commonly cited in the psychology literature, each with a unique emphasis (for similar discussions see Davidson & Kaszniak, 2015; Lustig et al., 2024; Nilsson & Kazemi, 2016). Given the myriad ways in which mindfulness can be conceptualized, it is perhaps not surprising that there exist many different trait mindfulness measures in the literature (see Bergomi et al., 2013; Sauer et al., 2012 for reviews), and that the correlation between them is often weak or non-existent (e.g., Park et al., 2013; however, see Buhk et al., 2023 for a more recent study showing stronger convergence between trait measures).

These discrepancies also bleed over into challenges in determining whether mindfulness should be considered, and hence operationalized, as a unidimensional versus multidimensional phenomenon, and if a multidimensional phenomenon, what those dimensions should be (see Quaglia et al., 2015 for detailed discussion). The two most commonly used trait measures of mindfulness exemplify this distinction. While the trait Mindful Attention and Awareness Scale (trait-MAAS; Brown & Ryan, 2003) conceptualizes mindfulness as unidimensional (consisting of attention/awareness), the Five Facet Mindfulness Questionnaire (hereafter referred to as the “trait-FFMQ”; Baer et al., 2006) includes the following five dimensions, which we return to later: Acting with Awareness (“ActAware”; attending to the present moment, as opposed to focusing attention elsewhere or behaving automatically), Describing (the ability to express one’s experiences in words), Nonjudging (the acceptance of one’s thoughts and emotions without evaluation), Nonreactivity (the ability to allow thoughts and emotions to come and go without becoming attached or carried away with them), and Observing (attending to or noticing both internal and external experiences, such as thoughts, emotions, bodily sensations, smells and sounds). Note, however, that the extent to which trait Observing loads onto the superordinate mindfulness construct appears to vary with meditation experience (see Burzler & Tran, 2022).

Although trait measures of mindfulness are commonly used in research studies, there is good reason to develop state measures since mindfulness is often referred to, by Buddhists and researchers alike, as a momentary state. For example, Jon Kabat-Zinn states that “we are all mindful to one degree or another, moment by moment” (Kabat-Zinn, 2003, pp. 145–146). In a similar vein, mindfulness has been described as an inherent and universal human capacity (Brown & Ryan, 2004) that has the potential to improve well-being when experienced (Ludwig & Kabat-Zinn, 2008), as well as a state of consciousness, the qualities of which can vary considerably depending on the context (Brown & Ryan, 2003). In fact, Bishop et al. (2004) influentially proposed that mindfulness is best defined as a state-like quality that only exists when one’s attention to one’s experience is purposely cultivated with an open and non-judgmental attitude. As such, experiencing a state of mindfulness can happen during formal practices of mindfulness, such as meditation, or during instances of daily life (Bishop et al., 2004; Brown & Ryan, 2003, 2004).

In addition to its conceptual validity, one practical advantage of defining mindfulness as a state is that it can then be studied in more diverse and comprehensive ways. For example, state mindfulness can be measured in reference to a single experimental manipulation set up in a laboratory (e.g., a single session of meditation, or any other experimental manipulation), or in reference to multiple naturally occurring experiences as part of one’s daily life (outside a laboratory). This latter approach, referred to as intensive longitudinal design (ILD), can afford abundant statistical power since multiple timepoints (i.e., repeated measures) are obtained from a single participant. Two commonly used ILD approaches are the Experience Sampling Method (ESM), in which participants respond to questions in reference to the present or immediately preceding moment through notifications sent at semi-random intervals through a mobile device, and the Day Reconstruction Method (Kahneman et al., 2004), in which participants systematically reconstruct the previous day’s events and then respond to questions in reference to each event. These methods allow a much more fine-grained and ecologically valid investigation of the relationship between mindfulness and other psychological constructs (e.g., affect) as compared to the use of a single trait measure of mindfulness. In sum, based on the conceptual validity, and empirical usefulness, of considering mindfulness a momentary state, it behooves the field of mindfulness research to have readily available state measures of mindfulness.

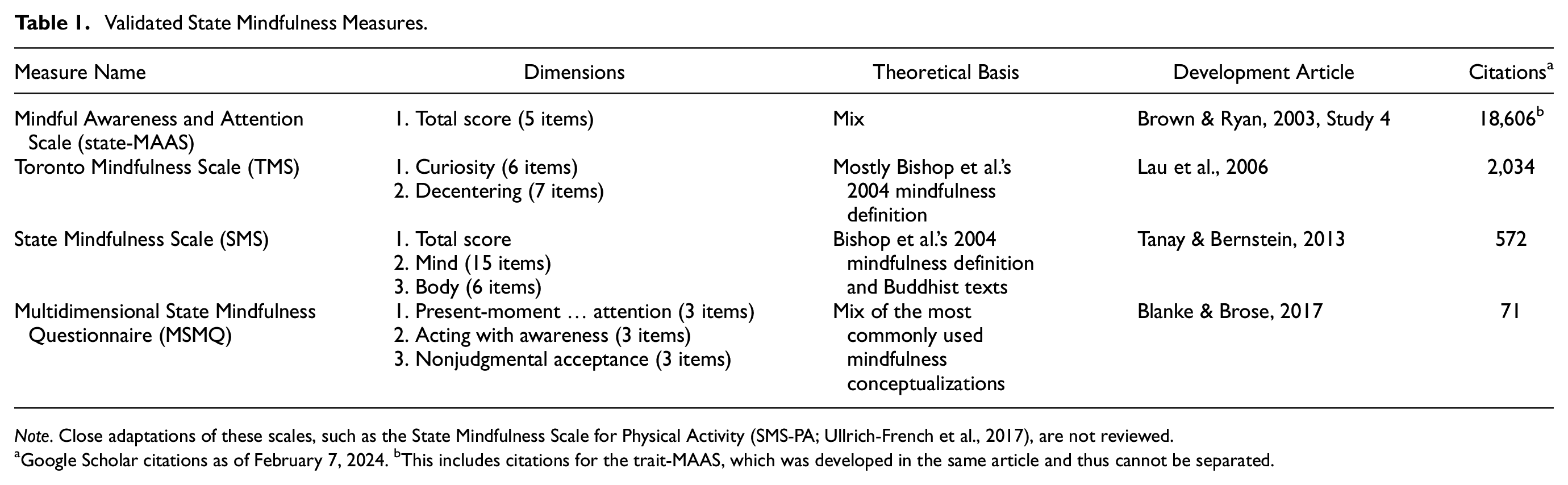

To date, there exist four validated state measures of mindfulness, which to our knowledge have yet to be reviewed together. In the next sections, we review these scales (in descending order of citation count), by addressing (a) the framework used to guide the creation of the scale, (b) the “referent experience” the scale was tested with (e.g., a single experimental manipulation vs. an ILD), (c) the multi-dimensionality of the scale, and (d) how the scale was validated (summarized in Table 1). This is then followed by a section arguing the need for a new state measure fashioned after the widely used trait-FFMQ, which is the goal of the current study.

Table 1. Validated State Mindfulness Measures.

Note. Close adaptations of these scales, such as the State Mindfulness Scale for Physical Activity (SMS-PA; Ullrich-French et al., 2017), are not reviewed.

a Google Scholar citations as of February 7, 2024. b This includes citations for the trait-MAAS, which was developed in the same article and thus cannot be separated.

Of all the state mindfulness measures, the most used in research is the five-item State Mindful Attention and Awareness Scale (state-MAAS; Brown & Ryan, 2003). This scale was fashioned after the trait-MAAS (15 items), and thus the two scales (state and trait) have analogous constructs. The MAAS is theoretically grounded in a broad and vague mix of sources including “our personal experience and knowledge of mindfulness (and mindlessness), published writings on mindfulness and attention, and existing scales assessing conscious states of various kinds” (Brown & Ryan, 2003, p. 825). The scale was purposely designed to capture what the authors argue is the one central component of mindfulness (attention to, and awareness of, the present moment) rather than any other components commonly associated with mindfulness such as an accepting attitude. Accordingly, the state-MAAS was developed with one dimension, “Attention and Awareness” (e.g., “I was doing something automatically, without being aware of what I was doing”, reverse scored), producing a total score across the five reverse-scored items. The scale was developed using an ESM design (92 students recorded three times per day for 14 consecutive days), though the scale has since been used in other contexts such as in reference to experimental manipulation of a meditation experience (e.g., Tan et al., 2014; Vinchurkar et al., 2014). Though no factor analysis was conducted in its development, the state-MAAS has shown sound psychometric properties, with an internal consistency of 0.92 and convergent validity with the trait-MAAS (Brown & Ryan, 2003).

The next most commonly used state mindfulness measure is the 13-item Toronto Mindfulness Scale (TMS; Lau et al., 2006). The TMS is theoretically grounded in a mix of sources, but mainly inspired by Bishop et al.’s (2004) description of mindfulness as attentional self-regulation and an orientation to experience the internal/external world with curiosity, acceptance, and openness. The scale was found to be (through factor analysis) two-dimensional, consisting of (a) a “Curiosity” dimension reflecting one’s awareness of the present moment and whether that awareness is characterized by an open and curious stance (e.g., “I was curious about each of the thoughts and feelings that I was having”), and (b) a “Decentering” dimension reflecting being aware of one’s thoughts and feelings without being entangled in them (e.g., “I experienced myself as separate from my changing thoughts and feelings”). These items were designed for, and developed with, the experimental manipulation of a meditation experience (a 15-minute unguided meditation session in a community sample and amongst individuals with mindfulness meditation experience). Though the TMS has primarily been validated in populations with previous meditation experience, and in reference to an immediately preceding meditation session, the scale has since been used in other contexts such as among non-meditators, and in reference to various experimental tasks (e.g., watching a film clip; Erisman & Roemer, 2011). The factor structure of the TMS was assessed using exploratory (EFA) and confirmatory (CFA) factor analysis. It has sound psychometric properties, with an internal consistency of 0.86 and 0.87, for “Curiosity” and “Decentering,” respectively. As the development article of the TMS did not include testing whether the dimensions do or do not load onto a superordinate mindfulness construct, the creators imply keeping the two dimensions separate, rather than using a total score.

A less commonly used state mindfulness measure is the 21-item State Mindfulness Scale (SMS; Tanay & Bernstein, 2013). Like the TMS, the SMS is theoretically grounded in Bishop et al.’s (2004) definition of mindfulness, as well as in traditional Buddhist scholarship (specifically the Theravada Abhidhamma and the Satipatthana Sutta), and the items were developed with systematic feedback from mindfulness researchers and instructors. The scale was found to be (through factor analysis, see below) two-dimensional, consisting of (a) a “Mindfulness of Mind” dimension reflecting awareness of mental events including one’s thoughts and emotions (e.g., “I was aware of what was going on in my mind”), and (b) a “Mindfulness of Body” dimension reflecting awareness of one’s body sensations (e.g., “I clearly physically felt what was going on in my body”). The scale was developed using a single experimental manipulation (a mindfulness meditation versus a control task, in a student and community sample). The factor structure of the SMS was assessed using EFA and CFA. It has sound psychometric properties, with an internal consistency of 0.90 and 0.95, for “Mind” and “Body,” respectively. Because the two dimensions were found to load onto a superordinate mindfulness construct, the SMS allows the use of either separate dimension scores or a total score.

The least commonly used state mindfulness measure is the 9-item Multidimensional State Mindfulness Questionnaire (MSMQ; Blanke & Brose, 2017), which was developed in the German language. Items for the MSMQ were chosen using a deductive approach, being drawn from the most commonly used mindfulness conceptions and measures based on reviews and citation counts; most of the tested items were adapted from a nonsystematic selection from the Cognitive and Affective Mindfulness Scale-Revised (CAMS-R; Feldman et al., 2007) and the trait-FFMQ (Baer et al., 2006) facets. Decisions about dimensionality were a mixture of the authors’ discretion (based on their reading of the previous literature) and results from their multilevel CFA, which led to the creation of a three-dimensional scale: (a) “Present-moment attention” (e.g., “I focused my attention on the present moment”), (b) “Acting with awareness” (e.g., “I sometimes did not stay focused on what was happening in the present”, reverse scored), and (c) “Nonjudgmental acceptance” (e.g., “Things went through my mind that I should not really be engaging myself with”, reverse scored). The scale was developed using an ESM design (70 students recorded six times per day for nine consecutive days). The MSMQ has adequate psychometric properties, with internal consistency ranging from 0.63 to 0.71 across the three dimensions. Though the three dimensions significantly load onto a superordinate mindfulness factor, the creators of the MSMQ do not comment on whether a total score calculation is recommended.

Finally, there have been sporadic attempts to create ad hoc state adaptations of the trait-FFMQ. This approach typically involves selecting a handful of items from certain trait-FFMQ facets and changing the wording to present tense and has been employed within various research designs (e.g., Eisenlohr-Moul et al., 2016; Friese & Hofmann, 2016; Gavrilova & Zawadzki, 2023; Raugh et al., 2023; Snippe et al., 2015). Critically, however, none of these studies psychometrically validated their state adaptations.

Although each of the validated state mindfulness measures has merit, here we argue the need for a new state mindfulness measure, one that is specifically fashioned after the trait-FFMQ. We begin by describing what we believe are some of the limitations of the current state measures and then proceed to explain our rationale for moving forward with our new measure, referred to as the “state-4FMQ.” Broadly speaking, an ideal state mindfulness measure would (a) be brief (noting that the 21-item SMS is too long to be used practically in an ILD), (b) use accessible language (noting that the TMS may not be relevant to meditation-naive participants, and the MSMQ has not been validated in English), (c) include an appropriate number, and type, of dimensions (noting that the state-MAAS has just one dimension, the relevance of the TMS dimensions has been questioned, and the MSMQ was developed without first conducting an EFA to derive an empirically driven theory of dimensionality), (d) include both positive and reverse-coded items within a dimension in an attempt to address response bias, improve construct representation, and increase generalizability (noting that no state mindfulness measure does this: the state-MAAS is entirely reverse coded, the TMS and SMS are entirely positively coded, and the MSMQ’s Attention facet is entirely positively coded while its Awareness and Nonjudgement facets are entirely reverse coded), and (e) be versatile enough to accommodate references to both an in-lab manipulation and an ILD. In creating a new state mindfulness measure, we aimed to accommodate all of these objectives and selected the trait-FFMQ as the basis to do so, for the following reasons.

First, the trait-FFMQ is currently the most widely used multidimensional measure of mindfulness, and in a review of self-report mindfulness measures, it was regarded as providing the most comprehensive coverage of mindfulness for use in the general population (Bergomi et al., 2013). Second, past attempts to operationalize mindfulness that were theoretically derived produced exceedingly varied measurement scales. The development of the trait-FFMQ has a unique strength in that it was instead empirically derived via an EFA of 112 items collected from five independently developed self-report trait mindfulness measures, thereby consolidating a large pool of items from diverse sources through an unbiased statistical approach. In the same development article, the final EFA structure was then replicated in a 39-item hierarchical CFA showing a five-factor model with a superordinate mindfulness factor (i.e., the 39 items load onto five separate latent factors, and also those five factors themselves further load onto one superordinate latent factor). This data-driven finding of several (five) facets of mindfulness seems harmonious with the rich essence of mindfulness, as described in various Buddhist texts. Third, and related to the last point, the breadth of dimensions is critical since, as noted by Baer et al. (2004) in the development article of the Kentucky Inventory of Mindfulness Skills (a predecessor to the trait-FFMQ), different facets of mindfulness can uniquely predict a given dependent variable, and only a multidimensional measure can elucidate these differential relationships (e.g., see Medvedev et al., 2018; Petrocchi & Ottaviani, 2016; Prieto-Fidalgo et al., 2021 for articles that found selective predictive power of the different trait-FFMQ facets). By decomposing mindfulness into its constituent parts, researchers can better map out which facets of mindfulness account for the most variance in the dependent variable in a given context. 
This can be particularly useful in an ILD design, where an additional decomposition of within versus between person effects for each facet can provide a more precise modeling of underlying mechanisms of action.

Thus, the present research aimed to provide initial psychometric validation of a new multidimensional state mindfulness measure adapted from the trait-FFMQ. Such a scale would be useful as it would allow researchers to better assess the mechanisms of state mindfulness on outcome measures and to tailor mindfulness interventions to target the specific facets most associated with increased well-being. The research was divided into two studies. In Study 1, we developed and explored the factor structure of the state items. Following in the footsteps of the development of the trait-FFMQ, we made no assumptions about the dimensionality of the underlying structure. In Study 2, we confirmed this factor structure in an independent sample and investigated the following additional forms of measurement validity for the state-4FMQ: convergent validity (testing whether the measure is related to established measures of the same construct), predictive validity (testing whether the measure is predictive of a dependent variable in an expected way), robustness (testing whether the predictive value of the measure remains robust after accounting for covariates that are related to the measure), and construct validity (testing whether a measure designed to assess a particular construct is actually measuring that construct in an expected way).

Study 1

Introduction

The aim of Study 1 was to create and provide initial validation and internal consistency metrics of a new state adaptation of the trait-FFMQ (the “state-4FMQ”). We hypothesized that multiple facets would emerge from the EFA results, but given inconsistencies in the trait-FFMQ’s factor structure in the literature (e.g., Burzler & Tran, 2022), we had no a priori hypothesis about the specific number of facets or items that would emerge. We also hypothesized that the facets would themselves be moderately correlated and thereby suggestive of a superordinate mindfulness structure (tested in the CFA of Study 2, see below).

Method

We report how we determined our sample size, all data exclusion criteria, all manipulations, and all measures in the study.

Participants

Participants were undergraduate students recruited in 2022 through the UCSD participant pool, an online tool run by the Department of Psychology where undergraduate students sign up to participate in research studies in exchange for course credit. Eligibility was restricted to participants who reported being at least 18 years old, able to complete the entire study in a private and quiet environment, having working audio on their computing device, and being comfortable listening to a 20-minute audio recording. The recruitment information, consent form, and protocol all referred to the study being about “relaxation” so as to not bias participants with the word “meditation” (see Dickenson et al., 2013). All participants gave their informed consent before participating and were compensated with course credit.

Combining the rule of thumb that sample sizes for EFA should be 5 to 10 participants per item, that our initial analysis involved 63 items, and that based on pilot data an estimated 15% of participants would be removed due to attrition and data cleaning, an initial sample of about 556 was needed. The collected sample consisted of 592 participants.
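The arithmetic behind this estimate can be sketched as follows. This is a minimal illustration, not the authors' calculation: the function name is hypothetical, and the use of 7.5 participants per item (the midpoint of the 5 to 10 range) is an assumption that happens to reproduce the reported figure of about 556.

```python
import math


def required_initial_sample(n_items, per_item, expected_loss):
    """Participants to recruit so that n_items * per_item remain after exclusions.

    expected_loss is the anticipated proportion removed by attrition and cleaning.
    """
    target_final = n_items * per_item
    return math.ceil(target_final / (1.0 - expected_loss))


# 63 candidate items and an estimated 15% loss, as stated in the text.
low = required_initial_sample(63, 5, 0.15)     # lower bound of the rule of thumb
mid = required_initial_sample(63, 7.5, 0.15)   # assumed midpoint of the range
high = required_initial_sample(63, 10, 0.15)   # upper bound of the rule of thumb
print(low, mid, high)  # 371 556 742
```

Under these assumptions, the collected sample of 592 falls between the midpoint and the upper bound of the rule of thumb.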

The following four exclusion criteria (as outlined in our pre-registration) were applied to the collected sample. First, 49 participants were excluded for failing to complete the entirety of the study (i.e., due to attrition). Second, 7 participants were excluded for failing to complete the study within ±3 standard deviations of the median study duration. Third, 24 participants were excluded for failing to correctly respond to at least three out of four attention check questions dispersed throughout the survey. Fourth, 26 and 20 participants were excluded for admitting (at the end of the study) to not answering the survey questions honestly, or not fully engaging in the relaxation exercise, respectively (see item wording in Supplemental materials). In sum, a total of 126 participants were excluded for not passing these criteria. While we acknowledge that our exclusion criteria are strict and therefore limit the ecological validity of obtained results, we chose to prioritize data quality over generalizability. We felt this approach was necessary as the online nature of our study made it susceptible to participants not putting forth their best effort.

The final sample thus consisted of 466 participants between the ages of 18 and 45 years (M = 20.66, SD = 2.91). The majority reported being female at birth (78.76%) and reported their ethno-racial group as Asian (53.00%), followed by Hispanic or Latino (19.31%), White (13.30%), Mixed (7.30%), Middle Eastern or North African (4.51%), Black or African American (1.29%), Prefer not to say (0.89%), First Nation or Indigenous American (0.21%), and Native Hawaiian or Other Pacific Islander (0.21%).

Procedure

This study was conducted entirely online and remotely, and all data were collected via the survey program Qualtrics. All questions were required to be answered, so there were no missing values in the data. After participants filled out demographic questions including age, sex (assigned at birth), and ethno-racial identity, they were asked to ensure they had working audio and were in a private and quiet setting before proceeding with the “relaxation exercise,” consisting of listening to a 20-minute audio recording. Participants then started the audio recording (by pressing a key), and they could not advance with the experiment until the audio had finished playing.

The audio recording consisted of a 20-minute mindfulness meditation recording, which guides participants to focus on the breath while accepting and letting go of thoughts and feelings as they arise, using instructions like “when you get distracted by either your thoughts or your feelings, simply notice the thought or feeling and return your focus back to the breath.” This meditation style is one of the most popular forms of meditation in the West and is commonly used for individuals without previous meditation experience. Note that the meditation script intentionally avoided using any phrases or keywords that were used in the subsequent state mindfulness questions to avoid contamination (see Supplemental Materials for a full transcription of the audio). As in our previous studies (Bondi, 2021), the meditation script was a joint effort between the second author and an outside professional, both of whom had years of experience guiding mindfulness meditations. The outside professional did the voicing in the recording.

At the end of the meditation, participants completed our new state mindfulness measure by reflecting on their experience during the immediately preceding “relaxation exercise.” Participants then answered a few extra demographic questions including items pertaining to previous meditation experience, and last, the “survey honesty” and “exercise engagement” questions (used as exclusion criteria; see above).

Measures

State-4FMQ

To create the state-4FMQ, we followed the procedure used by other researchers when creating state adaptations of trait measures (e.g., Neff et al., 2021). First, we rephrased all 39 items of the original trait-FFMQ to employ present moment language so that they would be relevant to any state situation, and generalizable enough to be used across study designs (e.g., an in-lab experiment, or an ILD). In addition, we included 24 items in the opposite direction (i.e., reversing some positively coded trait items and vice versa) to ensure that each facet had the opportunity to have both positive and reverse-coded items. Though more research is needed regarding the effects of positive- versus reverse-phrased items (and other wording choices, particularly in the context of mindfulness measures; Karl & Fischer, 2020), including both is often recommended for reducing systematic response biases (e.g., Field, 2024; Weijters & Baumgartner, 2012; see Grossman & Van Dam, 2011; Grossman, 2011 for arguments why using only reverse-phrased items, as in previous state mindfulness measures, might be problematic). Our research team piloted these items and further modified them to ensure they were accessible to individuals with or without previous meditation experience. This resulted in a total of 63 items to be tested in our study (see Supplemental Materials).

In the context of this study, the header text read, “Below are several questions about your experience during the 20-minute relaxation audio. Please respond with your honest opinion of what your experience was really like, rather than what you think your experience should have been.” All items were answered on a sliding scale from 1 to 5 with a resolution of 0.1 and with three labels: Not at all (1), Moderately (3), Completely/Entirely (5). The order of items was randomized to avoid potential order effects.

Meditation Status

This was used for descriptive purposes and included four questions. First, “Do you have any previous meditation experience (e.g., mindfulness meditation, transcendental meditation, loving-kindness meditation, etc.)?”, with Yes or No response options. Second, “About how frequently do you meditate?” with seven response options ranging from Several times a day, to Once a year or more. Third, “About how much time in minutes, on average, do you spend meditating per session?”, with nine response options ranging from 1–2 min to 60+ min. Fourth, “About how long have you been practicing meditation?”, with seven response options ranging from Less than 1 month, to 5+ years. In this study, we divided the sample into (a) “current meditators” (n = 71; 15.24%), defined as participants who selected “Yes” for any previous experience and reported meditating once a week or more, and (b) “non-meditators” (n = 395; 84.76%), comprising all other participants. Note that the measurement of Meditation Status is examined more closely in Study 2.

Data Analysis

Basic descriptive analyses reported means, standard deviations, and frequencies of demographics plus other relevant variables. To verify the univariate suitability of the data for EFA, the following metrics were considered for each individual item: histograms revealing inappropriate distributions (e.g., obvious ceiling or floor effects, bimodal or truncated distributions); extreme skew (|skew| > 1.0) or excess kurtosis (|kurtosis| > 1.5); and extreme bivariate correlations (|r| > .80) with other items. To verify the multivariate suitability of the data for EFA, the following metrics with their commonly reported cutoffs were considered for the pool of items: the Kaiser-Meyer-Olkin (KMO) measure of sampling adequacy, with a minimum acceptable value of .5 (values of .5 to .7 are considered mediocre, .7 to .8 good, .8 to .9 great, and above .9 superb); Bartlett’s Test of Sphericity, for which the p-value should be less than .05; and the determinant of the correlation matrix, which should be greater than 0.00001. Determinant values lower than this minimum recommended threshold indicate multicollinearity or singularity in the data, which can be problematic in factor analysis (Field, 2024; Hair et al., 2019).
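For readers who wish to see how the multivariate checks above are computed, a minimal sketch using the standard textbook formulas is given below. It is not the analysis code used in this study: the function name and the simulated one-factor data are hypothetical, and NumPy is assumed to be available.

```python
import numpy as np


def efa_suitability(data):
    """Multivariate EFA suitability checks for an (n_participants, n_items) array."""
    n, p = data.shape
    R = np.corrcoef(data, rowvar=False)      # item correlation matrix
    R_inv = np.linalg.inv(R)

    # Partial correlations obtained from the inverse correlation matrix.
    d = np.sqrt(np.diag(R_inv))
    partial = -R_inv / np.outer(d, d)

    off = ~np.eye(p, dtype=bool)              # mask selecting off-diagonal cells
    r2 = np.sum(R[off] ** 2)
    q2 = np.sum(partial[off] ** 2)
    kmo = r2 / (r2 + q2)                      # overall KMO

    # Bartlett's test of sphericity: chi2 = -[(n - 1) - (2p + 5) / 6] * ln|R|
    sign, logdet = np.linalg.slogdet(R)
    chi2 = -((n - 1) - (2 * p + 5) / 6) * logdet
    df = p * (p - 1) // 2
    # The p-value could be obtained with scipy.stats.chi2.sf(chi2, df).

    return {
        "kmo": float(kmo),
        "bartlett_chi2": float(chi2),
        "bartlett_df": df,
        "determinant": float(sign * np.exp(logdet)),
        "max_abs_r": float(np.max(np.abs(R[off]))),
    }


# Hypothetical data: 500 simulated respondents, 6 items sharing one common factor.
rng = np.random.default_rng(0)
factor = rng.normal(size=(500, 1))
demo = 0.7 * factor + rng.normal(scale=np.sqrt(1 - 0.49), size=(500, 6))
checks = efa_suitability(demo)
print(checks)
```

The returned values can then be compared against the cutoffs listed above (KMO of at least .5, determinant greater than 0.00001, and no pair of items with |r| > .80).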

Since the items are continuous, the maximum likelihood estimate would have been used to run the EFA if the assumption of multivariate normality, as assessed with Mardia’s skewness and kurtosis tests (Mardia, 1970), was met. Because multivariate normality was not met (see Results), the more robust Weighted Least Squares estimate was used. An oblique (oblimin) rotation was used to permit co-varying multidimensional factors. Note that unlike the development article for the trait-FFMQ, all analyses were conducted on items as opposed to parcels. To determine the number of factors to extract, three different methods were used to ensure a more robust estimate: Kaiser-Guttman rule, Scree plot, and Parallel analysis.

Item removal strategies in EFA for multidimensional constructs, including cutoff criteria for loadings and cross-loadings, differ widely in the literature and lack a gold standard (Guvendir & Özkan, 2022). For this analysis, all of the above steps were iteratively repeated until an acceptable factorial solution was found. At each iteration, the first step was to eliminate all items that failed to load greater than or equal to 0.40 onto any one factor. If any items were eliminated due to this step, we ran the next iteration before checking the first step again. Only after all items passed this first step at the start of a new iteration would we consider a second step of eliminating items that showed a cross-loading of greater than or equal to 0.20, which would ensure that all retained items have a difference of at least 0.20 between the highest and next highest factor loadings. This second step was done one item at a time, from the highest to lowest cross-loading item. Given the large number of items in the initial pool reflecting overlapping concepts, content redundancy within a single factor was also considered during this process. To ensure that this item reduction process was empirically supported, we also reported on the Bayesian Information Criterion (BIC) value of each iteration to ensure that each new iteration fits the data better than the previous iteration, which would be suggested if the BIC values approach 0 with each new iteration.
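As an illustration only, the two-step removal rule described above can be sketched in Python. The function name, item labels, and loading values below are hypothetical and are not taken from the study, whose analyses were run in R; the sketch also omits the content-redundancy judgments and BIC tracking that accompanied each iteration.

```python
def flag_items(loadings, primary_min=0.40, cross_max=0.20):
    """Apply the two-step screening rule to a table of factor loadings.

    `loadings` maps an item label to its list of loadings (one per factor).
    Step 1 flags every item whose largest absolute loading is below
    `primary_min`. Step 2 is consulted only when step 1 flags nothing; it
    returns the single item with the largest cross-loading at or above
    `cross_max`, so that items are removed one at a time.
    """
    weak = [item for item, row in loadings.items()
            if max(abs(x) for x in row) < primary_min]
    if weak:
        return {"step": 1, "remove": weak}

    cross = {}
    for item, row in loadings.items():
        ordered = sorted((abs(x) for x in row), reverse=True)
        if len(ordered) > 1 and ordered[1] >= cross_max:
            cross[item] = ordered[1]
    if cross:
        worst = max(cross, key=cross.get)
        return {"step": 2, "remove": [worst]}
    return {"step": None, "remove": []}


# Hypothetical loadings for three items on two factors.
example = {
    "item_a": [0.72, 0.05],
    "item_b": [0.31, 0.12],  # fails the 0.40 primary-loading rule
    "item_c": [0.55, 0.27],  # cross-loads at 0.27
}
first_pass = flag_items(example)
second_pass = flag_items({k: v for k, v in example.items() if k != "item_b"})
```

In practice the EFA would be re-estimated between passes, because loadings change once items are dropped; the second call above simply reuses the same hypothetical loadings to show the order of the two steps.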

For the final iteration, we reported on model fit via the chi-square statistic (χ2), Tucker-Lewis fit index (TLI), and root mean square error of approximation (RMSEA). However, we note these fit criteria are less strict and less relevant for EFA as compared to CFA analysis (Study 2). Factors were further evaluated for appropriateness including all factors having at least three items each; the conceptual interpretability of each factor; and the lack of suspiciously strong bivariate correlations between the factors. Furthermore, internal consistency for each factor and all items together was calculated with omega total and Cronbach’s alpha statistics. While .70 or greater is a general rule of thumb for acceptable omega total and Cronbach’s alpha statistics, we acknowledge that this cutoff should be expected to vary, for example, by the number of items per factor (Field et al., 2012).

All data were analyzed using R (Version 4.2.2; R Core Team, 2022).

Results

Preliminary Steps

As a preliminary step, all 63 potential items were examined for univariate suitability for an EFA. This was first assessed by detecting items where 25% or more of all participant responses were at the floor or ceiling of the response options (i.e., a 1 or a 5 on the 1–5 sliding scale). Two potential items adapted from Nonjudging were removed for being at the ceiling. No other items needed to be removed based on visual inspection of histograms to detect items with obviously non-normal distributions. Further, all of the remaining 61 items met acceptable ranges of skewness (range = −0.75 to 0.44), excess kurtosis (range = −1.25 to 0.30), and bivariate correlation values with other items (range = 0.10 to 0.75).
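The univariate screening criteria can be sketched as follows. This is a minimal illustration under stated assumptions: skewness and excess kurtosis are computed from population moments (statistical software may apply small-sample corrections), the floor and ceiling shares are checked separately, and the function name and example responses are hypothetical.

```python
from statistics import fmean


def screen_item(responses, low=1, high=5, edge_share=0.25,
                skew_max=1.0, kurt_max=1.5):
    """Screen one item against the floor/ceiling, skew, and kurtosis rules.

    Assumes the item is not constant (its variance must be non-zero).
    """
    n = len(responses)
    mean = fmean(responses)
    m2 = fmean([(x - mean) ** 2 for x in responses])  # variance
    m3 = fmean([(x - mean) ** 3 for x in responses])
    m4 = fmean([(x - mean) ** 4 for x in responses])
    skew = m3 / m2 ** 1.5
    excess_kurtosis = m4 / m2 ** 2 - 3.0
    floor_share = sum(x == low for x in responses) / n
    ceiling_share = sum(x == high for x in responses) / n
    keep = (floor_share < edge_share and ceiling_share < edge_share
            and abs(skew) <= skew_max and abs(excess_kurtosis) <= kurt_max)
    return {"skew": skew, "excess_kurtosis": excess_kurtosis,
            "floor_share": floor_share, "ceiling_share": ceiling_share,
            "keep": keep}


# Hypothetical responses on the 1-5 scale.
balanced = screen_item([1, 2, 3, 4, 5, 3, 3, 3])
at_ceiling = screen_item([5, 5, 5, 4, 3, 5, 2, 5])
```

The bivariate correlation check (r > |.80|) and the visual inspection of histograms are not covered by this sketch.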

Next, we determined the multivariate suitability of the pool of 61 items for EFA. The Kaiser-Meyer-Olkin (KMO) value was 0.89, suggesting that the sample size adequacy for running an EFA on the 61 items was great. Bartlett’s Test of Sphericity was highly significant, χ2(1830) = 13105.13, p < .001, suggesting that the item pool was correlated enough to run an EFA. However, the determinant of the correlation matrix was 1.5 × 10^−13, suggesting the presence of a serious multicollinearity or singularity issue that was not detected with the bivariate item correlations. Note that this issue was unsurprising given that we purposely included a large number of interrelated items in the initial item pool to ensure that all concepts embedded in the trait-FFMQ were represented with a range of viable state-adapted options, and since an unexpectedly small number of items (2) were removed in tests of univariate suitability for EFA.
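Bartlett’s statistic is a direct function of the determinant, so the two values reported above can be checked against each other. The sketch below uses the standard formula χ2 = −[(n − 1) − (2p + 5)/6] × ln|R| with df = p(p − 1)/2. It assumes n = 466, taken from the Study 1 meditator counts (71 + 395), and uses the rounded determinant, so only approximate agreement with the reported statistic should be expected. The function name is hypothetical.

```python
import math


def bartlett_sphericity(n, p, determinant):
    """Return (chi-square, df) for Bartlett's test from the determinant |R|.

    n is the sample size, p the number of items, and `determinant` the
    determinant of the p x p item correlation matrix.
    """
    chi2 = -((n - 1) - (2 * p + 5) / 6) * math.log(determinant)
    df = p * (p - 1) // 2
    return chi2, df


# n = 466 is an assumption (71 + 395); the determinant is the rounded value.
chi2_61, df_61 = bartlett_sphericity(n=466, p=61, determinant=1.5e-13)
```

Under these assumptions the degrees of freedom equal 1830, and the chi-square lands within about one unit of the reported 13105.13, a gap consistent with rounding of the determinant.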

Because the aforementioned empirical tests of univariate suitability failed to eliminate most items and thus left too many viable options, we re-examined the pool of 61 items to further reduce the number of options. The goal was to arrive at a final set of items that (a) resulted in an acceptable determinant value, (b) retained comprehensive coverage of all content areas (including heterogeneous content areas within a factor) covered in the trait-FFMQ, and (c) included both positive and reverse coded items within each content area. In cases where there were several viable items with high content overlap, we favored items that (a) were adapted from trait-FFMQ items that in a previous study had been shown to be highly sensitive to change across different contexts and therefore more reflective of state rather than trait tendencies (Truong et al., 2020), (b) had been selected for use in previously validated short-forms of the trait-FFMQ (Bohlmeijer et al., 2011; Gu et al., 2016; Tran et al., 2013), (c) required minimal content modification when adapted from the trait-FFMQ, and (d) were determined to be of high theoretical relevance. This process, which we acknowledge has an inherent but unavoidable subjective bias, resulted in a reduced pool of 25 items that we believed accurately represented a state adaptation of the trait-FFMQ while simultaneously minimizing conceptual redundancy to a degree reflected in an acceptable determinant value (see Supplementary Materials for items). For this pool of 25 items, the KMO value was great at 0.86; Bartlett’s Test of Sphericity was highly significant, χ2(300) = 3847.669, p < .001; and the determinant of the correlation matrix was 0.0002, suggesting that EFA was now suitable for our dataset.

EFA Analysis

In all iterations, multivariate non-normality was indicated by Mardia’s skewness and kurtosis tests, both being significant at p < .001, so the more robust weighted least squares (WLS) extraction method was used. In the first iteration, Kaiser rule, Scree plot, and Parallel analysis all suggested that five factors should be extracted. Thus, an EFA with an oblimin (oblique) rotation, using the weighted least squares extraction method, and five presupposed factors, was analyzed. The BIC of this iteration was −852.35. At this iteration, we removed four items that failed to load greater than or equal to 0.40 onto any one factor: items 27, 29, 30, and 47.

In the second iteration, Kaiser rule, Scree plot, and Parallel analysis all suggested that five factors should be extracted. Thus, an EFA with an oblimin (oblique) rotation, using the weighted least squares extraction method, and five presupposed factors, was analyzed. The BIC of this iteration was −552.37. At this step, we removed two items that failed to load greater than or equal to 0.40 onto any one factor: items 21 and 22. Note that this resulted in all state items derived from trait Nonreactivity being eliminated.

In the third iteration, Kaiser rule, Scree plot, and Parallel analysis all suggested that five factors should be extracted. Thus, an EFA with an oblimin (oblique) rotation, using the weighted least squares extraction method, and five presupposed factors, was analyzed. The BIC of this iteration was −432.27. At this step, we removed the one item that failed to load greater than or equal to 0.40 onto any one factor: item 8.

In the fourth and final iteration, Kaiser rule, Scree plot, and Parallel analysis all suggested that four factors should be extracted. Note that this was the first iteration that suggested four, rather than five, factors. Thus, an EFA with an oblimin (oblique) rotation, using the weighted least squares extraction method, and four presupposed factors, was analyzed. The BIC of this iteration was −343.49. All 18 items of this iteration were retained since they each loaded greater than or equal to 0.40 onto any one factor, and since there were no substantial cross-loadings. Note that at each iteration the BIC value converged closer to zero, indicating a progressive improvement in data fitting throughout the iterative process.

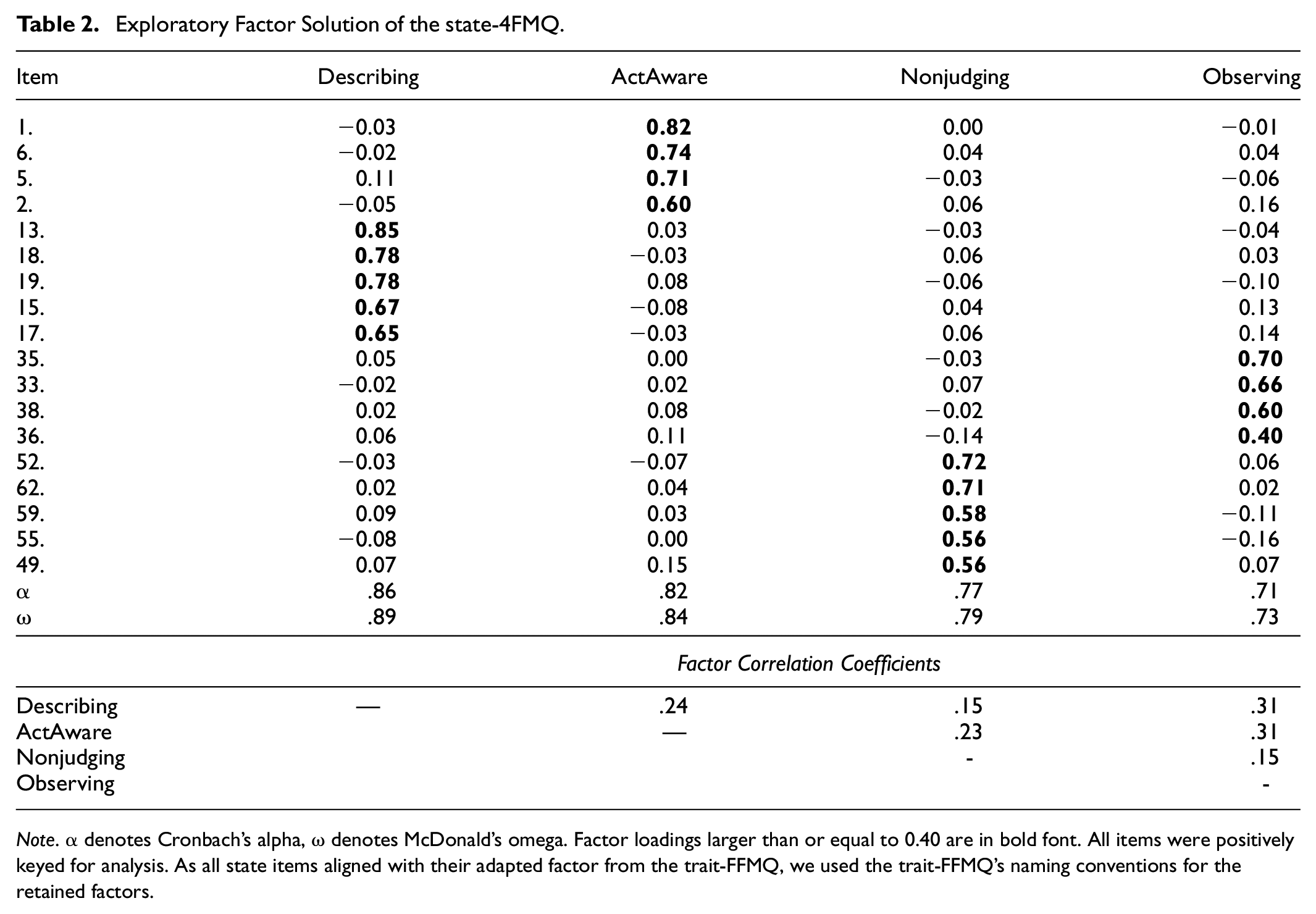

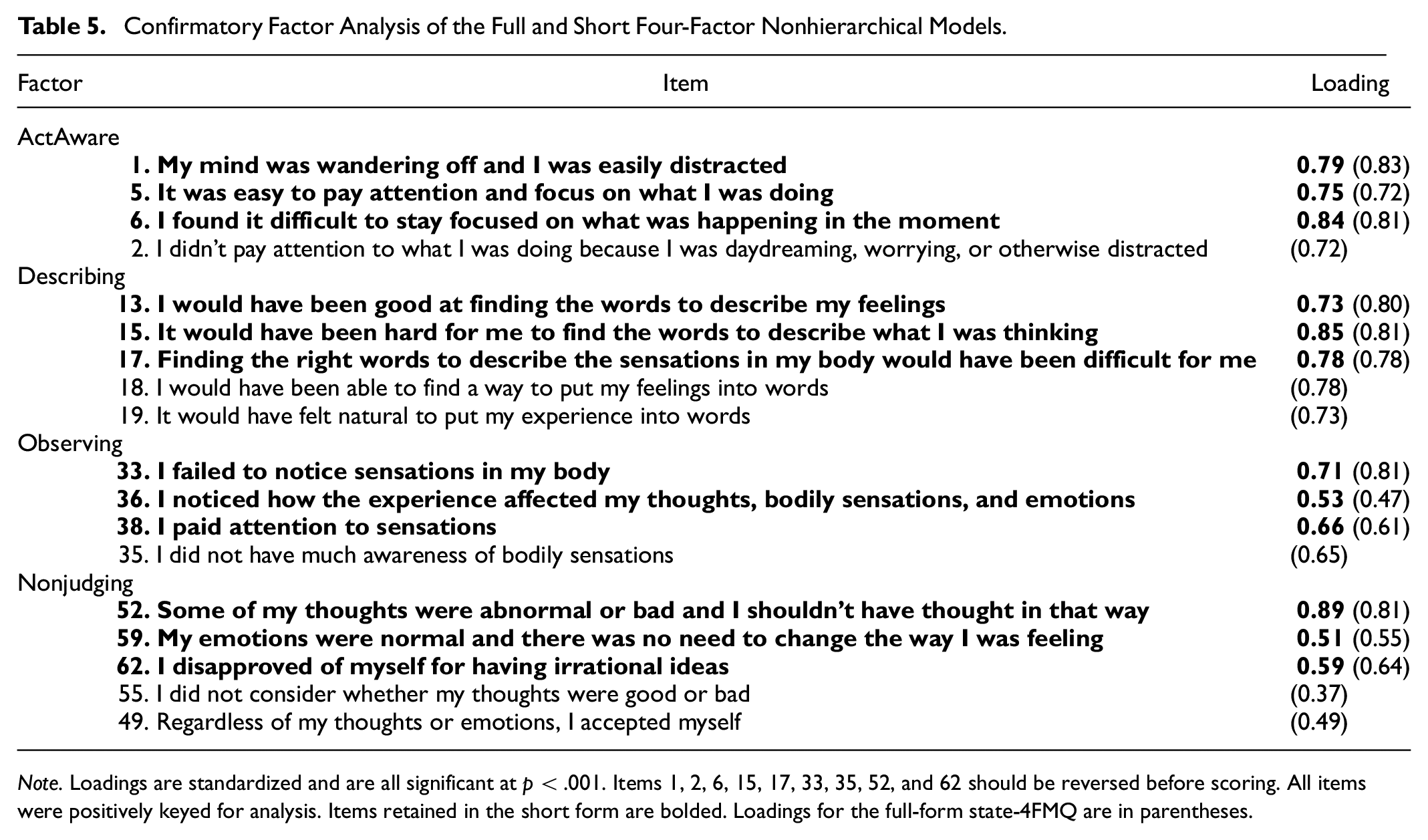

Table 2 (upper panel) shows the standardized factor loadings of the four-factor model that emerged from these final 18 items. All loadings were at least moderately large, ranging from 0.40 to 0.85 in magnitude, indicating that the items converged meaningfully onto their respective factors. Given that the four-factor structure aligned entirely with the factors of the trait-FFMQ, we retained the original trait label to label each state factor that emerged from the EFA. In the final version, ActAware had 4 items; Describing had 5 items; Observing had 4 items; and Nonjudging had 5 items. The four-factor EFA had a good data fit, with the following indices all falling within acceptable cutoff criteria: χ2(87) = 191.054, p < .001; TLI = .934; RMSEA = .051, 95% confidence interval = [.041, .060].
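The degrees of freedom and RMSEA reported above can be checked with a short calculation. The sketch assumes the usual EFA degrees-of-freedom formula, df = [(p − m)² − (p + m)]/2 for p items and m factors, and a common RMSEA point-estimate formula, sqrt(max(χ2 − df, 0) / (df × (n − 1))). It also assumes n = 466 (the sum of the Study 1 meditator counts); the R routines used for the reported values may differ in small details, such as using n rather than n − 1. Function names are hypothetical.

```python
import math


def efa_degrees_of_freedom(p, m):
    """Degrees of freedom of an EFA model with p items and m factors."""
    return ((p - m) ** 2 - (p + m)) // 2


def rmsea(chi2, df, n):
    """RMSEA point estimate from the model chi-square, df, and sample size."""
    return math.sqrt(max(chi2 - df, 0.0) / (df * (n - 1)))


df_final = efa_degrees_of_freedom(p=18, m=4)
# n = 466 is an assumption (71 + 395 participants in Study 1).
rmsea_final = rmsea(chi2=191.054, df=df_final, n=466)
```

Under these assumptions, the degrees of freedom equal 87 and the RMSEA rounds to .051, in line with the reported values.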

Exploratory Factor Solution of the state-4FMQ.

Note. α denotes Cronbach’s alpha, ω denotes McDonald’s omega. Factor loadings larger than or equal to 0.40 are in bold font. All items were positively keyed for analysis. As all state items aligned with their adapted factor from the trait-FFMQ, we used the trait-FFMQ’s naming conventions for the retained factors.

Table 2 (middle panel) shows the internal consistency of the factors. All factors were identified by at least four items and their internal consistency coefficients were satisfactory, ranging from .73 to .89 (McDonald’s ω). Furthermore, the internal consistency of all items together was high (ω = .87).

Table 2 (lower panel) shows the factor correlations, clearly indicating nonzero relationships amongst all factors. This is consistent with the potential of a hierarchical solution (to be tested in the CFA in Study 2) and the plausibility of using a total score.

Study 1 Discussion

In Study 1 we found that a new 18-item, four-factor state adaptation of the trait-FFMQ had a good data fit with satisfactory internal consistency. Notably, all state items aligned entirely with the adapted factors from the original trait-FFMQ, supporting the face validity of the state-4FMQ through a successful translation of trait to state items. Finally, correlations between factors were positive and significant as predicted, suggesting the possible existence of a factor structure with a superordinate dimension, to be tested in Study 2. The one unpredicted result was that all state items derived from trait Nonreactivity (and thus a state Nonreactivity factor) were eliminated during the EFA procedure, which we return to in the General Discussion.

Study 2

Introduction

Study 2 was conducted in four parts (described in detail below), which allowed us to address two aims. First, we conducted a confirmatory factor analysis (CFA) to confirm the four-factor structure revealed in Study 1. For the CFA, we used all 18 items from the EFA (which we refer to as the full-form state-4FMQ) as well as a shortened version with 12 items (which we refer to as the short-form state-4FMQ, explained below). Second, data from Study 2 allowed us to further test the validity of the full- and short-form state-4FMQ, by testing for convergent, predictive, robustness, and construct validity.

Method

Participants

The participant pool and eligibility criteria were the same as described in Study 1, with the distinction that no individuals from Study 1 were included in Study 2. Unlike Study 1, all participants were tested in both a Meditation condition (as in Study 1) and a Control condition, randomized in order between Parts 1 and 3 (see Procedure, below). For the CFA, we only included data from participants who completed Parts 1-2 (i.e., completion of Parts 3-4 was not required) and were tested with the Meditation first (in Part 1). Had we included data from participants who were tested with the Meditation second (in Part 3), the CFA results could have been contaminated, as these participants would have already been familiarized with the state-4FMQ (or other mindfulness) items when tested in the Control condition first. This was of the utmost importance since this contamination could lead to inaccurate interpretations of the underlying factor structure, which was the basis for the further validity analyses. By contrast, for the “further validation analyses” (e.g., convergent, predictive, etc.), we included data from any participant who completed Parts 1-4. The reason for this is two-fold. First, for some validity analyses (i.e., construct validity) we had to use data from both Parts 1 and 3. Second, for our other validity analyses (e.g., predictive validity derived from just the Meditation condition), we were less concerned about contamination since they more broadly assess relationships between variables, rather than the specific structure of the measurement model. Therefore, while previous exposure to items is critical to avoid in CFA to maintain the integrity of the derived factor structure, its impact was less pronounced in our other validity tests. As such, we describe data collection, exclusion criteria, and demographics separately for these two segments (i.e., CFA vs. “further validation analyses”).

First, for the CFA analysis, combining the rule of thumb that sample sizes should be 10–15 subjects per item in a confirmatory factor analysis (Pett et al., 2003), that our largest planned factor analysis involved 18 items, and that based on pilot data an estimated 15% of participants would be removed due to attrition and data cleaning, an initial sample of about 265 participants was needed. The collected sample consisted of 313 participants. The following five exclusion criteria were applied to the collected sample (as outlined in our pre-registration). First, 32 participants were excluded for failing to complete the entirety of Parts 1-2 (i.e., due to attrition) or failing to enter a matching participant ID between each of the two parts. Second, 9 participants were excluded for failing to complete the study within ±3 standard deviations of the median study duration. Third, 6 participants were excluded for failing to correctly respond to at least two out of four attention check questions dispersed throughout the two parts. Fourth, 11 and 9 participants were excluded for admitting (at the end of the study) to not answering the survey questions honestly, or not fully engaging in the relaxation exercise, respectively. Fifth, 4 participants were excluded for being outliers, defined as scoring more than ±3 standard deviations from the mean total state-4FMQ score. In sum, a total of 71 participants were excluded for not passing these criteria. The final sample for the CFA analysis consisted of 242 participants between the ages of 18 and 46 years (M = 21.45, SD = 3.69). The majority reported being female at birth (85.54%) and most reported their ethnoracial group as Asian (50.00%), followed by Hispanic or Latino (24.79%), White (13.64%), Mixed (8.68%), Black or African American (2.07%), and Middle Eastern or North African (0.83%).

Second, for the further validity analyses, our sample size was based on an a priori power analysis for multiple linear regression calculated for the predictive validity analysis, with the following parameters: anticipated effect size f2 = .04 (based on pilot data); statistical power = .80; four predictor variables in the predictive validity model; and an alpha of .05. This resulted in a required sample size of 301 participants. With an estimated 15% of participants being excluded due to attrition and data cleaning, we therefore aimed to collect data from 354 participants. The collected sample consisted of 455 participants. The following four exclusion criteria (as outlined in our pre-registration) were applied to the collected sample. First, 75 participants were excluded for failing to complete the entirety of Parts 1–4 (i.e., due to attrition) or failing to enter a matching participant ID between each of the four parts. Second, 28 participants were excluded for failing to complete the study within ±3 standard deviations of the median study duration. Third, 17 participants were excluded for failing to correctly respond to at least four out of eight attention check questions dispersed throughout the four parts. Fourth, 20 and 4 participants were excluded for admitting (at the end of the study) to not answering the survey questions honestly, or not fully engaging in either relaxation exercise, respectively. In sum, a total of 144 participants were excluded for not passing these criteria. The final further validity analyses sample thus consisted of 311 participants between the ages of 18 and 46 years (M = 21.34, SD = 3.83). The majority reported being female at birth (80.71%) and most reported their ethnoracial group as Asian (49.20%), followed by Hispanic or Latino (20.58%), White (17.36%), Mixed (8.68%), Black or African American (2.25%), Middle Eastern or North African (1.61%), and First Nation or Indigenous American (0.32%).
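The sample-size bookkeeping for both segments can be reproduced with simple arithmetic. In the sketch below, the use of 12.5 participants per item (the midpoint of the 10–15 rule of thumb) is an assumption made to illustrate how a target near 265 can arise; the function names are hypothetical, and the counts are those reported above.

```python
def inflate_for_attrition(n_required, attrition=0.15):
    """Number to recruit so that n_required remain after expected losses."""
    return n_required / (1 - attrition)


def final_sample(collected, exclusions):
    """Participants remaining after all exclusion criteria are applied."""
    return collected - sum(exclusions)


# CFA segment: 18 items at an assumed 12.5 participants per item.
cfa_target = inflate_for_attrition(12.5 * 18)
cfa_final = final_sample(313, [32, 9, 6, 11, 9, 4])

# Further validity analyses: 301 participants from the power analysis.
validity_target = inflate_for_attrition(301)
validity_final = final_sample(455, [75, 28, 17, 20, 4])
```

Rounded to whole participants, the two targets are 265 and 354, and the final samples are 242 and 311, matching the figures in the text.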

Procedure

This research was conducted entirely online and remotely, and all data were collected via the survey program Qualtrics. All questions were required to be answered, so there were no missing values in the data. The study consisted of four parts.

Part 1 (day 1): The order of events was as follows. First, participants filled out two state affect measures, which were randomized in order. Next, they were asked to ensure they had working audio and were in a private and quiet setting before proceeding with a “relaxation exercise,” consisting of listening to a 20-minute audio recording. They then started (by pressing a key) the audio recording, and they could not advance with the experiment until the audio was finished playing. The audio recording was evenly randomized to consist either of the Meditation audio described in Study 1, or a Control audio consisting of an informative narration about the science and benefits of several relaxation techniques that has previously been used in our lab (Bondi, 2021) and was designed to be affectively neutral (see Supplementary Materials for a full transcription of the audio). Note that the script in the Control condition only mentioned relaxation techniques that could not be practiced in the moment, such as gardening and journaling. Though the two conditions (Meditation and Control) differed on structure, timing, and word count, they were matched for duration of instruction, beginning with the instruction of closing the eyes and relaxing, and being voiced by the same individual (which was the same person who narrated in Study 1). No order effects were observed; participants who received the Control condition first did not differ in demographic characteristics or predictive model outcomes from those who received the Meditation condition first. After the audio recording, they completed the following measures in this order: the state-4FMQ, the two other state mindfulness surveys in randomized order, the two state affect measures in randomized order, the clarity of instructions question, and last, the survey honesty and exercise engagement questions.

Part 2 (day 2): One day later, the order of events was as follows. First, participants completed measures of trait mindfulness and chronic stress in randomized order, then they answered standard questions about demographics, and last, the survey honesty question.

Part 3 (day 8): One week after Part 1, participants repeated the procedure from Part 1 but were assigned to listen to the audio recording that they did not listen to in Part 1 (e.g., if they were assigned to listen to the Meditation audio in Part 1, they were assigned to listen to the Control audio for Part 3).

Part 4 (day 9): One day after Part 3, participants repeated the procedure from Part 2, but were asked to report on previous meditation experience instead of standard demographics.

Measures

State Mindfulness

The following state mindfulness measures were obtained following the relaxation exercises and instructed participants to reflect on their experience during the immediately preceding “relaxation exercise” (see above).

State-4FMQ

The 18-item State Four Facet Mindfulness Questionnaire from Study 1 was our primary measure of state mindfulness. The items were presented randomly. The scale showed acceptable internal consistency (see Results).

State-MAAS

The State Mindful Attention and Awareness Scale (state-MAAS; Brown & Ryan, 2003, reviewed in the General Introduction), a five-item scale designed to measure a recent or current expression of the mindful attention and awareness of one’s engagement in daily activities, is currently the most commonly used measure of state mindfulness. All items are reverse coded and rated on a 7-point Likert scale with three labels: Not at all (0), Somewhat (3), and Very Much (6). Items are averaged to calculate a total score. Mindfulness is conceptualized as a unidimensional construct. The scale showed acceptable internal consistency with Cronbach’s alpha coefficients ranging from α = .84 to .86 in the present study.

SMS

The State Mindfulness Scale (SMS; Tanay & Bernstein, 2013, reviewed in the General Introduction) is a 21-item scale comprising a mindfulness of mind subscale, a mindfulness of body subscale, and a total score. Items are rated on a 5-point Likert scale from 1 = Not at all to 5 = Very Much. Subscales and the total score are averaged. The scale showed acceptable internal consistency with Cronbach’s alpha coefficients ranging from α = .90 to .93 for the total score, and α = .79 to .90 for the subscales, in the present study.

State Affect

The following state affect measures were obtained before and after the relaxation exercises and instructed participants to reflect on their experience “right now, in this moment”.

State Anxiety

State Anxiety was measured with the state items from the State-Trait Anxiety Inventory (STAI; Spielberger, 1983). This 20-item test measures the presence and severity of current symptoms of anxiety. Items are rated on a 4-point Likert scale from 1 (“Not at All”) to 4 (“Very Much So”). State Anxiety was used because relief from anxiety is one of the most widely promoted benefits of mindfulness (e.g., Russ et al., 2017; Van Dam et al., 2018). The scale showed acceptable internal consistency with Cronbach’s alpha coefficients ranging from α = .93 to .94 in the present study.

State Stress

As no validated State Stress measure could be found in the literature, a composite score was calculated by combining the responses to three in-house questions. The first two questions have slider scales from 1 to 7 with a resolution of 0.1, with labels on each end and number markers in between, and the third question uses a 5-point visual analog scale. The first question asks: “How stressed do you feel right now?” (1 = not at all stressed, 7 = extremely stressed). The second question similarly asks: “How relaxed do you feel right now?” (1 = not at all relaxed, 7 = extremely relaxed) and is reverse coded. The third question asks: “How are you feeling right now?” and has a simple, traditional, yellow-and-black smiley face that can be adjusted from the neutral middle (starting point) to up to 2 points in the positive direction (making the face slightly, and then fully, smile) or up to 2 points in the negative direction (making the face slightly, and then fully, frown). State Stress was then calculated with the following equation: (Q1 + (8 − Q2) + 1.4 × (6 − Q3)) / 21, such that a higher score indicates more stress. Even though the third item is not continuous, the composite score is calculated with a resolution of 0.1. State Stress was used because mindfulness-based techniques are one of the most used coping strategies to handle stress (e.g., Aguilar-Raab et al., 2021; Weinstein et al., 2009). These three in-house items showed acceptable internal consistency with Cronbach’s alpha coefficients ranging from α = .82 to .86 in the present study, which supports the use of the composite score as a measure of State Stress.
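A minimal sketch of the composite, reading the equation as (Q1 + (8 − Q2) + 1.4 × (6 − Q3)) / 21. It assumes that Q3 is coded from 1 (fully frowning) to 5 (fully smiling), which the text does not state explicitly; under that coding, the factor of 1.4 stretches the reversed 5-point item onto the same 7-point ceiling as the two sliders. The function name is hypothetical.

```python
def state_stress(q1, q2, q3):
    """Composite State Stress score; higher values indicate more stress.

    q1: "How stressed do you feel right now?" slider, 1 to 7.
    q2: "How relaxed do you feel right now?" slider, 1 to 7 (reverse coded).
    q3: smiley-face item, assumed coded 1 (full frown) to 5 (full smile).
    """
    return (q1 + (8 - q2) + 1.4 * (6 - q3)) / 21


most_stressed = state_stress(q1=7, q2=1, q3=1)
least_stressed = state_stress(q1=1, q2=7, q3=5)
```

With this reading, each of the three terms contributes at most 7 points, so the maximum possible composite is 21 / 21 = 1.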

Other State Measures

Thought Valence

At the end of the relaxation exercises, participants were asked the following: “Imagine someone read the transcript of what you thought about in the 20-minute exercise. How would they rate the content of that transcript?”, which is answered on a 7-point Likert scale and includes 7 markers ranging from very negative to very positive (coded as 0 to 6). The wording of this in-house item was inspired by a previous ESM study (Gross et al., 2024) that found significant associations between Thought Valence, mindful attention, and mood. As a sanity check for this in-house item, participants were also asked to rate their confidence in their Thought Valence rating (i.e., “How confident are you about your estimate above?”, from Not at all confident (0) to Extremely confident (6)), and the mean ratings were relatively high (Meditation M = 4.14, SD = 0.96; Control M = 4.02, SD = 0.94).

Clarity of Instructions

Since guided meditations may seem esoteric or inaccessible to novice meditators, we included an additional question asked in Feldman et al. (2010) as a manipulation check to ensure the instructions were clear to participants after the relaxation exercises: “To what extent did you feel that the audio recording instructions were clear enough for you to understand what you were being asked to do?”, rated on a 7-point Likert scale with three labels: 1 = Not at all, 4 = Somewhat, 7 = To a great extent.

Trait Measures

Trait Mindfulness: The 39-item trait-Five Facet Mindfulness Questionnaire (Baer et al., 2006) was used to measure trait mindfulness. Note that we used the 39-item version, and not an abbreviated version, since more knowledge about its psychometric properties is available in the literature, and since it is the basis for the state-4FMQ. The scale showed acceptable internal consistency with Cronbach’s alpha coefficients ranging from α = .90 to .94 for the total score in the present study.

Chronic Stress: The Perceived Stress Scale (PSS; Cohen et al., 1983) is a 10-item scale of stress felt in the past month. The scale showed acceptable internal consistency with Cronbach’s alpha coefficients ranging from α = .86 to .87 in the present study.

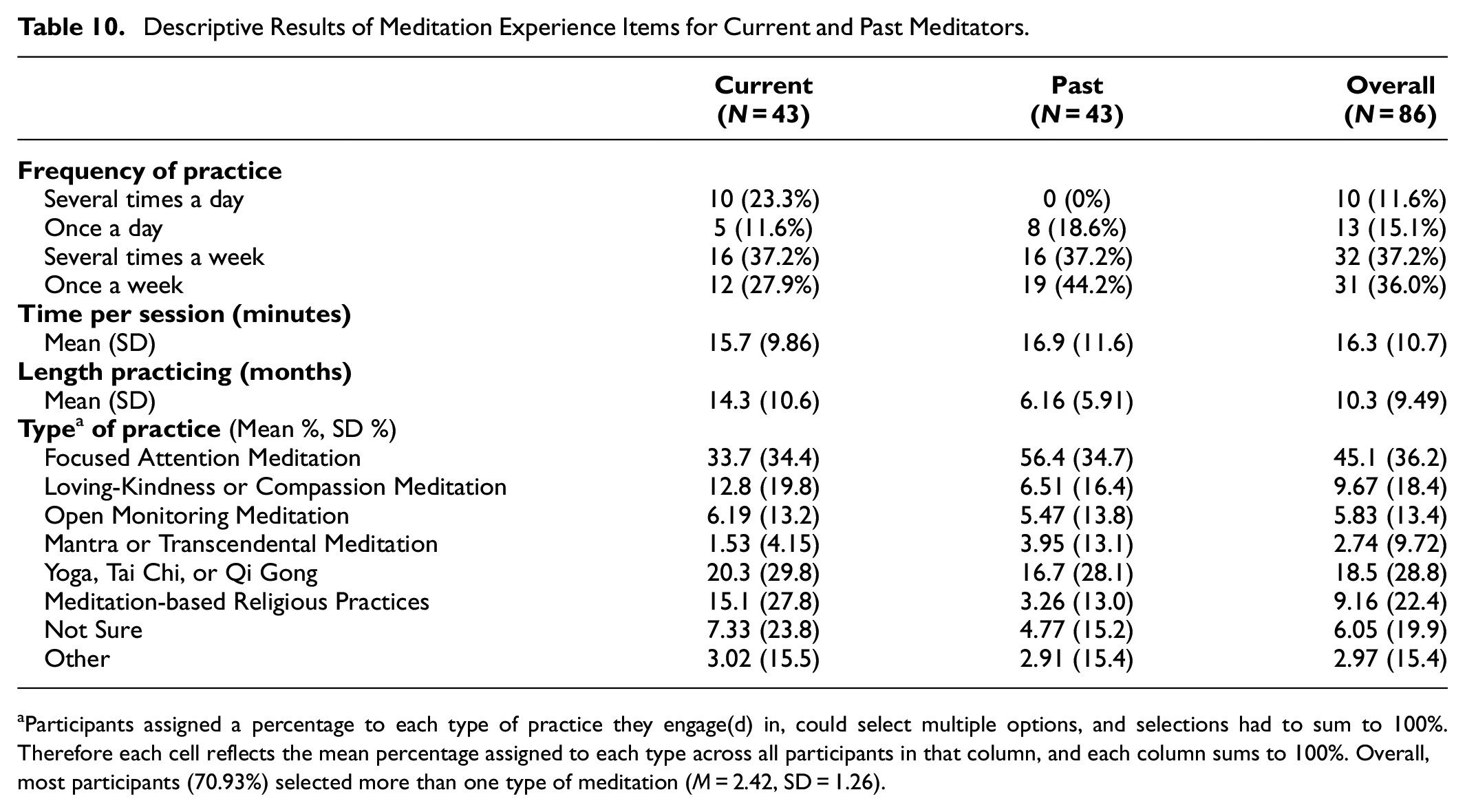

Meditation Status

This is an important construct to consider in any study involving meditation since the dramatic increase in popularity of meditation and related practices, such as yoga or smartphone-led mindfulness practices, means that much of the population has at least some familiarity with meditation (see Burzler et al., 2019; Heppner & Shirk, 2018). Studies that rely on student or community samples (as opposed to targeted recruitment strategies such as reaching out to monks or MBSR instructors) usually rely on participant self-report measures of previous meditation experience that often include items on the frequency, duration, and/or type of meditation practiced. However, these studies use vastly heterogeneous classification approaches with no current consensus as to best practices (for a discussion on issues arising from different definitions of meditation experience, see Davidson & Kaszniak, 2015; Van Dam et al., 2018). To be consistent with a commonly used classification in the literature (e.g., Baer et al., 2008; Burzler et al., 2019; Feldman et al., 2010; Schlosser et al., 2022), we operationalized “current meditators” as participants who reported currently practicing meditation or mindfulness at least once a week. Informed by Pang and Ruch (2019), we further operationalized “past meditators” as those who previously practiced at least once a week but no longer do so. All others were categorized as “non-meditators” (see Supplementary Materials for all item descriptions). Note that for simplicity we refer simply to meditators, rather than those with meditation or mindfulness experience. We acknowledge that stricter criteria involving how long one has been practicing for, the duration of each practice, and the type of practice, could be used in future studies involving samples from targeted populations.

Data Analysis

Basic descriptive analyses reported on means, standard deviations, and frequencies of relevant variables. Normality, as assessed with visual inspection of histograms, was verified and met for all variables of interest. The assumptions of all statistical tests were checked and met. The level of significance was set to 5% (p < .05) for all tests; however, we emphasized effect sizes rather than statistical significance, since significance alone can be misleading. Effect sizes were reported as the following: Pearson r values for bivariate correlations, with the rule of thumb that absolute values of .10 to .30 are weak effects, .30 to .50 are medium effects, and .50 and over are large effects (Cohen, 1988); Cohen’s d for t-tests, with the rule of thumb that values around .20 are considered small effects, values around .50 are considered medium effects, and values around .80 or more are considered large effects (Cohen, 1988); Cramer’s V for chi-square tests, with the rule of thumb that values ≥.1 are weak, ≥.3 are moderate, and ≥.5 are large effects (Kakudji et al., 2020); and partial eta squared (η2) for analysis of variance (ANOVA) and regression models, with the rule of thumb that η2 = .01 indicates a small effect; η2 = .06 indicates a medium effect; and η2 = .14 indicates a large effect (Cohen, 1988). All ANOVA and regression models in this study use Type III sum of squares, which examines individual effects in light of all other model effects regardless of order. All data were analyzed using R (Version 4.2.2; R Core Team, 2022).
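The effect-size rules of thumb listed above can be collected into a small lookup, sketched here for illustration. Treating each benchmark as a lower bound is an assumption (the text describes Cohen’s d benchmarks only as “around” each value), and the label for values below the smallest benchmark, the dictionary, and the function name are hypothetical.

```python
# Lower bounds and labels for each effect-size metric, largest first.
BENCHMARKS = {
    "r":    [(0.50, "large"), (0.30, "medium"), (0.10, "weak")],
    "d":    [(0.80, "large"), (0.50, "medium"), (0.20, "small")],
    "V":    [(0.50, "large"), (0.30, "moderate"), (0.10, "weak")],
    "eta2": [(0.14, "large"), (0.06, "medium"), (0.01, "small")],
}


def label_effect(value, kind):
    """Return the rule-of-thumb label for an effect size of the given kind."""
    for lower_bound, label in BENCHMARKS[kind]:
        if abs(value) >= lower_bound:
            return label
    return "below the smallest benchmark"


example_labels = [
    label_effect(-0.35, "r"),    # sign is ignored for correlations
    label_effect(0.80, "d"),
    label_effect(0.05, "eta2"),
    label_effect(0.05, "V"),
]
```

Such labels are descriptive conveniences only; the benchmarks themselves are conventions rather than fixed thresholds.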

CFA Analysis

At 18 items, the state-4FMQ from Study 1 was relatively lengthy compared to most state mindfulness measures. We ultimately wanted the state-4FMQ to be as brief (yet comprehensive) as possible to facilitate its use in research designs with multiple measures and/or measures administered on multiple occasions, without overburdening participants. Informed by previous literature and the results of the EFA from Study 1, we thus created the short-form state-4FMQ using the same sample as the full-form (as has been done in the development of other measures, for example, Ullrich-French et al., 2021). The selection of which items to retain involved empirical (e.g., items with the highest factor loadings), conceptual (i.e., items that when grouped together minimized redundancy and maximized conceptual coverage within a factor), and theoretical (e.g., referencing the extant literature on the short-forms of the trait-FFMQ) considerations. We aimed to retain three items per factor, which is generally considered the minimum number of items needed for model identification (Kline, 2015). We hypothesized that the 12-item short-form would retain the psychometric properties of, and produce comparable results with, the full 18-item state-4FMQ. To test this, all of the following analyses were conducted on both the full (18-item) and short (12-item) state-4FMQ.

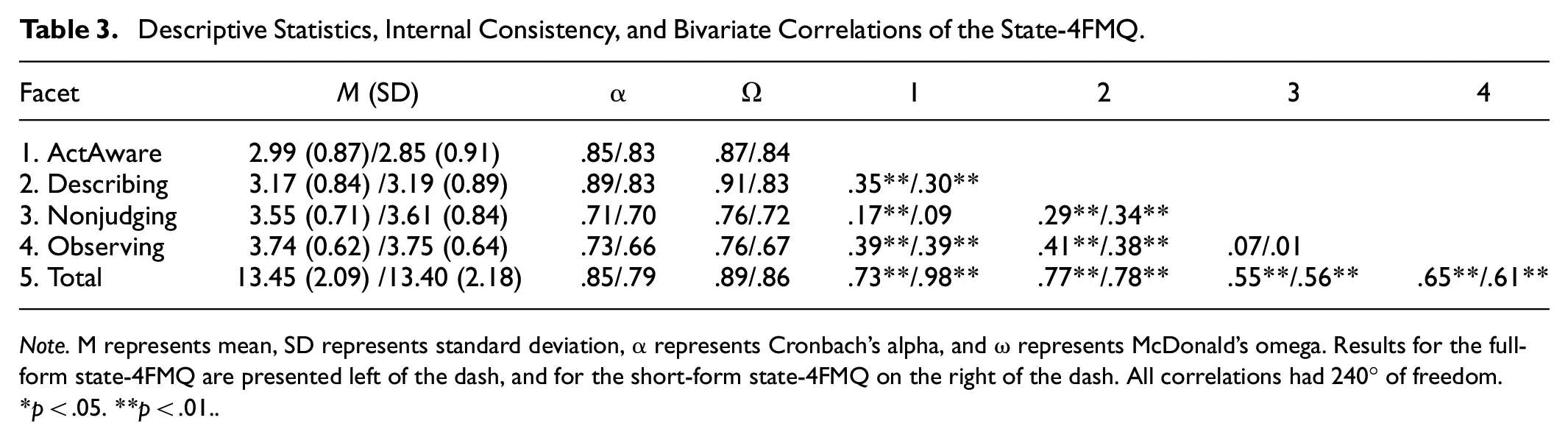

The main goal of the CFA was to confirm the accuracy of the four-factor structure of the state-4FMQ that emerged from Study 1 through EFA. Prior to conducting the CFA, we first calculated bivariate correlations amongst the state-4FMQ facets. Based on the results of Study 1 and the expectation that the facets would be different enough to be separable facets (as would be revealed in the four-factor CFA models), yet similar enough to be associated via one superordinate mindfulness construct (as would be supported by a hierarchical CFA structure), weak to moderate positive correlations between all facets were predicted. We also assessed the internal consistency of the state-4FMQ with Cronbach’s alpha (α) and McDonald’s omega (ω) statistics for each facet. Note that while 0.70 or greater is a general rule of thumb for acceptable estimates for both statistics, we point out that this cutoff can be expected to vary, specifically, being lower when the number of items per factor is small (Field et al., 2012). We also point out that there is some controversy in the appropriate range of acceptable alpha values (Streiner, 2003), and the argument has been made that lower cutoff values are appropriate in early stages of scale development (Nunnally, 1967). For these reasons, we believe that promoting the brevity of a measure is a fair tradeoff for relatively reduced internal consistency values.

To verify the suitability of the data for CFA, the state-4FMQ items were checked for univariate normality via extreme values of skewness (absolute value > 1.0) and excess kurtosis (absolute value > 1.5) and via visual inspection of histograms. The items were also checked for multivariate normality with Mardia’s multivariate skew and kurtosis tests (Mardia, 1970). Using the R package lavaan (Rosseel, 2012), we conducted a CFA on these items to test a four-factor solution. Replicating the development of the trait-FFMQ (Baer et al., 2006), error terms were not allowed to covary and items were constrained to load onto only one factor in accordance with the theorized measurement model. Unlike the development of the trait-FFMQ, CFA models used individual items as opposed to item parceling, since the latter is a controversial and less stringent practice and is not advisable when there are small numbers of items per factor (as in the current study). Further, one study found that an item-level CFA of the trait-FFMQ produced comparable results to parceling (Christopher et al., 2012), suggesting there is little added value to the parceling method.
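The univariate screening step can be sketched in Python as follows. This is an illustrative sketch rather than the study's code: it uses simple moment-based estimators of skewness and excess kurtosis (statistical packages often apply small-sample corrections, so their values can differ slightly), and the function names and item scores are hypothetical. The cutoffs of 1.0 and 1.5 are the ones stated above.

```python
def skew_and_excess_kurtosis(values):
    """Moment-based skewness (m3 / m2^1.5) and excess kurtosis (m4 / m2^2 - 3)."""
    n = len(values)
    mean = sum(values) / n
    m2 = sum((v - mean) ** 2 for v in values) / n
    m3 = sum((v - mean) ** 3 for v in values) / n
    m4 = sum((v - mean) ** 4 for v in values) / n
    return m3 / m2 ** 1.5, m4 / m2 ** 2 - 3.0


def flag_nonnormal(values, skew_cutoff=1.0, kurtosis_cutoff=1.5):
    """True if either statistic exceeds its cutoff in absolute value."""
    skew, kurt = skew_and_excess_kurtosis(values)
    return abs(skew) > skew_cutoff or abs(kurt) > kurtosis_cutoff


# Hypothetical item scores.
symmetric_item = [1, 2, 3, 4, 5]
skewed_item = [1, 1, 1, 1, 1, 1, 1, 1, 1, 10]

print(flag_nonnormal(symmetric_item))  # False
print(flag_nonnormal(skewed_item))     # True
```

A flagged item would then be inspected visually (e.g., with a histogram) before deciding how to proceed.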

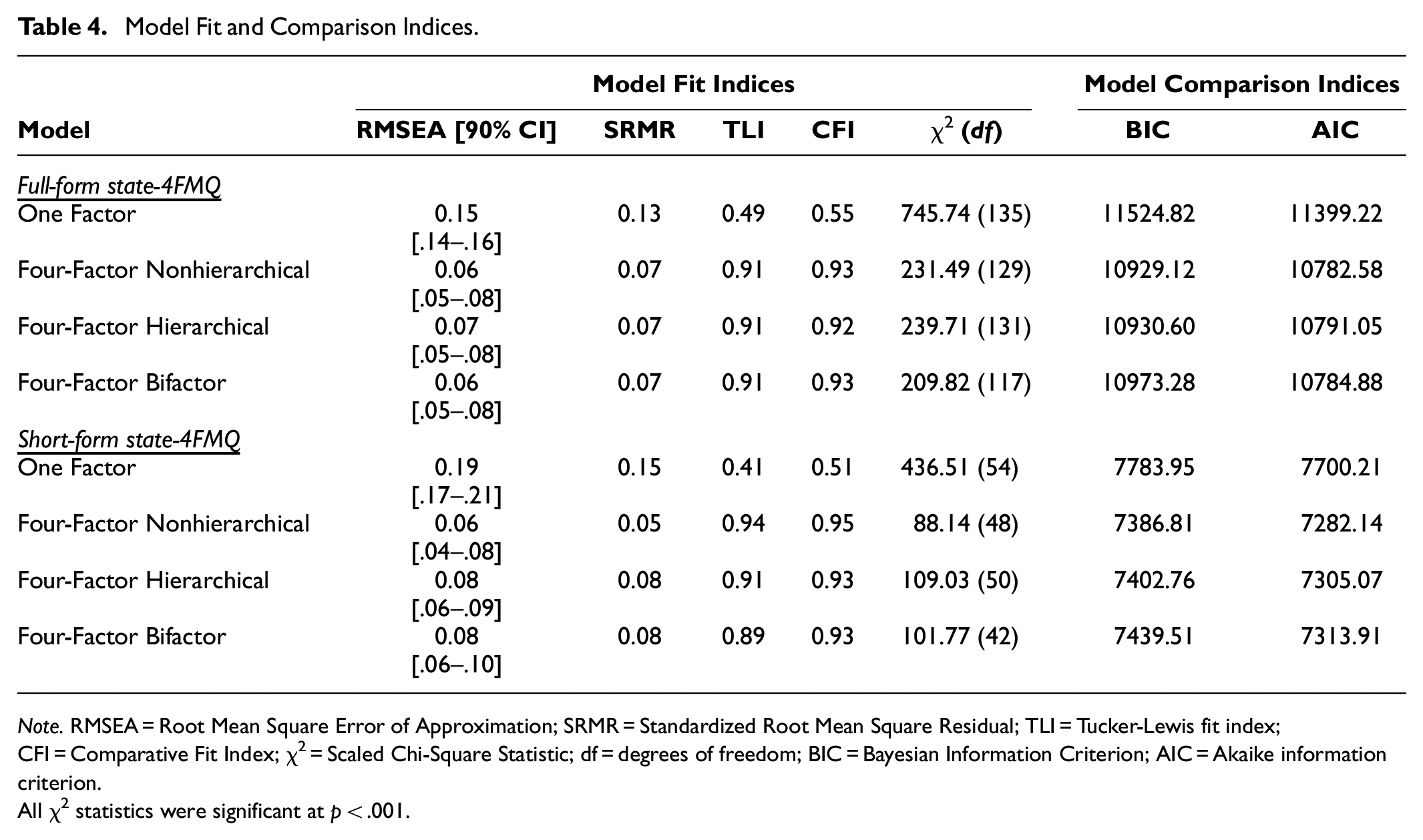

We hypothesized that (a) the results of the CFA would confirm a multidimensional structure with four distinct factors (i.e., a four-factor solution without a superordinate mindfulness factor, which we refer to as the “four-factor nonhierarchical” model), and (b) in a four-factor hierarchical CFA, the factors would strongly load onto a superordinate mindfulness factor (i.e., a four-factor solution with a superordinate mindfulness factor, which we refer to as the “hierarchical” model). The finding of a hierarchical structure would suggest that the four facets are sufficiently interrelated to be considered part of one overarching mindfulness construct. We hypothesized, however, that Observing might not strongly load onto the superordinate factor because our population was likely to have minimal to no meditation experience, and Baer et al. (2006), among others, found that trait-Observing loaded significantly onto a superordinate factor only amongst individuals with sufficient meditation experience. The following indices, with benchmarks suggested by Hu and Bentler (1999) and Brown (2015), were evaluated collectively to assess how well each model fit the data: chi-square statistic (χ²; p > .05), root mean square error of approximation (RMSEA; <0.06) with its 90% confidence interval (0.00–0.08), standardized root mean square residual (SRMR; <0.08), Tucker-Lewis fit index (TLI; >0.90), and comparative fit index (CFI; >0.95).
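As a small illustration of evaluating the fit indices collectively, the Python sketch below checks a set of fit statistics against the benchmarks listed above. The function name `check_fit`, the dictionary keys, and the example values are hypothetical, and treating the upper bound of the RMSEA confidence interval as "at most 0.08" is our reading of the stated 0.00–0.08 range.

```python
def check_fit(fit):
    """Map each fit index to True if it meets the benchmark stated in the text."""
    return {
        "chisq_p": fit["chisq_p"] > 0.05,                 # non-significant chi-square
        "rmsea": fit["rmsea"] < 0.06,
        "rmsea_ci_upper": fit["rmsea_ci_upper"] <= 0.08,  # assumed reading of the CI range
        "srmr": fit["srmr"] < 0.08,
        "tli": fit["tli"] > 0.90,
        "cfi": fit["cfi"] > 0.95,
    }


# Hypothetical fit statistics for one model (not results from the study).
example_fit = {
    "chisq_p": 0.01,
    "rmsea": 0.05,
    "rmsea_ci_upper": 0.07,
    "srmr": 0.06,
    "tli": 0.93,
    "cfi": 0.94,
}
results = check_fit(example_fit)
for index_name, meets_benchmark in results.items():
    print(f"{index_name}: {'meets' if meets_benchmark else 'misses'} benchmark")
```

Because the indices are judged together, a model that misses one benchmark (here, the chi-square test and the CFI) is not automatically rejected; the pattern across indices is what is interpreted.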

Besides assessing individual model fit, we also aimed to determine the overall best fitting factor structure. In addition to our two main models described above, we were prompted by the moderate bivariate correlations of the facets we observed in the EFA, as well as by factor structures that have been tested with the trait-FFMQ, to also compare the fit of the following additional models: a one-factor model containing only a total mindfulness score (which assumes that the state-4FMQ has a unidimensional structure), and an exploratory four-factor bifactor model (which is similar to the four-factor hierarchical model with the exception that latent variables are set as orthogonal to each other, and all items simultaneously load on one general factor and four specific factors). We hypothesized that the one-factor model would provide the worst fit, but had no a priori hypothesis about which of the three other models (i.e., the four-factor nonhierarchical, hierarchical, or bifactor model) would provide the best fit given the inconsistent factorial structures found for the trait-FFMQ (see Burzler & Tran, 2022). Note that determining the best-fitting factor structure of the state-4FMQ affects the scoring guidelines of the measure. Specifically, a four-factor hierarchical or bifactor model would support the use of both facet scores and a total score. By contrast, a four-factor nonhierarchical model would support the use of individual facets but not a total score, and a one-factor model would support only the use of a total score. Model comparisons mainly utilized Bayesian information criterion (BIC) values, though we also report the Akaike information criterion (AIC). For both BIC and AIC, lower values indicate a better balance of fit and parsimony. Although thresholds for meaningful differences between BIC values are rules of thumb, we used the guidelines proposed by Raftery (1995), which treat a difference of >10 as very strong evidence, 6 to 10 as strong evidence, 2 to 6 as positive evidence, and 0 to 2 as weak evidence that a model is a better fit.
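The BIC-based model comparison can be sketched in Python as follows. This is a hedged illustration: the function names and the BIC values are hypothetical (they are not results from the study), and assigning the boundary values 2, 6, and 10 to the lower category is an assumption, since the ranges in the text share their endpoints.

```python
def raftery_label(bic_difference):
    """Label the strength of evidence for the lower-BIC model (Raftery, 1995)."""
    diff = abs(bic_difference)
    if diff > 10:
        return "very strong"
    if diff > 6:
        return "strong"
    if diff > 2:
        return "positive"
    return "weak"


def compare_models(bic_by_model):
    """Return the lowest-BIC model and the evidence for it over each competitor."""
    ranked = sorted(bic_by_model.items(), key=lambda pair: pair[1])
    best_name, best_bic = ranked[0]
    evidence = {name: raftery_label(bic - best_bic) for name, bic in ranked[1:]}
    return best_name, evidence


# Hypothetical BIC values for the four candidate structures.
bic_values = {
    "one-factor": 5230.4,
    "four-factor nonhierarchical": 5101.2,
    "hierarchical": 5104.9,
    "bifactor": 5125.0,
}
best_model, evidence = compare_models(bic_values)
print(best_model)
for competitor, strength in evidence.items():
    print(f"  vs {competitor}: {strength} evidence")
```

In this made-up example the nonhierarchical model has the lowest BIC, but the evidence over the hierarchical model is only "positive", which is the kind of near-tie that bears on whether a total score is supported.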

Further Validation Analyses

All analyses were conducted on both the full-form (18-item) and short-form (12-item) state-4FMQ. Because the ultimate goal of this research was to create a viable short-form measure of state mindfulness, in the Results we present only the findings for the short-form (results using the full-form state-4FMQ were comparable and are available in Supplemental Materials). Also note that some tests of construct validity (discriminant sensitivity and test-retest reliability) required using data from both the Meditation and Control conditions. By contrast, the convergent and predictive validity analyses included data from only the Meditation condition.

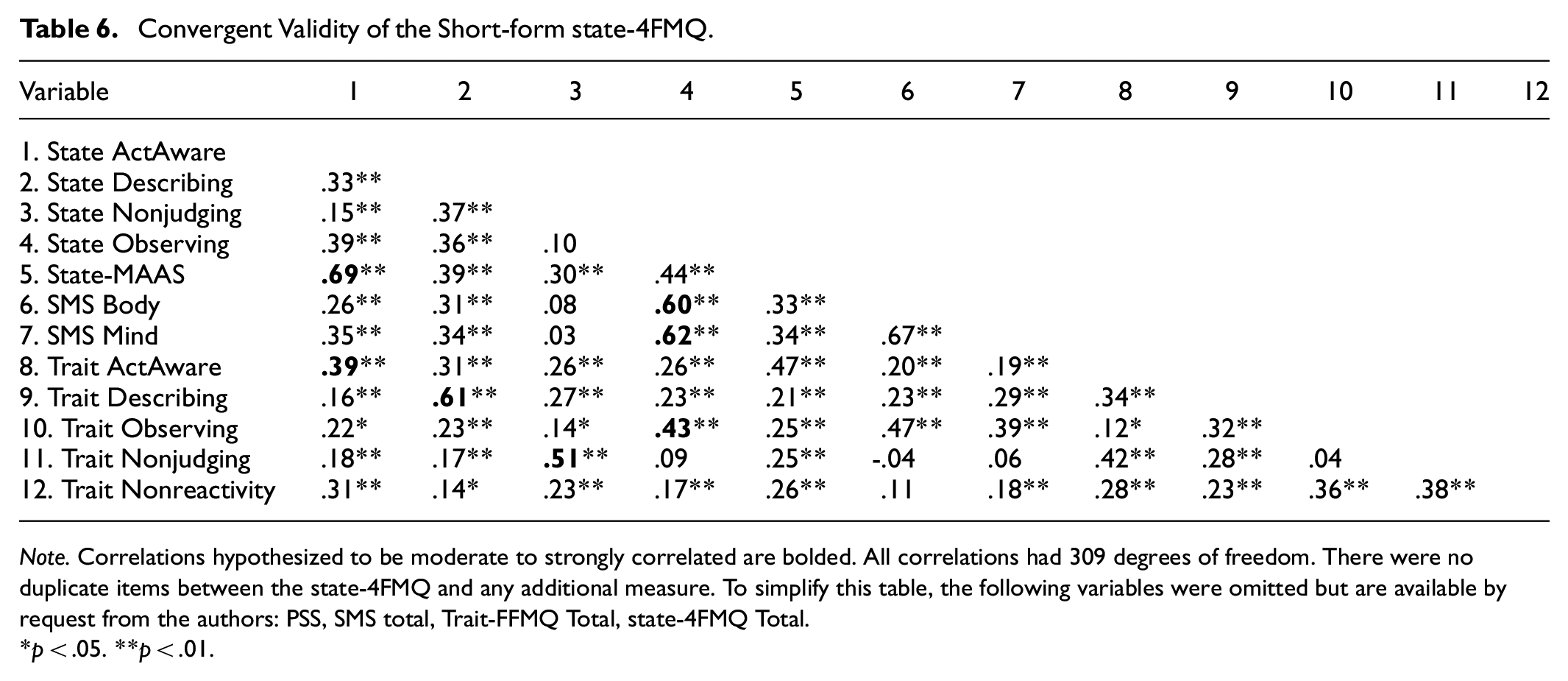

Convergent Validity

We assessed convergent validity via bivariate correlations between the state-4FMQ and several other measures. First were the two extant measures of state mindfulness, which were predicted to show weak to moderate positive correlations with the state-4FMQ facets, although the ActAware component of the state-4FMQ was predicted to be more strongly correlated with the state-MAAS since the latter focuses mainly on awareness. Though not preregistered, we later hypothesized a strong correlation between the state-4FMQ’s Observing subscale and the SMS, in line with previous results measuring this association with the trait-FFMQ’s Observing subscale (e.g., Navarrete et al., 2023; Tanay & Bernstein, 2013).

Second was the trait-FFMQ, for which aligned facets (e.g., state ActAware and trait ActAware) should be more positively correlated than non-aligned facets (e.g., state ActAware and trait Nonjudging). The correlations between the state-4FMQ and the trait-FFMQ should not be too high, however, or else one could argue that our new state measure is behaving in a trait-like way. There are good theoretical reasons (Robinson & Clore, 2002a, 2002b), as well as empirical data showing rather weak associations between trait mindfulness and induced mindful states (Bravo et al., 2018; Heppner & Shirk, 2018), to suggest that our analysis should likewise show only moderate correlations.

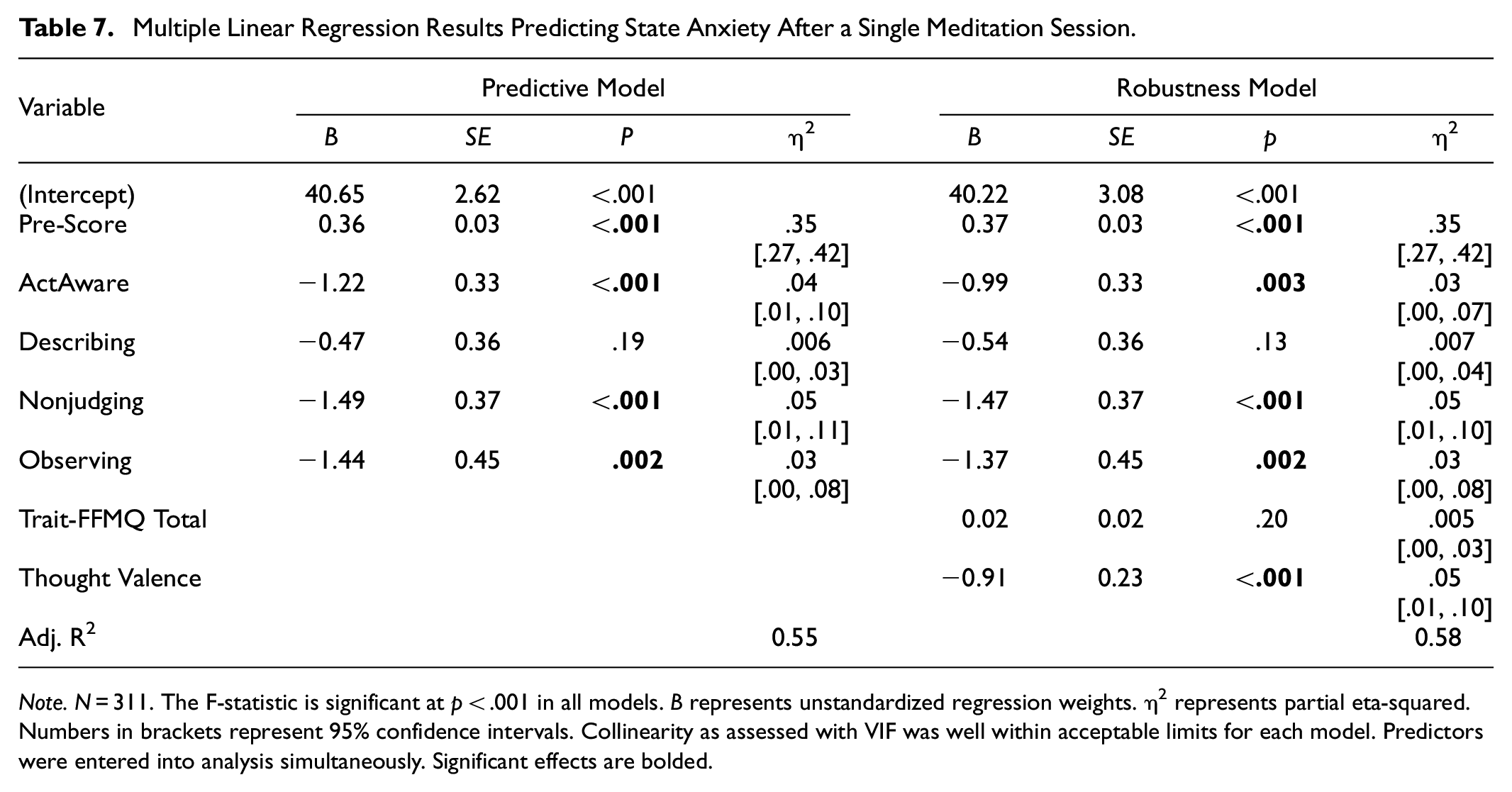

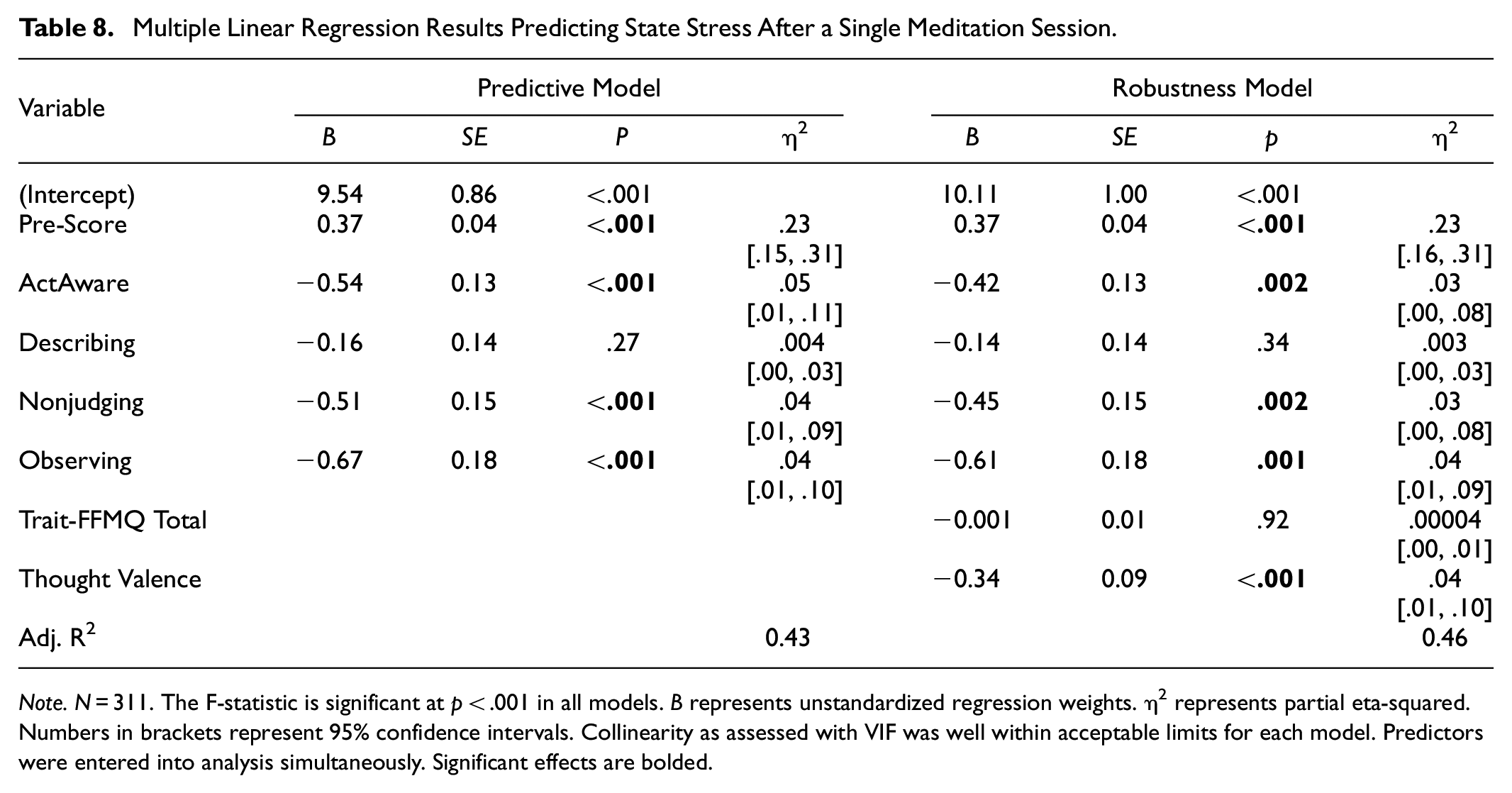

Predictive Validity

Here, we tested whether any (or all) of the facets of the state-4FMQ predicted state affect, in the form of changes in State Anxiety and State Stress. This was tested separately for each of the two state affect measures, since Cronbach’s alpha statistics suggested they ought not be combined into a single metric (see Results). In each multiple linear regression, the post-intervention state affect score was the dependent variable, the pre-intervention state affect score was a covariate, and the state-4FMQ facets were entered simultaneously as predictor variables. Because our state affect measures are “negative” states, we expected a negative relationship between the state-4FMQ and state affect. Note that, in addition to providing a test of predictive validity, these multiple linear regressions allowed us to ask an empirical question: which state mindfulness facet best predicts state affect (in particular, state stress and anxiety)? We addressed this question by comparing effect size confidence intervals between facets.
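The structure of this regression model can be illustrated with a small Python sketch. It is not the study's analysis code: the `ols` helper, the variable names, and the synthetic, noise-free data are all hypothetical, and the sketch returns only unstandardized coefficients (it does not compute the effect sizes or confidence intervals used to compare facets).

```python
import random


def ols(predictor_rows, outcome):
    """Ordinary least squares via the normal equations (X'X) b = X'y.

    predictor_rows: one list of predictor values per participant (no intercept).
    Returns [intercept, b1, b2, ...].
    """
    rows = [[1.0] + [float(v) for v in r] for r in predictor_rows]
    p = len(rows[0])
    # Augmented matrix [X'X | X'y].
    aug = [
        [sum(r[i] * r[j] for r in rows) for j in range(p)]
        + [sum(r[i] * y for r, y in zip(rows, outcome))]
        for i in range(p)
    ]
    # Gauss-Jordan elimination with partial pivoting.
    for col in range(p):
        pivot = max(range(col, p), key=lambda idx: abs(aug[idx][col]))
        if abs(aug[pivot][col]) < 1e-12:
            raise ValueError("predictors are collinear")
        aug[col], aug[pivot] = aug[pivot], aug[col]
        scale = aug[col][col]
        aug[col] = [v / scale for v in aug[col]]
        for other in range(p):
            if other != col:
                factor = aug[other][col]
                aug[other] = [a - factor * b for a, b in zip(aug[other], aug[col])]
    return [aug[i][p] for i in range(p)]


# Synthetic data: post-score depends on the pre-score, ActAware, and Nonjudging.
rng = random.Random(0)
names = ["intercept", "pre_affect", "observing", "describing", "actaware", "nonjudging"]
rows, post_affect = [], []
for _ in range(40):
    pre = rng.uniform(1, 5)
    facets = [rng.uniform(1, 5) for _ in range(4)]  # observing, describing, actaware, nonjudging
    rows.append([pre] + facets)
    post_affect.append(1.0 + 0.6 * pre - 0.4 * facets[2] - 0.3 * facets[3])

coefs = dict(zip(names, ols(rows, post_affect)))
for name, value in coefs.items():
    print(f"{name}: {value:.3f}")
```

Because the pre-intervention score is entered as a covariate, each facet coefficient describes the association with post-intervention affect at a fixed pre-intervention level, which is what lets the model speak to change. Negative facet coefficients correspond to the expected direction for "negative" affect outcomes.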

It is perhaps important to explain our decision to obtain both a pre- and post-intervention score for state affect (with the pre-score being used as a covariate in the regression model) yet only a post-intervention score for the state-4FMQ. First, we wanted to reduce the threat to internal validity that can occur when assessing the same items in close proximity (Campbell & Stanley, 1963) and felt this was particularly important for the state-4FMQ items, as they are the items being used to develop our new scale. Second, had we attempted to obtain pre-intervention scores for the state-4FMQ items, it would have been difficult to standardize the pre-intervention reference point (e.g., responding in reference to what activity the participant was doing just before the study began), which is critical since previous research has shown that the degree of mindfulness varies across different daily activities (e.g., Gross et al., 2024; Killingsworth & Gilbert, 2010; Raynes & Dobkins, 2025). This was not an issue for the dependent measure (i.e., the state affect scores), for which participants were instructed to report on how they were feeling “right now” (both pre- and post-intervention).

We hypothesized that ActAware and Nonjudging would most strongly predict state affect, followed by Describing, and that Observing would not be predictive. This prediction was guided by two recent meta-analyses reporting on correlational studies of the trait-FFMQ (Carpenter et al., 2019; Mattes, 2019). Of course, our results may be expected to differ from these reviews because we measure state, rather than trait, mindfulness, and because we use an experimental design to measure the benefit of a single meditation, rather than a correlational design to measure general associations between mindfulness and external referents. We also note that the current research differs from most extant experimental designs investigating the benefits of meditation, since most of these studies conduct interventions over the course of days, weeks, or even months. Studies exploring the short-term effects of a single meditation session are a sparser but growing area of research, which generally suggests that one session of meditation likely has measurable benefits, albeit with relatively small and transient effects (e.g., see reviews by Gill et al., 2020, p. 202; Schumer et al., 2018; Williams et al., 2023).

Robustness

Given that we found evidence for predictive validity (see Results), in the next step, we tested whether the strength of the relationship between the state-4FMQ and state affect remained robust when two other covariates were included in the model, namely the trait-FFMQ and Thought Valence. In particular, checking the strength of the relationship between the state-4FMQ and state affect in the presence of the trait-FFMQ is one important step in demonstrating the construct validity of the state-4FMQ. Before conducting this robustness analysis, we first tested whether either covariate (trait-FFMQ total score and Thought Valence) had the potential to account for some (or all) of the predictive validity results by looking at bivariate relationships between the state-4FMQ facet scores and the two covariates, and between the two covariates and state affect. We further tested whether a potential covariate interacted with the state-4FMQ facet scores in predicting state affect; if it did interact, that term was removed from the model.

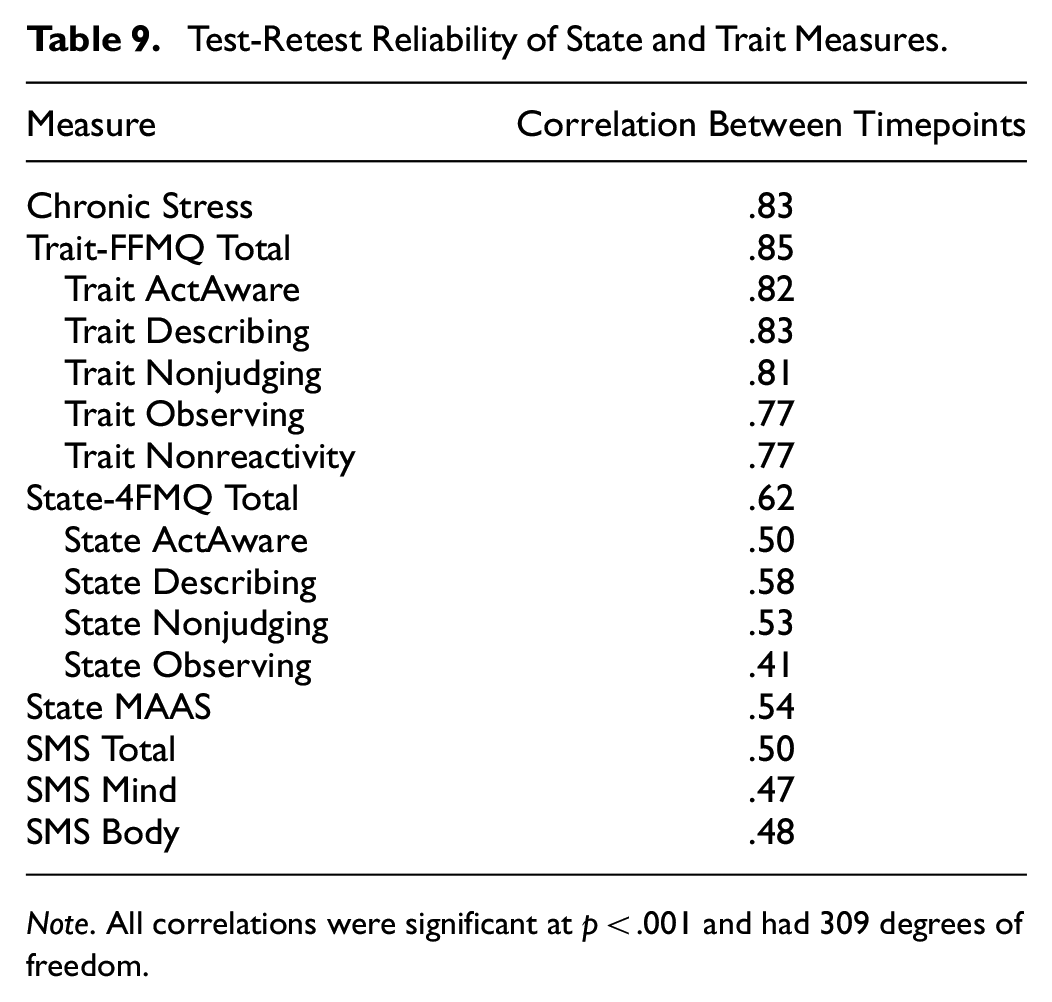

Construct Validity

Here, we asked if the state-4FMQ was behaving in a way that was consistent with its intended design of (a) being a “State” versus “Trait” measure, as well as (b) detecting “mindful” states. Evidence that the state-4FMQ was behaving in a state-like way was addressed in two ways. First, when testing for robustness of the state-4FMQ (above), we reasoned that if the relationship between the state-4FMQ and state affect remained strong while accounting for the effects of the trait-FFMQ, this would demonstrate that the new state-4FMQ is not masquerading as a trait measure. Second, data from the two time points at which the state-4FMQ was collected (i.e., after the Meditation and Control conditions, in parts 1 and 3, tested 1 week apart) allowed us to assess its test-retest reliability. If the state-4FMQ is truly a state measure, then its test-retest reliability should be substantially lower than that of the trait-FFMQ (the latter obtained from the two time points at which the trait-FFMQ was collected, i.e., parts 2 and 4, tested 1 week apart). To be comprehensive in these analyses, we conducted bivariate correlations of the scores for the following measures (which were each taken at two time points, 1 week apart): two trait measures (PSS, trait-FFMQ), and three state mindfulness measures (state-4FMQ, SMS, and state-MAAS). Note that we calculated bivariate Pearson correlations, rather than repeated measures correlations, since the one-week gap between paired administrations was long enough to meet the assumption of independence of observations.
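The test-retest logic can be illustrated with a short Python sketch of the Pearson correlation between two administrations of a measure. The function name `pearson_r` and all scores below are hypothetical and chosen only to show the expected contrast (a high correlation for a trait-like measure, a lower one for a state-like measure); they are not data from the study.

```python
from math import sqrt


def pearson_r(x, y):
    """Pearson correlation between paired scores x and y."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two equally long lists with at least two scores")
    mean_x = sum(x) / len(x)
    mean_y = sum(y) / len(y)
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy / sqrt(sxx * syy)


# Hypothetical scores for five participants at two sessions, 1 week apart.
time1 = [3.0, 4.0, 2.0, 5.0, 1.0]
trait_time2 = [3.2, 4.1, 2.3, 4.8, 1.4]   # trait-like: rank order largely preserved
state_time2 = [3.0, 2.0, 4.0, 4.0, 2.0]   # state-like: rank order shifts across sessions

r_trait = pearson_r(time1, trait_time2)
r_state = pearson_r(time1, state_time2)
print(f"trait-like test-retest r = {r_trait:.2f}")
print(f"state-like test-retest r = {r_state:.2f}")
```

Under the reasoning above, a state measure would be expected to resemble the second pattern, with a test-retest correlation substantially lower than that of a trait measure.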