Abstract

The Learning, Executive, and Attention Functioning (LEAF) scale is a resource-friendly means of assessing executive functions (EFs) and related constructs (e.g., academic abilities) in children and adolescents that has been adapted for use with adults. However, no study in any population has investigated the factor structure of all LEAF EF items to determine whether items factor in a manner consistent with the originally proposed scale structure. Therefore, we examined LEAF scale responses of 546 young adults (mean age = 20.05 years, SD = 2.17). Upon removing academic items following a preliminary factor analysis, we performed principal axis factoring on the remaining 39 EF items. The final model accounted for 61.75% of the total variance in LEAF EF items and suggested that these items assess six moderately correlated EF constructs in young adults. We constructed six updated subscales to help researchers measure these EFs in young adults using the LEAF scale, each of which uniquely and differentially predicted measures of self-reported impulsivity, academic difficulties, and learning-related disorder history. Overall, the LEAF promises to be an accessible means of assessing a range of EF constructs in young adults, particularly when updated subscale structures based on factor analysis are used.


Executive functions (EFs) are neuropsychological processes commonly associated with the frontal lobes and implicated in the supervision and coordination of sensory processing, cognition, and behavior to facilitate goal attainment (Diamond, 2013; Miller & Cohen, 2001). Proposed EFs range from the ability to focus selectively and prevent interference in the presence of distractors (i.e., attention control; Engle & Kane, 2004) to the ability to monitor, update, and manipulate goal-relevant information in one’s mind (i.e., working memory; Baddeley, 1992; Miyake et al., 2000). Although the precise nature and number of EFs are debated (Karr et al., 2018), they are believed to display both unity (i.e., EFs positively and moderately-to-strongly covary; r ≈ .40–.80) and diversity (i.e., modeling multiple EF constructs improves model fit compared with modeling just one; Friedman & Miyake, 2017; Miyake et al., 2000).

Working in tandem, EFs are believed to underlie many real-world skills (e.g., emotional regulation; Gyurak et al., 2012), life outcomes (e.g., career and academic success; Barkley & Fischer, 2011; Best et al., 2011), and psychological constructs (e.g., impulsivity, the inability to inhibit behavioral impulses or thoughts, often resulting in actions that are unduly risky, maladaptive, or inappropriate to the situation; Dalley & Robbins, 2017; Enticott et al., 2006). They are also often impaired in individuals who display learning-related difficulties (e.g., those diagnosed with attention-deficit/hyperactivity disorder [ADHD], autism spectrum disorder [ASD], and/or Tourette syndrome; Alderson et al., 2013; Demetriou et al., 2017; Eddy et al., 2009; Semrud-Clikeman et al., 2013; Willcutt et al., 2005). Given the close relations between EFs and psychiatric, psychological, and academic outcomes, the development and validation of measures to study individual differences in EFs is crucial.

In adults and children, EFs are often assessed by performance on cognitive tasks, with many distinct behavioral measures claiming to assess EFs (Diamond, 2013). Friedman and Miyake (2017) suggested that what is shared among these task-based EF measures is the need to maintain and manage goals (e.g., translate an arbitrary stimulus into a cue that is maintained in mind throughout task performance), and then use those goals to bias ongoing processing (e.g., use the presence of that cue to override a habitual or default behavior in favor of a goal-relevant one). Much work has been done to try to isolate the ontology of EF within the context of cognitive task-derived measures (see Karr et al., 2018, for a review), focusing predominantly on the domain-general constructs of inhibition, working memory monitoring and updating, and shifting as described by Miyake et al. (2000). Although behavioral measures of EFs display good within-subject variability, which positions them as powerful tools in experimental psychology, their utility as measures of individual differences in EFs has been challenged in recent years, primarily based on poor test–retest reliability and predictive validity (Eisenberg et al., 2019; Enkavi et al., 2019).

Parent- and teacher-report measures, such as the Barkley Deficits in Executive Functioning Scale–Children and Adolescents (BDEFS-CA; Barkley, 2011a) and the Behavior Rating Inventory of Executive Function (BRIEF; Gioia et al., 2000), are also often used to assess EFs in children and adolescents. Both questionnaires have self-report adult versions that display good psychometric properties and predictive validity (BDEFS and BRIEF-A, respectively; Barkley, 2011b; Roth et al., 2005). Unlike EF tasks, self-report measures of EFs and related constructs (e.g., self-regulation) consistently display good between-subject variability (Eisenberg et al., 2019; Enkavi et al., 2019) and moderate within-subject correlations (Duckworth & Kern, 2011), supporting their utility as measures of individual differences in EFs.

Although questionnaires are a well-established means of measuring individual differences in EFs, they can be expensive to administer, particularly for research studies that require large samples. As EF questionnaires predict variance in important real-world and clinical outcomes, the development and validation of resource-friendly EF questionnaires for adults would greatly benefit future research aimed at measuring individual differences in EFs. Furthermore, many EF questionnaires (e.g., BDEFS, BRIEF-A) aim to assess EFs in daily life broadly (e.g., some items refer to missing appointments, driving habits, or chores and other activities of daily living). Item wordings that relate to daily life may be a strength of questionnaire measures of EFs when compared to task-derived EF variables, as EF tasks are typically administered within a controlled laboratory environment while researchers explicitly tell participants the correct way to respond to tasks. However, broader item wordings may not be ideal if researchers are interested in measuring self-reported EF abilities specifically within contexts like learning or during the performance of novel or complicated tasks, when cognitive demands are higher than in everyday life and individuals may more intentionally recruit EF processes to help support their ability to achieve goals.

The Learning, Executive, and Attention Functioning (LEAF) scale (Castellanos et al., 2018; Kronenberger et al., 2014) is a recent, freely available parent- and teacher-report questionnaire originally designed to measure EF constructs (Cognitive-EF content area), non-EF learning-related cognitive processes (Cognitive-Learning content area), and academic abilities (Academic content area) in children and adolescents. LEAF developers purposefully framed the wordings for Cognitive items around the use of cognitive processes specifically within the context of learning and/or when cognitive demands are high. For example, many items incorporate words and phrases related to “learning,” “when you are supposed to concentrate,” “while completing work,” “while following assignments/instructions,” and/or when dealing with “information,” “[learning-related] material,” or “facts.” Item wordings in the LEAF imply that the individual has a goal they are trying to achieve, suggesting that the measured construct is relevant within the context of goal attainment. Although such wordings are also used for some items in other EF questionnaires (e.g., BRIEF-A, BDEFS), the LEAF's focus on such wordings, and its omission of wordings about daily life more broadly, might be particularly useful for researchers who wish to measure EF abilities specifically under more cognitively demanding contexts (e.g., learning), when individuals may be more prone to purposefully recruiting EFs.

The LEAF aims to assess difficulties associated with six putative EF constructs (Cognitive-EF content area): Attention (i.e., ability to sustain focus and ignore distractors), Processing Speed (i.e., ability to quickly complete tasks that require concentration), Visual-Spatial Organization (i.e., ability to organize and manipulate one’s environment), Sustained Sequential Processing (i.e., ability to plan and complete multistep tasks in their appropriate order), Working Memory (i.e., ability to maintain goal-relevant information in one’s mind in the presence of additional processing demands), and Novel Problem Solving (i.e., ability and willingness to process unfamiliar information).

In addition to these six EF-related subscales, two subscales aim to assess difficulties with non-EF cognitive abilities (Cognitive-Learning content area): Comprehension and Conceptual Learning (i.e., ability to track and understand information) and Factual Memory (i.e., ability to maintain and memorize information). As EFs are known to predict academic achievement (Best et al., 2011), and as LEAF developers were particularly interested in assessing EFs and related cognitive processes within the context of learning, the LEAF also has an Academic content area comprising three subscales that aim to assess self-reported mathematical (Mathematical Skills subscale), reading (Basic Reading Skills subscale), and writing (Written Expression Skills subscale) difficulties. In children and adolescents, all 11 LEAF subscales displayed good internal consistency and unidimensionality while correlating with established EF questionnaires (e.g., the BRIEF; Gioia et al., 2000), task-derived EF variables, and tests of academic achievement, supporting the LEAF’s convergent and predictive validity in this population (Castellanos et al., 2018).

Given its strong psychometric properties, as well as previous evidence supporting the predictive utility of self-report EF measures in adults, the LEAF shows promise as a resource-friendly means of measuring individual differences in EFs in adults and has been used accordingly. For example, Marschark and colleagues (2015) used LEAF Cognitive-EF and Cognitive-Learning subscale scores to compare self-reported EF abilities between deaf adults and hearing controls. In contrast, Crandall and colleagues (2019) opted to average all LEAF Cognitive-EF subscale scores to create a general, composite EF measure that was predicted by demographic (e.g., having lived in a single-parent home) and psychological (e.g., levels of perceived stress) factors in adults. Haehnel et al. (2022), in turn, found that a composite EF variable (calculated from the LEAF’s reverse-scored Attention, Sustained Sequential Processing, and Novel Problem Solving subscale items) positively predicted the degree to which an individual perceived a sense of family health (e.g., the degree to which an individual reported feeling happy with their family) in a large sample of adults in the United States. Finally, Hanson et al. (2024) found that a latent variable extracted from the (reverse-scored) Attention, Working Memory, and Novel Problem Solving LEAF subscales negatively predicted symptoms of depression and anxiety in a sample of university students.

Despite the LEAF’s potential and previous use as a self-report EF measure in adults and a parent-report EF measure in children, no study in any population has investigated the factor structure of all LEAF EF items to determine whether items factor in a manner that is consistent with the scale structure originally proposed by Castellanos et al. (2018). Instead, to assess the unidimensionality of LEAF subscales, Castellanos and colleagues (2018) performed 11 separate exploratory factor analyses (EFAs) on parent-reported LEAF scores for children and adolescents clinically referred for psychological testing (e.g., for displaying attention/concentration problems). Each EFA was conducted on five items that the researchers believed assessed a particular EF construct. However, EFs are closely related cognitive processes that frequently work in tandem (Diamond, 2013; Miyake et al., 2000). As a result, the specific nature of the construct that is assessed by any single EF-dependent variable is rarely clear-cut, making the operationalization of putative EF measures difficult (Karr et al., 2018). Even if the wording of a given EF item reasonably seems to measure a specific EF, it is possible that the item better measures a separate but related construct. Such a finding can only be observed if a single factor analysis is performed simultaneously on items from multiple proposed subscales. In other words, even if parent-report LEAF subscales display unidimensionality in children and adolescents when examined separately, it is not guaranteed that items will factor in the manner proposed by the developers (Castellanos et al., 2018) when factor analysis is performed on all LEAF EF items.

Similarly, although Cognitive-Learning items were conceptualized as measuring non-EF cognitive processes, they still correlated moderately (r ≈ .50) with many BRIEF subscales in analyses by Castellanos et al. (2018). These moderate correlations may have emerged because Cognitive-Learning items assess constructs that require EFs but do not constitute EFs themselves (as Castellanos et al., 2018, suggested). However, they could also have occurred because these Cognitive-Learning items assess EFs. If the former is the case, then Cognitive-Learning items should be empirically separable from Cognitive-EF items, as EF measures should share something in common with one another beyond what they share with non-EF cognitive measures. If the latter is the case, then Cognitive-Learning and Cognitive-EF items would not be separable, which we would interpret to suggest that Cognitive-Learning items assess constructs that cannot be empirically distinguished from EFs. This can only be ascertained by performing a single factor analysis on both sets of items together.

Finally, as the factor structure of behavioral EF measures is believed to change throughout the course of development (Karr et al., 2018), it is not guaranteed that the factor structure of LEAF items in adults will directly parallel that of children and adolescents. Thus, the primary objective of the current study was to investigate the factor structure of LEAF EF items in a young-adult, undergraduate sample using EFA. Using the results of this EFA, we then aimed to update the LEAF EF subscale structures to allow future researchers to better utilize the LEAF to isolate and measure EFs of interest in young adults.

A secondary objective of the current study was to investigate the predictive validity of updated LEAF EF subscale scores. As mentioned, impulsivity frequently co-occurs with, and likely implicates, EF impairments (Enticott et al., 2006). EFs also strongly predict academic abilities and achievement in both developmental and young adult populations (e.g., mathematical abilities, reading abilities, writing abilities; Costa et al., 2018; Raghubar et al., 2010; Ruffini et al., 2024). Finally, EF impairments are common among individuals who have experienced learning-related disorders (LRDs; e.g., ADHD, ASD, Tourette Syndrome; Alderson et al., 2013; Demetriou et al., 2017; Eddy et al., 2009; Semrud-Clikeman et al., 2013) when compared to healthy controls. Therefore, if LEAF EF subscales are valid measures of EF difficulties, LEAF EF subscale scores should significantly, positively predict self-reported impulsivity (Barratt Impulsiveness Scale [BIS-11]; Patton et al., 1995), mathematical difficulties (LEAF Mathematical Skills subscale), and reading and writing difficulties (LEAF Basic Reading Skills and Written Expression Skills subscales). Furthermore, LEAF EF subscale scores should be higher in individuals who report experiencing an LRD. Importantly, however, we also hypothesized that select LEAF EF subscales would emerge as significant, unique predictors even after controlling for other LEAF EF subscales. Such a pattern of results would suggest that LEAF EF subscales display unity (i.e., that LEAF EF subscales significantly predict outcomes of interest in part due to what is shared among all subscales) and diversity (i.e., using multiple LEAF EF subscales is preferred to using a single LEAF EF measure as certain subscales uniquely predict different outcomes).

Method

Participants

Between January 1, 2021, and August 31, 2023, 574 Canadian undergraduate students were recruited through an online university student recruitment pool. Participants were required to be between the ages of 18 and 30 years, attending university at the time of testing, and capable of understanding, reading, and speaking English fluently. Access to a computer that connected to the internet, as well as to a working microphone and webcam, was also required. All participants received bonus points to be used toward their undergraduate course and were entered to win one of ten $25.00 Amazon e-gift cards.

Of the 574 undergraduate students recruited to participate in this study, 14 participants did not complete either the LEAF or BIS-11 portions of the online questionnaire and were therefore removed from all analyses. Five participants who reported being above the age of 30 years and nine participants who displayed poor data quality (see the Data Analysis section) were also removed from all analyses, resulting in a final sample size of 546.

The 546 participants included in analyses had a mean age of 20.05 years (SD = 2.17), with most (94.0%) being between 18 and 23 years of age. The sample was predominantly female (80.4%; male = 17.2%, other = 2.2%, prefer not to answer = 0.2%), and most participants reported speaking English as their first language (86.2%; French = 2.9%, Other = 10.9%). Participants were spread across years of undergraduate schooling (first year = 35.5%, second year = 25.1%, third year = 18.9%, fourth year = 17.2%, more than four years = 2.8%, undisclosed = 0.6%). Furthermore, 16.8% of participants reported having experienced an LRD over the course of their lives (i.e., answered “Yes” to, “Have you experienced any of the following: Learning Disability, ADHD, Autism Spectrum Disorder, Tourette’s Syndrome?”; “No” = 80.4%, “I prefer not to answer” = 2.7%).

Study Design

This cross-sectional study represents a portion of a larger study that investigated relations between self-reported physical activity and EFs in young adults. Undergraduate students completed an online questionnaire that included measures to assess their self-reported EFs (i.e., LEAF scale), impulsivity levels (i.e., BIS-11), physical activity, and other variables known to covary with EFs (i.e., demographic information, health history, bilingualism, perceived sleep quality, perceived stress, musical training, positive and negative affect, and connectedness to nature). The median questionnaire completion time was 26.00 minutes (M = 117.79 minutes, SD = 803.24; 94.1% of participants completed the questionnaire in 90 minutes or less). The large variation in completion time arose because participants could save the questionnaire and return to it later, and the system did not subtract periods of inactivity. Within 1 week of the questionnaire, some participants (n = 212) also attended an online testing session over Zoom to complete additional EF measures. Online testing sessions only occurred during the first year of data collection, between January and December 2021. As the purpose of the current study was to investigate the factor structure and psychometric properties of the LEAF scale, only data from the LEAF scale, BIS-11, and demographic items are presented here.

This study was conducted following the Declaration of Helsinki and in accordance with the Canadian Tri-Council Policy Statement 2 (TCPS2), with the study procedures approved by the Local University Research Ethics Board (File # 2020-5291). All participants provided electronic informed consent immediately prior to completing the online questionnaire. To help ensure that the confidentiality of participant responses was maintained, all analyses were conducted on de-identified data sets. Processed data are available on request from the last author subject to institutional, federal, and provincial privacy regulations. Data analysis codes are also available on request from the last author. Materials used (i.e., the LEAF and the BIS-11) should be sought from their developers.

Measures

The Learning, Executive, and Attention Functioning Scale

The parent-report version of the LEAF scale (Castellanos et al., 2018; Kronenberger et al., 2014) was originally developed to assess learning-, EF-, and academic-related difficulties in children and adolescents between the ages of 6 and 17 years. The self-report adult version was developed and first used by Marschark et al. (2015). Both versions comprise 11, five-item subscales that fall into one of three content areas:

The Cognitive-Learning content area includes the Comprehension and Conceptual Learning subscale and the Factual Memory subscale, which aim to assess perceived difficulties with tracking and understanding information, and maintaining and memorizing information, respectively.

The Cognitive-EF content area comprises six subscales that aim to assess perceived difficulties associated with Attention (i.e., ability to sustain focus and ignore distractors), Processing Speed (i.e., ability to quickly complete tasks that require concentration), Visual-Spatial Organization (i.e., ability to organize and manipulate one’s environment), Sustained Sequential Processing (i.e., ability to plan and complete multistep tasks in their appropriate order), Working Memory (i.e., ability to maintain goal-relevant information in one’s mind in the presence of additional processing demands), and Novel Problem Solving (i.e., ability and willingness to process unfamiliar information).

The Academic content area includes the Mathematical Skills subscale, the Basic Reading Skills subscale, and the Written Expression Skills subscale, which aim to assess perceived difficulties associated with mathematics, reading, and writing, respectively.

All LEAF items are worded to emphasize difficulties with learning-related contexts. Items are scored using a Likert-type scale that ranges from 0 (“Never: Not a problem; Average for age”) to 3 (“Very Often: Major daily problem”), with higher scores representative of lower perceived cognitive functioning or academic ability. Ratings of 2 (“Often: Causes problems; Happens almost every day”) are worded to prompt responses when behaviors cause frequent problems, whereas ratings of 1 (“Sometimes: A little more than average; Not a big problem”) are worded to prompt responses when behaviors occur more often than average but do not cause major problems.

Barratt Impulsiveness Scale

The BIS-11 is a 30-item, self-report measure of trait impulsivity (Patton et al., 1995) well validated in young adults (Stanford et al., 2009). Items aim to assess an individual’s tendency to fail to inhibit maladaptive behaviors and/or thoughts in everyday life and are scored using a Likert-type scale that ranges from 1 (“Rarely/Never”) to 4 (“Almost Always/Always”). After reverse-coding all positively worded items, the 30 items can be summed and averaged to calculate an overall measure of impulsivity, with a higher score representative of greater impulsive tendencies. In the current sample, BIS-11 items displayed strong internal reliability (α = .85), consistent with other adult samples (Stanford et al., 2009).
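The scoring rule described above can be sketched in Python as follows. This is a minimal illustration rather than the scoring code used in the study: the function name `score_bis11`, the four-item toy response set, and the choice of which items to reverse are all hypothetical, and the actual reverse-keyed BIS-11 items should be taken from the scale documentation (Patton et al., 1995).

```python
def score_bis11(responses, reverse_items, low=1, high=4):
    """Average item ratings after reverse-coding the listed items.

    responses: dict mapping item number -> rating (None if missing)
    reverse_items: set of item numbers to reverse-code (hypothetical here)
    """
    scored = []
    for item, rating in responses.items():
        if rating is None:
            continue  # skip missing responses
        if item in reverse_items:
            rating = low + high - rating  # maps 1<->4 and 2<->3
        scored.append(rating)
    return sum(scored) / len(scored)


# Toy example with four items; this is NOT the real BIS-11 keying.
example = {1: 4, 2: 1, 3: 2, 4: 3}
mean_score = score_bis11(example, reverse_items={2, 4})
```

In this toy example, the two reversed ratings (1 and 3) become 4 and 2, so the score is the mean of 4, 4, 2, and 2.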

Data Analysis

We cleaned raw data by considering the time that it took participants to complete the online questionnaire as well as by using reverse-scored BIS-11 items. Three participants completed the online questionnaire in <10 minutes, while another participant was missing questionnaire-completion time data. These four participants were removed from all analyses. In addition, for each participant, we took the absolute difference between the average value of reverse-scored BIS-11 items and the average value of non–reverse-scored BIS-11 items; any participant whose difference score was more than 3.0 standard deviations from the sample mean was removed from all analyses (n = 5). This resulted in nine participants (1.6% of the sample) being removed and left a total of 546 participants in the analyses. Note that two participants did not complete the BIS-11 portion of the questionnaire and were therefore excluded from analyses involving BIS-11 items. We opted to retain these participants in our LEAF scale factor analysis because their questionnaire-completion times were consistent with the rest of the sample, and visual inspection of their LEAF data suggested that there was no reason to assume that their responses to these questions were invalid. After removing the nine participants, only 0.31% (143/46,267) of all LEAF and BIS-11 item values were missing. Therefore, we opted to use pairwise deletion for all analyses excluding reliability coefficients, which were calculated using listwise deletion.
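The response-consistency screen described above might look like the following Python sketch. It is an interpretation under stated assumptions, not the authors' code: the function names and toy values are hypothetical, the two item-type averages are assumed to be computed after reverse-coding, and the cutoff is read as more than 3.0 sample standard deviations from the sample mean in either direction.

```python
from statistics import mean, stdev


def inconsistency_index(reverse_item_mean, regular_item_mean):
    """Absolute gap between a participant's two BIS-11 item-type averages."""
    return abs(reverse_item_mean - regular_item_mean)


def flag_extreme(values, cutoff=3.0):
    """Flag values lying more than `cutoff` sample SDs from the sample mean."""
    m, s = mean(values), stdev(values)
    return [abs(v - m) / s > cutoff for v in values]


# Toy data (not study data): 30 typical participants and one extreme one.
indices = [0.4, 0.5, 0.6] * 10 + [3.0]
flags = flag_extreme(indices)
n_flagged = sum(flags)
```

Only the final toy participant exceeds the cutoff here. In a real data set the index would be computed per participant with `inconsistency_index` before screening.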

We were specifically interested in the LEAF’s utility as a measure of EFs. Thus, we first sought to ensure that Academic, Cognitive-Learning, and Cognitive-EF subscale items factored separately. We were particularly interested in ensuring that Cognitive-Learning items separated from Cognitive-EF items, which would help support the hypothesis that Cognitive-Learning items are not measures of EFs (which we expected to share more in common with each other than with measures of other cognitive processes, even if those other cognitive processes may implicate EFs). To do this, we performed a preliminary EFA on all 55 LEAF scale items using principal axis factoring (PAF) with an oblique rotation method (Promax rotation). In a review of 304 EFAs published between 2007 and 2017 in selected psychological research journals, Goretzko et al. (2021) found that PAF was the most frequently used extraction method (i.e., >50% of reviewed EFAs used this method), likely because Likert-type items frequently display skewness and PAF is robust to multivariate non-normality. Castellanos et al. (2018) also used PAF when investigating the unidimensionality of LEAF scale items. As individual LEAF scale items in our sample were positively skewed, and a significant Mardia’s test of multivariate normality suggested that the data were not multivariate normally distributed (Mardia’s skewness statistic = 53,168.17, p < .001; Mardia, 1970), we felt justified in also using PAF as an extraction method. We used an oblique rotation method as we expected factors to correlate (Fabrigar et al., 1999).

For the preliminary EFA, we initially forced a three-factor solution based on the hypothesis that each extracted factor would correspond to one of the LEAF’s three content areas: Cognitive-EF, Cognitive-Learning, and Academic. Although this approach seemed to separate Academic items from Cognitive-EF and Cognitive-Learning items, it did not clearly separate the two Cognitive content areas. To further confirm that the two Cognitive content areas were not clearly separable, we ran an additional model while forcing a four-factor solution. All items that did not load with other EF items were removed from subsequent analyses. We defined an item as “non-EF” if it did not load at a magnitude of at least .30 on an EF factor (i.e., a factor in which the highest loading items were from the Cognitive-EF content area) in either the three- or the four-factor solution. Our rationale for defining an item as non-EF was based on the work of Costello and Osborne (2005), who suggested that items should load onto a given factor at a magnitude of at least .30, indicating that the factor predicts approximately 10% of that item’s variance. Thus, when we define a LEAF item as “non-EF,” we specifically mean that less than 10% of its variance was predicted by a factor that most strongly predicted Cognitive-EF items in both the three- and four-factor models.
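The “non-EF” decision rule can be illustrated with a short Python sketch. The function names and loadings below are hypothetical and are not values from the study. The squared-loading calculation is only an approximation of variance explained, particularly under an oblique rotation, and shows where the “approximately 10%” figure comes from (.30 squared is .09).

```python
def variance_explained(loading):
    """Approximate share of item variance tied to a factor (squared loading)."""
    return loading ** 2


def is_non_ef(ef_loadings_by_solution, threshold=0.30):
    """True if the item misses the threshold on every EF factor in every solution.

    ef_loadings_by_solution: one list of EF-factor loadings per forced
    solution, e.g. [[three-factor loadings], [four-factor loadings]].
    """
    return not any(
        abs(loading) >= threshold
        for solution in ef_loadings_by_solution
        for loading in solution
    )


# Hypothetical loadings for two made-up items (not values from the study).
item_a = [[0.12], [0.21, 0.08]]  # below .30 everywhere, so treated as non-EF
item_b = [[0.18], [0.05, 0.44]]  # reaches .30 in the four-factor solution
```

Under this reading, an item is dropped only if it fails the threshold in both forced solutions; an item that reaches .30 on an EF factor in either solution is retained.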

To determine the factor structure of the LEAF EF items, we then performed PAF with Promax rotation on all EF items as determined by our preliminary EFA. As with the preliminary model, the Kaiser criterion of Eigenvalues > 1.0 and theoretical considerations were used to determine the number of factors to retain in the model. The model was also assessed against the following criteria: (a) a Kaiser-Meyer-Olkin measure of sampling adequacy > 0.5 (Kaiser, 1974) and (b) a significant result on Bartlett’s test of sphericity (p < .05), which together indicate sufficient shared variance between the variables to justify the use of factor analysis; (c) minimal-to-no cross-loadings (i.e., few items loading >.30 on two or more rotated factors); (d) a minimum of three items loading on each factor; and (e) the model accounting for ≥50% of the total variance in LEAF EF items (Costello & Osborne, 2005). We then used the results of our main EFA to form updated LEAF EF subscales. Updated subscales were formed based on each extracted factor and included all items that loaded on the corresponding factor >.30. To maximize consistency with previous work, items that cross-loaded on two factors (6 of 39) were placed in the subscale that contained the most items from that item’s original subscale. For all but one of the six items that cross-loaded, the loading on the factor corresponding to the original subscale also happened to be the largest. A score for each updated subscale was then calculated and reported by averaging the scores of all updated subscale items. Consistent with past work (e.g., Crandall et al., 2019), a general EF score was also calculated by averaging all LEAF EF item scores.
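The rule for placing cross-loading items can be sketched in Python as below. This is one possible reading of the procedure, not the authors' implementation: the function name, item labels, factor labels, and loadings are all hypothetical, and the sketch assumes that "the most items from that item’s original subscale" is counted over items that load on a single factor only.

```python
from collections import Counter


def assign_items(loadings, original_subscale, threshold=0.30):
    """Assign each item to a factor-based subscale.

    loadings: {item: {factor: rotated loading}}
    original_subscale: {item: name of the item's original subscale}
    """
    assignment, cross_loading = {}, {}
    for item, by_factor in loadings.items():
        hits = [f for f, value in by_factor.items() if abs(value) > threshold]
        if len(hits) == 1:
            assignment[item] = hits[0]
        elif len(hits) > 1:
            cross_loading[item] = hits

    # Count only uniquely loading items so placement order does not matter.
    unique = dict(assignment)
    for item, hits in cross_loading.items():
        siblings = Counter(
            factor
            for other, factor in unique.items()
            if original_subscale[other] == original_subscale[item]
        )
        assignment[item] = max(hits, key=lambda f: siblings[f])
    return assignment


# Toy data: item "a3" cross-loads and loads more strongly on F2.
toy_loadings = {
    "a1": {"F1": 0.80, "F2": 0.10},
    "a2": {"F1": 0.70, "F2": 0.00},
    "a3": {"F1": 0.35, "F2": 0.45},
    "w1": {"F1": 0.10, "F2": 0.70},
    "w2": {"F1": 0.05, "F2": 0.60},
}
toy_origin = {"a1": "Attention", "a2": "Attention", "a3": "Attention",
              "w1": "Working Memory", "w2": "Working Memory"}
placement = assign_items(toy_loadings, toy_origin)
```

Here "a3" is placed with the other Attention items on F1 even though its F2 loading is larger, which mirrors the single exception noted in the text. Ties between candidate factors fall to whichever is listed first, a choice the text does not specify.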

The predictive validity of LEAF EF items was first assessed by examining Pearson correlations between the updated LEAF EF subscales and BIS-11 scores, LEAF Mathematical Skills subscale scores, and a combined LEAF Reading/Writing score calculated by averaging all LEAF Basic Reading Skills and Written Expression Skills subscale items (α = .90). Independent samples t-tests were also run to determine whether individuals who reported having experienced an LRD displayed significantly higher (i.e., poorer) LEAF EF subscale scores than those who did not. Next, to investigate whether/which updated LEAF EF subscales uniquely predicted outcomes of interest, multiple linear regressions were run with BIS-11, LEAF Mathematical Skills, and LEAF Reading/Writing Skills as outcome variables and all updated LEAF EF subscales entered as predictors. Multiple logistic regression was performed to evaluate whether/which of the updated LEAF EF subscale scores uniquely predicted the likelihood that an individual reported having experienced LRDs over the course of their life, with self-reported LRDs coded as 0 (“No”) and 1 (“Yes”), removing participants who answered, “I prefer not to answer” (n = 15).

For all inferential statistics, α was set at .05. All analyses were conducted using SPSS Statistics, Version 27.0 (IBM, Armonk, NY, USA) or the MVN package (Korkmaz et al., 2014) in R (v4.3.1). Note that all LEAF item wordings presented are abbreviations of actual item wordings. For access to full LEAF item wordings, contact the scale developer.

Results

Preliminary EFA

Forcing a three-factor model on theoretical grounds accounted for 48.87% of the total variance in all 55 LEAF scale items. The Kaiser-Meyer-Olkin measure of sampling adequacy was deemed “marvelous” (KMO = .96; Kaiser, 1974), and Bartlett’s test of sphericity was significant (χ²(1485) = 20,402.17, p < .001), indicating that the data were suitable for factor analysis. Contrary to our expectations, most Cognitive-Learning and Cognitive-EF items loaded on the first factor (Eigenvalue = 20.35; % of total variance explained = 37.00%), which we interpreted to broadly represent “Executive Functioning” based on the three strongest loading items (Item 13: not stay focused, Item 12: mind drift, Item 14: easily distracted; factor loadings = .88–.94). Notably, 9 of the 10 items from the proposed Cognitive-Learning subscales loaded moderately to strongly (i.e., >.40; range = .48–.80) on this factor, often loading more strongly than Cognitive-EF items. All Basic Reading Skills (Items 46–50) and Written Expression Skills (Items 51–55) items loaded on the second factor (Eigenvalue = 3.67; % of total variance explained = 6.67%) along with three Cognitive-EF items (Item 18: write/read slow, Item 23: puzzles difficult, Item 24: drawing difficult; range = .25–.41) and one Cognitive-Learning item (Item 3: poor comprehension reading; factor loading = .46). In addition to loading on the first factor, Item 25 (poor attention visual) also cross-loaded on this second factor at .31. All Mathematical Skills items (Items 41–45) loaded on the third factor (Eigenvalue = 2.86; % of total variance explained = 5.19%). For full preliminary three-factor model results, see Supplementary Table 1.
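As a quick arithmetic check on the figures above, the following Python sketch recomputes the percentage of variance per factor and the degrees of freedom for Bartlett’s test. It assumes that the reported Eigenvalues are initial eigenvalues of the 55-item correlation matrix, so that each factor’s share of total variance is its eigenvalue divided by the number of items; the variable names are illustrative only.

```python
n_items = 55
eigenvalues = [20.35, 3.67, 2.86]  # as reported for the three forced factors

# Share of total variance per factor: eigenvalue / number of items.
pct_variance = [round(100 * ev / n_items, 2) for ev in eigenvalues]

# Bartlett's test of sphericity has p(p - 1) / 2 degrees of freedom.
bartlett_df = n_items * (n_items - 1) // 2
```

The recomputed percentages agree with the reported values to within rounding of the two-decimal Eigenvalues (the third factor comes out near 5.20% rather than 5.19%), and the degrees of freedom equal the reported 1485.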

As Cognitive-EF and Cognitive-Learning items did not factor separately in our three-factor model, we ran an additional model forcing a four-factor solution. However, even with an additional factor forced, Cognitive-EF and Cognitive-Learning items still did not factor separately. Instead, the original “Executive Functioning” factor split into two EF factors, the first of which (Factor 1) represented Attention and Memory (i.e., the 10 strongest loading items were from the Attention and Factual Memory subscales; range = .63–.97), while the second (Factor 3) represented Planning, Organization, and Problem Solving (i.e., this factor predominantly loaded items from the original Visual-Spatial Organization, Sustained Sequential Processing, and Novel Problem Solving subscales). Basic Reading Skills and Written Expression Skills items still factored together (Factor 2) in this four-factor model, while Mathematic Skills factored separately (Factor 4). Excluding Item 18 (write/read slowly), the Cognitive-EF and Cognitive-Learning items that did not load on the original “Executive Functioning” factor when three factors were forced did load onto one of the two EF factors at a magnitude of at least .30 in this four-factor model. For full preliminary four-factor model results, see Supplementary Table 2.

As all Cognitive-Learning items loaded with Cognitive-EF items at a magnitude of at least .30 when three and/or four factors were forced, we opted to interpret these items as measures of EFs and included them in our main EFA. As all Basic Reading Skills, Written Expression Skills, and Mathematic Skills items loaded separately from cognitive items in both models, we removed these Academic items from our subsequent EFA. Given that Item 18 (write/read slowly) did not load at a magnitude of at least .30 on any EF factor when three or four factors were forced, we did not include it in our main EFA. Given the wording of this item, it is unsurprising that it loaded with Academic items instead.

Main EFA

Correlations among the remaining 39 LEAF EF subscale items were generally moderate and significant (r = .30–.50), the sampling adequacy was deemed “marvelous” (KMO = .96), and Bartlett’s test of sphericity was significant (χ2(741) = 13,349.21, p < .001), indicating that the data were appropriate for factor analysis.

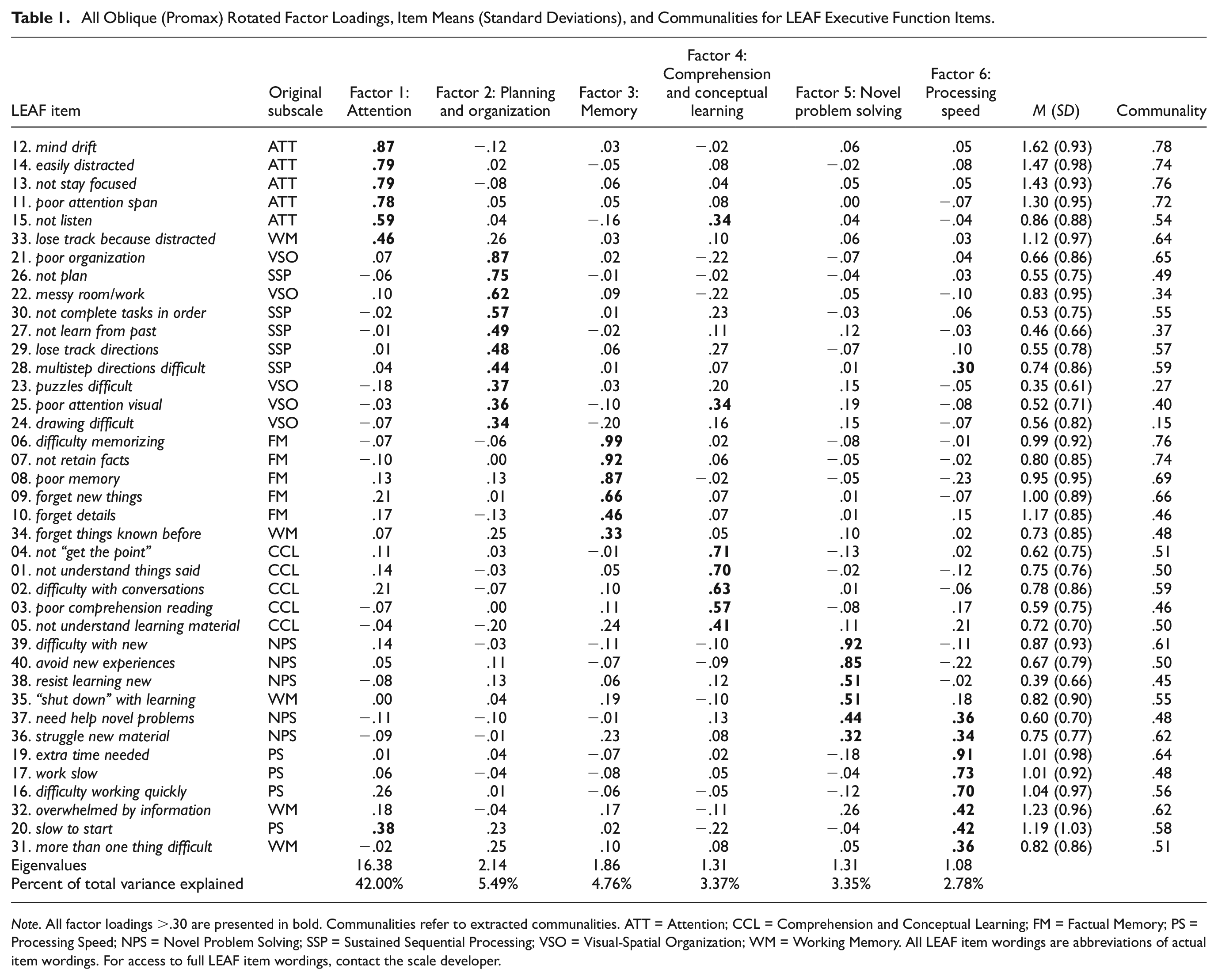

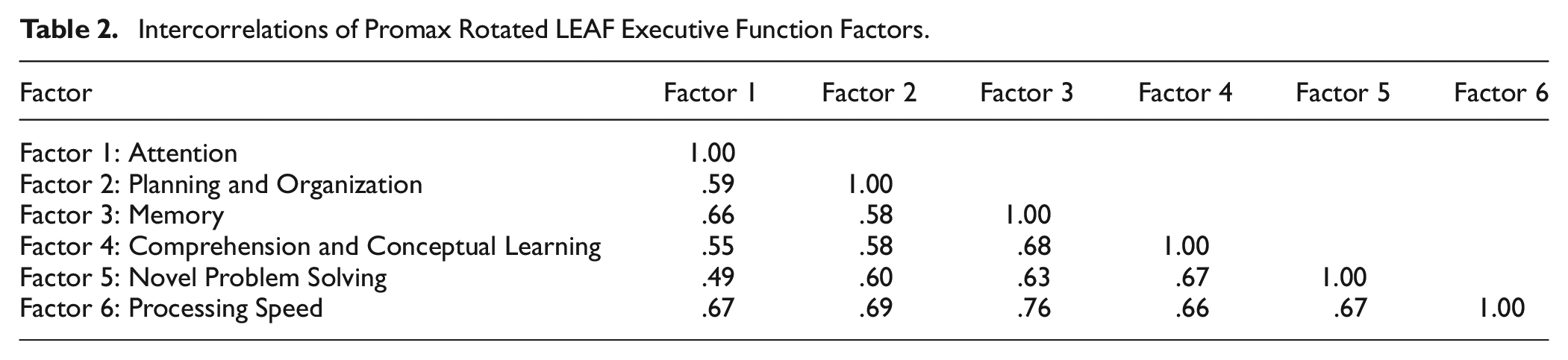

The EFA highlighted a six-factor solution with Eigenvalues greater than 1, which accounted for 61.75% of the total variance in all EF subscale items. Minimal cross-loadings were observed, and the extracted communalities for most items were moderate to high (i.e., between .50 and .80; Fabrigar et al., 1999). All Promax-rotated factor loadings, Eigenvalues, and percentage of total variance accounted for by each extracted factor are displayed in Table 1. The correlations among rotated factors were moderate to large in magnitude (r = .49–.76), a common finding in EF research (Miyake et al., 2000), and can be found in Table 2.

All Oblique (Promax) Rotated Factor Loadings, Item Means (Standard Deviations), and Communalities for LEAF Executive Function Items.

Note. All factor loadings >.30 are presented in bold. Communalities refer to extracted communalities. ATT = Attention; CCL = Comprehension and Conceptual Learning; FM = Factual Memory; PS = Processing Speed; NPS = Novel Problem Solving; SSP = Sustained Sequential Processing; VSO = Visual-Spatial Organization; WM = Working Memory. All LEAF item wordings are abbreviations of actual item wordings. For access to full LEAF item wordings, contact the scale developer.

Intercorrelations of Promax Rotated LEAF Executive Function Factors.

Factor 1 (“Attention”) accounted for 42.00% of the total variance and included all five of the original Attention subscale items. All Attention items loaded strongly on the factor (.59–.87), with Item 12 (mind drift) displaying the strongest factor loading. One item from the original Working Memory subscale (Item 33: lose track because distracted) also loaded on this factor at .46.

Factor 2 (“Planning and Organization”) accounted for 5.49% of the total variance and contained all five of the original Visual-Spatial Organization items and all five of the original Sustained Sequential Processing items, albeit with Item 25 (poor attention visual) and Item 28 (multistep directions difficult) cross-loading on Factors 4 and 6, respectively. We opted to name this factor “Planning and Organization” due to the wording of the two strongest loading items (Item 21: poor organization; Item 26: not plan), which loaded at .87 and .75. Notably, the extracted communalities for Item 23 (puzzles difficult) and Item 24 (drawing difficult) from the original Visual-Spatial Organization subscale were low (i.e., < .30), suggesting that the final model only accounted for a small proportion of variance in these items.

Factor 3 (“Memory”) accounted for 4.76% of the total variance. All five original Factual Memory items loaded strongly on this factor (range = .46–.99), with Item 06 (difficulty memorizing) loading the strongest. One original Working Memory subscale item (Item 34: forget things known before) also loaded on this factor at .33.

Factor 4 (“Comprehension and Conceptual Learning”) comprised all five of the original Comprehension and Conceptual Learning items and explained 3.37% of the total variance. Factor loadings ranged from .41 to .71 (Item 04: not “get the point”). In addition, one Attention subscale item (Item 15: not listen) and one Visual-Spatial Organization subscale item (Item 25: poor attention visual) cross-loaded on this factor—both at a magnitude of .34 or less—although both loaded at a higher magnitude on their original subscales.

Factor 5 (“Novel Problem Solving”) accounted for 3.35% of the total variance. All five of the original Novel Problem Solving subscale items loaded on this factor at a magnitude of at least .30, albeit with two of these items (Item 37: need help novel problems; Item 36: struggle new material) cross-loading on Factor 6. One original Working Memory subscale item (Item 35: “shut down” with learning) also loaded strongly on this factor at .51.

Factor 6 (“Processing Speed”) accounted for 2.78% of the total variance and comprised the four original Processing Speed items that were retained for the main EFA (range = .42–.91), although Item 20 (slow to start) cross-loaded on the Attention factor at .38. Two Working Memory items (Item 32: overwhelmed by information; Item 31: more than one thing difficult) also loaded on this factor at .42 and .36, respectively. Finally, as noted, two Novel Problem Solving items (Item 37: need help novel problems, Item 36: struggle new material) cross-loaded on this factor at .36 and .34, respectively, while one Sustained Sequential Processing item (Item 28: multistep directions difficult) cross-loaded on this factor at .30. We opted to term the factor “Processing Speed” given that the three strongest loading items (Item 19: extra time needed; Item 17: work slow; Item 16: difficulty working quickly; range = .70–.91) came from the original Processing Speed subscale.
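
As a quick consistency check on the six factors described above, the per-factor percentages should sum to the 61.75% reported for the full solution. Each percentage also implies an eigenvalue (proportion of variance multiplied by the 39 items) that should exceed 1 under the eigenvalue-greater-than-1 retention rule. The Python sketch below assumes that the reported percentages are based on initial eigenvalues, which the text does not state explicitly. It works from rounded percentages, so the implied eigenvalues are approximations rather than the values in Table 1.

```python
N_ITEMS = 39  # EF items entered into the main EFA

# Percentage of total variance per factor, as reported in the text.
percent_by_factor = {
    "Attention": 42.00,
    "Planning and Organization": 5.49,
    "Memory": 4.76,
    "Comprehension and Conceptual Learning": 3.37,
    "Novel Problem Solving": 3.35,
    "Processing Speed": 2.78,
}

total_percent = round(sum(percent_by_factor.values()), 2)

# Implied eigenvalue = proportion of variance x number of items.
implied_eigenvalues = {
    name: pct / 100 * N_ITEMS for name, pct in percent_by_factor.items()
}

print(total_percent)
for name, eigenvalue in implied_eigenvalues.items():
    print(f"{name}: {eigenvalue:.2f} (retained: {eigenvalue > 1})")
```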

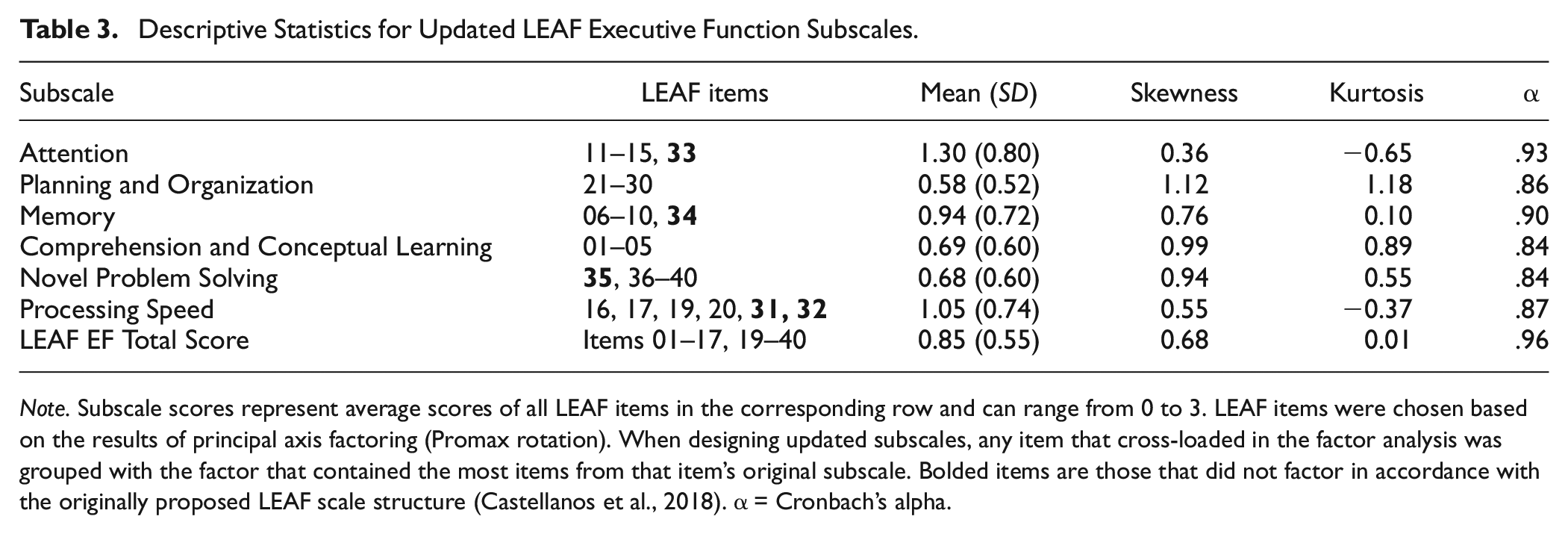

In short, the structure of the originally proposed LEAF EF subscales was generally maintained (Castellanos et al., 2018), albeit with the Visual-Spatial Organization and Sustained Sequential Processing subscales merging into a Planning and Organization factor and no coherent Working Memory factor emerging. Thus, using the results of our factor analysis, we formed six LEAF EF subscales using the 39 LEAF items. Our revised subscales, including their descriptive statistics and reliability coefficients, are shown in Table 3. Updated subscales displayed good internal consistency (α = .84–.93), and means and standard deviations were consistent with previous work that used the LEAF in young adult samples (e.g., Marschark et al., 2015).

Descriptive Statistics for Updated LEAF Executive Function Subscales.

Note. Subscale scores represent average scores of all LEAF items in the corresponding row and can range from 0 to 3. LEAF items were chosen based on the results of principal axis factoring (Promax rotation). When designing updated subscales, any item that cross-loaded in the factor analysis was grouped with the factor that contained the most items from that item’s original subscale. Bolded items are those that did not factor in accordance with the originally proposed LEAF scale structure (Castellanos et al., 2018). α = Cronbach’s alpha.
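
The scoring rule in the note above (each subscale score is the average of its 0 to 3 item ratings) and the reported reliability coefficient can be sketched in Python as follows. The function names and the toy ratings are hypothetical and are not study data. The alpha computation uses the standard Cronbach's alpha formula, which is assumed to match what SPSS reported.

```python
from statistics import mean, variance


def subscale_scores(item_responses: list[list[int]]) -> list[float]:
    """Average each respondent's 0-3 ratings across the items of one subscale.

    item_responses[i][j] is respondent j's rating on item i.
    """
    return [mean(ratings) for ratings in zip(*item_responses)]


def cronbach_alpha(item_responses: list[list[int]]) -> float:
    """Cronbach's alpha: k / (k - 1) * (1 - sum of item variances / variance of totals)."""
    k = len(item_responses)
    totals = [sum(ratings) for ratings in zip(*item_responses)]
    item_variance_sum = sum(variance(item) for item in item_responses)
    return k / (k - 1) * (1 - item_variance_sum / variance(totals))


# Hypothetical ratings (not study data): 3 items x 5 respondents.
toy_items = [
    [0, 1, 2, 3, 1],
    [0, 2, 2, 3, 1],
    [1, 1, 3, 3, 0],
]
print(subscale_scores(toy_items))
print(round(cronbach_alpha(toy_items), 2))
```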

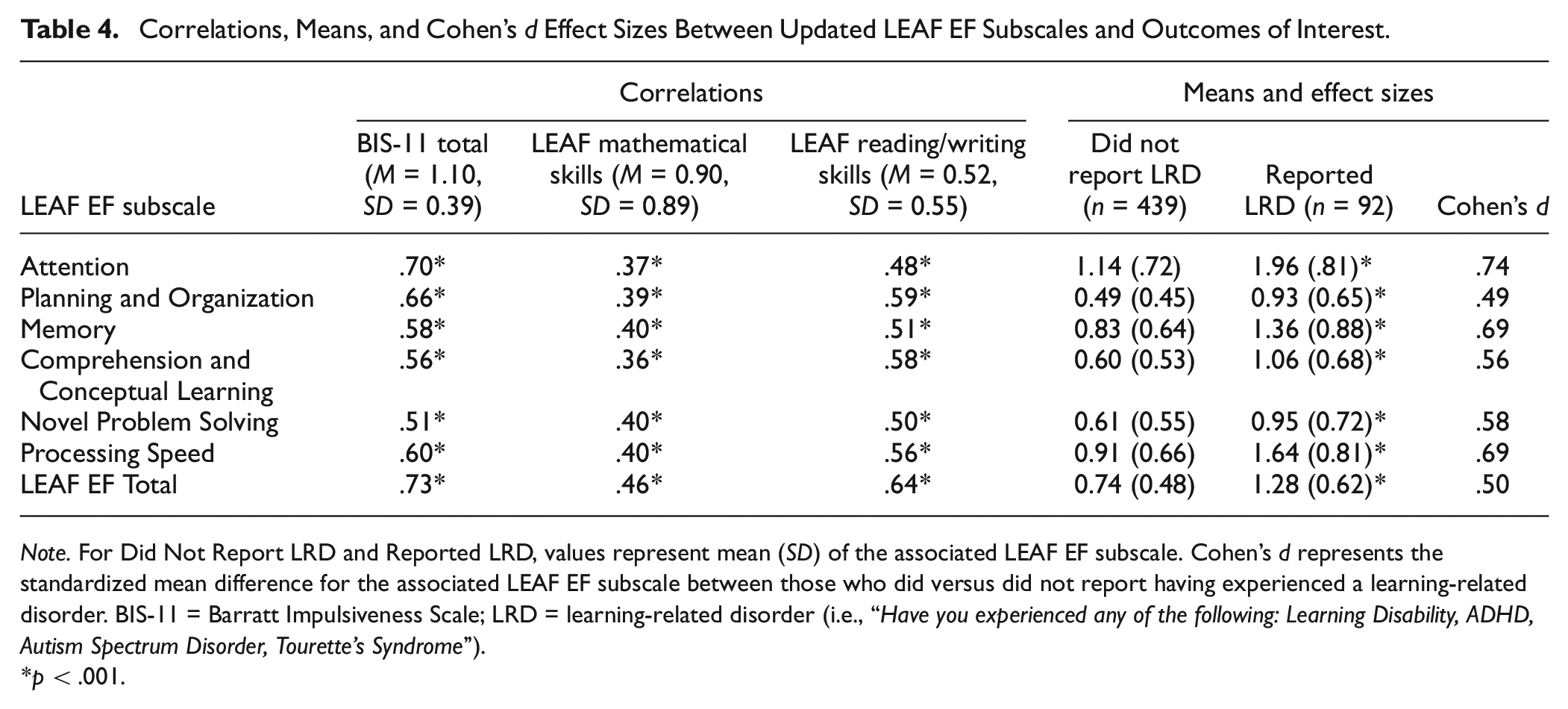

Predictive Validity of the LEAF

All updated LEAF EF subscale scores significantly and moderately-to-strongly (r = .36–.73) correlated with the BIS-11 total score, the LEAF Mathematical Skills subscale score, and the LEAF Reading/Writing score. Furthermore, independent samples t-tests revealed that all updated LEAF EF subscale scores were significantly larger (d = .49–.74; indicative of greater self-reported EF difficulties) in individuals who reported having experienced an LRD during their lives than in those who did not (Table 4).
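
For readers who want to reproduce this kind of group comparison, the Python sketch below computes Cohen's d with the conventional pooled standard deviation. The text does not state which variant of d was used, so the pooled formula is an assumption, and the scores shown are hypothetical rather than study data.

```python
from math import sqrt
from statistics import mean, variance


def cohens_d(group_a: list[float], group_b: list[float]) -> float:
    """Standardized mean difference (group_a minus group_b) using the pooled SD."""
    n_a, n_b = len(group_a), len(group_b)
    pooled_variance = (
        (n_a - 1) * variance(group_a) + (n_b - 1) * variance(group_b)
    ) / (n_a + n_b - 2)
    return (mean(group_a) - mean(group_b)) / sqrt(pooled_variance)


# Hypothetical subscale scores (not study data).
reported_lrd = [1.8, 2.1, 1.5, 2.4, 1.9]
no_lrd = [1.2, 1.6, 0.9, 1.8, 1.4, 1.1]
print(round(cohens_d(reported_lrd, no_lrd), 2))
```

A positive value indicates higher scores (greater self-reported difficulties) in the first group.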

Correlations, Means, and Cohen’s d Effect Sizes Between Updated LEAF EF Subscales and Outcomes of Interest.

Note. For Did Not Report LRD and Reported LRD, values represent mean (SD) of the associated LEAF EF subscale. Cohen’s d represents the standardized mean difference for the associated LEAF EF subscale between those who did versus did not report having experienced a learning-related disorder. BIS-11 = Barratt Impulsiveness Scale; LRD = learning-related disorder (i.e., “Have you experienced any of the following: Learning Disability, ADHD, Autism Spectrum Disorder, Tourette’s Syndrome”).

p < .001.

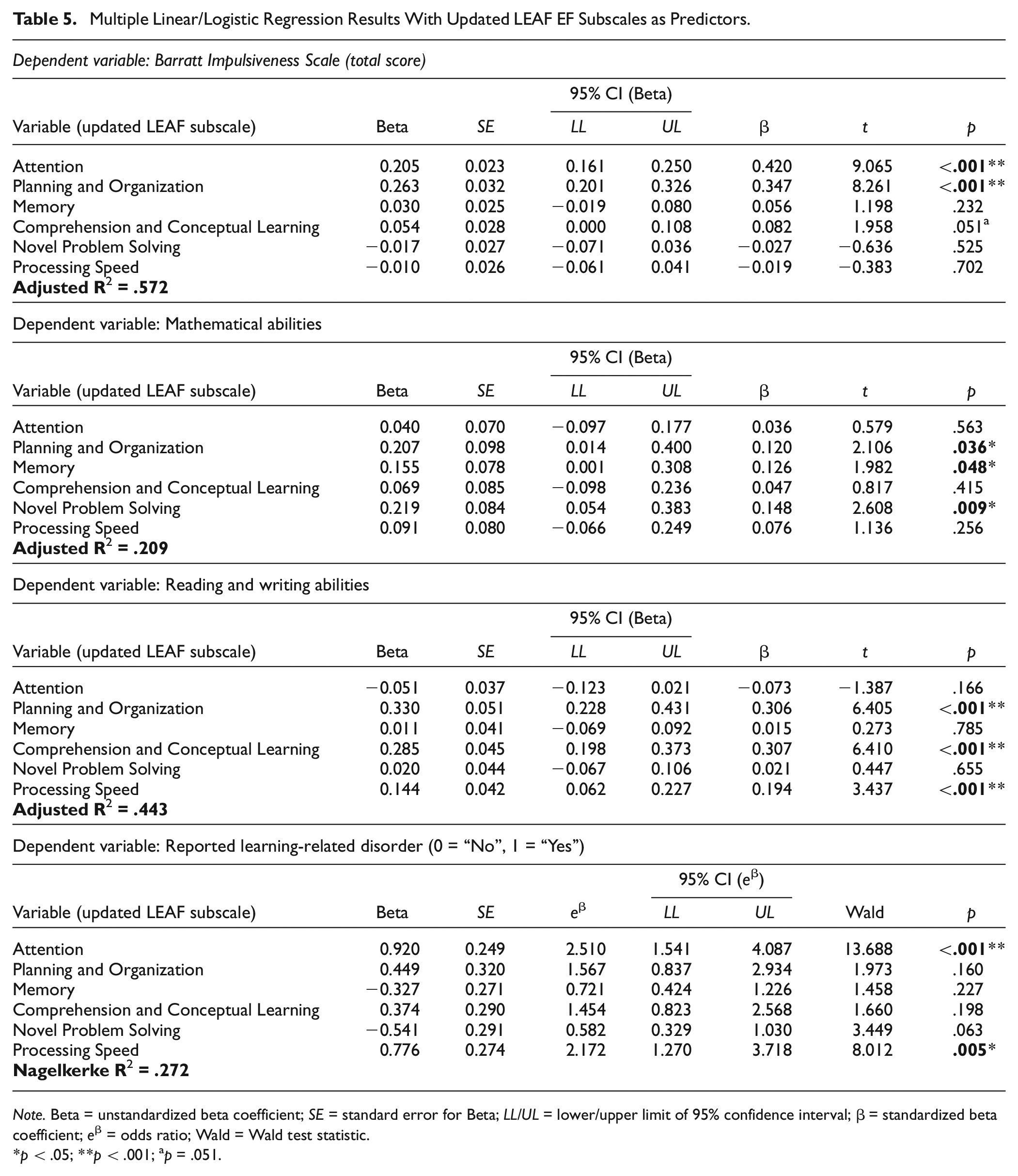

Importantly, however, multiple linear/logistic regression analyses with all updated LEAF EF subscales entered as predictors revealed that, for any given outcome of interest, only certain LEAF EF subscales emerged as significant, unique predictors once variance shared among all LEAF EF subscales was accounted for. Results of these analyses for each outcome of interest can be found in Table 5. Briefly, LEAF EF subscales significantly predicted approximately 57.2% of the variance in BIS-11 total scores, F(6, 537) = 121.957, p < .001, with self-reported Attention (B = 0.205, t = 9.065, p < .001) and Planning and Organization (B = 0.263, t = 8.261, p < .001) difficulties positively and uniquely predicting self-reported impulsivity. As expected, LEAF EF subscales also significantly predicted approximately 20.9% of the variance in LEAF Mathematical Skills subscale scores, F(6, 539) = 24.931, p < .001, with self-reported Planning and Organization (B = 0.207, t = 2.106, p = .036), Memory (B = 0.155, t = 1.982, p = .048), and Novel Problem Solving (B = 0.219, t = 2.608, p = .009) difficulties emerging as positive, unique predictors of self-reported mathematical difficulties. LEAF EF subscales significantly predicted approximately 44.3% of the variance in LEAF Reading/Writing scores, F(6, 539) = 73.251, p < .001, with self-reported Planning and Organization (B = 0.330, t = 6.405, p < .001), Comprehension and Conceptual Learning (B = 0.285, t = 6.410, p < .001), and Processing Speed (B = 0.144, t = 3.437, p < .001) difficulties positively and uniquely predicting self-reported reading and writing difficulties. Finally, for LRD history, when all updated LEAF EF subscales were entered as predictors, the model was significant, correctly classifying 85.7% of cases, χ2(6, N = 531) = 94.863, p < .001, Nagelkerke R2 = .272. 
Self-reported Attention (B = .920, Wald = 13.688, p < .001) and Processing Speed (B = .776, Wald = 8.012, p < .001) difficulties positively and uniquely predicted whether an individual self-reported having experienced an LRD over the course of their lives.
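
Two of the quantities above can be recovered from the reported statistics. The Python sketch below derives R² and adjusted R² from each overall F test and converts the logistic regression coefficients into odds ratios. The reported percentages of variance appear to match the adjusted R² values. This is an inference from the arithmetic, as the text does not state whether R² or adjusted R² was reported. The odds ratios are computed from rounded coefficients and may differ slightly from those in Table 5. Function names are illustrative.

```python
from math import exp


def r_squared_from_f(f_value: float, df_model: int, df_error: int) -> float:
    """Recover R^2 from an overall F test: R^2 = F*df1 / (F*df1 + df2)."""
    return f_value * df_model / (f_value * df_model + df_error)


def adjusted_r_squared(f_value: float, df_model: int, df_error: int) -> float:
    """Adjusted R^2 = 1 - (1 - R^2) * (n - 1) / (n - k - 1), with n - 1 = df1 + df2."""
    r_squared = r_squared_from_f(f_value, df_model, df_error)
    return 1 - (1 - r_squared) * (df_model + df_error) / df_error


def odds_ratio(b: float) -> float:
    """Odds ratio for a one-unit increase in a logistic regression predictor."""
    return exp(b)


# F statistics and degrees of freedom as reported in the text.
for label, f_value, df_error in [
    ("BIS-11 total", 121.957, 537),
    ("Mathematical Skills", 24.931, 539),
    ("Reading/Writing", 73.251, 539),
]:
    print(label, round(adjusted_r_squared(f_value, 6, df_error), 3))

# Logistic regression coefficients as reported in the text.
print("Attention OR:", round(odds_ratio(0.920), 2))
print("Processing Speed OR:", round(odds_ratio(0.776), 2))
```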

Multiple Linear/Logistic Regression Results With Updated LEAF EF Subscales as Predictors.

Note. Beta = unstandardized beta coefficient; SE = standard error for Beta; LL/UL = lower/upper limit of 95% confidence interval; β = standardized beta coefficient; eβ = odds ratio; Wald = Wald test statistic.

*p < .05; **p < .001; a p = .051.

Discussion

The current study helps reveal the factor structure of LEAF EF items in young adults. Upon removing academic-related items following a preliminary factor analysis, PAF with Promax rotation on the remaining 39 LEAF EF items revealed a six-factor solution that was generally concordant with the originally proposed LEAF scale structure in children and adolescents (Castellanos et al., 2018). These factors corresponded to five of eight constructs proposed by the developers to be assessed by the LEAF (i.e., Attention, Memory, Comprehension and Conceptual Learning, Novel Problem Solving, and Processing Speed; Castellanos et al., 2018), with items from the Visual-Spatial Organization and Sustained Sequential Processing subscales appearing to jointly measure a Planning and Organization construct. However, contrary to the LEAF scale structure proposed for children and adolescents (Castellanos et al., 2018), no Working Memory factor emerged.

All six updated LEAF EF subscales correlated moderately-to-strongly with self-report measures of impulsivity, mathematical difficulties, and reading/writing difficulties. Updated LEAF EF subscales also differentiated individuals who reported having experienced an LRD from those who did not. These findings were likely driven, in part, by all updated LEAF EF subscales sharing something in common. However, different LEAF EF subscales emerged as unique predictors of each outcome once what was shared among all LEAF EF subscales was accounted for. In short, multiple regression results support the predictive validity of the updated LEAF EF subscales, suggesting that—as would be expected from a measure of EFs—they display unity and diversity.

Contrary to expectation, however, no clear separation between the original Cognitive-Learning and Cognitive-EF subscale items emerged in our preliminary analyses. We interpreted this to mean that, in young adults, Cognitive-Learning items assess constructs that do not display a clear empirical distinction from those assessed by the original Cognitive-EF items (i.e., the two content areas were not clearly separable by means of factor analysis). Thus, we felt justified in including all Cognitive-Learning items in our primary EFA as measures of EFs.

The proposed LEAF Working Memory items did not form a coherent factor in our analyses. Instead, the original five Factual Memory items and one of the original Working Memory items factored together. Consequently, the original Factual Memory items could be capturing a working memory EF construct. At face value, it does make sense to interpret Factual Memory items as non-EF; for example, digit span task performance (e.g., immediately recall a series of numbers read aloud) conceptually overlaps with Factual Memory subscale item wordings (e.g., “forget new things,” “not retain facts”) and is not impaired in individuals with frontal lobe damage. However, performance on delayed-response tasks (e.g., maintain presented information in mind over a short delay) also overlaps with Factual Memory item wordings but, in contrast, is often impaired in individuals with frontal lobe damage, particularly when a distractor is presented during the delay period (D’Esposito & Postle, 1999). In consideration of this latter finding, it is unsurprising that Factual Memory items did not empirically separate from Cognitive-EF items, correlating particularly strongly with our updated Attention subscale, a construct known to crucially complement working memory processes at the task level (Engle, 2002). As such, we opted to name our updated subscale “Memory” as opposed to Factual Memory to capture the fact that these items may contain variance attributable to both Factual Memory and Working Memory processes.

This is not to say that the other original Working Memory items do not measure EFs. Extracted communalities for the original Working Memory items were moderate-to-high (i.e., >.50), suggesting that our main model accounted for a substantial amount of variance in these items. These items also loaded strongly (i.e., >.50) on Factor 1 of our preliminary three-factor model, which we broadly defined as “Executive Functioning.” Rather, our findings suggest that the originally proposed Working Memory items appear to assess other EF constructs assessed by the LEAF as opposed to forming their own coherent factor. Indeed, this finding highlights the strength of our analytic approach (vs. Castellanos et al., 2018): Although original LEAF Working Memory items displayed unidimensionality in a sample of children and adolescents (Castellanos et al., 2018), our results suggest that this may have been due to these items assessing what is common among all EF measures as opposed to a distinct EF construct.

Although the Visual-Spatial Organization and Sustained Sequential Processing items were originally proposed to measure two separate constructs, our six-factor model suggested that all 10 items from these two subscales measure a single construct, which we termed “Planning and Organization” based on the two highest loading items (Item 21: poor organization; Item 26: not plan). The original Visual-Spatial Organization and Sustained Sequential Processing subscales aimed to measure individuals’ perceived ability to organize and manipulate their environment and plan and complete multistep tasks in their appropriate order, respectively. Given that these items did not separate in our factor analysis, we interpret the construct to represent an individual’s self-reported ability to plan and organize both physically (i.e., manipulate objects in one’s external environment in a goal-directed manner) and temporally (i.e., plan a series of actions to complete over time).

LEAF EF and BIS-11 total scores appear to share a little over 50% of their variance, which suggests that these scales assess overlapping, but ultimately distinct, constructs. Consistent with this idea, BIS-11 total scores were only uniquely predicted by updated LEAF Attention and Planning and Organization subscales once all LEAF EF subscales were accounted for. Although only the BIS-11 total score was used here, the BIS-11 can be broken down into attentional, non-planning, and motor subscales (Patton et al., 1995), with the presence of attentional and non-planning items likely contributing to the strong correlations observed between the BIS-11 and the updated LEAF EF subscales. Despite the presence of some cognitively worded items in the BIS-11 (e.g., regarding concentrating easily, being a steady thinker, struggling to pay attention, etc.), we do not see the BIS-11 and updated LEAF Attention and Planning and Organization subscales as redundant with each other. For example, many items in the BIS-11 emphasize impulsive actions/thought within everyday life (e.g., squirming at plays/lectures, planning for job security or trips, buying items on impulse, etc.). Such wordings are purposefully not present in the LEAF, which focuses on Attention and Planning and Organization difficulties specifically within the context of learning or while performing complex tasks (e.g., struggling to stay focused on learning materials or perform multistep directions).

Castellanos et al. (2018) found that the original LEAF Comprehension and Conceptual Learning, Factual Memory, Working Memory, and Novel Problem Solving subscales moderately and significantly correlated with the calculation subtest of the Woodcock-Johnson III (WJ-III) in children and adolescents (|r| = .28–.40). These results appear partially consistent with our own findings in young adults with the updated LEAF EF subscales. We found that self-reported mathematical difficulties (LEAF Mathematical Skills subscale) were uniquely predicted by self-reported difficulties with Planning and Organization, Memory, and Novel Problem Solving, but not Comprehension and Conceptual Learning. It is notable that Castellanos et al. (2018) did not control for other LEAF subscales when investigating correlations between the WJ-III calculation subtest and LEAF subscale scores. Thus, their significant correlations between mathematical abilities and the Comprehension and Conceptual Learning subscale could have been driven by shared variance across LEAF subscales.

Regardless, in both adults and children, working memory—both the passive maintenance of information in mind and the control/manipulation of that information—is known to be implicated in mathematical computations, particularly when those computations require multistep processes (Raghubar et al., 2010). Similarly, task-based working memory composite scores predict mathematical abilities (e.g., as assessed by tests of academic achievement; Berg, 2008) in children even once other cognitive abilities (e.g., processing speed) are accounted for. Thus, it is not surprising that the updated Memory (which assesses self-reported abilities to maintain information in mind and likely captures working memory) and Planning and Organization (which, in part, assesses self-reported abilities to use step-by-step directions held in mind to complete complex tasks; e.g., Item 28: “multistep directions difficult”; Item 29: “lose track directions”) subscales significantly predicted the LEAF Mathematical Skills subscale score. Similarly, the ability to approach and reason through novel problems (e.g., word problems) is a standard characteristic of mathematical-based assessments (e.g., Woodcock-Johnson IV; Schrank et al., 2014), with the development of problem-solving skills argued to be an important outcome of mathematical education (Ukobizaba et al., 2021). Thus, it is not surprising that the updated Novel Problem Solving subscale also uniquely predicted self-reported mathematical difficulties.

All original LEAF Cognitive subscales significantly and moderately correlated with the WJ-III writing samples subtest in the analyses of Castellanos et al. (2018). Similarly, they found that a basic reading skills composite score (WJ-III Letter-Word Identification and Word Attack subtests) displayed significant, small-to-moderate (|r| = .19–.43) correlations in children with all original LEAF Cognitive subscales excluding Attention and Sustained Sequential Processing. This pattern of results is consistent with our findings with adults, as all updated LEAF EF subscales correlated with the LEAF Basic Reading Skills and Written Expression Skills subscales that emerged in the preliminary EFA that we conducted, although the magnitude of the correlations we observed (r = .48–.64) was larger than that reported by Castellanos et al. (2018). We suspect that this was partly driven by our use of self-report measures of reading/writing abilities, which shared response options with the updated LEAF EF subscales, rather than performance-based measures (Dang et al., 2020; Eisenberg et al., 2019).

Once what was shared among all updated LEAF EF subscales was accounted for, however, the updated Planning and Organization, Comprehension and Conceptual Learning, and Processing Speed subscales emerged as unique predictors of self-reported reading and writing difficulties. Indeed, Castellanos et al. (2018) observed that the Comprehension and Conceptual Learning subscale was the Cognitive subscale that correlated most strongly with both the reading and writing WJ-III measures, likely, in part, due to subscale wordings emphasizing difficulties associated with “understanding” learning-related materials. Our other findings are also consistent with previous literature. For example, in children, a recent review found that task-based measures of planning (e.g., Tower of London, Tower of Hanoi) significantly predicted writing abilities in seven of nine reviewed studies (Ruffini et al., 2024). Similarly, in a sample of elementary school-aged children, the teacher-reported BRIEF Planning and Organization subscale significantly predicted variance in a standardized reading comprehension test even after demographic variables (e.g., age) were accounted for (Costa et al., 2018). Finally, at the latent variable level, task-derived measures of processing speed (e.g., how rapidly individuals identify a target stimulus within a visual array containing a small number of non-target stimuli) significantly predicted markers of reading ability (e.g., ability to accurately and efficiently read increasingly difficult/obscure words) across multiple age ranges in children and adolescents even after other cognitive variables (e.g., working memory capacity and inhibitory control modeled as latent variables) were accounted for (Christopher et al., 2012).

Finally, as expected, individuals who reported having experienced a learning disability, ADHD, ASD, and/or Tourette syndrome also reported significantly higher scores (indicative of greater impairments) on all six of the updated LEAF EF subscales, with updated Attention and Processing Speed scores predicting unique variance in LRD history. Admittedly, our LRD history item was not optimally worded, as it combined multiple distinct neuropsychological disorders together in a single question. Each of these disorders (or combinations of these disorders) may be characterized by a unique pattern of EF impairments (e.g., Craig et al., 2016; Happé et al., 2006), although a recent meta-analysis suggests that children with ADHD and ASD have similar EF profiles (Townes et al., 2023). It is important to reiterate here that the purpose of our multiple regression analyses was to provide evidence for the predictive validity of updated LEAF EF subscales in young adults. The specific nature by which EFs relate to impulsivity and academic achievement, as well as how EF deficits manifest in specific clinical populations, is likely complex, and a thorough investigation of these relations was beyond the scope of the current study. Rather, we believe that results from our multiple regression analyses support the claim that updated LEAF EF subscales act in a manner consistent with what would be expected of an EF measure. Thus, updated LEAF EF subscales appear to show potential as effective means of assessing self-reported EF constructs in young adults, particularly when researchers are interested in measuring EFs within contexts related to learning or under high cognitive demand (vs. daily life broadly).

Particularly on the level of self-report, EFs conceptually overlap strongly with other constructs, such as self-regulation, delay of gratification, and conscientiousness (Duckworth & Kern, 2011). Therefore, our lack of established self-report measures of EF to analyze the convergent validity of the LEAF is a key limitation of the current study, making it difficult to clearly determine empirically whether LEAF EF subscales measure EFs or separate related-but-distinct constructs. Future work will be crucial to confirm the convergent validity of updated LEAF EF subscales in young adults by investigating their relations with other validated self-report measures of EF like the BRIEF-A or BDEFS, as has been done with children (Castellanos et al., 2018). However, we recommend that future research go beyond using only zero-order correlations between the LEAF and other self-report EF measures to establish its convergent validity. Instead, it is important to show that the LEAF correlates with other EF measures even after controlling for constructs known to conceptually and empirically relate to EF on the self-report measurement level (e.g., self-regulation). Such an approach could help more confidently establish LEAF EF subscales as measures of EFs as opposed to related-but-distinct constructs. It may also help to clarify what distinguishes self-reported EFs and related constructs ontologically.
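
One simple way to move beyond zero-order correlations, in the spirit of the recommendation above, is a first-order partial correlation. The Python sketch below is only an illustration under assumed values: the correlations are hypothetical, the variable roles are examples, and a full analysis would more likely use regression or latent variable models with several covariates.

```python
from math import sqrt


def partial_correlation(r_xy: float, r_xz: float, r_yz: float) -> float:
    """First-order partial correlation between x and y, controlling for z."""
    return (r_xy - r_xz * r_yz) / sqrt((1 - r_xz**2) * (1 - r_yz**2))


# Hypothetical correlations (not study data):
# x = a LEAF EF subscale, y = another self-report EF measure, z = self-regulation.
zero_order = 0.60
controlled = partial_correlation(r_xy=zero_order, r_xz=0.50, r_yz=0.50)
print(round(zero_order, 2), round(controlled, 2))
```

If the controlled correlation remains substantial, the two EF measures share variance beyond what the covariate explains.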

Given that our sample comprised undergraduate students, EF abilities may have been greater than those of the general population. As a result, we do not know whether LEAF EF items would factor similarly in other populations, particularly clinical populations that often display EF impairments (e.g., Kennedy et al., 2008; Snyder, 2013; Willcutt et al., 2005). Furthermore, our sample was rather homogeneous, composed predominantly (i.e., over 80%) of females, with most individuals reporting English as their first language. Thus, researchers who use the LEAF with our updated subscales to measure EFs in different populations should interpret subscale scores cautiously. That said, although the best approach when determining the appropriate sample size for EFA is debated, performing EFA with a sample size of 546 and a subject-to-item ratio of 14:1 is consistent with current best practices (Goretzko et al., 2021). Thus, we feel confident that our results likely reflect the general underlying structure of LEAF EF items well, at least in young adult undergraduate students. Still, our analyses were exploratory in nature, and future research is required to confirm our updated scale structure with a well-powered sample and examine whether developmental differences exist in the LEAF’s factor structure as has been found for behavioral EF measures (see Karr et al., 2018, for a review).

In the meantime, the six updated LEAF EF subscales may help future researchers employ the LEAF to isolate and measure EFs of interest in young adults. Consistent with previous research (Friedman & Miyake, 2017; Miyake et al., 2000), the moderate-to-strong correlations between the six factors and the results of our multiple regression analyses suggest that LEAF EF items assess separate but related constructs in young adults. The separate-but-related nature of the EFs assessed by the LEAF should prove useful not only for researchers interested in investigating general relations between EFs and a variety of clinically relevant outcomes (e.g., levels of perceived stress) but also for researchers interested in, for example, isolating the specific nature of EF-related deficits in clinical populations. Indeed, the LEAF has already been used successfully for these purposes in adult samples (e.g., Crandall et al., 2019; Haehnel et al., 2022; Hanson et al., 2024; Marschark et al., 2015, 2017). Moreover, the inclusion of Academic items might be particularly attractive to researchers interested in examining links between EFs and academic performance within a single measure (Best et al., 2011; Peng & Kievit, 2020; Zelazo & Carlson, 2020). In contrast, measures such as the BDEFS (Barkley, 2011b) and BRIEF-A (Roth et al., 2005) are costly, do not assess academic abilities using the same rating scale, and do not assess EFs specifically within learning-related contexts.

Conclusions

The LEAF has shown potential as a resource-friendly measure of EFs in children, adolescents, and young adults; however, no study had yet investigated the factor structure of all LEAF EF items to ensure that items factor in a manner that is consistent with the originally proposed scale structure (Castellanos et al., 2018). EFs are closely related cognitive processes that often work in tandem. The specific nature of the processes that underlie a single EF variable, whether it be derived from a cognitive task or an individual scale item, is rarely clear-cut. We showed here that, although the LEAF factors in a manner that is generally consistent with its original proposed scale structure, some individual items that appeared to display face validity as measures of a specific EF factored with items believed to assess a different construct. Using these results, we constructed six updated subscales that will allow future researchers to isolate and assess EFs of interest in young adult samples using the LEAF scale. Future research should aim to confirm our model in wider community and clinical samples of young adults, as well as other age groups.

Supplemental Material

Supplemental material, sj-docx-1-asm-10.1177_10731911251317788 for Factor Structure and Psychometric Properties of the Learning, Executive, and Attention Functioning (LEAF) Scale in Young Adults by Cory A. Munroe, Jennifer Leckey, Shannon A. Johnson and Sophie Jacques in Assessment

Footnotes

Acknowledgements

The authors would like to acknowledge Erin Higgins and Maddy Nugent for their help with data collection, as well as Lilian Menzner for her assistance with data coding.

Author Contributions

Cory A. Munroe: Conceptualization, Formal Analysis, Investigation, Validation, Visualization, Writing—Original Draft, Writing—Review & Editing.

Jennifer Leckey: Conceptualization, Funding Acquisition, Investigation, Methodology, Project Administration, Supervision, Writing—Review & Editing.

Shannon A. Johnson: Conceptualization, Methodology, Supervision.

Sophie Jacques: Conceptualization, Data Curation, Funding Acquisition, Methodology, Supervision, Validation, Writing—Review & Editing.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Social Sciences and Humanities Research Council (Explore39175 to S.J., Canadian Graduate Scholarship—Master’s to J.L., Doctoral Fellowship to J.L.); and Nova Scotia Research and Innovation (Graduate Scholarship—Master’s to J.L.).

Data Availability

Aggregate data are available from the last author subject to institutional, federal, and provincial privacy regulations. Analysis code is also available from the last author. Materials used in this paper were developed by third parties and should be sought from their respective developers/copyright holders.

Supplemental Material

Supplemental material for this article is available online.