Abstract

Portable and flexible administration of manual dexterity assessments is necessary to monitor recovery from brain injury and the effects of interventions across clinic and home settings, especially when in-person testing is not possible or convenient. This paper aims to assess the concurrent validity and test–retest reliability of a new suite of touchscreen-based manual dexterity tests (called EDNA™MoTap) that are designed for portable and efficient administration. A minimum sample of 49 healthy young adults will be conveniently recruited. The EDNA™MoTap tasks will be assessed for concurrent validity against standardized tools (the Box and Block Test [BBT] and the Purdue Pegboard Test) and for test–retest reliability over a 1- to 2-week interval. Correlation coefficients of r > .6 will indicate acceptable validity, and intraclass correlation coefficient (ICC) values > .75 will indicate acceptable reliability for healthy adults. The sample were primarily right-handed (91%) adults aged between 19 and 34 years (M = 24.93, SD = 4.21, 50% female). The MoTap tasks did not demonstrate acceptable validity, with tasks showing weak-to-moderate associations with the criterion assessments. Some outcomes demonstrated acceptable test–retest reliability; however, this was not consistent. Touchscreen-based assessments of dexterity remain relevant; however, there is a need for further development of the EDNA™MoTap task administration.

Dexterity most commonly refers to skilled voluntary movements of the arm and hands to complete specific tasks (Backman et al., 1992; Kimoto et al., 2019) and is regarded as a fundamental component of everyday functional performance (Case-Smith et al., 1998; Yang et al., 2015). Dexterity has two main subcategories: manual dexterity and fine-motor dexterity. Manual dexterity refers to gross-motor grasp and release of objects with the hands, whereas fine-motor dexterity refers to in-hand manipulation of objects (Yancosek & Howell, 2009). Measures of dexterity give an indication of upper-limb ability and disability (Eliasson et al., 2006; Gunel et al., 2009), and therefore, the evaluation of dexterity is important for clinical and research settings to enable an understanding of neuromotor function (Tesio et al., 2016; Yang et al., 2015).

Valid and reliable clinical measures of manual and fine-motor dexterity have existed for many years (Yancosek & Howell, 2009). For example, the Purdue Pegboard Test (PPT), an assessment of unimanual and bimanual finger and hand dexterity, was first studied for its validity and reliability in 1948 (Tiffin & Asher, 1948), and the BBT, an assessment of manual dexterity, in 1985 (Mathiowetz et al., 1985). Many tests of dexterity can demonstrate high levels of reliability (including retest, inter-rater, and internal consistency) and validity (including criterion, construct, discriminant, face, and content) (Yancosek & Howell, 2009). As such, these standardized tests of dexterity can be used with confidence for a wide range of clinical and non-clinical groups, both in research and applied settings.

Despite the well-established psychometric properties of dexterity assessments, these tests present challenges in their administration and/or availability, both of which can limit the feasibility or practicality of assessment, especially when repeated numerous times. Foremost, these clinical tests are required to be conducted in-person by a practitioner to ensure standardized administration, accurate recording of completion time and error rates, and that no artifacts (e.g., poor attention to task instructions) influence performance. The requirement for in-person administration can limit the practicality and usability of these tests, as well as the accuracy of assessment when face-to-face administration is not possible (Ostrowska et al., 2021). In both clinical practice and research, there is often a case or need for remote assessment of motor function, a point that the COVID-19 pandemic has clearly demonstrated (Bettger et al., 2020; A. C. Lee, 2020; Mantovani et al., 2020). Furthermore, access to valid and reliable assessments of dexterity in the home or remote settings can enhance the clinician’s ability to monitor progress of recovery and adjust treatment regimes and may provide more equitable access across demographics and cultures (Pitchford & Outhwaite, 2016).

In addition to their clinical applications, dexterity assessments have shown ecological relevance in manual handling professions and workplace compensation cases (Yancosek & Howell, 2009). With the universal use of technology such as computers, tablets, and smartphones, targeted finger tapping has become an important, ecologically valid skill in a wide variety of contexts over the lifespan (e.g., in schooling, the workplace, and daily living), and one shown to correlate with general manual ability (Kizony et al., 2016). Validity and reliability of tapping assessments have been shown in the classification of motor impairment across a range of groups, including Paralympic athletes (Hogarth et al., 2019) and people with Parkinson’s disease (C. Y. Lee et al., 2016; Mitsi et al., 2017; Růžička et al., 2016; Trager et al., 2020; Wissel et al., 2017), stroke (Tomisová & Opavský, 2009), and peripheral nerve damage (Zhang et al., 2018). Furthermore, the common use of tapping tasks in tablet-based rehabilitation programs (M. Lee et al., 2021; van Beek et al., 2020) and functional neuroimaging research (Witt et al., 2008) demonstrates the practical relevance of these tasks. Together, touchscreen-based tapping tests of dexterity have obvious face validity in the 21st century; however, careful validation of these types of tasks is necessary to ensure appropriate clinical and research use.

Tablet-based applications for rehabilitation have become commonplace and can play an important complementary role in the treatment of various brain-related conditions including stroke (Ameer & Ali, 2017; Kizony et al., 2016; M. Lee et al., 2021; Pugliese et al., 2018, 2019; Rogers et al., 2019; Wilson et al., 2021), acquired brain injury (Rogers, Mumford, et al., 2021), and multiple sclerosis (van Beek et al., 2020). In the case of training, these tablet-based programs involve a range of tapping, grasping, and/or pinching actions that are embedded in engaging game-like tasks to encourage dexterity rehabilitation. Interventions based on these types of tasks have shown sound feasibility (Ameer & Ali, 2017; Kizony et al., 2016; Rogers, Mumford, et al., 2021; van Beek et al., 2020), promising efficacy for improving upper-limb function (Ameer & Ali, 2017; Rogers et al., 2019; Wilson et al., 2021), and neuromuscular facilitation effects (Larsen et al., 2016). Further, in-home, tablet-based rehabilitation can be more effective than a standardized, active-control therapy like GRASP (Wilson et al., 2021). However, programs may be less acceptable if the tablet-based programs do not appropriately meet the cognitive and motor ability of users over time (Givon Schaham et al., 2018; Pugliese et al., 2018, 2019). Despite the promise of tablet-based rehabilitation interventions, clinical use of tablet-based assessment tools has been far more limited compared with traditional in-person testing.

Although progress has been made in tablet-based assessment of upper-limb skill (Block et al., 2019; de Souza et al., 2019; Hoseini et al., 2015; Kizony et al., 2016; Liu et al., 2021; Mitsi et al., 2017; Mollà-Casanova et al., 2021; Pitchford & Outhwaite, 2016; Wissel et al., 2017), these research tools have either not been appropriately validated against “gold-standard” assessments (de Souza et al., 2019; Kizony et al., 2016; Liu et al., 2021; Pitchford & Outhwaite, 2016) or are not appropriate for unsupervised administration (Block et al., 2019; Hoseini et al., 2015). For example, a tablet version of the Motor Function Measure (Vuillerot et al., 2013) was developed by de Souza et al. (2019), but the validity of the tablet scores against therapist scores was only presented for 3 of 37 participants. Similarly, Kizony et al. (2016) evaluated the usability of a tablet app for stroke rehabilitation, but did not report any reliability or validity outcomes.

Three recent developments in tablet-based dexterity assessment do provide some promising validation data. The iMotor two-target task, developed by Apptomics, is administered on a 7-inch touchscreen tablet and requires users to alternately tap between two targets as fast and accurately as possible for 30 seconds. The iMotor task has shown good-to-excellent test–retest reliability (r = .85–.96), moderate correlation with Unified Parkinson’s Disease Rating Scale scores (UPDRS; r = .35–.55), and high predictive validity (area under the curve [AUC] = 83%–86%) (Mitsi et al., 2017; Wissel et al., 2017). A similar task developed by C. Y. Lee et al. (2016) requires fast tapping between two targets for 20 seconds and has also been shown to share a moderate correlation with the motor subscale of the UPDRS (r = .5). For a series of rapid tapping and oculo-manual coordination tests presented on a 12-inch touchscreen tablet, Mollà-Casanova et al. (2021) reported a strong correlation with the BBT (r = .70) and excellent intra- and inter-rater reliability (intraclass correlation coefficient [ICC] = .90–.96) for a cohort of stroke survivors. The rapid tapping task requires users to tap the screen with one finger as many times as possible in 10 seconds, whereas the oculo-manual coordination task requires users to touch a 4 × 8 grid of targets as fast as possible. Although these tasks provide some evidence for the reliability and validity of touchscreen-based tapping assessments, the scope of the validation data was limited. The iMotor task only appears to have been used in Parkinson’s cohorts, so it is unclear if these tasks are appropriate for other clinical and healthy populations. The tapping task used by Mollà-Casanova et al. (2021) demonstrated a strong correlation with the BBT, but had a weaker correlation with the Nine-Hole Peg Test, which requires a higher degree of fine-motor control.
The lack of in-hand object manipulation remains a potential issue with touchscreen tapping assessments, particularly if tasks are intended to assess fine-motor dexterity. Furthermore, the above tasks employ simple motor responses that require very little cognitive involvement, which does not align well with cognitive-motor integration requirements of effective rehabilitation programs or common daily tasks requiring both cognition and dexterity (Rogers, Jensen, et al., 2021; Rogers, Mumford, et al., 2021). Consequently, to inform clinical and research use, there is a need to assess the concurrent validity of tapping tasks with varying degrees of cognitive involvement against measures of both manual and fine-motor dexterity.

To meet this need for an accessible and portable test of dexterity (ideally suited to unsupervised, in-home administration that complements in-person therapy and assessment), the aim of this study is to assess the concurrent validity and test–retest reliability of a newly developed touchscreen-based test of dexterity among healthy young adults. The “MoTap” test was designed as part of the ElementsDNA (or EDNA™) rehabilitation system (Rogers et al., 2019; Rogers, Jensen, et al., 2021), programmed using Unity software (Unity Technologies, San Francisco, USA), and administered on a 22-inch touchscreen device (Elo™). The test suite comprises three different tapping tasks that vary in the complexity of movement and cognitive involvement: the Two-point Tap, the Radial Tap, and the Go/no-go Tap (see section “Method” for more detail). The tasks were designed to meet the speed and accuracy demands of established in-person assessments of dexterity. The Go/no-go Tap was designed to provide a more cognitively challenging task, aligning with the cognitive-motor requirements of tablet-based rehabilitation programs (Rogers et al., 2019; Rogers, Mumford, et al., 2021).

Concurrent validity of the EDNA™MoTap will be assessed against the well-established PPT and BBT. It is hypothesized that the Two-point Tap will achieve r > .6 with the unimanual PPT tasks, r > .5 with the two bimanual PPT tasks (i.e., both hands and assembly tasks), and r > .7 with the BBT. It is expected that the Radial Tap will achieve r > .6 with the unimanual PPT tasks, r > .5 with the bimanual PPT tasks, and r > .7 with the BBT. Finally, it is expected that the Go/no-go Tap will achieve r > .6 with the unimanual PPT tasks and the BBT, and r > .5 with the bimanual PPT tasks. Test–retest reliability of the EDNA™MoTap will be assessed with repeated measurement after a 1- to 2-week interval. It is hypothesized that the Two-point Tap will achieve ICC > .9 for retest reliability, the Radial Tap will achieve ICC > .9 for retest reliability, and the Go/no-go Tap will achieve ICC > .75 for retest reliability.

Method

Ethics Information

The study protocol complies with ethical regulations and has been approved by the Australian Catholic University Human Research Ethics Committee (application ID: 2021-131E). Written informed consent will be obtained from all participants prior to participation in the research. Informed consent for ongoing participation will also be obtained prior to participation at time-point 2. Participants will not be compensated for their participation in the study.

Sampling Plan

Power Analysis

For concurrent validity, a sample size calculation was completed using the pwr package (Champely, 2020) (see section “Code Availability”). There are no previous validations of tablet-based tapping tasks in healthy adults; however, similar tapping type tasks have shown moderate correlations with motor performance for stroke survivors and those with Parkinson’s Disease (r = .35–.70; Mollà-Casanova et al., 2021; Wissel et al., 2017). An alpha level of .05 and power of .8 were adopted. Although r > .6 will be considered acceptable for healthy adults, r = .4–.6 will be considered to provide acceptable support for subsequent validation studies in clinical samples. Therefore, this study will be powered to detect criterion test correlation of r = .40, requiring a minimum sample of 46 participants. For test–retest reliability, a sample size calculation was completed using the ICC.Sample.Size package (Rathbone et al., 2015) (see section “Code Availability”). Similar two-point tapping tasks have demonstrated excellent test–retest reliability for stroke survivors and those with Parkinson’s Disease (r = .85–.96; Mollà-Casanova et al., 2021; Wissel et al., 2017). Again, an alpha level of .05 and power of .8 were adopted. Assuming a 10% attrition rate, a null reliability with ICC = .7 and expected reliability with ICC = .9, a minimum sample of 26 participants is required.
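The sample size for the correlation analysis can be approximated by hand. The following is a minimal Python sketch, not the authors' R code: it assumes a two-sided test, uses a Fisher z approximation to power, and replaces the exact t quantile with a Cornish-Fisher series. The function names (`t_quantile`, `correlation_power`, `required_n`) are hypothetical.

```python
from math import atanh, sqrt
from statistics import NormalDist

_STD_NORMAL = NormalDist()


def t_quantile(p, df):
    """Approximate Student-t quantile (Cornish-Fisher series; adequate for df >= 10)."""
    z = _STD_NORMAL.inv_cdf(p)
    return (
        z
        + (z**3 + z) / (4 * df)
        + (5 * z**5 + 16 * z**3 + 3 * z) / (96 * df**2)
        + (3 * z**7 + 19 * z**5 + 17 * z**3 - 15 * z) / (384 * df**3)
    )


def correlation_power(r, n, alpha=0.05):
    """Approximate two-sided power to detect a population correlation r with n pairs."""
    df = n - 2
    t_crit = t_quantile(1 - alpha / 2, df)
    r_crit = sqrt(t_crit**2 / (t_crit**2 + df))  # smallest significant |r|
    z_r = atanh(r) + r / (2 * (n - 1))           # Fisher z with a small-sample bias term
    z_crit = atanh(r_crit)
    scale = sqrt(n - 3)
    upper = _STD_NORMAL.cdf((z_r - z_crit) * scale)
    lower = _STD_NORMAL.cdf((-z_r - z_crit) * scale)
    return upper + lower


def required_n(r, power=0.80, alpha=0.05, start=10):
    """Smallest n (searching upward from `start`) whose approximate power reaches the target."""
    n = start
    while correlation_power(r, n, alpha) < power:
        n += 1
    return n


# Design values stated in the text: r = .40, alpha = .05, power = .80
print(required_n(0.40))
```

Under these assumptions the search should stop at the 46 participants reported above; a different approximation (for example, one without the bias term) can differ by one participant.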

Participant Recruitment

To account for 5% data loss, a minimum sample of 49 participants will be recruited. Participants will be conveniently recruited from the staff and student cohorts of the Australian Catholic University. Advertisements calling for participants will be distributed via unit homepages, lead author visits to lectures, and via word-of-mouth. As per ethical approval, advertisements will briefly outline participation requirements, will make voluntary participation clear, and will explicitly note that participation (or non-participation) will not result in any advantage or disadvantage to participants. Participant recruitment and data collection are planned to occur between February 2022 and September 2022.

Inclusion and Exclusion Criteria

Participants will be eligible for inclusion if they are typically developing and aged 19–35 years. Participants will be ineligible for inclusion if they have a neurological, developmental, or motor disorder or impairment, have a recent upper-limb injury, or are unable to understand the instructions of the assessment tasks. Participant data will be excluded from the analyses if there are missing values on a per-outcome basis, but participant data will be included for all analyses with complete data.

Procedures

Blinding

Data collection and analyses will not be performed blind to the conditions of the experiments.

Description of EDNA™ MoTap Instrument

EDNA™MoTap includes three tasks, varying in complexity of movement and cognitive involvement. The Two-point Tap has been developed in accordance with a previously developed tablet-based tapping task (Mitsi et al., 2017; Wissel et al., 2017), whereas the Radial Tap and the Go/no-go Tap tasks have been developed to increase the movement difficulty and cognitive demands, respectively.

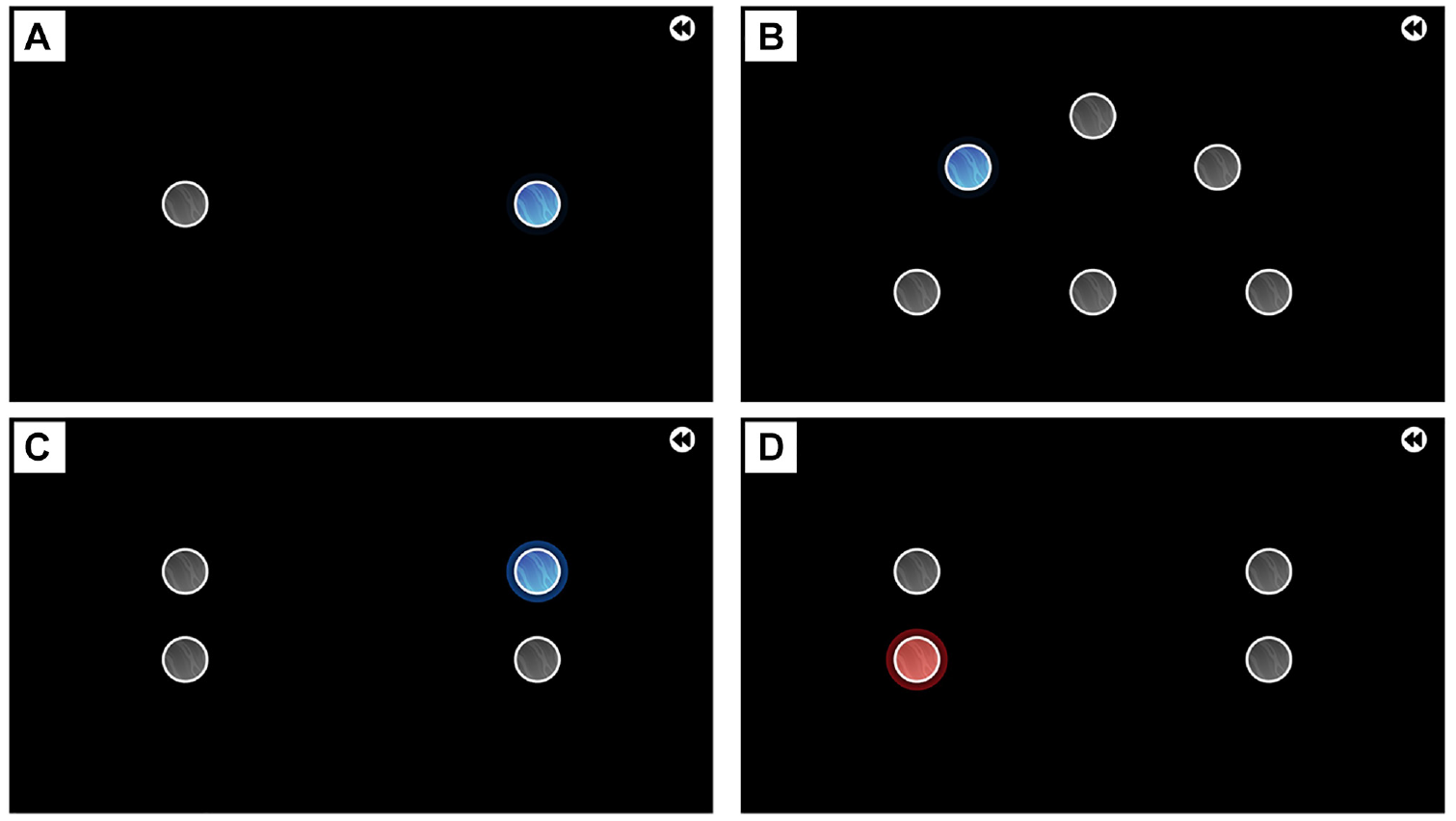

The Two-point Tap (Figure 1A) involves tapping between two stationary circles as quickly and accurately as possible. The two circles are separated by 24 cm and both have a 3-cm diameter. The aim of the task is to complete as many accurate taps as possible in a 30-second trial. Outcome measures include the total number of taps, the total number of accurate taps, total number of inaccurate taps, mean inter-tap duration, and inter-tap duration variability.
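To make the outcome measures concrete, the following Python sketch derives them from a hypothetical tap log. The log format (time in seconds, x and y in centimetres), the coordinate origin, the scoring rule (a tap is accurate when it lands inside a circle other than the one hit last), and the use of the standard deviation as the variability measure are all assumptions made for illustration; the EDNA™MoTap scoring rules are not described here.

```python
from math import hypot
from statistics import mean, stdev

TARGET_RADIUS_CM = 1.5                      # circles have a 3-cm diameter
TARGETS_CM = [(-12.0, 0.0), (12.0, 0.0)]    # centres 24 cm apart; origin is an assumption


def two_point_outcomes(taps):
    """Summarise a trial. `taps` is a chronological list of (time_s, x_cm, y_cm)."""
    accurate = 0
    last_hit = None  # index of the circle most recently hit
    for _, x, y in taps:
        hit = next(
            (i for i, (tx, ty) in enumerate(TARGETS_CM)
             if hypot(x - tx, y - ty) <= TARGET_RADIUS_CM),
            None,
        )
        # Assumed rule: accurate only if inside a circle and alternating sides
        if hit is not None and hit != last_hit:
            accurate += 1
            last_hit = hit
    times = [t for t, _, _ in taps]
    intervals = [b - a for a, b in zip(times, times[1:])]
    return {
        "total_taps": len(taps),
        "accurate_taps": accurate,
        "inaccurate_taps": len(taps) - accurate,
        "mean_inter_tap_s": mean(intervals) if intervals else None,
        "sd_inter_tap_s": stdev(intervals) if len(intervals) > 1 else None,
    }


# Invented example: the third tap misses the left circle
example = [(0.0, -12.0, 0.0), (0.5, 12.2, 0.3), (1.1, -11.0, 2.5),
           (1.5, -12.1, 0.2), (2.0, 12.0, 0.0)]
print(two_point_outcomes(example))
```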

Figure 1. Images of the EDNA™MoTap Tapping Tasks. (A) Two-Point Tap Task, (B) Radial Tap Task, (C) Go/No-Go Tap Task Where Participant Should Tap the Blue Circle, and (D) Go/No-Go Tap Task Where Participant Should Tap the Gray Circle Above the Red Circle.

The Radial Tap (Figure 1B) displays five 3 cm circles spread around a home-base circle in a 180° radial pattern. Radial circles are positioned 12 cm from the home circle. The task requires sequentially tapping the radial circles, separated by the home circle, as quickly and accurately as possible. The aim of the task is to complete as many accurate taps as possible in a 30-second trial. Outcome measures include the total number of taps, the total number of accurate taps, total number of inaccurate taps, mean inter-tap duration, and inter-tap duration variability.
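The layout described above can be sketched in a few lines of Python. This is an illustration only: it assumes the five radial circles are evenly spaced across the 180° arc (endpoints included), places the home circle at the origin, and assumes each cycle starts on a radial circle. None of these details are specified in the task description.

```python
from math import cos, radians, sin


def radial_targets(n_targets=5, distance_cm=12.0, arc_deg=180.0):
    """Centres of the radial circles relative to a home circle at (0, 0)."""
    step = arc_deg / (n_targets - 1)  # even spacing is an assumption
    return [
        (distance_cm * cos(radians(i * step)), distance_cm * sin(radians(i * step)))
        for i in range(n_targets)
    ]


def expected_sequence(n_targets=5, cycles=1):
    """Tap order: each radial circle in turn, separated by a return to home."""
    sequence = []
    for _ in range(cycles):
        for i in range(n_targets):
            sequence.extend([f"radial_{i}", "home"])
    return sequence


for x, y in radial_targets():
    print(f"{x:6.2f} cm, {y:6.2f} cm")
print(expected_sequence())
```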

The Go/no-go Tap (Figure 1C and 1D) combines rapid tapping between circles with a go/no-go paradigm (Wessel, 2018). Left-side and right-side pairs of circles are separated horizontally by 24 cm, and pairs are separated vertically by 6 cm. Circles have a 3-cm diameter. The participant is required to tap between the left-side and right-side pairs of circles as quickly and accurately as possible. The correct circle to tap is determined by the color of the illuminated circle. If the circle is blue, the blue circle is the correct circle to tap (Figure 1C). If the circle is red, the correct circle to tap is the gray circle on the same side of the screen (Figure 1D). Each circle is illuminated 10 times, resulting in a total of 40 taps per trial. To ensure a prepotent response to tap the blue circles, the proportion of blue (go) to red (no-go) trials for each circle is 8:2 (i.e., 20% no-go) (Wessel, 2018). Outcome measures include the total number of accurate taps, total number of inaccurate taps, mean inter-tap duration, inter-tap duration variability, total number of error taps (i.e., accurate taps on the incorrect target or no-go target), response time to go (i.e., blue) circles, and response time to no-go (i.e., red) circles.
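One way to build a trial schedule that satisfies these constraints (four circles, 10 illuminations each, 20% no-go per circle, alternating sides) is sketched below in Python. The circle names, the shuffling scheme, and the starting side are hypothetical; the EDNA™MoTap source code is not public, so this is not the program's implementation.

```python
import random

# Hypothetical labels for the two circles on each side of the screen
CIRCLES = {
    "left": ["left_top", "left_bottom"],
    "right": ["right_top", "right_bottom"],
}


def go_nogo_schedule(seed=None, per_circle=10, nogo_per_circle=2, first_side="left"):
    """Return 40 (circle, kind) trials that alternate between sides."""
    rng = random.Random(seed)
    per_side = {}
    for side, circles in CIRCLES.items():
        trials = []
        for circle in circles:
            trials += [(circle, "no-go")] * nogo_per_circle
            trials += [(circle, "go")] * (per_circle - nogo_per_circle)
        rng.shuffle(trials)  # random order within a side
        per_side[side] = trials
    second_side = "right" if first_side == "left" else "left"
    schedule = []
    for first, second in zip(per_side[first_side], per_side[second_side]):
        schedule += [first, second]
    return schedule


def correct_target(circle, kind):
    """Go (blue): tap the lit circle. No-go (red): tap the other circle on the same side."""
    if kind == "go":
        return circle
    side = circle.split("_")[0]
    return next(c for c in CIRCLES[side] if c != circle)


schedule = go_nogo_schedule(seed=1)
print(len(schedule), schedule[:4])
```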

Demographic Information

Prior to completing the assessment tasks, participants will complete a short demographics survey. Survey responses will provide information relating to age, sex, socio-economic status (SES), movement disorders or impairments, and familiarity with touchscreen and other electronic devices (e.g., computers, mobile phones). Familiarity with touchscreen devices will be measured using a 4-point Likert-type scale ranging from 1 (very unfamiliar) to 4 (very familiar). Daily touchscreen use will be measured as the number of hours per day spent using a touchscreen device, with 14 response options ranging from 0 hours per day to >12 hours per day.

Concurrent Validity Procedures

Concurrent validity of the three EDNA™MoTap tasks will be assessed for fine-motor and manual dexterity. The Purdue Pegboard Test (PPT) will be used to assess concurrent validity for fine-motor dexterity. The BBT will be used to assess concurrent validity for manual dexterity. Both of these assessments have well-established reliability and validity for a range of healthy and clinical populations (Yancosek & Howell, 2009) and can be considered as “gold-standard” criterion assessments of dexterity.

Upon arrival for session 1 (T1), the procedures for the session will be explained to participants. Participants will first complete the criterion assessments (i.e., PPT and BBT) in a randomized order, followed by the three EDNA™MoTap tasks in a randomized order. Randomization of criterion and EDNA™MoTap task completion has been generated using the randomizeR package (Uschner et al., 2018) (see section “Data Availability”). Participants will be allocated a randomized task sequence in sequential order of participation.
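The allocation logic can be illustrated with a short Python sketch. The study generated its sequences with the randomizeR package in R; the version below is a simplified stand-in that assumes simple (unrestricted) shuffling, and the seed value and function name are arbitrary.

```python
import random

CRITERION_TESTS = ["PPT", "BBT"]
MOTAP_TASKS = ["Two-point Tap", "Radial Tap", "Go/no-go Tap"]


def make_sequences(n_participants=49, seed=2022):
    """Pre-generate one task order per participant: criterion tests, then MoTap tasks."""
    rng = random.Random(seed)  # fixed seed so the allocation list is reproducible
    sequences = []
    for _ in range(n_participants):
        criterion = rng.sample(CRITERION_TESTS, k=len(CRITERION_TESTS))
        motap = rng.sample(MOTAP_TASKS, k=len(MOTAP_TASKS))
        sequences.append(criterion + motap)
    return sequences


# The i-th enrolled participant receives the i-th pre-generated sequence
sequences = make_sequences()
print(sequences[0])
```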

Relevant assessments from the PPT and BBT will be completed in accordance with standard testing procedures (Mathiowetz et al., 1985; Tiffin & Asher, 1948; Yancosek & Howell, 2009). For the PPT, participants will complete a 30-second trial with their dominant hand first, followed by their non-dominant hand, then with both hands. Finally, participants will complete the 60-second (bimanual) assembly task. To ensure reliable measurement, participants will complete a total of three trials for each task, with the mean of scores to be used for analyses (Yancosek & Howell, 2009). For the BBT, participants will complete a single 60-second trial with their dominant hand first, followed by their non-dominant hand.

For the EDNA™MoTap, participants will have each task explained to them verbally prior to a practice period. The practice period will last 20 seconds, after which the participant will be asked if they understand the task. If the participant is unclear on the task, they will be given a second practice period, after which they will complete their trials. Participants will complete the EDNA™MoTap tasks with their dominant hand first, followed by their non-dominant hand, before completing the next task. Participants will be given a break of at least 1 minute between each task. After completing all EDNA™MoTap tasks with each hand, they will repeat all tasks again, and the average of the two trials will be used for analysis.

Test–Retest Reliability Procedures

Participants will attend a second session (T2) within 1–2 weeks following T1. Upon arrival at T2, the same procedures relating to EDNA™MoTap will be explained to participants. Given that participants will be familiar with the tasks, they will be given a maximum of one practice period for each task. Participants will complete the three EDNA™MoTap tasks in the same order as they completed them at T1. As with T1, participants will repeat all tasks a second time, and the average of the two trials will be used for analysis.

Analysis Plan

Pre-Processing

All data processing and analyses will be conducted using R (R Core Team, 2021) in the RStudio environment (RStudio Team, 2021). Data will be checked for completeness, and incomplete cases excluded according to the inclusion and exclusion criteria. Cases with outliers, defined as values more than 3 SD above or below the mean, will be removed on a per-outcome basis. Normality will be assessed using Shapiro–Wilk tests. Scatterplots of each EDNA™MoTap outcome measure and (a) the total number of pegs placed for each task (PPT) and (b) the total number of blocks moved (BBT) will be used to visually determine the linearity of relationships.
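The per-outcome screening rule can be sketched as follows. This is a minimal Python illustration, not the analysis code (which is written in R): it assumes missing values are stored as `None`, that the mean and sample SD are computed once on the complete values, and that the function name is hypothetical.

```python
from statistics import mean, stdev


def screen_outcome(values, n_sd=3.0):
    """Drop missing values, then drop values more than n_sd SDs from the mean."""
    complete = [v for v in values if v is not None]
    centre, spread = mean(complete), stdev(complete)
    return [v for v in complete if abs(v - centre) <= n_sd * spread]


# Invented scores for one outcome: 30 plausible values, one missing, one extreme
scores = [50 + (i % 5) for i in range(30)] + [None, 500]
kept = screen_outcome(scores)
print(len(kept), max(kept))
```

Because the rule is applied per outcome, a participant dropped from one outcome can still contribute to the others.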

Concurrent Validity Analyses

Pearson’s correlation (for linear relationships) or Spearman’s correlation (for monotonic relationships) will be calculated to assess the concurrent validity of outcome measures. Correlation coefficients and their 95% confidence intervals will be interpreted as follows: 0.00–0.10 = “negligible,” 0.10–0.39 = “weak,” 0.40–0.69 = “moderate,” 0.70–0.89 = “strong,” and 0.90–1.00 = “very strong” (Akoglu, 2018; Schober et al., 2018) (see Supplementary Material for more information). Furthermore, the coefficient of determination (R²) will be provided to evaluate the proportion of variance accounted for by EDNA™MoTap outcomes (Ozer, 1985).
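The calculations in this section can be sketched in Python using only the standard library. This is illustrative rather than the authors' R code. It assumes a Fisher z interval (exact for neither coefficient and only a rough approximation for Spearman's), treats the interpretation bands as half-open so that a value of exactly 0.10 reads as “weak”, and uses hypothetical function names.

```python
from math import atanh, sqrt, tanh
from statistics import NormalDist, mean


def pearson_r(x, y):
    mx, my = mean(x), mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / sqrt(sxx * syy)


def average_ranks(values):
    """Ranks starting at 1, with tied values sharing their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        for k in range(i, j + 1):
            ranks[order[k]] = (i + j) / 2 + 1
        i = j + 1
    return ranks


def spearman_rs(x, y):
    """Spearman's coefficient is Pearson's r computed on the ranks."""
    return pearson_r(average_ranks(x), average_ranks(y))


def fisher_ci(r, n, level=0.95):
    """Approximate confidence interval via the Fisher z transformation (needs |r| < 1)."""
    half_width = NormalDist().inv_cdf(0.5 + level / 2) / sqrt(n - 3)
    return tanh(atanh(r) - half_width), tanh(atanh(r) + half_width)


def interpret(r):
    size = abs(r)
    if size < 0.10:
        return "negligible"
    if size < 0.40:
        return "weak"
    if size < 0.70:
        return "moderate"
    if size < 0.90:
        return "strong"
    return "very strong"


# Invented data for illustration only
x, y = [1, 2, 3, 4, 5], [2, 4, 5, 4, 5]
r = pearson_r(x, y)
print(round(r, 3), round(r**2, 3), interpret(r), fisher_ci(r, len(x)))
```

With small samples the interval is wide, which is why interpretation is tied to the confidence limits rather than to the point estimate alone.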

Test–Retest Reliability Analyses

For each outcome measure, ICCs will be used to provide a relative assessment of reliability, whereas Standard Error of Measurement (SEM) will be provided as an absolute assessment of reliability (Weir, 2005). Using the irr package (Gamer et al., 2019), ICC (3, k) estimates and their 95% confidence intervals will be calculated based on mean-rating (i.e., average of repeat assessments within a given day), absolute-agreement, two-way mixed-effects models (Koo & Li, 2016). Interpretation of reliability will be based on the 95% confidence intervals of the ICC estimate as follows: 0.00–0.49 = “poor,” 0.50–0.74 = “moderate,” 0.75–0.89 = “good,” and 0.90–1.00 = “excellent” (Koo & Li, 2016) (see Supplementary Material for more information). SEM will be calculated as follows: SEM = SD√(1 − ICC), where SD is the standard deviation of scores from all participants (Weir, 2005).
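As an illustration of these quantities, the Python sketch below computes a mean-rating, absolute-agreement ICC from two-way ANOVA mean squares, following the formula given by Koo and Li (2016), and then the SEM. It is a simplified stand-in for the irr package: it assumes a complete participants-by-sessions table, omits the confidence interval, and takes SD as the standard deviation of all scores pooled across sessions. The function names are hypothetical.

```python
from math import sqrt
from statistics import mean, stdev


def icc_agreement_average(scores):
    """ICC for absolute agreement of the mean of k measurements.

    `scores` is a list of rows, one per participant, each holding k session scores.
    """
    n, k = len(scores), len(scores[0])
    grand = mean(v for row in scores for v in row)
    row_means = [mean(row) for row in scores]
    col_means = [mean(row[j] for row in scores) for j in range(k)]
    ss_rows = k * sum((m - grand) ** 2 for m in row_means)
    ss_cols = n * sum((m - grand) ** 2 for m in col_means)
    ss_total = sum((v - grand) ** 2 for row in scores for v in row)
    ss_error = ss_total - ss_rows - ss_cols
    ms_rows = ss_rows / (n - 1)                 # between-participant mean square
    ms_cols = ss_cols / (k - 1)                 # between-session mean square
    ms_error = ss_error / ((n - 1) * (k - 1))   # residual mean square
    return (ms_rows - ms_error) / (ms_rows + (ms_cols - ms_error) / n)


def standard_error_of_measurement(scores, icc):
    """SEM = SD * sqrt(1 - ICC), with SD pooled over all scores (an assumption)."""
    pooled_sd = stdev(v for row in scores for v in row)
    return pooled_sd * sqrt(1 - icc)


# Invented T1/T2 scores with a constant one-point shift between sessions
data = [[1, 2], [2, 3], [3, 4]]
icc = icc_agreement_average(data)
print(round(icc, 3), round(standard_error_of_measurement(data, icc), 3))
```

In the example, participants keep their rank order across sessions, yet the systematic shift between sessions lowers the absolute-agreement ICC below 1.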

Data Availability

All relevant data will be made publicly available upon acceptance for publication. Data will be de-identified to ensure participant confidentiality and pre-processed using the relevant code to ensure usability (see section “Code Availability”). Data will be stored on the project osf.io (https://osf.io/esjby), in the Data folder. The task randomization sequences are available within the Output folder.

Code Availability

Except for source code for the EDNA™MoTap program, which is protected as a commercial product, all code will be made publicly available upon acceptance of this work for publication. The code to be used is currently available on the project osf.io (https://osf.io/esjby), in the Code folder. Code used for sample size calculation is available in the sample.size.calculation.Rmd file. Code used to produce the task randomization sequence is available in the randomization.Rmd file. Code to be used for pre-processing, analyses, and visualization is available in the analyses.Rmd file.

Results

Participant Recruitment

Participants were recruited for this study between June 2022 and January 2024, which was longer than originally planned. The first group of participants (n = 25) were recruited as part of a student research project which concluded in September 2022. The second group of participants (n = 14) were recruited as part of another student research project which concluded in September 2023. During this time, older adult participants were also recruited; however, data for these participants are not reported here. The remaining participants (n = 7) were recruited between November 2023 and January 2024 to meet the minimum sample size requirement of 46 participants.

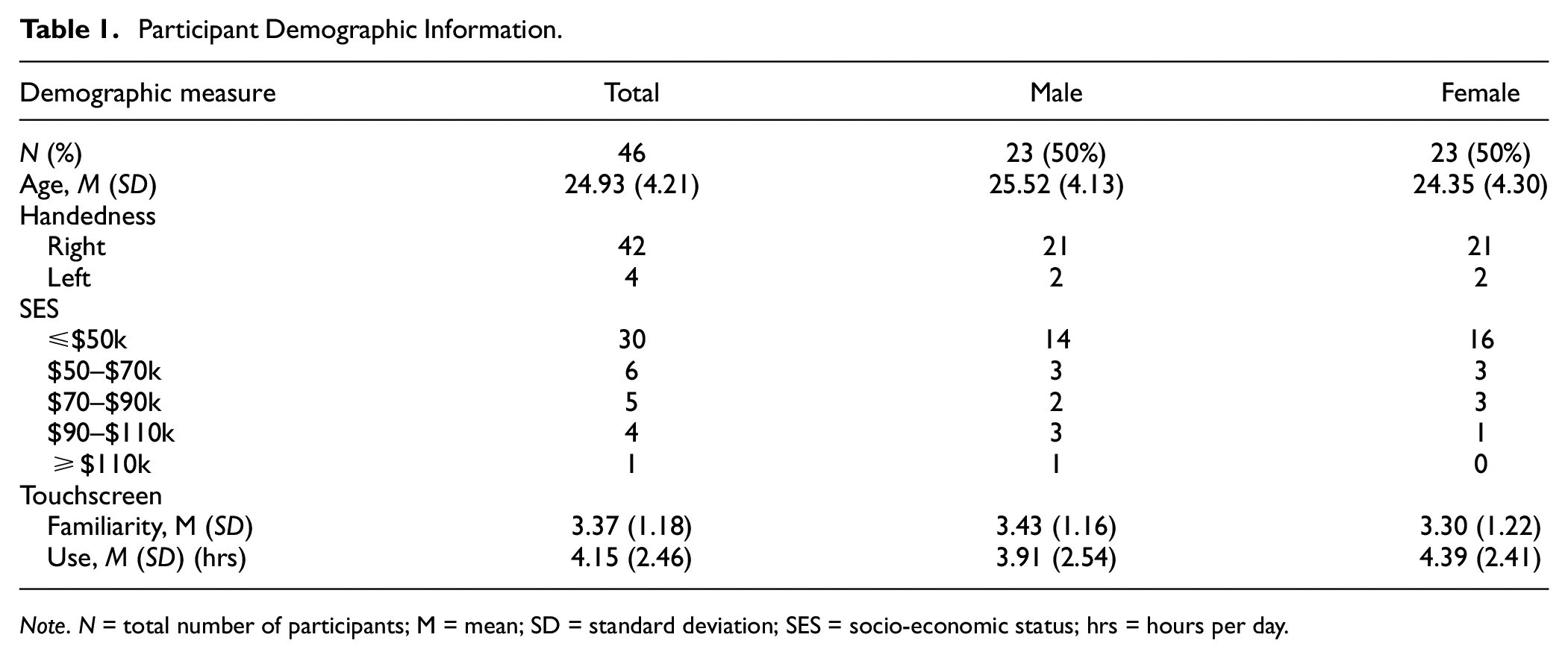

Participants

Participants were healthy adults (n = 46, 50% females) aged between 19 and 34 years (M = 24.93, SD = 4.21). Of the sample, 91% were right-handed. The sample were somewhat to very familiar with touchscreens (M = 3.37, SD = 1.18) and used touchscreens for a mean of 4.15 (SD = 2.46) hours per day. All participant characteristics are presented in Table 1.

Participant Demographic Information

Note. N = total number of participants; M = mean; SD = standard deviation; SES = socio-economic status; hrs = hours per day.

Data Output

All raw data output are available on the project osf.io (https://osf.io/esjby) in the Output folder.

Concurrent Validity

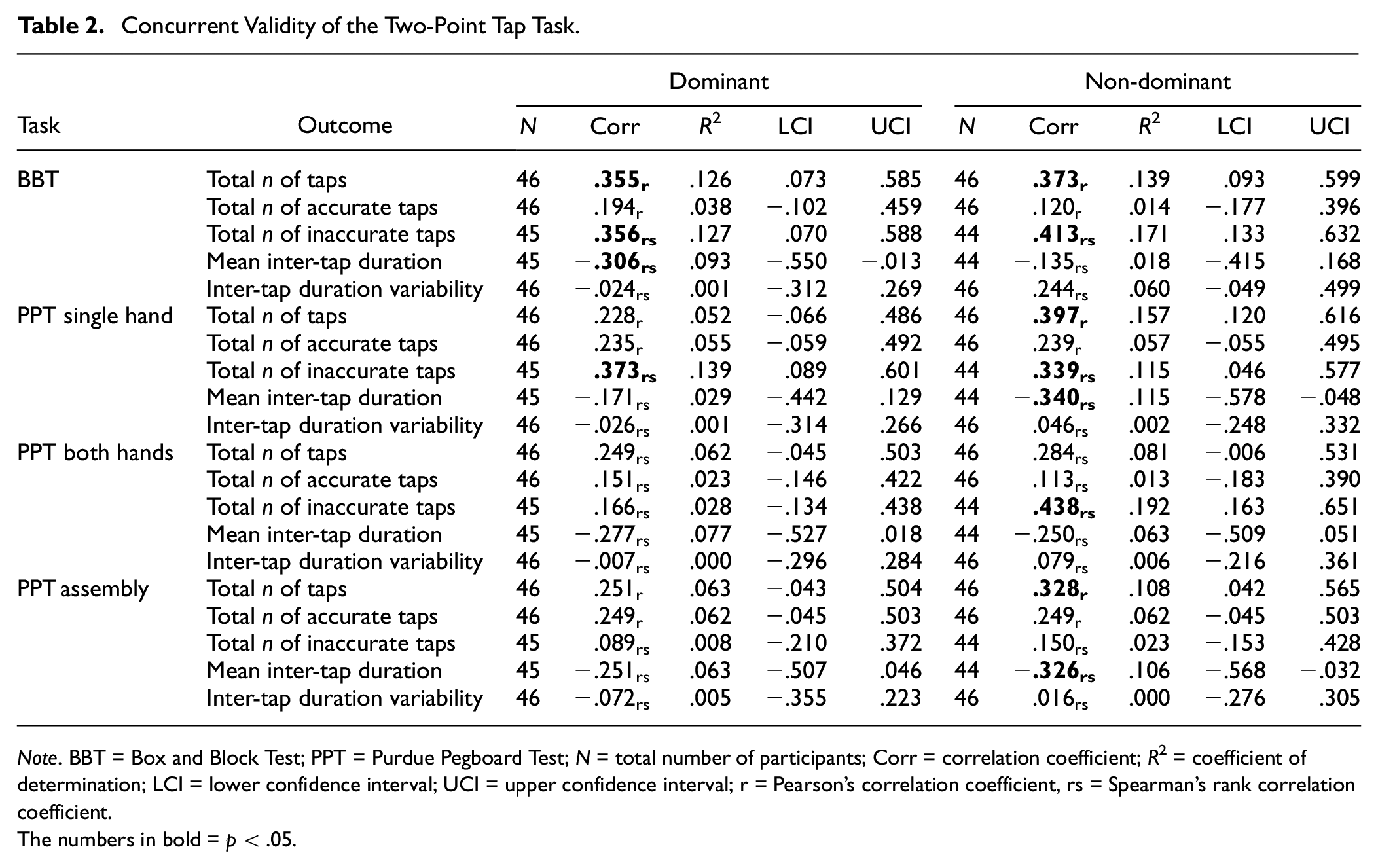

Two-Point Tap Task

Concurrent validity of the two-point tap task against the BBT showed significant weak-to-moderate correlations for both dominant and non-dominant hands for total number of taps (Dom: r = .355; non-Dom: r = .373) and total inaccurate taps (Dom: rs = .356; non-Dom: rs = .413) (Table 2). The two-point tap task had a significant association with the single-hand task of the PPT for both dominant and non-dominant hands only for the total number of inaccurate taps (Dom: rs = .373; non-Dom: rs = .339) (Table 2). There were no other significant associations between two-point tap outcomes and the BBT or PPT for both the dominant and non-dominant hand. These findings do not support the hypotheses, with the outcomes showing either a “weak” or “moderate” correlation between the two-point tap task and the criterion measures at best. As such, these findings suggest that the two-point tap task may not be a valid measure of fine-motor dexterity within the current sample.

Concurrent Validity of the Two-Point Tap Task

Note. BBT = Box and Block Test; PPT = Purdue Pegboard Test; N = total number of participants; Corr = correlation coefficient; R2 = coefficient of determination; LCI = lower confidence interval; UCI = upper confidence interval; r = Pearson’s correlation coefficient, rs = Spearman’s rank correlation coefficient.

The numbers in bold = p < .05.

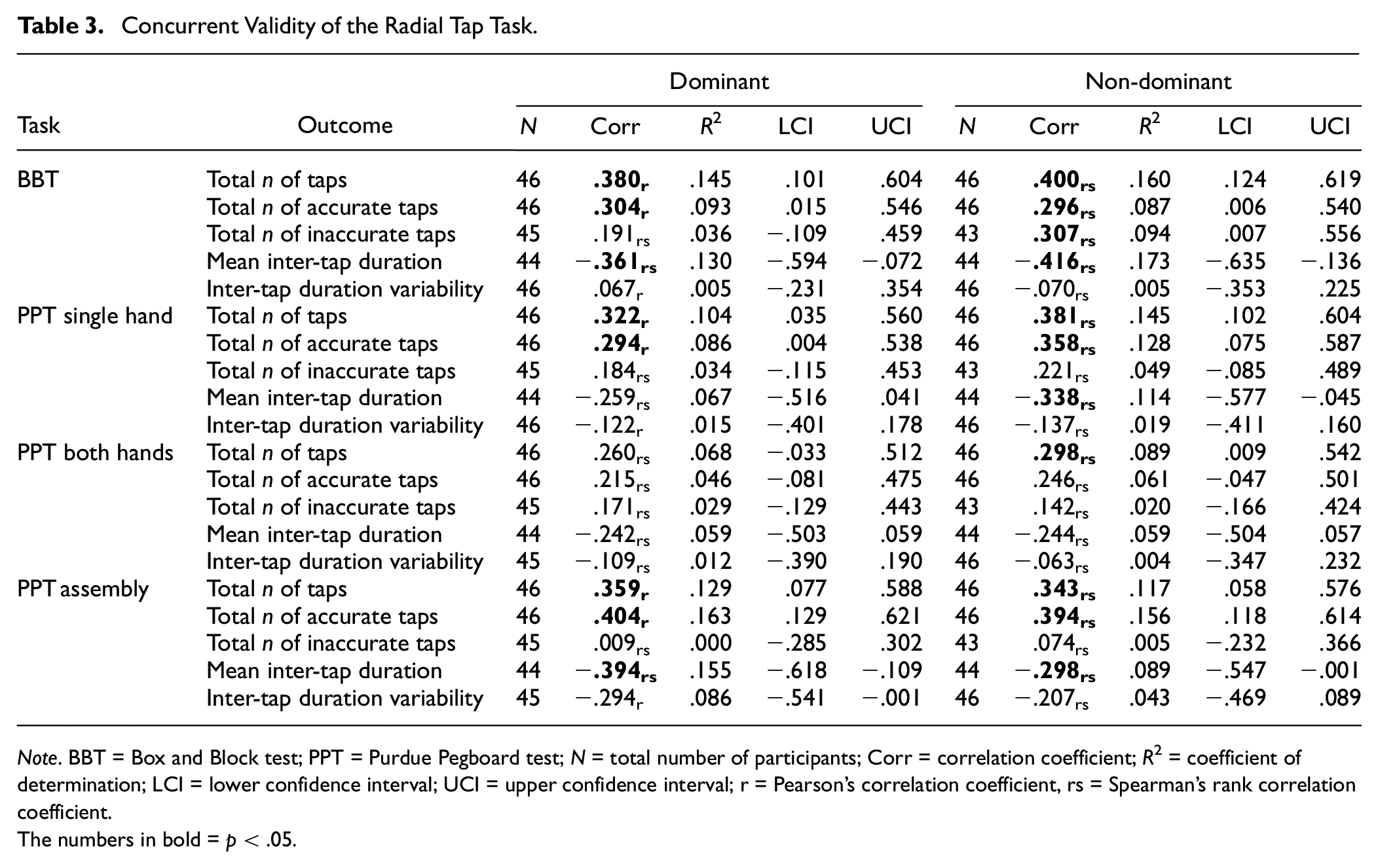

Radial Tap Task

The concurrent validity of the radial tap task (Table 3) against the BBT indicated significant weak-to-moderate associations for both the dominant and non-dominant hands for total number of taps (Dom: r = .380; non-Dom: rs = .400), total number of accurate taps (Dom: r = .304; non-Dom: rs = .296), and mean inter-tap duration (Dom: rs = −.361; non-Dom: rs = −.416). Significant weak-to-moderate associations were found between the PPT single-hand task and the total number of taps (Dom: r = .322; non-Dom: rs = .381) and total number of accurate taps (Dom: r = .294; non-Dom: rs = .358) and between the PPT assembly task and the total number of taps (Dom: r = .359; non-Dom: rs = .343), total number of accurate taps (Dom: r = .404; non-Dom: rs = .394), and mean inter-tap duration (Dom: rs = −.394; non-Dom: rs = −.298). The findings do not support the hypotheses, with findings showing either a “weak” or “moderate” correlation between the radial tap task and the criterion variables at best. As such, these findings suggest that the radial tap task may not be a valid measure of fine-motor dexterity within the current sample.

Concurrent Validity of the Radial Tap Task.

Note. BBT = Box and Block test; PPT = Purdue Pegboard test; N = total number of participants; Corr = correlation coefficient; R2 = coefficient of determination; LCI = lower confidence interval; UCI = upper confidence interval; r = Pearson’s correlation coefficient, rs = Spearman’s rank correlation coefficient.

The numbers in bold = p < .05.

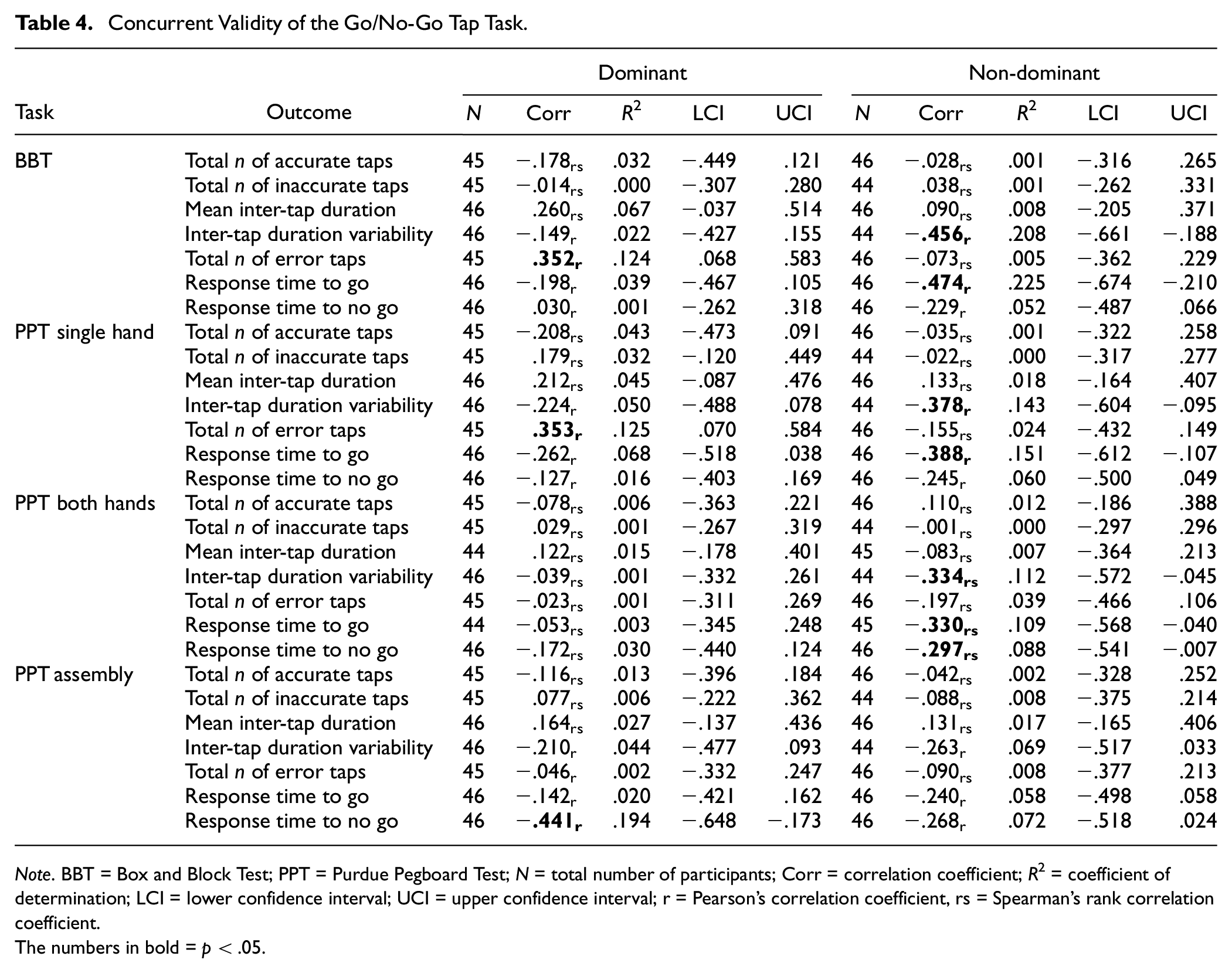

Go/No-Go Tap Task

For the Go/no-go tap task (Table 4), no outcome showed a significant association with the BBT or PPT for both the dominant and non-dominant hands. However, there were significant weak-to-moderate associations for some outcomes with either the dominant hand (e.g., total number of error taps against the BBT [r = .352] and PPT single hand [r = .353]) or the non-dominant hand (e.g., inter-tap duration variability against the BBT [r = −.456], PPT single hand [r = −.378], and PPT both hands [rs = −.334]). Despite the significant associations, these values fell below the predetermined cut-offs for the Go/no-go tap task to be considered a valid assessment of dexterity for the current sample; hence, the hypotheses were not supported.

Concurrent Validity of the Go/No-Go Tap Task.

Note. BBT = Box and Block Test; PPT = Purdue Pegboard Test; N = total number of participants; Corr = correlation coefficient; R2 = coefficient of determination; LCI = lower confidence interval; UCI = upper confidence interval; r = Pearson’s correlation coefficient, rs = Spearman’s rank correlation coefficient.

The numbers in bold = p < .05.

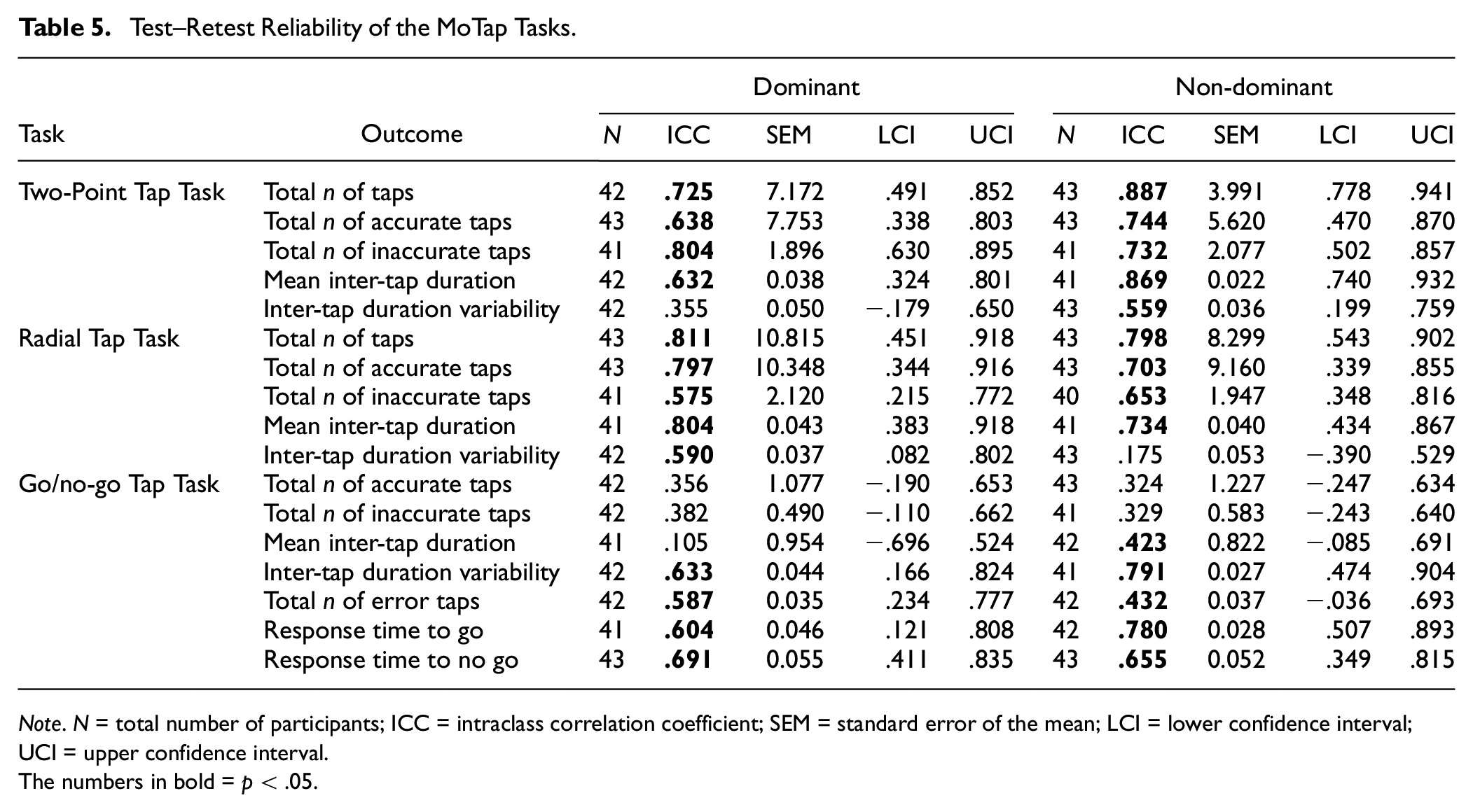

Test–Retest Reliability

Test–retest reliability of each EDNA™MoTap task is presented in Table 5. An acceptable ICC (i.e., > .75) was observed for both hands on the number of total taps for the radial tap task only (Dom: ICC = .811, non-Dom: ICC = .798). Some other tasks achieved acceptable ICC values for some outcomes with either the dominant or non-dominant hand (Table 5). Although the acceptable ICC criterion was not reached, ICC values were moderate for both hands for most outcomes across the three MoTap tasks. This includes ICC values > .5 for the total number of taps, total number of accurate taps, total number of inaccurate taps, and mean inter-tap duration for both the two-point and radial tap tasks. For the go/no-go task, ICC values were > .5 for both hands for the inter-tap duration variability, total number of error taps, response time to go, and response time to no-go outcomes.

Table 5. Test–Retest Reliability of the MoTap Tasks.

Note. N = total number of participants; ICC = intraclass correlation coefficient; SEM = standard error of the mean; LCI = lower confidence interval; UCI = upper confidence interval.

The numbers in bold = p < .05.
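
To make the ICC criterion concrete, the sketch below computes a two-way random-effects, absolute-agreement, single-measurement ICC from a participants × sessions score matrix. The choice of this ICC form, the function name, and the example scores are assumptions for illustration only; the form used in the study's analysis is not restated in this section, and the scores shown are hypothetical rather than study data.

```python
def icc_absolute_agreement(scores):
    """ICC(2,1): two-way random effects, absolute agreement, single measure.

    `scores` is a list of rows, one per participant, each holding that
    participant's score at every session (e.g., [test, retest]).
    """
    n = len(scores)     # participants
    k = len(scores[0])  # sessions
    grand = sum(sum(row) for row in scores) / (n * k)
    row_means = [sum(row) / k for row in scores]
    col_means = [sum(row[j] for row in scores) / n for j in range(k)]

    # Sums of squares from a two-way ANOVA without replication.
    ss_rows = k * sum((m - grand) ** 2 for m in row_means)
    ss_cols = n * sum((m - grand) ** 2 for m in col_means)
    ss_total = sum((x - grand) ** 2 for row in scores for x in row)
    ss_error = ss_total - ss_rows - ss_cols

    ms_rows = ss_rows / (n - 1)
    ms_cols = ss_cols / (k - 1)
    ms_error = ss_error / ((n - 1) * (k - 1))

    return (ms_rows - ms_error) / (
        ms_rows + (k - 1) * ms_error + k * (ms_cols - ms_error) / n
    )


# Hypothetical scores: every participant improves by one tap at retest.
shifted = [[1, 2], [2, 3], [3, 4]]
icc = icc_absolute_agreement(shifted)
print(round(icc, 3))  # 0.667
print(icc > 0.75)     # False
```

In this hypothetical case the rank order of participants is perfectly preserved, yet a uniform improvement between sessions lowers an absolute-agreement ICC below the > .75 criterion. This is one way in which a systematic practice effect could reduce test–retest reliability estimates.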

Discussion

This study aimed to assess the concurrent validity and test–retest reliability of the EDNA™ MoTap suite of tasks, evaluated against gold-standard dexterity assessments in healthy younger adults. Contrary to predictions, our results show that the MoTap tasks achieved only “weak” (0.10–0.39) to “moderate” (0.40–0.69) levels of concurrent validity, at best, and hence did not meet the pre-specified cut-offs for validity (correlation coefficient > .70). Indeed, only some outcomes for some tasks demonstrated levels of concurrent validity sufficient to justify investigations with clinical samples for either the dominant (i.e., total number of inaccurate taps on the radial tap task against the PPT assembly task) or non-dominant hands (i.e., inter-tap duration variability on the go/no-go task against the BBT). Acceptable test–retest reliability for both hands was shown only for the total number of taps outcome on the radial tap task; no outcome on the two-point tap or go/no-go tasks reached the criterion for both hands. The mixed findings in our study may reflect the somewhat homogeneous sample of healthy adults tested. We suggest that the case for touchscreen-based dexterity assessments remains encouraging and that future validation of the EDNA™ MoTap assessment should consider a more extensive familiarization phase and examine a wider cohort of participants varying in socioeconomic status (SES), health, and other correlates. These issues are discussed in detail below.

Although many of the findings reported in this study did not meet the pre-specified correlation coefficient cut-offs for the MoTap tasks, there is substantive empirical evidence suggesting touchscreen-based assessments can accurately measure dexterity across a range of settings. However, we need to be mindful that the generalizability of these findings is confined mainly to the assessment of manual dexterity (Rabah et al., 2022), dominant hand assessment (Angelucci et al., 2023), and clinical samples such as people with Parkinson’s disease (C. Y. Lee et al., 2016; Mitsi et al., 2017; Wissel et al., 2017) and stroke survivors (Mollà-Casanova et al., 2021). Moreover, there is little research to date assessing the remote and unsupervised application of these tools, despite the growing emphasis on improving accessibility and usability for patients and clinicians alike (Ostrowska et al., 2021; Pitchford & Outhwaite, 2016). Although the MoTap tasks feasibly lend themselves to remote assessment of dexterity, further adaptations are required to establish strong validity and test–retest reliability of the tasks in question.

The weak-to-moderate correlations between the MoTap assessments and traditional tasks (i.e., the BBT and PPT) may reflect the fine-motor endpoint control that must be enlisted when picking up and grasping small objects, a demand that is absent from tablet-based assessments involving finger touch only (Rabah et al., 2022). Previous studies have also noted that these differences may affect validity and, as such, they may contribute to the lack of high correlations in our findings despite significant associations (Elboim-Gabyzon & Danial-Saad, 2021; Rabah et al., 2022; Van Laethem et al., 2023). Much of the validation work has been conducted on neurological samples (with associated hand/finger impairment), where a wider range of abilities can be expected relative to our more homogeneous sample of healthy young adults (Elboim-Gabyzon & Danial-Saad, 2021; Rabah et al., 2022). Clinical tests of dexterity (e.g., the BBT or PPT) have been specifically designed to differentiate dexterity between healthy individuals and neurological groups with hand and finger impairment (e.g., stroke patients; Rabah et al., 2022). Consequently, traditional hand function assessments (such as the BBT and PPT) may not address important constructs related to the specific functioning required for touchscreen operation (Elboim-Gabyzon & Danial-Saad, 2021; Rabah et al., 2022).

Another consideration relates to the tasks included in the MoTap assessment and the subtle differences in movement that they could assess. Although not included in the MoTap tasks assessed here, repetitive tapping tasks are well established and provide a window into fine-motor control (Gopal et al., 2022) in both healthy (Rabah et al., 2022) and clinical samples (Mollà-Casanova et al., 2021; Térémetz et al., 2015). Outcomes may include a combination of force control, tapping rate, and errors in finger selection and sequencing (Térémetz et al., 2015), capturing different components of dexterity such as psychomotor speed, rhythmic timing, motor sequencing, and perceptual-motor coupling when performed to an external cue (Rabah et al., 2022). Importantly, repetitive tapping tasks have shown high sensitivity in distinguishing between mild and moderate motor impairment and may therefore measure a different aspect of dexterity from the MoTap tasks assessed in this study (Mollà-Casanova et al., 2021).

Inclusion of extended familiarization tasks may have reduced the potential for practice effects, resulting in improved test–retest reliability (Jenkins et al., 2016; White et al., 2018). Familiarization sessions are known to improve the assessment of test–retest reliability on computer-based tasks (Pitchford & Outhwaite, 2016). Previous work using a computerized cognitive battery of tasks has highlighted the benefit of brief familiarization sessions to counter initial practice effects and improve reliability (White et al., 2018, 2019). Although participants were provided a minimum 20-second practice period, it is likely that this was not sufficient to reduce initial practice effects. Indeed, practice effects on simple tasks are mainly evident between the first and second assessments. Thereafter, performance on basic cognitive and motor tests tends to plateau, enabling clear determination of meaningful change when tests are re-administered (White et al., 2019).

Considering our increasingly tech-centric lifestyle, the utilization of digital remote assessments may provide a more ecologically valid tool compared with traditional assessments of dexterity. Furthermore, the nuances of digital assessments may uncover more sensitive measures of dexterity that are not picked up in traditional assessments but, in turn, are more pertinent to day-to-day tasks that revolve around the use of touchscreens. The MoTap tasks have potential to provide these measures; however, the limitations of the current work must be addressed before this possibility can be realized.

The MoTap assessment could be extended to include a repetitive tapping task, enabling a more comprehensive picture of fine-motor skill in otherwise healthy samples. Importantly, it is recommended that an investigation into practice effects with the MoTap tasks, and particularly the timing of a performance plateau, be conducted to provide a more detailed understanding of the test–retest reliability of the tasks.

Conclusion

The EDNA™ MoTap assessments showed a mixed pattern of significant correlations with standardized tests of manual dexterity, but not to a level that would support their use in healthy populations. Test–retest reliability results were more encouraging, showing moderate levels of reliability for numerous outcomes, but not exceeding the desired ICC ≥ .90 level. Taken together, the EDNA™ MoTap tasks show some potential in the assessment of manual dexterity but require additional evaluation in a more heterogeneous sample of adults and with possible practice effects fully controlled in the test regime. Incorporation of a repetitive tapping task as part of the suite is also warranted (Gopal et al., 2022). It remains important to refine the design, validation, and implementation of digital assessments to improve accessibility and reduce costs to individuals in both clinical and non-clinical settings. Furthermore, given our current dependency on touchscreen devices, it is imperative to better understand the ecological significance of these behaviors in a tech-driven world and, hence, their relevance to assessment compared with traditional dexterity measures that may not capture the requirements of touchscreen applications.

Supplemental Material

Supplemental material, sj-docx-1-asm-10.1177_10731911241266306 for Portable Touchscreen Assessment of Motor Skill by Thomas B. McGuckian, Jade Laracas, Nadine Roseboom, Sophie Eichler, Szymon Kardas, Stefan Piantella, Michael H. Cole, Ross Eldridge, Jonathan Duckworth, Bert Steenbergen, Dido Green and Peter H. Wilson in Assessment

Footnotes

Author Contributions

P.H.W. contributed to conceptualization. T.B.M., J.L., N.R., S.E., S.K., and S.P. contributed to data curation. T.B.M. contributed to formal analysis. J.D. and P.H.W. contributed to funding acquisition. T.B.M., J.L., N.R., S.E., S.K., S.P., and P.H.W. contributed to investigation. T.B.M. and P.H.W. contributed to methodology. T.B.M. and S.P. contributed to project administration. R.E. contributed to software. P.H.W. and T.B.M. contributed to supervision. T.B.M. contributed to visualization. T.B.M., M.H.C., and P.H.W. contributed to writing—original draft. All authors contributed to writing—review & editing.

Declaration of Conflicting Interests

The author(s) declared the following potential conflicts of interest with respect to the research, authorship, and/or publication of this article: The authors wish to disclose that three authors, Professor P.H.W., Professor J.D., and Mr. R.E., are affiliated with Dynamic Neural Arts, the developer of the EDNA™ system. The authors declare no other competing interests.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work is supported by RMIT through the Design and Creative Practice Enabling Capability Platform and the Australian Government, Department of Industry, Innovation and Science, Accelerating Commercialisation grant, an element of the Entrepreneurs’ Program. The funders have/had no role in study design, data collection and analysis, decision to publish, or preparation of the manuscript.
