Abstract

The ability to quantify within-person changes in mental health is central to the mission of clinical psychology. Typically, this is done using total or mean scores on symptom measures; however, this approach assumes that measures quantify the same construct, the same way, each time the measure is completed. Without this quality, termed longitudinal measurement invariance, an observed difference between timepoints might be partially attributable to changing measurement properties rather than changes in comparable symptom measurements. This concern is amplified in research using different forms of a measure across developmental periods due to potential differences in reporting styles, item-wording, and developmental context. This study provides the strongest support for the longitudinal measurement invariance of the Anxiety Scale, Depression/Affective Problems: Cognitive Subscale, and the Attention Deficit Hyperactivity Disorder (ADHD) Scale; moderate support for the Depression/Affective Problems Scale and the Somatic Scale, and poor support for the Depression/Affective Problems: Somatic Symptoms Subscale of the Dutch Achenbach System of Empirically Based Assessment Youth Self-Report and Adult Self-Report in a sample of 1,309 individuals (

Central to psychology’s mission to examine mechanisms and treatments for psychopathology is the ability to measure change in symptoms over time. Studies typically quantify change via increases or decreases in total scores on self-report measures; however, this assumes that the total score quantifies symptoms the same way at each time point. For example, change score approaches assume that a score of 13 at baseline is comparable to a score of 13 at posttreatment (i.e., reflects largely the same symptom profile and an identical factor structure). The ability of a measure to quantify the same construct, the same way, across different time points is referred to as

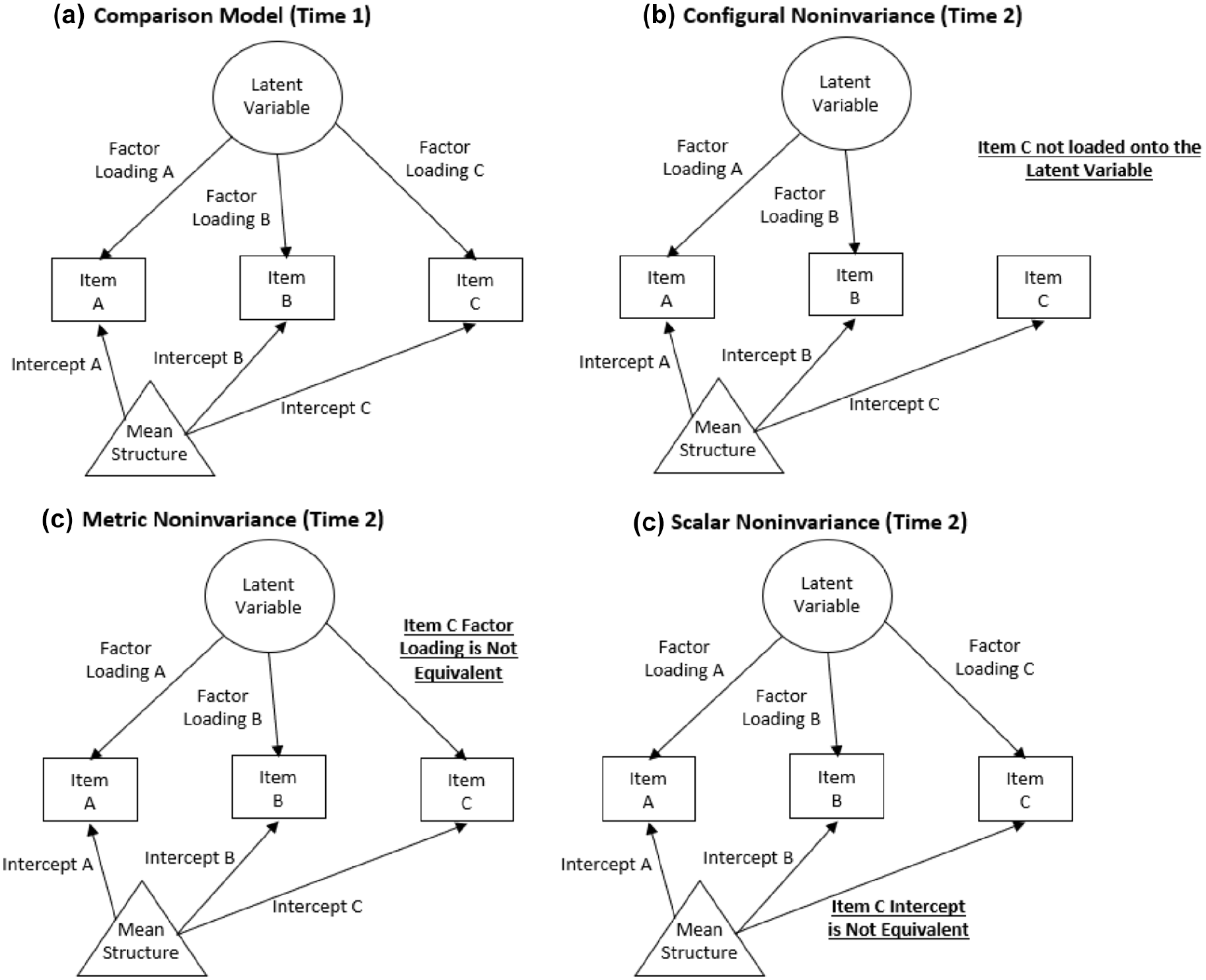

The three most commonly assessed types of measurement invariance are configural, metric (i.e., “weak”), and scalar (i.e., “strong”; for a more detailed review on measurement invariance, see Putnick & Bornstein, 2016). We provide a brief conceptual overview here, with more technical information available in the Methods section. Configural invariance refers to the equivalence of model form (i.e., which items load onto which latent constructs). Metric invariance refers to equality of factor loadings. Scalar invariance refers to equality of item intercepts (i.e., the average response to an item when the associated latent score is zero). For visualization of these measurement invariance types, see Figure 1. Without measurement invariance, a questionnaire does not measure a construct the same way across different time points, precluding mean comparison of scores to evaluate change in the underlying construct. As a nonpsychological example, consider weighing yourself on a scale at home today and re-weighing yourself with the same scale on the moon tomorrow. The subject and measurement tool are constant, but the underlying measurement properties change over time in a way that invalidates direct comparison of the two measurements.
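The consequence of scalar noninvariance can be sketched numerically. The example below assumes a simple linear item model (expected response = intercept + loading × latent score); the function name and all numeric values are hypothetical and chosen only for illustration, and the models in this study use ordinal thresholds rather than linear intercepts.

```python
def expected_item_response(intercept, loading, latent_score):
    """Expected response under a linear single-factor item model (illustrative)."""
    return intercept + loading * latent_score


# Hypothetical values: the person's latent score is identical at both time points.
latent_score = 1.0

# The loading is the same at both time points (metric invariance holds) ...
loading = 0.8
# ... but the item intercept drifts upward at Time 2 (scalar invariance fails).
intercept_t1, intercept_t2 = 0.2, 0.5

observed_t1 = expected_item_response(intercept_t1, loading, latent_score)
observed_t2 = expected_item_response(intercept_t2, loading, latent_score)

# The observed response changes even though the latent score did not.
observed_change = observed_t2 - observed_t1
print(round(observed_change, 2))
```

In this sketch the entire observed change comes from the shifted intercept, which is the situation that scalar invariance testing is meant to rule out.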

Visualization of Measurement Invariance Types Illustrated by the Measurement Properties of Item C.

This concern is amplified when different measures are used to assess the same construct at different time points in a longitudinal study. For example, the TRacking Adolescents’ Individual Lives Survey (TRAILS) is a large prospective cohort following 11-year-olds and reassessing them every 2 to 3 years. At the onset of TRAILS, participants completed the Youth Self-Report (YSR; Achenbach, 1991) from the Achenbach System of Empirically Based Assessment (ASEBA, a comprehensive set of assessments designed to assess adaptive and maladaptive functioning) to assess youth psychological health. The YSR includes 112 items and has been disaggregated into several different factor structures based on researcher/clinician needs. The TRAILS data documentation provides two strategies: syndromes (comprising 11 scales) or

Given the size of the ASR/YSR, the few investigations into their longitudinal measurement invariance have tested select subscales to maintain computational brevity. For example, Barzeva et al. (2019) found that the social withdrawal scales were measurement invariant in people measured four times in the TRAILS study using both the YSR and ASR. Research from the Netherlands Twin Registry (Abdellaoui et al., 2012) found that the ASR Thought Problems Subscale was measurement invariant across three age groups (12–18, 19–27, and 28–59 years). However, only one time point per participant was used in this analysis, so longitudinal measurement invariance

The theoretical and clinical utility of a large, longitudinal dataset such as TRAILS for garnering developmental insight through adolescence and across the transition to adulthood is immense, if the foundational psychometric work is done to inform future longitudinal modeling and data collection. Given its widespread use in TRAILS and other studies, the present investigation evaluated the longitudinal measurement invariance of

Method

Participants

Data were drawn from the TRacking Adolescents’ Individual Lives Survey (TRAILS), a prospective cohort study examining psychosocial development and mental health in youth. Adolescents aged 11 years were recruited and invited to attend regular follow-up assessments every 2 to 3 years. Two separate cohorts were followed by TRAILS—one population-based and another clinic-based (Huisman et al., 2008; Oldehinkel et al., 2015). Adolescents in the population-based cohort were recruited from 135 schools in five municipalities in the north of The Netherlands, including both urban and rural areas. Eligible participants were required to be enrolled in primary school, and of 2,935 youth who met this criterion, 2,230 (76%) provided informed consent from both parent and child to participate. The clinic-based cohort consisted of children referred to a psychiatric outpatient clinic before the age of 11 for a variety of psychiatric and behavioral problems. Cohorts were not analyzed separately because inclusion in the “clinical cohort” was strictly determined by recruitment site, and these two groups did not account for the potentiality of (a) participants in the clinical cohort who, at a later point, had no need of/stopped receiving clinical services and (b) participants in the population cohort who, at a later point, were in need of/received clinical services. This study utilized data from 1,309 participants (

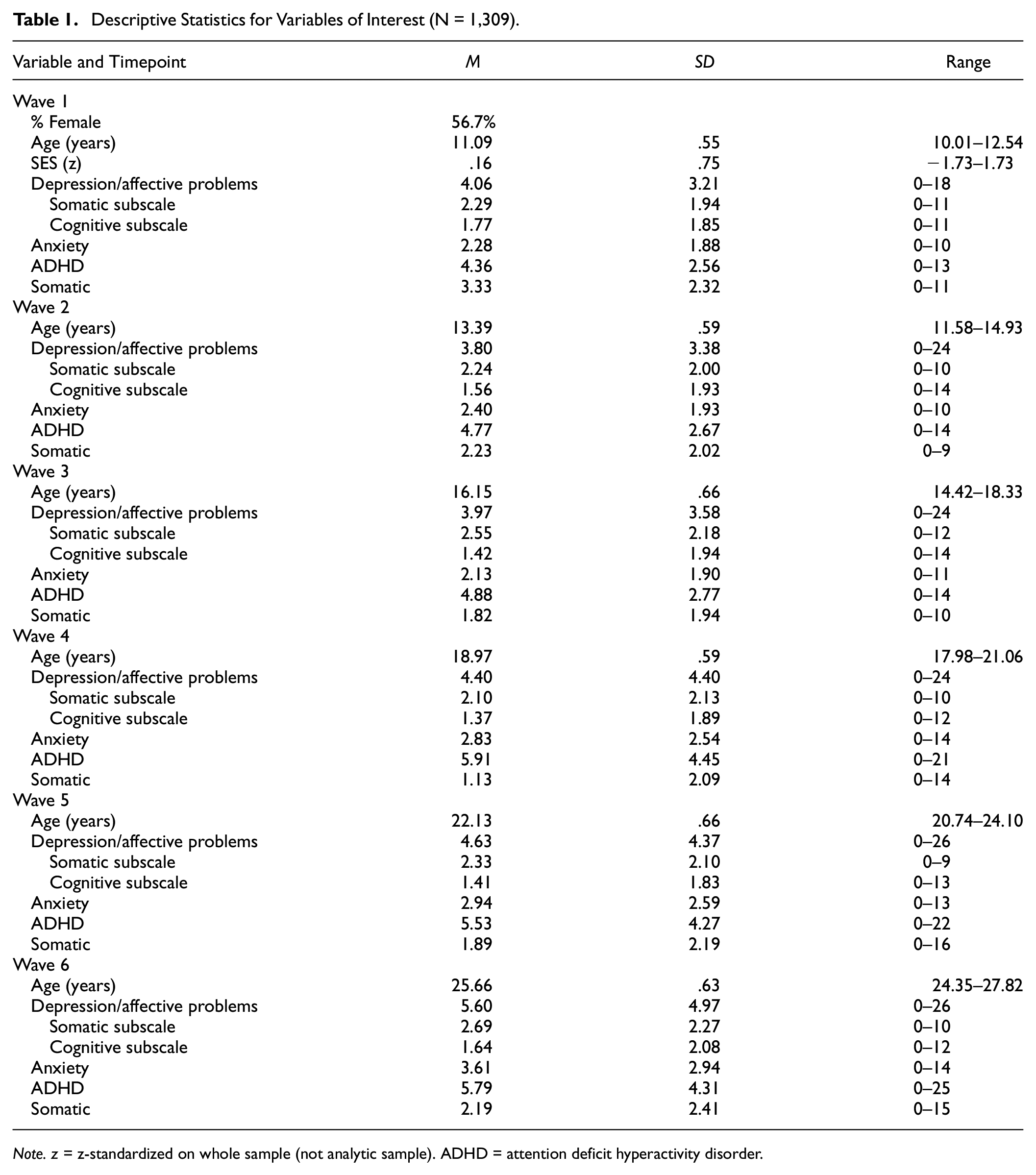

Descriptive Statistics for Variables of Interest (N = 1,309).

Procedures

In this study, symptoms were measured at each assessment (Waves 1–6) using either the YSR or the ASR (determined by participant age at the time of assessment). Children started the study with the YSR at approximately 11 years old and shifted to the ASR when they turned 16 years old (Wave 4).

Missing Data Analyses

Participants were removed if they were missing 100% of symptom data at any time point (removing these participants solved some issues with model convergence). Individual analytic datasets were created for each scale to maximize sample size by only removing participants missing data on a given

T-tests and chi-square tests examined whether the analytic sample (

Measures

Symptoms

During Waves 1 to 3, symptoms were measured using the YSR (Achenbach, 1991). During Waves 4 to 6, symptoms were measured using the ASR (Achenbach & Rescorla, 2003). Item wording can be found in Table 2. All items were answered using a 3-point Likert-type scale (0–2), with higher endorsements indicating more severe symptoms. For the descriptive (Table 1) and missing data analyses (described above) involving symptom summary statistics, scores were determined by taking the average of the items responded to in the scale of interest and then multiplying by the total number of items in the scale. Symptom summary scores were not calculated for observations with <80% of item-level data.
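The summary-score rule described above (mean of answered items multiplied by the number of items in the scale, with no score when fewer than 80% of items were answered) can be sketched as follows. This is a minimal illustration rather than the authors' code; the function name, the use of `None` for a missing response, and the example responses are assumptions.

```python
def prorated_sum_score(responses, min_fraction_answered=0.80):
    """Mean of answered items times the number of items in the scale.

    `responses` holds one entry per item (0, 1, or 2), with None marking a
    missing response. Returns None when fewer than `min_fraction_answered`
    of the items were answered.
    """
    n_items = len(responses)
    answered = [r for r in responses if r is not None]
    if n_items == 0 or len(answered) / n_items < min_fraction_answered:
        return None
    return sum(answered) / len(answered) * n_items


# Hypothetical seven-item scale with one missing item (6/7 answered, about 86%).
mostly_complete = [1, 0, 2, None, 1, 0, 1]
# Hypothetical seven-item scale with two missing items (5/7 answered, about 71%).
too_sparse = [1, None, None, 0, 2, 1, 0]

score_complete = prorated_sum_score(mostly_complete)
score_sparse = prorated_sum_score(too_sparse)
print(score_complete, score_sparse)
```

Prorating in this way keeps the summary score on the metric of the full scale while tolerating a small amount of item-level missingness.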

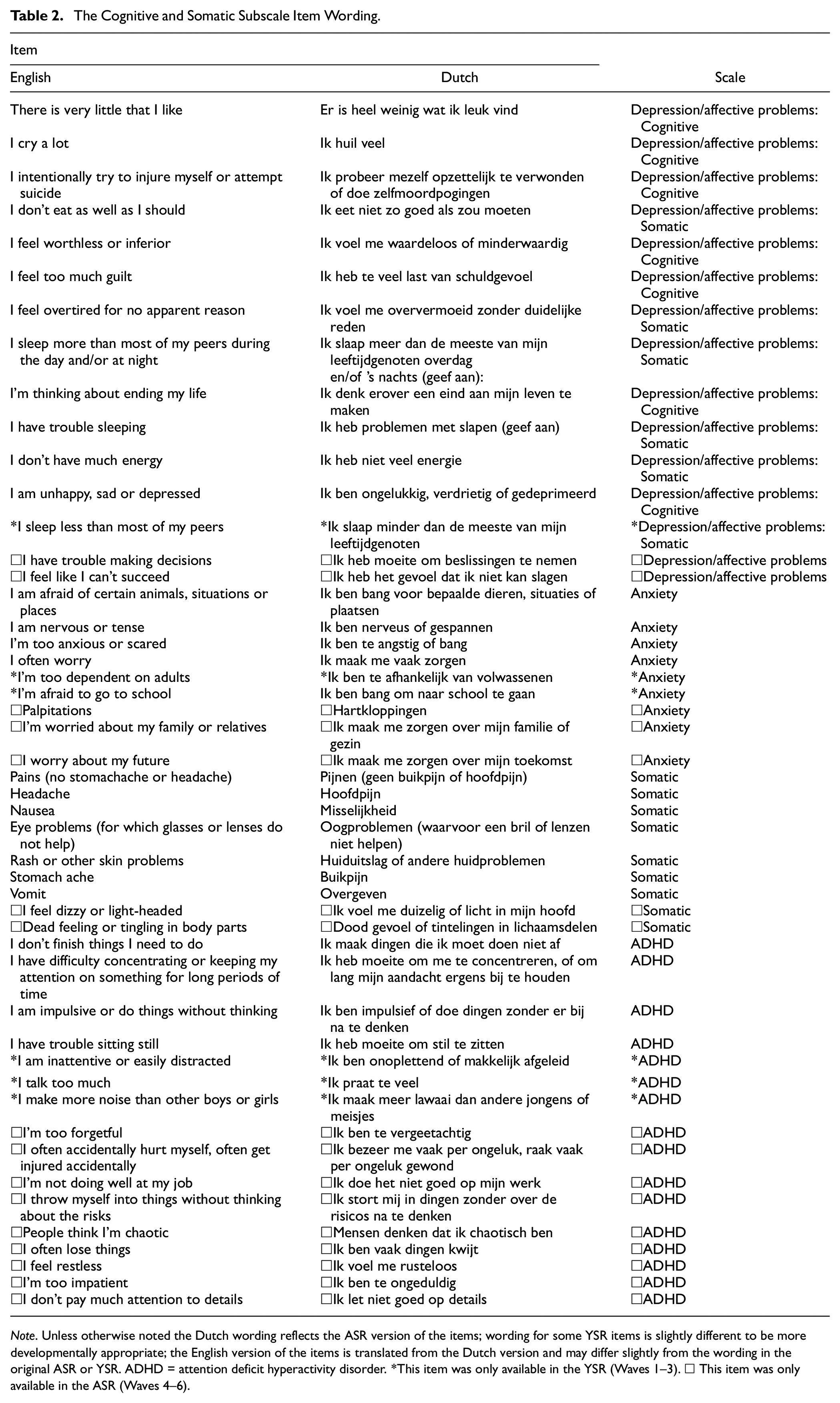

The Cognitive and Somatic Subscale Item Wording.

Depression/Affective Problems

The YSR Depression/Affective Problems Scale had 13 items (split into a seven-item Cognitive Subscale and a six-item Somatic Subscale based on item content in previous TRAILS studies [Bosch et al., 2009]). The ASR Depression/Affective Problems Scale had 14 items (split into a seven-item Cognitive Subscale and a five-item Somatic Subscale based on item content in previous TRAILS studies [Bosch et al., 2009]; refer to Table 2 to compare items and wording between measures). The Ω reliability coefficients at Waves 1 and 4 (first wave using the ASR) were .74 and .86 (respectively) for the Depression/Affective Problems Scale, .58 and .72 (respectively) for the Somatic Symptoms Subscale, and .69 and .80 (respectively) for the Cognitive Symptoms Subscale.

Anxiety

The YSR Anxiety Scale had six items, and the ASR Anxiety Scale had seven items (refer to Table 2 to compare items and wording between measures). The Ω reliability coefficients at Waves 1 and 4 (first wave using the ASR) were .63 and .78, respectively.

Attention Deficit Hyperactivity Disorder

The YSR ADHD Scale had seven items, and the ASR ADHD Scale had 13 items (refer to Table 2 to compare items and wording between measures). The Ω reliability coefficients at Waves 1 and 4 (first wave using the ASR) were .71 and .85, respectively.

Somatic

The YSR Somatic Scale had seven items, and the ASR Somatic Scale had nine items (refer to Table 2 to compare items and wording between measures). The Ω reliability coefficients at Waves 1 and 4 (first wave using the ASR) were .71 and .83, respectively.

Sociodemographic Variables

Participant sex was assessed at Wave 1, when participants could respond that they identified as “Female,” which was scored as “0” or “Male,” which was scored as “1.” Age was assessed at all assessments. SES was measured at Wave 1 and Wave 4. SES was estimated using five indicators: family income, maternal educational level, paternal educational level, maternal occupational level, and paternal occupational level using the International Standard Classification of Occupations (Ganzeboom & Treiman, 1996). A composite measure of SES was calculated for the TRAILS cohort based on five

Statistical Methods

All analyses were conducted in R Version 4.2.2 (R Core Team, 2013) using the lavaan package (Rosseel, 2012). Template code was adapted from https://longitudinalresearchinstitute.com/tutorials/item-factor-analysis-measurement-invariance-2nd-order-growth-model-ecls-k/. The analytic code and output are available as supplemental material (https://osf.io/hbafn/?view_only=65d1c791a5b74fe7ab71ee0eca56ecdc). Data are not publicly available due to privacy regulations but can be requested for replication, unconditionally and free-of-charge, from TRAILS at www.trails.nl.

All models were estimated with a theta parameterization, pairwise deletion for missing data, a combination of diagonally weighted least squares (DWLS; for parameters) and weighted least squares mean- and variance-adjusted (WLSMV; for robust standard errors) estimation, and nonlinear minimization subject to box constraints (NLMINB) optimization. The first factor loading for each factor was constrained to 1 for identification. Variances and covariances were estimated freely. Latent variable means were constrained to zero and propensity variances for items were constrained to 1 unless otherwise specified below. Items that were in one version of a scale but not the other were still modeled at the appropriate time points to maximize fidelity to clinical use of this measure; however, items that only appeared in one version of the measure were only constrained to equality across the waves that used that version. For example, the item “I sleep less than most of my peers” was only assessed in the YSR (i.e., Waves 1–3). As such, the specific equality constraints for testing measurement invariance in this item were only specified in Waves 1 to 3.

As described in the introduction and shown in Figure 1, three types of measurement invariance were tested: configural, metric, and scalar (listed here with increasing stringency). The configural invariance model only imposes the constraint that each item loads onto its specified factor at each time point. The metric invariance (i.e., “weak”) model adds constraints that the factor loadings of an item on its factor are equivalent across timepoints. Finally, the scalar invariance (i.e., “strong”) model incorporates the constraint that item intercepts (in this case thresholds between item-response options) be equivalent across timepoints while latent variable means are allowed to vary. Thus, while the first timepoint for each latent variable mean is set to zero (identical to configural, metric, and scalar invariance models for scaling reasons), latent variable means are estimated freely for later timepoints. There were no additional residual variances because item responses were modeled using thresholds (given ordinal rather than continuous response scales); thus, the scalar invariance model tests both strong and strict invariance. Items that had response options that were not all endorsed at one or more timepoints were dichotomized (“0” = “0” and “1–2” = “1”) at all timepoints to facilitate comparison of item thresholds in that particular sample. The only item this was relevant for was the self-injury item.
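The dichotomization rule described above can be sketched as follows. This is an illustrative reading of the rule rather than the authors' code; the function names, the use of `None` for missing responses, and the example data are hypothetical.

```python
def has_unendorsed_option(responses_by_wave, options=(0, 1, 2)):
    """True if, at any wave, at least one response option was never chosen."""
    for wave in responses_by_wave:
        endorsed = {r for r in wave if r is not None}
        if not set(options).issubset(endorsed):
            return True
    return False


def dichotomize(response):
    """Recode 0 -> 0 and 1 or 2 -> 1; missing responses stay missing."""
    if response is None:
        return None
    return 0 if response == 0 else 1


# Hypothetical responses to one item at two waves (None = missing).
item_by_wave = [
    [0, 0, 1, 2, 0, None],  # all three options endorsed
    [0, 1, 0, 0, 1, 0],     # nobody chose the most severe option
]

# If any wave lacks an option, the item is recoded at every wave so that
# the same single threshold can be compared across time points.
if has_unendorsed_option(item_by_wave):
    recoded = [[dichotomize(r) for r in wave] for wave in item_by_wave]
else:
    recoded = item_by_wave
print(recoded)
```

Recoding at all waves, not only the wave with the empty category, keeps the number of thresholds per item constant over time, which the equality constraints require.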

Chi-square tests of fit are reported but were not heavily considered regarding conclusions due to over-sensitivity to negligible differences in large sample sizes. Acceptable model fit criteria were a comparative fit index [CFI] ≥ .95, root mean square error of approximation [RMSEA] ≤ .06, and standardized root-mean-square residual [SRMR] ≤ .08 (Hu & Bentler, 1999). Metric invariance was evaluated based on the following cut-off criteria in change of model fit comparing the metric invariance model to the configural invariance model: −.010 change in CFI, .015 change in RMSEA, and .030 change in SRMR (Chen, 2007). Scalar invariance had identical criteria when comparing the scalar invariance to the metric invariance models except the cut-off for SRMR was reduced to .010 (per Chen, 2007). It is worth noting that these cut-offs were established using continuous data. To our knowledge, cut-offs have not yet been established using ordinal data, and some estimators for ordinal data (including the DWLS/WLSMV used here) tend to under-detect misfit (Xia & Yang, 2019). As such, results are preliminary and would benefit from reanalysis when appropriate cut-offs for ordinal data are established.
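The decision rules above can be sketched as a small helper. This is a hedged illustration, not the authors' code: the function names and dictionary layout are hypothetical, and treating a change exactly equal to a cut-off as not supporting invariance is an assumption. The example values are the Depression/Affective Problems Scale fit indices reported in the Results.

```python
def global_fit(cfi, rmsea, srmr):
    """Check each index against the acceptable-fit criteria."""
    return {"CFI": cfi >= 0.95, "RMSEA": rmsea <= 0.06, "SRMR": srmr <= 0.08}


def invariance_support(less_constrained, more_constrained, srmr_cutoff):
    """Compare nested models; True means the change index supports invariance.

    Differences are rounded to three decimals to match reported precision.
    Assumption: a change exactly at a cut-off counts as NOT supporting invariance.
    """
    d_cfi = round(more_constrained["cfi"] - less_constrained["cfi"], 3)
    d_rmsea = round(more_constrained["rmsea"] - less_constrained["rmsea"], 3)
    d_srmr = round(more_constrained["srmr"] - less_constrained["srmr"], 3)
    return {
        "dCFI": d_cfi > -0.010,
        "dRMSEA": d_rmsea < 0.015,
        "dSRMR": d_srmr < srmr_cutoff,
    }


# Fit indices reported for the Depression/Affective Problems Scale.
configural = {"cfi": 0.958, "rmsea": 0.041, "srmr": 0.075}
metric = {"cfi": 0.949, "rmsea": 0.045, "srmr": 0.082}
scalar = {"cfi": 0.937, "rmsea": 0.049, "srmr": 0.082}

metric_support = invariance_support(configural, metric, srmr_cutoff=0.030)
scalar_support = invariance_support(metric, scalar, srmr_cutoff=0.010)
print(global_fit(**metric))
print(metric_support)
print(scalar_support)
```

Applied to these values, the helper reproduces the pattern described for this scale: all three change indices support metric invariance, whereas ΔCFI does not support scalar invariance.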

Results

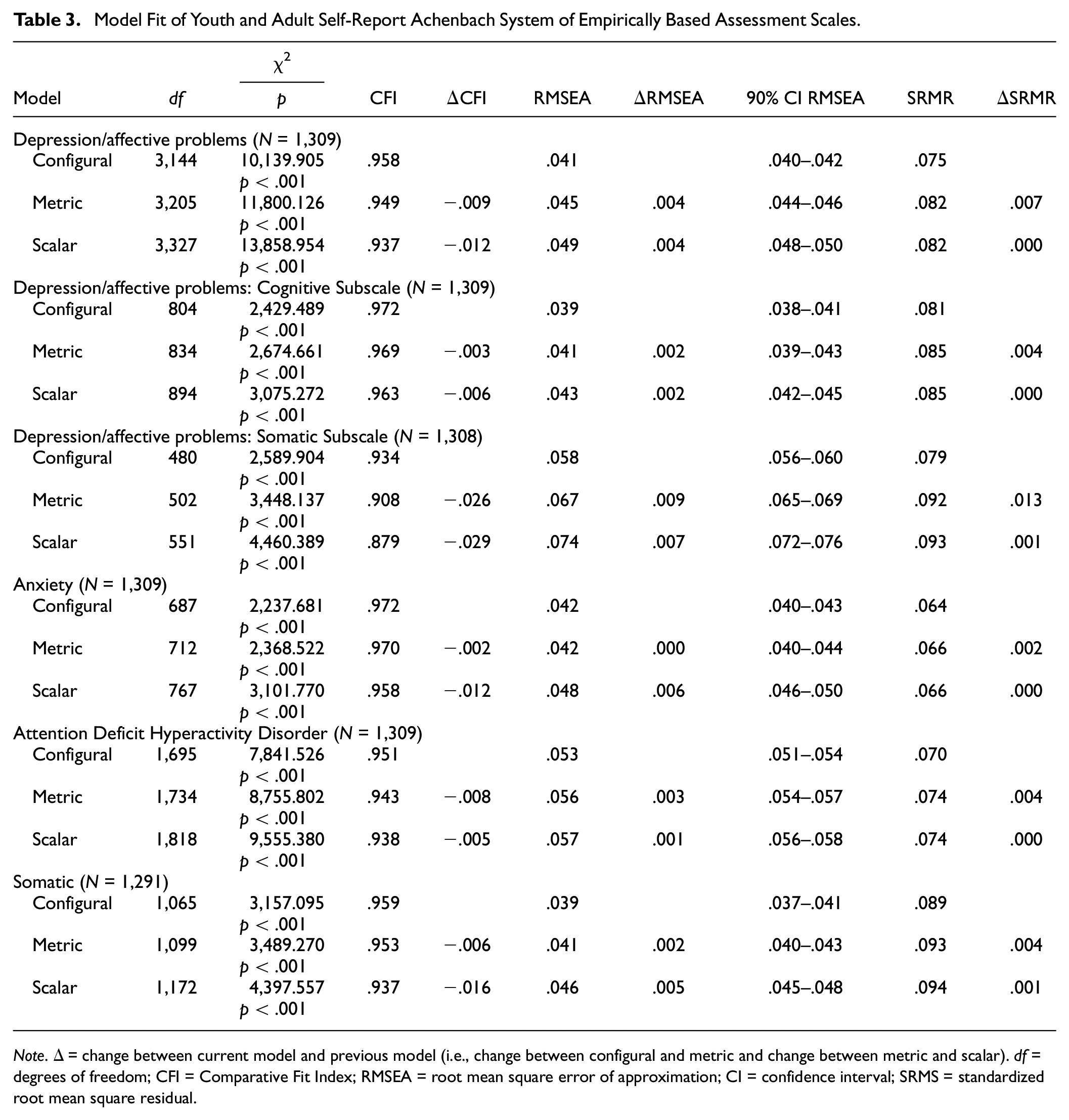

Table 3 includes details about the fit of each model in the population-based sample and the clinic-based sample (respectively). All factor loadings and item thresholds for the models can be found in the supplemental material (https://osf.io/hbafn/?view_only=65d1c791a5b74fe7ab71ee0eca56ecdc).

Model Fit of Youth and Adult Self-Report Achenbach System of Empirically Based Assessment Scales.

Depression Symptoms/Affective Problems Scale

All three interpreted fit indices supported acceptable model fit for the configural invariance model (CFI = .958, RMSEA = .041, and SRMR = .075). Only RMSEA supported acceptable global model fit for the metric model (CFI = .949, RMSEA = .045, SRMR = .082) and the scalar model (CFI = .937, RMSEA = .049, SRMR = .082). All comparisons of model fit supported metric invariance (ΔCFI = −.009, ΔRMSEA = .004, ΔSRMR = .007). Only two out of three comparisons of model fit, specifically ΔRMSEA and ΔSRMR, supported scalar invariance (ΔCFI = −.012, ΔRMSEA = .004, ΔSRMR = .000).

Cognitive Symptoms Subscale

Two out of three fit indices (CFI and RMSEA) suggested acceptable model fit for all three invariance models (configural: CFI = .972, RMSEA = .039, SRMR = .081; metric: CFI = .969, RMSEA = .041, SRMR = .085; scalar: CFI = .963, RMSEA = .043, SRMR = .085). All three comparisons of model fit supported metric and scalar invariance of the Cognitive Symptoms Subscale (metric: ΔCFI = −.003, ΔRMSEA = .002, ΔSRMR = .004; scalar: ΔCFI = −.006, ΔRMSEA = .002, ΔSRMR = .000).

Somatic Symptoms Subscale

Two out of three fit indices (RMSEA and SRMR) suggested acceptable model fit for the configural invariance model (configural: CFI = .934, RMSEA = .058, SRMR = .079). All model fit indices indicated unacceptable model fit for the metric and scalar models (metric: CFI = .908, RMSEA = .067, SRMR = .092; scalar: CFI = .879, RMSEA = .074, SRMR = .093). Both ΔRMSEA and ΔSRMR supported metric and scalar invariance of the Somatic Symptoms Subscale (metric: ΔRMSEA = .009, ΔSRMR = .013; scalar: ΔRMSEA = .007, ΔSRMR = .001). ΔCFI was the only comparison of model fit that did not support metric and scalar invariance (metric ΔCFI = −.026, scalar ΔCFI = −.029).

Anxiety Scale

All three interpreted fit indices supported acceptable global model fit for all three invariance models (configural: CFI = .972, RMSEA = .042, and SRMR = .064; metric: CFI = .970, RMSEA = .042, and SRMR = .066; scalar: CFI = .958, RMSEA = .048, and SRMR = .066). All comparisons of model fit supported metric invariance (ΔCFI = −.002, ΔRMSEA = .000, ΔSRMR = .002). Only two out of three comparisons of model fit, specifically ΔRMSEA and ΔSRMR, supported scalar invariance (ΔCFI = −.012, ΔRMSEA = .006, ΔSRMR = .000).

ADHD Scale

All three interpreted fit indices suggested acceptable global model fit for the configural invariance model (CFI = .951, RMSEA = .053, SRMR = .070). Only two of the three interpreted fit indices (specifically, RMSEA and SRMR) suggested acceptable global model fit for the metric and scalar invariance models (metric: CFI = .943, RMSEA = .056, SRMR = .074; scalar: CFI = .938, RMSEA = .057, SRMR = .074). All three comparisons of model fit supported metric and scalar invariance (metric: ΔCFI = −.008, ΔRMSEA = .003, ΔSRMR = .004; scalar: ΔCFI = −.005, ΔRMSEA = .001, ΔSRMR = .000).

Somatic Scale

Two of the three interpreted fit indices (CFI and RMSEA) supported acceptable global model fit for the configural and metric invariance models (configural: CFI = .959, RMSEA = .039, and SRMR = .089; metric: CFI = .953, RMSEA = .041, and SRMR = .093). Only RMSEA supported acceptable global fit of the scalar invariance model (CFI = .937, RMSEA = .046, SRMR = .094). All comparisons of model fit supported metric invariance (ΔCFI = −.006, ΔRMSEA = .002, ΔSRMR = .004). Only two out of three comparisons of model fit, specifically ΔRMSEA and ΔSRMR, supported scalar invariance (ΔCFI = −.016, ΔRMSEA = .005, ΔSRMR = .001).

Discussion

The YSR and ASR are widely used self-report measures of psychological symptoms and well-being. To facilitate their use across developmental periods in research and clinical practice, the YSR and ASR were designed as comparable measures that are developmentally appropriate for youth and adults, respectively. However, the use of these measures to quantify change in symptoms for the same individual requires that they assess psychopathology in the same way across time and across measure forms despite different item wordings to complement intended developmental stages (i.e., YSR→ASR), a property otherwise known as longitudinal measurement invariance.

To date, no study has investigated the longitudinal measurement invariance of the Depression/Affective Problems Scale (or its constituent Cognitive and Somatic Subscales), Anxiety Scale, ADHD Scale, and Somatic Scale of the YSR and ASR in a sample where participants completed both measures. This study finds differential support for each of these measures, underscoring the value in separately considering the psychometric properties of multidimensional scales. Results will be discussed in the order they were presented in the Results section (i.e., Depression/Affective Problems, Anxiety, ADHD, and Somatic).

Within the Depression/Affective Problems Scale, the strongest support was for the Cognitive Symptoms Subscale, which featured consistently good model fit (except the SRMR, which was consistently above the cut-off), and all comparisons of model fit indices supported all tested levels of invariance. There was slightly less support for the broader Depression/Affective Problems Scale. Specifically, while all three change indices supported metric invariance, ΔCFI did not support scalar invariance. While the change in model fit statistics is the focal measurement of interest in invariance testing (because it focuses on how model fit reacts to the constraints that define the invariance), it is worth considering that all three absolute model fit statistics (CFI, RMSEA, SRMR) only indicated adequate fit for the configural model. Neither CFI nor SRMR supported adequate global fit of the metric or scalar invariance models. There was less support for both the global model fit and the longitudinal measurement invariance of the Somatic Subscale of the Depression/Affective Problems Scale. Two relative change metrics, ΔRMSEA and ΔSRMR, supported the metric and scalar invariance of this scale; however, ΔCFI supported neither metric nor scalar invariance. In fact, the magnitude of the changes in CFI was quite notable (2.6–2.9 × the acceptable cut-off) relative to the other models reported here. With respect to global model fit, only two indices (RMSEA and SRMR) supported acceptable model fit for configural invariance. No global model fit indices supported the metric or scalar invariance models. Consequently, the Depression/Affective Problems Scale and Cognitive Symptom Subscale of the YSR and ASR are likely suitable for combined use in clinical work or research in adolescent and/or adult populations when depression symptoms are of interest; however, the Somatic Symptoms Subscale should be used with caution or with adjustments to account for measurement noninvariance (Putnick & Bornstein, 2016).

The Anxiety Scale showed the strongest support for both global model fit and longitudinal measurement invariance across the tested scales, as all metrics supported its psychometric properties except ΔCFI for the scalar invariance model. The ADHD Scale, which had the greatest item-level differences between the YSR and ASR, had consistently strong support for longitudinal measurement invariance (although the CFI was below the cutoff for acceptable model fit in both the metric and scalar invariance models). Finally, all three relative change metrics supported metric invariance and two of the three (ΔRMSEA and ΔSRMR) supported scalar invariance of the Somatic Scale. However, it is worth noting that the SRMR was above the cutoff for acceptable global model fit in all three models, and CFI was also below the acceptable cutoff in the scalar invariance model.

One of the key strengths of this study is the inclusion of both the YSR and ASR across multiple time points. Thus, instead of solely testing the longitudinal measurement invariance of one of these measures or using the YSR and ASR in different groups, we were able to evaluate the appropriateness of transitioning from the YSR to the ASR for the same participant or client, as most appropriate for their age. In addition, the sample was large enough to bolster confidence in the generalizability of these findings. Generalizability is further strengthened by the fact that this is not an exclusively clinical sample; thus, there are fewer concerns regarding restriction of range or Berkson’s Bias (Berkson, 1946) than if this study were conducted in a strictly clinical or nonclinical sample. Finally, this study is also the first we are aware of that tested the longitudinal measurement invariance of all of the

However, this study should also be considered in light of its limitations. First, the self-injury item had to be dichotomized due to lack of participants selecting the most severe option at some waves. Although this is not surprising given the item content relative to the ages of assessment, these are still modeling deviations from standard scoring of the YSR and ASR. Second, as would be expected of most psychiatric symptom data, responses were largely skewed toward less severe responses. Third, meaningful analyses comparing population versus clinical subpopulations were not possible with these data, given lack of information regarding ongoing clinical status in either group.

Conclusion

In conclusion, this study supports the longitudinal measurement invariance of the YSR and ASR Depression/Affective Problems Scale, Cognitive Symptom Subscale of the Depression/Affective Problems Scale, Anxiety Scale, ADHD Scale, and Somatic Scale. The greatest concerns for longitudinal measurement invariance were for the Somatic Symptoms Subscale of the Depression/Affective Problems Scale. Consequently, clinicians and researchers should carefully consider which items to use, and how to aggregate them, when considering the YSR/ASR as a potential measure to track mental health symptoms over time and across developmental stages. However, additional work is needed to replicate this study in other samples (e.g., in active episodes of poor mental health) and with different durations between assessments.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: D.P.M. was supported by the National Research Service Award F32 MH130149 and grant no. OPR21101 from the California Governor’s Office of Planning and Research/California Initiative to Advance Precision Medicine. NMG was supported by Harvard University’s Mind Brain Behavior Interfaculty Initiative and the National Institute of Mental Health of the National Institutes of Health under Award Number K23MH132893. The content is solely the responsibility of the authors and does not necessarily represent the official views of the National Institutes of Health. TRAILS has been financially supported by grants from the Netherlands Organization for Scientific Research NWO (Medical Research Council program grant GB-MW 940-38-011; ZonMW Brainpower grant 100-001-004; ZonMw Risk Behaviour and Dependence grants 60-60600-97-118; ZonMw Culture and Health grant 261-98-710; Social Sciences Council medium-sized investment grants GB-MaGW 480-01-006 and GB-MaGW 480-07-001; Social Sciences Council project grants GB-MaGW 452-04-314 and GB-MaGW 452-06-004; ZonMW Longitudinal Cohort Research on Early Detection and Treatment in Mental Health Care grant 636340002; NWO large-sized investment grant 175.010.2003.005; NWO Longitudinal Survey and Panel Funding 481-08-013 and 481-11-001; NWO Vici 016.130.002, 453-16-007/2735 and Vi.C.191.021; NWO Gravitation 024.001.003), the Dutch Ministry of Justice (WODC), the European Science Foundation (EuroSTRESS project FP-006), the European Research Council (ERC-2017-STG-757364 and ERC-CoG-2015-681466), Biobanking and Biomolecular Resources Research Infrastructure BBMRI-NL (CP 32), the Gratama foundation, the Jan Dekker foundation, the participating universities, and Accare Centre for Child and Adolescent Psychiatry.