Abstract

Although the

A plethora of psychometric research has highlighted various sources of systematic variation that can affect multi-item measurements in addition to the latent attribute a scale intends to measure (e.g., Meredith, 1993; Millsap, 1997, 2007; Podsakoff et al., 2003; van Bork et al., 2022). Even when different items aim to measure the same attribute, semantic multidimensionality or wording effects may occur due to differences in the item formulations (e.g., Gnambs, 2015; Gu et al., 2017; Marsh et al., 2010; Ponce et al., 2021; Schroeders & Gnambs, 2020). Furthermore, the raters’ familiarity with the item content or individual response styles can introduce systematic variation in measurements (e.g., Adams et al., 2019; Liu et al., 2017). Recent research has also highlighted that even single items can carry substantial meaning beyond the common trait (e.g., Achaa-Amankwaa et al., 2021; McCrae et al., 2019; Stewart et al., 2021), which might manifest as multidimensionality for individual items in longitudinal data under the assumption of stable individual item effects across multiple measurement occasions (e.g., Eid, 1996; Geiser & Lockhart, 2012; Kenny, 2021; Marsh & Grayson, 1994).

Following the (revised) latent-state-trait (LST-R) theory (Steyer et al., 2015), the response of a person to an item is determined by four factors, (a) the attribute of the person at the occasion of measurement typically referred to as a trait, (b) the situation in which the person is assessed, (c) measurement error (random variation), and (d) systematic effects of an item. To account for the latter, previous examinations of psychological measures have acknowledged item-specific traits (e.g., Eid & Kutscher, 2014; Joshanloo, 2022; López-Benítez et al., 2019; Scarpato et al., 2021), or method effects for individual items (Cogo-Moreira et al., 2021; Erhardt et al., 2022; Geiser et al., 2019; Holtmann et al., 2020; Thielemann et al., 2017). Although modeling item-specific traits allows disentangling situation-specific effects and modeling a latent trait for each item across different time points, this approach confounds common and specific item effects (i.e., Factors

The present study demonstrates the potential of person-specific item effects for providing a nuanced specification of a latent attribute of interest and exemplifies this approach with the five-item

The (Multi-)Dimensionality of the SWLS

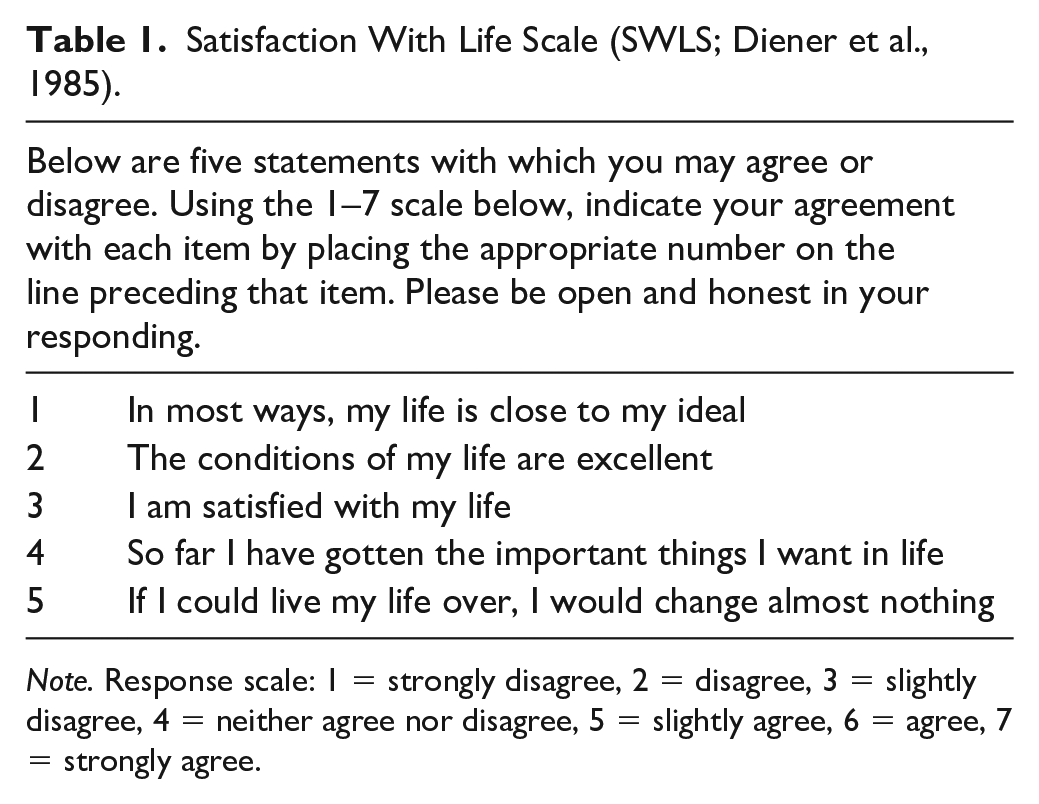

The SWLS (Diener et al., 1985) is a brief instrument including only five items for measuring the cognitive aspect of subjective well-being in the form of life satisfaction ratings (see Table 1 for the items). In contrast to other instruments that also consider life satisfaction in specific domains such as relationships, health, or finances, for example, the

Table 1. Satisfaction With Life Scale (SWLS; Diener et al., 1985).

The development of the SWLS was guided by a unidimensional conceptualization and supported a single dimension using principal-axis factor analysis (Diener et al., 1985). Subsequently, the SWLS has been repeatedly subjected to exploratory and confirmatory factor analytic research (see Pavot & Diener, 2008, for a review). Although these studies overwhelmingly demonstrated that a single factor tends to account for the majority of the variance of the responses to the SWLS, some analyses indicated that different items might also exhibit unique variance (see Erhardt et al., 2022; Holtmann et al., 2020; Pavot & Diener, 1993, 2008). In particular, the fifth item of the scale (“If I could live my life over, I would change almost nothing”) frequently showed lower factor loadings and item-total correlations than the first four items of the scale (e.g., Pavot & Diener, 2008; Senecal et al., 2000). Some research even suggested that the items might capture different facets of life satisfaction because the last two items refer to the past, whereas the remaining items address current satisfaction (e.g., Bai et al., 2011; Hultell & Gustavsson, 2008; Sachs, 2003).

Thus, although the SWLS is typically considered a unidimensional scale, there is some evidence that systematic differences exist between the items. Because the scale builds on the respondent’s individual cognitive processes, it is plausible that the five items differ not only by a constant difficulty or discrimination parameter but also in person-specific ways. This is supported by recent applications by Erhardt et al. (2022) and Holtmann et al. (2020), who modeled SWLS items with person-specific item effects. So far, these types of analyses are rare in applied research because they have rather strong data requirements and involve complex psychometric models. An introduction to one of these modeling approaches, which allows for the specification of person-specific item effects in longitudinal data, is provided in the Appendix. These challenges notwithstanding, psychometric analyses with item-effect variables can help gain a better understanding of the substantive contribution of person-specific item effects to explaining psychological or behavioral outcomes of subjective well-being.

The Contribution of Person-Specific Item Effects for Subsequent Analysis

Previous research on person-specific item effects highlighted several advantages of the more complex psychometric models in comparison to unidimensional construct definitions at each time point (Cogo-Moreira et al., 2021; Erhardt et al., 2022; Geiser et al., 2019; Holtmann et al., 2020; Thielemann et al., 2017) such as (a) less restrictive assumptions on the factorial structure, which can substantially increase model fit, (b) a more accurate specification of the latent states that account for person-specific item effects in the response process, or (c) the possibility to study the person-specific item effects, for instance, in test construction (e.g., for item selection). However, so far, the contribution of person-specific item effects for applied psychological research has received little attention. Therefore, we consider different perspectives and further investigations on the meaning of person-specific item effects in the SWLS.

Different Perspectives on the Meaning of Person-Specific Item Effects

The presence of person-specific item effects in the SWLS suggests that different items for assessing life satisfaction are understood or dealt with differently by the respondents. Such effects have also been referred to as “systematic error” (see van Bork, 2019; van Bork et al., 2022), which implies two opposite interpretational perspectives: The person-specific item effects are systematic and (a) contribute useful, content-related information for substantive analyses or (b) reflect a form of measurement error, thus representing a nuisance for substantive analyses.

Following the first perspective, person-specific item effects can be considered stable person characteristics that measure a more nuanced concept of life satisfaction based on the item content. This parallels individual difference research on so-called personality nuances (e.g., McCrae et al., 2019; Stewart et al., 2021), where item effects are considered secondary traits that are closely related to the focal construct but reflect unique domain content not shared with the other items. Such unique domain content for the SWLS items may refer to the different perspectives on life satisfaction. For example, Item 3 assesses life satisfaction most directly, while Items 1 and 2 are more specific as they refer to ideal and excellent life conditions. In contrast, Items 4 and 5 include a retrospective component that is not shared by the other items (see Bai et al., 2011; Hultell & Gustavsson, 2008; Sachs, 2003). Thus, one could imagine that a respondent might experience high life satisfaction even though his or her conditions do not perfectly match his or her ideal. Similarly, even though someone would like to change parts of his or her current life, this might not strongly affect the global assessment of his or her life. As such, each item might capture a slightly different aspect of life satisfaction that is not shared by the other items and, thus, can reflect stable interindividual differences between persons beyond the common trait (i.e., global life satisfaction).

In contrast, the second perspective considers person-specific item effects as stable person characteristics that are not conceptually related to life satisfaction but represent distinct domain-independent effects. For instance, response styles can systematically affect item responses and will be captured as person-specific item effects if they are not constant across items but interact with item characteristics like content, wording, or length (e.g., Adams et al., 2019; Kam & Fan, 2020; Liu et al., 2017). For the SWLS, the item instruction and response scale are equal for all items. Similarly, the items differ only slightly in length and complexity. Thus, structural differences between SWLS items may mainly refer to the specific content. Still, domain-independent effects are possible due to more general person characteristics like familiarity with the item content or motivation that can systematically affect the responses to (individual) items (van Bork et al., 2022). Other sources of method variance may also be present; for instance, groups of persons can systematically respond differently to specific items. As an example, Holtmann et al. (2020) acknowledged rater-specific effects (i.e., differences between self, parent, and peer ratings), which can be disentangled from person-specific item effects in their modeling approach. An overview of different sources of method variance is provided in Podsakoff et al. (2003). Thus, while the second perspective treats person-specific item effects as a form of error that introduces bias in relations among latent constructs if it is not accounted for (e.g., Podsakoff et al., 2003), the first perspective views person-specific item effects as a facet of domain content that may be useful not only on psychometric grounds but also for substantive analyses. Although modeling person-specific item effects does not allow for distinguishing the different sources of multidimensionality, it allows for identifying whether multidimensionality is present and, more importantly, for further investigations on the item effects.

Further Investigations on the Meaning of Person-Specific Item Effects

Scrutinizing person-specific item effects is important to gain a better understanding of the identified multidimensionality and can help discern different aspects of the focal construct. To do so, the specification of the latent variables becomes essential because their interpretation depends on how they were defined, that is, on the chosen identification constraints (see the Appendix for psychometric details). Typically, person-specific item effects are modeled as differences between a given item and a latent state variable as measured by a reference item. Consequently, the means, variances, and correlation coefficients of the latent state variables and item–effect variables can vary substantially depending on the chosen reference item. For example, choosing the third SWLS item as the reference, which measures life satisfaction most directly, allows for investigating differences of all other items to this direct measure. In contrast, choosing the fifth SWLS item as the reference, which shows the largest differences in psychometric properties in the scale, allows for describing differences to this retrospective evaluation of life satisfaction. From a methodological perspective, both identification constraints are equally valid and no preference can be given to either one. However, the choice matters from a substantive, content-related point of view because the resulting latent variables are interpreted differently. Thus, the choice of the reference item should be guided by theoretical considerations that allow properly addressing the specific research question at hand.
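The role of the reference item can be made explicit with a compact sketch. The notation below is an assumption for illustration and may differ from the Appendix: $Y_{it}$ is the response to item $i$ at occasion $t$, $\tau_{it}$ its true score, $\eta_t^{(r)}$ the latent state defined by reference item $r$, $\beta_i^{(r)}$ the item–effect variable of item $i$ relative to $r$, and $\varepsilon_{it}$ the measurement error. Unit loadings and occasion-invariant item effects are assumed.

```latex
\begin{align}
Y_{it} &= \eta_t^{(r)} + \beta_i^{(r)} + \varepsilon_{it},
  \qquad \beta_r^{(r)} = 0, \\
\eta_t^{(r)} &:= \tau_{rt},
  \qquad \beta_i^{(r)} := \tau_{it} - \tau_{rt}
  \quad \text{(assumed constant over } t\text{)}, \\
\eta_t^{(5)} &= \eta_t^{(3)} + \beta_5^{(3)},
  \qquad \beta_i^{(5)} = \beta_i^{(3)} - \beta_5^{(3)}.
\end{align}
```

Under these assumptions, the last line shows that switching from Item 3 to Item 5 as the reference is an invertible linear transformation of the same latent variables. This is one way to see why both identification schemes can be equally valid statistically while the resulting states and item effects carry different substantive interpretations.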

It is also straightforward to integrate a measurement model with person-specific item effects into a larger structural equation model to gain a deeper understanding of the response process. This has recently been demonstrated in the investigations of Erhardt et al. (2022) and Holtmann et al. (2020) for the SWLS and by Thielemann et al. (2017) for the life satisfaction scale of the FPI-R. For example, Holtmann et al. (2020) showed that person-specific item effects were robust across different rater groups, while Thielemann et al. (2017) examined several explanatory variables to explain item-effect variables. Finally, Erhardt et al. (2022) investigated person-specific item effects in a multi-construct context. They investigated the homogeneity of the correlation structure between item-effect variables and states, both within and between constructs. In their application, a heterogeneous correlation structure that matched the item content was considered as an indicator for semantic multidimensionality in the five items of the SWLS.

Although previous research demonstrated the robustness of person-specific item effects and also tried to explain them based on item content and bivariate relations with other constructs, little is known about their contribution to substantive analyses in terms of their incremental validity. Incremental validity investigates the degree to which a new measure of a construct explains or predicts a phenomenon of interest relative to other measures (e.g., Hunsley & Meyer, 2003). Accordingly, we are interested in whether person-specific item effects in the SWLS provide additional information for predicting relevant criterion variables beyond the common states. Our application focuses on measures of psychological and physical health because of the SWLS’ popularity in the epidemiological and clinical context (e.g., Pavot & Diener, 2008).

The Present Study

The present study examines the relevance of person-specific item effects for predictive analyses. We apply a multi-state model with latent difference variables (e.g., Erhardt et al., 2022; see also Appendix) for the measurement of item effects in the SWLS (Diener et al., 1985) that was administered at three measurement occasions. As suggested by previous investigations (e.g., Erhardt et al., 2022), substantial person-specific item effects were expected that may be valuable for substantive analyses. Accordingly, we first detail the multidimensionality in our application and consider two different identification schemes. Then, we investigate the contribution of the item–effect variables for predictive analyses of two health outcomes (i.e., indices of psychological and physical health) and explore whether they explain incremental variance beyond the latent state variables. Finally, the generalizability of these results is demonstrated by replicating the analyses with the identical sample for different measurement periods.

Method

Sample and Procedure

The

We report how we determined our sample size, all data exclusions, and all measures in the study. For the present analyses, we considered six measurement occasions from 2008 to 2013. The complete sample originally consisted of

Instruments

The five SWLS items (Diener et al., 1985) were administered as part of a personality inventory on identical item positions (014–018) at all six measurement occasions. The items were presented in Dutch on a 7-point response scale from 1 = strongly disagree to 7 = strongly agree. No missing values were observed for any item; that is, there was no item-specific non-response among the participants who responded on all six measurement occasions. The item means fell between 4.56 and 5.57, while the respective standard deviations ranged from 1.08 to 1.63 (see Table S1 in the Supplemental Material for descriptive statistics).

Health outcomes for the respondents were measured with two instruments in 2010 and 2013 (i.e., the last wave in each of the two analysis periods). The short

Statistical Analyses

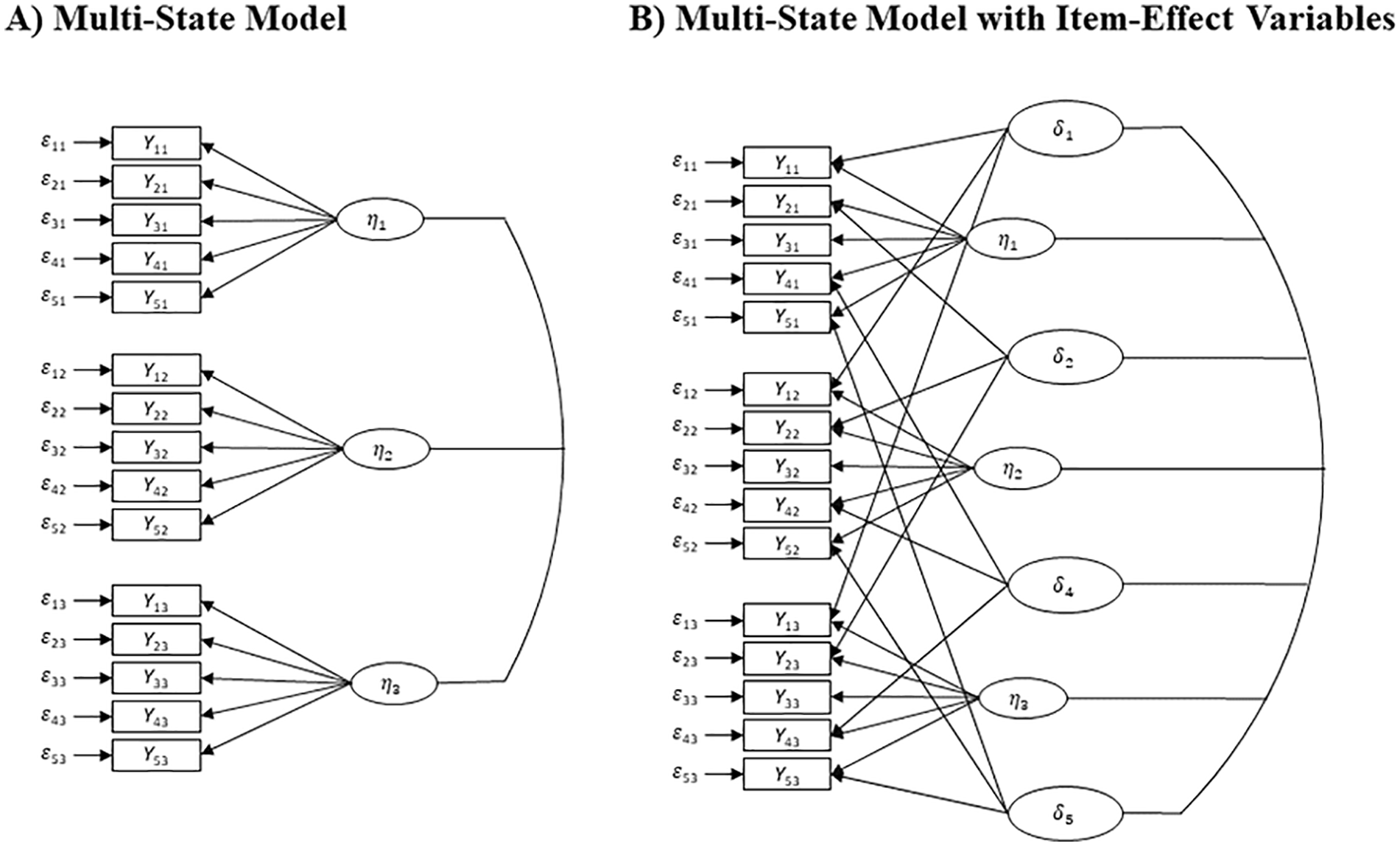

Multidimensionality in the SWLS was evaluated by comparing a multi-state model without item–effect variables to an extended model with item–effect variables (see Erhardt et al., 2022; Thielemann et al., 2017) as illustrated in Figure 1. The manifest variables

Figure 1. Multi-State Model With and Without Item–Effect Variables for the Satisfaction With Life Scale (Diener et al., 1985).

We estimated the different structural equation models using a maximum likelihood algorithm in

Open Practices

The raw data and study material are available to the research community at https://lissdata.nl. Moreover, a detailed analysis code that allows for reproducing the reported findings is available in a public repository at https://osf.io/ekcqh/?view_only=7085df46f5494121b23eae9b3c28ace1.

Results

Person-Specific Item Effects in the SWLS

In accordance with the initial theoretical considerations for the item content and presentation, we investigated the measurement model for the SWLS with the two different reference items (3 or 5) in the two measurement periods.

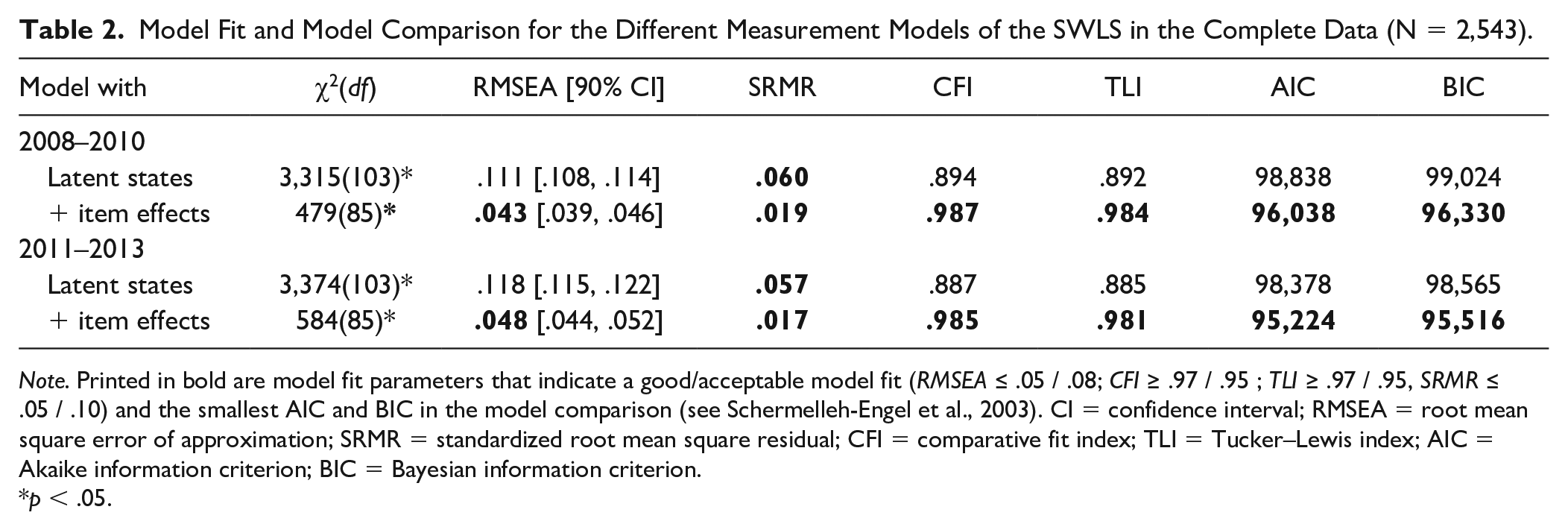

A unidimensional model for the SWLS was not supported at the different measurement periods as indicated by RMSEAs > .11 and CFIs/TLIs < .90 (see Table 2). Instead, the inclusion of person-specific item effects resulted in substantially improved model fits. The choice of the reference item did not affect model fit, as both identification schemes are equally valid. At the two measurement periods, the RMSEAs were .04 and .05, while the SRMRs, CFIs, and TLIs fell at .02, .99, and .98, respectively. Moreover, all model comparisons using the information criteria favored the models with item-effect variables. The results for the incomplete data were the same (see Supplemental Table S6).

Table 2. Model Fit and Model Comparison for the Different Measurement Models of the SWLS in the Complete Data (N = 2,543).
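For readers less familiar with the fit indices reported above, the following minimal sketch shows how RMSEA, CFI, and TLI can be computed from the chi-square statistics of a target model and a baseline (independence) model. The chi-square values and degrees of freedom below are hypothetical placeholders, not the values of the present study; only the sample size is taken from the text. The RMSEA uses the N - 1 convention, whereas some software divides by N.

```python
import math


def rmsea(chi2: float, df: int, n: int) -> float:
    """Root mean square error of approximation (N - 1 convention)."""
    return math.sqrt(max(chi2 - df, 0.0) / (df * (n - 1)))


def cfi(chi2_m: float, df_m: int, chi2_b: float, df_b: int) -> float:
    """Comparative fit index of a target model (m) against a baseline model (b)."""
    numerator = max(chi2_m - df_m, 0.0)
    denominator = max(chi2_m - df_m, chi2_b - df_b, 0.0)
    return 1.0 if denominator == 0.0 else 1.0 - numerator / denominator


def tli(chi2_m: float, df_m: int, chi2_b: float, df_b: int) -> float:
    """Tucker-Lewis index of a target model (m) against a baseline model (b)."""
    ratio_b = chi2_b / df_b
    ratio_m = chi2_m / df_m
    return (ratio_b - ratio_m) / (ratio_b - 1.0)


# Hypothetical chi-square values for illustration only.
n = 2543                             # complete-data sample size from the text
chi2_model, df_model = 500.0, 80     # hypothetical target model
chi2_base, df_base = 30000.0, 105    # hypothetical baseline model (15 indicators)

fit = {
    "RMSEA": rmsea(chi2_model, df_model, n),
    "CFI": cfi(chi2_model, df_model, chi2_base, df_base),
    "TLI": tli(chi2_model, df_model, chi2_base, df_base),
}
for name, value in fit.items():
    print(f"{name}: {value:.3f}")
```

Because all three indices depend on the chi-square statistic only through the model-implied covariance structure, two identification schemes that imply the same covariance structure yield identical values.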

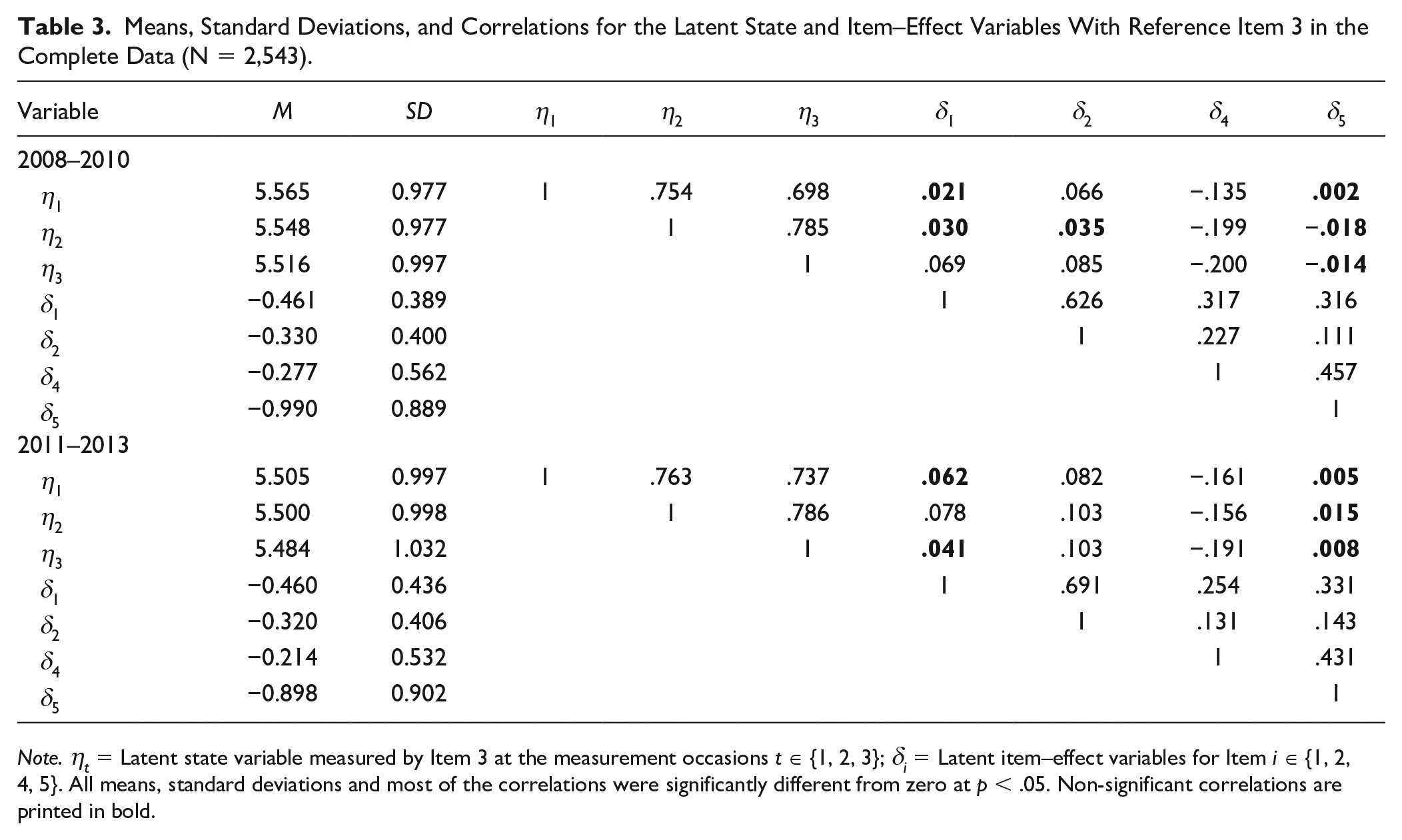

Accordingly, we further investigated the parameter estimates of the multi-state model with item-effect variables. Table 3 provides the parameter estimates when defining the states with Reference Item 3 and the item-effect variables as stable intra-individual differences of each other item to this reference. The latent states show that the participants were, on average, rather satisfied with their lives with latent means around 5.5 on a 7-point scale. Substantial interindividual variation of around one scale point showed that respondents differed in their reported life satisfaction. Moreover, life satisfaction was a rather stable construct in the studied sample as demonstrated by the substantial correlation between the latent states that exceeded

Table 3. Means, Standard Deviations, and Correlations for the Latent State and Item–Effect Variables With Reference Item 3 in the Complete Data (N = 2,543).

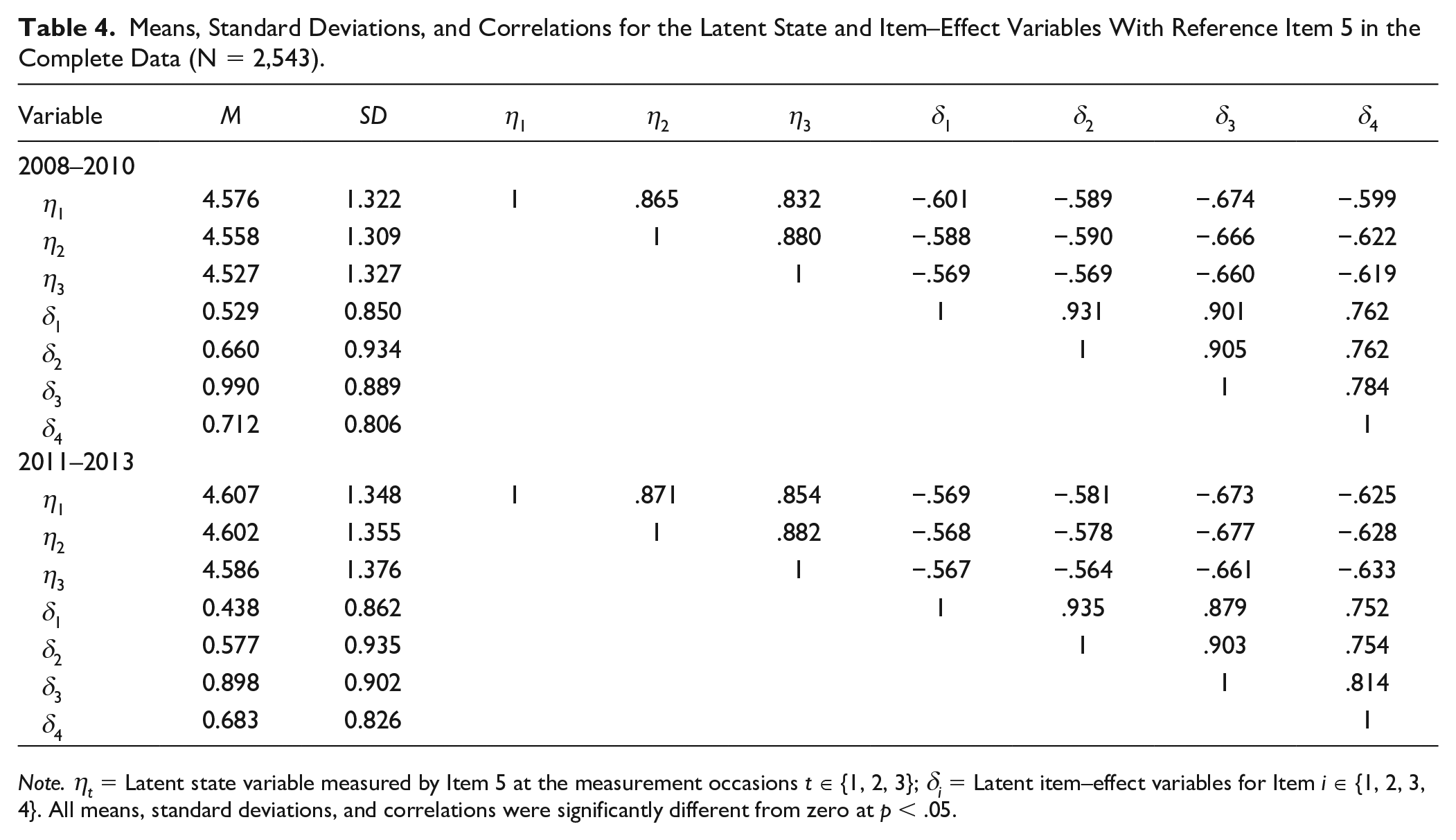

Table 4 provides the parameter estimates when defining the states with Reference Item 5 and the item-effect variables representing the inter-individual differences in the responses to each item as compared with Item 5. Rating life satisfaction with Item 5, as one would change almost nothing in life results in lower means of the states of around 4.5 (i.e., around one scale point lower), but larger inter-individual differences (i.e.,

Table 4. Means, Standard Deviations, and Correlations for the Latent State and Item–Effect Variables With Reference Item 5 in the Complete Data (N = 2,543).

Prediction of Health Outcomes

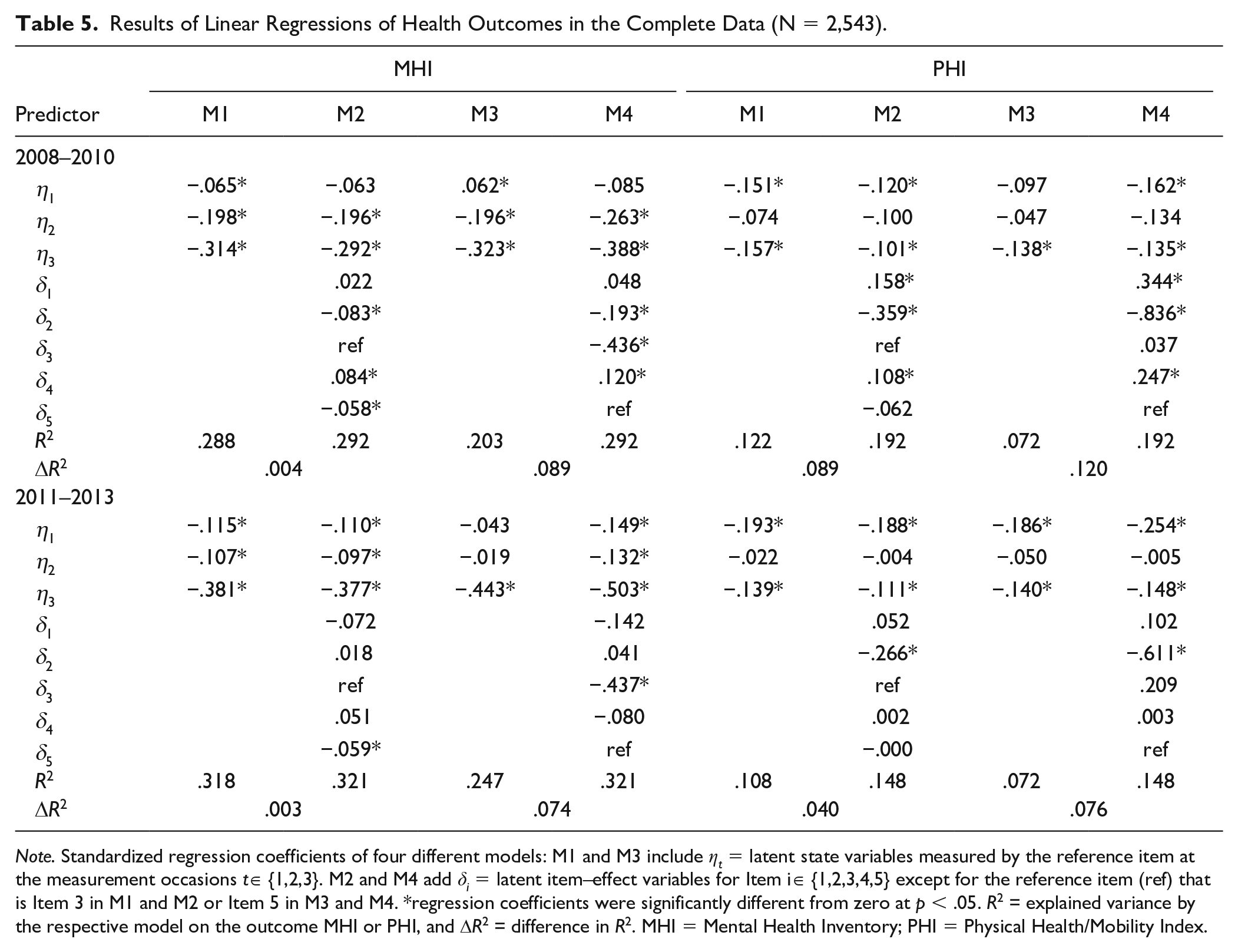

To evaluate the relevance of the modeled item–effect variables for the prediction of the two health outcomes, we compared linear regressions of the MHI-5 or PHI index on either the latent state variables alone or on the latent state and item-effect variables together. Superior prediction accuracy of the latter would indicate that item-effect variables contain substantive information for the prediction of health. The respective regression results are summarized in Table 5 for the different outcomes, measurement periods, and identification schemes. Detailed results on model fit comparisons for the analysis without and with item-effect variables are provided in Table S5 in the Supplemental Material.

Table 5. Results of Linear Regressions of Health Outcomes in the Complete Data (N = 2,543).
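The comparison of nested regressions described above can be illustrated with a minimal sketch. It uses simulated scores as stand-ins for the latent state and item–effect variables and ordinary least squares instead of a structural equation model, so it is an illustration of the logic of incremental explained variance rather than the analysis reported here. All variable names, effect sizes, and the simulated sample are hypothetical.

```python
import numpy as np


def r_squared(predictors: np.ndarray, outcome: np.ndarray) -> float:
    """Proportion of outcome variance explained by an OLS fit with intercept."""
    design = np.column_stack([np.ones(len(outcome)), predictors])
    coef, *_ = np.linalg.lstsq(design, outcome, rcond=None)
    residuals = outcome - design @ coef
    centered = outcome - outcome.mean()
    return float(1.0 - (residuals @ residuals) / (centered @ centered))


def incremental_r_squared(base, added, outcome):
    """Return R^2 of the base model, R^2 of the full model, and their difference."""
    r2_base = r_squared(base, outcome)
    r2_full = r_squared(np.column_stack([base, added]), outcome)
    return r2_base, r2_full, r2_full - r2_base


# Simulated stand-ins (hypothetical): three states and four item-effect variables.
rng = np.random.default_rng(0)
n = 2000
states = rng.normal(size=(n, 3))
effects = rng.normal(size=(n, 4))
outcome = 0.5 * states[:, 2] + 0.3 * effects[:, 1] + rng.normal(size=n)

r2_base, r2_full, delta = incremental_r_squared(states, effects, outcome)
print(f"R2 states only: {r2_base:.3f}")
print(f"R2 states + item effects: {r2_full:.3f}")
print(f"Incremental R2: {delta:.3f}")
```

A clearly positive difference between the two explained variances indicates that the added predictors carry information about the outcome that is not contained in the states.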

The choice of the reference item had no impact on the explained variance in the complete model using states and item–effect variables as predictors because both identification schemes are equally valid. Together, the latent variables of the SWLS explained substantial variance in the MHI-5 index with slight differences between the measurement periods (2010 = 29.9% explained variance, 2013 = 32.1% explained variance) as well as in the PHI index (2010 = 19.2% explained variance, 2013 = 14.8% explained variance). As such, life satisfaction measures that account for item–effect variables predict the mental health index more strongly than the physical health index. The relevance of item–effect variables in this prediction depended substantially on the identification scheme used.
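Why the total explained variance is unaffected by the reference item, while the contribution of the states alone is not, can be illustrated with a small simulation. The sketch assumes that switching the reference amounts to a linear transformation of the same variables (states shifted by the Item 5 effect, item effects re-expressed relative to Item 5). Simulated scores stand in for latent variables, and all numerical values are hypothetical.

```python
import numpy as np


def r_squared(predictors: np.ndarray, outcome: np.ndarray) -> float:
    """Proportion of outcome variance explained by an OLS fit with intercept."""
    design = np.column_stack([np.ones(len(outcome)), predictors])
    coef, *_ = np.linalg.lstsq(design, outcome, rcond=None)
    residuals = outcome - design @ coef
    centered = outcome - outcome.mean()
    return float(1.0 - (residuals @ residuals) / (centered @ centered))


rng = np.random.default_rng(1)
n = 1000

# Reference Item 3 (hypothetical values): three states and the
# item effects of Items 1, 2, 4, and 5 relative to Item 3.
eta_ref3 = rng.normal(5.5, 1.0, size=(n, 3))
beta_ref3 = rng.normal(0.0, 0.5, size=(n, 4))  # columns: Items 1, 2, 4, 5

# Reference Item 5: states absorb the Item 5 effect, and the item
# effects are re-expressed as differences to Item 5.
beta5 = beta_ref3[:, 3]
eta_ref5 = eta_ref3 + beta5[:, None]
beta_ref5 = np.column_stack([
    beta_ref3[:, 0] - beta5,  # Item 1 relative to Item 5
    beta_ref3[:, 1] - beta5,  # Item 2 relative to Item 5
    beta_ref3[:, 2] - beta5,  # Item 4 relative to Item 5
    -beta5,                   # Item 3 relative to Item 5
])

outcome = 0.4 * eta_ref3[:, 2] + 0.3 * beta_ref3[:, 1] + rng.normal(size=n)

r2_full_ref3 = r_squared(np.column_stack([eta_ref3, beta_ref3]), outcome)
r2_full_ref5 = r_squared(np.column_stack([eta_ref5, beta_ref5]), outcome)
r2_states_ref3 = r_squared(eta_ref3, outcome)
r2_states_ref5 = r_squared(eta_ref5, outcome)

print(f"Full model:  ref 3 = {r2_full_ref3:.4f}, ref 5 = {r2_full_ref5:.4f}")
print(f"States only: ref 3 = {r2_states_ref3:.4f}, ref 5 = {r2_states_ref5:.4f}")
```

In this sketch, both full predictor sets span the same space, so the full-model explained variance agrees up to numerical precision, whereas the states-only models differ because the states are defined by different reference items.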

For the MHI-5 index, the latent states (i.e., life satisfaction measured by Reference Item 3) had a substantial impact and explained around 30% of the variance. As expected, higher life satisfaction indicated lower psychological distress. Although the most recent measurement of life satisfaction exhibited the strongest effect, the previous state variables added incremental information. This was not the case for the item–effect variables, which increased the explained variance by <0.5%. A similar pattern was found for both measurement periods. As such, we can consider the item–effect variables as a nuisance without substantial meaning in this analysis. In contrast, the latent states defined by Reference Item 5 had a weaker impact and explained only around 20% of the variance, with the most recent measure again exhibiting the strongest effect. In this case, substantial incremental information was added by the item–effect variables, with the specific effect of Item 3 being the most important. These two analyses suggest that primarily Item 3 substantially predicted the MHI-5 index.

A different pattern emerged for the PHI index. Life satisfaction measured by Reference Item 3 was less relevant for predicting physical health problems and explained only around 12% of the outcome variance. Moreover, the first state variable had a comparable impact on the outcome as the most recent measurement. In addition, the impact of the state variables decreased when adding the item–effect variables as additional predictors, which explained around 7% incremental variance. The effect of Item 2 (“The conditions of my life are excellent”) was most important for predicting physical health problems. Thus, fewer mobility problems were reported, especially if the participants had higher values on this item in comparison to the reference item that assesses general life satisfaction. This pattern was even more prominent when choosing Item 5 as the reference. The narrower specification of the states explained less variance (around 7%), and accordingly more incremental variance was attributable to the item–effect variables. Again, the item–effect variable of Item 2 was the strongest predictor. Accordingly, the more nuanced construct specification offered more detailed insights into the psychological phenomenon of interest: A global specification of life satisfaction was well suited for explaining mental health, but a more concrete specification based on the conditions of life was beneficial for predicting physical health. The overall pattern of the results was comparable in both measurement periods (see Table 5). Also, the same differences between the two identification schemes and the same results on the relevance of specific predictors were obtained in the incomplete data. Yet, the explained variance was slightly lower in the larger samples (see Supplemental Table S9 for details). Overall, we can consider our results on the multidimensionality in the SWLS and their predictive validity as stable, at least in our large sample.

Discussion

Measurement models with person-specific item effects can contribute to psychological assessment by revealing multidimensionality in item responses and identifying secondary content traits with substantial meaning beyond the primary trait. We showed this by modeling a well-studied instrument (i.e., SWLS) as a multidimensional construct. In contrast to previous research that primarily viewed person-specific item effects as an unwanted source of nuisance (e.g., Eid & Kutscher, 2014; Joshanloo, 2022; López-Benítez et al., 2019; Scarpato et al., 2021), the present study adopted an alternative stance and considered them a meaningful subject of investigation. Specifically, in relation to theoretical considerations and previous investigations on the meaning of person-specific item effects in the SWLS, we pointed out how such effects can be informative for substantive analyses. Thereby, we showed how to separate systematic variance components that are common for all items of a scale from measurement error-free and stable item–effect variables using well-defined latent variables in the tradition of LST-R theory (Pohl et al., 2008; Steyer et al., 2015). This approach allowed for closely studying item–effect variables themselves and for using them in subsequent analysis. Importantly, we showed the predictive validity of item–effect variables that is plausible in relation to the item content. Our results suggest that responses to the SWLS are more strongly related to an indicator of mental health than of physical health (i.e., the latent variables of all items together explained up to 30% or 20% of the respective health index). This general result on the predictive validity is supported by previous studies, which investigated comparable constructs in large community samples, but modeled the SWLS as a unified factor (e.g., Cheung & Lucas, 2014; Hinz et al., 2018).
In addition, we showed that a general definition of life satisfaction (i.e., in terms of Item 3 “I am satisfied with my life”) was sufficient for investigating the relation with mental health, but a more differentiated view was beneficial for predicting physical health. Especially person-specific effects for Item 2 “The conditions of my life are excellent” contributed to the explanation of physical health next to a general construct definition. This might support the interpretation of person-specific item effects as secondary traits with substantial meaning (similar to so-called personality nuances; e.g., McCrae et al., 2019; Stewart et al., 2021).

Implications for Psychological Assessment

The study of person-specific item effects can support applied psychological assessments in several ways. As has been demonstrated in our application, the inclusion of person-specific item effects can guard against severe misspecifications of measurement models. This not only improves conventional indices of model fit but also prevents severe structural parameter bias in, for example, regression weights or explained variances (McClure et al., 2021; Rhemtulla et al., 2020). More importantly, when person-specific item effects are viewed as a secondary trait rather than mere measurement bias, they provide additional information on individual differences between respondents without requiring the administration of additional items. Consequently, modeling person-specific item effects allows for more parsimonious assessment instruments. Moreover, in contrast to previous research on the incremental contribution of single items for personality research (e.g., Achaa-Amankwaa et al., 2021; Stewart et al., 2021), our modeling approach managed to specify proper measurement models for each item effect. As such, systematic item–effect variables were distinguished from random measurement error and, thus, allowed examining true score effects with criterion variables. For such analyses, the interpretation and specific source of person-specific item effects are particularly important. To prevent ad hoc secondary analyses without carefully considering the conceptual questions,

Limitations and Directions for Future Research

The present study focused on the advantages of modeling person-specific item effects in the SWLS to strengthen the evidence on the impact of such effects for psychometric and substantive analyses. Accordingly, we emphasized its potential for applied psychological measurement and also provided the respective analysis code to aid similar analyses for future research. However, we readily acknowledge that the presentation of the technical details of our modeling approach in the Appendix was rather concise. A detailed psychometric introduction to modeling item–effect variables is given in Erhardt et al. (2022) and Thielemann et al. (2017), while the strengths of the LST-R theory, in general, are described, for instance, in Steyer et al. (2015), Geiser and Lockhart (2012), or Eid (1996). Moreover, the benefits of separating item–effect variables for investigating their source were recently also pointed out by van Bork et al. (2022). Furthermore, the presented model should be considered a starting point for future research. For example, one possible extension might be accounting for common method effects (e.g., Holtmann et al., 2020) or adjusting for explanatory variables (Thielemann et al., 2017) when investigating the validity of person-specific item effects. However, because the model is already rather complex, it remains to be seen whether these model extensions can be useful for applications on a broader scale. Another downside of the presented analyses with item–effect variables is their increased complexity in comparison to traditional unidimensional construct definitions in terms of (a) data requirements, (b) model specification, (c) model inspection, (d) subsequent analyses, and (e) scientific communication. For example, modeling item–effect variables in longitudinal data requires at least three measurement occasions for the same persons and items.
More latent variables have to be identified with specific model assumptions on the stability of the person-specific item effects that might or might not be violated in a specific situation. If multidimensionality is observed (i.e., substantial inter-individual differences are prevalent on item–effect variables), it is not clear without ancillary information whether these represent trait-relevant item content or rather some form of measurement bias such as motivational characteristics or specific response styles. It can also be computationally more demanding and more challenging to incorporate an item-based specification of a focal construct in substantive analyses, especially when the relations among multiple constructs are of interest. Finally, the requirements for a comprehensive reporting of respective results increase because various multivariate relations are possible and the choice of a specific identification scheme can substantially impact the disentangled information. Thus, even though the results on the person-specific item effects are promising in our application on the five-item SWLS, whether these advantages outweigh the potential drawbacks needs to be answered anew for each application and setting.

Conclusion

Recent advances in psychometric modeling allow in-depth evaluations of person-specific item effects beyond the common trait. The present study identified relevant item–effect variables in the SWLS and, more importantly, demonstrated their stability and incremental predictive validity. As such, we showed that the more nuanced construct definition in relation to the individual items could offer a much more detailed perspective for predicting mental and physical health outcomes. Although these modeling approaches require a profound psychometric understanding because they are substantially more complex than traditional unidimensional construct definitions, we believe that item–effect variables are a promising path for future research that allows more nuanced construct specifications and more detailed insights into psychological phenomena.

Supplemental Material

sj-docx-1-asm-10.1177_10731911221149949 – Supplemental material for The Predictive Validity of Item Effect Variables in the Satisfaction With Life Scale for Psychological and Physical Health

Supplemental material, sj-docx-1-asm-10.1177_10731911221149949 for The Predictive Validity of Item Effect Variables in the Satisfaction With Life Scale for Psychological and Physical Health by Marie-Ann Sengewald, Tina H. Erhardt and Timo Gnambs in Assessment

Footnotes

Appendix: Modeling Person-Specific Item Effects

In general, method effects can be present when assessing latent constructs with multi-item scales (e.g., Campbell & Fiske, 1959; Lord & Novick, 1968; Steyer et al., 2015). In cross-sectional data, multitrait-multimethod (MTMM) models can be used to account for homogeneous method effects that generalize across, for example, different items of a scale, like differences between positive and negative item formulations, or between multiple rater groups (e.g., Eid, 2000; Henninger & Meiser, 2020a, 2020b; Kam & Fan, 2020; Koch et al., 2018; Pohl et al., 2008). This restrictive assumption has been relaxed in longitudinal data to acknowledge method effects for individual items (e.g., Cogo-Moreira et al., 2021; Eid, 1996; Eid & Kutscher, 2014; Erhardt et al., 2022; Geiser & Lockhart, 2012; Holtmann et al., 2020; Marsh & Grayson, 1994; Thielemann et al., 2017). In the following, we investigate the multidimensionality that is implied by this approach and describe how multi-state models can be extended for including item–effect variables that disentangle item-specific variance components.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

Supplemental Material

Supplemental material for this article is available online.

Data Availability Statement

The study was not preregistered. The LISS panel data were collected by CentERdata (Tilburg University, The Netherlands) through its Measurement and Experimentation in the Social Sciences project (MESS) funded by the Netherlands Organization for Scientific Research. More information about the LISS panel can be found at: www.lissdata.nl. The data and materials are available at https://lissdata.nl, while the computer code is provided at https://osf.io/ekcqh/?view_only=7085df46f5494121b23eae9b3c28ace1.


References
