Abstract

Artificial intelligence (AI) systems need to adapt to changing circumstances to maintain relevance in dynamic environments. Inspired by the adaptive advantages of human forgetting, this study investigates the integration of a forgetting function into an AI system. We implemented this mechanism as a training window within the Cognitive Shadow (CS) system, an AI designed to learn and emulate human decision models. This training window hyperparameter—applicable to supervised machine learning algorithms—aims to address the issue of concept drift by prioritizing recent information. The effectiveness of this addition was tested with a simple strategy game similar in dynamics to rock-paper-scissors. Participants played individually against an AI opponent for three 60-round sessions. CS was trained during Session 1 to learn the decision patterns of the player and actively predicted and countered human decisions in Sessions 2 and 3. Analyses showed that including the training window significantly improved prediction accuracy in both Sessions 2 and 3 by emphasizing recent, relevant data. These findings highlight the potential of incorporating human-inspired forgetting mechanisms to enhance AI performance in interactive and dynamic environments, with implications for future decision support systems.

Introduction

The continued development of artificial intelligence has highlighted many limitations inherent to human cognitive abilities. Artificial intelligence systems have grown to be vastly more capable than humans at many tasks involving memory, attention and computation. Indeed, humans now find themselves unable to compete with refined AI in complex decision-making challenges such as chess or competitive video games (OpenAI et al., 2019). Although impressive, these accomplishments of AI are narrow and result from extensive training and specialization. Humans may still outperform AI in more naturalistic, complex and dynamic tasks where a high level of flexibility is needed (Korteling et al., 2021). For example, in tasks such as air traffic management or aircraft piloting, humans are still considered necessary, although they can be supported by AI systems to improve their performance (Bekier et al., 2011; Marois et al., 2023). We believe one reason for this human advantage in adaptability lies in our memory, and more specifically, our ability to forget.

Memory is radically different in computers when compared to humans. Where computers have a set amount of information that can be encoded, there is no such clear limitation in humans. Computers also retain information for as long as needed, with no clear analogue to human forgetting. In humans, memory is a cognitive function that seems to have evolved to help avoid potential threats by prioritizing the most relevant information (Korteling et al., 2021). One of the ways human memory remains focused on relevant and new information is by forgetting (Altmann & Gray, 2002). Indeed, forgetting allows more cognitive resources to be used on selected memories rather than competing ones (Kuhl et al., 2007). This is achieved by a process of interference, where new learning inhibits the retention of previous information (Wixted, 2004).

AI systems typically have no such automatic forgetting function, relying instead on humans to delete information that is no longer relevant or takes up too much space (Khan et al., 2019). Keeping as much information as possible is assumed to be optimal in most prediction contexts, as more data should typically only be beneficial. However, this approach can be flawed because it can occupy too much space, take too much time to compute, and fail to focus on the most relevant data (Timm et al., 2018). Furthermore, in complex and evolving contexts, a problem called “concept drift” can occur when the functional relationship between the inputs and the outputs of a model shifts over time, rendering past data deprecated or less relevant (Lu et al., 2019). Without adaptation to concept drift, AI predictions can become obsolete when the modeled pattern has shifted, either progressively or abruptly. Context attunement is therefore a desirable function in the presence of concept drift. Forgetting is one way to adapt to changing contexts or strategies by focusing on recent, relevant data.

In complex and dynamic tasks, AI systems are used to support and compensate for limitations in human decision-making. One approach, called policy capturing, aims to learn the decision-making pattern of a human agent to signal when a decision diverges from the captured policy (Nokes & Hodgkinson, 2017). Some work on policy capturing considers it important to integrate mechanisms approximating human information processing and analysis capabilities into AI policy capturing models (Labonté et al., 2021). This approach may have benefits in dynamic situations, because it allows AI systems to account for the imperfections of human cognition that are especially apparent in complex and unstable contexts (Gonzalez, 2024). Based on this approach and the apparent benefits of forgetting in humans, such as increased context attunement (Nørby, 2015), we hypothesize that adding a feature analogous to forgetting to an AI policy capturing system would improve its predictive performance.

Cognitive Shadow (CS) is an AI system that continuously learns the decision pattern of an individual during tasks that require a decision or judgment. Outputs can be either nominal (e.g., a class, diagnosis or choice) or values on a continuous scale, such as estimations, forecasts or control settings (e.g., speed or depth) (Lafond et al., 2013; 2019). It is a prototype policy-capturing system developed by Thales that relies on seven automated supervised machine learning algorithms: logistic regression, decision trees, k-nearest neighbors, neural networks, naive Bayes, random forests, and support vector classifiers. These algorithms are trained in real time following each decision by Python scripts using the Scikit-Learn library. The system builds decision models based on the analysis of decisions made by humans in defined situations with pre-established parameters. The decision models thus constructed can be used to predict future decisions. This system has been tested in various contexts, including dynamic tasks such as anti-submarine warfare (Labonté et al., 2021) and naval anti-air defense (Labonté et al., 2020).

To enhance the predictive capabilities of CS, a new capability inspired by human cognition was added to its model as a hyperparameter: the ability to effectively forget, momentarily, some of the information gathered by the system. This new hyperparameter took the form of a training window, meaning that CS automatically chooses whether to train on all available data or only on a more recent subset when making a prediction. Based on this hyperparameter, the opponent modeling system can decide to consider all data, or the 80%, 60%, 40%, or 20% most recent data. The best data cutoff value is determined like any machine learning hyperparameter, through a cross-validation loop that tests different values and selects the one leading to the best predictive accuracy (Bischl et al., 2023). This was designed to enhance performance by allowing the system to learn only from relevant information, mimicking in some ways forgetting in humans.
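The selection of a training window can be illustrated with a minimal sketch. It is not the CS implementation: the learner is a simple frequency table standing in for the seven Scikit-Learn algorithms, the validation scheme is a single chronological holdout rather than the cross-validation loop used by CS (whose exact form is not described here), and all function names are hypothetical. Only the candidate fractions (100%, 80%, 60%, 40%, 20%) come from the text.

```python
from collections import Counter, defaultdict

# Candidate window sizes named in the text: all data, or the most recent 80/60/40/20%.
WINDOW_FRACTIONS = (1.0, 0.8, 0.6, 0.4, 0.2)


def most_recent(rows, fraction):
    """Keep only the most recent `fraction` of time-ordered rows (oldest first)."""
    keep = max(1, round(len(rows) * fraction))
    return rows[-keep:]


def fit_frequency_model(rows):
    """Stand-in learner (assumption): most frequent label per context, global fallback."""
    by_context = defaultdict(Counter)
    overall = Counter()
    for context, label in rows:
        by_context[context][label] += 1
        overall[label] += 1
    fallback = overall.most_common(1)[0][0]
    table = {c: counts.most_common(1)[0][0] for c, counts in by_context.items()}
    return lambda context: table.get(context, fallback)


def accuracy(model, rows):
    """Share of rows whose label the model predicts correctly."""
    return sum(model(context) == label for context, label in rows) / len(rows)


def select_window(rows, holdout=10):
    """Score each window on the latest `holdout` rows and return the best fraction."""
    train, valid = rows[:-holdout], rows[-holdout:]
    scores = {
        fraction: accuracy(fit_frequency_model(most_recent(train, fraction)), valid)
        for fraction in WINDOW_FRACTIONS
    }
    # max() returns the first best candidate, so ties favour the larger window.
    best = max(WINDOW_FRACTIONS, key=lambda fraction: scores[fraction])
    return best, scores
```

With a decision history that switches abruptly from one spell to another, older rows dominate the larger windows and the smallest window scores best, which is the situation the training window is meant to handle.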

In order to test decision-making in dynamic situations, computer simulations, or microworlds, are often used (Gonzalez et al., 2005). The policy capturing performance of AI systems can also be tested in these microworlds (Labonté et al., 2020). Video games can offer a similar avenue for this same purpose, as many require dynamic decision-making. In the video games literature, some studies have explored policy capturing, using the term player modeling, to increase engagement in various ways. One approach is to use the player model to create an opponent that predicts and counters future actions (Pfau et al., 2019). A first study showed that CS could be used in this way effectively (Lavoie-Hudon et al., 2021). This player modeling paradigm was found to be a useful medium in which to test the predictive performance of CS.

Previous work on adversarial AI in gaming has focused on building more adaptable and complex opponents to engage players. AI opponents have evolved from simple rule-based systems to using more advanced techniques such as reinforcement learning (e.g., OpenAI et al., 2019). Dynamic difficulty adjustment systems have also been explored to maintain player engagement by modifying AI behavior in real time (Yannakakis & Togelius, 2015). However, these approaches often struggle to create opponents adapted to players regarding both their difficulty and strategy. This study extends these efforts by integrating a forgetting mechanism inspired by human cognition into an AI system used for player modeling. This approach prioritizes recent and relevant player data, enhancing adaptability in adversarial decision-making.

Objective

To our knowledge, the use of AI incorporating a training window to anticipate and counter the future actions of a player has not yet been tested or documented. The purpose of this study is to determine whether the addition of a training window improves the adaptation of an AI system to human decisions in a complex and dynamic system. Thus, this paper tests a new version of CS with this addition against a version without it to predict human decisions in a strategy game. We hypothesize that the integration of a training window into CS will allow it to predict and counter human decisions more accurately.

Method

To address the objective of this study, a relatively simple strategy game was developed. The experiment was conducted with human participants playing against a version of CS without the training window. The data gathered from these participants were then analyzed to test the predictive accuracy of CS with and without the training window.

Strategy Game

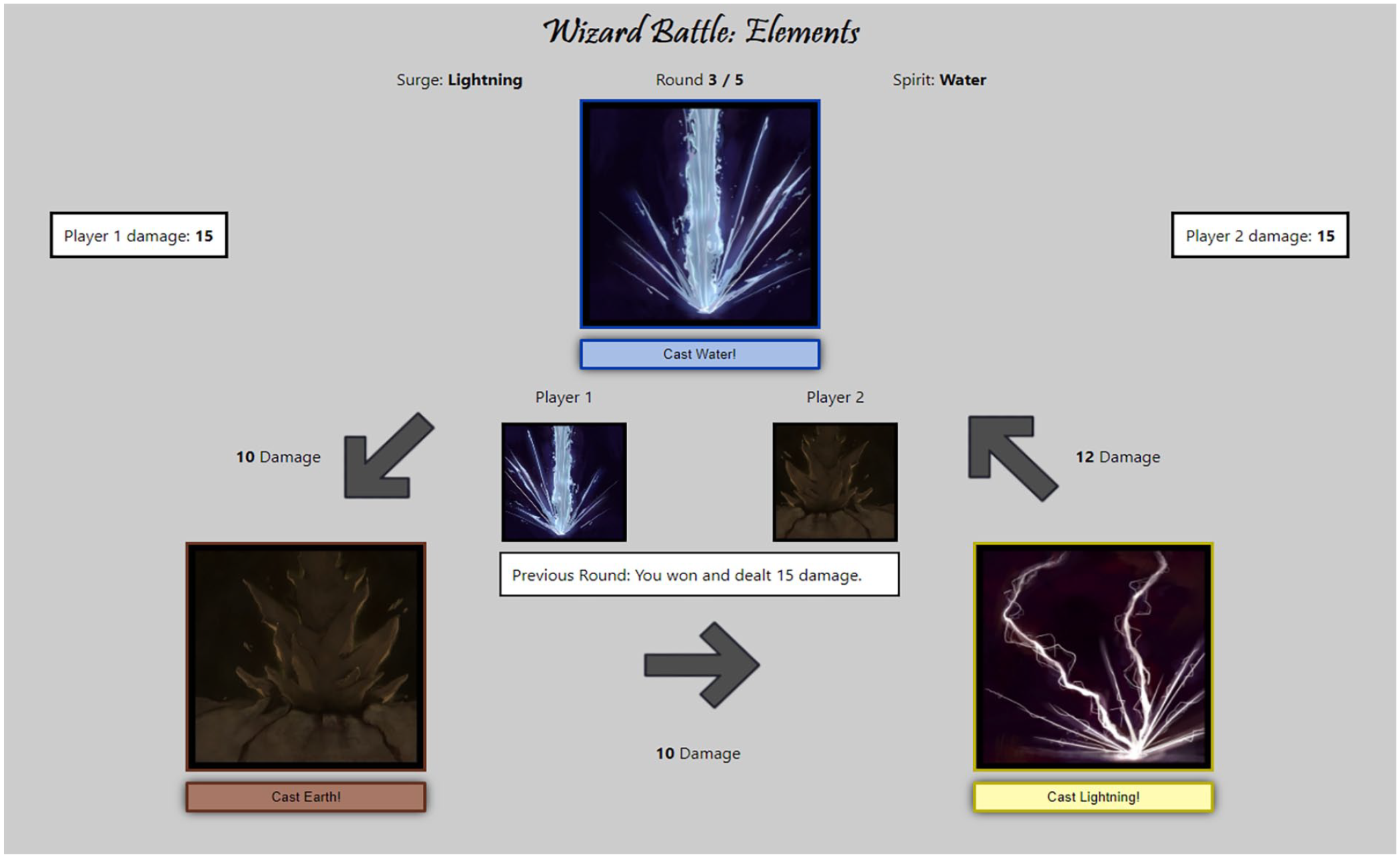

A game named Wizard Battle: Elements was built for this experiment. It featured a duel between a human player (Player 1) and an artificial opponent (Player 2), controlled by CS. The game interface is presented in Figure 1. The principles of the game are based on rock-paper-scissors. The game is played in sequences of five rounds called battles. In each round of a battle, players take additive damage if they lose. The players must pick between a water, earth or lightning spell to cast each round. As in rock-paper-scissors, each spell defeats another spell, ties against itself and loses against the remaining spell. A baseline of 10 damage is dealt to the player who loses a round.

Figure 1. Interface of the Wizard Battle: Elements game.

This game also adds a layer of complexity to rock-paper-scissors through two contextual modifiers that affect the damage inflicted by each spell. The first variable, named Surge, adds 5 damage points to the affected spell, whereas the Spirit variable reduces damage by 3 points if the player who chose the affected spell loses the round. Either every round (Surge) or every battle (Spirit), these variables randomly affect one of the three spells. To win a battle, a player must have the lowest amount of damage accumulated after five rounds. This game was designed to allow for strategy and concept drift while maintaining experimental control.
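The damage rules above can be sketched as a short function. Two points are assumptions rather than facts from the game: the dominance cycle (the text does not say which spell beats which) and the reading that Surge applies when the winning spell is the affected one, while Spirit applies when the losing player's spell is the affected one. The names are hypothetical.

```python
BASE_DAMAGE = 10       # baseline damage dealt to the loser of a round
SURGE_BONUS = 5        # added when the winning spell is the Surge-affected spell
SPIRIT_REDUCTION = 3   # subtracted when the loser cast the Spirit-affected spell

# Hypothetical dominance cycle: key beats value. The actual cycle is not given.
BEATS = {"water": "lightning", "lightning": "earth", "earth": "water"}


def resolve_round(p1_spell, p2_spell, surge_spell, spirit_spell):
    """Return (damage_to_player_1, damage_to_player_2) for a single round."""
    if p1_spell == p2_spell:
        return (0, 0)  # a spell ties against itself
    p1_wins = BEATS[p1_spell] == p2_spell
    winner_spell, loser_spell = (p1_spell, p2_spell) if p1_wins else (p2_spell, p1_spell)
    damage = BASE_DAMAGE
    if winner_spell == surge_spell:
        damage += SURGE_BONUS
    if loser_spell == spirit_spell:
        damage -= SPIRIT_REDUCTION
    return (0, damage) if p1_wins else (damage, 0)
```

Summing these per-round values over the five rounds of a battle gives the accumulated damage that decides the winner.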

Player Modeling Parameters

As mentioned, CS analyzes the decisions of participants to model them using supervised learning algorithms. We selected 13 parameters that may be considered by players when deciding which spell to cast. These included the Surge and Spirit variables, the number of rounds played in the current battle (out of five) and the cumulative damage difference between the two players. Nine additional parameters were considered, which correspond to the spells cast by each player in the last three rounds and the result of these three rounds (win, loss or tie).
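As an illustration of how these 13 parameters might be assembled for one decision, the sketch below builds a feature dictionary from the current game state and the round history. The field names, the `None` padding for rounds that have not yet been played, and the encoding of values are assumptions; the text only specifies which 13 quantities were used.

```python
def build_features(surge_spell, spirit_spell, round_in_battle, damage_diff, history):
    """Assemble the 13 inputs for one decision.

    `history` is a list of (player_spell, opponent_spell, result) tuples,
    oldest first, where result is "win", "loss" or "tie" for the player.
    """
    features = {
        "surge_spell": surge_spell,          # spell affected by Surge this round
        "spirit_spell": spirit_spell,        # spell affected by Spirit this battle
        "round_in_battle": round_in_battle,  # rounds played in the battle (of five)
        "damage_diff": damage_diff,          # cumulative damage difference
    }
    recent = list(history[-3:])
    # Assumption: missing earlier rounds are padded with None.
    padded = [(None, None, None)] * (3 - len(recent)) + recent
    for lag, (player, opponent, result) in zip((3, 2, 1), padded):
        features[f"player_spell_lag{lag}"] = player
        features[f"opponent_spell_lag{lag}"] = opponent
        features[f"result_lag{lag}"] = result
    return features
```

Categorical values such as spell names would still need to be encoded numerically before being passed to most supervised learning algorithms.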

Participants

A total of 92 participants were involved in the experiment. All of them were students or employees from Université Laval who received 10 CAD for their one-hour participation in the experiment. Because of issues in data collection, six participants were excluded from the analyses, leaving a total of 86 participants (42 men, mean age = 26.8) with valid data.

Procedure

The experiment was conducted entirely online, with participants and the experimenter communicating through Microsoft Teams. The game was developed as a web application, which allowed participants to play remotely, while allowing data to be automatically sent to the CS server through a representational state transfer application programming interface (REST API).

To ensure the success of the experiment, participants shared their screens with the experimenter, who guided the players through the different stages of the experiment. Importantly, participants were not informed at first of the true purpose of the experiment. They were told during recruitment and until the last session of the experiment that the study was conducted to test a new platform that would be used to run decision-making experiments. They were not told who or what their opponent was and had no indication that the system was learning based on their decisions. Before playing, participants read a visual tutorial explaining the game rules. Then, they played two practice battles excluded from the analyses to become familiar with the functioning of the web platform. They were instructed to play using a strategy of their choice to try to win the most rounds. The experiment lasted three sessions of 60 rounds each.

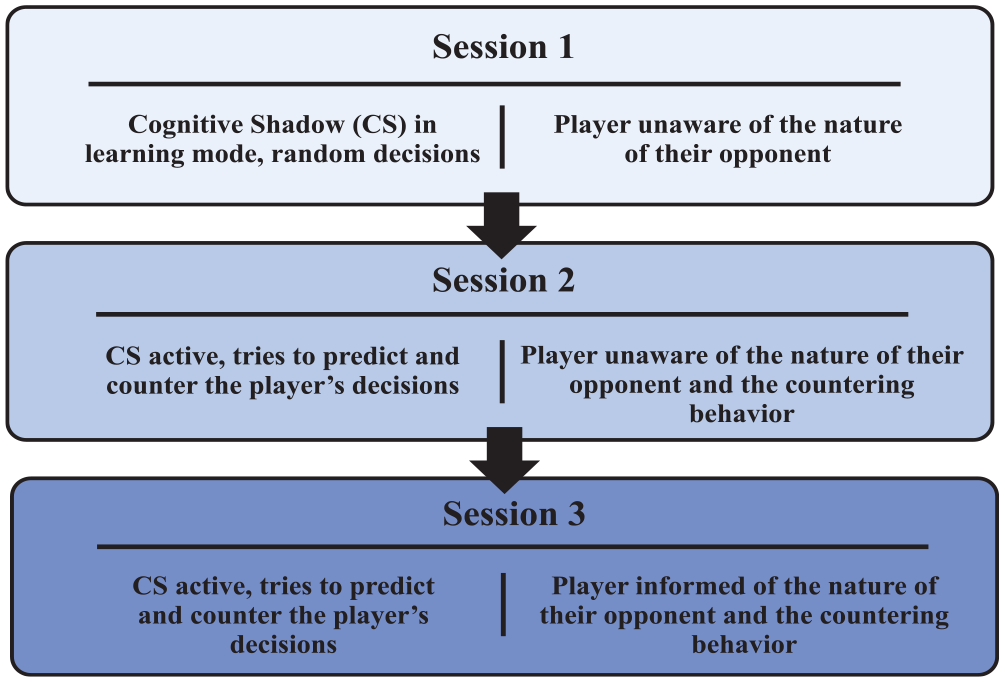

The experimental design is presented in Figure 2. In Session 1, CS was in learning mode, so spells cast by the artificial opponent were chosen randomly. In Session 2, CS became active. Without the player being aware of it, CS tried to counter their decisions by predicting their choice of spell and selecting the spell that would beat it. Before Session 3, the participants were informed of the nature of their opponent and the countering behavior. Participants were invited to take this new information into consideration to defeat CS in Session 3.
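The opponent behavior across sessions can be summarized in a few lines. This is a sketch under assumptions: the dominance cycle is hypothetical (the text does not say which spell beats which), and `predict_player_spell` is a placeholder for whatever model CS uses to predict the next spell.

```python
import random

SPELLS = ("water", "earth", "lightning")

# Hypothetical cycle: value is the spell that beats the key.
BEATEN_BY = {"water": "earth", "earth": "lightning", "lightning": "water"}


def choose_opponent_spell(session, predict_player_spell, features, rng=random):
    """Pick the opponent's spell for the current round.

    Session 1: learning mode, so the spell is drawn at random.
    Sessions 2 and 3: active mode, so the predicted player spell is countered.
    """
    if session == 1:
        return rng.choice(SPELLS)
    predicted = predict_player_spell(features)
    return BEATEN_BY[predicted]
```

For instance, `choose_opponent_spell(2, lambda features: "water", {})` returns the spell that beats water under the assumed cycle.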

Figure 2. Behavior of CS and information given to the player throughout the three experimental sessions.

Results

Using data collected from the experiment, analyses were performed to assess the predictive accuracy of the models with and without the training window. Paired samples t-tests were conducted to detect differences between the accuracy with and without the training window. The alpha level used was .05. Session 1 is not presented here as CS was in its training phase.

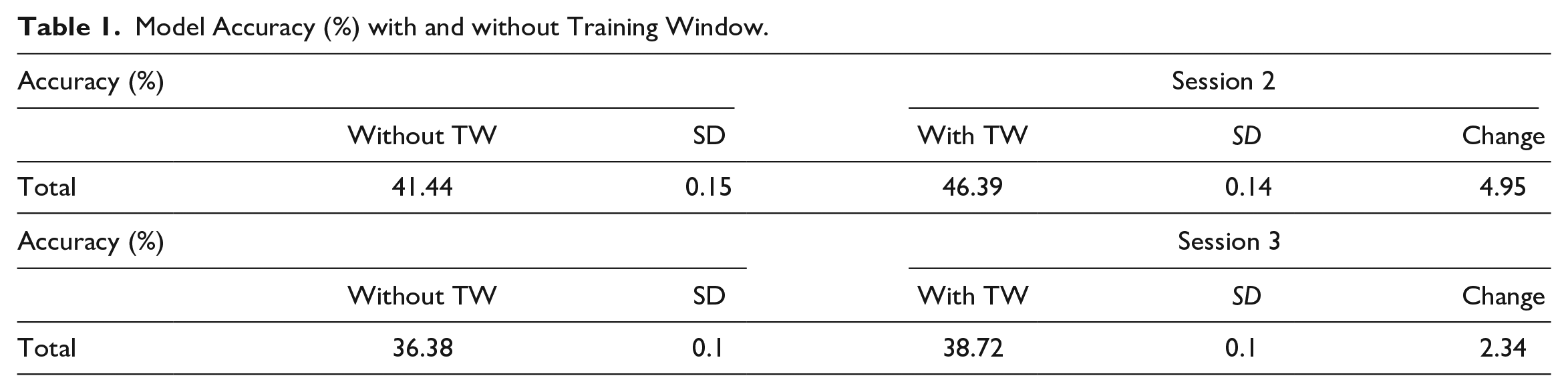

Results show that in Sessions 2 and 3, the average accuracy increased with the addition of the training window. As shown in Table 1, in Session 2, accuracy increased from 41.44% to 46.39%, t(85) = 4.645, p < .001, d = .501. In Session 3, accuracy increased from 36.38% to 38.72%, t(85) = 2.655, p = .005, d = .286. The results also show lower accuracy in Session 3 compared to Session 2, both with the training window, t(85) = 4.626, p < .001, d = .499, and without it, t(85) = 2.834, p = .003, d = .306.
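The reported effect sizes appear consistent with the common paired-samples convention d = t / √n, with n = 86 participants. This is an inference from the reported numbers, not a formula stated in the text. The sketch below recomputes d from each reported t value and also shows how a paired t statistic is obtained from per-participant accuracies; the function names are hypothetical.

```python
import math
import statistics


def paired_t(before, after):
    """Paired-samples t statistic for two equal-length lists of scores."""
    diffs = [a - b for a, b in zip(after, before)]
    n = len(diffs)
    return statistics.mean(diffs) / (statistics.stdev(diffs) / math.sqrt(n))


def cohens_d_from_t(t_value, n_pairs):
    """Effect size for paired data under the d = t / sqrt(n) convention."""
    return t_value / math.sqrt(n_pairs)


N_PAIRS = 86  # t(85) implies 86 paired observations

# Reported (t, d) pairs from the Results paragraph above.
REPORTED = {
    "Session 2, window vs. no window": (4.645, 0.501),
    "Session 3, window vs. no window": (2.655, 0.286),
    "Session 2 vs. 3, with window": (4.626, 0.499),
    "Session 2 vs. 3, without window": (2.834, 0.306),
}

for label, (t_value, d_reported) in REPORTED.items():
    d_recomputed = round(cohens_d_from_t(t_value, N_PAIRS), 3)
    print(f"{label}: recomputed d = {d_recomputed}, reported d = {d_reported}")
```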

Table 1. Model Accuracy (%) with and without Training Window.

Discussion

The current paper investigated the effects of adding a new feature analogous to human forgetting to a policy-capturing AI system. The ability of the system to predict and counter players in a strategy game was compared with and without this new training window feature. The results show an improvement in predictive accuracy in both sessions in which CS was active. This indicates that the addition of the training window to CS increased its predictive performance. This gain in predictive accuracy was maintained across both sessions, although the increase was greater in Session 2 compared to Session 3. The results also show that CS generally produced more accurate predictions during Session 2 compared to Session 3.

The improvement in predictive accuracy when adding the training window supports our hypothesis and suggests that this new feature enables better adaptation to dynamic decision-making contexts. With this forgetting function, CS selectively focused on fewer, but more recent and relevant data, improving its ability to capture decision patterns. This mechanism parallels human memory processes, where prioritization of recent experiences aids decision-making in rapidly changing situations (Nørby, 2015). The successful use of this mechanism for improving policy capturing performance supports human factors approaches advocating the integration of mechanisms approximating human capabilities into policy capturing models (Gonzalez, 2024).

The different results between Session 2 and Session 3 could be explained by differences in decision patterns across these two sessions. In Session 2, participants were not aware they were playing against an AI system. Therefore, they had changing but relatively stable decision patterns, which were easier for CS to learn. In Session 3, participants had just been told that they were playing against an AI opponent that attempted to counter their decisions. They could then try to intentionally confuse CS in an effort to win more rounds and were invited to do so. The resulting changes in strategy could explain the decrease in accuracy. Despite this additional challenge in the last session, the addition of the training window still yielded a significant benefit. These results demonstrate the robustness of the training window even under challenging conditions, as well as its ability to continue adapting when prior data become less predictive due to strategic shifts or participant deception. Overall, the opponent modeling method successfully gave an edge to the AI player (prediction accuracy up to 13 percentage points above the chance rate of 33%) despite the adversarial context, which made policy capturing considerably more challenging. Deliberate or even involuntary regularities in human player behavior were thus partly learned by CS, and more so when concept drift was managed with the training window.

One limitation to consider is that CS works for classification and regression tasks, but not for complex sequential decision-making tasks that would require the use of reinforcement learning models (which would also require more data). The simplicity of the game used in this study also presents limits. Although controlled environments are advantageous for studying human-AI interaction, the results may not be fully applicable to real-life decision-making situations.

Future Directions

Future directions for this work include improving upon the forgetting function created for this study. The effectiveness of this simple feature has been demonstrated, but it only partly replicated the complex nature of forgetting in human memory. One potential improvement would be to add a memory library, which would save multiple past strategies/patterns that could then be recovered. Other improvements based on human memory such as giving additional weight to the decision-making patterns exhibited early in the experiment could also be explored.

Future directions could also include investigating further the reactions of players facing player modeling systems in video games. Although this paper focuses on the policy capturing performance of the system, using CS as an opponent has already been shown to enhance player engagement (Lavoie-Hudon et al., 2021). Studying other aspects of player reactions, such as changes in decision-making patterns, could also be informative. For example, the observed performance differences between Sessions 2 and 3 may be indicative of important changes in behavior when players know they are facing AI. Metacognitive processes may then be involved in recognizing their own individual strategy and countering the AI system (Nicolay et al., 2022). Such reactions could have implications for the design of future decision support systems.

Moreover, the reactions of players to features inspired by human cognition such as a forgetting function have yet to be tested. Evaluating the effects of such features may provide critical insights into human-AI interactions. For instance, reactions could be influenced by factors such as anthropomorphism (Epley et al., 2007). Progressively adding features that replicate aspects of human thinking to a player modeling system could reveal more about how players relate to different AI systems. Other cognitively inspired features such as one analogous to human limits on cognitive load indeed warrant investigation (Cowan, 2010). This could improve the real-time performance of AI systems through better computation management. These improvements would then likely also influence the experience of players.

Conclusion

In summary, the addition of a training window to an existing AI system enhanced its performance in a player modeling context. These results show that adding a function that is analogous to forgetting to an AI system can improve its ability to capture human decision-making patterns. This can be applied to many contexts where modeling decisions is seen as valuable, including video games, defense and security and healthcare. More generally, these findings support the addition of features based on human cognition to policy-capturing AI systems for studying human-AI interactions.

Acknowledgements

We thank Jean-Sébastien Thivierge and Jiye Li from Thales for software implementation and data analysis, as well as Jodie Simoneau for assistance with data collection. Finally, we thank Vincent Poiré and Mika Dubé for creating the game interface.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research received financial support in the form of a scholarship from the Natural Sciences and Engineering Research Council of Canada (NSERC) awarded to Coralie Bureau, and of a DND-NSERC partnership grant awarded to Sébastien Tremblay and to Heather Neyedli with Defence Research and Development Canada - Atlantic and Thales (grant DNDPJ518400-17).