Abstract

A major challenge in employing questionnaires is ensuring that respondents understand them. One possible strategy is to explain the meaning of items, but doing so risks biasing respondents’ answers. In this study, we assessed how explaining the meaning of items to respondents might affect their responses and the validity and reliability of the Positive System Usability Scale (PSUS). We employed straightforward, general explanations that we felt matched the intended meaning of the items. We found a statistically significant difference between the Explanations and No Explanations groups, but we judged this effect as not practically significant. We found the validity and reliability of the questionnaires from both groups to be acceptable. Finally, the reliability of the PSUS questionnaires given to the Explanations group was systematically higher than that of the No Explanations group, suggesting that correctly explaining items may enhance the reliability of questionnaires, perhaps by enhancing respondents’ understanding of the questionnaire items.

Introduction

Subjective questionnaires are an instrumental tool in the application and research of usability. Questionnaires are valuable because, when applied well, they are easy to administer, require little time, facilitate comparisons between products, and provide a way to summarize results and communicate them to non-expert stakeholders. One of the greatest hindrances to their use is that respondents may not understand the items. Such misunderstanding produces uninformative ratings, which can lead to incorrect results (e.g., failing or passing a product when it should not be) or to puzzling response patterns (e.g., all 3s). Investigators are limited as to how they can solve this problem.

The System Usability Scale (SUS; Brooke, 1996) is a prime example of this challenge and how it might be addressed. Finstad (2006) found that one SUS item (“I found the system very cumbersome to use”) was difficult to understand for respondents whose primary language was not English. His recommended solution was to substitute the word “awkward” for “cumbersome” to increase readability. Bangor et al. (2008) supported this modification, reporting that it drastically reduced the number of questions they received when administering the SUS. This represents a rare case in which changing the wording of an item is recommended. Generally, such changes are discouraged because modifications to previously validated scales can change their interpretation and render the psychometric qualities of a scale unknown.

To mitigate careless responding and acquiescence bias, the SUS was created using an alternating item format so that half of the items are positively worded and the other half are negatively worded (Sauro & Lewis, 2011). However, it has been found in practice that the alternating item format may lead to misinterpretation, response mistakes, and miscoding by the scorer (Sauro & Lewis, 2011). To address these issues, Sauro and Lewis (2011) created the Positive System Usability Scale (Positive SUS), which converts all the negatively worded SUS items into positive statements. They compared the response results between the Positive SUS and the original SUS and found no detectable difference in response patterns or mean scores. In contrast to the single-word substitution of Finstad (2006), this approach is much more resource intensive, and thus many investigators may not be able to pursue questionnaire modification and validation.

Another option is to adopt a different measurement instrument, but investigators may be impeded by limited time or knowledge of alternatives. Yet another strategy is to explain the meaning and intent of items to respondents. This may take many forms, including an overview of the interpretation of items, answering clarifying questions from respondents, or giving written instructions on how to read and interpret questionnaire content. Although faster and easier to apply than other options, these strategies risk introducing the administrator’s bias and influencing responses to the items.

In this study, we examined arguably the most benign form of this final strategy: explaining the relevance and meaning of questionnaire items without comment on directionality (e.g., without saying that a product would be lower on a given aspect). We explored how these explanations may affect response patterns, the psychometric qualities (reliability and validity) of the usability scale, and how helpful these explanations were to respondents.

Method

Design

Sixty participants retrospectively rated 10 products (selected from Kortum & Bangor, 2013) using the Positive SUS (Sauro & Lewis, 2011). We also used the Adjective Rating Scale to measure the convergent validity of ratings in both conditions (Kortum & Bangor, 2013). We randomly assigned participants into two groups. The No Explanations group rated products without any additional information. The Explanations group received the PSUS with 10 statements, each coupled to a Positive SUS item, explaining the item’s intended meaning.

Afterwards, both groups rated how understandable the Positive SUS items were (“The statements I answered about each product were understandable”) and how well the explanations matched their interpretations (“The supplemental information matched with my interpretation of this statement”; asked 10 times, once for each item-explanation coupling). We gauged the Explanations group’s perceived helpfulness of the explanations (“The supplemental information was helpful for me to understand the statements”; “I feel confident that I could have answered the statements without the supplemental information”). After they had rated all the products, we asked the No Explanations group how helpful the explanations would have been for them (“The supplemental information would be helpful for me to understand the statements”; “I would have an easier time in answering the statements with the supplemental information”).

We asked both groups if their responses would have differed based on the absence (Explanations; “My responses would have been the same if I had not been given the supplementary information”) or addition (No Explanations; “My responses would be the same if I had been given the supplemental information”) of the explanations. Responses were collected with a scale ranging from 1 “Strongly disagree” to 5 “Strongly agree.”

Participants

We recruited 60 undergraduate students from Rice University using the online participant portal, SONA. Participants ranged from 18 to 25 years old. There were 11 males and 46 females. Two participants identified themselves as non-binary, and one participant preferred not to disclose their gender identity. Students reviewed the IRB-approved informed consent form before participating in the study. Students received partial course credit upon completion of the study.

Materials and Procedure

Participants were randomly assigned to retrospectively rate 10 products using one of two versions of an online Qualtrics survey, one without any additional information on the meaning of items (the No Explanations group) and the other with additional information (the Explanations group). The 10 products were selected from products that Kortum and Bangor (2013) identified as ubiquitous, best-in-class, and spanning software, hardware, and web-based systems. Some products on the list were excluded from the study because they are no longer commonly used (e.g., landlines). The 10 chosen products were Amazon’s website, ATMs, Microsoft Excel, Gmail, Google Search, iPhones, microwaves, Microsoft PowerPoint, the Nintendo Wii, and Microsoft Word. Consistent with the recommendation from Sauro (2011), the products’ names were substituted for the word “system” in each corresponding PSUS scale to increase readability. Responses were captured on a 5-point Likert scale from 1 “Strongly disagree” to 5 “Strongly agree.”

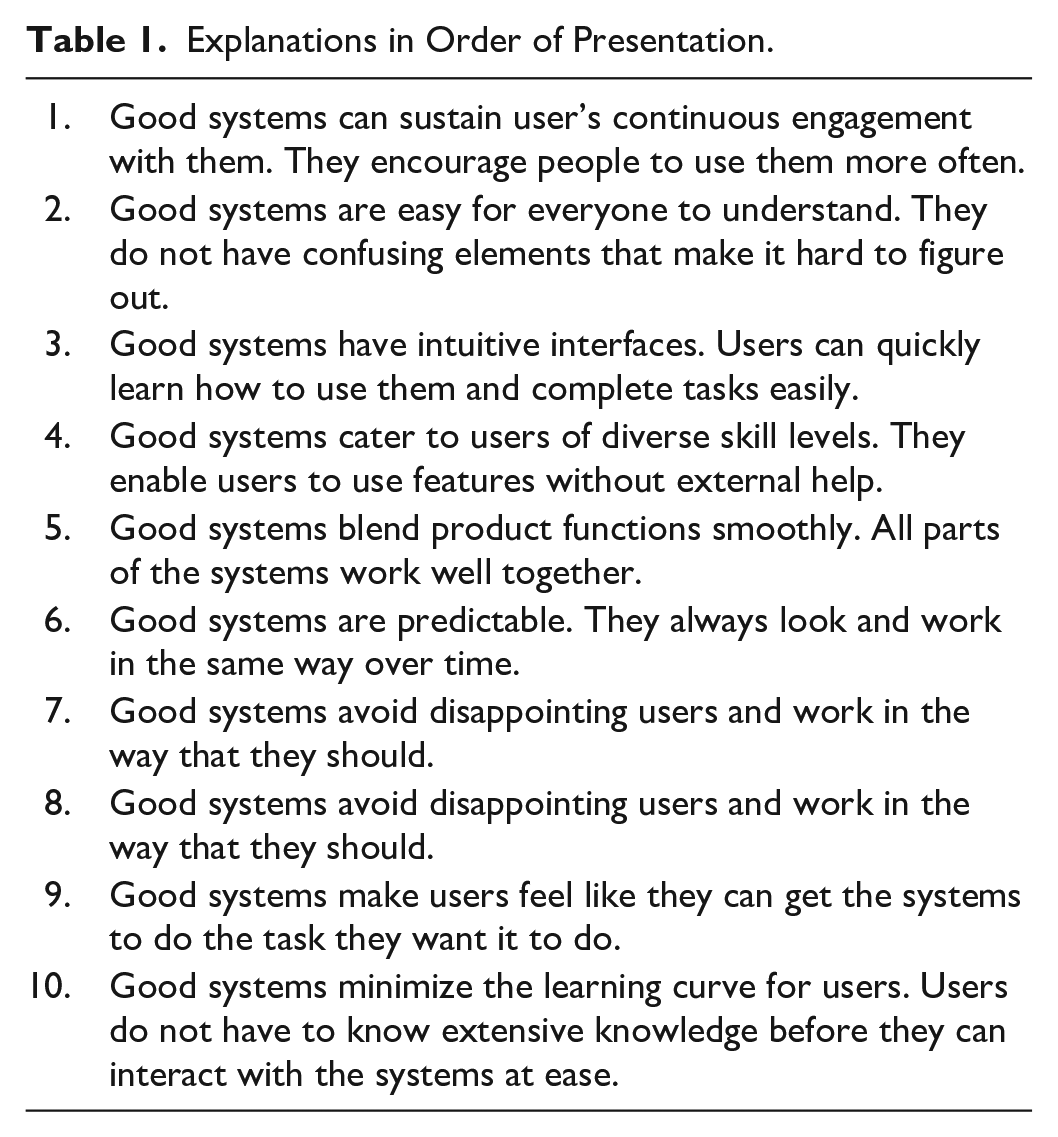

In the No Explanations version of the task, the rating of each product was composed of three parts. First, participants reported their level of experience with the product (“I don’t use the product”; Anchors: low, medium-low, medium, medium-high, or high). Second, the Positive SUS (Sauro & Lewis, 2011) was presented to measure participants’ perceived product usability. The Positive SUS is a 10-item scale with responses ranging from 1 “Strongly disagree” to 5 “Strongly agree.” Third, the Adjective Rating Scale (Bangor et al., 2009) was presented as a single question on which participants rated usability by choosing one of seven adjectives (Anchors: Worst imaginable, Awful, Poor, OK, Good, Excellent, Best imaginable). Participants who reported having no experience with a product were not presented with the Positive SUS or Adjective Rating Scale for that product. The presentation order of the 10 products was randomized. The Explanations version was the same as the No Explanations version, except that additional information was provided to explain the meaning and relevance of each Positive SUS item. Each explanation (Table 1) was coupled to a Positive SUS item. The explanations were written to be below an 8th-grade level as evaluated with the Flesch-Kincaid (FK) readability test (Flesch, 1948).

Explanations in Order of Presentation.

Results

Positive SUS Scores

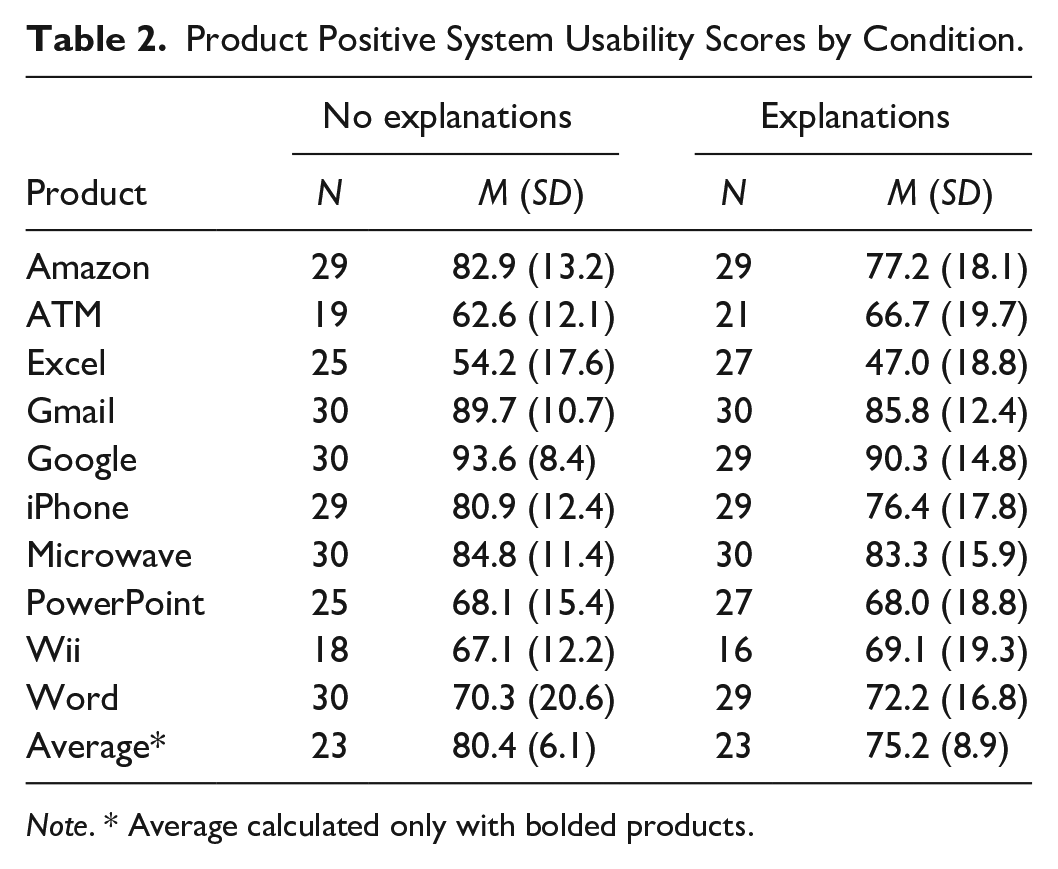

The average Positive SUS scores for each product by condition are recorded in Table 2. After eliminating participants with missing data, data from 46 participants and 8 of the products (bolded in Table 2) were retained for a mixed ANOVA. Effect sizes are reported as generalized eta squared (GES). The interaction between Condition and Product was not statistically significant, F (7, 308) = .75, p = .63, GES = .01. There was a statistically significant main effect for Condition (Explanations vs. No Explanations), F (1, 44) = 5.40, p = .03, GES = .03. The main effect for Product was also statistically significant after a Huynh-Feldt sphericity correction, F (5.0, 220.1) = 45.78, p < .001, GES = .43.
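The fractional degrees of freedom reported for the Product effect follow from how a sphericity correction works: both the effect and error degrees of freedom are multiplied by an epsilon estimate. The sketch below reproduces that arithmetic. The epsilon value is not reported in the text; it is back-calculated here from the reported error degrees of freedom (220.1 / 308), so it should be read as an assumption, and the function name is hypothetical.

```python
def sphericity_corrected_df(n_levels, between_error_df, epsilon):
    """Return (effect df, error df) for a within-subject effect after
    multiplying both by a sphericity epsilon (e.g., Huynh-Feldt)."""
    df_effect = n_levels - 1                  # 8 products -> 7
    df_error = df_effect * between_error_df   # 7 * 44 -> 308
    return epsilon * df_effect, epsilon * df_error


# Assumption: epsilon inferred from the reported corrected error df.
implied_epsilon = 220.1 / 308                 # roughly 0.71

df_effect, df_error = sphericity_corrected_df(8, 44, implied_epsilon)
print(round(df_effect, 1), round(df_error, 1))
```

With eight products and 44 between-subject error degrees of freedom, the uncorrected values are 7 and 308, which match the degrees of freedom reported for the uncorrected interaction test.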

Product Positive System Usability Scores by Condition.

Note. * Average calculated only with bolded products.

In practical terms, the difference between the groups’ average Positive SUS ratings was 5.2 points, or about half a letter grade on the SUS letter grade scale (Bangor et al., 2009). On the raw scale this amounts to about 2 ticks on average. Put another way, participants in the Explanations group scored two items one tick lower or one item two ticks lower on average. This difference was observed for five of the products (Amazon, Excel, Gmail, Google, and iPhone). The average Microwave and PowerPoint PSUS scores differed by only about 1 point or less between the two groups. The average Word PSUS score was higher for the Explanations group than for the No Explanations group, showing that the overall difference was not consistent across products.
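The conversion between scaled points and raw response ticks can be sketched as follows. This is a minimal illustration, assuming the usual all-positive scoring rule (subtract 1 from each of the ten 1-to-5 responses, sum, and multiply by 2.5); the function names are hypothetical and are not taken from the study's analysis code.

```python
def positive_sus_score(responses):
    """Score one all-positive SUS questionnaire on the 0-100 scale.

    Assumed rule: each 1-5 response contributes (response - 1) raw
    points, and the raw sum (0-40) is multiplied by 2.5.
    """
    if len(responses) != 10:
        raise ValueError("expected exactly 10 item responses")
    if any(r not in (1, 2, 3, 4, 5) for r in responses):
        raise ValueError("responses must be integers from 1 to 5")
    raw = sum(r - 1 for r in responses)
    return raw * 2.5


def points_to_ticks(points):
    """Convert a difference in scaled points to raw response ticks."""
    return points / 2.5


print(positive_sus_score([4] * 10))   # all items rated 4
print(points_to_ticks(5.19))          # the observed group difference
```

Under this rule, one tick on a single item is worth 2.5 scaled points, which is why a difference of about 5 points corresponds to roughly two ticks spread across the ten items.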

The main effect for Product was not analyzed further because it was neither surprising (these products were expected to differ from one another) nor of interest to our research question.

Reliability

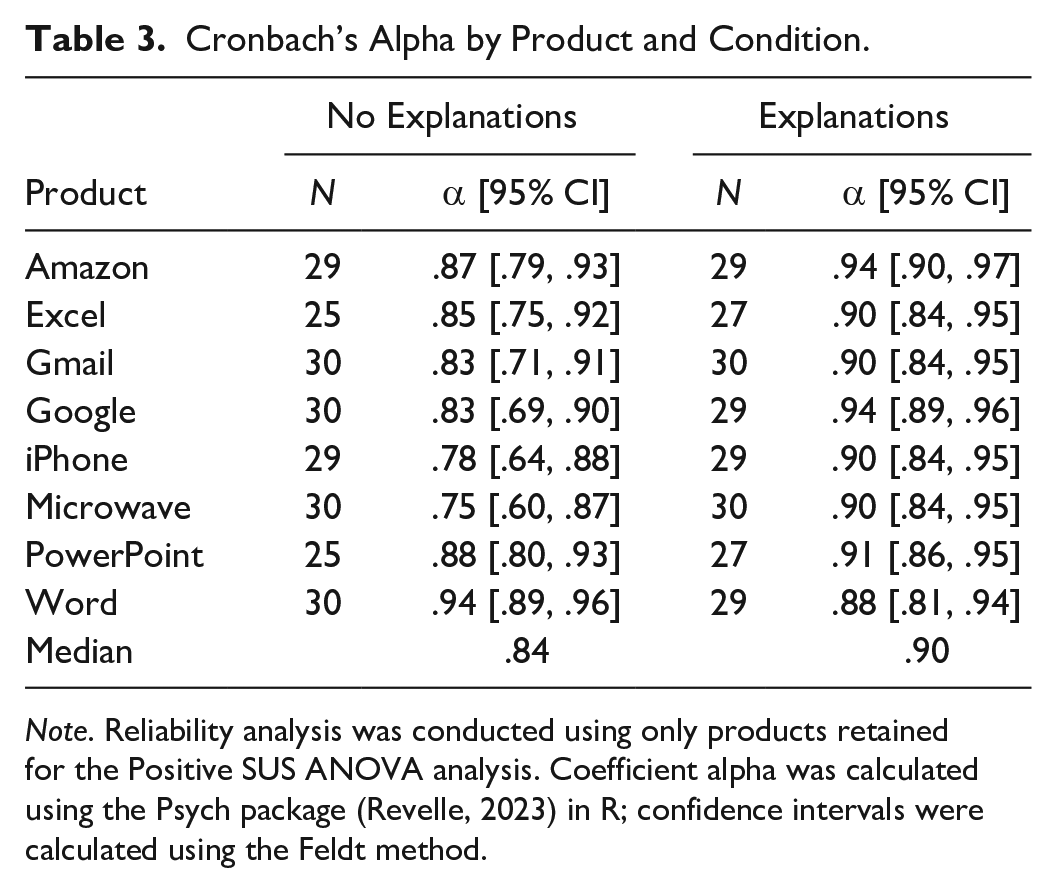

As can be seen in Table 3, for the No Explanations group, the reliability of the Positive SUS for all eight products met the generally recommended threshold of α = .70. Explaining the meaning of the items did not appear to negatively affect scale reliability, as Cronbach’s alpha exceeded the recommended threshold for the Explanations group’s Positive SUS scores as well. Interestingly, the reliability of the Positive SUS for the Explanations group was consistently higher, as evidenced by the confidence intervals, than that of the No Explanations group for all products but Word. This result may indicate that participants in the Explanations group had more similar response patterns to one another than participants in the No Explanations group.

Cronbach’s Alpha by Product and Condition.

Note. Reliability analysis was conducted using only products retained for the Positive SUS ANOVA. Coefficient alpha was calculated using the psych package (Revelle, 2023) in R; confidence intervals were calculated using the Feldt method.
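For readers unfamiliar with coefficient alpha, the sketch below shows the standard formula, α = k/(k − 1) × (1 − Σ item variances / variance of total scores), applied to a respondents-by-items table. It is an illustration only, not the analysis reported above (which used the psych package in R), and it does not compute the Feldt confidence intervals. The function name and the toy data are hypothetical.

```python
from statistics import variance


def cronbach_alpha(rows):
    """Coefficient alpha for a list of respondents, each a list of
    k item scores (all rows must have the same length)."""
    k = len(rows[0])
    if k < 2 or len(rows) < 2:
        raise ValueError("need at least 2 items and 2 respondents")
    if any(len(row) != k for row in rows):
        raise ValueError("all respondents must answer the same items")
    # Sample variance of each item across respondents.
    item_vars = [variance([row[i] for row in rows]) for i in range(k)]
    # Sample variance of each respondent's total score.
    total_var = variance([sum(row) for row in rows])
    if total_var == 0:
        raise ValueError("total scores have zero variance")
    return (k / (k - 1)) * (1 - sum(item_vars) / total_var)


# Hypothetical toy data: 3 respondents, 2 items.
toy = [[1, 2], [2, 1], [3, 3]]
print(cronbach_alpha(toy))
```

Alpha rises as respondents' item scores move together, which is why more similar response patterns within a group show up as higher reliability.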

Validity

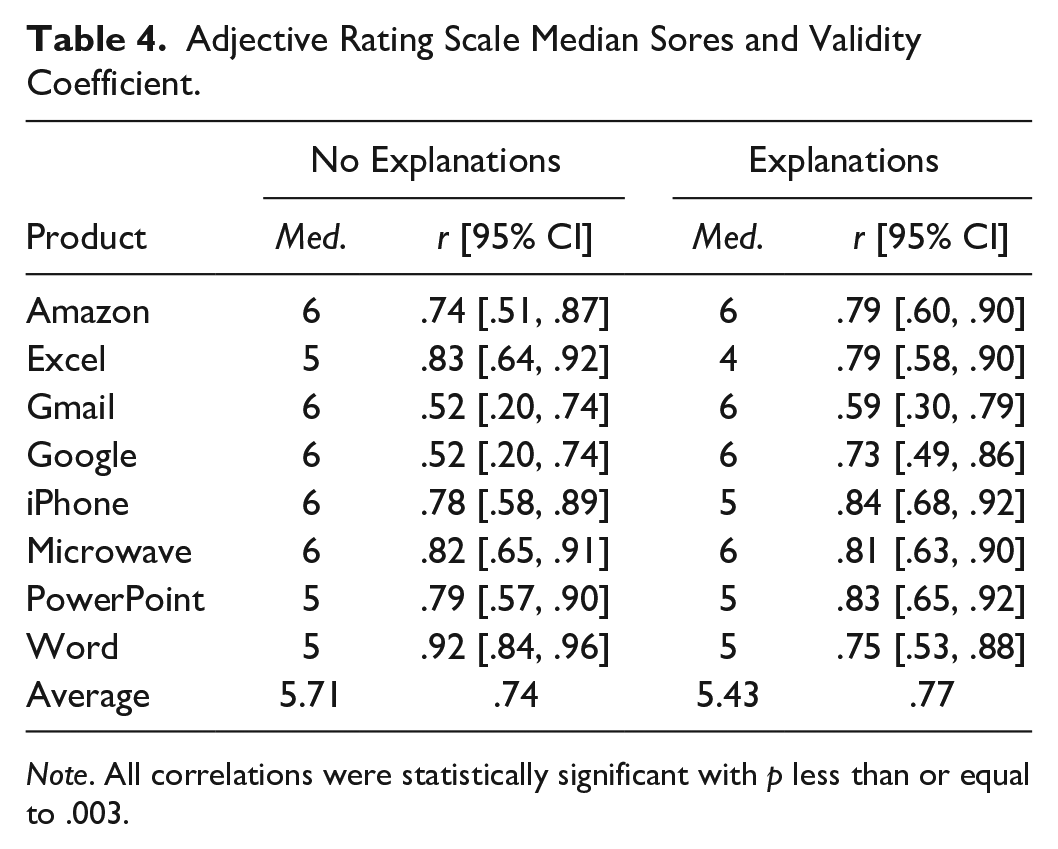

Looking at convergent validity, the relationship between Positive SUS scores and Adjective Rating scores (see Table 4) was statistically significant for all eight products and was of comparable strength for both groups (No Explanations average r = .74; Explanations average r = .77), suggesting explaining the items did not undermine the validity of the Positive SUS.

Adjective Rating Scale Median Scores and Validity Coefficient.

Note. All correlations were statistically significant with p less than or equal to .003.

Understandability

Participants in both groups indicated that they could understand the Positive SUS items. The small difference between the No Explanations group’s ratings (M = 4.40, SD = 0.56) and the Explanations group’s ratings (M = 4.27, SD = 0.52) indicates that the explanations did little to improve participants’ reading of the items, probably because the items were understandable without any aid.

Match of Meaning Between Explanations and Items

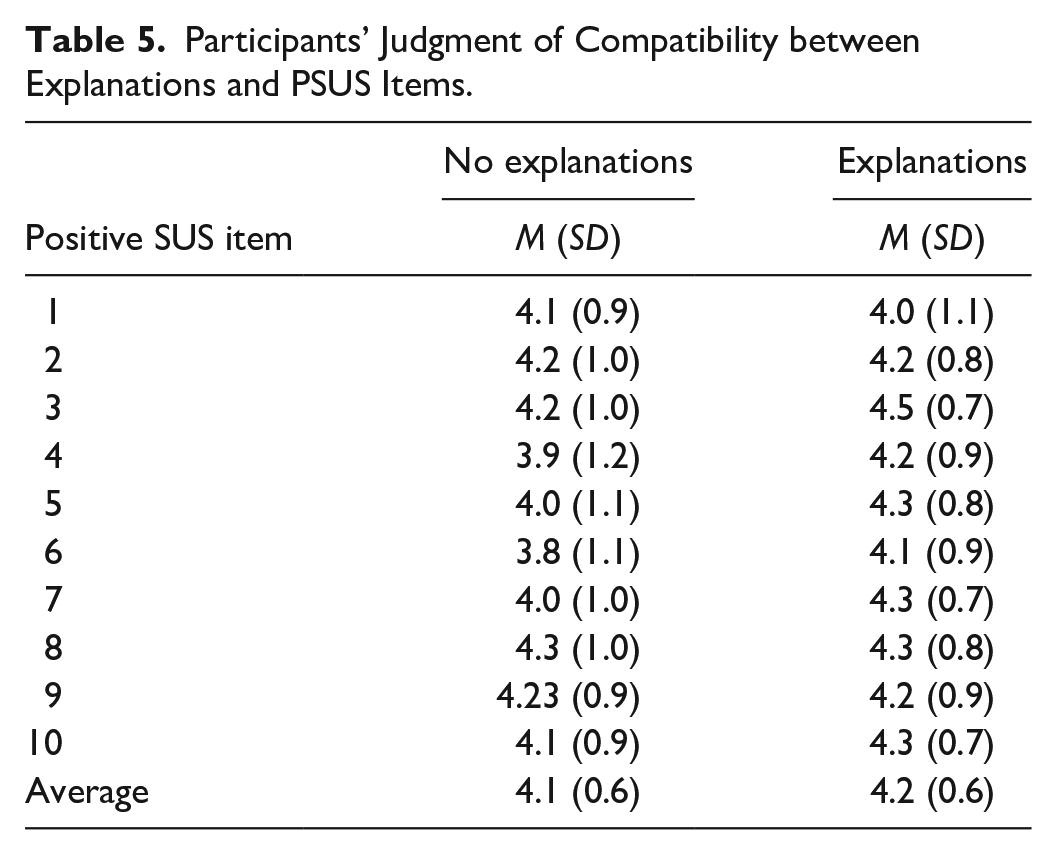

Participants reported that the explanations given to them matched their interpretation of the Positive SUS items. The Explanations group gave a higher rating on average (M = 4.24) than the No Explanations group (M = 4.08), but the difference was too small for us to test inferentially. This result suggests that the explanations were generally consistent with their coupled items. The ratings are summarized at the item level in Table 5.

Participants’ Judgment of Compatibility between Explanations and PSUS Items.

Helpfulness

Participants’ responses were middling in how helpful they found the explanations. There was a small difference between the prospective helpfulness ratings of the No Explanations group (M = 3.90, SD = 1.06, Median = 4) and the post-response helpfulness ratings of the Explanations group (M = 4.20, SD = 0.71, Median = 4). But when asked if they could have answered the questions without explanations, the Explanations group responded largely in the affirmative (M = 4.03, SD = 1.03, Median = 4), while the No Explanations group mostly agreed with the statement that they would have had an easier time responding if they had been given the explanations (M = 3.80, SD = 1.13, Median = 4).

Would Responses Have Changed?

When asked if they felt their responses would have been different had they been given the other version of the Positive SUS, participants seemed uncertain. The No Explanations group trended slightly more towards the middle of the response scale (M = 3.27, SD = 1.28, Median = 3), indicating more uncertainty than the Explanations group, which trended more towards indicating that their answers would have been the same had they not been given the additional information (M = 3.63, SD = 1.13, Median = 4).

Were Responses Just Random Noise?

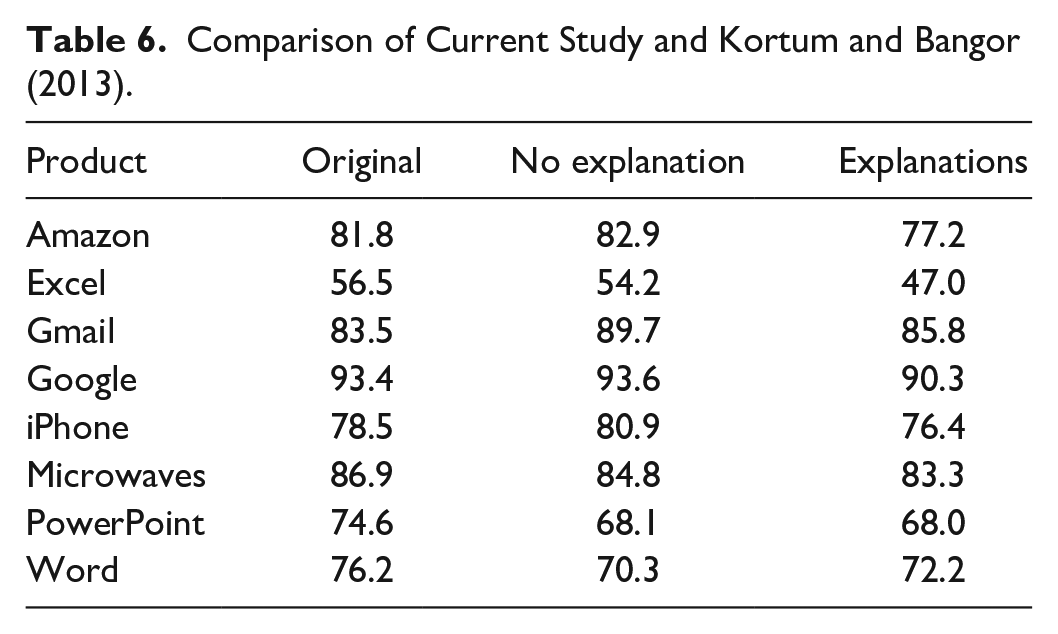

An alternative explanation for the results found in this paper is that participants just responded at the extremes of the scales or in a random manner. To at least partially address this alternative hypothesis, we compared the ratings from the current study to those from Kortum and Bangor (2013). As can be seen in Table 6, for the most part, the average ratings from the original study and the two experimental groups are not far apart from one another.

Comparison of Current Study and Kortum and Bangor (2013).

This was confirmed using Lin’s Concordance Correlation Coefficient (CCC), a measure of agreement between two continuous variables. The closer the CCC is to 1, the higher the agreement between the two sets of scores. Both the No Explanations (CCC = .93 [.76, .98]) and the Explanations groups (CCC = .91 [.73, .97]) were found to have high agreement with the scores from the original study.
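As an illustration of what the CCC captures, the sketch below implements the standard point estimate, 2·s_xy / (s_x² + s_y² + (mean_x − mean_y)²), using variances and covariance divided by n. It is not the code used for the analysis above and does not compute the confidence intervals; the function name and example values are hypothetical.

```python
def lins_ccc(x, y):
    """Point estimate of Lin's concordance correlation coefficient
    for two equal-length sequences of paired scores."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two paired sequences of length >= 2")
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    # Variances and covariance with divisor n.
    var_x = sum((a - mean_x) ** 2 for a in x) / n
    var_y = sum((b - mean_y) ** 2 for b in y) / n
    cov_xy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y)) / n
    return 2 * cov_xy / (var_x + var_y + (mean_x - mean_y) ** 2)


# Hypothetical scores: perfectly correlated but shifted by one point.
print(lins_ccc([1, 2, 3], [2, 3, 4]))
```

Unlike a Pearson correlation, the CCC is reduced by a constant shift between the two sets of scores, so a high value indicates that product averages were close in absolute terms and not merely ordered the same way.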

Discussion

Based on the results, we concluded that when explanations are consistent with questionnaire items, there do not seem to be many detectable downsides. Although we observed a statistically significant difference, a 5.19-point difference on average only amounts to about 2 Likert ticks’ difference as the Positive SUS has a scalar of 2.5 points from raw to scaled score. Under most circumstances, this is likely not a practical difference.

Psychometrically speaking, the scores collected from both groups behaved similarly from a validity standpoint, and all evidence suggests that the average scores from both groups are consistent with those reported by Kortum and Bangor (2013). We interpreted this as evidence against the possible explanation that both groups produced meaningless or random responses, bolstering the conclusion that both groups produced earnest ratings.

One interesting observation is that the internal reliability of the scales collected from the Explanations group was on average higher than that of the No Explanations group. This may indicate that the explanations enhanced respondents’ understanding of the items and helped to reduce error variance or led to a more standardized response pattern. This suggests an upside to adding clarifying statements to questionnaires. In this study, the increase in reliability is likely irrelevant given that reliability was also far above the acceptable threshold for the No Explanations group. But it may be an upside worth considering with smaller respondent samples, as such samples are more likely than larger samples to be subject to problems stemming from variability. We feel that the increase in internal reliability due to explanations should be replicated and explored with other questionnaires to further establish the existence and magnitude of this effect.

Although there were no discernible drawbacks to the explanations, there also may not be enough benefits to justify generating and adding them in the first place. Although both groups generally reported that they found the explanations helpful or potentially helpful, participants indicated more often than not that their responses to the Positive SUS would not have changed one way or another. Participants in the Explanations group reported they were confident they did not need the extra information to complete the Positive SUS, undermining the purpose of the explanations. That said, it may be that most participants do not need the assistance but the few who do need it benefit from the additional information.

Conclusions

The takeaway from this study is that test moderators probably should not worry that they may be unduly influencing usability questionnaire responses by providing additional information to help respondents understand the test content. The caveat is that the explanations need to be consistent with the meaning and intent of the items. How to determine the level of consistency or the appropriateness of that additional information is an entirely different question that requires further investigation.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.