Abstract

This research aims to study trust in Human-Autonomy Teaming (HAT). A multiple regression model was employed to explore potential predictors in tasks that might dynamically influence trust levels. By computing entropy across different system layers of a remotely piloted aerial vehicle system, we measured the influence of these dynamic predictors on trust. The findings suggest the presence of potential trust predictors in human-autonomy interactions as measured using entropy. Some layers had to be combined due to high multicollinearity, addressed through Principal Component Analysis (PCA). Further research is required for a more in-depth understanding of these components and their implications for trust dynamics.

Introduction

In the contemporary landscape of research and development, human-autonomy teaming has attracted substantial attention. This concept pertains to the operation of humans with intelligent and autonomous agents, working interdependently toward a common goal (Chen & Barnes, 2014; McNeese et al., 2018). For autonomous agents to be recognized as legitimate team members, they should embody a digital or cyber-physical entity that exhibits self-governance and can communicate with other teammates utilizing its own capabilities (Demir et al., 2016; Myers et al., 2019).

Effective human-autonomy teaming involves transforming autonomous systems to be better teammates, not merely making them more independent (Johnson et al., 2011). Autonomous systems must evolve to become trusted teammates (Abbass et al., 2018). Without human trust in their autonomous counterparts, situations could become risky or unpleasant, for example, drivers not trusting their autonomous cars (Hartwich et al., 2018).

Human-automation trust is defined as an individual's attitude that an agent will help them achieve their goals in situations characterized by uncertainty and vulnerability (J. D. Lee & See, 2004). To measure trust, researchers have employed subjective methods such as questionnaires, for example the Trust in Automation Scale (Lewis et al., 2018) and the Trust in Automated Systems Survey (Jian et al., 2000), as well as objective measures such as performance-based observations (Law & Scheutz, 2021).

Recent studies indicate that trust is not static but dynamic, evolving based on continuous interactions and experiences. Dynamic trust in automation can be influenced by various factors, ranging from individual characteristics like culture and age to system aspects like reliability and automation level (Yang et al., 2023). Researchers have sought to simulate real-world scenarios to better comprehend trust dynamics. For example, J. Lee and Moray (1992) utilized a simulated pasteurization task to track participants’ trust levels over multiple trials, while Manzey et al. (2012) analyzed human operator trust across both positive and negative interactions with an automation platform. Both studies identified significant trust adjustments based on system performance.

Previous researchers have used dynamical systems analytical methods, such as recurrence quantification analysis, to study trust. For example, Mitkidis et al. (2015) used multivariate recurrence quantification analysis to study the degree of synchrony in human-human teams, suggesting that interpersonal physiological synchrony may predict interpersonal trust. Grimm et al. (2018) applied Joint Recurrence Quantification Analysis (JRQA) to explore team communication in a remotely piloted aircraft system. In addition to JRQA, other methods based on information entropy (Shannon, 1949) have been applied; these measure the changing amount of information reorganization in a system over time. This paper employs the latter approach to investigate the potential relationship between trust and changing entropy in system states using predictive linear regression techniques.

The Current Study

While the dynamics of trust in human-autonomy teaming (HAT) have been acknowledged, the mechanisms influencing its variations over time remain underexplored. This study aims to address this gap by examining trust as a dynamic variable that responds to human-automation interactions. We investigate two research questions. RQ1: How do specific task events influence dynamic trust levels in HAT? RQ2: Can entropy calculations across system layers yield insights into trust dynamics? We propose two hypotheses. H1: Trust in automation will fluctuate in response to continuous interactions with the autonomous system. H2: Trust in teammates will be influenced by the type of teammate (AI/synthetic versus human).

This study measures system reorganization and its relationship to subjective trust, aiming to provide a more objective view of trust evolution based on system dynamics. The goal is to contribute to optimally designed human-autonomy interactions that enhance trust and collaboration.

Methods

A total of 42 individuals, aged between 18 and 31 (M = 20.5, SD = 2.9), participated in the research, recruited from the Georgia Institute of Technology and surrounding areas. Participants were organized into 21 teams, each comprising three members, with two members per team being participants and the other either an autonomous agent pilot or confederate experimenter pilot. The study was approved by the Georgia Tech Institutional Review Board.

The research employed the Cognitive Engineering Research on Team Tasks-Remotely Piloted Aircraft System-Synthetic Task Environment (CERTT-RPAS-STE; Cooke & Shope, 2005). The task environment comprised three separate stations, each equipped with two screens, a keyboard, and a mouse. The goal was to take clear pictures of ground target waypoints. The Air Vehicle Operator (AVO) was either an autonomous agent or a trained experimenter, who adjusted the altitude and airspeed of the remotely piloted aircraft (RPA) in response to requests from the Photographer (PLO) and data from the Decision Making/Planning and Control (DEMPC) role. The PLO adjusted camera settings and negotiated altitude and airspeed with the AVO to take clear photos of targets.

Participants were randomly assigned roles of either Decision Making/Planning and Control (DEMPC) or PLO. They were seated in different workstations, unable to see or hear other teammates. In the autonomous teammate condition, the pilot role was played by a synthetic teammate computer agent (Ball et al., 2010). The process began with an introduction and review of training materials, followed by a practice mission. Teams then carried out four missions, each lasting approximately 40 min. Participants completed three questionnaires: one before training, another after Mission 3, and a third after Mission 4. This study focuses on the first two questionnaires, as all participants transitioned to working with the human (experimenter) AVO at Mission 4.

Measures

Trust was assessed using two questionnaires. The first, originally developed by Mayer and Gavin (2005) and modified by Demir et al. (2021), used a 5-point Likert scale for 25 items. The second, the Checklist for Trust between People and Automation Scale (Jian et al., 2000), comprised 12 items on a 7-point Likert scale.

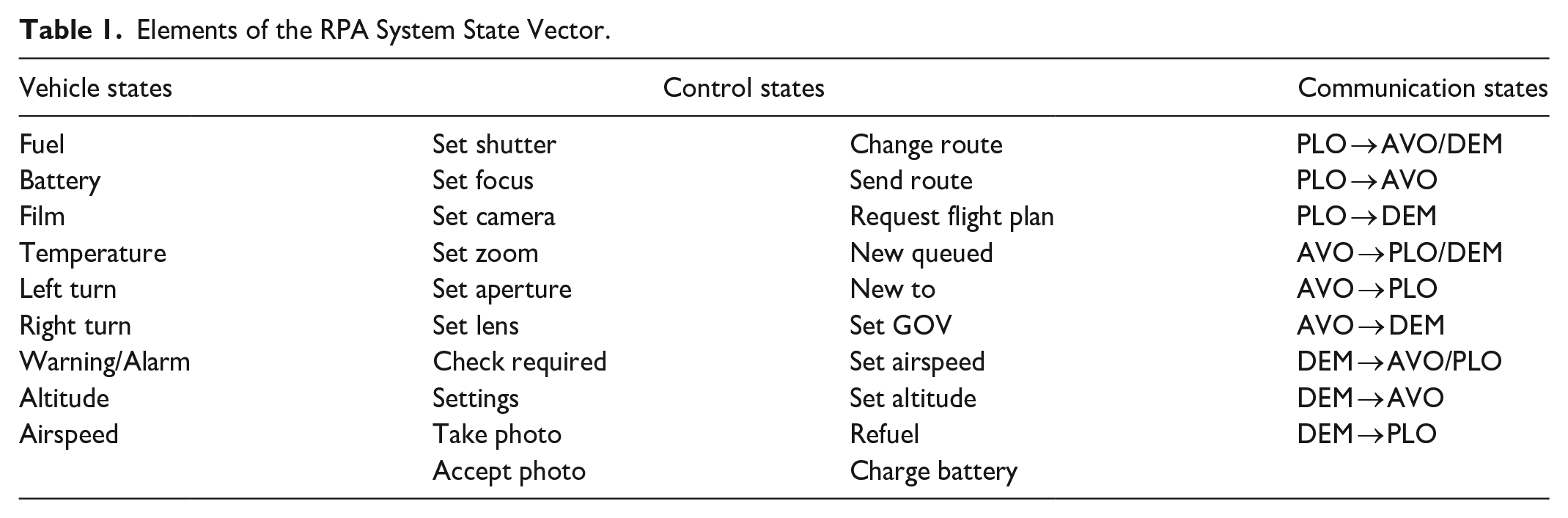

System Layers were analyzed to understand interactions and their potential relationships with trust dynamics. These layers, each holding a set of events or controls, included alarm warning events, turning events, change of altitude and airspeed, switch resources, AVO related events, PLO related events, DEMPC related events, AVO control, PLO control, and DEMPC control (Table 1).

Elements of the RPA System State Vector.

Results

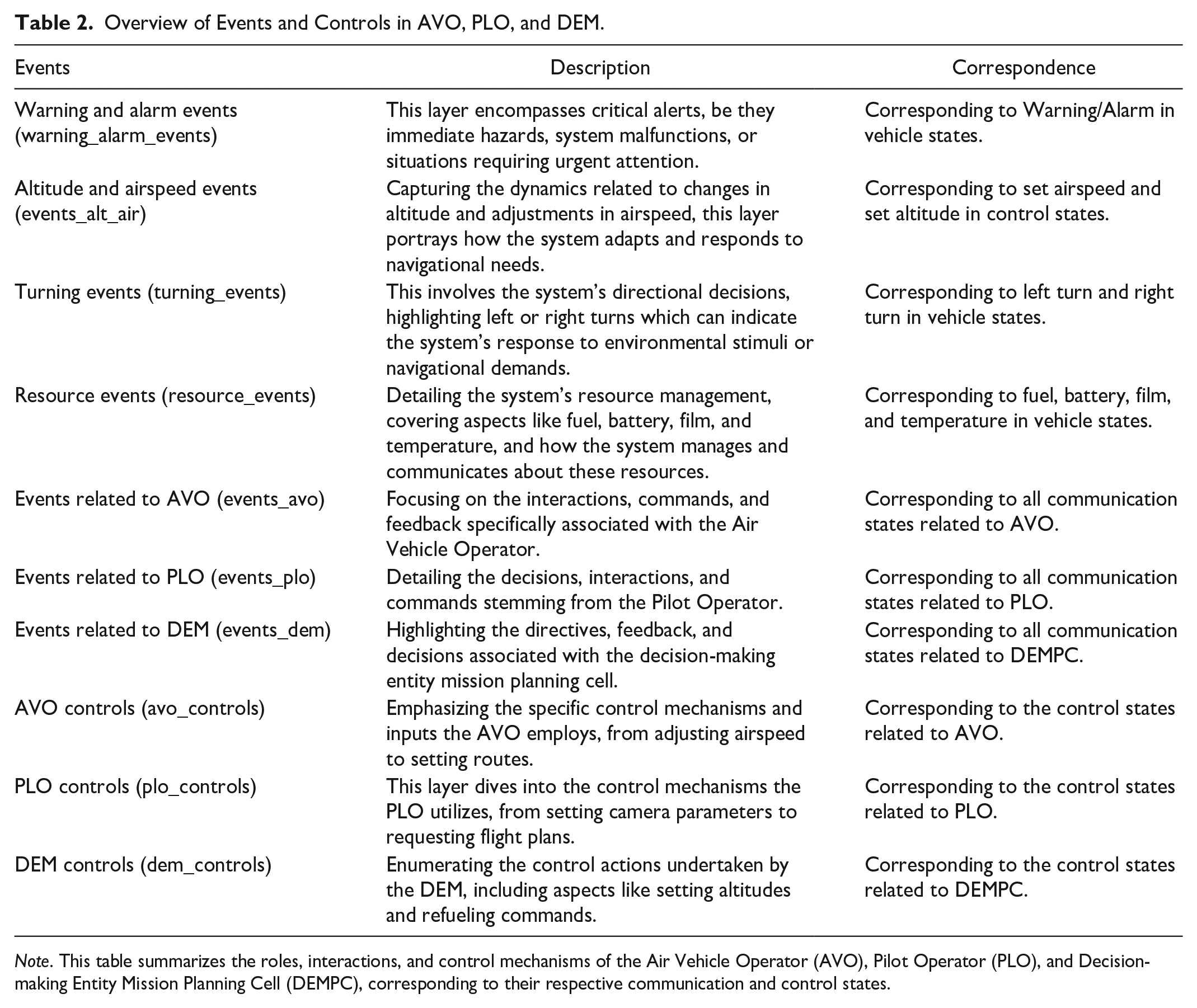

Entropy was calculated for ten event and control layers of the RPA task (Table 2): alarm warning events, turning events, change of altitude and airspeed, switch resources, AVO related events, PLO related events, DEMPC related events, AVO control, PLO control, and DEMPC control. The calculations covered Mission 1, Mission 2, and Mission 3, coinciding with the trust survey recordings. (For Team 9, the mission 4 value is the average score, as the original data were missing.)
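To make the entropy measure concrete, the following is a minimal sketch of Shannon entropy computed over a discrete event sequence, both for the whole sequence and over sliding windows. It assumes each layer can be coded as a sequence of discrete states; the `turning` data, the window length, and the function names are hypothetical illustrations, not the coding scheme or parameters used in this study.

```python
import math
from collections import Counter


def shannon_entropy(states):
    """Shannon entropy (in bits) of a sequence of discrete states."""
    n = len(states)
    if n == 0:
        return 0.0
    counts = Counter(states)
    # H = -sum(p * log2(p)) over the observed state probabilities
    return -sum((c / n) * math.log2(c / n) for c in counts.values())


def windowed_entropy(states, window):
    """Entropy of each sliding window of fixed length over the sequence."""
    return [
        shannon_entropy(states[i:i + window])
        for i in range(len(states) - window + 1)
    ]


# Hypothetical binary layer: 1 = a turning event occurred in that time step.
turning = [0, 0, 0, 0, 1, 0, 1, 1, 0, 1, 0, 0]

print(shannon_entropy(turning))             # entropy of the whole sequence
print(windowed_entropy(turning, window=4))  # how entropy changes over time
```

A constant window yields zero entropy, while a window with evenly mixed states yields the maximum for that alphabet, which is the sense in which rising entropy reflects more reorganization in a layer.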

Overview of Events and Controls in AVO, PLO, and DEMPC.

Note. This table summarizes the roles, interactions, and control mechanisms of the Air Vehicle Operator (AVO), Photographer (PLO), and Decision Making/Planning and Control (DEMPC) roles, corresponding to their respective communication and control states.

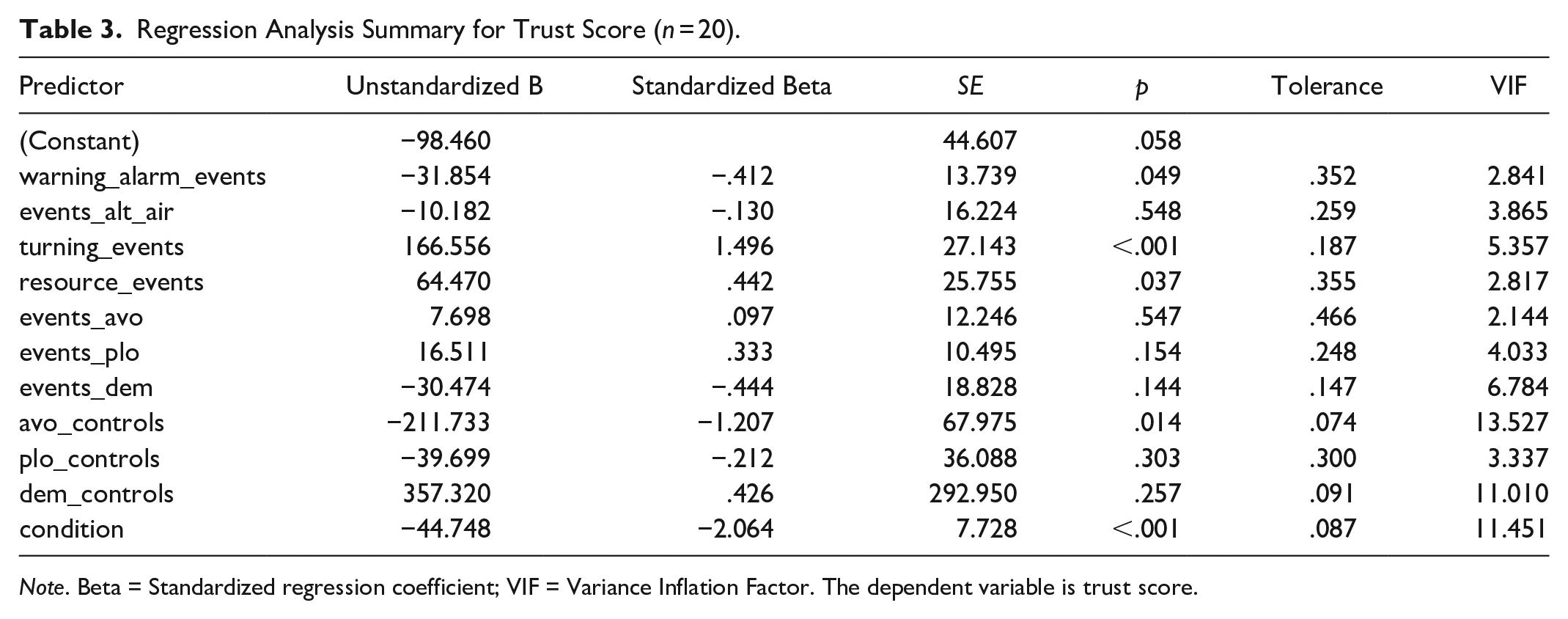

Cook’s distance evaluation found Team 5 to have a Cook’s distance in the 95th percentile, indicating a highly influential observation. Team 5 was therefore removed from subsequent analyses. The results indicated that approximately 91.1% of the variance in the dependent variable can be explained by the model (R² = .911, F (11, 8) = 7.470, p = .004). After adjusting for the number of predictors, the adjusted R² was .789, indicating that the model accounts for approximately 78.9% of the variance in trust change when the number of predictors is taken into account. Specifically, “turning_events” (β = 166.556, p < .001) and “resource_events” (β = 64.470, p = .037) were significant positive predictors of trust score, with “turning_events” showing a particularly strong positive association. In contrast, “warning_alarm_events” (β = −31.854, p = .049) and “avo_controls” (β = −211.733, p = .014) were negatively associated with the dependent variable, with “avo_controls” showing a notably larger negative effect. The distinction between synthetic and human teammates also significantly predicted outcomes (β = −44.748, p < .001), indicating that being in a team with the synthetic teammate was associated with a decrease in the trust score (see Table 2).
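The diagnostics in this paragraph can be illustrated with a short NumPy sketch of ordinary least squares that returns R², adjusted R², and Cook's distance per observation. The function names and the toy data are hypothetical, and the analysis software used in the study is not stated, so this should be read as a generic textbook formulation rather than the authors' procedure.

```python
import numpy as np


def adjusted_r2_from_summary(r2, n, k):
    """Adjusted R^2 from R^2, sample size n, and number of predictors k."""
    return 1.0 - (1.0 - r2) * (n - 1) / (n - k - 1)


def ols_diagnostics(X, y):
    """Fit OLS with an intercept; return R^2, adjusted R^2, Cook's distances."""
    n, k = X.shape
    A = np.column_stack([np.ones(n), X])       # design matrix with intercept
    beta, *_ = np.linalg.lstsq(A, y, rcond=None)
    resid = y - A @ beta
    p = k + 1                                  # number of estimated parameters
    mse = resid @ resid / (n - p)
    # Leverage: diagonal of the hat matrix H = A @ pinv(A)
    h = np.einsum("ij,ji->i", A, np.linalg.pinv(A))
    cooks = resid**2 / (p * mse) * h / (1.0 - h) ** 2
    r2 = 1.0 - resid @ resid / np.sum((y - y.mean()) ** 2)
    return r2, adjusted_r2_from_summary(r2, n, k), cooks


# Hypothetical toy data: five points on a line plus one influential outlier.
X_demo = np.array([[0.0], [1.0], [2.0], [3.0], [4.0], [10.0]])
y_demo = np.array([0.0, 1.0, 2.0, 3.0, 4.0, -10.0])

r2, adj_r2, cooks = ols_diagnostics(X_demo, y_demo)
print(int(np.argmax(cooks)))  # index of the most influential observation

# Reported values from the text: R^2 = .911, n = 20, 11 predictors.
print(round(adjusted_r2_from_summary(0.911, n=20, k=11), 3))
```

With the reported R² of .911, 20 teams, and 11 predictors, the adjustment formula gives roughly .789, which agrees with the adjusted R² stated above.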

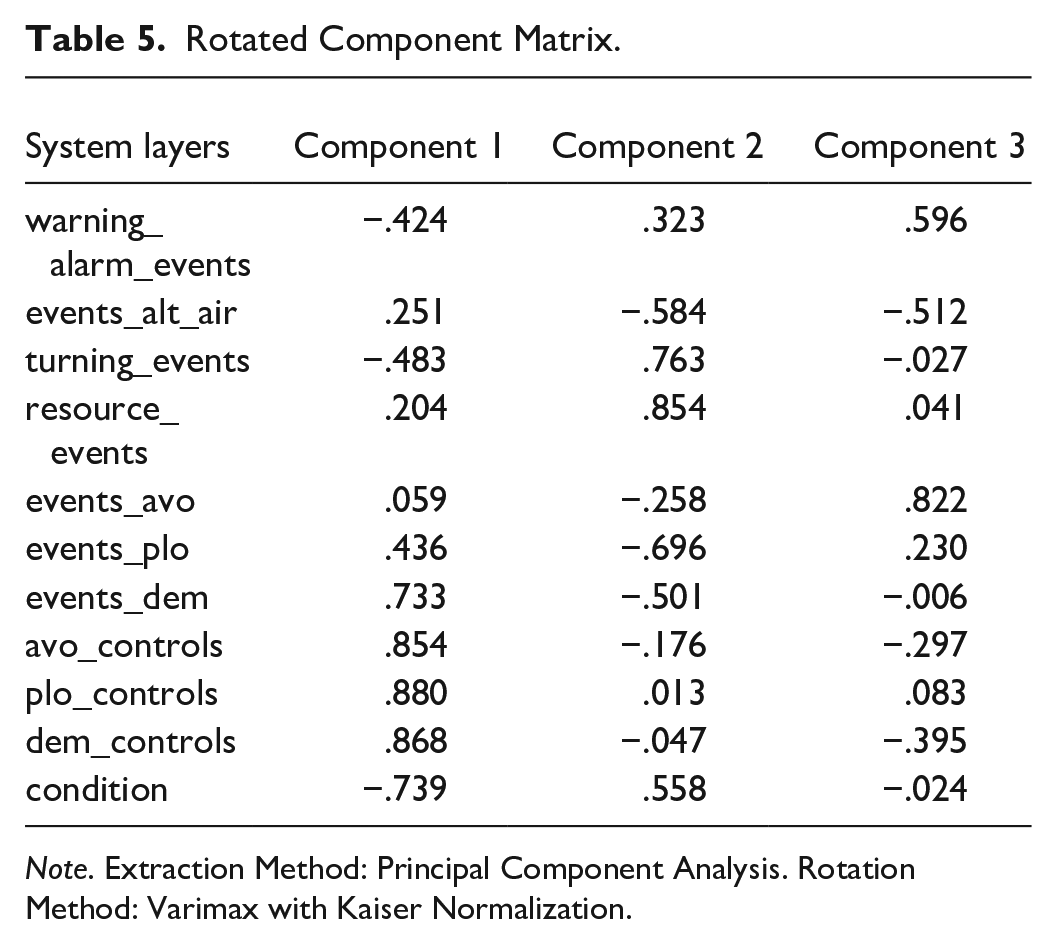

Collinearity diagnostics revealed that several variables exhibited VIF values above the commonly used threshold of 5, suggesting multicollinearity. Specifically, “avo_controls” (VIF = 13.527) and “dem_controls” (VIF = 11.010) had elevated VIF values, with the types of teammates (Condition) giving a VIF of 11.451. These findings suggest that the variance of the coefficients for these predictors could be inflated due to multicollinearity. To mitigate multicollinearity, a Principal Component Analysis (PCA) with Varimax rotation was conducted. Three components with eigenvalues over 1 were retained, explaining 77.79% of cumulative variance (49.57%, 16.58%, and 11.64% respectively). The rotated component matrix is shown in Table 3.
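The two steps described here, screening predictors with VIF and then retaining components whose eigenvalues exceed 1, can be sketched as follows. This is a minimal illustration on synthetic data with hypothetical function names; it covers the eigenvalue criterion only and does not implement the Varimax rotation reported above.

```python
import numpy as np


def variance_inflation_factors(X):
    """VIF_j = 1 / (1 - R_j^2), regressing column j on all other columns."""
    X = np.asarray(X, dtype=float)
    n, k = X.shape
    vifs = []
    for j in range(k):
        target = X[:, j]
        others = np.column_stack([np.ones(n), np.delete(X, j, axis=1)])
        coef, *_ = np.linalg.lstsq(others, target, rcond=None)
        resid = target - others @ coef
        r2 = 1.0 - resid @ resid / np.sum((target - target.mean()) ** 2)
        vifs.append(1.0 / (1.0 - r2))  # diverges under perfect collinearity
    return np.array(vifs)


def kaiser_retained(X):
    """Eigenvalues of the correlation matrix, variance shares, and the
    number of components with eigenvalue > 1 (Kaiser criterion)."""
    corr = np.corrcoef(X, rowvar=False)
    eigvals = np.linalg.eigvalsh(corr)[::-1]   # sorted in descending order
    explained = eigvals / eigvals.sum()
    return eigvals, explained, int(np.sum(eigvals > 1.0))


# Synthetic predictors: c is nearly a copy of a, b is independent.
rng = np.random.default_rng(0)
a = rng.normal(size=200)
b = rng.normal(size=200)
c = a + 0.1 * rng.normal(size=200)
X_demo = np.column_stack([a, b, c])

print(variance_inflation_factors(X_demo))  # a and c inflate, b does not
print(kaiser_retained(X_demo))
```

In this toy case the near-duplicate columns produce VIF values far above 5 while the independent column stays near 1, mirroring the pattern that motivated combining layers through PCA.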

Regression Analysis Summary for Trust Score (n = 20).

Note. Beta = Standardized regression coefficient; VIF = Variance Inflation Factor. The dependent variable is trust score.

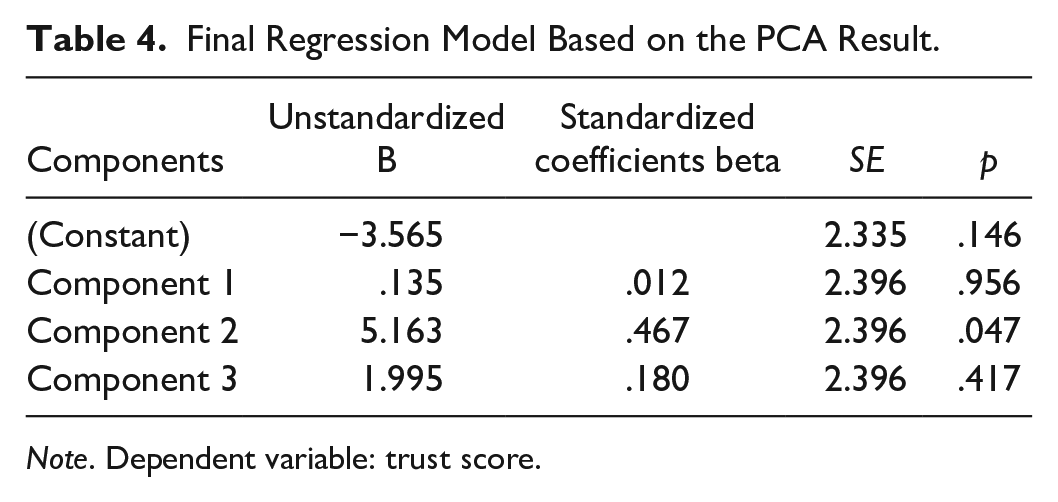

After reducing the dimensionality of the predictors, a final multiple regression analysis was conducted to predict the trust score using the three principal components derived from the initial set of variables. The overall model was not significant (R² = .250, F (3, 16) = 1.780, p = .191), but component 2 reached statistical significance (β = 5.163, p = .047) (see Table 4). According to the component matrix, warning alarm events (.323), turning events (.763), resource events (.854), and condition (synthetic teammates vs human teammates) (.558) positively loaded on Component 2. This finding echoes the results of the initial regression model (see Table 5).
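As a consistency check on the reported fit, the overall F statistic can be recovered from R², the sample size (n = 20), and the number of predictors (k = 3). The identity below is the standard one for a linear model with an intercept; it is offered as a worked check, not as a computation described in this study.

$$
F = \frac{R^2 / k}{(1 - R^2)/(n - k - 1)} = \frac{.250 / 3}{.750 / 16} \approx 1.78
$$

The small gap from the reported value of 1.780 is in line with what one would expect from R² being rounded to three decimals.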

Final Regression Model Based on the PCA Result.

Note. Dependent variable: trust score.

Rotated Component Matrix.

Note. Extraction Method: Principal Component Analysis. Rotation Method: Varimax with Kaiser Normalization.

Discussion

This research explored the dynamics of trust in HAT, focusing on identifying potential correlations between dynamical activities and interactions with autonomous systems and trust levels.

Hypothesis 1 proposed that trust in automation fluctuates in response to interactions with the autonomous system. The final regression model, using principal components derived from PCA, addressed various interaction metrics. Although the overall model was not significant, Component 2 reached statistical significance, indicating a potential relationship between trust and vehicle states, specifically warning alarm events, turning events, and resource events. Regarding Hypothesis 2 (trust in teammates will be influenced by the type of teammate), the teammate condition also loaded on Component 2, suggesting that trust varied with the type of teammate. This is consistent with the notion of trust as a dynamic and context-dependent variable in HAT systems.

These findings align with emerging trends in human-automation interaction research, suggesting that machine data, complemented by human data, could offer a more comprehensive understanding of trust dynamics (Yang et al., 2023). The study offers tentative empirical support for the view that trust dynamics in HAT are influenced by the nature of interactions and the context in which they occur. The results also provide insights into humans’ perceptions of their teammates as human or AI, echoing findings by Feng et al. (2019) and Merritt and McGee (2012). There may be a trend where, in high-risk environments, humans demonstrate greater trust in perceived AI entities than in human counterparts.

Limitations of the study include the modest sample size and the sole focus on relation-based trust measurement tasks. Future research should explore how continuous interactions are associated with changes in trust, that is, whether trust strengthens, decays, or stabilizes. While this study has uncovered relationships between trust levels and human-AI team interactions, it did not delve deeply into the dynamic nature and multidimensional characteristics of trust. Future research should explore the nuances of incremental trust changes and possibly employ more frequent and diverse trust measurement methodologies.

In conclusion, the findings provide partial evidence supporting significant fluctuations in trust in automation based on continuous interactions. Understanding these dynamic changes is important for designing AI systems that are intelligent, reliable, and capable of adapting to user needs and expectations.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.