Abstract

The current study uses a mixed-method approach to investigate the efficacy and affordances of three modern collaborative technologies (Slack, Google Hangouts, and Microsoft Teams). Distributed teams of three participants collaborated to plan a simulated trip with various requirements using one of the three technologies. The study elicited team performance, perceived platform usability, and qualitative focus group data. Quantitative results show that Microsoft Teams resulted in significantly worse team performance than Slack and Google Hangouts; additionally, only for Slack was team performance positively associated with perceived usability and team members’ perceptions of team effectiveness. Two themes were identified regarding how these technologies afforded and shaped team practices and social interactions: (a) technology leads to loosely defined (or no) leadership, and (b) technology promotes a sense of team and trust in ad hoc teams performing fast-paced and time-sensitive tasks.

Introduction

In response to the global COVID-19 pandemic, the rapid acceleration toward virtual, distributed team environments has become not just a temporary adjustment but a lasting transformation in workplace dynamics, with distributed teams becoming an organizational norm (Smite et al., 2023). While many laud this shift as a positive step for future workers, the complexity and variety of the collaborative technologies leveraged can also complicate how we live and work together. This evolution necessitates an in-depth empirical investigation of how different tools impact team performance and perceived effectiveness and how the benefits and limitations of various design features drive such differences. While there is a large body of existing work that examines the utilization and interaction behaviors associated with collaborative technologies, little research attends to the actual empirical team performance and rich teaming experiences when developing many of these technologies.

In fact, computer-mediated distributed teamwork has long been a central research agenda in the field of human factors and has subsequently become critical to conducting work in various applied settings (Erez et al., 2013; Simão Filho et al., 2016). Distributed teams can swiftly respond to multiple issues and provide a variety of benefits, including accommodating company downsizing and the geographic dispersion of essential employees, granting employees greater flexibility (Townsend et al., 1998), and offsetting high travel costs and tight schedules (Raisinghani et al., 2010). The ongoing COVID-19 global pandemic has also made such benefits more evident, as about half of employed individuals switched to working from home (Brynjolfsson et al., 2020). However, a slew of new issues has arisen from such increased use: which distributed teaming tool to choose, how to use it effectively, and how that tool affects team performance and development. As the current global pandemic has created a more urgent demand for distributed remote work and for applications that meet users’ demands, the current study seeks to empirically compare three modern collaborative technologies (Slack, Google Hangouts, Microsoft Teams) for efficacy in usability and performance in a simulated task for ad hoc teams.

Specifically, the current study uses a mixed-method approach to examine how the three above-mentioned mainstream collaborative technologies affect team performance, perceived effectiveness, and interactions during an everyday collaborative team task. Three research questions guide this study: (a) How do team performance and perceived team effectiveness vary across the three platforms? (b) What are the perceived benefits and limitations specific to each of these collaborative technologies, and how does usability vary across platforms? (c) What are the perceived common benefits and limitations of these collaborative technologies, regardless of the specific platform, and how are these manifested in teamwork interactions?

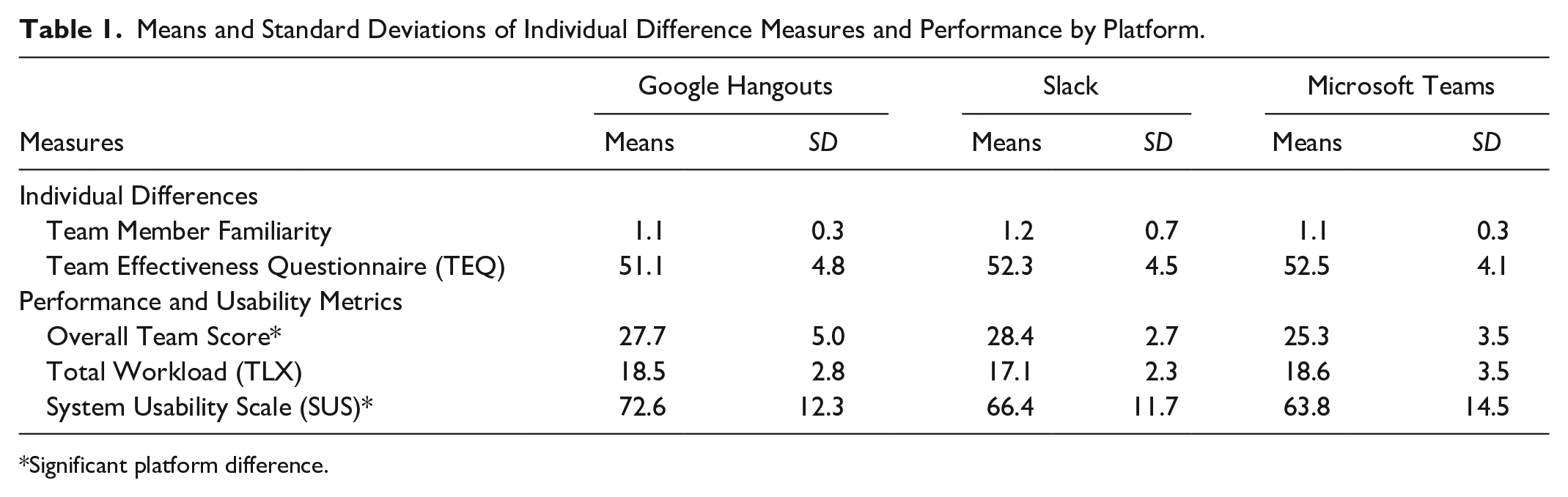

Table 1. Means and Standard Deviations of Individual Difference Measures and Performance by Platform.

Significant platform difference.

Studying the three above-mentioned collaborative technologies with real human teams contributes to understanding computer-mediated collaborative human behavior for future work in several ways. First, we offer one of the first empirical studies to compare team performance, perceived team effectiveness, and interactions across multiple mainstream collaborative technologies, extending current literature on computer-mediated teamwork in human factors. Second, we identify how these technologies afford and shape team practices and social interactions and highlight how some design features are beneficial or disadvantageous for distributed teamwork. Third, this study contributes to a better understanding of the intertwined relationship between technology, leadership, and trust in a distributed setting.

Background

Studies of distributed teamwork and collaborative technologies are a long-standing hallmark of communities interested in human behavior and technology, such as human factors. Various studies have shown that collaborative technologies often enhance the effectiveness of co-located and distributed teams. For example, Slack was found to be very useful in educational situations when global work is required of students (White et al., 2017) and was also a helpful tool for research collaboration within and across lab spaces (Gofine & Clark, 2017). However, there is a dearth of direct, controlled empirical comparisons of these technologies that use a mixed-methods approach to identify quantitative differences and contextualize those differences through qualitative experiences. The following review of the literature highlights how this gap affects distributed teamwork research and collaborative technology design.

Past literature has largely ignored distributed remote work within ad hoc teams. An ad hoc team is a particular kind of team that comes together for a limited period to complete a joint task and then disbands once the task is finished (Calefato & Lanubile, 2010). Such teams have a significant place in applied settings as they are frequently used for business collaborations (Calefato & Lanubile, 2010), software engineering (Cherry & Robillard, 2008), and other fast-paced teaming environments (Dustdar, 2004). Long-term teams are the converse of ad hoc teams: their members may have worked together in the past, typically work together for more extended periods, and may expect to work together again in the future (Haines, 2014). Ad hoc teams have a specific advantage when working remotely, as remote work allows them to come together and complete work quickly. Unfortunately, this often means ad hoc teams are limited to text-based communication only (Calefato & Lanubile, 2010; often as a matter of convenience), and, as a consequence, the choice of collaborative tool is critical to their development and performance (Calefato & Lanubile, 2010). Even though the prevalence of distributed ad hoc teams has risen rapidly throughout the pandemic, many details regarding collaborative technology usage and performance remain unknown.

Methods

Participants

One hundred eleven undergraduate students were recruited from a large university in the United States. Participants were offered course credit in exchange for their participation and were recruited from an online pool. Participants were formed into triads, resulting in 37 teams (seven teams were dropped from analysis as they served as pilot studies).

Ad Hoc Teamwork Scenario

The task required team members to work independently and coordinate with each other but did not require specialized knowledge, given our participant population of college students. Teams were asked to create an itinerary for a week-long business trip to Los Angeles for a work-related conference. The itinerary required three main elements: flight information for each team member, hotel accommodations, and restaurant reservation details for one evening. Specific constraints were placed based on individual team members’ preferences, resulting in a total of 18 constraints across flights, accommodations, and restaurants. The itinerary requirements of the task were explained to all participants, who each received two restrictions for each trip element, or 6 of the 18 restrictions in total. Therefore, team members were required to communicate with each other to determine whether trip details were satisfactory.
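The arithmetic of this design can be illustrated with a minimal sketch. The restriction labels below are hypothetical placeholders rather than the constraints used in the study; only the counts (three members, three trip elements, two restrictions per member per element) come from the description above.

```python
from itertools import product

TRIP_ELEMENTS = ("flight", "accommodations", "restaurant")
TEAM_MEMBERS = ("User 1", "User 2", "User 3")
RESTRICTIONS_PER_ELEMENT = 2  # per member, per trip element


def assign_restrictions():
    """Return {member: [restriction labels]} using placeholder labels."""
    assignments = {member: [] for member in TEAM_MEMBERS}
    for member, element in product(TEAM_MEMBERS, TRIP_ELEMENTS):
        for k in range(1, RESTRICTIONS_PER_ELEMENT + 1):
            # Hypothetical label; the actual constraint wording is not reproduced here.
            assignments[member].append(f"{element} restriction {k} for {member}")
    return assignments


assignments = assign_restrictions()
per_member = {member: len(items) for member, items in assignments.items()}
total = sum(per_member.values())

print(per_member)  # each member holds 6 restrictions
print(total)       # 18 restrictions across the team
```

Because each member holds only one third of the restrictions, no single participant can verify the itinerary alone, which is what makes communication necessary in this task.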

Design and Procedure

Teams consisting of three participants were randomly assigned to one of three possible platform conditions. In each three-person team, team members were randomly assigned identifiers to refer to each other in team communications (User 1, User 2, or User 3). Participants gave their informed consent before beginning the study. The pre-task survey collected demographics, familiarity with the other participants, and familiarity with each of the three platforms. Next, participants were asked to use their collaborative tool’s features as the only method of communication with other team members. The teams were then given the task instructions and requirements but were not told how to assign tasks to team members, only that each team member should share their unique restrictions with other team members. Finally, teams were told that their score was based upon how closely and accurately their itinerary met all restrictions. After any questions were answered, participants were instructed to watch a training video on the collaborative technology they were about to use. Once the video finished, participants’ screen recording was started, and they were told to begin the team task. After 10 of the allotted 15 min had passed, participants were given a warning and instructed to spend the remaining time communicating their final plan and creating the final itinerary deliverable. After 15 min, a researcher instructed the participants to conclude. Participants then completed the workload measure, Team Effectiveness Questionnaire, and the SUS. Finally, the two triad teams were separated into different rooms to complete a focus group session.

Measures

Individual Perceived Workload

The National Aeronautics and Space Administration Task Load Index (Hart & Staveland, 1988) was used to measure participants’ perceived workload of the team task. Participants indicated their perceived workload by responding to six factors of workload on a five-point Likert scale. Responses for each question were summed, with higher scale scores indicating higher perceived workload.

Individual Perceived System Usability

Participants rated the usability of their platform using the System Usability Scale (SUS; Brooke, 1996). Participants rated their agreement with ten usability statements on a 5-point Likert scale, anchored by “Strongly disagree” and “Strongly agree.” Responses for each item were summed and re-scaled from a 40-point scale to a 100-point scale, making the lowest possible score 0 and the highest 100, with greater scores indicating higher perceived usability.
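The rescaling from 40 to 100 points can be sketched as follows. This is a minimal illustration that assumes the conventional SUS scoring, in which odd-numbered (positively worded) items contribute the response minus 1 and even-numbered (negatively worded) items contribute 5 minus the response; the function name `sus_score` is our own and is not part of any study materials.

```python
def sus_score(responses):
    """Convert ten SUS responses (each coded 1-5) to a 0-100 score.

    Assumes conventional SUS scoring: odd items are positively worded,
    even items are negatively worded and therefore reverse-scored.
    """
    if len(responses) != 10:
        raise ValueError("SUS expects exactly ten responses")
    if any(r not in (1, 2, 3, 4, 5) for r in responses):
        raise ValueError("each response must be an integer from 1 to 5")

    total = 0
    for item_number, response in enumerate(responses, start=1):
        if item_number % 2 == 1:
            total += response - 1   # odd item: higher agreement is better
        else:
            total += 5 - response   # even item: higher agreement is worse
    return total * 2.5              # rescale the 0-40 sum to 0-100


print(sus_score([5, 1] * 5))  # best possible pattern
print(sus_score([3] * 10))    # neutral on every item
```

Each item contributes between 0 and 4 points, so the raw sum spans 0 to 40 and the factor of 2.5 maps it onto the 0 to 100 range described above.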

Team Performance

Team performance was measured according to performance on the experimental task. Points were granted not only for providing the required information but also for meeting the various constraints. This distinction was made by scoring each answer on whether it was provided and whether it was accurate, with each of these conditions earning a single point. Therefore, a correct answer earned two points, an incorrect answer one point, and a missing response no points. In addition, each participant’s screen recording was observed to confirm the itinerary’s information (e.g., confirming the cost of an airline ticket). As a result, team performance scores could range from 0 to 33 points, with higher points indicating better team performance.
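The per-item scoring rule can be sketched as follows. The item names and answers are hypothetical, and the sketch does not attempt to reproduce the full list of scored items; it only illustrates the rule that a provided answer earns one point and an accurate answer earns a second.

```python
from typing import Optional


def item_points(answer: Optional[str], meets_constraints: bool) -> int:
    """Score one itinerary item: 0 if missing, 1 if provided, 2 if also accurate."""
    if answer is None or not answer.strip():
        return 0  # missing response
    return 2 if meets_constraints else 1


def team_score(items) -> int:
    """Sum item points over (answer, meets_constraints) pairs."""
    return sum(item_points(answer, ok) for answer, ok in items)


# Hypothetical itinerary with three scored items.
example_itinerary = [
    ("Nonstop flight arriving Sunday", True),   # provided and accurate
    ("Hotel near the conference", False),       # provided but violates a constraint
    (None, False),                              # missing
]

print(team_score(example_itinerary))  # 2 + 1 + 0 = 3
```

Separating the "provided" point from the "accurate" point lets the score distinguish teams that ran out of time from teams that completed the itinerary but overlooked a teammate's restriction.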

Team Effectiveness

Participants reported their perceptions of the effectiveness of their team’s task performance by completing the modified Team Effectiveness Scale (Rentsch & Klimoski, 2001). Participants responded to eight statements using a five-point Likert scale anchored by “Strongly disagree” and “Strongly agree.” Items were summed, yielding scores from 8 to 40, with higher scale scores indicating greater perceived team effectiveness.

Team Focus Group

An in-depth qualitative analysis was used to investigate the focus group data (Strauss, 1987). Its goal is to explore how individuals construct their personal perceptions, understandings, and accounts of the felt experiences in such teamwork (Smith & Osborn, 2003). Two authors were involved in the four-step qualitative analysis: (a) the transcriptions of participants’ responses were combed through to acquire a sense of the overall summary of their experiences of the collaborative technologies and mediated teamwork; (b) themes and sub-themes regarding benefits/weaknesses of each technology and how its affordance affected teamwork were identified; (c) cases and examples of themes and sub-themes were grouped to generate a detailed description in an iterative process; (d) themes and sub-themes were synthesized to summarize fundamental aspects of participants’ experiences of computer-mediated teamwork.

Results

The primary dependent variables of interest were team performance (the computed team score), perceived system usability, and overall task workload to answer multiple questions of this research (such as the performance and usability differences between platforms). In addition, we were also interested in the relationship between team-related individual differences (prior team experience, general perceptions of teams, and participant familiarity) and how they might impact team performance, usability, and workload. All dependent variables are illustrated in Table 1.

All participants in our study had very low familiarity with other members of their team. The independent variable was the platform used (Hangouts, Slack, and Teams). The dependent variables were analyzed with multivariate analysis of variance (MANOVA) procedure. The multivariate main effect of technology used was significant, Wilks’ λ = 0.72, F(12, 162) = 2.42, p < .05, $\eta_p^2$ = .15, indicating a significant effect of the platform on some of the dependent variables. Univariate analyses showed significant group (platform) differences in team score, F(2, 87) = 5.29, p < .05, $\eta_p^2$ = .11, and system usability scale, F(2, 87) = 3.69, p < .05, $\eta_p^2$ = .08.
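As a consistency check, the reported partial eta squared values can be recovered from the F statistics and their degrees of freedom using the standard identity: partial eta squared equals F times the effect degrees of freedom, divided by that product plus the error degrees of freedom. The sketch below is illustrative only; it uses the values reported above, and the sample-size check assumes that the univariate tests were run on individual participants from the 30 retained teams.

```python
def partial_eta_squared(f_value, df_effect, df_error):
    """Recover partial eta squared from an F statistic and its degrees of freedom."""
    if f_value < 0 or df_effect <= 0 or df_error <= 0:
        raise ValueError("F must be non-negative and degrees of freedom positive")
    effect_term = f_value * df_effect
    return effect_term / (effect_term + df_error)


# (F, effect df, error df) as reported in the text.
REPORTED_TESTS = {
    "multivariate platform effect": (2.42, 12, 162),
    "team score": (5.29, 2, 87),
    "system usability": (3.69, 2, 87),
}

effect_sizes = {
    name: round(partial_eta_squared(*stats), 2)
    for name, stats in REPORTED_TESTS.items()
}
print(effect_sizes)

# Sample-size check (assumption: participants, not teams, are the unit of analysis).
teams_analyzed = 37 - 7                    # pilot teams dropped
participants_analyzed = teams_analyzed * 3
error_df = participants_analyzed - 3       # three platform groups
print(error_df)
```

The recovered values round to .15, .11, and .08, matching the reported effect sizes, and the error degrees of freedom of 87 are consistent with 90 analyzed participants across three platforms.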

The other dependent variables showed no group differences. Follow-up Bonferroni-corrected pairwise comparisons showed that, for team score, groups using Teams (M = 25.3, SD = 3.5) scored significantly lower than groups using Slack (M = 28.4, SD = 2.7). The team scores of groups using Hangouts were marginally better than those of groups using Teams (p = .055). The difference in team scores between Google Hangouts and Slack was not significant. Pairwise comparisons of system usability scores showed that Hangouts had a significantly higher system usability score than Teams but was not significantly different from Slack. There were no significant differences in usability between Slack and Teams.
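For readers unfamiliar with the correction, a Bonferroni adjustment for three platforms can be sketched as follows. The raw p-values in the example are hypothetical and chosen only to illustrate the mechanics; they are not the study's values, and the sketch makes no claim about how the reported p-values were computed.

```python
from itertools import combinations

PLATFORMS = ("Slack", "Google Hangouts", "Microsoft Teams")


def bonferroni_adjust(p_values):
    """Multiply each raw p-value by the number of comparisons, capped at 1."""
    m = len(p_values)
    return [min(1.0, p * m) for p in p_values]


pairs = list(combinations(PLATFORMS, 2))      # three pairwise comparisons
alpha = 0.05
per_comparison_alpha = alpha / len(pairs)     # equivalent per-test threshold

# Hypothetical raw p-values, one per pair, for illustration only.
hypothetical_raw_p = [0.30, 0.004, 0.02]
adjusted = bonferroni_adjust(hypothetical_raw_p)

for (first, second), p_adj in zip(pairs, adjusted):
    verdict = "significant" if p_adj < alpha else "not significant"
    print(f"{first} vs. {second}: adjusted p = {p_adj:.3f} ({verdict})")
```

With three comparisons, a raw p-value must fall below roughly .017 to remain significant at the .05 level after correction, which is why a difference that looks reliable on its own can become marginal once all pairs are tested.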

To complement the means analysis, we also examined associations between team score and prior team experience, familiarity, and individual differences in TEQ and SUS. Only two correlations were significant, and both occurred on the Slack platform. First, team score was significantly positively associated with usability (r = .45, p < .05); that is, the more Slack was perceived as having good usability, the higher the team score. Second, team score in Slack was positively associated with perceived team effectiveness (r = .40, p < .05), indicating that Slack teams’ perceptions of their team’s effectiveness reflected their actual performance. No other platform showed this association; that is, on Hangouts and Teams, perceptions of team effectiveness did not relate to team score.

In summary, the comparisons of platforms show that teams using Slack performed best, as measured by team score, while those using Microsoft Teams performed the worst. Recall that team score was a composite variable calculated from the quality of the final itinerary (i.e., the extent to which it met all restrictions). However, analysis of perceived usability showed that Hangouts was perceived as the most usable of the three platforms.

Qualitative Analysis

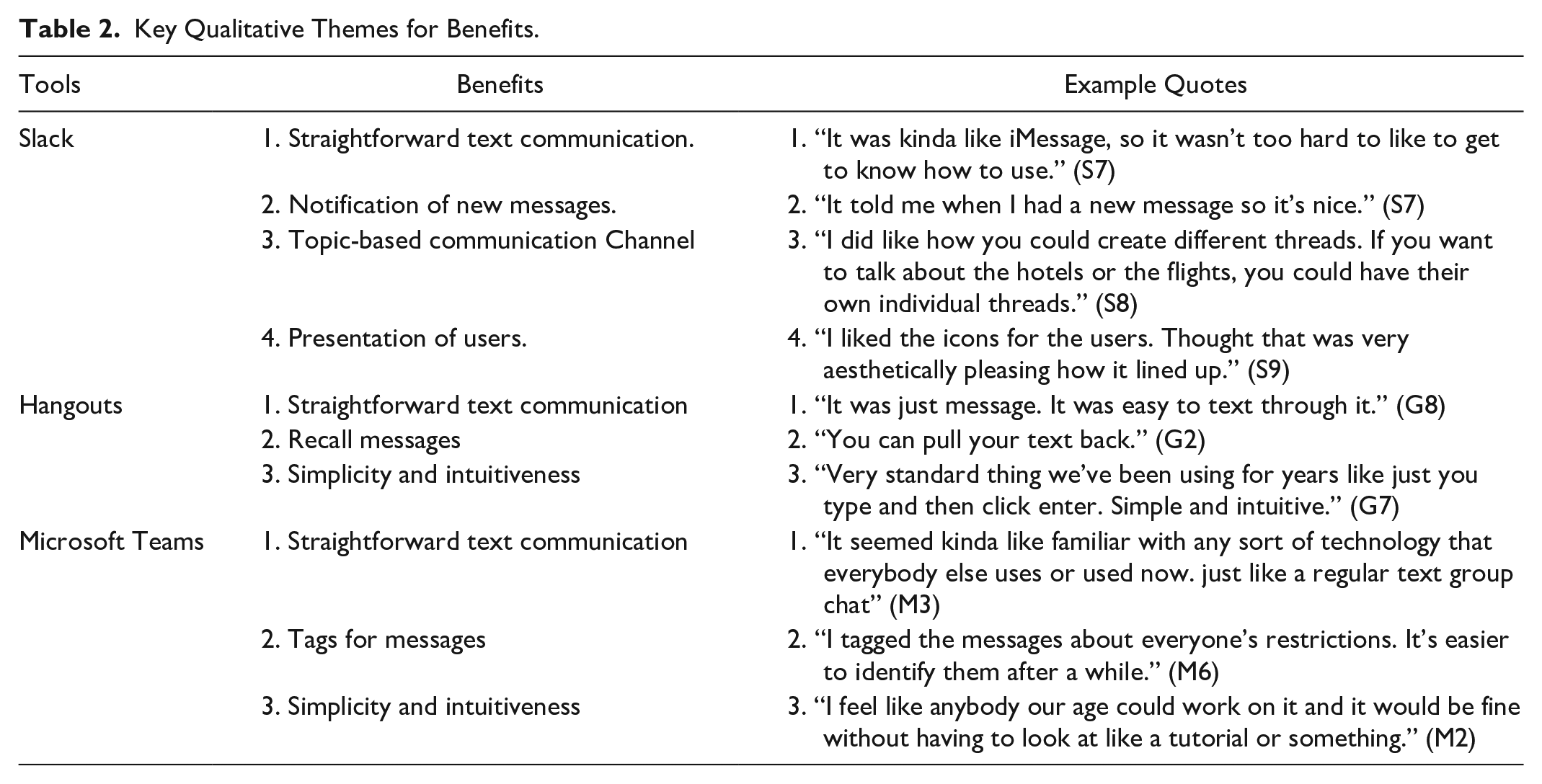

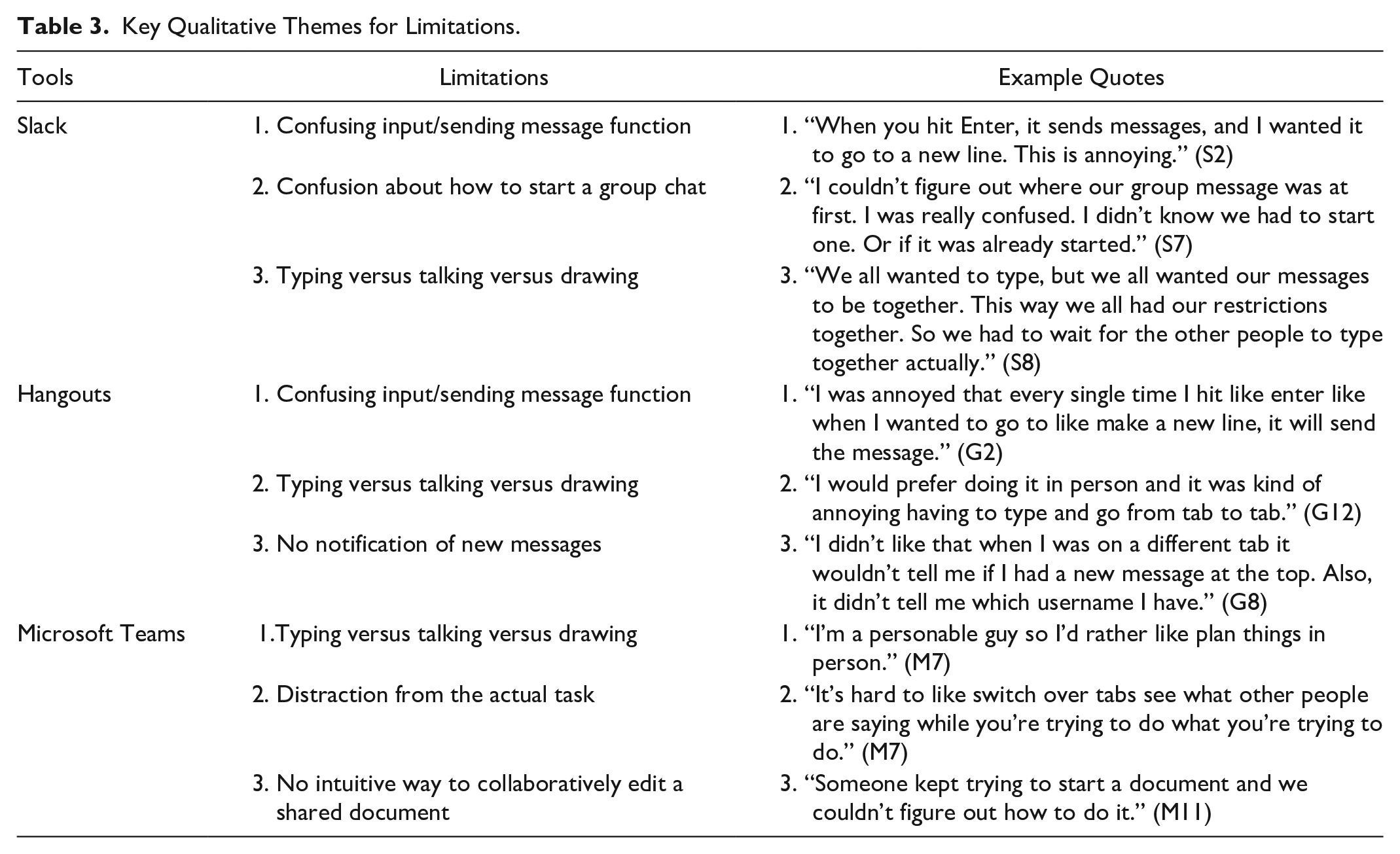

All participants acknowledged that the collaborative technology (i.e., Slack, Google Hangouts, or Microsoft Teams) played a significant role in their team practices. However, no team considered a particular technology overly positive or negative. Instead, many participants held mixed feelings and highlighted both benefits and limitations of each tool. Some focused on the design features (e.g., input method and interface), while others were concerned with the mediated experiences (e.g., compared with face-to-face interaction). Tables 2 and 3 summarize key themes emerging in participants’ perceptions of each technology along with example quotes (S: Slack; G: Google Hangouts; and M: Microsoft Teams).

Table 2. Key Qualitative Themes for Benefits.

Table 3. Key Qualitative Themes for Limitations.

Participants reported that all three tools shared some similar benefits for mediating and supporting teamwork. The “straightforward text communication” was especially highlighted as a main advantage across all three. In addition, participants reported that even if they had never used these tools before, the tools were extremely easy to learn because their instant messaging functionality resembled texting. For them, the similarity to traditional communication tools seemed to be a critical factor in making collaborative technologies desirable (i.e., something they were willing to use) and user-friendly (i.e., easy to use). However, they also mentioned that the type-and-enter input method used in these tools was a double-edged sword: it did not obviously afford more complicated tasks such as organizing content via new lines or bullet points (each platform supports this feature, but many users could not see its signifier).

In addition, while various specific benefits and limitations regarding each tool were noted, an emerging consensus was that Slack was perceived as more complicated, while the other two were perceived as more “simple and intuitive.” This finding does not mean that participants had a negative attitude toward Slack. Instead, they appreciated a wide range of its design features, including new message notifications, topic-based communication channels, and aesthetic user presentation, and considered those supportive of their team practices. They even complained that other tools did not incorporate such features (e.g., G8’s comment regarding no new message notification on Google Hangouts) or were “too boring” (e.g., “the plain interface was pretty boring. Just pretty standard” [M10]). Of course, some revealed that such complexity might also introduce extra barriers to their teamwork. As members of S7 explained, it was challenging to work out how to create a group channel, or to identify where it was and whether it already existed in Slack, because they had never used this tool and the design was not straightforward. It took them a certain amount of time to tackle this technological challenge. However, once they passed this stage, the benefits of Slack’s features emerged.

Discussion

One of the primary aims of this research was to examine how collaborative software platforms affected team performance. We believe that this study is one of the first empirical comparisons of the dominant collaborative platforms on the most substantial variable: team performance. Despite all the platforms providing roughly similar features (e.g., chat) and roughly similar user interface layouts (e.g., main chat window, rooms, or channels viewer), we note several team performance differences between them. For example, Slack produced significantly higher-quality team products than Teams, despite not being considered the most usable of the platforms. On the other hand, Hangouts was perceived as the most usable yet did not result in the best team score. This result may be due to participants mistaking Hangouts’ sparse, utilitarian interface and feature set for ease of use.

Another insight was the significant association between participants’ perception of team effectiveness (TEQ) and team performance, but only with Slack. The presence of an association implies that some unique aspect of Slack, not present in Hangouts or Teams, may have allowed teams to be more aware of either their performance or other team members. The quantitative analysis does not allow us to determine what this feature or user interface affordance might be, but the qualitative analysis suggests that it is Slack’s orientation toward, and encouragement of, organizing conversations by channels or topics. While Teams offers essentially the same functionality, it is likely a weaker affordance there. The qualitative analysis also shows some shortcomings of all the platforms, which may have hindered optimal team behavior. For example, when we again consider Fiore et al.’s stages of collaboration, it becomes clear that some tools were better able to support some stages than others (Fiore et al., 2003).

Design Recommendations for Future Collaborative Technologies

Grounded in our findings, we provide potential recommendations for designing future collaborative technologies. In particular, we highlight the importance of considering (a) structured coordination and (b) mechanisms for trust and awareness in such designs.

Structured Communication Through Channels and Threads

Slack and Microsoft Teams allow users to create so-called channels or threads for communication about specific team-related topics. In Google Hangouts, by contrast, users must create separate chat windows and invite others to the chat using their contact or email address. In Slack, we routinely observed that participants created and used these different threads to discuss specific aspects of the task, such as flights, hotels, and restaurants. Both Hangouts and Teams have similar capabilities, yet these were often viewed as hindrances because they were complex to set up. In this sense, the design of future collaborative technologies should emphasize affording more focused communication. The channeling of topical communication gives team members an organized and structured way to actively track, retrieve, and contribute information.

Mechanisms for Trust and Awareness

Trust was initially not a specific focus of this study, but the qualitative data make clear that trust plays a significant role in distributed teamwork in collaborative technologies for ad hoc teams. Participants routinely noted that, due to the limited time of the task and the lack of specific affordances in the collaborative technologies, they were in many ways forced to develop on-the-fly trust in their team members. This is likely not the type of trust that develops over a prolonged period but rather the swift trust researched in many virtual teams (Meyerson et al., 1996). In terms of design, it is beneficial to let team members see what their teammates are working on and to make it easier for individual teammates to share their work, so that more robust trust can develop over time. This is something Teams has improved greatly in recent updates and that other collaborative platforms should seek to emulate.

Conclusions and Limitations

In this paper, we have provided a comparative evaluation of the collaborative technologies Slack, Google Hangouts, and Microsoft Teams to better understand multiple aspects of distributed teamwork behavior and collaborative technology usage and design. We have highlighted that even though Slack supported significantly higher performance and was the only platform for which team performance was associated with perceived team effectiveness, it was not perceived as the most usable platform. Qualitative follow-up data indicate that this result is likely due to the overly complex affordances provided by Teams and to the excessive simplicity of Hangouts and its lack of threads to conceptually separate work. Some limitations should be noted when interpreting these results. Specifically, our teamwork task (group travel itinerary creation) is somewhat artificial compared to real-world team-based work. That said, work requiring team collaboration is highly variable, and it is challenging to consider any specific type of task “prototypical.”

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.