Abstract

Traditional Human System Integration (HSI) processes are at risk of being eliminated given the industry-driven velocity and continuous delivery methods of Agile and Development to Operations Engineering. One such process at risk is the use of usability standard checklists such as MIL-STD 1472. The DoD Design Criteria Standard: Human Engineering, or MIL-STD 1472, is a military standard that provides guidelines for the ergonomic design of military systems and equipment. It is primarily focused on ensuring that military systems are designed to optimize human performance, safety, and comfort. However, it can be time-consuming to use given that it contains over 4,400 compliance items in its entirety. In an Agile DevOps environment, the typical delivery cycle for products is 2 weeks, with some software products boasting deployments every 11 minutes. Incorporation of usability and rigorous human factors checklists in the design of products in a DevOps environment is a challenge given the shorter timelines and smaller increments between product development and deployment, combined with the expertise and hours needed to execute the checklists. The need for regression testing, the act of re-checking items to make sure new features have not created unforeseen usability issues, exacerbates the problem. Software quality testing for each release demands automatic methods to reduce the effort of repeated testing. Industry standard methods in agile engineering utilize heuristics for quick-turn, low-cost usability inspections. This paper describes the derivation of a set of usability heuristics based on MIL-STD 1472 which can be used to identify potential human factors issues more quickly, at lower cost, and with less expertise required. The paper also presents a case study which incorporated automatic checking to help usability experts keep pace with the high velocity pipelines of software development in an agile DevOps environment.

Introduction

Human Factors Engineers need to adapt to the industry demand for reduced development time of products using agile development. This requires sharpening well-refined methods to work at a faster pace and smaller scale. A partner of Agile software engineering is the set of practices called DevOps, which is short for Development to Operations. The fundamental goal of DevOps is to shorten the system’s development life cycle. DevOps boasts a principle of continuous integration and continuous delivery (CI/CD) with smaller updates to the deployed products (Kim et al., 2021). Development teams may deploy updated versions of the software at each sprint, at each iteration, or many times during a sprint. Incorporation of Usability, Human Factors (HF), and User Experience (UX) into the design of products in the DevOps environment is a challenge given the shorter timelines and smaller increments between product development and deployment (Steinberg, 2022). This paper describes one Human Factors Engineering team’s efforts to synchronize HF and UX product design with quick-turn feature development in the DevOps environment, improving the quality of the design and demonstrating the relevance of the discipline. The team addressed the challenges of implementing an industry standard checklist of over 4,400 compliance items by separating out the items for which automated testing could be implemented and using the remaining line items to create usability heuristics that help our human factors practitioners quickly assess new features prior to release.

Practitioner Challenge: DevOps

Three significant practices that enable DevOps are: (a) agile development, (b) incremental stakeholder reviews and refinement of development effort, and (c) automated testing.

Agile Development and the Speed of Relevance

Prior to agile development methods, software developers used waterfall processes in which the full list of features needed for a product was developed and tested in its entirety prior to release. Agile development is a method for generating software in small increments, producing a smaller-scale feature set with each delivery. One of the problems with the non-agile approach is that it takes longer for users to obtain a developed product. In many instances, by the time a product was available for use, the need was no longer relevant. The United States Government digital services department recommends agile development practices (Department of Defense (DoD), 2019a), and states the objective “of delivering effective, resilient, supportable and affordable solutions to the end user” while the need is still relevant.

Stakeholder Review and Reprioritization of Effort

Stakeholder review and reprioritization afford developers the opportunity to get feedback and correct plans and priorities at each small increment of delivery. This reduces the cost of rework and ensures software effort is allocated toward features that add value to the product. Some product managers in industry perceive that Human Factors is made redundant by the current methods of Agile and DevOps because those methods already include user feedback as part of the process. However, user centered design principles can be more effectively incorporated into the design process through continual evaluation of the product, ensuring that each software release not only delivers a quality product but also adds value for the user.

Automated Testing

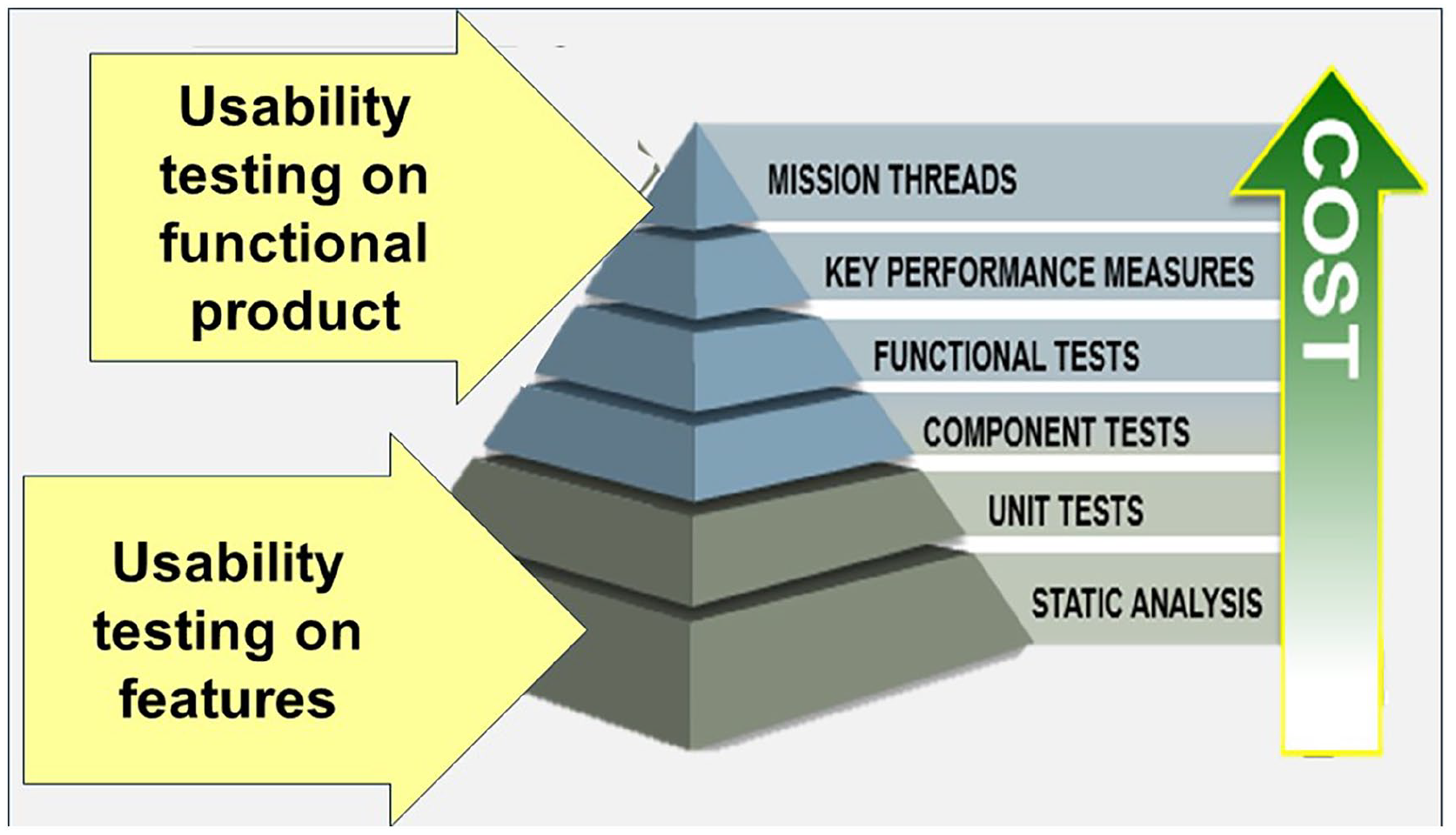

Quality testing and usability testing prior to release are important to software development. In an agile environment with continuous integration and continuous delivery, the frequency of deliveries increases the need for automated testing. One of the most significant contributors to software testing costs is regression testing, or retesting to make sure new features do not break any previous product capabilities. The cost of regression testing strongly drives the need for automated testing. In DevOps, there is a philosophy of doing simple software testing at the lowest levels of development. This is typically called the software quality test pyramid. For software quality testing, the early tests are low-level small component or unit tests, moving up to more complex integrated component testing at the highest level of the pyramid. At the lowest level of the pyramid, the tests are the least costly and the most automated; they become more expensive and require more manual involvement as you move up the pyramid. The goal of our UX/HFE team is to apply this principle to usability testing in the same way software developers apply it to quality testing. This involves auto-checking of usability checklists, which will be discussed further.

Use of Industry Checklists—MIL-STD 1472

MIL-STD-1472 covers a broad range of topics, including anthropometry, biomechanics, environmental factors, and human-computer interaction (Department of Defense (DoD), 2012). First released in 1959, it is reviewed and updated by experts and contains a wealth of insight from human factors engineering research and expert knowledge to prevent teams from making egregious human interaction design errors. It has been updated and re-released many times and is currently on revision H (Department of Defense (DoD), 2019b). Although it encompasses over 4,400 items covering all areas of human factors engineering, our UX team ascertained that approximately 1,100 of those items relate to software.

There are two significant challenges associated with incorporating MIL-STD 1472 reviews into the design of software products. The first is the extensive time required for a compliance assessment that checks each item line by line. Even the most experienced practitioners may require weeks or more to complete a rigorous review of a complex design. This becomes problematic in DevOps, where a new release is targeted every two weeks or less. There is not enough time to find, much less correct, any discrepancies identified by the compliance assessment. The second challenge is the expertise needed to become fluent with MIL-STD 1472. The topics are broad and vast, making it extremely difficult for a person to become fluent in understanding each design guideline and how to apply it. Given the demands on UX/HFE practitioners for research, design, and usability testing, it is a tremendous challenge to become expert in all areas of MIL-STD 1472. These two challenges explain why many of these rigorous checklists are at risk of being eliminated in an agile development world.

Usability Heuristics

Usability heuristics have a history rooted in the field of human-computer interaction (HCI) and usability engineering. Heuristics are rules of thumb or guidelines that help designers and evaluators create and assess user interfaces. Jakob Nielsen created one of the most prominent sets of usability heuristics. First published while he was working with Sun Microsystems in the 1990s, these heuristics became widespread in the human-computer interaction design community (Nielsen, 1992).

Usability heuristics can help save time in the design and evaluation process of user interfaces. UX/HFE practitioners have gained many benefits from the use of heuristics. (a) They help promote early detection of issues before they become embedded in the final product. (b) They provide a structured approach for evaluating key tenets of usability and provide consistent evaluation categories. Consistency affords more efficient evaluations and comparisons between design alternatives. (c) UX/HFE practitioners can more efficiently identify common usability issues without extensive usability testing and training in all aspects of human-computer interaction disciplines.

Many sets of heuristics have been developed over the years, including alternatives from other usability researchers such as Shneiderman and Wickens (Nielsen, 1994; Wickens et al., 2021). The question for our research team was “how do these heuristics compare with MIL-STD 1472?” Given the recognized value of MIL-STD 1472 and the need for heuristics to keep pace and scale with DevOps, our team set out to develop a set of heuristics that facilitate and map to MIL-STD 1472 verification. While heuristic evaluations are a valuable tool for saving time in the design and evaluation process, it is recognized that they are not a substitute for a complete line-by-line review of all items in MIL-STD 1472. However, our team found that using these heuristics for preliminary evaluations gave practitioners the opportunity to keep pace with the high velocity software pipelines being used today.

Realization of the Need for Heuristics

Our team of practitioners is a diverse group with a variety of backgrounds and experience levels. The team has people with experience in graphic design, engineering, psychology, technical writing, and physics. Having a team with such a diverse skillset is very beneficial, allowing us to have successful brainstorming sessions with many different ideas for solving the various challenges we encounter in our design process. It also allows us to partner with team members with different areas of expertise when creating experiments and optimizing the design of the product and the output metrics. However, with such different educational backgrounds and professional experiences, there are no common courses we have all taken which can be referenced when onboarding new team members. Not all team members have prior experience with MIL-STD 1472 or are familiar with complex UX concepts and testing methods. Therefore, we needed a way to provide them with a high-level overview of the concepts of MIL-STD 1472 and the areas to pay particular attention to when reviewing the graphical user interfaces of various software products.

There are approximately 30 different software products our team is currently supporting. Since our User Experience (UX) team and Software Development staff are following the agile development practice, these products change every 2 weeks. These changes must be constantly reviewed and assessed by a team member to ensure MIL-STD 1472 compliance and to meet our contractual obligations. The development teams demo their progress every iteration during the system demos. However, since there are so many development teams and products, demonstrations often occur concurrently, and no single UX team member can attend them all. Instead, all team members attend the development teams’ biweekly sprint demos to review the progress made in the applications. When attending the demos, team members watch for any MIL-STD 1472 principles being violated and for any products that are not consistent with the rest of the system. Consequently, we needed a way for the team to have a cohesive approach and a quick reference for the high-level concepts covered in MIL-STD 1472.

Creation of MIL-STD-1472G Heuristic Categorization

The team began by identifying which lines of MIL-STD-1472 are relevant to software. From the line-by-line analysis, it was identified that approximately 1,100 lines are applicable to software. While 1,100 is a manageable number compared to the entirety of MIL-STD 1472, it is still far too many for all members to maintain expertise in each line item. The next step was to separate out the line items that could be defined using a specified visual appearance and design style. An estimated 500 items were identified that could be defined by a user interface style guide. These items included font sizes, foreground to background colors, and behaviors of specific graphics. These items can be incorporated into software libraries that are built once and then included in automatic testing at each software release.
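As an illustration of how style-guide items such as font size and foreground-to-background color could be checked automatically at each release, the following is a minimal sketch and not the team's actual library. The `Widget` type, the two threshold constants, and their values are hypothetical placeholders; a real check would take its limits from the project's own style guide. The contrast computation uses the WCAG 2.x contrast-ratio formula as a stand-in, since the paper does not say which formula the team used.

```python
from dataclasses import dataclass

# Hypothetical style-guide limits (assumptions for this sketch only).
MIN_FONT_PT = 10.0
MIN_CONTRAST = 4.5


@dataclass
class Widget:
    """Hypothetical description of one on-screen text element."""
    name: str
    font_pt: float
    fg: tuple  # foreground (r, g, b), each 0-255
    bg: tuple  # background (r, g, b), each 0-255


def _linear(channel: int) -> float:
    """Convert one 8-bit sRGB channel to linear light (WCAG 2.x formula)."""
    c = channel / 255.0
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def luminance(rgb) -> float:
    """Relative luminance of an sRGB color."""
    r, g, b = (_linear(c) for c in rgb)
    return 0.2126 * r + 0.7152 * g + 0.0722 * b


def contrast_ratio(fg, bg) -> float:
    """WCAG contrast ratio; ranges from 1 (identical) to 21 (black on white)."""
    lighter, darker = sorted((luminance(fg), luminance(bg)), reverse=True)
    return (lighter + 0.05) / (darker + 0.05)


def check_widget(w: Widget) -> list:
    """Return a list of human-readable findings; empty means no violation."""
    findings = []
    if w.font_pt < MIN_FONT_PT:
        findings.append(f"{w.name}: font {w.font_pt}pt below {MIN_FONT_PT}pt")
    ratio = contrast_ratio(w.fg, w.bg)
    if ratio < MIN_CONTRAST:
        findings.append(f"{w.name}: contrast {ratio:.2f} below {MIN_CONTRAST}")
    return findings
```

Because `check_widget` is a pure function over a declarative description of the interface, a check of this kind can run alongside unit tests on every build, so the same style-guide items are re-verified at each release without manual effort.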

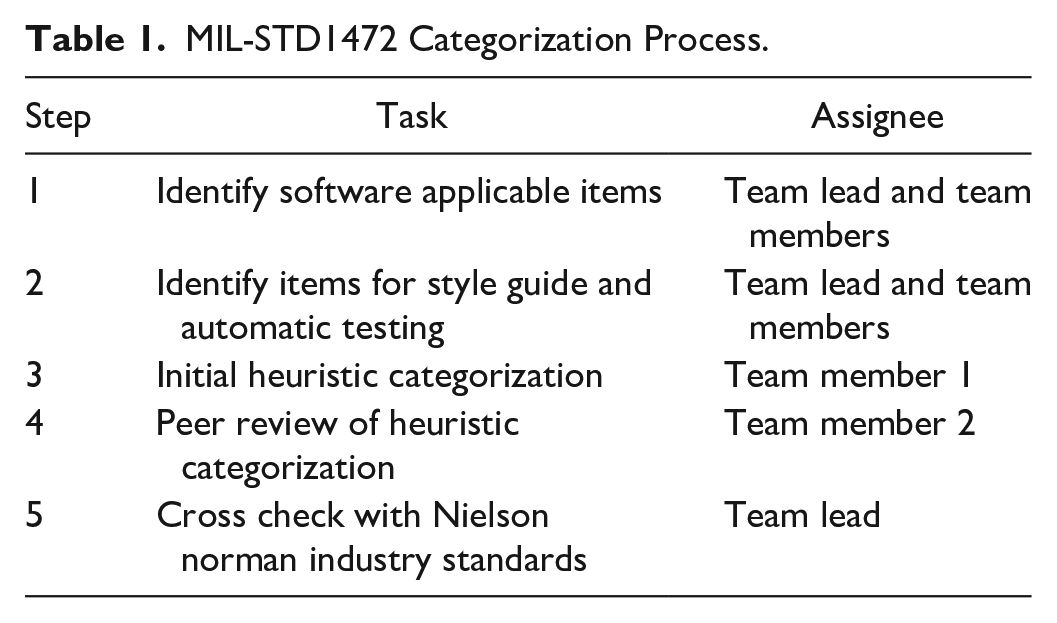

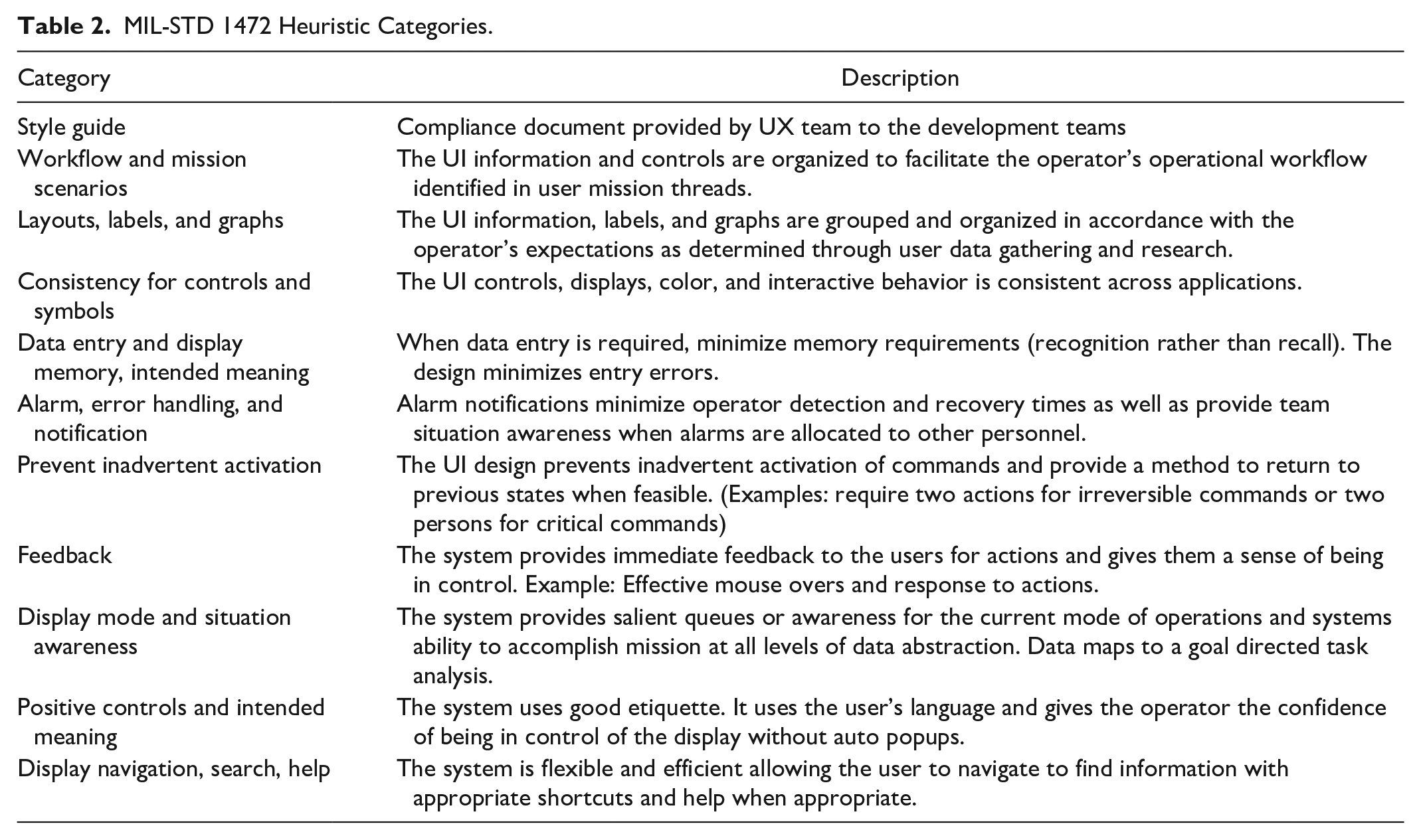

To create the heuristics, the team did a second line-by-line evaluation to assign categories to each of the line items. The process for assigning the heuristics is shown in Table 1. First, each team member was assigned a section of MIL-STD 1472 (e.g., 5.1, 5.2, ...). Team members went through all software applicable lines in their section and identified a high-level heuristic or compliance document to which the lines could be attributed. Then a second, different team member peer reviewed the heuristic categories which had been assigned. If the peer reviewer disagreed with the initial assessment, they would leave a note with their preferred categorization and a justification. Finally, the team lead reviewed all software applicable lines of MIL-STD 1472, reviewed the heuristic categories, and adjudicated any discrepancies that had arisen in the peer review. This extensive categorization process resulted in a total of 1 compliance document and 10 heuristics, which can be seen in Table 2.

Table 1. MIL-STD 1472 Categorization Process.

Table 2. MIL-STD 1472 Heuristic Categories.
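The three-step categorization process (initial assignment, peer review, team lead adjudication) can be sketched as a small record type. This is only an illustration under stated assumptions: the `LineItem` fields, the function names, and the labels such as "Heuristic A" are hypothetical and do not reproduce the team's actual tooling or the category names in Table 2.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class LineItem:
    """One software-applicable line item moving through the review steps."""
    item_id: str                   # placeholder identifier, not a real paragraph number
    initial: str                   # category assigned by the first reviewer
    peer: Optional[str] = None     # peer's preferred category, set only on disagreement
    peer_note: str = ""            # peer's justification for the disagreement
    final: Optional[str] = None    # category recorded after team lead review


def needs_adjudication(item: LineItem) -> bool:
    """True when the peer reviewer recorded a different preferred category."""
    return item.peer is not None and item.peer != item.initial


def adjudicate(item: LineItem, lead_choice: Optional[str] = None) -> LineItem:
    """Record the final category; the lead must decide when reviewers disagree."""
    if needs_adjudication(item):
        if lead_choice is None:
            raise ValueError(f"{item.item_id}: discrepancy requires a lead decision")
        item.final = lead_choice
    else:
        item.final = item.initial
    return item
```

Keeping the peer's note alongside the preferred category preserves the justification the team lead needs when resolving a discrepancy.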

To assess the reasonableness and completeness of the team’s MIL-STD 1472 heuristics, they were compared with the Nielsen heuristics, the most widely accepted in the user experience industry. A simple mapping found that the proposed heuristics covered the Nielsen heuristics effectively.

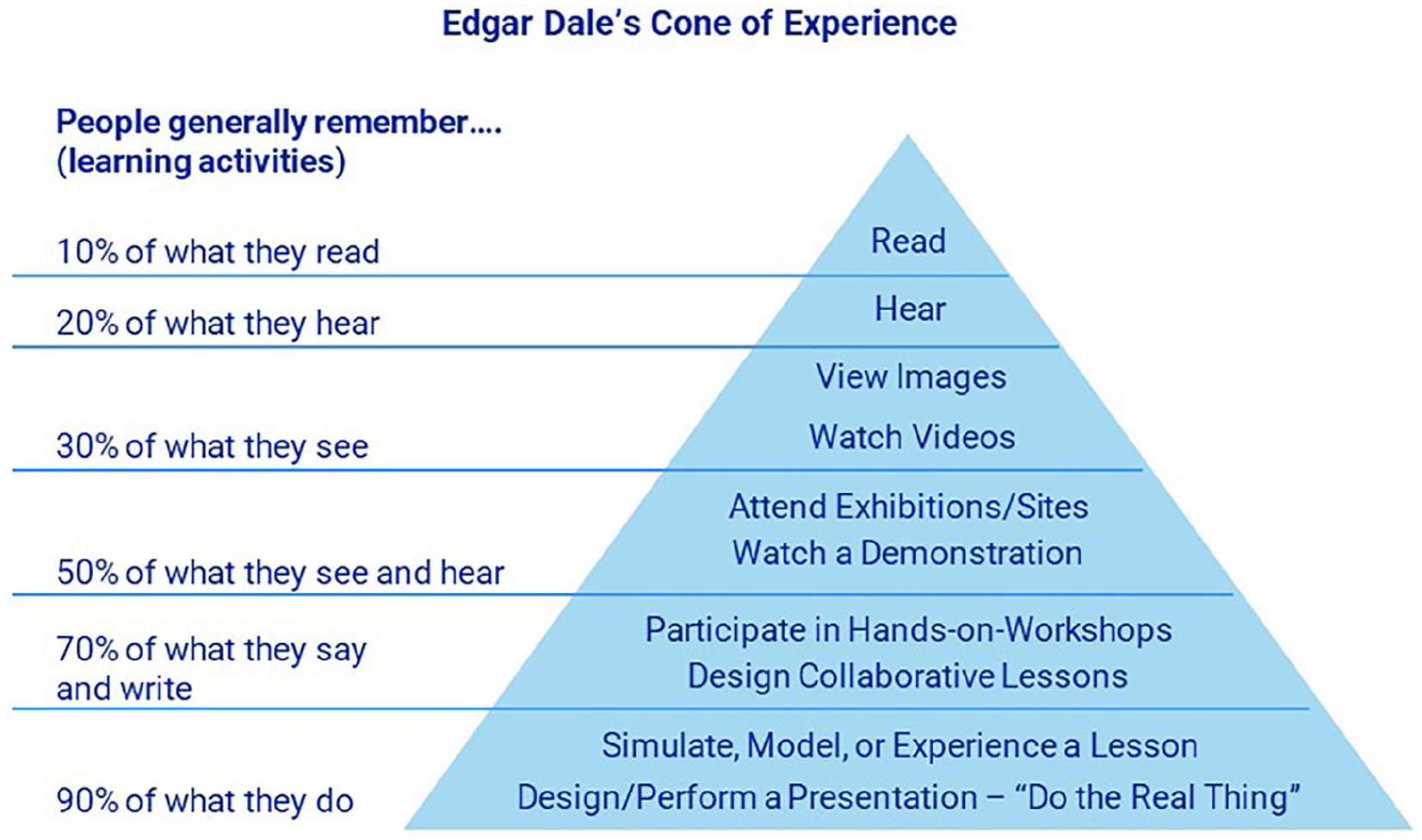

These heuristics were used to train and familiarize new team members with the guiding principles of UX and MIL-STD 1472 compliance. During the onboarding process, new team members were presented with the style guide and heuristics to read and review. This corresponds with approximately 10% retention in the Cone of Experience (Lee & Reeve, 2017) as shown in Figure 1. Team members were then trained to apply these heuristics by attending the biweekly demos and using the heuristics to identify areas for improvement in the software and potential MIL-STD 1472 violations. This corresponds with approximately 90% retention in the Cone of Experience (Figure 1). Rather than needing to memorize all of MIL-STD 1472, team members were able to identify which heuristic was being violated and reference the line-by-line mapping to find all applicable lines of MIL-STD 1472 for that heuristic. This greatly reduced the amount of time needed to identify MIL-STD 1472 violations, since team members only needed to review the 25 to 90 line items corresponding to that heuristic, rather than the entirety of MIL-STD 1472 itself.
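The lookup from a violated heuristic back to its line items can be sketched as a simple index. This is a hedged illustration rather than the team's mapping: the function names, the heuristic labels, and the item identifiers used here are hypothetical placeholders.

```python
from collections import defaultdict


def build_index(mapping_rows):
    """Group line-item identifiers by heuristic.

    mapping_rows is an iterable of (item_id, heuristic) pairs, i.e. the
    line-by-line mapping produced by the categorization process.
    """
    index = defaultdict(list)
    for item_id, heuristic in mapping_rows:
        index[heuristic].append(item_id)
    return dict(index)


def lines_to_review(index, violated_heuristic):
    """Return the sorted line items to inspect for one violated heuristic."""
    return sorted(index.get(violated_heuristic, []))
```

For example, with rows `[("item-003", "Heuristic A"), ("item-001", "Heuristic A"), ("item-002", "Heuristic B")]`, calling `lines_to_review(build_index(rows), "Heuristic A")` returns only the two items filed under that heuristic, which is the narrowing step that replaces a full pass through the standard.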

Figure 1. Edgar Dale’s cone of experience.

Results—Benefits of Heuristics and Auto-testing

Automatic Testing

Based upon our UX/HFE practitioners’ expertise on previous programs, automatic testing saved 8 to 20 hours of lab time for every release. This equates to a range of $15,000 to $20,000 for each software release based upon cost estimates and prior experience with software developers and human factors engineering experts. The automation of some basic usability tests can be completed at the stages of lowest cost (e.g., Unit Testing and Static Analysis), where it can have the best return on investment and support the methodology of the Agile DevOps test pyramid as shown in Figure 2.

Figure 2. Testing pyramid for agile DevOps (Steinberg, 2022).

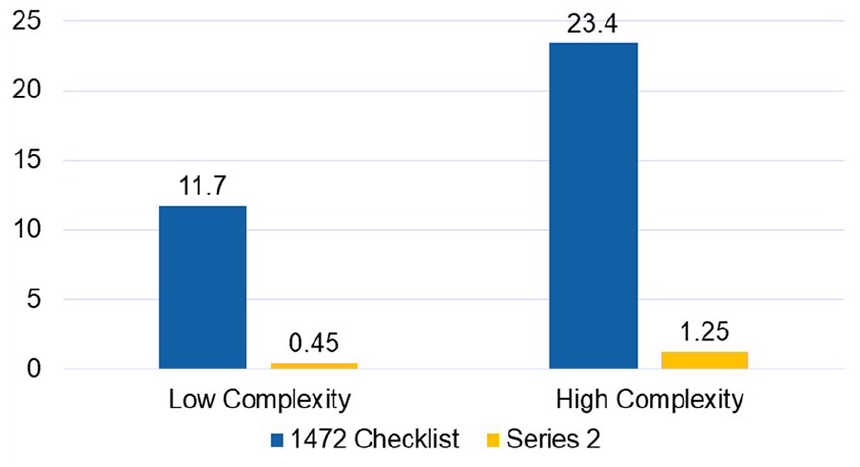

Usability Inspection

Our team found that using the heuristics for usability inspections took only 4% to 6% of the time it takes to complete a full MIL-STD 1472 checklist. There was also an improvement in accuracy in finding the non-compliant line items, since this approach eliminated the risk of taking a line intended for hardware out of context. The usability heuristics were found to be effective at identifying potential line violations. When a usability issue was identified with the usability heuristics, the specific non-compliant checklist items were located in a fraction of the time required for an exhaustive MIL-STD 1472 checklist inspection. The trends show that the difference becomes more pronounced as the complexity of the user interface increases, as shown in Figure 3.

Figure 3. Usability inspection time savings (hours).

Conclusion

Incorporation of rigorous human factors checklists in the design of products in a DevOps environment is a challenge given the shorter timelines and smaller increments between product development and deployment. When combined with the expertise and hours needed to execute the extensive checklists, there is a substantial risk that HFE/UX evaluations can delay the regular cadence of software releases (Steinberg, 2022). Given the high velocity software pipelines being used in software development, traditional HFE/UX methods are at risk of becoming obsolete. Our team identified a method for incorporating a rigorous usability checklist, MIL-STD 1472, into an Agile and DevOps Engineering environment. Furthermore, our team identified a means for automatic usability testing of a significant portion of the checklist, reducing the cost of regression testing. These findings provide evidence that rigorous checklists can still be effective in current agile engineering methods.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.