Abstract

Multi-stage validation (MSV) has become an important concept in nuclear power plant control room design and modernization. MSV consists of multiple stages of collecting evidence that establish reasonable confidence in the design. The Guideline for Operational Nuclear Usability and Knowledge Elicitation (GONUKE) was created as a framework to support different types of human factors evaluations across different stages of the design and development life cycle. The GONUKE framework did not originally address MSV, but it espoused safety case approaches that build different forms of evidence toward design completion, which is a complementary approach to MSV. In this paper, we show how GONUKE specifically aligns with MSV. We then expand the discussion to include multi-stage evaluation. As defined in GONUKE, there are three types of evaluation. Validation is empirical evidence collected through human-in-the-loop studies, whereas verification is expert comparison of a design against formalized standards, and epistemiation is expert knowledge transfer to design.

Introduction

The U.S. nuclear power industry has a thorough regulatory review process that includes human factors engineering (HFE). An application for a license to operate a nuclear power plant includes an extensive Final Safety Analysis Report (FSAR) that is submitted to the U.S. Nuclear Regulatory Commission (NRC). The FSAR includes a chapter exclusively devoted to HFE (U.S. Nuclear Regulatory Commission [U.S. NRC], 2016). In the review of the operating license application, the U.S. NRC aims to ensure that HFE has been adequately considered in the design. Specific HFE guidance includes:

• NUREG-0700, Human-System Interface Design Review Guidelines (O’Hara & Fleger, 2020), which outlines detailed design requirements for human-system interfaces (HSIs).

• NUREG-0711, Human Factors Engineering Program Review Model (O’Hara et al., 2012), which details the processes expected as part of HFE.

• NUREG-1764, Guidance for the Review of Changes to Human Actions (Higgins et al., 2007), which prescribes a process to evaluate the risk importance of human activities.

These guidance documents may be applied to modifications to existing licenses, such as when control room upgrades are undertaken, or they may be used for new builds with reactor designs that have not previously been certified.

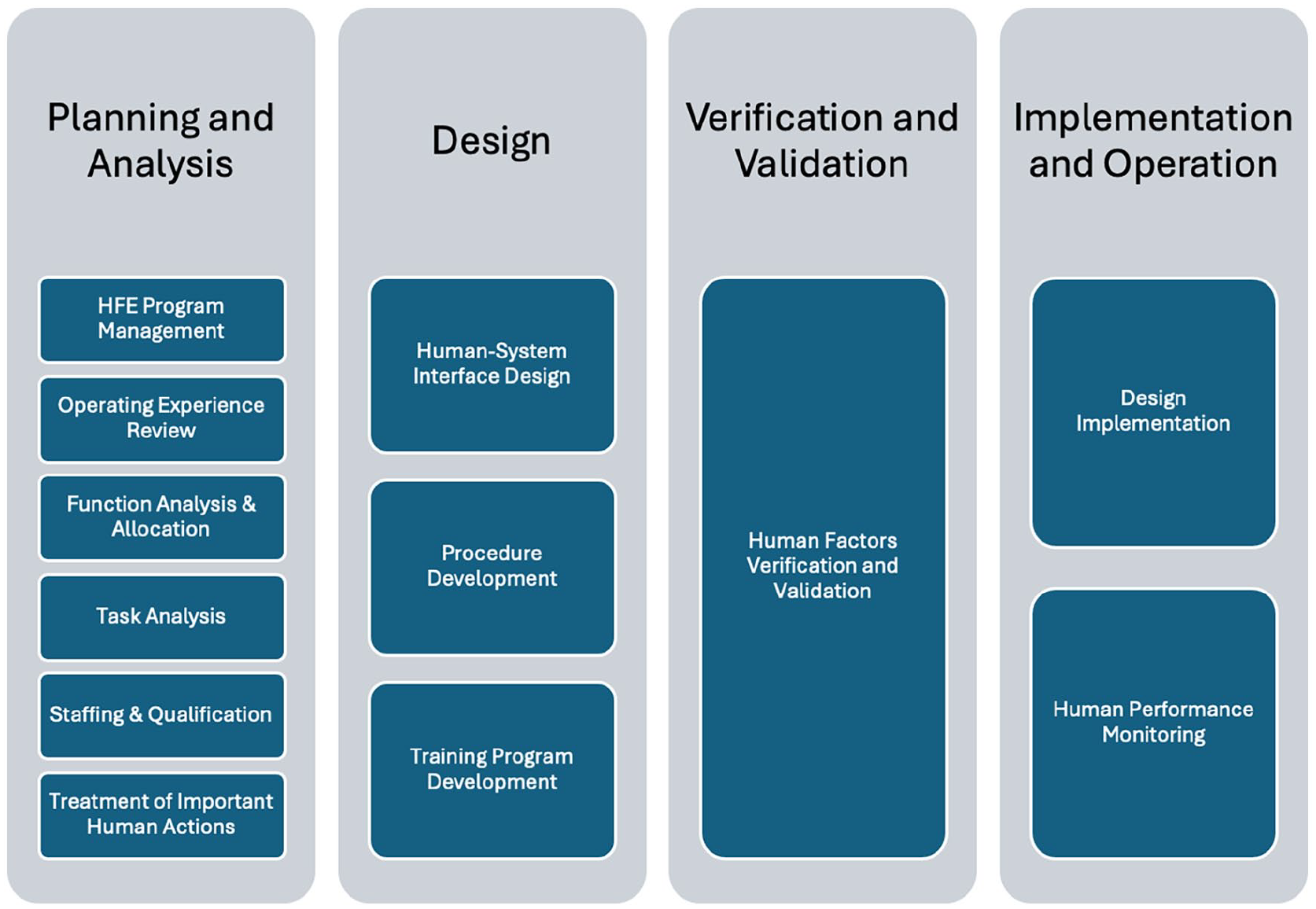

NUREG-0700, -0711, and -1764 are written for the regulator and specify review guidance for what the regulator looks for in the FSAR. Thus, they are not direct roadmaps for licensees to complete HFE activities, but they provide anchor points to which the licensee can calibrate their HFE activities. NUREG-0711 comes closest to a process map that vendors and utilities may follow. It suggests four phases of HFE (Planning and Analysis, Design, Verification and Validation, and Implementation and Operation), each with subelements, as depicted in Figure 1.

Figure 1. The phases and subelements of the HFE review process (adapted from NUREG-0711).

As noted, NUREG-0711 suggests aspects of HFE to be considered as part of the licensing review. However, when NUREG-0711 is transcribed into a process for HFE, there is an implied sequence in which the subelements are conducted. Planning and Analysis (first column) logically involves gathering all the information used to inform the Design (second column). Of course, Verification and Validation (third column) can only be completed once a Design is in hand, and Implementation and Operation (fourth column) are deployment activities after Design and Verification and Validation are complete.

Of particular interest to the rest of this paper is the Verification and Validation phase of NUREG-0711. The guidance for Verification and Validation centers on integrated system validation (ISV). ISV is a summative evaluation of the completed design. This seemingly singular focus on one final evaluation does not comport with many user-centered design best practices that espouse:

• Formative evaluations early in the design, and

• Iterative evaluations to mature the design.

Boring (2015) notes that the types of evaluations may vary considerably from formative to summative design. A largely qualitative evaluation early in the design may be more useful to shape the design, while a quantitative evaluation after the design is complete can ensure the design meets quality acceptance criteria for successful deployment. Supplemental guidance to NUREG-0711 clarifies alternate evaluations prior to ISV (U.S. Nuclear Regulatory Commission [U.S. NRC], 2014), but the stated purpose of ISV is final acceptance of the design in terms of HFE. From a regulatory perspective, documenting the final acceptance test of the human operability of a system is more important than intermediate results prior to finalizing the design. Requiring ISV does not, however, diminish the importance of iterative verification and validation activities leading up to the final design. ISV is not intended to be a limiting factor on more extensive HFE evaluations throughout the design life cycle.

The GONUKE Framework

The Guideline for Operational Nuclear Usability and Knowledge Elicitation (GONUKE; pronounced gō-nūk) was developed to account for the mismatch between NUREG-0711 as a regulatory review document and the common use of NUREG-0711 as a roadmap for HFE processes (Boring et al., 2015; 2021). GONUKE functions as a framework to show the value of different types of HFE evaluations that are possible across the life cycle of system development, especially for the design of nuclear power plants. The implicit purpose of GONUKE is to encourage additional evaluations beyond those explicitly required for ISV.

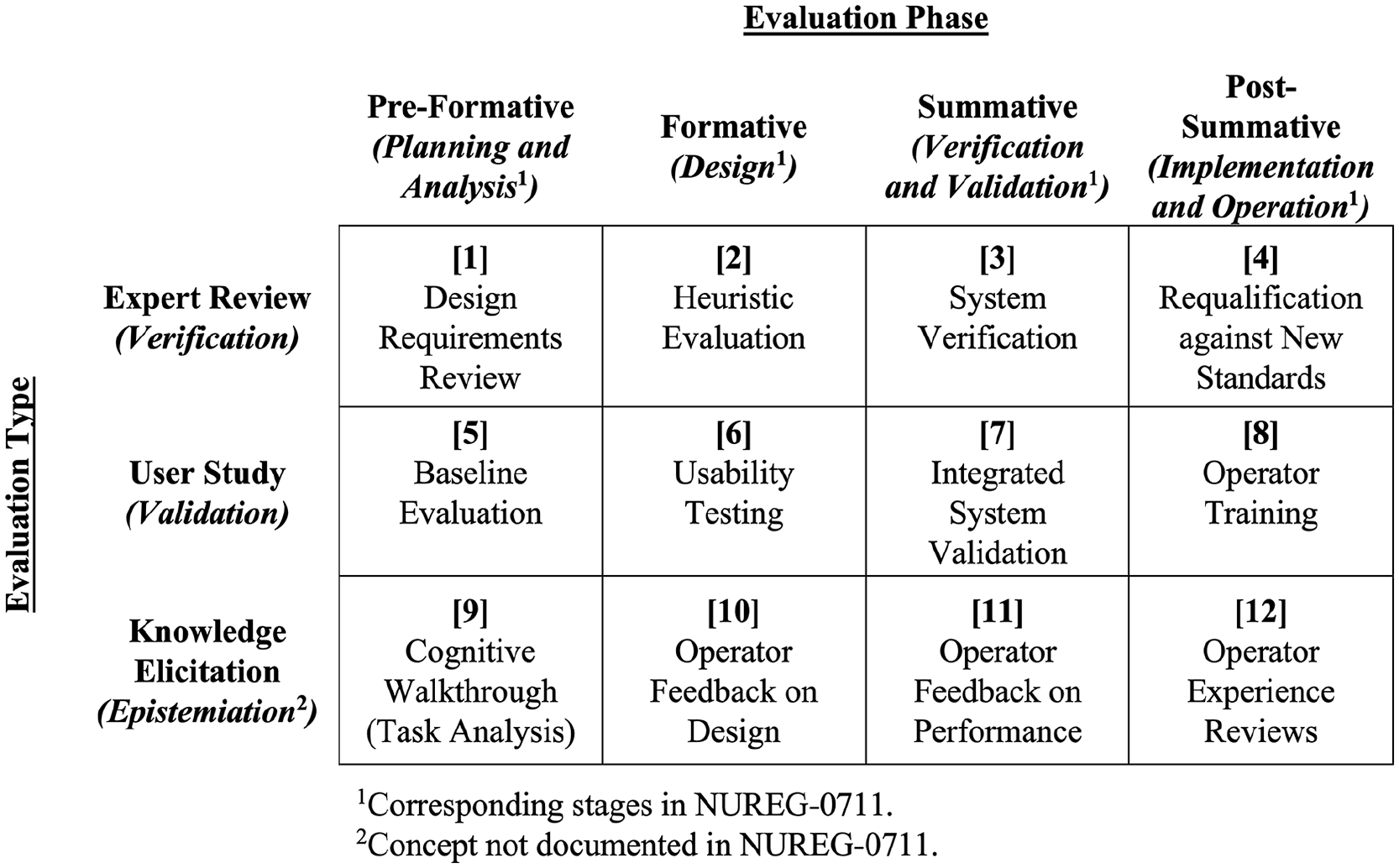

As depicted in Figure 2, GONUKE provides a rubric of 12 possible evaluation categories. GONUKE is presented as a table with two dimensions: Evaluation Phase and Evaluation Type. The Evaluation Phases span the horizontal axis and include Pre-Formative, Formative, Summative, and Post-Summative, which correspond to the Planning and Analysis, Design, Verification and Validation, and Implementation and Operation phases of NUREG-0711, respectively. Formative refers to evaluations conducted while the design is still under development, while summative refers to activities after the design is finalized. Thus, the Pre-Formative and Formative phases inform the system design, while the Summative and Post-Summative phases confirm the design.

Figure 2. The GONUKE framework of evaluation types according to evaluation phase and type.

The Evaluation Types descend the vertical axis and include: Verification (comparison of the HSI to a set standard like NUREG-0700), Validation (human-in-the-loop studies), and Epistemiation (operational knowledge transfer). Epistemiation exists as a special case of evaluation designed to capture expert knowledge, such as when a previously manual system is digitized and human expertise is documented and used to design a digital control system (Boring et al., 2016).

The GONUKE evaluation categories are presented at a high level and do not in current form specify HFE methods. For example, Category 6 (Usability Testing) represents the intersection of the formative design evaluation phase and the user study evaluation type. Because GONUKE is a flexible guidance framework and not a method, it does not recommend a particular type of usability testing. Example use cases are being developed to help users better apply the approach and determine the appropriate HFE methods for each GONUKE category (Kovesdi et al., 2023). GONUKE is meant as an evaluation framework, but a corresponding design method has been developed (Mortenson et al., 2022).
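As a minimal sketch of how the two GONUKE dimensions combine into 12 categories, the grid can be indexed in code. The enum and function names below are hypothetical and are not part of GONUKE. The row-major numbering (Verification as categories 1 to 4, Validation as 5 to 8, Epistemiation as 9 to 12) is an assumption inferred from the category numbers cited in this paper, such as Category 6 for formative validation, and should be checked against Figure 2.

```python
from enum import IntEnum


class Phase(IntEnum):
    """Evaluation Phases along the horizontal axis (column index)."""
    PRE_FORMATIVE = 0
    FORMATIVE = 1
    SUMMATIVE = 2
    POST_SUMMATIVE = 3


class EvalType(IntEnum):
    """Evaluation Types along the vertical axis (row index)."""
    VERIFICATION = 0
    VALIDATION = 1
    EPISTEMIATION = 2


# Correspondence between GONUKE phases and NUREG-0711 phases, as stated in the text.
NUREG_0711_PHASE = {
    Phase.PRE_FORMATIVE: "Planning and Analysis",
    Phase.FORMATIVE: "Design",
    Phase.SUMMATIVE: "Verification and Validation",
    Phase.POST_SUMMATIVE: "Implementation and Operation",
}


def gonuke_category(eval_type: EvalType, phase: Phase) -> int:
    """Return the category number (1-12), assuming row-major numbering."""
    return int(eval_type) * len(Phase) + int(phase) + 1


def gonuke_cell(category: int) -> tuple[EvalType, Phase]:
    """Inverse lookup: map a category number back to its type and phase."""
    if not 1 <= category <= len(Phase) * len(EvalType):
        raise ValueError(f"category must be 1-12, got {category}")
    row, col = divmod(category - 1, len(Phase))
    return EvalType(row), Phase(col)


if __name__ == "__main__":
    # Formative validation is the usability testing category.
    print(gonuke_category(EvalType.VALIDATION, Phase.FORMATIVE))
    print(gonuke_cell(9))
```

Keeping the grid as data rather than prose makes it straightforward to record which categories a graded approach selects or rules out for a given project.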

Recent clarification on putting GONUKE into practice highlights, among other considerations, that GONUKE is well suited to a graded approach (Boring et al., 2021). Legacy plants, for example, feature upgrades that may not warrant a full suite of human factors evaluations, because only some aspects of operations are changed with the upgrades. GONUKE provides a convenient rubric for selecting those evaluations that are needed and ruling out evaluations that do not establish the safety and usability of new control systems.

Multi-Stage Validation

Multi-stage validation (MSV) has become an important concept in nuclear power plant control room design and modernization. As defined in NEA-7466, Multi-Stage Validation of Nuclear Power Plant Control Room Designs and Modifications, MSV consists of multiple stages of collecting evidence that establish reasonable confidence in the design (Nuclear Energy Agency, 2019). The emphasis is on systematic accrual of evidence, meaning each stage of validation builds on previous evidence, and each stage has its own validation goals and methods (Institute of Electrical and Electronics Engineers, 2021). Building evidence across multiple stages is akin to iterative design and evaluation commonly employed in user-centered design approaches. There remains open discussion whether or not MSV encompasses subsystem validation (SSV), whereby subsystems are user tested individually and later aggregated during ISV. A more common interpretation of MSV is that whole systems are tested across successive rounds of development from formative to summative stages.

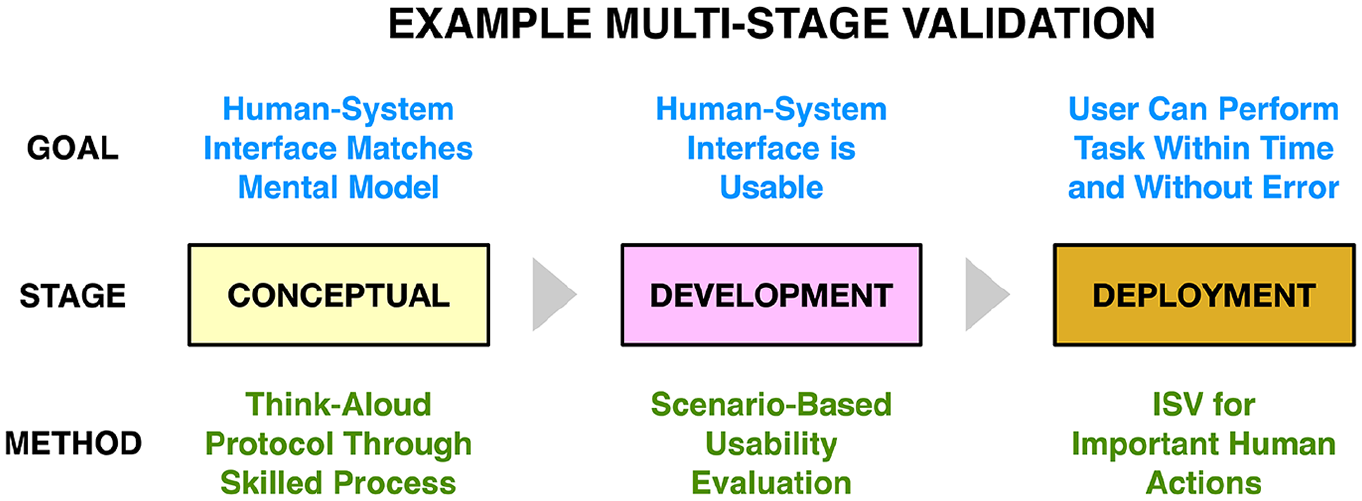

Figure 3 demonstrates three stages of an MSV for a nuclear power application. The system design evolves from the conceptual stage, to the development stage, to the deployment stage. Similar stages have been called conceptual validation (CV), preliminary validation (PV), and ISV (Kovesdi et al., 2023). Each stage has a different goal: first aligning the HSI to the reactor operators’ mental model, then ensuring the HSI is usable, and finally ensuring that the operator can complete tasks while using the system within pre-defined time windows and without committing errors. The method used for each stage of validation changes correspondingly, from a think-aloud protocol in which operators talk through their processes for skilled tasks, to usability evaluations across representative scenarios, to ISV for important human actions as determined by NUREG-1764.

Figure 3. Example multi-stage validation for different goals, stages, and methods.

GONUKE and Multi-Stage Evaluation

The GONUKE framework did not originally address MSV, but GONUKE helps HFE researchers pick appropriate types of evaluations at different phases of evaluation. GONUKE can be used to down-select the appropriate evaluation at a single evaluation point, or it can be used across successive evaluation phases to support MSV.

GONUKE espouses the use of safety cases (Boring & Lau, 2016), which build different forms of evidence toward satisfying overall design completion (Bishop & Bloomfield, 1998). Safety cases can encompass multiple types of evidence; crucially, they are not limited to validation approaches alone and are compatible with the range of evaluation types covered in GONUKE. GONUKE covers MSV, but it also includes evaluations beyond MSV. Here it becomes necessary to expand the discussion to include multi-stage evaluation (MSE), meaning verification, validation, and epistemiation. Beyond MSV, using GONUKE makes possible multi-stage verification and multi-stage epistemiation. Epistemiation remains a relatively new evaluation concept, but there is certainly reason to consider MSE broadly across the more established areas of both verification and validation. For most applications, a term synonymous with MSE would be multi-stage verification and validation (MSV&V).

Multi-stage evaluation occurs at multiple points in time. The four evaluation phases in GONUKE suitably align to the shifting maturity of the design, as it evolves from an early, formative design to a complete, summative design. Unique to GONUKE, different types of evaluations can be deployed to support different phases of evaluation, suggesting the value of MSE beyond MSV.

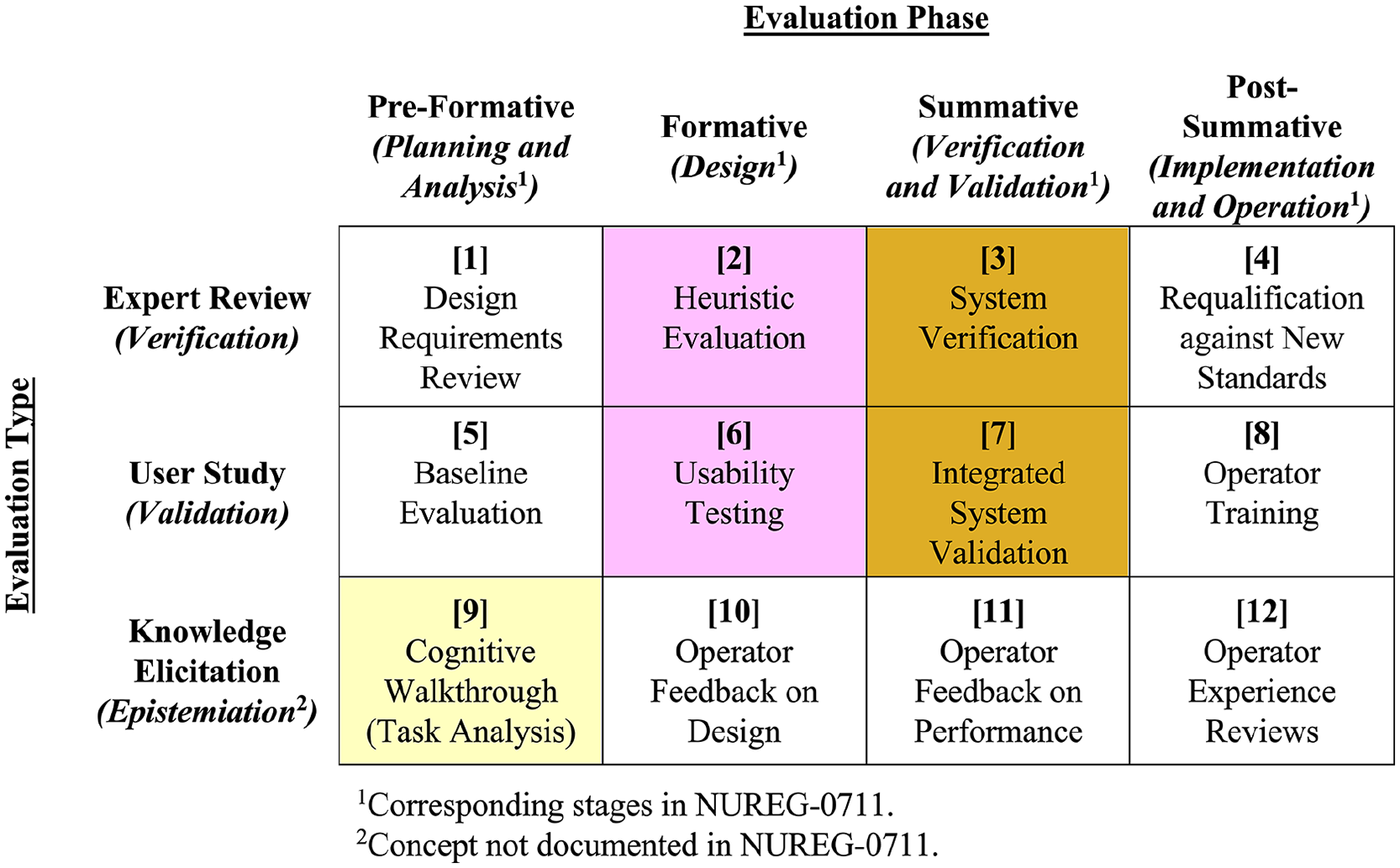

The added value of MSE can be seen in Figure 4. Using the color scheme from Figure 3, yellow suggests the conceptual stage, purple suggests the development stage, and burnt orange suggests the deployment stage. Figure 4 shows how the evaluation type shifts with each stage. The conceptual stage features epistemiation (GONUKE Box 9), capturing insights and knowledge from the operators to help formulate the initial design.

Figure 4. Example of GONUKE used across multi-stage evaluation.

Next, during the development stage, a two-pronged approach of verification and validation is adopted. For verification (GONUKE Box 2), a heuristic evaluation (Nielsen, 1994) is used to align the design with fundamental usability principles, while for validation (GONUKE Box 6), a usability study is conducted in which representative users work with a prototype. Commonly, an initial heuristic evaluation is performed by HFE experts to refine the design prior to user testing. Validation could consist of multiple iterations, whereby each usability study reveals areas for improvement, which are incorporated into a new prototype and again tested with representative users. This iterative design-prototype-evaluate cycle can be repeated as long as the design still exhibits the need for refinement.

Finally, after the design is complete, the deployment stage is at hand. In this example, the final stage also uses both verification and validation. For verification (GONUKE Box 3), the final design is checked against requirements found in NUREG-0700. For validation (GONUKE Box 7), again user testing is employed, but this time in the form of ISV. Rather than gathering inputs to refine the design, as was the case in the formative development stage, now objective performance measures are used to establish that the system as built can be effectively and safely used by human operators.
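The example plan described above can be written down as a small data structure, which makes the relationship between MSE and MSV explicit. This is only an illustrative sketch: the class, field, and function names are hypothetical, and the method labels paraphrase the text rather than prescribe particular HFE methods.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Evaluation:
    stage: str      # design stage in the example: conceptual, development, deployment
    box: int        # GONUKE box number as cited in the text
    eval_type: str  # verification, validation, or epistemiation
    method: str     # paraphrased description of the method used


# The five evaluations from the example, in chronological order.
MSE_PLAN = [
    Evaluation("conceptual", 9, "epistemiation", "operator knowledge capture"),
    Evaluation("development", 2, "verification", "heuristic evaluation"),
    Evaluation("development", 6, "validation", "usability study with prototype"),
    Evaluation("deployment", 3, "verification", "check against NUREG-0700"),
    Evaluation("deployment", 7, "validation", "integrated system validation"),
]


def types_by_stage(plan):
    """Group the evaluation types used at each stage, preserving plan order."""
    grouped = {}
    for ev in plan:
        grouped.setdefault(ev.stage, []).append(ev.eval_type)
    return grouped


def validation_only(plan):
    """Return the validation subset of a multi-stage evaluation plan."""
    return [ev for ev in plan if ev.eval_type == "validation"]


if __name__ == "__main__":
    for stage, types in types_by_stage(MSE_PLAN).items():
        print(f"{stage}: {', '.join(types)}")
    print("validation boxes:", [ev.box for ev in validation_only(MSE_PLAN)])
```

Filtering the plan to its validation entries leaves only the user studies, which illustrates how the full plan adds verification and epistemiation evidence on top of what validation alone provides.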

Example Application

This example covers HFE evaluations on a turbine control system upgrade at a nuclear power plant. Commercial nuclear plants in the U.S. feature a 40-year operating license, and many plants have recently extended that license to continue operating beyond 40 years. Over the history of these plants, technology underwent a digital transformation, such that the analog instrumentation and controls have encountered obsolescence. The license extension and need for digitization have led to significant upgrades at plants. There has nonetheless been resistance to these upgrades, not least because of the significant cost associated with them (Joe et al., 2012). Upgrades are staged across systems at the plant, whereby one system might be upgraded during each plant outage, which recurs on an 18-month cycle.

One of the most desirable and usually first digital upgrades at a plant is the turbine control system. In a fission reactor, steam is generated through heat transfer from a radioactive source. That steam is used to spin a turbine connected to a generator to produce electricity. The turbine control system upgrade can be coupled to a plant uprate, allowing the plant to generate more electricity, which can allow the upgrade to pay for itself.

The turbine is controlled through adjustments to the amount of steam produced, governor valves to direct the flow along different stages of the turbine, and a latching mechanism to the generator. The turbine operates at a constant speed when generating electricity, but the amount of steam required to meet load requirements can vary. The purpose of the turbine control system is to regulate these functions, ensure reliable electricity generation, and protect the turbine from damage. Legacy turbine control systems were either mechanical or early screenless digital electrohydraulic controls, while newer systems are digital controls with screens. Upgrades to turbine control systems therefore involve replacing analog instrumentation and controls with a digital display and accompanying digital input devices such as keyboards and trackpads.

The authors were involved in an industry-first upgrade of a turbine control system using a full-scope simulator and prototypes of the new digital control system. The authors approached the evaluation across multiple development stages. The first stage featured static mockups of the new displays. Functional prototypes were introduced at a second stage. Finally, an ISV was conducted with the installed system at the plant training simulator. For the static evaluations, reactor operators walked through scenarios involving normal (e.g., startup and synchronization to grid) and abnormal (e.g., grid disturbance) turbine operations. They also completed a screen-by-screen walkthrough of the more than 40 screens to determine where there were misalignments with their mental operational model. During these formative evaluations, 116 design issues were identified and later remedied through a design iteration, prior to studies with the functional prototypes. The user testing of the functional prototypes revealed a half dozen additional issues. By the time ISV was undertaken, there was high confidence that all HFE issues were mitigated, and no additional issues were identified during this final stage of evaluation.

This example illustrates the value of both the multi-pronged use of different evaluation types and the multi-stage evaluations. The use of formative evaluations is estimated to have saved millions of dollars over the engineering changes that would have been required on a deployed system if HFE issues were only found during the summative ISV stage of evaluation. In this example, many of the design issues were not identified through operator studies, suggesting the value of multiple types of evaluation. Throughout these stages, GONUKE provided structure to the MSE.

A similar MSE approach could be applied not only for upgrades to existing reactors but also for new nuclear power plant designs. Advanced reactors are currently being designed and feature increased control automation, increased data automation, and different concepts of operation compared to legacy plants (Boring, 2023). Because the plant has not yet been built, new designs cannot make use of previous HFE work and do not lend themselves to graded approaches. Instead, they require new design and evaluation documentation to support licensing by the regulator. The plant design should cover conceptual, preliminary, and final evaluation phases, but the design also benefits from nuanced treatment of the type of evaluation. For the new plant, the specific measures that inform the conceptual, preliminary, and final evaluations differ, because different information is needed. GONUKE provides a way to frame the data needs to ensure the right human performance information is provided at each phase.

Discussion

GONUKE can be applied to crisscross different types of evidence for different design purposes, spanning formative to summative applications. Multi-stage evaluation provides a useful process to evaluate a system design as it matures. GONUKE is well suited as a framework to consider multiple stages of evaluation and supports current efforts at MSV. Additionally, GONUKE considers multiple types of evaluation. This paper demonstrates the value of verification, validation, and epistemiation at different stages of design.

Although GONUKE has a nuclear-centric name and was purposely designed to meet the rigors of HFE evaluations for nuclear facilities, it can support HFE for other technology domains. For consumer applications like user experience (UX), MSE can assist user-centered design processes by identifying appropriate HFE methods for different needs. Likewise, for safety-critical HFE such as aerospace, military, energy, and infrastructure, MSE reduces overreliance on particular evaluation methods and helps mature the HSI to a higher human readiness level (Human Factors and Ergonomics Society, 2021) suitable for deployment.

For example, the Boeing 737 MAX Maneuvering Characteristics Augmentation System (MCAS) is a case where an adjustment was made to the design of an automated system to lower the nose position of the plane to prevent stalls (Gates & Baker, 2019). After an initial round of testing and safety analyses, Boeing introduced modifications to the MCAS that allowed it to function with a single sensor. The late-breaking changes overlooked several new failure modes that could inadvertently cause the plane to overcompensate in lowering its nose position. Only some of the effects of the modified MCAS were fully tested. The software was verified, but the overall HSI was not validated through experimental studies. We speculate that the use of a second round of evaluations including ISV might have prevented the faulty MCAS activations that led to the fatal crashes of two 737 MAX airplanes. This example illustrates the importance of MSE. GONUKE could guide such evaluations to ensure overall system safety.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work of authorship was prepared as an account of work sponsored by Idaho National Laboratory (under Contract DE-AC07-05ID14517), an agency of the U.S. Government. Neither the U.S. Government, nor any agency thereof, nor any of their employees makes any warranty, express or implied, or assumes any legal liability or responsibility for the accuracy, completeness, or usefulness of any information, apparatus, product, or process disclosed, or represents that its use would not infringe privately owned rights.