Abstract

One approach to training is using scaffolding to assist the novice trainee in performing tasks while they develop fundamental skills. This paper describes the implementation and testing of visual scaffolding to support training of attentional management skills. The training tool was developed specifically for sensor operators of unmanned aerial systems (UAS) to learn attentional skills associated with managing the competing demands of UAS operational tasks. A key part of the training included attentional scaffolding—visual highlighting that was used to guide the trainee’s attention. Three different evaluations were conducted to assess performance while leveraging scaffolding: Two were performed with U.S. Navy and U.S. Coast Guard (USCG) sensor operators, and one was performed in a human-in-the-loop (HITL) study. In this paper, we detail the Navy and USCG studies, summarize the HITL study, and synthesize findings across the evaluations.

Introduction

Attentional management is an increasingly critical skill in many contexts. Industry operators, transportation dispatchers, pilots, locomotive engineers, cybersecurity specialists, and military personnel (e.g., intelligence analysts, operators of unmanned aerial systems [UAS]) monitor and respond to multiple data trends while dealing with a variety of ongoing and interrupting tasks. Given the important nature of this work, training is needed to help personnel effectively allocate attention across their multiple tasks.

In a recent project for the Navy, we developed a training tool to teach attentional management skills to sensor operators of UAS. This tool, the Attentional Trainer To Improve Control of Unmanned Systems (ATTICUS), has been described previously (Sebok et al., 2019; Sebok et al., 2020). The tool emulates six of the key tasks that sensor operators perform, and simulates the cognitive demands associated with monitoring the behavior of ships at sea while dealing with multiple interruptions.

ATTICUS was developed with several end users in mind: Navy sensor operators who need to practice multitasking, experimental participants in a human-in-the-loop study to evaluate effectiveness (Sebok et al., 2021), and scenario developers who create missions for training or experiments (Sebok et al., 2020). During this development process, multiple tests were conducted to gather input and evaluate the effectiveness of different aspects of the training tool.

This paper describes the development and evaluation of attentional scaffolding: forms of support that guide an operator to specific locations, activities, or tasks. This support is gradually reduced and eventually removed as learner proficiency increases. Scaffolding is a technique employed in training to reduce initial cognitive load when the learner is confronted with new material. The intent with scaffolding is to allow trainees to work within the zone of proximal development (ZPD; Vygotsky, 1978). The ZPD is the task difficulty region that is challenging but not overwhelming. When tasks are presented in the ZPD, learning is enhanced. Empirical evidence shows that scaffolding is an effective training aid that improves transfer of skills to the operational environment (Hutchins et al., 2013).

Detailed discussions of the ATTICUS training tool are provided in other papers (Sebok et al., 2019; 2020). A summary is provided below. The unique contribution of this paper is the description of the attentional scaffolding and three testing events that evaluated the approach.

ATTICUS offers training on three aspects of attentional management: visual Scanning, task Prioritization, and Interruption management, referred to as SPI. Research and operational experience indicate these skills can be trained and transfer to the work environment (Peng & Miller, 2016). ATTICUS provides training through short instructional videos, part-task training, mission performance supported by visual scaffolding, and detailed feedback on attentional management and objective task performance.

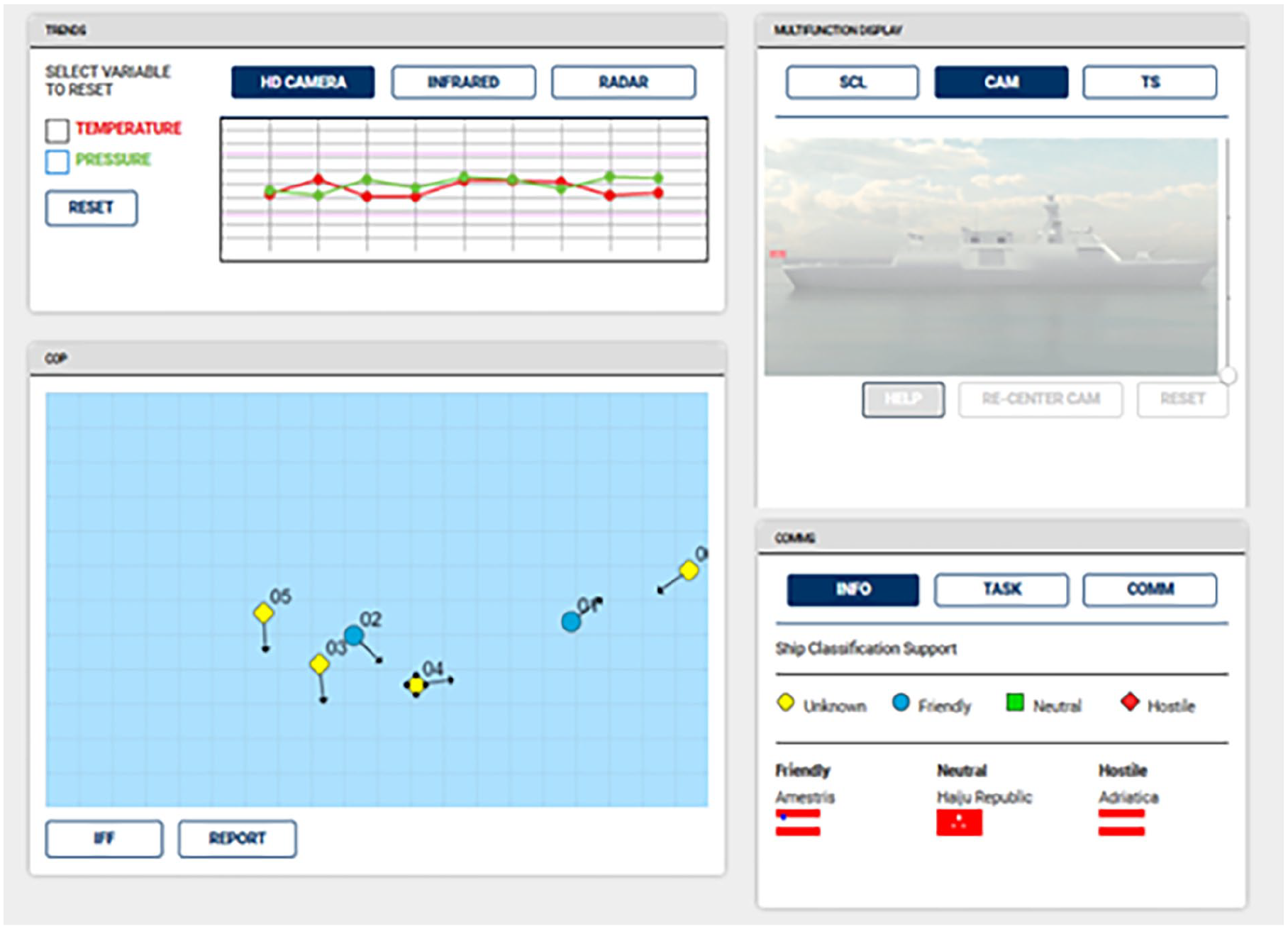

Figure 1 shows a screenshot of the ATTICUS multitasking environment with four Areas of Interest (AOIs).

Figure 1. ATTICUS multitasking environment. In the upper right AOI, the camera display is activated.

The Common Operating Picture (COP), at the lower left, presents ships (colored symbols) that move continuously across the display. COP tasks include monitoring for suspicious behaviors (e.g., change in direction, rendezvous with another ship) and classifying unknown ships. When an unknown ship appears on the COP, trainees click on the ship and access the camera (upper right AOI) to learn more about the ship, such as the flag and whether it has weapons.

The upper left AOI shows the trend display. There are three sets of trends, each accessed by a tab. If a trend goes out of bounds, it needs to be reset by clicking the “Reset” button. The upper right AOI is a multifunction display that provides access to the Ship Classification (SCL) card, Camera (CAM), and Troubleshooting (TS) area. These are used for inputting detailed information on COP ships (SCL), viewing ships up close (CAM), and repairing simulated sensor failures (TS). The lower right AOI provides two tabs of information related to symbols and tasking, and a third tab (COMM) lets the user respond to auditory communications. Table 1 lists each AOI, its associated task, whether the task is an ongoing task (OT) or an interrupting task (IT), and whether the IT is self-initiated (SI) or event-driven (ED). Upon mission completion, trainees receive detailed feedback on attentional management and objective task performance.

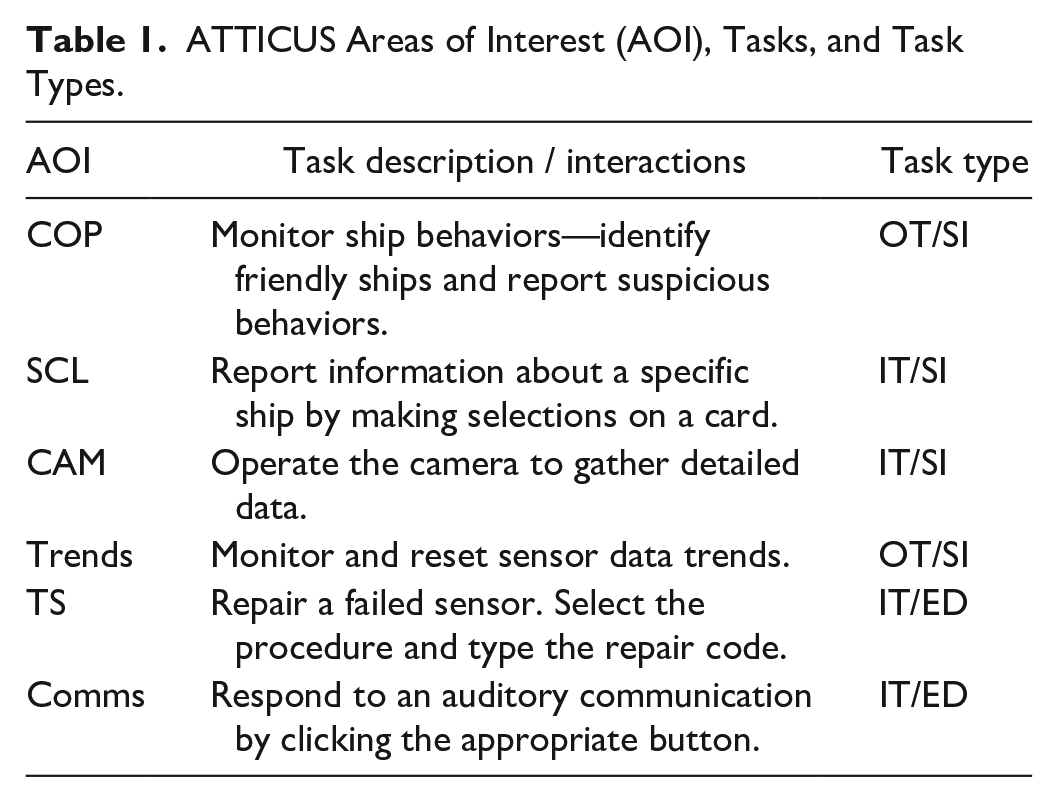

Table 1. ATTICUS Areas of Interest (AOI), Tasks, and Task Types.

Design Approach

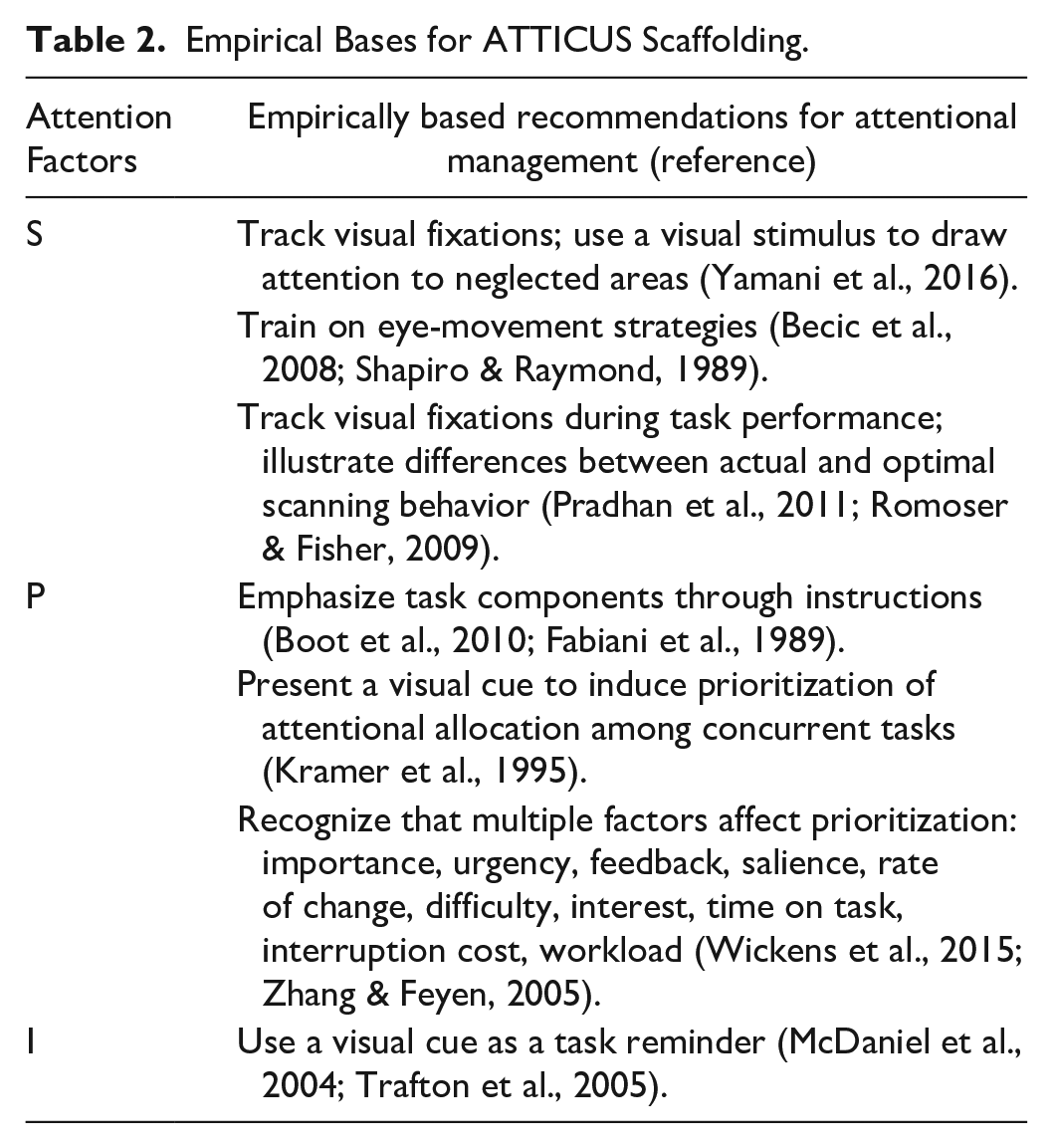

Attentional management training was offered through videos, scaffolding, and mission-based practice and feedback. Scaffolding was developed to guide the trainee’s attention in performing missions according to the principles taught in the SPI training videos. Table 2 lists the SPI factors and the empirical findings that were applied in the design of ATTICUS scaffolding.

Table 2. Empirical Bases for ATTICUS Scaffolding.

Attentional scaffolding was implemented as magenta highlighting (a color not used elsewhere in the display). Scaffolding appeared based on the determination of a neglected area. ATTICUS uses mouse hover and clicks to identify where the trainee’s attention is focused. SPI requirements are identified based on the design of a particular mission and actions that the trainee has taken. If a mismatch occurs between where the trainee should be attending and where they are attending, scaffolding appears to guide their attention. ATTICUS uses rules to determine the most critical AOI at any point in the mission to prevent multiple AOIs from being highlighted at once.
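The mismatch rule described above can be illustrated with a minimal sketch. The AOI names and the priority ordering below are hypothetical: the paper states that rules select the most critical AOI, but it does not give those rules, so this only shows the general shape of a single-target selection.

```python
from typing import Optional

# Hypothetical criticality ranks (lower = more critical). The actual ATTICUS
# rules depend on the mission design and are not specified in the paper.
AOI_PRIORITY = {"COP": 0, "TRENDS": 1, "MULTIFUNCTION": 2, "INFO_COMM": 3}


def select_scaffold_target(required_aois: set[str],
                           attended_aoi: Optional[str]) -> Optional[str]:
    """Return the single AOI to highlight, or None if no highlight is needed.

    required_aois: AOIs that currently need the trainee's attention.
    attended_aoi:  AOI inferred from the latest mouse hover or click.
    """
    if not required_aois:
        return None
    # Pick only the most critical AOI so that two areas are never
    # highlighted at the same time.
    target = min(required_aois, key=lambda aoi: AOI_PRIORITY[aoi])
    # No mismatch: the trainee is already attending where they should be.
    if attended_aoi == target:
        return None
    return target


# The trainee is in the trend display while the COP also needs attention.
print(select_scaffold_target({"TRENDS", "COP"}, "TRENDS"))  # COP
```

Returning at most one target mirrors the stated design goal of preventing multiple AOIs from being highlighted at once.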

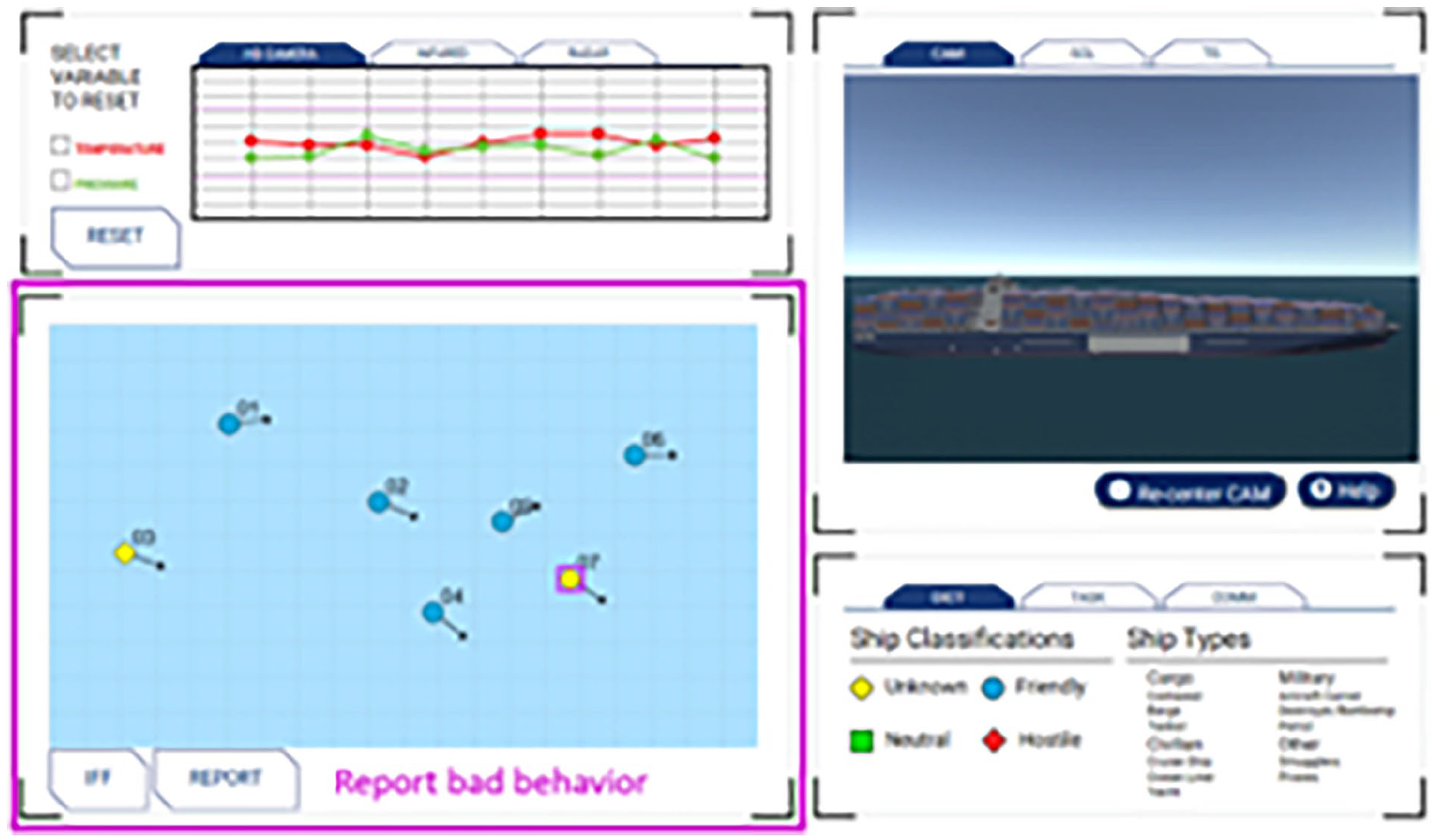

There are four levels of scaffolding: maximum, moderate, minimum, and none. Maximum scaffolding offers highlighting around the AOI, highlighting around a relevant aspect of that AOI (e.g., a ship on the COP, a tab in the trends AOI), and text explaining what the trainee should do. Moderate scaffolding removes the text but keeps the AOI and detailed area highlighting. Minimum scaffolding simply highlights the AOI. None means that no highlighting is used. Figure 2 shows an example of maximum scaffolding indicating a need to report a suspicious ship behavior.

Figure 2. Scaffolding to support scanning.
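The four scaffolding levels form a nested set of cues, which the following sketch makes explicit. The enum and cue names are illustrative labels chosen for this example, not identifiers from ATTICUS.

```python
from enum import IntEnum


class ScaffoldLevel(IntEnum):
    """Scaffolding levels ordered from no support to full support."""
    NONE = 0
    MINIMUM = 1
    MODERATE = 2
    MAXIMUM = 3


def visible_cues(level: ScaffoldLevel) -> set[str]:
    """Return the cue types displayed at a given scaffolding level."""
    cues: set[str] = set()
    if level >= ScaffoldLevel.MINIMUM:
        cues.add("aoi_highlight")      # outline around the whole AOI
    if level >= ScaffoldLevel.MODERATE:
        cues.add("detail_highlight")   # e.g., a ship on the COP or a trend tab
    if level >= ScaffoldLevel.MAXIMUM:
        cues.add("instruction_text")   # text explaining what to do
    return cues


print(sorted(visible_cues(ScaffoldLevel.MODERATE)))
# ['aoi_highlight', 'detail_highlight']
```

Because each level adds exactly one cue to the level below it, stepping down a level removes one cue at a time.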

Scaffolding in ATTICUS is enabled at the maximum level when the trainees are first exposed to missions. As trainee performance improves on SPI measures, scaffolding is removed in a stepwise manner until, eventually, no scaffolding is applied. The scenario developer designs the mission and specifies the SPI scoring criteria for removing scaffolding.
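A stepwise removal rule of this kind might look like the sketch below. The threshold value and the requirement that all SPI scores meet it are assumptions made for illustration; in ATTICUS the scenario developer specifies the actual criteria, which the paper does not list.

```python
# Levels in the order scaffolding is removed.
LEVELS = ["maximum", "moderate", "minimum", "none"]


def next_level(current: str, spi_scores: dict[str, float],
               threshold: float = 4.0) -> str:
    """Step scaffolding down one level once SPI performance is good enough.

    spi_scores: ratings for scanning, prioritization, and interruption
                management (the paper rates these on a one-to-five scale).
    threshold:  hypothetical cutoff set by the scenario developer.
    """
    idx = LEVELS.index(current)
    at_lowest = idx == len(LEVELS) - 1
    # Assumed criterion: every SPI score must reach the threshold.
    criteria_met = all(score >= threshold for score in spi_scores.values())
    if criteria_met and not at_lowest:
        return LEVELS[idx + 1]
    return current


scores = {"scanning": 4.5, "prioritization": 4.0, "interruption": 5.0}
print(next_level("maximum", scores))  # moderate
```

The function only ever moves one step at a time and never restores scaffolding, which matches the stepwise removal described above; whether support could be reinstated after a performance drop is not stated in the paper.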

Measures of Performance and Cues for Scaffolding

Performance during a mission was evaluated in two primary ways: objective task and attentional management (SPI) performance. Objective task performance was calculated as five percentages and an overall average. For example, if the mission included 20 ships, and the trainee correctly classified 10 of them, the trainee received a score of 50% on the SCL task. If there were eight communications, and the trainee correctly responded to six, the trainee received a 75% for Comms. Similarly, trends, troubleshooting, and suspicious ship behavior reporting are based on the percentage that were correctly identified or addressed during a training mission. The overall score is the average across the five objective tasks.
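The scoring arithmetic can be reproduced in a few lines. The ship classification and comms counts below come from the example in the text; the counts for the other three tasks are made up for illustration, and the task keys are hypothetical labels.

```python
def objective_scores(results: dict[str, tuple[int, int]]) -> dict[str, float]:
    """Compute percent correct per task plus the unweighted overall average.

    results maps a task name to (number correct, number of opportunities).
    Assumes every task has at least one opportunity in the mission.
    """
    scores = {task: 100.0 * correct / total
              for task, (correct, total) in results.items()}
    # The overall score is the mean of the per-task percentages.
    scores["overall"] = sum(scores.values()) / len(scores)
    return scores


mission = {
    "ship_classification": (10, 20),  # from the text: 50%
    "comms": (6, 8),                  # from the text: 75%
    "trends": (9, 10),                # illustrative counts
    "troubleshooting": (3, 4),        # illustrative counts
    "suspicious_behavior": (4, 5),    # illustrative counts
}
print(objective_scores(mission)["overall"])  # 74.0
```

Averaging the five percentages gives each task equal weight, regardless of how many events it contained in the mission.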

Attentional management performance was rated on a scale of one to five. One indicated poor performance, and five indicated optimal performance. Scanning was assessed based on mouse hover and clicking behaviors on the COP and trend display. If a trainee neglected the COP or trends, scaffolding drew the trainee’s attention to those areas. Prioritization for interrupting tasks was based on instruction or workload. For example, if the trainee had been informed that troubleshooting was a high-priority task, and they neglected a troubleshooting need, scaffolding would draw attention to that task. Similarly, in the workload-based condition, if the COP workload increased suddenly (e.g., by the presence of additional ships on the COP), scaffolding would direct the trainee’s attention to the COP. Interruption management was assessed by the trainee returning to the task that had just been interrupted. If the trainee failed to return to the task after completing an interrupting task, scaffolding would draw their attention to the previous ongoing task.
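One way the scanning check could work is to flag a monitored AOI when too much time has passed since the last hover or click in it. This is a sketch under assumptions: the 30-second gap and the function and parameter names are hypothetical, and the paper does not describe how ATTICUS defines neglect.

```python
def neglected_aois(last_activity_s: dict[str, float], now_s: float,
                   max_gap_s: float = 30.0) -> list[str]:
    """Return monitored AOIs with no hover or click in the last max_gap_s seconds.

    last_activity_s: mission time (seconds) of the latest hover or click per AOI.
    now_s:           current mission time in seconds.
    max_gap_s:       hypothetical neglect threshold.
    """
    return sorted(aoi for aoi, last in last_activity_s.items()
                  if now_s - last > max_gap_s)


# The COP was last touched 50 s ago, the trend display 10 s ago.
print(neglected_aois({"COP": 10.0, "TRENDS": 50.0}, now_s=60.0))  # ['COP']
```

A similar time-based check could cover interruption management, by testing whether the trainee has returned to the interrupted task within some interval after finishing the interrupting task.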

Testing

The scaffolding capabilities were assessed in three different evaluations. The first two evaluations were done with potential end users, sensor operators in the U.S. Navy and U.S. Coast Guard (USCG). The third study was an experiment conducted with college students who participated in training across fifteen 20-min training sessions. Given the small sample sizes in the tests with potential users (n = 4 and n = 5 for the Navy and USCG tests, respectively), we were looking for data trends rather than statistical significance. The experimental study, with 30 participants, enabled tests of statistical significance to be performed.

While the two user testing events were small, they included actual potential end users. A frequent and valid criticism of university-based studies is that college students are not necessarily representative of the end-user population, so these testing events were critical for us to evaluate the possible effectiveness of the attentional management training tool.

Navy Sensor Operator Evaluation

Former Navy sensor operators participated in four rounds of testing with ATTICUS. The operators were introduced to the tool and given instructions on completing four missions. Each testing round was performed over a 1-month period between February and May and included four missions.

Scaffolding was evaluated in Rounds 3 and 4. The first two rounds of testing did not include scaffolding, Round 3 introduced it, and Round 4 removed it again.

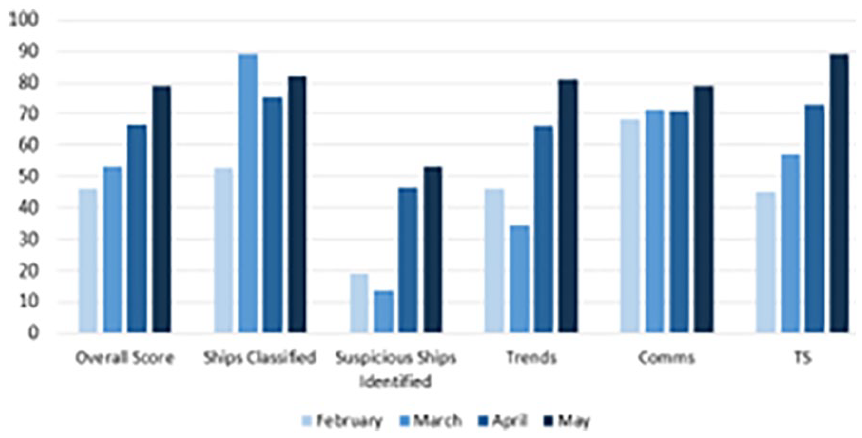

Figure 3 shows performance on objective tasks in ATTICUS; it suggests that learning occurred and that modifications to the tool supported performance. In the figure, each color represents a separate round of testing. Light blue bars represent the first round, with progressively darker blue bars indicating performance in the second, third, and fourth rounds of testing. This figure suggests that overall scores improved across the testing sessions. In particular, suspicious ship identification appeared to be improved by the addition of scaffolding in Round 3. The apparent benefits of scaffolding for detecting and reporting suspicious ship behavior appeared to be maintained even after scaffolding was removed in Round 4.

Figure 3. Overall averages on objective tasks across the testing period.

USCG Study

A second user-based evaluation of ATTICUS was conducted with five active-duty USCG sensor operators. The operators were introduced to the ATTICUS project and tool. They were then asked to watch videos for training and perform part-task training. Following the training, the operators performed six missions. The testing was done at the operators’ convenience within a month. They were asked to perform the missions in a predefined order.

The five participants were split into those who performed missions with scaffolding (n = 2) and without scaffolding (n = 3). Other than the presence or absence of scaffolding, the missions were identical. This testing was done to (a) examine possible performance trends, relative to trainees gaining experience with the missions and (b) explore possible scaffolding-related performance trends.

The first mission was simply a familiarization. This mission had no scaffolding; all trainees experienced the same training. Following this initial trial, participants each performed five training missions. These missions were identical (except for scaffolding) for all participants and increased in difficulty as participants progressed.

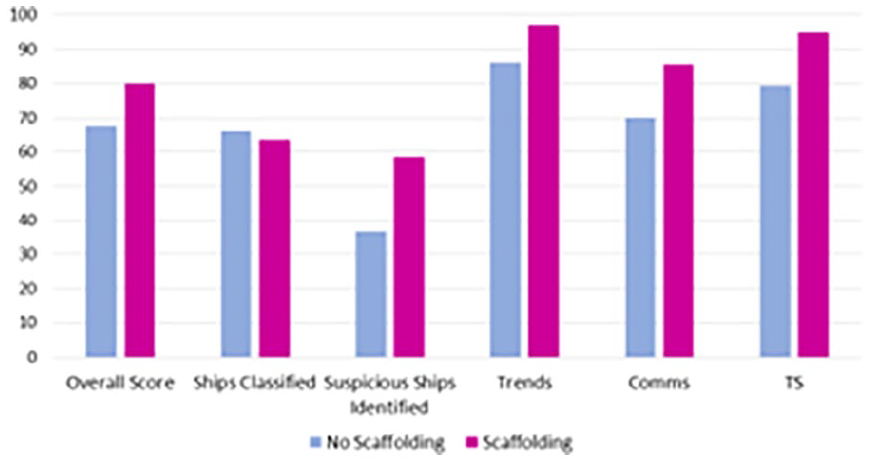

Figure 4 shows the overall objective performance scores averaged across missions. Blue bars show performance without scaffolding, and magenta bars show performance with scaffolding. Several trends emerge. Participants’ overall scores appear to be better with scaffolding (vs. without scaffolding). Likewise, apart from ship classification, performance on individual tasks seems to be better with scaffolding. Although a larger sample size is needed to detect statistically significant differences for any of the reported findings, scaffolding performance scores seemed to differ most from non-scaffolding performance scores on the suspicious ship identification (reporting bad ship behavior) task. This is a low-salience task, particularly for changes in speed or direction. If the trainee is not looking at the COP when the behavior happens, the trainee is likely to miss it. It is plausible that scaffolding increased the salience of the ship, thus increasing the likelihood that the trainee noticed a change.

Figure 4. Average objective task scores across the five training missions.

Trends, comms, and troubleshooting scores also appear to be better in the scaffolding conditions, reaching performance ceilings in the final mission. The missions were progressively more difficult across the testing sessions, so the potentially improved performance in later missions is especially interesting.

The fact that ship classification was apparently not improved by scaffolding is worth unpacking. The SCL task is a multi-step process: The trainee reviews a card to see what information is needed and then uses a camera to find the data. Scaffolding provides a reminder to return to an interrupted SCL task, but it does not indicate the subtask. For most of the tasks, scaffolding draws the trainee’s attention to the information they need to complete the task. However, scaffolding does not offer such detailed assistance with the SCL task, suggesting that more specificity in the scaffolding may be required to produce a benefit in this task.

Human-in-the-Loop (HITL) Study

A systematic evaluation compared ATTICUS training against task-focused training. The study included two nearly identical training conditions. One group of 15 participants had ATTICUS training: videos on attentional management strategies; scaffolding (removed based in part on attentional management performance); and feedback on attentional performance. Another group of 15 participants (Fixed Difficulty condition) had part-task training and mission practice, but did not receive the attentional management training, scaffolding, or feedback.

All trainees in this study experienced 15 training sessions. Except for the use of scaffolding, the training missions were identical. In the ATTICUS condition, scaffolding was removed gradually throughout the study, based on trainee performance and heuristics.

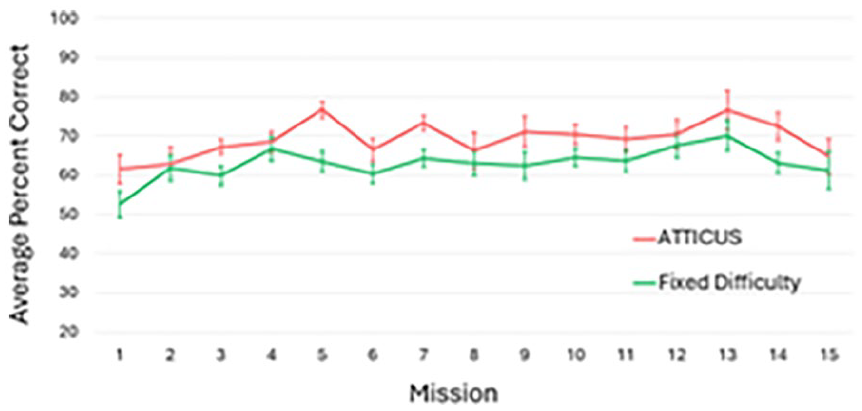

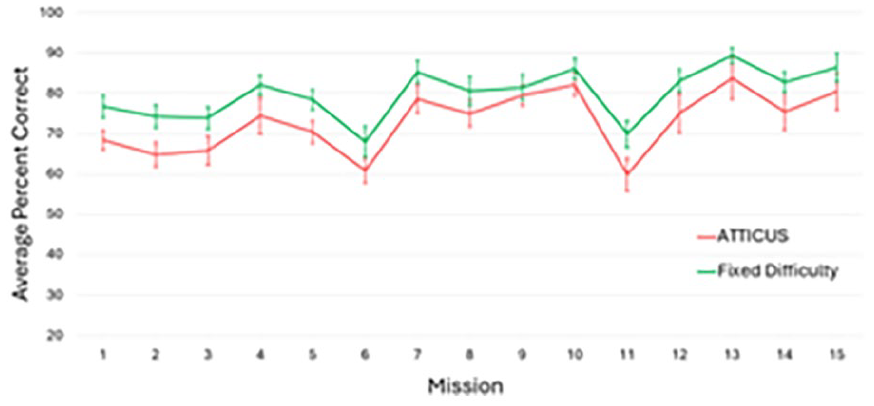

Figure 5 shows performance across missions of participants in the ATTICUS versus the Fixed Difficulty conditions. For the COP, the analysis revealed a significant effect of training (improvement in performance across practice), $F(1, 27.37) = 4.54$, $p < .05$, $\eta_p^2 = .22$; a marginally significant advantage for the ATTICUS group, $F(1, 27.01) = 3.30$, $p = .08$, $\eta_p^2 = .11$; and no interaction, $F(1, 27.16) = .08$, $p = .77$, $\eta_p^2 = .003$, suggesting both groups learned at a similar rate.

Figure 5. Performance on reporting suspicious ship behaviors.

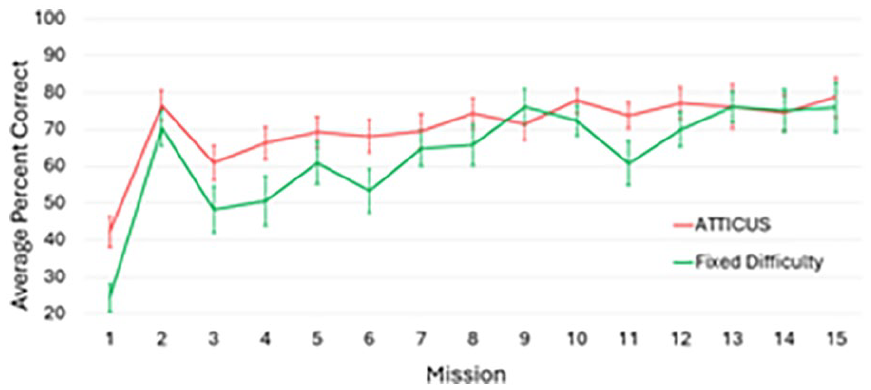

Figure 6 indicates the improved performance on trend monitoring and response in the ATTICUS condition. The analysis revealed significant learning for both groups, $F(1, 27.37) = 9.12$, $p < .01$, $\eta_p^2 = .61$; a significant ATTICUS advantage with a large effect size, $F(1, 27.01) = 14.26$, $p < .001$, $\eta_p^2 = .35$; and an interaction between the two variables, $F(1, 27.02) = 4.50$, $p = .04$, $\eta_p^2 = .14$, signaling that the Fixed Difficulty group gradually caught up with the ATTICUS group over the course of the experiment.

Figure 6. Performance on the trend monitoring task.

Figure 7 shows the performance on the ship classification task. The analysis revealed a significant effect of mission, $F(1, 27.50) = 44.51$, $p < .001$, $\eta_p^2 = .68$, and a significantly greater accuracy for the Fixed Difficulty than the ATTICUS group, $F(1, 27.01) = 4.77$, $p = .04$, $\eta_p^2 = .15$. The marginally significant interaction between mission and group, $F(1, 27.02) = 3.89$, $p = .06$, $\eta_p^2 = .13$, indicated that the initial ATTICUS cost was essentially eliminated by Mission 7. The troubleshooting and comms tasks were performed equally well in both conditions.

Figure 7. Performance on the ship classification task.

Results

The key findings of the experiment, supported by trends in user testing, are that the visual scaffolding appeared to provide an effective tool for training attentional management, and trainee performance improved with practice. In particular, the situation awareness tasks of monitoring the COP and monitoring trends were improved with scaffolding.

As the Navy and USCG studies seemed to suggest, and the HITL study indicated, scaffolding benefited objective task performance. In the Navy testing, the apparent benefits appeared to be maintained even after the scaffolding was removed. The experimental study showed early benefits of the entire suite of attentional management tools on the ongoing tasks: a statistically significant advantage for monitoring and resetting trends, and a marginally significant advantage for monitoring the COP (to report suspicious ship behavior). In summary, visual scaffolding appeared to improve operator performance on the critical tasks associated with maintaining situation awareness.

Limitations

Multiple rounds of user testing were performed throughout ATTICUS development. The USCG study compared performance in scenarios with and without scaffolding. This evaluation compared the extreme conditions of maximum scaffolding with no scaffolding. It did not investigate removal of scaffolding.

The experimental study included gradual scaffolding removal, based on a combination of heuristics and performance. The Navy study investigated scaffolding implementation and removal, but it did so in the context of multiple changes to the system. These three evaluations suggest a benefit of scaffolding for training the critical task of maintaining situation awareness of ongoing tasks in a multi-tasking context. However, no single study specifically addressed only the effects of adaptively removed scaffolding on training performance.

Another limitation is that the two end-user tests (U.S. Navy and USCG) relied on trends in the data to suggest differences in performance. Only the experimental study had a sample large enough to support tests of statistical significance.

Final Remarks

A potential next step is to conduct an experiment that investigates the different factors affecting performance: type of training aid (i.e., videos, scaffolding, feedback), adaptive scaffolding removal schedule, and individual differences in multitasking ability. Ideally, such an analysis could clarify whether and how different training factors contribute to overall performance benefits.

One potential consideration is using scaffolding or visual highlighting in the design of the information systems for UAS operators. For example, because ships follow predictable patterns when showing suspicious behaviors, it should be possible to use artificial intelligence to identify potential suspicious behavior events based on patterns of movement or speed changes. Visual highlighting can then be applied to draw attention to these ships, essentially providing scaffolding in the system design.

Footnotes

Author’s Note

Angelia Sebok is also affiliated with the University of Northern Colorado, Greeley, CO, USA.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This project was supported by the Naval Air Warfare Center Training Systems Division, Contract N68335-18-C-0138. Any opinions, findings, conclusions, or recommendations expressed are those of the authors and do not necessarily reflect the views of the Navy. The authors gratefully acknowledge the guidance and support of Laticia Bowens and Tashara Cooper (NAWCTSD).