Abstract

There is a growing interest in leveraging AI technologies to enhance training development in high-consequence industries. This study investigated the integration of emerging artificial intelligence (AI) tools, such as ChatGPT, into the training development process. Previous research has demonstrated the potential of AI through content generation and support tools to improve training design practices. Building upon this foundation, we analyzed the use of generative AI tools via workshops with task and domain experts. By observing task expert interactions, we identified potential benefits, challenges, and limitations associated with the use of generative AI in training development. A domain expert evaluation provided valuable feedback on the quality of training materials developed with generative AI. Findings from this study contribute to a deeper understanding of how AI can augment training development in high-consequence industries, offering valuable guidance for training practitioners seeking to enhance training efficiency and effectiveness.

Keywords

Introduction

Training practitioners in high-consequence industries (e.g., aerospace, defense, healthcare, transportation, energy) have explored a range of novel technologies to enhance the efficiency of training design, development, and delivery. This training requires frequent updates to meet operational demands, and practitioners cannot accept any tradeoff of quality for efficiency in its development. As such, there has been rising interest in leveraging artificial intelligence (AI), especially large language models (LLMs) like ChatGPT, to augment design/development. Prior work has demonstrated that automating training content generation can reduce demands and improve productivity for training personnel (e.g., Champney et al., 2010; Nicholson et al., 2009). Access to automated support tools, decision aids, and repositories may enhance the practices of designers (Ayers et al., 2023; Wood et al., 2021), and there is near-future potential for automation technologies in this space (e.g., General Services Administration, 2023; Ray et al., 2023).

This work sought to extend prior work by Nguyen et al. (2023), which mapped recent AI technologies to innovations in training design and development. Through a set of workshops with experienced subject-matter experts (SMEs), we sought to obtain richer insights into the creation and perception of training materials for an aviation-related training example (passenger cabin safety) developed using generative AI tools. This study aimed to address the following research questions: (a) How can the Task SME utilize AI to create training materials? (b) At what stages of development does the Task SME encounter challenges? (c) Where can AI tools and workflows used by the Task SME be enhanced? and (d) What was the SMEs’ assessment of the quality of AI-generated training materials?

Approach

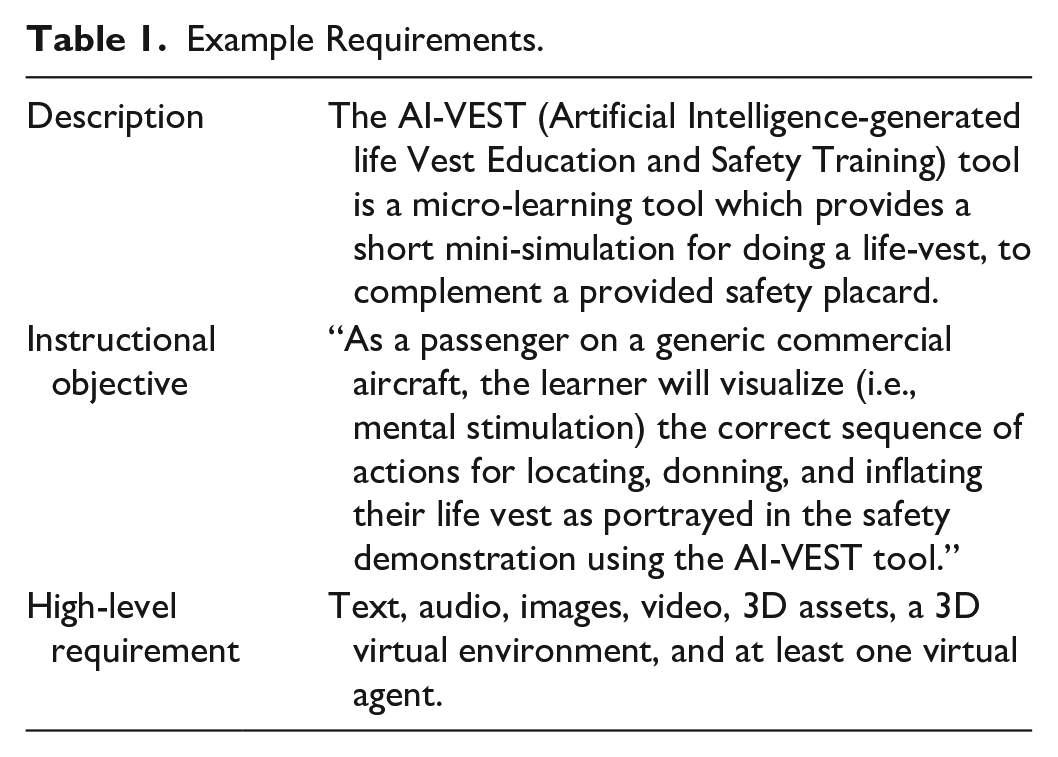

First, our team identified a set of instructional objectives relevant to training for passenger cabin safety duties. We defined the requirements for instructional materials based on Nguyen et al.’s (2023) review, focusing on the inclusion of diverse multimedia materials (see Table 1), time constraints (~1.5 hr), and examples of generative AI tools to scaffold the task. For our Task SME workshop, we reached out to individuals with expertise in multimedia development, instructional design, and system design to enrich our discussions on the use of generative AI for aviation-related training materials. A Task SME with over 20 years of experience in training, simulation, and video game development engaged in the workshop to develop exemplar materials and provide their insights on the use and value of generative AI tools in training design/development.

Table 1. Example Requirements.

We then presented the example training materials to a Domain SME for qualitative evaluation. The Domain SME reported about 6 years of experience in the aviation industry, including 2 years in an instructor role and 2 years at a regional air carrier. The SME discussed the quality of training materials using an adaptation of Dessinger and Moseley’s (2006) evaluation criteria (i.e., utility, feasibility, propriety, accuracy, and suitability; see also Sonnenfeld et al., 2023). In both workshops, we conducted semi-structured discussions with the SMEs, informed by our research questions and the SWOT (strengths, weaknesses, opportunities, threats) framework (Chermack & Kasshanna, 2007; cf. Engelbrecht et al., 2019; Sinatra et al., 2023). Their insights helped us to understand the potential use, quality, and value of AI-generated training materials and associated tools in high-consequence industries.

Findings

The products of this effort included: (a) a task analysis document, (b) task experts’ insights on areas for improvement in the use of AI for design and development, (c) domain experts’ insights on the quality of instructional materials developed with generative AI tools, and (d) our aggregated observations. Our task analysis outlined steps and operations used by the Task SMEs when applying generative AI tools to develop multimedia materials under time constraints. Their insights highlighted areas for improving AI-assisted design and development, offering critical input on refining tools, including cost and design benchmarks. The evaluation conducted by Domain SMEs provided valuable perspective on the quality of AI-assisted training materials, emphasizing the need to ensure ecological validity throughout development. Their assessment clarified the potential suitability of these tools and materials within the aviation domain. Finally, our observations identified potential opportunities and areas of concern in the integration of generative AI tools for training development. Our findings contribute a localized case study within the training community’s broader understanding of the use and value of generative AI tools in high-consequence industries.

Task SME Workshop Findings

Strengths

Discussions of the strengths of AI tools in developing training materials centered around three main areas: General, Audio Generation, and AI Agents. General strengths included the pace of innovation in generative AI and the timeframe in which these innovations may affect training design/development (1–2 years), open-source collaboration, and the general capabilities of the tools for text synthesis and generating realistic concept art for training. The tools were viewed as novice-friendly, and the ability to view prompt history was valued. Strengths related to audio generation centered around output quality, cost/time savings, localization and dubbing capabilities, and customization including the use of real voices (own/self/stock). Strengths for agent generation included concepts of developing agents-as-characters, animation features (action, expression, speech), and the ability to apply dialogue constraints.

Weaknesses

Issues and frustrations with using the AI tools revealed weaknesses across six categories: System Errors, System Limitations, Usability, Quality, User-System Interaction, and Function Allocation. System errors included generation failures, system lag and timeouts, and undesired results. System limitations related to the quantity and size of input/output, credit/subscription models, natural language understanding and generation, browser compatibility, the lack of a single-source tool/interface, and the inability of the tools to infer and apply workflows either implicitly or explicitly. Usability issues across multiple tools concerned unintuitive interfaces, unclear system status, a dearth of system feedback, an inability to natively organize output, and a lack of standardization in features and interfaces. Numerous quality issues were identified, including trust in output (see also Hicks et al., 2024), source transparency, input-output consistency, accuracy (with respect to content and domain), domain relevance, goal relevance, context specificity, resolution (e.g., of videos and 3D meshes), and design cohesiveness. The last of these concerned whether individual outputs could be integrated without detracting from an instructional design, training curricula, or learning context.

Similarly, we identified a broad range of user-system interaction challenges. The SME found the process of prompt refinement tedious, highlighting the length of the prompts and dialogues needed to produce a minimally viable result, as well as the variation in effective prompting between individuals (e.g., by role, expertise) and across tools. A lack of useful system feedback or clear application of user feedback was observed. A degree of negative reinforcement following system errors was also observed, in that the tools would count system errors against the credits/tokens available to the user. A lack of native workflows forced the SME to repeat basic tasks and prompts. Challenges associated with acceptance of the technologies among users, organizational buy-in and support (subscriptions, training), and the potential overconfidence of domain novices in outputs were also discussed. Overall, even with prior familiarity in applying these tools in their work, the SME found that using generative AI exclusively for training materials was not worth the investment of their time in its current state.

Researchers also observed weaknesses related to function allocation between the system and the users. Preparing to effectively use these tools would involve extensive preparation and translation of resources including source materials, instructional design documentation, and prompt templates to the specific context and tool. Without a cohesive, single-source tool or interface, the extent of what is generated may be described as piecemeal outputs, which need to be manually integrated into a cohesive instructional intervention. Prompt revisions and specifications, as well as manual content revisions, might be necessary at every single step of the process, particularly for high-risk domains. The SME highlighted examples of where the quality of human artists, designers, and developers may vastly outperform the products of AI-generated training assets, with positive trade-offs for AI tools warranted only for generative audio and virtual agents under the constraints provided. Overall, there was a clear impression that—at least in training development contexts—human users may be allocated the most tedious of tasks (e.g., prompt refinement, transferring materials, organizing outputs, integrating content). Given the quality issues, it may be inappropriate to allocate creative tasks to generative AI tools over expert developers.

Opportunities

Despite these potential challenges associated with the use of generative AI for the development of training materials for high-consequence industries such as aviation, discussions with the SME highlighted opportunities categorized with respect to Resources, Features, Development, Interoperability, and Applications. Among the desired features was a single-source application integrating the capabilities of these different tools within a traditional development software environment. In part, this was viewed as potentially addressing an issue with managing tasks behind the subscription/credit gatekeeping mechanisms used across multiple tools. Improved capabilities for 3D object and environment generation were particularly desired, as well as features for collaborative content generation. For Development, the SME saw potential opportunities for generative AI leveraging dialogue (developer-AI) and real-time speech-to-content generation. The ability to automate workflows for generating assets from existing source materials and cross-platform capabilities were also mentioned, with repeated discussion of the capabilities of AI in assisting the development of virtual instructors and other characters for training contexts.

A foundation for these capabilities stressed by the SME was the importance of Resources such as bespoke LLMs at multiple levels of instantiation. Organizational-level LLMs trained on a corpus of policies, procedures, and existing training materials could be leveraged for the development of instructor agents and associated dialogue, feedback, and narratives derived from the underlying expert model(s). Task-level LLMs may instantiate the content from this database for specific training interventions and to support adaptive training techniques. The SME also mentioned the opportunity that a regulator-level LLM may provide in aggregating regulatory and guidance material related to safety, operations, and training within high-consequence industries, although the researchers caution about the extent of guardrails necessary to ensure that source materials are explicitly referenced. Other resources discussed included the ability to use generated content as reusable objects/assets within the development pipeline, and software development kits for integrating AI tools within existing development platforms. Opportunities related to interoperability among generative AI tools were observed, at the front end (e.g., to allow seamless integration of tools) and on the back end (e.g., to allow integration of materials with development platforms and training software, including learning record stores and management systems).

Lastly, use cases for AI tools for training development were outlined. These included the use of generative AI for instructional dialogue (learner-AI), for the moderation of that dialogue, and for the derivation of instructional narratives from source materials through the inferred expert model. Given the quality issues associated with these tools, the SME saw generative AI as valuable for developing content for K-12 educational materials but constrained in application within high-consequence industries to developing design mockups and other conceptual support, rather than serving as a central element of the design/development pipeline.

Threats

While discussed or observed indirectly as industry-level issues encountered during the workshop, a few potential threats to the success of generative AI tools in this space were identified. With respect to the generation of 3D assets and environments, there is currently a lack of innovative AI tools suitable for training development contexts. Relatedly, differentiation among tools may also be difficult for practitioners, as the space for generative text, audio, and images becomes increasingly saturated. Practitioners may be left dependent on accounts across multiple disparate platforms at risk of obsolescence as new technologies dominate the landscape. There is a need for generative AI tools tailored for use in high-consequence industries. There is also a need to continue the development and refinement of AI tools that can successfully leverage inputs beyond text (e.g., speech, images, schematics, models, video, data) and intuitively align with user goals. Lastly, the inflated importance of prompt definition and refinement processes may need to be overcome for the tools to be more widely adopted by designers/developers.

Stack

The stack of generative AI tools tested or discussed by the SME included: ChatGPT, Perplexity, Meta AI (text generation); Leonardo AI, Midjourney, Adobe Photoshop Firefly, PIXVERSE, Krea.ai, Lumalabs.ai (images); ElevenLabs Speech Synthesis, Adobe Audition (AU), UDIOBETA (audio); invideoAI, Captions (video); HYPERHUMAN, Meshy (3D assets); as well as Convai, inworld, NVIDIA ACE (agent generation).

Domain SME Workshop Findings

To conclude our findings, we met with the Domain SME to discuss the suitability of the AI-generated training materials. The SME reported no personal experience with generative AI but had general familiarity through recent social media integrations. The SME informally rated the overall quality of the AI-generated training materials highly across the assessment criteria of interest (utility, accuracy, propriety, feasibility, suitability). For this workshop, we were most interested in the specific themes arising from the discussion rather than their summative perception of the examples.

Strengths

Discussions of the strengths of generative AI focused on the positive qualities of the example materials reviewed during the workshop. Regarding utility, the clarity and organization of content and the inclusion and repetition of key information pertaining to the learning objective were valued by the SME. Based on the exemplar materials, the SME viewed the tools as being useful for conveying procedural (“step-by-step”) information and considered the material useful for novice learners. The SME noted the importance and uniqueness of the generated material repeating that the life vest is to be inflated outside of the aircraft—an aspect of the procedure not emphasized enough in current materials, referencing familiarity with such incidents.

In terms of accuracy, the discussion centered on the verisimilitude of the AI generated text, audio, and video content, including a perceived veracity of the audio and “key points” (cf.

Weaknesses

While not accounted for in the informal ratings of the Domain SME, a breadth of weaknesses of the AI-generated training materials were discussed. The content was viewed as too deficient in specificity to be useful in training contexts (utility). The generated content was viewed as repeating ancillary information, while omitting context regarding why certain actions are necessary during the procedure. The generated content included erroneous details of the location of passenger life vests with a lack of applicability across different types of air carrier operations and aircraft (operational specificity). The example materials also lacked appropriateness and specificity to any given learning context: “If it were for cabin crew and trying to help engage passengers more and learn from the material, it would be great for that. But in terms [of] passengers, I’m not sure when they would be able to access this information—before or during the flight?”

Discussions of the accuracy of generated materials centered on subjects of concern to the researchers. The veracity of textual information (the Domain SME estimated that erroneous information accounted for around 10% of the total) and the veracity and plausibility of AI-generated images/graphics were of particular concern. The SME quickly judged that the AI-generated images of life vests were not appropriate to the context, although they did not notice, or at least did not verbalize, the extent to which the images misrepresented the text/audio instructions (see Threats). The explicit need for personnel (e.g., designers/developers, instructors) to verify, validate, and tailor AI-generated text and images before implementing such materials in training was reiterated by the SME throughout the discussion.

Weaknesses in the feasibility of the materials included issues related to connectivity and accessibility (interactions with the AI agent were paywalled), and the dependence on instructional personnel to diligently verify, validate, and tailor content, with an emphasis on the role of designers/developers in ensuring content adheres to established instructional design principles (e.g., redundancy; Mayer & Fiorella, 2021). While many of the weaknesses in the suitability of materials have been outlined in relation to other criteria, a unique addition here is that the SME viewed the AI-generated materials as being ill-suited for use in training experts.

Opportunities

Following their positive overall reception of the examples, the SME discussed the potential suitability of AI tools in distilling the key points of procedural steps, producing viable training mockups, and for content to be used as introductory/recap material, such as a preview or advance organizer of other training content (i.e., pre-/post-training content). The reactions of the SME to the text/audio content (i.e., benchmark parity) suggest the potential suitability of generative AI for objectives related to knowledge of the steps of a given procedure, while their reactions to video content (source ambiguity) suggest the plausible suitability of AI-generated videos for describing and modeling interpersonal/intrapersonal skills. The overall appraisal was that, should remaining issues in the veracity and operational specificity of AI-generated content be resolved, there is a positive outlook for generative AI in aviation training.

Threats

Only the veracity of AI-generated text/images was explicitly called out as a potential threat by the SME. Our observations extended this by noting the potential for veracity judgement errors by both novices and experts; that is, given the verisimilitude (“truthiness”) of generated content, end-users in these domains may not be able to accurately identify erroneous details or omissions. For example, the SME assumed that demonstrated content may have shown some consistency with regulatory materials. Relatedly, the fact that this verisimilitude occurs without sufficient and reliable verification of the veracity of content (see Hicks et al., 2024) poses a potential threat to the adoption and use of generative AI technologies for training design/development in high-consequence industries such as aviation. Most concerning may be the potential for source ambiguity due to the relative parity of videos generated by readily available AI tools: “I’m not sure if they were developed by AI or not; going back to [the] video portion, [I’m] not sure if it was an AI or an actual human.” That is, we observed the current potential for an experienced operator to believe they are watching a human instructor when receiving unverified, unvalidated, and potentially erroneous or operationally irrelevant information.

Discussion

In the current study, we investigated one potential use case for AI in the training design/development lifecycle. Through our study of experts’ use of generative AI in the creation of instructional materials for passenger cabin safety, we were able to better understand the potential use and value of these tools. One key finding from our case study was that generative AI fundamentally changes the nature of several tasks involved in creating training materials. Previously, a lot of time and effort was needed for the ideation and development of creative assets. Designers and graphic artists invested a lot of time in ensuring a shared mental model of what a final illustration would likely look like and produced one or two examples for further refinement. Now, AI tools may be leveraged to quickly generate hundreds of possible assets, albeit of dubious quality and applicability. Consequently, additional time, effort, and other resources may need to be dedicated to front-end analyses, prompt engineering, selection of tools and materials, and careful review and refinement of the products of AI-assisted training material development.

Another key finding from our case study was the importance and potential workload of validating and tailoring AI-generated content before use in training. While the clarity of content emerged as a strength, a clear weakness was the specificity and applicability of material across different operations. Despite these and similar weaknesses and threats, there appear to be clear opportunities in leveraging AI for creating mockups and introductory materials overviewing basic procedures and interpersonal interactions. Both workshop SMEs expressed a positive outlook for the near-future use of generative AI in the aviation domain, contingent upon resolving issues in content accuracy and operational specificity. Based on this case study, we offer a few suggestions for the training community in high-consequence industries such as aviation.

First, research and guidance on the development of LLMs tailored to domains, organizations, and jobs/tasks in high-consequence industries should be advanced. Such work may be a foundation for ensuring the specificity and applicability of generated training materials. Second, organizations should establish policies and procedures pertaining to the verification and validation of generative AI and similar technologies in the training lifecycle. These will be necessary to ensure the veracity of generated materials across media formats.

Third, research and guidance on function allocation between generative AI technologies and human users should be established and informed by human-systems interaction literature. Creative workers should not be overburdened by mundane tasks. Fourth, developers of generative AI tools should consider the challenges described in this report. While the verisimilitude (albeit not veracity) of text, audio, and video content developed by AI may be approaching parity with human-generated materials, the quality of images and 3D assets is insufficient for use in high-consequence industries. Practitioners might consider generating lower fidelity visual representations until the quality of these tools improves (see Jentsch, 1996).

Finally, organizations and managers should ensure that training designers/developers are given sufficient support and time for learning and professional development related to the use of AI technologies and given sufficient opportunities to practice these skills on less-critical aspects of training design/development (e.g., concept art, design mockups).

Conclusion and Takeaways

Our findings offer a deeper understanding of the potential and challenges associated with the application of AI tools within the training lifecycle. We provide the groundwork for analyzing and refining the design and use of AI tools for training development in high-consequence industries. Future research should cautiously explore the integration of generative AI tools with simulation technologies. There are clear trade-offs in speed and quality when using AI tools for training development, as substantiated in this case study. Careful attention must be given to both process and product in the integration of generative AI in the training lifecycle to ensure that decision-makers do not inadvertently compromise training efficacy. By doing so, we may be able to better leverage these tools to revolutionize the training lifecycle and ensure operational readiness in sectors such as aviation.

Footnotes

Acknowledgements

We greatly appreciate the contributions of our SMEs to all aspects of this work.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was in part supported by the United States (U.S.) Department of Transportation (DOT) Federal Aviation Administration (FAA) collaborative research agreement 692M152440003; program manager: FAA ANG-C1, the NextGen Human Factors Division; program sponsors: FAA AVS, the Aviation Safety Office, and FAA AFS-280, the Air Transportation Division - Training & Simulation Group. The views expressed herein are those of the authors and do not reflect the views of the U.S. DOT/FAA or the University of Central Florida.