Abstract

The introduction of Large Language Models (LLMs) has captured the public imagination and represents a marked improvement in AI in Education (AIED) capabilities. However, there is concern that student reliance on automated tools to complete written assignments may lead to a decline in learning. This study investigated whether participant use of LLMs to complete a writing assignment affected retention of learning content. Undergraduate participants (N = 109) were randomly assigned to complete a writing assignment under one of three conditions: (1) with the assistance of a Retrieval-Augmented Generation (RAG)-based AI psychology tutor; (2) with the assistance of unmodified GPT-4 Turbo; or (3) with no AI assistance. After completing the writing task, students completed a posttest quiz to assess their retention of learning material. The control condition had the lowest mean quiz score (M = 9.22, SD = 3.90), followed by the RAG AI tutor condition (M = 10.81, SD = 4.12) and the unmodified GPT-4 Turbo condition (M = 11.31, SD = 3.88). Quiz scores differed significantly between the AI tutor condition and the control condition (p = .036) and between the GPT-4 Turbo condition and the control condition (p = .003), but not between the AI tutor and GPT-4 Turbo conditions (p = .283).

Introduction

The AI in Education (AIED) movement represents an initiative by educators to incorporate cutting-edge technology into classrooms. This trend is not new. Nearly 100 years ago, the first automated multiple-choice machine enabled students to select from various responses to a question and receive immediate feedback by manually rotating a dial (Pressey, 1926). Several decades later, the behaviorist B. F. Skinner constructed his own “teaching machine”, a device which allowed students to pencil in their own written responses before receiving automated feedback (Skinner, 1958). Early forms of technologically assisted instruction appeared in tandem with the advent of computers, growing increasingly sophisticated as technologies advanced. Such devices were heralded as a means of allowing students to learn at their own pace and of saving teachers time on grading, claims which continue to be touted by the AIED movement of today (Baker & Smith, 2019).

The psychologist Benjamin Bloom famously demonstrated that individualized tutoring can lead to gains of two standard deviations in student learning compared to traditional classroom teaching methods (Bloom, 1984), and this has served as a conceptual starting point for much of the student-focused AIED movement (Holmes & Tuomi, 2022). Adult, one-on-one, face-to-face human tutoring has since been considered the gold standard for enhancing student academic performance, and it has also been accepted as common knowledge that human tutors are more effective than computer tutors (Graesser et al., 2001). But recent research comparing human tutors with computer tutors has indicated that the gap is closing (du Boulay, 2019; Kulik & Fletcher, 2016; VanLehn, 2011). Advancements in computing power, algorithms, and big data analytics have led to the creation of transformer-based Large Language Models (LLMs), marking an exciting new chapter in AIED capabilities (Moore et al., 2023). AI tutors powered by LLMs show particular promise.

The introduction of ChatGPT in November 2022 demonstrated the potential for LLMs to interact with users in a remarkably lifelike way, and this capability is already being incorporated into AIED tools (Stamper et al., 2024). Natural language processing LLMs are pre-trained and display a remarkable ability to formulate in-depth knowledge from their training sets without any access to external memory (Roberts et al., 2020). This is accomplished by fine-tuning models on large amounts of text data, creating a series of vector text embeddings which serve as a parameterized knowledge base (Wang et al., 2023). There are, however, certain limitations: these models cannot easily expand or revise their memory, and they are prone to “hallucinations”, instances in which the model generates a response which appears valid but is, in fact, inaccurate (Marcus, 2020). Some of these issues can be remedied using further fine-tuning, but this can prove costly and somewhat inflexible (Ding et al., 2023). Alternatively, the parametric memory of LLMs can be supplemented with retrieval-based memory by giving models access to an external knowledge base when generating responses to user queries. This Retrieval-Augmented Generation (RAG) technique means that models can easily have their knowledge base expanded and revised, improving the accuracy and reliability of model-generated responses (Lewis, 2020). Using RAG also lowers computational and financial costs by providing models with access to the most relevant and up-to-date information without the need to continually fine-tune (Dong, 2023).

Deploying a RAG system for educational purposes requires a series of steps. First, relevant educational content (e.g., textbooks, articles, lecture slides) needs to be identified and processed before being uploaded into a vector database. Next, a background system prompt is chosen which provides the LLM with instructions on how it should respond to user requests. A typical RAG workflow then proceeds as follows: (a) the user submits a query; (b) the query is passed to the vector database, which uses similarity-based retrieval algorithms to return the most relevant content; (c) the user query and the retrieved content are combined with the background system prompt and delivered to the LLM; and (d) the LLM generates a response to the user. The content retrieved from the vector database can be optimized by altering specific parameters like chunk size and chunk overlap, with the goal of providing contextually relevant information to supplement the parametric knowledge base of the LLM (Modran, 2024).
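The workflow described above can be illustrated with a minimal, self-contained sketch. It is not the system used in this study: the function names (`chunk_text`, `embed`, `retrieve`, `build_prompt`) are hypothetical, the bag-of-words `embed` function is a toy stand-in for a learned embedding model, and an in-memory list stands in for a vector database. The final call to the LLM is omitted; the sketch stops at the assembled prompt.

```python
import math
import re
from collections import Counter


def chunk_text(text, chunk_size=40, chunk_overlap=10):
    """Split text into word-based chunks; neighbors share chunk_overlap words."""
    if not 0 <= chunk_overlap < chunk_size:
        raise ValueError("chunk_overlap must be >= 0 and smaller than chunk_size")
    words = text.split()
    step = chunk_size - chunk_overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + chunk_size]))
        if start + chunk_size >= len(words):
            break  # the last chunk already reaches the end of the text
    return chunks


def embed(text):
    """Toy embedding (assumption): lowercase word counts instead of a learned vector."""
    return Counter(re.findall(r"[a-z]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(count * b[token] for token, count in a.items())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query, chunks, top_k=2):
    """Step (b): return the top_k chunks most similar to the query."""
    query_vec = embed(query)
    ranked = sorted(chunks, key=lambda c: cosine(query_vec, embed(c)), reverse=True)
    return ranked[:top_k]


def build_prompt(system_prompt, query, retrieved):
    """Step (c): combine the system prompt, retrieved content, and user query."""
    context = "\n\n".join(retrieved)
    return f"{system_prompt}\n\nContext:\n{context}\n\nStudent question:\n{query}"
```

In this sketch, a larger `chunk_size` gives each retrieved passage more surrounding context, while `chunk_overlap` reduces the chance that a relevant sentence is split across two chunks. The string returned by `build_prompt` is what would be sent to the LLM in step (d).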

The use of LLM-based tutors is on the rise in AIED, making it important to assess their educational impact on students. There is a concern that students using generative AI tutors for assignments might not engage with the learning content as thoroughly as they would without AI assistance. This study aimed to investigate the effects on learning when students used a RAG-based AI tutor to help complete writing assignments.

Undergraduate participants were randomly assigned to complete a short writing assignment either with or without the use of generative AI to assist them. Learning was later assessed via a multiple-choice quiz. It is additionally important to investigate whether implementing a RAG procedure provides any significant benefits when compared to freely accessible LLMs like ChatGPT. Given the difficulty of developing a RAG-based system, we included two generative AI experimental conditions: one in which participants completed the writing assignment with the assistance of a RAG-based AI tutor with access to relevant textbook materials, and another condition offering the assistance of OpenAI’s unmodified GPT-4 Turbo API. To rule out any differences in participant quiz performance due to expectancy effects, participants in both the AI tutor condition and the unmodified API condition were told that they would be using an “AI Psychology Tutor” to help them complete the writing assignment, and both groups were presented with the same user interface.

Our initial hypothesis was that the use of AI assistance in completing the writing assignment would result in lower quiz scores. We assumed that undertaking the assignment without AI support would require participants to invest more time in reading the learning material, leading to a greater depth of cognitive processing. To ensure greater generalizability of results, this study made use of a writing assignment taken from an undergraduate introductory psychology course at Oregon State University.

Method

Participants

Undergraduate students recruited from the Oregon State University psychology research subject pool (N = 109) completed a 1-hr online study for research credit.

Materials

This study made use of a custom-built RAG system using the GPT-4 Turbo API (OpenAI, 2024) and a Qdrant vector database containing content uploaded from the classical and operant conditioning chapter of an open-source introductory psychology textbook (Lumen Learning, 2023). We hosted our RAG system on Amazon Web Services and embedded the tutor within a Qualtrics survey. The AI tutor had a custom user interface modeled after the user interfaces of other popular LLM tools, with users being able to type queries into a text box and receive a text response.

An unmodified version of the GPT-4 Turbo API was used as an approximation of LLMs such as ChatGPT. This unmodified API utilized the same user interface as our RAG tutor.

Procedure

We tasked participants with completing a short writing assignment under one of three randomly assigned conditions: (1) with the assistance of a RAG-based AI tutor; (2) with the assistance of unmodified GPT-4 Turbo; or (3) without the assistance of any generative AI. The writing assignment asked participants to use principles of classical and operant conditioning to design a 5-day behavior modification plan to modify one of their own bad habits. Participants in all three conditions were given access to a passage from a textbook chapter outlining the fundamental principles of classical and operant conditioning.
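As an illustration of random assignment to three conditions, the following sketch shuffles participant identifiers and deals them out in turn. The procedure above does not state how randomization was implemented or whether group sizes were balanced; the balanced design, the condition labels, and the function name `assign_conditions` are all assumptions made for this example.

```python
import random

# Hypothetical condition labels; the study does not name them this way.
CONDITIONS = ("rag_tutor", "gpt4_turbo", "control")


def assign_conditions(participant_ids, conditions=CONDITIONS, seed=None):
    """Shuffle participants, then deal them to conditions in round-robin order.

    Assumption: balanced assignment, so group sizes differ by at most one.
    """
    rng = random.Random(seed)  # a local generator keeps the assignment reproducible
    ids = list(participant_ids)
    rng.shuffle(ids)
    return {pid: conditions[i % len(conditions)] for i, pid in enumerate(ids)}
```

Under this scheme, 109 participants would be split into groups of 37, 36, and 36. Simple (unbalanced) randomization, in which each participant is assigned independently, is an equally plausible reading of the procedure.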

After completing the writing assignment, participants completed an 18-item multiple-choice quiz with questions relating to the classical and operant conditioning topics covered within the provided textbook passage. This was followed by a brief survey assessing how much effort participants put into completing the writing task as well as other demographic questions.

Results

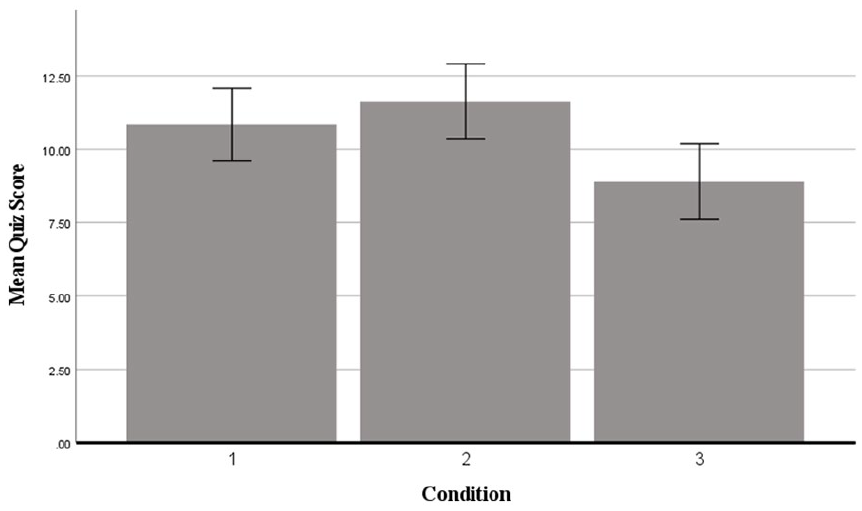

An ANCOVA revealed a significant difference in quiz scores between conditions when controlling for participant self-reports of effort (“How much effort did you put into completing this writing assignment?”; p = .008, R² = .150). The control condition had the lowest mean quiz score (M = 9.22, SD = 3.90), followed by the RAG AI tutor condition (M = 10.81, SD = 4.12) and the unmodified GPT-4 Turbo condition (M = 11.31, SD = 3.88; see Figure 1).

Figure 1. Mean quiz score by condition.
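For readers less familiar with ANCOVA, the analysis reported above corresponds to a model of the following general form. This is the standard one-way ANCOVA with a single covariate; the exact specification used here (for example, whether effort was treated as continuous or whether an interaction term was tested) is not reported, so the notation should be read as an assumption.

```latex
Y_{ij} = \mu + \tau_i + \beta \left( E_{ij} - \bar{E} \right) + \varepsilon_{ij}
```

Here $Y_{ij}$ is the quiz score of participant $j$ in condition $i$, $\mu$ is the grand mean, $\tau_i$ is the effect of condition $i$ (control, RAG AI tutor, or GPT-4 Turbo), $E_{ij}$ is that participant's self-reported effort, $\bar{E}$ is the mean effort across participants, $\beta$ is the slope relating effort to quiz score, and $\varepsilon_{ij}$ is the residual error. The test of condition asks whether the $\tau_i$ differ after effort is accounted for.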

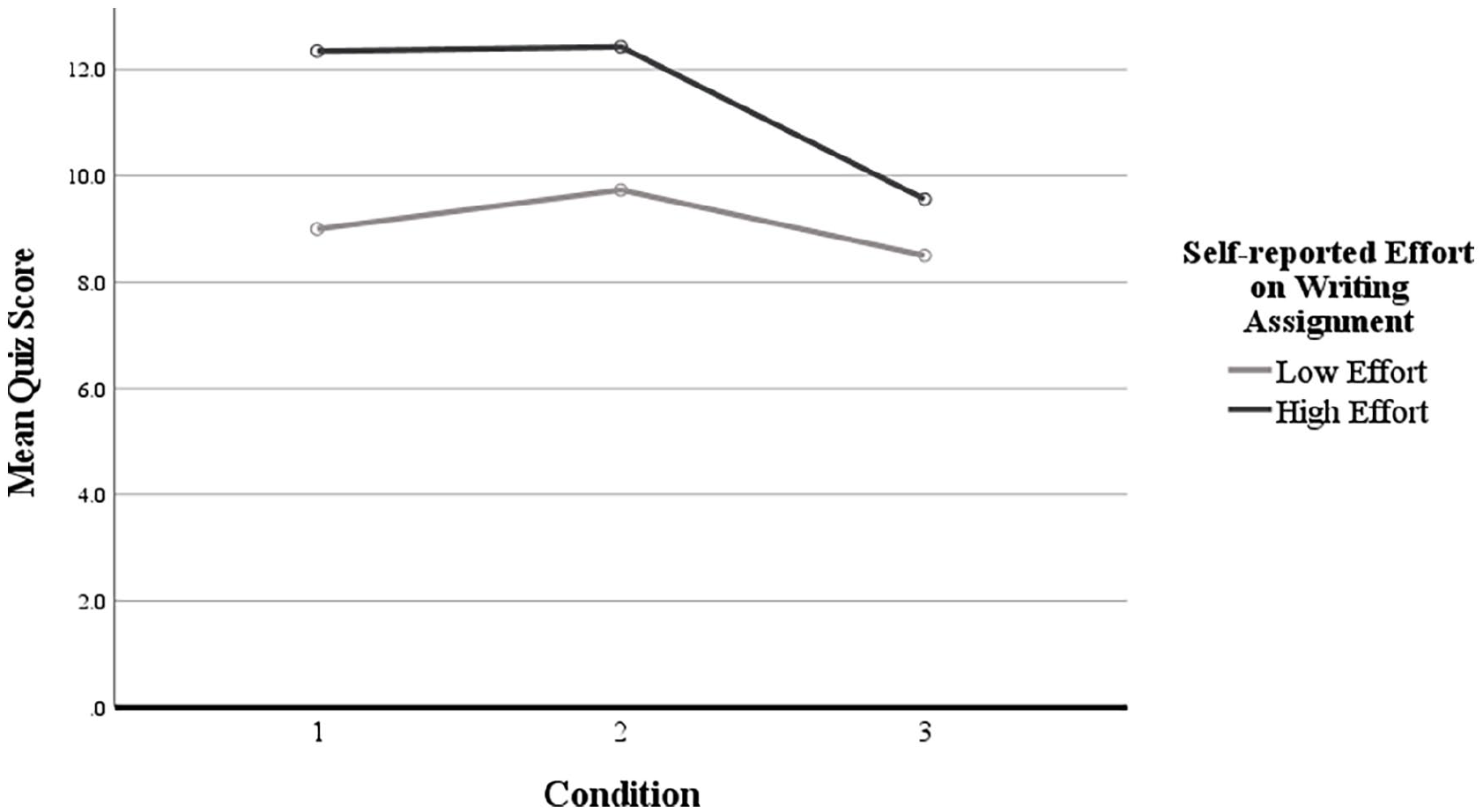

Contrasts indicated a significant difference between quiz scores for the AI tutor condition and the control condition (p = .036) and between the GPT-4 Turbo condition and the control condition (p = .003), but not between the AI tutor and GPT-4 Turbo conditions (p = .283). To further investigate the relationship between effort and quiz score, we performed a median split of the effort variable (Figure 2).

Figure 2. Mean quiz score versus condition for high and low effort.
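A median split of this kind can be sketched as follows. The function names are hypothetical, and the handling of ties is an assumption: here, participants whose effort rating equals the median are placed in the low-effort group, which may differ from the rule actually applied.

```python
from collections import defaultdict
from statistics import mean, median


def median_split(effort):
    """Label each effort rating 'high' if above the sample median, else 'low'.

    Assumption: ratings equal to the median fall in the 'low' group.
    """
    cut = median(effort)
    return ["high" if e > cut else "low" for e in effort]


def cell_means(conditions, effort, scores):
    """Mean quiz score for every (condition, effort level) cell."""
    groups = defaultdict(list)
    for cond, level, score in zip(conditions, median_split(effort), scores):
        groups[(cond, level)].append(score)
    return {cell: mean(values) for cell, values in groups.items()}
```

Calling `cell_means` with three parallel lists (condition label, effort rating, and quiz score per participant) returns one mean per cell, which is the quantity a plot of quiz score by condition and effort level would display.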

Discussion

These results suggest that participant use of either a RAG-based AI tutor or a freely available LLM like ChatGPT to assist in the completion of writing assignments may increase overall learning of a topic. It is possible that the generative nature of working with the AI led to greater participant interaction with, and learning of, the relevant material. But it was not AI use alone which made the significant difference; it was also the amount of effort participants reported having put into their work. Together, these findings indicate that instructors might not need to worry about student AI use, even when it is used to complete writing assignments. Ultimately, whether AI tutors or generative AI enhance student learning may depend on how students choose to use them. An AI tutor can be a valuable tool to strengthen student learning, but its effectiveness still depends on the effort students put into their work.

The lack of a significant difference in quiz scores between the AI tutor and unmodified GPT-4 Turbo conditions may indicate that, at least for this content and task, a RAG-based system is unnecessary. For tasks which involve more detailed, subject-specific knowledge or for more sophisticated content, a RAG system may be more appropriate. It is likely that the OpenAI GPT models incorporated text relating to classical and operant conditioning in their training data sets. It is possible that a similar conclusion could be drawn for many other academic tasks, and that students can derive benefits from using free, widely available LLMs like ChatGPT.

The fact that we used an actual writing assignment from an undergraduate introductory psychology course suggests that our results may generalize to similar assignments in other undergraduate courses, although the need for further research remains.

This study did not evaluate the presence of hallucinations. Future work may benefit from investigating the prevalence of inaccurate information provided by students when using LLMs to complete academic tasks. Student use of LLMs may also raise questions related to data security and privacy.

The AIED literature also lacks sufficient comparison of different LLMs as student assistants. This study utilized OpenAI’s GPT-4 Turbo, but other models such as Gemini, Claude, and open-source LLMs like Llama 3, Bloom, or Falcon 2 could also be assessed. If open-source models prove to be as effective as more expensive options, tutors could be developed and shared freely, thereby removing barriers to access. Ultimately, the potential for LLMs to serve as assistants to students represents an exciting new avenue of inquiry within the AIED movement.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.