Abstract

The present paper is based on a case study that aimed to provide evaluation tools for supporting human-centered design assessment of human-AI teaming in a virtual testbed environment. The authors worked from the lens of industrial/organizational psychology and human factors to identify a set of easily measurable constructs linked to relevant behaviors, subjective experiences, and worker well-being outcomes. The first of two major contributions is a description of the holistic approach employed, which pointed to the importance of valuing user empowerment within a virtual testbed environment, together with a review of empirical evidence suggesting that worker empowerment is critical both for ensuring worker well-being and for achieving efficient and productive outcomes. Second, a set of theoretical propositions is developed highlighting the potential key role of empowerment in facilitating performance in human-AI teaming systems, thereby laying the foundation for future HF/E research in this area.

Introduction

Media, professional conferences, private and governmental policies, and academic organizations have shown a growing interest in the importance of using human-centered approaches in the design of systems involving human-AI teaming. The present case study was initiated to provide evaluation tools that could support research in this area. In particular, the present study consisted of a human-centered design assessment for a virtual testbed currently being developed by Sonalysts (Asiala et al., 2023). Researchers at Sonalysts agreed to share details about their development efforts with us, and we offered to provide assessment recommendations for them to consider.

Despite frequent calls for AI to improve human welfare, there remains a fundamental lack of theoretical frameworks and constructs to guide the integration of human psychological needs in AI research and development efforts. In the present case study, we worked from the lens of industrial/organizational psychology and human factors and set out to identify a set of easily measurable constructs and models that consider relevant behaviors, subjective experiences, and worker well-being outcomes. We found that current evidence suggests human empowerment may be critical in human-AI-robotic teaming systems, both to facilitate efficient and productive outcomes and to ensure worker well-being.

Empowerment generally refers to the autonomy and self-determination associated with individuals and communities. Human empowerment has been linked to improved task performance (D’Innocenzo et al., 2016; Juyumaya, 2022), worker engagement (Juyumaya, 2022), decision-making (Ford & Fottler, 1995), creativity (Llorente-Alonso et al., 2023), and well-being (Juyumaya, 2022).

One goal of this paper is to examine the potential importance of empowerment for facilitating performance in human-AI teaming systems. We discuss the process of developing rationales and recommendations to be shared with Sonalysts.

We begin by defining and conceptualizing the employment of two empowerment theories: structural empowerment (Kanter, 1977) and psychological empowerment (Spreitzer, 1995). We then discuss an integration of the autonomous agent teammate-likeness scale (Wynne & Lyons, 2019), and go on to review our set of final recommendations regarding holistic assessment for user empowerment evaluation during testing and development of virtual testbeds. In doing so, we fill a gap in the current literature, integrating user well-being into human-centered design in AI research and development (The White House, 2023; U. S. Surgeon General, 2022; Wienrich et al., 2023). Additionally, we seek to contribute to research calls requesting novel approaches to develop and evaluate AI methods in real-world settings (Hager & Gianchandani, 2024), such as the Sonalysts test bed.

We then advance seven theoretical propositions that draw on empowerment theory to assess human-AI teaming and that can be used to guide developers and researchers engaged in human-centered design efforts. A typology of empowerment cues and how they may influence human-AI teaming is presented. We posit that the need to assess human psychological demands is a critical factor in the development, research, and implementation of human-centered AI teaming.

Empowerment and Autonomous Agent Teammate Likeness

Two theories of empowerment were used. The first is Kanter’s theory of power and structural support, which is the fundamental model of empowerment (Zhang et al., 2018) and is commonly referred to as structural empowerment. Consistent with aspects of macroergonomic theory, this theory focuses on the role of the organization and management. It entails social workplace conditions and organizational policies that emphasize four elements: opportunities, such as on-the-job learning and development; support, in the form of feedback and guidance; information, such as material describing company performance, values, and policies; and resources, such as temporary help and time to carry out work (Kanter, 1977). The second is Spreitzer’s model of intrapersonal empowerment in the workplace, commonly referred to as psychological empowerment. Psychological empowerment reflects the influence that individual perceptions of empowerment strategies may have on behavioral outcomes. It emphasizes four dimensions: self-determination, a sense of choice in initiating and regulating one’s actions; competence, the self-efficacy specific to one’s work; meaning, a fit between the work role and one’s beliefs and values; and impact, the degree to which one can influence strategic, administrative, or operating outcomes in one’s workplace (Spreitzer, 1995). Together, these two theories provide a more holistic understanding of user empowerment.

While we recommended the empowerment scales to reflect the affordances provided by the system and their subsequent impact on the user, we also recommended the inclusion of the autonomous agent teammate-likeness scale (AAT; Wynne & Lyons, 2019), which reflects the lasting impression of the human-AI interaction on the user. The AAT is defined as “the extent to which a human operator perceives and identifies an autonomous, intelligent agent partner as a highly altruistic, benevolent, interdependent, emotive, communicative, and synchronized agentic teammate, rather than simply an instrumental tool” (Wynne & Lyons, 2019, p. 3). Although whether AI qualifies as a teammate may be open to question, humans cannot help but anthropomorphize non-human animals and objects, including AI systems (Alabed et al., 2022).

We contend that integrating the three above-mentioned concepts is needed for a more holistic assessment of human-AI teaming than what is currently available: structural empowerment to examine the affordances provided by the system, psychological empowerment to examine the effects of the affordances on the individual user and their behavior, and perception of autonomous agent teammate-likeness to examine the impact of the human-AI interaction.

Methods

We searched the PsycInfo database for published articles containing scales for empowerment using the keywords: “structural empowerment,” “psychological empowerment,” and “empowerment.” The search revealed the instrument measuring psychological empowerment culture, developed by Schermuly et al. (2023) from Spreitzer’s psychological empowerment model, and the organizational empowerment scale, developed by Matthews et al. (2003) from Kanter’s structural empowerment model, as well as the autonomous agent teammate-likeness scale (Wynne & Lyons, 2019).

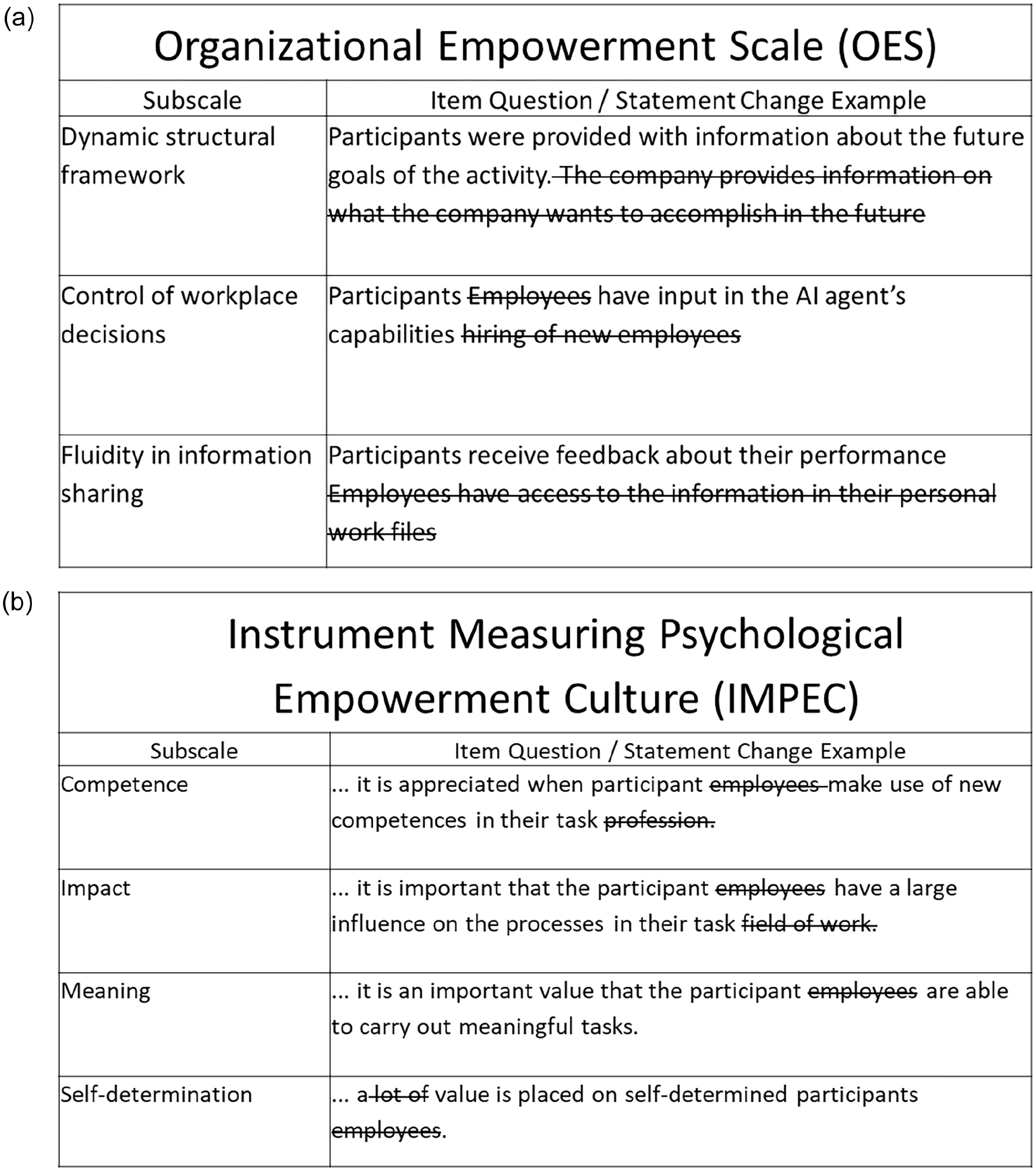

We then began to adjust these scales to fit the context of the Sonalysts virtual testbed (for details about the testbed under development, see https://www.sonalysts.com/; McCarthy et al., 2023). Minor edits were made to the scale items to reflect relevant aspects of team interaction and personnel training in a testbed context (see Figure 1a and b). The source material for each scale provided the statistical validity information needed to reduce survey length, which we did by removing any scale item with a confirmatory factor loading of less than 0.70 (Matthews et al., 2003; Schermuly et al., 2023).

Figure 1. Examples of the edits made to the scale items. These changes sought to reflect relevant aspects of team interaction and personnel training in a testbed context.

Drawing from the modified versions of the scales, we assembled and recommended two alternate survey forms to accommodate two known testbed needs. The first was a short 10-item survey composed of the highest-loading item from each subdimension of each scale, intended to assess testbed sessions lasting less than 30 min. The second was a longer 22-item survey composed of the two highest-loading items from each subdimension of each scale, intended to assess testbed sessions lasting longer than 30 min. Because applying the empowerment survey items in an AI context is novel, we also recommended conducting regular confirmatory factor analyses of the resulting human-centered empowerment surveys, using data gathered from the AI testbed itself, as a further check on their validity. The deliverable documents and recommendations were presented to members of the Sonalysts testbed development team. They included the original scales, our edited versions of the original scales, the 10- and 22-item survey forms, and the R code that can be used to conduct confirmatory factor analyses.
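The item-selection logic described above can be illustrated with a minimal Python sketch. It is not the code delivered to Sonalysts (which was written in R), and every item identifier and loading value below is a hypothetical placeholder rather than a published statistic. The sketch assumes each item carries a scale name, a subdimension name, and a confirmatory factor loading; it first drops items loading below 0.70 and then keeps the top one or top two items per subdimension to form a short and a long survey.

```python
from collections import defaultdict
from typing import NamedTuple


class Item(NamedTuple):
    item_id: str       # hypothetical identifier, not from the source scales
    scale: str         # e.g., "structural" or "psychological"
    subdimension: str  # e.g., "opportunity" or "competence"
    loading: float     # placeholder loading, NOT a published value


def retain_items(items, threshold=0.70):
    """Keep only items whose factor loading is at least the threshold."""
    return [it for it in items if it.loading >= threshold]


def build_form(items, per_subdimension):
    """Select the highest-loading items within each (scale, subdimension)."""
    groups = defaultdict(list)
    for it in items:
        groups[(it.scale, it.subdimension)].append(it)
    form = []
    for key in sorted(groups):  # sorted keys give a reproducible item order
        ranked = sorted(groups[key], key=lambda it: it.loading, reverse=True)
        form.extend(ranked[:per_subdimension])
    return form


# Placeholder item pool for illustration only.
ITEMS = [
    Item("SE_opp_1", "structural", "opportunity", 0.82),
    Item("SE_opp_2", "structural", "opportunity", 0.74),
    Item("SE_opp_3", "structural", "opportunity", 0.61),
    Item("PE_comp_1", "psychological", "competence", 0.88),
    Item("PE_comp_2", "psychological", "competence", 0.79),
    Item("PE_comp_3", "psychological", "competence", 0.71),
]

retained = retain_items(ITEMS)
short_form = build_form(retained, per_subdimension=1)
long_form = build_form(retained, per_subdimension=2)

print([it.item_id for it in short_form])
print([it.item_id for it in long_form])
```

With this placeholder pool, the item loading 0.61 is removed by the threshold, the short form keeps one item per subdimension, and the long form keeps two. A real application would substitute the loadings reported in the source scale publications.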

Propositions

During the evolution of our project, it became clear that a theoretical framework regarding empowerment is relevant to the design of all human-centered AI systems. Empowerment has been shown to be strongly associated with improved employee performance (D’Innocenzo et al., 2016; Juyumaya, 2022; Llorente-Alonso et al., 2023), improved employee initiative (Conger, 1989), and employee decision-making effectiveness (Conger & Kanungo, 1988; Ford & Fottler, 1995). Additionally, it is associated with many positive psychological outcomes, such as employee well-being (Juyumaya, 2022), willingness to learn (Wilson, 1993), and creativity (Llorente-Alonso et al., 2023). Empowerment has also been found to facilitate a positive emotional work climate (Conger, 1989). These many strengths led us to advance several theoretical propositions that could be used by researchers and system developers to guide the creation and development of effective human-AI teaming systems.

Structural Empowerment

We first propose the structural empowerment model as a framework to guide the design of program affordances offered by a human-AI teaming system. The four dimensions of structural empowerment (opportunity, support, information, and resources) could be used as an assessment framework for human-AI teaming and have the potential to yield valuable user experience information.

Proposition 1: Incorporate opportunities for AI systems to encourage user development and growth. Such opportunities may occur in many forms, such as providing incremental increases in user customization options through skill repetition or increasing user latitude in regulating AI involvement in system functions.

Proposition 2: Support the user by ensuring that regular feedback is offered and by providing easy access to tools that guide effective system use. Performance feedback is a critical component when performance goals are presented. Simple user feedback, such as comparing previous and current metrics of performance, can positively influence task performance. Efficiency suggestions, such as action shortcuts, may further support the user during their interaction with the AI system.

Proposition 3: Ensure that sufficient information regarding AI system functions and task outcomes is conveyed to the user. Information about the AI, its capabilities, and its limitations is important in ensuring realistic user expectations and operational transparency.

Proposition 4: Ensure the AI system supplies the user with the resources to troubleshoot situations that may be ambiguous or confusing, or in which users lack the requisite skills or knowledge to proceed confidently. Resources such as easily accessible help features can be made available in moments when the user may be unsure how to proceed or what affordance would be most useful.

Psychological Empowerment

The next propositions focus on the use of psychological empowerment as an evaluation tool when assessing the effectiveness of the affordances provided by the human-AI teaming system. Due to varying levels of technological understanding and perceptions of AI capabilities, many users may not find system affordances and options to be as intuitive as the developers intended, or as obvious as they would be to more experienced users. Employing psychological empowerment (self-determination, competence, meaning, and impact) as a means to evaluate the user experience can be expected to provide valuable information regarding the effectiveness of human-AI teaming.

Proposition 5: Users should feel a sense of choice and autonomy in all their interactions with the AI system. This could be reflected in the level of decision-making the user shares with the AI. Whenever possible, the user should be in the position of primary decision maker.

Proposition 6: Provide system feedback that can build or maintain users’ belief in their ability to operate or interact with the AI system successfully. Feedback information regarding aspects of user competence can increase user self-efficacy specific to human-AI teaming.

Proposition 7: Human-AI teaming should provide an opportunity for the user to gain or maintain a level of meaning during task engagement. Meaning in work is a critical motivator, especially for engaging workers over the long term, and reflects a good fit between the user’s work role and how they can impact the end goal of the system or tasks.

Discussion

After considering the needs of developing an AI testbed, we arrived at two conclusions: meeting user psychological needs should be a design priority, and some aspects of user empowerment represent a critical factor to evaluate in a human-AI testbed environment. To that end, we aimed to make two main contributions to the human factors/ergonomics literature. The first contribution was to provide a holistic evaluation tool to measure the impact of AI on the psychological well-being of the human user across three dimensions. The structural empowerment dimension assesses the affordances provided to the user by the AI system or agent. The psychological empowerment dimension assesses the subjective impact of user-AI interactions, and the AAT dimension assesses the user’s attitude toward the AI system. In order to provide recommendations to the Sonalysts team, we conducted a literature review and identified two primary models for conceptualizing empowerment: Kanter’s theory of structural empowerment and Spreitzer’s model of psychological empowerment. We then generated a set of design recommendations and assessment needs based on the available empirical evidence. We also found the user perception of the AI system itself to be an important additional contributor to the overall psychological needs assessment. Therefore, our human-centered assessment draws elements from the structural and psychological empowerment models and also from the autonomous agent teammate-likeness scale. We then developed a novel assessment tool customized to the specific needs of the Sonalysts testbed.

Our second contribution provides seven theoretical propositions intended to guide the development and implementation of human-AI teaming systems. These propositions advance the literature regarding product design and user experiences by providing a clear framework to assist developers and researchers in designing affordances and features that take user psychological needs into consideration. This framework may assist the incorporation of design elements that increase positive user experiences and psychological well-being and that offer meaningful contributions to the user. Implicit in a human-centered empowerment assessment is the inclusion of user empowerment considerations in the design of AI systems. Such assessment will have upstream implications that could alert designers to the importance of providing measurable affordances that support and encourage user empowerment.

Incorporation of psychological empowerment as an assessment tool may be critical in facilitating successful future and ongoing human-AI teaming projects. Together, the propositions introduced address relevant psychological needs of the user and answer calls for more human-centered AI design efforts generally (The White House, 2023; U. S. Surgeon General, 2022; Wienrich et al., 2023). We believe the approaches recommended herein during the development and implementation of human-AI teaming projects represent a simple addition that has the potential to have a profound effect on facilitating and guiding technological advances through human-centered design.

Sonalysts, Inc. is currently developing the testbed, and they expect to have an initial version by the end of the year. They are releasing the testbed as an open-source research tool. Therefore, they are making strategic decisions to include measurement tools that they feel are likely to be of general interest while allowing researchers to integrate measures that are more specific to their research questions. As a result, they have decided to include the AAT measure in the base testbed, allowing researchers to add the empowerment measures as their interests dictate.

The major limitation of this case study is the lack of scale validation of the human-centered assessment tool in an actual AI testbed. The assessment tool requires further work to establish that its content, criterion-related, and predictive validity are adequate and appropriate to the context of AI-user interaction. The purpose of this recommendation was not to provide a finalized measurement tool and framework but instead to supply a needed foothold to be built upon, so that human interests and well-being considerations are inherent in the AI tools that will shape our future.

Acknowledgements

We thank Dr. Robert Henning, University of Connecticut, for providing the opportunity and support to complete this project and Dr. Jim McCarthy and his team at Sonalysts, Inc. for the opportunity to support their product development.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This paper was supported by Grant Number 5T03OH008610-20 from CDC–NIOSH. Its contents are solely the responsibility of the authors and do not necessarily represent the official views of NIOSH. For further information, please contact the corresponding author, Christian Piscopo, at Christian.piscopo@uconn.edu.