Abstract

In recent years, an increasing number of studies in human-robot interaction (HRI) have used images or videos of robots as a simple, inexpensive, and customizable substitute for physically embodied robots. Thus, the question arises whether results from studies using depictions can be validly applied to interactions with embodied robots. This study investigated the effect of embodiment in HRI within an industrial interaction scenario, focusing on perception, trust, and task performance. Eighty-five participants interacted either with an embodied robot or with its depiction via a computer screen. Results showed that the embodied robot was perceived as more likable, more intelligent, and safer, and that it elicited higher initial trust. Primary task performance was higher in interaction with the embodied robot, while secondary task performance was higher in interaction with the robot’s depiction. These findings challenge the generalizability of depiction-based HRI research, highlighting the importance of considering embodiment in future research designs.

Introduction

Recently, there has been a notable shift in the experimental approaches employed in human-robot interaction (HRI) research. The use of two-dimensional depictions of robots, such as images or videos, has become widely embraced as a simpler, cheaper, and more customizable substitute for physically embodied robots (Deng et al., 2019; Li, 2015), a development that was particularly accelerated by the COVID-19 pandemic. During this time, conventional laboratory studies involving human participants were difficult or even impossible due to social distancing regulations in many countries (Feil-Seifer et al., 2020). As a result, many of the recent findings in HRI research are derived from studies in which HRI was investigated using depictions of robots. Considering this, the question arises as to whether conclusions drawn from studies employing robot depictions validly apply to interaction with embodied robots.

To approach this question, a clarification of the meaning of embodied and depicted is needed. The range of robot embodiment levels studied in research complicates the comparability of experimental results. While, for example, a copresent physically embodied robot could clearly be classified as embodied, this categorization is less straightforward if this robot is telepresent, for example, presented on a monitor via a live feed (Tung & Law, 2017). The definition by Onnasch and Roesler (2021) provides a possible way of categorizing embodiment: they distinguish between two types of exposure to a robot. Embodied refers to the interaction with a real three-dimensional robot in which direct physical interaction is possible. Depicted stands for the interaction with either two-dimensional agents or images and videos of a real robot displayed on a two-dimensional screen (Onnasch & Roesler, 2021).

Previous studies have investigated the effects of embodiment on various interaction parameters with both subjective and objective measures. A majority of these studies support the view that embodiment positively influences the measured outcomes, which has been termed the embodiment hypothesis (Li, 2015; Wainer et al., 2006). Importantly, this hypothesis has primarily been explored, and therefore experimentally supported, within the realm of social robotics, focusing largely on perceptual elements (Kidd & Breazeal, 2004; Kiesler et al., 2008; Roesler et al., 2023).

However, if we step away from the social domain and from perception alone, more diverse outcomes emerge (Bartneck, 2002; Kidd & Breazeal, 2004; Pereira et al., 2008; Powers et al., 2007). In task-related settings, trust and performance are often of main interest (Bainbridge et al., 2008). For these constructs, some studies revealed that participants interacting with embodied robots developed higher levels of trust (Rae et al., 2013) and demonstrated greater compliance (Bainbridge et al., 2011) compared to those interacting with depictions. However, contrasting findings from other studies suggest no significant impact on trust (Kidd & Breazeal, 2004). Regarding task performance, several studies showed a positive influence of embodiment on task-related metrics (Bartneck, 2002; Deng et al., 2019), while others again report no significant differences (Hoffmann & Krämer, 2011; Wainer et al., 2007). In summary, previous research paints a somewhat inconclusive picture of the influence of embodiment on HRI. While some studies argue for comparability, others report significant differences between interactions with embodied robots and depictions of robots (Bainbridge et al., 2011; Deng et al., 2019; Kiesler et al., 2008; Powers et al., 2007; Rae et al., 2013; Wainer et al., 2006, 2007). The dissimilar results of these studies are most likely due to differences in experimental setups, such as the robots used, measurement methods, operationalization of the dependent variables, and experimental interaction scenarios (Hoffmann & Krämer, 2013). The last of these factors, the variety of experimental interaction scenarios, deserves particular emphasis.

Embodiment has predominantly been explored in the context of social HRI (Deng et al., 2019; Kiesler et al., 2008; Roesler et al., 2023). In contrast, its implications in industrial settings remain relatively underexplored. These interaction contexts differ greatly: in a social context, interaction is a matter of social exchange and communication, whereas interaction in an industrial context usually involves joint work on a task (Onnasch & Roesler, 2021). Indeed, some studies suggest that the interaction context determines whether an embodied robot or the depiction of a robot is evaluated more positively (Hoffmann & Krämer, 2011). Thus, findings on the effects of embodiment in social interaction scenarios may not be transferable to industrial HRI. This study aims to address this gap by focusing on an industrial HRI scenario and investigating how embodiment influences perception, trust, and task performance.

Method

Participants

To achieve the targeted sample size of 90, which was based on an a priori power analysis, a total of 99 participants took part in the experiment. Fourteen participants were excluded from data analysis due to failures during the experimental procedure, resulting in a final sample of N = 85 participants with a mean age of 26.49 years (SD = 7.18). Of the final sample, 58.82% identified as female, 38.82% as male, and 1.18% as diverse, while 1.18% did not indicate a gender. Participants were recruited via the local university. Participation in the experiment was voluntary, and informed consent was obtained. Participants received course credit as compensation.
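As a quick consistency check, the reported percentages can be converted back into head counts. The following is a minimal sketch using only the figures stated above; the variable names are illustrative and not part of the study materials.

```python
# Figures reported in the Participants section.
recruited = 99
excluded = 14
final_n = recruited - excluded

# Reported gender shares, in percent of the final sample.
shares = {"female": 58.82, "male": 38.82, "diverse": 1.18, "not_indicated": 1.18}

# Convert each share back to a whole number of participants.
counts = {label: round(pct / 100 * final_n) for label, pct in shares.items()}

print(final_n)
print(counts)
```

The rounded counts add up to the final sample size, so the percentages are internally consistent with N = 85.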

Task and Apparatus

In the experiment, participants were told that the study was conducted in cooperation with a manufacturing company to optimize its manufacturing process. The fictional company was described as a manufacturer of juggling balls; juggling balls were chosen as the objects used in the interaction based on a paradigm from previous studies with industrial robots (Roesler et al., 2024; Weidemann & Rußwinkel, 2021). The laboratory simulated a workstation within a large assembly line, and the participants were instructed to take on the role of an employee at this workstation and to perform their tasks in cooperation with a robot. Participants operated a Panda robot by Franka Robotics, using pre-scripted control software hosted on a JATOS server (Lange et al., 2015) to transfer the juggling balls along the simulated assembly line.

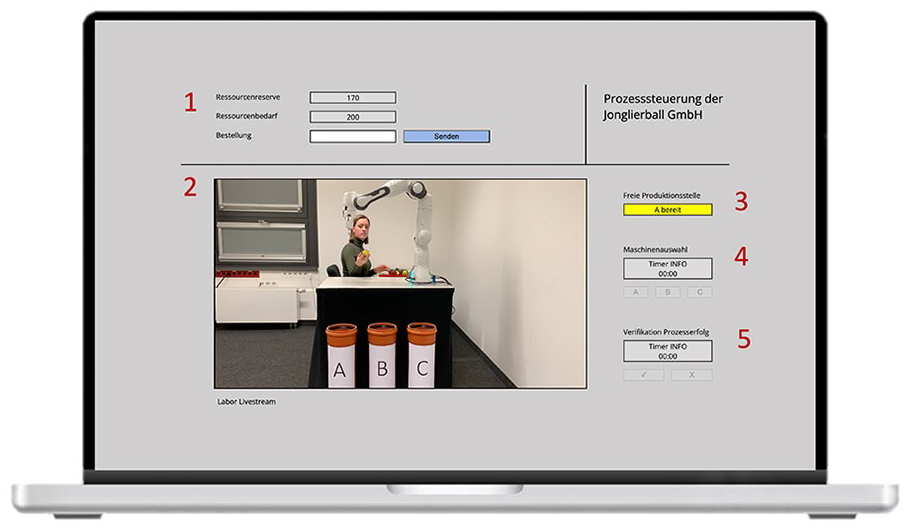

The interface setup drew inspiration from various experimental environments commonly used in human-automation interaction, for example, AutoCAMS 2.0 (Manzey et al., 2008) or M-TOPS (Domeinski et al., 2007). Participants in the embodied condition sat at a table facing the robot and operated it using the control software on a laptop in front of them. Participants in the depicted condition interacted with a depiction of the Panda robot, for which a simulated livestream was embedded in the control software interface (Figure 1, Area 2). The experimenter simulated an upstream workstation behind the robot. The collecting tubes labeled “A,” “B,” or “C” simulated downstream machines. The primary task was to operate the robot to transfer the balls handed over to the robot from the upstream station to the machine (A, B, or C) that currently had free capacity. The importance of this task was emphasized with a cover story stating that reacting to different production speeds at the machines would ensure production flow efficiency.

Figure 1. Depiction of the control software interface for participants in the depicted condition. Note: for participants in the embodied condition, the simulated livestream [Area 2] was replaced by a blank field in the control software interface, but the participants’ workstation was positioned to have the same field of view as the simulated livestream.

The interaction was structured as follows: First, the robot received a ball from the upstream station (i.e., the experimenter), while the participant received a notification via control software indicating the next available machine (Figure 1, Area 3). Next, the participant used the control software to select the appropriate machine by clicking the A, B, or C buttons within a specified timeframe (Figure 1, Area 4). Participants were instructed that after this timeframe, the robot would choose a machine randomly, which should be avoided for maximum efficiency. The robot then passed the ball to the selected machine. Simultaneously, participants observed whether the robot passed the ball to the correct machine and clicked a button marking the robot’s movement as correct or incorrect within a specified timeframe (Figure 1, Area 5). Participants were informed that the experiment would continue either way, but that a note would be made in the system if a robot movement was marked as incorrect. The cover story was that this information would then be included in the evaluation of the company’s production statistics.
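The trial flow described above can be sketched as a small function. This is a hypothetical illustration, not the study's control software: the names `run_trial` and `TrialResult` are invented here, the length of the response window is left out because the text does not specify it, and the way a scripted error picks a wrong machine (uniformly among the other two) is an assumption.

```python
import random
from dataclasses import dataclass
from typing import Optional

MACHINES = ("A", "B", "C")


@dataclass
class TrialResult:
    target: str              # machine announced as free (Area 3)
    selected: Optional[str]  # participant's click (Area 4); None if too late
    delivered: str           # machine the robot actually served
    selection_correct: bool  # did the participant click the announced machine?
    robot_error: bool        # did the robot deviate from a valid selection?


def run_trial(target, selected, programmed_error=False, rng=None):
    """Resolve one trial from the participant's (possibly missing) selection."""
    rng = rng or random.Random()
    if selected is None:
        # Response window elapsed: the robot picks a machine at random.
        delivered = rng.choice(MACHINES)
        robot_error = False
    elif programmed_error:
        # Assumption: a scripted error serves one of the two other machines.
        delivered = rng.choice([m for m in MACHINES if m != selected])
        robot_error = True
    else:
        delivered = selected
        robot_error = False
    return TrialResult(target, selected, delivered, selected == target, robot_error)
```

For example, `run_trial("B", "B")` yields a correct selection and a correct delivery, whereas `run_trial("B", None)` models a missed response window. The participant's subsequent correct/incorrect judgment (Area 5) is not modeled in this sketch.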

In addition to the primary task, participants completed a secondary task that consisted of simple calculations, specifically subtraction tasks (e.g., 400-360 = ?) inspired by Domeinski et al. (2007), framed as part of the logistics process (Figure 1, Area 1). Each arithmetic task remained active until it was solved; if it was not solved within 8 s, it was replaced by a new one. Participants were instructed to treat this task as a purely secondary task and not to deprioritize the primary task.
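A secondary task of this kind could be generated and scored roughly as follows. Only the subtraction format and the 8 s limit come from the text; the operand ranges, the function names, and the choice to count a response given at exactly 8 s as valid are assumptions made for illustration.

```python
import random

TIMEOUT_S = 8.0  # from the text: an unsolved task is replaced after 8 s


def make_subtraction_task(rng):
    """Return (prompt, answer) for one subtraction item with a positive result."""
    minuend = rng.randrange(100, 1000, 10)       # assumed operand range
    subtrahend = rng.randrange(10, minuend, 10)  # assumed: smaller than minuend
    return f"{minuend}-{subtrahend} = ?", minuend - subtrahend


def score_response(answer, response, elapsed_s):
    """Count a response only if it is correct and arrives before the timeout."""
    return response == answer and elapsed_s <= TIMEOUT_S
```

Summing `score_response` over all presented items would give the number of correctly completed calculation tasks, which is the secondary task performance measure used in this study.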

Design

A one-factorial between-subjects design with the factor type of exposure was used for this study, differentiating the two levels embodied and depicted. In the embodied condition, participants used the control software to operate a physically embodied robot. In the depicted condition, participants used the same software to operate the robot remotely while watching a simulated livestream displayed in the control software. In the embodied condition, the livestream field was empty, and participants viewed the robot over the edge of their screen at the same viewing angle and distance.

Dependent Measures

The dependent variables assessed in this study were perception and trust attitude as well as primary and secondary task performance.

Perception

Participants’ perception was assessed in terms of perceived safety, perceived intelligence, and likeability using the Godspeed questionnaire (Bartneck et al., 2009), which consists of semantic differential items rated on five-point Likert scales. As this study was conducted in German, the translated version by Giuliani and Foster (2013) was used.

Trust

Trust was measured using subjective measures and error detection, acknowledging that trust is a dynamic attitude that may change with experience and time and that can influence behavior (Hancock et al., 2011; Lee & See, 2004). The study differentiated between initial and dynamic trust (Hoff & Bashir, 2015), assessed before and after the interaction, respectively, with a single item each. A single item was used because it correlates highly with one of the most widely used unidimensional trust questionnaires (Rieger et al., 2022). Additionally, participants’ detection of robot errors was recorded; detection was presumed to decrease with higher trust levels due to less thorough quality checking. Moreover, a single item was used to assess perceived reliability before and after the interaction.

Task Performance

Participants’ task performance was measured by recording the number of correctly completed tasks in the primary and secondary tasks. For the primary task, this was based on whether the participant clicked the correct machine (i.e., according to the notification) to pass the ball to. For the secondary task, the number of correctly completed calculation tasks was measured.

Procedure

Before data collection, a checklist of the local ethics committee was used to ensure the harmlessness of the experiment. The experiment began with informed consent. After filling out a demographic questionnaire, participants received detailed instructions on the experiment. Following the instructions, participants were asked to rate the static robot (presented as a picture in the depicted condition) in terms of initial trust and perceived reliability. Before the actual task started, participants completed a training session to familiarize themselves with the experimental environment and gain a better understanding of the task. The training comprised five trials, that is, five juggling balls handled by the workstation. The subsequent experiment consisted of 20 trials in total. Two trials, namely trial 14 and trial 18, were intentionally programmed so that the robot made an error and did not pass the ball to the machine that the participant had chosen in the control software. Following the experimental trials, participants completed the questions measuring dynamic trust and reliability as well as the three scales of the Godspeed questionnaire (Bartneck et al., 2009). Primary and secondary task performance were assessed during task completion. In total, the experiment lasted approximately 30 min.
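The trial schedule can be written down compactly. The sketch below uses only the numbers given above (five training trials, 20 experimental trials, scripted errors on trials 14 and 18); the data layout and names are hypothetical. The resulting programmed reliability is a simple arithmetic consequence of those numbers and is not a value stated in the text.

```python
TRAINING_TRIALS = 5
EXPERIMENTAL_TRIALS = 20
ERROR_TRIALS = {14, 18}  # experimental trials with a scripted robot error (1-based)


def build_schedule():
    """Return one dict per experimental trial, flagging scripted errors."""
    return [
        {"trial": number, "programmed_error": number in ERROR_TRIALS}
        for number in range(1, EXPERIMENTAL_TRIALS + 1)
    ]


schedule = build_schedule()
total_balls = TRAINING_TRIALS + EXPERIMENTAL_TRIALS
error_free = sum(not t["programmed_error"] for t in schedule)
programmed_reliability = error_free / len(schedule)

print(total_balls)
print(programmed_reliability)
```

With two scripted errors in 20 trials, the robot behaves as selected in 18 trials, that is, in 90% of the experimental trials, provided the participant makes a valid selection each time.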

Results

Perception

Results showed that the embodied robot was perceived as significantly more likable (M = 2.65, SD = 0.59) than the depiction (M = 2.37, SD = 0.47), t(83) = 2.40, p = .019, d = 0.521. Moreover, participants rated the embodied robot as significantly more intelligent (M = 2.51, SD = 0.63) than the depiction (M = 2.17, SD = 0.57), t(83) = 2.60, p = .011, d = 0.564, and the embodied robot was perceived as safer to interact with (M = 3.29, SD = 0.67) compared to the depiction of the robot (M = 2.92, SD = 0.71), t(83) = 2.43, p = .018, d = 0.527.
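The reported effect sizes can be cross-checked against the t statistics using the standard conversion for two independent groups, d = t · sqrt(1/n1 + 1/n2). The following is a minimal sketch under one explicit assumption: the per-group sizes are not reported, so a near-equal split of the N = 85 participants (43 and 42) is assumed here.

```python
import math


def cohens_d_from_t(t, n1, n2):
    """Cohen's d for two independent groups, recovered from the t statistic."""
    return t * math.sqrt(1 / n1 + 1 / n2)


# Assumed group sizes: the text reports only N = 85 (df = 83).
N_EMBODIED, N_DEPICTED = 43, 42

for label, t in [("likeability", 2.40), ("intelligence", 2.60), ("safety", 2.43)]:
    print(label, round(cohens_d_from_t(t, N_EMBODIED, N_DEPICTED), 3))
```

Under this assumption, the recovered values agree with the reported d = 0.521, 0.564, and 0.527 to three decimals, which suggests that the groups were of roughly equal size.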

Trust

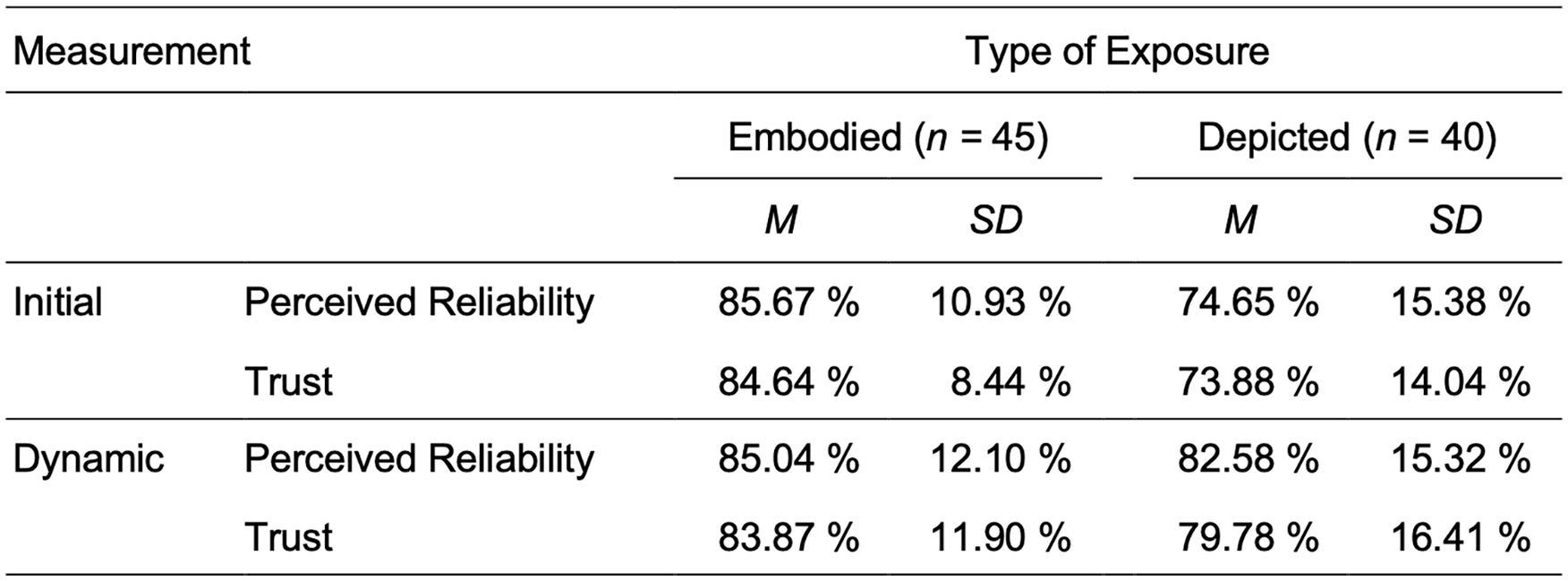

The analysis revealed that the experimental group interacting with the embodied robot reported significantly higher initial reliability (t[83] = 3.84, p < .001, d = 0.834) and initial trust (t[83] = 4.22, p < .001, d = 0.943) toward the robot, as can be seen in Figure 2. In contrast, no statistically significant differences between the two groups were found for dynamic reliability (t[83] = 0.829, p = .410, d = 0.180) and trust (t[83] = 1.326, p = .189, d = 0.288). In addition to the questionnaire data, the participants’ reactions to the erroneous trials were analyzed. There was no statistically significant relationship between error detection and the type of exposure for trial 14 (χ² [1, N = 85] < 0.001, p = 1) or trial 18 (χ² [1, N = 85] = 0.402, p = .526).

Figure 2. Initial and dynamic trust and perceived reliability by types of exposure.
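For the error-detection tests, the p-value that belongs to a chi-square statistic with one degree of freedom can be computed directly from the complementary error function, since such a statistic is the square of a standard normal variable. The sketch below uses only the Python standard library; the function name is illustrative.

```python
import math


def chi2_sf_df1(chi2):
    """Upper-tail p-value of a chi-square statistic with one degree of freedom."""
    return math.erfc(math.sqrt(chi2 / 2))


print(round(chi2_sf_df1(0.402), 3))
```

Applied to the statistic reported for trial 18 (0.402), this gives a p-value of approximately .526, in line with the reported result. A statistic close to zero, as reported for trial 14, gives a p-value close to 1.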

Task Performance

To investigate task performance, the number of correct secondary tasks was examined. Participants interacting with the depiction of the robot showed significantly higher secondary task performance (M = 61.4, SD = 22.19) than participants interacting with the embodied robot (M = 50.02, SD = 20.94), t(83) = −2.43, p = .017, d = 0.528.

A brief exploratory analysis was conducted to examine the sample before participants were excluded based on performance criteria. The exclusion removed participants with multiple additional errors beyond those programmed, to maintain a consistent level of actual reliability across participants so as not to bias the subjective assessment. The analysis explored the relationship between the experimental condition and the number of participants with additional errors in the primary task. Results showed significantly more participants with additional errors in the depicted condition (n = 20) compared to the embodied condition (n = 8), χ² [1, N = 99] = 4.072, p = .044, indicating higher primary task performance in interaction with the embodied robot.
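A test of this kind operates on a 2×2 table (condition by presence of additional errors). The following is a generic sketch of the Pearson chi-square statistic for such a table, not a reproduction of the reported analysis: the text gives only the number of participants with additional errors per condition and the total N = 99, so the per-condition group sizes needed to fill the table are not available here, and it is also not stated whether a continuity correction was applied. The function name and the `yates` flag are illustrative.

```python
def chi2_2x2(a, b, c, d, yates=False):
    """Pearson chi-square statistic for the 2x2 table [[a, b], [c, d]].

    Rows could be the two conditions; columns could be participants with
    and without additional errors. With yates=True, the continuity
    correction is applied. All four margins must be non-zero.
    """
    n = a + b + c + d
    diff = abs(a * d - b * c)
    if yates:
        diff = max(0.0, diff - n / 2)
    denom = (a + b) * (c + d) * (a + c) * (b + d)
    return n * diff ** 2 / denom
```

Given the full table, the function returns the test statistic with one degree of freedom; the continuity-corrected value is always the smaller of the two.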

Discussion

The objective of this study was to investigate how embodiment influences perception, trust, and task performance in industrial HRI. The first key finding regarding perception was that the embodied robot was perceived as more likable, more intelligent, and safer in the interaction. These results are consistent with previous research (Bainbridge et al., 2011; Kidd & Breazeal, 2004) and support the embodiment hypothesis (Wainer et al., 2006).

The second key finding concerned perceived reliability and trust. The group that interacted with the embodied robot reported significantly higher initial reliability and trust toward the robot. These results are in line with the effects suggested by prior research (Rae et al., 2013). In contrast, for dynamic reliability and trust, no statistically significant differences were found between the interaction with the embodied robot and the depiction. This finding is in accordance with the definition of dynamic trust by Hoff and Bashir (2015), according to which dynamic trust is influenced by the experience with the robot and adapts to real reliability. Since participants interacting with the embodied robot experienced exactly the same reliability of the robot as participants interacting with the depiction, it is reasonable to expect a similar level of dynamic trust in both groups. Performance-based aspects of the robot seem to have overridden attribute-based aspects (Hancock et al., 2011). An analysis of the results for error detection showed no significant differences between groups, although it should be noted that this method of assessing a behavioral consequence of trust may not have been valid.

The third key finding is that participants interacting with the embodied robot showed significantly higher task performance in the primary task, while participants interacting with the depiction of the robot showed significantly higher task performance in the secondary task. A possible explanation for this difference could be that the primary task in the lab was much more salient due to the size of the robot, the sound of its movements, and the presence of an experimenter (Hoffmann & Krämer, 2013). In addition, the primary task may have been more difficult in the embodied condition, since the effort of visual attention allocation to obtain the same information was much greater in the laboratory. Participants had to switch from the control software on their computer screen to the robot in the open space and back, while in the depicted condition, all information was processed within the control software. A resulting focus on the primary task and a simultaneously lower task performance in the secondary task in the embodied condition is plausible.

This interpretation of the results would be compatible with the findings of Shinozawa et al. (2005). In their study, they compared robots and virtual agents in different representations and found that for 3D tasks participants preferred (3D) robots, whereas for 2D tasks they tended to prefer virtual agents on screens. These results are reflected in the present study in that the participants collaborating with the real 3D robot in the lab performed better in the primary task of controlling the robot, while participants in the depicted condition with a 2D representation of the robot performed better in the 2D secondary task. Since real robots are often used for action implementation tasks, it would be interesting to investigate this aspect in more detail in future research.

In addition to the already mentioned limitations of this study, it is important to draw attention to some further issues that should be considered regarding the generalizability of the results. One limitation regarding the experimental design is that while the tasks and interaction in the two conditions were kept as similar as possible, the basic settings of the experiments differed considerably. While the experiment in the embodied condition took place in a lab under the supervision of an experimenter, the participants in the depicted condition completed the experiment from home, or remotely on a private computer, without the supervision of an experimenter. This experimental design was adopted due to the COVID-19 pandemic regulations. In a further study, it would be interesting to investigate the two variations of the type of exposure under the same circumstances in a lab setting.

Regarding the experimental task, another limitation of this study is that participants did not interact directly with the robot, but instead were operators and observers of an interaction between the robot and the experimenter (Onnasch & Roesler, 2021). This type of interaction was chosen to achieve a high degree of comparability between the two conditions. It is, however, possible that the participants perceived the task as controlling an interface rather than controlling a robot.

In addition, it should be mentioned that participants were instructed to pass the balls to an available machine to achieve an efficient production flow. They were, however, also told that, unless directed otherwise, the robot would distribute the balls randomly. While this scenario was chosen to maintain the experimental manipulation even if participants made a mistake or missed a response, this possible autonomy of the robot during the task could have introduced a confounding variable influencing participants’ trust in the robot.

Conclusion

All in all, this study provides valuable insights into the influence of embodiment on perception, trust, and task performance in industrial HRI. The analysis revealed significant differences between the embodied robot and the depiction of the robot in a dynamic task-related scenario. Results showed that the embodied robot was perceived as more likable, more intelligent, and safer to interact with than the depiction. At the same time, the effects of embodiment on trust toward the robot were only significant before an interaction had taken place, suggesting that the interaction itself might outweigh the influence of the type of exposure to the robot for trust and perceived reliability. Furthermore, depending on the interaction scenario, it might be beneficial to match the type of exposure to the task to be performed: for physical 3D tasks, the results showed significantly higher task performance with the embodied robot, while for cognitive 2D tasks, task performance was higher with the depiction.

The results underline the importance of designing HRI research studies in such a way that the evaluation of a robot is not merely based on a first impression, but on interaction in a suitable task. While avoiding depictions for research on embodied robots altogether would be ideal, this will not be achievable, especially since online HRI studies became well established during the COVID-19 pandemic. Nevertheless, the generalizability of depiction-based research to interaction with embodied robots should be further challenged.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.