Abstract

The rise of large language models offers new opportunities for disseminating more effective and equitable healthcare information. While past research has extensively investigated users’ perceptions of chatbots, few studies have examined design strategies for how chatbots present information. This research explores how communication style (conversational or informative) and language style (technical or non-technical) affect user acceptance and the ability to perform a knowledge-seeking task with a healthcare chatbot, using a 2 × 2 between-subjects design. Participants then completed semi-structured interviews, and we analyzed their experiences through inductive thematic analysis. Our findings indicate that users generally found conversational chatbots more understandable and perceived greater interaction freedom with them than with informative chatbots. These insights highlight the importance of aligning chatbot communication with users’ expectations. Future research should validate these findings with larger and more diverse populations and consider the impact of various chatbot interaction styles on user experience and long-term effectiveness.

Introduction

With growing access to technology and continued innovation, coupled with the growth of large language models (LLMs) such as OpenAI’s ChatGPT and Google’s Bard, there are increasing opportunities to deliver more effective and equitable healthcare information through healthcare-based conversational agents (CAs) and chatbots (Karabacak & Margetis, 2023). Previous research has demonstrated that chatbots can effectively disseminate health information. Notably, healthcare chatbots have been used to deliver virus and vaccine education during the COVID-19 pandemic (Amiri & Karahanna, 2022). CAs have also successfully assisted individuals with diet recommendations, smoking cessation, and cognitive behavioral therapy (Fadhil, 2018; Fitzpatrick et al., 2017; Wang et al., 2018). Considering these applications, it is important to acknowledge that healthcare-related information is often unfamiliar, complicated, and highly technical (“Understanding Health Literacy,” n.d.), and nearly 90% of adults in the United States struggle with health literacy (“An Introduction to Health Literacy,” n.d.). Socioeconomic factors may be associated with lower levels of health literacy and reduced access to consumable health information. Additionally, limited health literacy is particularly pronounced among individuals with lower levels of education and literacy, as well as those with limited English proficiency (Kutner et al., 2006; NPR et al., 2018). Given this, to fully harness the capabilities of CAs, further research is needed to understand optimal design strategies for their effectiveness, especially in complex communication contexts such as health information.

Most prior studies have investigated users’ perceptions of and intentions to use chatbots in a general context, examining positive and negative characteristics that lead to different outcomes such as acceptability and trust. For example, previous research has noted the influence of anthropomorphic design cues and communicative agency framing, emphasizing the significance of human-like cues (Araujo, 2018). Nicol et al. (2022) demonstrated the acceptability of chatbot-delivered cognitive-behavioral therapy for adolescents with depression and anxiety. Similarly, Nadarzynski et al. (2019) showed that while most internet users would be receptive to using healthcare chatbots, concerns about the quality and accuracy of the information provided may hinder engagement, emphasizing the need for user-centered and theory-based approaches to designing effective AI chatbots. Despite these efforts, the field of human-AI interaction still lacks unified models to explain the fundamental aspects of the interaction experience with conversational AI (Rapp et al., 2021). Given the proliferation of this technology and the increasing ability to implement it in a variety of contexts, the models supporting these designs will grow in importance. A key consideration is the context of the interaction experience across the presentation modalities employed by chatbots (e.g., text, verbal, visual) and how these modalities influence user preference (Vaidyam et al., 2019). When designing chatbots for health education, minimizing the user’s cognitive load is an important consideration, as lower cognitive load can make users more likely to adopt the system (Al-Maskari & Sanderson, 2011). Cognitive load encompasses the effort required to process and memorize new information for decision-making and is categorized into two types: intrinsic and extraneous.
Intrinsic cognitive load is determined by the inherent complexity of the information, while extraneous cognitive load pertains to how information is presented (Sweller, 2010). Past research has examined extraneous cognitive load in the context of chatbots: Biro et al. (2023) found that the chatbot’s usability was greatly influenced by its persona and that its effectiveness was significantly affected by the use of technical language. Yin and Neyens (2024) showed that videos convey health information more effectively, while text-based information is more efficient for users. Despite these findings, understanding of how specific contexts influence the communication of information by healthcare chatbots remains limited. In this study, we addressed this gap by exploring how the communication style (conversational or informative) and the language style (technical or non-technical) of a chatbot affect users’ ability to complete an information-seeking task. Following this task, we conducted semi-structured interviews to understand which design characteristics of the chatbot positively or negatively affected participants’ experience. The objective of this study is to evaluate user preferences and feedback through post-experience interviews, to identify design implications for future healthcare chatbots, and to identify differences in user evaluations based on individual experiences.

Methods

This study employed semi-structured interviews to evaluate how different information presentation styles for healthcare chatbots affect users’ perspectives and to identify design characteristics that may influence subjective outcomes of using the chatbots. As such, we did not focus on objective performance metrics for the interaction with the CAs, concentrating solely on users’ subjective experiences. The study implemented a 2 × 2 between-subjects design to investigate differences in communication style (conversational or informative) and language style (technical or non-technical language) for a healthcare chatbot. Participants were randomly assigned to one of the four chatbot conditions. For consistency and internal validity, the study employed a Wizard of Oz methodology (Biro et al., 2023), in which a researcher simulates and controls the chatbot agent, to emulate a healthcare education chatbot. In this design, participants were told they could ask questions about blood pressure, and the chatbot (i.e., the “Oz”) responded using pre-defined scripts that depended on the chatbot’s communication style.
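The paper does not state how random assignment was carried out, but the Results report exactly 11 participants in each of the four conditions. One common way to obtain equal cell sizes is permuted-block randomization, sketched below under that assumption. The function name `block_randomize`, the block size of four, and the participant identifiers are illustrative choices, not the authors’ procedure.

```python
import itertools
import random

# The four cells of the 2 x 2 between-subjects design.
COMMUNICATION_STYLES = ("conversational", "informative")
LANGUAGE_STYLES = ("technical", "non-technical")
CONDITIONS = list(itertools.product(COMMUNICATION_STYLES, LANGUAGE_STYLES))


def block_randomize(participant_ids, seed=None):
    """Hypothetical permuted-block assignment (block size = 4).

    Each consecutive block of four participants receives every condition
    exactly once in a random order, so cell sizes never differ by more
    than one at any point during recruitment.
    """
    rng = random.Random(seed)
    assignment = {}
    block = []
    for pid in participant_ids:
        if not block:
            # Start a new block: one copy of each condition, shuffled.
            block = CONDITIONS[:]
            rng.shuffle(block)
        assignment[pid] = block.pop()
    return assignment


if __name__ == "__main__":
    from collections import Counter

    ids = [f"P{i}" for i in range(1, 45)]  # 44 analyzed participants
    counts = Counter(block_randomize(ids, seed=2023).values())
    for condition, n in sorted(counts.items()):
        print(condition, n)
```

With 44 participants, this scheme yields 11 per condition, matching the reported cell sizes. A simple coin-flip assignment would not guarantee equal groups, which is why blocking is often preferred in small between-subjects studies.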

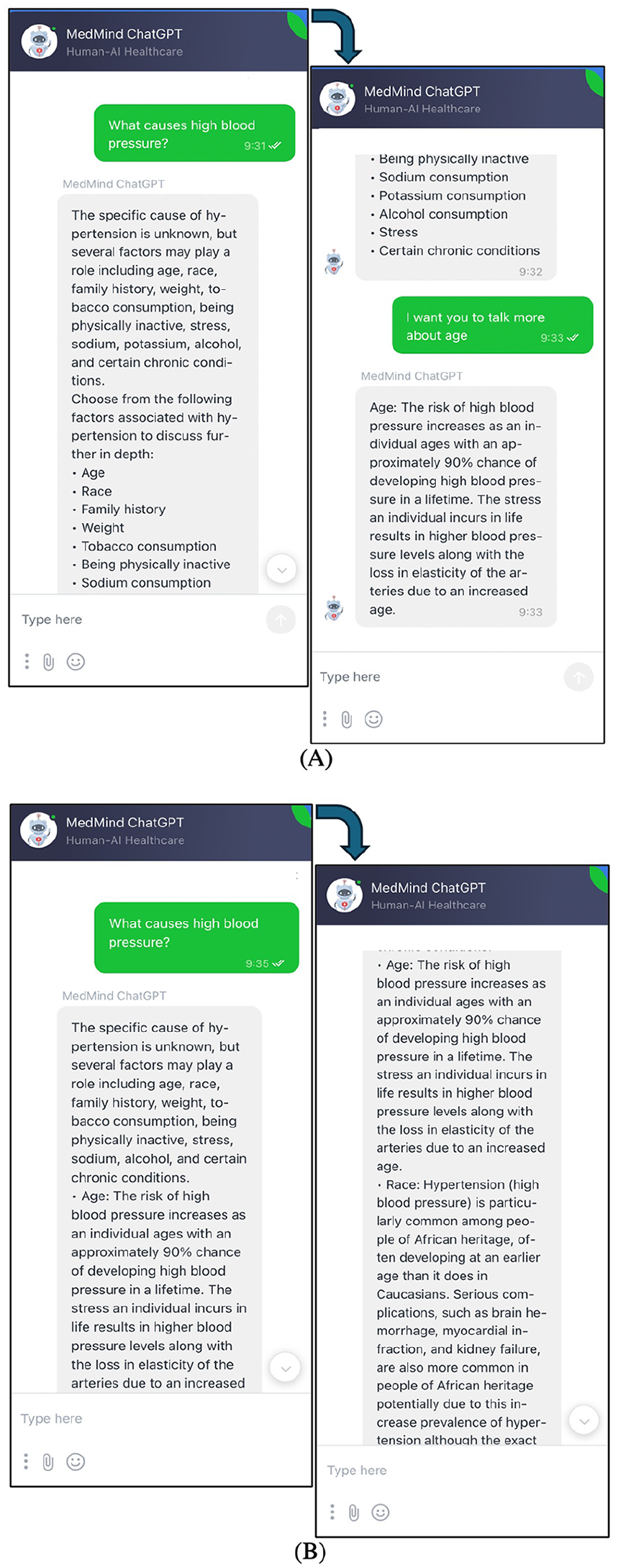

This design emulated a CA: the chatbot accepted diverse user input styles, responded according to one of four conditions, and adapted its responses to users’ conversation history and the context of their questions. The chatbot resided in a conversational channel on a prototype healthcare patient portal (Figure 1). Jivochat (2012) was used to implement the conversational channel on a WordPress website, and the researcher controlling the chatbot responded through this messenger system. Following approval from the Clemson University Institutional Review Board (IRB) (IRB2023-0414), we began recruitment for the study. All participants were required to be between 18 and 32 years old and able to read, write, and speak English. Participants were recruited from Clemson University’s campus and from the general population. Additionally, to be included in the analysis, participants had to pass the Short Assessment of Health Literacy—English (SAHL-E) developed by Lee et al. (2010). This ensured that all analyzed participants had sufficient background knowledge of basic health literacy concepts; those who did not pass were excluded from the analysis. Interviews were conducted in person after participants interacted with the chatbot. After participants gave verbal consent and interacted with the CA, interviews began with questions about their positive and negative perceptions of the chatbot. Participants were then asked questions specifically about the information presentation of the healthcare chatbot and how they would like future chatbots to communicate health information (e.g., video, pictures, audio). Participants also reflected on future customization features of chatbots and shared their views on how chatbots could be improved to help them learn about healthcare topics. All interviews were recorded with an Evida V618 device.

Figure 1. Chatbot designs used for the study: (A) conversational style and (B) informative style.

We used Otter.ai (2016) to transcribe the interviews and analyzed the transcripts with inductive thematic analysis (Braun & Clarke, 2006), using Atlas.ti (1993) for coding. In this inductive approach, we derived meaning and identified significant themes directly from the underlying data. The process began with an examination of the interview transcripts to gain a comprehensive understanding of the data. Next, the first author analyzed the transcripts to develop initial codes specifically related to participants’ positive and negative perspectives on their interaction with the chatbot. Both authors then reviewed and evaluated these codes and identified initial themes reflecting prevalent patterns in the data; these themes were refined throughout the analysis of the interviews. While our analysis considers the comprehensive interaction experience with the CA, this paper specifically analyzes only one manipulated variable: communication style (conversational or informative). Language style (technical or non-technical) is not examined in this qualitative analysis.

Results

In total, 44 participants (N = 44) were included in our analysis after excluding one participant who did not pass the SAHL-E. The mean age of the participants was 26.2 years (SD = 3.2 years). An equal number of males (n = 22) and females (n = 22) participated; the majority were graduate students (n = 33), while the rest were undergraduate students (n = 6) or non-students (n = 5). In addition, half of the participants (n = 22) used chatbots weekly, almost half (n = 20) seldom or never used chatbots, and few used chatbots daily or all the time (n = 2). Each of the four conditions had an equal number of participants (n = 11), and the average interaction time with the chatbot was 12 min and 22 s (SD = 2 min and 26.3 s).

Emerging Themes From the Interviews

The initial themes encompassed: (1) difficulty learning healthcare-related information, (2) a desire for chatbots to display information in users’ preferred way, (3) appreciation of aspects of the different chatbots’ communication styles, (4) user experience pertaining to all chatbot conditions, and (5) suggestions for improving healthcare chatbots. The final themes that emerged from the data were: (1) overall design and user experience, (2) perceptions unique to the conversational chatbot, and (3) perceptions unique to the informative chatbot.

Overall Design and User Experience

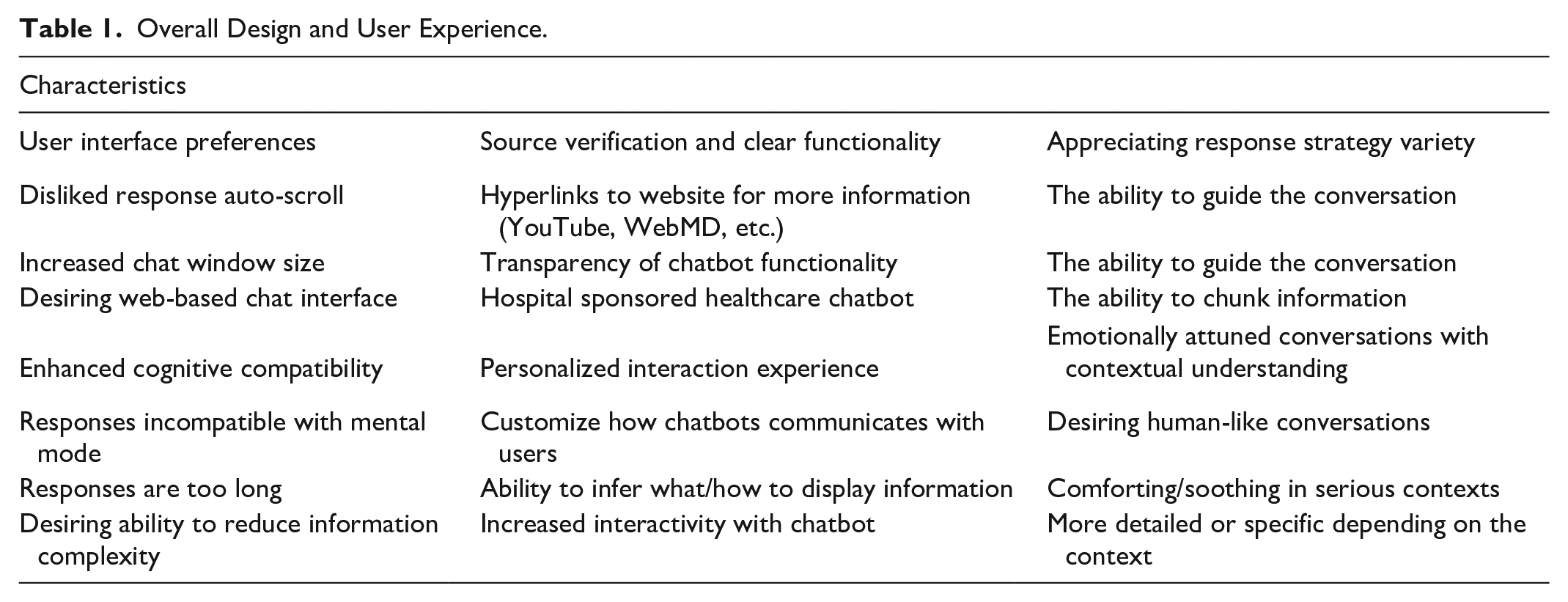

Perceptions of the overall design and user experience were similar across all chatbot conditions; these findings are summarized in Table 1. With respect to design, participants expressed discontent with the size of the chatbot window and the response auto-scroll implemented in the study. Moreover, participants generally disliked it when the chatbot sent multiple responses in a single interaction. One participant summarized this by saying, “I disliked when it tried to do multiple messages at once. Especially because the box itself is thin but long . . . when another one popped up, it took the text very far away, and I had to keep scrolling back up” [P14]. Furthermore, participants suggested the option of a dedicated web-based chat interface instead of the conversational channel implemented in this study, citing its ability to “structure and present” [P33] health information. Regarding their experiences with the chatbot, participants generally wanted less complex information and the ability to customize how chatbots interact with them. Participants suggested different modalities of communication as a means to accomplish this; as one participant put it, “some people prefer text, some people prefer audio, [others] prefer audio and video together, right? I like videos . . . [and] I need to hear, but [for] explanation . . . the ideal chatbot . . . can give a video with audio cues, explaining . . . high blood pressure” [P18]. Participants also offered suggestions for enhancing the interactivity of conversational agents. Many expressed frustration with AIs struggling to grasp the specific context of certain questions, while others voiced discontent over the challenge of phrasing their inputs effectively.
Underscoring this theme, P14 compared chatbot responses to internet searches, saying “some AIs are very blind and will pop up the first Google search when it is the third tab down that is the true answer . . . so being able to [give] it more information . . . so it can adjust its parameters” [P14]. Thus, the interviews highlight the importance of aligning future AI interactions with users’ expectations by formatting responses according to users’ preferred interaction style.

Table 1. Overall Design and User Experience.

Perceptions Unique to Conversational Chatbot

Participants in the conversational condition discussed their chatbot more positively than participants who interacted with the informative chatbot. These participants described aspects of the chatbot they appreciated that were unique to the conversational condition. One such aspect was the chatbot’s interaction freedom. One participant stated they “had the freedom to explore more than if it was just a normal ‘learning module’ . . . It was nice that you could keep probing and ask questions” [P4]. Participants also specifically appreciated the conversational aspects of the chatbot, such as its offering follow-up options when there was something else “I need to know about high blood pressure . . . It was giving me options. I can talk more about this. And I can talk more about that. So it was beneficial.” [P13]. In addition, participants in this condition found their chatbot more understandable than participants in the informative condition did. P5 remarked that the language of the conversational chatbot is “good because sometimes it uses informal and conversational communication . . . it’s good to have, like broader audience . . . and [that it] gives options on what could be next.”

Perceptions Unique to Informative Chatbot

While participants who interacted with the informative chatbot also appreciated aspects of its language use and understandability, some of them perceived markedly greater learning difficulty. A common theme among these participants was that the chatbot’s responses were too long, with one participant saying “[it] gave me a lot of information, which is important, but also, it’s a lot of things to read. . . so I was confused at the end” [P7]. This quote illustrates a common scenario in which users sought specific, concise answers but perceived the chatbot’s responses as complex explanations. Exchanges such as these led participants in the informative condition to express the need for more interactive chatbots. P2 highlighted this concern, expressing “for a long text, it is so much, so it is hard to learn something about that. And I think interactive is the best option and quick option to get like faster answers.” Another participant likened the chatbot’s communication style to a textbook, noting that when “a person is explaining to you, they know how to break that information down in a more digestible way” [P14].

Discussion

The results highlight nuanced factors in the design of a healthcare chatbot, emphasizing the impact of communication style and language on user perspectives. The study offers insights that apply to chatbot design generally, such as the reading disruption that overly quick responses can cause, while also emphasizing the importance of tailoring chatbot interactions to specific contexts. For instance, it underscores the significance of considering the specific health-related issues being discussed and users’ emotional states when responding to health-related queries. The unique findings for the conversational and informative conditions reveal nuanced user preferences for how CAs display health information. However, because each condition included only 11 participants, future research should validate the identified themes with larger samples that permit tests of statistical significance. One limitation of the study is the short time participants interacted with the chatbot, which may not capture its long-term effectiveness or user experience. Future research should conduct longitudinal studies with chatbots that use various information presentations to assess sustained impact and user engagement. Another limitation is the controlled environment: the chatbot displayed answers uniformly to all participants, lacking the robustness often observed in real-world interactions with contemporary conversational agents. This may limit the study’s ability to replicate the varied and dynamic scenarios in which users typically engage with modern chatbots. Nonetheless, the findings support the need for future research on design and implementation strategies for chatbots within specific contexts.
Because previous research on information system technology has generally placed little emphasis on the context of use, questions have arisen about whether findings in this field apply to real-life settings (Nielsen & Sahay, 2022; Venkatesh et al., 2011). Recent work by Hsu et al. (2023) attempts to bridge this gap by examining information presentation styles in e-commerce, demonstrating a clear effect of the level of intrinsic and extrinsic task complexity for chatbots on user experience. Yet this understanding is limited to business contexts (Hsu et al., 2023). Adopting a similar perspective, this paper addresses the existing knowledge gap regarding information presentation designs for healthcare-based chatbots and conversational agents. One design implication is the effect of communication style on the extrinsic task complexity of seeking knowledge about blood pressure. Participants in our study generally reported the conversational chatbot to be more understandable and to offer greater interaction freedom than the informative chatbot, and many participants who engaged with the informative chatbot reported high information complexity. These findings highlight the need for healthcare chatbots to present information that is easily understood and to communicate with contextual considerations in mind. Furthermore, the limited understanding of diverse contextual factors across distinct domains (e.g., health, e-commerce, customer service) suggests that findings from the literature on general chatbots may not transfer to chatbots built for specific purposes. To bridge this gap, improved user and context models need to be developed for the needs of specific users across different conversational contexts (Brandtzaeg, 2018).

This perspective advocates analyzing and redesigning the interaction between humans and conversational agents, not just for specific interaction sequences but with the intention of enhancing generative responses for a diverse range of users within various conversational contexts (Brandtzaeg, 2018). As a result, designers of future conversational agent systems must gain insight into users’ desires and needs and the ways they use chatbots (Brandtzaeg, 2018). Given the complex and technical nature of health-related information (“Understanding Health Literacy,” n.d.), future work should ensure that the interaction and presentation of information align with users’ expectations and preferences to enhance their understanding of healthcare information.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.