Abstract

To facilitate the adoption of extended reality (XR) technologies in education, various interventions, such as XR technical skills training and user-friendly authoring toolkits, have been designed for educators. However, traditional assessment tools often fall short because they focus primarily on intervention outcomes, such as usability, rather than on educators’ acceptance and readiness. This study aimed to bridge this gap by developing a new metric that assesses educators’ preparedness to integrate XR technologies into their teaching practices. Forty-one participants completed the developed survey before and after creating XR lessons. Exploratory and confirmatory factor analyses revealed two key factors: perceived XR authoring competency and perceived XR’s educational values. The developed survey can be used to capture educators’ perceptions of barriers and needs, providing practitioners with targeted feedback to enhance XR applications in educational settings.

Introduction

Integrating extended reality (XR) technologies into educational settings can enhance active learning by providing immersive experiences (Huang et al., 2016). Nevertheless, the widespread adoption of XR in education has been limited by several substantial barriers. Many educators lack the technical skills required to use XR technologies effectively (Alalwan et al., 2020; Ashtari et al., 2020; Manuri & Sanna, 2016). For example, a large-scale survey of 3,651 teachers found that 92% rated their digital skills for creating augmented reality (AR) content as low (Sánchez-Cruzado et al., 2021). Furthermore, the educational community has not fully recognized XR’s potential benefits, yet educators must be aware of these benefits for digital learning technologies such as XR to be applied in the classroom (Camilleri & Camilleri, 2017; Venkatesh & Davis, 2000; Wright et al., 1995). Finally, XR authoring toolkits are complex and often assume that users have scripting and programming skills (Gaspar et al., 2020; Yang et al., 2020), which poses a challenge for educators without technical backgrounds.

Previous efforts to address these barriers have included providing XR-related technical skills training and facilitating the authoring process with tools designed for non-technologist educators (Cerqueira & Kirner, 2012; Kim et al., 2023). Despite these efforts, measuring their effectiveness remains challenging because metrics for assessing educators’ readiness to create XR modules are lacking.

Traditional metrics for evaluating XR authoring tools have focused on tool outcomes, such as usability and performance (Dengel et al., 2022), rather than on educators’ acceptance of and readiness to use XR. For example, the Technology Acceptance Model (TAM) predicts whether users are likely to use XR by assessing the tool’s perceived usefulness and ease of use (Venkatesh & Davis, 2000). However, it is also important to measure how prepared educators are to develop XR modules, because this preparedness underlies the successful integration of XR into education. Measuring it helps identify how to lower barriers to entry so that educators can more easily integrate XR into their teaching practices.

Another alternative, the System Usability Scale (SUS), captures usability after users interact with XR tools (Brooke, 1996). Because the SUS focuses on the usability of the tool, it does not address educators’ readiness to integrate XR, and because it is administered after use, it does not support the pre- and post-use comparison of perceptions needed for evidence-based evaluation. Interacting with an XR authoring tool may lower educators’ thresholds for integrating XR into their teaching practices; however, this effect cannot be fully evaluated without comparing educators’ perceptions before and after tool use. In addition, these existing surveys rely on generic items; thus, they neither align with the specific benefits associated with XR (e.g., enhancing student engagement) nor capture XR’s unique affordances or requirements.

Given these gaps, a survey is needed that measures educators’ preparedness for creating XR modules and offers an educator-centered evaluation. This study aims to design a survey instrument that (1) focuses on educators’ perspectives and needs, (2) explicitly addresses the unique affordances and requirements of XR in education, and (3) can be administered before and after interventions to capture changes in educators’ perceptions. The objectives are to develop a questionnaire from items drawn from the literature, to identify its factors through exploratory factor analysis (EFA), and to validate its structure with confirmatory factor analysis (CFA).

Initial Survey Development

This paper followed the survey design procedure suggested by DeVellis and Thorpe (2021). The process began by identifying the potential factors to be measured and creating an item pool. After this initial pool was reviewed, the survey was administered to participants, and the items were then refined based on the EFA and CFA results.

Factors Affecting Educators’ Readiness for XR Authoring

Educators’ preparedness for XR implementation is influenced by several factors identified through the literature review: self-efficacy, challenges faced in creating XR lessons, and perception of XR’s educational value.

Self-efficacy beliefs, which are central to effective technology integration, involve not only possessing the necessary skills but also having the confidence to execute them effectively in various scenarios (Bandura, 1977). This confidence is often lacking: studies show that many educators feel underprepared to use technology (Sánchez-Cruzado et al., 2021; Tzima et al., 2019).

Challenges in creating XR lessons are multifaceted, including limited digital proficiency (Akçayır & Akçayır, 2017; Ashtari et al., 2020), the technical complexity of XR toolkits (Gaspar et al., 2020; Nebeling & Speicher, 2018; Yang et al., 2020), and the time-consuming nature of XR authoring (Alalwan et al., 2020; Horst et al., 2022; Kim et al., 2024). Semi-structured interviews conducted by Ashtari et al. (2020) indicated that five of six domain experts lacked the computer skills and confidence to develop XR content. When interacting with XR authoring tools, these experts were also unsure how to begin and what steps to take next. Additionally, educators often have limited time because of existing teaching responsibilities, which further complicates the integration of XR technologies (Kim et al., 2024).

Another barrier to XR adoption in education is educators’ limited understanding of XR’s advantages. TAM suggests that the perceived usefulness of a technology significantly influences its adoption (Venkatesh & Davis, 2000). Thus, without a clear understanding of XR’s benefits, educators are unlikely to incorporate XR into their educational settings (Camilleri & Camilleri, 2017; Wright et al., 1995).

Affordances and Requirements in XR-Based Learning

The survey also incorporated key affordances and requirements for XR-based learning, such as ease of learning (Ashtari et al., 2020; Cubillo et al., 2014; Sánchez-Cruzado et al., 2021), ease of use (Gandy & MacIntyre, 2014; Mota et al., 2018; Park, 2011), efficiency (Horst et al., 2022; Kim et al., 2024; Scrivner et al., 2016), interactivity (Dengel et al., 2022; Huang et al., 2016; Moorhouse & Jung, 2017), realistic scenarios (Giasiranis & Sofos, 2016; Huang et al., 2016; Voukelatou, 2019), customizability (Aguayo & Eames, 2023; Kim et al., 2024; Klopfer & Squire, 2008), and alignment with educational goals (Kim et al., 2024). These affordances and requirements were built into the survey items so that the items reflect the real-world applications and challenges of using XR in education.

Additional considerations ensured the generalizability of the survey instrument and addressed the research objectives. Items specific to particular domains were excluded to maintain broad relevance across educational fields. Furthermore, the survey was designed to be applicable both before and after potential interventions.

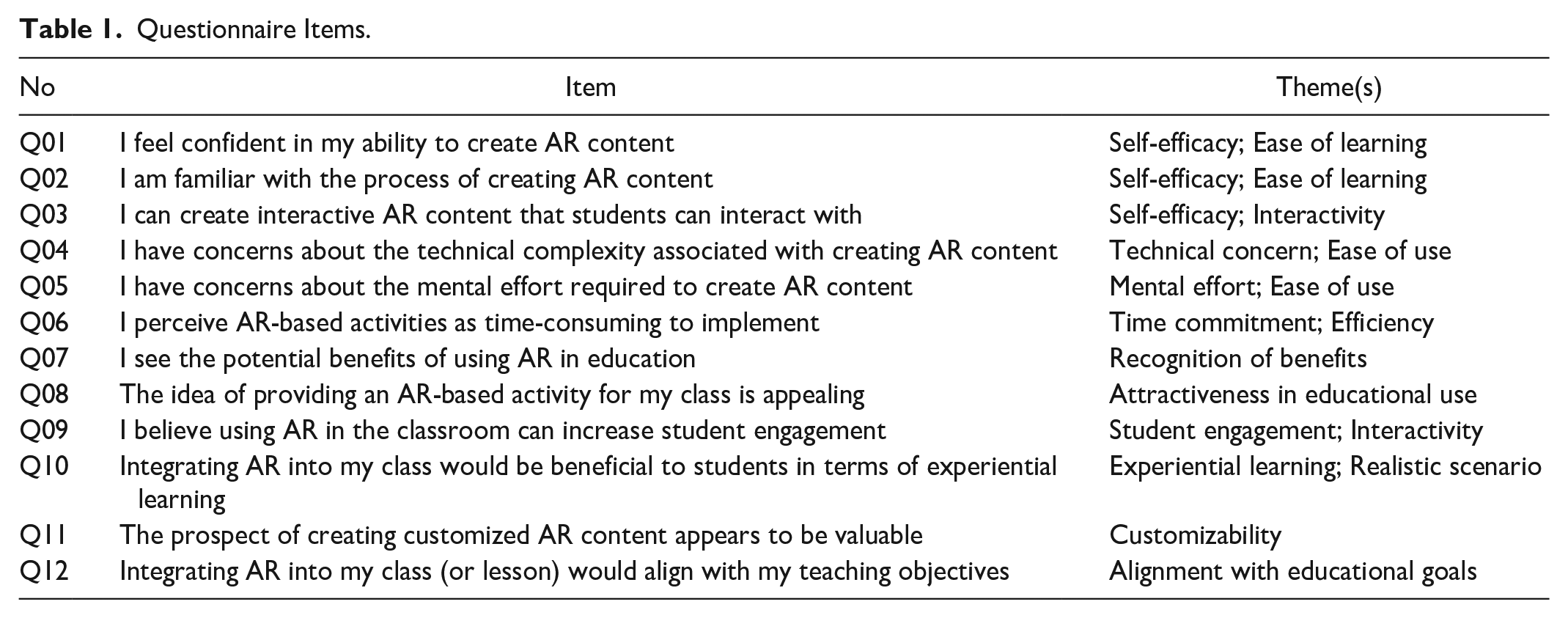

The initial questionnaire items are presented in Table 1. Questions 1 to 3 relate to self-efficacy, questions 4 to 6 to challenges faced in authoring XR lessons, and questions 7 to 12 to the perception of XR’s educational value. Each item was also designed to incorporate XR affordances and requirements (e.g., interactivity, ease of learning). The initial items were written by the authors or adapted from other validated surveys (Ahn et al., 2004; Brooke, 1996; Hart & Staveland, 1988; Horst et al., 2022; Venkatesh & Davis, 2000). Because the survey was validated with AR in an educational setting, the specific term “AR” was used in the initial questionnaire items for this study (Table 1).

Table 1. Questionnaire Items.

Method

Participants

Forty-one participants (34 males, 7 females) were recruited for the study, with an average age of 47.3 years (SD = 15.3). Of these, 37 were flight instructors and four were pilots. All had received aviation weather education from sources such as pilot ground schools, flight academies, and colleges. The flight instructors’ teaching experience averaged 11.5 years (SD = 12.6). Three participants had experience with 3D authoring tools. This study was approved by the Iowa State University Institutional Review Board.

Task and Procedure

The experiment began with informed consent and a demographic survey. Participants then completed the survey for the first time. Next, they used an AR authoring tool designed for aviation weather training (Kim et al., 2023), first watching a tutorial video explaining how to use it. Once they felt comfortable with the interface, participants created three AR lessons related to aviation weather training. After authoring all three lessons, they completed the survey again.

Data Analysis

EFA was conducted with varimax rotation, and the number of factors was determined by scree plot analysis and the Kaiser rule, which retains factors with eigenvalues greater than 1 (Costello & Osborne, 2019; Kaiser, 1960). The suitability of the data for EFA was evaluated with the Kaiser-Meyer-Olkin (KMO) measure and Bartlett’s test of sphericity. All datasets used in this study had KMO values greater than 0.5 and Bartlett’s test p-values less than .05, indicating that they were appropriate for factor analysis. Items with factor loadings less than 0.5 were removed (Snedecor & Cochran, 1980).
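Both suitability checks can be computed from the item correlation matrix alone. The Python sketch below is a minimal illustration, assuming responses are held in a NumPy array with one row per completed survey and one column per item; the function names `bartlett_sphericity` and `kmo_overall` are hypothetical, the data are synthetic, and the study's own analysis software is not specified here. It applies the same criteria stated above (KMO greater than 0.5, Bartlett's p less than .05).

```python
import numpy as np
from scipy import stats


def bartlett_sphericity(data: np.ndarray) -> tuple[float, float]:
    """Bartlett's test of sphericity (H0: the item correlation matrix is an identity matrix).

    data: array of shape (n_respondents, n_items).
    Returns the chi-square statistic and its p-value.
    """
    n, p = data.shape
    corr = np.corrcoef(data, rowvar=False)
    # log|R| is 0 for uncorrelated items and becomes more negative as items share variance
    _, log_det = np.linalg.slogdet(corr)
    statistic = -(n - 1 - (2 * p + 5) / 6) * log_det
    dof = p * (p - 1) / 2
    return statistic, stats.chi2.sf(statistic, dof)


def kmo_overall(data: np.ndarray) -> float:
    """Overall Kaiser-Meyer-Olkin measure of sampling adequacy (between 0 and 1)."""
    corr = np.corrcoef(data, rowvar=False)
    inv = np.linalg.inv(corr)
    # Partial correlations (each pair controlling for all other items) from the inverse matrix
    scale = np.sqrt(np.outer(np.diag(inv), np.diag(inv)))
    partial = -inv / scale
    off_diag = ~np.eye(corr.shape[0], dtype=bool)
    r_sq = np.sum(corr[off_diag] ** 2)
    partial_sq = np.sum(partial[off_diag] ** 2)
    return r_sq / (r_sq + partial_sq)


# Synthetic example: 82 responses (e.g., 41 participants x 2 administrations) to 6 items
# that are all driven by one latent trait plus noise
rng = np.random.default_rng(0)
latent = rng.normal(size=(82, 1))
responses = latent + 0.5 * rng.normal(size=(82, 6))
chi_sq, p_value = bartlett_sphericity(responses)
print(f"KMO = {kmo_overall(responses):.3f}; Bartlett chi2 = {chi_sq:.1f}, p = {p_value:.2g}")
# Suitability criteria used in the study: KMO > 0.5 and Bartlett p < .05
print("suitable for EFA:", kmo_overall(responses) > 0.5 and p_value < 0.05)
```

Working from `slogdet` rather than `det` keeps the Bartlett statistic numerically stable when the correlation matrix is close to singular, which can happen with small samples and many items.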

The stability of the factor structure was assessed through two separate EFAs on the pre- and post-intervention data, whose results were compared to identify any structural changes. An EFA on a pooled dataset containing both pre- and post-experiment samples then tested whether the questionnaire applies regardless of when it is administered. Reliability analysis was also performed to evaluate the internal consistency of the items (Cronbach, 1951).
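Cronbach's α for a factor compares the sum of its item variances with the variance of the summed score. A minimal Python sketch follows, assuming a NumPy array of responses whose columns follow the initial item order (Q1 to Q12); the `FACTORS` mapping mirrors the initial three-construct grouping described under Initial Survey Development, and the helper names are hypothetical rather than the study's code.

```python
import numpy as np


def cronbach_alpha(items) -> float:
    """Cronbach's alpha for the items of one factor.

    items: array-like of shape (n_respondents, k_items).
    alpha = k / (k - 1) * (1 - sum of item variances / variance of the total score)
    """
    items = np.asarray(items, dtype=float)
    k = items.shape[1]
    item_variances = items.var(axis=0, ddof=1)
    total_variance = items.sum(axis=1).var(ddof=1)
    return k / (k - 1) * (1 - item_variances.sum() / total_variance)


# Hypothetical column groupings (0-based) following the initial item numbering
FACTORS = {
    "confidence (Q1-Q3)": [0, 1, 2],
    "challenges (Q4-Q6)": [3, 4, 5],
    "educational value (Q7-Q12)": list(range(6, 12)),
}


def alpha_by_factor(responses) -> dict:
    """Compute alpha separately for each factor's columns."""
    responses = np.asarray(responses, dtype=float)
    return {name: cronbach_alpha(responses[:, cols]) for name, cols in FACTORS.items()}
```

The same function can be applied to the pre-experiment, post-experiment, and pooled arrays in turn; only the column groupings change when the factor structure changes.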

Following the EFA, CFA was performed to verify the structure of the questionnaire. Model fit was assessed with several indices: the ratio of the chi-square statistic to its degrees of freedom (χ²/df), which assesses fit relative to the model’s complexity (Wheaton et al., 1977); the Comparative Fit Index (CFI) (Bentler, 1990; Hu & Bentler, 1999) and the Tucker–Lewis Index (TLI), both of which compare the hypothesized model with a baseline model (Bentler, 1990; Tucker & Lewis, 1973); the Adjusted Goodness of Fit Index (AGFI), which penalizes unnecessary parameters (Baumgartner & Homburg, 1996); the Parsimony Normed Fit Index (PNFI), which evaluates whether the model provides a simple yet adequate account of the data (Mulaik et al., 1989); the Root Mean Square Error of Approximation (RMSEA), which estimates how well the model approximates the population covariance matrix (MacCallum et al., 1996); and the Standardized Root Mean Square Residual (SRMR), which measures the discrepancies between the observed correlations and those predicted by the model (Hu & Bentler, 1999).
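Several of these indices are simple functions of the model and baseline (independence-model) chi-square statistics. The sketch below computes χ²/df, CFI, TLI, PNFI, and RMSEA from their standard definitions; AGFI and SRMR are omitted because they require the observed and model-implied covariance matrices. The `ChiSquareFit` container and the example numbers are hypothetical and are not the study's results.

```python
import math
from dataclasses import dataclass


@dataclass
class ChiSquareFit:
    chi_sq: float           # chi-square of the hypothesized model
    df: int                 # degrees of freedom of the hypothesized model
    baseline_chi_sq: float  # chi-square of the independence (null) model
    baseline_df: int        # degrees of freedom of the independence model
    n: int                  # sample size


def fit_indices(fit: ChiSquareFit) -> dict[str, float]:
    excess = max(fit.chi_sq - fit.df, 0.0)  # model misfit beyond its df
    baseline_excess = max(fit.baseline_chi_sq - fit.baseline_df, 0.0)
    denominator = max(excess, baseline_excess)
    baseline_ratio = fit.baseline_chi_sq / fit.baseline_df
    nfi = (fit.baseline_chi_sq - fit.chi_sq) / fit.baseline_chi_sq
    return {
        # Chi-square relative to model complexity
        "chi2/df": fit.chi_sq / fit.df,
        # CFI: 1 minus model misfit relative to baseline misfit
        "CFI": 1.0 if denominator == 0 else 1.0 - excess / denominator,
        # TLI: compares the chi2/df ratios of the model and the baseline
        "TLI": (baseline_ratio - fit.chi_sq / fit.df) / (baseline_ratio - 1.0),
        # PNFI: normed fit index scaled by the parsimony ratio df / baseline df
        "PNFI": (fit.df / fit.baseline_df) * nfi,
        # RMSEA: misfit per degree of freedom, adjusted for sample size (N - 1)
        "RMSEA": math.sqrt(excess / (fit.df * (fit.n - 1))),
    }


# Hypothetical values for illustration only
example = ChiSquareFit(chi_sq=80.0, df=40, baseline_chi_sq=600.0, baseline_df=55, n=82)
for name, value in fit_indices(example).items():
    print(f"{name}: {value:.3f}")
```

Structural equation modeling packages report these indices directly; the point of the sketch is only to show which quantities each index depends on, for example that RMSEA, unlike CFI and TLI, involves the sample size.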

Results

Exploratory Factor Analysis

Pre-Experiment Dataset. A KMO value of 0.770 and a Bartlett’s test p-value of < .001 were obtained. The EFA revealed a three-factor structure: three items (Q1–3) for confidence in XR authoring, three items (Q4–6) for perceived challenges in XR authoring, and six items (Q7–12) for perceived XR’s educational values. Cronbach’s α values for the three factors were .685, .791, and .908, respectively.
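The factor counts reported in this section follow from the retention and rotation rules given under Data Analysis. The Python sketch below illustrates that pipeline: it counts eigenvalues above 1 (the Kaiser rule), extracts loadings for the retained factors, applies varimax rotation, and keeps items whose largest absolute loading is at least 0.5. Principal-component extraction, the function name `kaiser_varimax_efa`, and the synthetic two-factor data are assumptions for illustration; the scree plot inspection used in the study is not automated here.

```python
import numpy as np


def varimax(loadings: np.ndarray, max_iter: int = 100, tol: float = 1e-6) -> np.ndarray:
    """Orthogonal varimax rotation of a (n_items, n_factors) loading matrix."""
    p, _ = loadings.shape
    rotation = np.eye(loadings.shape[1])
    criterion = 0.0
    for _ in range(max_iter):
        rotated = loadings @ rotation
        # Varimax gradient target, projected onto the nearest orthogonal matrix via SVD
        target = rotated ** 3 - rotated @ np.diag(np.sum(rotated ** 2, axis=0)) / p
        u, s, vt = np.linalg.svd(loadings.T @ target)
        rotation = u @ vt
        previous, criterion = criterion, np.sum(s)
        if previous != 0 and criterion / previous < 1 + tol:
            break  # criterion has stopped improving
    return loadings @ rotation


def kaiser_varimax_efa(data: np.ndarray, min_loading: float = 0.5):
    """Kaiser rule for factor count, varimax rotation, and a loading cutoff for items."""
    corr = np.corrcoef(data, rowvar=False)
    eigvals, eigvecs = np.linalg.eigh(corr)
    order = np.argsort(eigvals)[::-1]  # largest eigenvalues first
    eigvals, eigvecs = eigvals[order], eigvecs[:, order]
    n_factors = int(np.sum(eigvals > 1.0))  # Kaiser rule: eigenvalue > 1
    # Principal-component loadings for the retained factors (an assumed extraction method)
    loadings = eigvecs[:, :n_factors] * np.sqrt(eigvals[:n_factors])
    rotated = varimax(loadings)
    keep = np.max(np.abs(rotated), axis=1) >= min_loading
    return n_factors, rotated, keep


# Synthetic data: 12 items, items 1-6 driven by factor A and items 7-12 by factor B
rng = np.random.default_rng(42)
factors = rng.normal(size=(82, 2))
pattern = np.zeros((2, 12))
pattern[0, :6] = 1.0
pattern[1, 6:] = 1.0
data = factors @ pattern + 0.6 * rng.normal(size=(82, 12))
n_factors, rotated, keep = kaiser_varimax_efa(data)
print(n_factors, keep.all())
print(np.round(rotated, 2))
```

Running the same function separately on pre-experiment, post-experiment, and pooled arrays is one way to compare how many factors each dataset supports.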

Post-Experiment Dataset. A KMO value of 0.707 and a Bartlett’s test p-value of < .001 were obtained. The EFA revealed a two-factor structure: six items (Q1–6) for perceived XR authoring competency and six items (Q7–12) for perceived XR’s educational values. Cronbach’s α values for the two factors were .817 and .912, respectively.

Pooled Dataset with Pre- and Post-Experiment Samples. A KMO value of 0.847 and a Bartlett’s test p-value of < .001 were obtained. As with the post-experiment dataset, the EFA revealed a two-factor structure: six items (Q1–6) for perceived XR authoring competency and six items (Q7–12) for perceived XR’s educational values. Cronbach’s α values for the two factors were .869 and .913, respectively.

Confirmatory Factor Analysis

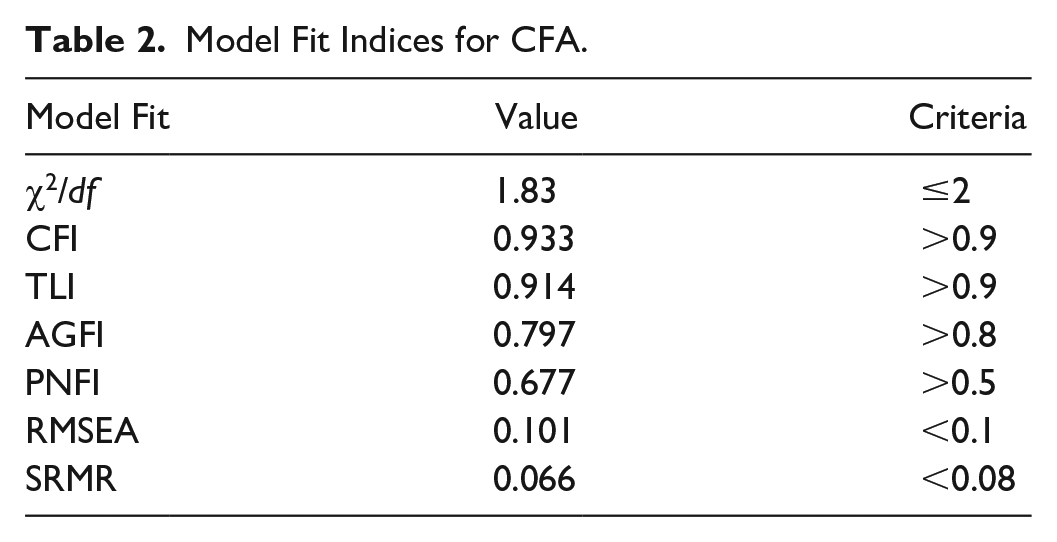

Initial Model. CFA on the pooled data, aimed at verifying the structure identified by EFA, initially indicated a poor model fit (χ²/df = 2.10; CFI = 0.899; TLI = 0.875; AGFI = 0.743; PNFI = 0.665; RMSEA = 0.116; SRMR = 0.085).

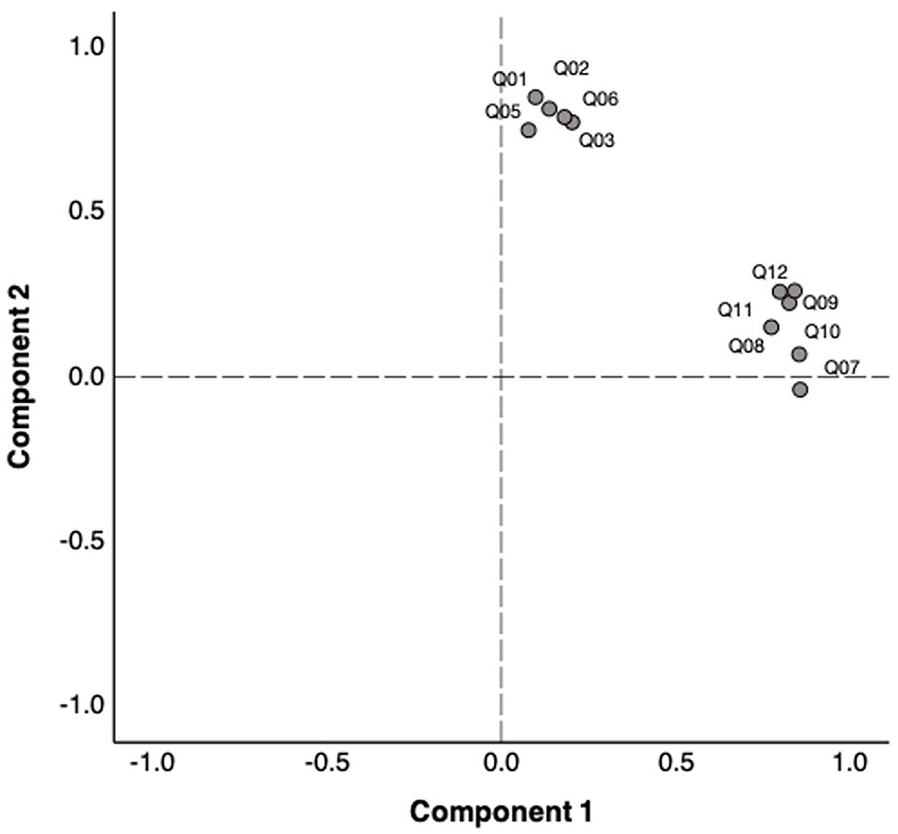

Final Model. To improve the model fit, the item related to concerns about technical complexity (Q4) was deleted because its R² of .339 was below the .40 threshold. This adjustment improved the model fit (Figure 1).
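For an item that loads on a single factor, the R² in a standardized CFA solution is the squared standardized loading, so the deletion rule can also be read as a loading cutoff. A short worked check, assuming Q4 loads only on the competency factor and that R² refers to the standardized solution (λ is the standardized loading, θ the standardized residual variance):

$$
R^{2}_{i} = \lambda_{i}^{2} = 1 - \theta_{i}, \qquad
\lambda_{\mathrm{Q4}} = \sqrt{.339} \approx .582 \;<\; \sqrt{.40} \approx .632
$$

In other words, the competency factor explained about 34% of the variance in Q4, short of the 40% minimum applied here.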

Principal component analysis with final model.

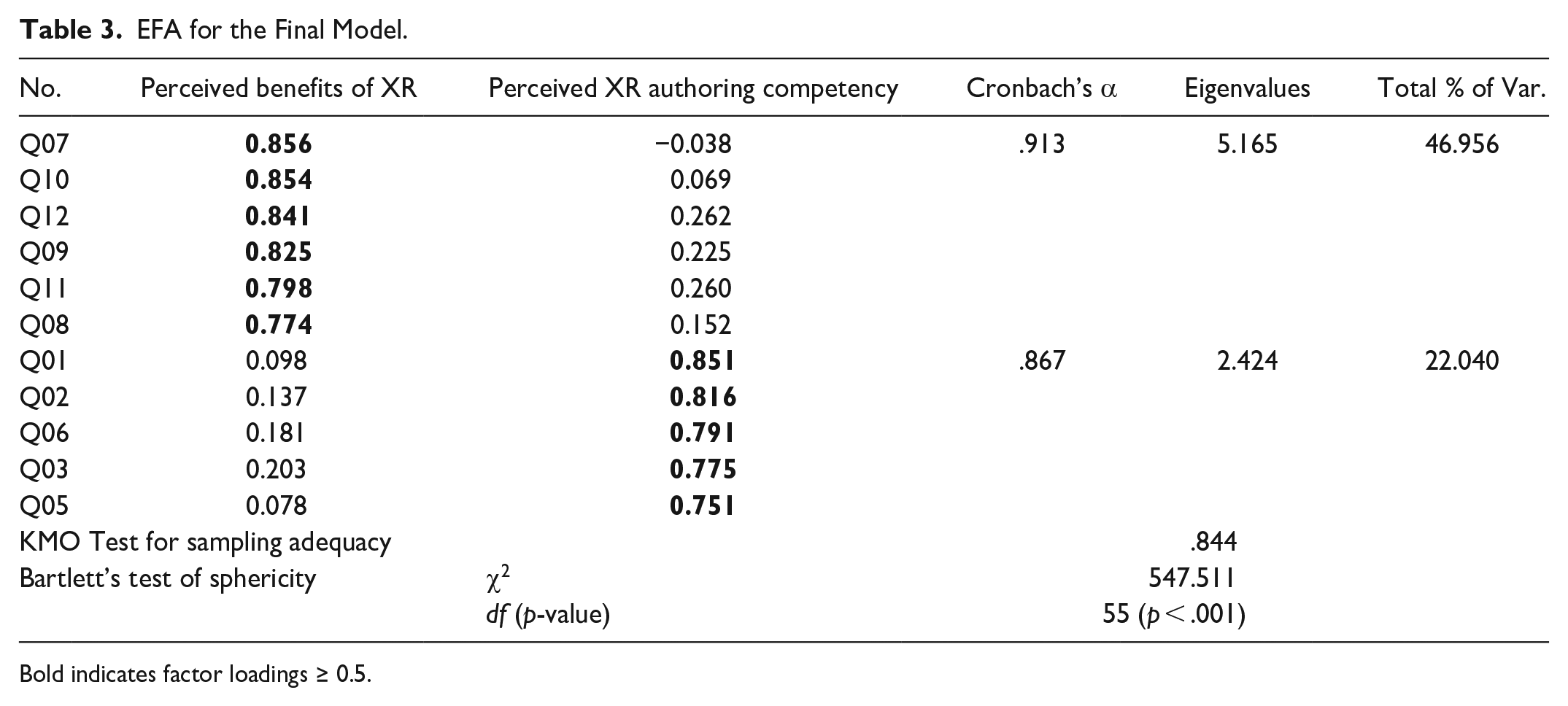

The final 11-item model showed a high KMO value (0.844), a significant Bartlett’s test result (p < .001), and a satisfactory model fit (χ²/df = 1.83; CFI = 0.933; TLI = 0.914; AGFI = 0.797; PNFI = 0.677; RMSEA = 0.101; SRMR = 0.066) (Tables 2 and 3). EFA on the final survey grouped the 11 items into two factors: perceived XR authoring competency (Q1–3 and Q5–6) and perceived XR’s educational values (Q7–12). Cronbach’s α values of .867 and .913 confirmed the internal consistency of the items (Table 3).
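RMSEA depends on sample size as well as on χ²/df, which helps explain why it remains near 0.10 even though χ²/df falls below 2 in the final model. As a rough consistency check, assuming the pooled sample contains N = 82 responses (41 participants, each surveyed twice) and using the common point-estimate formula with N − 1 in the denominator (some software uses N):

$$
\mathrm{RMSEA} = \sqrt{\frac{\max\left(\chi^{2}/df - 1,\ 0\right)}{N - 1}}, \qquad
\sqrt{\frac{2.10 - 1}{81}} \approx 0.1165, \qquad
\sqrt{\frac{1.83 - 1}{81}} \approx 0.1012
$$

These values agree with the reported RMSEA of 0.116 (initial model) and 0.101 (final model) to within the rounding of the published χ²/df ratios.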

Table 2. Model Fit Indices for CFA.

Table 3. EFA for the Final Model.

Bold indicates factor loadings ≥ 0.5.

Discussion

This study aimed to develop and validate a subjective survey instrument to measure educators’ preparedness for creating XR learning modules. It identified two major factors critical in assessing educators’ preparedness: perceived XR authoring competency and perceived XR’s educational values.

EFA on the pre-experiment data identified three factors: confidence in XR authoring, perceived challenges in XR authoring, and perceived XR’s educational values. However, EFA on the post-experiment data showed that these factors had consolidated into two: perceived XR authoring competency and perceived XR’s educational values. This shift from three factors to two after the authoring experience indicates that confidence and challenges merged into a single factor. This suggests that authoring XR lessons with the given tool may have increased educators’ confidence and reduced their perceived concerns, merging two previously distinct factors into one. EFA on the pooled dataset further supported this two-factor framework.

The survey addresses these gaps and offers practical contributions to integrating XR technologies into educators’ teaching practices. First, the survey gauges educators’ preparedness to develop XR lessons, providing insights into their readiness to integrate XR into teaching. It also identifies specific barriers, revealing areas where educators may need more support or resources (e.g., needing more time to become familiar with XR concepts, worrying about the additional time commitment, or being unsure how XR would benefit their lessons).

Second, the survey addresses the distinct affordances and needs associated with XR in educational settings. The items are specifically designed to deliver feedback relevant to educational XR applications. This feedback helps developers and researchers enhance the design of XR applications or strategies for implementing them in educational contexts.

Finally, the survey can be administered at different stages, such as before and after interventions (e.g., technical skills training or authoring tools). This allows educators’ readiness to be compared before and after such interventions, indicating how effective those interventions are. This insight is useful for continuously refining training programs, implementation strategies, or user-friendly authoring toolkits to better align with educators’ requirements.

Conclusion

This study developed and validated a survey instrument to measure educators’ preparedness for creating XR learning modules. Focusing on educator-centered evaluation, the instrument includes items that assess educators’ readiness and capture the identified affordances of XR. Exploratory and confirmatory factor analyses revealed two key factors: perceived XR authoring competency and perceived XR’s educational values. The developed survey provides tailored feedback on interventions designed to help educators use XR in educational contexts, enabling targeted improvements to XR technical training programs and user-friendly XR authoring tools. As a result, the survey can guide the development of effective XR integration strategies, promoting broader adoption of XR technologies in educational settings.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Partnership to Enhance General Aviation Safety, Accessibility, and Sustainability (PEGASAS), a Federal Aviation Administration (FAA) Center of Excellence for General Aviation under cooperative agreement 12-C-GA-ISU. Statements and opinions expressed in this paper do not necessarily reflect the position or policy of the US Government, and no official endorsement should be inferred.