Abstract

The rapid integration of artificial intelligence (AI) across various industries has given rise to human-AI teams (HATs), where collaboration between humans and AI may leverage their unique strengths. However, these teams often face performance challenges due to mismatches between human expectations and AI capabilities, hindering the effectiveness of these future workforce teams. To address these discrepancies, team training, particularly cross-training, has emerged as a promising intervention to align expectations and enhance team dynamics. This study explores the effects of different cross-training approaches and human/AI team role assignments on team training reactions and perceived task performance in an advertising co-creation task. The findings suggest that cross-training significantly improves both training reactions and task performance perceptions. By extending traditional team training methods to HATs, this research suggests that cross-training may serve as a viable strategy to improve team effectiveness and support the future workforce.

Introduction

The rapid adoption of artificial intelligence (AI) across industries is reshaping workplaces, giving rise to human-AI teams (HATs), where humans and AI collaborate interdependently toward shared goals (Chaudhary et al., 2023; Kadir et al., 2019; Makarius et al., 2020; O’Neill et al., 2022). Despite the potential to capitalize on unique strengths, HATs may underperform compared to traditional teams or only outperform in select situations, highlighting persistent effectiveness issues (Demir et al., 2016; McNeese et al., 2018). These poor results may stem from a misalignment between established human expectations and mental models for teamwork and the actual capabilities of AI teammates, reflecting a gap in understanding and integration (Endsley, 2023; McNeese et al., 2023).

To address these challenges, team training, specifically cross-training (i.e., training on different teammates’ roles), emerges as a promising solution from the HFES literature (Salas et al., 2008). Indeed, team training has effectively enhanced performance by aligning expectations and supporting shared mental models (SMMs) (Cannon-Bowers, 2007; Smith-Jentsch et al., 2001; Volpe et al., 1996). This promise has led to calls for more research on training’s impact on HATs (O’Neill et al., 2022; O’Neill et al., 2023), seeking avenues to overcome the cognitive and affective hurdles that hinder productive teaming. For example, while humans often view AI roles in creative tasks negatively (Wu & Wen, 2021), team training may positively influence cognitive biases that affect teaming abilities (Musick et al., 2021).

Despite this warrant, there is limited research specifically on HAT team training (National Academies of Sciences, Engineering, and Medicine [NASEM], 2022). The scholarship that does exist suggests that team training can enhance critical teamwork constructs like trust and coordination (Johnson et al., 2023; Walliser et al., 2023). Furthermore, strategies like cross-training have demonstrated performance benefits in the tangentially related human-robot teaming literature (Nikolaidis et al., 2013; Nikolaidis et al., 2015). However, there is a gap in understanding how training may influence participants’ perceptions of their HAT work. This gap emphasizes the need for research on cross-training approaches in HATs that are tailored to human experiences, aiming to improve both the depth and practical impact of training for human participants.

As such, our quasi-experimental study seeks to address this gap by examining the effects of different cross-training types and role assignments on training reactions and perceived task performance within HATs. In this investigation, we are guided by two research questions:

How do cross-training type and assigned HAT role affect participants’ reactions to team training for advertising co-creation tasks?

How do cross-training type and assigned HAT role affect perceived performance in advertising co-creation tasks?

By answering these questions, we contribute to the HFES training literature, demonstrating how team cross-training can be extended and adapted from traditional human settings to enhance human perceptions of their HAT efforts.

Methods

This online, quasi-experimental survey assessed the effect of cross-training type (positional modeling, positional clarification, or control; between subjects) and human/AI role assignment (editor/writer or writer/editor; between subjects) on training and team performance perceptions in an advertisement co-creation task. Participants were randomly assigned roles and paired with ChatGPT (3.5 turbo), which fulfilled the other team role. The study included a 15-min cross-training session and two 10-min co-creation tasks, with data collected via Qualtrics.

Recruitment and Participants

Participants were recruited using Prolific, an online recruitment platform that has been shown to produce high-quality responses (Douglas et al., 2023). Screening criteria for participating in the survey were: (a) age (18+), (b) English language fluency, and (c) advertising experience. In total, 204 participants completed the survey. The average age of participants was 36.33 years (SD = 10.85).

Experimental Design and Independent Variables

This study manipulated two between-subjects independent variables (IVs): teammate role and cross-training type. Role assignments were based on Siemon’s (2022) work on ideal AI team roles, with the writer (“creator”) focusing on creativity and the editor (“perfectionist”) on detail and consistency. Cross-training types, adapted from Marks et al. (2002) toward unique human-AI collaboration needs, were (a) positional clarification (i.e., theoretical role explanations of AI capabilities), (b) positional modeling (i.e., combined theory with demonstrations of AI capabilities), and (c) a control condition (i.e., individual training without cross-role exposure). These manipulations were studied together because theoretical and practical considerations make role and cross-training elements naturally intertwined (Blickensderfer et al., 1998). Time (post-training, post-task) was an additional IV when repeated measures were used.
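The crossing of the two between-subjects IVs can be illustrated with a short sketch. This is only an illustration under stated assumptions, not the study's actual procedure: the condition labels, the `assign_conditions` helper, the fixed seed, and the balanced round-robin allocation are all hypothetical, since the source states only that participants were randomly assigned.

```python
import itertools
import random

# Hypothetical labels for the two between-subjects factors described above.
HUMAN_ROLES = ["editor", "writer"]  # the AI teammate takes the other role
TRAINING_TYPES = ["positional_clarification", "positional_modeling", "control"]


def design_cells():
    """Return every (role, training type) combination: 2 x 3 = 6 cells."""
    return list(itertools.product(HUMAN_ROLES, TRAINING_TYPES))


def assign_conditions(participant_ids, seed=0):
    """Shuffle participants, then deal them round-robin into the cells.

    Assumption: balanced allocation. The source does not describe how
    assignment was balanced, so this is one plausible scheme only.
    """
    rng = random.Random(seed)
    ids = list(participant_ids)
    rng.shuffle(ids)
    cells = design_cells()
    return {pid: cells[i % len(cells)] for i, pid in enumerate(ids)}


if __name__ == "__main__":
    assignment = assign_conditions(range(204))
    for cell in design_cells():
        n = sum(1 for c in assignment.values() if c == cell)
        print(cell, n)
```

With 204 participants and six cells, a perfectly balanced allocation of this kind would place 34 participants in each cell; the actual cell sizes in the study are not reported in this section.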

Dependent Variables (DVs)

Participant training reactions were measured using an internally developed measure informed by Level 1 of Kirkpatrick and Kirkpatrick’s (2016) model, where participants rated the quality of their training post-training and post-tasks. For perceived task performance, participants were asked to assess their team’s collaboration on advertisement tasks for each task using a measure adapted from O’Neill et al. (2009). Both were measured using 5-point Likert-type scales. Additionally, we collected demographic information and individual difference measures related to participants’ AI experience and attitudes toward AI.

Results

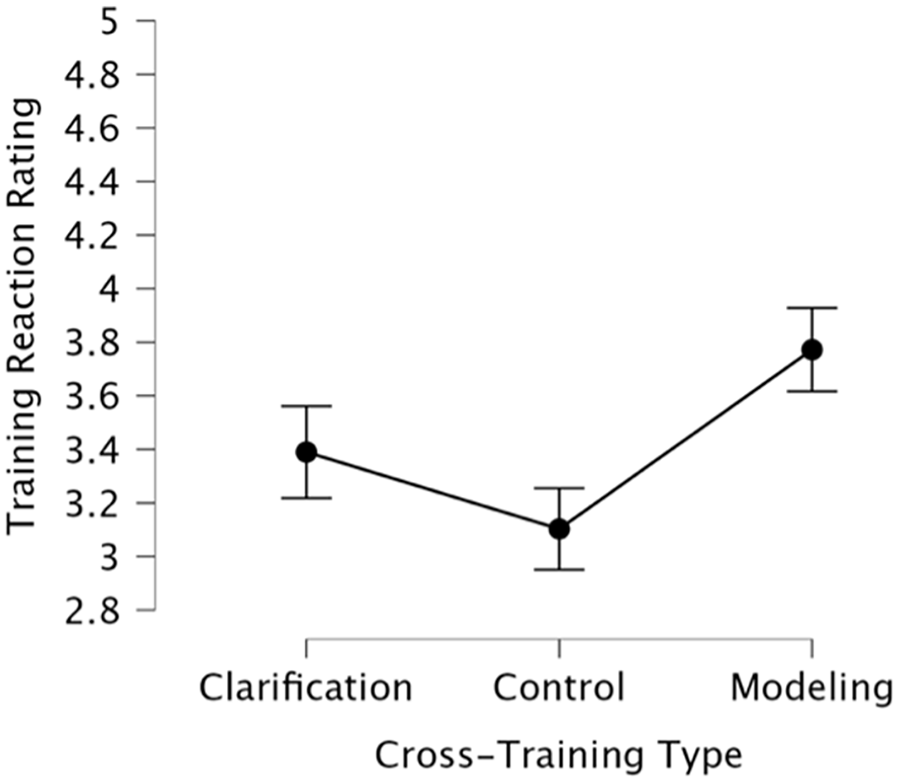

To address RQ1, we performed a repeated measures analysis of covariance (RMANCOVA) with a 2 (Role: Editor, Writer) × 3 (Training Type: Clarification, Control, Modeling) × 2 (Time: Task 1, Task 2) design, including participants’ attitudes toward AI and AI experience (both measured with Likert-type items) as covariates. Training type had a significant effect on training ratings (F[2, 196] = 11.89, p < .001, η² = .10), with positional modeling (t[196] = 4.64, p < .001) and positional clarification (t[196] = 2.75, p = .013) both rated higher than the control; positional modeling and clarification did not differ significantly (t[196] = −1.87, p = .063). The effects of role assignment and the interactions were not significant. See Figure 1 for the post-hoc comparisons.

Figure 1. Post-hoc comparison of cross-training type on reaction rating.

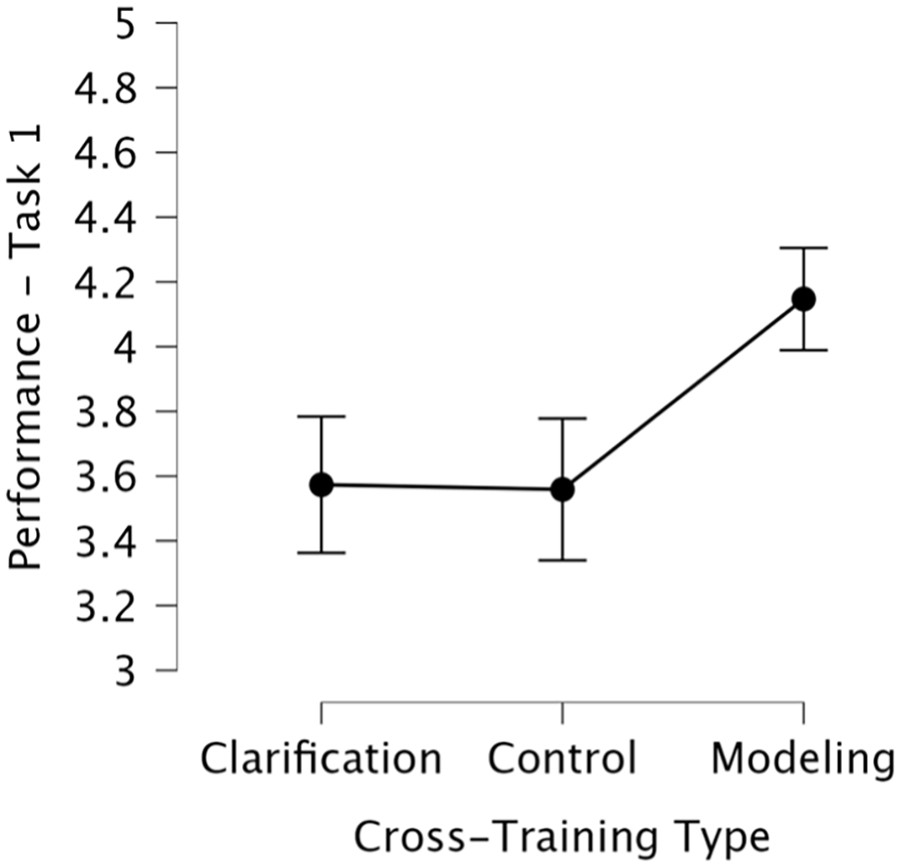

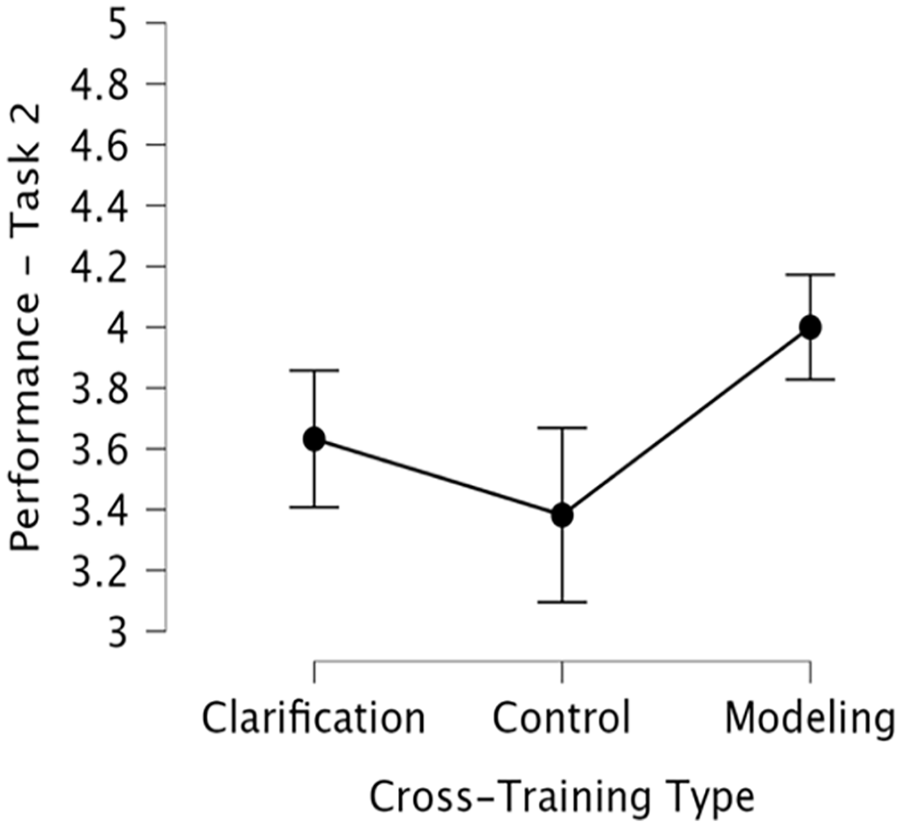

For RQ2, separate ANCOVAs for each task, controlling for the same covariates, showed a significant effect of training type (Task 1: F[2, 195] = 10.01, p < .001, η² = .09; Task 2: F[2, 195] = 6.36, p = .002, η² = .06). Positional modeling outperformed both the control (t[195] = −4.04, p < .001) and positional clarification (t[195] = −3.69, p < .001) in Task 1 but only the control in Task 2 (t[195] = −3.56, p = .001). Role assignment and interaction effects were not significant. See Figures 2 and 3 for the post-hoc comparisons.
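As a rough plausibility check on the RQ2 effect sizes, an effect size can be recovered from an F statistic and its degrees of freedom. The sketch below assumes the reported η² values are partial eta squared, which the source does not state; the function name `partial_eta_squared` is introduced here for illustration only.

```python
def partial_eta_squared(f_value, df_effect, df_error):
    """Recover partial eta squared from an F test.

    Uses the identity eta_p^2 = (F * df_effect) / (F * df_effect + df_error).
    Assumption: the reported effect sizes are partial eta squared.
    """
    numerator = f_value * df_effect
    return numerator / (numerator + df_error)


if __name__ == "__main__":
    # F statistics and degrees of freedom as reported for RQ2.
    task1 = partial_eta_squared(10.01, 2, 195)
    task2 = partial_eta_squared(6.36, 2, 195)
    print(f"Task 1: {task1:.2f}")  # prints "Task 1: 0.09"
    print(f"Task 2: {task2:.2f}")  # prints "Task 2: 0.06"
```

Under that assumption, the recomputed values agree with the reported effect sizes for both tasks to two decimal places.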

Figure 2. Post-hoc comparison of cross-training type on perceived task performance (Task 1).

Figure 3. Post-hoc comparison of cross-training type on perceived task performance (Task 2).

These findings build on the rich tradition of human team training success in HFES (e.g., Salas et al., 2008; Volpe et al., 1996), offering cross-training as a path forward to improve expectations and understanding in support of effective workforce HATs. Specifically, this research highlights the potential effectiveness of positional modeling as a strategy for HAT team training programs that aim for positive training reactions and perceived task performance. Further, because positional clarification no longer differed significantly from positional modeling in Task 2, positional clarification may offer benefits for perceived task performance after time-on-task with an AI. As such, these results indicate that cross-training, when done in a human-centered way and tailored to the specific needs of HAT tasks, is a potentially viable path forward to support human experiences in HATs.

Discussion

Addressing a notable gap in the HAT literature (limited human-centered team training research), this study emphasizes the value of team training for HATs, encouraging greater investment in research on HAT team training strategies across contexts. This research suggests that cross-training may improve participants’ reactions to training and their HAT’s perceived performance, especially through positional modeling. This cross-training type’s emphasis on observational learning should be further explored for how it can help construct appropriate SMMs that support participants’ learning about and perceptions of working with an AI (Bandura, 2003; Cannon-Bowers, 2007). Indeed, by seeing their AI teammate in action, participants may have gained a clearer understanding of AI’s strengths and limitations, leading to the higher ratings for this training type, especially for setting initial expectations around their shared task. Additionally, this study is one of the first to study training using actual AI technology, ChatGPT, moving away from the Wizard-of-Oz methods typically used in previous HAT training research (e.g., Johnson et al., 2023). This allows for a more authentic assessment of how human-AI interactions influence training reactions and task performance perceptions, offering new methods for future HAT training research and practice.

Conclusion

In conclusion, this study highlights the potential of cross-training to enhance training reactions and perceived task performance within HATs. The findings suggest that positional modeling, a type of cross-training, significantly improves both training reactions and task performance perceptions. However, the quasi-experimental design poses limitations, and future research should explore the impact of cross-training on objective performance measures. By extending traditional team training methods to HATs, this research indicates that cross-training may be a viable strategy to improve team effectiveness and support the future workforce.

Footnotes

Declaration of Conflicting Interests

The author(s) declared the following potential conflicts of interest with respect to the research, authorship, and/or publication of this article: This work received internal funding from the first author’s institution.

Funding

The author(s) received internal funding support for the research, authorship, and/or publication of this article.