Abstract

Colonoscopy can reduce the risk of colon cancer by 90%. However, because of limited surgeon experience, the miss rate of cancerous polyps can reach up to 20%. Although simulators have been developed to shorten the steep learning curve, they lack the real-time feedback needed for successful colonoscopies. The goal of this study was to improve colonoscopy training by designing a graphical user interface (GUI) through a two-phase study that included 10 semi-structured interviews and a usability study with 10 participants. Results showed that current simulators do not mimic colonoscopy in patients and lack real-time feedback. Additionally, medical residents experience challenges such as scope navigation and loop reduction. Usability testing showed that users were satisfied with the GUI and perceived the feedback on polyp detection, cecal intubation time, and force as useful. As such, a GUI with real-time and post-training feedback has the potential to improve performance in colonoscopy.

Introduction

Colonoscopy is an endoscopic procedure performed over 15 million times annually in the United States (Seeff et al., 2004) that allows surgeons to screen for colorectal cancer (Nee et al., 2020) by detecting polyps (abnormal tissue) in the colon. This is critical because colorectal cancer is the second leading cause of cancer death in the United States (Edwards et al., 2010). Detecting and removing polyps has been shown to reduce the risk of colorectal cancer by 90% (Winawer et al., 1993). However, approximately 20% of polyps are missed during colonoscopy due to the experience level of the surgeon performing the procedure (Jha et al., 2021). Specifically, surgeons who perform a small number of colonoscopies per week have lower polyp detection rates (Sekiguchi et al., 2023). To lower morbidity and mortality from colorectal cancer, it is crucial to detect and remove cancerous polyps and to identify early-stage malignancies (Imperiale et al., 2009). However, because colonoscopy is complex, a surgeon may need up to 250 procedures to achieve competency and be able to detect all polyps (Park et al., 2013). As such, current colonoscopy training must be improved to shorten the learning curve and reduce the risk of missed polyps.

One way to shorten the learning curve for colonoscopy is simulation-based training, which improves performance in the early stages of learning (Sturm et al., 2008). Simulation-based training also allows trainees to practice different skills and techniques across a wide range of medical procedures (Goodman et al., 2019) in a safe learning environment that does not endanger patients’ lives (Kamran, 2011), and it can provide feedback during training. A common method of providing training feedback is through evaluation reports and checklists (Sidhu et al., 2004). However, these methods can be unreliable (Sidhu et al., 2004) and do not adequately identify the specific areas where residents need improvement (Vassiliou et al., 2005). Objective feedback during simulation-based training is therefore needed, yet most physical colonoscopy simulators lack integrated feedback measures (Placek et al., 2019).

In their review of endoscopic simulators, Gabrani et al. (2020) emphasized the need to integrate proven-effective elements of simulation-based training, including performance feedback. Feedback has been identified as the most important component of simulation-based medical education for achieving effective learning outcomes (Issenberg et al., 2005). For example, when medical trainees received real-time visual feedback on the force they exerted during laparoscopic knot-tying and suturing tasks, they completed the tasks with greater efficiency and performance (Rafiq et al., 2008). Conversely, research has shown that without performance feedback, training on colonoscopy simulators produces no evident learning or performance gains (Mahmood & Darzi, 2004). Together, these findings suggest that effective feedback during simulation-based medical training can improve performance.

To our knowledge, most current colonoscopy simulators do not provide real-time and post-training performance feedback during simulation-based training. For example, the Olympus colonoscopy simulator is a physical simulator that automatically records parameters such as time, insertion force, and depth (Haycock et al., 2010). Kale et al. (2013) developed a user interface for the “active colonoscopy training model” that provides real-time force feedback for colonoscopy. Another simulator, the AccuTouch Colonoscopy Simulator, displays the colon on a video screen and records 14 performance measures, including total procedure time, withdrawal and insertion time, force, and percentage of time with no patient discomfort (Sedlack & Kolars, 2003). However, these simulators do not integrate a graphical user interface (GUI) that gives trainees real-time and post-training feedback on their performance along with recommendations for improvement.

Thus, we aim to improve current colonoscopy training by understanding the shortcomings of current medical simulators and designing a GUI that provides real-time and post-training feedback as a means of reducing the learning curve and the risk of missing polyps during colonoscopy. To achieve this, the study was conducted in two phases. The first phase identified users’ perceptions and needs for colonoscopy training by investigating the advantages and disadvantages of current training simulators, the challenges faced by medical residents in training, and the feedback and performance metrics needed to improve colonoscopy training. The second phase used these results to develop the GUI and to assess its potential utility by measuring user satisfaction and the perceived usefulness of the force, time, and polyp feedback.

Phase 1: Gathering User Needs

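The first goal of this study was to understand users’ perceptions and needs in current colonoscopy training through semi-structured interviews and qualitative analysis. Specifically, the first phase aimed to answer the following research questions (RQ):

RQ1: What are the advantages and disadvantages of current colonoscopy training simulators?

RQ2: What are the main challenges that medical residents experience when performing colonoscopies?

RQ3: What are the key feedback and performance indicators needed for successful colonoscopies?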

Participants and Procedures

Participants were seven experts and three medical residents from Hershey Medical Center (HMC). Experts were defined as physicians who had performed more than 500 colonoscopies, and residents as those who had performed fewer than 200 colonoscopies (Koch et al., 2008). Of the experts, two were from general surgery, two from gastroenterology, one from quantum rectal surgery, one from endoscopy, and one from colon and rectal surgery; all three medical residents were from general surgery. Participants included seven males (five experts and two residents) and three females (two experts and one resident).

Experts and medical residents were recruited using the snowball method. Interested participants were sent an email describing the purpose of the study, the procedure, the Institutional Review Board (IRB) protocol, and a Zoom link. After joining the Zoom meeting, participants read the purpose and procedure of the study, and any questions were answered. Verbal consent was then obtained, and participants were compensated with a $10 Amazon gift card. The Zoom meeting was then recorded while participants engaged in semi-structured interviews about their perceptions of colonoscopy training. Interview questions for experts were grouped into “training methods,” “assessing performance,” “clinical challenges,” and “learning curve”; questions for residents were grouped into “training methods,” “clinical challenges,” and “future training.” At the end of the interview, all participants were thanked for their time and participation in the study.

Qualitative Data Analysis

To understand users’ perceptions of colonoscopy training, a qualitative inductive content analysis (Hsieh & Shannon, 2005) was conducted. The Zoom audio recordings of the 10 interviews totaled 221 min and were transcribed using Otter.ai. The transcripts were then checked for accuracy by listening to all interviews and correcting the text accordingly. Next, two raters developed a codebook using an inductive approach (Hsieh & Shannon, 2005); see here for the codebook. The two raters then used NVivo (Dhakal et al., 2022) to independently code 20% of the data, and inter-rater reliability (IRR) was calculated, yielding a Cohen’s kappa of 0.83. Because this level of agreement is considered excellent (Fleiss et al., 2013), the raters then coded the remaining interviews.
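Because Cohen’s kappa corrects for chance, a kappa of 0.83 is not the same as 83% raw agreement. A minimal sketch of the statistic, κ = (p_o − p_e) / (1 − p_e), is shown below, where p_o is the observed proportion of segments both raters coded identically and p_e is the agreement expected by chance from each rater’s code frequencies. It assumes each interview segment receives exactly one code from each rater; NVivo’s built-in coding comparison may compute agreement differently, and the code labels (`adv`, `dis`, `mech`, `know`) are hypothetical toy values, not data from this study.

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa for two raters who each assign exactly one code per segment."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("both raters must code the same non-empty list of segments")
    n = len(rater_a)
    # Observed agreement: proportion of segments both raters coded identically.
    p_o = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Chance agreement: product of the raters' marginal counts, summed over codes.
    freq_a, freq_b = Counter(rater_a), Counter(rater_b)
    p_e = sum(freq_a[code] * freq_b[code] for code in freq_a) / (n * n)
    if p_e == 1.0:
        # Both raters used one identical code everywhere; kappa is undefined, report 1.0.
        return 1.0
    return (p_o - p_e) / (1 - p_e)


# Hypothetical toy codes for ten interview segments (NOT data from this study).
rater_1 = ["adv", "adv", "dis", "dis", "dis", "mech", "mech", "know", "dis", "adv"]
rater_2 = ["adv", "dis", "dis", "dis", "dis", "mech", "mech", "know", "dis", "adv"]

if __name__ == "__main__":
    raw = sum(a == b for a, b in zip(rater_1, rater_2)) / len(rater_1)
    print(f"raw agreement = {raw:.2f}, kappa = {cohens_kappa(rater_1, rater_2):.3f}")
```

In this toy example the raters agree on 9 of 10 segments (90% raw agreement), but kappa is about 0.86 because part of that agreement would be expected by chance.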

Results

This section presents our results for each research question. In this analysis, “f” denotes the number of times a node (code) was referenced, “E” denotes an expert, and “R” denotes a medical resident. Our codebook (here) includes definitions of all identified themes.

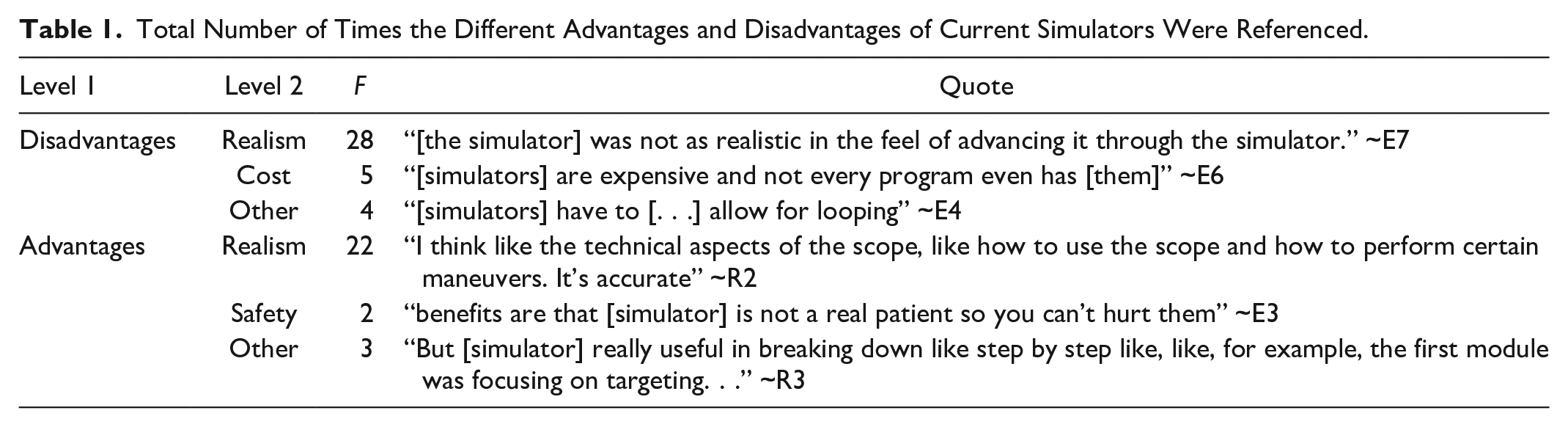

The first research question aimed to identify the advantages and disadvantages of colonoscopy training simulators. The inductive content analysis showed that the disadvantages of current colonoscopy simulators (f = 37) were mentioned more frequently than their advantages (f = 22; see Table 1). Because the disadvantages were more prominent than the advantages, there is a need to improve current simulators.

Table 1. Total Number of Times the Different Advantages and Disadvantages of Current Simulators Were Referenced.

RQ2: What are the main challenges that medical residents experience when performing colonoscopies?

The second research question aimed to understand the challenges medical residents currently experience during colonoscopy. The inductive analysis identified two main themes in the interviews: mechanical challenges (f = 90) and surgeon knowledge (f = 33). The two most common mechanical challenges were related to

Other mechanical challenges included

RQ3: What are the key feedback and performance indicators needed for successful colonoscopies?

The last research question explored which types of performance feedback are most important during colonoscopy training. The inductive analysis identified two main themes: summative feedback (f = 71) and real-time feedback (f = 18).

The most common indicator was

The next most mentioned indicator was

The third most mentioned indicator was

Another indicator that was mentioned was

Lastly,

These results indicate that the key feedback and performance indicators needed for successful colonoscopies are real-time feedback, polyp detection, and cecal intubation time. Therefore, there is a clear need to provide real-time feedback and include these key indicators during training.

Phase 2: Prototype Development and Usability Testing

To address the challenges in colonoscopy training identified in Phase 1, the second phase of this study focused on designing a graphical user interface (GUI) for colonoscopy simulators. The GUI aims to provide residents with real-time and post-training feedback on performance metrics including polyp detection, cecal intubation time, and force. In this phase, we sought to understand the utility of the GUI for colonoscopy training by measuring user satisfaction and the perceived usefulness of the feedback.

Prototype Development

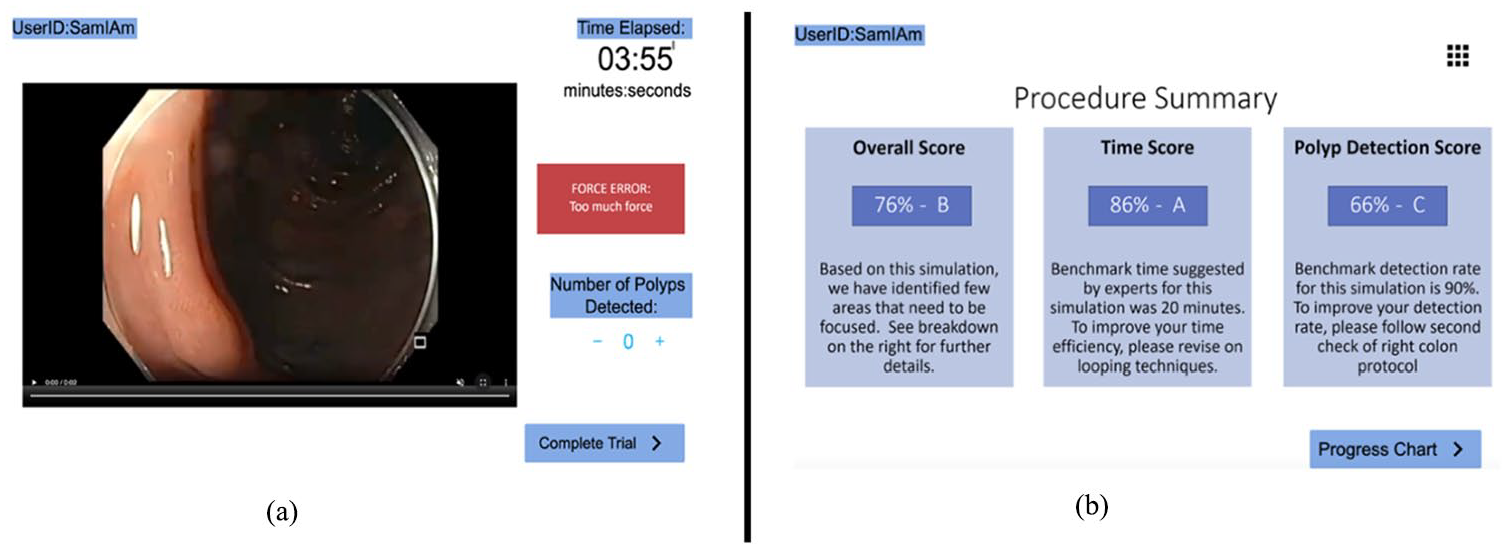

Based on the results of the first phase, the interactive GUI was developed using the prototyping software Proto.io (PROTOIO, 2011). The GUI began with a home screen that explained the goals of the training, followed by a patient screen with patient information such as name, age, gender, weight, health condition, reason for visit, and medical history. A procedure screen then showed a 1 min section of a pre-recorded colonoscopy procedure video (Pochapin, 2011). This screen integrated the key feedback and performance indicators, namely real-time and post-training feedback on polyp detection, cecal intubation time, and force exerted, along with benchmark values. Real-time feedback on cecal intubation time was provided by a timer on the procedure screen, polyp feedback by a polyp button added to the endoscope, and force feedback by “force warning” pop-ups (Figure 1a). After the user completed a trial, a procedure summary screen presented simulated feedback on the overall score, time score, and polyp detection score (Figure 1b). Finally, a progress report screen summarized the user’s progress and scores across all trials and included a recommendations section.
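The feedback logic described above (a running timer, force-warning pop-ups, a polyp counter, and post-trial scores) can be sketched as a small state object. This is a hypothetical illustration, not the Proto.io prototype, which used a pre-recorded video and simulated feedback: the force threshold, benchmark time, and scoring rules below are assumptions chosen for the example, and the force readings would have to come from an instrumented simulator.

```python
import time
from dataclasses import dataclass, field
from typing import Optional

# Hypothetical values for illustration only; the study does not report them.
FORCE_WARNING_THRESHOLD_N = 10.0  # force (newtons) above which a warning is shown
BENCHMARK_CECAL_TIME_S = 600.0    # benchmark cecal intubation time (seconds)


@dataclass
class TrainingTrial:
    """Hypothetical state for one GUI trial: timer, force warnings, polyp counter."""
    polyps_present: int
    force_threshold_n: float = FORCE_WARNING_THRESHOLD_N
    start_s: float = field(default_factory=time.monotonic)
    polyps_flagged: int = 0
    force_warnings: int = 0
    cecal_time_s: Optional[float] = None
    _above_threshold: bool = field(default=False, repr=False)

    def elapsed_s(self, now_s: Optional[float] = None) -> float:
        """Value shown by the on-screen timer."""
        now = time.monotonic() if now_s is None else now_s
        return now - self.start_s

    def on_polyp_button(self) -> None:
        """Trainee pressed the polyp button on the endoscope."""
        self.polyps_flagged += 1

    def on_force_sample(self, force_n: float) -> bool:
        """Return True while the warning pop-up should be visible.

        A warning is counted only when the force first rises above the threshold,
        so one sustained push is reported once rather than once per sample.
        """
        above = force_n > self.force_threshold_n
        if above and not self._above_threshold:
            self.force_warnings += 1
        self._above_threshold = above
        return above

    def on_cecum_reached(self, now_s: Optional[float] = None) -> None:
        """Stop the cecal intubation timer."""
        self.cecal_time_s = self.elapsed_s(now_s)

    def summary(self, benchmark_s: float = BENCHMARK_CECAL_TIME_S) -> dict:
        """Post-trial scores on a 0-100 scale, using hypothetical scoring rules."""
        if self.cecal_time_s is None:
            raise RuntimeError("the cecum was not reached in this trial")
        if self.polyps_present > 0:
            # Presses beyond the number of polyps present earn no extra credit.
            hits = min(self.polyps_flagged, self.polyps_present)
            polyp_score = 100.0 * hits / self.polyps_present
        else:
            polyp_score = 100.0
        # Full credit at or under the benchmark; proportionally less when slower.
        if self.cecal_time_s <= benchmark_s:
            time_score = 100.0
        else:
            time_score = 100.0 * benchmark_s / self.cecal_time_s
        overall = (polyp_score + time_score) / 2
        return {
            "overall": round(overall, 1),
            "time_score": round(time_score, 1),
            "polyp_score": round(polyp_score, 1),
            "force_warnings": self.force_warnings,
        }


if __name__ == "__main__":
    trial = TrainingTrial(polyps_present=4, start_s=0.0)  # fixed clock for a repeatable demo
    for _ in range(3):
        trial.on_polyp_button()
    for force in [5.0, 12.0, 13.0, 8.0, 11.0]:
        trial.on_force_sample(force)
    trial.on_cecum_reached(now_s=750.0)
    print(trial.summary())
```

Counting a warning only when the force first crosses the threshold keeps a single sustained push from being reported as many warnings, while the return value still keeps the pop-up visible for as long as the force stays high. The polyp score here simply counts button presses up to the number of polyps present; a real system would need to match each press to an actual polyp to avoid crediting false positives.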

Figure 1. Colonoscopy graphical user interface (GUI) showing (a) the procedure screen with the pre-recorded colonoscopy procedure, timer, force warning, and polyp detection counter and (b) the procedure summary screen with feedback indicators such as the overall score.

Materials and Methods

After developing the GUI, we sought to evaluate its utility by measuring user satisfaction and determining the usefulness of the polyp detection, cecal intubation time and force feedback. Specifically, we aimed to answer the following research questions (RQ):

Participants and Procedure

Participants were eight medical residents and two medical students from HMC. Seven residents were from surgery and one was from oncology; the medical students were graduate students pursuing a medical degree. Participants included six males (all residents) and four females (two residents and two medical students).

Participants were recruited using the snowball method (Naderifar et al., 2017). Interested participants read the purpose and procedure of the study, and informed consent was obtained according to the IRB protocol. All participants were compensated with $15 in cash. Participants then navigated through the GUI, which was displayed on a Micro-Star International (MSI) laptop with an 11th Gen Intel® Core™ i7-11375H processor and a 15.6-inch screen, while following a think-aloud protocol as they completed two GUI conditions.

The first GUI condition assumed that the user was a first-time user who had not completed any previous colonoscopy training with the GUI, whereas the second condition assumed that the user had completed four previous training sessions with the GUI. Users followed the same procedure in both conditions. First, they were asked to say aloud when they observed a polyp in the 1 min pre-recorded video. Residents were also asked to press the polyp button attached to the endoscope when they detected a polyp during the video. Next, they reviewed the procedure summary screen, which provided feedback on the overall score, polyp detection score, and time score. Participants then completed the Post-Study System Usability Questionnaire (PSSUQ), which has been used in previous research to study usability (Lewis, 1992). Two open-ended questions were also added to the survey: “Was the force, time, and polyp feedback useful and why?” and “What recommendations would you have to improve the current design of the interface?”

Results

To answer our research questions, all statistical analyses were performed in SPSS (v. 29.0) with a significance level of 0.05.

The first research question assessed the utility of the GUI based on user satisfaction. To answer it, responses to the 18 statements in the PSSUQ were analyzed. Before analysis, the internal consistency of the PSSUQ was assessed with Cronbach’s alpha computed on the responses of all ten participants. The scale showed a high level of internal consistency (Cronbach’s α = .948). Given this reliability, each participant’s average survey score was computed for each GUI condition.
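For reference, Cronbach’s alpha is α = k/(k − 1) · (1 − Σ s²ᵢ / s²ₜ), where k is the number of items, s²ᵢ is the variance of item i across participants, and s²ₜ is the variance of participants’ total scores. The sketch below computes it with the Python standard library, assuming every participant rated every item (a questionnaire with an N/A option would need separate handling of missing ratings); the four-participant, three-item ratings are hypothetical toy data, not responses from this study.

```python
from statistics import variance


def cronbach_alpha(responses):
    """Cronbach's alpha; each row is one participant, each column one item."""
    if len(responses) < 2 or len(responses[0]) < 2:
        raise ValueError("need at least two participants and two items")
    k = len(responses[0])
    if any(len(row) != k for row in responses):
        raise ValueError("every participant must rate every item")
    # Sample variance of each item across participants.
    item_variances = [variance(item) for item in zip(*responses)]
    # Sample variance of the participants' summed scores.
    total_variance = variance([sum(row) for row in responses])
    if total_variance == 0:
        raise ValueError("total scores have zero variance; alpha is undefined")
    return k / (k - 1) * (1 - sum(item_variances) / total_variance)


# Hypothetical 7-point ratings (1 = strongly agree) from four participants on three items.
toy_ratings = [
    [1, 2, 2],
    [2, 2, 3],
    [3, 3, 3],
    [4, 4, 5],
]

if __name__ == "__main__":
    print(f"alpha = {cronbach_alpha(toy_ratings):.3f}")
    # Per-participant mean score, the quantity later compared with the neutral value 4.
    print([round(sum(row) / len(row), 2) for row in toy_ratings])
```

Using sample variances throughout gives the same alpha as population variances would, because the n − 1 scaling cancels in the ratio.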

A one-sample t-test was conducted to determine whether the mean survey scores differed significantly from a score of 4 (neutral) in each GUI condition. No outliers were detected in boxplots of the scores for either condition, and the assumption of normality was not violated, as assessed by the Shapiro-Wilk test (p = .496). Data are means unless otherwise stated. The mean score in the first GUI condition (M = 2.2359, SD = 0.608) differed significantly from the neutral score of 4, t(9) = −9.175, p < .001. The mean score in the second GUI condition (M = 1.9789, SD = 0.848) also differed significantly from the neutral score, t(9) = −7.531, p < .001. On the PSSUQ scale, where 1 signifies “strongly agree” and 7 represents “strongly disagree,” the mean score of approximately 2 corresponds to “agree” in both conditions. These results suggest that users were satisfied when using the GUI in both conditions, supporting our hypothesis that users would be satisfied when using the GUI.
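The reported t statistics can be checked from the summary statistics alone, using t = (M − μ₀) / (SD / √n) with μ₀ = 4 (neutral), n = 10, and df = n − 1 = 9. This is a minimal sketch that uses only the reported, rounded means and standard deviations; it does not compute p-values.

```python
import math


def one_sample_t(mean, sd, n, mu0):
    """One-sample t statistic and degrees of freedom from summary statistics."""
    standard_error = sd / math.sqrt(n)
    return (mean - mu0) / standard_error, n - 1


NEUTRAL = 4.0  # PSSUQ midpoint; lower scores indicate stronger agreement
N_PARTICIPANTS = 10

for label, mean, sd in [("Condition 1", 2.2359, 0.608), ("Condition 2", 1.9789, 0.848)]:
    t, df = one_sample_t(mean, sd, N_PARTICIPANTS, NEUTRAL)
    print(f"{label}: t({df}) = {t:.3f}")
```

Recomputed this way, the first condition gives t(9) ≈ −9.175, matching the reported value, and the second gives t(9) ≈ −7.537, close to the reported −7.531. With the per-participant scores available, `scipy.stats.ttest_1samp(scores, popmean=4)` would return both the t statistic and a two-sided p-value.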

The last research question assessed the perceived usefulness of the real-time feedback integrated into the GUI. To answer it, responses to the first open-ended survey question, “Was the force, time, and polyp feedback useful and why?”, were analyzed. Results showed that 67% of the participants found the force, time, and polyp feedback useful. For example, one resident answered, “all three were useful to keep track and provide feedback.” Another resident stated, “the time is clear in the upper corner and the polyp count is clear.” Another resident answered, “yes, real-time feedback is important,” and one medical student stated, “yes, very useful learning tool.” One participant found only the force and polyp feedback useful, answering, “force was useful but need to specify type and polyps’ feedback was useful.” Only one participant found the time feedback less useful, stating, “time wasn’t useful because this should not be graded but just a benchmark.” Because most participants perceived the feedback in the GUI positively, these results suggest that integrating polyp, time, and force feedback would be useful.

Conclusion and Future Work

To improve current colonoscopy training, we designed a graphical user interface (GUI) that can be paired with colonoscopy simulators to reduce the learning curve and the risk of missing polyps during colonoscopy. To inform the design, the first phase of this study showed that (a) current simulators lack realism and do not fully represent colonoscopy in actual patients, (b) the challenges medical residents experience range from navigating the scope and loop reduction to a lack of surgeon knowledge, and (c) simulators lack critical real-time feedback indicators such as polyp detection and cecal intubation time. Building on these results, the second phase focused on designing a GUI based on the identified needs and evaluating its utility. Results from the second phase showed that (a) users were satisfied when using the GUI in both conditions and (b) the integrated polyp, cecal intubation time, and force feedback was perceived as useful for training. Our two-phase study reveals that current colonoscopy simulators lack real-time feedback and do not address the challenges experienced by residents. We can preliminarily conclude that a GUI integrating real-time and post-training feedback has the potential to improve colonoscopy training. Future work will develop a higher-fidelity GUI and determine its impact by measuring and providing feedback on the force exerted, cecal intubation time, and polyp detection using the Kyoto Kagaku Colonoscopy Simulator. Future work will also evaluate whether the GUI can reduce residents’ learning curve, identify ways to make it more interactive, and ensure that it can be used with any type of colonoscopy simulator. The implications of these findings offer insights that can reshape and improve medical education.
First, identifying the shortcomings of colonoscopy simulators and the challenges experienced by medical residents highlights a pressing need for innovation. Furthermore, medical educators and designers from various disciplines can draw on this research to study and integrate real-time and post-training feedback into simulation-based training for other medical procedures, such as laparoscopic and endoscopic surgeries. This can ensure that medical trainees receive feedback on the metrics that lead to successful procedures. The study also stimulates a wider discussion of how real-time feedback can be used to improve training and reduce the learning curve across different medical procedures.

While this study provides valuable knowledge and promising results for medical simulation-based training, it has several limitations. First, the semi-structured interviews were conducted via Zoom, which could have limited participation or conversation. Second, for timing purposes, participants gave their feedback on the polyp, time, and force feedback in a single question. Future work will ask participants to evaluate each measure individually to capture a more accurate representation of their satisfaction with each feedback measure. Finally, the sample size of 10 participants was small, so future work should increase the sample size and variability of participants by collecting data from more than one medical institution.

Footnotes

Declaration of Conflicting Interests

The author(s) declared the following potential conflicts of interest with respect to the research, authorship, and/or publication of this article: Coauthors Dr. Miller and Dr. Moore own equity in Medulate, which may have a future interest in this project. Company ownership has been reviewed by the University’s Individual Conflict of Interest Committee and is currently being managed by the University.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: Research reported in this manuscript was supported by the National Institute of Diabetes and Digestive and Kidney Diseases under award number R01DK137230. The content is solely the responsibility of the authors and does not necessarily represent the official views of the NIH.