Abstract

Time pressure induced by a time constraint is a common factor in complex domains that can impair visual search. Detecting when a user is subject to a time constraint is crucial for implementing timely interventions to counteract its detrimental effect on performance. Eye tracking, a non-intrusive method for recording eye movements, offers promising potential for time pressure detection. The present study investigates whether a classifier trained exclusively on eye tracking metrics can reliably classify whether a user was under time pressure. For this study, eye tracking data from 40 participants was collected as they searched for objects in a virtual living room under different timing conditions and varying reward incentives, and 13 eye tracking metrics were calculated. The results showed that the support vector machine (SVM) classifier was the best-performing model, with 0.82 AUROC, 74% accuracy, and a 75% F1 score. This demonstrates the potential of eye tracking in detecting time pressure. These results, while promising, underline the importance of combining eye tracking with other physiological and behavioral measures to improve time pressure detection.

Keywords

Introduction

When a visual search task is performed under a time constraint, it is theorized that information filtering is increased and/or information processing speed is enhanced (Driskell & Salas, 1996). The presence of a time constraint can therefore either enhance or impair search performance. Real-time detection of time pressure is thus essential for prompt intervention to alleviate the performance decrements it may cause.

Eye tracking, a non-intrusive method for recording eye movements (Holmqvist & Andersson, 2017), offers a promising solution for detecting time pressure in real time. While previous studies have used eye tracking to detect and classify time-induced stress (Pfeiffer et al., 2020; Stoeve et al., 2022; Yousefi et al., 2022), they used subjective or stress-correlated (i.e., saliva samples) measures as output. As such, this literature focused on detecting aftereffects of time pressure that include stress, not time pressure itself. However, stress responses are highly variable across people, and they do not necessarily predict performance. For instance, an airport officer with a genetic predisposition for higher heartbeat variability may appear to have higher stress levels but can still perform well under time pressure, whereas another officer with lower heartbeat variability may see their performance suffer significantly under time pressure.

Visual search in naturalistic settings and complex domains often includes factors like time pressure. However, prior studies mostly investigated time pressure detection in laboratory-type tasks like Stroop tests. Thus, there is a need to explore time pressure detection in a visual search task performed in a naturalistic environment. Virtual reality (VR) is a suitable medium for this investigation since it allows head movements, thus eliciting more naturalistic behavior (Jerald, 2016).

Given our interest in performance and objective external factors, our focus is detecting when time pressure occurs rather than its effects. To this end, the present work explores the following research questions: (1) can a classifier trained exclusively on eye tracking metrics reliably detect if a user is under time pressure while performing a naturalistic visual search task presented in virtual reality, and (2) which eye tracking metrics are the most promising in detecting the presence of time pressure?

For this study, eye tracking data of 40 participants was collected as they searched for objects in a virtual living room under different timing conditions and varying reward incentives. Thirteen eye tracking metrics were calculated, ten of which were selected and fed into six classifiers as training data. The classifiers were then evaluated on hold-out testing data to determine the best performing classifier.

Methodology

Participants and Apparatus

Forty participants (15 female, 25 male; age range: [18, 39]; M = 22.6, SD = 4.39) were recruited, and all reported having normal or corrected-to-normal vision. One participant’s data was discarded due to recording errors, leaving a sample size of 39 for data analysis. Of the remaining data, 5% was lost after filtering.

The VR headset used was an HTC Vive Pro Eye (1,440 × 1,600 pixels resolution per eye, framerate of 90 fps, nominal field of view of 110°) with a built-in eye tracker recording participant gaze at 90 Hz at an accuracy of 1° visual angle. Experimental scenes were created using Unity 3D, and the computer used to run the experiment had an Intel Xeon W-2225 processor at 4.10 GHz and 32 GB of RAM, with an NVIDIA Quadro RTX 4000 graphics card.

Experiment Design

The two independent variables were: (1) time constraint (i.e., with time limit, without time limit) and (2) reward value (i.e., no reward, low value, high value), which yields a 2 × 3 within-subject factorial design. Reward value was not introduced earlier because it entered the training process as a feature and was found to be a weak predictor, as will be shown below. A Latin Square Crossover Design was used to mitigate presentation order effects. One and only one target was present per trial.
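As an illustration of how a Latin square can spread the six conditions of the 2 × 3 design across presentation positions, the sketch below builds a simple cyclic square. It is a minimal sketch under stated assumptions: the condition labels, the cyclic construction, and the rule that assigns a row to each participant are all hypothetical, since the text does not specify how the square was built or applied.

```python
from itertools import product

# The 2 x 3 within-subject design: every combination of the two factors.
# The string labels are illustrative names, not taken from the study.
time_constraints = ["timed", "untimed"]
reward_values = ["none", "low", "high"]
conditions = list(product(time_constraints, reward_values))


def cyclic_latin_square(items):
    """Return an n x n Latin square where row r is the list rotated by r.

    Each item appears exactly once in every row and every column, so each
    condition occupies each presentation position equally often.
    """
    n = len(items)
    return [[items[(r + c) % n] for c in range(n)] for r in range(n)]


def order_for_participant(participant_index, square):
    """Pick a presentation order by cycling through the rows (assumption)."""
    return square[participant_index % len(square)]


square = cyclic_latin_square(conditions)
for row in square:
    print(row)
```

A cyclic square balances position but not which condition immediately precedes which; a balanced (Williams) design would be needed to control first-order carryover as well.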

Choosing the Time Constraint & Reward Values

The time constraint had to be chosen such that it allowed participants to find any target while still setting a challenge. A pilot study on six participants found a mean search time of 1.97 s in our experimental setup. Therefore, a 3-s time limit was used for the experiment. The low (1 point) and high (10 points) reward values were chosen based on earlier literature (Bourgeois et al., 2018).

Scene Development and Design Considerations

Twenty unique objects were randomly selected as targets per block. The target and reward order were randomized per participant. Target objects were presented on different surfaces such as the floor, shelf, and table, based on semantic appropriateness. For example, a book could appear on a table, shelf, or couch, while a floor lamp could only appear on the floor and not on top of a shelf or table.

At the beginning of the experiment, participants viewed a presentation that had all the objects and their corresponding names to ensure there was no confusion in finding the correct object during the study (Le-Hoa Võ & Wolfe, 2015). Object locations were randomized every trial to maintain environment novelty and control the effect of search history (Wolfe, 2020) and target eccentricity (Le-Hoa Võ & Wolfe, 2015). No target appeared on the same surface in consecutive trials.

Experimental Procedure

Participants were seated in a stationary chair but were free to rotate their heads. Each trial began with an initial central fixation for 3 s, followed by the target information for 6 s. The target information specified the target name, the reward value, and whether a time constraint was imposed. After that, the virtual living room loaded, and the search began. A search was terminated either when: (a) the user found the target, fixated their eyes on it, and clicked the trigger on the controller, or (b) time ran out in case of a timed trial. Participants could quit a trial at any time by clicking the trigger on the controller. Participants received $15 as compensation for their participation, and to incentivize participants to perform at their best, the top three performers received $100, $75, and $50, respectively.

Gaze Data Collection

User gaze was captured using the 90 Hz eye tracker of the HTC headset and Unity. The SRanipal software development kit (SDK) developed by HTC for the eye tracker allows for the collection of several eye tracking measures like pupil diameter, gaze origin, and gaze direction vector. To record the users’ gaze, we collected the coordinates of the gaze direction vector, then we used the built-in Raycast function in Unity to project a ray from the pupil in the direction of that vector. If the projected ray collided with an object, we assumed the user was looking at the object. We then extracted the collision point coordinates which corresponded with the user’s gaze in 3D space. The data collection script was written in C#.
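The ray casting step described above can be sketched outside Unity as a ray-object intersection test. The example below is only an illustration in Python, not the study's C# script: it assumes each object is approximated by a bounding sphere, and the function name `raycast_sphere` and its parameters are hypothetical.

```python
import math


def raycast_sphere(origin, direction, center, radius):
    """Return the nearest point where a gaze ray hits a sphere, or None.

    origin and direction play the role of the gaze origin and the gaze
    direction vector; the sphere stands in for an object's collider.
    """
    norm = math.sqrt(sum(d * d for d in direction))
    if norm == 0:
        return None  # a zero-length direction defines no ray
    unit = [d / norm for d in direction]

    # Solve |origin + t * unit - center|^2 = radius^2 for t.
    oc = [o - c for o, c in zip(origin, center)]
    b = sum(x * y for x, y in zip(oc, unit))
    c = sum(x * x for x in oc) - radius * radius
    discriminant = b * b - c
    if discriminant < 0:
        return None  # the ray's line misses the sphere entirely

    root = math.sqrt(discriminant)
    t = -b - root  # nearer intersection along the ray
    if t < 0:
        t = -b + root  # origin is inside the sphere
    if t < 0:
        return None  # the sphere lies behind the gaze origin
    return tuple(o + t * u for o, u in zip(origin, unit))


# A sphere of radius 1 centered 5 units straight ahead of the eye.
hit = raycast_sphere((0, 0, 0), (0, 0, 1), (0, 0, 5), 1.0)
miss = raycast_sphere((0, 0, 0), (1, 0, 0), (0, 0, 5), 1.0)
print(hit, miss)
```

The returned hit point corresponds to the collision point coordinates that the text treats as the gaze position in 3D space.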

Data Processing

This section describes the pipeline used to convert the raw gaze data into features, followed by the procedures to train each classifier. The class labels were binary, where a value of 1 represented a trial with a time constraint, and a value of 0 denoted a trial without a time constraint. Code for data preprocessing and all classifiers except the multilayer perceptron (MLP) neural network was written in R. The processed data was then fed into a Python script for the MLP training and evaluation.

Data Preprocessing

Data preprocessing was performed using an R script after data collection. First, missing and invalid data entries were removed. The SRanipal SDK reports fields such as eye_valid and openness per eye to indicate data collection validity. According to the SDK, an entry with eye_valid value less than 31 was considered invalid.
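The validity rule above can be sketched as a simple filter. This is a hedged illustration in Python rather than the authors' R script: the record layout and the field names `left_eye_valid` and `right_eye_valid` are assumptions made for the example, and only the threshold of 31 comes from the text.

```python
# Entries with an eye_valid value below 31 are treated as invalid,
# following the rule stated in the text.
MIN_VALID = 31


def is_valid_sample(sample):
    """Keep a gaze sample only if both eyes report a valid reading.

    The keys 'left_eye_valid' and 'right_eye_valid' are hypothetical
    column names; a missing value (None or absent key) counts as invalid.
    """
    left = sample.get("left_eye_valid")
    right = sample.get("right_eye_valid")
    if left is None or right is None:
        return False
    return left >= MIN_VALID and right >= MIN_VALID


# Toy samples, invented purely to exercise the filter.
samples = [
    {"left_eye_valid": 31, "right_eye_valid": 31},    # kept
    {"left_eye_valid": 31, "right_eye_valid": 3},     # right eye invalid
    {"left_eye_valid": None, "right_eye_valid": 31},  # missing entry
    {"right_eye_valid": 31},                          # missing field
]
clean = [s for s in samples if is_valid_sample(s)]
print(len(clean), "of", len(samples), "samples kept")
```

How the `openness` field entered the filtering is not stated in the text, so it is left out of this sketch.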

Feature Calculation and Selection

The following features were chosen based on their inclusion in prior studies or their observed susceptibility to time pressure in our study. For instance, pupil dilation metrics, such as those utilized in previous experiments (Baltaci & Gokcay, 2016; Hirt et al., 2020; Ren et al., 2014), were selected due to their strong correlation with autonomic system activity (Partala & Surakka, 2003). Similarly, well-established eye tracking metrics like fixation count, fixation duration, and saccadic velocity were included in our feature set. Lesser-known metrics like convex hull volume and target latency were also incorporated based on our preliminary analysis (El Iskandarani & Riggs, 2023), which demonstrated a significant main effect of time pressure on these metrics.

Thirteen eye tracking metrics were calculated (blink count, blink rate, fixation count, max pupil dilation, mean fixation duration, mean pupil dilation, mean saccadic distance, mean saccadic duration, mean saccadic velocity, search time, search volume, target latency, verification time) and three non-eye tracking features (participant ID, reward value, and trial outcome) were also considered, totaling 16 features.

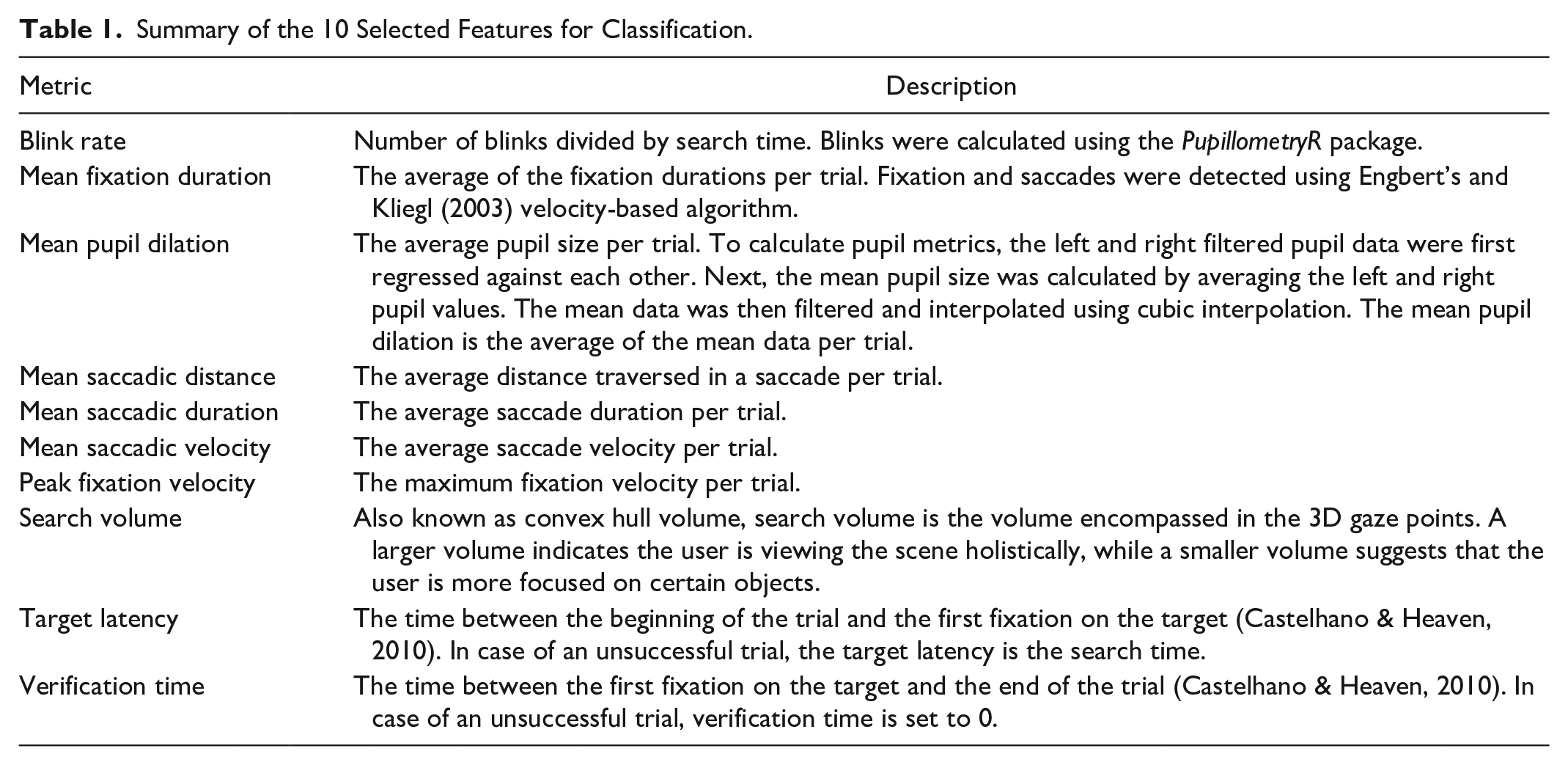

To reduce model complexity and training time, features were selected from the above-mentioned set. First, redundant features, that is, features with correlation values above .75, were identified and removed. Next, features were ranked in terms of importance and selected using recursive feature elimination (RFE; Darst et al., 2018) implemented in the caret R package. RFE repeatedly fits a model, ranks the predictors, and removes the weakest ones, which helps to minimize collinearity and dependencies among the remaining features. Out of the 16 features, 10 were selected. For brevity, only the selected features are described in Table 1.
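The redundancy filter described above can be sketched as follows. This is a simplified illustration in Python, not the caret procedure used in the study: it keeps features greedily in the order given, which is an assumed tie-breaking rule, and the feature values are toy numbers invented for the example. Only the .75 cutoff comes from the text.

```python
import math


def pearson(x, y):
    """Pearson correlation of two equal-length numeric sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    spread_x = math.sqrt(sum((a - mean_x) ** 2 for a in x))
    spread_y = math.sqrt(sum((b - mean_y) ** 2 for b in y))
    if spread_x == 0 or spread_y == 0:
        return 0.0  # a constant feature has no defined correlation
    return cov / (spread_x * spread_y)


def drop_correlated(features, threshold=0.75):
    """Keep a feature only if |r| <= threshold with every feature kept so far."""
    kept = []
    for name, values in features.items():
        if all(abs(pearson(values, features[k])) <= threshold for k in kept):
            kept.append(name)
    return kept


# Toy values: blink_rate nearly duplicates blink_count, search_time does not.
features = {
    "blink_count": [1, 2, 3, 4, 5],
    "blink_rate": [2, 4, 6, 8, 10.5],
    "search_time": [5, 1, 4, 2, 3],
}
selected = drop_correlated(features)
print(selected)
```

Which member of a correlated pair is dropped depends on the ordering here, so a different rule for choosing between the two features could give a different surviving set.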

Table 1. Summary of the 10 Selected Features for Classification.

Model Descriptions

As with our choice of features, the machine learning models were chosen based on their usage in similar studies. The support vector machine, for instance, was used to classify and detect time stress in virtual reality (Pfeiffer et al., 2020; Stoeve et al., 2022). A deep learning model was also included since deep learning was found to outperform conventional machine learning methods in various classification tasks (Sujatha et al., 2021). The classifiers used are listed below in alphabetical order.

Training/Testing Data

After filtering and processing, the dataset consisted of 4,625 observations with 2,289 untimed and 2,336 timed trials. The training/testing split was set at 80/20 such that each participant was represented equally in both training and testing sets; that is, if a participant performed 100 trials, 80 were used for training and the rest were used for testing. Both training and testing datasets were also balanced in terms of number of timed and untimed trials. Thus, our results reflect the classifiers’ ability to detect time pressure in previously seen users, not new users. To evaluate the classifiers on training data and tune hyperparameters, 10-fold cross-validation was implemented, and predictions were aggregated from the 10 folds to calculate the performance measures. After that, the classifiers were evaluated using the hold-out testing data.
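One way to obtain a split of this kind is to divide each participant's timed and untimed trials separately, as sketched below. This is an assumption about how the two constraints could be met together, not a description of the authors' R code; the trial records, field names, and seed are hypothetical.

```python
import random
from collections import defaultdict


def split_per_participant(trials, train_fraction=0.8, seed=0):
    """Split trials 80/20 within each (participant, label) group.

    Splitting inside every group keeps each participant represented in
    both sets and preserves the timed/untimed proportions in both.
    """
    rng = random.Random(seed)
    groups = defaultdict(list)
    for trial in trials:
        groups[(trial["participant"], trial["timed"])].append(trial)

    train, test = [], []
    for key in sorted(groups):
        group = list(groups[key])
        rng.shuffle(group)
        cut = round(len(group) * train_fraction)
        train.extend(group[:cut])
        test.extend(group[cut:])
    return train, test


# Toy data: 3 participants, each with 50 timed (1) and 50 untimed (0) trials.
trials = [
    {"participant": p, "timed": label, "trial": i}
    for p in range(3)
    for label in (0, 1)
    for i in range(50)
]
train, test = split_per_participant(trials)
print(len(train), len(test))
```

Because the same participants appear on both sides of the split, performance measured this way speaks to previously seen users; testing on new users would instead require holding out whole participants.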

Performance Metrics

To calculate the performance metrics, a threshold of

Results

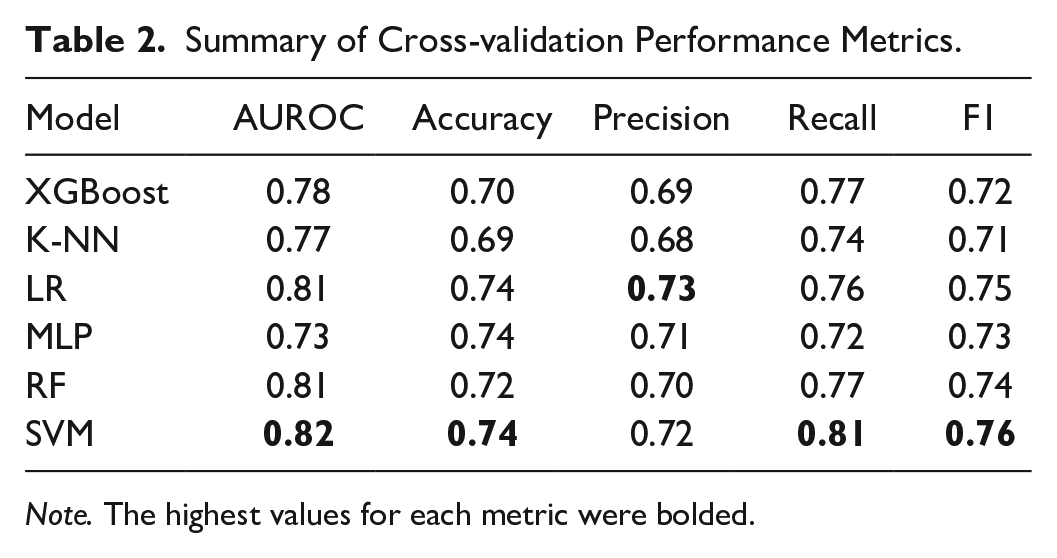

Here we first report the 10-fold cross-validation performance of each classifier on the training dataset using the metrics: AUROC, accuracy, precision, recall, and F1. We then report the performance of the classifiers on the test dataset.
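For reference, the five metrics can be computed from class labels and classifier scores as sketched below. This is a minimal illustration in Python: the labels and scores are toy values, and the 0.5 threshold in the usage lines is an arbitrary illustrative choice, not the threshold used in the study.

```python
def threshold_metrics(labels, scores, threshold):
    """Accuracy, precision, recall, and F1 after thresholding the scores."""
    tp = fp = tn = fn = 0
    for y, s in zip(labels, scores):
        predicted = 1 if s >= threshold else 0
        if predicted == 1 and y == 1:
            tp += 1
        elif predicted == 1 and y == 0:
            fp += 1
        elif predicted == 0 and y == 0:
            tn += 1
        else:
            fn += 1
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    denom = precision + recall
    return {
        "accuracy": (tp + tn) / len(labels),
        "precision": precision,
        "recall": recall,
        "f1": 2 * precision * recall / denom if denom else 0.0,
    }


def auroc(labels, scores):
    """AUROC as the chance that a timed trial outscores an untimed one.

    Ties count as half; assumes both classes are present.
    """
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0
               for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


# Toy labels (1 = timed, 0 = untimed) and scores, invented for illustration.
labels = [1, 1, 1, 0, 0, 0]
scores = [0.9, 0.7, 0.4, 0.6, 0.3, 0.2]
results = threshold_metrics(labels, scores, threshold=0.5)
area = auroc(labels, scores)
print(results, area)
```

AUROC does not depend on the threshold, whereas accuracy, precision, recall, and F1 all change with it.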

Cross-validation Performance

The cross-validation performance metrics in Table 2 showed that SVM was the best classifier across all performance metrics except precision, where LR was marginally more precise. LR and SVM performed similarly except in recall, where SVM led LR by a clear margin. Next, we look at the testing performance to check whether the classifiers fit well and how they performed on new data.

Table 2. Summary of Cross-validation Performance Metrics.

Note. The highest values for each metric were bolded.

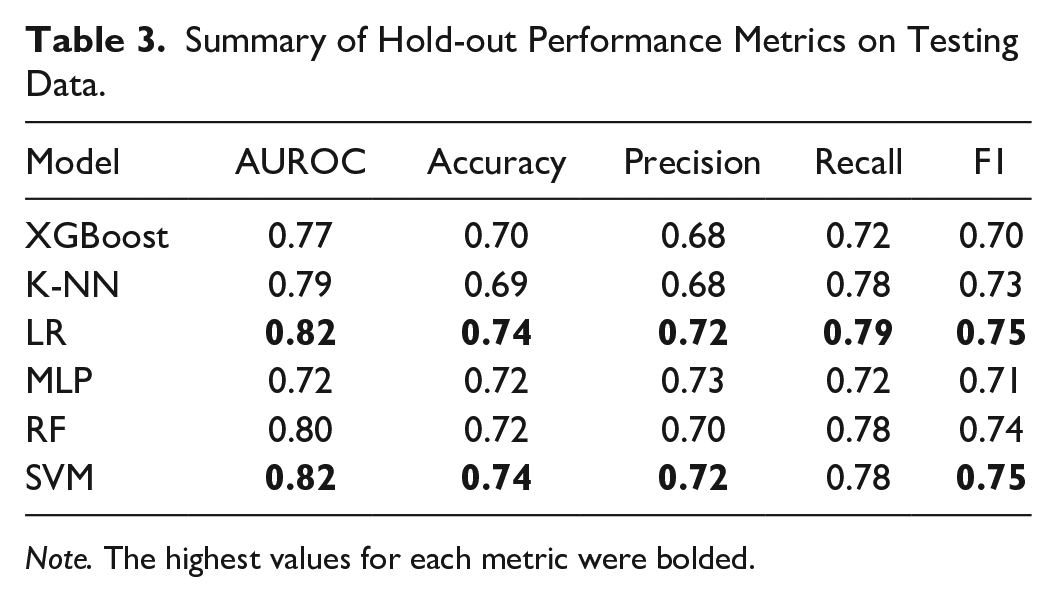

Hold-out Performance

Comparing the cross-validation performance in Table 2 to the hold-out performance in Table 3, we observe that the classifiers performed consistently with their training performance across all metrics. LR and SVM remained the best performing classifiers, although the difference between them was smaller in the testing data than in the training data.

Table 3. Summary of Hold-out Performance Metrics on Testing Data.

Note. The highest values for each metric were bolded.

Discussion

For this study, we investigated the performance of six classifiers in detecting the presence of a time constraint in a naturalistic visual search task using eye tracking metrics. The selected features were target latency, verification time, peak fixation velocity, search volume, mean saccadic velocity, mean saccadic duration, mean saccadic distance, mean pupil dilation, mean fixation duration, and blink rate. We provide plausible justification for why these features were ultimately selected.

First, target latency captures the time to search the scene and find the target among distractors, while verification time indicates the cognitive process of identifying and confirming that it is indeed the target (Castelhano & Heaven, 2010). Time pressure is theorized to have different effects on each of them; time pressure may increase information filtering without necessarily impacting the time to cognitively process the target. Second, given that time pressure was found to result in faster response times (Rieger et al., 2021), speed-sensitive metrics such as peak fixation velocity, mean saccadic velocity, mean saccadic duration, and mean saccadic distance are expected to be among the selected features. This is in line with previous literature that found saccadic measures to be indicators of arousal and sympathetic nervous system activation, which are highly correlated with stress (Di Stasi et al., 2013; Salinas & Stanford, 2021). Third, a smaller search volume indicates a more focused search strategy (Imants & de Greef, 2014), so the inclusion of search volume in the selected features underscores time pressure’s impact on the reduction in spatial exploration. Finally, the inclusion of pupil dilation and blink rate is anticipated because they are well-documented indicators of stress (Baltaci & Gokcay, 2016; Haak et al., 2009; Partala & Surakka, 2003; Yousefi et al., 2022). Collectively, this set of 10 features considers different aspects that pertain to time pressure.

In terms of classifiers, LR and SVM were found to be the best performing classifiers with 0.82 AUROC, 74% accuracy, and a 75% F1 score on test data. Similar to a previous study (Pfeiffer et al., 2020), the results showed that SVM was the best classifier across our set of performance metrics. This comes as no surprise, as SVM is one of the best-performing methods for classification tasks. In addition, we had lower accuracy scores compared to previous studies, and we posit several reasons for that. First, dataset size and dimensionality greatly impact training and classification performance (Vabalas et al., 2019). For instance, classifiers like SVM may be more robust than other models with small and sparse datasets, while neural network methods may require larger datasets to perform well (Barbedo, 2018). Our dataset was significantly larger than those of comparable studies (Pfeiffer et al., 2020; Stoeve et al., 2022; Yousefi et al., 2022) given each trial was considered an observation. Second, the nature of the task contributes to the variation, and our study focused on a visual search task in a naturalistic environment. Visual search is highly dependent on bottom-up features like color, saliency, and discriminability (Wolfe, 2020), which we varied across trials and participants to elicit naturalistic visual search. Thus, we expected to have more variability in our data and less accurate scores from our classifiers compared to a more controlled task like the Stroop test. Nevertheless, here we show that a classifier trained solely on eye tracking data could achieve 74% accuracy in detecting an external factor like time pressure.

Although the results here show that time constraint detection using eye tracking is promising, basing predictions on one measure is not ideal because of individual differences that add to the variance in the data and decrease classifier performance accuracy. One way to account for this variance would be to pair eye tracking with other physiological measures to train the classifiers, and we posit that heart rate data is a valid option for enhancing classifier performance. Heart rate has long been recognized as a valuable physiological indicator of stress (Laborde et al., 2015). Given time pressure’s correlation with stress, heart rate can offer various features that can improve time pressure detection. One example is reduced heart rate variability (HRV), characterized by less variation between consecutive heartbeats, which has been associated with higher stress levels (Boonnithi & Phongsuphap, 2011).

There are various ways to build upon this study. First, new data could be collected to test the classifiers on new individuals. This would allow us to investigate whether the classifiers can generalize to bigger sample sizes and reliably account for individual differences in response to time constraints. Second, we can train the classifiers on more advanced metrics (e.g., scanpath analysis; El Iskandarani et al., 2023) that could enhance classifier performance. Third, we could also investigate what metrics would be optimal for time constraint detection in real time and what interventions would be beneficial to mitigate the decrement in visual search performance. While pairing eye tracking with other signals like heart rate may prove critical to obtain the most accurate classification performance, eye tracking’s potential in this field is yet to be fully uncovered as more metrics and analysis methods are developed.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This study was supported in part by the National Science Foundation (NSF grant: #2008680, Program Manager: Dr. Andruid Kerne).

Availability Statement

Data, code scripts, and further information on model selection and hyperparameter tuning are available upon request from the authors.