Abstract

In this paper, we report results from a heuristic usability evaluation of an active industrial exoskeleton used for enhancing hand grip strength. We focused the evaluation on four tasks that are critical for successfully using the exoskeleton: assembly, donning, doffing, and disassembly. Seven evaluators used a combination of Nielsen’s usability heuristics and Shneiderman’s golden rules to find usability problems and rate the severity of the usability violations for the four tasks. Results indicate that the visibility of system status and error prevention heuristics were violated the most.

Introduction

Recently, wearable industrial and occupational exoskeletons have emerged as a promising solution to alleviate the problem of work-related musculoskeletal disorders in industrial workplaces and to maintain worker productivity and safety during industrial tasks (Elprama et al., 2022; Howard et al., 2020; Kermavnar et al., 2021; Kim et al., 2018; Kuber et al., 2022, 2023; McFarland & Fischer, 2019; Medrano et al., 2023; Papp et al., 2020; Reid et al., 2017). Unlike traditional engineering controls, such as equipment and workstation redesigns that require significant modification of work environments or automation of material handling tasks, exoskeletons are worn by workers, so their use requires little to no modification of the workplace and the work environment. Many studies have investigated the effectiveness of exoskeletons in reducing musculoskeletal injuries. Most of these studies have been conducted in laboratory settings and compare simulated task performance, with and without exoskeletons, for high-risk occupations such as construction, manufacturing, and material handling (Baltrusch et al., 2019; Cha et al., 2020; De Bock et al., 2023; de Looze et al., 2016; Farris et al., 2014; Gillette & Stephenson, 2019; Harant et al., 2023; Hwang et al., 2021; Jackson & Collins, 2015; Kermavnar et al., 2021; Latella et al., 2022; Luger et al., 2021; Pinho & Forner-Cordero, 2022; Qu et al., 2021; Schmalz et al., 2022).

Studies on user fit and comfort have also been conducted using empirical techniques such as user testing (Baldassarre et al., 2022; Elprama et al., 2022; Kim et al., 2018; Luger et al., 2023; Meyer et al., 2019; Pesenti et al., 2021; van Dijsseldonk et al., 2020). However, no studies have evaluated the design of these exoskeletons with respect to violations of usability principles. Unless we evaluate exoskeleton designs using usability inspection methods, such as heuristic evaluations driven by human factors expertise, we will not know how the designs of these wearable devices can be improved, or how design affordances and usability heuristic violations are likely to affect large scale implementation of exoskeletons in industrial work contexts. If the design is poor, industry is unlikely to buy into and embrace these important technologies, and the investment in building effective exoskeletons would not yield significant benefits for reducing musculoskeletal injuries and protecting worker health.

Our study, the first of its kind, focuses on a heuristic evaluation of an active soft exoskeleton for the hand that provides grip strength support. The exoskeleton consists of a glove and a powerpack worn either in a backpack or in a hip carry. Sensors on the palm and on the fingers are activated when the user moves their hand to perform a task, and the device then provides grip strength support to the user. We evaluated violations of usability heuristics when assembling, donning, doffing, and disassembling the device, because these are the basic tasks that the design of any exoskeleton must afford and support before a user can successfully use it. Our basis for focusing on these four tasks was that if we were unable to assemble the device because of major usability problems, we would be unable to don it and use it for an actual industrial task.

Our rationale reflects what might occur in practice if industry were to implement this device: there would be some form of assembly (even if the device were kept partially assembled), some donning, most likely some doffing, especially if workers were to use the device for prolonged periods during work shifts, and some degree of disassembly for cleaning, sanitizing, maintenance, and storage. If the design of the exoskeleton showed major usability problems during these basic tasks, this would discourage workers from accepting the device and pose significant barriers to widespread industry adoption. Our study is unique and significant in that, before evaluating task performance with the exoskeleton, we evaluated usability violations when attempting to assemble, don, doff, disassemble, and store the device, which we consider fundamental tasks for successfully using any exoskeleton to improve task performance.

Methods

Study Approach and Procedure

We conducted the study in four phases: an initial study planning, orientation, and training phase; a usability problem identification and categorization phase; a severity rating phase; and a rating discussion and reconciliation phase. In the first phase, seven evaluators, all human factors engineers with training and expertise in human factors methods, met to review the usability heuristics together and discuss how the evaluation process would work. We also familiarized ourselves with the exoskeleton and how it operated, and created Excel spreadsheets for conducting the evaluations. Evaluators were reminded that a single usability problem could violate multiple heuristics. In the second phase, each evaluator independently evaluated the exoskeleton to discover usability problems when assembling, donning, doffing, and disassembling and storing the device, and sent their evaluation spreadsheet back to the project lead. The usability problems identified by individual evaluators were then combined and categorized under specific problem labels. In the third phase, the evaluators independently rated the severity of the usability problems and returned the ratings to the project lead. In the last phase, the project lead organized discussion meetings on extreme ratings (the criteria for discussion are presented in the next section) to see whether the ratings could be explained, reconciled, and revised. During the discussions, the evaluators who had assigned the extreme ratings elaborated on their rationale and, based on the discussion, either revised or kept their ratings. All discussion meetings were audio recorded, and the project lead kept track of whether ratings changed after discussion and whether the extreme ratings converged to the point of resolution.

Usability Heuristics, Ratings Scale, and Discussion Criteria

Similar to other researchers conducting heuristic evaluations of usability (Klarich et al., 2022; Momenipour et al., 2021; Zhang et al., 2003), we combined Nielsen’s usability heuristics (Nielsen, 1994, 2005) and Shneiderman’s golden rules for interface design (Shneiderman et al., 2016) to generate 14 evaluation heuristics: visibility of system status; match between system and real world; user control and freedom; consistency and standards; error prevention; recognition rather than recall; flexibility and efficiency of use; aesthetic and minimalist design; recognizing, diagnosing, and recovering from errors; help and documentation; universal usability; offering informative feedback; designing to yield closure; and using users’ language in the design. To rate the severity of each problem, evaluators used the following scale: 0: I don’t agree that this is a usability problem at all; 1: Cosmetic problem only; need not be fixed unless extra time is available; 2: Minor usability problem; fixing this should be given low priority; 3: Major usability problem; important to fix, so should be given high priority; 4: Usability catastrophe; imperative to fix this problem. Before the rating process began, evaluators were reminded again of the severity categories and what they imply. In particular, they were asked to consider whether a problem would occur once or would persist every time a user used the device, and to imagine a conversation with the designer of the device about what they would prioritize as a “must immediately fix” usability problem. After each evaluator had independently assigned severity ratings to all the usability problems identified, the evaluators sent their spreadsheets back to the project lead, who compiled each evaluator’s ratings for each usability problem into a master spreadsheet.

To decide if a discussion meeting was warranted, the team agreed that transitioning from a rating of 2 to a rating of 3 was indicative of moving from a minor problem to a major usability problem, so extreme ratings fulfilling the following criteria would be discussed: (a) 2 or more ratings of 0 and 2 or more ratings of 4; (b) 2 or more ratings of 0 and 2 or more ratings of 3; (c) 2 or more ratings of 1 and 2 or more ratings of 4; (d) 2 or more ratings of 1 and 2 or more ratings of 3; (e) 2 or more ratings of 2 and 2 or more ratings of 3; (f) 2 or more ratings of 2 and 2 or more ratings of 4. The project lead then arranged for a discussion of the findings among the evaluators based on the ratings in the final spreadsheet.
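Criteria (a) through (f) cover every pairing of a low rating (0, 1, or 2) with a high rating (3 or 4), so they can be read as a single rule: at least two evaluators share one low rating and at least two share one high rating. The following Python sketch illustrates that reading. The function name and the example ratings are hypothetical and are not taken from the study materials.

```python
from collections import Counter

LOW_RATINGS = (0, 1, 2)   # not a problem, cosmetic, minor
HIGH_RATINGS = (3, 4)     # major, catastrophe

def needs_discussion(ratings):
    """Return True if a problem's ratings meet any of criteria (a)-(f).

    Assumed reading of the criteria: two or more evaluators gave the same
    low rating AND two or more evaluators gave the same high rating.
    """
    counts = Counter(ratings)
    low_cluster = any(counts[r] >= 2 for r in LOW_RATINGS)
    high_cluster = any(counts[r] >= 2 for r in HIGH_RATINGS)
    return low_cluster and high_cluster

# Hypothetical ratings from seven evaluators for three problems.
print(needs_discussion([0, 0, 4, 4, 2, 1, 3]))  # two 0s and two 4s: criterion (a)
print(needs_discussion([2, 2, 3, 3, 1, 0, 4]))  # two 2s and two 3s: criterion (e)
print(needs_discussion([1, 1, 1, 2, 2, 2, 3]))  # only one high rating: no discussion
```

Under this literal reading, a single rating of 3 together with a single rating of 4 does not trigger a discussion, because no one high rating is shared by two evaluators.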

Tasks Evaluated in Study

We evaluated the usability of the exoskeleton during four tasks, in order: assembling the device, donning it, doffing it, and finally disassembling and storing it. The device comes disassembled in a case and requires the user to first assemble it, and then don the backpack or hip carry to use the device. Each evaluator independently assembled, donned, doffed, and disassembled the device to evaluate its usability during these tasks. We documented problems in each task separately, keeping notes on the usability problems we encountered and the heuristics the device violated in each task phase.

Statistical Analysis

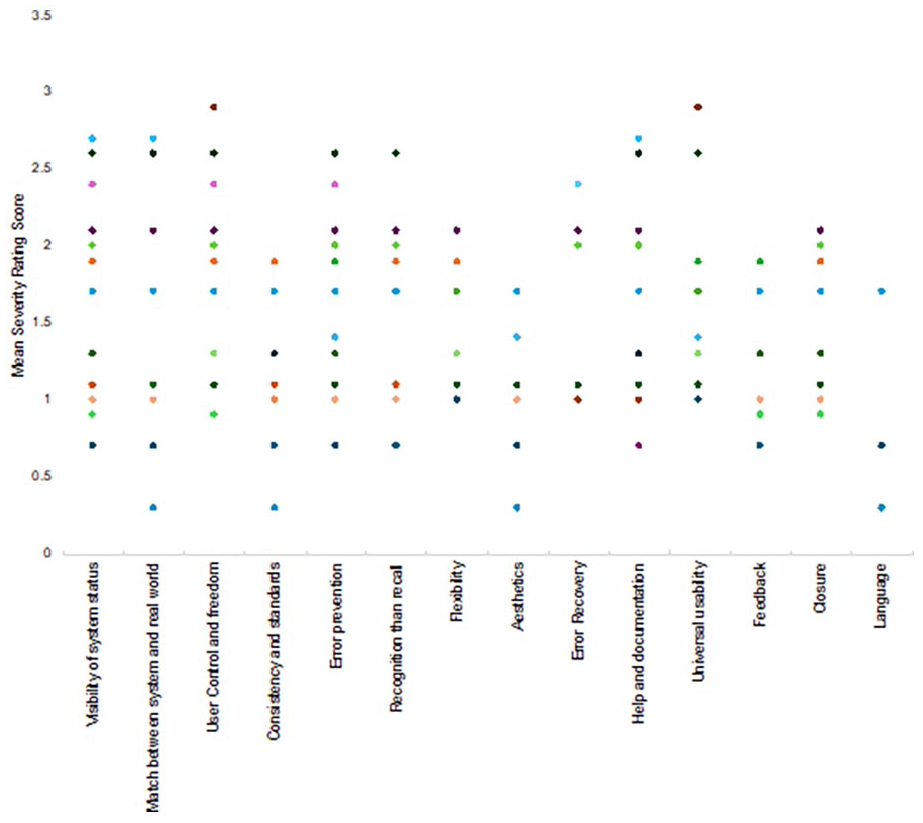

Four sets of descriptive analyses were conducted, with accompanying visualizations: (1) a frequency distribution of usability problem counts by heuristic, to determine which heuristics were violated most often; (2) a binning analysis of mean severity ratings over all tasks and all problems identified; (3) dot plots relating the mean severity scores of all problems to each heuristic; and (4) box plots comparing the relative severity of usability problems across task phases.
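As an illustration of analysis (1), the sketch below tallies how many problems were tagged with each heuristic, allowing one problem to violate several heuristics. The record layout, problem labels, and values are hypothetical placeholders rather than the study's data, and the exact tallying procedure used in the study is an assumption here.

```python
from collections import Counter

# Hypothetical records for illustration only; these are not the study's data.
problems = [
    {"task": "assembly", "label": "P1",
     "heuristics": ["visibility of system status", "error prevention"]},
    {"task": "donning", "label": "P2",
     "heuristics": ["visibility of system status", "help and documentation"]},
    {"task": "doffing", "label": "P3",
     "heuristics": ["user control and freedom"]},
]

def violation_counts(records):
    """Count, per heuristic, how many problems were tagged as violating it."""
    counts = Counter()
    for record in records:
        # set() guards against a heuristic being listed twice for one problem
        counts.update(set(record["heuristics"]))
    return counts

counts = violation_counts(problems)
total_violations = sum(counts.values())
for heuristic, n in counts.most_common():
    print(f"{heuristic}: {n} ({n / total_violations:.0%} of violations)")
```

Because one problem can map to several heuristics, the total number of violations can exceed the number of problems.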

Results

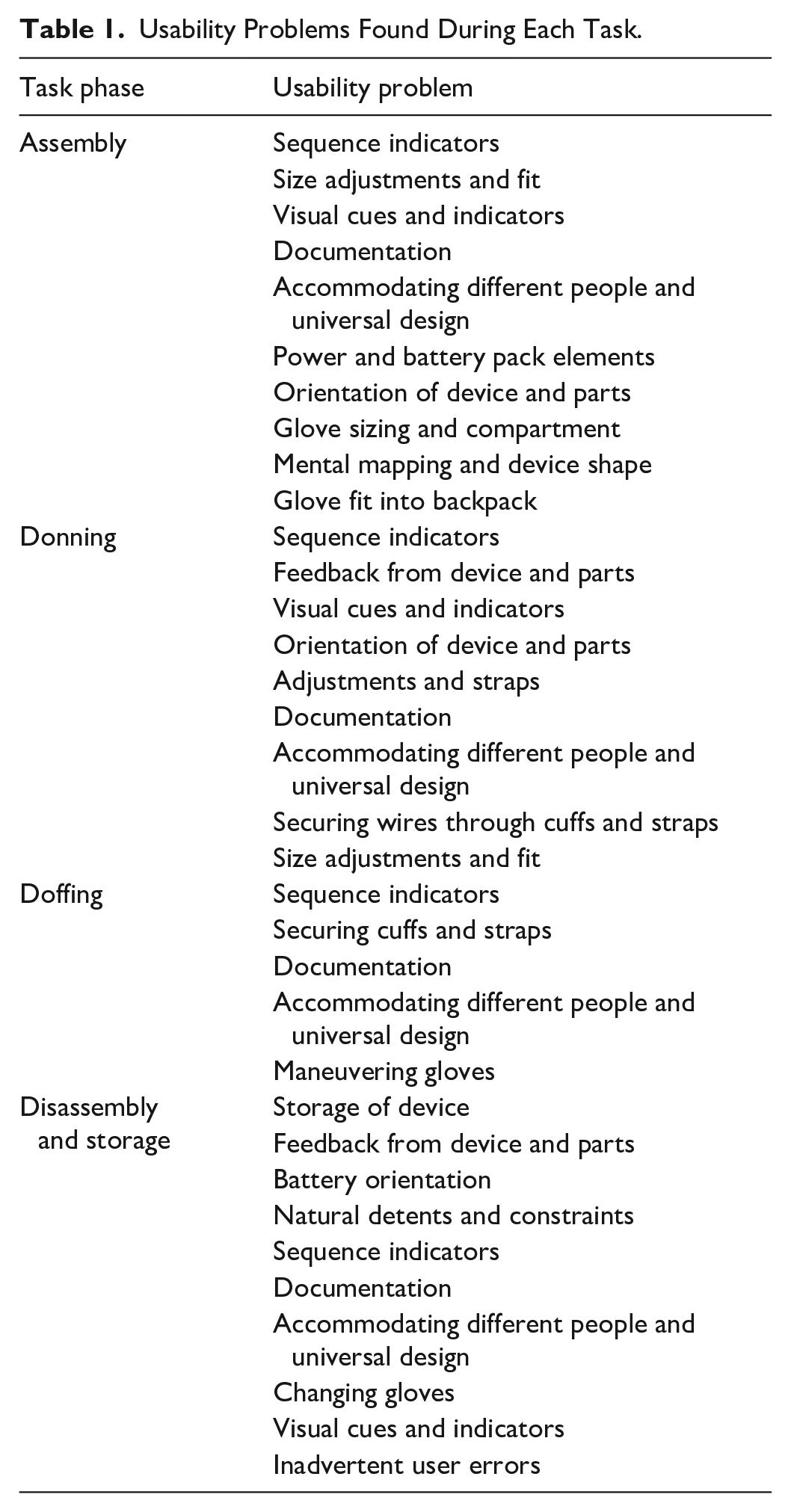

Across all seven evaluators, our heuristic evaluation identified 10 usability problems during assembly of the exoskeleton, 9 during donning, 5 during doffing, and 10 during disassembly and storage. These usability problems, organized by task, are presented in Table 1.

Table 1. Usability Problems Found During Each Task.

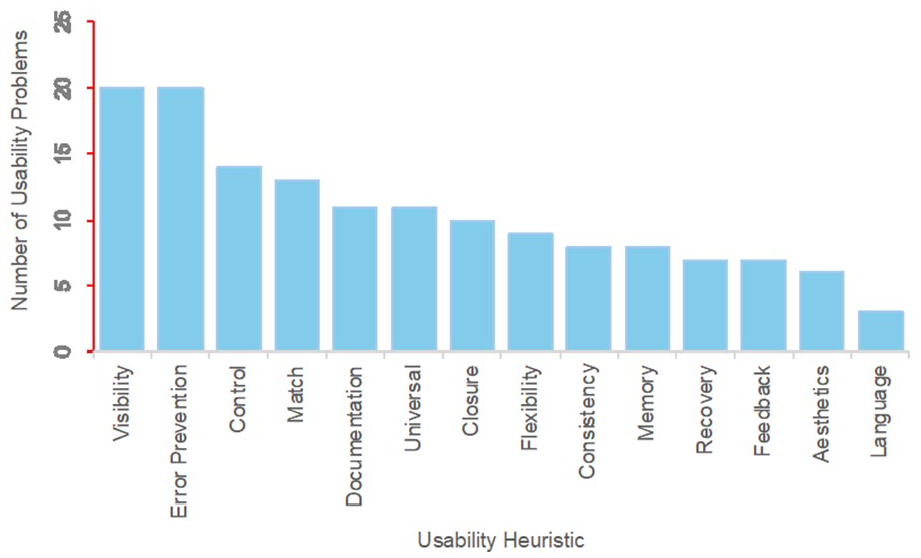

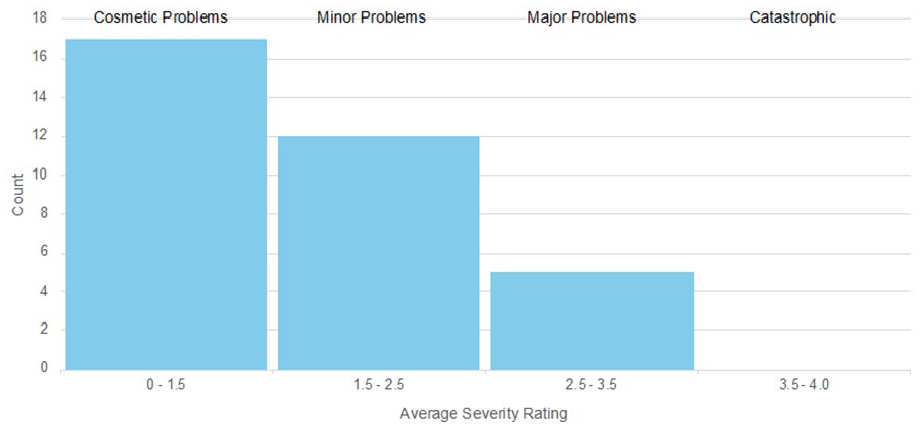

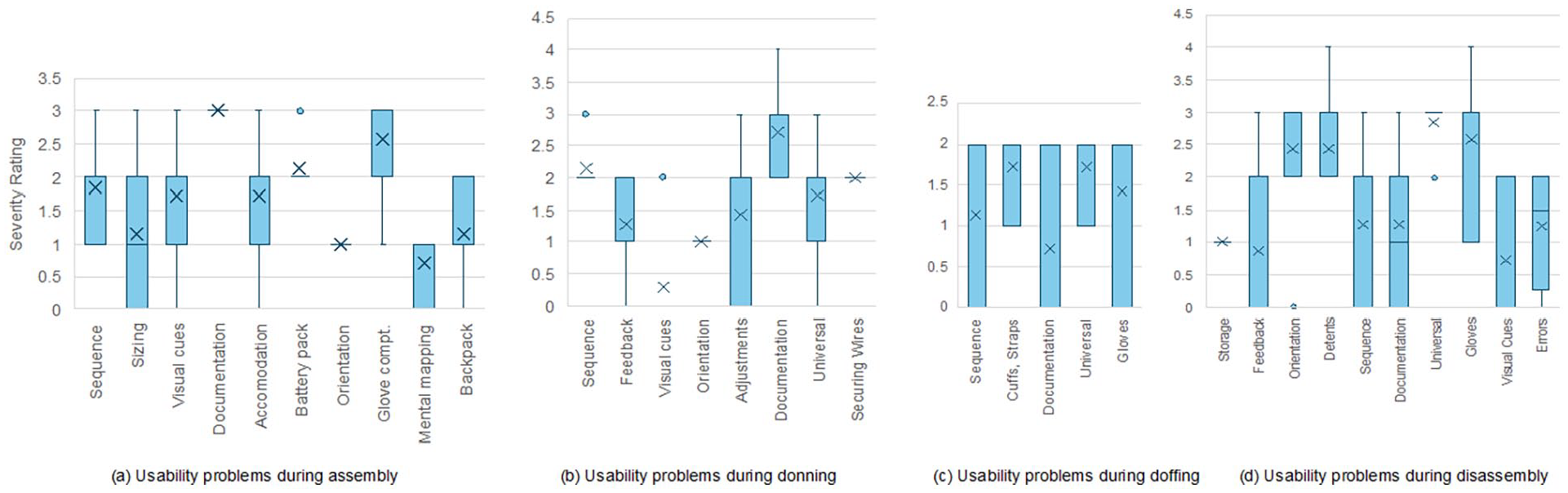

For the usability problems found, we generated frequencies of each heuristic violated. Across the 34 usability problems uncovered over the four tasks (assembly, donning, doffing, and disassembly), the visibility of system status and error prevention heuristics were the most violated, followed by user control and freedom and match between system and real world (Figure 1). Together, these heuristics account for nearly 45% of the 147 total violations over all tasks, problems, and heuristics evaluated. Figure 2 summarizes the severity of the problems found in the device, based on the mean of the severity ratings assigned to each problem by the seven evaluators. Because the mean severity rating is not necessarily a whole number, the ratings in Figure 2 are divided into four bins (Zhang et al., 2003): a mean rating at or above 3.5 is a catastrophic usability problem; a mean rating at or above 2.5 but below 3.5 is a major usability problem; a mean rating at or above 1.5 but below 2.5 is a minor problem; and a mean rating below 1.5 is a cosmetic problem. As Figure 2 shows, there were no catastrophic problems, 5 major problems, 12 minor problems, and 17 cosmetic problems with the device. Based on the severity ratings, usability problems during doffing were rated less severe than problems during assembly, donning, and disassembly (Figure 3). Donning and disassembly revealed problems that most evaluators rated higher in severity than problems during assembly, and no usability problem during assembly was rated >3.
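The binning rule described above can be expressed as a short Python sketch. The thresholds follow the text; the function name, problem labels, and ratings are hypothetical and serve only to show the rule in use.

```python
from statistics import mean

def severity_bin(mean_rating):
    """Map a mean severity rating (0 to 4) to the four bins described in the text."""
    if mean_rating >= 3.5:
        return "catastrophic"
    if mean_rating >= 2.5:
        return "major"
    if mean_rating >= 1.5:
        return "minor"
    return "cosmetic"

# Hypothetical ratings from seven evaluators; not the study's data.
hypothetical_ratings = {
    "problem A": [3, 3, 2, 3, 4, 2, 3],
    "problem B": [2, 2, 1, 2, 1, 2, 1],
    "problem C": [1, 1, 2, 0, 1, 2, 1],
}

for label, ratings in hypothetical_ratings.items():
    m = mean(ratings)
    print(f"{label}: mean {m:.2f} -> {severity_bin(m)}")
```

Each lower bound is inclusive, so a mean of exactly 2.5 counts as a major problem and a mean of exactly 1.5 counts as a minor one.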

Figure 1. Number of usability problems by usability heuristic across assembly, donning, doffing, and disassembly tasks.

Figure 2. Average severity rating of usability problems binned by cosmetic, minor, major, and catastrophic problems.

Figure 3. Usability problems and task phases: (a) Usability problems during donning. (b) Usability problems during doffing. (c) Usability problems during disassembly. (d) Usability problems during disassembly.

As Figure 4 shows, every heuristic except consistency and standards, flexibility and efficiency of use, aesthetic and minimalist design, error recovery, informative feedback, closure, and users’ language received an average rating >2.5 for at least one problem. The five major problems map to violations of the remaining heuristics.

Figure 4. Usability problems plotted by heuristic and severity.

Discussion

Based on the average severity ratings, the study documented five major usability problems. We felt these problems would persist every time a user used the device, yet were easy to fix, and hence should be priorities for the designers of the device: (1) difficulty in assembling the glove connector-powerpack pair, given the lack of indicators and visibility on the device showing how to do this; this is particularly a problem because the connector has the various glove sizes attached to it, so it would affect the fit and sizing of gloves for the user, and the design would not prevent user errors; (2) inadequate documentation on assembling the glove connector to the powerpack, which does not address the problem described in (1); (3) discrepancies between the arm cuffs included with the device and the cuffs specified in the manual, which caused problems in donning the cuffs to hold the glove cord securely in place and led to confusing and erroneous sequences during donning; (4) tedious, difficult disassembly tasks that require strength, leverage, and difficult actions from the user, reflecting a lack of universal design in the device during disassembly; and (5) difficulty in disassembling the glove connector-powerpack pair to remove the glove and change glove sizes if needed, because the device lacked visual indicators to guide this action.

Some additional minor problems we found included: (1) a lack of assembly and donning sequence indicators on the device; (2) sizing and fitting problems with the gloves; and (3) a lack of visual cues and indicators on the device for elements such as the front and back sides of the glove, the left and right gloves, and the orientation and insertion of the batteries into the pack, which would make it difficult for users to mentally map the orientation of parts. Furthermore, the glove shape did not match the shape of the hand, the design had no constraint to guide fitting the glove onto the hand, and the straps made quick adjustments difficult. Overall, we found the device to be well designed, with no catastrophic usability problems and most of the usability problems being either cosmetic or minor in nature. The major problems we found are easy to address, and addressing them is essential for seamless user interaction.

Conclusions

In evaluating the potential for large scale industrial implementation of exoskeletons in general, our view is that manufacturers will need to consider several critical product design elements: whether the device is portable and storable; whether it is simple and parsimonious in design, with a minimal number of parts; whether it is easily cleaned and sanitized; whether it is maintainable and repairable; its quality and reliability over the long run (in providing the active or passive support promised by the device over extended work shifts, 24/7, all through the year); and, importantly, how much it costs. From the perspective of industry, we think many critical factors will affect adoption of exoskeletons, including the space requirements and constraints for storing these devices (some devices, such as the hand exoskeleton we evaluated, are small and fit in a small case; other exoskeletons that support the shoulder and the back are larger and need more storage space), how quickly they can be put on and taken off with minimal to no training burden (much like regular personal protective equipment such as hard hats and safety glasses), and, most importantly, the costs and benefits to industries of widely implementing them for every worker.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.