Abstract

As research interest in emotions and affect has increased in Human Factors and Human-Computer Interaction (HCI), extensive research has been conducted on developing emotion detection technologies. However, little research has been conducted on the necessary preceding steps, for example, identifying what emotions are involved in specific use cases and how those emotions need to be treated. The present paper introduces a novel affective design tool, “Task Emotion Analysis (TEA),” following the traditional Human Factors method, Task Analysis. TEA involves basic task analysis but also identifies (1) what types of emotions are induced from or engaged with each task, (2) why they are induced, and (3) how they can be mitigated through interactions with technologies. Sample TEA results are provided to demonstrate its effectiveness. We hope that TEA can provide a legitimate method for the design of empathic interfaces and contribute to conducting deeper emotion analysis in Human Factors and HCI research.

Introduction

In Human Factors, human behavior and cognition have dominated research, but emotions and affect have been peripheral and sporadic until recently (Jeon, 2017). Since the introduction of terms such as Affective Computing (Picard, 1997) and Emotional Design (Norman, 2004), more research has been conducted on emotions and affect. However, research has focused on developing emotion detection technologies (Wang, Lee, et al., 2022; Wang, Song, et al., 2022), with little attention given to the necessary preceding steps–for example, identifying the specific emotions involved in particular use cases and understanding how these emotions need to be addressed. The present paper proposes a preliminary Task Emotion Analysis (TEA), derived from the traditional Human Factors method, Task Analysis.

In emotion research in the context of technology use, various approaches have been employed. Affective Computing (Picard, 1997) aims to create a computer that intelligently communicates with humans by recognizing and expressing emotions. With the recent development of machine learning and AI, emotion recognition techniques have proliferated. Kansei Engineering (Nagamachi, 2002) is a domain and method per se for tackling these recent developments. In Kansei Engineering, researchers attempt to identify a perceived image (Kansei) of a product and obtain that image using different psychophysiological experiments. However, it is a process of creating the core image of the product rather than defining its functions and structure. Although terms like Emotional Design (Norman, 2004) or Hedonomics (Helander, 2002) have served as catchphrases in user experience design, no specific method has been proposed to accomplish them. In sum, there have been various approaches, but few attempts have been made to create new methods for considering emotions and affect in Human Factors.

Task analysis is one of the widely used methods in Human Factors to identify the components of a task, as well as users’ perceptual, cognitive, and behavioral demands related to the task (e.g., Gordon, 1995; Roth & Mumaw, 1995). The earliest efforts were focused on applying scientific approaches to break down tasks into basic and systematic components (Annett & Stanton, 1998). Cognitive Task Analysis (CTA) focuses on the cognitive components of users’ behavior while performing a task (Chipman et al., 2000). It identifies the mental processes and skills required for proficient task performance and recognizes changes that occur during skill development (Redding et al., 1990). In Human Factors, researchers have applied the existing methods to other domains or have creatively modified them for new applications–for example, the Sound Card Sorting task was derived from the traditional Card Sorting task (Jeon, 2015). Likewise, we adapted Task Analysis to articulate emotional demands related to the task. There has been a similar attempt (Crowson et al., 2020), but actual analysis was not provided in their paper. By analyzing plausible emotional states triggered by each event in the interaction with artifacts, designers can deeply understand the situation, predict interactions, and alter user experiences.

TEA involves basic task analysis, but provides (1) types of emotions induced by event triggers or engaged with tasks, (2) why they are induced, and (3) how they can be mitigated in the process of interactions with technologies. We provide descriptions of TEA components and structure, demonstrate sample analyses in three use cases to show its effectiveness, and discuss its implications.

Task Emotion Analysis (TEA)

The goal of Task Emotion Analysis is to analyze each step of the task with the target user’s emotional states in mind to understand their psychological states, predict their next behaviors, and update their interactions and experiences accordingly. This analysis will ultimately help designers create emotionally engaging (including not emotionally bothered or frustrated) interactive artifacts.

Among different approaches to task analysis, such as functional (Rasmussen, 1986), ecological (Gibson, 1979), or procedural approaches, the event trigger model (Dix et al., 2004) was selected for Task Emotion Analysis. This choice was made because emotion is defined as physiological responses of the brain and body to threats and opportunities (Damasio, 1994), or “triggering stimuli.” Our emotion analysis was therefore centered around event triggers. We considered various task analysis representations, including List, Hierarchical, Functional Flow, Decision/Action Diagram, and Operational Sequence Diagram, but adopted the List-type task analysis because its analysis components are easy to modify.

The iterative design process commenced with a preliminary analysis using the existing list-type task analysis. To enhance generalizability, we selected three interactive scenarios: assistive robotics, automated vehicles, and multi-agents. Researchers independently analyzed emotions for each task using the list-format task analysis and then compared and contrasted the components of each analysis, refining the level of analysis and the definition of essential components through repeated iterations.

Regarding the definition of analysis components, the iterative elaboration process yielded five core components–event, trigger, action duration, emotion mappings, and design implications. Event is defined as a task that consists of triggers, which can be initiated by a user or system. Recognizing the transitory nature of emotions, action duration becomes crucial for tracing their dynamic changes. Emotion mappings can be accomplished using either a dimensional or discrete approach. The dimensional approach diagram visualizes the trajectory of the user’s emotional states, whereas the discrete approach allows designers to clearly label states, facilitating ideation of intervention or mitigation strategies.
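The five components described above can be sketched as a small data structure. This is only an illustrative encoding under our own assumptions, not a format prescribed by TEA: the class names `Trigger` and `Event`, all field names, the (valence, arousal) range, and the helper `as_list_rows` are hypothetical.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Tuple


@dataclass
class Trigger:
    """One trigger inside an event (all field names are illustrative)."""
    description: str
    initiated_by: str                 # "user" or "system"
    action_duration_s: float          # duration of the action, in seconds
    discrete_emotions: List[str] = field(default_factory=list)
    # Optional dimensional mapping as (valence, arousal); the [-1, 1] range is assumed.
    valence_arousal: Optional[Tuple[float, float]] = None
    design_implication: str = ""


@dataclass
class Event:
    """A task step that consists of one or more triggers."""
    name: str
    triggers: List[Trigger] = field(default_factory=list)

    def total_duration_s(self) -> float:
        """Sum of the action durations of all triggers in this event."""
        return sum(t.action_duration_s for t in self.triggers)


def as_list_rows(events: List[Event]) -> List[str]:
    """Flatten events into list-type rows, one row per trigger."""
    rows = []
    for i, event in enumerate(events, start=1):
        for t in event.triggers:
            emotions = ", ".join(t.discrete_emotions) or "-"
            rows.append(
                f"Event {i} ({event.name}) | {t.initiated_by}: {t.description} | "
                f"{t.action_duration_s:g} s | {emotions} | {t.design_implication}"
            )
    return rows


if __name__ == "__main__":
    # Placeholder content, not taken from any of the sample analyses.
    demo = Event("demo", [Trigger("system plays a chime", "system", 2.0,
                                  ["surprised"], None, "soften the chime")])
    print("\n".join(as_list_rows([demo])))
```

Keeping both a discrete label list and an optional dimensional coordinate on each trigger mirrors the two emotion mapping approaches; an analyst could fill in either or both.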

Sample Analysis

To depict the example analysis, we chose three different task domains. Then, we applied the TEA method to analyze each step of the tasks with users’ plausible emotional states. This process allowed us to revisit the structure of the TEA and update it.

Sample Scenario 1: Assistive Robotics

The first example domain is within the realm of assistive robotics, specifically targeting interventions for children with autism spectrum disorder (ASD). In these interventions, robots are programmed to display a variety of emotions through gestures and facial expressions, enhancing the children’s ability to interpret and engage in social interactions, an area where individuals with ASD often experience challenges. The parents of children with ASD often seek interventions to address sensory processing difficulties. Emotion-based robotic interventions, utilizing video-based instruction and socially assistive robots, have become popular due to their potential for improving sensory and emotional processing in children with ASD.

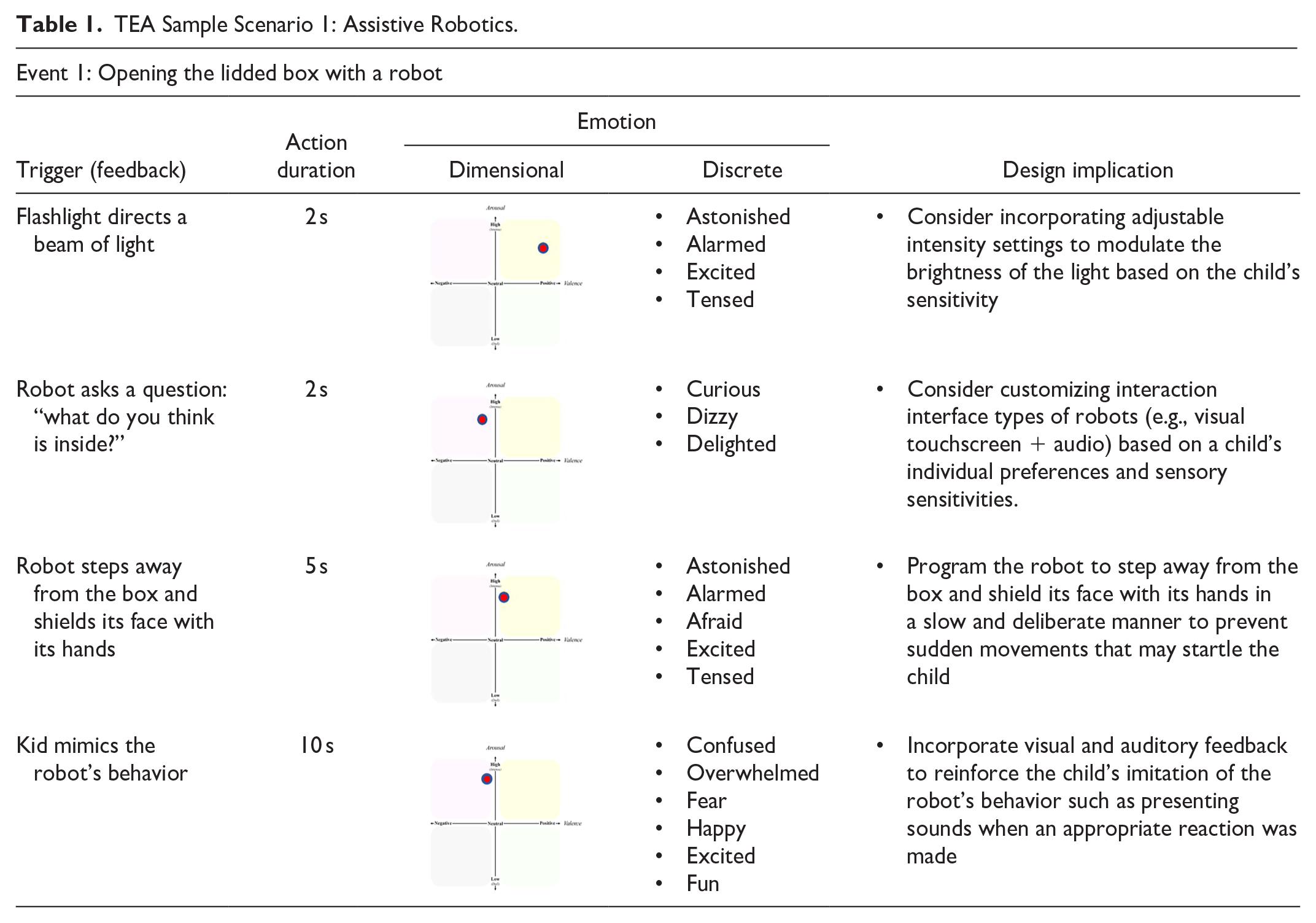

Our analysis focused on specific interactions between a robot and a child with ASD, applying the TEA method to examine each step of the interaction and identify plausible emotional responses. This scenario was developed with input from experts in robotic interventions and utilized in studies to optimize the effectiveness of robot-assisted therapy (Javed et al., 2019). In the presented scenario, the robot interacts with a child using sensory stimuli such as light and sound to elicit and gauge emotional responses. For instance, the robot might use a flashlight to create a visual stimulus, and then observe and react to the child’s emotional response, as detailed in Table 1. Each interaction aims to help the child better process sensory information and express emotions, both critical components in ASD therapy. Our TEA results, as summarized in the table, outline the triggers, action durations, and specific emotions experienced by the child, providing valuable insights for refining robot behaviors and interaction strategies to enhance therapeutic outcomes.

TEA Sample Scenario 1: Assistive Robotics.

Sample Scenario 2: Takeover Request in Automated Vehicles

The second example domain pertains to tasks within the realm of automated driving vehicles. In level 3 automated driving, also referred to as “conditional automation,” drivers are not required to keep hands on the steering wheel while the vehicle controls its speed and direction (Society of Automotive Engineers, 2021). However, when the vehicle encounters challenging situations beyond its control capabilities, such as adverse weather conditions, road hazards, or construction zones, the system may prompt the driver to regain control of the vehicle. At this stage of automated driving, there is an interaction process between the in-vehicle agent and the driver when requesting a transfer of control.

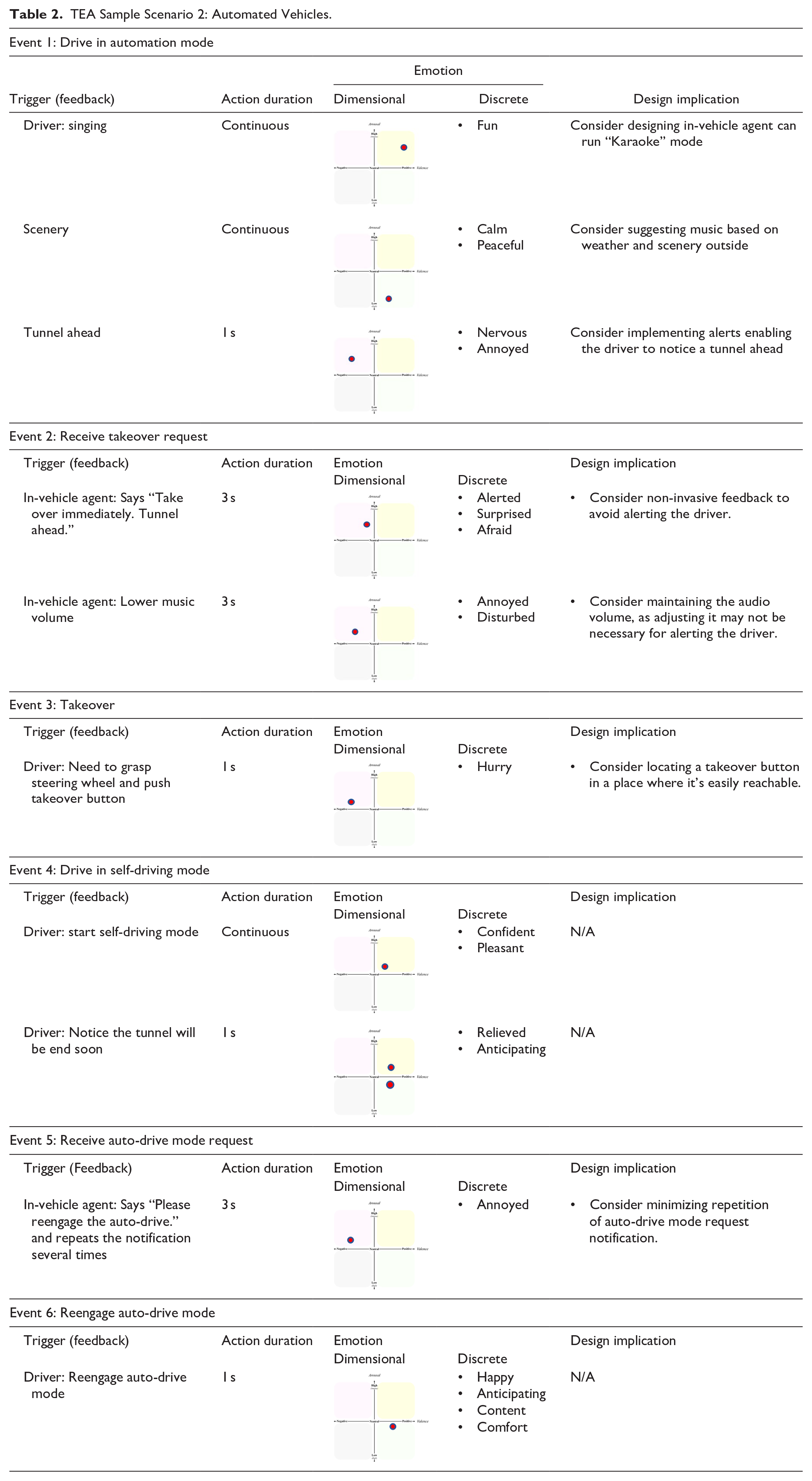

Our analysis focused on specific events involving a takeover request scenario designed by Wang, Lee, et al. (2022) and Wang, Song, et al. (2022). In their study, the vehicle in level 3 automation initiates a takeover request as it approaches a tunnel. When the driver receives a speech-type takeover request along with a visual notification on the navigation panel from an in-vehicle agent, they must cease their current activities and engage in driving by using a toggle attached to the steering wheel. After passing the hazard zone, the in-vehicle agent asks the driver to reengage in the automated driving mode.

Table 2 shows our results of TEA for the takeover request scenario in automated level 3 driving situations. Each event represents a stage of situations that can occur during the transferring control task. For example, in Event 2, a trigger that provokes an emotion from the user (driver) is when the in-vehicle agent says “Take over immediately. Tunnel ahead.” This process takes around 3 s, and the driver may feel alerted, surprised, or afraid at this moment. In this case, a designer who uses our TEA could consider a non-invasive feedback method to avoid eliciting negative emotions in drivers while maintaining urgency in the display.
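As a rough illustration, Event 2 can be written down as a single list-type entry using only the values stated above, and then screened for emotions a designer may want to mitigate. The dictionary keys, the `NEGATIVE_LABELS` set, and the helper `negative_emotions` are hypothetical; which labels count as negative is a judgment the analyst would make, not something fixed by TEA.

```python
# Event 2 of the takeover request scenario, encoded with values from the text.
event_2 = {
    "event": 2,
    "trigger": "In-vehicle agent says: 'Take over immediately. Tunnel ahead.'",
    "initiated_by": "system",
    "action_duration_s": 3.0,  # "around 3 s" in the text
    "emotions": ["alerted", "surprised", "afraid"],
    "design_implication": (
        "Consider non-invasive feedback that avoids negative emotions "
        "while maintaining urgency"
    ),
}

# Assumption: an illustrative set of labels treated as negative by the analyst.
NEGATIVE_LABELS = {"afraid", "angry", "frustrated", "anxious"}


def negative_emotions(entry, negative_labels=NEGATIVE_LABELS):
    """Return the entry's emotion labels that are in the negative set, in order."""
    return [label for label in entry["emotions"] if label in negative_labels]


if __name__ == "__main__":
    flagged = negative_emotions(event_2)
    if flagged:
        print(f"Event {event_2['event']}: consider mitigation for {flagged}")
```

Under this assumed label set, only "afraid" is flagged for Event 2; a different analyst might reasonably also treat "surprised" as a candidate for mitigation.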

TEA Sample Scenario 2: Automated Vehicles.

Sample Scenario 3: Multi-Agents

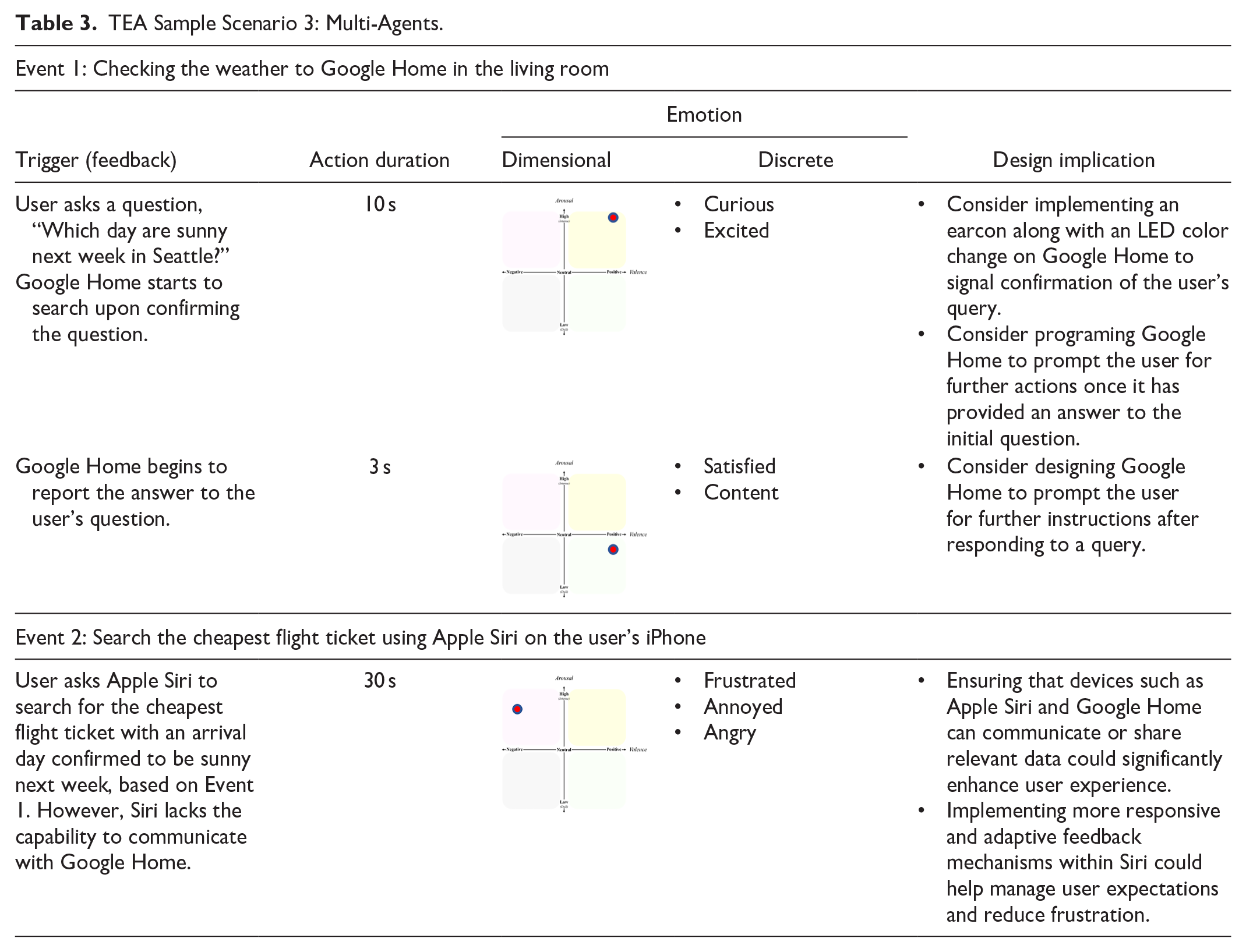

The third example of Task Emotion Analysis contains two sample events in Table 3. The design implication of this example is that multiple agents should be able to stay aware of the context of user activities across multiple devices using real-time data (Faye et al., 2015; Santos et al., 2010). Until Event 2 takes place, the user does not realize that Google Home and Apple Siri are not synchronized with user activities across the user’s multiple devices, so the user’s emotional state shifts from the first and fourth quadrants to the second between Event 1 and Event 2. This means that the user’s emotion can change from a positive state to a negative state within a short window of time (1 min).
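The quadrant shift described above can be made concrete with a minimal sketch. It assumes the common layout with valence on the horizontal axis and arousal on the vertical axis, quadrants numbered counterclockwise from the upper right, and points on an axis assigned to the non-negative side. The coordinates in the example are invented for illustration and are not read from Table 3; the function names are hypothetical.

```python
from typing import List, Tuple

Point = Tuple[float, float]  # (valence, arousal)


def quadrant(valence: float, arousal: float) -> int:
    """Map a (valence, arousal) point to a quadrant number 1-4.

    Assumed convention: 1 = positive valence, high arousal; 2 = negative
    valence, high arousal; 3 = negative valence, low arousal; 4 = positive
    valence, low arousal. Zero is treated as non-negative.
    """
    if valence >= 0 and arousal >= 0:
        return 1
    if valence < 0 and arousal >= 0:
        return 2
    if valence < 0:
        return 3
    return 4


def positive_to_negative_shifts(trajectory: List[Point]) -> List[int]:
    """Return indices where valence moves from non-negative to negative."""
    return [
        i for i in range(1, len(trajectory))
        if trajectory[i - 1][0] >= 0 and trajectory[i][0] < 0
    ]


if __name__ == "__main__":
    # Hypothetical trajectory: two points during Event 1, one during Event 2.
    trajectory = [(0.6, 0.5), (0.4, -0.3), (-0.5, 0.7)]
    print([quadrant(v, a) for v, a in trajectory])
    print(positive_to_negative_shifts(trajectory))
```

Flagging the indices where valence turns negative gives a designer candidate points in the task sequence at which to consider an intervention.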

TEA Sample Scenario 3: Multi-Agents.

The user’s end goal is to book the cheapest one-way flight ticket to Seattle under a specific weather condition, and the user prioritizes the sub-task of finding out which days are sunny next week using Google Home in the living room at home. While riding in a taxi, the user wants to reach the end goal on the user’s iPhone using Apple Siri, but Siri does not have the capability to track the user’s activity in Event 1. From Event 1 to Event 2 of Table 3, we can observe that the two devices and agents, Google Home at the user’s home and Apple Siri on the user’s iPhone, do not communicate with each other, so the user needs to repeat the command, “Which days are sunny next week in Seattle?” in Event 2. This experience can cause a change in the user’s emotional state, as described both in the dimensional graphs of arousal and valence and in the discrete descriptions in Table 3. Here, we can imagine that the user’s acoustic tone in Event 2 may differ from that in Event 1 if a real-time emotion analysis were performed (Faye et al., 2015; Val-Calvo et al., 2020). Therefore, we suggest that these two agents across multiple devices should communicate with each other by exchanging information about the user’s activities and should be able to recognize the user’s current emotional state during voice interaction with the user. This way, the user would be able to achieve the goal seamlessly using multiple agents across multiple devices while the user’s overall emotions stay on the positive side across the events.

Discussion

To identify plausible emotions associated with specific tasks and understand their implications in the interactive artifact design process, we devised a novel analysis and design tool, Task Emotion Analysis, by adapting Cognitive Task Analysis. While elaborating on the constructs and format, we gained valuable insights. As in cognitive task analysis, the level of analysis can vary. Consequently, the level of analysis has not been standardized across the three examples provided. Skill and discernment are essential for researchers to select the level that best fits their objectives. Norman’s (2004) emotion model was used to guide strategic approaches for alternative designs. Example 1 focused more on the visceral level, whereas Examples 2 and 3 placed more emphasis on the behavioral level. Given that task analysis is a decomposition technique for specific tasks, reflective level strategies may not manifest as expected. Concerning emotion mappings, instances arose where emotions were mapped concurrently onto opposite ends of the valence dimension (e.g., excited vs. nervous). Further investigation into the types of design strategies that can emerge in such cases would be intriguing. Familiarity with diverse emotion theories and models (e.g., Jeon, 2017) can assist researchers in formulating design implications grounded in psychological mechanisms. However, delving into underlying mechanisms may extend beyond the scope of the analysis and render it less accessible, so we excluded them. While the proposed method appears promising and practically implementable, refinement and validation through empirical studies are warranted.

Limitations

The emotional states discussed in the present paper focus solely on integral affect, that is, emotions derived from the task itself. It would be fairly difficult to predict users’ incidental affect (i.e., emotions derived from the task-irrelevant context). Suppose that a user is furious because of a fight with a friend right before using the machine. Those emotions will still influence the interaction with the machine. An emotionally intelligent machine could possibly recognize the user’s emotional states and interact with the user dynamically and adaptively. However, it is difficult for designers to predict the emotional situations of everyday life. There might also be backfire effects. Depending on the user group or task domain, affective design can come across to users as a “gimmick” or a bad “joke.” Task Emotion Analysis can be a method to create emotionally engaging interactive products, but the strategy can begin with avoiding emotionally frustrating products or situations. Even though the proposed method seems promising and practically implementable, it should be further refined and validated with empirical studies.

Conclusion and Future Work

To support a more systematic approach to emotional design practice in Human Factors and HCI, we devised a new method, Task Emotion Analysis (TEA). The present paper introduces this new method with sample analyses. We will assess the added value of TEA outcomes through expert interviews. We will also compare its outcomes with those of general Task Analysis to see what emotional aspects this method adds. With more validation, we hope that this method can serve as a legitimate method for emotional design in Human Factors.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.