Abstract

Utilizing advanced machinery in team environments often necessitates reliance on a leader, or “operator,” who is in charge of interfacing with technology directly on the team’s behalf. This is particularly evident in modern military missions, where teams depend on operators of robotic machinery to safely navigate dangerous tasks or hazardous terrain. The present work is part of a larger study on integrating a semi-autonomous quadruped robot (Spot, developed by Boston Dynamics) into military training exercises. This analysis focused on how trust in the operator controlling Spot influenced different aspects of human-robot interaction (HRI) among the team. Operator trust was found to be positively correlated with positive perceptions of the robot, trust in and reliance on the robot, and willingness to use the robot for future exercises. Improving operator trust, thereby shifting the focus to human-human interaction, may prove an effective avenue for bolstering confidence in robotic systems.

Introduction

Increasingly, humans interact with and rely on advanced technological systems in a variety of contexts. O’Neill et al. (2022) drew a distinction between automation (technologies that perform specific, pre-programmed tasks) and more complex autonomous technologies that possess additional decision-making abilities, free from human involvement. These so-called autonomous agents, though highly capable, lack complete autonomy in most practical settings (Soltanzadeh, 2022). Current autonomous agents still require some degree of human interaction for safe application in medical fields, industry, manufacturing, and other high-risk environments. For instance, a computer with autonomous features may require human authorization to execute a task or provide information that influences the human decision (O’Neill et al., 2022). Thus, understanding how the interface influences the system, from how technology is introduced to how it is operated, is crucial to mitigating risk and optimizing overall success.

Appropriately developed trust in autonomous agents is closely tied to a system’s overall ability, performance, and effectiveness (de Visser & Parasuraman, 2011). Yet in team settings, utilizing advanced machinery for a common goal often necessitates the reliance on a leader, or “operator,” who is in charge of interfacing with technology directly on behalf of the team. Consequently, users often interface more directly with the human operator than with the technology itself. This is particularly evident in modern military missions, where teams depend on operators of robotic machinery to safely navigate dangerous tasks or hazardous environments (Billing et al., 2021).

Robots like Spot, a quadrupedal robot developed by Boston Dynamics, can provide value to military teams (Moses & Ford, 2021). As outlined in Spot’s Terms and Conditions of Sale, the weaponization of Spot, like all other robotic technologies developed by Boston Dynamics, is strictly prohibited (Boston Dynamics, 2024). Nevertheless, Spot’s 360° horizontal view, long range, and ability to navigate rocky terrain make the robot useful for reconnaissance, clearing objectives, and enemy distraction, among other tasks. Spot also exhibits some beneficial autonomous functioning in its collision avoidance and self-righting capabilities. In fact, in the past few years, the French army has deployed Spot in training exercises and evaluated its use in various combat scenarios (Quand l’EMIA, 2021). The potential for robots like Spot to add value to nonmilitary teams is clear as well. For instance, the New York City Police Department has relied on Spot robots as public safety tools for investigating bomb threats and surveilling other potentially dangerous areas (Rubinstein, 2023). Spot has also assisted commercial groups in active construction zones for tasks like LiDAR scanning (Wetzel et al., 2022).

While the integration of robots like Spot into teams is becoming more widespread, the role of the operator in these contexts is not well understood. Robots themselves are traditionally regarded as completely autonomous agents (Smithers, 1997), yet this is rarely true. Similar to many other robots, Spot’s movements and feedback must be controlled and interpreted remotely by a human operator through a tablet. Consequently, there is a need to fill the current gap in understanding of operator trust and its ramifications on human-robot interaction (HRI) specifically in team environments.

The work presented here, which is part of a larger study on integrating quadrupedal robots like Spot into military teams, aimed to explore this gap. This analysis focused on how trust in the operator controlling Spot influenced different aspects of HRI in the team as a whole. Our research context involved teams of cadets utilizing Spot during training exercises by interfacing with an operator. As such, we hypothesized that operator trust would positively correlate with positive perceptions of the robot, trust in and reliance on the robot, and willingness to use the robot in future exercises and operations.

Method

Participants

To study HRI and operator trust, cadets at the United States Military Academy at West Point were provided access to Spot as a resource during training exercises. Cadets were between 18 and 21 years of age and were inexperienced with using robots in military training. Forty-nine cadet participants (36 male-identifying and 13 female-identifying) engaged directly with the operator of Spot during the exercise (n = 49).

Materials

The Spot Robot

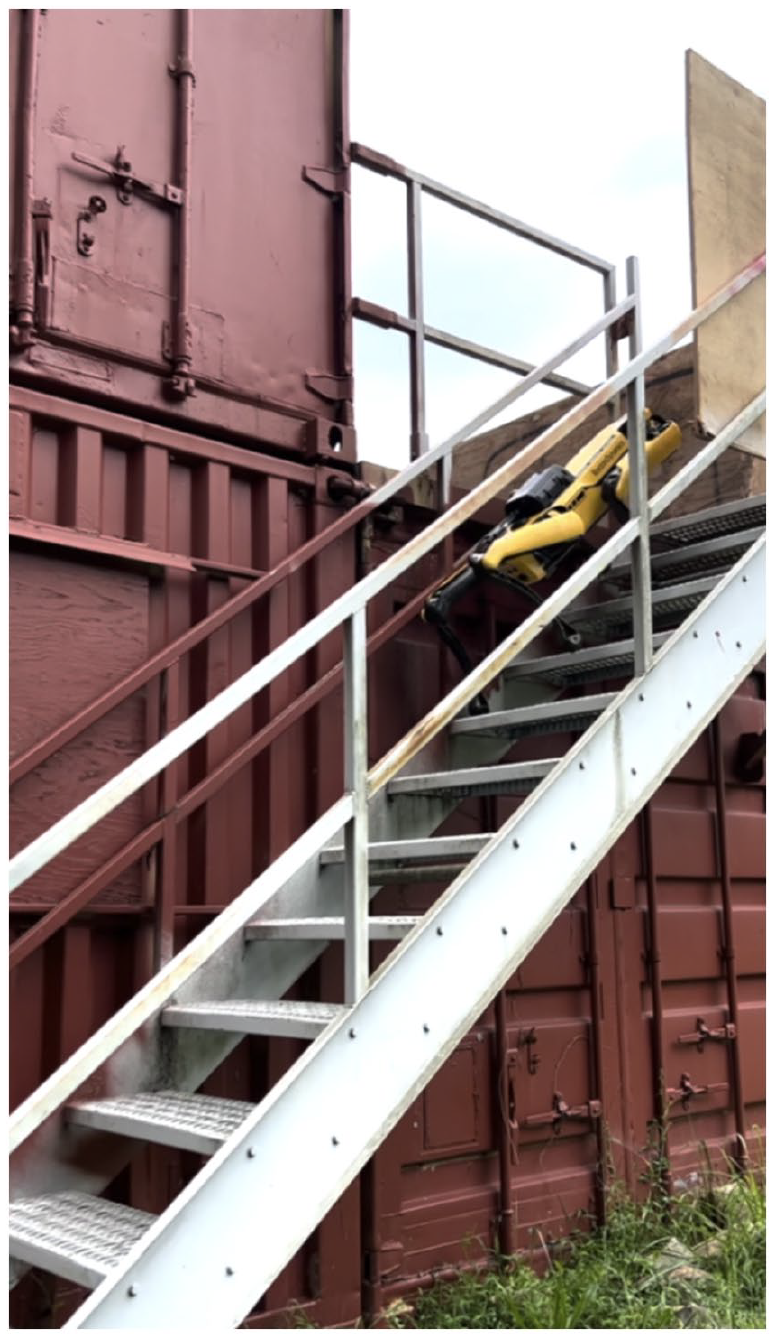

Boston Dynamics’ quadrupedal robot Spot weighs approximately 70 lbs and has maximum dimensions of 1,100 × 500 × 700 mm. Spot can be controlled remotely through either Ethernet connection or WiFi, and its range is long. (Spot was mostly controlled from 50 m or less during the robot interaction task, and the limits of Spot’s range were never encountered during the study.) Spot offers a 360° horizontal view and reaches a maximum speed of 1.6 m/s. The robot can navigate terrain with a slope of up to ±30 degrees and climb steps with heights of up to 300 mm (Figure 1). Spot’s 90 min battery life was sufficient for it to run continuously for the duration of the robot interaction task.

Figure 1. Spot ascending a flight of stairs on the objective.

Robot Interaction Task

Cadets worked in teams of roughly 36, called “platoons,” which were further divided into four more specialized “squads” (about nine cadets each) to complete one of two tasks while interacting with Spot. The first task, called an “attack,” consisted of stealthily approaching and eventually striking a target (an enemy vehicle with a few enemy combatants) from dense woods. The second task, called a “raid,” involved a surprise offensive strike on an objective. This objective contained several buildings and structures that cadets had to “clear” of enemy soldiers, weapons, or other hazards. In both the attack and the raid, one cadet was designated as a “platoon leader” who was charged with additional planning and executive decision-making. Both tasks were designed to simulate modern military missions and were predetermined by the Cadet Field Training regimen, which is an essential part of cadets’ required Cadet Leader Development Training. The cadets were supervised and assessed throughout the task by an instructor, who was present for the duration of the exercise.

Measures

Post-exercise survey data were collected to assess cadets’ attitudes toward both the operator and Spot during the robot interaction task. The Trust in Leaders Scale predictability and competence subsections (Adams et al., 2008) were utilized as a measure of operator trust. Trust in and reliance on Spot were measured using (a) the Trust Perception Scale—HRI 14-item subscale (Schaefer, 2016) and (b) the Lee & Moray trust/reliance questionnaire (Lee & Moray, 1992). The Godspeed scale quantified robot perceptions regarding Spot’s anthropomorphism, animacy, likeability, perceived intelligence, and perceived safety (Bartneck et al., 2008). A Willingness to use Robot in Future scale followed the Technology Acceptance Model intention/usage behavior information (Venkatesh & Davis, 2000). For clarity, questionnaire wording was slightly modified (e.g., “automation aid” was rephrased as “robot”).

Procedure

Spot was operated by a researcher without prior military experience who was trained to proficiency such that their performance in controlling Spot remained consistent between trials. The researcher thus served as a natural language interface operator, ensuring that Spot functioned as well as possible.

The operator waited near the Landing Zone for the cadets to arrive at the site by helicopter. Soon after the team landed, the operator (1) briefly demonstrated Spot and its basic capabilities for the entire platoon and (2) conferred with the platoon leader and instructor on where they wanted Spot to be positioned for most efficient use based on their strategic plan, each for approximately 3 minutes.

The cadets then completed the robot interaction task in the field, spending between 30 and 60 minutes interacting with Spot. As directed by cadets, the operator remotely steered Spot and relayed feedback from its cameras throughout the duration of the exercises (Figure 2). Spot was controlled via Ethernet. At the conclusion of the task, Spot was guided back to the Landing Zone, where the operator replaced Spot’s battery with a fully-charged one and awaited the next platoon’s arrival.

Figure 2. Researcher controlling Spot using a tablet and sharing Spot’s camera views with cadets.

Results

Through the human operator interface, cadets successfully utilized Spot for a variety of purposes to advance their mission. These tasks included reconnaissance of the objective, possible traps, and enemy stationing as well as clearing objectives. By utilizing Spot rather than relying on their human teammates for these tasks, cadets were able to avoid several human casualties.

Post-exercise survey data indicated that cadets had wide-ranging perceptions of both the operator and Spot. Data from the attack exercises (n = 9) and the raid exercises (n = 40) were combined for correlational analysis. Analysis for each measure’s relationship with operator trust scores was performed using the “corrplot” R package (Wei & Simko, 2021). The Bonferroni correction method was implemented to address family-wise error.
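As a rough illustration of the two quantities reported for each measure in this section, the correlation coefficient r and the fitted line y = mx + b, the following Python sketch computes both from paired scores. It is not the authors' analysis code (the study used the corrplot R package); the function names are hypothetical, and the example scores are made up for demonstration and are not study data.

```python
import math


def pearson_r(xs, ys):
    """Pearson product-moment correlation between two equal-length sequences."""
    n = len(xs)
    if n != len(ys) or n < 3:
        raise ValueError("need two equal-length sequences with at least 3 points")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    syy = sum((y - mean_y) ** 2 for y in ys)
    return sxy / math.sqrt(sxx * syy)


def least_squares_line(xs, ys):
    """Slope and intercept of the ordinary least-squares line y = slope * x + intercept."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two equal-length sequences with at least 2 points")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


# Hypothetical survey scores for six respondents (NOT study data).
operator_trust = [2.0, 3.0, 3.5, 4.0, 4.5, 5.0]
robot_trust = [2.5, 2.0, 3.5, 3.0, 4.5, 4.0]

r_demo = pearson_r(operator_trust, robot_trust)
slope_demo, intercept_demo = least_squares_line(operator_trust, robot_trust)
print(f"r = {r_demo:.2f}, y = {slope_demo:.3f}x + {intercept_demo:.3f}")
```

In this sketch the predictor x plays the role of the operator trust score and y the role of one outcome measure, mirroring the layout of the scatterplots in Figure 3.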

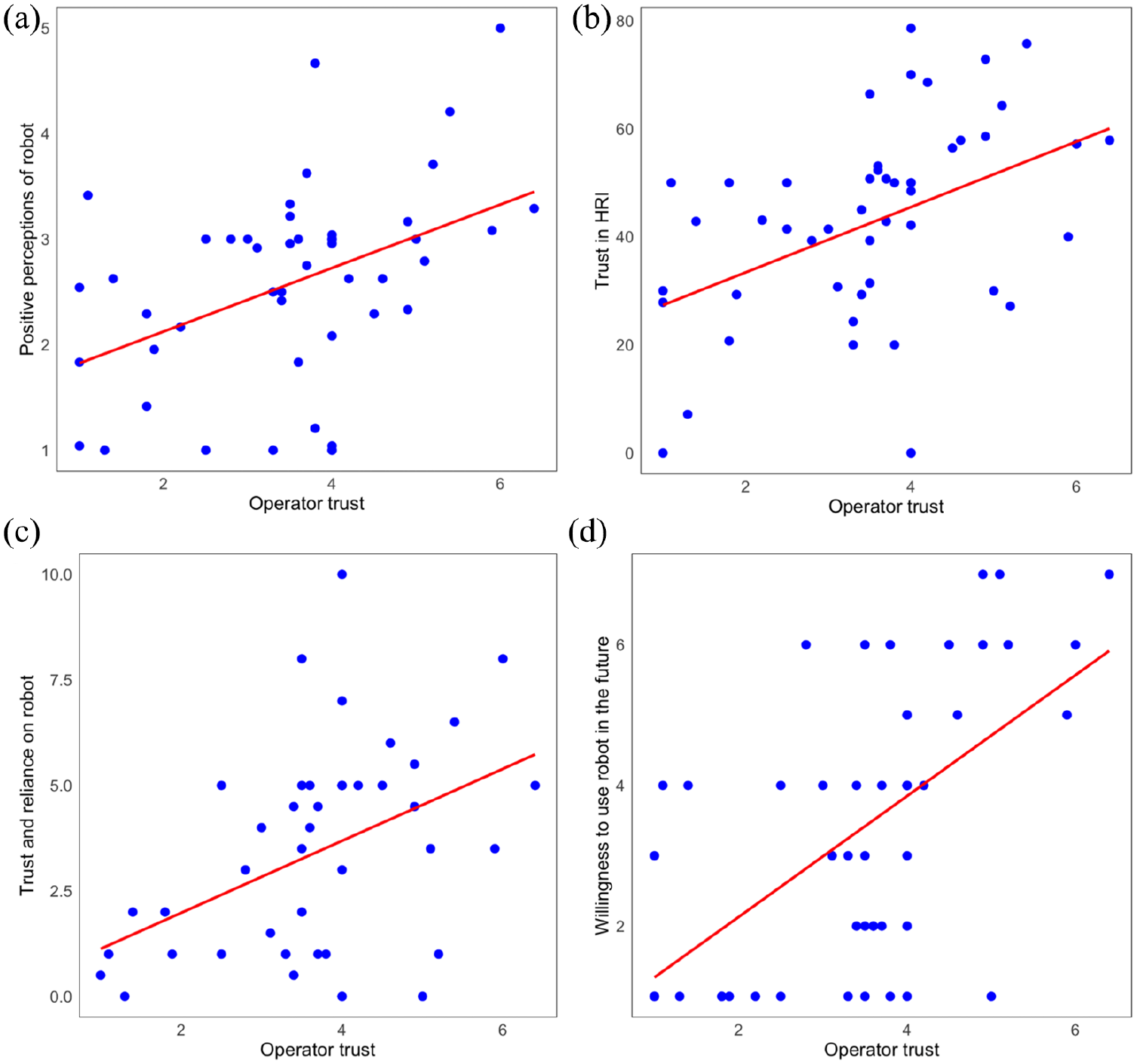

Correlational analysis revealed that, as hypothesized, operator trust was positively and significantly associated with all of the following reported measures:

Positive perceptions of the robot as measured by Godspeed (r[48] = 0.43, p = .002), where y = 0.301x + 1.523 (Figure 3a),

Trust in HRI as measured by the Trust Perception Scale—HRI (r[48] = 0.43, p = .002), where y = 6.05x + 21.3 (Figure 3b),

Robot trust and reliance as measured by the Lee & Moray questionnaire (r[43] = 0.43, p = .003), where y = 0.853x + 0.274 (Figure 3c),

Cadets’ willingness to use the robot for future exercises and operations as measured by Willingness to use Robot in Future (r[47] = 0.58, p < .001), where y = 0.860x + 0.410 (Figure 3d).
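To make the reported statistics easier to interpret, the short Python sketch below takes the r and p values listed above and derives two things: the proportion of variance shared with operator trust (r squared) and whether each p value stays below a Bonferroni-adjusted threshold. The size of the test family is an assumption here (four tests, one per listed measure), since the text does not state how many comparisons the correction covered; "p < .001" is represented by its upper bound.

```python
# Values copied from the list above: (r, reported p).
# "p < .001" is stored as its upper bound, 0.001.
REPORTED = {
    "Godspeed": (0.43, 0.002),
    "Trust Perception Scale HRI": (0.43, 0.002),
    "Lee & Moray": (0.43, 0.003),
    "Willingness to use Robot in Future": (0.58, 0.001),
}

# ASSUMPTION: the family consists of the four tests listed above.
ASSUMED_N_TESTS = len(REPORTED)


def shared_variance(r):
    """Proportion of variance shared by two variables with correlation r."""
    return r * r


def passes_bonferroni(p, n_tests, alpha=0.05):
    """True if p is below the Bonferroni-adjusted threshold alpha / n_tests."""
    return p < alpha / n_tests


for name, (r, p) in REPORTED.items():
    verdict = "yes" if passes_bonferroni(p, ASSUMED_N_TESTS) else "no"
    print(f"{name}: r^2 = {shared_variance(r):.2f}, "
          f"below {0.05 / ASSUMED_N_TESTS:.4f}: {verdict}")
```

Under the four-test assumption the adjusted threshold is .0125, and every reported p value falls below it. Operator trust shares roughly 18% of its variance with each of the first three measures and roughly 34% with willingness to use the robot in the future.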

Figure 3. Scatterplots visualizing the relationships between operator trust and (a) Godspeed scores, (b) Trust Perception Scale—HRI scores, (c) Lee & Moray scores, and (d) Willingness to use Robot in Future scores.

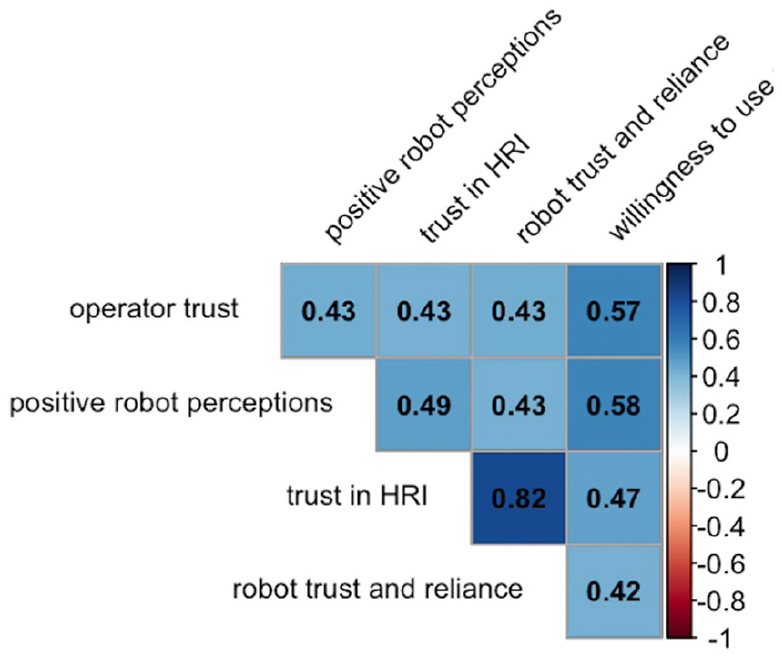

While prior research demonstrated the importance of trust in human-autonomy teaming, the present study extended these findings to human-robot relationships. Indeed, the correlation matrix (Figure 4) confirmed that positive robot perceptions, trust in HRI, and robot trust and reliance scores also shared positive correlations with cadets’ willingness to use the robot in the future.

Figure 4. Correlation matrix visualizing associations of operator trust with positive perceptions of the robot, trust in and reliance on the robot, and willingness to use the robot for future exercises and operations (abbreviated as “willingness to use” above).

Cadets who reported high operator trust tended also to perceive Spot as a trustworthy, reliable, and useful teammate. This novel finding holds practical significance for implementing semi-autonomous technology in both military teams and other collaborative settings. Focusing on establishing trust in the operator—rather than just trust in the robot—may prove worthwhile for encouraging teams to be fully open to using robotic technology and trusting in its capabilities, thereby maximizing its utility.

Discussion

Technology holds great promise to make life safer, more user-friendly, and more efficient in a variety of contexts. Yet modern autonomous systems are, in practice, semi-autonomous at best. This study highlights the significant role of operator trust in how humans perceive, trust, and use their technological teammates. Meaningful, positive correlations were evident between operator trust and measures of trust in HRI, positive robot perceptions, robot trust and reliance, and willingness to use the robot in the future.

These measures, as indicated by previous literature, are critical to overall team success and are associated with the extent to which the full potential of novel semi-autonomous technologies can be realized in team environments (O’Neill et al., 2022). Yet with the rapid advancement of new technology, overcoming users’ skepticism of autonomous agents is an increasingly difficult challenge. Improving operator trust, thereby shifting the focus to human-human interaction, may represent a more effective avenue for bolstering confidence in robotic systems and achieving overall positive outcomes.

Limitations

Limitations of the present research include the cadets’ lack of operational military experience and training with robotics, varying levels of prior exposure to Spot, and inconsistencies in the amount of time Spot was utilized during the exercises (which varied in length).

Additionally, this study did not consider performance measures for Spot during the training exercises. Performance of the robot is known to affect trust (van den Brule et al., 2014), which suggests that Spot’s performance could have directly influenced reported trust in Spot and, by extension, trust in the operator. Furthermore, performance measures for cadets were not possible in the current study because the training exercises were largely controlled by the instructors, who facilitated the cadets’ scaffolded learning throughout.

Future Research

The larger study encompassing this research involved collecting interview data in which leaders, mentors, and cadets were asked to describe how they utilized Spot, rate how well they used it as a resource, brainstorm new ways to use Spot in the exercise, explain perceived limitations of Spot for their specific task, and assess Spot’s ability to assist them in accomplishing their overall mission. Future research could include qualitative analysis of this material in relation to operator trust.

Additional studies are needed to better differentiate the function of operator trust in various offensive military operations (e.g., ambush, feint, demonstration, counterattack, raid) as well as in defense, stability, and support combat scenarios.

Other future directions related to operator trust in human-robot teams could include:

• Investigating factors that influence operator trust, which may include certain demographics or characteristics of the operator and the teammates’ prior experience interacting with the operator.

• Exploring how other variables like task domain and priming (with the robot and/or with the operator) relate to operator trust.

• Understanding the role of operator trust outside of military contexts (e.g., industrial or civilian applications) that involve group collaboration with a robot teammate.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported in part by the ONR award N00014-22-1-2813.