Abstract

Detecting small objects reliably is particularly difficult in modern neural architectures, where scale imbalance, background clutter, and high object density frequently degrade feature quality and prediction accuracy. To address these challenges, we propose YOLO-Super Resolution and Attention (YOLO-SRA), a multi-scale neural architecture enhanced with attention and super-resolution. The architecture introduces High-Resolution Feature Enhancement (HRFE) to better represent small objects without incurring high computational cost, a Grouped Multi-Scale Split Attention (GMSA) mechanism to efficiently extract features from densely distributed objects, and a Weighted Fine-Grained Cross-Scale Fusion (WFCF) network for adaptive multi-scale feature integration with Unmanned Aerial Vehicle (UAV)-specific adjustments. A Spatial-Attentive Non-Maximum Suppression (SA-NMS) strategy is further employed to reduce missed detections in overlapping regions. Extensive experiments on the VisDrone dataset demonstrate that YOLO-SRA outperforms the baseline YOLOv11, achieving 12.5% and 11.3% increases in mAP50 and mAP

Keywords

Introduction

Accurate detection of small objects in complex scenes remains a fundamental challenge in machine learning, owing to large scale variations, dense spatial distributions, and environmental factors such as occlusion and illumination changes.1–3 Small and densely packed objects pose particular difficulties for conventional detectors, 4 as minor feature degradation or misalignment can lead to missed detections. 5 Over the past decade, deep learning has provided a transformative pathway to address such challenges. With the success of convolutional neural networks (CNNs),6,7 deep learning-based object detectors now surpass traditional approaches in both accuracy and speed.8–10 Current mainstream detectors can be broadly categorised into two-stage and one-stage methods. Two-stage models, represented by Faster R-CNN 11 and Mask R-CNN, 12 first generate region proposals and then refine them through classification and bounding box regression. One-stage models, exemplified by the YOLO series13–25 and SSD, 26 predict object categories and locations directly on grids or anchor boxes through a single forward pass, achieving higher efficiency. Given the stringent requirements of real-time applications, one-stage detectors are generally favoured for unmanned aerial vehicle (UAV) aerial imaging, which has been widely applied in agriculture, forestry, emergency rescue, and environmental management.27–32

Despite these advances, existing methods are not yet fully adapted to UAV aerial data. UAV aerial images differ substantially from natural images, as illustrated in Figure 1: targets are often small-to-medium in scale, densely distributed, and embedded in complex backgrounds. 33 Environmental factors such as vegetation occlusion, shadows, seasonal variation, and illumination changes further complicate feature extraction. In contrast, standard benchmarks such as PASCAL VOC 34 and MS COCO 35 predominantly contain objects with clearer contours and simpler scene composition, and therefore do not fully reflect the density, scale variation, occlusion, and distant small targets commonly observed in practical UAV applications. Consequently, models trained on these datasets often struggle to learn robust representations, resulting in missed detections of dense small objects and tiny distant targets and, ultimately, suboptimal recognition performance.

Figure 1. Examples of manually collected images and UAV aerial images.

Low-resolution imagery further exacerbates these challenges, 36 as blurred object boundaries degrade detection accuracy and hinder the extraction of discriminative features for small objects. Super-resolution techniques37–40 have been shown to reconstruct high-frequency details and enhance fine-grained object representation. However, existing approaches often rely on computationally expensive preprocessing or fail to integrate efficiently with detection networks, limiting real-time applicability in UAV scenarios. Similarly, attention mechanisms41–44 can selectively focus on salient spatial and channel-wise features, suppress irrelevant background, and strengthen responses to densely distributed objects. Conventional attention modules, however, may introduce redundancy or overlook cross-scale dependencies, reducing their effectiveness for small and densely packed objects in complex aerial scenes.

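To overcome these problems, we propose YOLO-SRA, a multi-scale detection architecture for UAV aerial imagery that is enhanced with attention and super-resolution.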

The main contributions of this paper are summarised as follows: (i) a High-Resolution Feature Enhancement (HRFE) method that integrates super-resolved details with original features via attention, enhancing small-object representation while maintaining computational efficiency; (ii) a Grouped Multi-Scale Split Attention (GMSA) mechanism that applies selective attention across grouped feature maps, improving extraction efficiency and detection of densely distributed small objects; (iii) a Weighted Fine-Grained Cross-Scale Fusion (WFCF) network that achieves adaptive fusion with UAV-specific detection head adjustments; and (iv) a Spatial-Attentive NMS (SA-NMS) strategy that alleviates suppression among overlapping objects by replacing hard deletion with smooth confidence decay.

The article is organised as follows. Section 2 provides related work on YOLO and its algorithmic variants. Section 3 describes the proposed method. Section 4 presents numerical results through examples. Finally, Section 5 concludes the work and suggests potential future developments.

Advances in YOLO-based methods for unmanned aerial vehicles

YOLO 18 reformulates object detection as a single-stage regression problem, directly predicting bounding boxes and class probabilities on image grids, achieving a balance between accuracy and speed that enables wide adoption in real-time applications. 46 Since the introduction of the first YOLO model, successive versions have continuously advanced detection performance. To mitigate gradient vanishing, YOLOv9 25 introduces Programmable Gradient Information (PGI). In contrast, YOLO-World enhances YOLO with open-vocabulary detection capabilities via vision–language modelling and pre-training on large-scale datasets. YOLOv11 15 emphasises edge deployment by incorporating the C3K2 module for parameter reduction. Regarding feature fusion and optimisation, YOLO-ACR 47 introduces adaptive channel–spatial feature fusion and augments the loss with dynamic scaling and coordinate distribution modelling to improve localisation and detection accuracy on natural-image benchmarks. YOLOv13 16 employs a Hypergraph-based Adaptive Correlation Enhancement (HyperACE) mechanism for global cross-location and cross-scale feature fusion. In contrast to YOLO-ACR, which focuses on fusion and loss optimisation for general natural-image detection, YOLO-SRA targets UAV aerial imagery and introduces HRFE/GMSA/WFCF/SA-NMS to better handle dense small targets and complex backgrounds.

These innovations not only drive continuous performance improvements on standard benchmark datasets, but also highlight key optimisation directions—such as parameter reduction and enhanced feature fusion—that are particularly relevant for addressing the challenges of UAV aerial image detection. Such advances provide a useful foundation for adapting and deploying YOLO-based models in UAV scenarios.

With the increasing application of UAVs across various fields,48–51 researchers have proposed UAV-oriented adaptations of YOLO to address domain-specific challenges. For instance, GGT-YOLO 1 integrates transformers52,53 to enhance feature extraction. To mitigate small-object information loss, CF-YOLO 2 and Drone-YOLO 3 refine multi-scale feature extraction and fusion through neck network optimisation. YOLO-DKR 54 explores automated design by applying Differentiable Architecture Search (DARTS) 55 with kernel reuse technology, overcoming constraints of manually crafted architectures. Other work improves performance through the loss function: PARE-YOLO 56 uses Exponential Moving Average (EMA) to balance positive and negative sample weights, while HFC-YOLO11 57 integrates boundary alignment penalties and scale-adaptive weight mechanisms into the Complete Intersection over Union (CIoU) geometric constraint framework. Despite these advances, detecting small, densely distributed UAV objects remains challenging. We therefore adopt YOLOv11 as the baseline for our UAV-specific improvements, owing to its strong generalisation and real-time efficiency.

Applications of super-resolution algorithms in object detection

Super-resolution algorithms aim to reconstruct high-resolution images from low-resolution counterparts. Early methods were primarily based on interpolation 40 and reconstruction, 38 while deep learning 58 has since established neural network-based models as the dominant approach. Low resolution often degrades accuracy due to blurred object boundaries, and super-resolution methods can enhance detection by recovering finer details. Two main strategies exist. The first is data preprocessing, 59 where super-resolution networks are applied prior to detection. DCASR 37 and YOLO-MST 39 employ this two-step pipeline by enhancing image quality before passing the data into the detection model. The second is tight integration of super-resolution and detection networks. SRNet-YOLO 60 introduces a feature map resolution reconstruction module that jointly learns super-resolved features for detection, reconstructing P5 feature maps to the size of P3 to recover fine-grained details.

Motivated by these findings, we design the High-Resolution Feature Enhancement (HRFE) module for UAV detection. Instead of directly substituting inputs with super-resolved images (an approach that incurs high computational cost), HRFE extracts high-resolution features and fuses them with base image features via an attention mechanism. This design enhances image detail representation while maintaining efficiency, improving detection performance on UAV aerial images.

Attention mechanism

Attention mechanisms selectively emphasise informative features to improve representation learning. 61 Channel attention models inter-channel dependencies to strengthen discriminative feature channels,42,43 whereas spatial attention highlights object-relevant regions and suppresses background interference.41,44 Self-attention (e.g., scaled dot-product attention) captures long-range dependencies by modelling global correlations among features. 52

Integrating attention mechanisms into YOLO has demonstrated notable improvements in real-time detection tasks. 62 For example, SCCA-YOLO 63 sequentially combines spatial attention with shared semantic and channel self-attention to improve YOLOv8 22 accuracy in autonomous driving applications. YOLO-FaceV2 64 employs a separated enhancement attention module to handle occluded faces, focusing on affected regions within YOLOv5. Inspired by these approaches, we propose Grouped Multi-Scale Split Attention (GMSA), which enhances responses to dense small objects and strengthens feature extraction through selective attention.

NMS algorithm

Non-maximum suppression (NMS) 45 is a standard technique in object detection for retaining high-confidence bounding boxes while suppressing redundant ones. Traditional NMS applies hard deletion based on a fixed intersection-over-union (IoU) threshold, which can lead to missed detections in dense small-object scenarios due to mutual suppression. Variants such as DIoU-NMS 65 and CIoU-NMS 66 refine overlap calculation, and Adaptive-NMS 67 dynamically adjusts the IoU threshold according to candidate box density. Soft-NMS 68 replaces hard deletion with smooth confidence decay, and Softer-NMS 69 further incorporates regression uncertainty, yet neither explicitly accounts for spatial relationships between objects. To overcome these limitations, we propose Spatial-Attentive NMS (SA-NMS), which calculates spatial attention weights based on the distance between candidate box centers, quantifying spatial correlations. Combined with smooth confidence attenuation, SA-NMS fully abandons hard deletion and more accurately mitigates mutual suppression among densely packed objects.
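For reference, the two suppression rules contrasted above can be written as follows. Here $\mathcal{M}$ denotes the currently selected highest-scoring box, $b_i$ a remaining candidate with score $s_i$, $N_t$ the IoU threshold, and $\sigma$ the decay bandwidth. These are the standard forms from the NMS and Soft-NMS literature; the symbols are chosen for illustration and are not necessarily the notation used in the method section of this paper.

```latex
% Hard NMS: a candidate is deleted once its overlap reaches the threshold
s_i \leftarrow
\begin{cases}
  s_i, & \mathrm{IoU}(\mathcal{M}, b_i) < N_t \\
  0,   & \mathrm{IoU}(\mathcal{M}, b_i) \ge N_t
\end{cases}

% Gaussian Soft-NMS: the score decays smoothly with the overlap
s_i \leftarrow s_i \exp\!\left(-\frac{\mathrm{IoU}(\mathcal{M}, b_i)^{2}}{\sigma}\right)
```

Neither rule contains a term that depends on the relative position of the two boxes beyond their overlap, which is the gap SA-NMS is intended to address.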

Proposed method

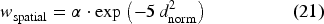

The overall architecture of the proposed YOLO-SRA is illustrated in Figure 2. The network follows the standard YOLOv11 design, consisting of three main components: the backbone, neck, and head.

Figure 2. Overall architecture of the YOLO-SRA model. The blue dashed boxes highlight the newly introduced detection heads and enhanced feature fusion pathways in comparison to the YOLOv11 baseline.

Let the input image be

To enhance tiny object detection, the High-Resolution Feature Enhancement (HRFE) module enriches the input image representation by injecting high-resolution details. Additionally, the Grouped Multi-Scale Split Attention (GMSA) mechanism is integrated into the backbone to improve feature extraction for densely distributed small objects.

Although YOLOv11 15 performs well on natural-image benchmarks, its default design is less effective for UAV aerial object detection, primarily due to the pronounced domain shift between natural images and UAV aerial imagery and the prevalence of small, densely distributed targets in aerial scenes. Specifically, YOLOv11 is largely optimised for natural-image characteristics, where objects are typically larger, less crowded, and exhibit clearer contours; in contrast, UAV aerial images often contain numerous tiny instances embedded in complex backgrounds, which requires stronger fine-grained feature preservation and more effective cross-scale fusion. To address this, we propose the Weighted Fine-Grained Cross-Scale Fusion (WFCF) network, as illustrated in Figure 2.

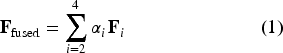

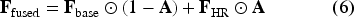

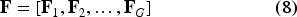

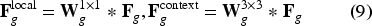

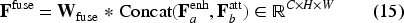

Existing multi-scale fusion designs can be suboptimal in UAV aerial imagery for two reasons: (i) detection heads designed for large objects may be under-utilised in UAV scenes dominated by small targets, and (ii) uniform fusion schemes may not sufficiently emphasise fine-grained cues needed for densely distributed small objects. To address these issues, we introduce three design choices in WFCF: adaptive detection-head configuration, learnable fusion weights, and PixelShuffle-based upsampling. Together, these choices prioritise small/medium objects and improve feature alignment in dense UAV scenarios. To realise these design choices, WFCF simplifies the backbone by pruning redundant layers while preserving core feature extraction capacity, reducing model size and computation. To improve sensitivity to tiny objects, we add a P2 detection head, and remove the original P5 detection head (primarily intended for large objects), thereby reallocating capacity towards small and medium scales. In the neck, conventional upsampling is replaced with PixelShuffle, which rearranges channel information into spatial resolution to better preserve fine details and reduce feature blurring. For the learnable weighted fusion mechanism, let

Cross-layer connections ensure accurate alignment between feature maps of different resolutions, improving the discriminability of dense small objects. These modifications allow the network to focus computational resources effectively on UAV-specific challenges, significantly improving detection accuracy in aerial scenarios.
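Two of the WFCF design choices, PixelShuffle-based upsampling and learnable weighted fusion, can be illustrated with a minimal pure-Python sketch. The channel-to-space layout follows the usual PixelShuffle definition. The fusion rule is an assumption: it uses non-negative weights normalised to sum to one, in the style of the fast normalised fusion popularised by BiFPN, because the exact WFCF weighting formula is not reproduced in this text. The function names `pixel_shuffle` and `weighted_fusion` and the parameter `eps` are hypothetical.

```python
def pixel_shuffle(x, r):
    """Rearrange a feature map of shape (C*r*r, H, W) into (C, H*r, W*r).

    x is a nested list indexed as x[channel][row][col]. The layout is
    out[c][h*r + i][w*r + j] = x[c*r*r + i*r + j][h][w].
    """
    c_in, h, w = len(x), len(x[0]), len(x[0][0])
    if c_in % (r * r) != 0:
        raise ValueError("channel count must be divisible by r*r")
    c_out = c_in // (r * r)
    out = [[[0.0] * (w * r) for _ in range(h * r)] for _ in range(c_out)]
    for c in range(c_out):
        for i in range(r):
            for j in range(r):
                src = x[c * r * r + i * r + j]
                for row in range(h):
                    for col in range(w):
                        out[c][row * r + i][col * r + j] = src[row][col]
    return out


def weighted_fusion(features, raw_weights, eps=1e-4):
    """Fuse same-length feature vectors with normalised non-negative weights.

    Assumed rule (not taken from the paper): clamp each learnable weight at
    zero, then divide by the sum of the weights plus a small eps.
    """
    weights = [max(0.0, v) for v in raw_weights]
    total = sum(weights) + eps
    length = len(features[0])
    return [
        sum(weights[k] * features[k][i] for k in range(len(features))) / total
        for i in range(length)
    ]


# Four 1x1 channels become one 2x2 channel.
upsampled = pixel_shuffle([[[1.0]], [[2.0]], [[3.0]], [[4.0]]], r=2)
# Two inputs with equal weights are averaged.
fused = weighted_fusion([[1.0, 2.0], [3.0, 4.0]], [1.0, 1.0], eps=0.0)
```

Because PixelShuffle only moves existing values from the channel axis to the spatial axes, no value is interpolated, which is consistent with the stated aim of reducing feature blurring.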

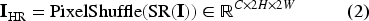

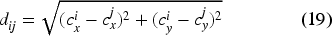

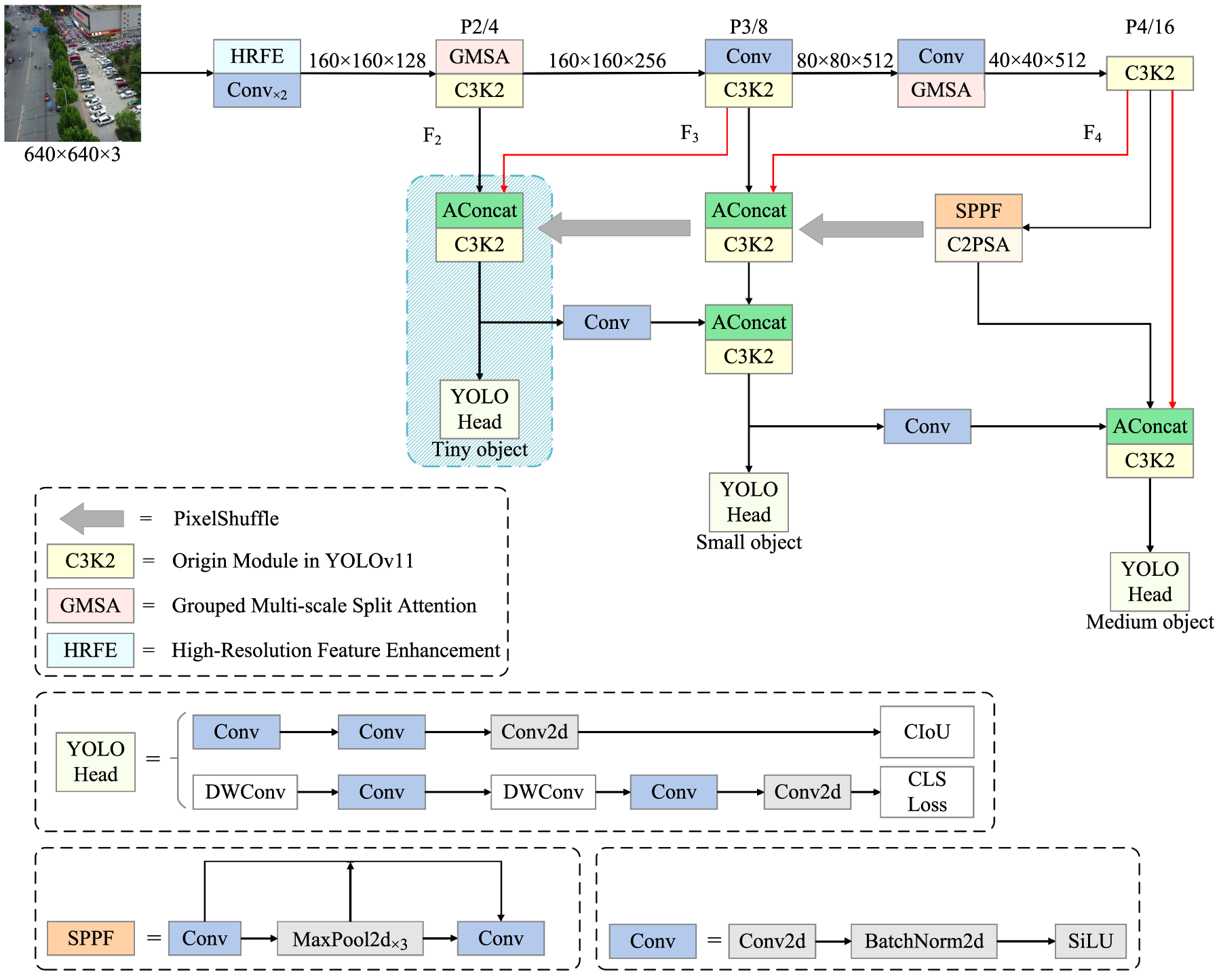

Traditional super-resolution-based detection methods typically upsample the entire input image to improve detection accuracy. Although such enlargement can enhance small-object visibility, it substantially increases computational cost and often compromises real-time performance, limiting practical deployment. The proposed HRFE module addresses this trade-off through a lightweight dual-path design that fuses high-resolution feature information with base features while preserving the original input resolution. Sigmoid-based attention maps are used to adaptively weight detail-enhanced and original features according to target scale and scene complexity, and residual enhancement injects the fused information back into the input representation. This design enables effective detail enhancement without the computational burden associated with full-image super-resolution pipelines. The structure of HRFE is illustrated in Figure 3.

Figure 3. Structure of the HRFE module. The super-resolution (SR) path extracts high-resolution features and upsamples them via PixelShuffle before fusion with the original feature path.

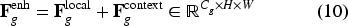

Let the input image be

The two feature maps are concatenated along the channel dimension:

A Sigmoid-activated attention map

Finally, a residual connection adds the fused features back to the original input:

This process improves the recognition of small and blurred objects in UAV images without significantly increasing computational cost, balancing enhancement and efficiency.
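The gating and residual steps described above can be sketched as follows. This is only an illustration under stated assumptions, not the authors' formulation: the attention logits are passed in directly (in the module they would be produced from the concatenated features), and the attention map is assumed to blend the two paths as a convex combination before the residual addition. The names `hrfe_fuse`, `base`, `detail`, and `gate_logits` are hypothetical.

```python
import math


def sigmoid(v):
    """Logistic function mapping a real value to (0, 1)."""
    return 1.0 / (1.0 + math.exp(-v))


def hrfe_fuse(x, base, detail, gate_logits):
    """Blend detail and base features with a sigmoid gate, then add a residual.

    All arguments are equal-length lists of floats (flattened features).
    Assumed rule: fused = a * detail + (1 - a) * base, output = x + fused,
    where a = sigmoid(gate_logit) is the per-element attention value.
    """
    out = []
    for x_i, base_i, detail_i, logit_i in zip(x, base, detail, gate_logits):
        a = sigmoid(logit_i)
        fused = a * detail_i + (1.0 - a) * base_i
        out.append(x_i + fused)  # residual connection back to the input
    return out


# A zero logit gives equal weight to both paths.
result = hrfe_fuse(x=[1.0], base=[2.0], detail=[4.0], gate_logits=[0.0])
```

With this form, a large positive logit lets the super-resolved detail dominate, while a large negative logit falls back to the original features, so the enhancement can vary with local content.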

Although channel and spatial attention mechanisms are well established and have been incorporated into YOLO-style detectors, many existing attention designs are not tailored to UAV aerial small-object detection, where targets are tiny, densely distributed, and embedded in complex backgrounds. In particular, attention is often applied at a single feature level or without explicitly exploiting multi-level cues, which can limit sensitivity to dense small objects.

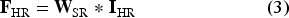

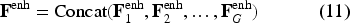

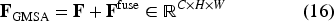

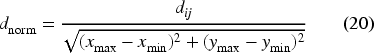

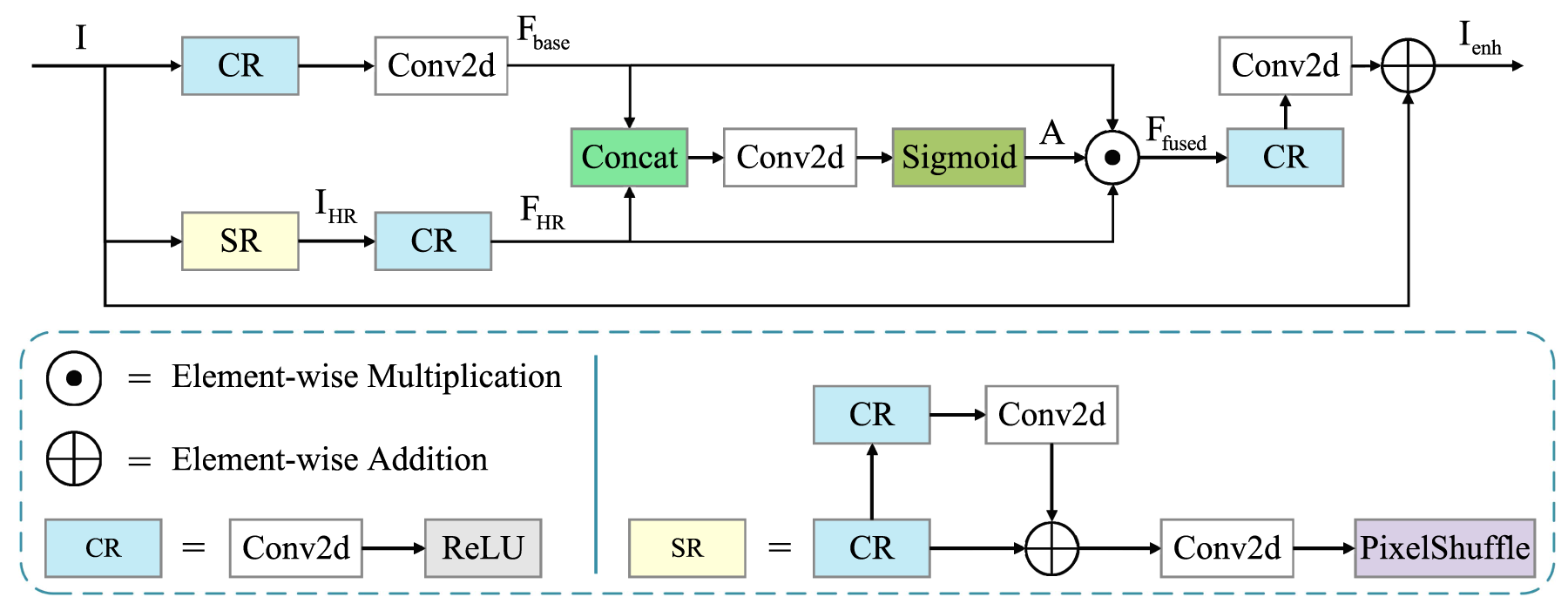

To address these challenges, we propose the Grouped Multi-Scale Split Attention (GMSA) module with UAV-oriented design choices. GMSA groups input channels and processes them in parallel to control computational overhead while maintaining feature extraction capacity. Each group is further processed by dual parallel branches, and multi-scale feature aggregation is employed to enhance sensitivity to dense small targets. Moreover, channel attention is applied to key feature partitions rather than the full feature map, helping suppress background interference while strengthening responses on small-object regions. The structure is illustrated in Figure 4.

Figure 4. Structure of the GMSA module. GMSA employs a grouped parallel strategy and an attention mechanism to enhance the model’s feature extraction capability.

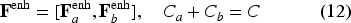

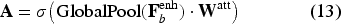

Given input feature

Each group

Finally, all group-level features are concatenated:

To suppress background noise and enhance small object features, channel-wise attention is applied to the second partition

Subsequently, the unweighted

This grouped multi-scale split attention mechanism enhances small object representation by combining local detail extraction with global contextual suppression, improving detection performance in dense UAV aerial images while maintaining computational efficiency.
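The data flow of GMSA (group, process, concatenate, split, attend to one partition, re-join) can be outlined with a minimal pure-Python sketch. Several simplifications are assumptions rather than details from the paper: the dual-branch multi-scale processing is replaced by a pluggable `branch` function that defaults to the identity, the split is taken to be into two equal halves, and the channel attention uses only global average pooling followed by a sigmoid, with no learned layers. All function names are hypothetical.

```python
import math


def split_groups(channels, groups):
    """Split a list of channels into equally sized consecutive groups."""
    if len(channels) % groups != 0:
        raise ValueError("channel count must be divisible by the group count")
    size = len(channels) // groups
    return [channels[i * size:(i + 1) * size] for i in range(groups)]


def channel_attention(channels):
    """Scale each channel by the sigmoid of its global average value."""
    weighted = []
    for channel in channels:
        pooled = sum(channel) / len(channel)       # global average pooling
        weight = 1.0 / (1.0 + math.exp(-pooled))   # sigmoid gate
        weighted.append([weight * v for v in channel])
    return weighted


def gmsa_sketch(channels, groups=2, branch=lambda group: group):
    """Grouped processing followed by attention on the second partition.

    channels: list of channels, each a flat list of floats.
    branch: stand-in for the per-group dual-branch multi-scale processing.
    """
    processed = []
    for group in split_groups(channels, groups):
        processed.extend(branch(group))            # concatenate group outputs
    half = len(processed) // 2
    first, second = processed[:half], processed[half:]
    # The first partition passes through unweighted; the second is attended.
    return first + channel_attention(second)


features = [[1.0, 1.0], [2.0, -2.0]]
output = gmsa_sketch(features, groups=1)
```

Applying attention to only one partition roughly halves the attention cost relative to weighting the full feature map, which matches the efficiency motivation given for the module.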

Traditional NMS 45 employs a hard suppression mechanism with a fixed threshold. Let the set of candidate boxes be

Due to the globally uniform threshold, standard NMS tends to over-suppress in dense object scenarios, erroneously removing valid predictions.
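The over-suppression effect can be reproduced with a minimal greedy hard-NMS sketch. Boxes are assumed to be `(x1, y1, x2, y2)` tuples; the function names and the example boxes are illustrative and not taken from the paper.

```python
def iou(a, b):
    """Intersection over union of two boxes given as (x1, y1, x2, y2)."""
    inter_w = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    inter_h = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = inter_w * inter_h
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def hard_nms(boxes, scores, iou_thr=0.5):
    """Greedy NMS: keep the best box, delete every box overlapping it too much."""
    order = sorted(range(len(boxes)), key=lambda i: scores[i], reverse=True)
    keep = []
    while order:
        best = order.pop(0)
        keep.append(best)
        # Hard deletion: anything at or above the threshold is removed outright.
        order = [i for i in order if iou(boxes[best], boxes[i]) < iou_thr]
    return keep


# Two adjacent objects that overlap heavily, plus one isolated object.
boxes = [(0, 0, 10, 10), (1, 0, 11, 10), (20, 20, 30, 30)]
scores = [0.9, 0.8, 0.7]
kept = hard_nms(boxes, scores)
```

In this example the second box is discarded even though its score is high, because its IoU with the first box (90/110) exceeds the fixed threshold. If both boxes correspond to distinct neighbouring objects, one valid detection is lost.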

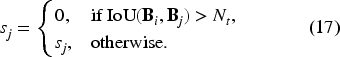

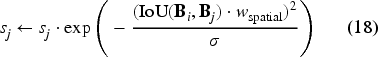

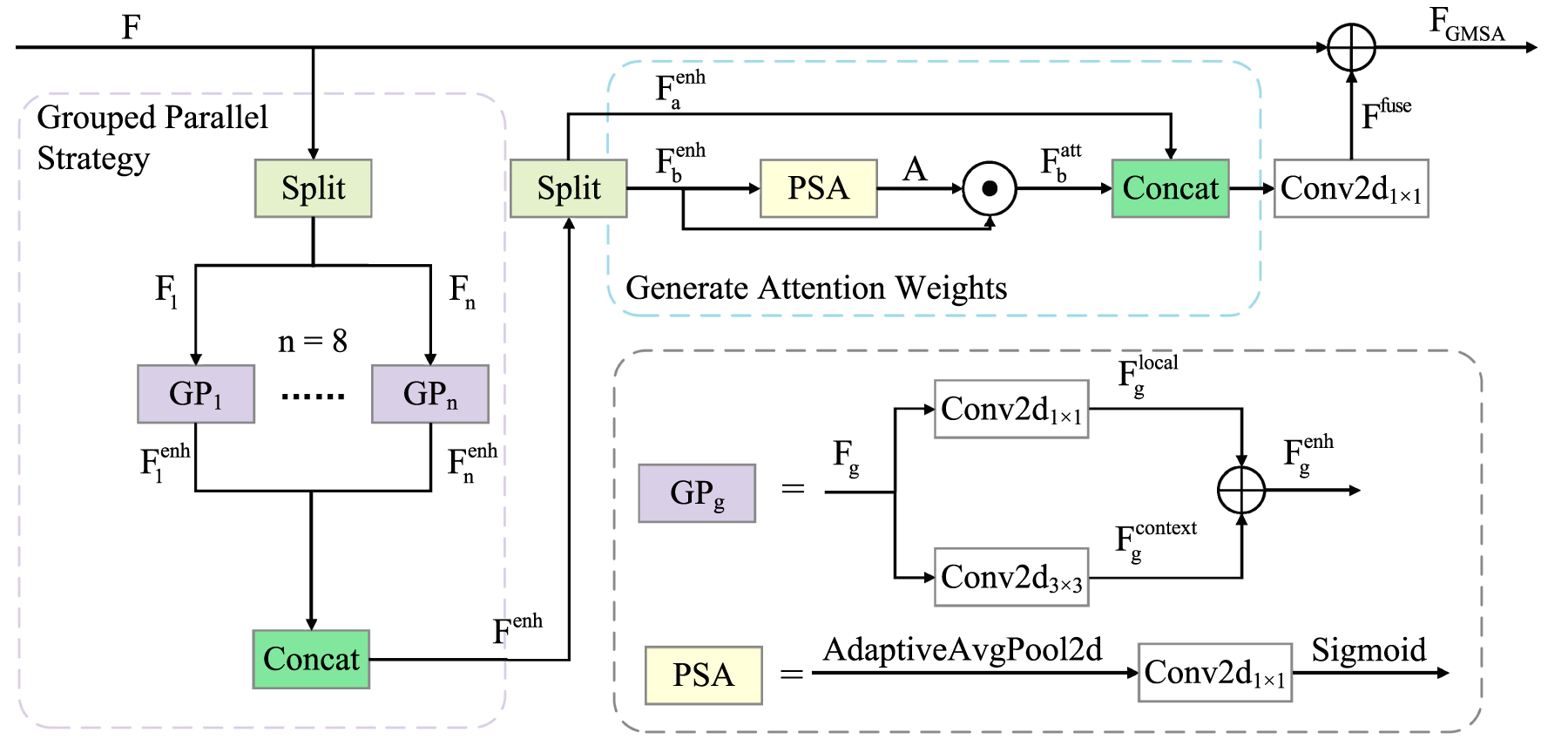

To address the limitations of existing NMS strategies in dense UAV scenarios, we further propose Spatial-Attentive NMS (SA-NMS). Unlike conventional NMS and soft-NMS variants that rely primarily on IoU-based suppression, SA-NMS incorporates a distance-normalised spatial attention weight

The spatial attention weight

Finally, the spatial attention weight is defined as Equation 21:

This design allows suppression to adapt dynamically to spatial distance, providing strong suppression for nearby boxes and weak suppression for distant ones, thereby balancing precision and recall in dense and edge object scenarios.
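Because the defining equations are not reproduced in this text, the following is only an illustrative sketch of the idea and not the authors' formulation. It assumes that the spatial weight is a Gaussian of the centre distance normalised by the diagonal of the smallest box enclosing both candidates, and that this weight scales the exponent of a Gaussian IoU-based score decay. The names `spatial_weight` and `sa_nms_sketch` and the parameters `tau`, `sigma`, and `score_thr` are hypothetical.

```python
import math


def iou(a, b):
    """Intersection over union of two boxes given as (x1, y1, x2, y2)."""
    inter_w = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    inter_h = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = inter_w * inter_h
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def spatial_weight(a, b, tau=0.5):
    """Assumed weight in (0, 1]: close to 1 for nearby centres, smaller when far."""
    dx = (a[0] + a[2]) / 2 - (b[0] + b[2]) / 2
    dy = (a[1] + a[3]) / 2 - (b[1] + b[3]) / 2
    # Normalise by the squared diagonal of the smallest enclosing box.
    diag_sq = (max(a[2], b[2]) - min(a[0], b[0])) ** 2 \
        + (max(a[3], b[3]) - min(a[1], b[1])) ** 2
    dist_sq = (dx * dx + dy * dy) / diag_sq if diag_sq > 0 else 0.0
    return math.exp(-dist_sq / (2 * tau * tau))


def sa_nms_sketch(boxes, scores, sigma=0.5, tau=0.5, score_thr=0.05):
    """Soft suppression whose strength is modulated by centre distance."""
    scores = list(scores)
    remaining = list(range(len(boxes)))
    ranked = []
    while remaining:
        best = max(remaining, key=lambda i: scores[i])
        remaining.remove(best)
        ranked.append(best)
        for i in remaining:
            weight = spatial_weight(boxes[best], boxes[i], tau)
            overlap = iou(boxes[best], boxes[i])
            # Smooth decay instead of deletion; weaker for distant centres.
            scores[i] *= math.exp(-weight * overlap * overlap / sigma)
    kept = [i for i in ranked if scores[i] >= score_thr]
    return kept, scores


boxes = [(0, 0, 10, 10), (1, 0, 11, 10), (20, 20, 30, 30)]
kept, new_scores = sa_nms_sketch(boxes, [0.9, 0.8, 0.7])
```

In this sketch the heavily overlapping second box has its confidence reduced rather than removed, so it can still be reported if its decayed score stays above the final score threshold, while the isolated third box is left unchanged.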

To evaluate the effectiveness of the proposed YOLO-SRA for UAV aerial image detection, systematic experiments were conducted on the VisDrone dataset, 33 assessing multiple aspects including detection precision and recall. Results demonstrate that YOLO-SRA significantly improves detection accuracy compared with mainstream methods, highlighting the adaptability of the proposed modules to UAV-specific challenges.

Dataset

The VisDrone2019 dataset, 33 developed by the AISKYEYE team at Tianjin University, consists of 288 video clips (261,908 frames) and 10,209 static images captured by multiple UAV platforms. It covers diverse scenes, object densities, weather conditions, and lighting environments. The dataset includes 10 object categories, with 6,471 images for training, 548 for validation, and 3,190 for testing, of which 1,610 test images are annotated.

Evaluation metrics

We evaluate detection performance using Precision (P), Recall (R), mAP50, and mAP

Experimental setup

The model is implemented in PyTorch and trained on an NVIDIA GeForce RTX 4090 GPU. Input images are resized to

Experimental results

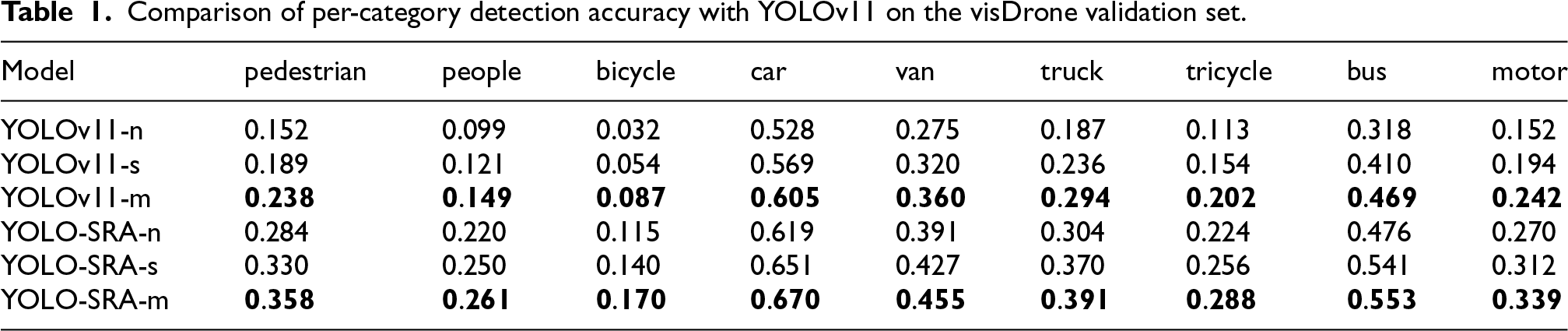

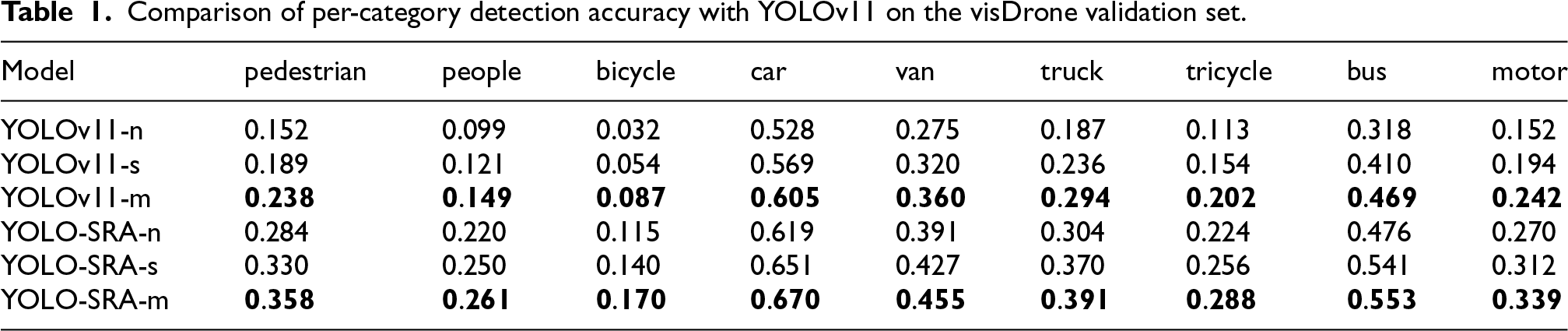

Our model is built on the YOLOv11 architecture. We therefore conducted a systematic comparison with YOLOv11 on the VisDrone validation set, covering both per-category detection accuracy and the different model variants. Detailed results are provided in Tables 1 and 2.

Table 1. Comparison of per-category detection accuracy with YOLOv11 on the VisDrone validation set.

Table 2. Comparison with YOLOv11 on the VisDrone validation set.

The experimental results indicate that the YOLO-SRA series consistently improves performance while reducing model parameters. Across the n, s, and m sizes, YOLO-SRA outperforms YOLOv11 with fewer parameters; for example, YOLO-SRA-s has only 36.2% of the parameters of YOLOv11-s. Although the computational load increases slightly, precision, recall, mAP50, and mAP

Overall, YOLO-SRA achieves an approximate 10% improvement in mAP50, with YOLO-SRA-s demonstrating an 11.3% increase in mAP

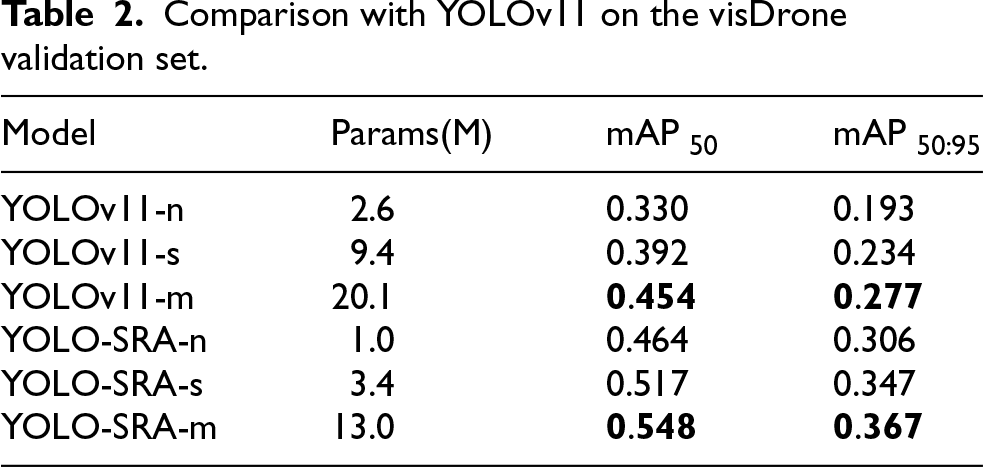

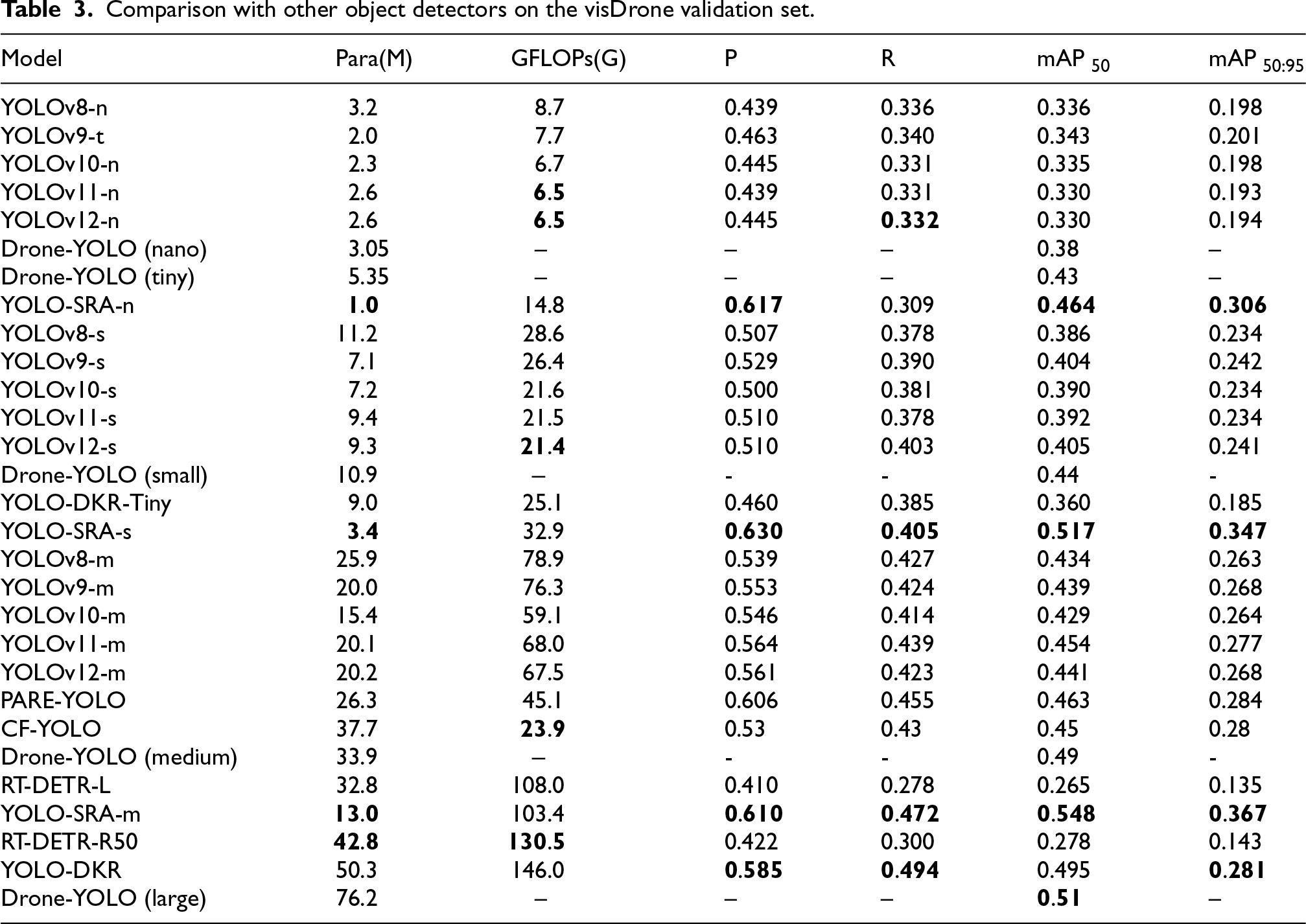

Comparisons with a diverse set of object detectors in Table 3 further demonstrate the competitiveness of YOLO-SRA. A key strength of YOLO-SRA is its compact parameter footprint: across comparable model scales, it uses fewer parameters than most competing methods. While YOLO-SRA incurs a modest increase in GFLOPs, this reflects a deliberate trade-off to improve feature representation for small and densely distributed targets. We report GFLOPs as a proxy for computational cost (and, by extension, potential energy demand on UAV platforms), noting that actual energy consumption depends on hardware and deployment conditions. Overall, YOLO-SRA achieves strong performance on the main evaluation metrics (e.g., mAP50, mAP

Table 3. Comparison with other object detectors on the VisDrone validation set.

In particular, YOLO-SRA attains competitive accuracy relative to larger baselines such as Drone-YOLO (large) and YOLO-DKR, despite using substantially fewer parameters. Moreover, several recent YOLO-style variants (e.g., PARE-YOLO and CF-YOLO) do not achieve higher accuracy despite having larger parameter counts, and the RT-DETR series shows lower detection performance under the considered setting while requiring higher computational cost. These results indicate that YOLO-SRA offers a favourable balance between model compactness and detection performance, supporting its suitability for UAV-based object detection.

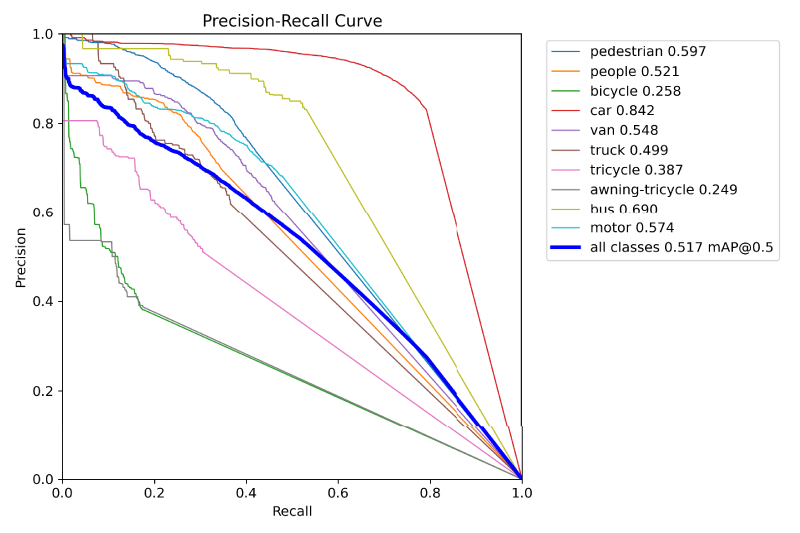

To provide a detailed assessment of the model’s performance in detecting small objects, we present per-category precision-recall curves for YOLO-SRA-s on the VisDrone validation set in Figure 5. The results are computed using an IoU threshold of 0.5, and the corresponding mAP50 values are determined by calculating the area under each curve, offering a clear visualisation of detection accuracy across different object classes.
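The area-under-curve computation can be sketched as follows for a single class. The sketch assumes all-point interpolation with a monotone precision envelope; evaluation toolkits differ in detail (for example, some sample the curve at a fixed number of recall points), so the exact values reported in the paper may be computed slightly differently. The function name `average_precision` and its arguments are hypothetical.

```python
def average_precision(is_tp, num_gt):
    """Area under the precision-recall curve for one class.

    is_tp: list of booleans, one per detection, sorted by descending
           confidence; True if the detection matches an unmatched
           ground-truth box at IoU >= 0.5.
    num_gt: number of ground-truth boxes of this class.
    """
    if num_gt == 0:
        return 0.0
    tp = fp = 0
    recalls, precisions = [], []
    for hit in is_tp:
        if hit:
            tp += 1
        else:
            fp += 1
        recalls.append(tp / num_gt)
        precisions.append(tp / (tp + fp))
    # Replace each precision by the maximum precision at any higher recall.
    for i in range(len(precisions) - 2, -1, -1):
        precisions[i] = max(precisions[i], precisions[i + 1])
    area, previous_recall = 0.0, 0.0
    for recall, precision in zip(recalls, precisions):
        area += (recall - previous_recall) * precision
        previous_recall = recall
    return area


def mean_average_precision(per_class_ap):
    """mAP is the unweighted mean of the per-class AP values."""
    return sum(per_class_ap) / len(per_class_ap)


ap = average_precision([True, False, True], num_gt=2)
```

Averaging the per-class areas obtained at an IoU threshold of 0.5 gives mAP50.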

Figure 5. Precision-recall curves of YOLO-SRA-s on the VisDrone validation set.

Validation of module effectiveness

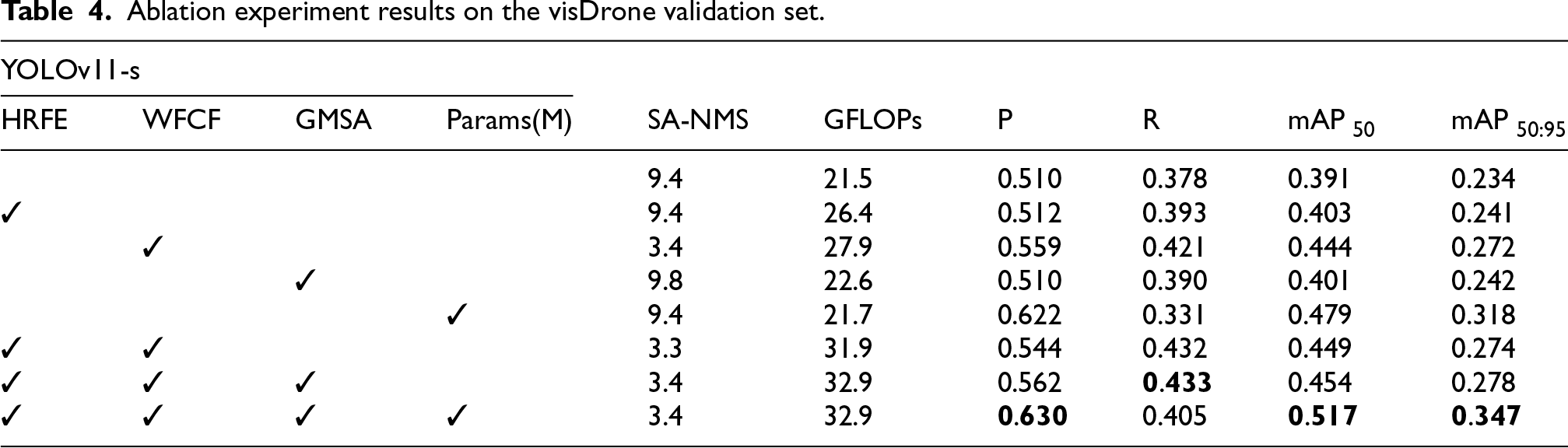

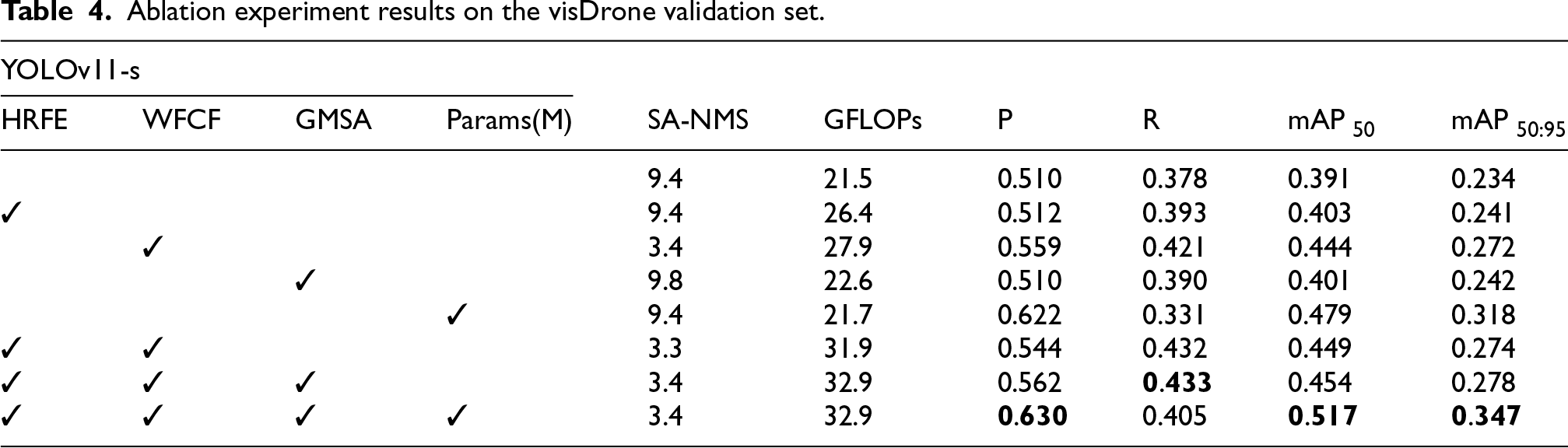

To evaluate the impact of each proposed component, ablation studies were conducted on the VisDrone validation set using YOLOv11-s as the baseline model. The results, summarised in Table 4, illustrate the progressive performance improvements achieved by integrating individual modules and their combined configurations.

Table 4. Ablation experiment results on the VisDrone validation set.

Each module exhibits distinct functional advantages. The WFCF module delivers a substantial performance gain while markedly reducing model parameters. SA-NMS slightly affects recall but significantly improves precision. HRFE and GMSA enhance precision and optimise feature extraction. The combination of all four modules achieves the best overall performance, maintaining low parameter count, balancing precision and recall, and attaining peak detection accuracy.

Regarding computational efficiency, WFCF and its combinations demonstrate clear lightweight advantages, preserving high performance despite the parameter reduction. SA-NMS introduces negligible computational overhead while providing notable gains. The overall computational cost increase from HRFE and GMSA is modest relative to the performance improvements, confirming the effectiveness and synergy of the proposed modules.

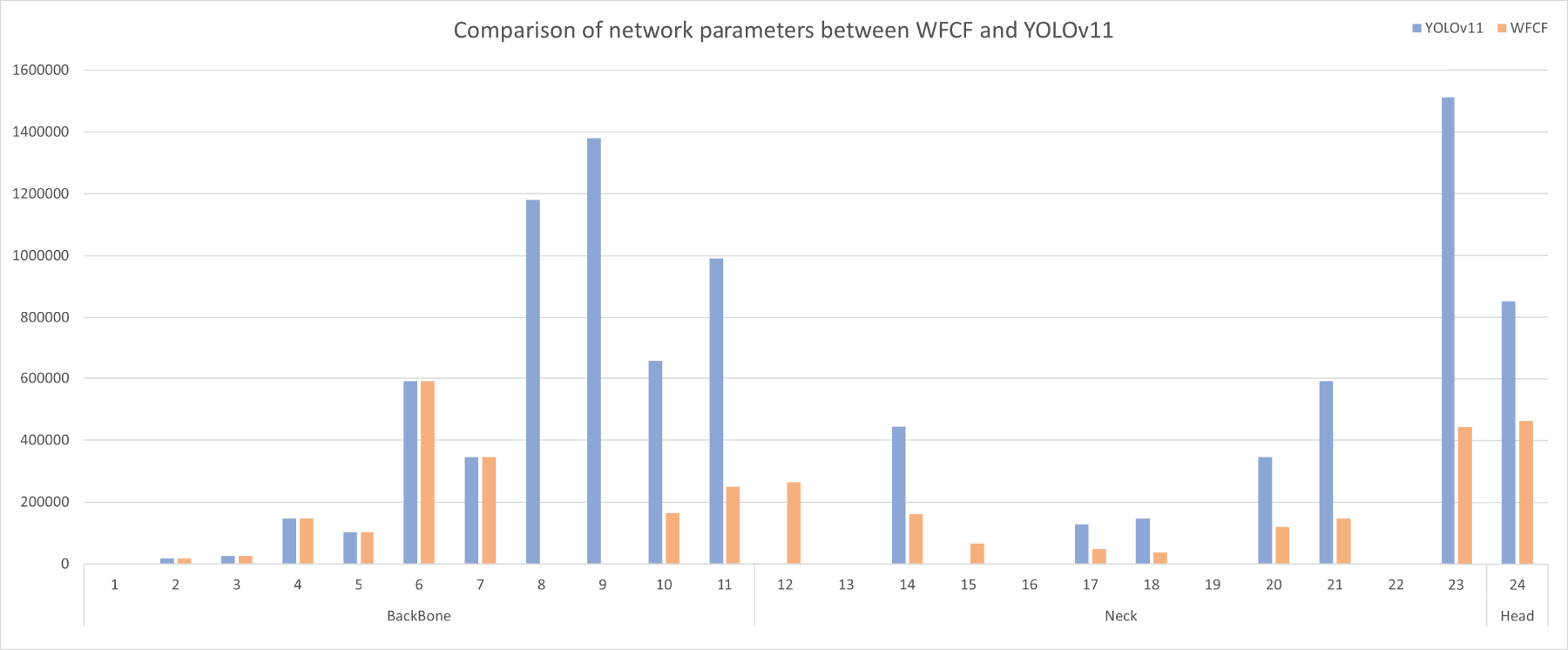

The WFCF module achieves substantial parameter reduction primarily by simplifying the backbone network. Specifically, decreasing the backbone depth reduces the channel dimensions of its output features, which propagates to the neck and head due to inter-layer feature dependencies, leading to a chain reduction of parameters. Figure 6 illustrates this effect, showing that backbone simplification directly decreases parameter counts in subsequent network components, confirming the efficiency of WFCF in reducing model complexity.
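The chain reduction can be made concrete with the parameter count of a standard convolution, which is the product of kernel area, input channels, and output channels. The channel widths below are hypothetical and chosen only to show the scaling; they are not the layer sizes of YOLOv11 or YOLO-SRA.

```python
def conv_params(c_in, c_out, kernel=3, bias=False):
    """Trainable parameters of a standard 2D convolution layer."""
    return kernel * kernel * c_in * c_out + (c_out if bias else 0)


# A downstream layer whose input comes from the backbone output:
before = conv_params(c_in=512, c_out=256)   # fed by a 512-channel backbone output
after = conv_params(c_in=256, c_out=256)    # fed by a 256-channel backbone output

# A layer whose input and output widths are both halved:
wide = conv_params(c_in=256, c_out=512)
narrow = conv_params(c_in=128, c_out=256)
```

Halving only the incoming channel width halves the parameters of the receiving layer, and halving both input and output widths reduces them by a factor of four, which is why a narrower backbone output lowers the parameter counts of the neck and head as well.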

Figure 6. Comparison of network parameters between WFCF and YOLOv11. In the backbone, the parameter-free layers correspond to components removed by WFCF. In the neck, the parameter-free layers are operations such as Concat and Upsample, which inherently contain no trainable parameters.

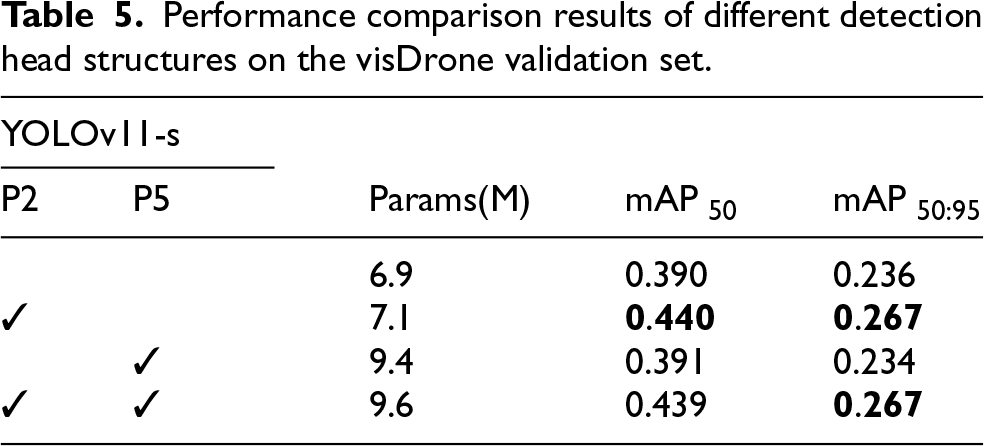

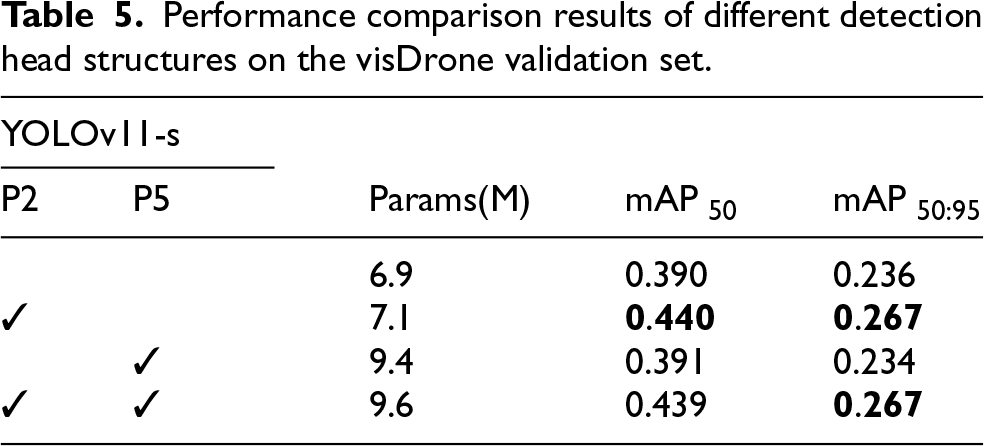

To investigate the influence of detection head design on model performance and efficiency, three strategies were evaluated: removing P5, adding P2, and combining both. The results, compared with the original YOLOv11-s, are presented in Table 5.

Table 5. Performance comparison results of different detection head structures on the VisDrone validation set.

Removing the P5 detection head reduced both parameters and computational load with minimal impact on performance. Adding the P2 detection head slightly increased parameters and computational cost but substantially improved detection accuracy. The combined strategy reduced parameters relative to adding P2 alone, achieved lower computational load, and maintained comparable performance, with higher precision. These results validate the design choices for the detection head in WFCF, balancing efficiency and performance.
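A short calculation illustrates why the head configuration matters for tiny objects. It uses the conventional YOLO strides of 4, 8, 16, and 32 for P2 to P5 and a hypothetical 640-pixel square input; the input size is an assumption made for the example and is not taken from the experimental setup.

```python
# Conventional downsampling strides of the pyramid levels.
STRIDES = {"P2": 4, "P3": 8, "P4": 16, "P5": 32}


def grid_cells(input_size, stride):
    """Number of prediction locations of a head on a square input."""
    return (input_size // stride) ** 2


def cells_per_side(object_px, stride):
    """How many grid cells an object of the given side length spans."""
    return object_px / stride


input_size = 640  # hypothetical input resolution
for level, stride in STRIDES.items():
    print(level, grid_cells(input_size, stride), cells_per_side(8, stride))
```

Under these assumptions, an 8-pixel object spans two cells per side on P2 but only a quarter of a cell on P5, so the P5 head contributes little to such targets while the P2 head resolves them directly. The denser P2 grid is also the source of the additional computation noted above.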

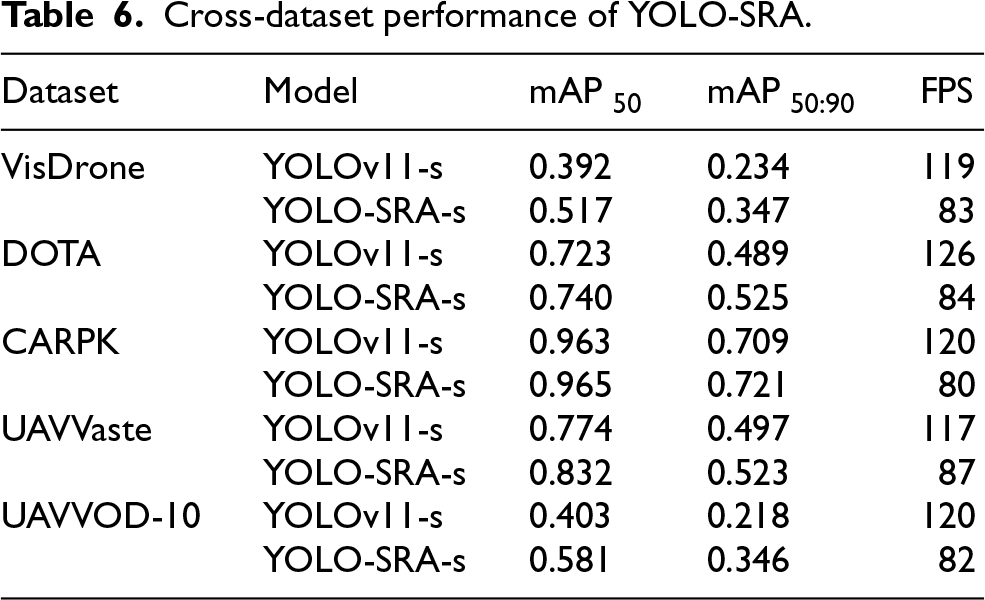

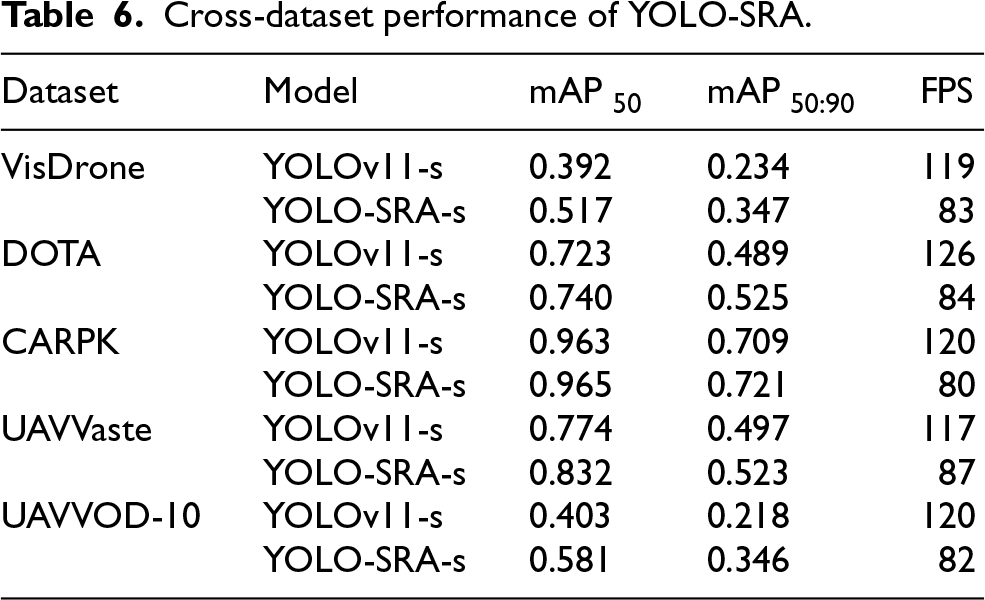

To further evaluate cross-dataset robustness and practical real-time feasibility, we conduct comparative experiments between YOLO-SRA-s and the baseline YOLOv11-s on five representative datasets covering diverse UAV and small-object scenarios. The datasets include VisDrone, 33 DOTA, 70 UAVVaste, 71 UAVUOD-10, 72 and CARPK. 73

As shown in Table 6, YOLO-SRA-s achieves consistent performance gains or maintains comparable performance relative to YOLOv11-s across all evaluated datasets, indicating good robustness under varying scene characteristics and data distributions. In terms of efficiency, YOLO-SRA-s maintains real-time inference capability, achieving FPS values in the range of 80–87 across datasets. Although this is moderately lower than YOLOv11-s, the reduction reflects an accuracy–efficiency trade-off introduced by enhanced fine-grained and cross-scale feature processing. Moreover, YOLO-SRA provides a lightweight configuration with as few as 3.4M parameters (in the smallest variant) and multiple model scales, supporting deployment under different resource constraints on UAV platforms.

Table 6. Cross-dataset performance of YOLO-SRA.

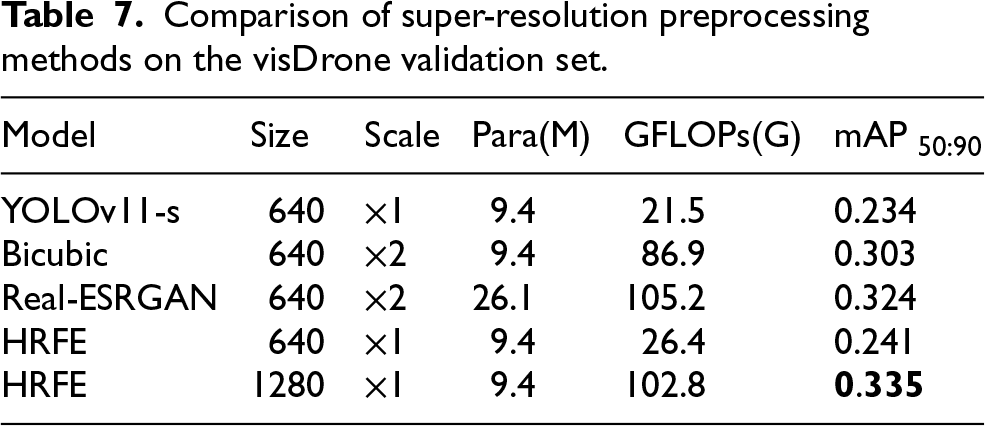

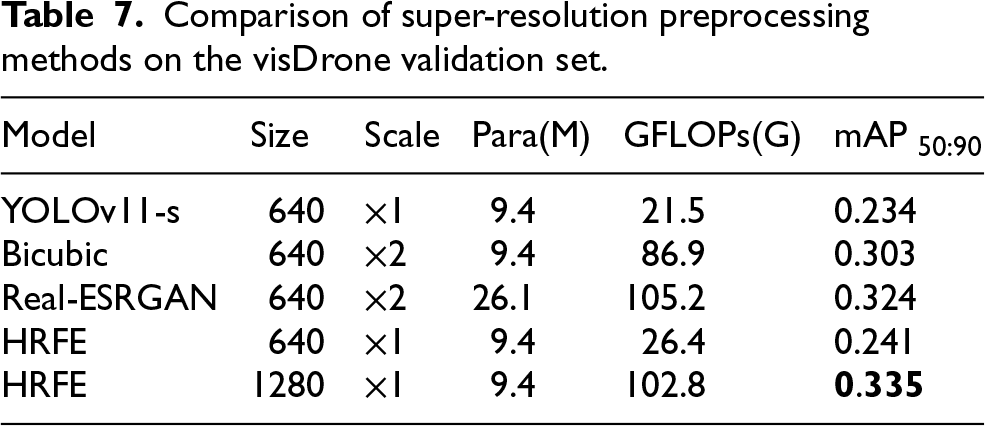

To quantify the performance differences between HRFE and standard super-resolution (SR) preprocessing strategies, we conduct comparative experiments in this study. As shown in Table 7, increasing effective image resolution can improve UAV small-object detection performance; however, conventional SR preprocessing often involves a non-trivial accuracy–efficiency trade-off. For example, interpolation-based upsampling (e.g., Bicubic) introduces no additional learnable parameters, but increases inference cost due to the enlarged input resolution, while providing limited accuracy gains. In contrast, learning-based SR methods such as Real-ESRGAN 74 can yield larger accuracy improvements, but at the expense of substantially increased model complexity and computational overhead. By comparison, HRFE enhances the detector’s input representation by injecting high-resolution feature details while preserving the original input resolution. This design reduces the overhead associated with full-image upsampling and supports flexible adaptation to different input sizes, making it well suited to UAV small-object detection scenarios.

Table 7. Comparison of super-resolution preprocessing methods on the VisDrone validation set.

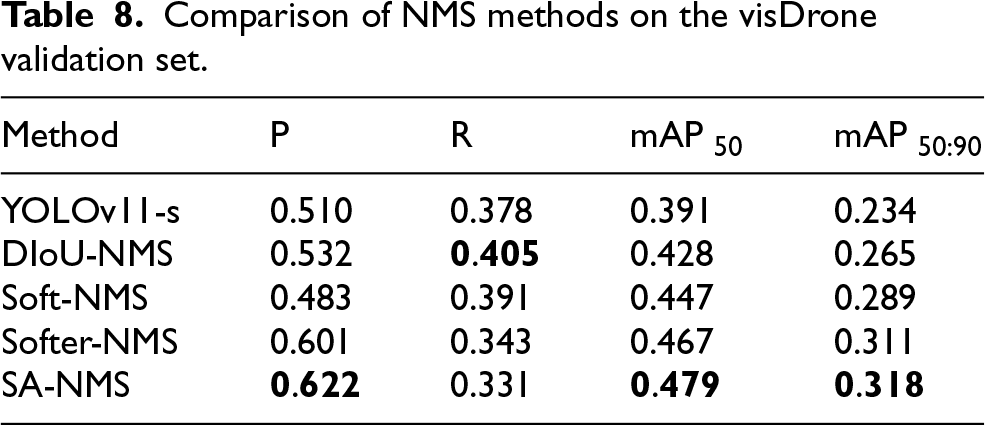

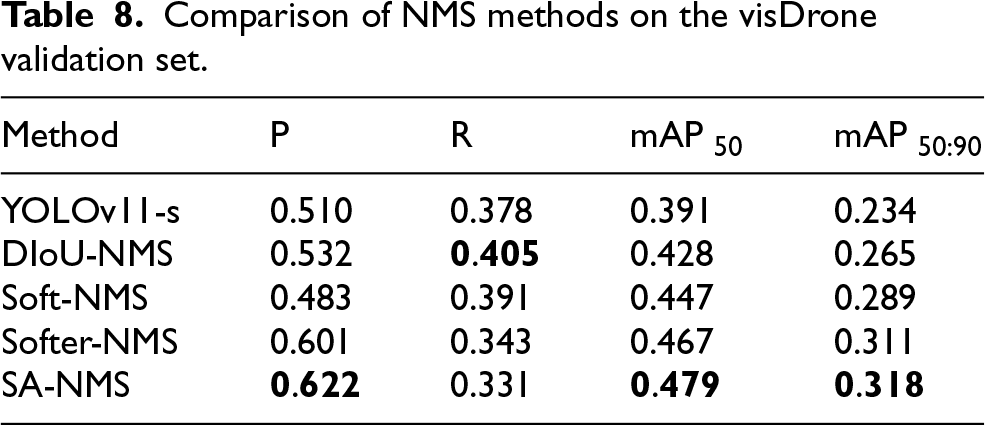

To evaluate the effectiveness of the proposed SA-NMS, we conducted comparative experiments on the VisDrone validation set. As reported in Table 8, replacing standard NMS with SA-NMS improves the main detection metrics for the baseline detector under the same evaluation protocol. Moreover, SA-NMS achieves favourable overall performance compared with representative NMS variants, indicating that incorporating spatial-awareness into the suppression strategy is beneficial for dense small-target UAV scenes. These results support the effectiveness of the proposed SA-NMS design.

Table 8. Comparison of NMS methods on the VisDrone validation set.

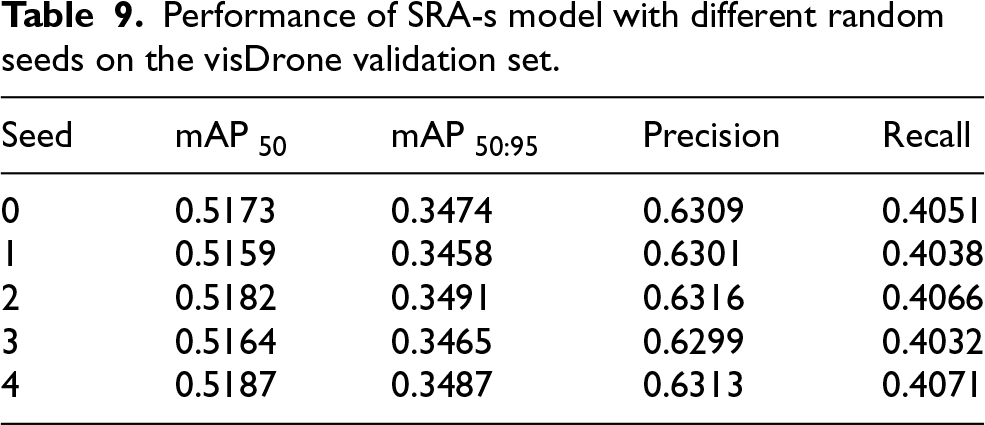

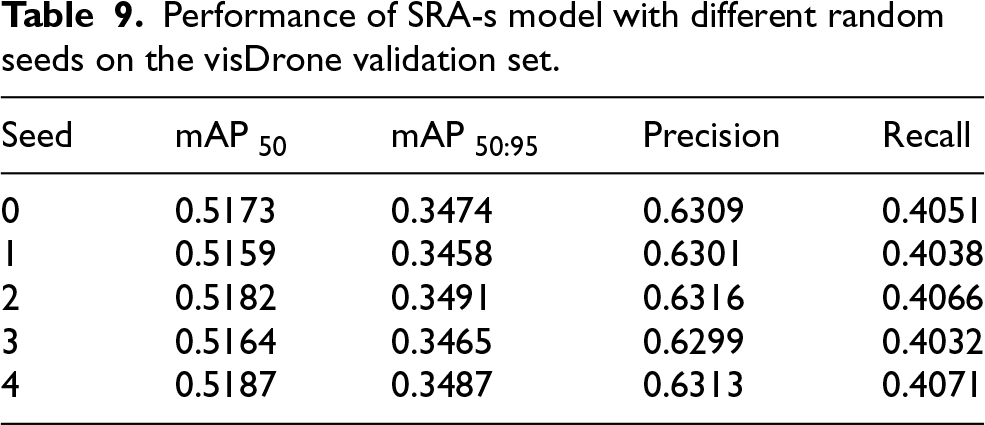

To assess experimental reproducibility and performance robustness, we conducted five independent runs of the proposed YOLO-SRA-s model under the same experimental setting, varying only the random seed. Each run includes a complete training and evaluation cycle, and the aggregated results are reported in Table 9.

Table 9. Performance of the YOLO-SRA-s model with different random seeds on the VisDrone validation set.

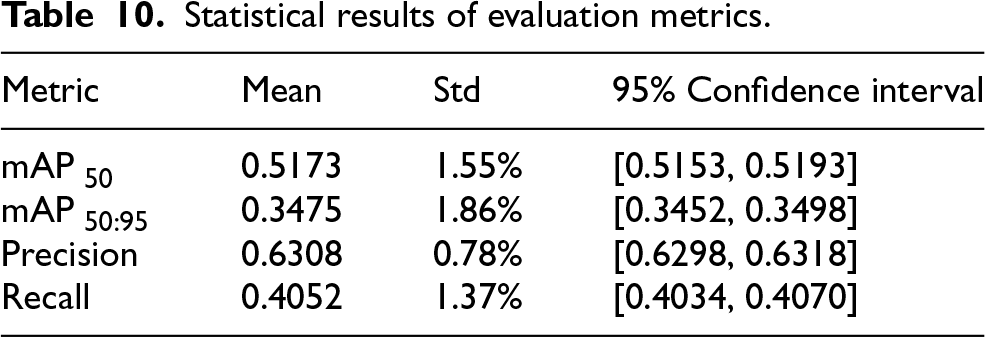

Using the results in Table 9, we computed the mean and standard deviation for the four primary metrics across the five runs, and constructed 95% confidence intervals using Student’s t-distribution. All statistics are reported to four significant figures, and the detailed results are summarised in Table 10.
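The interval construction can be sketched with the Python standard library. The critical values are the standard two-sided 95% quantiles of Student's t-distribution for small samples; the five metric values in the example are placeholders for illustration and are not results from Table 9.

```python
import math
import statistics

# Two-sided 95% critical values of Student's t, keyed by sample size n
# (degrees of freedom = n - 1).
T_CRIT_95 = {2: 12.706, 3: 4.303, 4: 3.182, 5: 2.776}


def t_confidence_interval(values):
    """Return (mean, sample standard deviation, (lower, upper)) for small n."""
    n = len(values)
    mean = statistics.mean(values)
    sd = statistics.stdev(values)            # uses the n - 1 denominator
    half_width = T_CRIT_95[n] * sd / math.sqrt(n)
    return mean, sd, (mean - half_width, mean + half_width)


# Placeholder metric values from five hypothetical runs.
runs = [0.50, 0.51, 0.49, 0.50, 0.50]
mean, sd, (lower, upper) = t_confidence_interval(runs)
```

With only five runs, the t-distribution gives a noticeably wider interval than a normal approximation would (a critical value of 2.776 rather than 1.96), which is the appropriate choice for samples of this size.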

Table 10. Statistical results of evaluation metrics.

To further strengthen statistical rigour, we performed significance testing at a significance level of

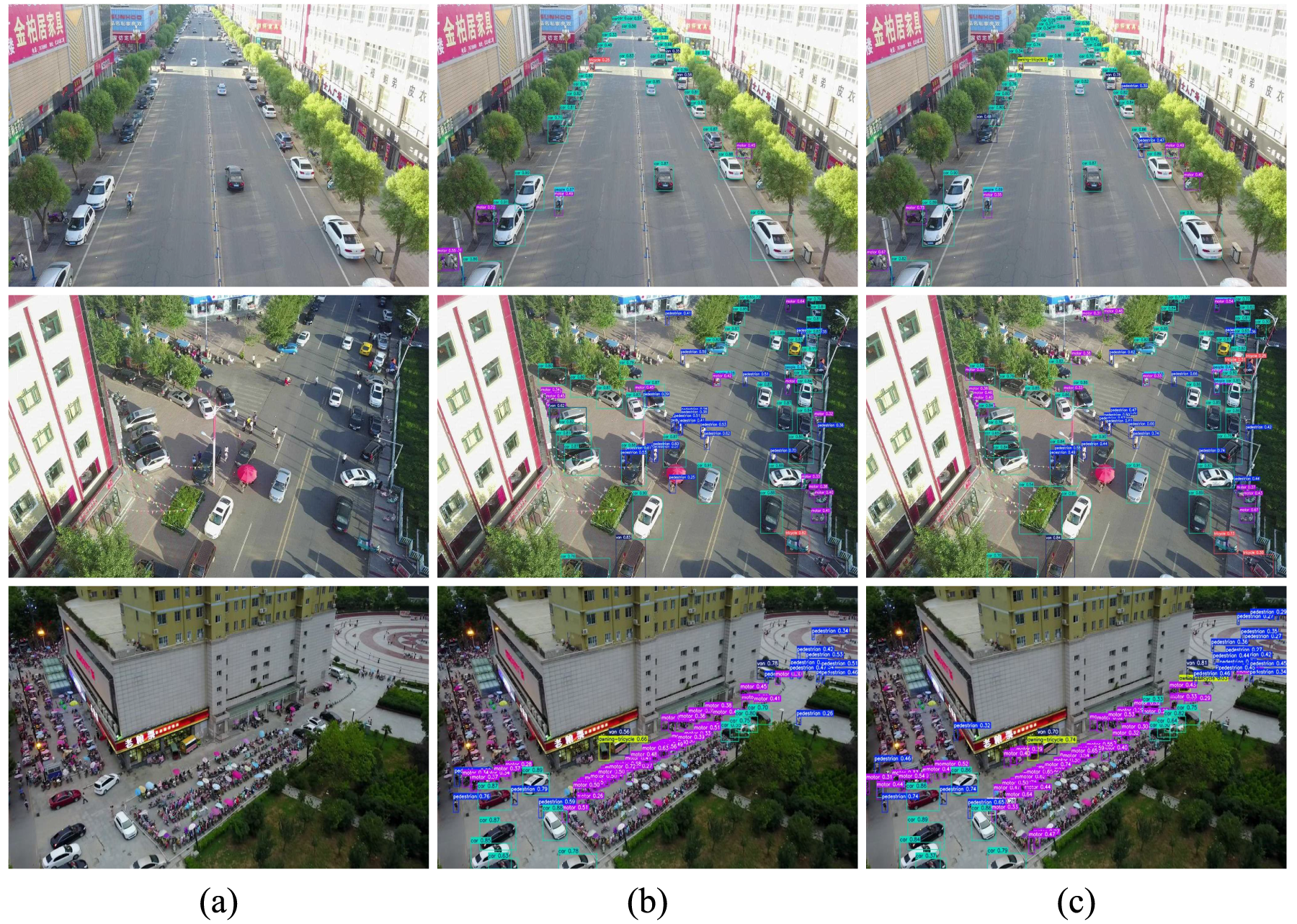

To provide a balanced qualitative comparison, we selected challenging scenes from the VisDrone dataset. Figure 7 shows a representative example:

Figure 7. Visualisation results on the VisDrone dataset: (a) original image, (b) detections produced by YOLOv11-s, and (c) detections produced by YOLO-SRA-s. Object categories are indicated by colour-coded bounding boxes.

This paper presents YOLO-SRA, a novel neural architecture for small-object detection. To fully leverage fine-grained information in high-resolution images, the approach introduces High-Resolution Feature Enhancement (HRFE), which extracts detailed features via a super-resolution path and fuses them with the original image, enhancing edge textures and small-object representation while avoiding excessive computational cost. To efficiently extract both local and global features, a Grouped Multi-Scale Split Attention (GMSA) mechanism is proposed, combining grouped parallel processing with selective attention to enhance the model’s ability to capture both local details and global context. Multi-scale feature integration is further refined through Weighted Fine-Grained Cross-Scale Fusion (WFCF), which uses learnable attention weights and adaptive upsampling to improve representation of small, densely distributed objects, with the added P2 detection head further boosting sensitivity to tiny objects. Finally, Spatial-Attentive Non-Maximum Suppression (SA-NMS) optimises detection results in dense scenes through a spatially adaptive suppression mechanism combined with a Gaussian-based confidence decay.

YOLOv11 and other general-purpose object detectors are primarily developed for natural-scene imagery and may be less effective in UAV aerial settings characterised by dense small targets and cluttered backgrounds. In contrast, YOLO-SRA is designed to better match these characteristics by strengthening fine-grained feature representation and cross-scale aggregation. Experiments on the VisDrone dataset indicate that YOLO-SRA improves detection performance on key metrics while maintaining a relatively compact parameter footprint. These results support its suitability for small and densely distributed objects in aerial scenarios. Nevertheless, achieving higher accuracy can incur additional computational cost, which remains a practical constraint for resource-limited UAV platforms. In future work, we will further explore strategies to improve the accuracy–efficiency balance to enhance deployability in real-world UAV applications.

Footnotes

Acknowledgments

This work was partially supported by the National Natural Science Foundation of China (62376127, 61876089, 61876185, 61902281, 61403206), the Natural Science Foundation of Jiangsu Province (BK20141005), the Natural Science Foundation of the Jiangsu Higher Education Institutions of China (14KJB520025), and the Jiangsu Distinguished Professor Programme.

Funding

The author(s) received no financial support for the research, authorship and/or publication of this article.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.