Abstract

Accurate segmentation of low-contrast images plays a crucial role in computer-aided diagnosis and treatment, particularly for early lesion detection and clinical decision support. To address the limitations of existing approaches in boundary localisation and multi-scale context modelling, we propose a lightweight and efficient hybrid segmentation framework, referred to as SwinFuseNet. The proposed architecture combines the strengths of detection-based and Transformer-based models. Specifically, the Global Pyramid Attention Backbone Network integrates a shifted window-based Transformer mechanism to enhance the global representation of blurred lesions in low-contrast images. In the feature aggregation stage, two dedicated modules, Dynamic Zoom Fusion and Dynamic Spatial Interaction Fusion, are introduced to adaptively integrate information from multiple layers, refining local boundary representations and fine-grained structural features. Additionally, a lightweight attention subnetwork is employed to highlight salient regions while suppressing background noise, thereby improving overall segmentation precision. Experiments conducted on five publicly available low-contrast image segmentation datasets (ISIC 2018, PH2, LUNA16, Kvasir-SEG and Brisc2025) demonstrate that the proposed method significantly outperforms existing models, including variants of U-Net and a recent detection-based segmentation framework. On the ISIC 2018 dataset, the proposed network achieves a Dice coefficient of 0.9873 and an Intersection-over-Union score of 0.9566, improvements of 4.48 and 6.53 percentage points, respectively, over the best competing method; this closes roughly 78% and 60% of the remaining gap to a perfect score. These results confirm the effectiveness and practical relevance of the proposed method in the domain of low-contrast medical image segmentation.

Introduction

Low-contrast image segmentation plays a vital role in computer-aided diagnosis and treatment,1–4 particularly for the early detection of lesions (less than 1,000 pixels 2) and for supporting clinical decision-making. However, several challenges persist, including blurred lesion boundaries, variability in morphology, and inconsistent illumination. 5 These issues are particularly critical in medical imaging, where precise segmentation directly affects diagnostic accuracy 6 and therapeutic planning.

Deep learning-based segmentation architectures have made notable progress in recent years. For instance, U-Net 7 employs a symmetric encoder–decoder design with skip connections to bridge semantic gaps 8 between layers, while UNet++ 9 extends this concept with nested dense skip paths to enhance feature reuse and spatial detail. 10 Attention-based variants, such as Attention U-Net, 7 introduce spatial and channel-wise attention to highlight lesions and suppress irrelevant regions.

Multi-scale context modelling is another key technique for refining boundaries and detecting small lesions. PSPNet 11 applies pyramid pooling for scale-aware feature fusion, and the DeepLab series12–14 utilises atrous convolutions and probabilistic graphical models to capture fine structural details. More recently, Transformer-based architectures have demonstrated strong capabilities in modelling long-range dependencies. The Swin Transformer, 15 in particular, introduces a hierarchical, shifted-window mechanism that balances efficiency and global contextual modelling.

Despite these advancements, existing methods often struggle to balance global context with fine-grained detail, and many are limited in their ability to fuse features across multiple depths or to adapt dynamically to diverse lesion characteristics. Conventional fusion strategies often apply static weighting, which may not generalise well across imaging scenarios. 16 Furthermore, global attention modules, though powerful, tend to introduce substantial computational overhead.

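To address these limitations, we propose SwinFuseNet, a lightweight hybrid segmentation framework. Its Global Pyramid Attention Backbone Network (GPABN) integrates a shifted window-based Transformer mechanism to enhance the global representation of blurred lesions in low-contrast images.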

In the network's fusion stage, two novel modules, Dynamic Zoom Fusion (DZF) and Dynamic Spatial Interaction Fusion (DSIF), are introduced to adaptively integrate multi-resolution features. These modules use gated and multiplicative interactions to improve boundary localisation and fine-grained 20,21 feature representation. Finally, a lightweight attention subnetwork 22,23 is employed to emphasise lesion regions and suppress background interference, yielding improved segmentation accuracy on low-contrast medical images.

Related work

Attention mechanisms in low-contrast image segmentation

Attention mechanisms have become fundamental in enhancing model sensitivity to salient regions in low-contrast image segmentation. Channel attention approaches such as SENet 24 and ECA-Net 25 model inter-channel dependencies to refine feature representation, while spatial attention modules such as CBAM 26 and Coordinate Attention 27 highlight relevant spatial locations while suppressing background noise.

Advanced variants including Attention U-Net, 28 CA-Net, 29 and DANet 30 integrate attention mechanisms into skip connections or decoding layers. These designs significantly improve segmentation accuracy, particularly in cases involving blurry or poorly defined lesion boundaries, which are common in low-contrast imaging scenarios.

Multi-scale feature fusion

Low-contrast medical images 31 often present large variability in object size and anatomical structure. Effective multi-scale feature fusion is thus essential for accurate segmentation. Fully Convolutional Networks (FCN) 32 introduced end-to-end pixel-wise prediction, and Feature Pyramid Networks (FPN) 33 proposed a top-down hierarchical fusion scheme. Similarly, DeepLab models12–14 and PSPNet 11 employ pyramid pooling and dilated convolutions to aggregate contextual information across scales.

Other approaches, such as Multi-ResUNet, 34 leverage parallel multi-resolution paths to enhance detail retention, while DMFNet 35 implements a two-stage fusion strategy to improve cross-layer interactions. These models are particularly beneficial for low-contrast conditions, where fine-grained structure may be difficult to discern.

Transformer-based segmentation methods

Transformer architectures have recently been introduced into medical image segmentation to capture long-range dependencies beyond the receptive field of conventional CNNs. TransUNet 36 integrates a Vision Transformer (ViT) encoder with a U-Net-style decoder to jointly model global and local features. Swin-Unet 37 introduces a hierarchical Transformer with shifted window attention, which improves efficiency while preserving global context.

Other models, such as TransFuse, 38 use a dual-branch structure to integrate convolutional and Transformer-based features via multi-scale fusion. 39 These Transformer-based frameworks have shown substantial promise for low-contrast image segmentation, where long-range interactions help resolve ambiguous and diffuse anatomical boundaries. 40

Dynamic interaction fusion techniques

Static feature fusion strategies often fail to generalise across diverse imaging scenarios. To address this, dynamic interaction fusion methods adapt feature processing based on input content.41–43 For example, Dynamic Filter Networks 44 generate convolutional kernels conditioned on the input, while CondConv 45 learns a set of expert filters that are adaptively combined. DyReLU 46 proposes input-adaptive activation functions for improved representation learning. Such techniques offer finer control over feature modulation, which is particularly advantageous for segmenting structures in low-contrast medical images where intensity variations are subtle and spatial boundaries are ill-defined.

Methodology

Global pyramid attention backbone network (GPABN)

We introduce the Global Pyramid Attention Backbone Network (GPABN), designed to capture both fine-grained local details and global contextual semantics in low-contrast image segmentation. By integrating hierarchical Transformer encoding with multi-scale pooling and attention fusion, GPABN enhances representational capacity while maintaining computational efficiency.
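The shifted-window idea that GPABN borrows from the Swin Transformer can be illustrated with a minimal, dependency-free sketch. It only shows which pixels end up in the same attention window before and after a cyclic shift; the function names, the 8 x 8 map, and the window size of 4 are illustrative assumptions rather than the configuration used in this work, and the attention masking that real implementations apply to wrapped-around regions is omitted.

```python
def window_ids(height, width, window, shift=0):
    """Return a grid in which each cell holds the index of its attention window.

    A cyclic shift of `shift` pixels (up and to the left) is applied before
    partitioning, mimicking the shifted-window step of Swin-style attention.
    """
    if height % window or width % window:
        raise ValueError("height and width must be multiples of window")
    cols = width // window
    grid = []
    for r in range(height):
        row = []
        for c in range(width):
            # Position of this pixel after rolling the map by `shift`.
            rr = (r - shift) % height
            cc = (c - shift) % width
            row.append((rr // window) * cols + cc // window)
        grid.append(row)
    return grid


def share_window(grid, a, b):
    """True if pixels a=(row, col) and b=(row, col) fall in the same window."""
    return grid[a[0]][a[1]] == grid[b[0]][b[1]]


regular = window_ids(8, 8, window=4)           # regular window layout
shifted = window_ids(8, 8, window=4, shift=2)  # shifted layout, shift = window // 2

# Pixels (3, 3) and (4, 4) sit on opposite sides of a window border.
print(share_window(regular, (3, 3), (4, 4)))  # False
print(share_window(shifted, (3, 3), (4, 4)))  # True
```

Alternating the two layouts lets information cross window borders every second block while each attention call still operates on a small, fixed number of tokens, which is where the efficiency of window-based attention comes from.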

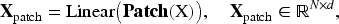

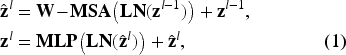

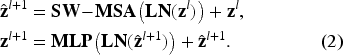

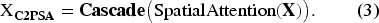

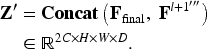

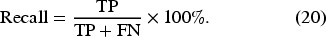

As illustrated in Figure 1(a), GPABN comprises five sequential stages that progressively encode features from low-level details to high-level semantics and aggregate multi-scale contextual information for robust representation.

Figure 1. (a) The Global Pyramid Attention Backbone Network (GPABN), which integrates hierarchical Swin Transformer stages with multi-scale pooling and attention to capture both local and global features. (b) Two successive Swin Transformer blocks, alternating between regular and shifted window-based multi-head self-attention (W-MSA and SW-MSA) for efficient global–local dependency modeling. (c) The C2PSA module, a cascaded spatial attention mechanism that progressively enhances focus on lesion boundaries while suppressing background noise.

Spatial down-sampling is applied between stages via patch merging, enlarging the receptive field while preserving essential structure. In this formulation,

Here,

The outputs of both branches are concatenated and fused with a

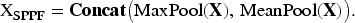

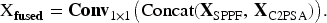

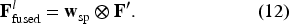

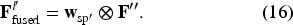

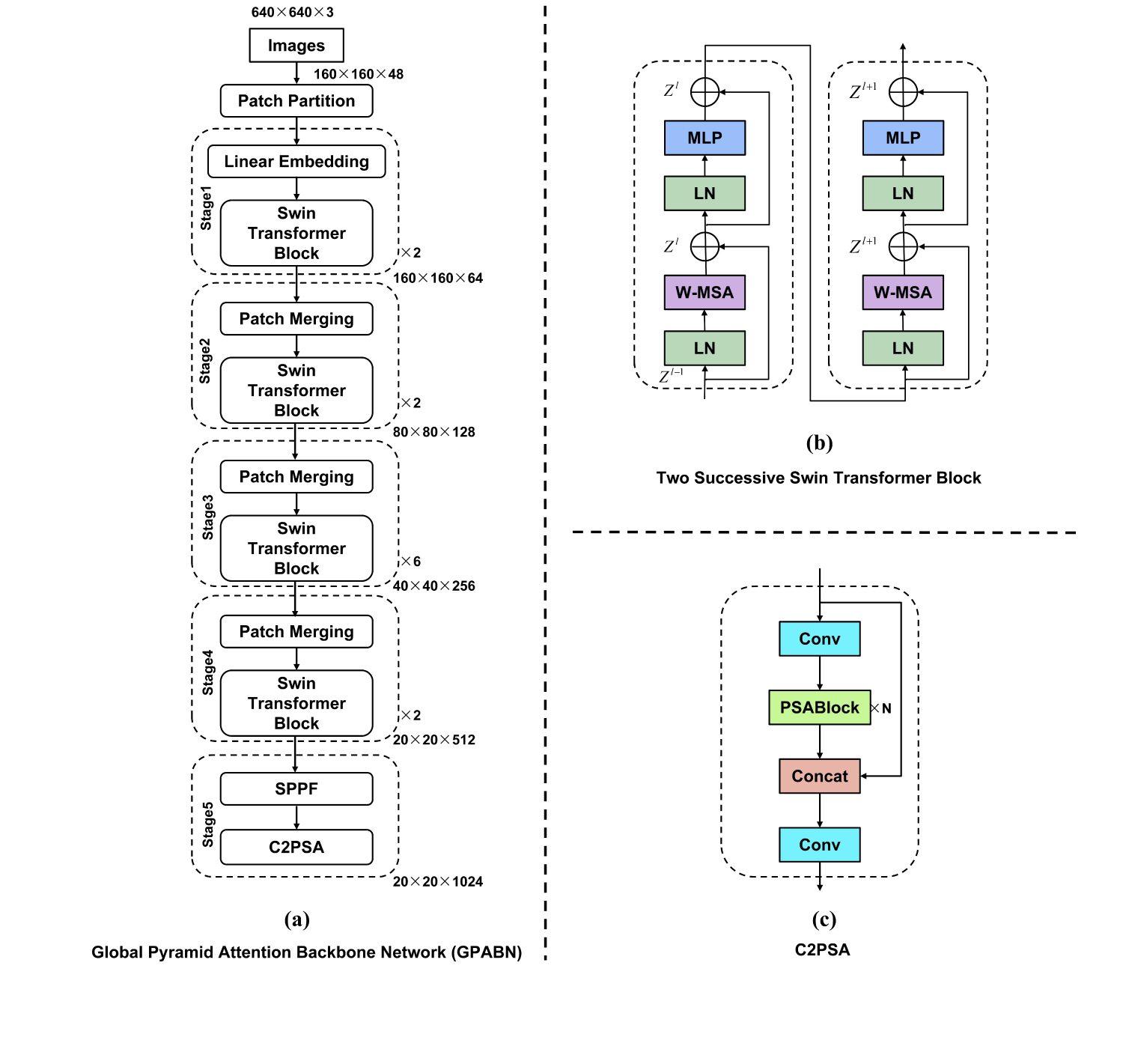

We propose the SwinFuseNeckNetwork (SFNN). As illustrated in Figure 2, its core innovation lies in the design and tight integration of the Dynamic Zoom Fusion (DZF) module and the Dynamic Spatial Interaction Fusion (DSIF) module. By combining multi-scale aggregation, content-aware fusion, and efficient refinement, this architecture enhances feature extraction and multi-scale feature fusion in medical image segmentation tasks.

Figure 2. Architecture of SwinFuseNet. (a) DZF module: the Dynamic Zoom Fusion (DZF) module adjusts the feature maps through multiple convolutional operations, followed by smoothing and pooling operations. It then fuses the results to enhance low-contrast image feature extraction. (b) DSIF module: the Dynamic Spatial Interaction Fusion (DSIF) module applies spatial and channel attention mechanisms to refine feature maps, utilizing identity maps for feature fusion and downsampling for efficiency. (c) Fusion module: the Fusion module splits and processes feature maps, utilizing average pooling and convolution for feature enhancement, followed by a final sigmoid function to adaptively fuse features across different scales. (d) Identity map: A 1

These are summed into

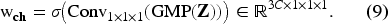

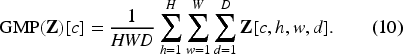

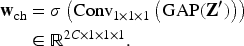

We then compute a channel-wise descriptor via global mean pooling with uniform weighting and a

The gated output is:

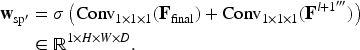

To emphasize important spatial regions, we extract spatial descriptors from the fine and coarse branches via separate

The final output of the DZF module is:

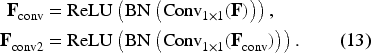

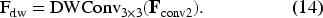

Building on

A

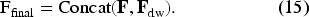

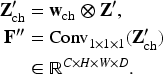

The final output of this branch is formed by concatenating the processed map with the original identity input:

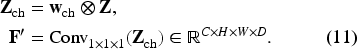

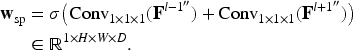

Channel attention is then applied. A global average pooling (GAP) followed by a

These weights are applied to the concatenated feature map to form a gated representation:
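One common way to realise this kind of channel gating is sketched below in plain Python, under stated assumptions: the pooled descriptor is passed through a single linear map (standing in for a learned 1 x 1 convolution) and a sigmoid, and the resulting per-channel weights rescale the feature map. The function names, the identity weight matrix, and the toy 2 x 2 feature map are hypothetical and are not taken from the paper.

```python
import math


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def global_average_pool(feature):
    """Reduce each channel (a 2D list) to its mean value."""
    return [sum(sum(row) for row in ch) / (len(ch) * len(ch[0])) for ch in feature]


def channel_gate(feature, weight, bias):
    """Scale every channel by a sigmoid gate computed from the pooled descriptor.

    weight (C x C) and bias (C) are placeholders for learned parameters.
    """
    pooled = global_average_pool(feature)
    gates = [
        sigmoid(sum(w * p for w, p in zip(w_row, pooled)) + b)
        for w_row, b in zip(weight, bias)
    ]
    gated = [[[g * v for v in row] for row in ch] for ch, g in zip(feature, gates)]
    return gated, gates


feature = [
    [[1.0, 1.0], [1.0, 1.0]],  # channel 0: weak response
    [[3.0, 3.0], [3.0, 3.0]],  # channel 1: strong response
]
identity = [[1.0, 0.0], [0.0, 1.0]]
gated, gates = channel_gate(feature, identity, [0.0, 0.0])
print([round(g, 3) for g in gates])  # [0.731, 0.953]
```

Because the gate is computed from the input itself, channels with stronger pooled responses are attenuated less, which is the content-dependent behaviour that distinguishes gated fusion from static weighting.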

Spatial descriptors are extracted from

The final output of the DSIF module is obtained by spatially gating

Datasets

Metrics

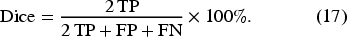

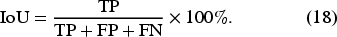

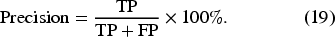

We assess our model using four metrics: Dice Score, Jaccard Coefficient, Precision, and Recall. All four are derived from the confusion matrix entries, namely True Positive (TP), True Negative (TN), False Positive (FP), and False Negative (FN), as follows: Dice = 2TP / (2TP + FP + FN), Jaccard = TP / (TP + FP + FN), Precision = TP / (TP + FP), and Recall = TP / (TP + FN).
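A minimal sketch of how these four metrics can be computed from a predicted and a ground-truth binary mask is given below. The masks are flattened to lists of 0/1 values; the function names and the toy masks are illustrative, and returning 0.0 for an empty denominator is a convention assumed here rather than one stated in the paper.

```python
def confusion_counts(pred, target):
    """Count TP, TN, FP, FN for two flat binary masks of equal length."""
    if len(pred) != len(target):
        raise ValueError("masks must have the same number of pixels")
    tp = tn = fp = fn = 0
    for p, t in zip(pred, target):
        if p and t:
            tp += 1
        elif p and not t:
            fp += 1
        elif t:
            fn += 1
        else:
            tn += 1
    return tp, tn, fp, fn


def safe_div(num, den):
    """Return num / den, or 0.0 when the denominator is zero."""
    return num / den if den else 0.0


def segmentation_metrics(pred, target):
    tp, _tn, fp, fn = confusion_counts(pred, target)
    return {
        "dice": safe_div(2 * tp, 2 * tp + fp + fn),
        "jaccard": safe_div(tp, tp + fp + fn),
        "precision": safe_div(tp, tp + fp),
        "recall": safe_div(tp, tp + fn),
    }


pred = [1, 1, 1, 0, 0, 0, 1, 0]
target = [1, 1, 0, 0, 0, 1, 1, 0]
scores = segmentation_metrics(pred, target)
print(scores)  # {'dice': 0.75, 'jaccard': 0.6, 'precision': 0.75, 'recall': 0.75}
```

For a single mask pair the two overlap measures are tied by Jaccard = Dice / (2 - Dice), which the toy example satisfies (0.75 / 1.25 = 0.6); TN does not enter any of the four metrics.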

In this study, we utilised the SwinFuseNeckNetwork, a compact segmentation architecture, configured with a depth multiplier of 0.50, a width multiplier of 0.25, and a maximum channel capacity of 1024. The entire training pipeline was executed on a single NVIDIA RTX 4090 GPU, leveraging the PyTorch framework with Python 3.8 and CUDA version 12.2. The model was trained from the ground up, without any reliance on pre-initialised weights. Input images were resized to

Quantitative results

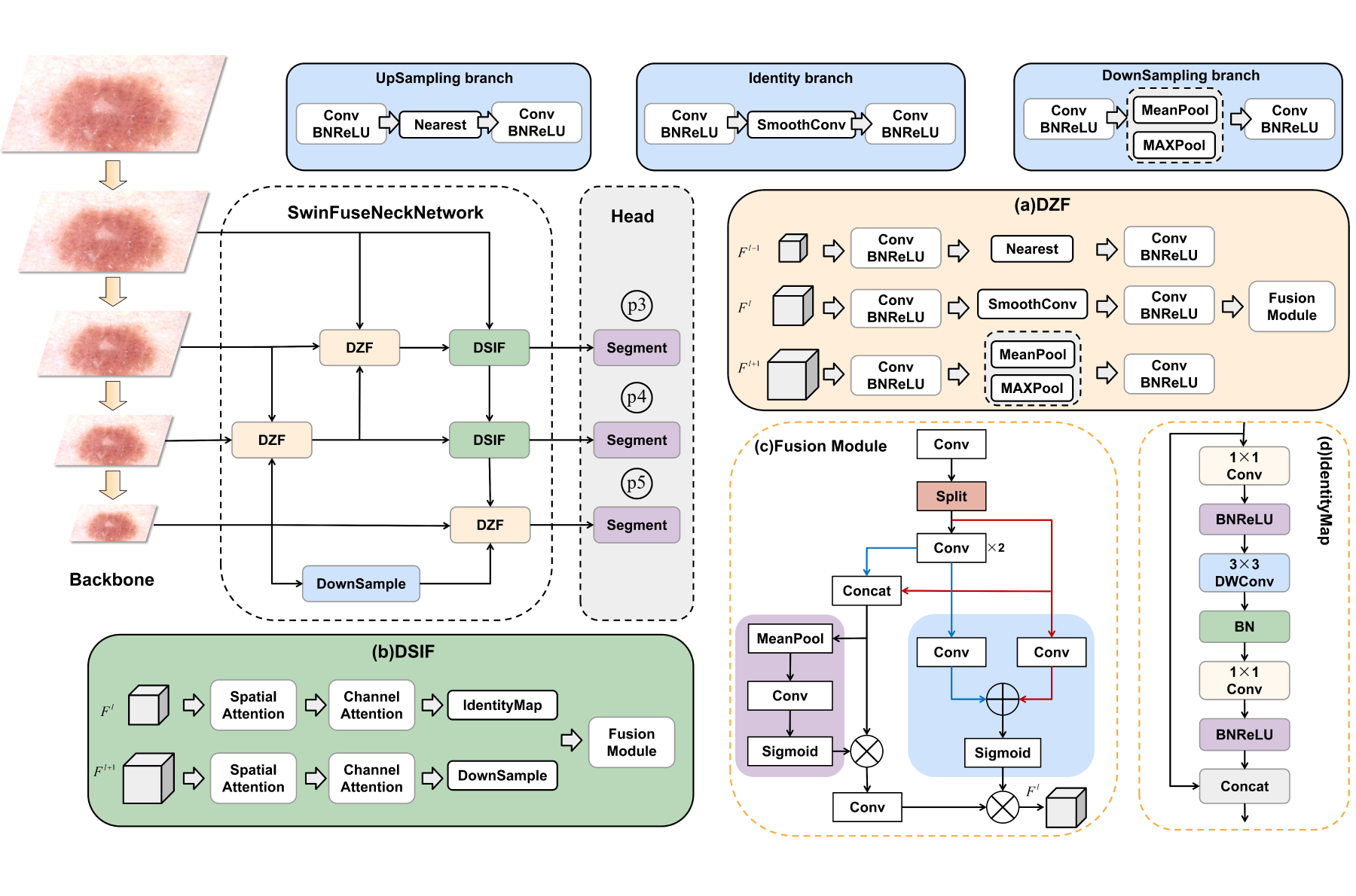

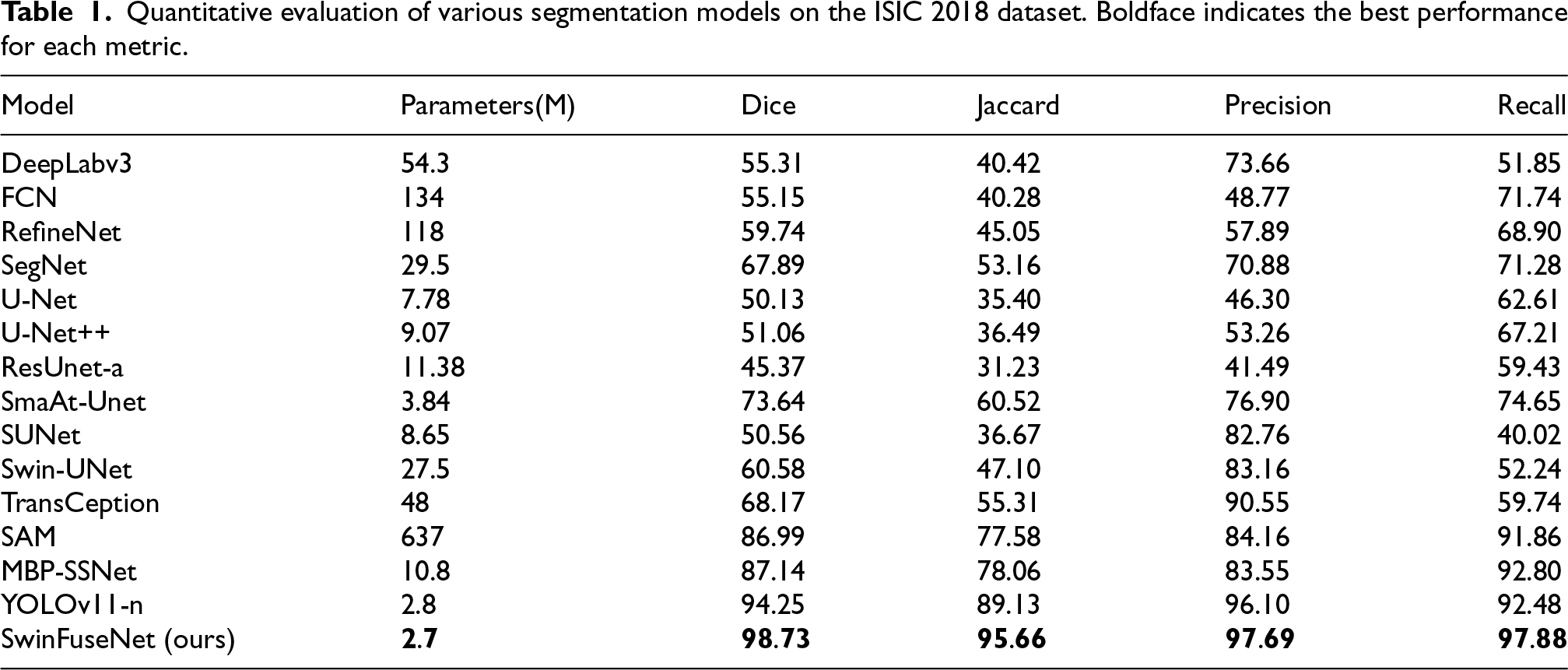

Tables 1 to 8 present a comprehensive comparison between the proposed

Table 1. Quantitative evaluation of various segmentation models on the ISIC 2018 dataset. Boldface indicates the best performance for each metric.


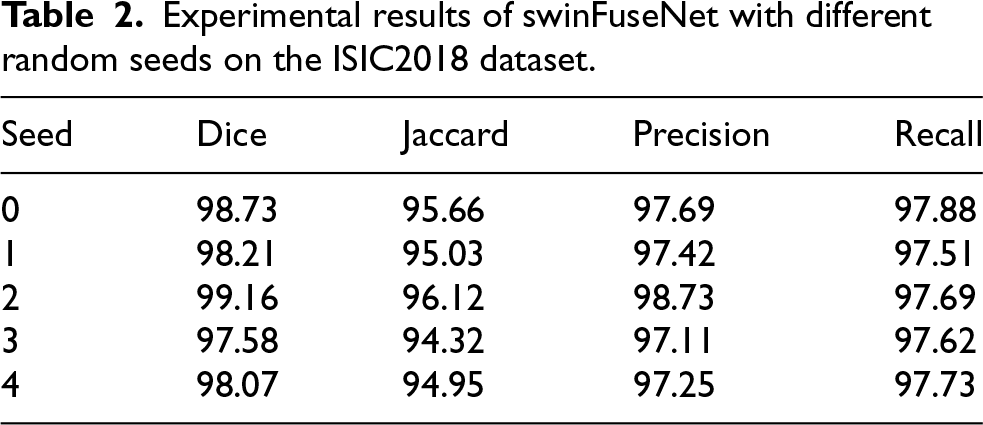

Table 2. Experimental results of SwinFuseNet with different random seeds on the ISIC2018 dataset.

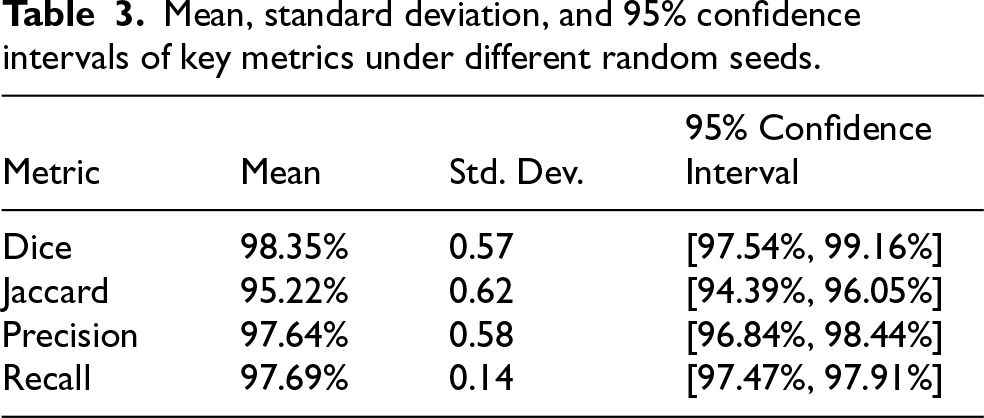

Table 3. Mean, standard deviation, and 95% confidence intervals of key metrics under different random seeds.

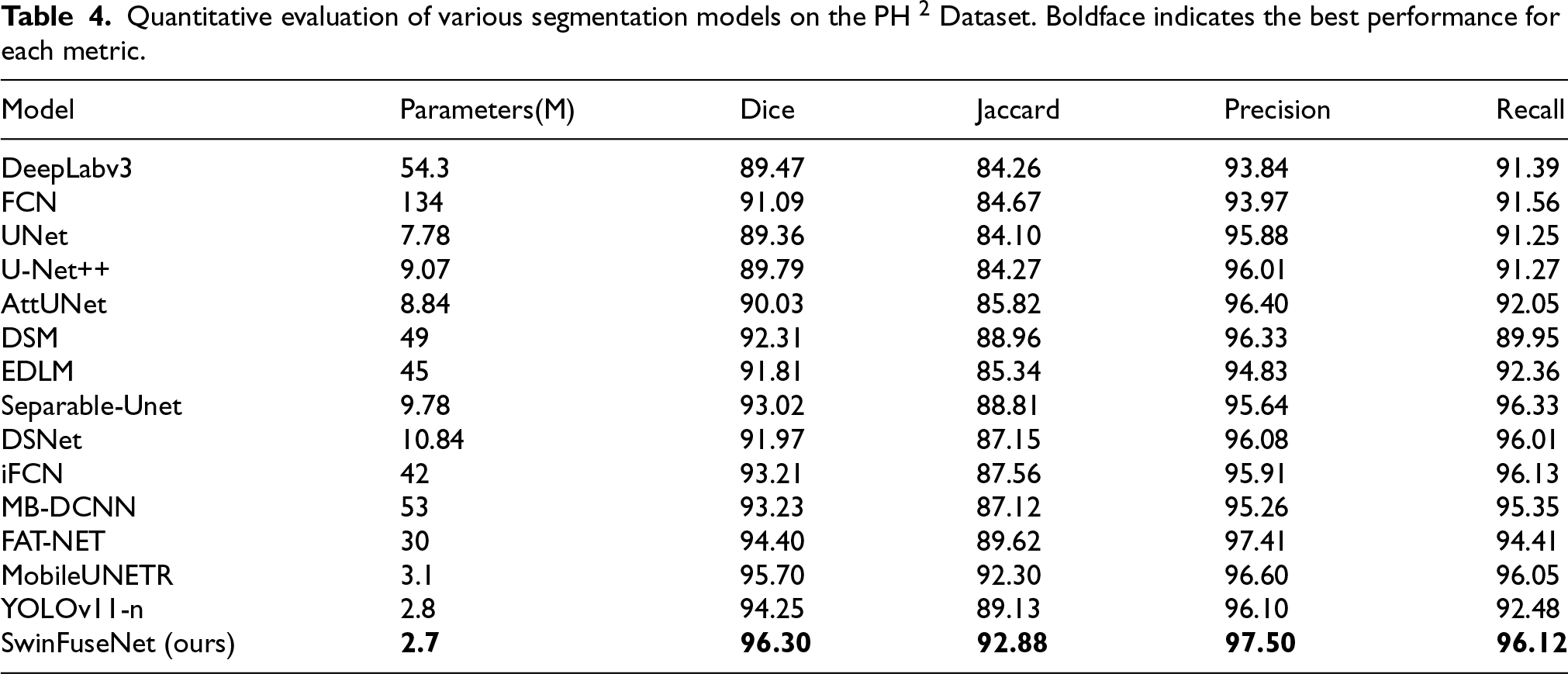

Table 4. Quantitative evaluation of various segmentation models on the PH2 dataset. Boldface indicates the best performance for each metric.

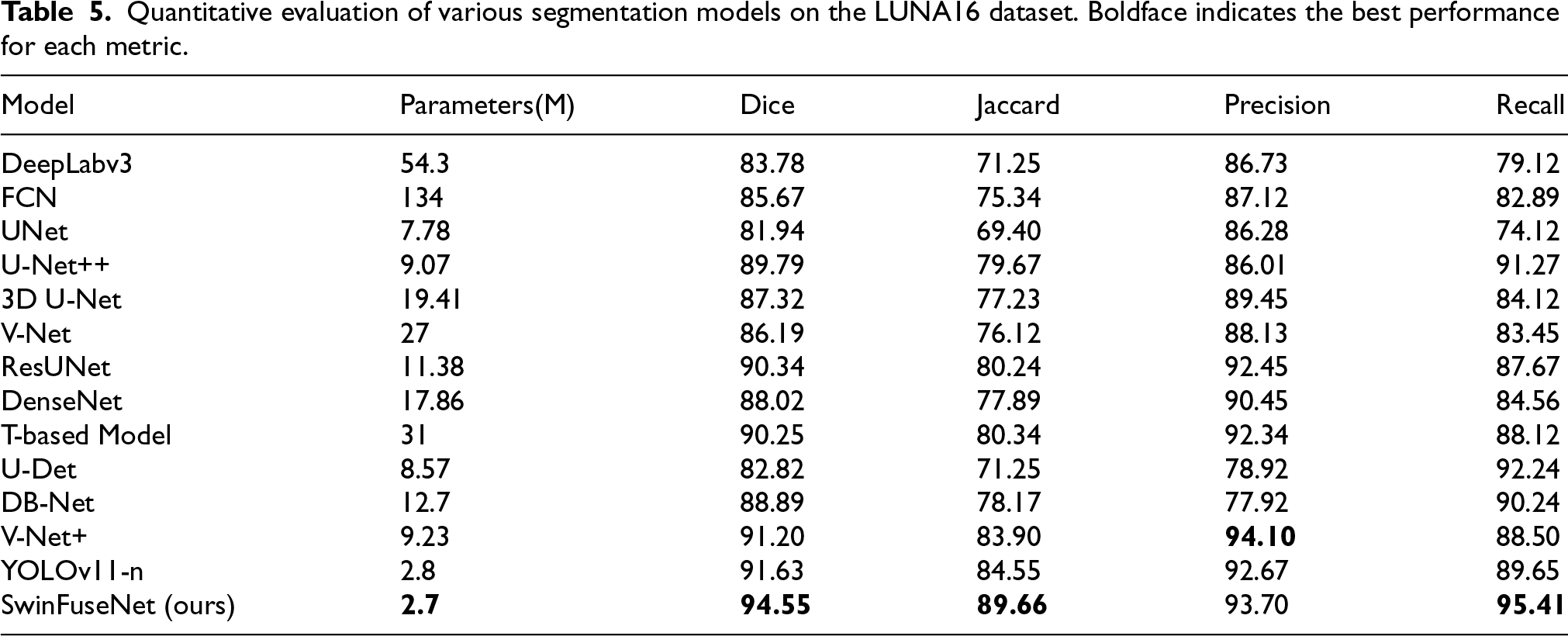

Table 5. Quantitative evaluation of various segmentation models on the LUNA16 dataset. Boldface indicates the best performance for each metric.

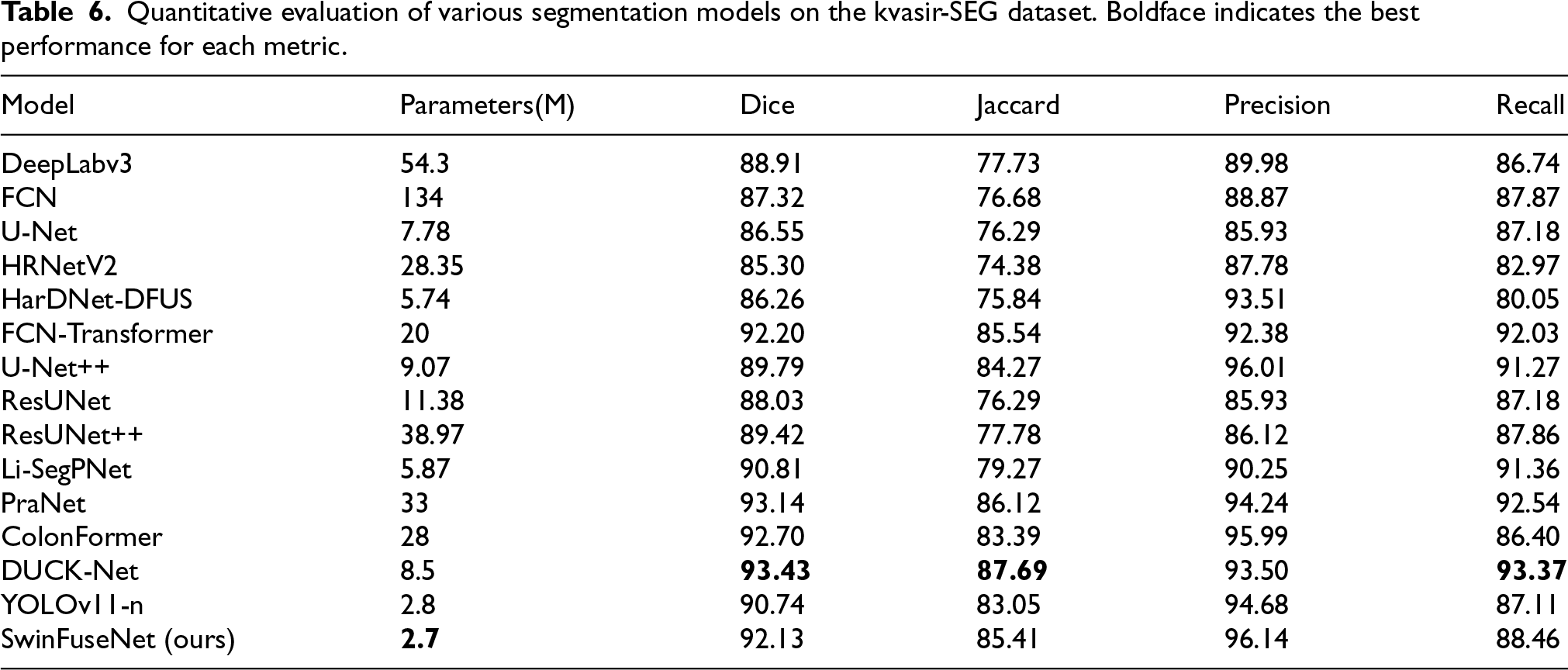

Table 6. Quantitative evaluation of various segmentation models on the Kvasir-SEG dataset. Boldface indicates the best performance for each metric.

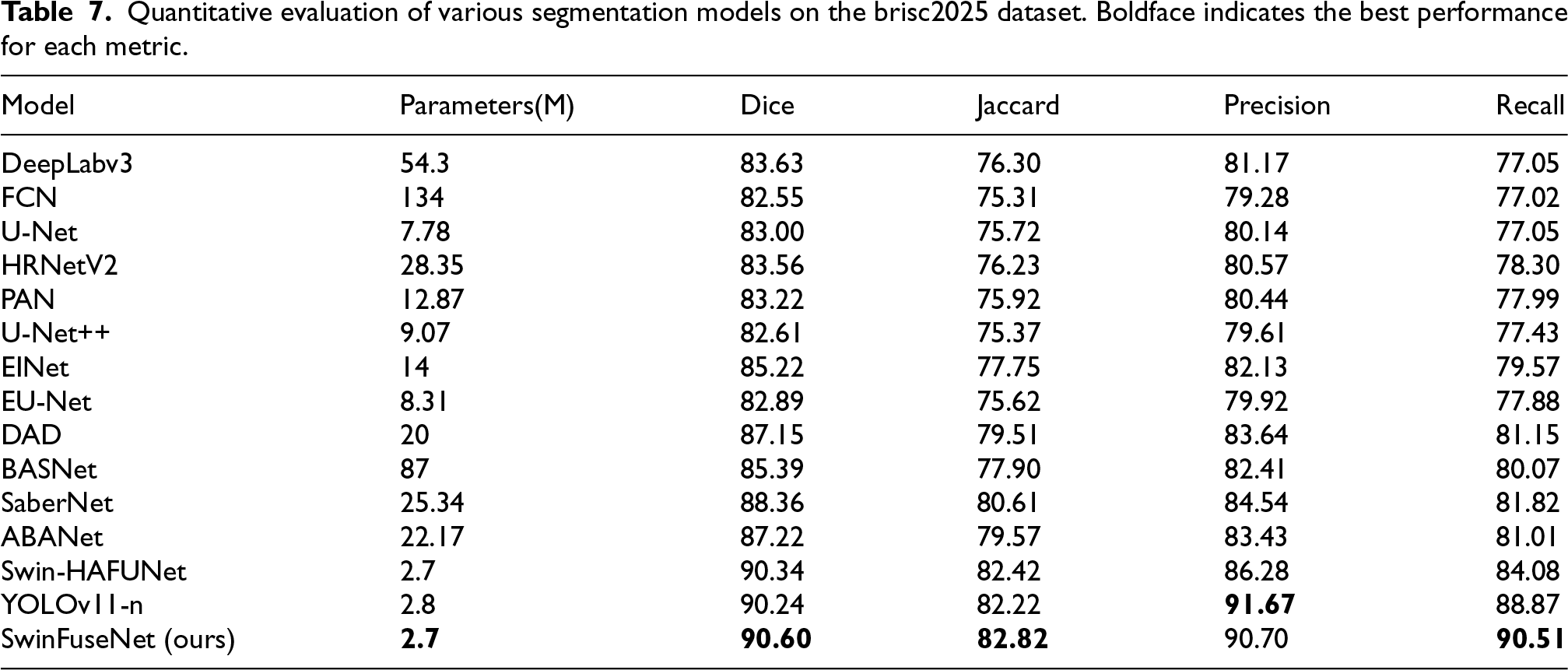

Table 7. Quantitative evaluation of various segmentation models on the Brisc2025 dataset. Boldface indicates the best performance for each metric.

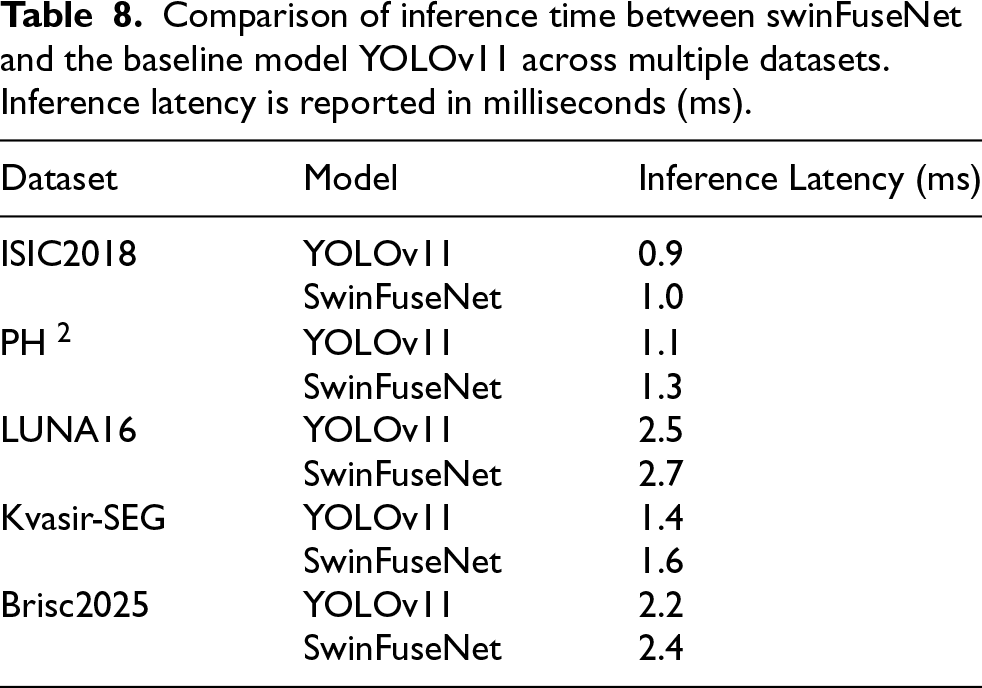

Table 8. Comparison of inference time between SwinFuseNet and the baseline model YOLOv11 across multiple datasets. Inference latency is reported in milliseconds (ms).

On the

Tables 2 and 3 demonstrate the stability and robustness of SwinFuseNet under different random seeds. The results show consistently high performance across multiple runs, with small standard deviations and narrow 95% confidence intervals. This indicates that the improvements achieved by SwinFuseNet are not due to random fluctuations, but reflect reliable and reproducible performance in low-contrast image segmentation.
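The kind of summary reported for repeated runs (mean, standard deviation, and a 95% confidence interval) can be reproduced with a short sketch like the one below. It assumes a Student's t interval on the sample standard deviation, which is a common choice but is not confirmed by the text; the scores in the example are made-up placeholders, not values from Table 2.

```python
import math
import statistics

# Two-sided 95% critical values of Student's t, indexed by degrees of freedom.
T_CRIT_95 = {1: 12.706, 2: 4.303, 3: 3.182, 4: 2.776, 5: 2.571,
             6: 2.447, 7: 2.365, 8: 2.306, 9: 2.262}


def summarise_runs(scores):
    """Return (mean, sample std, (ci_low, ci_high)) for per-seed scores."""
    n = len(scores)
    if n < 2:
        raise ValueError("need at least two runs")
    mean = statistics.mean(scores)
    std = statistics.stdev(scores)  # sample standard deviation (n - 1)
    # For more than 10 runs, fall back to the normal value 1.96,
    # which is slightly optimistic for moderate sample sizes.
    t = T_CRIT_95.get(n - 1, 1.96)
    half_width = t * std / math.sqrt(n)
    return mean, std, (mean - half_width, mean + half_width)


# Hypothetical Dice scores from five seeds (placeholders only).
runs = [0.90, 0.92, 0.91, 0.93, 0.94]
mean, std, (low, high) = summarise_runs(runs)
print(f"mean={mean:.4f} std={std:.4f} 95% CI=({low:.4f}, {high:.4f})")
```

A narrow interval relative to the gap between two models is what supports the claim that an improvement is not a seed artefact; with few seeds the t critical value is large, so the interval widens quickly.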

As shown in Table 4,

Performance on the

On the

On the

To further examine the practicality of SwinFuseNet for deployment, we evaluate not only accuracy but also runtime efficiency. The corresponding inference latency results are summarized in Table 8 and discussed in detail in the following subsection.

Table 8 shows that incorporating Transformer modules into the YOLOv11 backbone results in only a negligible increase in inference latency—about 0.18 ms on average across the five benchmark datasets. Despite this small overhead, SwinFuseNet remains much more efficient than fully Transformer-based models, which often suffer from deployment bottlenecks due to heavy computational demands.
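Latency differences of a fraction of a millisecond are easy to distort by measurement noise, so a timing harness with warm-up runs and repeated measurements is advisable. The sketch below is one hedged way to do this in plain Python; `measure_latency_ms`, the stand-in workload, and the warm-up and repeat counts are illustrative assumptions and do not describe the protocol used for Table 8.

```python
import statistics
import time


def measure_latency_ms(fn, sample, warmup=5, repeats=50):
    """Time fn(sample) and return (mean, sample std) in milliseconds."""
    # Warm-up calls let caches and lazy initialisation settle before timing.
    for _ in range(warmup):
        fn(sample)
    timings = []
    for _ in range(repeats):
        start = time.perf_counter()
        fn(sample)
        timings.append((time.perf_counter() - start) * 1000.0)
    return statistics.mean(timings), statistics.stdev(timings)


# Stand-in workload; in practice fn would wrap a model's forward pass.
dummy_input = list(range(10_000))
mean_ms, std_ms = measure_latency_ms(sum, dummy_input, warmup=2, repeats=20)
print(f"{mean_ms:.4f} ms +/- {std_ms:.4f} ms")
```

When the workload runs on a GPU, kernels are launched asynchronously, so the device has to be synchronised (for example with `torch.cuda.synchronize()` in PyTorch) before each clock reading; otherwise the timer measures only the launch overhead.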

This efficiency stems from its hybrid design: Transformer layers are selectively integrated into the backbone for capturing long-range dependencies, while the neck (SFNN) and prediction head largely preserve lightweight CNN-based structures. Such a design allows SwinFuseNet to benefit from global context modeling while maintaining compactness, with just 2.7M parameters compared to YOLOv11-n’s 2.8M, thereby keeping both model size and inference time under control.

Across ISIC2018, PH2, LUNA16, Kvasir-SEG, and Brisc2025, SwinFuseNet demonstrates consistent performance improvements over YOLOv11-n, with average gains of +2.24% in Dice, +3.67% in Jaccard, +0.90% in Precision, and +3.56% in Recall. These results indicate that the proposed method not only improves lesion boundary delineation and recall sensitivity but also sustains computational efficiency. Overall, SwinFuseNet provides a balanced trade-off between accuracy, parameter compactness, and inference latency, making it a suitable candidate for practical deployment in resource-constrained medical imaging scenarios.

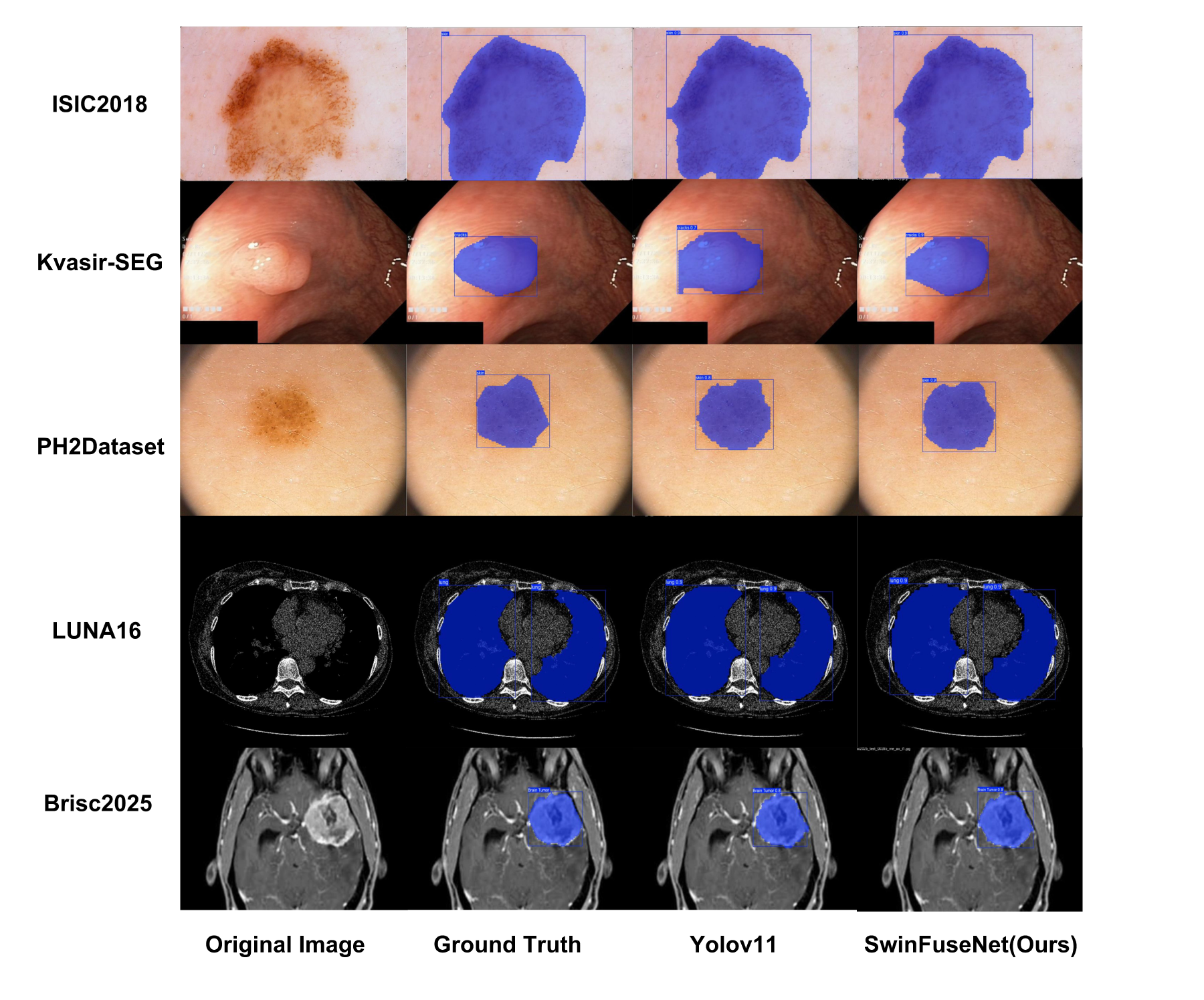

Qualitative results

Figure 3 presents a visual comparison between SwinFuseNet and YOLOv11 on the ISIC2018, PH2, LUNA16, Kvasir-SEG and Brisc2025 datasets. By integrating Transformer architectures with YOLO, SwinFuseNet enhances segmentation performance by effectively combining multi-scale feature fusion with fine-grained feature extraction through its Global Pyramid Attention Backbone Network and SwinFuseNeckNetwork. As shown in the figure, SwinFuseNet demonstrates superior accuracy in segmenting targets across various scales compared to YOLOv11.

Figure 3. Qualitative comparison of SwinFuseNet with YOLOv11 on the ISIC2018, PH2, LUNA16, Kvasir-SEG and Brisc2025 datasets.

In particular, we emphasize that many images in ISIC2018 and PH2 exhibit extremely subtle grayscale differences between lesions and surrounding tissue, with boundaries that are irregular, fuzzy, or partially missing. Conventional U-Net-like models often fail in these cases because they rely on static skip connections and fixed fusion strategies, which are insufficient to capture fine transitions or adaptively emphasize ambiguous regions. As a result, the predictions tend to either oversmooth boundaries or miss small protrusions along lesion edges.

SwinFuseNet addresses these challenges through the

Although the visual differences are subtle, these refinements are clinically meaningful. In dermatology (ISIC2018,

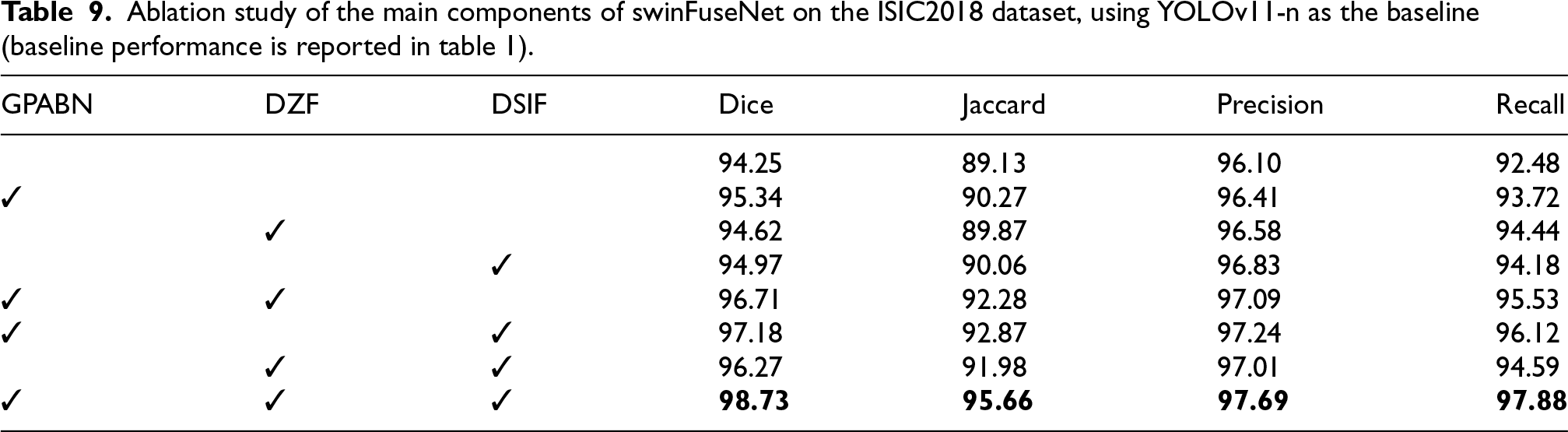

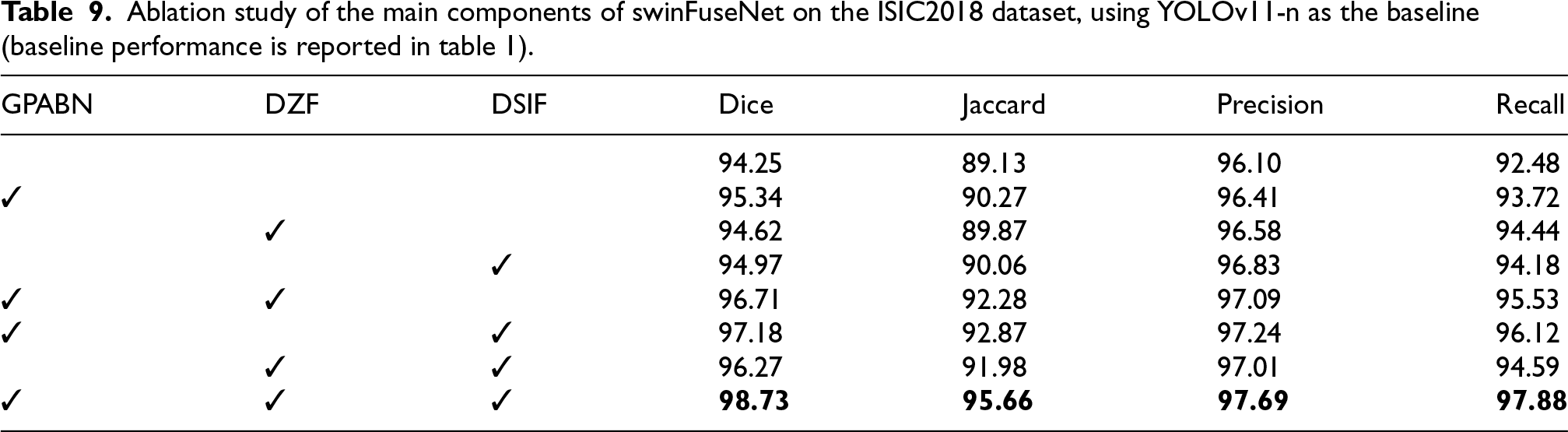

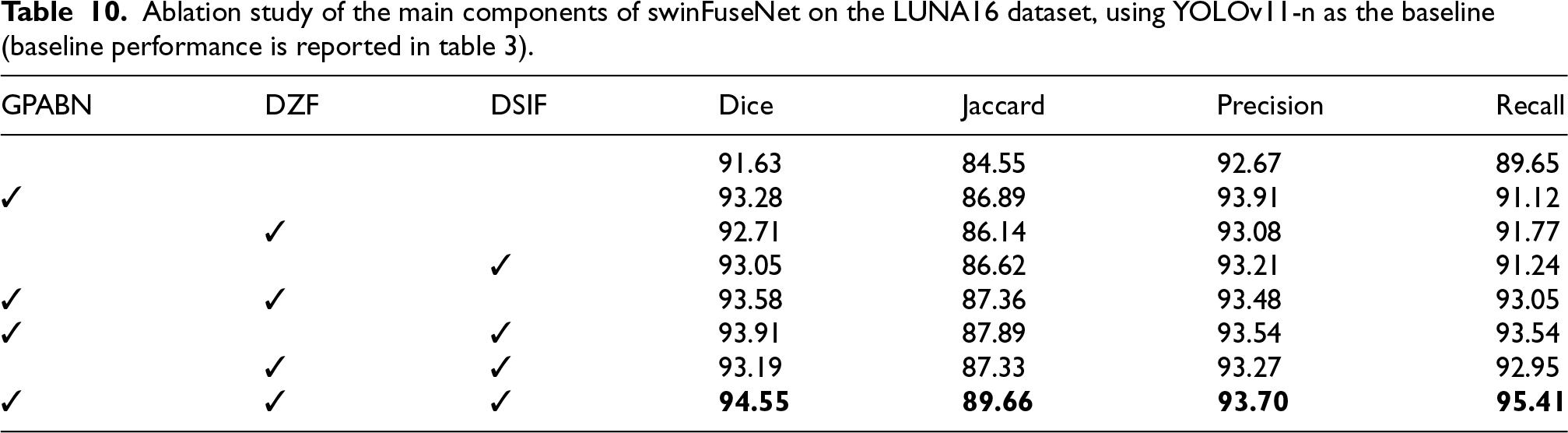

We performed ablation studies on the ISIC2018 and LUNA16 datasets to evaluate the contribution of each module in SwinFuseNet. The results are summarized in Tables 9 and 10.

Table 9. Ablation study of the main components of SwinFuseNet on the ISIC2018 dataset, using YOLOv11-n as the baseline (baseline performance is reported in Table 1).


Table 10. Ablation study of the main components of SwinFuseNet on the LUNA16 dataset, using YOLOv11-n as the baseline (baseline performance is reported in Table 5).

Averaged over ISIC2018 and LUNA16, adding the Global Pyramid Attention Backbone Network (GPABN) alone improved Dice from 92.94% to 94.31% (+1.37%), Jaccard from 86.84% to 88.58% (+1.74%), Precision from 94.39% to 95.16% (+0.77%), and Recall from 91.07% to 92.42% (+1.35%). These results confirm that GPABN effectively enhances hierarchical context modeling, capturing both global semantics and fine-grained local structures to boost segmentation performance.

Impact of the fusion modules (DZF and DSIF)

Averaged across the two datasets, adding DZF alone improved Dice from 92.94% to 93.67% (+0.73%), Jaccard from 86.84% to 88.01% (+1.17%), Precision from 94.39% to 94.83% (+0.44%), and Recall from 91.07% to 93.11% (+2.04%). Similarly, adding DSIF alone improved Dice to 94.01% (+1.07%), Jaccard to 88.34% (+1.50%), Precision to 95.02% (+0.63%), and Recall to 92.71% (+1.64%). These improvements demonstrate that DZF and DSIF enhance feature representation by adaptively integrating multi-scale information and reinforcing local spatial dependencies.

Combined impact of GPABN and fusion modules

When modules were combined, the improvements became more pronounced. GPABN+DZF raised Dice from 92.94% to 95.15% (+2.21%), Jaccard from 86.84% to 89.82% (+2.98%), Precision from 94.39% to 95.29% (+0.90%), and Recall from 91.07% to 94.29% (+3.22%). GPABN+DSIF further improved Dice to 95.55% (+2.61%), Jaccard to 90.38% (+3.54%), Precision to 95.39% (+1.00%), and Recall to 94.83% (+3.76%). Combining DZF and DSIF without GPABN achieved Dice 94.73% (+1.79%), Jaccard 89.66% (+2.82%), Precision 95.14% (+0.75%), and Recall 93.77% (+2.70%). Finally, integrating all three modules yielded the best overall results: Dice 96.64% (+3.70%), Jaccard 92.66% (+5.82%), Precision 95.70% (+1.31%), and Recall 96.65% (+5.58%). These results highlight the complementary strengths of GPABN’s hierarchical attention and the adaptive feature interactions of DZF and DSIF, validating the overall design of SwinFuseNet for robust low-contrast image segmentation.
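The complementarity claim can be checked with simple arithmetic on the averaged figures quoted above: if the modules merely duplicated each other, the gain of the full model would fall well short of the sum of the single-module gains. The sketch below makes that comparison; all numbers are copied from the ablation text, gains are in percentage points, and the additive comparison is only an illustrative heuristic, not a statistical test.

```python
# Averaged scores (in %) over ISIC2018 and LUNA16, as quoted in the text.
BASELINE = {"dice": 92.94, "jaccard": 86.84, "precision": 94.39, "recall": 91.07}
SINGLE = {
    "GPABN": {"dice": 94.31, "jaccard": 88.58, "precision": 95.16, "recall": 92.42},
    "DZF": {"dice": 93.67, "jaccard": 88.01, "precision": 94.83, "recall": 93.11},
    "DSIF": {"dice": 94.01, "jaccard": 88.34, "precision": 95.02, "recall": 92.71},
}
FULL = {"dice": 96.64, "jaccard": 92.66, "precision": 95.70, "recall": 96.65}


def gain(after, before):
    """Absolute gain in percentage points, rounded to two decimals."""
    return round(after - before, 2)


def compare_gains():
    """Map each metric to (sum of single-module gains, full-model gain)."""
    rows = {}
    for metric, base in BASELINE.items():
        summed = round(sum(gain(SINGLE[m][metric], base) for m in SINGLE), 2)
        rows[metric] = (summed, gain(FULL[metric], base))
    return rows


rows = compare_gains()
for metric, (summed, full) in rows.items():
    print(f"{metric}: single-module gains sum to {summed:.2f}, full model gains {full:.2f}")
```

For Dice (3.17 vs 3.70), Jaccard (4.41 vs 5.82), and Recall (5.03 vs 5.58) the full model gains more than the three modules do individually, whereas for Precision the combined gain (1.31) is below the sum of the individual gains (1.84).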

In summary, the effective integration of GPABN and fusion modules greatly enhances low-contrast image segmentation, validating the design choices for multi-scale feature fusion and fine-grained feature extraction in SwinFuseNet.

Conclusion

This paper has introduced

The proposed backbone,

Extensive experiments on five benchmark datasets (ISIC 2018, PH2, LUNA16, Kvasir-SEG, and Brisc2025) demonstrate that SwinFuseNet consistently outperforms state-of-the-art baselines, including U-Net variants and YOLOv11, across multiple evaluation metrics. On the ISIC 2018 dataset, it achieves Dice and IoU scores of 98.73% and 95.66%, improvements of 4.48 and 6.53 percentage points over the leading competitors. These results confirm the model's effectiveness and practical applicability in real-world low-contrast segmentation tasks.

SwinFuseNet not only advances the performance frontier for low-contrast image segmentation, but also establishes a versatile architectural blueprint for future research in lightweight, accurate, and interpretable medical image analysis systems.

In future work, we aim to further enhance SwinFuseNet's generalisability by incorporating domain adaptation techniques and evaluating its performance across diverse imaging modalities beyond dermatology and radiology. Additionally, we plan to investigate approaches for uncertainty quantification (for example, Bayesian deep learning or Monte Carlo dropout) and explainability (for example, attention heatmaps or Grad-CAM) to improve the model's clinical interpretability and trustworthiness. We will also examine the challenges of real-time deployment, focusing on the trade-offs between segmentation accuracy, inference speed, and hardware constraints, particularly on edge devices in resource-constrained settings. By improving segmentation accuracy, SwinFuseNet has the potential to enhance diagnostic precision and support personalized treatment planning in clinical settings.

Footnotes

Acknowledgments

This work was partially supported by the National Natural Science Foundation of China (62376127, 61876089, 61876185, 61902281, 61403206), the Natural Science Foundation of Jiangsu Province (BK20141005), the Natural Science Foundation of the Jiangsu Higher Education Institutions of China (14KJB520025), and the Jiangsu Distinguished Professor Programme.

Funding

The author(s) received no financial support for the research, authorship and/or publication of this article.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.