Abstract

Some scholars argue that the public is generally myopic in their attitudes about disaster preparedness spending, because they prefer to spend money on disaster response rather than preparedness, despite the greater cost effectiveness of the latter. Given voters’ general lack of policy information, it is possible that limited support for preparedness comes from lack of information about its efficacy. In this paper, we build on these studies by examining how people respond to new information about the effectiveness of policy initiatives in the context of public health and the COVID-19 pandemic. Through two online survey experiments with over 3400 respondents, we demonstrate that information can lead people to update attitudes about preparedness, illustrating the potential for information campaigns to increase support for preparedness policies. Our results suggest that information about the efficacy of preparedness can increase support for these policies, and the information effect exists even for individuals whose prior beliefs were that public health programs were ineffective. These results suggest that information can make people more supportive of preparedness spending, which could provide electoral incentives for its provision. We conclude by providing some directions for future research to enhance our understanding of public opinion and preparedness spending.

Introduction

Previous research suggests that people support elected officials who provide relief spending in the wake of crisis (Bechtel and Hainmueller 2011). Although preparedness spending is significantly more cost effective than relief spending, existing scholarship suggests there is a widespread absence of electoral incentives for these initiatives (Gailmard and Patty 2019; Healy and Malhotra 2009; Stokes 2016). As a result, it is not surprising that federal spending on preparedness lags behind optimal levels, while relief spending has increased over time (Healy and Malhotra 2009). Current literature primarily addresses these patterns related to the onset of disasters and climate change, but as pandemics increasingly threaten communities around the world, it is vital to also understand the conditions under which voters might support preparedness spending to mitigate the effects of crises in public health.

While some scholars argue the public is generally myopic in their attitudes about preparedness spending (Achen and Bartels 2016; Healy and Malhotra 2009), it is possible that limited support for preparedness comes from lack of information about its efficacy (Andrews, Delton, and Kline 2021; Gailmard and Patty 2019; Sainz-Santamaria and Anderson 2013). This raises the question—if people have better information about preparedness, do they become more supportive of these measures?

There is widespread evidence that voters lack basic policy information, and that people are able and willing to revise their beliefs when exposed to new, persuasive information (Carrieri, Paola, and Gioia 2021; Diamond, Bernauer, and Mayer 2020; Hill 2017; Lupia and McCubbins 1998). Across dozens of policy issues, experimental evidence suggests that respondents change their beliefs when exposed to persuasive messages (Coppock 2023; Tappin, Berinsky, and Rand 2023). The effect of information on attitudes extends to people’s attitudes about emergency preparedness (Bechtel and Mannino 2021), and other work suggests that these attitudes can translate into voting intentions if local news media draw lessons from distant events for their audience (Jamieson and Van Belle 2018; 2022).

In this paper, we build on these studies by examining how people respond to new information about the effectiveness of policy initiatives in the context of public health preparedness. Through two online survey experiments, we demonstrate that information can lead people to update attitudes about preparedness, illustrating the potential for information campaigns to increase support for preparedness policies.

This paper is structured in four further sections. First, we review prior literature about public opinion and electoral incentives for preparedness, demonstrating the areas of debate surrounding the electoral implications of preparedness and relief spending while highlighting the gap in our knowledge about the conditions under which individuals might support preparedness policy. Second, we describe the experimental design we use to test how information about policy effectiveness leads to increased support for preparedness using a national probability sample of 1595 participants recruited from Qualtrics and another sample of 1819 participants recruited via Amazon Mechanical Turk.

Next, we present our results which demonstrate that informing participants that better preparedness could have saved lives during the COVID-19 pandemic generates support for increased public health spending. We also examine how the effect of information varies based on prior beliefs about the effectiveness of preparedness spending. Our results suggest that information about the efficacy of preparedness can increase support for these policies, and the information effect exists for individuals who received the COVID-19 treatment even if their prior beliefs were that such programs are ineffective.

Finally, we conclude with a brief discussion of the implications of our results for the understanding of public support for preparedness spending, and we provide some directions for future research to enhance our understanding of public opinion and preparedness spending.

Literature Review

Prior scholarship clusters around two empirical findings related to voter behavior and crises such as disasters. First, previous research suggests that voters reward politicians for their immediate response (Gasper and Reeves 2011; Reeves 2011) and for relief spending after the onset of crises such as disasters (Bechtel and Hainmueller 2011; Fair et al. 2017; Velez and Martin 2013). For example, President Obama’s response to Hurricane Sandy appeared to have boosted his approval ratings ahead of the 2012 Presidential election (Velez and Martin 2013). This type of behavior by voters creates incentives for politicians to spend resources on disaster response even though spending on preparedness might save up to $14 for every dollar spent on preparedness (Healy and Malhotra 2009).

The electoral effects of crisis relief and response seem to be context dependent (Abney and Hill 1966; Boin et al. 2016), and poor government responses could lead to sustained electoral losses (Eriksson 2016; Montjoy and Chervenak 2018; Olson and Gawronski 2010). The public may also respond to disaster damage by becoming less supportive of democratic institutions in the wake of a poor response (Carlin, Love, and Zechmeister 2014). However, others have argued that it might prompt increased participation in elections and consolidate support for the incumbent government if the response is viewed favorably (Fair et al. 2017). Essentially, the findings in the existing literature suggest that the public rewards relief assistance from elected officials as long as it is provided in a timely and sympathetic manner.

One reason that preparation spending may not be prioritized is because it is difficult to observe these activities, and voters may not be sufficiently informed to reward elected officials for this spending, even if voters support these policies. This absence of observability could even lead to perverse outcomes if individuals perceive preparedness and mitigation spending as evidence of corruption (Gailmard and Patty 2019).

A second finding in previous scholarship is that voters fail to respond to the relative benefits of relief and preparedness spending, preferring concentrated benefits from relief spending after a disaster to more cost-effective policies to mitigate future crises (Achen and Bartels 2016; Andrews, Delton, and Kline 2021; Gailmard and Patty 2019; Healy and Malhotra 2009; Heersink, Peterson, and Jenkins 2017; Stokes 2016). However, we know little about how voters respond when presented with information about cost effectiveness, especially as voters may be more supportive of preparedness spending when they learn about the benefits of these policies (Bechtel and Mannino 2021).

In short, while the standard explanation is that voters punish incumbents for adverse events as a function of their myopia (Achen and Bartels 2016; Healy and Malhotra 2009), there remains a gap in our knowledge about how individuals perceive the relative merits of preparedness and relief spending when they are more informed about the costs and benefits of such spending.

The existing findings about voter behavior could also be the result of voters lacking information about preparedness. This prompts our research question: Do people change their preferences about preparedness when they learn about its potential benefits?

Research Design

Prior research demonstrates the paucity of citizens’ political knowledge, which can make it difficult to form policy preferences that are consistent with their interests (Delli Carpini and Keeter 1996; Galston 2001). Our theoretical approach to investigating if information affects beliefs about disaster preparation and response builds on two related claims about people’s knowledge. First, most people have little knowledge of the actions of governments vis-à-vis disaster relief and/or preparedness. Second, people also know very little about the cost effectiveness of disaster preparation versus relief.

Based on these assumptions about individuals' knowledge of policy, we expect that providing them with information has the potential to change beliefs about government spending on pandemic preparedness. The reason is relatively straightforward. If people do not know much about preparedness/relief and its effectiveness, then even a small amount of information may change people’s preferences.

To test if information about preparedness affects beliefs and opinions, we designed a survey experiment and recruited a general population sample using Qualtrics between June 29 and July 14, 2021, and a convenience sample using Amazon Mechanical Turk from April 4–6, 2022. We recruited two different samples at two different times to help ensure that our findings were not unique to a particular sample or time period.

Our total sample consists of 3414 respondents, comprising 1595 from Qualtrics and 1819 from MTurk. In the analyses we report, we pool the two samples, because separate analyses do not differ substantively from the combined analysis. 1

In each survey, respondents were randomly assigned to one of three different treatments or the control group, leading to about 900 respondents per group. We pre-registered our experimental design through the Open Science Framework. 2
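The assignment step can be illustrated with a minimal sketch. It assumes simple randomization with equal probability for each arm. The survey platforms' actual randomization procedure is not described here, and the function name, condition labels, and seed are hypothetical.

```python
import random
from collections import Counter

# Labels follow the three treatments named in the text plus the control group.
CONDITIONS = ["control", "covid", "opioid", "cdc"]

def assign_conditions(n_respondents, seed=0):
    """Independently assign each respondent to one of the four arms with equal probability."""
    rng = random.Random(seed)  # fixed seed only so the illustration is reproducible
    return [rng.choice(CONDITIONS) for _ in range(n_respondents)]

# 3414 is the pooled sample size reported in the text.
assignments = assign_conditions(3414)
group_sizes = Counter(assignments)
print(group_sizes)
```

Under this kind of simple randomization, each arm ends up with roughly a quarter of the pooled sample, with some chance variation in the exact group sizes.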

After consenting to complete the survey, respondents answered a variety of demographic questions before we asked them about their opinions regarding public health spending and disaster preparation. In particular, we examined if respondents believe that: public health is a government responsibility, public health spending is ineffective, public health spending could be better used for other purposes, and/or public health spending is unlikely to benefit the respondents. These pre-treatment questions allow us to examine how attitude change depends on prior beliefs. If respondents hold these beliefs (Wehde and Nowlin 2020), then it is straightforward to understand why they would not support public health spending. In particular, if respondents do not believe that disaster/public health preparation spending is effective, then it is not particularly surprising that they would not support preparedness policies.

We also asked respondents to report their preferred allocation between disaster relief and preparedness, and provided some background about each category, as both a pre-treatment and a post-treatment question (see below for more information about this question), which allows us to examine how attitude change depends on prior beliefs (Clifford, Sheagley, and Piston 2021). After these introductory questions, all respondents were randomly assigned to either the control condition or one of three treatment conditions.

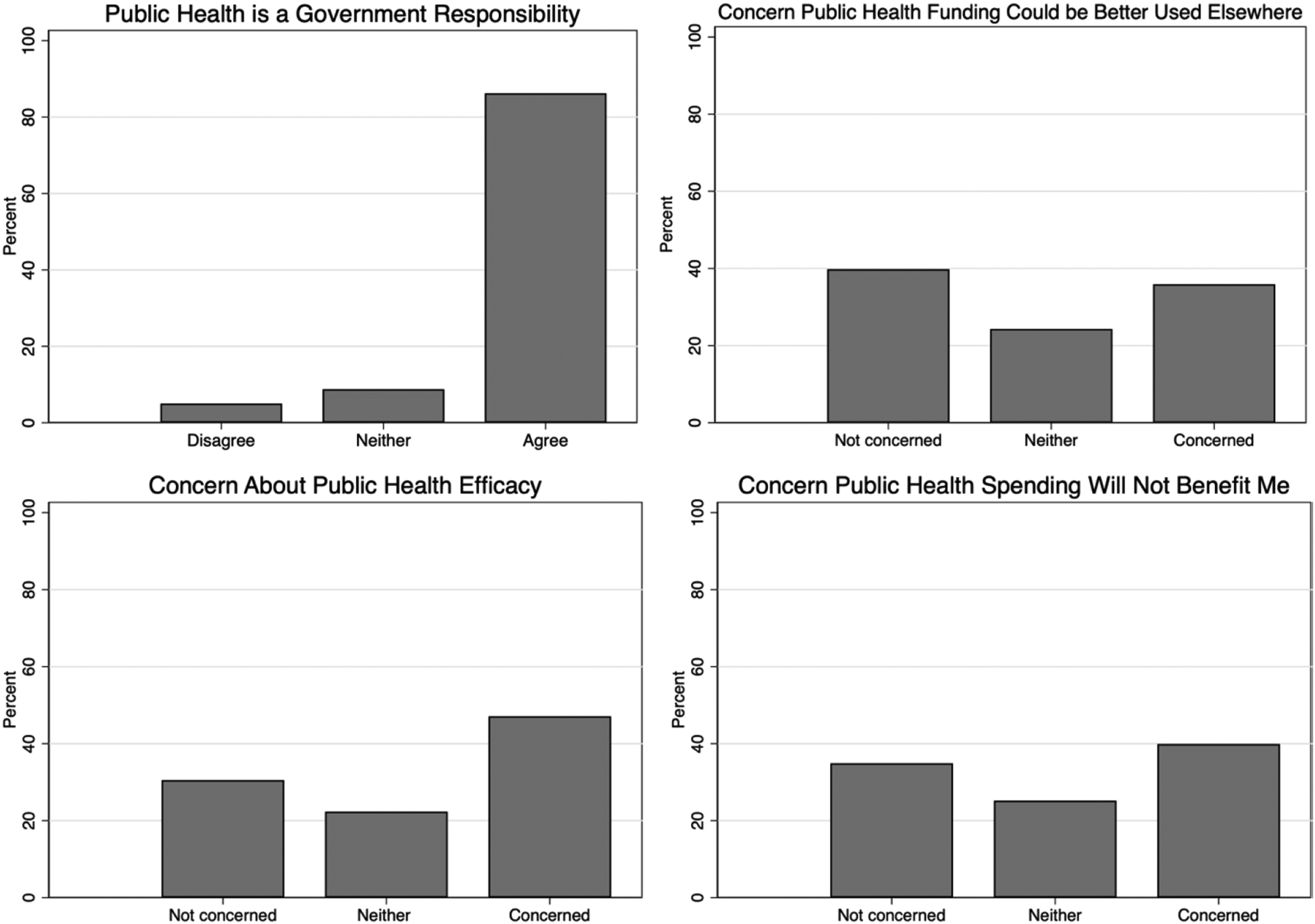

Descriptive statistics demonstrate the importance of understanding people’s prior beliefs as a possible cause of their attitudes about preparedness spending. Figure 1 presents histograms that visually demonstrate the distribution of responses to questions relating to responsibility for preparedness spending and the effectiveness of preparedness spending, with responses ranging from 1 (strongly disagree and disagree) to 3 (strongly agree and agree).


For ease of interpretation, we combined disagree and agree responses to create a trichotomous variable. In short, we find there is a large amount of variation in attitudes about public health spending.

Figure 1. Histograms of beliefs about public health preparedness spending.

The histograms demonstrate the variation in beliefs about public health spending. Across the four different questions the answers suggest:
• Most participants agreed that it is the government’s responsibility to reduce the impact of public health threats.
• Many respondents were concerned that public health funding could be ineffective.
• Responses varied considerably about whether public health funding could be better used elsewhere.
• People’s beliefs about whether public health funding might not benefit them were evenly distributed across the three categories.

These descriptive statistics demonstrate that many people have beliefs about public health funding that might relate to their preferences for public health preparation compared to response. Understanding people’s prior beliefs about public health spending is essential to understanding both why people might not support preparedness spending and the types of information that might change people’s opinions. If people do not believe that disaster preparedness is effective, then it is hardly surprising that they would not support such spending. Among those who do not support disaster preparation, an important distinction is between those who are uninformed about disaster (public health, in our case) spending and those who are informed but unsupportive. The difference between these two reasons for opposition to spending affects what we infer about the reason for people’s resistance to disaster preparedness and the type of information that might change their minds to support preparation or politicians who advocate for preparedness.

Treatments

We designed our treatment vignettes using information that comes from knowledgeable, trustworthy sources so that the conditions for persuasion are met, and the treatments could be expected to affect respondents’ beliefs (Lupia and McCubbins 1998). We describe the three treatments and our rationale for each one below.


The limited research about how information affects beliefs about disaster preparedness led us to design an experimental treatment that would give us a good opportunity to identify if information could cause respondents to change their beliefs about pandemic preparation. Our focus in this experiment was not to understand the scope conditions or moderating factors that might affect whether information influences beliefs. As is normal in an experiment, we designed the COVID-19 treatment to have a causal effect, but it was by no means guaranteed to be effective. Even a treatment about saving 200,000 lives could fail to affect beliefs. Respondents might believe that COVID is a special case and not think that pandemic preparedness, in general, is effective or worthwhile. In other words, while our treatment claims that past spending would have been effective, it does not inform respondents that future spending would be effective: respondents have to make that inference themselves. As such, our treatment is similar to a statement that a politician could make in advocating for greater pandemic spending in general. For the statement to affect beliefs, respondents must change their beliefs about pandemic preparation spending, and it is possible that people believe the COVID treatment but not that pandemic spending is effective/efficient in general.

Studying pandemic preparation during a pandemic is clearly an unusual context, but it does provide some useful features for our purposes. First, the COVID example seems like a clear case where the United States was not well-prepared for a pandemic, and it is therefore easy to make the case that better preparation would have saved lives. Second, the COVID pandemic had effects nationwide, and therefore it is unlikely that people oppose preparedness spending because they do not see themselves as potentially affected by another pandemic. For example, people in Arkansas are unlikely to be affected by an earthquake and for that reason they might oppose money being spent to retrofit buildings for earthquake resilience. The experiment represents a balance of a treatment designed to identify a causal effect with sufficient realism such that the results speak to real-world situations.

A report by the National Safety Council suggests that better preparedness would have prevented about 200,000 deaths from the Opioid crisis in the United States since 2015. These estimates are corroborated by other studies.

This treatment was designed to see if support for preparedness extends to different areas other than COVID. The Opioid epidemic differs from COVID in a lot of ways (for example, it seems to be longer lasting, people’s individual choices seem more relevant, and it does not spread directly between people), and these differences are good in our context. If even something as different as the Opioid epidemic leads to increased support for public health spending and/or pandemic preparation, then it suggests that respondents are influenced by information about preparedness across a wide variety of possible conditions.

Outcome Variables

We used a variety of different measures of respondent preferences to gauge the effect of our treatment vignettes. Our goal is to understand if information affects respondents’ preferences for public health spending on preparedness and response. Accordingly, as outcome measures, we look at support for increased public health and preparedness spending, and we ask respondents to identify their preferred allocation of a hypothetical budget between public health response and preparedness to better resemble decision-making with a budget constraint. We use multiple measures to help ensure that any treatment effects we identify are not unique to a particular outcome measure. 5

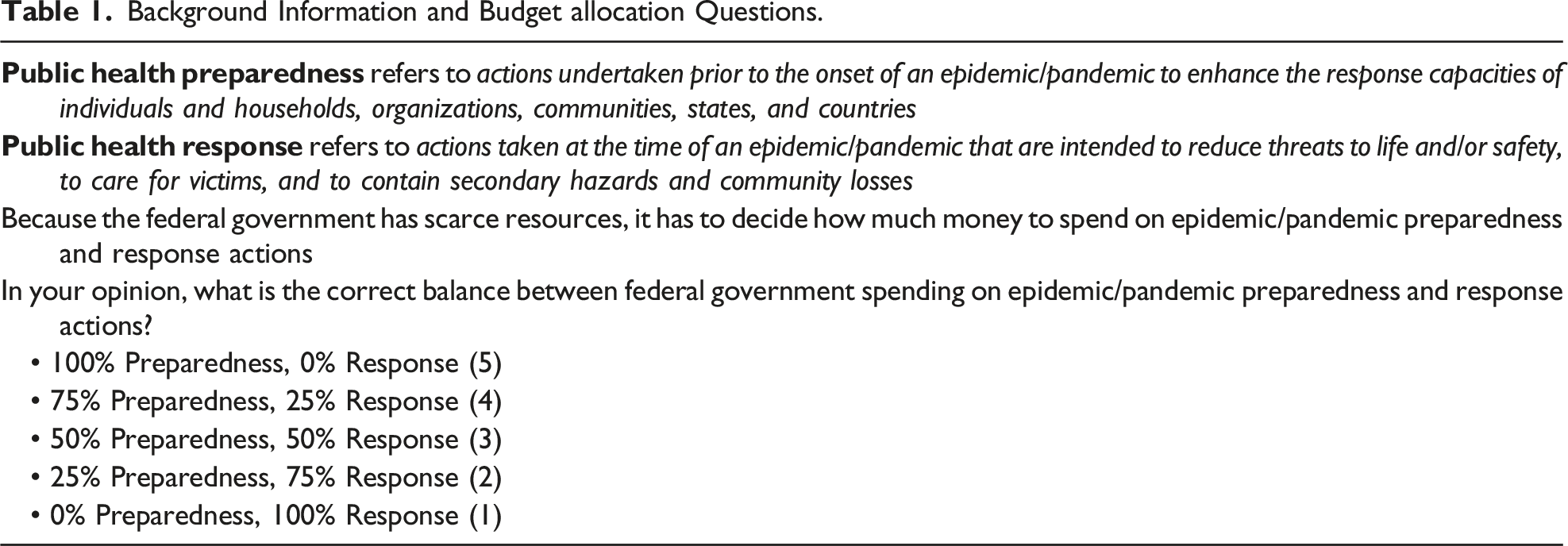

Background Information and Budget allocation Questions.

In addition to the question about the tradeoff between pandemic preparation and response, we also asked the following three outcome questions after respondents were exposed to a randomly assigned treatment. For each question the response category was a reverse-coded 7-point Likert scale that ranged from “Strongly Agree” (7) to “Strongly Disagree” (1).
• The federal government should increase public health spending in 2021.
• The federal government should increase pandemic preparedness spending in 2021.
• The federal government should increase public health spending to reduce Opioid-related deaths in 2021.

In an attempt to understand if the treatments were likely to affect voting, we also asked the following question with more likely (1), less likely (−1) or neither more or less likely (0) as the response categories.
• If your Member of Congress votes to increase public health spending in 2021, how would it affect your vote in the 2022 Congressional elections?

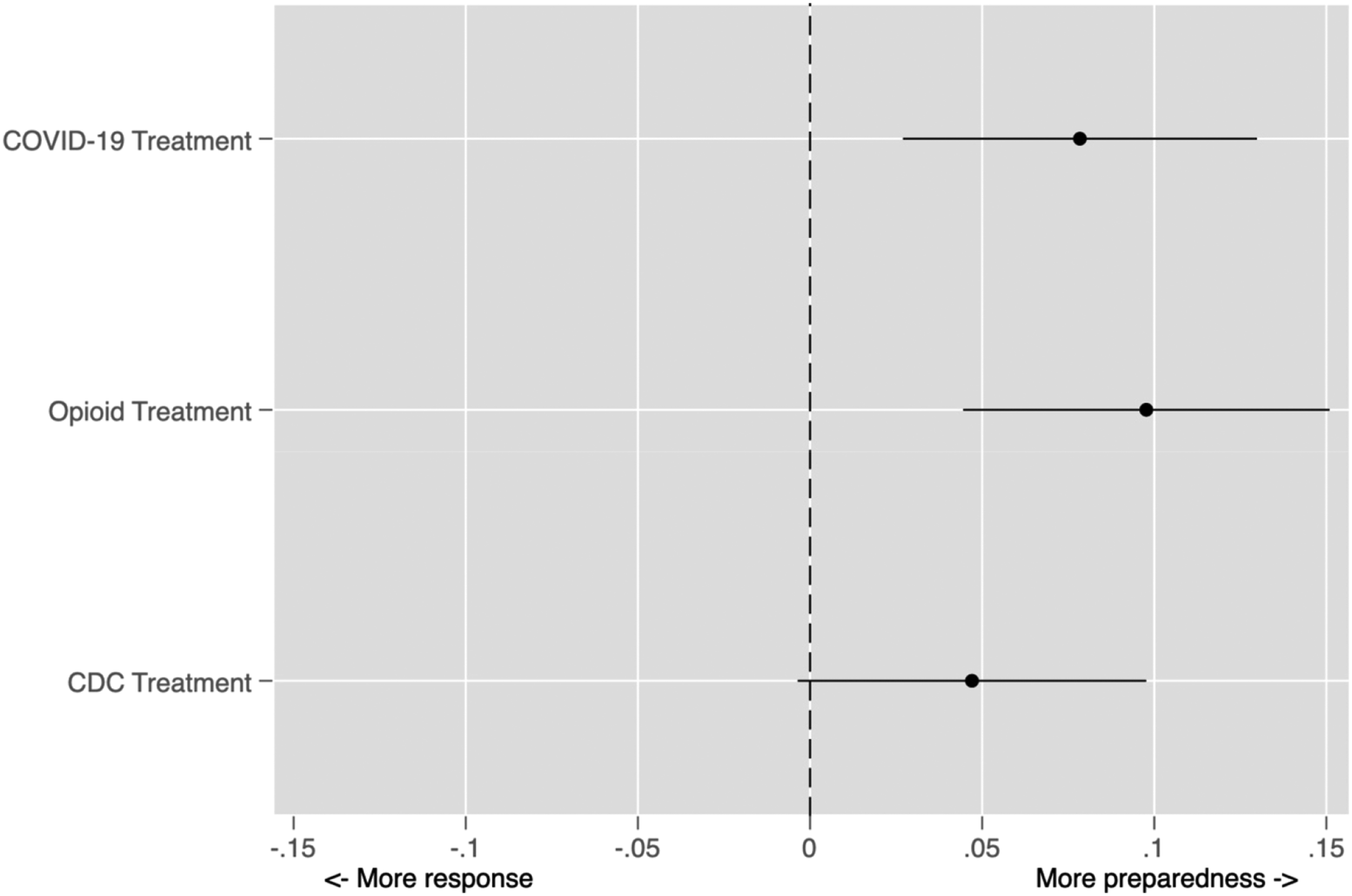

Summary of Our Hypotheses.

Results

We turn now to analyzing the effect of our various treatments on how respondents answer the different outcome questions. In short, we show that our treatments affect beliefs, although we also find results that demonstrate nuance in how people think about preparedness policies, with implications for understanding the puzzle underlying relative underinvestment in preparedness policies compared to response.

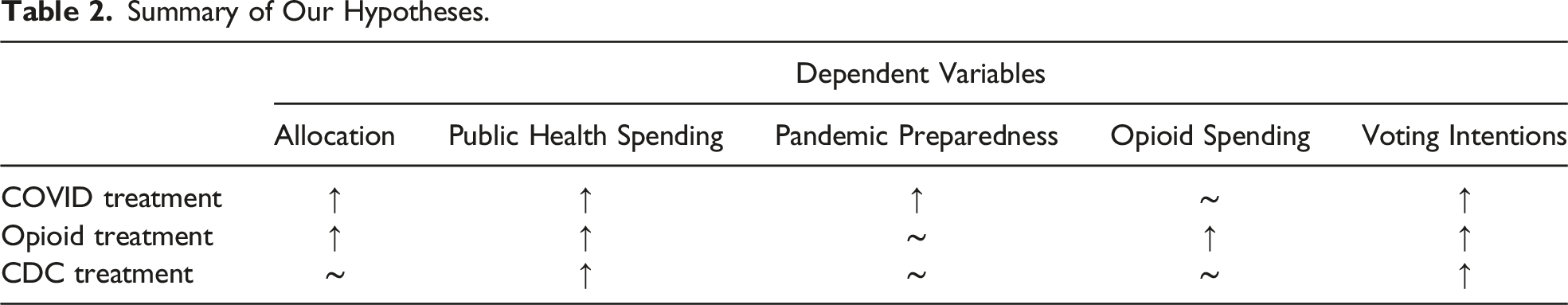

The Allocation of Preparedness and Response Spending

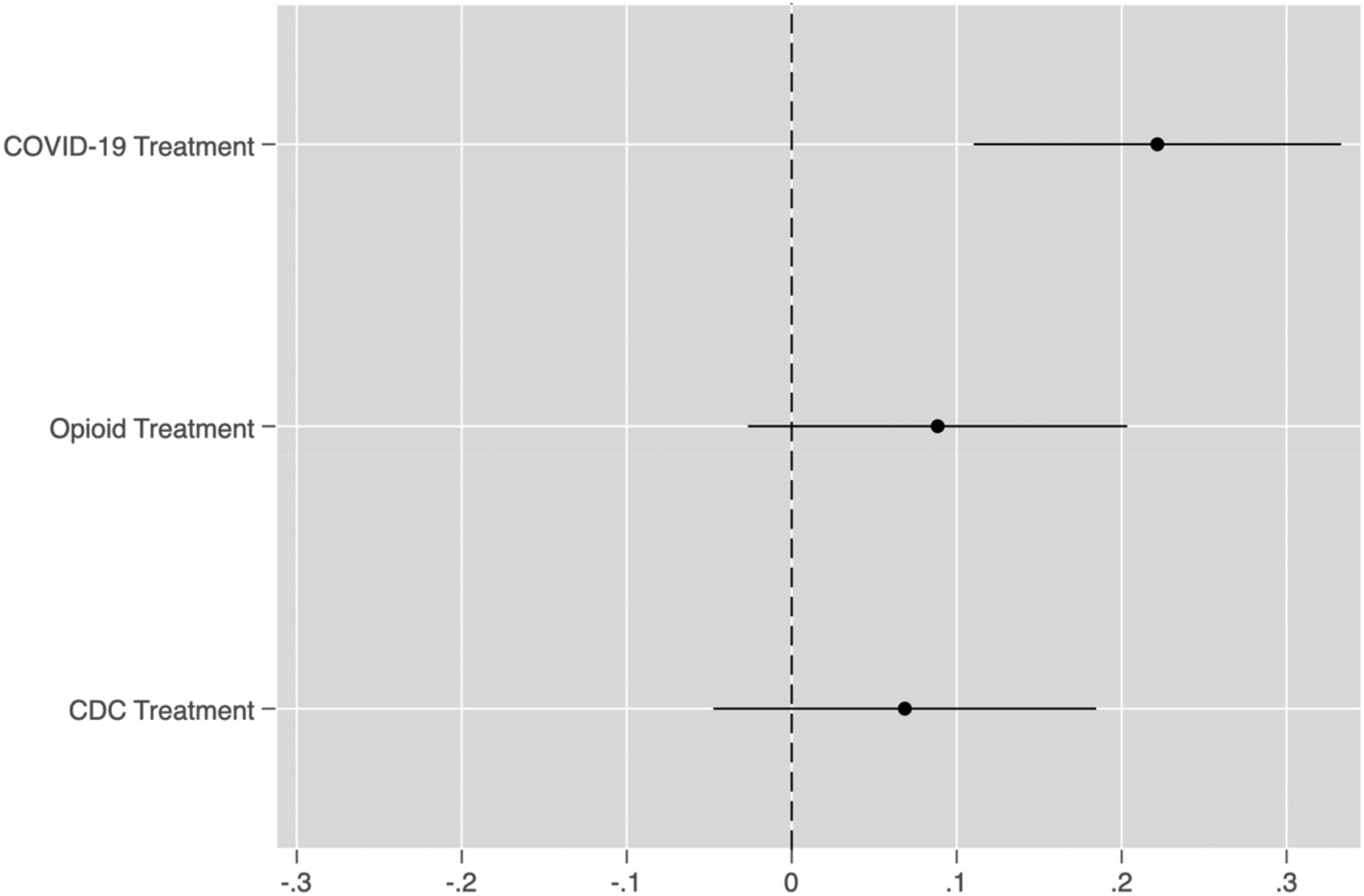

We first report the results related to the effect of our treatments on preferences for allocation between spending on preparedness or response. In Figure 2, we display the average treatment effect for each of our three treatments using coefficient plots with 95% confidence intervals. To estimate the treatment effects, we used a linear probability model and included controls for whether the respondent was recruited via MTurk or Qualtrics, measures of the personal impact of the COVID-19 pandemic and the Opioid epidemic, prior attitudes about preparedness spending, political trust, party ID, political ideology, political moderation, political interest, income, sex, age, race and ethnicity.
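To make the logic of an average treatment effect with a 95% confidence interval concrete, the following is a simplified sketch rather than the model described above: it drops all covariates and computes an unadjusted difference in means, which under random assignment targets the same quantity. The function name, the 0 to 100 outcome scale, and the data are hypothetical and synthetic.

```python
import math
import random
import statistics

def difference_in_means(treated, control, z=1.96):
    """Return (estimate, lower, upper) for mean(treated) - mean(control).

    Uses an unpooled standard error and a normal approximation,
    so z=1.96 gives an approximate 95% confidence interval.
    """
    estimate = statistics.mean(treated) - statistics.mean(control)
    se = math.sqrt(
        statistics.variance(treated) / len(treated)
        + statistics.variance(control) / len(control)
    )
    return estimate, estimate - z * se, estimate + z * se

# Synthetic outcomes for illustration only (not the paper's data):
# a hypothetical share of a budget allocated to preparedness.
rng = random.Random(42)
control = [rng.gauss(50, 20) for _ in range(850)]
treated = [rng.gauss(54, 20) for _ in range(850)]

est, lower, upper = difference_in_means(treated, control)
print(f"estimate={est:.2f}, 95% CI=({lower:.2f}, {upper:.2f})")
```

Adding pre-treatment covariates, as the analysis above does, generally leaves the target quantity unchanged but can tighten the interval when the covariates predict the outcome.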


Figure 2. Average treatment effects on the allocation of preparedness and response spending.

Consistent with our expectations, information about the benefits of public health spending (i.e., COVID and Opioid treatments) leads to an increase in support for pandemic preparedness. Also as expected, the CDC treatment does not lead to a statistically significant increase, at conventional levels, in the allocation to pandemic preparation. Given that we fielded our experiment in the midst of a global pandemic, we take this as considerable evidence that respondents are not simply primed to support greater pandemic spending by just any information about public health and spending. Rather, the results suggest that opinion change is specific to the treatments that invoke pandemics and preparation, which is consistent with the idea that respondents are learning from the experimental treatments.

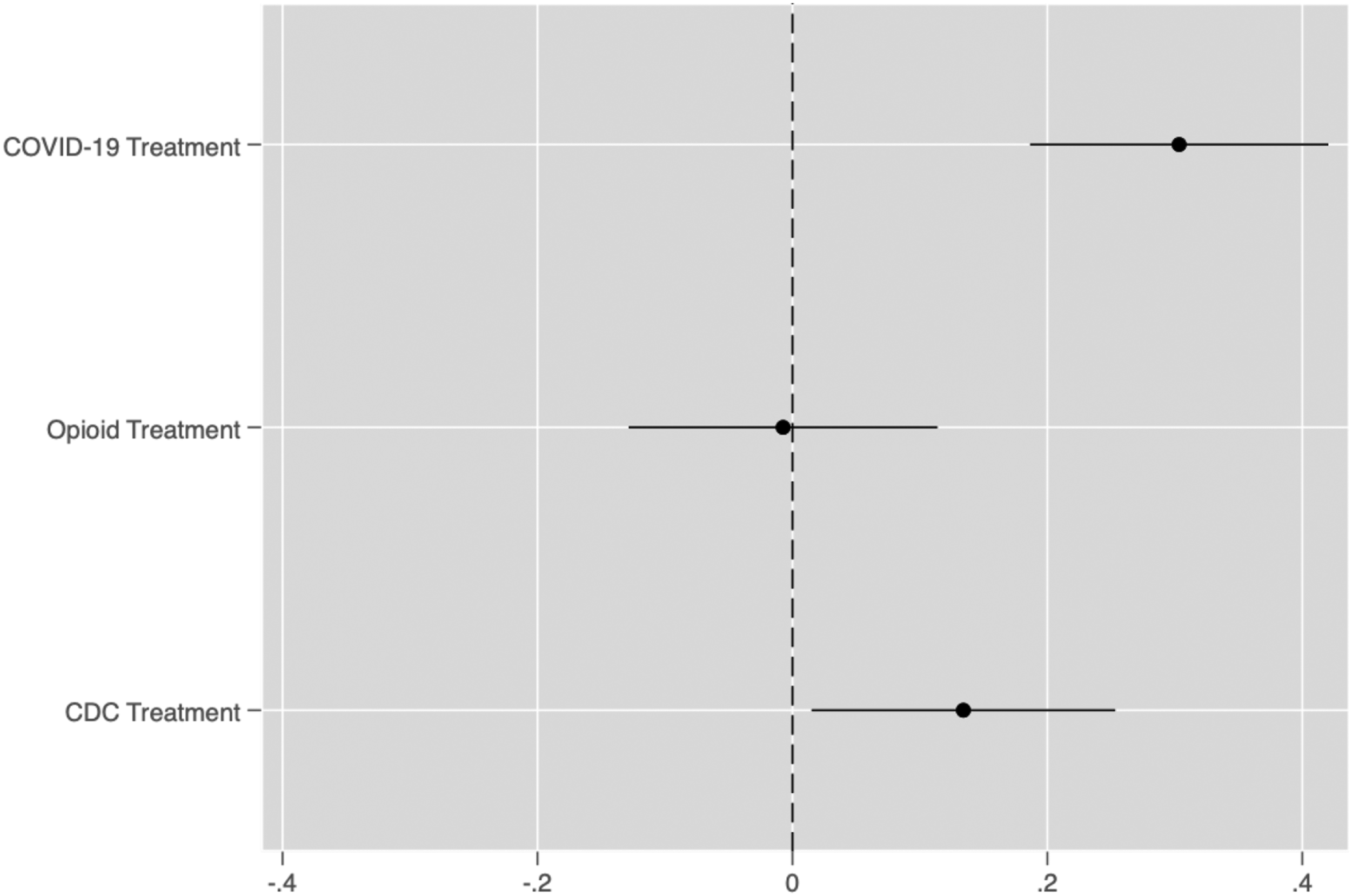

Increased Public Health Spending

We expected that all three of our treatments would increase support for greater public health spending. Figure 3 plots the estimated treatment effects for each of the three treatments. The results demonstrate that only the COVID-19 treatment appears to shift opinion towards increased public health spending. In contrast, neither the Opioid treatment nor the CDC treatment leads to more support for public health spending, which is inconsistent with our expectations.

Figure 3. Average treatment effects on support for increased public health spending.

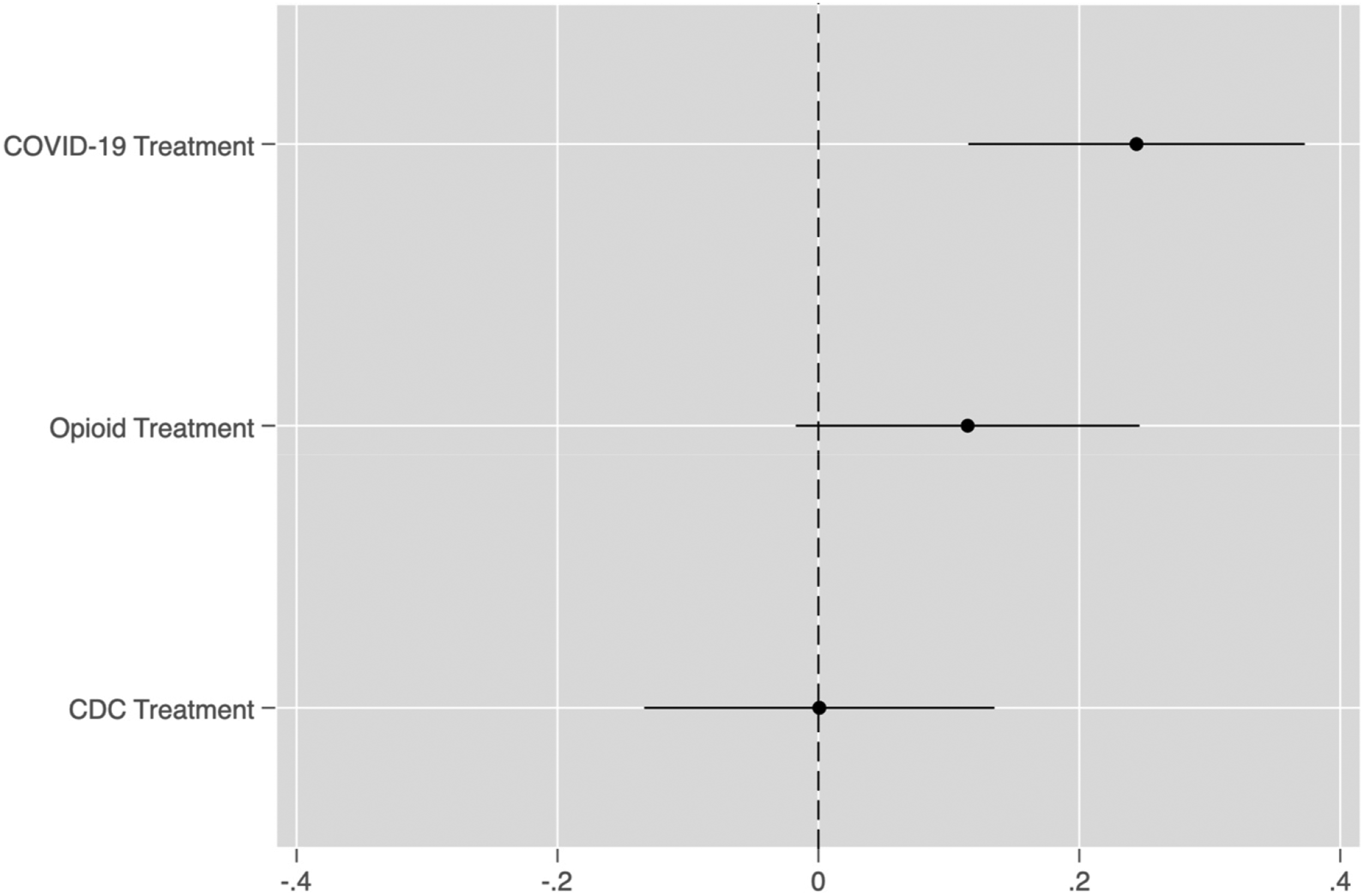

Increased Pandemic Preparedness Spending

We next present results related to the effect of our treatments on preferences for pandemic preparedness spending. We expected that the COVID-19 treatment would affect preferences for pandemic spending, but that neither the Opioid nor the CDC treatment would affect these preferences. In Figure 4, we display the estimated treatment effects. As expected, the COVID treatment increases support for pandemic spending and the Opioid treatment does not. Contrary to our expectations, the CDC treatment does lead to more support for pandemic preparedness spending. It seems plausible that this effect occurs because the ongoing COVID pandemic makes people more sensitive to the information about the decline in CDC spending; however, since both samples were recruited during the pandemic, we cannot identify if the effect would have varied under different circumstances.

Figure 4. Average treatment effects on support for increased pandemic preparedness spending.

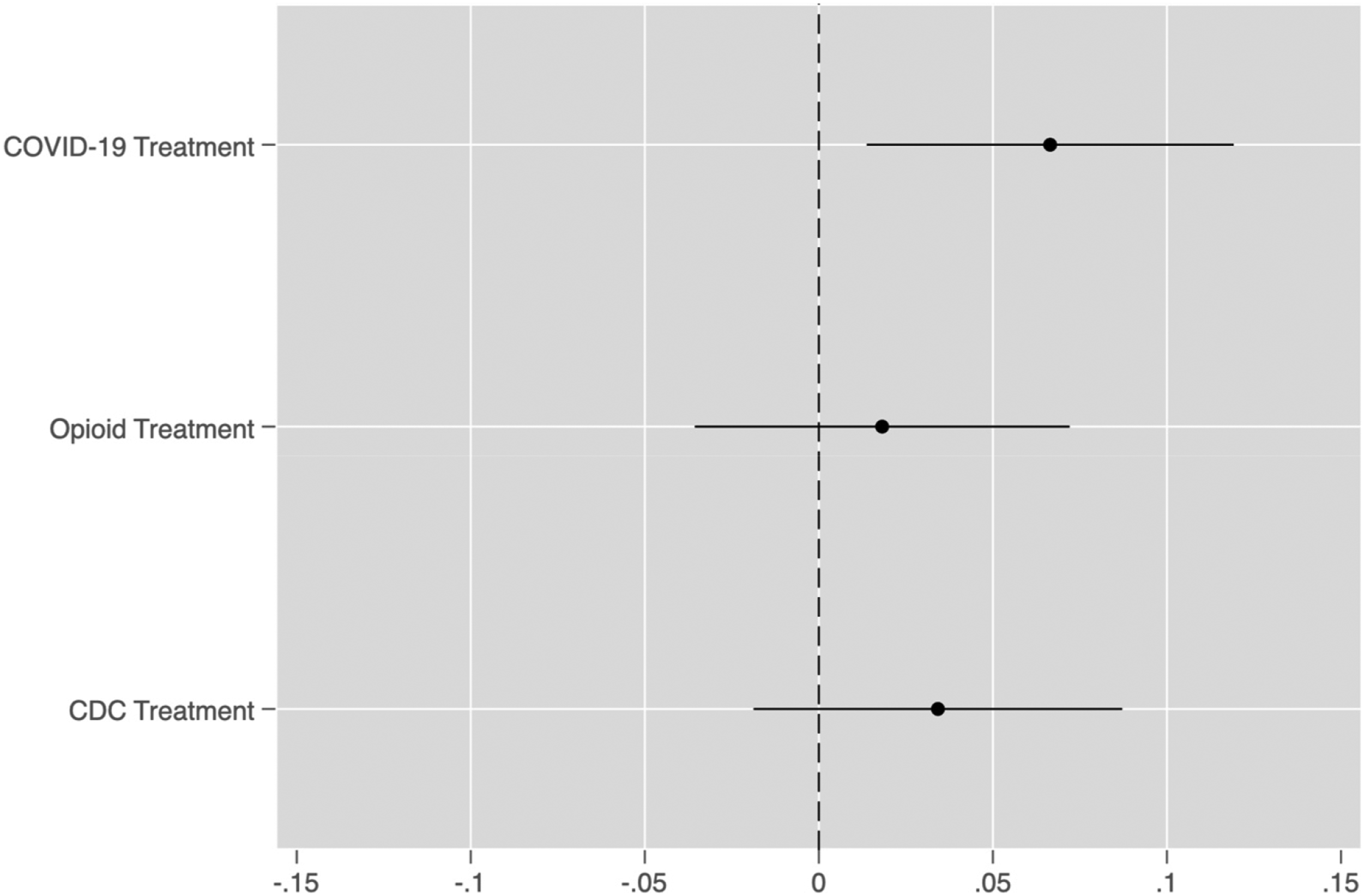

Increased Public Health Spending to Reduce Opioid-Related Deaths

To help us understand if information about the benefits of public health leads to an increase in support for greater spending, we look at the effect of a treatment about the Opioid epidemic. We expected that the Opioid treatment would affect opinions about spending on Opioid-related deaths, but we did not expect the other treatments to affect this outcome. As displayed in Figure 5, we do not find that the Opioid treatment affects responses, and we do find that the COVID treatment affects support for increased spending on efforts to reduce deaths from Opioids. This result is anomalous given our predictions, and we lack a good explanation for why the COVID treatment seems to affect preferences for spending on Opioid abuse prevention.

We suspect that one reason why the Opioid treatment may not change respondents’ beliefs is that Americans largely assign individual responsibility for the Opioid crisis. Previous polling suggests that the majority of people place blame for the crisis on the individuals taking prescription painkillers or doctors prescribing these medications, and not the government (Sylvester, Haeder, and Callaghan 2022). These prior beliefs may help explain why information about the effectiveness of preparedness spending does not increase support for these policies.

Figure 5. Average treatment effects on support for increased federal public health spending to reduce Opioid-related deaths.

Voting Intentions

While it is important to understand the drivers of support for preparedness spending, government responsiveness ultimately depends on electoral consequences for politicians’ support for or opposition to these initiatives. As a result, our final analyses concern support for incumbent Members of Congress based on their support for increased public health spending.

We find mixed evidence in support of our expectations in Figure 6. We find that respondents assigned to the COVID treatment are more likely to vote for an incumbent who proposes increased public health spending. However, we do not find that the other two treatments affect support for incumbents. Again, we cannot be sure whether this effect occurs only because the experiment took place in the midst of the COVID pandemic. Identifying the conditions under which information about pandemic preparation affects respondents’ beliefs requires more research with different treatments that take place under varying conditions.

Figure 6. Average treatment effects on voting intentions.

Moderating Effects of Respondents’ Beliefs

We are also interested in the moderating effects of prior beliefs about public health spending on the effects of our experimental treatments. This is particularly important to understand, because it may help shed some light on how the treatments interact with prior beliefs and provide some insight, therefore, about how these effects occur. Given that our treatments speak directly to the effectiveness of preparedness spending and that they provide information that could change respondents’ preconceptions that these policies are ineffective, we focus especially on how concern about public health spending efficacy might moderate our treatments.

For ease of interpretation and to give us more observations at various levels of concern about public health spending, we transformed the original variable about respondents’ beliefs into a trichotomous scale as follows: Not Concerned (1 and 2), Neither (3), and Concerned (4 and 5), which also matches Figure 1.
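The recoding described above amounts to a simple mapping. The sketch below assumes the original item is stored as integers from 1 to 5; the function name is hypothetical.

```python
def recode_concern(response):
    """Collapse a 1-5 response into the three categories described in the text."""
    if response in (1, 2):
        return "Not Concerned"
    if response == 3:
        return "Neither"
    if response in (4, 5):
        return "Concerned"
    # Anything else (missing values, out-of-range codes) is rejected explicitly.
    raise ValueError(f"expected an integer from 1 to 5, got {response!r}")

print([recode_concern(r) for r in [1, 2, 3, 4, 5]])
```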

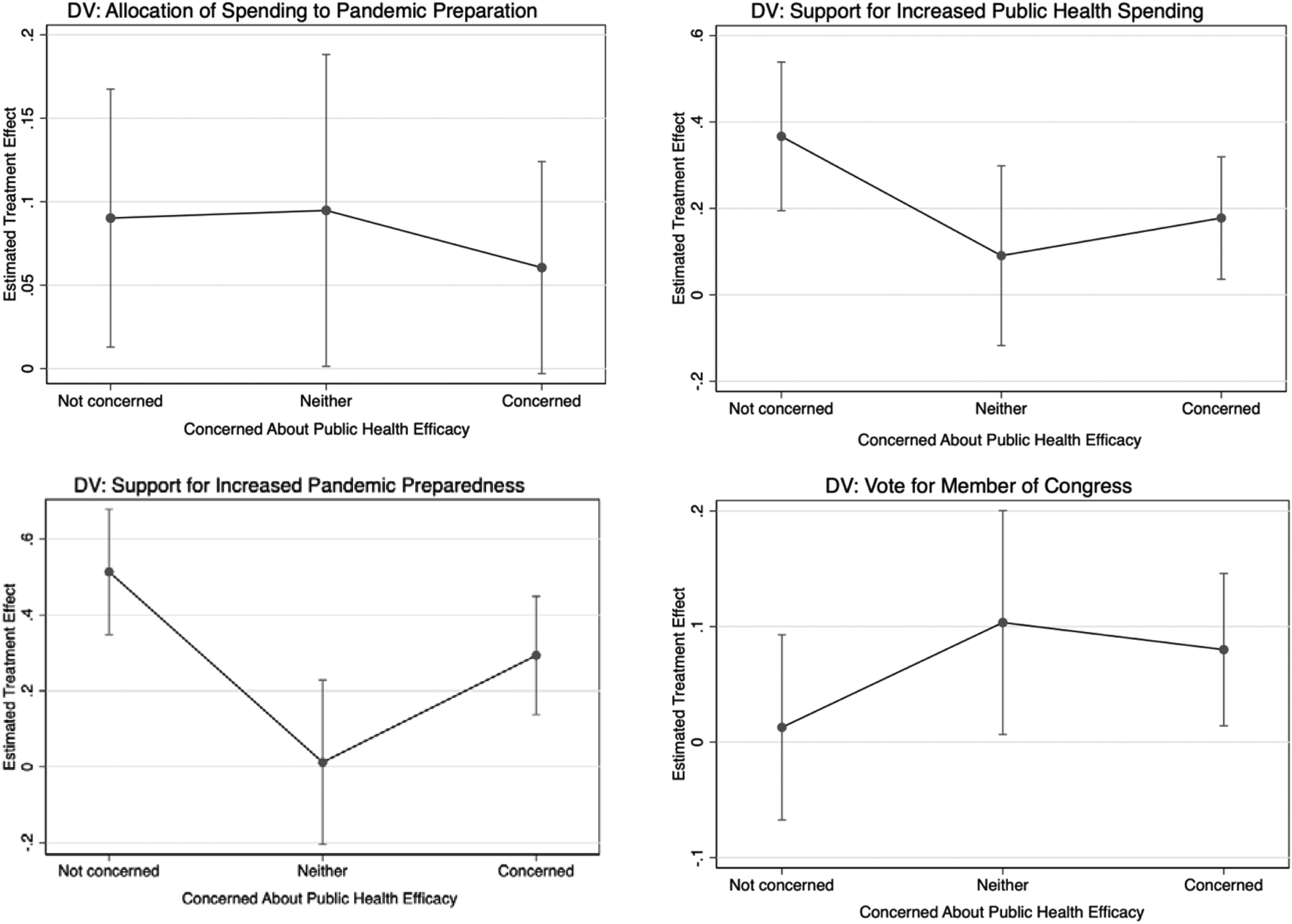

We interact this variable with our treatments to estimate how treatment effects vary with beliefs about public health spending and display the results in Figure 7. The estimated treatment effects compare those in the control group to those in the COVID-19 treatment group who have similar prior beliefs about public health efficacy. Positive values on the Y-axis indicate that the average response among those in the treatment group with those beliefs is greater than the average for the control group.

Figure 7. Relationship between beliefs about public health effectiveness and treatment effects.

Figure 7 reports these results with 90% confidence intervals. We only display the results from the COVID-19 treatment because we are most interested in its effects. The results for the other treatments appear in the appendix. Each estimated treatment effect is based on a considerably smaller number of observations, because each uses only the respondents in a given category of the public health effectiveness question, which widens our confidence intervals considerably.
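A back-of-the-envelope calculation shows why subgroup estimates are noisier. It is only a rough sketch: it assumes an unadjusted difference in means, equal outcome variances across subgroups, and three equally sized belief categories, none of which need hold exactly in our data. The symbols $n_T$, $n_C$, $\sigma_T^2$, and $\sigma_C^2$ (arm sizes and outcome variances) are introduced here for illustration.

```latex
\[
\mathrm{SE}(\hat{\tau}) = \sqrt{\frac{\sigma_T^2}{n_T} + \frac{\sigma_C^2}{n_C}},
\qquad
\mathrm{SE}(\hat{\tau}_{\text{sub}})
  = \sqrt{\frac{\sigma_T^2}{n_T/3} + \frac{\sigma_C^2}{n_C/3}}
  = \sqrt{3}\,\mathrm{SE}(\hat{\tau})
  \approx 1.73\,\mathrm{SE}(\hat{\tau})
\]
```

Under these assumptions, an interval for a single belief category would be roughly 1.7 times as wide as the interval for the full sample at the same confidence level.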

Regardless of prior beliefs, the estimated treatment effects are consistently positive. Respondents assigned to the COVID-19 treatment were more supportive of the different outcomes than respondents assigned to the control condition at almost all levels of prior concern about the effectiveness of public health spending. At the same time, the treatment effects were smaller among those most concerned about the efficacy of public health spending, which suggests that while respondents incorporate the information we provided, their prior beliefs also condition the size of the treatment effect.

Collectively, these results suggest that respondents’ prior beliefs about the effectiveness of public health spending affect how individuals respond to the COVID-19 treatment, but that information can still shift attitudes among even those with prior beliefs that would seem less supportive of public health spending. This is important, because it suggests that the provision of information can cause respondents to update their prior beliefs, and in this case lead to support for public health spending (Hill 2017). These results provide some reason for optimism about the prospects of “correcting” myopia and that people will support disaster preparation after being presented with information about the utility of these policies. From the evidence presented here, pessimistic accounts of voter myopia might be overstated—if voters are given information about the effectiveness of public policy, our results suggest they may support these policies.

Treatment Effect Heterogeneity by Party Identification

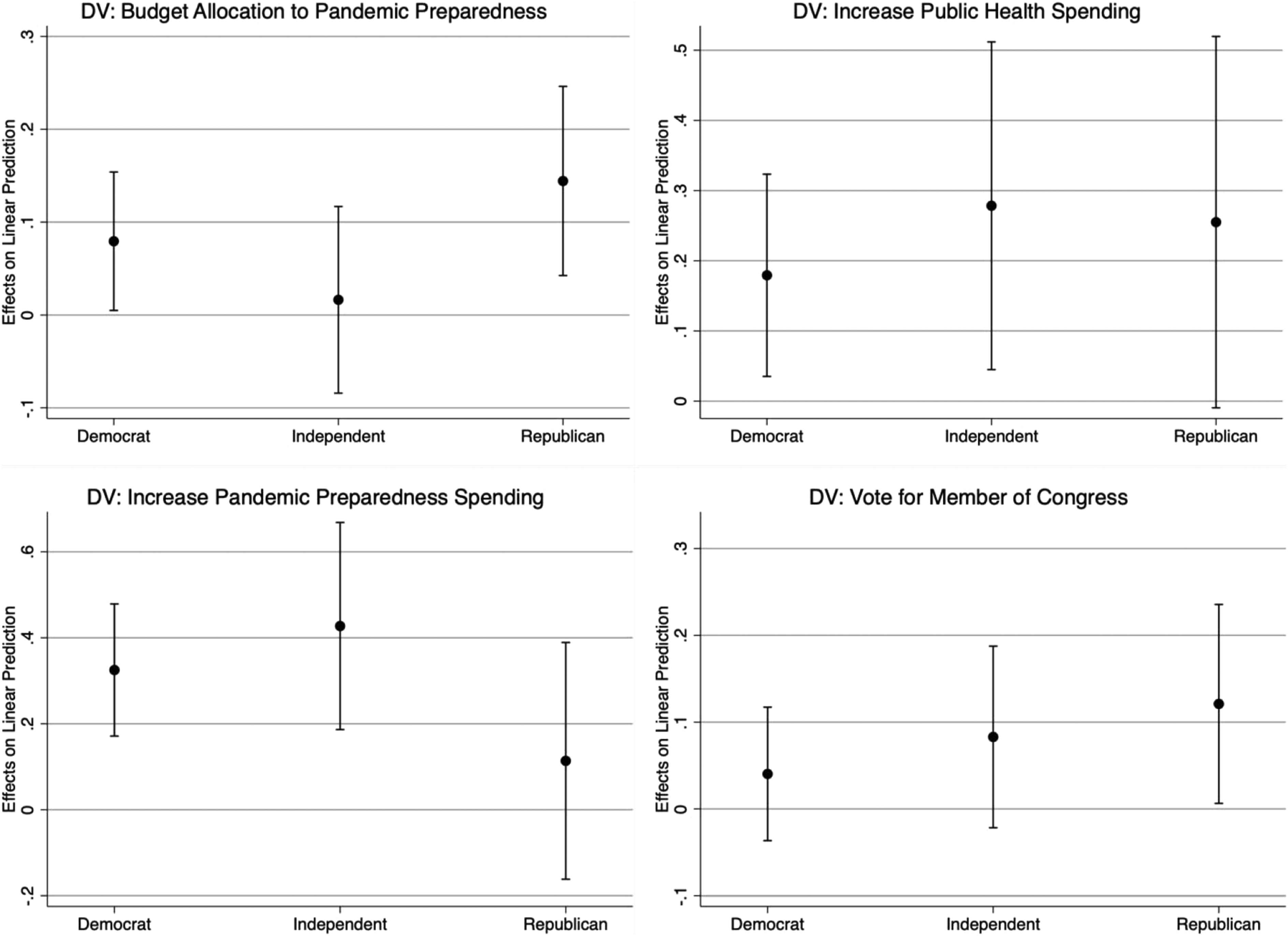

Given the politicization of the COVID-19 pandemic and the differing responses to it by the political parties, we also thought it useful to examine whether the treatment effects are meaningfully different by the political party of the respondent. Theories of motivated reasoning suggest that Democrats and Republicans will interpret the treatment differently (Taber and Lodge 2006; 2016); however, recent research suggests that Democrats and Republicans actually respond very similarly to a wide variety of experimental treatments designed to test for motivated reasoning (Coppock 2023). To investigate which pattern is more consistent with the data from our experiment we interacted the COVID-19 treatment with a dummy variable indicating if the respondent identifies as a Democrat, Independent or Republican and included various demographic variables to account for the fact that party identification is not randomly assigned. In the context of COVID’s politicization we would expect that if motivated reasoning were at play we would observe a positive treatment effect for Democrats and a negative or no treatment effect for Republicans, but we do not have clear expectations for Independents.
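The idea of comparing treatment effects across partisan groups can be sketched as follows. This is a simplified stand-in for the interaction model described above: it omits the demographic controls and simply computes the treated-minus-control difference in mean outcomes within each group. The function name and the tiny dataset are hypothetical.

```python
import statistics
from collections import defaultdict

def subgroup_effects(rows):
    """rows: iterable of (party, treated, outcome) tuples.

    Returns {party: mean(treated outcomes) - mean(control outcomes)}.
    Raises statistics.StatisticsError if a party has an empty arm.
    """
    groups = defaultdict(lambda: {True: [], False: []})
    for party, treated, outcome in rows:
        groups[party][bool(treated)].append(outcome)
    return {
        party: statistics.mean(arms[True]) - statistics.mean(arms[False])
        for party, arms in groups.items()
    }

# Made-up responses on a 7-point scale, for illustration only.
rows = [
    ("Democrat", True, 6), ("Democrat", False, 5),
    ("Independent", True, 5), ("Independent", False, 4),
    ("Republican", True, 4), ("Republican", False, 4),
]
effects = subgroup_effects(rows)
print(effects)
```

Because party identification is not randomly assigned, differences between these subgroup estimates describe heterogeneity but do not show that partisanship itself causes it, which is why the analysis above adds demographic controls.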

In Figure 8, we report the estimated effect of the COVID treatment on each of our dependent variables. For all three types of partisans (Democrats on the left, Independents in the middle, and Republicans on the right), we observe a mix of significant and insignificant treatment effects. Interestingly, no treatment has consistently positive effects across all three groups, and every treatment has a null effect for at least one of the partisan groups. These results do not seem consistent with the general idea of motivated reasoning, because we would have expected Republicans to resist all three treatments and therefore have null or negative effects for each outcome; however, we observe a positive treatment effect for both a greater allocation to pandemic preparedness instead of response and for the probability of voting for a candidate who supports pandemic preparedness.

Figure 8. Relationship between party ID and estimated treatment effects.

8

In the context of political campaigns and elections, these results suggest that respondents of all political parties may be willing to support greater spending on pandemic preparation if they are informed about its benefits.

Learning vs. Priming

A possible interpretation of these results is that they reflect priming rather than learning or changing beliefs. Scholars have previously distinguished between priming and learning, typically in the context of campaigns. In this context, priming is said to occur if the information affects the importance people place on an issue and/or changes the criteria that people use to evaluate policies or politicians. Learning, by contrast, is said to occur if individuals change their policy positions to match those of a political candidate.

Our domain is slightly different in that we are studying opinion about policy issues rather than candidates. One way scholars have tried to empirically discriminate between learning and priming in studying opinions about policy is to compare estimated treatment effects across levels of respondents’ prior knowledge (Lergetporer et al. 2018). The intuition is that if learning is at play, then the effect of the information should be greatest for those with the least prior knowledge.

Unfortunately, we did not ask respondents about their knowledge of pandemic preparation, so we cannot use the same approach to study learning. Our best attempt at addressing the priming versus learning issue is to reconsider the results in Figure 7. The x-axis reflects whether respondents are concerned about the efficacy of public health spending. The clearest sign of learning, we think, is a positive treatment effect for those who are concerned about the efficacy of public health spending, because the COVID treatment provides information that is contrary to this group’s prior beliefs. Of the four estimated treatment effects in Figure 7, three are significant for the concerned respondents, and the fourth is positive but not quite significant. This suggests that learning explains at least part of the estimated treatment effects for this group.

At the same time, those not concerned about public health efficacy also exhibit significant treatment effects in three of the four estimates. These effects could certainly reflect learning, as the information may be novel and lead them to increase their support for pandemic preparation. However, the estimated effects for these respondents could also indicate priming, because the treatment makes pandemic preparation more salient, leading to more support for spending. One difficulty in differentiating between the two explanations is that they are not mutually exclusive, either across or within subjects. A treatment may increase support for public health spending by priming its importance for some respondents and by teaching other respondents new information that changes their minds. It is also possible that, for a given subject, some portion of the opinion change is related to priming and some to learning. If we had used well-known information that primed subjects but did not inform them of anything, it would be easier to eliminate learning as an explanation. In this case, however, it seems likely that the information treatment did not provide well-known information, and therefore both explanations are possible.

Yet, to our minds, there remain a number of other reasons why learning seems more likely to explain the results than priming. First, our experiment took place squarely during the COVID-19 pandemic, and it therefore seems likely that most respondents would already be attuned to its existence, even if they were unaware of the positive effect of better preparation. If priming operates by raising the salience of an issue, then priming seems relatively unlikely in this context because COVID was already highly salient at baseline. The COVID treatment was the only treatment with a consistent effect, and given the existence of the pandemic we would not expect to prime people about COVID. However, the information in the treatment is likely novel to most people, suggesting that the treatment effects likely reflect learning. Second, Tesler (2015, 807) argues that “media and campaign content should tend to prime predispositions and change policy positions.” The design of our treatment and outcome questions seems to tap into policy positions rather than predispositions, making learning more likely than priming. Third, we do not estimate a significant causal effect of the other treatments on the respective dependent variables (see appendix), which we might expect if there were a general priming effect of these treatments.

While it is hard to definitively rule out priming in favor of respondent learning, we believe the case is strongest for learning as an explanation for our empirical results. However, the results certainly raise the need to better understand whether priming or learning is more likely given respondents’ beliefs and characteristics.

Discussion

In this paper, we argue that one reason people prefer disaster response to preparation is that they lack information about the efficacy of preparedness and are therefore unlikely to support spending money on it. Using an online survey experiment conducted on two different samples, we demonstrate that information about the effectiveness of preparedness policies can increase support for those policies, and that this can also translate into voting intentions toward incumbent Members of Congress.

The most consistent treatment effects we estimate relate to the COVID pandemic. This does not seem particularly surprising: the COVID pandemic has affected everyone and caused major transformations across society, so learning that better preparation would have saved lives seems highly salient. The differential effects of the treatments, however, raise important questions about what type of information affects beliefs and how context affects whether information changes beliefs. One possibility is that the widespread nature of the COVID pandemic has made respondents generally more willing to support pandemic preparedness, whereas the relatively narrower effects of opioids do not create sensitivity to public health preparation.

A second contribution of this paper is to examine how individuals’ beliefs about preparedness relate to our estimated treatment effects. We find that concerns about the effectiveness of preparedness policies are important in shaping how people respond to information about public health spending, but the COVID-19 treatment still seems to increase support for public health spending among those we might expect to be opposed to it.

Collectively, the paper suggests reasons that individuals are responsive to information related to preparedness. Our results suggest that popular opposition to preparedness is not immutable and that information campaigns to inform the electorate could lead to greater support for policies that have the potential to reduce harm from public health threats. Further research should build on the results of this study. Our findings suggest that voters might be uninformed, but they are not necessarily as myopic, irrational, or short-sighted as commonly believed.

Supplemental Material

Supplemental Material - Correcting Myopia: Effect of Information Provision on Support for Preparedness Policy

Supplemental Material for Correcting Myopia: Effect of Information Provision on Support for Preparedness Policy by Nicholas Weller in Political Research Quarterly

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

Supplemental Material

Supplemental material for this article is available online.
