Abstract

To some, purposiveness is the core of the enactive approach. Others, however, view the notion of purposes as a pre-scientific crutch – one that will eventually be replaced by mathematical analyses of the kind of dynamical systems that we are. Yet advances in cognitive neuroscience have not vanquished the problem of purpose, only driven it underground. Rather than explicitly confronting this problem, in attempting to uncover the true aims of a target system, contemporary cognitive scientists implicitly rely upon their own aims in informing the construction of experimental procedures, measurements, and models. Such aims are essential for making choices of relevance vs irrelevance or signal vs noise, by means of which essential principles are abstracted from a disorderly biological substrate. Pragmatism is all the rage – why should cognitive science buck the trend? Well, precisely because the targets of cognitive science are the very purposive agents upon which the pragmatist stance depends. The promise of enactive cognitive science is an account of this purposiveness – yet such a project cannot rely solely on dynamical formalisms that are inadequate to the task. Instead, it depends upon the organicist critique of these abstractions and proposal for a thermodynamically-grounded account of the purposive agency that underpins them.

Heidegger:

Der Spiegel:

Heidegger:

– Der Spiegel (1981/1976)

Introduction

The aim of autopoiesis theory, as Humberto Maturana and Francisco Varela declared it in 1980, was to understand living systems via a ‘mechanistic’ approach that involved only what can be found ‘anywhere else in the physical world… blind material interactions governed by aimless physical laws’ (p. 74). In service of such a mission, in Maturana's words, ‘any attempt to characterise living systems with notions of purpose or function was doomed to fail’ (p. xiii).

A lot can change in a few decades. By 2002 – to the presumed horror of those who had once rejoiced at this naturalistic ‘destruction of teleology’ (Beer, 1980) – we find Varela declaring that there is in fact ‘a real teleology implied in the notion of autopoiesis’, that it is a source of ‘subjectivity, intentionality and meaning’, and thus that, ‘organisms are subjects having purposes according to values encountered in the making of their living’ (Weber & Varela, 2002).

This search for an alternative ‘science of meaning’ is, alongside the rejection of a ‘representation-first’ view of cognition, one of the central pillars that defines Varela et al.'s (1991) presentation of the enactive approach. Yet the term ‘enactivism’ is also used more loosely to refer to the endorsement of the second, anti-representationalist, pillar alone. Disagreement thus persists about whether autopoiesis supplies an adequate basis for an alternative ‘science of meaning’, and indeed whether any such basis is even needed (Barandiaran, 2017; Ward et al., 2017).

This broad tent of enactive cognitive science has been pitched across a fault line. On one side: cyberneticists, who hew to Maturana’s machinistic view within which mind may be continuous with life, but only to the extent that both are continuous with non-life and all can be subsumed within the mathematics of dynamical systems theory. On the other: ‘organicists’, who take living systems to constitute a genuinely new sort of organisation – one that is necessary for a system to be cognitive and which cannot be straightforwardly approached via the same modelling strategies used in ordinary physics.

Beyond a generally anti-representationalist bent, those inside the tent all share a commitment to the continuity between life and mind, a focus upon processes rather than static structures, and a preference for holistic descriptions that emphasise interconnectedness over isolated movements. In addition to their disagreement around the status of intrinsic purposiveness, they differ on the relevance of material and energetic aspects of life to a theory of cognition. These two points of disagreement, I will argue, are linked. If we adopt the cyberneticists’ mathematical abstractions, we will find no distinction in kind between life and non-life from which a realist account of purpose might be constructed. To secure the status of cognition as a natural, rather than merely conventional kind, we must look for thermodynamic joints in types of physical processes that can instantiate these structures.

What does a metaphysical debate about purpose have to do with the prospects for enactive cognitive

The scientific fertility of the cybernetic approach is due not to the superiority or minimality of its metaphysical commitments but to the fact that it does not force the cognitive scientist to swap out the baggage they have already taken on board: namely, the idea of mind as machine and cognition as a formal phenomenon. The organicist view demands, and offers, more. It requires attending not only to the abstracted dynamics a system’s structure enables, but also to the precise way this system depends upon these dynamics in turn. What it offers is a robust account of the distinction between purposive organisms and everything else.

In section one, I explain why we should care about this distinction, for the notion of purposiveness is not simply a philosopher’s concern. The lack of a good account of it, as I describe, continues to trouble contemporary cognitive science. In section two, I introduce the cybernetic programme for solving this problem and situate Maturana & Varela’s (1980) early work on autopoiesis as a continuation of this tradition of abstracting away from thermodynamic and material specificities of living cells, to identify the same properties across a range of systems, scales, and substrates. In section three, I explain how these properties are formalised in the language of dynamical systems theory, and, in section four, I describe how the generality of such descriptions fails to capture the distinctive properties underpinning the purposiveness of living systems. In section five, I’ll describe an alternative motivation for adopting dynamical frameworks, derived not from an attempt to either ground or eliminate the notion of ‘purposes’, but rather to ape the successes of mathematical physics. I’ll then explain why the same sort of success should not be expected in the biological sphere of heterogeneous and highly-plastic systems.

Even though a cybernetic analysis fails, as I’ll argue in section seven, we should not abandon the pursuit of refining our phenomenological understanding of our own agency via scientific investigation. Alternative frameworks to dynamical systems theory are available. Theoretical biologists have repeatedly confronted the limitations of DST for analysing living systems, independently of enactive aims, and, as I describe in section eight, they have offered alternative accounts of biological organisation that do not abstract away from its thermodynamic underpinnings. In short, we can develop a robust account of what makes a system purposive, and, insofar as cognitive science depends upon an implicit notion of purpose, such an account is not orthogonal to scientific investigation, but essential to guiding it.

The Problem of Purpose

A dominant trend within contemporary neuroscience is an increasing appreciation that many of the details abstracted away from a doctrine that treats neurons as the fundamental units of cognition (cf. Barlow, 1972; McCulloch & Pitts, 1943) may be much more significant for our cognitive processing than previously appreciated. The dendritic trees of a single neuron have been described as performing computational operations that would require a multi-layered artificial neural network to execute (Jones & Kording, 2022; Mel, 2016; Poirazi & Papoutsi, 2020). The precise details of individual spike timing that are ignored by a presumption of rate coding have been argued to be potentially key to the coordination of behaviour (Brette, 2015), and there is a gathering sense that there is more to the brain’s variability in activity than has been reflected in the traditional view that it is an ‘indication of the brain’s imperfection, a noise that should be averaged out to reveal the brain’s true attitude toward the input’ (Buzsáki, 2004).

Another, parallel, trend is the appreciation that higher-order relational patterns exhibited by neuronal ensembles may be where the cognitive action takes place, leading to the suggestion of a ‘rapidly growing trend in the field towards the neural population doctrine’ (Saxena & Cunningham, 2019). Given such conflicting perspectives, how should we demarcate the functional units of the brain?

Certainly, god forbid, not through

Clearly, however, not everything the brain does is cognitive, behaviourally relevant, or even functional in the broadest sense. At the macroscale of a suspended 40oz mass, the brain exerts a force on the surrounding cerebrospinal fluid and, at the mesoscale of neural ensembles, lots of brains regularly undergo intense bursts of uncoordinated electrical activity, called seizures. These phenomena may have causes, but they do not have reasons and many of them really are irrelevant to the cognitive or behavioural neuroscientist.

Accordingly, critics of ‘bottom-up neuroscience’ have argued that we cannot simply observe the relevant components and interactions amid the brain’s causal thicket in a theory-neutral manner. There are, as Glennan (2002) points out, ‘no mechanisms simpliciter, but mechanisms for behaviors’ (p. 344). And so, what neuroscience needs, Krakauer et al. (2017) argue, is top-down ‘“organismal-level thinkers” to develop detailed functional analyses of behaviour’. Once we have this understanding of the coherent behavioural outputs that the brain’s parts are coordinated towards producing, then we are positioned to answer whether the brain’s ‘code’ is a matter of spike timing or average firing rate, in terms of understanding which variations are causally relevant for producing said behaviour and which are just noise.

The challenge of this ‘behaviour-first’ approach, however, as critics of behaviourism pointed out throughout the late 20th century, is that very different movements may have the same behavioural import, while extremely similar ones, from the raising of the wrist to the lowering of the eyelid, mean very different things in different contexts. As no physical movement is identical to any other, a particular ‘type’ of behaviour is no more a matter of direct observation than a particular neural mechanism (Block, 1981; Chisholm, 1957; Geach, 1957). In order to identify behavioural types, our categorisations must be informed by the purpose of the particular bodily movements in question. For the 20th century philosopher of mind, this meant an understanding of the inner belief-desire states that lead to a behaviour and, for the 20th century computational functionalist, this meant identifying interactions among the physical elements that caused it and mapping these to logical transformations among symbols that encode those propositional attitudes.

The 21st-century cognitive scientist is left with a conundrum. To classify a movement as this or that type of behaviour they might look to similarities or differences in the computational mechanisms that produce them. But, to identify the ‘same mechanism’ amid the molecular flux of a brain, the cognitive neuroscientist is enjoined to appeal to similarities in the categories of gross behavioural output that these various sets of physically heterogeneous parts tend to cause. Neither categorical framework is independently standardised or stable (Poldrack & Yarkoni, 2016; Sullivan, 2009, 2017). And so, for all the advances in neural imaging or the kinematic analysis of bodily motion, the contemporary cognitive scientist remains caught within the same conceptual circle as the 20th century psychologist (Cao & Rathkopf, 2019; Francken et al., 2022).

To Francken et al. (2022), this circularity is neither unique to cognitive science nor especially inimical to its progression. Instead, they appeal to Hasok Chang’s (2008) pragmatist account of epistemic iteration in which concepts are refined through reciprocal adjustments between different classificatory practices that progressively move these towards increasing coherence. Now the question becomes what determines coherence? Chang laments the difficulty of giving a more precise account, but, in his more recent book, he offers a more definitive analysis in terms of ‘aim-oriented coordination’, noting that the idea of things ‘fitting together’ is ‘meaningless, unless there is a purpose under which the fit is judged’ (Chang, 2018, p. 45).

Such a pragmatist analysis is all well and good when it comes to Chang’s task of explaining classificatory practices in chemistry or physics. Here, the question of what it is to be an agent that aims to develop better explosives or skincare solutions can be taken for granted. As such, he does not tackle this question directly. But to treat our own aims as a primitive foundation is uniquely problematic when we are dealing with a science in which the targets of our investigations are precisely the sort of agents whose aims shape that practice. If one hopes that cognitive science could help us ‘get behind’ the nature of our own agency, then pragmatism offers only an incomplete solution.

Where enactivism (in the most general sense) purports to go beyond pragmatism is by offering an account of agential systems that avoids the pitfalls of both atomistic behaviourism and cognitivist dualism. It does this by treating the circularity of brain and behaviour as a feature of the agent itself, rather than merely of the conceptual frameworks deployed by external scientific observers (Hurley, 1998). On this view, it’s correct that a movement cannot be interpreted in isolation, but nor is its purpose to be determined by the independent inspection of a distinct brain-bound behaviour-causing mechanism either. As Skinner himself argued, the relationship between brain and behaviour is not one of hidden cause to observed effect, but rather of mutual interdependence in the production of a broader pattern of activity for, ‘The skin is not that important a boundary’ (1969, p. 228). And so, the way to think about the purpose of a particular behaviour, as Juarrero (1999) argues, is in terms of the broader patterns of activity it is involved in sustaining. Indeed we might see this as the real value of the behaviourist approach: not an injunction to focus only upon external motions, but rather to use a language that is, ‘neutral with respect to the classical distinctions between the “mental” and the “physiological” – that is, a language of the mind “not as psychological reality or as cause, but as structure”’ (Merleau-Ponty, 1983, p. 5).

How then should we understand this structure? Is it an emergent and open-ended normative domain realised in the transformative patterns of practice that constitute an agent’s form of life? Or is it a differential equation?

The former option is the kind of description favoured by enactive philosophers with more organicist leanings. To a scientist like Buzsáki, however, this will smell suspiciously like a bunch of ‘made-up words’. What, to the neuroscientist, is a ‘form of life’? For such vague phenomenological suggestions to be something that can inform cognitive science, he argues, we need an operational means to describe and classify behavioural ‘structures’ that is ‘free from philosophical connotations and can be communicated across laboratories, languages, and cultures’ (Buzsáki, 2020, p. 1).

If the general appeal of enactive approaches is the recognition that purposive behaviour is a particular type of pattern, rather than the result of a particular type of cause, then the specific appeal of what I am calling its ‘cybernetic’ branch is a belief that the universal language of mathematics can provide precisely such a ‘philosophy-free’ operationalisation of that pattern. In the main, this means using dynamical systems theory to supply a taxonomy of behaviours, not as atomic motions, but rather as a substrate-neutral structured dynamics that may be neural, bodily, and, potentially, even exhibited by non-organismic systems. Thus, liberated from a rationalist framework of beliefs and desires, these ‘time-honored relics of philosophical speculation’ should, as Watson (1913) hoped, ‘trouble the student of behavior as little as they trouble the student of physics’ (p. 166).

Still, despite Watson’s assurances, the kind of enactivist concerned with subjectivity, intentionality and meaning is likely to remain troubled. Getting rid of propositional attitudes sounded like great fun at the time, but haven’t we thrown the baby out with the bathwater? Unfortunately, as I’ll argue in section four, a cybernetic formalisation of autopoietic autonomy in terms of dynamical systems theory does indeed end up eliminating any distinction in kind between purposive agents and other physical systems. Fortunately, as we’ll see in section six, for all the physics-derived credentials and mathematical rigour, dynamical descriptions are not simply objective patterns that can just be read off from neutral observation of a system’s activity over time. Cybernetics is not the end of philosophy, and there is no escape from questions of purpose.

The Purpose of a System Is What It Does

For the cyberneticist, the problem of purpose has such a simple resolution that it’s a wonder it caused so many decades of philosophical angst. Purpose, or teleology, as Rosenblueth, Wiener and Bigelow explained in their classic (1943) paper, is simply a matter of regularity, where a regularly homed in upon state is the goal of said behaviour. To classify a particular movement in behavioural terms is simply to identify the particular state of affairs it is involved in stabilising, and the goal of an organism is just as observable as the target of a torpedo. The purpose of sweating is to lower body temperature; the purpose of shivering is to raise it. The purposes of train spotting or flower arranging – being embedded in a complex, socioculturally extended network of feedback loops – are more difficult to identify in practice, but no different in principle. As Stafford Beer (2002) summed it up in a prosaic and oft-quoted dictum, ‘the purpose of a system is what it does’.

Analysing individual movements as part of regular dynamical patterns, the cyberneticists argued, allows us to classify these movements in terms of their ‘goal-directedness’ while, at the same time, sidestepping all sorts of ‘metaphysical complications’ or speculations as to unobservable intentions (Ashby, 1940). The motivation for thinking that stability and control capture what we’re implicitly appealing to with talk of purposiveness and goal-directedness is a parallel with biological homeostasis. It’s an intuitive idea that the most minimally purposive behaviour is that of an organism striving to keep itself alive. Such a drive, W. R. Ashby (1956) argued, can be completely understood in terms of regulation and the need to keep particular physiological variables to within a viable range. The concepts of survival and stability can thus, he argued, ‘be brought into an exact relationship’ (p. 197). In the spirit of mind-life continuity, everything that sophisticated human cognisers do may then be treated as a variation on this theme.

Maturana and Varela’s work in

Cognitive scientists, however, are rarely interested in the capacities of single cells. Accordingly, for autopoiesis to supply a guiding paradigm for behavioural individuation, it was necessary to abstract away from the distinctive ways in which cells literally produce their internal metabolic network and surrounding membrane through a process of molecular degradation and resynthesis that reciprocally depends upon the very catalytic structures that are being re-synthesised.

For Maturana, this meant understanding self-

Still, the disagreement here is largely terminological. Varela was equally keen to extend the idea of self-determining patterns of activity beyond cellular metabolism to encompass the sensorimotor dynamics of the nervous system. And so, rather than heeding his own caution about trivialising the meaning of production, his solution was to introduce the more general term ‘autonomy’ to describe this weaker requirement of mutual dependence in a network of processes (Varela, 1979). It is this putative generalisation of autopoiesis, also referred to as operational closure, which played the central role in The Embodied Mind. The subsequent enactive literature has similarly understood autonomy to be satisfied by any precarious network of interdependent processes, which may be understood far more broadly than just the metabolic activities of decomposition and synthesis (Di Paolo & Thompson, 2014; Thompson, 2010).

And so, whether or not the label ‘autopoiesis’ is taken to refer to the general organisational principle, or only to the specific molecular realisation of it, Maturana and Varela both speak of this essential principle as a general recursive activity – something that living systems may happen to manifest ‘in physical space’, but which might equally be instantiated in the behavioural space of a society; the abstract space of a set of mathematical operations whose range is restricted to a subset of its domain; the sensor-effector loops of a simple robot; or a biological nervous system.

To extract an essential principle with this level of generality, it is not only contingent chemical specificities – such as the particular amino or nucleic acids of terrestrial metabolism – that must be disregarded. As Maturana and Varela (1980) explicitly propose, we must disregard material and thermodynamic considerations altogether and develop a purely ‘formal theory’ (p. 113). This treatment of the energetic and material as contingent factors was criticised at the time by philosopher of biology Gail Fleischaker (1988a, 1988b) for abstracting away the essential aspects that distinguish the living. Still, when it comes to extracting only those features of a cell in virtue of which it is

Before the essentials could be seen, we had to realize that two factors must be excluded as irrelevant. The first is “materiality” .... Also to be excluded as irrelevant is any reference to energy, for any calculating machine shows that what matters is the regularity of the behavior—whether energy is gained or lost, or even created, is simply irrelevant. (Ashby, 1962, pp. 260–261)

Such an assumption is far more contentious in philosophy of biology (Nicholson, 2013). Unfortunately, the ambiguity around the autopoietic notion of autonomy has allowed it to play different sides depending on context. Those seeking a bright line between living systems and non-living machines (who I’ll refer to throughout as ‘organicists’) can embrace the cellular notion of autopoiesis as describing the specificities of a self-producing metabolic network that is unique to the living. In contrast, cognitive scientists interested in general principles of perception-action coordination may focus more on the general dynamics of self-regulation and apply notions of autonomy to patterns of sensorimotor dynamics, whether exhibited by an organism or a robot. As described by Nave (2025) and Di Paolo et al. (2022), this has led to properties that are unique to the spontaneous self-productive activities of metabolic systems being mistakenly attributed to systems that are autonomous only in the weaker cybernetic sense. As I’ll argue in section eight, it turns out that these properties are more important for solving the problem of purposive behaviour than enactive cognitive science has often appreciated. But, before we get there, let’s see how far cybernetic autonomy alone can take us in solving the problem of behaviour.

From Autopoiesis to Dynamical Systems Theory

If we agree, with Ashby, that cognitive science is the study of regularities of behaviour, then the debate between the classical cognitive scientist and the cybernetic enactivist can be framed as a question of

To this end, contemporary cyberneticists appeal to dynamical systems theory [DST] (Chemero, 2009; Friston, 2010; Port & van Gelder, 1998; Thelen & Smith, 2002). Unlike the rationalist roots of classical cognitive science, DST derives from analytical mechanics and the attempt to describe physical laws governing the position and momentum of bodies in space. Its basic framework is a set of variables that can take on a constrained domain of values, corresponding to the state space of the system, whose change over time is governed by a set of differential equations with controllable parameters.

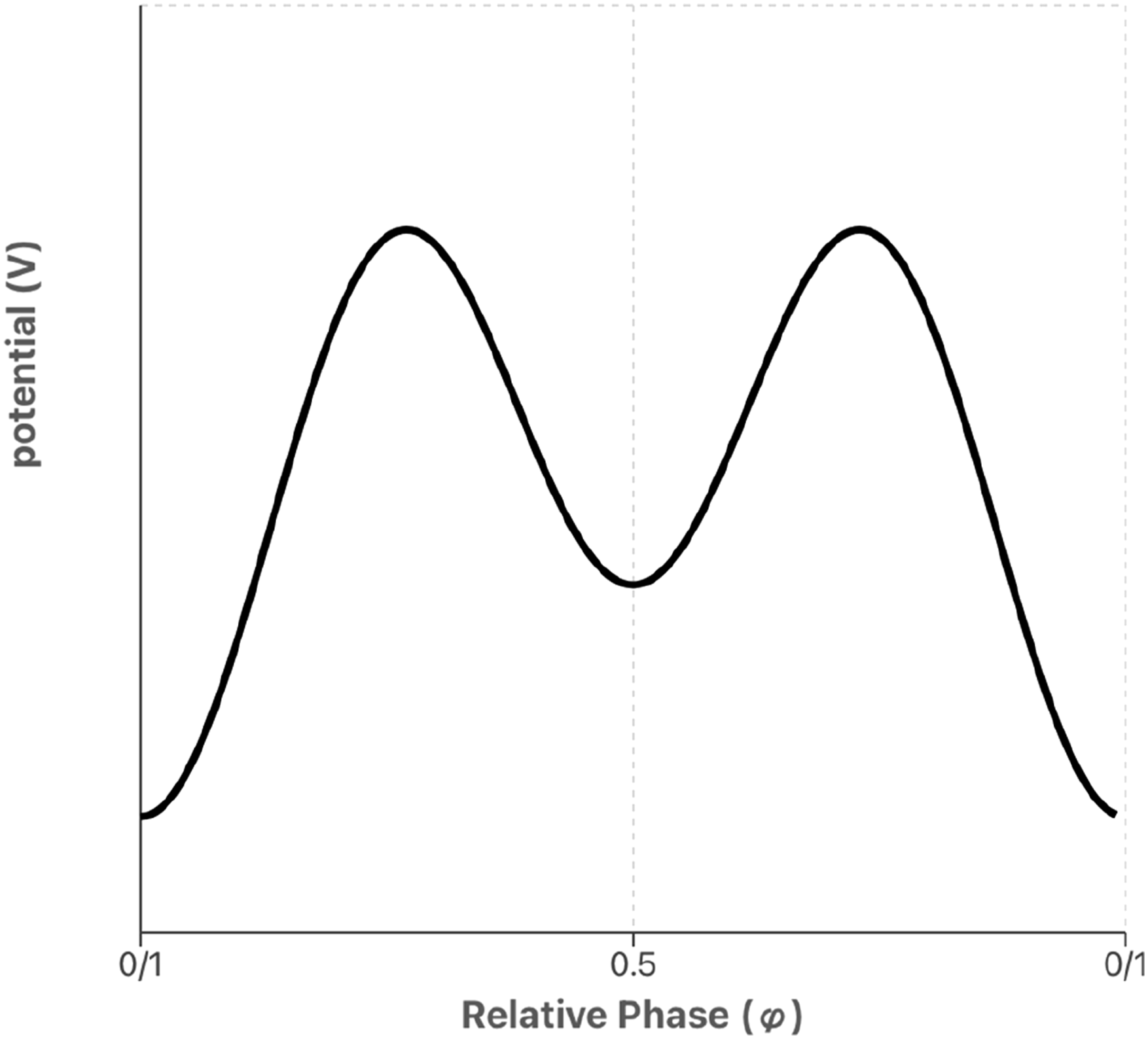

As a mathematical formalism, DST is broader than analytical mechanics and its variables need not correspond to position and momentum but might be anything we choose – from muscle tension to membrane potential – allowing the same dynamical equations to be identified across different systems and scales. One favoured case study in the versatility of this language is the Haken-Kelso-Bunz equation, introduced to describe the observation that limb oscillations (such as finger wagging) tend to converge upon in-phase or anti-phase behaviour, and to switch from the latter to the former above a certain frequency threshold. The core mathematical structure underlying the HKB equation can be described as a potential function

As depicted in Figure 1, below.

Figure 1. The potential function for the HKB model as a function of relative phase, with a deep attractor at in-phase (0) and a shallower attractor at anti-phase (0.5).

From this, we can derive the equations of motion in terms of the rate of change of this potential function (equation (2)). This describes the steepness of the slope of the potential function at that point, which corresponds to the ‘force’ driving the system through its different states.
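The relation between potential and equation of motion can be illustrated with a minimal numerical sketch. It assumes the standard textbook form of the HKB potential, V(φ) = −a cos φ − b cos 2φ, with relative phase φ in radians (so anti-phase sits at π rather than at 0.5 of a cycle). The symbols `a` and `b`, the function names, and the parameter values are illustrative assumptions, not taken from the equations of this paper. The flow is the negative slope of the potential, and in this form the anti-phase attractor exists only while b/a exceeds 1/4, which is one way of picturing the switch to in-phase coordination at higher movement frequencies.

```python
import math

def hkb_potential(phi, a, b):
    """Assumed standard HKB potential: V(phi) = -a*cos(phi) - b*cos(2*phi)."""
    return -a * math.cos(phi) - b * math.cos(2 * phi)

def hkb_flow(phi, a, b):
    """Equation of motion as the negative slope of the potential: dphi/dt = -dV/dphi."""
    return -a * math.sin(phi) - 2 * b * math.sin(2 * phi)

def settle(phi0, a, b, dt=0.01, steps=5000):
    """Forward-Euler integration of the flow; returns the relative phase reached."""
    phi = phi0
    for _ in range(steps):
        phi += dt * hkb_flow(phi, a, b)
    return phi

# Start close to anti-phase (pi radians) under two illustrative parameter settings.
start = math.pi - 0.3
slow = settle(start, a=1.0, b=1.0)   # b/a > 1/4: anti-phase is still an attractor
fast = settle(start, a=1.0, b=0.1)   # b/a < 1/4: only the in-phase attractor remains

print(f"b/a = 1.0 -> settles near {slow:.3f} rad (anti-phase)")
print(f"b/a = 0.1 -> settles near {fast:.3f} rad (in-phase)")
```

In the first setting the system relaxes back to anti-phase. In the second, the same starting point slides into the in-phase well, since the shallower minimum has vanished.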

The cos functions in the potential mean that this will always be minimal at

The idea that this sort of emergent behaviour captures the idea of autonomy lies in the fact that the behaviour of the HKB system is not a linear combination of two individual systems, like adding batteries in a series to raise the voltage of a circuit. The ‘collective variable’ of relative phase (

As with the liberal and conservative readings of ‘self-production’ described in the previous section, whether these coupled oscillators count as autonomous depends upon how restrictively we understand processual interdependence and how finely we individuate the respective processes. Still, given that the particular dynamics of each oscillator would change if they were decoupled from one another, and the collective entrainment would cease, the system appears to meet Barandiaran’s (2017) definition of process closure as applying in any case ‘where the behaviour of the whole emerges from the interaction dynamics of its component parts in a self-organized [...] manner’ (p. 411). In this manner, the system is also ‘precarious’, to use Di Paolo & Thompson’s (2014) term, for the coupling between systems that sustains a particular process is sensitive to factors, such as the

In sympathy with Maturana and Varela’s aim of identifying formal and organisational structures, independent of their material or energetic basis, the HKB equation itself is a mathematical structure, independent of reference to any particular physical system. This non-specificity allows the same model to be used to describe phase switching in a wide variety of coordinative behaviours, whether speech production, social coordination, cortical dynamics, or problem solving (Chemero, 2009). In this manner the ‘language of pattern-forming dynamical systems’, Scott Kelso (1999) argues, can act as ‘one language to cut across biophysicese, biochemese, neurophysiologese, and psychologese’ (p. 229). In this language, synergies, like those described by the HKB equation, serve as ‘the fundamental atoms of behaviour, brains and life’ which may be combined in a ‘kind of grammar’ to provide a principled description of complex behaviours (Kelso, 2009, p. 9). In respect of this neutrality, DST thus appears to satisfy the monist ambitions of Skinner and Merleau-Ponty with an account of behaviour not as a discrete bodily movement but as a ‘gestalt’: a set of qualitative features that emerge from quantitative changes in interacting systems.

The Triviality of Adaptive Control

So, dynamical systems theory provides substrate-neutral descriptions of patterns of behaviour, but where are the purposes in this picture? And what makes an application of DST distinctively ‘enactive’? In itself this is just a modelling tool that can be used to great effect in studying brain-body systems, with no need to have strong opinions about enactivism or cybernetics at all (John et al., 2022). Where cybernetic enactive ideas come in is with the observation that a description like the HKB equation not only describes the behaviour that a system

These attractors, Thompson (2010) suggests, appear to offer a formalisation of the notion of an intrinsic goal, as something that a system’s behaviour consistently tends towards, reliably returns to when perturbed, and which is produced by relationships between parts of this system. Such directedness without external cause has excited many outside the enactive or cybernetic fold with the seductive impression of intrinsic purpose, intentionality, or teleology (Dupuy, 2009; Veldman, 2024). Still, someone of a more eliminativist disposition might see these mathematically formalised patterns as supplying a way to classify behaviours without any need to appeal to intentions or aims at all. After all, if the power of dynamical descriptions is their generality, then in describing attracting states as ‘intrinsic purposes’ there seems a risk of over-attributing purposiveness beyond merely living agents.

So, does DST supply an adequate naturalisation of purposiveness? And, even if it doesn’t, can DST take over the work that we needed intentional states to do in providing a non-circular and non-dualist classification of behaviours? My argument is that DST does neither. It fails

In the late 90’s, the possibility that some non-living systems might qualify as legitimate cognisers in their own right may not have seemed obviously disruptive to the enactive programme. At the time of

Thompson’s statement on the future possibility of artificial autonomy was written shortly prior to the 2012 boom in artificial neural networks, representing a triumph of the cybernetic approach to intelligence. Fifteen years on, we now have many, vastly more sophisticated, candidates for autonomy from outside the biological realm. Accordingly, the cybernetic understanding of purposiveness is regularly cited as justification for attributing goal-directedness and agency to artificial neural networks – insofar as their behaviour involves minimising the value of some function to reliably converge upon a particular state (Babcock & McShea, 2021; Flint, 2020; Kenton et al., 2023). Insofar as this is also exhibited by AIs and other machines, the idea that goal-directed agency is an ethically significant property has been suggested as a possible support for extending the ‘moral circle’ beyond organisms to encompass artificial entities also (Danaher, 2020; Long et al., 2024).

That this account of purposiveness generalises beyond the living is in tension with enactivism’s focus on a Jonasian continuity in which, as Thompson puts it, ‘Mind is life-like and life is mind-like’ (Thompson, 2010). Still, the implication of machine purposiveness alone is not, in itself, sufficient to undermine the cybernetic account. That would be chauvinist. The problem with this cybernetic account of purpose is not merely that it generalises to machines. The problem, as Ashby (1962) saw, is that it generalises to

In the attempt to develop the cybernetic picture into a less trivial account of purposiveness, many subsequent thinkers have focused on these more ‘adaptive’ dynamics, attributing agency only to systems that pursue a goal in response to a sufficient variety of perturbations or by a sufficient variety of means (see Garson, 2016). In Nagel’s (1977) terms, persistence must be supplemented with ‘plasticity’. And yet, the kind of systems where we can find highly complex, stability-seeking dynamics need neither be living organisms, nor artefacts developed by organisms like us (Bedau, 1992). A rock, after all, is not so simple when we consider the interactions between the numerous particles that compose it. As with the macroscopic dynamics of a pendulum, the configuration of these particles is driven by the tendency to minimise energetic potentials – now involving competing forces at multiple scales. At the sub-atomic level, the positive charge of protons creates an electrostatic field that draws in an equivalent number of negatively charged electrons, thereby reducing their potential energy. At the super-atomic level, further ‘balance’ principles govern the molecular bonds making up the structures of macroscopic solids, which are typically modelled as spring-mass pendulums. And, at the level of these macroscopic solids, gravitational fields determine the stable orbits of large enough solids.

The point about these competing drives towards stability among coupled systems at multiple scales is that their interaction creates complex dynamical landscapes with multiple local minima. Over long enough timescales, ‘stable’ molecules are just metastable moments, liable to eventually shift into more globally stable states when subject to an energetic perturbation that is over a certain threshold. In this new state, the molecule will once again behave like a ‘feedback control system’ that returns to a rest state if perturbations are within a certain range or ‘adapts’ into a new stable regime when this threshold is exceeded. We

The ethical import of a cybernetic account of purposiveness seems much less compelling once we see that it does not ‘distinguish biological systems’ as advocates of a recently influential variation of cybernetic enactivism, the Free Energy Principle, have claimed (Friston, 2010, p. 127). And so, unless we wish to acknowledge the significance of preserving energetic balance to electrons and risk concerning ourselves with their moral right as cognisers to achieve this, we should not interpret such behaviour in these ethically and intellectually loaded terms.

Cybernetic Eliminativism

Critics of enactivism are correct that this analysis of autonomy does not legitimate realism about purposiveness. Instead, Villalobos has argued for a return to a Maturanian position, within which normative terms, like success and failure, are understood solely in terms of the external purposes we might recruit some system towards (Villalobos & Palacios, 2021). Such notions, Maturana (2011) argued, ‘do not connote biological processes but connote opinions of the speaker about the nature of what occurs with the living being’ (p. 150). In short, the cybernetic eliminativist agrees with the enactivist that dynamical formalisms are constitutive of autopoietic/autonomous unities but rejects the idea that descriptions of these dynamical landscapes in terms of purpose and intentionality are anything more than an observer-dependent convention. Regular patterns of behaviour are real and can be classified. Intentions, aims, or purposes are not.

Many advocates of the dynamical stance have defended it in these terms. Chemero (2009), for instance, argues that dynamical descriptions of the brain are ‘blissfully metaphysics free’ in a way that computational or representational ones are not. Advocates of ‘bottom-up’ neuroscience have similarly presented DST as offering, as John et al. (2022) put it, ‘principled ways to reformulate “folk psychological” terms used to describe behaviour in a manner free of our “anthropocentric biases”’.

Still, is the theoretical neutrality of this constitutive domain of dynamical descriptions really as secure as it seems? Certainly, the claim that it is ‘metaphysics-free’ is misguided. Both dynamical and computational descriptions are formal structures, whether differential equations or recursive functions (Beer, 2023). While the mathematical structure itself may not have metaphysical import, the application of one or the other formalism to describe some phenomenon does – insofar as a formalism selects certain features as essential while ignoring others.

Any arbitrary pattern of behaviour could be described under either formalism; a Turing machine can be a dynamical system; a Watt-Governor can be a computer. There is far less daylight between these two descriptions than fierce debates over priority might suggest (Deacon & Rączaszek-Leonardi, 2019; Kampis, 1991). And so, the mere fact that some activities of a brain can be described in terms of a particular differential equation does not license the inference that this description has exhaustively characterised the essential conditions of being a mind, any more than the fact that I can accurately describe my friend Amy as a fast runner means that I have successfully defined what it is to be her. A hamstring strain would not transform her into an entirely new person.

To make a definitional claim that an organism ‘is a dynamical system’ is to assert that there is some ultimate differential equation, which does not merely describe certain behaviours that it happens to exhibit but which captures the underlying invariant essence of what it is to be this organism. This is the ontology of the mathematical physicist for whom everything there is to say about either a particular system, or a particular class of systems, is a matter of defining all the changes that are compatible with the conservation of this dynamical identity. In this regard, there ‘is no difference between physics and, say, cybernetics’ (Varela, 1979, p. 10). Despite its processual flavour, then, the ontology of DST is fundamentally essentialist. ‘The whole point of the analysis’, as Chirimuuta (2024) notes, ‘in a certain sense is to reduce change to stasis’ (p. 195).

With such an equation in hand, we would be able to categorise any variation, either within a system or between systems of the same type, in terms of whether or not it preserves the relationships said equation specifies as invariant (Longo & Montévil, 2014). This is a symmetry – a change that changes nothing. Rotate a square by 90° or reflect it around its diagonal, and absolutely nothing happens. The square is exactly the same as it was before. In the same way, whether a pendulum is at a displacement of 45° or 0°, whether it is in Paris or Berlin, makes no difference at all to the underlying dynamical equations governing its behaviour. If, however, some variation is not consistent with our definition, if we stretch a square into a rectangle or cut the string of the pendulum, then we do not have to say that the model was ‘wrong’ for failing to predict said event. Instead, we can say that the particular kind of system that our model described has been destroyed: it has lost its identity as a pendulum and a new system has been created in its place. In this sense, the existence of a pendulum can be exhaustively characterised in terms of a dynamical model.
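The idea of a symmetry as ‘a change that changes nothing’ can be illustrated with a small sketch. It assumes, purely for illustration, that a square is represented by the set of its four corner points, and the function names are hypothetical. A transformation counts as a symmetry if it maps that set onto itself; stretching fails the check, which corresponds to saying that the square has been replaced by a different kind of object.

```python
def rotate_90(point):
    """Rotate a point by 90 degrees anticlockwise about the origin."""
    x, y = point
    return (-y, x)

def reflect_diagonal(point):
    """Reflect a point across the diagonal y = x."""
    x, y = point
    return (y, x)

def stretch_x(point, factor=2):
    """Stretch a point along the x-axis (turns a square into a rectangle)."""
    x, y = point
    return (factor * x, y)

def is_symmetry(shape, transform):
    """True if the transform maps the set of points exactly onto itself."""
    return {transform(p) for p in shape} == set(shape)

# Assumed representation: a square as the set of its four corners.
SQUARE = {(1, 1), (-1, 1), (-1, -1), (1, -1)}

if __name__ == "__main__":
    for name, transform in [("rotate 90", rotate_90),
                            ("reflect diagonal", reflect_diagonal),
                            ("stretch x", stretch_x)]:
        print(name, "preserves the square:", is_symmetry(SQUARE, transform))
```

The check is only as good as the chosen representation: which features are recorded in `SQUARE` already settles which changes can count as changing nothing.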

Assertions that DST is metaphysically-neutral tend to assume that because dynamical systems underpin the ontology of contemporary physics, so what it is to be a particular physical object just

That dynamical models are the language of the physicist does not, however, change the fact that the average dynamical model is often many levels removed from fundamental physical processes – particularly so in the neurocognitive sciences. A state space made up of 100 neurons is a far cry from the 6 × 100 trillion variables needed to specify the position and momentum of every single atom making up that neural population.² Speaking of the ‘states of variables’ in such a model is no more ‘physical’ or less abstract than speaking of the ‘tokening of a symbol’. And so, the argument that the dynamical systems perspective captures the true physical workings of the brain cannot simply be based on the idea that it is

One alternative is to point to the success of contemporary physics in identifying ‘projectible kinds’. Such projectible kinds, as Jantzen (2015) describes, are those that support inductions regarding both what the same token system would do under certain variations and how different token systems will behave, given they are the same dynamical kind. In this regard, one might take the physicist’s dynamical ontology as exemplifying how any scientific ontology must be structured if it is to support successful induction. On this view, the fact that the identity of any particular system through time is underpinned by mathematical invariants is what allows us to determine possible transformations in advance, and so to predict how said system, or class of similar systems, will behave in various counterfactual circumstances. Accordingly, the idea that the dynamical stance offers a language of behaviour that can turn cognitive science into a ‘purely objective branch of the natural sciences’ (Watson, 1913, p. 158) need not be based on whether this language could, in principle, be derived from microphysical laws governing the component parts of the brain-body-environment system, nor need it involve a denial of any metaphysical import. Rather, the dynamicist may accept that their approach to the brain is no less abstract than any other description but defend it on the basis that by adopting the same abstraction structure as the physicist, their approach will finally allow cognitive and behavioural science to share in the physicist’s inductive success. Unfortunately, however, it won’t.

Biological Instability

The problem with extending an ontological framework from fundamental particles to organisms is that cows are not spherical, and they are far more unruly and bad-tempered than electrons. This heterogeneity and changeability is undeniable. Nonetheless, insofar as a cybernetic framework treats stability as definitive of survival, so the cyberneticist is committed to searching for order beneath the mess. As a recent neuroscience paper, citing Ashby, puts it, ‘brain networks

This doctrine of stability, as Chirimuuta (2024) demonstrates, has implicitly structured both historical and contemporary neuroscientific investigation. But why

This creation of invariance involves various mechanisms at different points in the descriptive process. The first is in the initial construction of a set of variables, whereby microphysical flux is erased. What looks like the ‘same’ neural state from the perspective of a particular model corresponds to an enormous variety of different microphysical configurations, such as the position of particular ions, which will be changing within the lifetime of even a single measurement. Likewise, parameters describing the coupling between these neuron-variables, which may seem stationary when measured by repeated experiments over the course of several months, are underpinned by highly fluid components, such as the actin skeleton, which shapes the small protrusions reaching out from one neuron to another and which can be broken down and regenerated in cycles shorter than a minute (Star et al., 2002). In accordance with the cyberneticist’s downplaying of material turnover, the first thing a dynamical model dismisses is precisely the substrative churn that distinguishes the metabolic cell from the ordinary machine.

Even once our variables are defined, it is not a straightforward matter to read off the dynamical invariants of the system. Which invariants we discover depends upon which perturbations we subject the system to and, unlike the physicist, the behavioural neuroscientist is not expected to consider every possible variation. To identify the dynamics of decision-making in mice, we don’t consider how these will manifest on Mars, but only within the narrow context of a laboratory experiment with conveniently stereotyped stimuli and constrained degrees of freedom for behavioural expression. The background hope, as Nastase et al. (2020) describe, is that just as electrons are electrons wherever you go, so the dynamical invariants discovered in the lab will remain invariant beyond it. This is only a hope, however, and one that has little support when ‘the object of investigation is […] inherently plastic and sensitive to the context of its surroundings, which is what the brain is to an extreme degree’ (Chirimuuta, 2024, p. 15).

Fortunately, there’s a final trick up the cyberneticist’s sleeve. By labelling variation as noise, it can be disregarded as a deviation from the underlying invariant behaviour, which can be discovered by averaging out these variations – either across a population or over multiple trials. Through dismissing these variations, we can sustain the illusion of genericity and continue to treat different members of a class or repeated samples of the individual as instances of the same fundamental type.

This assumption that a population of individuals is well represented by an average has already proven misleading even in a population of genetically-identical single cells, much less obviously heterogeneous than neurons, leading Altschuler and Wu (2010) to argue for ‘a hidden world’ of variation behind the stable average. Still, one might consider the precise appeal of more sophisticated mathematical tools to be how they enable the identification of an even more deeply hidden ‘true’ world of invariance behind this world of variation.
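How an average can misrepresent a population is easy to show with a toy case. The sketch below assumes a hypothetical population split evenly between ‘low’ and ‘high’ responders; the response values, the spread, and the function names are invented for illustration and are not taken from Altschuler and Wu (2010) or any other cited study. It then asks how many individuals actually sit near the population mean.

```python
import random
import statistics

def simulate_population(n_cells=1000, seed=0):
    """Toy population: alternating 'low' and 'high' responders.

    The centres (0.2 and 0.8) and the spread (0.05) are arbitrary
    assumptions chosen only to make the two groups clearly distinct.
    """
    rng = random.Random(seed)
    responses = []
    for i in range(n_cells):
        centre = 0.2 if i % 2 == 0 else 0.8
        responses.append(rng.gauss(centre, 0.05))
    return responses

def fraction_near(values, target, tolerance=0.1):
    """Fraction of values lying within `tolerance` of `target`."""
    return sum(abs(v - target) <= tolerance for v in values) / len(values)

if __name__ == "__main__":
    responses = simulate_population()
    mean = statistics.fmean(responses)
    print(f"population mean: {mean:.3f}")
    print(f"fraction of cells near the mean: {fraction_near(responses, mean):.3f}")
    print(f"fraction near 0.2: {fraction_near(responses, 0.2):.3f}")
    print(f"fraction near 0.8: {fraction_near(responses, 0.8):.3f}")
```

In this toy case the mean lies in a region that almost no individual occupies, so treating deviations from it as noise would discard the only structure the population has.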

This flexibility can cut two ways. On the one hand, by allowing us to describe fluctuations with more structure than simple symmetric variation around a set value, dynamical methods can allow for the removal of more complex ‘noise profiles’, in order to uncover underlying regularities that are more dynamic than a static average. On the other hand, rather than removing this variability as a deviation from that regularity, we might instead treat the fact that we can describe it in terms of some fixed equation as indicating that it should be incorporated as a direct manifestation of a more complex sort of regularity – and so conclude that it is not ‘noise’ at all.

A direct example of this from neuroscience involves the use of Fourier analysis to decompose complex time-varying fluctuations in the electrical activity of neural ensembles into a set of oscillatory components – dynamical ‘atoms’ – describable by simple, invariant dynamical equations. The magic of Fourier analysis is that this is theoretically possible for

Fortunately, because oscillations are paradigmatic dynamical patterns, they are ideally suited for the holistic dynamical approach. Here, to show that an oscillation is genuinely causal for behaviour would not mean looking at it as a discrete ‘mental cause’ and attempting to map it to some behavioural output as a separate ‘effect’. Instead, the cybernetic enactivist looks to the dynamics of the whole brain-body system and asks whether these exhibit sensitivity to the rhythm of said oscillation, or whether they are indifferent to it (Klimesch, 2018). One might expect this to be simple enough where we have a behaviour whose oscillatory structure is readily apparent, as in the rhythmic alternations of running, walking, swimming, breathing etc. (Marder & Calabrese, 1996). And so, it seems that identifying the characteristic frequencies and phases of certain bodily movements should enable the theory-neutral extraction of which units and activities are implicated in that broader dynamic, which are functional, and which are irrelevant to the control of said behaviour.

The problem, however, is that no natural pattern of whole organism behaviour is truly regular. Heartbeats, wing flaps, and stride rate all fluctuate over and above their average rhythm. This variation might indicate an imperfection of the control system or even the presence of some ‘underlying pathology’ as König et al. (2016) put it. Or it might not. The elderly tend to have higher movement variability (Guimaraes & Isaacs, 1980) but then so do soldiers who cope better with fatigue (Ulman et al., 2025). Variability can increase risk of falling (Heiderscheit, 2000), but it can also distribute mechanical loads to protect joints and muscles (Van Waerbeke et al., 2022). Too much variation in heart rate is deadly, but insufficient variation is a standard marker for poor health.⁵ Accordingly, some physiologists and biomechanical researchers now suggest that the correct amount of variability is a sweet spot between too much and too little (Lipsitz, 2002; Stergiou et al., 2006; Waschke et al., 2021).

Perhaps some of the finer variations that were removed as noise are actually ‘causal for behaviour’ too. A lovely example is the analysis of insect flight, which was ‘deemed to be impossible for decades’ as a matter of ‘near consensus’ amid the flapping flight dynamics community – a particularly striking case of the ‘data being wrong’ (Taha et al., 2020). As Taha et al. describe, it turned out that the supposition that wing flapping frequency is high enough to be irrelevant to the aerodynamics of the whole insect obscured how this apparent ‘noise’ played a functional role in allowing said insect to counteract perturbations.

The dynamical modeller’s reply: well fine, but it’s precisely an interest in the importance of variability that got us into non-linear differential equations in the first place! We agree that labelling certain variabilities as mere noise ‘only reflects our ignorance of refined mechanisms’ and that we should ultimately incorporate these regularities in a more sophisticated model (Buzsáki, 2019, p. 340). After all, there’s nothing we couldn’t ultimately redescribe in terms of a stable equation.

Sure, I never doubted you. But when any possible activity can be retroactively redescribed in terms of some underlying stability, the possibility of such a description provides no guide when it comes to identifying

The point I am making is this. Just as dynamical descriptions are flexible enough to describe any trivial system, so they are also flexible enough to describe any one particular system in multiple, conflicting ways. In the philosophy of cognitive science, triviality and indeterminacy have traditionally been viewed as key lacunae of computational description. What the challenges of dynamical modelling reflect, however, is that these are not unique vices of recursive functions compared to differential equations. They are simply inherent issues for any mathematical description of a physical system.

Like any formalism, dynamical descriptions codify assumptions we have already made; they do not tell us which assumptions to make. Or, more precisely, they tell us only which assumptions we would need to make for some selected description to apply to our target. With the right choices, any activity can retroactively be recast under some form of invariance, but, given that DST can only describe living systems in such terms, the observation that we always find underlying stability in biological behaviours seems more like a product of mathematical limitations. That provides poor evidence for an underlying principle governing that variation, if we could never have found things to be otherwise. Constructing mathematical models is a perfectly respectable way to make a living, but, as Gyllingberg et al. (2023) describe, the danger is when the rigour and consistency of such formal language leads us to forget that it is our construction, not the sole work of the system itself, and that other descriptions are possible.

A Parting of the Ways

To the extent that dynamical models are the stock in trade of empirical work in enactive cognitive science, so the latter has often been regarded as merely a variant of the dynamical stance. Such an interpretation is not inaccurate when it comes to those whose main aim is to demonstrate the viability of this as an alternative experimental paradigm to that offered by representationalist and internalist understandings of the mind. Nonetheless, in the kind of enactive work that I would treat as representative of a more ‘organicist’ tendency there is frequent emphasis on aspects such as ‘precariousness’ and processes of ‘open-ended becoming’ (Di Paolo, 2009, 2021; Di Paolo et al., 2022). These are processes such as the ‘transformation of relations, creation of novel configurations, changes of frame, and emergence of new variable sets’ which, as Di Paolo et al. (2018, p. 109) note, ‘can be described mathematically only in partial or a posteriori terms’.

Drawing upon Gallagher’s (2017) and Godfrey-Smith’s (2001) notion of a ‘philosophy of nature’, Meyer and Brancazio (2022) have contrasted this sort of work with the projects of ‘scientific enactivism’, which have largely been carried out in a cybernetic vein. On their account, this organicist philosophy is best understood as a post-scientific ‘utopian’ endeavour. While there’s nothing wrong with such work in principle, they, somewhat fairly, critique a tendency for ‘speculative or analogical’ descriptions, ‘that leave it unclear what enactivism has to offer practitioners in particular cognitive sciences in return for abandoning their successful research’ (p. 7).

Rigorous mathematical descriptions, as we’ve seen, can be just as problematic and misleading as speculative metaphorical ones. What progress in cognitive science needs, I’ve argued, is precisely a way of understanding intrinsic purposiveness that a mathematical abstraction alone cannot deliver. This is precisely what an organicist philosophy of nature (or of life) has to offer, yet it is not often enough appreciated that this offer requires exposing the limitations of the very framework in which enactivism has made its strongest forays into the science of cognition.

At this point, one might resort to the phenomenological argument that our mathematical descriptions are always downstream of a pre-reflective lived experience that cannot itself be mathematically derived (Nemati, 2024). We might further specify this in terms of something like Jonas’s claim that ‘life can only be known by life’, suggesting that this offers a basis for understanding behaviour that is neither restrictively anthropocentric, complacently anthropomorphic, nor ruthlessly eliminativist. On such a view, our ability to identify the purpose of another system’s movements is based on our understanding of the needs and capacities that we, as living systems, share. The same line of thought, as Ng (2020) describes, is also found in Hegel, who treats ‘our power to grasp organic unity as […] simply due to the fact that we are this unity and activity, meaning that grasping the form of internal purposiveness is simply part of the process of understanding ourselves’ (p. 16).

To stop with something like this lived experience of shared needs as a primitive foundation for scientific practice, however, would raise the same issues as the pragmatist attempt to ground scientific categorisations in the perspective of the investigator. This cannot be enough when the perspective of a living, experiencing agent is precisely the thing that we’re trying to understand. While our first-personal experience of this may be an important guide in scientific practice, persistent controversies around the attribution of intentionality and purposiveness indicate it is far from consistent enough to amount to anything like an adequate shared understanding. Many mature rational humans would restrict purposiveness to themselves. Other mature rational humans are liable to experience concern for the welfare of abstract shapes moving with the right dynamics, the flow of electrons through a semiconductor, or just about anything with googly eyes.

So, a first-personal understanding of life is not infallible. Life is not always known by life. That we attribute intentionality to a variety of inanimate objects indicates that we often mistake the lifelike for the living, while disputes over the status of AI reflect that, even with extended observation, we are sometimes unsure on what side of the boundary a system lies. To critique the validity of a Jonasian recognition in the case of the thermostat or the Roomba, and validate its application in the case of another lifeform, we can’t just rely on our internal sense of empathy (Villalobos & Ward, 2016). We need to explicate the form of life, and specifically metabolism, in terms of both its phenomenological and empirical dimensions. After all, while the primacy of subjective experience may be central to phenomenology so too, as Husserl increasingly came to recognise, is the corrigibility of even the apparently essential structures identified through phenomenological analysis (Berghofer, 2018).

Providing this analysis was the initial aim of autopoietic enactivism. The failure of the cyberneticist’s dynamical account needn’t mean we should abandon that project. So, what might an alternative understanding of the phenomenon of life look like?

What life knows

What supports an argument that we should reject the adequacy of dynamical system descriptions, rather than renouncing what said models cannot describe, is the fact that life is demonstrably characterised by precisely the kinds of ‘creations of novel configurations’ that Di Paolo et al. (2018) argue outstrip dynamical derivations. Accordingly, numerous theoretical biologists have also argued against the exhaustiveness of the differential equation, independently of any enactive aims (Hooker, 2013; Kampis, 1991; Koutroufinis, 2017; Pattee, 2001; Rosen, 1999).

In an organism, organisation may change in whatever way is compatible with continued viability: the loss of enzymes that extract energy from the catalysation of glucose metabolism along one pathway may be replaced by alternatives that channel its breakdown along an alternative pathway (Kim et al., 2022); two kidneys can become one (Dicker & Shirley, 1971); and the incorporation of genetic material from another species via lateral transfer, or indeed the endosymbiosis of an entire individual, can transform the possibility space of an organism altogether. The evolution of the mammalian placenta may have depended on the former mechanism (Harris, 1991), while the latter process ignited the fuel of the metazoan revolution, when an ancient archaeal cell absorbed the bacterium that would become our mitochondria (Sagan/Margulis, 1967).

That these incorporatory events belong to the past, and are now stable features of the relevant species, makes them

Our definition of an ordinary object is exhaustively characterised by our mathematical model of it, and so, when a pendulum’s string breaks, we say it is the system, not the model, that has broken down. Living systems, however, individuate themselves, and by recognising the continuity of this process, we can recognise that, while the mathematical invariants may collapse, the life itself continues. A disembodied mathematician could never know this continuity. The fact that we can, as Jonas (2001) argues, shows that it must be something other than invariance of dynamics that life knows when it knows life.

Here is how I think we can be more specific about what it is that life knows. If Ashby, Maturana & Varela were wrong to think that the important features of autopoiesis can be elaborated in abstract organisational terms alone, the natural move is to look to precisely those features left out as irrelevant: namely the material and energetic aspects underpinning an autopoietic organisation. There is a rich history of work in the thermodynamics of life that I will not review here, but the key point, as Fox Keller (2008, 2009) describes in her magisterial history of self-organisation, is that overlaps in terminology and mathematical tools have tended to conceal the radically different understanding of self-organisation that this perspective makes available.

Such an understanding, Nicholson (2013) argues, suggests a view of organisms not as machines that move but as processual entities whose distance from equilibrium depends on continual activity. Ordinary objects are comfortably nestled within upland valleys in the energetic terrain, but organisms continually roam from peak to peak. By breaking down available energy supplies to power this process, the organism increases entropy, in respectful deference to the second law, while smuggling some portion of that energy into the work of maintaining its elevated position. This means that, unlike an ordinary stable object, an organism is not merely something that is robust to external energetic input, but something that constitutively depends upon this, insofar as it must continually consume and dissipate energy in order to keep itself going. The continued existence of the organism thus depends not upon keeping itself in one particular state or pattern of behaviour, but upon the ability to continually flow through viable regimes, where the dissipation of energy can be channelled into the creation of any configuration that supports the continuation of this process. In such a regard, the energy consumption of an organism is not like fuel to an engine, but is, as Jonas (2001) puts it, ‘the total mode of continuity (self-continuation) of the subject of life itself’ (p. 76, n. 13).

This might distinguish the phenomenon of life from the phenomenon of returning to local equilibrium, but energy dependence holds for any ongoing process in a world with friction. And so, distinguishing between organisms and other processes requires more than an appeal to precarious networks of interdependent processes, as proposed in Di Paolo & Thompson (2014). This would still be satisfied by any cyclical flow, such as the hydrological cycle (Mossio & Bich, 2017). There are no issues in describing such phenomena under DST, in terms of cyclical or toroidal attractors, rather than fixed points.

What is needed, as Sachs (2023) describes, is a more restrictive account of the specific way that processes depend on each other in living systems alone. One option is offered by Montévil and Mossio (2015) and Moreno and Mossio (2015), who have explored what an autopoiesis-inflected view of autonomy would look like if this were developed through closer observation of the features of cell metabolism that are left behind in cybernetic abstractions. This, they argue, means treating the constraints that enable these processes – described by our dynamical equations – as themselves being thermodynamic entities that are liable to degrade and in need of continual regeneration. The implications of the idea of living systems as being those that construct their own constraints have also been developed by, among others, Christensen and Hooker (2000), Collier (2004), Juarrero (1999), and Kauffman (2019). The most important point here, as Mossio and Bich (2017) suggest, is that it is only by looking at the causal regime of a cell in these terms that we can see what distinguishes biological organisation from various non-biological cycles, such as the cyclical interactions among pendulums.

As in a pendulum, the coherent pattern of a cell emerges when energy flows are constrained by some invariant structure to produce a macroscopic dynamical effect. This is just the general principle of causation by constraint that is at work in all organised mechanisms – the kind of causation that is realised by channelling the inherent downwards flow of water into a mill race and turning a meandering stream into the pressurised driver of a water wheel. What makes the cell different from the pendulum or the mill race, however, is the fact that the constraints that constitute it would not exist independently of their regeneration by the coherent network of other constraints and processes that they reciprocally enable. These enabled processes are not merely dynamic patterns. They are sets of reactions that synthesise (1) the membranes, pumps, pores, and turbines that both produce electrochemical gradients and channel the spontaneous flow of ions back down said gradients to power the metabolic work of their own synthesis and (2) the enzymes that catalytically accelerate these reactions to a rate sufficient to compensate for the speed at which said cellular structures tend to degrade.

The pendulum needs energy to oscillate, but it does not do anything to seek out this energy, nor does it need to. The constraints underpinning that tendency will persist in the absence of its manifestation: reintroduce the energy supply and the process will begin again. The pendulum’s parts are vulnerable only to external perturbations that will eventually disrupt their locally stable state and degrade them towards equilibrium. What stability they have is passive, rather than being dependent upon the activity that they enable. Indeed, the lifetime of a pendulum is

For a cell, it’s the opposite. The passive stability of most cellular structures is much shorter than the lifetime of the cell itself, and the extension of their duration directly depends on the cell’s continual activity. Take energy away from the cell and it will disintegrate much faster. Unlike for a pendulum, however, this disintegration of parts in starvation situations is not a straightforward path towards the loss of organisation. An increase in the degradation rate of one constraint may, for instance, act as a backup energy supply that powers movement toward a new nutrient source. A particularly clear example of this is found in certain species of marine bacteria, where starvation-induced loss in biomass, at a rate of as much as 9% per day, provides energy that is channelled into increased motility in seeking out new energy sources (Keegstra et al., 2025).

Insofar as the parameters constraining the particular movement of a robot are instantiated in the inherently stable silicon, copper, and trapped charges of computer memory, these constraints do not depend upon the activity they produce for their regeneration. The reason the dynamics of the machine or pendulum are not purposive, then, is that the stuff they are made of is not sufficiently unstable. This makes the overall pendulum more stable, in so far as it can exist for a long time without needing to ‘do’ anything, but also less stable, in so far as the energy released by its slow degradation cannot be channelled into the work of its own regeneration and repair. To sum up, contra Beer (2002), the purpose of a system is not what it does, but what it needs to do, and the robot need do nothing at all.

Crucially, to understand autonomy in terms of this more narrowly restrictive feature of cell metabolism does not mean that we have to restrict ourselves to a strict notion of autopoiesis at the molecular level alone. Moreno, Mossio and Montévil’s proposal builds on Stuart Kauffman’s (2003) work on autocatalysis at the origin of life, and catalysts are indeed a paradigmatic example of how restricting lower-level degrees of freedom creates new organisational patterns at a level above. Moving from the language of catalysis to the more general notion of constraint, however, opens up the possibility of identifying this circular dependence at other scales, such as the sensorimotor and the sociolinguistic. Indeed, insofar as neuroscientists employ dynamical equations, on my account, they are already implicitly appealing to constraints. To view the nervous system in terms of its constraint

A neural constraint is thus more abstract than a particular catalyst, in the sense that the ‘same’ constraint may be realised by many microphysical configurations. Unlike the under-constrained abstractions of a cognitive scientist looking for a neural code or a control parameter, however, the granularity of a constraint is determined internally to the system’s organisation, in terms of which range of configurations is compatible with its continuing to play the constraining role necessary for the generation of the processes that underpin the organisation that said constraint both produces and depends upon.

This material interdependence does not prevent the organisation of a constraint-closed system from being partially described in formal terms. Indeed, a second source of inspiration is Rosen’s (1991) category-theoretic account, in which constraints are analogous to functions that map from an input to an output. Unlike operational closure, however, which Varela and Bourgine (1992) presented as being an algebraic property, constraint closure is not a property of any mathematical formalisation in itself. Instead, a formalism may

The final point about such a description is that it does not require that we can fix a particular set of mathematical operations that defines any particular organism – which would raise exactly the same problems as defining organisms in terms of a particular set of dynamical equations. Instead, the functions and processes involved may be defined only in terms of their relations to each other, and so, as DiFrisco and Mossio (2020) argue, ‘The particular set of constraints and their inter-relations […] can change over time as long as later regimes causally depend on earlier regimes. Hence, what must remain the same over time is closure construed as a general theoretical principle and not as a specific regime of closure’ (p. 183). This minimalism allows the organisational requirement to apply to an organism at all times, but only in so much as it applies to any organism at any time. To be useful for scientific practice, more specific models will naturally be necessary. All that looking at such specific models from this perspective requires is the recognition that these are only contingent manifestations of the ongoing process of constraint closure and that such models could benefit from explicitly incorporating this historical and provisional nature, perhaps in something like the manner suggested by Montévil and Mossio (2020).

For all of these reasons, then, constraint closure seems closest to offering what we’re looking for: namely, a description of autonomy that is preserved through transformations of specific organisational structures, one that can be applied beyond a single cell but that is not trivially applicable to arbitrary systems. By moving from a catalyst to the more general (but not

The Purpose of a System Is What It Needs to Do

The cybernetic neuroscientist seeks regularity in variation and is puzzled by the brain’s mercurial tendencies when faced with identical inputs. To the cellular biologist, however, randomness is increasingly expected, with the inherent noisiness of microscopic activity being important in everything from driving molecular transport (Astumian & Hänggi, 2002; Hoffmann, 2012) to determining cell fates (Losick & Desplan, 2008). Indeed, Soto et al. (2016) propose that while the default state of a physical object is inertia, such that it is variation that demands explanation, in biological systems proliferation and variation are the defaults and the task is to explain how these are constrained to support continued viability. Accordingly, cell biologists are increasingly aware of the dangers of presuming that irregularity equals irrelevance. As Altschuler and Wu (2010) state, ‘the challenge is no longer to demonstrate that populations of “seemingly identical” cells are heterogeneous. […] Rather, the daunting challenge is to determine which, if any, components of observed cellular heterogeneity serve a biological function or contain meaningful information’ (p. 559).

In section 6, I argued that the question of whether variation is purposive or functional cannot be answered by seeking some deeper regularity underlying that variation. If we try hard enough, we will always be able to gerrymander

When it comes to multicellular neural networks, identifying how these depend upon the activities and interactions that they collectively produce is far less straightforward than for a single cell. Nonetheless, scientists and philosophers are increasingly concerned about the entanglement of metabolic and neurodynamic processes, and there is a growing suspicion that cognition may not be so detachable from the distinctive material and thermodynamic properties of the brain as once believed (Cao, 2022; Seth, 2025; Stapleton, 2016). One particularly interesting example highlighted by this perspective is the question of how memories that last for decades can be underpinned by cellular structures with lifetimes as short as a few minutes – a question that depends on unpicking the assumption of inherent stability that, as discussed in section 6, has been implicit in abstract synaptic models (Crick, 1984; Meagher, 2014; Mongillo et al., 2017). This de-abstraction turns the Hebbian model on its head. Rather than asking how activity induces plastic change in a stable structure, the question becomes how an intrinsically unstable structure is stabilised through the activity patterns that it produces as an enabling constraint (Gershman, 2023; Gershman et al., 2021).

The problem of behaviour individuation is one argument for why the increased complexity-cost of abandoning computational functionalism for a thermodynamically and metabolically entangled approach to cognitive phenomena is worth paying. And so, for all that the notion of biological autonomy is deeply entangled with metaphysical questions around identity, individuation, and normativity that troubled philosophers such as Kant, Jonas and Canguilhem, it is not merely a post-scientific ‘philosophy of nature’ but also a pre-scientific framework that can inform the direction of particular scientific research programmes.

Whether such research programmes will be productive remains to be determined. Still, we can find a hopeful demonstration of the potential scientific fertility of adopting alternative philosophical standpoints in Soto and Sonnenschein’s (2021) work on the tissue organisation field theory of cancer. Inspired by the idea of a proliferative default state, this proposes that the causes of cancer are best understood, not in terms of somatic mutations in cancer cells themselves, but rather in terms of a failure of surrounding tissues to appropriately constrain these cells’ inherent tendency to proliferate – a constraint that is necessary to maintain the closure of the larger multicellular organisation. Whether correct or incorrect, this is an empirical hypothesis that is situated within the kind of organicist stance I’ve described, and which generates both predictions and possible interventions. If enactive cognitive science is to go beyond the (entirely worthwhile) exploration of the possibilities afforded by replacing computational formalisms with dynamical ones, then an organicist philosophy of nature offers a rich place for these scientific research programmes to start.

Conclusion

I’ve presented constraint closure as an organicist account of life in so far as it is explicitly intended to account for a teleological dimension that is unique to living organisms (Mossio & Bich, 2017). Whether one thinks it has succeeded in describing anything that deserves to be called ‘

This normativity is far more generative than suggested on a simple homeostatic picture, for the purpose of life is not to secure the invariance of any properties or parts that are specific to that individual. And so, the identity of an individual organism is not the kind of thing a dynamical model allows us to define in advance, once and for all. Understanding the distinctions formalised in any particular model about which activities count as behaviours with a particular purpose, and which do not, requires stepping outside that model to look at the experiential background that informed its construction. This background, I’ve argued, is our understanding of how certain activities play a constitutive role in supporting the life of the system in question. At the same time, this is not just a phenomenological given and can itself be analysed in terms of the understanding of autonomy offered by constraint closure.

This account may place limits on our ability to generalise about purposes beyond a specific encounter with a specific organism. It may make purposes far less ‘metaphysically uncomplicated’ than the cyberneticist hoped. Nonetheless, insofar as neither the natural, nor our ability to cognise it, is exhausted by what is mathematically formalisable, such a view is as naturalistic as anything else has a claim to be. By thus explicating

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Leverhulme Trust (ECF-2023-636).

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.