Abstract

External representations (

1. Introduction

External representations (

Modifying the definition of Harvey (2008), and taking a broad biosemiotic perspective, an external representation (

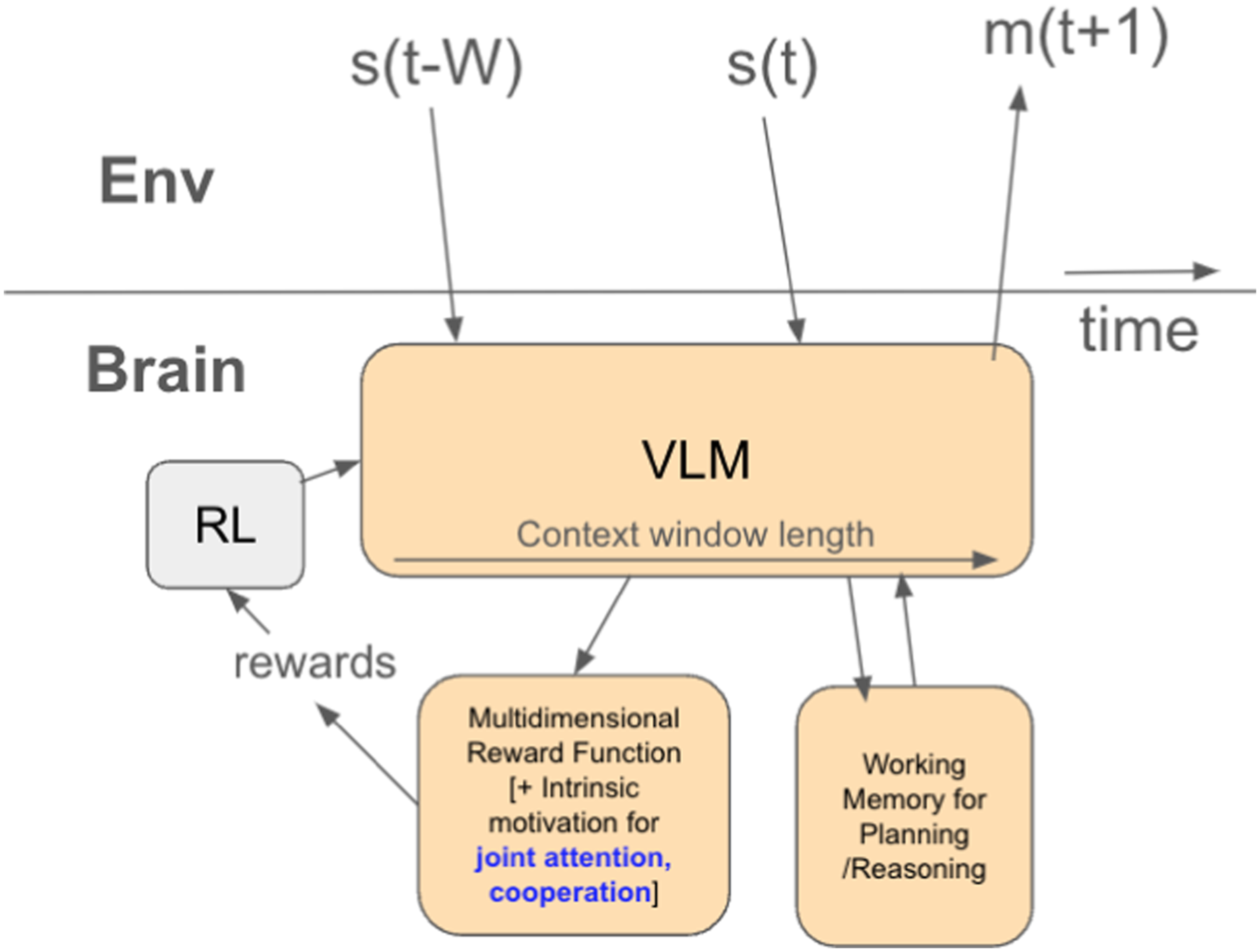

In contrast to nativist claims that propositional thought needs special neural symbol-processing machinery (Pinker, 1994), we take, along with others before us, a more constructivist position (Dennett, 2008; Heyes, 2018; Moore, 2021). Contrary to Harvey (2008), we do not believe it is theoretically impossible for special neural symbol-processing machinery to have come into existence; after all, the triplet code came to be about amino acids, genes about their phenotypes, and neural firing patterns about actions. This shows that there is a general tendency for

Figure 1: Minimal neural requirements for open-ended ER production, capable of unbounded discourse, free retrieval, and infinite generativity (van Mazijk, 2022b). It consists of a suitable RL-modulated, multi-modal model trained for next-step prediction (e.g. a vision-language model (VLM) or another multimodal transformer), a multidimensional value system that includes intrinsic motivation (reward production) for establishing cooperative joint attention, and a prefrontal working memory for imagination (needed for running policies offline). With this core, innate, minimal neural machinery, embodied and enactive metaplasticity can be ignited, which is then sufficient for open-ended ER use (Roberts, 2022). The whole system can be thought of as the interpreter in Peirce's triadic framework for understanding how a sign and an object can have meaning (Iliopoulos, 2016a).

2. Overview of external representations

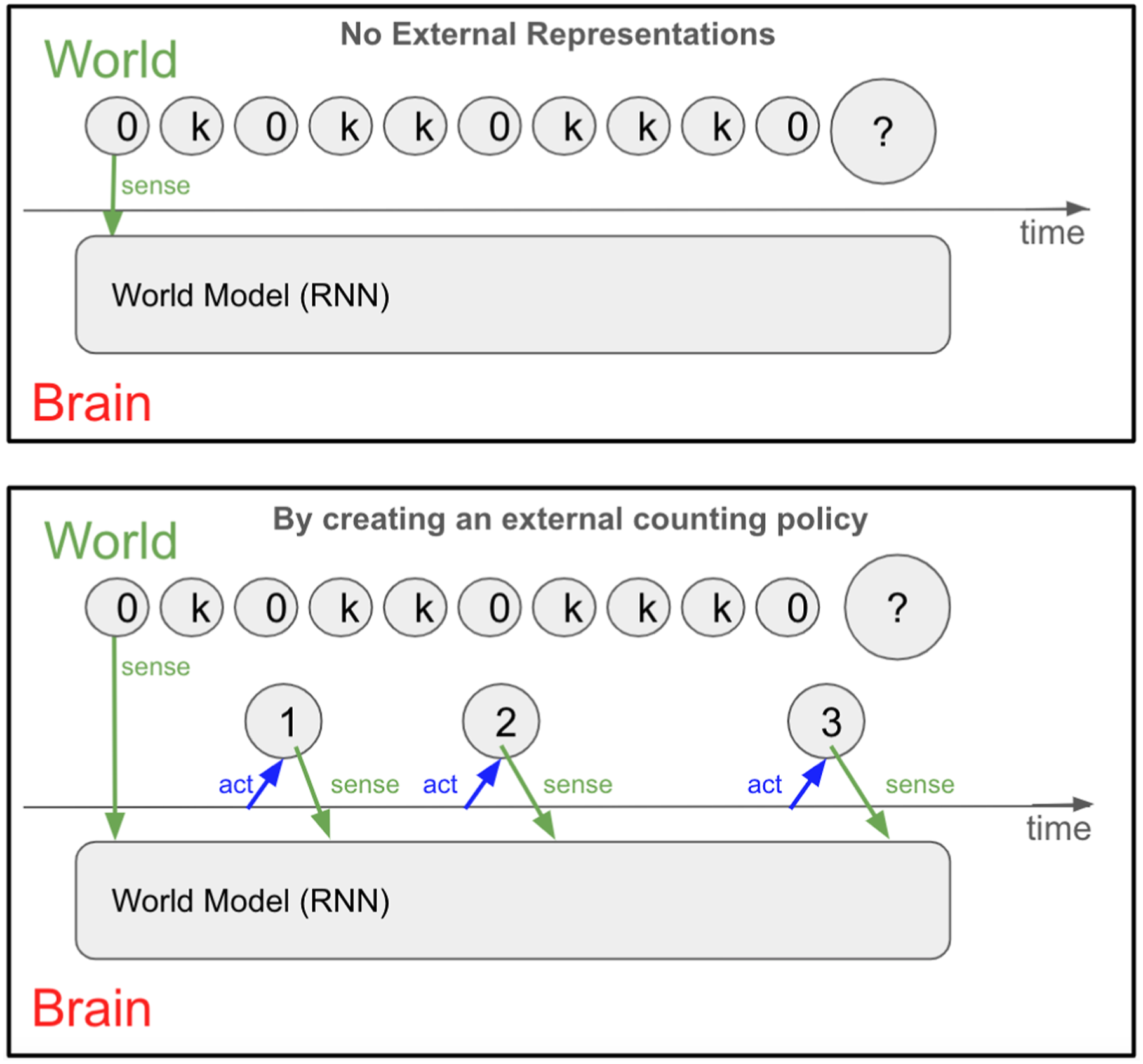

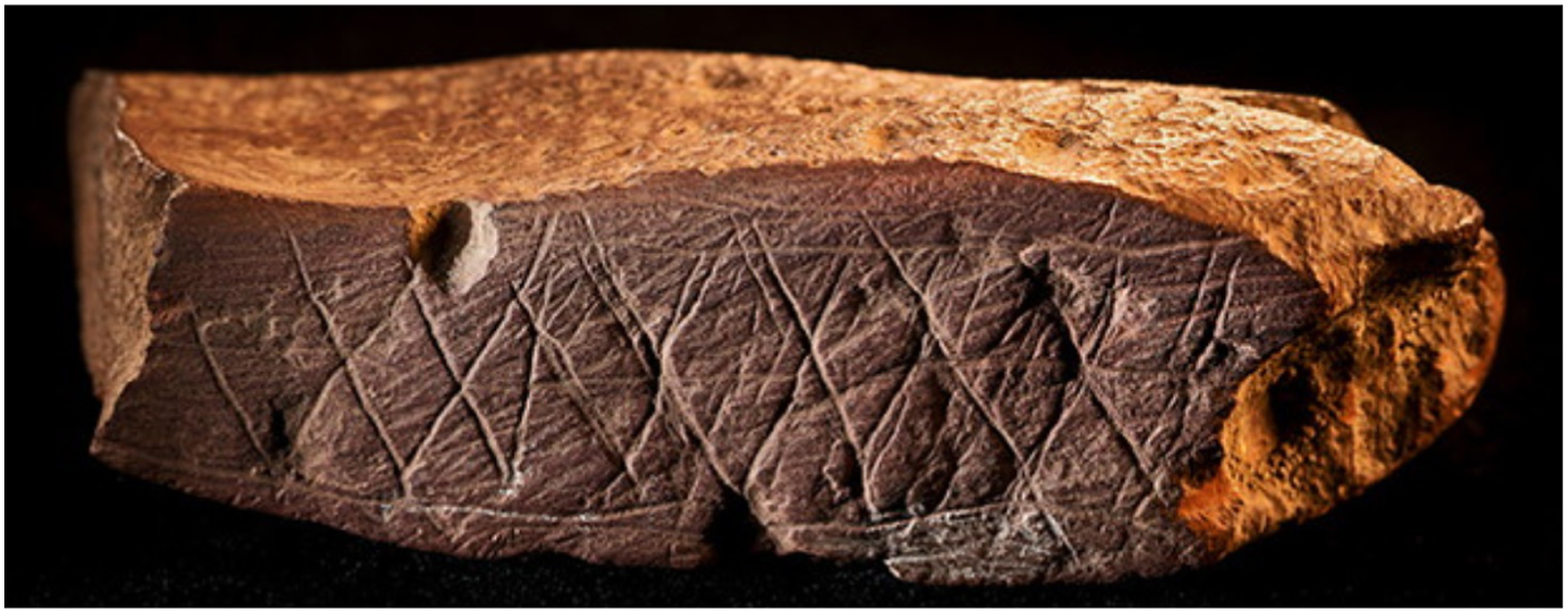

We claim that propositional thought is a sensorimotor policy for the creation and manipulation of

The process by which a policy for constructing

By using an external counter object and an ‘increment’ policy, it is possible to learn a simple rule for generalizable prediction which, as shown by Evans (2022), is not learnable by a standard recurrent neural network (RNN) or a long short-term memory network (LSTM).

Why are humans unique in their motivation and efficiency in creating open-ended, novel, effective

The latest evidence suggests that the evolutionary transition to upright posture and gait began between 6 and 4 million years ago (Harcourt-Smith, 2010). There is evidence for ancestral species inhabiting marshes in the Miocene (Niemitz, 2010), with hominids having to adapt to seasonal mosaic environments, including by wading in wetlands (Domínguez-Rodrigo, 2014). Such rapidly varying environments imposed strong selection pressure for success at diverse hunting and foraging tasks, requiring improved abductive reasoning skills (Potts, 1998a,b; Ambrose, 1998; Charnov et al., 1976).

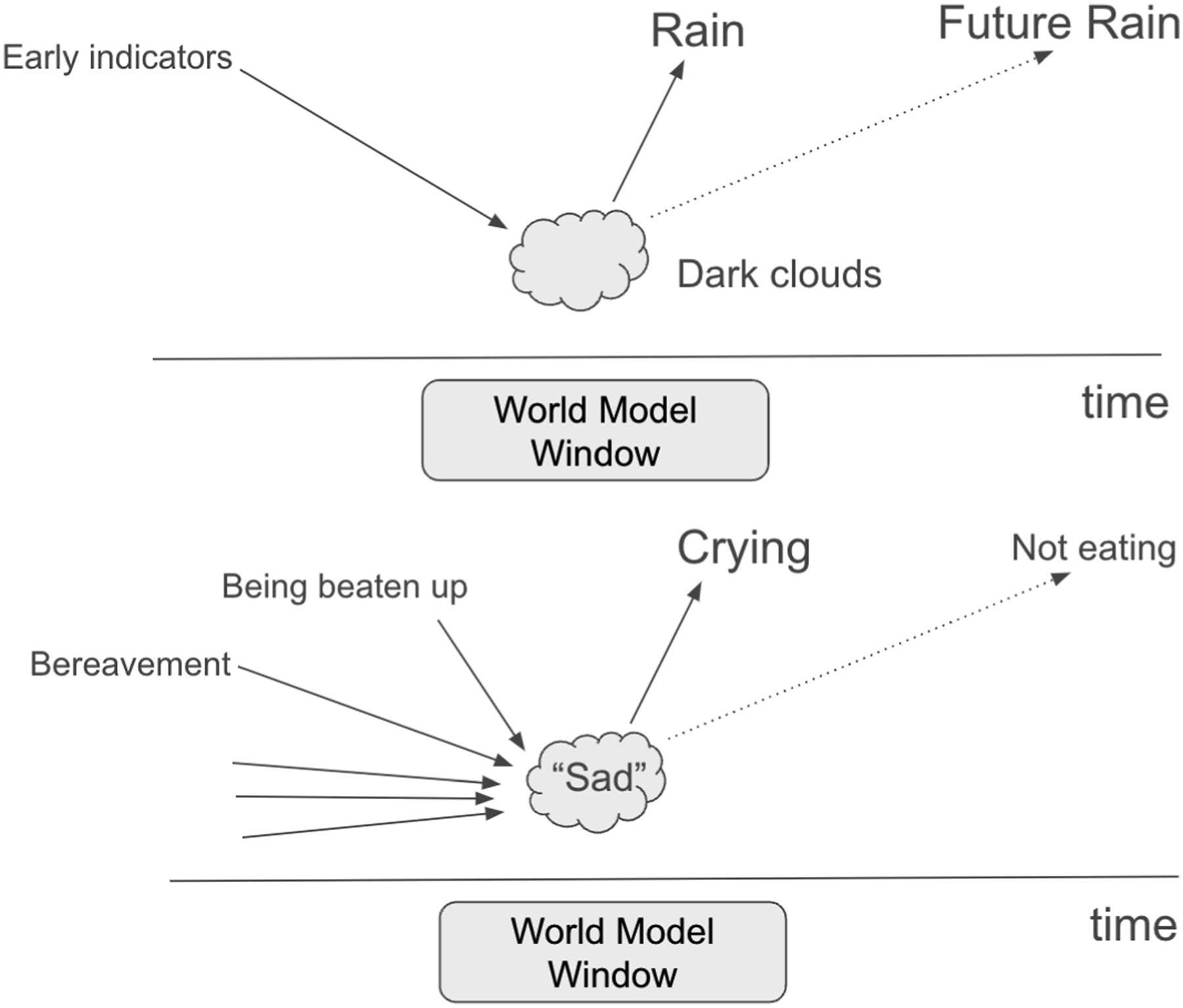

With the ability to make inferences from complex natural signs about invisible topics, it was then a small step to

From bush reading to mind-reading. Dark clouds are to rain what the word ‘sad’ is to tears. Words are the naturalization of meaning. This is contrary to the position of Bar-On and Moore (2017), who claim that a recipient capable of understanding natural meaning (i.e. meaning without an intentional sender) is fundamentally different from one capable of understanding non-natural meaning. However, our view is also distinct from that of Fitch (2010), as we propose that a special focus of causal inference on currently invisible antecedents and consequences was needed, and this may have required pre-Gricean naming of antecedents and consequences while they were visible, as in Figure 2, so that associations could be made between events displaced in time.

Once such

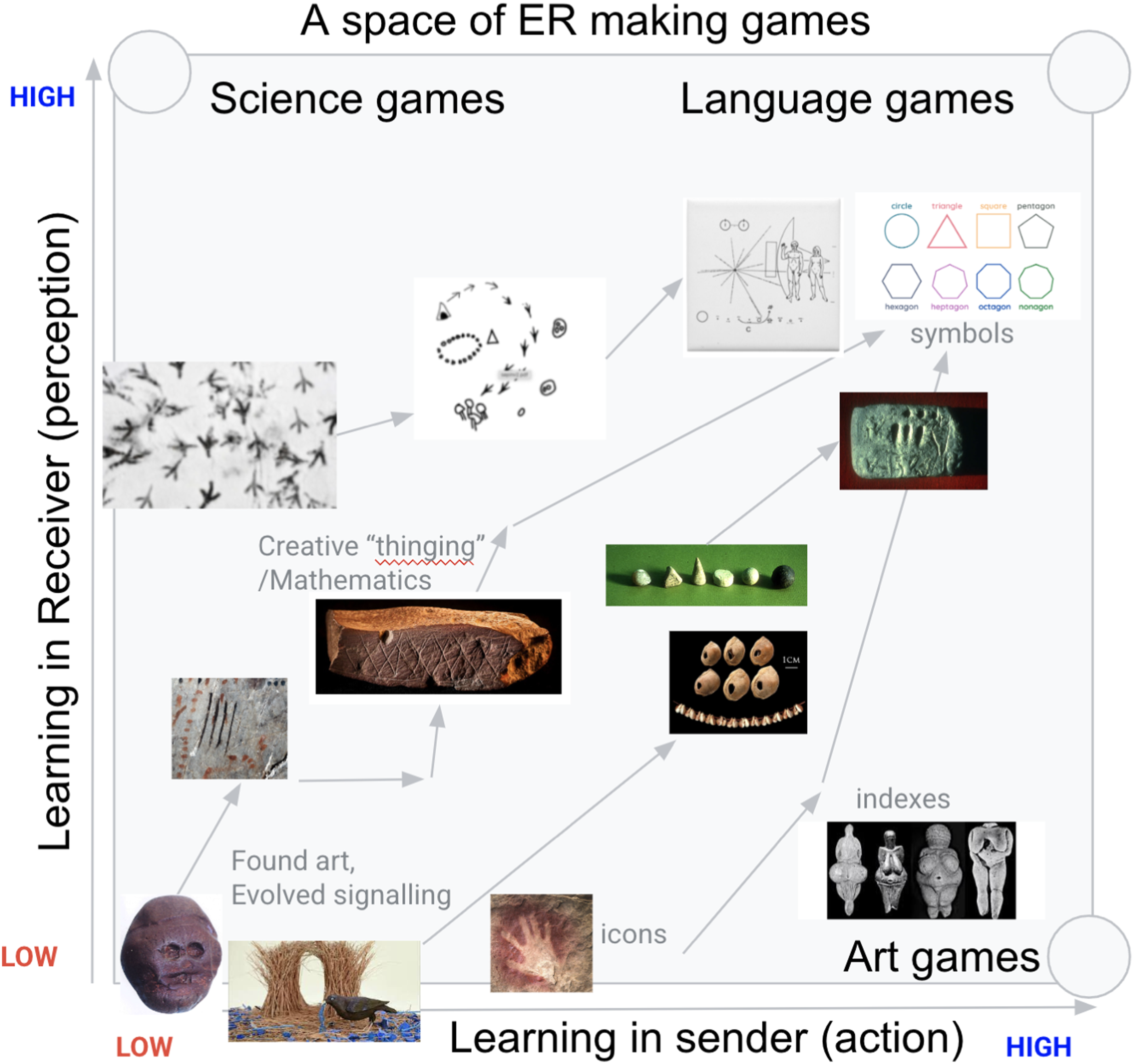

We define a space of games for ER creation, illustrated in Figure 5, with prototypical instances being:

Figure 5: A space of ER creation games organized by the complexity and dependencies of learning required during ontogeny in the sender and receiver. In science games, there is no learning in the sender (there is no sender); only the receiver must construct a model of the observed world. In art games, there is no learning by the recipient; the sender executes a search in order to manipulate the receiver without any need for their cooperation, that is, with icons and indexes. In language games, both sender and receiver must learn on the basis of shared reward to achieve their communicative goals. From this process, arbitrary symbolic conventions can arise. At the origin, in the bottom-left, we have pure Carnapian communication. Here evolution has done the heavy lifting to produce effective signals and responses in a hard-wired manner. No learning happens in either sender or receiver. In the special case of found art, the ER arises by chance alone.

3. The constructivist nativist tension

3.1. Nativism

Nativist arguments for the origin of language and thought propose that, in some form or other, we have innate, unlearned, genetically evolved, specialist internal representations in the brain for symbol processing that are about language. These are said to augment the connectionist mechanisms of Hebbian learning and reinforcement learning present in non-human primates.

Nativist explanations come in various flavours, for example, the language of thought hypothesis describes internal symbolic representations as mentalese, with its own grammar and semantics (Fodor, 1975; Fodor and Pylyshyn, 1988). The physical symbol systems hypothesis states that tangible symbols reside in the neural circuitry of the brain, and are necessary for human level intelligence (Newell and Simon, 1976). Chomsky proposes an innate neural mechanism implementing a universal grammar, shared by all humans (Chomsky, 2014), and Pinker proposes a more gradualist genetic theory of language evolution, in short that we have a language instinct (Pinker, 1994). Cosmides and Tooby view the mind as composed of multiple, genetically specialized cognitive modules, a computational system primarily internal and brain-based, constructing internal representations of the external world, with symbols seen as functions of these internal cognitive processes (Cosmides et al., 1992).

More recently, Tenenbaum claims that the brain contains probabilistic, structured, compositional programs for internally representing knowledge, which allow causal reasoning that is not possible with connectionist systems alone (Tenenbaum et al., 2006, 2011). Gary Marcus outlines the limitations of neural networks in generalizing out of distribution, specifically their inability to form genuine hidden causal models of phenomena, and seeks solutions in symbolic neural architectures (Marcus, 2003).

An attempt to integrate sub-symbolic connectionist neural mechanisms with the physical symbol systems referred to by Fodor reached its apogee in the Neural Turing Machine (Graves et al., 2014). This was an implementation of a Turing machine, with a memory tape and read and write heads, that could be trained by backpropagation of error and gradient descent. Biologically plausible variants of backpropagation exist (Lillicrap et al., 2020). Such neuro-symbolic architectures add inductive biases to standard connectionist architectures (Spies et al., 2022; Shanahan and Mitchell, 2022). These architectures attempt to integrate the physical symbol system hypothesis (Newell and Simon, 1976) with connectionist models (d’Avila Garcez and Lamb, 2020; Fernando, 2011).
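To make concrete what ‘trainable by gradient descent’ means for a tape-like memory, the following is a minimal Python sketch of content-based reading and writing in the style of the Neural Turing Machine (Graves et al., 2014). It is a simplified illustration, not the published architecture: it omits the controller network, location-based addressing (interpolation, shifting, sharpening), and training itself. The function names are hypothetical. Every step is a smooth function of the key, the memory, and the erase and add vectors, which is what allows backpropagation to reach the memory operations.

```python
import math

def cosine(u, v, eps=1e-8):
    """Cosine similarity between two vectors (eps avoids division by zero)."""
    dot = sum(a * b for a, b in zip(u, v))
    norms = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / (norms + eps)

def content_weights(memory, key, beta):
    """Soft attention over memory rows: softmax of beta * cosine(key, row)."""
    scores = [beta * cosine(key, row) for row in memory]
    top = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def read(memory, weights):
    """Read vector: the attention-weighted sum of memory rows."""
    width = len(memory[0])
    return [sum(w * row[j] for w, row in zip(weights, memory)) for j in range(width)]

def write(memory, weights, erase, add):
    """Erase-then-add update, element-wise: M[i] <- M[i] * (1 - w[i] * e) + w[i] * a."""
    return [
        [m * (1 - w * e) + w * a for m, e, a in zip(row, erase, add)]
        for w, row in zip(weights, memory)
    ]

# A three-slot 'tape' of one-hot vectors; the key matches slot 1.
memory = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
weights = content_weights(memory, key=[0.0, 1.0, 0.0], beta=50.0)
print([round(w, 3) for w in weights])  # [0.0, 1.0, 0.0]
print(read(memory, weights))
memory = write(memory, weights, erase=[1.0, 1.0, 1.0], add=[5.0, 5.0, 5.0])
```

With a large key strength `beta`, the soft weighting behaves almost like a discrete head pointing at a single tape cell; smaller values blur the read and write across cells, which keeps gradients informative during training.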

However, there is now considerable evidence against nativist explanations for language and thought, or at least a weakening of existing evidence. There is a wealth of stimulus, for example, social shaping by adults of infants’ and children’s utterances, that goes counter to Chomsky’s poverty of stimulus argument, which states that children don’t have enough data to learn language by associative mechanisms.

The evidence for critical periods (Lenneberg, 1967), that is, specific times in development during which language learning is possible, is weak. Even if children can learn phonemes that interfere with the subsequent learning of novel phonemes as adults, this can be taken as evidence for domain-general cerebral plasticity (Cheour et al., 1998; Kuhl, 2004). Evidence from severely abused and neglected children not exposed to language in fact argues that language can be learned later in life. In the case of Genie, for instance, she was able to learn simple grammar, such as the prepositions ‘under’, ‘next to’, ‘beside’, and ‘over’, and developed a considerable vocabulary (Fromkin et al., 1974). She was able to produce three- and four-word sentences at the time of writing and continued to improve, despite her horrific treatment. In any case, Heyes has argued that the existence of a critical period does not in itself provide evidence for or against a constructivist hypothesis, because if the critical period is due to domain-general windows of cerebral plasticity, both nativist and constructivist accounts can explain a lack of language acquisition (Heyes, 2018).

There is no convincing evidence for brain regions that are uniquely specialized for language (Anderson, 2008; Poldrack, 2006), with increasing emphasis on a distributed language connectome broader than Broca’s and Wernicke’s areas as traditionally formulated in the classic model (Tremblay and Dick, 2016). Language learning ability is instead strongly correlated with general sequence learning abilities (Christiansen and MacDonald, 2009; Misyak and Christiansen, 2012).

Also, the effectiveness of explicit theory of mind (ToM) is correlated with children’s exposure to particular ways of talking about others, that is, the use of sentential complement syntax and the frequency with which parents use mental state verbs (de Villiers and de Villiers, 2000). This makes it more likely that it is a culturally inherited set of linguistic tools that allows children to think about propositional attitudes, rather than an innate explicit ToM module in the brain (Moore, 2021; Hutto, 2012), although an implicit ToM does seem to exist in all primates (Butterfill and Apperly, 2013).

3.2. Constructivism

In the opposing camp, constructivists broadly emphasize that language and thought are constructed to varying degrees during developmental interactions between domain-general neural learning mechanisms and the environment, thus avoiding the heavy burden that nativism puts on genetic mechanisms (Tomasello, 1995). The term constructivism stems from Piaget, Bruner, and Vygotsky’s notions that childhood learning results in concepts emerging through experience, for example, the concepts of noun, verb, and object persistence (So, 1964; Bruner, 1974; Vygotsky et al., 1994). Connectionism later sought to operationalize these explanations (Elman, 1996).

Constructivist explanations come in various flavours which differ in the proposed mechanisms and substrates for acquisition of novel cognitive functionalities such as language. Piaget’s so-called radical or cognitive constructivism emphasizes individual self-constructed learning mechanisms sparked by the child’s brain in a bi-directional interaction with the environment involving assimilation and accommodation, rather than socially learned policies (Von Glasersfeld, 2013), whereas Vygotsky’s (and Bruner’s) social constructivism emphasizes the role of more pragmatic social interactions with others and the environment (Vygotsky et al., 1994; Bruner, 1974).

Quartz and Sejnowski are on the Piagetian side when they describe themselves as neural constructivists, proposing that structural (hardware) changes in neural networks, caused primarily by synaptic, axonal, and dendritic growth, could create non-stationary neural substrates for novel internal representations (Quartz and Sejnowski, 1997). Until the very recent and unexpected success of large language models, modern machine learning also fell on Piaget’s neural constructivist side, seeking neural mechanisms for internal representation formation. The Beta-VAE is an example of this: a neural network with learning rules designed to extract disentangled visual concepts from images, such as lighting direction, skin colour, or age (Higgins et al., 2016). In it, each ‘concept’ is represented internally as the activation of a single neuron in a hidden layer.
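For reference, the Beta-VAE objective of Higgins et al. (2016) is the standard variational autoencoder evidence lower bound with its KL term weighted by a factor $\beta$. Setting $\beta > 1$ pressures the encoder $q_\phi(z \mid x)$ towards a factorized prior $p(z) = \mathcal{N}(0, I)$, which is the mechanism intended to yield one ‘concept’ per latent unit:

$$\mathcal{L}(\theta, \phi; x, \beta) = \mathbb{E}_{q_\phi(z \mid x)}\big[\log p_\theta(x \mid z)\big] - \beta \, D_{\mathrm{KL}}\big(q_\phi(z \mid x) \,\|\, p(z)\big)$$

With $\beta = 1$ this reduces to the ordinary VAE bound: the first term rewards accurate reconstruction of the image $x$ from the latent code $z$, and the second penalizes codes that depart from the prior.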

In contrast to Piaget’s cognitivist constructivism and its neural internal representationalist extensions described above, our approach champions enactive constructivism, which is more in keeping with Vygotsky (Baerveldt and Verheggen, 1999). Instead of claiming, as the Beta-VAE does for instance, that concepts are primarily internal representations formed in the brain, we propose that concepts are constituted primarily by having sensorimotor policies. These policies allow us to modify things like lighting direction by moving lamps or changing the shapes of objects through construction, independently of each other. We can change the shapes of things independently of the directions by which they are illuminated, suggesting that these concepts of shape and illumination are distinct. Our grasp of a concept is demonstrated by enacting policies in the world itself. The demonstration of localized activation in the brain associated with an enacted concept is not logically necessary for demonstrating that we have such a concept (Ward et al., 2017).

A concept is best understood as a sensorimotor policy for its enactment, rather than as an internal representation in the brain. The enactive constructivist account says the brain is not a computational device that processes internal representations before externalizing them through behaviour (Iliopoulos, 2016b). In keeping with this position, Christiansen and Chater have outlined a cultural theory of language evolution that is broadly consistent with an enactive constructivist view, in which linguistic constructions are the units of cultural evolution (Christiansen and Chater, 2016). Similarly, Overmann has analysed how writing systems culturally evolve, with tendencies towards abstraction and automatization. One of the main features of material constructions (ERs) is that they facilitate sustained, communal reuse, which creates new stable niches in which salient features can become mutually agreed upon, eventually leading to neural changes by the Baldwin effect (Overmann, 2021).

The most convincing evidence for our enactive constructivist position is the remarkable success of large language models (LLMs) such as GPT-4 (OpenAI, 2023) and Gemini (Team et al., 2023). The capacity of LLMs for language use does not depend on specialist neural symbol processing machinery (Fernando et al., 2023; Wei et al., 2022). It depends on being exposed to the right kinds of language data. They provide a proof in principle that associative learning is sufficient for complex language use. Counter to Chomsky, nothing more than efficient attention-guided Hebbian associative learning, supervised by prediction of the next token in the text sequence, seems to be necessary for learning grammar (Vaswani et al., 2017; Radford et al., 2021). All the ‘cleverness’ displayed by these models is in the conditional structure of language itself.

Both humans and LLMs exhibit similar ‘semantic content effects’, that is, failures to achieve systematic consistency in abstract reasoning tasks. Both reason better about common and plausible semantic settings grounded in realistic situations (Dasgupta et al., 2022). These new results raise the possibility that human failures in reasoning tasks that are not ‘materially anchored’ by real-world examples may be explained by LLM-like training biases, rather than requiring more complex theories of conceptual blends (D’Andrade, 1989; Hutchins, 2005). LLM activations predict nearly all of the explainable variance in human neural responses to sentences (Schrimpf et al., 2021), and surprisal (a probabilistic measure of the amount of new information conveyed by a word) in LLMs predicts human reading times (Goodkind and Bicknell, 2018), all suggesting similar mechanisms of neural processing in humans and LLMs. Theory of mind tasks are harder for LLMs in a single step (Trott et al., 2023), but just as mentalization in humans may require multiple steps of reasoning (System 2 processes; Kahneman, 2011), for example, explicitly prompting oneself to ask why someone might have acted as they did, ToM in LLMs may also improve through learning better multi-step mentalization strategies (Kosinski, 2023; Moghaddam and Honey, 2023; Sahoo et al., 2024).
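Surprisal is the negative log-probability of a word given its preceding context, $-\log_2 p(w_t \mid w_{<t})$, measured in bits; up to the choice of logarithm base, it is also the per-token term that next-token prediction training minimizes. The minimal Python sketch below computes it under a deliberately simple assumption: a maximum-likelihood bigram model over a toy corpus stands in for an LLM, so the context is only the previous word. The corpus and the function names are hypothetical illustrations, not the models or data used in the studies cited above.

```python
import math
from collections import Counter, defaultdict

def train_bigram(tokens):
    """Count how often each token follows each preceding token."""
    counts = defaultdict(Counter)
    for prev, nxt in zip(tokens, tokens[1:]):
        counts[prev][nxt] += 1
    return counts

def surprisal(counts, prev, word):
    """Surprisal in bits, -log2 p(word | prev), from maximum-likelihood bigram estimates."""
    total = sum(counts[prev].values())
    hits = counts[prev][word]
    if total == 0 or hits == 0:
        return math.inf  # unseen continuation: zero probability under this unsmoothed model
    return math.log2(total / hits)  # equivalent to -log2(hits / total)

tokens = "the cat sat . the cat ran .".split()
counts = train_bigram(tokens)
print(surprisal(counts, "the", "cat"))  # 0.0
print(surprisal(counts, "cat", "sat"))  # 1.0
print(surprisal(counts, "cat", "."))    # inf
```

A fully predictable word carries zero bits, a word with probability one half carries one bit, and an unseen continuation has infinite surprisal under this unsmoothed model, which is one reason practical language models smooth or learn their probabilities rather than counting.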

Furthermore, the same transformer architecture that can model language in an LLM is also able to model the ‘grammar’ of images and the ‘grammar’ of robotic actions (Brohan et al., 2023), suggesting that our brain may implement something akin to a giant multi-modal transformer that predicts the next state or action in a sequence of rich multi-modal contexts. We appear to possess a domain-general, generic world model capable of next-step prediction (Clark, 2013). Prior to transformers, it was believed that specific algorithms for internal concept formation, such as the Beta-VAE, were needed (Higgins et al., 2016), but transformers have made these machine learning algorithms largely obsolete, suggesting instead that symbols and concepts arise enactively from a tight sensorimotor coupling with the world in a continuous sensorimotor loop.

3.3. Minimal neural requirements

Figure 1 shows a minimal set of neural mechanisms needed for our enactive constructivist argument. The figure shows (1) a multi-modal transformer capable of associative learning within a maximum context window, which implements a sensorimotor policy (Vaswani et al., 2017). This is capable of operating in two modes: engaged and neutral. When engaged, it senses and acts in the world. When in neutral, it runs the same process but without explicit motor execution of acts, that is, offline imagination (Hamrick, 2019). (2) An intrinsic motivation for joint attention through gaze following (Mundy, 2018), which is lacking in non-human primates (Tomasello, 2008; Savage-Rumbaugh and Lewin, 1994). (3) A reinforcement learning system capable of sensorimotor policy improvement (Niv, 2009), and (4) a multi-objective homeostatic reward system which implements a handful of intrinsic motivations for innate goals (Keramati and Gutkin, 2014). We aim to explain a plausible historical trajectory to open-ended ER use by means of only the algorithmic functionality above, combined with a set of selective pressures or ‘games’ that this machinery was selected for playing and which were culturally evolved.

The critical principle to appreciate from Figure 1 is that so-called ‘neural representations’ or ‘neurological substrates’ are best seen as neural world models that output predictions or commands at time t+1, conditioned on the sensory state of the world at time t. This tightly coupled intentional arc generates words, images, and actions step by step, rather than ballistically (i.e. in one go, without a sensorimotor feedback loop) outputting a fully formed externalization of something that was first internally represented in a fully formed manner. What is implemented by the neural substrate is a sensorimotor policy, which learns a sensorimotor sequence from sensory state s(t) to motor output m(t+1).
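The contrast between step-by-step and ballistic production can be illustrated with a toy reaching task. The Python sketch below is a hypothetical illustration, not a model of any neural system: a policy maps the sensed state s(t) to a motor command m(t+1), one unit step towards a goal, and the ‘world’ contains an unexpected push that the internal forward model does not know about. In engaged mode the loop senses the consequence of each act; in neutral mode it runs the same policy on the internal model only (offline imagination); the ballistic variant plans the whole command sequence in imagination and then executes it without feedback. All function names and the perturbation are assumptions made for the example.

```python
def policy(state, goal):
    """Map the sensed state s(t) to a motor command m(t+1): one unit step toward the goal."""
    if state < goal:
        return 1
    if state > goal:
        return -1
    return 0

def world_step(state, command, t, push_at=3):
    """Hypothetical world: applies the command, plus an unexpected push of -2 at step push_at."""
    return state + command + (-2 if t == push_at else 0)

def model_step(state, command):
    """Hypothetical internal forward model: knows the command's effect but not the push."""
    return state + command

def run_loop(start, goal, steps, engaged=True):
    """Closed sensorimotor loop: engaged mode acts in the world, neutral mode only imagines."""
    state = start
    for t in range(steps):
        command = policy(state, goal)  # m(t+1) conditioned on s(t)
        state = world_step(state, command, t) if engaged else model_step(state, command)
    return state

def run_ballistic(start, goal, steps):
    """Plan every command in imagination first, then execute open-loop, without sensing."""
    plan, imagined = [], start
    for _ in range(steps):
        command = policy(imagined, goal)
        plan.append(command)
        imagined = model_step(imagined, command)
    state = start
    for t, command in enumerate(plan):
        state = world_step(state, command, t)
    return state

print(run_loop(0, 5, 10))                 # 5: recovers from the push
print(run_loop(0, 5, 10, engaged=False))  # 5: imagined run, no push in the model
print(run_ballistic(0, 5, 10))            # 3: never senses the push
```

The same `policy` function serves both modes; only the source of the next state changes, which is one simple way to read the claim that imagination runs the engaged process without motor execution.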

3.4. Neuroscientific evidence

Evidence from neuroscience can be interpreted in a manner consistent with Figure 1. We focus on the most promising candidates for the neurological substrate of a VLM. These are the associative areas in the brain that are well-positioned to integrate sensory and motor information to learn sensorimotor sequences.

Whilst there is disagreement about the extent to which human brains are allometrically scaled-up versions of non-human primate brains (Herculano-Houzel, 2012), recent evidence shows that in humans a larger share of the cortical surface is allocated to association cortex (Preuss, 2017), with changes in the connectivity of the executive prefrontal cortex and of language areas (Rilling, 2014). Indeed, studies of skulls show enlarged parietal lobes in humans compared to Neanderthals (Pereira-Pedro et al., 2020). The parietal cortex is substantially expanded in the

Compared to that of monkeys, the human intraparietal sulcus (IPS) shows greater representational complexity for 2D and 3D perceptual object concepts (Orban et al., 2006), ranging from simple to abstract (Iriki and Taoka, 2012). Parietal cortex lesions result in tool and constructional apraxias (Bruner et al., 2023), consistent with failures of goal-directed (causal/intentional) learning of complex sensorimotor sequences. Such learning involves the observation and execution of tool actions (Orban and Caruana, 2014), which is critical for imitation and therefore for the cultural evolution of tool use (Stout and Hecht, 2017), and possibly also for more innate abilities such as numerosity (Coolidge and Overmann, 2012). Failures to understand when and how to execute a sensorimotor sequence, such as finger counting (Andres et al., 2008), may be failures of goal-directed policies that use perceptual concepts.

When chimpanzees were tasked with copying configurations of three rectangular blocks arranged as a ‘line’, a ‘cross-stack’, and an ‘arch’, they could not do it (Potì et al., 2009). We propose that this inability arises because chimpanzees lack the perceptual concepts just listed (e.g. in the form of words), agreed with the task setter, that would allow them to set appropriate, measurable perceptual goals for success. This is not to deny that non-human primates can undertake goal-directed behaviour, but to say that their goals are largely innate or self-generated. Their ability to acquire new socially learned goals is severely limited compared to that of language users. In fact, the evidence for copying is (to our knowledge) limited to learning novel policies to execute old goals already possessed by the recipient, not the communication of explicit new goals themselves (Hobaiter and Byrne, 2010; Horner and Whiten, 2005; Van De Waal et al., 2014; Whiten et al., 2009).

As a note of caution against taking neurological properties as evidence for the nativist position, specific cortical enlargements may have both genetic and enactive explanations due to structural plasticity (Schmidt et al., 2021). Brain regions have been shown to enlarge with video game play (Colom et al., 2012) and with navigating as a London taxi driver (Maguire et al., 2000).

Along with others, we believe the right way to think about the brain-behaviour relation is that external representation and tool use bring forth enlargement in the neural representations of sensorimotor policies (Malafouris, 2013; Kirsh, 1995; Piazza and Izard, 2009). Even evidence for increased gene expression associated with synaptic transmission and plasticity in human brains, compared to non-human primates, may have a secondary, developmental basis (Verendeev and Sherwood, 2017; Preuss et al., 2004). This would appear to have domain-general effects rather than implementing specific modules, for example, for language (Sherwood et al., 2008).

4. Functions of external representations

Donald (1991) has listed several properties of ERs, which he calls exograms, in contrast to the engram (Semon, 1921): unlimited physical media, unconstrained format, permanence, unlimited capacity, unlimited perceptual access, spatial structure, and clear access. The extended mind hypothesis, introduced by Andy Clark and David Chalmers, argues that cognitive processes can sometimes extend beyond the confines of the individual’s brain to include parts of the external world. An example of this is cognitive offloading, where external tools reduce cognitive load, enhance problem-solving, store information, guide behaviour, and externalize thoughts (Clark and Chalmers, 1998).

However, Clark’s extended mind hypothesis remains committed to internal representations: extensions of cognition into the world are secondary scaffolds that merely support internal thought processes. Theirs is a weakly embodied framework (Aston, 2019). The view presented here is more radical and aligns with the line of argument proposed by Malafouris, who outlines the ‘representational fallacy [which] pertains to treating material culture as the epiphenomenal product of a representation-processing mechanism located inside the brain’ (Iliopoulos, 2016a, p. 247; Malafouris, 2013). Instead, he proposes that material culture plays a constitutive role in the generation of cognition. Colin Renfrew refers to ‘substantialization’ as the means by which concepts are brought forth enactively, which is related to Malafouris’ material signification (Renfrew and Zubrow, 1994).

Ingold goes so far as to be wary of talking even about external representations. Taking an enactivist position, he says, ‘Cookings, story-tellings, and whistlings are not representations, they are not traits, indeed they are not objects of any kind; they are rather enactions in the world’, that is, material scaffolds of a sensorimotor policy (Ingold, 2020).

4.1. Extending primate neural world models

Pertaining to the generic world model shown in Figure 1, there is considerable evidence that sensorimotor policies are implemented in the brain and that these can be optimized by associative and reinforcement learning (Miranda et al., 2020; Ha and Schmidhuber, 2018; Russek et al., 2017; Daw et al., 2005; Akam et al., 2015; Wikenheiser and Schoenbaum, 2016). Executive prefrontal systems allow planning, that is, running counterfactual experiments: trying out a variety of different policies in the imagination and improving them before execution in the world itself. Daniel Dennett calls creatures with this kind of ability ‘Popperian’ (Dennett, 2008; Ha and Schmidhuber, 2018).
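The Popperian ability to try out policies in the imagination before acting can be sketched, in its crudest form, as exhaustive search over short action sequences in an internal model. The Python below assumes a hypothetical one-dimensional world model (`model`), a hypothetical reward that peaks at a goal position, and a tiny action set; practical planners use far more efficient search, and this is not a claim about how prefrontal systems implement planning.

```python
from itertools import product

def imagine(model, state, plan):
    """Roll a candidate plan forward in the internal model, without acting."""
    for action in plan:
        state = model(state, action)
    return state

def plan_offline(model, reward, state, actions, horizon):
    """Try every action sequence of length `horizon` in imagination and
    return the one whose imagined end state has the highest reward."""
    candidates = product(actions, repeat=horizon)
    best = max(candidates, key=lambda plan: reward(imagine(model, state, plan)))
    return list(best)

# Hypothetical internal model and reward: positions on a line, goal at 3.
def model(state, action):
    return state + action

def reward(state, goal=3):
    return -abs(state - goal)

best_plan = plan_offline(model, reward, state=0, actions=(-1, 0, 1), horizon=3)
print(best_plan)  # [1, 1, 1]
```

Only the selected plan would then be executed in the world; the rejected candidates ‘die in our stead’, in Popper's phrase, without ever being enacted.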

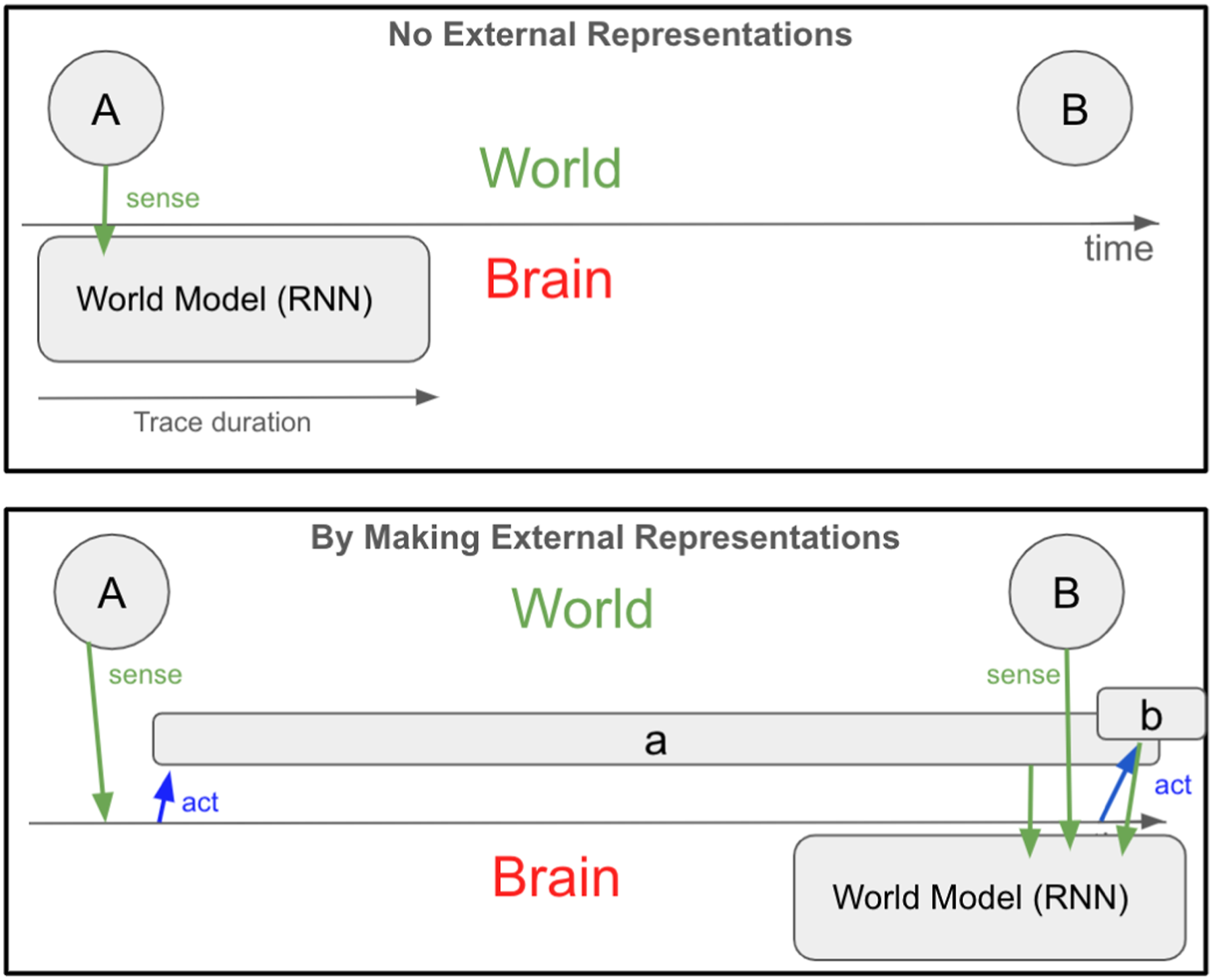

However, primate neural model-building machinery has evolved to model events of significance at normal primate behavioural timescales, with data that is directly sensed, for example, in the visible, audible, tactile, and proprioceptive fields (Niv, 2009). ERs extend the neural world model in Figure 1 in two ways: (1) extending the associative time window and (2) making the invisible visible. We consider each in turn.

4.2. Extending the associative time window

There is no evidence that apes can learn

Evidence that apes can remember associations made over short time scales, many years later, for example, when recognizing human foster parents, is not evidence against the above claim (Lewis et al., 2023). Caching in squirrels and scrub-jays is also not evidence of the ability to learn new relations separated over long time scales. It demonstrates only the ability to learn new relations separated over short time scales, for instance, the location of buried nuts, and an ability to recall them over long time scales (Clayton and Dickinson, 1998).

Whilst the associative windows of neural world modelling algorithms could potentially have slowly been lengthened by genetic evolution in early hominids, there is a faster way: namely, creating ERs. There is evidence that language use can extend neural world models in this way. For example, children’s ability to anticipate future needs and act accordingly develops alongside their narrative abilities (Atance and O’Neill, 2001). Non-human primates do not routinely invent and put things into the world (like words) with the intention of extending their planning and predictive abilities in this way.

The ability to create ERs was a tremendous ‘software upgrade’ to existing neural world modelling ‘hardware’, allowing its application to broader domains. ERs can make visible (or audible, or touchable) messages that stand for topics potentially displaced in space and time. These messages could be modelled at convenient behavioural timescales, and stand in for temporally displaced topics they represent. We perform such ‘imagination improving or aiding’ actions in the world to increase the domain of applicability of our ‘primate’ imagination algorithms.
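A toy version of this ‘software upgrade’ can be sketched in Python, under the loud assumption that an associative learner only links items sensed within a fixed time window. Two events 50 steps apart are never linked directly; a persistent marker placed in the world alongside the first event, and still sensed when the second occurs, bridges them. The event names, the marker, and the window size are all hypothetical.

```python
def learn_associations(timeline, window):
    """Associate two distinct items if they are sensed at times t1 <= t2 <= t1 + window."""
    associations = set()
    for t1, items1 in timeline:
        for t2, items2 in timeline:
            if t1 <= t2 <= t1 + window:
                for a in items1:
                    for b in items2:
                        if a != b:
                            associations.add(frozenset((a, b)))
    return associations

def linked(associations, start, goal):
    """Return True if goal can be reached from start through a chain of associations."""
    frontier, seen = [start], {start}
    while frontier:
        node = frontier.pop()
        if node == goal:
            return True
        for pair in associations:
            if node in pair:
                (other,) = pair - {node}
                if other not in seen:
                    seen.add(other)
                    frontier.append(other)
    return False

WINDOW = 5  # hypothetical associative time window, in time steps

# Two events 50 steps apart, with nothing in between.
without_er = [(0, {"event_A"}), (50, {"event_B"})]

# The same events, but a persistent marker (the ER) is placed at t = 0
# and is still visible when event_B happens at t = 50.
with_er = [(0, {"event_A", "marker"})]
with_er += [(t, {"marker"}) for t in range(1, 50)]
with_er += [(50, {"event_B", "marker"})]

print(linked(learn_associations(without_er, WINDOW), "event_A", "event_B"))  # False
print(linked(learn_associations(with_er, WINDOW), "event_A", "event_B"))     # True
```

The window itself never grows; what changes is the world, which now contains something that co-occurs with both events at ordinary behavioural timescales.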

If we pretend that something we make in the world, for example, a clay object or a word, stands for another object such as the sun, we can model the sun’s slow dynamics at a faster timescale by simply moving the clay object, or by using the word.

Consider this minimal example. Figure 2 shows two events in the world,

Once these new ERs have been created in the world and policies developed for their manipulation, these policies can be run offline, that is, imagined (Hills and Butterfill, 2015). In other words,

4.3. Making the invisible visible

Neural world models cannot model invisible things unless those things can be rendered sensible (Penn et al., 2008). Consider an abstract situation in which ‘naming’ a hidden variable and using it to predict visible states is preferable to only modelling visible variables. When trying to predict the continuation of the sequence 0k0kk0kkk0kkkk0kkkkk0, without knowing a counting sensorimotor policy, it would be necessary to learn a growing number of transition rules such as

It has been demonstrated that a neural network, even a recurrent neural network, is not capable of generalizing to numbers of k outside the range it was trained on (Evans, 2022). It does not invent the concept of a number or the operator ‘add’. In fact, this is closely related to the core criticism Gary Marcus makes of connectionism (Marcus, 2003). Marcus then aims to solve the problem by proposing new neural mechanisms. We, however, propose that the solution lies in the invention and use of external representations.

If one were to invent an ER-based policy for ‘counting’ by creating a physical instantiation of a variable called ‘number of k’ and learning an ‘increment by one’ policy conditioned on the state of the variable ‘number of k’ and exploiting a set of ordering relations of external number representations, then greater generalization would be possible. This is because the same procedure applies to strings of k of any size.
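The contrast between memorizing transition rules and using an external counter can be sketched in Python. This is a toy illustration, not a reproduction of Evans (2022): the rule memorizer is a lookup table keyed on a fixed window of recent symbols (a stand-in for an associative learner without a counting policy, not an RNN), and the counter is an explicit variable with an increment-by-one operator, standing in for the physical ‘number of k’ ER. All names are hypothetical.

```python
def make_sequence(n_blocks):
    """Build '0k0kk0kkk...0': block n contains n copies of 'k'."""
    return "".join("0" + "k" * n for n in range(1, n_blocks + 1)) + "0"

class CounterPolicy:
    """External counter ('number of k') plus an 'increment by one' operator."""

    def __init__(self):
        self.number_of_k = 0  # how many k's the current block should contain
        self.seen = 0         # k's observed since the last '0'

    def observe(self, symbol):
        if symbol == "0":
            self.number_of_k += 1  # increment by one at each block boundary
            self.seen = 0
        else:
            self.seen += 1

    def predict(self):
        return "k" if self.seen < self.number_of_k else "0"

def counter_accuracy(seq):
    """Fraction of next symbols the counter policy predicts correctly."""
    policy, correct = CounterPolicy(), 0
    for current, nxt in zip(seq, seq[1:]):
        policy.observe(current)
        correct += policy.predict() == nxt
    return correct / (len(seq) - 1)

def train_table(seq, window):
    """Memorize 'last `window` symbols -> next symbol' transition rules."""
    return {seq[i - window:i]: seq[i] for i in range(window, len(seq))}

def table_accuracy(table, seq, window):
    """Fraction of next symbols the memorized table predicts correctly."""
    hits = sum(table.get(seq[i - window:i]) == seq[i] for i in range(window, len(seq)))
    return hits / (len(seq) - window)

train, test = make_sequence(5), make_sequence(20)
table = train_table(train, window=4)
print(train)                                  # 0k0kk0kkk0kkkk0kkkkk0
print(counter_accuracy(test))                 # 1.0
print(table_accuracy(table, test, window=4))  # below 1.0 on the longer blocks
```

The counter needs no new rules as blocks lengthen, whereas any fixed-window table eventually meets contexts (such as a run of four k's) whose correct continuation depends on information outside its window.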

Using the ‘number of k’ variable and the ‘increment by 1’ operator gives (for large ‘number of k’) a shorter and more general theory for explaining the data than learning each transition rule from ‘number of k’ to ‘number of k + 1’ in isolation. In machine learning, it is known that shorter theories will be selected in model comparison by the automatic Bayesian Occam’s razor (MacKay, 1992), as they generalize better.
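The Bayesian Occam’s razor can be stated compactly. Following MacKay (1992), models $\mathcal{M}$ are compared by their evidence, the probability of the data $D$ with the parameters $\theta$ integrated out. A flexible model spreads its prior over many possible datasets and so assigns less probability to any particular one. The Laplace-style approximation on the right makes the penalty explicit for a single parameter with most probable value $\hat{\theta}$, prior width $\sigma_\theta$, and posterior width $\sigma_{\theta \mid D}$; the ratio of widths is the ‘Occam factor’:

$$p(\mathcal{M} \mid D) \propto p(D \mid \mathcal{M})\, p(\mathcal{M}), \qquad p(D \mid \mathcal{M}) = \int p(D \mid \theta, \mathcal{M})\, p(\theta \mid \mathcal{M})\, d\theta \approx p(D \mid \hat{\theta}, \mathcal{M}) \, \frac{\sigma_{\theta \mid D}}{\sigma_\theta}$$

On this reading, and only roughly, a theory that learns a separate transition rule for every value of ‘number of k’ pays one Occam factor (each less than one) per rule, whereas the ‘increment by 1’ theory pays its penalty once.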

Consider a more natural situation: predicting human behaviours related to ‘anger’. We support a two-system account of theory of mind, in which there are both implicit (Carnapian/behavioural) and explicit (Gricean/ER-mediated) mechanisms (Butterfill and Apperly, 2013), as outlined below. The causes and the consequences (objective effects) of what we label as anger are often not hidden, for example, body postures, behavioural changes, and facial expressions. These are not unique to humans; they involve brain structures shared with other primates (Panksepp, 2004; LeDoux, 2000). Indeed, some overt displays of anger may be both old and advantageous from an evolutionary perspective.

However, these behaviourally observable causes and consequences of anger are

To see that this is the case, it suffices to note that several emotional states may cause the same body postures, behavioural changes, and facial expressions. Consider increased heart rate and facial flushing, which can be caused both by anger and by running fast in sport. None of these observable variables has a one-to-one correspondence with anger itself. Furthermore, anger may also exist without any observable consequences, or with the consequence being delayed until much later, for example, in cases of delayed revenge.

Many consequences of anger are not old and advantageous from an evolutionary perspective, for example, blowing the horn on one’s car during road rage or switching off the boiler while someone is having a hot shower. Feelings associated with the words ‘anger’ or ‘thirst’ have been described as intervening variables by Whiten (2014). He intends them to be ontologically real, essential mental states. However, we propose that whilst such feelings of anger certainly exist, it is possible to have such feelings without explicitly knowing one is angry.

When one has an explicit theory of mind as opposed to merely an implicit theory of mind, one is capable of utilizing introspection and behavioural observation to classify one’s emotion as anger (Butterfill and Apperly, 2013). Without the capacity to invent and use ERs, one is not able to do this. There is no evidence that non-human primates without external representations have such a belief representation system (Horschler et al., 2020).

As Humphrey (1978) has described, through introspection an individual has access to many feelings (due to hormones, etc.) that are not available from observing others, and these can provide extra constraints for inventing and enacting an emotion word such as ‘anger’. Fenici and Garofoli have investigated how linguistic practices were invented by ancient humans to allow understanding of others’ actions in terms of mental reasons (Fenici and Garofoli, 2017). They conjecture that the integration of embodied actions within pantomimic capacities scaffolded the cultural construction of propositional structures and narrative practices, eventually resulting in the culturally diverse practices of folk psychology we observe today (Hutto, 2012).

For example, children’s understanding of verbs describing mental processes is initially context-specific and rather naive, only later becoming more general across contexts. It is only in humans that these felt states have been enacted by gesture or vocalization and refined in a community to more precisely refer to initially vague feelings common to the vast majority of speakers.

It is this critical process of enactment and community refinement of what was previously nothing more than a private feeling in an individual and a constellation of behaviours that constitutes the construction of an emotion word such as ‘anger’.

Whilst neither the feelings nor effects of anger are culturally constructed, the ER for anger

Typically, we learn to label our emotions in primary childhood attachment relationships (Fonagy and Campbell, 2016). That the ER for anger is a culturally constructed concept does not mean it is entirely arbitrary or untethered from essential (native) attractors in animal behaviour dynamics. Coining the term ‘anger’ cuts nature at its joints, which is why the term has caught on and proved so useful.

Even without the term, we do not deny that an individual could discover the structure present in anger’s causes and consequences by observing a large number of instances of them. But this implicit approach has a problem (Butterfill and Apperly, 2013). Suppose there are x anger causes and y anger consequences. Without positing the hidden anger variable, one would need to learn x * y ‘rules’, one for each pairing of a cause with a consequence. However, by entertaining an anger variable which can take the states 0 or 1 (not angry or angry), one needs only x + y ‘rules’ to predict the possible consequences of anger from its causes.
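The rule counting above can be sketched in a few lines, assuming, as the argument does, that any listed cause can lead to any listed consequence. The cue names are hypothetical examples chosen for illustration.

```python
from itertools import product

# Hypothetical cue lists, chosen only for illustration.
causes = ["insult", "theft", "blocked goal"]                     # x = 3
consequences = ["shouting", "frowning", "flushing", "revenge"]   # y = 4

# Implicit route: one rule linking every visible cause to every visible consequence.
pairwise_rules = set(product(causes, consequences))

# Explicit route: causes set a hidden binary variable 'angry' to 1,
# and angry = 1 licenses each consequence.
cause_rules = {(c, "angry") for c in causes}
consequence_rules = {("angry", q) for q in consequences}
hidden_variable_rules = cause_rules | consequence_rules


def predict_consequences(cause):
    """Route the prediction through the hidden variable instead of a pairwise table."""
    angry = (cause, "angry") in cause_rules
    return sorted(q for _, q in consequence_rules) if angry else []


print(len(pairwise_rules))         # 12 = x * y
print(len(hidden_variable_rules))  # 7 = x + y
```

Both routes make the same predictions here; the hidden-variable route simply stores fewer rules, and the gap widens as x and y grow.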

In short, inventing intervening hidden variables and using them in neural world models simplifies those models and increases their power (Evans, 2022; Whiten, 2013, 2014). The use of x * y rules about visible behaviours (instead of x + y rules) is how the implicit minimal theory of mind described by Butterfill and Apperly (2013) operates; it does not require ERs about invisible states such as ‘anger’ to be invented and used for prediction.

There is no evidence that chimpanzees understand false beliefs in cases where more than a minimal or implicit ‘theory of mind’ is needed, that is, where what is required is more than associating visible or audible events in the sensory field (Call and Tomasello, 2008; Butterfill and Apperly, 2013). De Villiers and de Villiers (2000) show that human children typically understand false beliefs explicitly only when they can use ERs like ‘John thinks that the toy is in the box’, although this finding is controversial (Westra, 2017).

The evidence points to a distinct implicit minimal theory of mind process capable of entertaining belief-like states shared with infants and non-human primates. This process is surprisingly capable and depends on a subtle evaluation of perceived cues. It is augmented by a much more sophisticated explicit theory of mind system that comes with ER use (Moore, 2021). It is only when mental states are talked about or otherwise represented that they can be explicitly modelled (Hutto, 2012). Once emotions and beliefs could be named, behaviour could be more efficiently modelled and predicted (Chater, 2018).

David Kirsh, in his article ‘Thinking with External Representations’, shares our view that

However, Kirsh and others still believe that these external representations are not primary but augment symbolic thought processes that originate internally (Tversky, 2014). Other examples of making the invisible visible involve externally representing entities such as atoms, gravity, germs, electromagnetism, and genes.

4.4. Transfer of mastery from convenient to inconvenient domains

We view mastery as consisting of an effective (error-correcting) sensorimotor policy to achieve a goal. In many cases of material construction, such as during stone knapping, the maker’s sensitivity to the perceived and task-relevant features of the stone influences their actions. Actions are not merely ballistic executions of a pre-conceived internal specification (Tennie et al., 2017; Ingold, 2013). Instead, the properties of the stone must be discovered, with each stroke revealing a new property of the medium (Malafouris, 2013).

Contrast this with 3D printing, where the printer is designed to exteriorize a perfect internal representation of the object stored in the computer. With knapping, the final form of the object arises through a tight coupling of the knapper’s sensorimotor contingencies with the stone’s own properties (Malafouris, 2013), achieving, in a skilled knapper, ‘maximum grip’ and a ‘tight intentional arc’ (Merleau-Ponty, 2013).

This sensorimotor policy is the kind of thing that the transformer in Figure 1 implements. When Rodney Brooks says the world is its own best model, he means that by learning perceptually contingent motor actions, mastery can be achieved without needing ‘internal representations’ (Brooks, 1991).

How can mastery in one convenient domain of competence be exapted (re-used) for mastery in a more inconvenient and complex domain? Whilst Hutchins and Fauconnier speak of internal mental representations (concepts/meaning) being projected onto external material structures, with the association of conceptual structure with material structure resulting in a ‘conceptual blend’ (Hutchins, 2005; Fauconnier, 1997), our enactive perspective eschews internal representation talk.

Whilst Hutchins goes a step further than Fauconnier in proposing the material anchor, he still makes use of mental conceptual structure, which our formulation does not need. We deny that associations can be formed with anything that is not directly sensible. We do not need to speak of conceptual structures or conceptual blends to understand the examples Hutchins gives. A queue, according to Hutchins, is a material structure blended with a conceptual structure (the notion of sequential order).

A simpler way to understand a queue is as a set of material elements that can be operated on by a sensorimotor policy to achieve certain goals. For example, using a FIFO (first-in, first-out) queue or a LIFO (last-in, first-out) stack consists in being able to implement the appropriate policy for establishing sequential order and for executing push and pop actions on the structure. The concept of sequential order is itself dependent on lower-level sensorimotor policies (operations/procedures) for determining the ordering of two elements. In our terms, the ‘material anchor’ is the physical (data) structure that provides the substrate for the sensorimotor policy implemented by the brain.
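To illustrate that one material structure can support different policies, here is a minimal Python sketch. The row of people and the two policy functions are hypothetical; `collections.deque` simply stands in for the physical row.

```python
from collections import deque


def fifo_policy(row):
    """Serve in arrival order: pop from the front of the row."""
    return row.popleft()


def lifo_policy(row):
    """Serve the latest arrival first: pop from the back of the row."""
    return row.pop()


def serve_all(arrivals, policy):
    """Push every arrival onto the same physical row, then apply the policy until it is empty."""
    row = deque()
    for person in arrivals:
        row.append(person)  # 'push' always adds at the back
    served = []
    while row:
        served.append(policy(row))
    return served


arrivals = ["Ana", "Ben", "Cy"]
print(serve_all(arrivals, fifo_policy))  # ['Ana', 'Ben', 'Cy']
print(serve_all(arrivals, lifo_policy))  # ['Cy', 'Ben', 'Ana']
```

The material substrate is identical in both runs; only the policy applied to it differs, and with it the sequential order that results.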

If the properties of the unfamiliar elements have relations that are shared with the familiar elements, then transfer from the mastered to the unmastered may be easier still (Evans, 2022). For example, clay can easily be divided into objects, as can the things it models, such as bushels of wheat. This allows easy-to-implement sensorimotor policies on the clay to stand in for more cumbersome policies on the wheat. Dividing bushels of wheat may take longer than the associative time window of the VLM in Figure 1, whereas dividing clay tokens that represent the bushels is much faster (Schmandt-Besserat, 1992; Iliopoulos, 2016b).
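The clay-token case can be sketched as a single ‘dealing out’ policy applied to two kinds of discrete object. The one-token-per-bushel mapping and the function names are illustrative assumptions, not a reconstruction of any historical accounting practice.

```python
def tokens_for(bushels):
    """Hypothetical one-to-one mapping: one clay token per bushel of wheat."""
    return ["token"] * bushels


def deal_out(items, n_shares):
    """Divide discrete objects into n_shares piles by dealing them out in turn."""
    piles = [[] for _ in range(n_shares)]
    for i, item in enumerate(items):
        piles[i % n_shares].append(item)
    return piles


bushels = 14
wheat_shares = [len(p) for p in deal_out(["bushel"] * bushels, 3)]
token_shares = [len(p) for p in deal_out(tokens_for(bushels), 3)]
print(token_shares, wheat_shares)  # [5, 5, 4] [5, 5, 4]
```

Because tokens and bushels share the relevant property (both come as discrete, countable objects), the quick policy on tokens yields the same shares as the slow policy on wheat.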

ERs allow analogy and relational reasoning by exposing systematic relational correspondences, for example, that the sun is to the solar system what the nucleus is to the atom, allowing physical and cognitive competence in one domain to be transferred to another (Gentner, 2003).
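A toy version of this kind of relational transfer is sketched below. It only loosely follows the idea of structure mapping and is not Gentner’s Structure-Mapping Engine; the relation names and the simple alignment rule are assumptions made for illustration.

```python
# Relations are (relation, first_argument, second_argument) triples.
solar_system = {
    ("revolves_around", "planet", "sun"),
    ("more_massive_than", "sun", "planet"),
    ("attracts", "sun", "planet"),
}
atom = {("revolves_around", "electron", "nucleus")}


def align(base, target):
    """Map base objects onto target objects that fill the same role in a shared relation."""
    mapping = {}
    for rel, a, b in base:
        for rel_t, x, y in target:
            if rel == rel_t:
                mapping[a], mapping[b] = x, y
    return mapping


def transfer(base, mapping):
    """Project base relations whose arguments all have counterparts into the target."""
    return {(rel, mapping[a], mapping[b]) for rel, a, b in base
            if a in mapping and b in mapping}


mapping = align(solar_system, atom)
inferred = transfer(solar_system, mapping) - atom  # candidate new knowledge about the atom
print(mapping)  # {'planet': 'electron', 'sun': 'nucleus'}
```

One shared relation is enough to align the two domains; the remaining base relations then become candidate inferences about the target.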

A slightly more complex example is the method of loci used to form a memory palace (Hutchins, 2005). This works because if a policy already exists for traversing a set of familiar elements in sequential order, and if unfamiliar elements can be associated with each of the familiar elements, then that new set of unfamiliar elements can be recalled without needing to form new associations between the unfamiliar elements directly. If making N new associations of unfamiliar elements with N familiar elements is easier than forming N-1 associations between unfamiliar elements, then it follows that the use of a memory palace will aid in learning a new order of unfamiliar elements.
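The memory-palace argument can be sketched as follows, assuming that the traversal of a familiar route is already mastered. The route and the items are hypothetical; recall requires only the N item-to-locus associations, and no item-to-item links are stored.

```python
# An already-mastered traversal policy over familiar places (hypothetical route).
familiar_route = ["front door", "hallway", "kitchen", "stairs", "bedroom"]

# Unfamiliar items to be remembered in order (hypothetical list).
new_items = ["eggs", "rope", "lamp", "salt", "map"]

# N associations, one per locus; no item-to-item links are stored.
palace = dict(zip(familiar_route, new_items))


def recall(route, associations):
    """Replay the familiar traversal and read off the item attached at each locus."""
    return [associations[locus] for locus in route]


print(recall(familiar_route, palace))  # ['eggs', 'rope', 'lamp', 'salt', 'map']
```

The order of the new items is never learned directly; it is borrowed from the order of the route.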

One step further from the queue and the memory palace, Overmann has described how material policies for externally representing simple number concepts, such as ordinal sequence, can use body-counting, of which finger counting is only one form; later culturally constructed forms include, for instance, the number line (Overmann, 2018). Overmann has analysed in detail the cultural trajectories taken by increasingly sophisticated material forms and by the policies that operate on them (Overmann, 2018).

Applied mathematics contains countless examples

In problem-solving domains, drawings can help us solve insight problems such as the nine-dot problem more effectively (Lewis-Williams, 2002; Öllinger et al., 2014; Spiridonov et al., 2019). Creating the highly ritualized ERs of mass and force, together with the mathematics that operates on equations, permits the prediction of the motion of many objects (Wigner, 1990). The visuospatial reasoning engendered by Venn diagrams, the periodic table, and Feynman diagrams helps make sense of other aspects of experience that are difficult or impossible to understand without external props that can be conveniently manipulated (Tversky, 2005).

In language, metaphor is the means by which this transfer is achieved. Competence with policies for balancing on a seesaw can be transferred to understanding ‘balance’ in a financial or mental health context. See also Lakoff and Johnson (2008) for a thorough and detailed analysis of the grounding of conceptual terms in concrete experience. For example, the container schema, which has the structure of interior, boundary, and exterior, can be transferred to more abstract domains (Malafouris, 2013). Semantic extensions of perception verbs to conceptual verbs, like from ‘see’ to ‘know’ and from ‘hear’ to ‘understand’, have been observed cross-culturally (Evans and Wilkins, 2000).

4.5. Other functions of external representations

External representations permit new kinds of categorical judgement that would not have been possible without them. Humans can categorize on the basis of cross-dimensional relations, that is, same/different on only some properties of multiple objects, whereas non-human primates cannot ignore more predispositionally salient properties in order to focus on less salient ones for the purposes of classification (Christie et al., 2007; Gentner and Colhoun, 2010; Thompson and Oden, 2000; Overmann, 2021). This is consistent with the fact that humans are capable of inventing a salient

Evidence for this is that apes can be trained to identify relations-between-relations only if they are first trained to use a symbol system by which propositional representations can be encoded and manipulated (Thompson and Oden, 2000).
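The idea of a relation between relations can be illustrated with a toy matching function. In this hypothetical sketch, the explicit `dimension` argument plays the role we attribute to an ER: it singles out one property to compare on, so that other (possibly more salient) properties are ignored. It is not a model of the primate experiments cited.

```python
# Objects described along two dimensions (illustrative values only).
red_circle = {"colour": "red", "shape": "circle"}
red_square = {"colour": "red", "shape": "square"}
blue_star = {"colour": "blue", "shape": "star"}
blue_star_2 = {"colour": "blue", "shape": "star"}


def relation(pair, dimension):
    """'same' or 'different' on the chosen dimension only; other properties are ignored."""
    a, b = pair
    return "same" if a[dimension] == b[dimension] else "different"


def relations_match(pair1, pair2, dimension):
    """A relation between relations: do both pairs stand in the same relation?"""
    return relation(pair1, dimension) == relation(pair2, dimension)


pair1 = (red_circle, red_square)  # same colour, different shape
pair2 = (blue_star, blue_star_2)  # same colour, same shape
print(relations_match(pair1, pair2, "colour"))  # True
print(relations_match(pair1, pair2, "shape"))   # False
```

The same two pairs match or fail to match depending solely on which dimension has been singled out for comparison.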

A revealing pair of recent articles makes clear that both Gopnik and Povinelli share a nativist belief that higher-order concepts such as ‘same’ and ‘different’ are implemented by culture-independent neural mechanisms rather than being constructed through socio-cultural agreement (Walker and Gopnik, 2017; Glorioso et al., 2021). This is revealed when Povinelli and colleagues (Glorioso et al., 2021) criticize Walker and Gopnik’s (2017) experimental procedure for detecting whether 18-month-old children have a concept of same and different. The basis of their criticism is that the task is solvable by detecting perceptual variability between or among stimuli.

It is our position that ultimately

As neither animals nor 18-month-old infants reach such agreements on the meanings of same/different in a given context, it is unreasonable to expect experiments to determine that they have this concept. Such an experiment can only show what perceptual predispositions an animal has, prior to a concept of same/different being agreed upon, if such an agreement is indeed possible.

Language is the prime example of a set of

Folk psychology concepts are not literal descriptions of content-bearing internal states, but convenient fictions (Moore, 2021). If two people behave differently given exactly the same stimulus, then an ER about a hidden (mental) state can explain the difference (Moore, 2021).

Using ERs, it is possible to explicitly instruct a recipient to use skill X for task Y, rather than it being necessary for a specialized neural transfer mechanism to discover this (Bengio et al., 2013; Pan and Yang, 2010; Weiss et al., 2016). Clear examples of language-based transfer come from prompt engineering of large language models. By choosing the right text prompt, an LLM can be made to carry out novel reasoning tasks in a zero-shot manner (Jiang et al., 2019; Wei et al., 2022).

Human

ERs allow remarkable intersubjective plasticity, that is, they allow ‘diverse collectives of individuals and groups to adopt and transition between numerous social identities [roles] and behaviours with rapidity and flexibility’ (Aston, 2019, p. 65). Barrett et al. (2007) emphasize the creativity of constructed narrative intelligence in creating complex social spaces, rather than the opposite causality proposed in the social brain hypothesis, which suggests that we genetically evolved big brains because of selection for larger group sizes (Dunbar, 1998).

Linguistic and other material scaffolds, such as apps and clothing, allow human socio-technical systems to emerge. External representations can be seen as affecting social and cognitive niche construction. Ironically, this has been made clear by an article that tries to prevent us from inappropriately anthropomorphizing LLMs. It argues that large language models can be thought of as engaging in role-play, being easily prompted to take on various roles (Shanahan et al., 2023). However, the very properties of language that make this possible for LLMs also make it possible for us to be prompted into occupying a diversity of roles (that is, language can specify a diversity of games for us to play). What distinguishes us from LLMs is that we have a multidimensional reward system and modify our own LLM by reinforcement learning while engaging in environments with other agents.

5. From bush reading to mind reading

We now address the question of what prompted us to start making external representations in the first place. One of the main problems to solve is how ostensive-inferential (Gricean) communication can arise without paradoxes (Moore, 2017). A necessary but not sufficient component of Gricean communication is that the recipient can entertain things like the communicative intents (goals) of the sender. A goal, however, is not directly sensible. For the recipient to entertain it, we claim, a sender would have needed to name these invisible mental states, that is, to turn them into a signal which the recipient understood as meaning an intent (goal) of the sender. How can this have happened?

Most of us with urban lifestyles, especially in the West, are unaware that we are almost completely blind to the complex goings-on in the natural world. When we go for a walk in the woods or a meadow, we miss practically all the meaning in the things that are happening around us (Brown Jr, 1986). Bush reading provides an intermediate situation where names need not be created for hidden states because, in bush reading, contextualized cues form a myriad of natural signs or indexes, from which their causes can be inferred based on a very broad and complex context (Gell et al., 1998). Bush reading requires learning to understand (and also actively produce for teaching purposes) complex denotation systems (Willats, 2006).

We argue that selection for bush reading pre-adapted humans to put effort into developing ER technologies (such as the policy for making and agreeing on the names of things) for inferring the hidden causes and consequences of visible contextualized cues/signals.

The step to be made from bush reading to mind-reading was for a sender to invent a name for a felt mental state, for example, to enact an intermediate variable (Whiten, 2013). If this strange creative act by the sender is done, then the same associative machinery used in bush reading can be used by the recipient to associate an ER, such as the word ‘sad’, with its underlying feeling, its causes, and its consequences, see Figure 4. In that figure, dark clouds and the word ‘sad’ both refer to hidden causes and consequences of the cue/signal. For example, dark clouds might mean it will rain, and the utterance ‘sad’ might mean the person will need comforting, both depending on context.

Communicative intentions, such as wanting the consequences of one’s sadness attended to, can best be anticipated and understood by speaking them, that is, by naturalizing them. Modifying the example from Bar-On and Moore (2017), the word ‘sadness’ is to the currently invisible causes and consequences of sadness what a dark cloud is to the possibility of rain.

There is evidence that

We can find no evidence that non-human animals track footprints to find invisible prey without using smell (Boesch and Boesch-Achermann, 2000; Janson and Byrne, 2007). The use of smell is quite different from visual tracking because smell becomes more intense as the tracked animal gets closer, whereas the footprint and other bush craft indexes do not need to resemble the animal itself in the direct way smell does.

After the origin of bipedalism around 6 MYA (Demenocal, 1995), hominids were exposed to seasonal mosaic environments (Domínguez-Rodrigo, 2014). Variability selection theory proposes that hominids needed to adapt to rapid climatic oscillation from 1.7 MYA to 1.0 MYA, with shrinking forests and woodlands and expanding grasslands, which placed pressure on the hominin niche (Potts, 1998b; Wynn et al., 2021). Increasingly variable, severe, and risky glacial/interglacial cycles over the past 800,000 years and more dramatic short-term climatic events, such as the volcanic winter arising from the Mount Toba supervolcano explosion, produced strong selection pressure resulting in severe human population bottlenecks (Ambrose, 1998).

Under marginal arid conditions with less vegetation, there would have been strong selection pressure for tracking: reading animal tracks, scat, broken branches, or disturbed vegetation to infer the recent presence, type, size, age, health, and possible future movement of game (or predators) (Liebenberg, 1990). Tracking would also have been easier in open country. Plant foraging, that is, using clues to where certain plants grow, for example, insect presence or bird calls (e.g. near water sources), would have become more valuable, as would indicators of nearby fresh water sources. To follow sparser food sources, navigation over longer distances would have been needed, using the position of the sun, stars, moss growth, and tree bending as indicators of cardinal direction or location.

There are conditions in which making inferences from signs about hidden topics is very helpful. For example, on seeing lots of nettles, clover, foxgloves, thistles, or poppies, one can infer that some kind of human habitation or disturbance has likely occurred at this site. There is no formal logical reason for there to be human habitation given nettles (the nettles could have grown there for other reasons), there is no arbitrary symbolic linguistic relation between human habitation and nettles either (unless one has been agreed on previously), and there is no resemblance between nettles and human habitation. Instead, nettles are demonstrative of human habitation when found in a certain context. In Grice’s terms, the nettle has ‘natural meaning’ (Grice, 2020), or is an index (Gell et al., 1998).

Bush reading is replete with skills for inferring hidden events from their visible effects or antecedents. Examples include inferring hidden eggs or moving game from footprints; a future storm from cloud formations (cirrus followed by cirrostratus indicating a warm front and rain) and wind direction (using the cross-winds method); and direction from the signs of the prevailing wind, for instance the ‘wedge effect’ on growth in a line of trees, the shape of trees, the asymmetric distribution of tree roots, the moss growing on bark, and the north-south alignment of lettuce leaves exposed to lots of sun (Gooley, 2010).

‘The Inuit know that when purple saxifrage is in flower, the reindeer are in calf’. Precise timing of recent disturbances less than fifteen minutes old is noticeable from the reddening and then blackening of brittlegill (Gooley, 2010). Many rhymes have been invented to represent these facts, for example, ‘When the glow-worm lights her lamp, the air is always damp’, or ‘sea-gull, sea-gull sit on the sand, it’s never good weather when you’re on the land’ (Gooley, 2012). Finding animals requires understanding their tracks, scat, slough, remains, refuse, dens, and odour (Canterbury, 2015). From the tracks, many things can be inferred, such as behaviour, age of the animal, when the track was made, the direction of the animal, etc. (Liebenberg et al., 2010; Brown, 1983).

We became adept at seeing references to things that were not immediately there, that is, at treating present events as representing their hidden causes, even when those events did not resemble the things to which they referred. The most thorough analysis of tracking and its relation to cognition is by Kim Shaw-Williams, who has proposed the social trackways theory. According to this theory, tracks of humans (and other animals) record narratives of what their authors were up to in the past, and these were constructed with communicative intent. Tracks are durable, and unlike mental states, sometimes you can see the tracks being made. Through association alone, one can observe the complex ‘grammatical’ relations between tracks and the track-maker’s behaviour and intention. Tracks provide a highly rich and ready-made representational narrative (Shaw-Williams, 2017).

In their article ‘Learning to induce causal structure’, Ke et al. (2022) show that if an LLM, as shown in Figure 1, is trained to associate paired ERs of a causal graph with data from a structure in the world that is well described by that causal graph, then it can generalize to infer novel causal structures in the world from that data. Therefore, if the children in Gopnik’s causal inference experiments (Kushnir et al., 2010; Gopnik et al., 2004; Seiver et al., 2013) can be shown to have been trained with causal model language describing the generative system responsible for an experience, associated with the observations (and interventions) from that experience, then an architecture no more complex than Figure 1 is sufficient to explain their causal inference ability. No other special neural causal inference machinery is needed.

Once we became good at using general indexes that did not resemble the things they represented to understand those things, hidden or not, it was a small step to making our own references to topics, hidden or not, in order to manipulate ‘recipients’ who had this index-understanding ability, in what we call ‘art games’. Gell has described this process as the basis of art (Gell et al., 1998). Iliopoulos writes, ‘in deliberately manipulating material signs, individuals would have exercised their significative beliefs about them, allowing others to observe and assimilate their habitualized dispositions towards things taken to share certain qualities or attributes’ (Iliopoulos, 2016a, p. 257).

But how could the step be made from passive observation of messages to the making of messages? Intentional senders cannot have come first unless they thought they had someone to send to. This problem is related to the ‘paradox’ in the origin of animal communication (Krebs and Davies, 2009), which has two possible resolutions. The first is that a signal arises from the receiver’s exploitation of a pre-existing action in a sender, which then becomes ritualized. This has a material analogy in early artistic processes whereby found objects with an initial resemblance to a prototype were modified by a ‘receiver’ to look more like the thing the receiver thought they should look like (e.g. the Makapansgat pebble (Bednarik, 1998)).

The second way a signal can arise is if the sender exploits an existing perceptual property of the receiver; consider how an orchid may mimic a female bee to such an extent that the male bee will choose it in preference to a real female bee (Scott-Phillips, 2015). It is this second process, the sender’s manipulation of the receiver’s bush-reading-driven causal inference skills, that we suggest is the route to the

6. A space of external representation making games

We describe a space of

6.1. The science game

In the science game, visible topics are named, and hidden states are named to explain visible topics (Evans, 2022). Policies are learned for inferring the hidden meanings of indices (policies for observation and experiment). This is done during the practice of bush reading (Liebenberg, 1990), where an explanatory model is made to predict and understand events displaced in space and time on the basis of visible evidence. No learning takes place in the sender as there is no intentional sender at all. The sign is natural. All the learning takes place in the receiver, who comes to infer the meaning of those natural signs and make sense of the causes of the observed variables.
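A minimal sketch of the science game’s learning dynamics, using the nettle example given earlier: only the receiver updates, by counting co-occurrences of a natural sign with the hidden cause later confirmed. The observations, the contrasting ‘heather’ sign, and the add-one smoothing are illustrative assumptions.

```python
from collections import Counter

CAUSES = ("habitation", "no habitation")


class Receiver:
    """Learns what natural signs mean; the sign itself has no sender to update."""

    def __init__(self):
        self.counts = Counter()

    def observe(self, sign, cause):
        self.counts[(sign, cause)] += 1

    def p_cause_given_sign(self, cause, sign):
        # Add-one (Laplace) smoothing over the possible hidden causes.
        total = sum(self.counts[(sign, c)] for c in CAUSES) + len(CAUSES)
        return (self.counts[(sign, cause)] + 1) / total


# Hypothetical field record: (sign seen, hidden cause later confirmed).
observations = [
    ("nettles", "habitation"), ("nettles", "habitation"),
    ("nettles", "habitation"), ("nettles", "no habitation"),
    ("heather", "no habitation"), ("heather", "no habitation"),
]

receiver = Receiver()
for sign, cause in observations:
    receiver.observe(sign, cause)

print(round(receiver.p_cause_given_sign("habitation", "nettles"), 3))  # 0.667
print(round(receiver.p_cause_given_sign("habitation", "heather"), 3))  # 0.25
```

All of the learning sits in the `Receiver` object; nothing in the sketch corresponds to a sender.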

There may be a rich syntactical structure in the visible variables which implies a complex semantics about the physical and biological processes that constructed them. In the face of unpredictable environments, highly structured, rigid, repetitive, and redundant rituals are likely to develop (Hobson et al., 2018). An interesting unconscious consequence of such rituals might have been to ‘keep all else equal’, an important principle in scientific experimentation, which may have allowed more effective observations of causally relevant variables (Demmrich, 2023).

6.2. The language game

In language games, both the sender and receiver learn (Számadó and Szathmáry, 2006; Steels, 1999; Wang et al., 2018). By language game, we mean any game that involves agreement about conventional forms of communication by both sender and receiver. This may include the ‘language’ of visual art or mathematics as well as natural language. Our classification is based on distinct functional dynamics of learning

Increasing abstraction is a typical finding over many iterations of transmission and has been demonstrated experimentally in human communication tasks of this kind. Various perceptual priors determine whether complete arbitrariness of sign evolves (Hawkins et al., 2021; Fan et al., 2020; Fernando et al., 2020). Overmann has analysed increasing abstraction in the mathematical domain, where such ‘language’ game dynamics also operate (Overmann, 2023). There are many variants of such games, only some of which are Gricean.
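The defining feature of a language game in our classification, that both sender and receiver learn, can be sketched as a two-state Lewis signalling game with simple urn-style reinforcement in the style of Roth and Erev. This learning rule is our illustrative choice, not a model of the cited experiments, and all names are hypothetical.

```python
import random

STATES = SIGNALS = ACTS = (0, 1)


class UrnLearner:
    """Roth-Erev style reinforcement: choose options in proportion to accumulated weight."""

    def __init__(self, contexts, options):
        self.weights = {c: {o: 1.0 for o in options} for c in contexts}

    def choose(self, context, rng):
        options = list(self.weights[context])
        weights = [self.weights[context][o] for o in options]
        return rng.choices(options, weights=weights)[0]

    def reinforce(self, context, option, amount=1.0):
        self.weights[context][option] += amount

    def total_weight(self):
        return sum(sum(row.values()) for row in self.weights.values())


def play_language_game(rounds=2000, seed=0):
    """Lewis signalling game: on success BOTH sender and receiver are reinforced."""
    rng = random.Random(seed)
    sender = UrnLearner(STATES, SIGNALS)
    receiver = UrnLearner(SIGNALS, ACTS)
    for _ in range(rounds):
        state = rng.choice(STATES)
        signal = sender.choose(state, rng)
        act = receiver.choose(signal, rng)
        if act == state:
            sender.reinforce(state, signal)
            receiver.reinforce(signal, act)
    return sender, receiver


sender, receiver = play_language_game()
# Each success adds the same amount to both parties, so their totals stay equal.
print(sender.total_weight() == receiver.total_weight())  # True
```

Because every success reinforces both parties, any convention that emerges is jointly constructed by sender and receiver.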

In this corner are also non-natural language convention creation systems and processes, each with different success criteria and methods for valid and invalid communication about topics, such as refutability (Popper, 2005), clarity, freedom from contradiction, and unambiguousness of reference (Cassirer et al., 1955). How to use traffic lights, lighthouses, smoke signals, and ostrich eggshell beads used by !Kung San hunter-gatherers of the Kalahari can be taught (Wiessner, 1982), or transmitted by a process of cultural inheritance (Heyes, 2018). All these existing conventional systems are likely to have adaptations for their transmission (Csibra and Gergely, 2009; Heyes, 2018), such as exploiting mechanisms of selective social learning, imitation, mind-reading, or natural language.

There can also be resistance to transmission. For example, in the realm of visual art, a ‘primitive’ artist refuses to accept such conventions (Berger, 2009), while an outsider artist does not know them (Ferrier, 1998).

6.3. The art game

We define an art game as any communication game in which the recipient is not trained and does not need to learn to be appropriately acted on by the artwork. In an art game, the sender learns a new way to modify the recipient’s experience by producing some message (

In Figure 5, on the left, some bird footprints are shown. If I know that you already have a competence for following these to find eggs, then I can play an art game and learn to produce abstractions of these footprints (arrows), which you immediately recognize as meaning that you should follow them, without requiring novel conventional agreement. Eventually, the arrow acquires meaning independently of the original context in which it was invented. The diagram shows an arrow being used to signal to aliens the route of the Pioneer spacecraft out of the solar system from Earth. Whether aliens will understand this external representation is a moot point.
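The asymmetry of an art game, where only the sender learns, can be sketched with a fixed receiver response standing in for an existing bush-reading competence. The features, effort values, and threshold below are hypothetical; the point is only that the sender discovers a cheaper abstraction (the arrow) that the untrained receiver already acts on.

```python
# Hypothetical receiver: an existing bush-reading response that is never retrained.
def receiver_follows(mark):
    """Follow any mark with a clear heading and at least two converging strokes (toy prior)."""
    return mark["has_heading"] and mark["converging_strokes"] >= 2


# Candidate marks the sender might try (feature values are illustrative only).
CANDIDATES = {
    "bird footprint": {"has_heading": True, "converging_strokes": 3, "effort": 7},
    "arrow": {"has_heading": True, "converging_strokes": 2, "effort": 3},
    "straight line": {"has_heading": True, "converging_strokes": 0, "effort": 1},
    "dot": {"has_heading": False, "converging_strokes": 0, "effort": 1},
}


def sender_learns(candidates, receiver):
    """Only the sender learns: try marks, keep the cheapest one the receiver acts on."""
    working = [name for name, mark in candidates.items() if receiver(mark)]
    return min(working, key=lambda name: candidates[name]["effort"])


print(sender_learns(CANDIDATES, receiver_follows))  # arrow
```

The receiver function has no state to update, so no agreement is ever negotiated; the sender alone searches for what works.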

This is a universal and functional definition of art as a discovery process by the sender. It is an algorithmic formal description of a communication process not requiring conventional agreement with a recipient. It defines a natural kind of art that could happen anywhere in the universe (Hacking, 1991). Our definition does not need to cohere with particular Western biases that pervade institutional, representational, and expressive theories of art (Bloch, 2014; Carroll and Carroll, 1999; Zeki et al., 1999; Arnheim, 1954; Gombrich, 1961; Hague, 1990; Collingwood, 2016). For us, an art game is an extreme case of a communication game. Many instances of art as defined by current theories of art may not be produced purely as the result of art games as we define them here. They may involve conventional agreements with the recipient, that is, contain elements of language games. Our definition is a definition of an art process ‘from the outside’ rather than ‘from the inside’, as Bloch puts it (Bloch, 2016).

Why define this strange kind of game? Because an art game has a power that language games do not. Evolution plays art games (as we define them) regularly, the result being mimicry through the creation of Carnapian ERs. An example is how butterfly eyespots may deter predators or deflect attacks (Kodandaramaiah, 2011). This does not require agreement from the predator. In fact, even if a butterfly could speak to a bird, there is nothing it could say to stop itself from being eaten. Language games are of no value in this setting.

Artists exploit their knowledge of the recipient’s perceptual priors to construct novel external representations that achieve the functional goal they desire, without agreement from the recipient. For example, performances (rituals) or images may be invented that exploit the recipient’s innate preferences (Dissanayake, 1992, 1998). However, in the case of animal mimicry, it is not the animals themselves who are the agents that produced the

In the domain of sound, an example of an art game is the creation of an onomatopoeia (Davis, 2022), literally the ‘giving of a name’, which the Greeks speculated was the origin of language (Rastall, 2021). The onomatopoeia is an art game rather than a language game because the recipient does not have to do any interpretive work beyond recognizing the sound: its meaning can be inferred from direct resemblance alone. The artist, in Alva Noë’s terms, has reorganized the receiver’s experience of a topic to help communicate about it in a new way (Noë, 2015, 2023).
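The art game can be given a minimal algorithmic reading. The sketch below is our illustration, not a model proposed in this paper, and it rests on stated assumptions: the receiver’s perceptual prior is a fixed resemblance function to a familiar prototype (the hypothetical `receiver_response`), stimuli are abstract feature vectors (say, a few acoustic features of a bird call), and the sender can query its own model of that prior. The sender hill-climbs over candidate stimuli; the receiver is never updated, so no convention is negotiated.

```python
import math
import random

def receiver_response(stimulus, prototype):
    """Hypothetical fixed perceptual prior of R: response strength falls off
    with squared distance from a familiar prototype (1.0 = perfect resemblance).
    R never updates this function, so no convention is ever agreed."""
    dist2 = sum((s - p) ** 2 for s, p in zip(stimulus, prototype))
    return math.exp(-dist2)

def art_game(prototype, steps=2000, step_size=0.1, seed=0):
    """Sender-side search (hill climbing): S perturbs its current stimulus and
    keeps a variant only if its model of R's prior predicts a stronger response."""
    rng = random.Random(seed)
    stimulus = [rng.uniform(-1.0, 1.0) for _ in prototype]
    best = receiver_response(stimulus, prototype)
    for _ in range(steps):
        candidate = [x + rng.gauss(0.0, step_size) for x in stimulus]
        score = receiver_response(candidate, prototype)
        if score > best:  # only S adapts; R's prior is untouched
            stimulus, best = candidate, score
    return stimulus, best

# Usage: a made-up prototype standing in for features of a familiar sound.
stimulus, score = art_game([0.5, -0.3, 0.8])
```

All the discovery work sits on the sender’s side of the loop, which is what distinguishes this from a language game, where the receiver would also have to learn the mapping.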

Non-human primates cannot be trained to draw depictions (Savage-Rumbaugh and Lewin, 1994; Tomasello, 2008; Oxford, 1962; Seghers, 2014), whereas human children are easily motivated to exploit perceptual priors to discover effective external representations that influence a recipient. They invent ‘graphic equivalents’ for the objects that they want to draw (Kellogg and O’Dell, 1967; Arnheim, 1954; Winner, 2006). Parts of a picture (picture primitives) are used to refer to parts of the world by denotation systems (Willats, 2006), and relations between picture primitives are used to refer to relations between parts of the world by drawing systems. Both systems depend on what the child is trying to achieve (Tomasello, 2009).

Children do not typically try to produce a viewer-centred, photorealistic image whose final aim is mimicry from a single viewpoint. Instead, they often seek a description of things independently of any particular point of view (Marr, 2010). Similarly, the topic in many oil paintings is the object experienced over extended time and space (Elkins, 1997).

In the denotation system, points, lines, and regions are used to represent objects in the world. Initially, children use regions to represent volumes, but later learn that lines represent the edges of shapes much better, which involves rejecting previously used rules. Over time, the mismatch between picture production and picture perception spurs children to find new solutions, for example, to communicate spatial order, enclosure, join relations, spatial direction, and relative size. We will see later that similar depictive goals and trajectories of discovery are apparent in prehistoric art (Froese, 2019).

7. An evolutionary trajectory to fully Gricean external representations

We argued in the previous sections that the neural machinery sketched in Figure 1 is sufficient to explain all human ER invention and use. Here, we consider a genetic evolutionary trajectory to that machinery, as well as a cultural-evolutionary trajectory through the pragmatic space of ER-making games defined in the previous section that is capable of bootstrapping full Gricean communication. From ‘intentional act analysis’ we take the requirements for an effective lineage explanation: changes should be small, there should be no skyhooks, new meaning patterns should become available, each change should be adaptive, and the account should fit the empirical evidence (van Mazijk, 2022a).

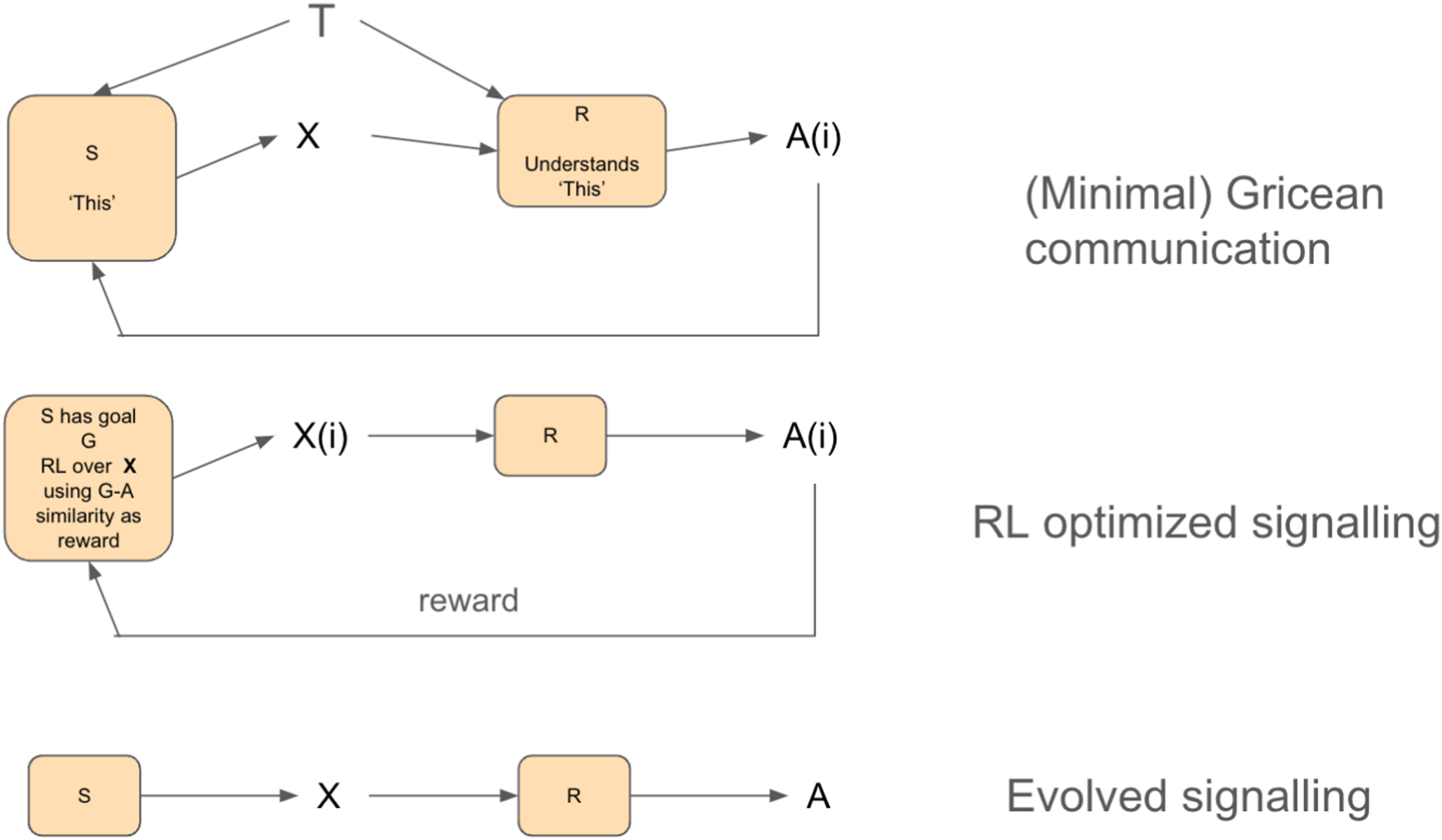

To achieve their communicative goal, senders can use increasingly sophisticated search algorithms for finding a good stimulus (ER) which, when presented, will achieve the desired behaviour in the receiver (perhaps but not necessarily by using knowledge of the receiver’s mental states). There are pre-Gricean, minimally Gricean, and fully Gricean algorithms for these kinds of interaction (Moore, 2021; Butterfill and Apperly, 2013; Bar-On, 2021). The ER perspective and the minimal neural machinery in Figure 1 impose logical constraints that determine how such trajectories of algorithms can evolve, for example, language must come prior to explicit (but not implicit) ToM (Moore, 2021). Figure 6 shows different kinds of ER-making processes that should be carefully distinguished, each one depending on increasingly complex abilities, with only the last one (top) being capable of developing a full propositional attitude, that is, of producing ERs that represent invisible causes such as mental states, allowing fully Gricean communication (Butterfill and Apperly, 2013). A plausible evolutionary trajectory of communicative algorithms from bottom to top.

7.1. Game type 1

Let us call the simplest algorithm in Figure 6 (bottom) evolved signalling. S (the evolved sender) produces an external representation X, which results in R (the evolved recipient) producing action A. To a first approximation, this describes most Carnapian animal signalling, such as the vocalizations of non-primates, bee dances (von Frisch, 1967), cicada mating songs, firefly flashes, and octopus colour changes (Bar-On, 2021). It corresponds to the bottom-left corner of Figure 5: in this type of communication game there is no exploratory learning in either sender or receiver. Evolution has ‘designed’ the signal X. Millikan would say that the signal’s proper function is communication, evolved by mutual adjustment between signallers and receivers over evolutionary time (Bar-On, 2021). No mind-reading is required: the signals need not be treated by their users as communicative to accomplish their purpose, and no thinking about others’ states of mind is needed. Cultural as well as genetic evolution may be the substrate for this kind of signalling, as in bowerbird decorative styles (Madden, 2008).
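A standard toy model of evolved signalling is a Lewis-style signalling game. The sketch below is our illustration rather than a model proposed in this paper, and it swaps in a simple genetic algorithm (truncation selection plus mutation) as the evolutionary process. Each hypothetical agent carries a sender map (state → signal) and a receiver map (signal → action) that are fixed for life; only selection across generations changes them, mirroring the point that evolution, not the animal, ‘designs’ X. The game size, population size, and mutation rate are arbitrary assumptions.

```python
import random

N = 2  # hypothetical game size: N world states, N signals, N actions

def success(sender, receiver):
    """Fraction of world states for which R's action matches the state."""
    return sum(receiver[sender[state]] == state for state in range(N)) / N

def fitness(agent, population):
    """Common-interest payoff averaged over all partners: half from sending,
    half from receiving. Agents do not learn within a lifetime."""
    send, recv = agent
    total = 0.0
    for other_send, other_recv in population:
        total += 0.5 * success(send, other_recv) + 0.5 * success(other_send, recv)
    return total / len(population)

def mutate(agent, rate, rng):
    """Each gene is redrawn at random with probability `rate`."""
    send, recv = agent
    send = tuple(rng.randrange(N) if rng.random() < rate else g for g in send)
    recv = tuple(rng.randrange(N) if rng.random() < rate else g for g in recv)
    return (send, recv)

def random_agent(rng):
    return (tuple(rng.randrange(N) for _ in range(N)),
            tuple(rng.randrange(N) for _ in range(N)))

def evolve(pop_size=40, generations=200, rate=0.02, seed=1):
    rng = random.Random(seed)
    population = [random_agent(rng) for _ in range(pop_size)]
    for _ in range(generations):
        ranked = sorted(population, key=lambda a: fitness(a, population), reverse=True)
        parents = ranked[: pop_size // 2]  # truncation selection
        children = [mutate(rng.choice(parents), rate, rng)
                    for _ in range(pop_size - len(parents))]
        population = parents + children
    return population

def mean_success(population):
    """Average success over every sender/receiver pairing in the population."""
    pairs = [(s, r) for s, _ in population for _, r in population]
    return sum(success(s, r) for s, r in pairs) / len(pairs)
```

A population in which senders pool (send one signal for every state) scores 0.5, so a value of `mean_success(evolve())` well above that indicates that a shared signalling system has been selected without any individual ever exploring or learning.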

7.2. Game type 2

A more complex process, in the middle of Figure 6, is reinforcement-learning-optimized signalling. S (a signaller capable of learning by RL) can search over different actions X(i) to induce R to produce a desired action A(i) that maximizes reward for S. Animals may try out a variety of actions to manipulate other animals in this way if they are intrinsically rewarded for doing so; the aim is the manipulation of the receiver for the sake of the sender (van Mazijk, 2022b). If S is motivated to direct R’s attention, as measured by joint gaze direction, then S may explore several attention-directing signals and test them for efficacy. Gestures (but less so vocalizations) are under voluntary control in non-human primates (Tomasello, 2008).
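From S’s side, game type 2 can be read as a multi-armed bandit problem. The sketch below assumes an epsilon-greedy learner with sample-average value estimates (one standard RL method, chosen here for brevity and not claimed to be the paper’s model), a receiver whose behaviour is fixed, and an intrinsic reward of 1 whenever joint attention is achieved. `ATTENTION_PROB` and its values are invented for illustration.

```python
import random

# Hypothetical probabilities that each candidate signal X(i) gets R to attend
# where S is attending (indices might stand for, e.g., a stare, a reach,
# a point, and a grunt). The numbers are made up.
ATTENTION_PROB = [0.1, 0.3, 0.8, 0.4]

def receiver_attends(signal, rng):
    """R's behaviour is fixed; only S learns."""
    return rng.random() < ATTENTION_PROB[signal]

def train_sender(trials=5000, epsilon=0.1, seed=0):
    rng = random.Random(seed)
    n = len(ATTENTION_PROB)
    q = [0.0] * n       # S's running estimate of the reward for each signal
    counts = [0] * n
    for _ in range(trials):
        if rng.random() < epsilon:
            signal = rng.randrange(n)  # explore a random signal
        else:
            best = max(q)              # exploit, breaking ties at random
            signal = rng.choice([i for i in range(n) if q[i] == best])
        # Intrinsic reward: 1 if joint attention was achieved, else 0
        reward = 1.0 if receiver_attends(signal, rng) else 0.0
        counts[signal] += 1
        q[signal] += (reward - q[signal]) / counts[signal]  # incremental sample average
    return q, counts

q, counts = train_sender()
preferred = max(range(len(q)), key=q.__getitem__)
```

After training, S mostly emits the signal that best captures R’s attention, yet nothing in the learner represents R’s mental states; it only tracks which of its own actions were rewarded.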

Eventually, a demonstrative may be invented, such as a pointing gesture, or an arbitrary sound meaning something like ‘this’ that stands in for a gesture. Such a demonstrative would have no specific referential content but would correlate strongly with internal mental states of the sender, such as their wanting the recipient to attend to the topic at hand (Bar-On, 2021).² In this way, the recipient may come to know that S intends them to make a response A(i) without requiring an explicit theory of mind, merely by tracking perceptual states that are correlated with intentions (Moore, 2017).

At this transitional stage, external representations would have been ‘practice-embedded’: lacking free availability, tied to specific contexts and shared practices, brittle in their reference, non-compositional, non-systematic, and lacking generality (van Mazijk, 2022b).

7.3. Game type 3

Game type 2 leads to a more complex and efficient process of communication at the top of Figure 6, which Moore has called minimally Gricean communication (Moore, 2017). Suppose S has the goal that R produces A(i), which will maximize S’s reward, and S knows that R produces A(i) in response to an externally observable event T. Through joint attention (possibly developed in game type 2), S can make R attend to T, because R knows that S intends them to attend to T when, for example, S utters the sound ‘this’ while pointing. At the same time (within the temporal context window of the neural world model), S produces the external representation X. R can then infer that S intended X to be associated with T. Consequently, A(i), once executed only in response to T, is now executed in response to X. X need not resemble T, since R is already practised with such arbitrary meanings from their background in bush reading.

Conventions (biases) also develop during ontogeny through associative learning about which properties of T (e.g. shape) X is typically about, as shown by investigations of the order of word learning in children (Crane, 2000). S can modify X or the joint attention process (for example, pointing) to make it more likely that R interprets X as meaning T. So far, only implicit theory of mind has been used. Interaction theory, described by Froese and Gallagher, operates at this dynamical-systems level of embodied sociality, showing that pre-Gricean processing can do much of the work of achieving joint attention and coordination (Froese and Gallagher, 2012).
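The association step of game type 3 can be sketched under loud simplifying assumptions: joint attention is taken as given (an ‘attend’ event), R’s inference that S intended X to be about T is reduced to counting co-occurrences inside a fixed temporal window, and the `Receiver` class, event format, window size, and example words are all hypothetical. Nothing in R represents S’s mental states, in line with the claim that only implicit theory of mind is used.

```python
from collections import defaultdict

WINDOW = 3  # hypothetical temporal context window, in time steps

class Receiver:
    """R in game type 3: joint attention plus associative learning only."""

    def __init__(self, responses):
        # Existing responses to observable topics T, e.g. {"water": "fetch_water"}
        self.responses = dict(responses)
        # assoc[X][T] counts co-occurrences of signal X with attended topic T
        self.assoc = defaultdict(lambda: defaultdict(int))

    def observe(self, episode):
        """episode: list of (time, kind, value) events, where kind is 'attend'
        (a topic T made jointly attended, e.g. by 'this' plus pointing) or
        'signal' (an arbitrary external representation X produced by S)."""
        topics = [(t, v) for t, kind, v in episode if kind == "attend"]
        signals = [(t, v) for t, kind, v in episode if kind == "signal"]
        for ts, x in signals:
            for tt, topic in topics:
                if abs(ts - tt) <= WINDOW:  # only pairs inside the window count
                    self.assoc[x][topic] += 1

    def respond(self, x):
        """Execute the action A(i) formerly reserved for T, now in response to X."""
        counts = self.assoc.get(x)
        if not counts:
            return None
        topic = max(counts, key=counts.get)
        return self.responses.get(topic)

r = Receiver({"water": "fetch_water", "snake": "flee"})
r.observe([(0, "attend", "water"), (1, "signal", "ba")])
r.observe([(10, "attend", "snake"), (11, "signal", "ku")])
r.observe([(20, "attend", "water"), (40, "signal", "ku")])  # outside window: ignored
```

Here `r.respond("ba")` yields the response formerly triggered only by water, although "ba" bears no resemblance to water.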

7.4. Game type 4

Building upon the minimal Gricean communication framework above, in game type 4, new Xs can be invented that stand for internal mental states such as emotions or beliefs. For example, S can pair X with conditions in which S feels thirsty, where T corresponds to one of many possible means by which S’s thirst could be allayed by R, and A(i) are actions on R’s part that would help to allay S’s thirst. This is the enactment of an intermediate variable in Whiten’s terms, which comes to express a desire or goal of S (Whiten, 2013, 2014), also called an avowal (Bar-On, 2004). The structural organization of the game is no different from the minimal Gricean setting. The only difference is that ‘thirst’ is a more general intermediate variable that refers to an internal state of S, rather than a specific external topic T.
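Structurally, game type 4 changes only what X is linked to. In the hypothetical sketch below, R’s world model routes a signal through an intermediate variable standing for an internal state of S (`"S_thirsty"`), which in turn connects to several means T and R’s corresponding actions A(i). All names, signals, and entries are invented for illustration and are not taken from the paper.

```python
# Hypothetical world model of R. The signal X maps to an intermediate
# variable (an internal state of S), not to a single external topic T.
SIGNAL_TO_STATE = {"ngaa": "S_thirsty"}

# Several means T by which that state could be allayed, each paired with an
# action A(i) that R could perform, in order of preference.
STATE_TO_ACTIONS = {
    "S_thirsty": [
        ("river nearby", "lead S to the river"),
        ("water skin in camp", "bring the water skin"),
        ("fruit in reach", "pick fruit"),
    ],
}

def respond(signal, available):
    """Return R's action for `signal`, given the set of currently available means."""
    state = SIGNAL_TO_STATE.get(signal)
    if state is None:
        return None  # unknown signal: no intermediate variable to route through
    for means, action in STATE_TO_ACTIONS.get(state, []):
        if means in available:
            return action
    return None  # the state is understood, but no means is at hand
```

In this structure, adding a new way of allaying thirst only adds one entry under `"S_thirsty"`; the signal itself does not have to be relearned for each means.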

Other Xs could come to mean the self; for example, a handprint could make R execute actions associated with having seen S, even when S is not currently present.³ Once communicative intent can be understood, increasingly sophisticated games can be invented, a game being defined as a goal and a set of constraints for play. By this stage, methods exist for teaching the meanings of arbitrary Xs, for example, the transmission of arbitrary rituals, which are found in all cultures and allow desirable outcomes to be achieved through obscure means (Demmrich, 2023).

An explicit theory of mind has now been developed because, through the enactment of intermediate variables, Xs have been created that refer to internal states in the sender, and full Gricean communication is possible. S and R can model, using their world model that includes these Xs, their own intentions and those of others. This is factic knowledge, not merely practical knowledge (Millikan, 2017). R can explicitly model what S wants when uttering X. This is possible because the ERs for ‘this’, ‘self’, ‘thirsty’, etc., have been invented and can be understood. The utterance X is produced with speaker meaning and interpreted as such (Bar-On, 2021). The speaker intends to communicate their state of mind to the recipient, and the recipient seeks to infer that state of mind. This is possible because both can represent states of mind using external representations.

Scott-Phillips (2015) takes a different view, proposing that language developed as an adaptation in a species already genetically adapted for ostensive-inferential communication, and thus already capable of mind-reading and of adopting the intentional stance. We claim instead that explicit ostensive-inferential communication arose only with linguistic capacities (Bar-On, 2021), but that simple, implicit, and embodied approximations to inferring communicative intent arose culturally before language and were needed to bootstrap it.

8. External representations and pretend play

Pretend play is fully Gricean, that is, when X comes to stand for T, it is known by sender and receiver that X is not T but is intended to be about T, and that S and R are pretending together that X stands for T in some mutually constructed, bracketed-off systematic map between domains (Bennett, 1996). One wonders how

We argue that other animals do not engage in fully Gricean pretend play because it requires that the recipient knows that the sender intends that X be about T, and that X is not merely about T by resemblance alone, that is, pretending is a language game rather than an art game.

Cats play with many objects that scaffold the same sensorimotor policies as mice do, for example, rubber bands, mittens, wadded-up post-it notes, and feet under blankets. But would it be fair to say that a cat pretends that the ball of wool is a mouse? Lorenz explains that the ball releases a fixed action pattern in the cat because it resembles a mouse (Schleidt, 1974). In line with this, the ball of wool does not release all the mousy action patterns; the cat does not eat it, it is hoped. The cat does not understand the principle of representation but is at the whim of the creator of the ‘material scaffold’.

Cats cannot pretend that any arbitrary thing is a mouse; there has to be a resemblance to a mouse for it to treat it as a mouse, whereas we can pretend

Similarly, when a cat play-fights, it is not pretending to fight by doing something else, like jumping or climbing; it is having a real, if not very serious, fight. To claim that a lion cub growling in a fight is pretending that the growl has aggressive intent is to claim that the cub knows aggressive intent to be the hidden cause of growls in fights, reasons that if it growls the recipient will infer aggressive intent, and decides to emit a real growl for this reason. If cubs were capable of such reasoning, they could be more Machiavellian in other respects too, demonstrating a higher level of manipulative reasoning, for example, pretending to be vulnerable, affectionate, or in distress to gain an advantage or control over a situation before attacking another lion.

Feigning is seen in various species, such as ground-nesting birds ‘pretending’ to have a broken wing to lure predators away from their nests, or possums playing dead (thanatosis, or ‘playing possum’) when threatened. However, these behaviours are instincts: it is evolution that has done the work of producing the pretence, not the animal. As far as we know, a lion cub does not even randomly search for stimuli that might deceive another lion cub in a play fight, a search that would not require any understanding of the opponent’s hidden mental states.

Neither do chimpanzees have a general capacity for inventing props to pretend with; that is, they don’t make performances or objects in the world that are intended to be