Abstract

Consensus formation is investigated for multi-agent systems in which agents’ beliefs are both vague and uncertain. Vagueness is represented by a third truth state meaning borderline. This is combined with a probabilistic model of uncertainty. A belief combination operator is then proposed, which exploits borderline truth values to enable agents with conflicting beliefs to reach a compromise. A number of simulation experiments are carried out, in which agents apply this operator in pairwise interactions, under the bounded confidence restriction that the two agents’ beliefs must be sufficiently consistent with each other before agreement can be reached. As well as studying the consensus operator in isolation, we also investigate scenarios in which agents are influenced either directly or indirectly by the state of the world. For the former, we conduct simulations that combine consensus formation with belief updating based on evidence. For the latter, we investigate the effect of assuming that the closer an agent’s beliefs are to the truth, the more visible they are in the consensus building process. In all cases, applying the consensus operator results in the population converging to a single shared belief that is both crisp and certain. Furthermore, simulations that combine consensus formation with evidential updating converge more quickly, and to a shared opinion that is closer to the actual state of the world, than those in which beliefs are changed only as a result of directly receiving new evidence. Finally, if agent interactions are guided by belief quality, measured as similarity to the true state of the world, then applying the consensus operator alone results in the population converging to a high-quality shared belief.

1 Introduction

Reaching an agreement by identifying a position or viewpoint that can ultimately be accepted by a significant proportion of the individuals in a population is a fundamental part of many multi-agent decision making and negotiation scenarios. In human interactions, opinions can take the form of vague propositions with explicitly borderline truth values, i.e. where the proposition is neither absolutely true nor absolutely false (Keefe & Smith, 2002). Indeed, a number of recent studies (Balenzuela, Pinasco, & Semeshenko, 2015; Crosscombe & Lawry, 2015; de la Lama, Szendro, Iglesias, & Wio, 2012; Perron, Vasudevan, & Vojnovic, 2009; Vazquez & Redner, 2004) have suggested that the presence of an intermediate truth state of this kind can play a positive role in opinion dynamics by allowing compromise and hence facilitating convergence to a shared viewpoint.

In addition to vagueness, individuals often have uncertain beliefs, owing to the limited and imperfect evidence that they have available to them about the true state of the world. In this paper, we propose a model of belief combination by which two independent agents can reach a consensus between distinct and, to some extent, conflicting opinions that are both uncertain and vague. We show that, in an agent-based system, iteratively applying this operator under a variety of conditions results in the agents converging on a single opinion which is both crisp (i.e. non-vague) and certain.

However, beliefs are not arrived at only as the result of consensus building within a closed system; they are also influenced by the actual state of the world. This influence can arise both through agents updating their beliefs in light of evidence and through their receiving different levels of payoff for decisions and actions taken on the basis of their beliefs. In this paper, we model both of these processes in combination with consensus formation. We consider the case in which a population of agents interact continually at random, forming consensus where appropriate, but occasionally receiving direct information about the state of the world. Defining a measure of belief quality that takes account of the similarity between an agent’s belief and the true state of the world, we then record this quality measure in simulations that combine both consensus building and belief updating from evidence, and compare these with simulations in which only evidence-based updating occurs. In these studies, we observe that combining evidence-based updating and consensus building results in faster convergence to higher quality beliefs than when beliefs are only changed as a result of receiving new evidence. This would seem to offer some support for the hypothesis put forward by Douven and Kelp (2011) that scientists may gain by taking account of each other’s opinions as well as by considering direct evidence.

In addition to direct evidence, there are also indirect mechanisms by which agents receive feedback on the quality of beliefs. For example, when agents make decisions and take actions based on their beliefs, they may receive some form of reward or payoff. In such cases, it is reasonable to assume that the higher the quality of an agent’s beliefs, i.e. the closer the beliefs are to the true state of the world, the higher the payoff that the agent will receive on average. Here we investigate a scenario in which the quality of an agent’s beliefs influences their visibility in the consensus building process. This is studied in simulation experiments in which interactions between agents are guided by the quality of their beliefs, so that individuals holding higher quality opinions are more likely to be selected to combine their beliefs.

The remainder of the paper is structured as follows. The next section gives an overview of related work in this area. Then we introduce a propositional model of belief that incorporates both vagueness and uncertainty, and propose a combination operator for generating a compromise between two distinct beliefs. A set of simulation experiments is then described, in which agents interact at random and apply the combination operator, provided that they hold sufficiently consistent beliefs. Next, we combine random agent interactions and consensus formation with belief updating, based on direct evidence about the true state of the world. After this, we describe simulation experiments in which agent interactions are dependent on the quality of their beliefs. Finally, we present some conclusions and discuss possible future directions.

2 Background and related work

A number of studies in the opinion dynamics literature exploit a third truth state to aid convergence and also to mitigate the effect of a minority of highly opinionated individuals. For example, de la Lama et al. (2012) and Vazquez and Redner (2004) study scenarios in which interactions only take place between agents with a clear viewpoint and undecided agents. Alternatively, Balenzuela et al. (2015) define the three truth states by applying a partitioning threshold to an underlying real value. Updating is pairwise between agents and takes place incrementally on the real values, except that the magnitude and sign of the increments depend on the current truth states of the agents involved. An alternative pairwise three-valued operator is proposed by Perron et al. (2009), and is applied directly to truth states. In particular, this operator assigns the third truth state as a compromise between two opinions with strictly opposing truth values. The logical properties of this operator and its relationship to other similar aggregation functions are investigated by Lawry and Dubois (2012). For a language with a single proposition and assuming unconstrained random interactions between individuals, Perron et al. prove convergence to a single shared Boolean opinion. This framework is extended by Crosscombe and Lawry (2015) to languages with multiple propositions and to include a form of bounded confidence (see Hegselmann & Krause, 2002), in which interactions only take place between individuals with sufficiently consistent opinions. Crosscombe and Lawry (2015) also investigate convergence when the selection of agents is guided by a measure of the quality of their opinions, and show that the average quality of opinions across the population is higher at steady state than at initialization.

One common feature of most of these studies is that, either explicitly or implicitly, they interpret the third truth value as meaning ‘uncertain’ or ‘unknown’. In contrast, as stated in our introduction, we intend the middle truth value to refer to borderline cases resulting from the underlying vagueness of the language. So, for example, given the proposition ‘Ethel is short’, the intermediate truth value means that Ethel’s height is on the borderline between short and not short, rather than meaning that Ethel’s height is unknown. This approach allows us to distinguish between vagueness and uncertainty, so that, for instance, based on their knowledge of Ethel’s height, agents could be certain that she is borderline short. A more detailed analysis of the difference between these two possible interpretations of the third truth state is given by Ciucci, Dubois, and Lawry (2014).

The idea of bounded confidence (Deffuant, Amblard, Weisbuch, & Faure, 2002; Hegselmann & Krause, 2002) has been proposed as a mechanism by which agents limit their interactions with others, so that they only combine their beliefs with individuals holding opinions that are sufficiently similar to their current view. A version of bounded confidence is also used in our proposed model, where agents each measure the relative inconsistency of their beliefs with those of others, and are then only willing to combine beliefs with agents whose inconsistency measure is below a certain threshold.

The aggregation of uncertain beliefs in the form of a probability distribution over some underlying parameter has been widely studied, with work on opinion pooling dating back to Stone (1961) and DeGroot (1974). Usually the aggregate of a set of opinions takes the form of a weighted linear combination of the associated probability distributions. However, the convergence of alternative opinion pooling functions has been studied by Hegselmann and Krause (2005), and axiomatic characterizations of different operators are given by Dietrich and List (2016). All of these approaches assume Boolean truth states; indeed, there are very few studies in this context that combine probability with a three-valued truth model. One such study is that of Cho and Swami (2014), who adopt a model of beliefs in the form of Dempster–Shafer functions. The combination operators proposed by Cho and Swami, however, differ considerably from those described in this paper and result in different limiting behaviour. The operator investigated in this paper was first proposed by Lawry and Dubois (2012) as an extension of the approach of Perron et al. (2009) to take account of probabilistic uncertainty; to our knowledge, it has not, up to this point, been studied in an agent-based setting. Hence, in contrast with the work of Lawry and Dubois (2012), the focus of this paper is on the system-level behaviour of the proposed operator rather than on its theoretical properties.

3 A consensus operator for vague and uncertain beliefs

We consider a simple language consisting of n propositions

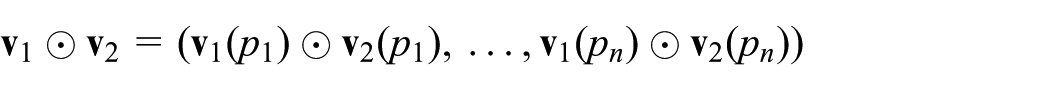

Truth table for the consensus operator.

The intuition behind the operator is as follows: in the case that the two agents disagree, if one agent has allocated a non-borderline truth value to

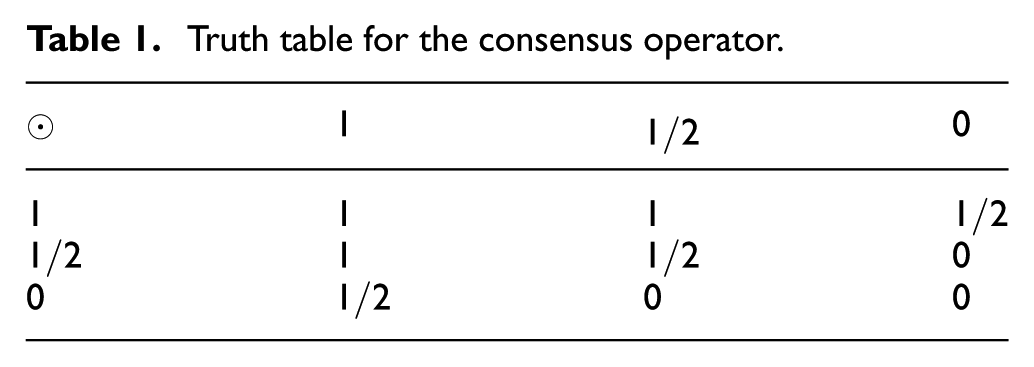

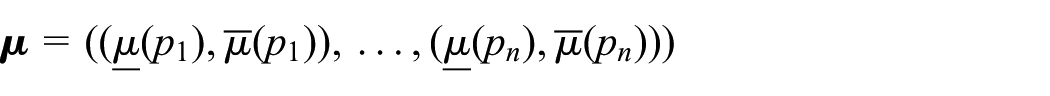

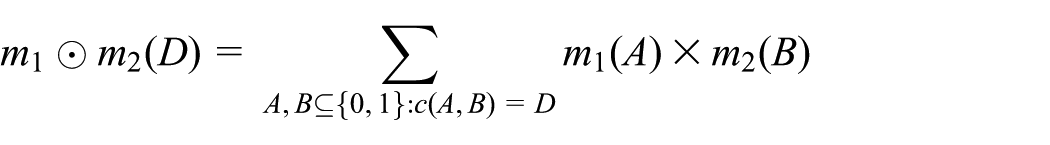

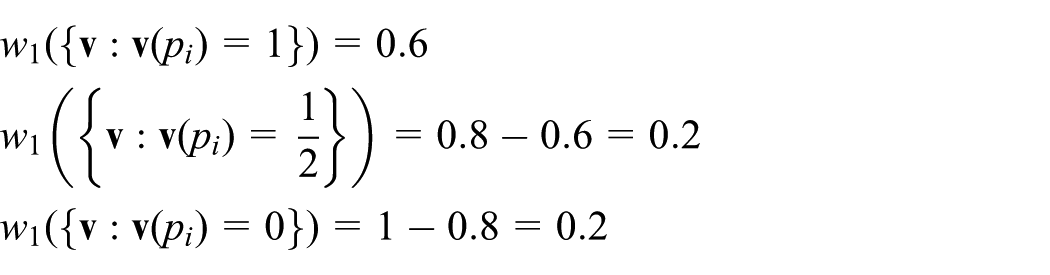

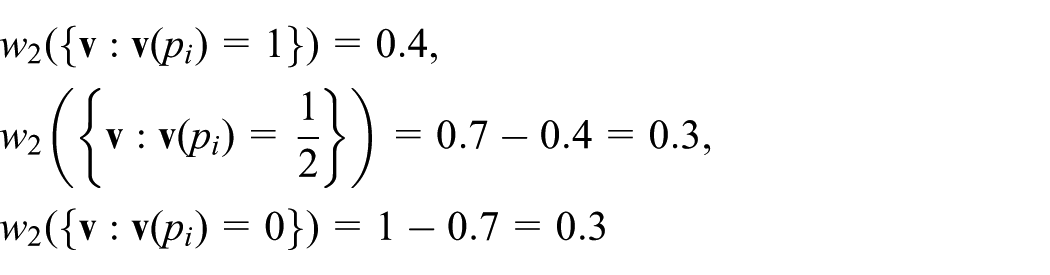
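As a minimal sketch of the valuation-level operator ⊙, the Python below encodes the behaviour attributed to the operator of Perron et al. (2009) in Section 2 and in the intuition for Table 1: agreement is preserved, a borderline value gives way to a crisp (non-borderline) one, and strictly opposing values 0 and 1 compromise on the borderline value 1/2. Encoding truth values as the floats 0.0, 0.5 and 1.0, and valuations as tuples indexed by proposition, is our own assumption rather than the paper's notation.

```python
from itertools import product

# Assumed encoding of the three truth states: false, borderline, true.
TRUTH_VALUES = (0.0, 0.5, 1.0)


def consensus_value(a: float, b: float) -> float:
    """Three-valued consensus a ⊙ b for a single proposition."""
    if a == b:
        return a  # agreement is preserved
    if {a, b} == {0.0, 1.0}:
        return 0.5  # strictly opposing values compromise on borderline
    # Exactly one of a, b is borderline: adopt the crisp value.
    return b if a == 0.5 else a


def consensus_valuation(v1: tuple, v2: tuple) -> tuple:
    """Apply ⊙ proposition by proposition to two valuations."""
    return tuple(consensus_value(a, b) for a, b in zip(v1, v2))


# Print the full truth table of the single-proposition operator.
for a, b in product(TRUTH_VALUES, repeat=2):
    print(a, b, "->", consensus_value(a, b))
```

One consequence of this table is that 1/2 acts as an identity element for ⊙: an agent that is borderline on a proposition simply adopts its partner's value for it.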

Here we extend this model to allow agents to hold opinions that are uncertain as well as vague. More specifically, an integrated approach to uncertainty and vagueness is adopted, in which an agent’s belief is characterized by a probability distribution w over

and

The probability of each of the possible truth values for a proposition

Here we let

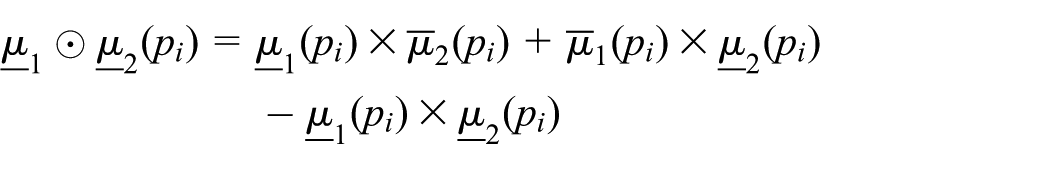

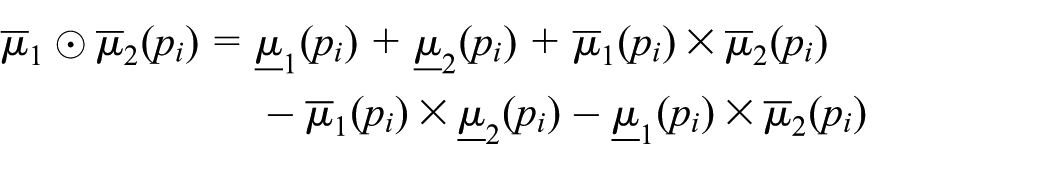

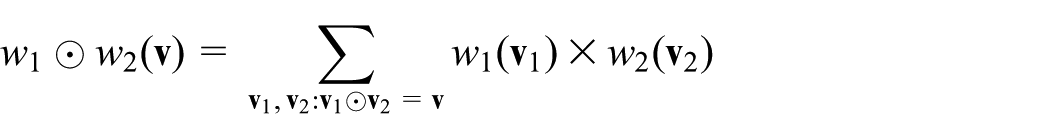

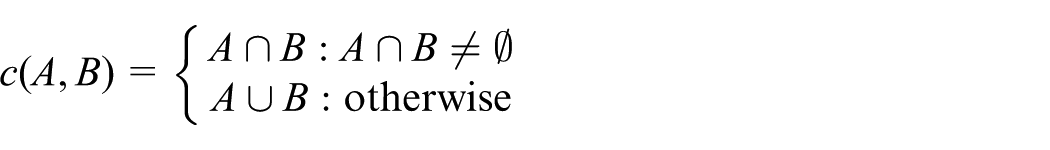

The following definition extends the consensus operation ⊙ from three-valued valuations to this more general representation framework.

where

and

If

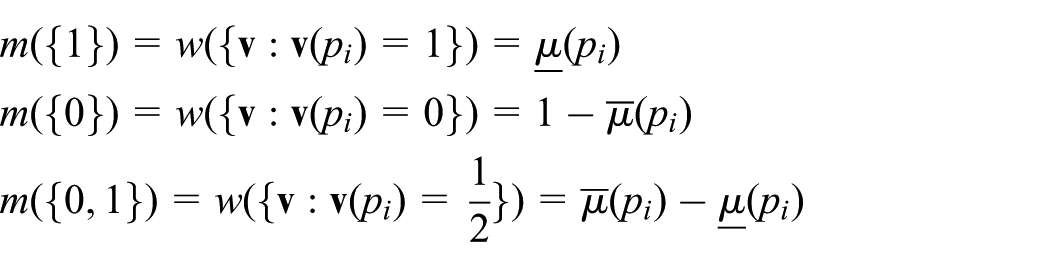

In other words, assuming that the two agents are independent, all pairs of valuations supported by the two agents are combined using the consensus operator for valuations and then aggregated. Interestingly, this operator can be reformulated as a special case of the union combination operator in Dempster–Shafer theory (see Shafer, 1976) proposed by Dubois and Prade (1988). To see this, notice that, given a probability distribution w on

In this reformulation then, the lower and upper measures

Then the combination of

The belief and plausibility of

and

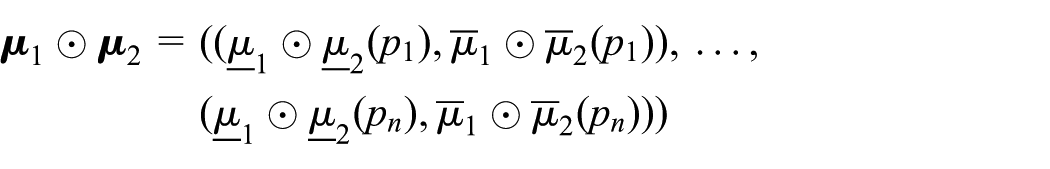

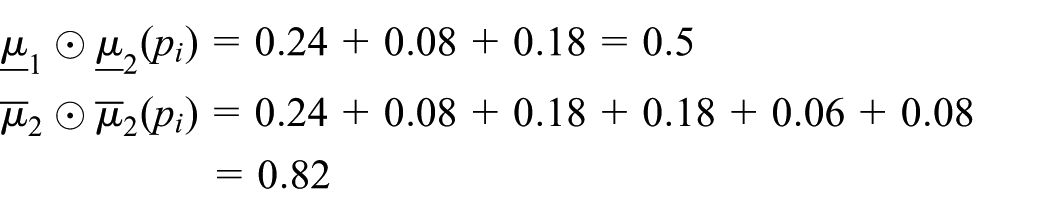

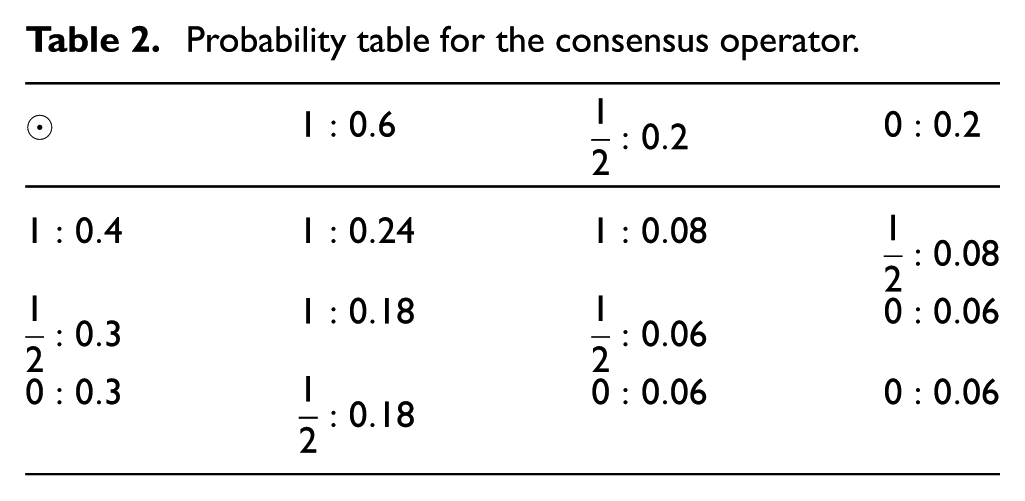

From this, we can generate a probability table (Table 2). Here, the corresponding truth values are generated as in Table 1 and the probability values in each cell are the product of the associated row and column probability values. From this table, we can then determine the consensus belief in

Probability table for the consensus operator.
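The reading of Definition 1 given above (independent agents; every pair of supported valuations combined with ⊙; probabilities aggregated over pairs that yield the same valuation) and the cell-by-cell products of the probability table can be illustrated with the following sketch. It assumes a belief w is stored as a Python dict mapping valuation tuples to probabilities, and the single-proposition numbers are illustrative only; they are not taken from the paper.

```python
from collections import defaultdict
from itertools import product


def consensus_value(a, b):
    # Three-valued ⊙ (assumed encoding 0.0 / 0.5 / 1.0), repeated here
    # so that the sketch is self-contained.
    if a == b:
        return a
    if {a, b} == {0.0, 1.0}:
        return 0.5
    return b if a == 0.5 else a


def combine_beliefs(w1, w2):
    """Combine beliefs w1, w2 (dict: valuation tuple -> probability).

    Assuming independence, each pair (v1, v2) contributes w1(v1) * w2(v2)
    to the valuation obtained by applying ⊙ proposition by proposition.
    """
    combined = defaultdict(float)
    for (v1, p1), (v2, p2) in product(w1.items(), w2.items()):
        v = tuple(consensus_value(a, b) for a, b in zip(v1, v2))
        combined[v] += p1 * p2
    return dict(combined)


# Single-proposition example in the style of the probability table
# (hypothetical numbers).
w1 = {(1.0,): 0.6, (0.5,): 0.3, (0.0,): 0.1}
w2 = {(1.0,): 0.2, (0.5,): 0.2, (0.0,): 0.6}
w = combine_beliefs(w1, w2)
print(w)
```

For these inputs the combined belief is approximately 0.30 on 1, 0.44 on 1/2 and 0.26 on 0. Most of the borderline mass comes from the strictly opposing pair (1, 0), whose cell has probability 0.6 × 0.6 = 0.36.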

We now introduce three measures that will subsequently be used to analyse the behaviour of multi-agent systems applying the operator given in Definition 1.

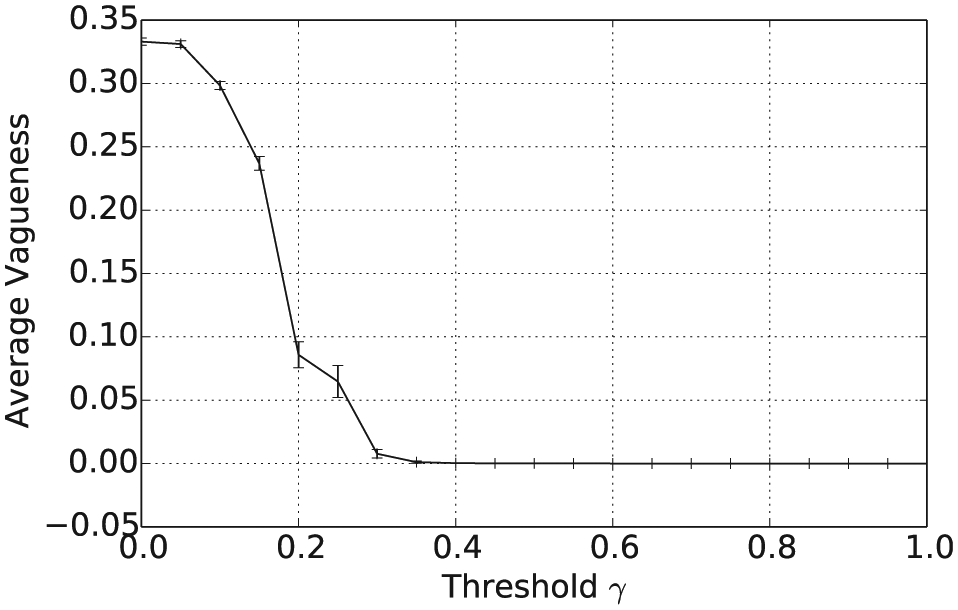

The degree of vagueness of the belief

Definition 2 is simply the probability of the truth value

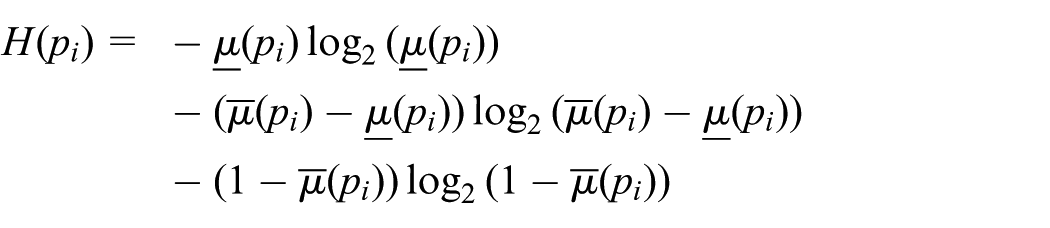

The entropy of the belief

where

Definition 3 corresponds to the entropy of the marginal distributions on

The most certain beliefs then correspond to those for which for every proposition

The degree of inconsistency of two beliefs

Definition 4 is the probability of a direct conflict between the two agents’ beliefs, i.e. of agent 1 allocating the truth value 1 and agent 2 the truth value 0, or vice versa, averaged across all n propositions.
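The three measures can be sketched in Python as below, again storing a belief as a dict from valuation tuples to probabilities. The inconsistency function follows the description of Definition 4 in the text and computes the conflict probability from each proposition's marginals, under the same independence assumption as Definition 1. For Definitions 2 and 3, whose formulas are not reproduced here, we assume the same averaging over the n propositions, and the logarithm `base` in `entropy` is a hypothetical parameter; the paper's normalization may differ.

```python
import math
from collections import defaultdict


def marginal(w, i):
    """Marginal distribution over {0, 1/2, 1} for proposition i under w."""
    m = defaultdict(float)
    for v, p in w.items():
        m[v[i]] += p
    return m


def vagueness(w, n):
    # Assumption: Definition 2 averages P(p_i is borderline) over propositions.
    return sum(marginal(w, i)[0.5] for i in range(n)) / n


def entropy(w, n, base=2.0):
    # Assumption: Definition 3 averages the entropy of each marginal;
    # the choice of base is hypothetical.
    total = 0.0
    for i in range(n):
        total -= sum(p * math.log(p, base) for p in marginal(w, i).values() if p > 0)
    return total / n


def inconsistency(w1, w2, n):
    # Definition 4 as described: probability that one agent assigns 1 and
    # the other 0 to a proposition, averaged over the n propositions.
    total = 0.0
    for i in range(n):
        m1, m2 = marginal(w1, i), marginal(w2, i)
        total += m1[1.0] * m2[0.0] + m1[0.0] * m2[1.0]
    return total / n
```

A belief that places all of its mass on the all-borderline valuation has vagueness 1 but entropy 0. This matches the distinction drawn in Section 2 between being certain that a case is borderline and being uncertain about it.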

4 Simulation experiments with random selection of agents

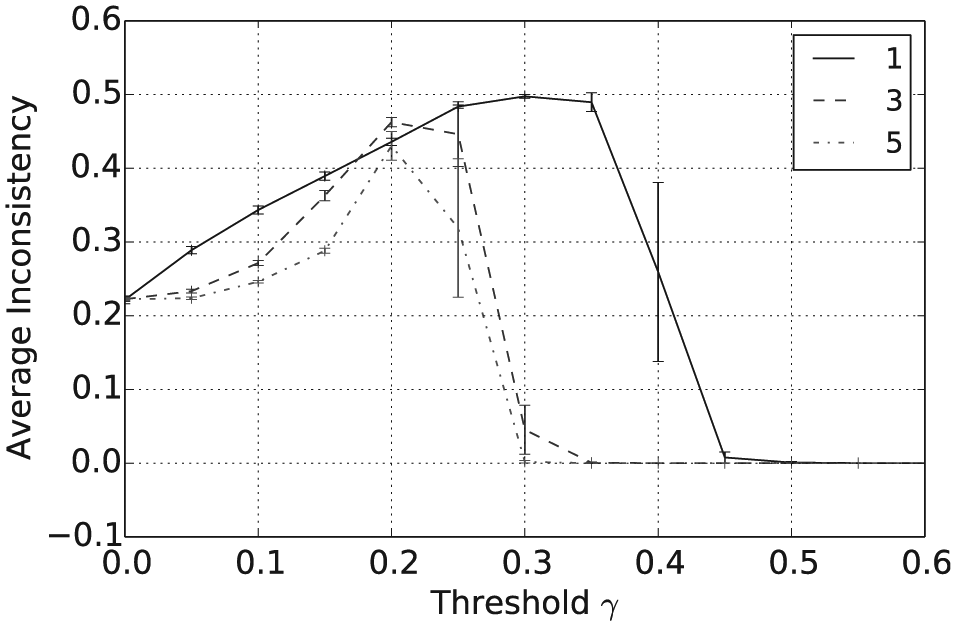

We now describe simulation experiments in which pairs of agents are selected to interact at random. A model of bounded confidence is used, under which, for each selected pair of agents, the consensus operation (Definition 1) is applied if and only if the measure of inconsistency between their beliefs, as given in Definition 4, does not exceed a threshold parameter

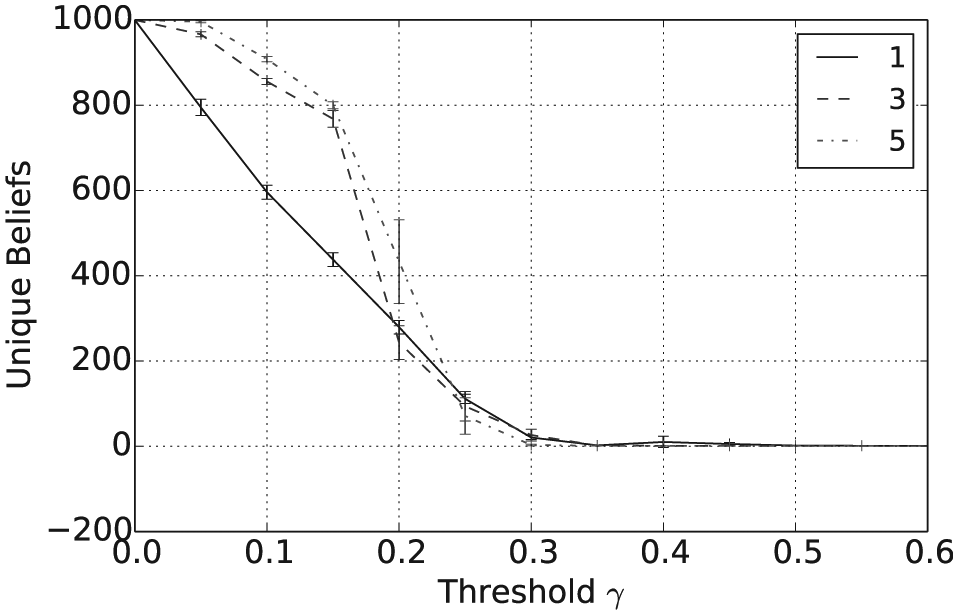

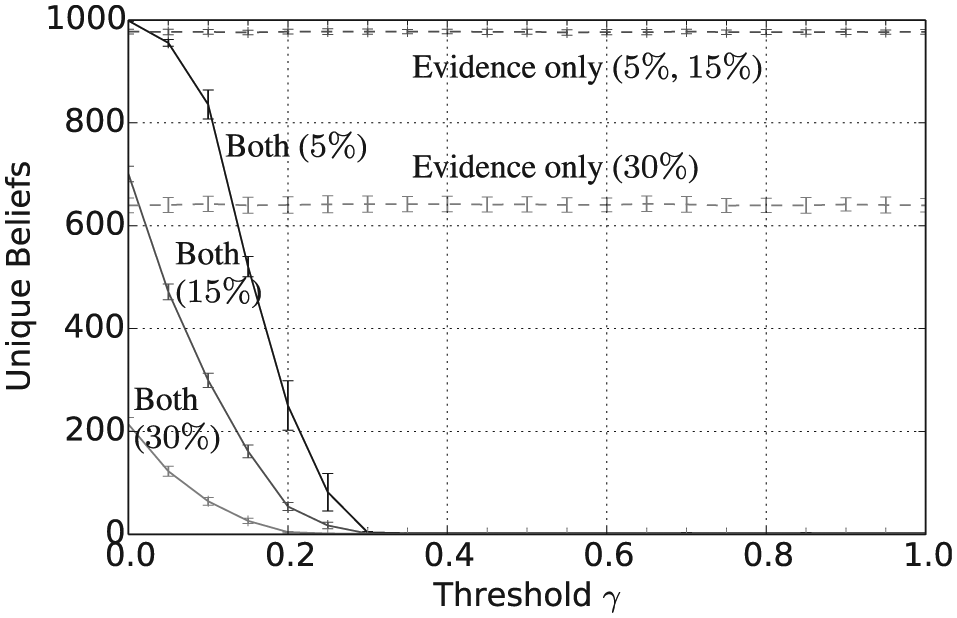

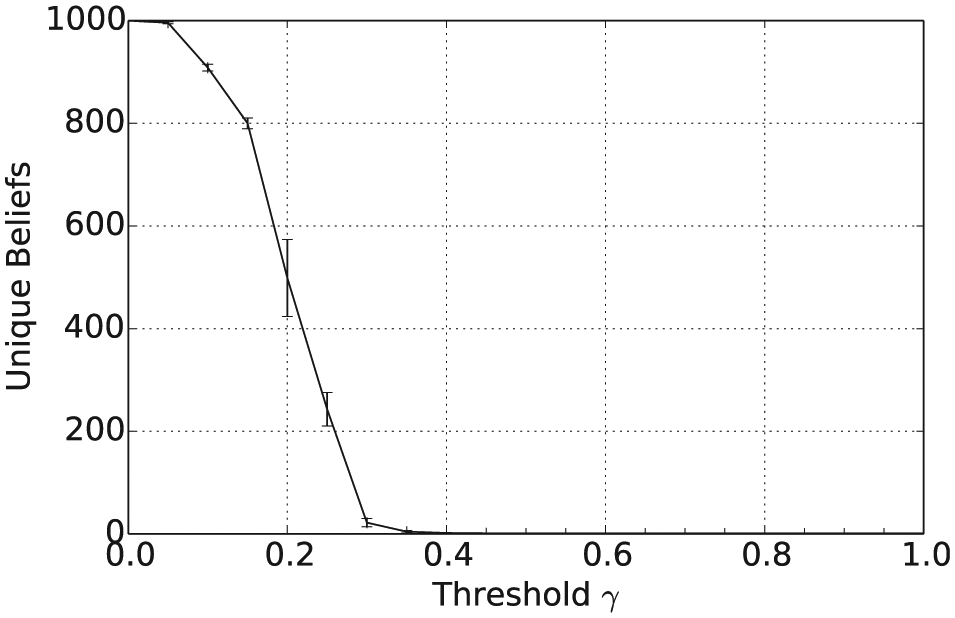
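One iteration of the random-interaction model under bounded confidence might look like the sketch below: two distinct agents are drawn uniformly at random, and the consensus operation is applied if and only if their inconsistency (Definition 4) does not exceed the threshold. The parameter name `threshold`, the function `interaction_step`, and the choice that both agents adopt the combined belief are our own assumptions for illustration.

```python
import random
from collections import defaultdict
from itertools import product


def consensus_value(a, b):
    # Three-valued ⊙ on the assumed encoding 0.0 / 0.5 / 1.0.
    if a == b:
        return a
    if {a, b} == {0.0, 1.0}:
        return 0.5
    return b if a == 0.5 else a


def combine_beliefs(w1, w2):
    # Definition 1 for independent agents: combine all valuation pairs.
    out = defaultdict(float)
    for (v1, p1), (v2, p2) in product(w1.items(), w2.items()):
        out[tuple(consensus_value(a, b) for a, b in zip(v1, v2))] += p1 * p2
    return dict(out)


def inconsistency(w1, w2, n):
    # Probability of a direct 1-versus-0 conflict, averaged over propositions.
    def marg(w, i, t):
        return sum(p for v, p in w.items() if v[i] == t)

    return sum(
        marg(w1, i, 1.0) * marg(w2, i, 0.0) + marg(w1, i, 0.0) * marg(w2, i, 1.0)
        for i in range(n)
    ) / n


def interaction_step(beliefs, n, threshold, rng=random):
    """One random pairwise interaction; returns True if beliefs were combined."""
    i, j = rng.sample(range(len(beliefs)), 2)
    if inconsistency(beliefs[i], beliefs[j], n) > threshold:
        return False  # too inconsistent: no agreement under bounded confidence
    # Assumption: both agents replace their beliefs with the consensus.
    beliefs[i] = beliefs[j] = combine_beliefs(beliefs[i], beliefs[j])
    return True
```

With `threshold = 1` the constraint never binds, whereas smaller values block combination between strongly conflicting agents; varying this threshold is the kind of trade-off explored in this section.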

Figure 1 shows that the mean number of unique beliefs after

Number of unique beliefs after

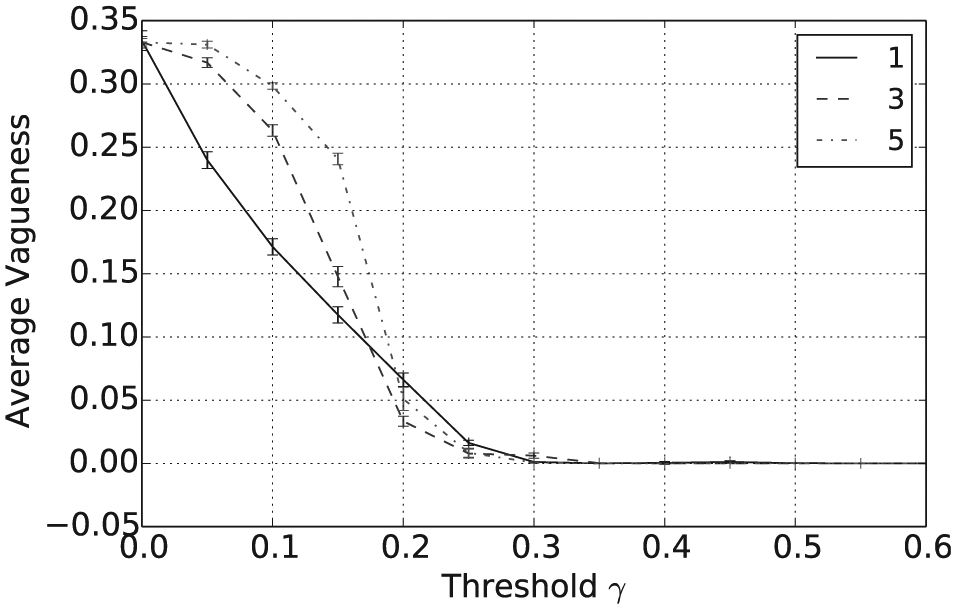

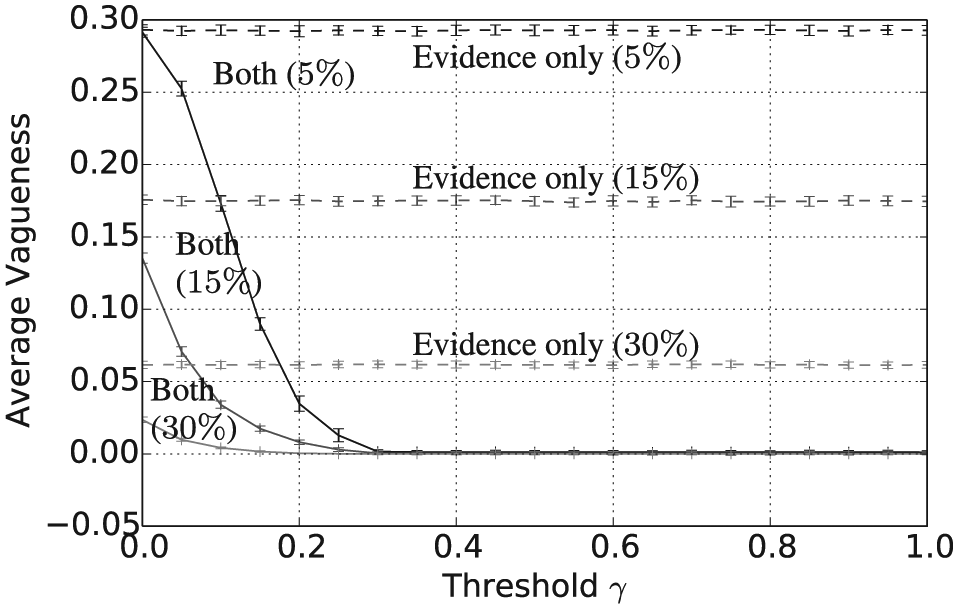

Average vagueness after

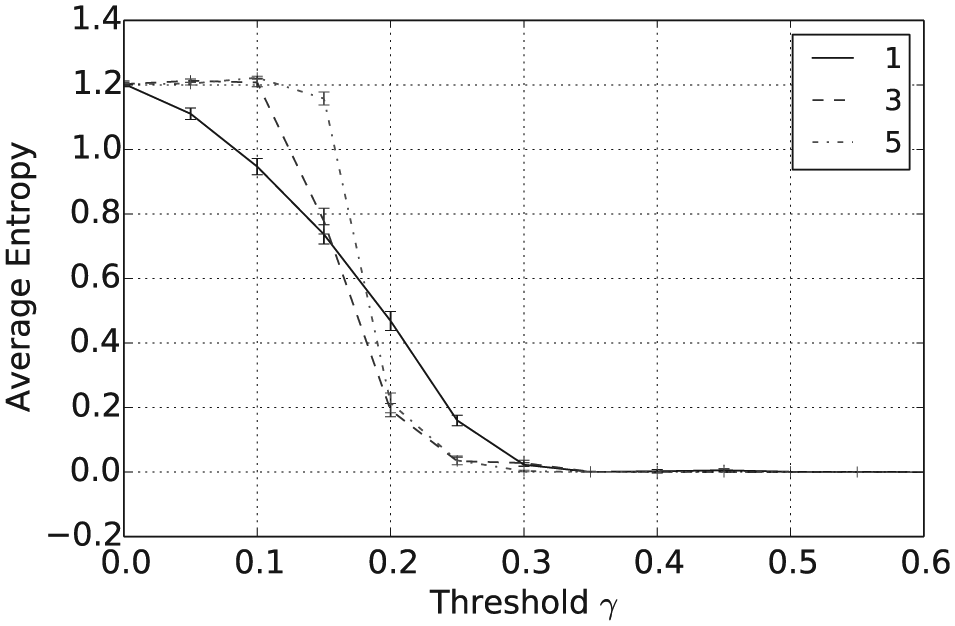

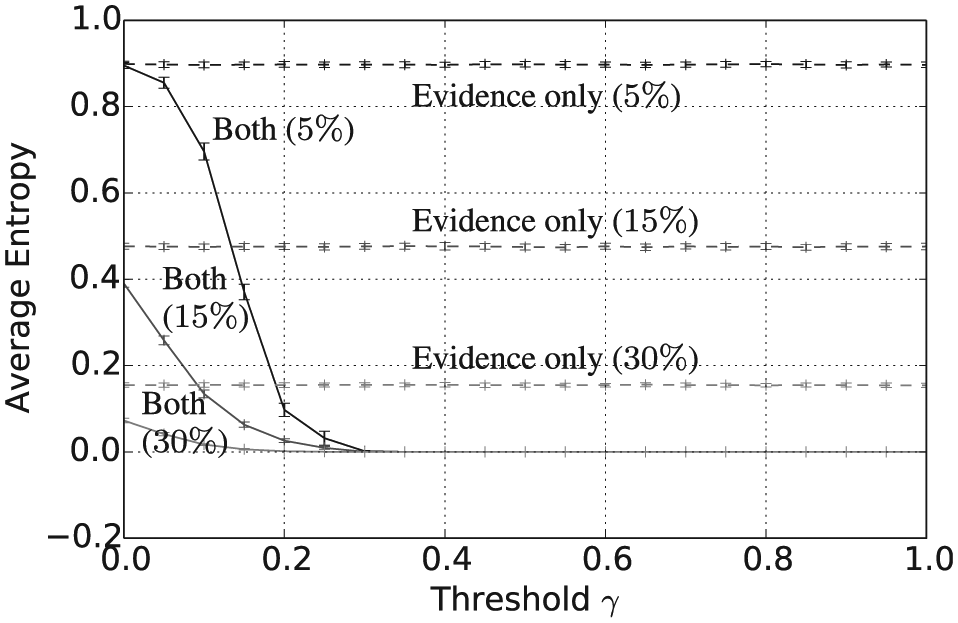

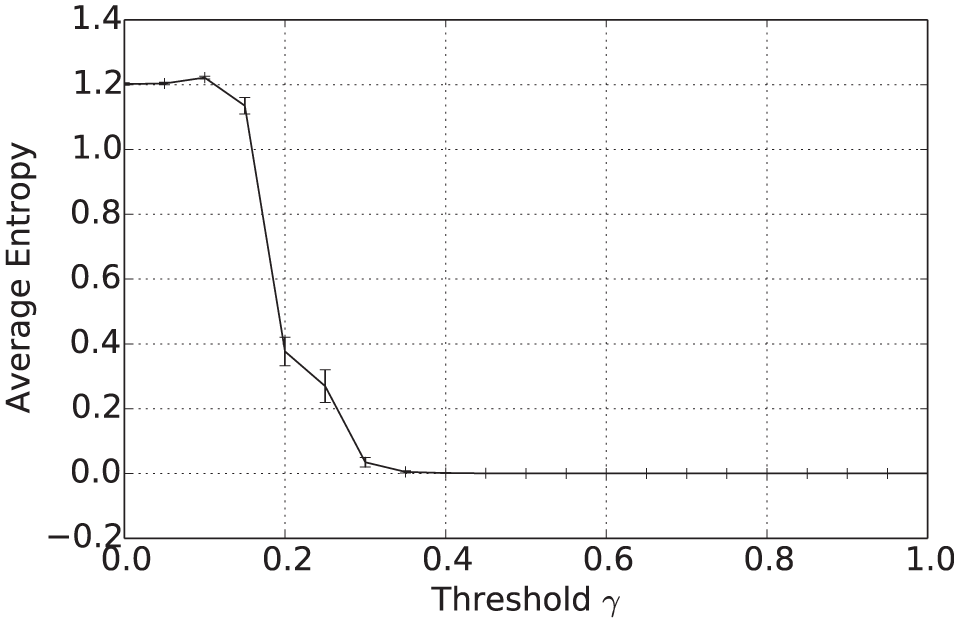

Average entropy after

In addition to the overall consensus reached between agents when

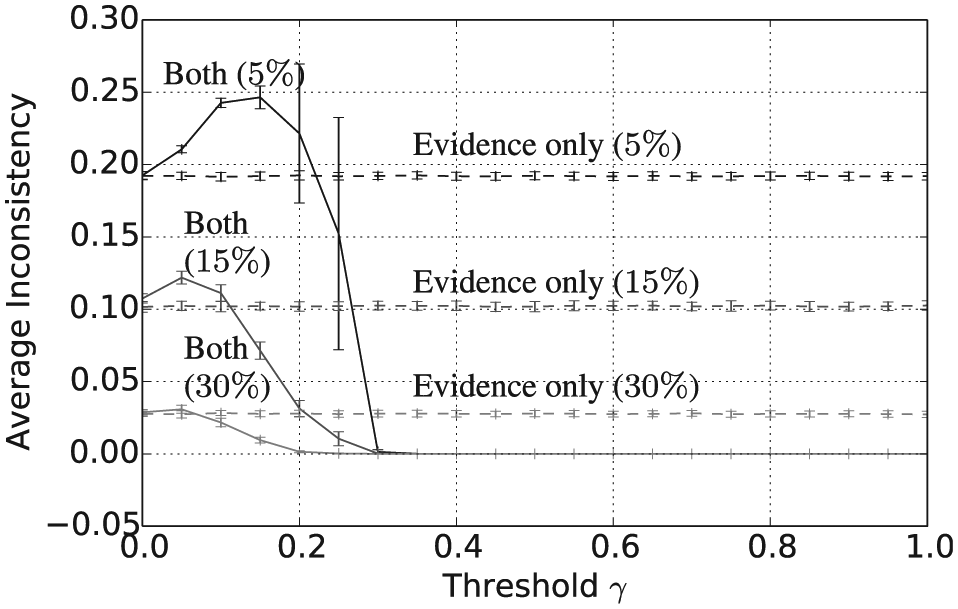

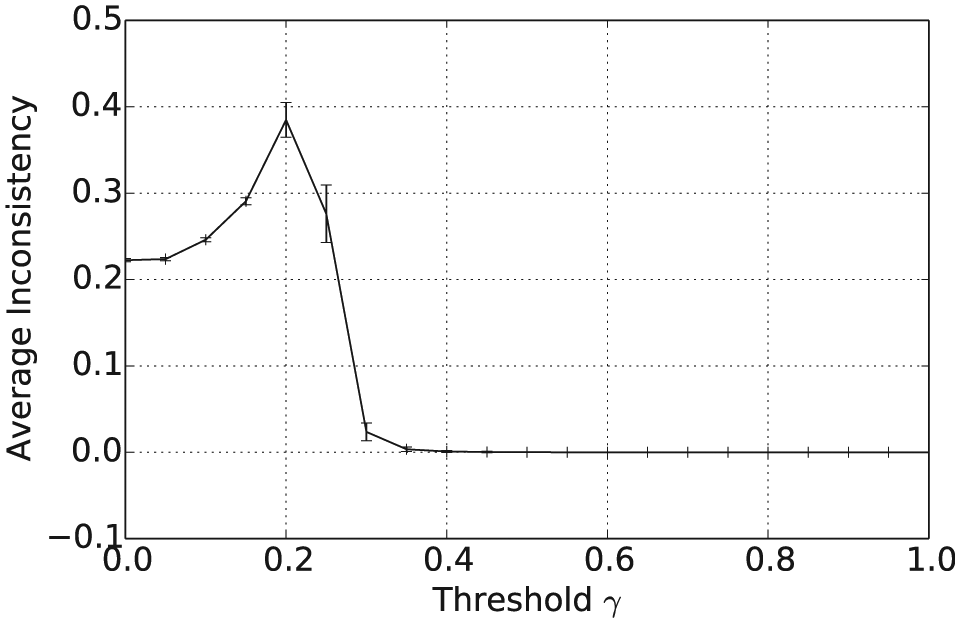

Average pairwise inconsistency after

5 Simulation experiments involving consensus formation and belief updating

Hegselmann and Krause (2005) investigated an opinion model in which agents receive direct evidence about the state of the world, perhaps from an ongoing measurement process, as well as pooling the opinions of others with similar beliefs. Their original model involved real-valued beliefs but has been adapted by Riegler and Douven (2009) to the case in which beliefs and evidence are theories in a propositional logic language. The fundamental question under consideration is whether, or to what extent, dialogue between individuals, for example scientists, helps them to find the truth, or whether they are instead better off simply waiting until they receive direct evidence. In this section, we investigate this question in the context of vague and uncertain beliefs, where consensus building is modelled using the combination operator in Definition 1. Direct evidence is then provided to the population at random instants, when individuals are told the truth value of a proposition. These agents then update their beliefs by adopting a compromise position between their previous opinions and the evidence provided.

We assume that the true state of the world is a Boolean valuation

Let

Notice that

corresponding to agents’ expected payoff from their beliefs about proposition

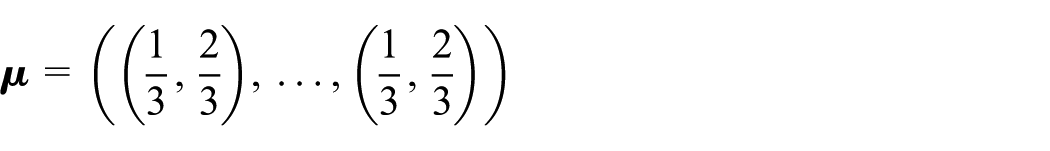

The simulations consist of 1000 agents with beliefs initially picked at random from

In other words, the agent adopts a new set of beliefs formed as a compromise between previously held beliefs and the evidence, the latter being interpreted as a set of beliefs where

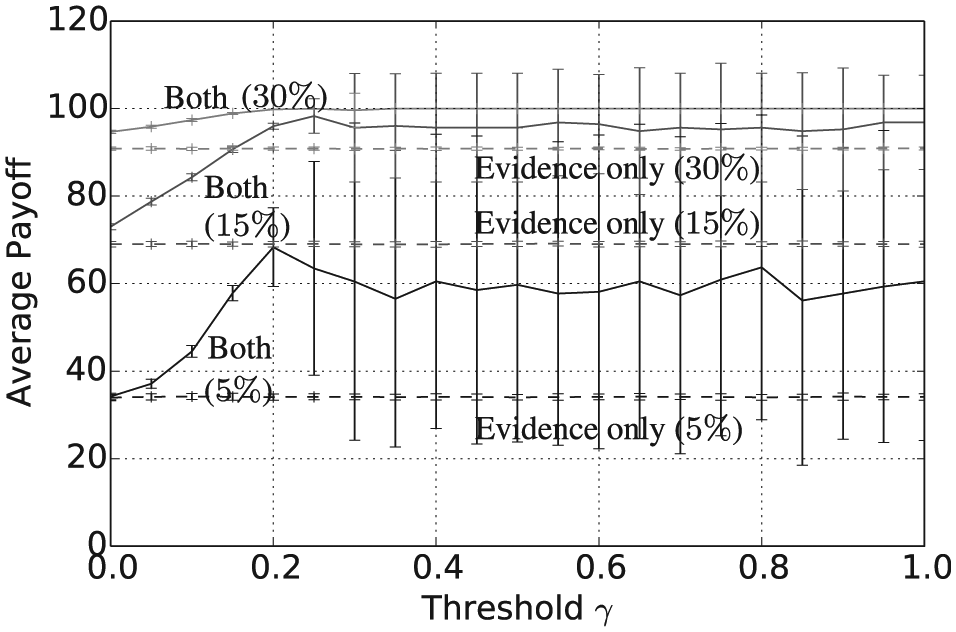
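The evidential update can be sketched under an explicit assumption: evidence about proposition i is encoded as a belief that assigns probability 1 to a single valuation giving proposition i its true value and leaving every other proposition borderline, and the agent's new belief is the consensus (Definition 1) of its previous belief with this evidence. Because the borderline value is an identity element for ⊙, such an update leaves the other propositions unchanged. The helpers `evidence_belief` and `update_on_evidence` are hypothetical names, not the paper's.

```python
from collections import defaultdict
from itertools import product


def consensus_value(a, b):
    # Three-valued ⊙ on the assumed encoding 0.0 / 0.5 / 1.0.
    if a == b:
        return a
    if {a, b} == {0.0, 1.0}:
        return 0.5
    return b if a == 0.5 else a


def combine_beliefs(w1, w2):
    # Definition 1 for independent agents: combine all valuation pairs.
    out = defaultdict(float)
    for (v1, p1), (v2, p2) in product(w1.items(), w2.items()):
        out[tuple(consensus_value(a, b) for a, b in zip(v1, v2))] += p1 * p2
    return dict(out)


def evidence_belief(true_state, i):
    """Hypothetical evidence encoding: true value for proposition i, 1/2 elsewhere."""
    v = tuple(true_state[k] if k == i else 0.5 for k in range(len(true_state)))
    return {v: 1.0}


def update_on_evidence(w, true_state, i):
    """Compromise between the previous belief w and evidence about proposition i."""
    return combine_beliefs(w, evidence_belief(true_state, i))


# An agent certain that the first proposition is false receives evidence
# that it is true (true_state is a Boolean valuation).
w = update_on_evidence({(0.0, 1.0): 1.0}, (1.0, 1.0), 0)
print(w)
print(update_on_evidence(w, (1.0, 1.0), 0))
```

Under these assumptions, a single observation moves a confidently false belief only to borderline, and a second observation is needed to make it true, which is one way of reading the update as a compromise between prior belief and evidence.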

In this section, we focus on evidence rates of

Number of unique beliefs after

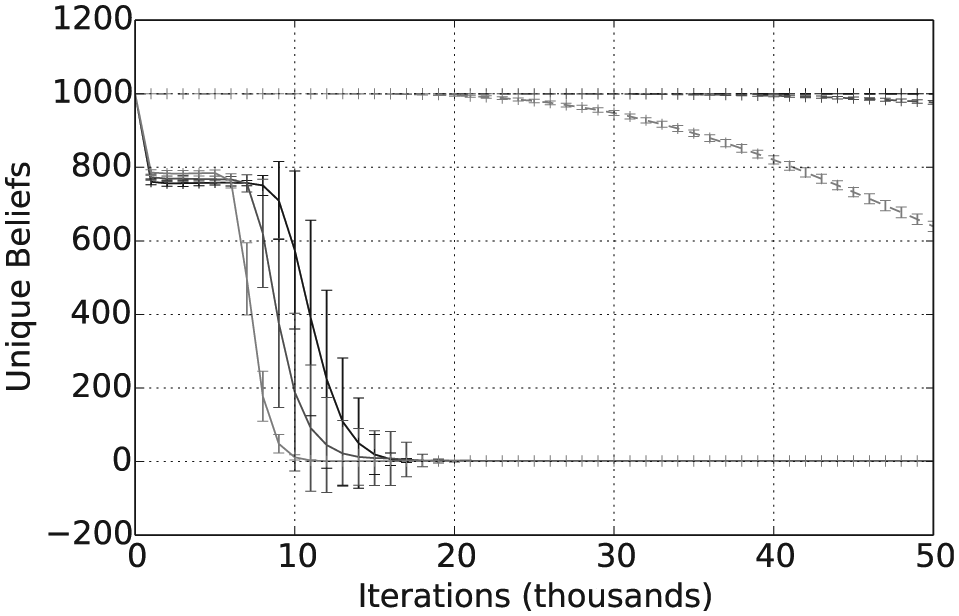

Number of unique beliefs over

Taken together with Figure 5, Figures 7 and 8 show that, assuming a sufficiently high threshold value

Average vagueness after

Average entropy after

Average pairwise inconsistency after

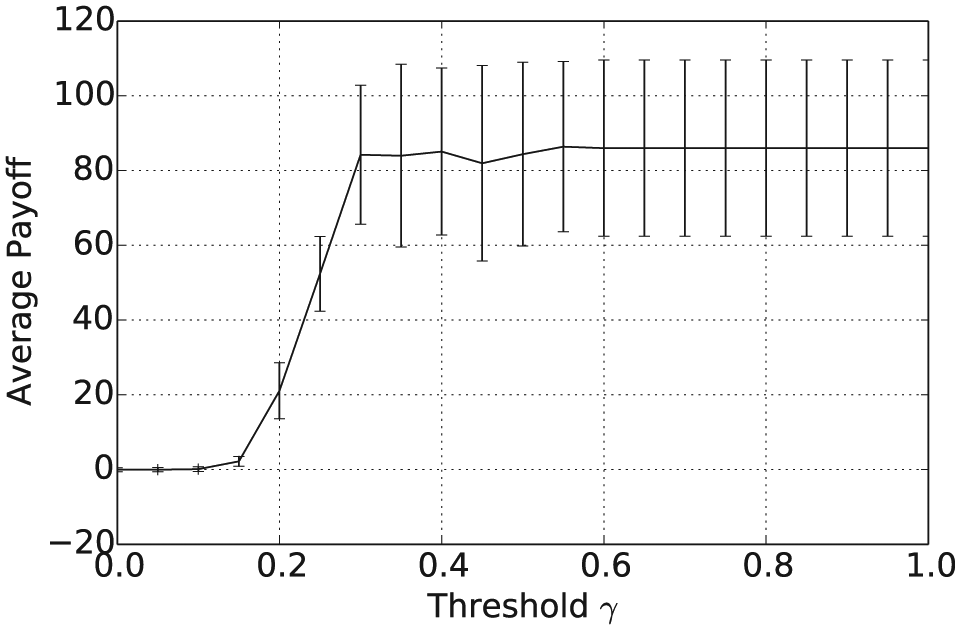

Average payoff after

6 Simulation experiments with agent selection influenced by belief quality

In this section, we consider a scenario in which agents receive indirect feedback about the accuracy of their beliefs in the form of payoff or reward obtained as a result of actions that they have taken on the basis of these beliefs. Furthermore, we assume that the closer an agent’s beliefs are to the actual state of the world, the higher its rewards will be on average. Hence, we use the payoff measure given in Definition 5 as a proxy for this process, so that agent selection in the consensus building process is guided by the payoff, or quality, of the agent’s beliefs. More specifically, we now investigate an agent-based system in which pairs of agents are selected for interaction with a probability that is proportional to the product of the qualities of their respective beliefs. For modelling societal opinion dynamics, this captures an assumption that better performing agents, i.e. those with higher payoff, are more likely to interact in a context in which both parties will benefit from reaching an agreement. In biological systems, there are examples of a similar quality effect on distributed decision making. For instance, honeybee swarms collectively choose between alternative nesting sites by means of a dance in which individual bees indicate the direction of the site that they have just visited (List, Elsholtz, & Seeley, 2009). The duration of the dance is dependent on the quality of the site, and this in turn affects the likelihood that the dancer will influence other bees. Artificial systems can, of course, be designed so that interactions are guided by quality, provided that a suitable measure of the latter can be defined, as is typically the case in evolutionary computing.
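Selecting pairs with probability proportional to the product of their qualities can be implemented by drawing each agent independently in proportion to its quality and rejecting draws that pick the same agent twice; conditional on acceptance, each ordered pair with i ≠ j then has probability proportional to q_i q_j. The sketch below assumes the qualities are non-negative payoff values already computed from Definition 5, which is not reproduced here; `select_pair` is a hypothetical name.

```python
import random


def select_pair(qualities, rng=random):
    """Draw an ordered pair (i, j), i != j, with probability proportional
    to qualities[i] * qualities[j].

    Each index is drawn independently with weight equal to its quality;
    draws that pick the same agent twice are rejected and redrawn.
    """
    if sum(1 for q in qualities if q > 0) < 2:
        raise ValueError("need at least two agents with positive quality")
    indices = range(len(qualities))
    while True:
        i, j = rng.choices(indices, weights=qualities, k=2)
        if i != j:
            return i, j


# Example with hypothetical qualities: agent 0 has zero quality and is never chosen.
rng = random.Random(1)
print([select_pair([0.0, 1.0, 3.0], rng) for _ in range(5)])
```

The selected pair would then go through the same bounded-confidence interaction as in the random-selection experiments; only the way in which agents are chosen changes.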

We now describe the results from running agent-based simulations that largely follow the same template as before, but with one important difference: instead of being selected at random, agents were selected for interaction with probability proportional to the quality value of their beliefs, as given in Definition 5. The true state of the world

Figure 11 shows the mean number of unique beliefs for the consensus operator after

Number of unique beliefs after

Average vagueness after

Average entropy after

Average pairwise inconsistency after

Figure 15 shows the average quality of beliefs (Definition 5) at the end of the simulation, plotted against

Average payoff after

7 Conclusions

We have investigated consensus formation for a multi-agent system in which agents’ beliefs are both vague and uncertain. For this, we have adopted a formalism that combines three truth states according to probability, resulting in opinions that are quantified by lower and upper belief measures. A combination operator has been introduced, according to which agents are assumed to be independent and in which strictly opposing truth states are replaced with an intermediate borderline truth value. In simulation experiments, we have applied this operator to random agent interactions constrained by the requirement that agreement can only be reached between agents holding beliefs that are sufficiently consistent with each other. Provided that this consistency requirement is not too restrictive, the population of agents is shown to converge on a single shared belief that is both crisp and certain. Furthermore, if combined with evidence about the state of the world, either in a direct or indirect way, consensus building of this kind results in better convergence to the truth than evidential belief updating alone.

Overall, these results provide some evidence for the beneficial effects of allowing agents to hold beliefs that are both vague and uncertain, in the context of consensus building. However, we have only studied pairwise interactions between agents, whereas pooling operators in the literature are normally intended to aggregate beliefs across a whole group of agents (DeGroot, 1974; Dietrich & List, 2016). Hence, future work should extend the operator in Definition 1 so as to allow more than two agents to reach agreement at any step of the simulation. Probabilistic pooling operators can also take account of different weights associated with the beliefs of different agents; it will be interesting to investigate whether this can be incorporated in our approach. Another avenue for future research is to consider noisy evidence. Evidential updating is rarely perfect; for example, experiments can be prone to measurement errors. It would therefore be interesting to ask how the combined consensus building and updating approach described in this paper copes with such noise. In the longer term, the aim is to apply our approach to distributed decision making scenarios such as swarm robotics.

Footnotes

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research is partially funded by a PhD studentship from the UK Engineering and Physical Sciences Research Council as part of a doctoral training partnership, grant number EP/L504919/1. All underlying data is included in full within this paper.